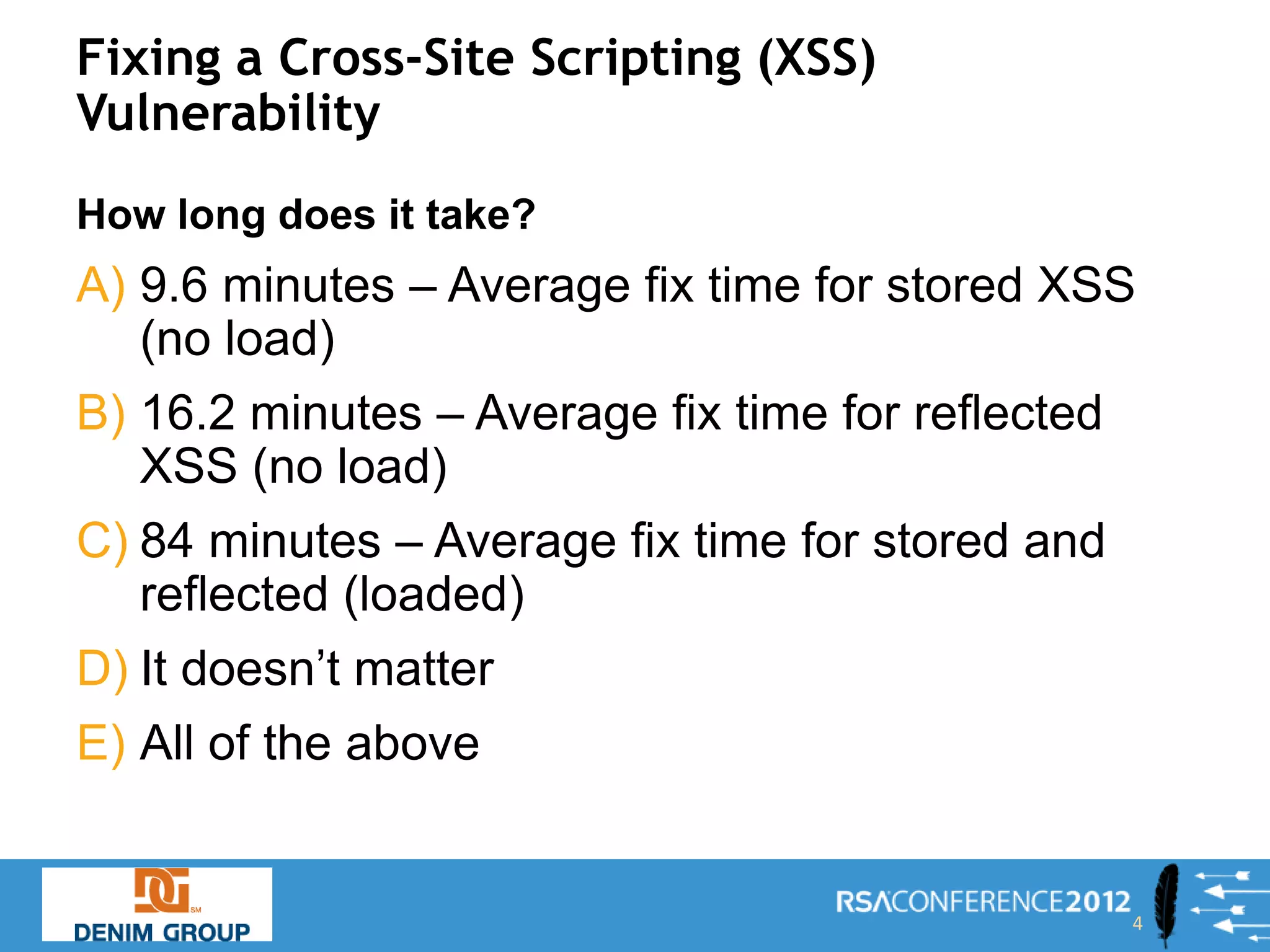

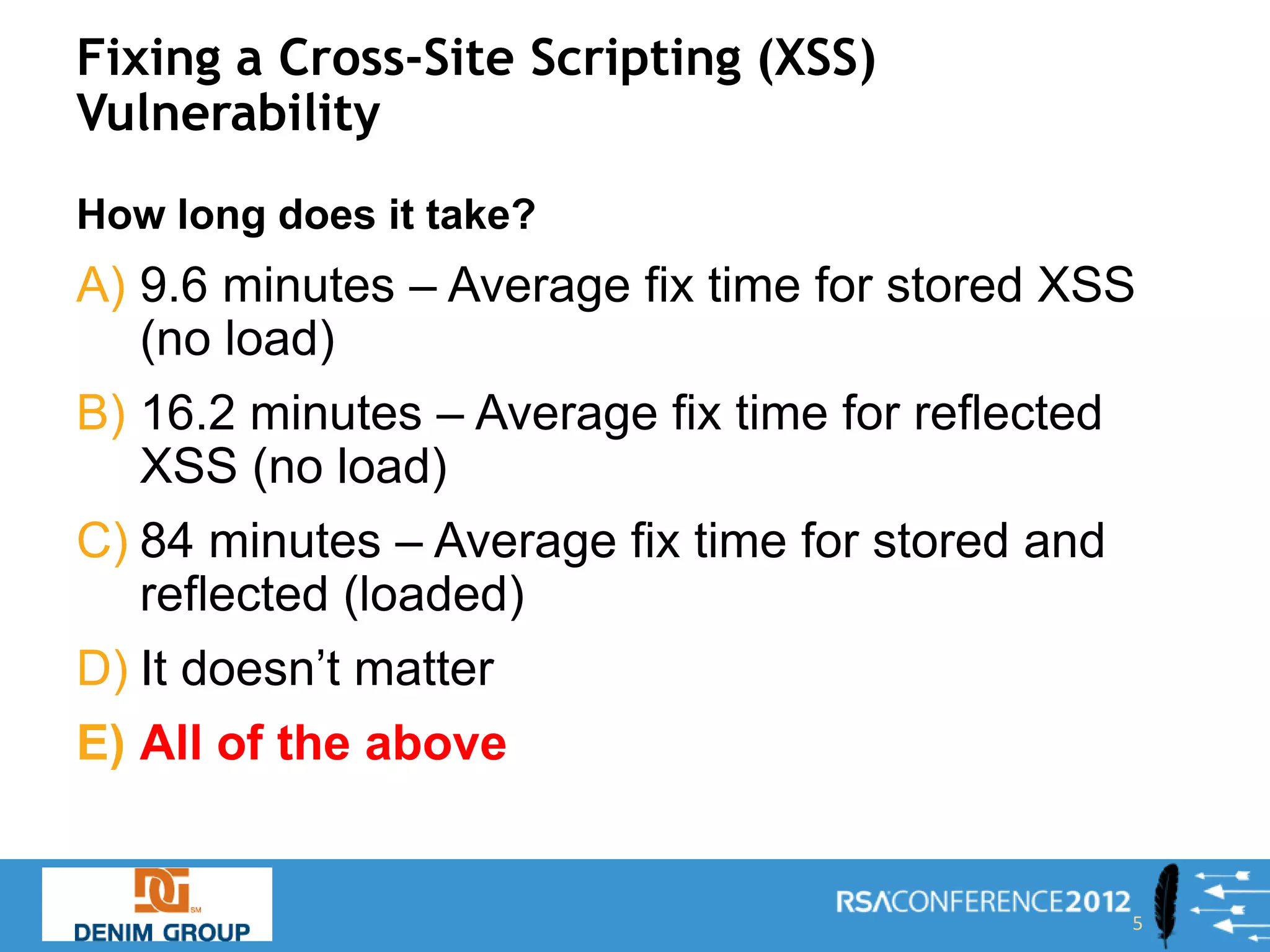

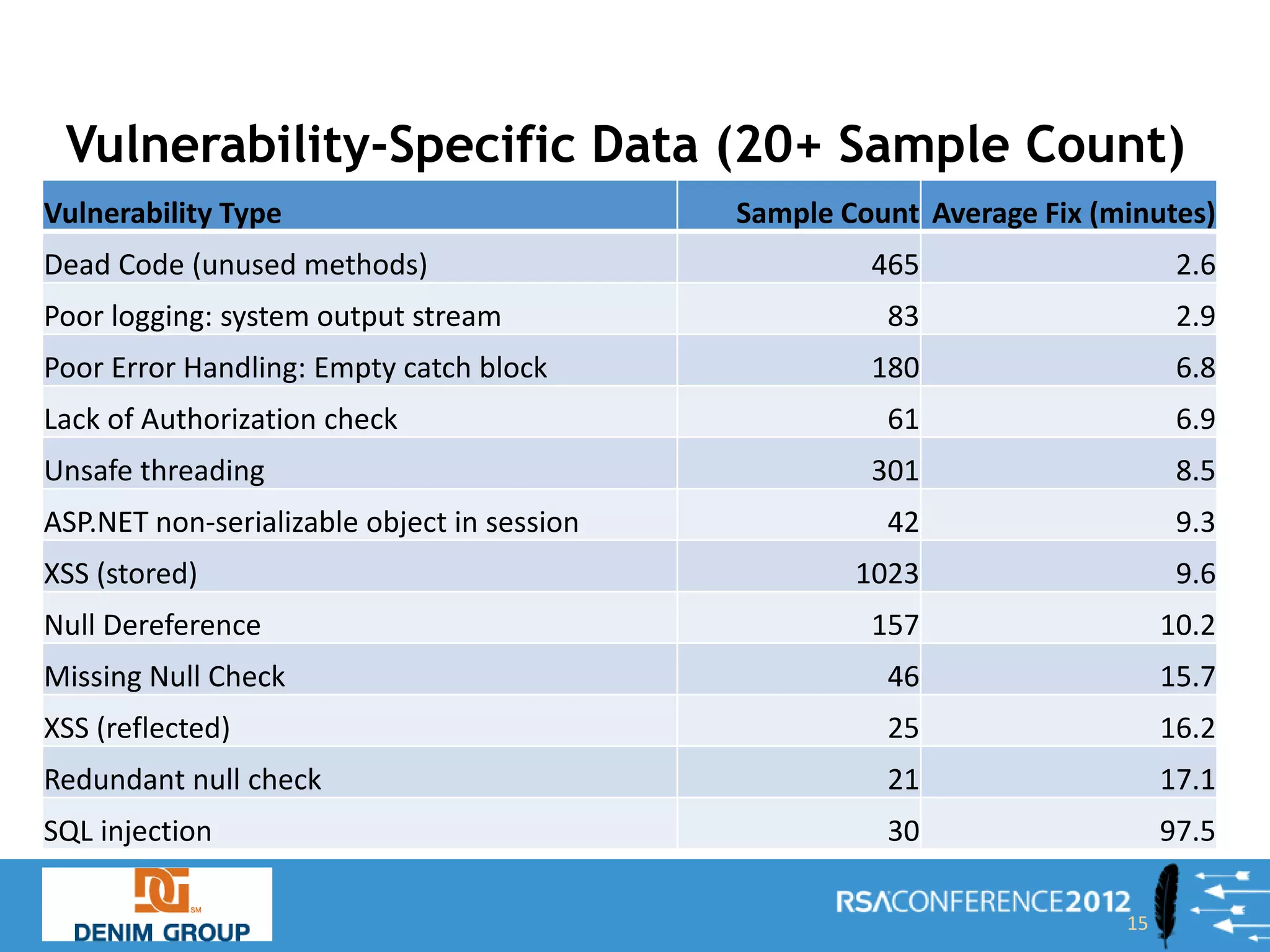

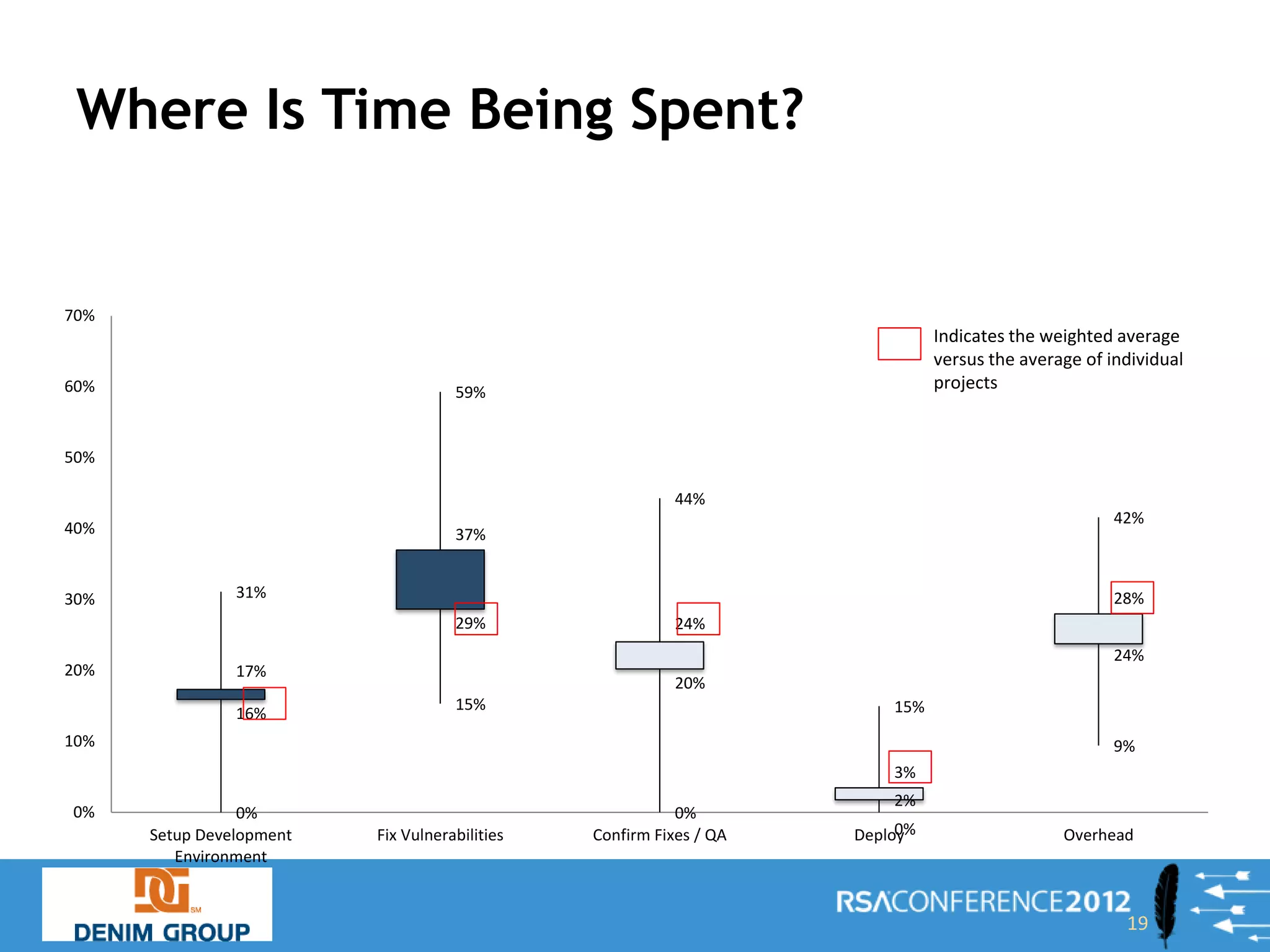

The document presents an overview of the cost and time required to fix application vulnerabilities, focusing on remediation projects. It covers best practices, methodologies, and an analysis of statistical data gathered on various vulnerabilities, and it emphasizes the importance of project structure, stakeholder involvement, and effective execution. The findings highlight potential biases and suggest strategies for minimizing remediation costs, including better access to development environments and test automation.