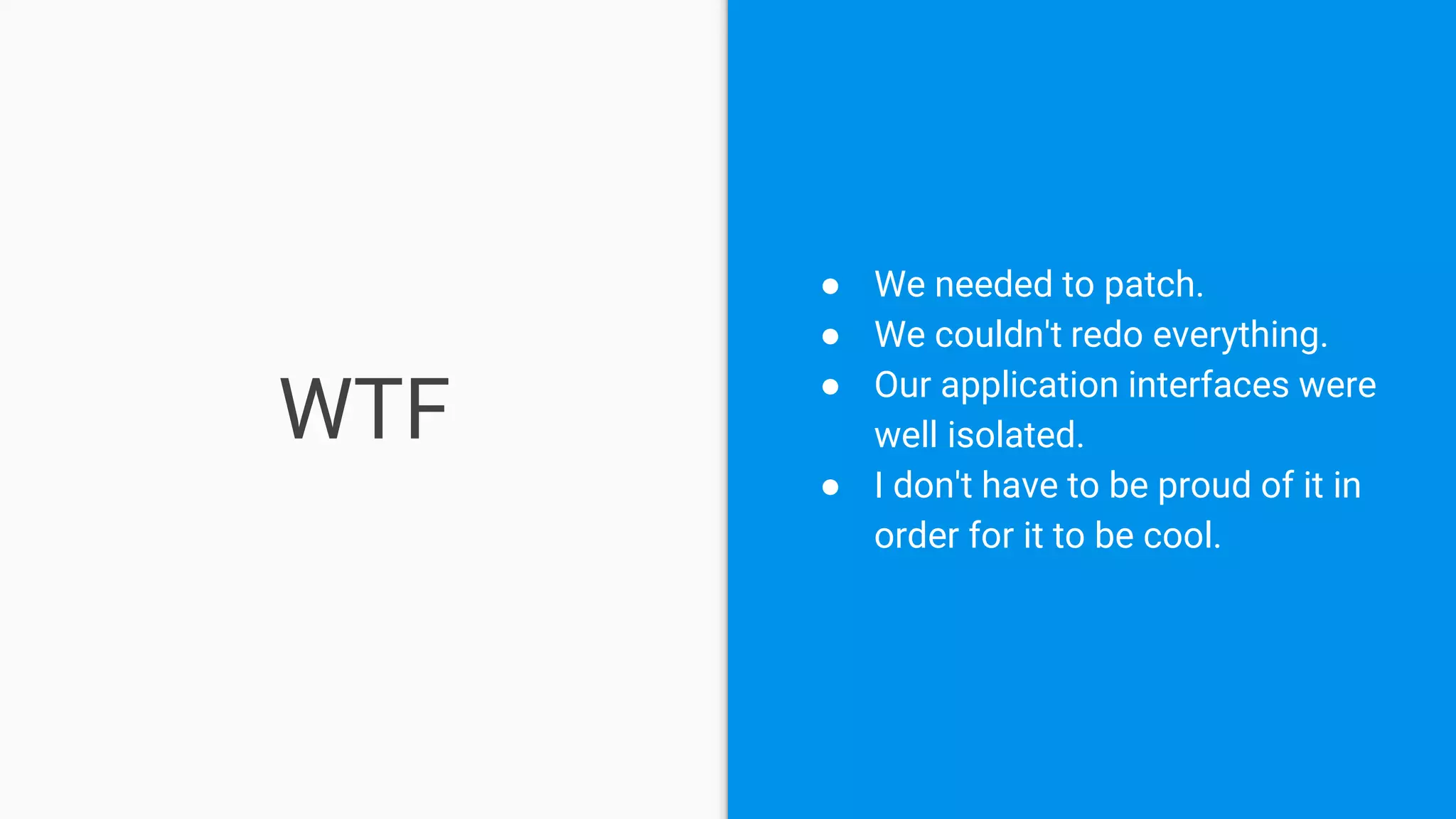

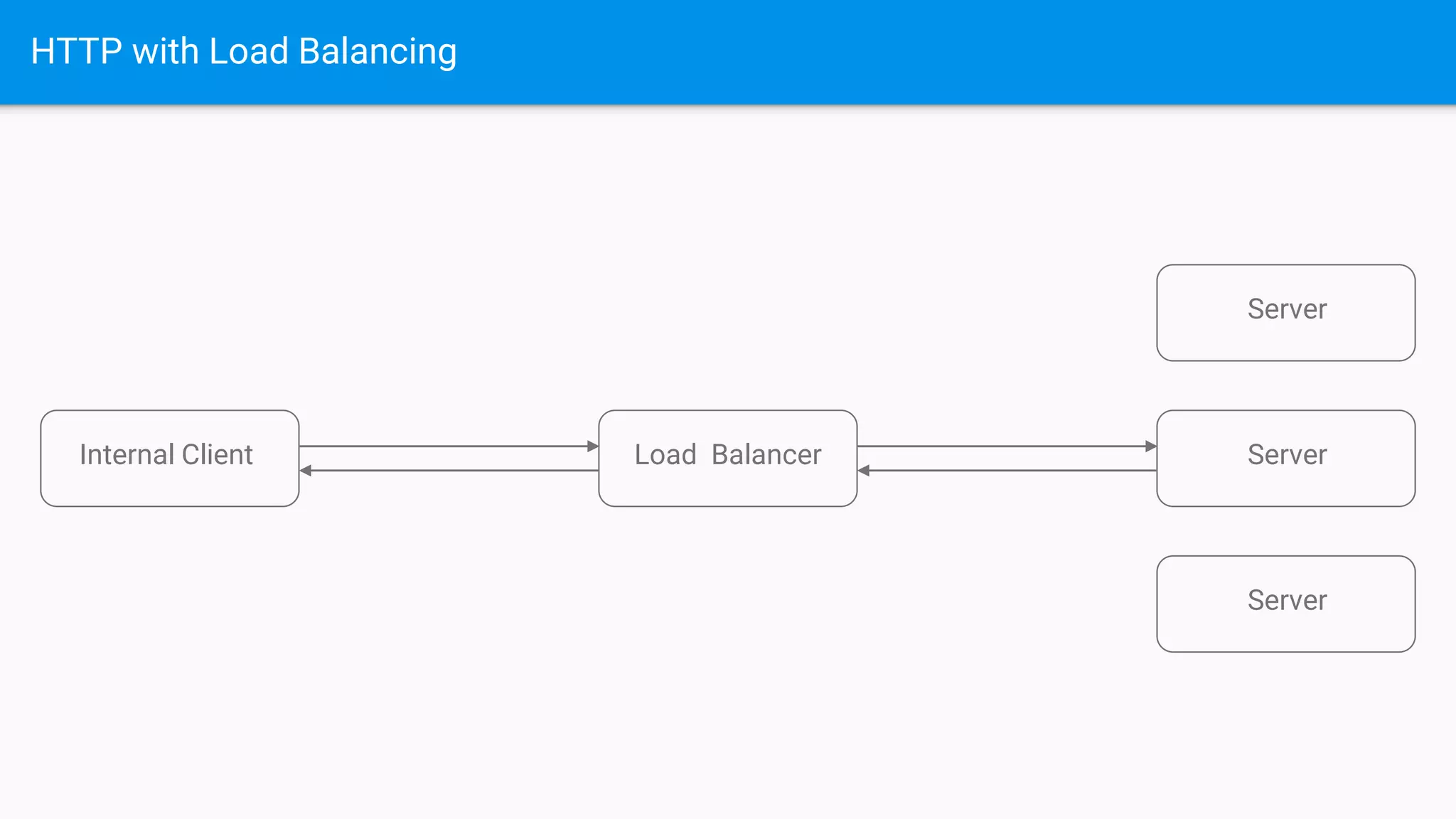

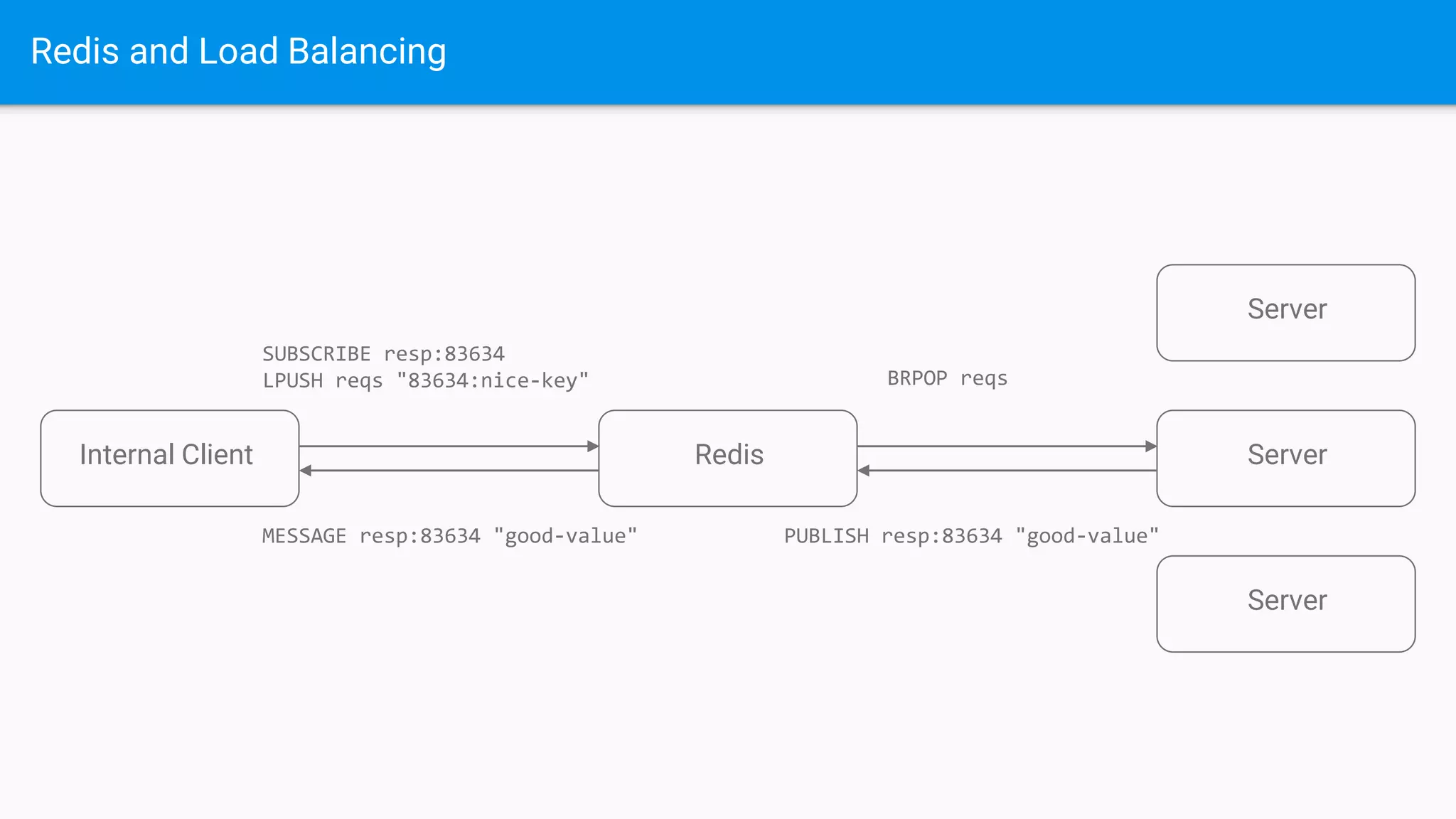

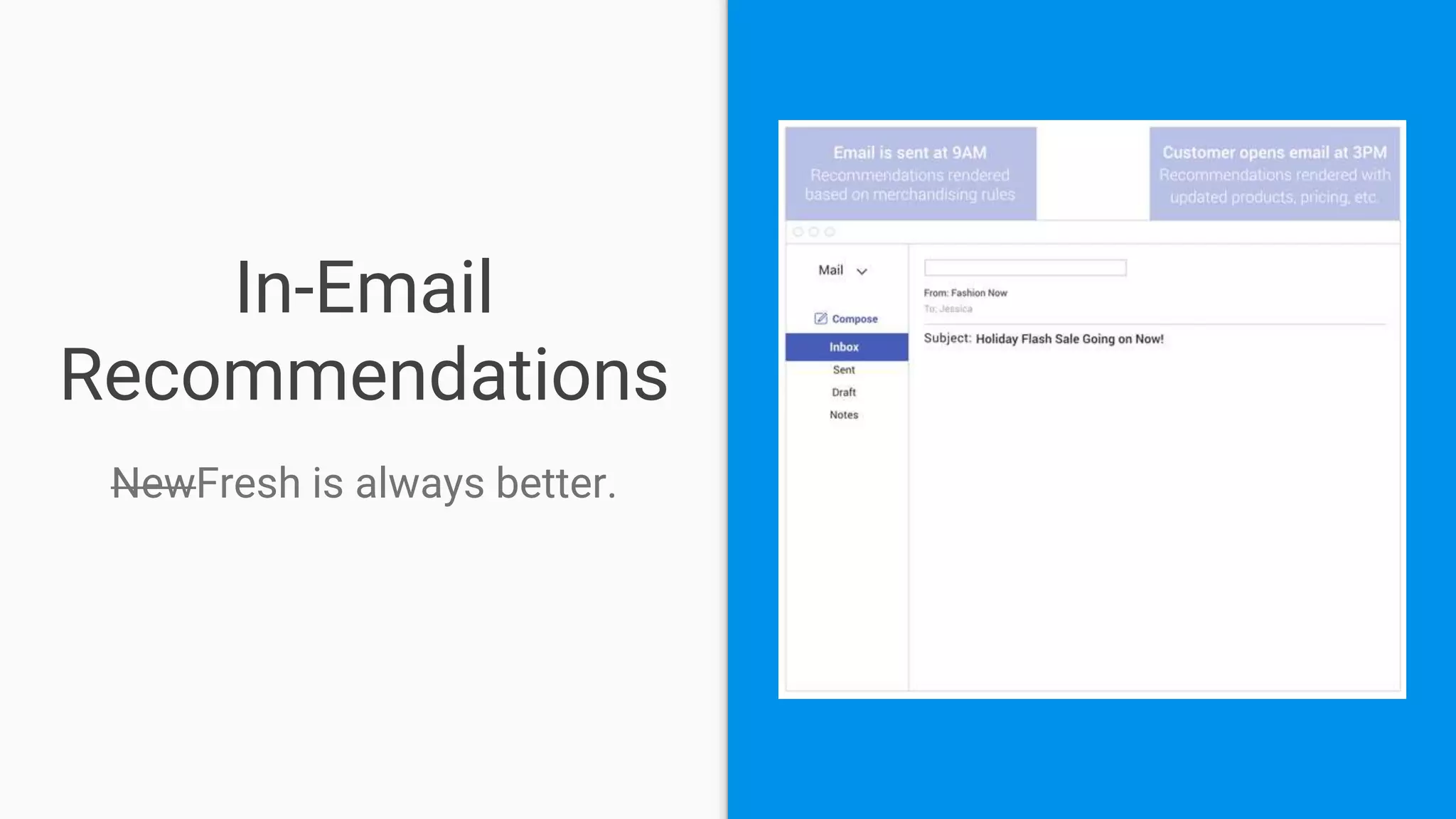

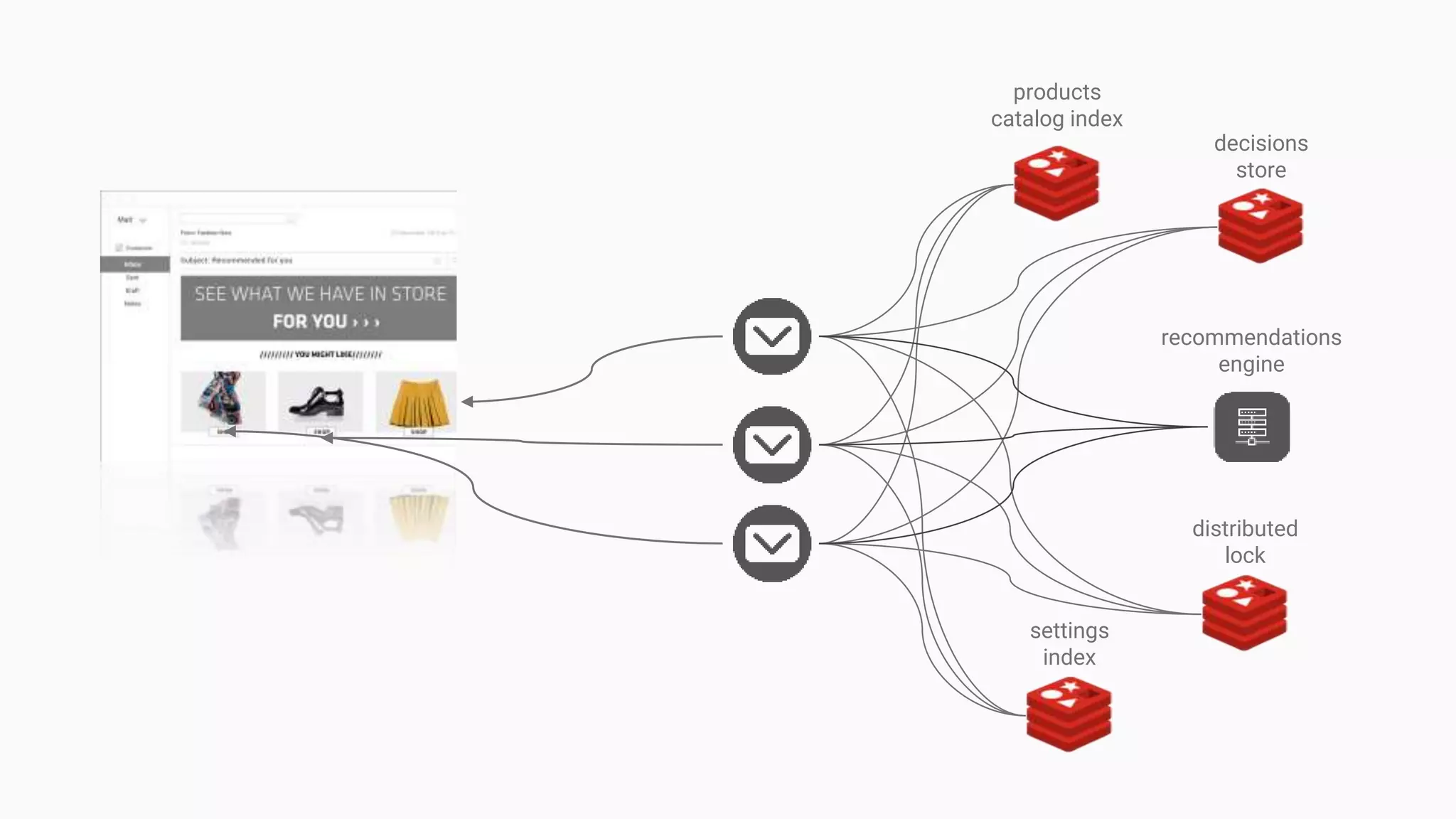

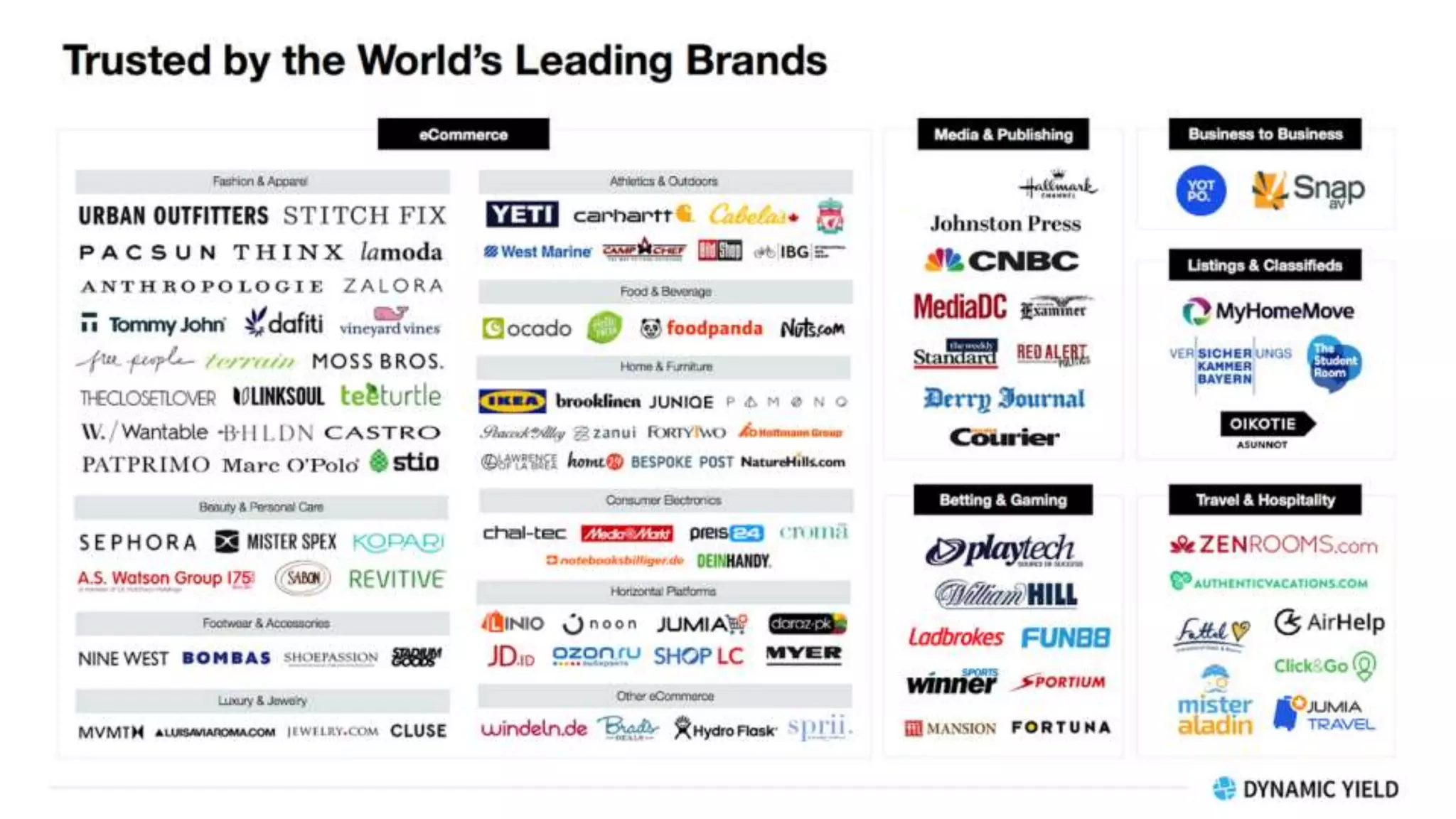

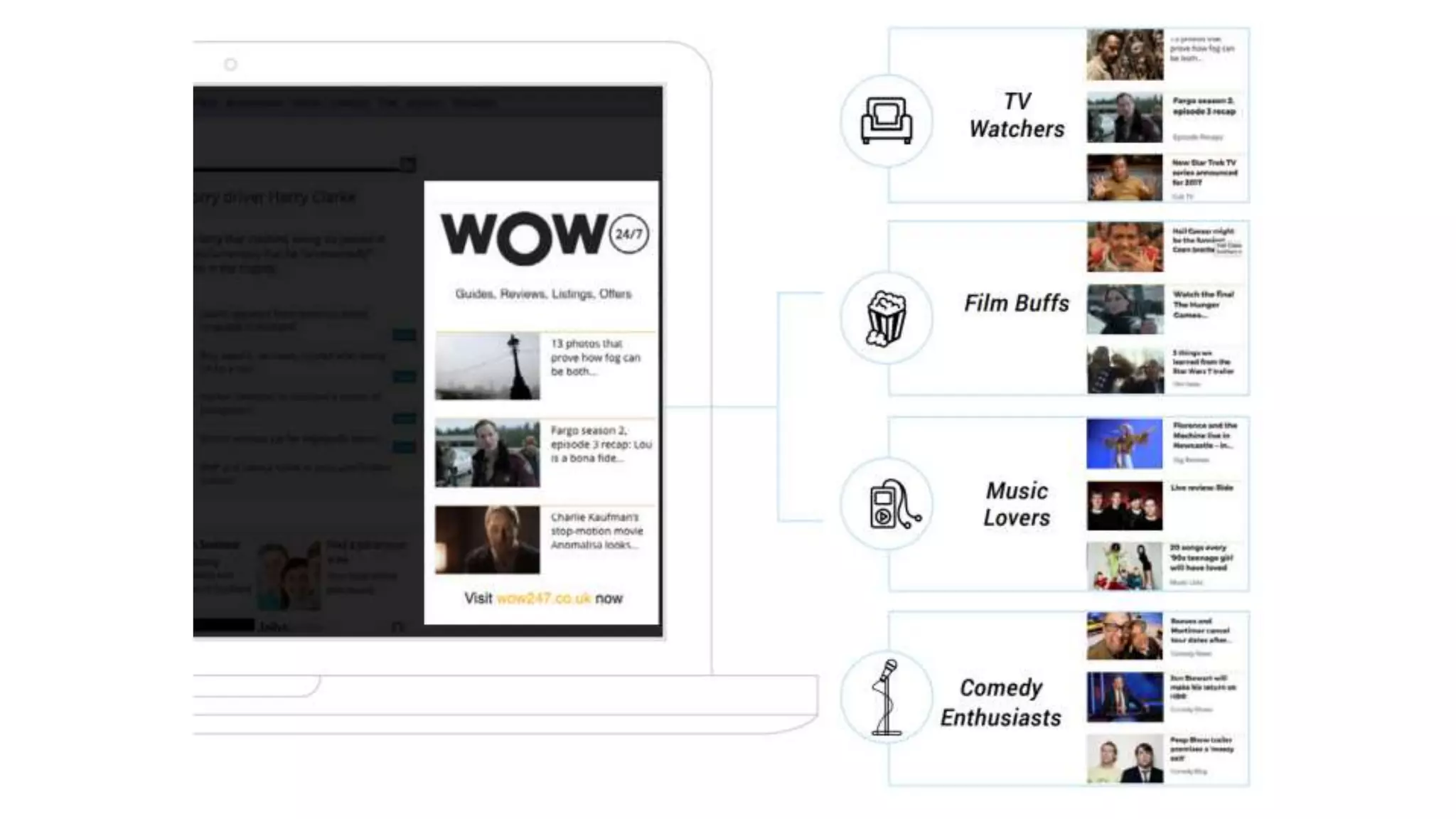

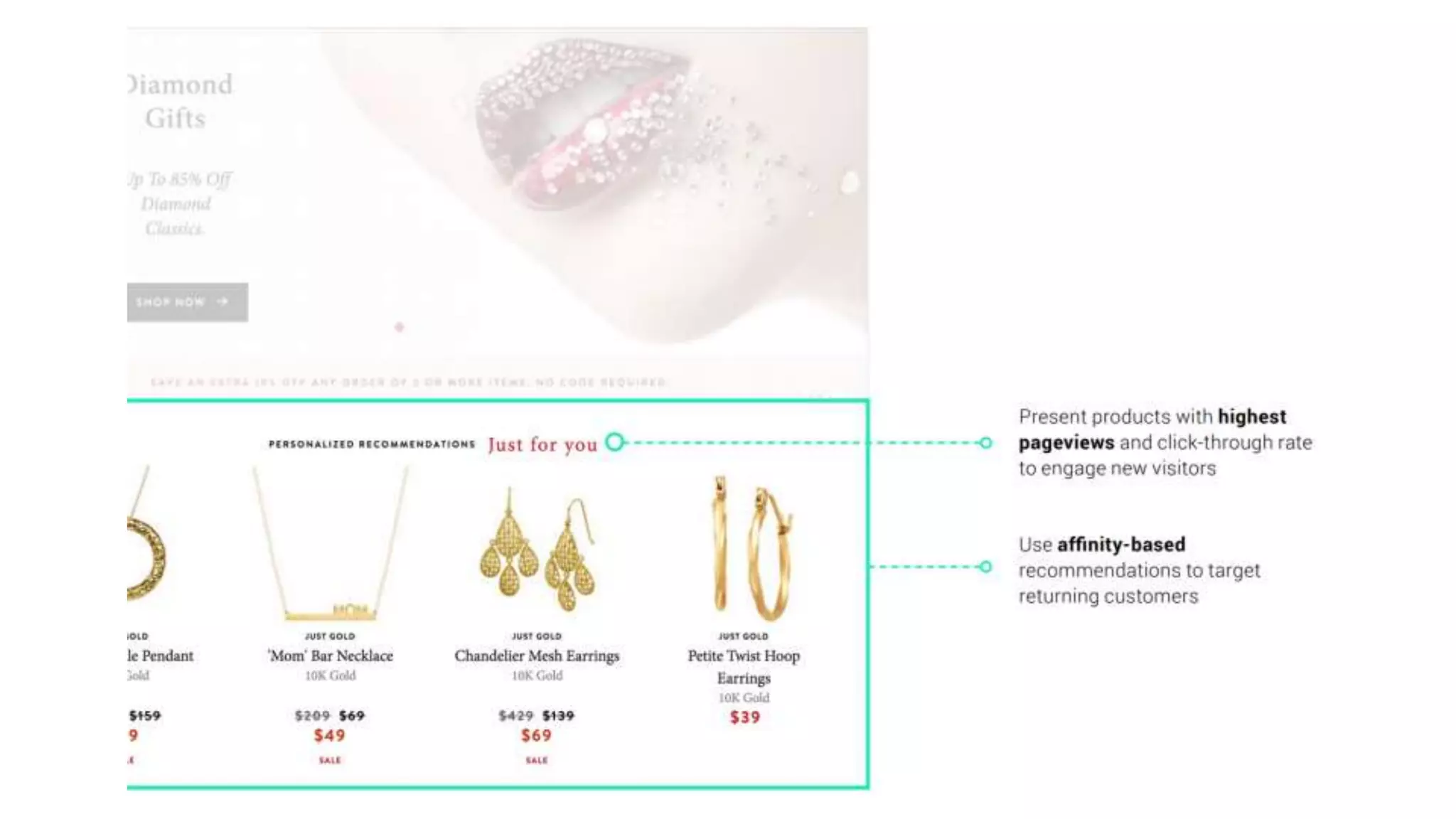

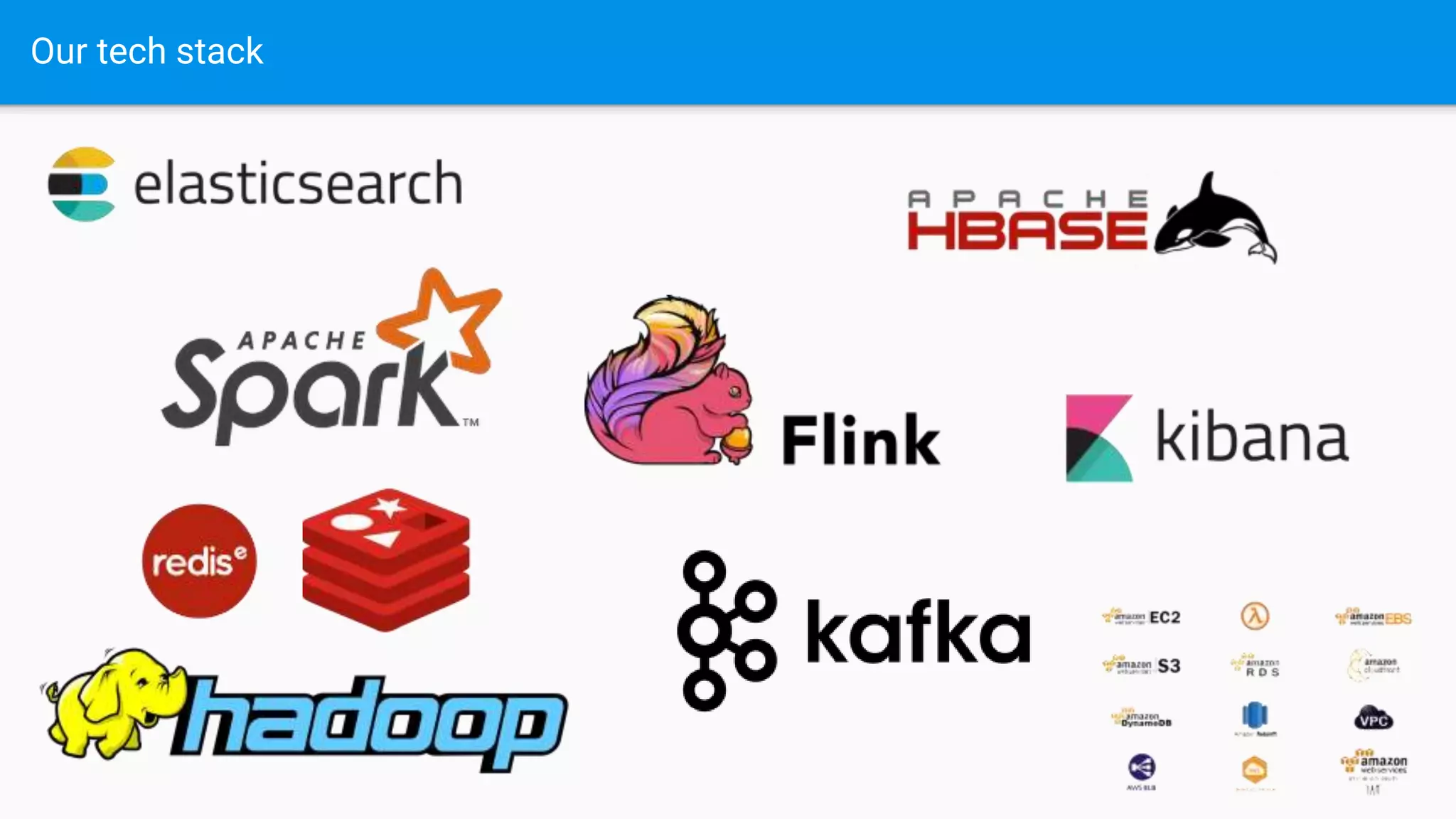

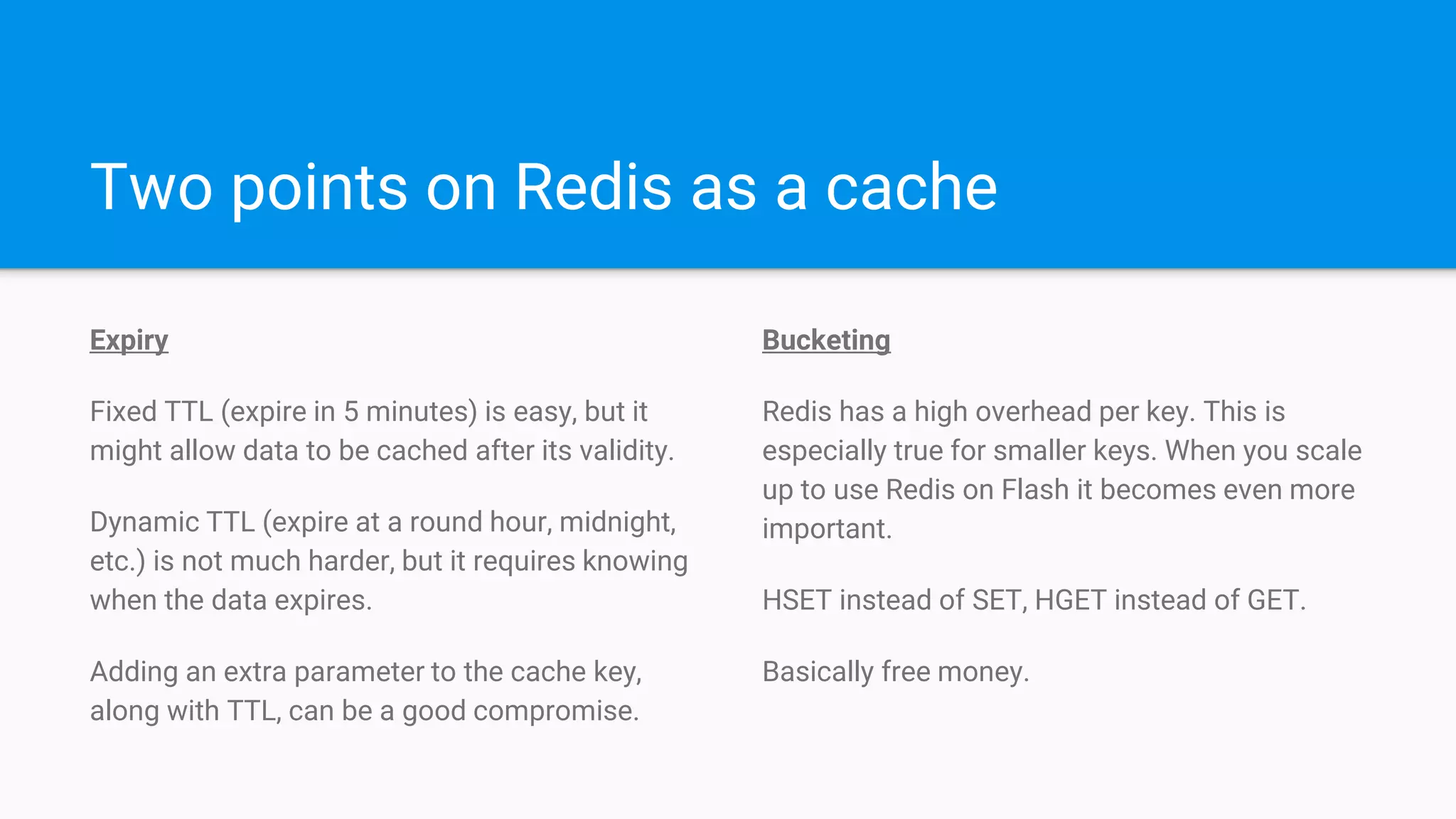

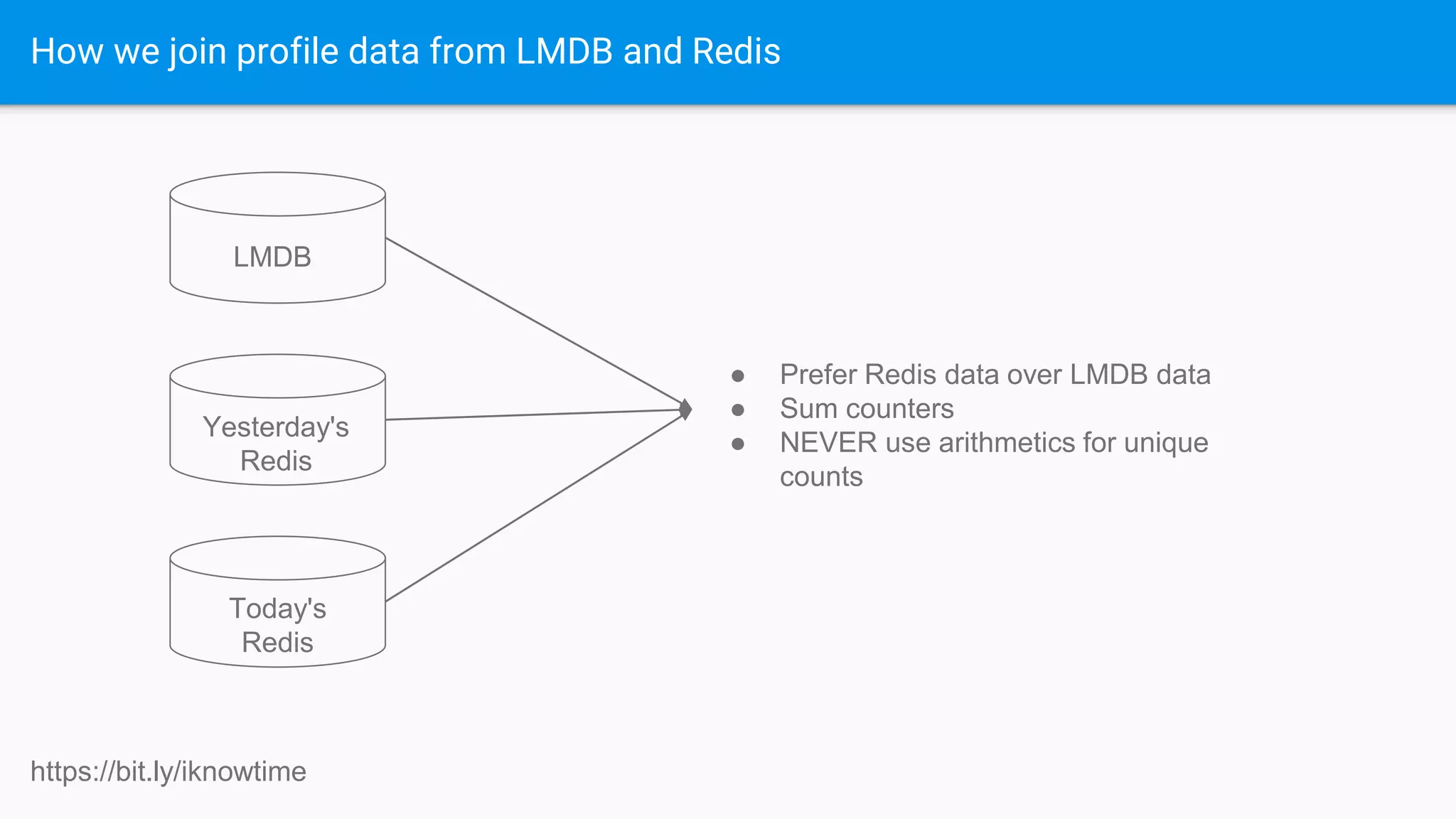

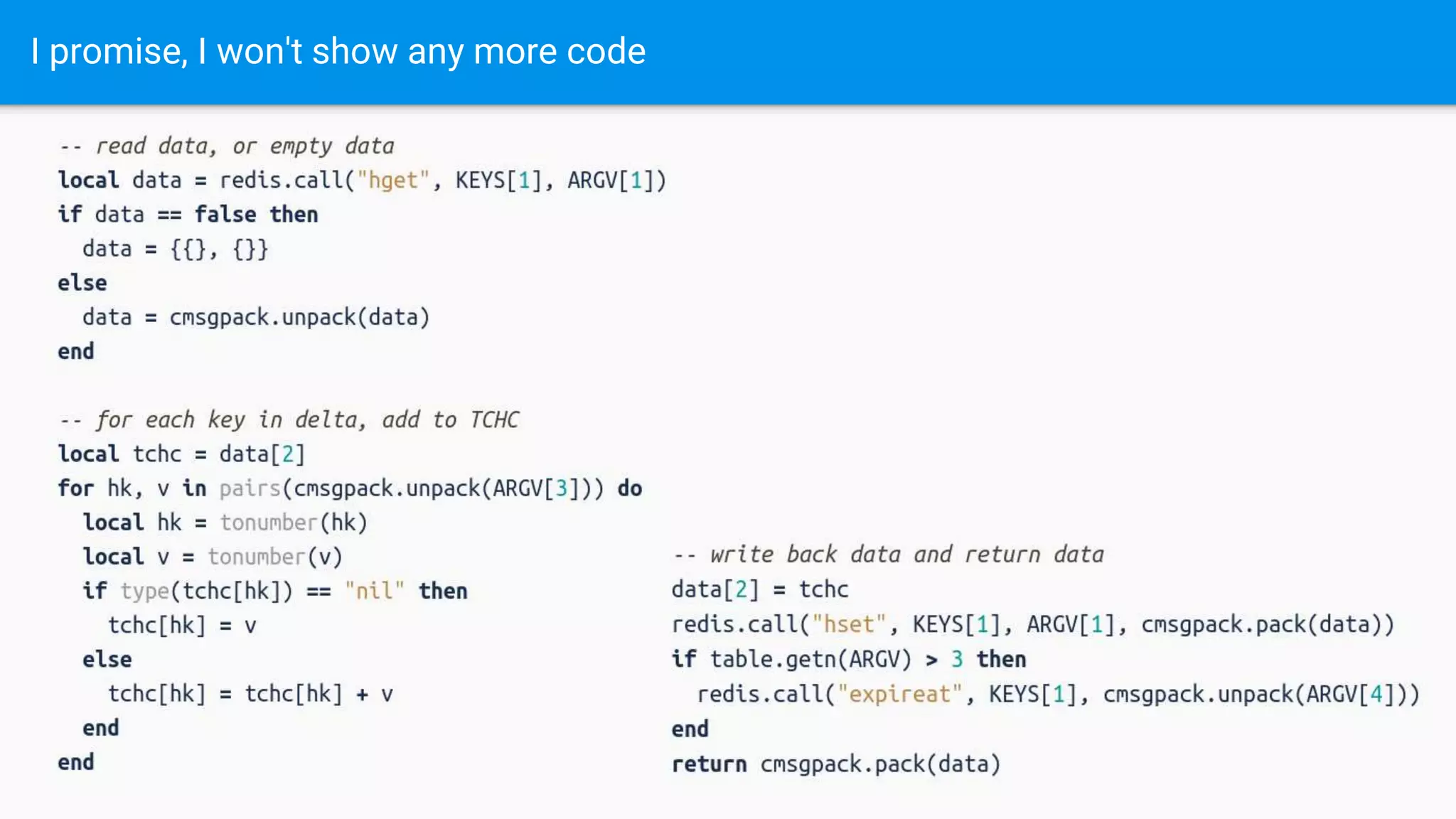

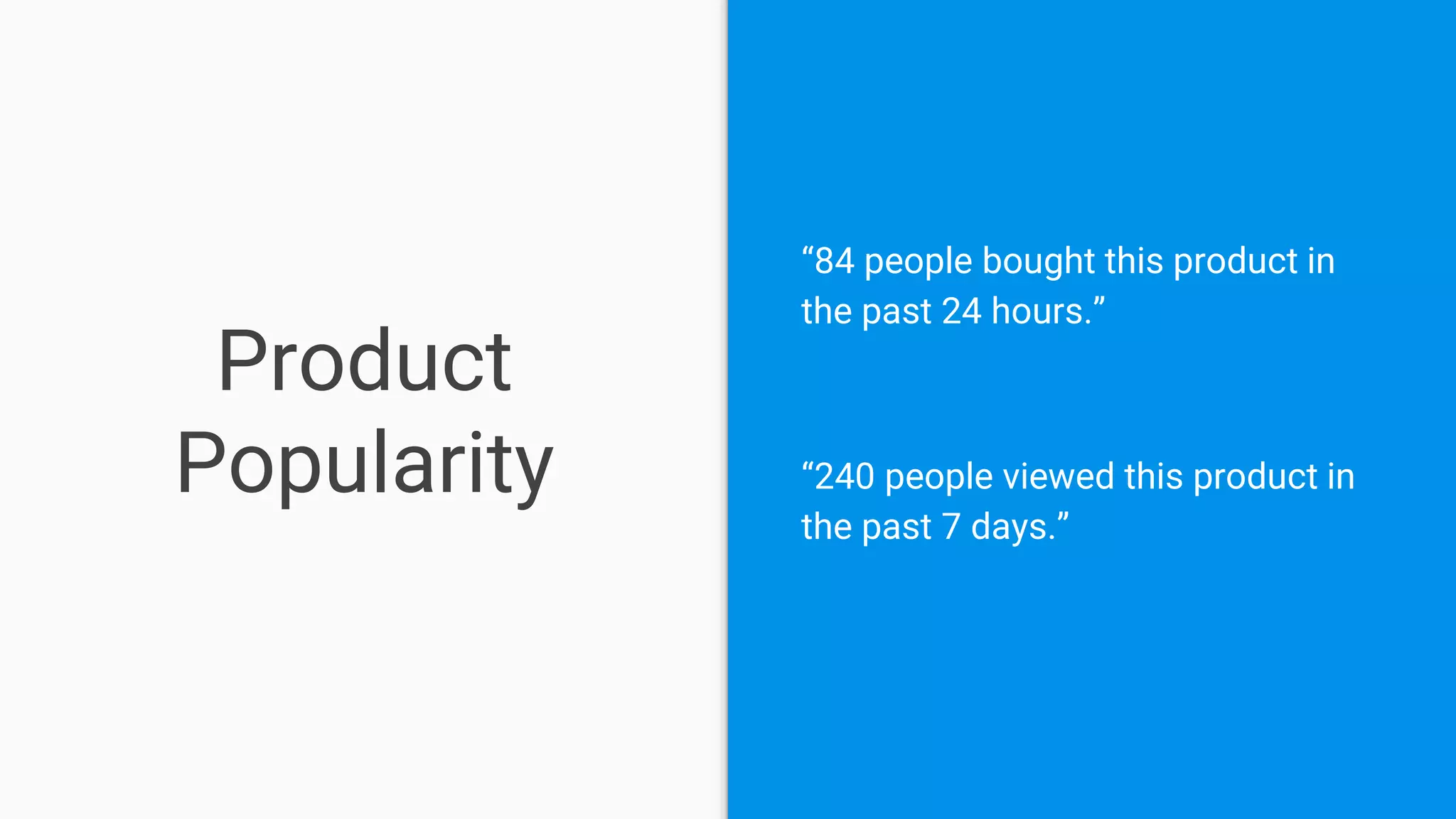

This document discusses using Redis as both a cache and a primary data store. Redis is described as fast, simple, and easily scalable. As a cache, it can hold hot data such as user profiles, while cold data is stored elsewhere, for example in LMDB. As a primary store, it is used to track metrics such as pageviews and purchases. The document gives examples of storing HyperLogLog (HLL) data in Redis to count unique items, with an expiry set on each key. It also discusses techniques for load balancing and aggregating Redis data.

![Prepare the HLLs

PFADD [Nice Jacket]:hour:[Wednesday 4 p.m.] 550-00-6379

PFADD [Nice Jacket]:day:[April 25th, 2018] 550-00-6379

PFADD [Nice Jacket]:week:[4th week of April 2018] 550-00-6379

… and of course, EXPIREAT](https://image.slidesharecdn.com/ramonsnir-180523174739/75/RedisConf18-My-Other-Car-is-a-Redis-Cluster-25-2048.jpg)
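The slide above adds the same visitor ID to three HyperLogLogs (hour, day, week) and then sets an expiry on each. A minimal Python sketch of that write path follows. The key format, the `RETENTION` values, and the names `bucket_keys` and `record_visitor` are assumptions made for illustration and do not come from the slides. `client` is assumed to be any object exposing redis-py style `pfadd` and `expireat` methods.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention periods: the slides do not say how long each bucket lives.
RETENTION = {
    "hour": timedelta(days=2),
    "day": timedelta(days=35),
    "week": timedelta(days=100),
}


def bucket_keys(product, ts):
    """Build one key per granularity for the bucket that contains `ts`.

    The key layout is an assumption modelled on the slide's
    `[Nice Jacket]:hour:[Wednesday 4 p.m.]` placeholders.
    """
    iso_year, iso_week, _ = ts.isocalendar()
    return {
        "hour": f"{product}:hour:{ts:%Y-%m-%dT%H}",
        "day": f"{product}:day:{ts:%Y-%m-%d}",
        "week": f"{product}:week:{iso_year}-W{iso_week:02d}",
    }


def record_visitor(client, product, visitor_id, ts):
    """PFADD the visitor into each bucket, then EXPIREAT the bucket key."""
    for granularity, key in bucket_keys(product, ts).items():
        client.pfadd(key, visitor_id)
        # EXPIREAT takes an absolute Unix timestamp in seconds.
        expire_at = int((ts + RETENTION[granularity]).timestamp())
        client.expireat(key, expire_at)
```

With redis-py installed, `record_visitor(redis.Redis(), "nice-jacket", "550-00-6379", datetime.now(timezone.utc))` would issue three `PFADD` and three `EXPIREAT` commands. Re-sending `EXPIREAT` on every write is redundant but harmless here, because the expiry time depends only on the bucket's retention and the event timestamp.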

![Read the HLLs

PFCOUNT [Nice Jacket]:hour:[Tuesday 4 p.m.]

...

[Nice Jacket]:hour:[Wednesday 12 a.m.]

...

[Nice Jacket]:hour:[Wednesday 4 p.m.]](https://image.slidesharecdn.com/ramonsnir-180523174739/75/RedisConf18-My-Other-Car-is-a-Redis-Cluster-26-2048.jpg)
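The read slide passes a run of hourly keys to a single `PFCOUNT`, which returns the approximate cardinality of the union of those HyperLogLogs, so a visitor seen in several hours is counted once. The sketch below builds such a sliding window. The key format and the names `hourly_keys` and `unique_visitors` are hypothetical, and `client` is assumed to expose a redis-py style `pfcount` method.

```python
from datetime import datetime, timedelta, timezone


def hourly_keys(product, end, hours):
    """Return the keys of the `hours` hourly buckets ending with the one containing `end`.

    The key layout is an assumption, not taken from the slides.
    """
    end = end.replace(minute=0, second=0, microsecond=0)
    keys = []
    for offset in range(hours - 1, -1, -1):  # oldest bucket first
        bucket = end - timedelta(hours=offset)
        keys.append(f"{product}:hour:{bucket:%Y-%m-%dT%H}")
    return keys


def unique_visitors(client, product, end, hours):
    """Approximate unique visitors over the window with one multi-key PFCOUNT."""
    return client.pfcount(*hourly_keys(product, end, hours))
```

Calling `unique_visitors(client, "nice-jacket", end, 25)` with `end` on Wednesday at 4 p.m. covers Tuesday 4 p.m. through Wednesday 4 p.m. inclusive, as on the slide. On a Redis Cluster, a multi-key `PFCOUNT` requires all keys to hash to the same slot, so a real deployment would likely need hash tags in the key names or per-key reads merged on the client side. The slides shown here do not say which approach is used.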