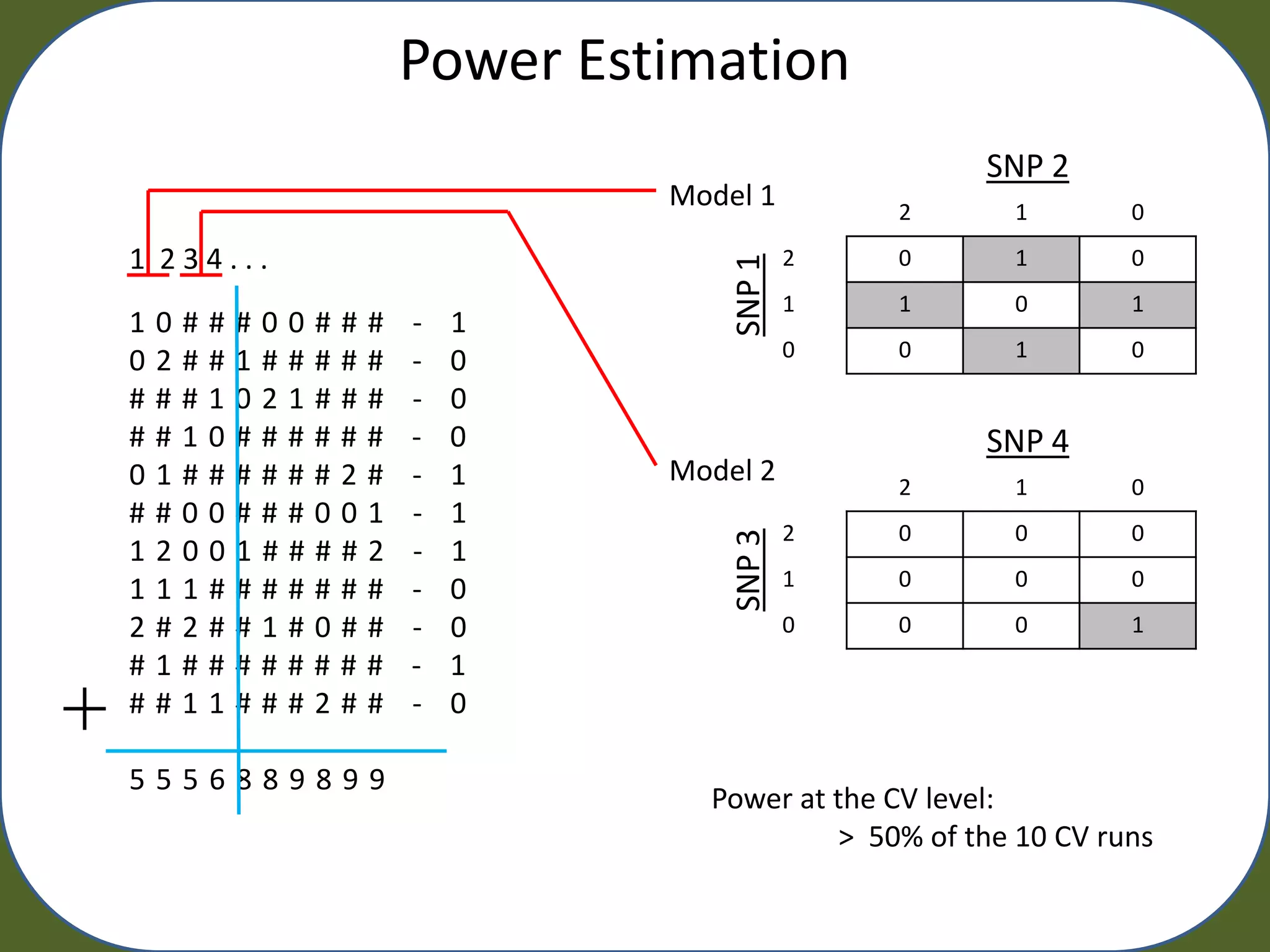

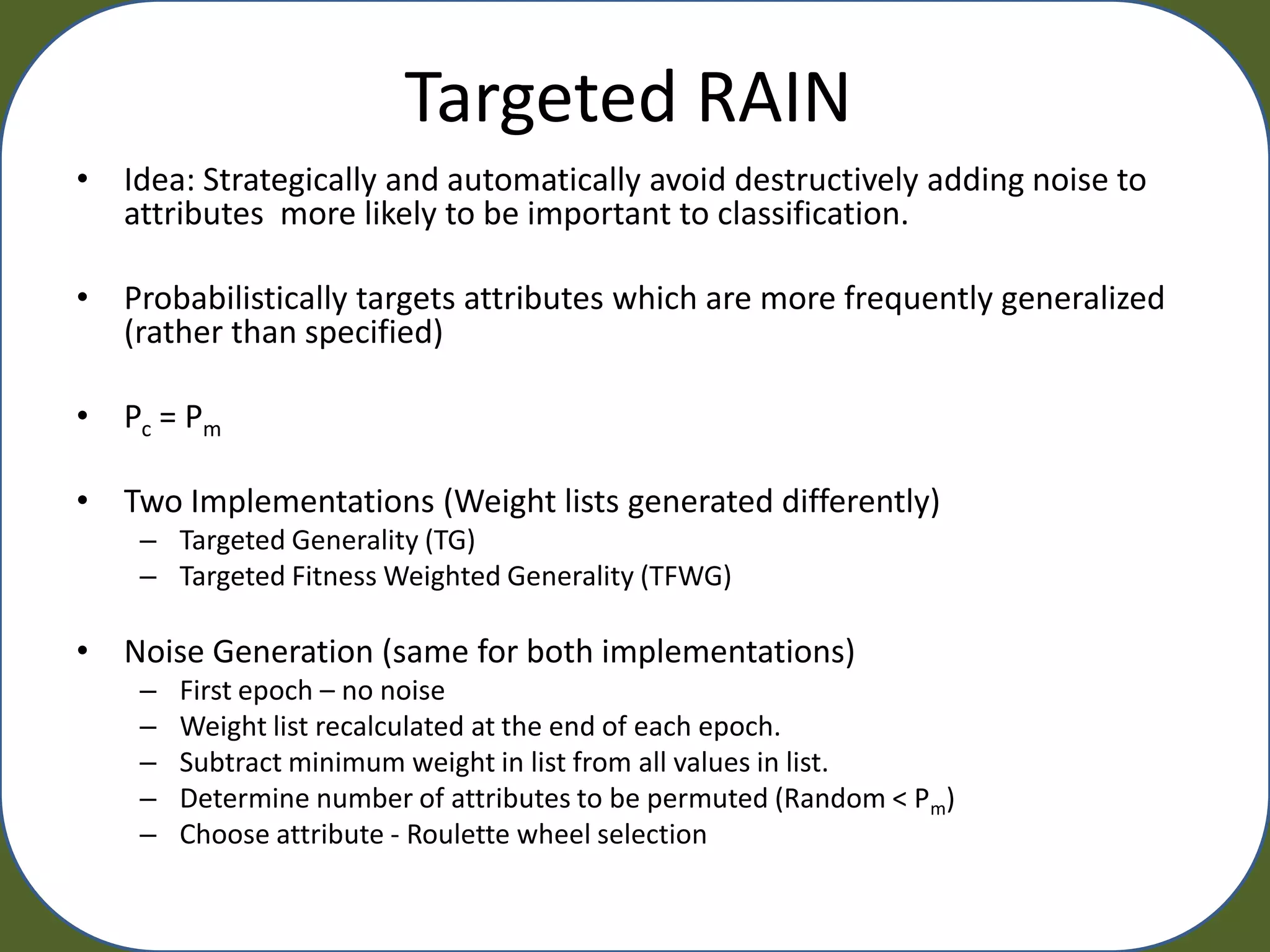

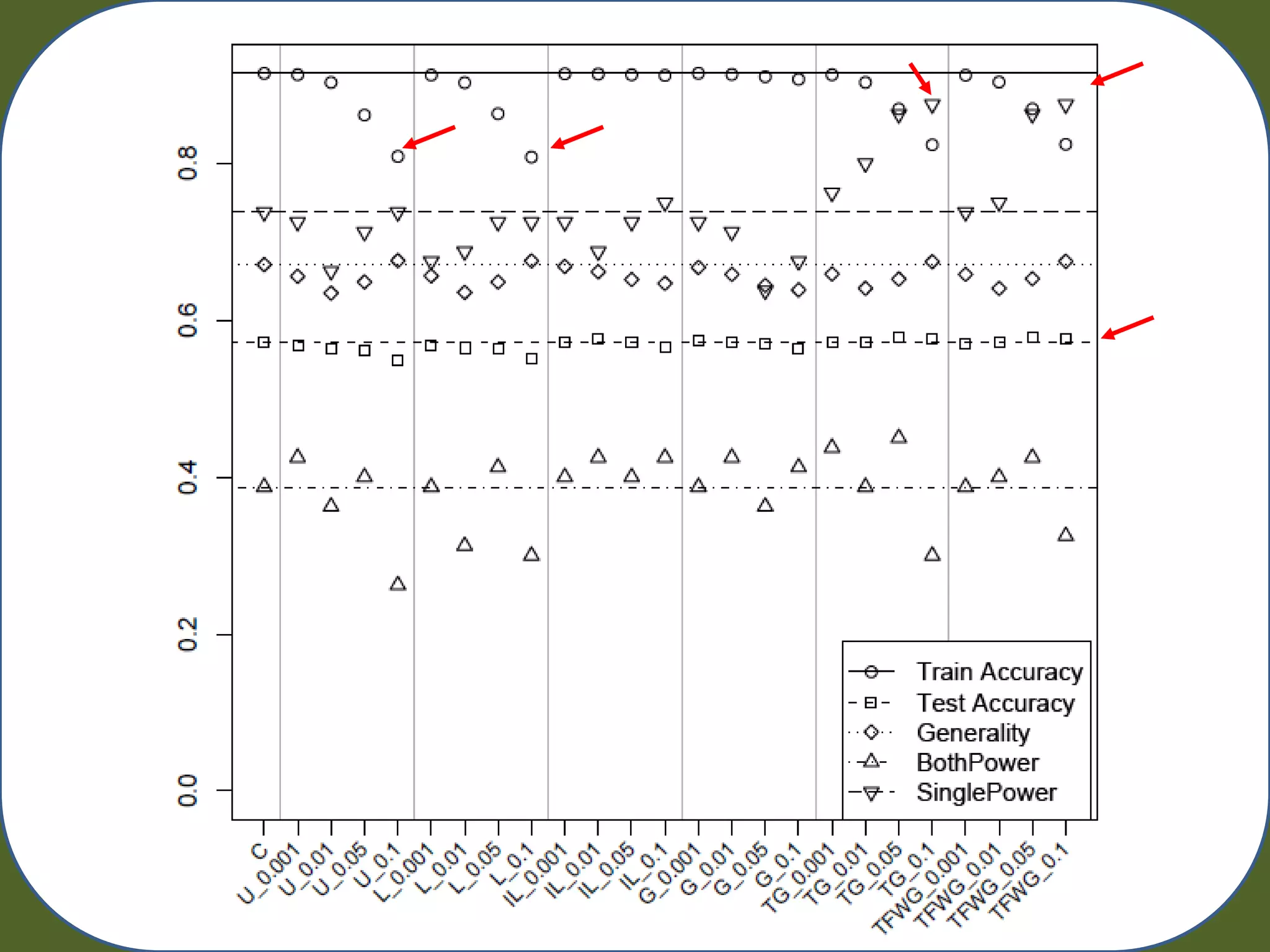

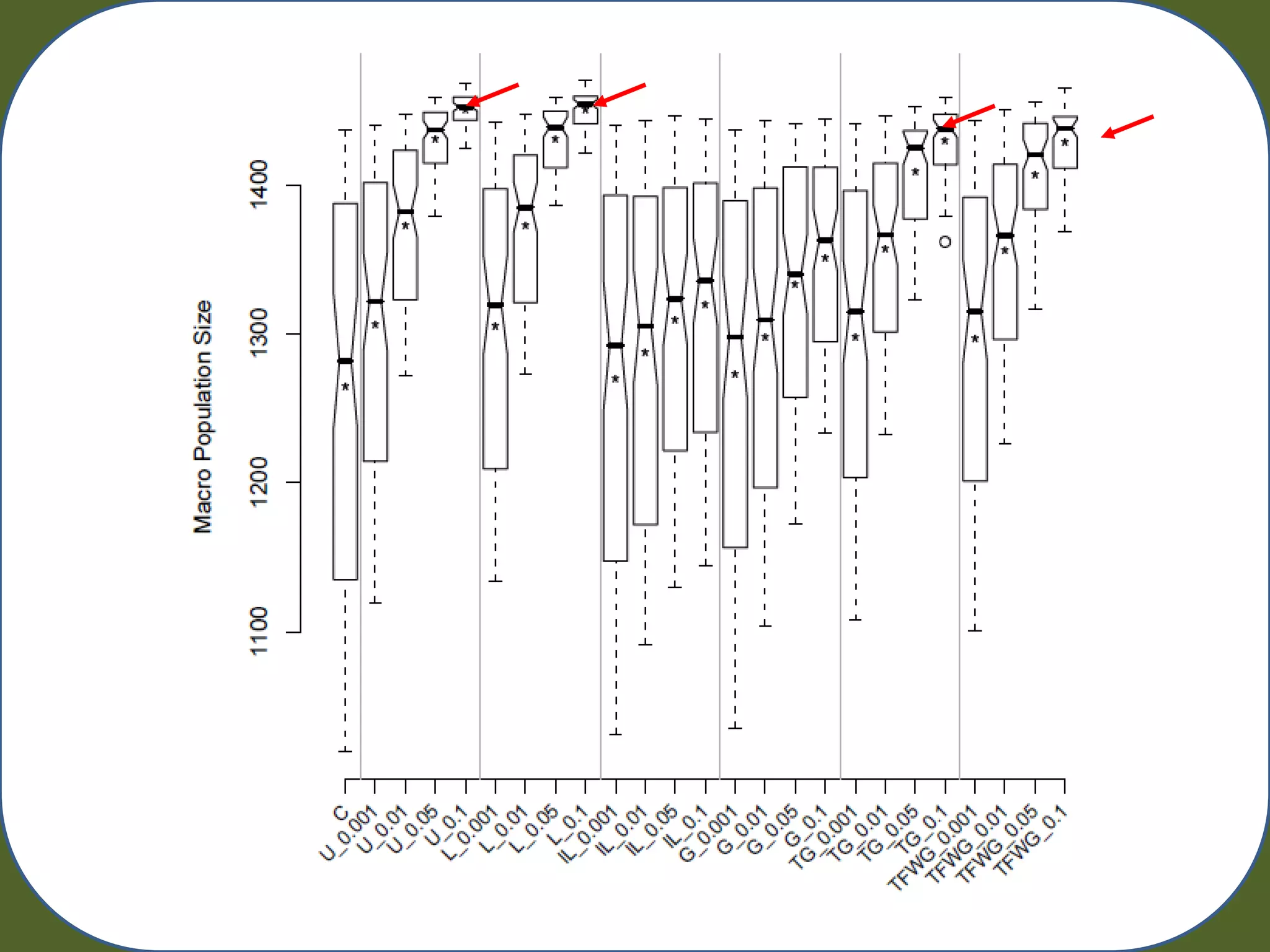

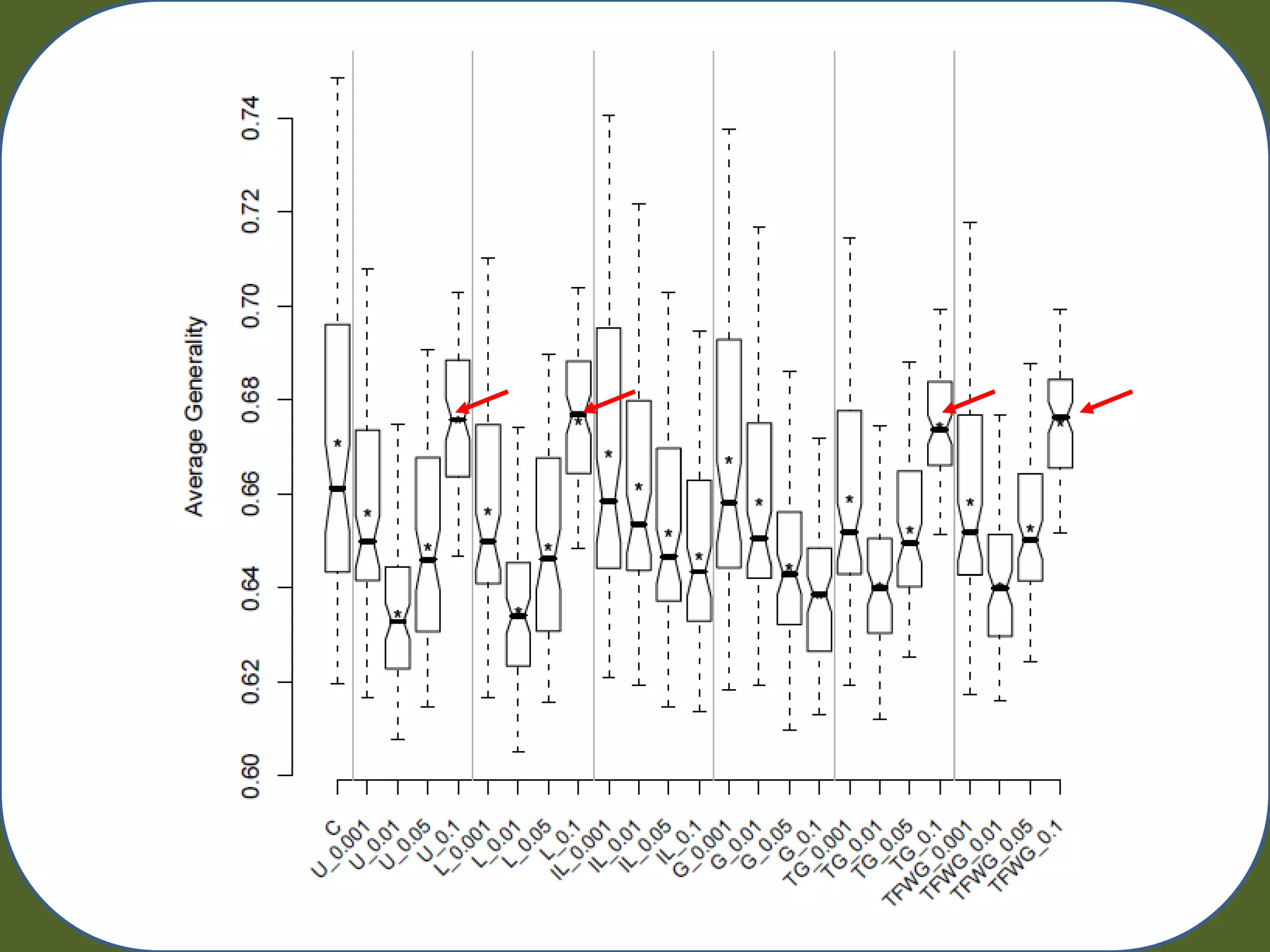

Random Artificial Incorporation of Noise (RAIN) is a technique that adds low levels of random classification noise to the training data presented to a Michigan-style learning classifier system (LCS). The aim is to discourage overfitting and promote effective generalization in noisy problem domains. The document describes experiments testing two implementations of RAIN, Targeted Generality (TG) and Targeted Fitness Weighted Generality (TFWG), on simulated genetic epidemiology datasets. The results show that Targeted RAIN reduced overfitting without reducing testing accuracy and may improve the ability of the LCS to identify predictive attributes, although the increases in power were not statistically significant. Future work is proposed to further

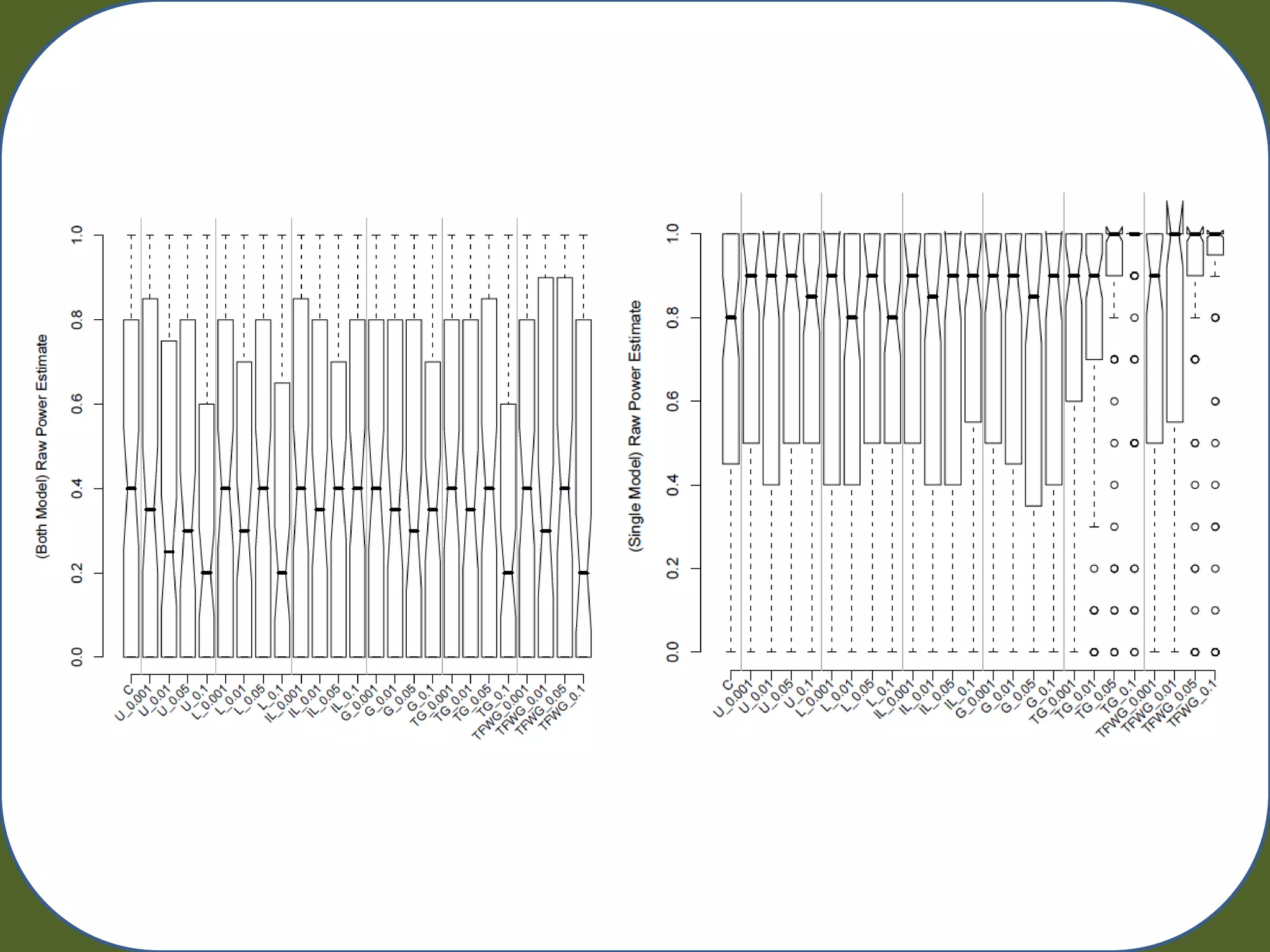

![Learning Classifier Systems

4

2 Population [P]

Covering

Classifiern = Condition : Action :: Parameter(s) Detectors

3

Action 5 1

10 Match Set [M] Selection 9

Genetic Classifierm Environment

Algorithm Prediction Array

6

Action Performed

Action Set [A] Effectors

Classifiera 7

8 Reward

[A]t-1 Learning Strategy

Classifiert-1 Credit Assignment

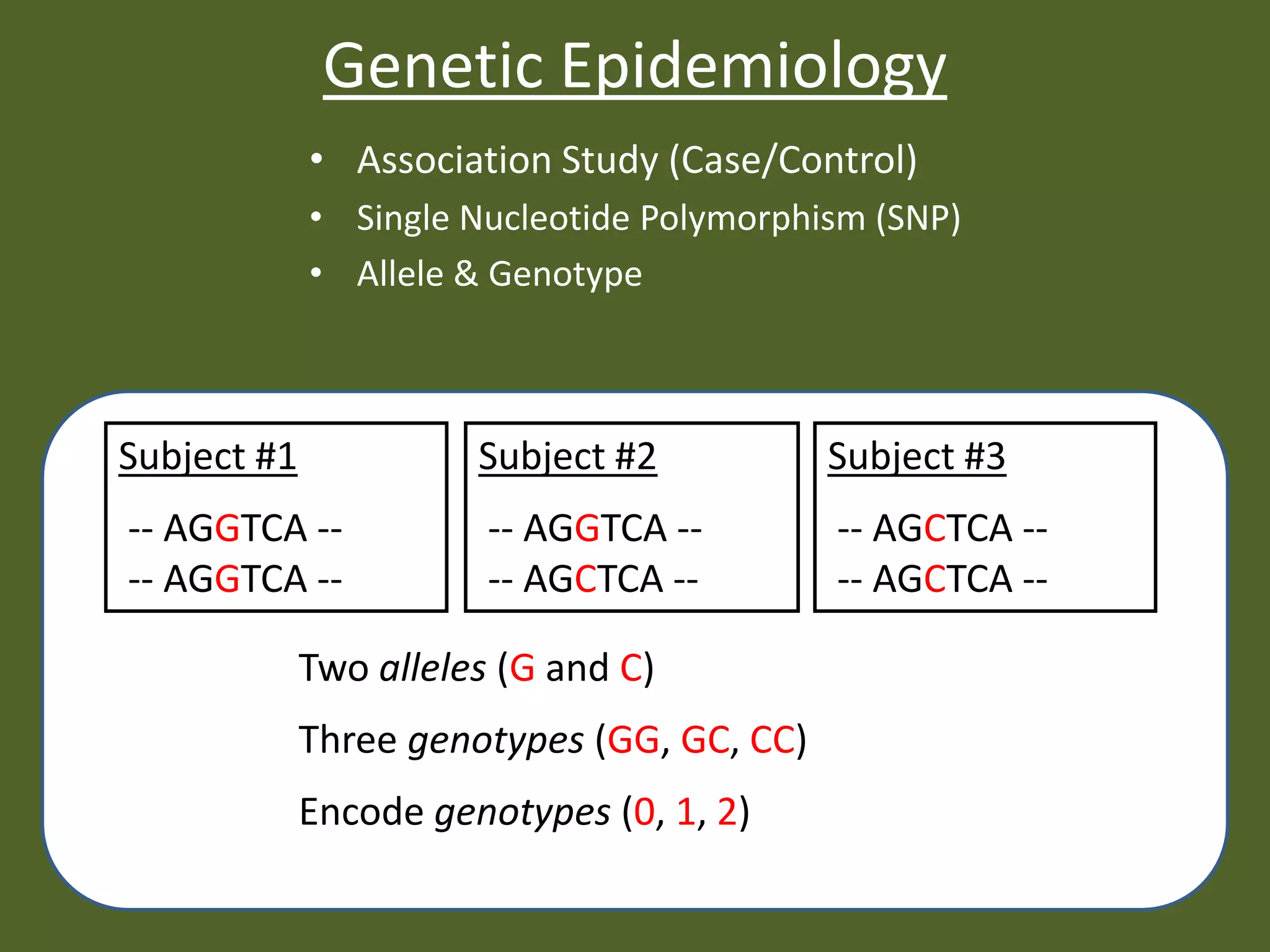

• Autonomous Robotics

Discovery Component • Complex Adaptive Systems

Performance Component • Function Approximation

Reinforcement Component • Classification

• Data Mining

Urbanowicz 2009 LCS: A Complete Introduction, Review, and Roadmap. Journal of Artificial Evolution and Applications](https://image.slidesharecdn.com/urbanowicz2011raingecco-110727142834-phpapp02/75/Random-Artificial-Incorporation-of-Noise-in-a-Learning-Classifier-System-Environment-6-2048.jpg)
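To make the performance component of the diagram more concrete, the following is a minimal sketch of forming a match set [M] from a population [P] and selecting an action from a prediction array. The `Classifier` fields, the `#` wildcard, and the fitness-weighted vote are illustrative assumptions; the slide does not specify how the prediction array is computed.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Classifier:
    condition: str        # one symbol per attribute; '#' is assumed to be a wildcard
    action: str           # the class or action this rule advocates
    fitness: float = 1.0  # hypothetical parameter used to weight votes
    numerosity: int = 1   # number of copies this rule represents


def matches(condition: str, state: str) -> bool:
    """A rule matches when every non-wildcard symbol equals the input symbol."""
    return len(condition) == len(state) and all(
        c == "#" or c == s for c, s in zip(condition, state)
    )


def match_set(population, state):
    """Collect all classifiers in [P] whose condition matches the input."""
    return [cl for cl in population if matches(cl.condition, state)]


def prediction_array(mset):
    """Sum a fitness-weighted vote per action (one possible voting scheme)."""
    votes = defaultdict(float)
    for cl in mset:
        votes[cl.action] += cl.fitness * cl.numerosity
    return dict(votes)


def select_action(mset):
    """Pick the action with the largest vote, or None if [M] is empty."""
    votes = prediction_array(mset)
    return max(votes, key=votes.get) if votes else None
```

An empty match set (where `select_action` returns `None`) corresponds to the situation in which the diagram's Covering step would create a new matching classifier.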

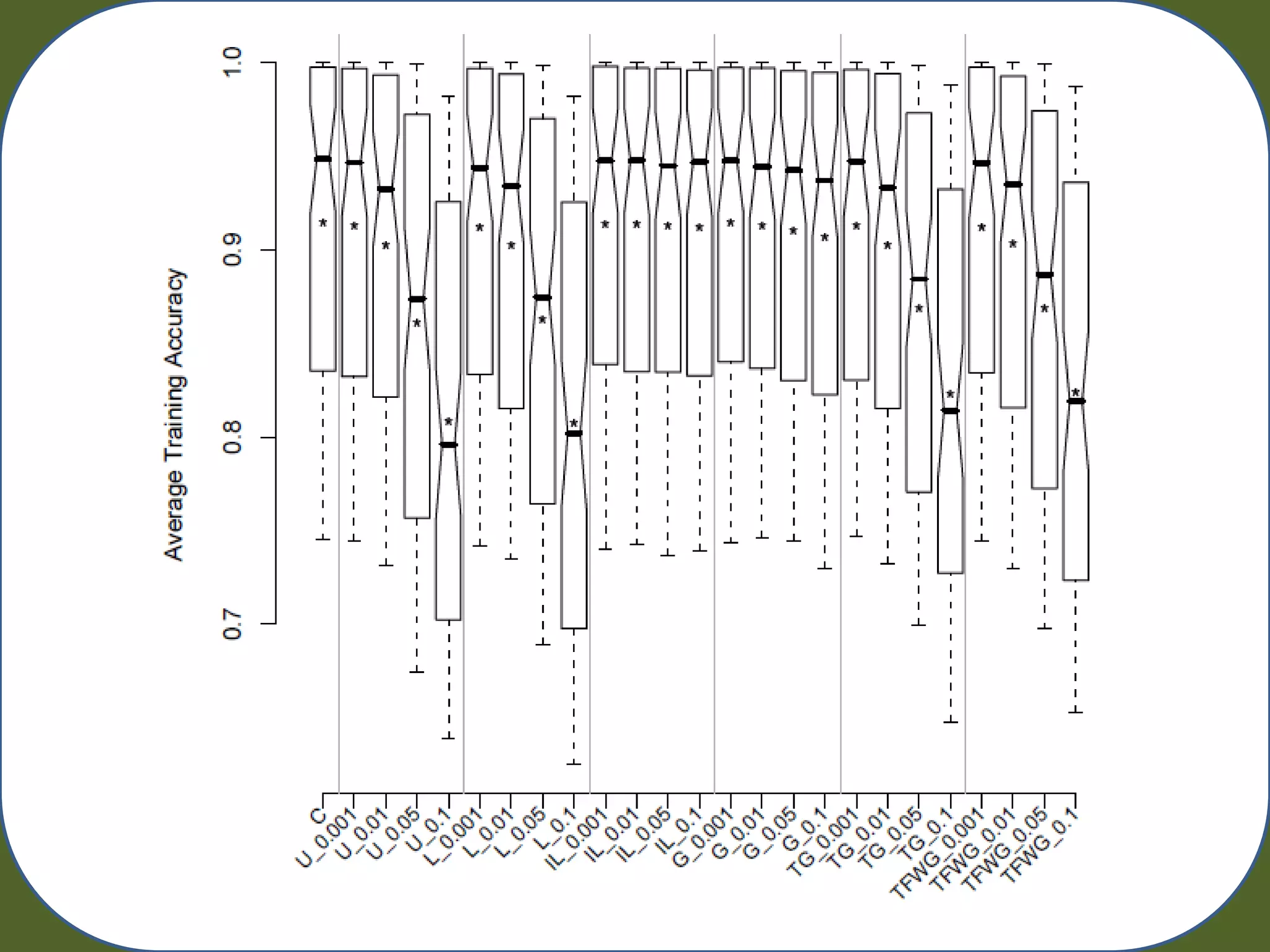

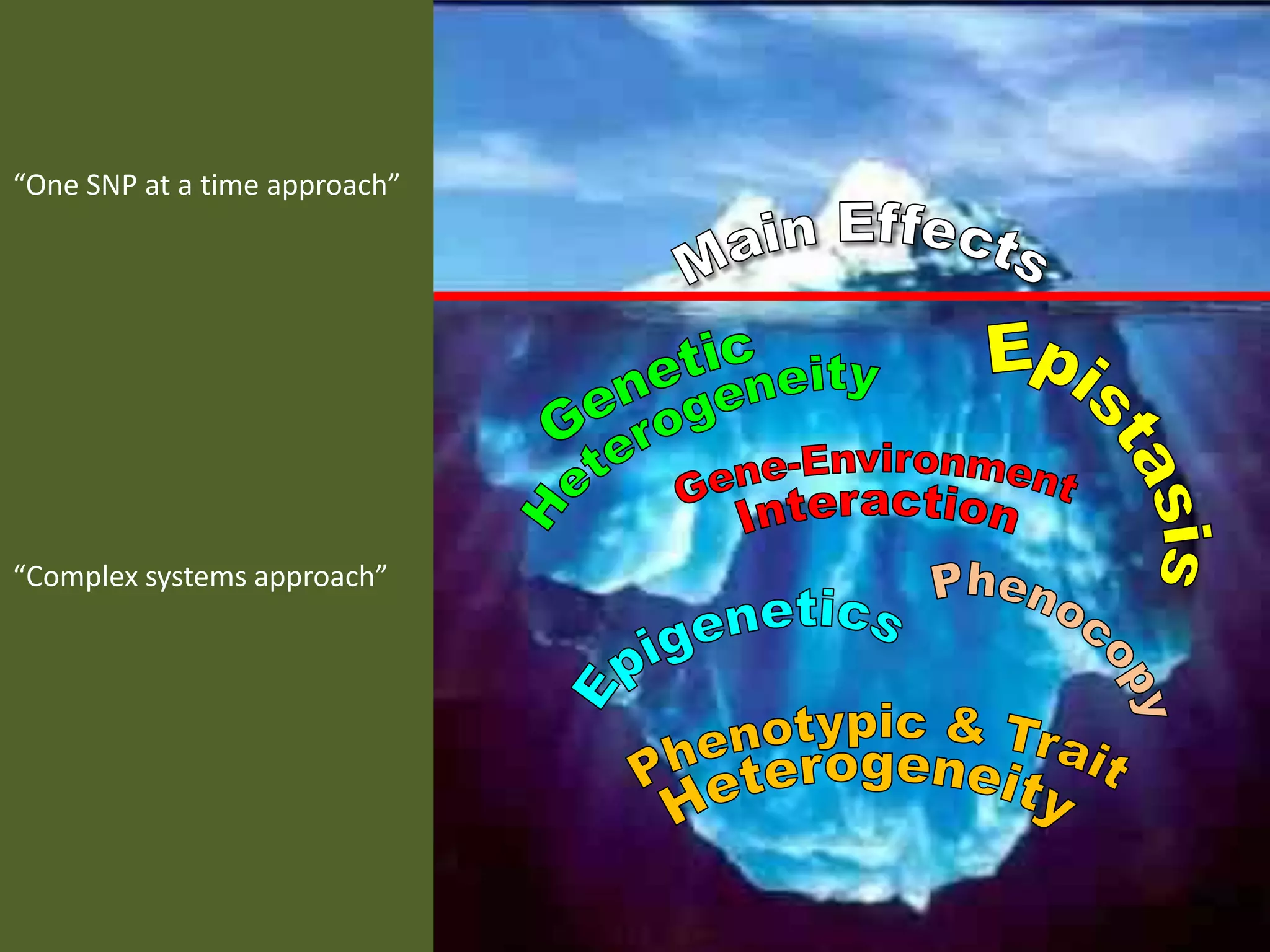

![RAIN: Random Artificial Incorporation of Noise](https://image.slidesharecdn.com/urbanowicz2011raingecco-110727142834-phpapp02/75/Random-Artificial-Incorporation-of-Noise-in-a-Learning-Classifier-System-Environment-10-2048.jpg)

RAIN: Random Artificial Incorporation of Noise

Diagram: Environment, Population [P], Match Set [M], Correct Set [C]. Example instances shown: 0210110220 -- 1 and 0210120220 -- 1
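The two example instances on this slide differ in a single attribute value while the class label stays the same. The sketch below shows one way such a perturbation could be applied to an instance before it is presented to the LCS. It is an assumption-laden illustration, not the authors' procedure: the function name `add_noise`, the per-attribute probability `p_noise`, and the choice to resample a different genotype value are all hypothetical.

```python
import random

GENOTYPES = "012"  # attribute values that appear in the slide's example instances


def add_noise(attributes: str, p_noise: float, rng: random.Random) -> str:
    """Return a copy of the instance with each attribute independently
    replaced by a different genotype value with probability p_noise.

    The stored training instance is not modified; only the copy handed
    to the learner would carry the noise (an assumption of this sketch).
    """
    noisy = []
    for value in attributes:
        if rng.random() < p_noise:
            # Choose uniformly among the values that differ from the original.
            noisy.append(rng.choice([g for g in GENOTYPES if g != value]))
        else:
            noisy.append(value)
    return "".join(noisy)


rng = random.Random(42)
noisy_instance = add_noise("0210110220", p_noise=0.05, rng=rng)
```

Passing a seeded `random.Random` keeps the perturbation reproducible across runs, which helps when comparing noise levels.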

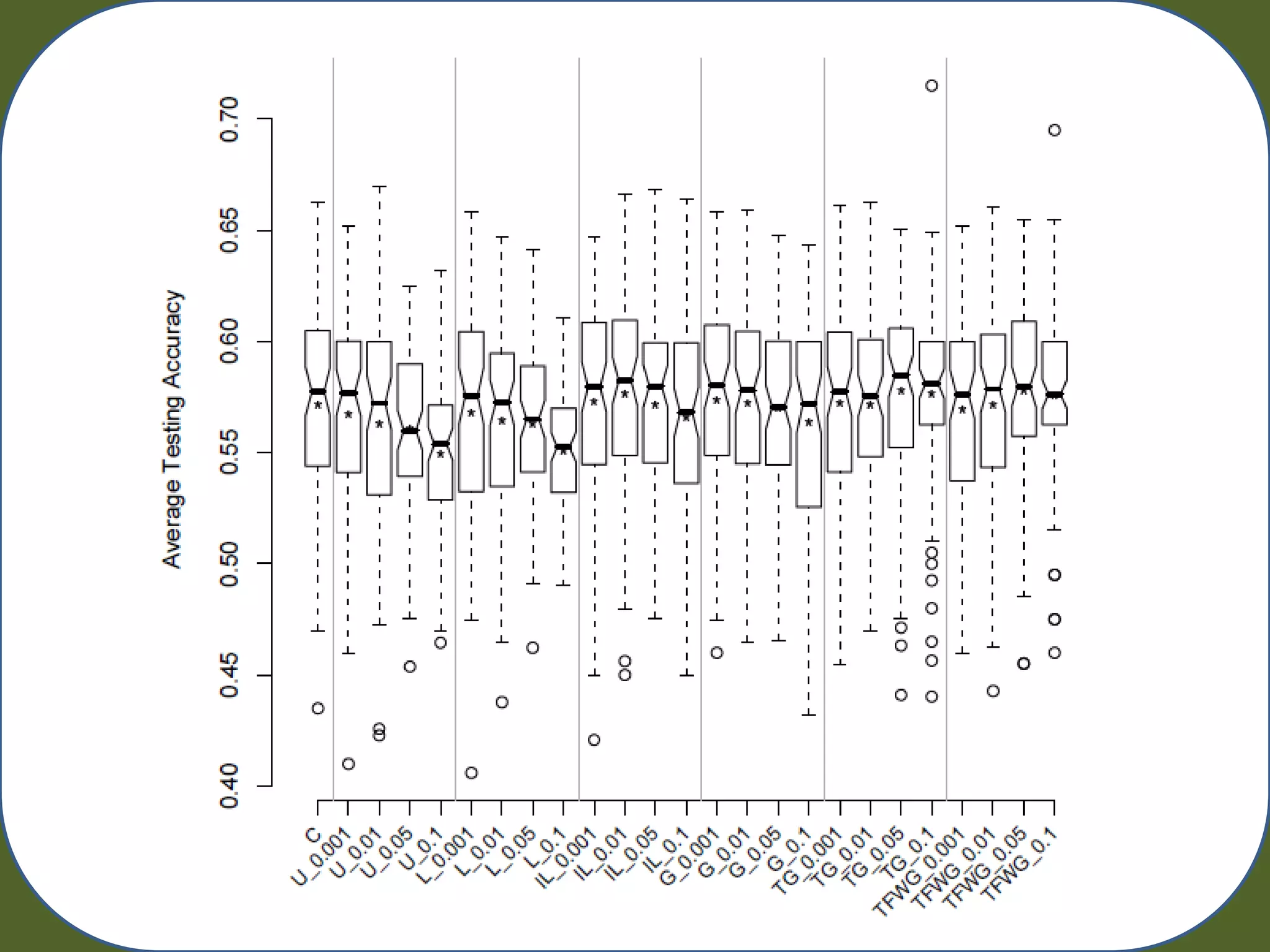

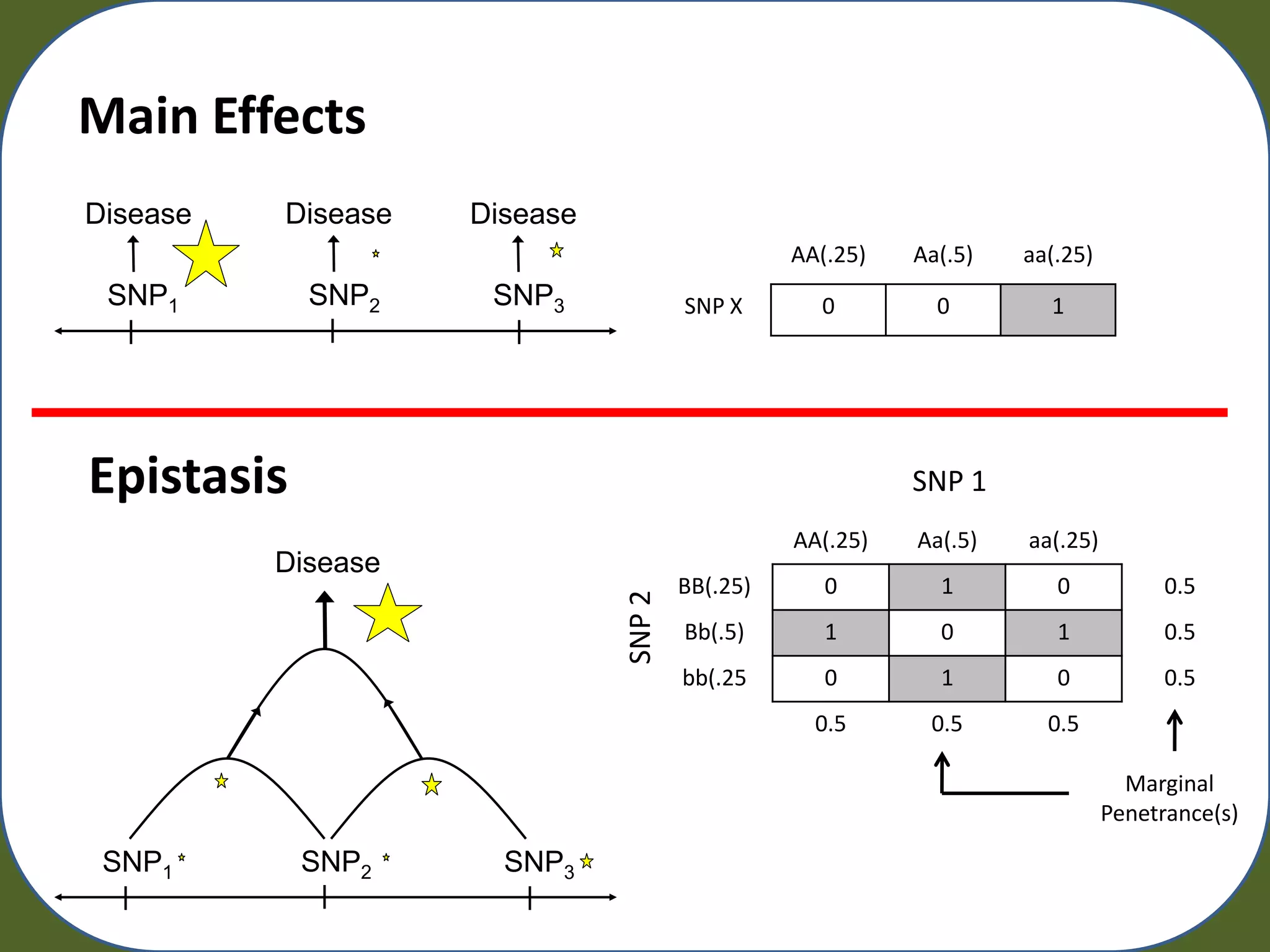

![Experimental Evaluation

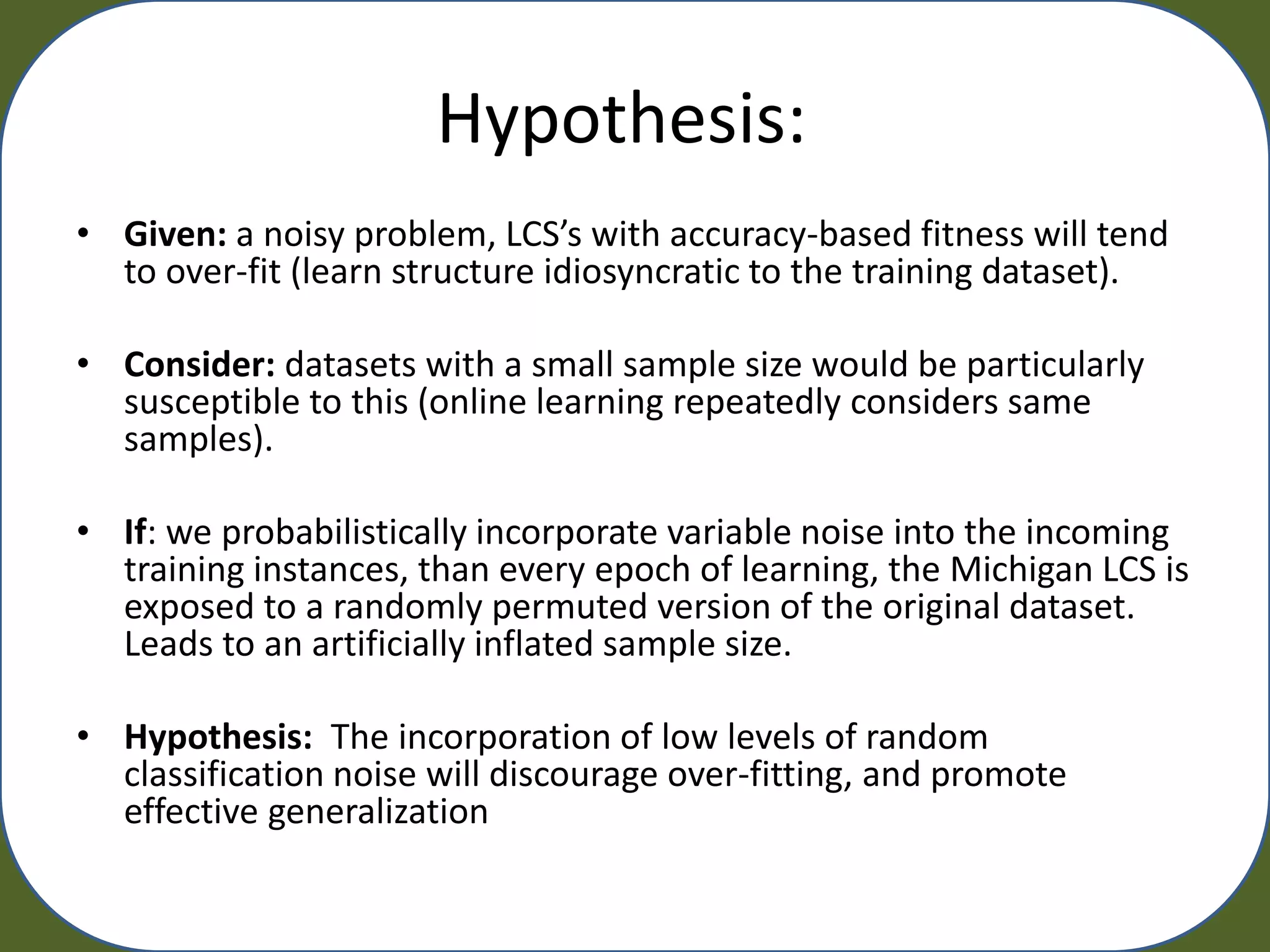

• UCS –

– Iterations = [50000,100000, 200000, 500000]

– Micro Pop. Size = 1600

– Other parameters are default

– Track: Training Acc. Testing Acc. Generality, Macro Pop. Size, Run Time

Power to find both or a single underlying model

– Pm = 0.001, 0.01, 0.05, 0.1

• Each Dataset

– Main effect free

– 2X two-locus epistatic interation

– 20 Attributes

– Balanced

– Minor allele frequencies = 0.2 G1 G2 G3 G4

– Heritability = 0.2

– Mix Ratio = 50:50

– Sample Sizes [200, 400, 800, 1600]

– 20 Replicates

• 80 Simulated Datasets + (10 Fold CV) 800 runs of UCS](https://image.slidesharecdn.com/urbanowicz2011raingecco-110727142834-phpapp02/75/Random-Artificial-Incorporation-of-Noise-in-a-Learning-Classifier-System-Environment-14-2048.jpg)
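The dataset and run counts on this slide follow from the listed sample sizes, replicates, and cross-validation folds. A short sketch that enumerates them is given below; the constant names are illustrative, and the iteration counts and Pm values are left out because the slide does not say how they combine with the 800 runs.

```python
from itertools import product

SAMPLE_SIZES = [200, 400, 800, 1600]  # from the slide
REPLICATES = 20                       # from the slide
CV_FOLDS = 10                         # 10-fold cross-validation

# One simulated dataset per (sample size, replicate) pair.
datasets = list(product(SAMPLE_SIZES, range(REPLICATES)))

# One UCS run per dataset and cross-validation fold.
runs = [(n, rep, fold) for (n, rep) in datasets for fold in range(CV_FOLDS)]

print(len(datasets), len(runs))  # 80 800
```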

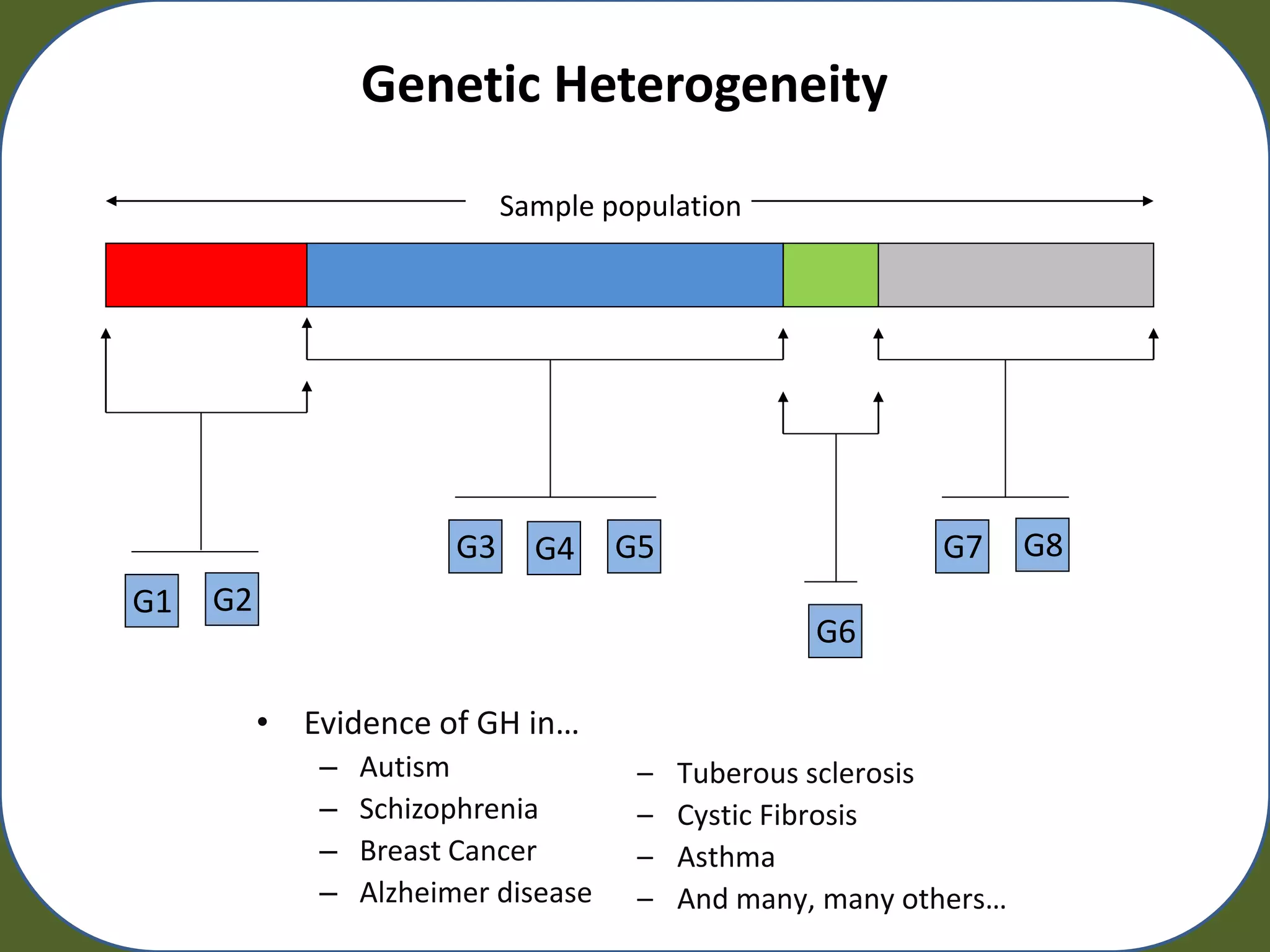

![Quaternary Rule Representation](https://image.slidesharecdn.com/urbanowicz2011raingecco-110727142834-phpapp02/75/Random-Artificial-Incorporation-of-Noise-in-a-Learning-Classifier-System-Environment-20-2048.jpg)

Quaternary Rule Representation: [#, 0, 1, 2]
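A small sketch of how a rule condition over this four-symbol alphabet might be handled is given below. It assumes, following common LCS convention, that `#` is a "don't care" wildcard and that 0, 1, and 2 are attribute (genotype) codes. The helper names and the `p_wild` parameter are hypothetical.

```python
import random

ALPHABET = ("#", "0", "1", "2")  # the quaternary alphabet from the slide


def is_valid_condition(condition: str) -> bool:
    """Check that every symbol in the condition belongs to the alphabet."""
    return all(symbol in ALPHABET for symbol in condition)


def generality(condition: str) -> float:
    """Fraction of positions that are wildcards (assumed meaning of '#')."""
    if not condition:
        raise ValueError("condition must not be empty")
    return condition.count("#") / len(condition)


def cover(instance: str, p_wild: float, rng: random.Random) -> str:
    """Build a condition that matches `instance` by copying each attribute
    value, or replacing it with '#' with probability p_wild."""
    return "".join("#" if rng.random() < p_wild else value for value in instance)


rng = random.Random(7)
condition = cover("0210110220", p_wild=0.5, rng=rng)
```

A condition produced this way always agrees with the source instance at every non-wildcard position, so a higher `p_wild` yields more general rules.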