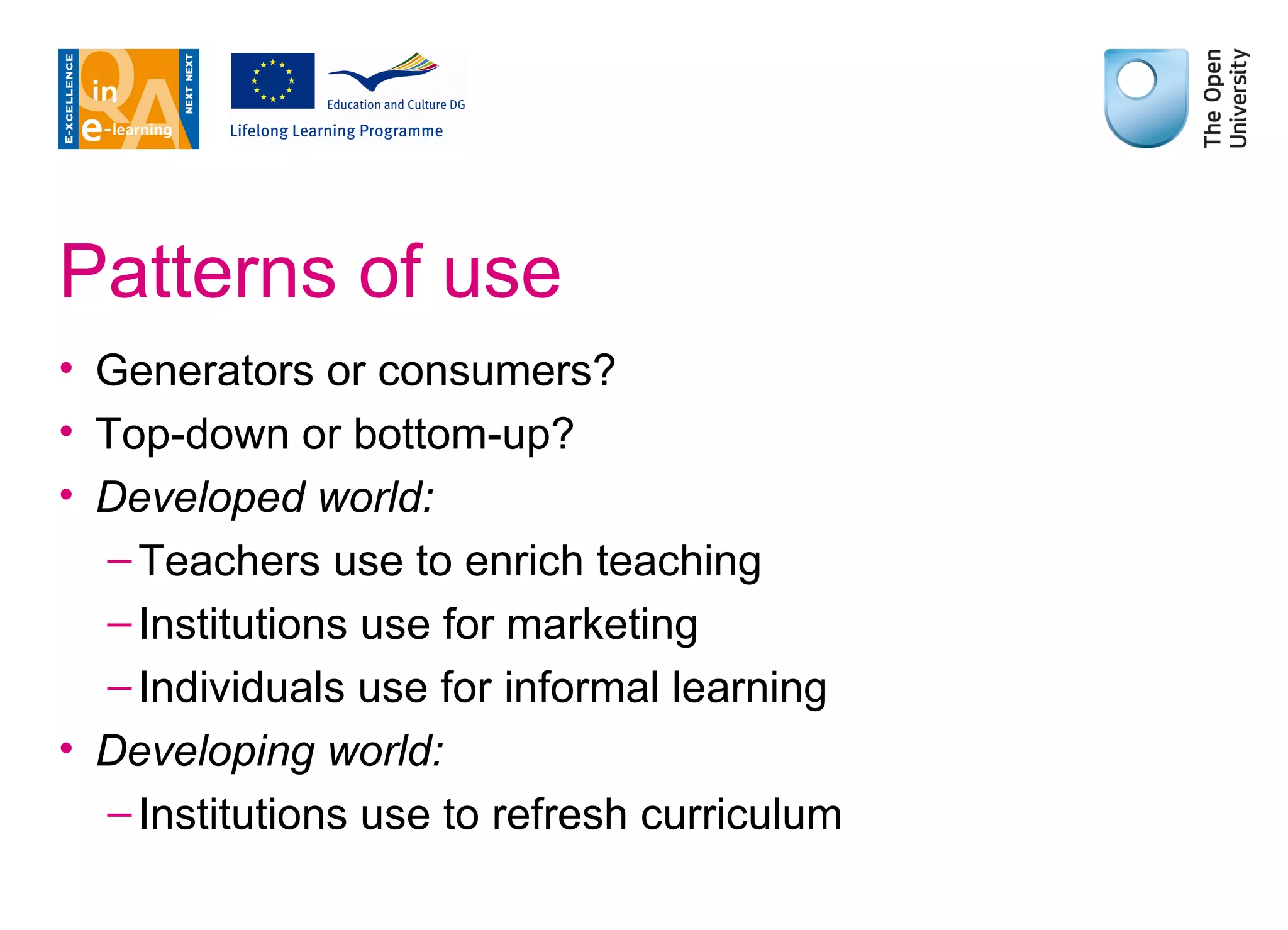

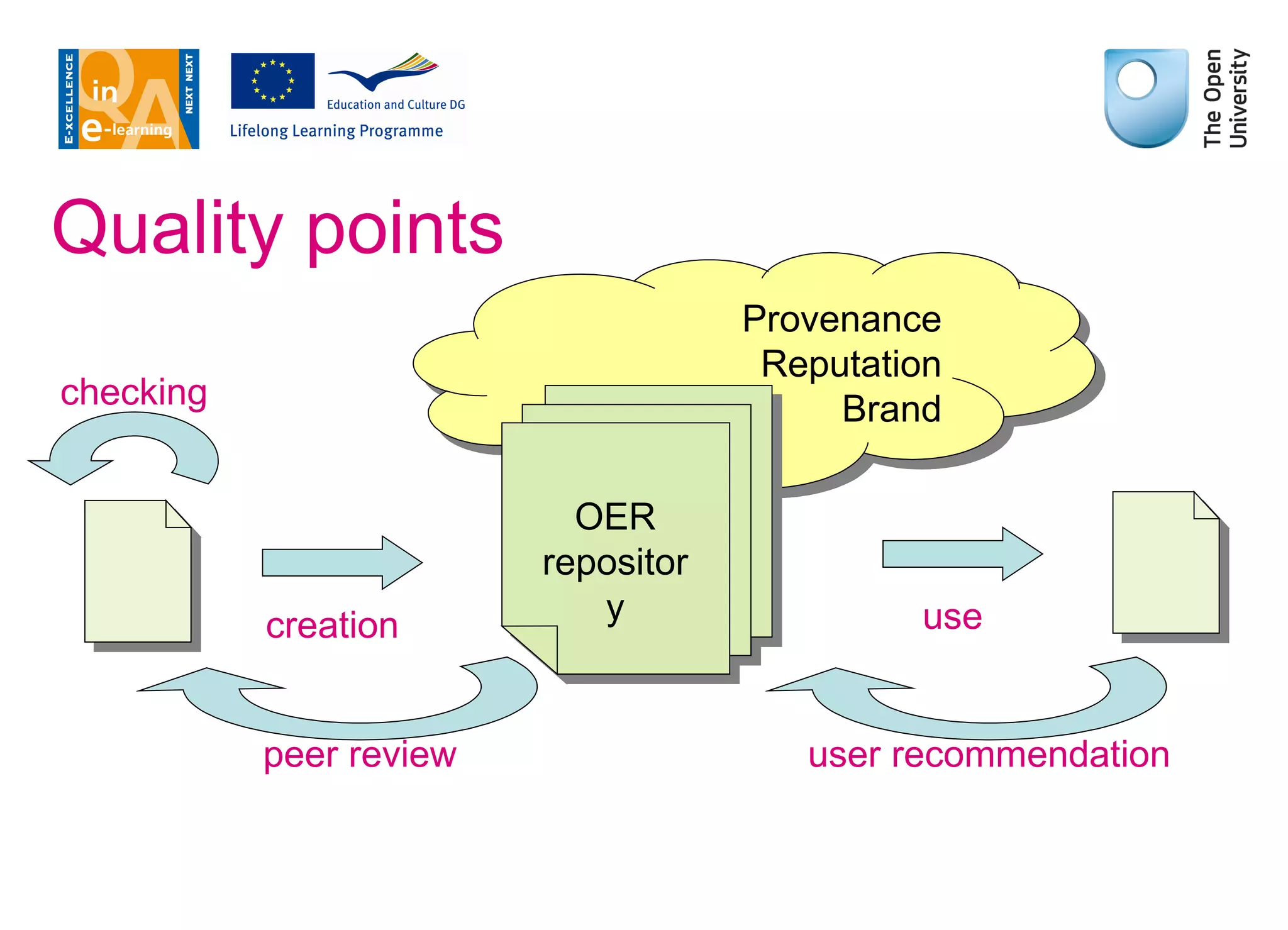

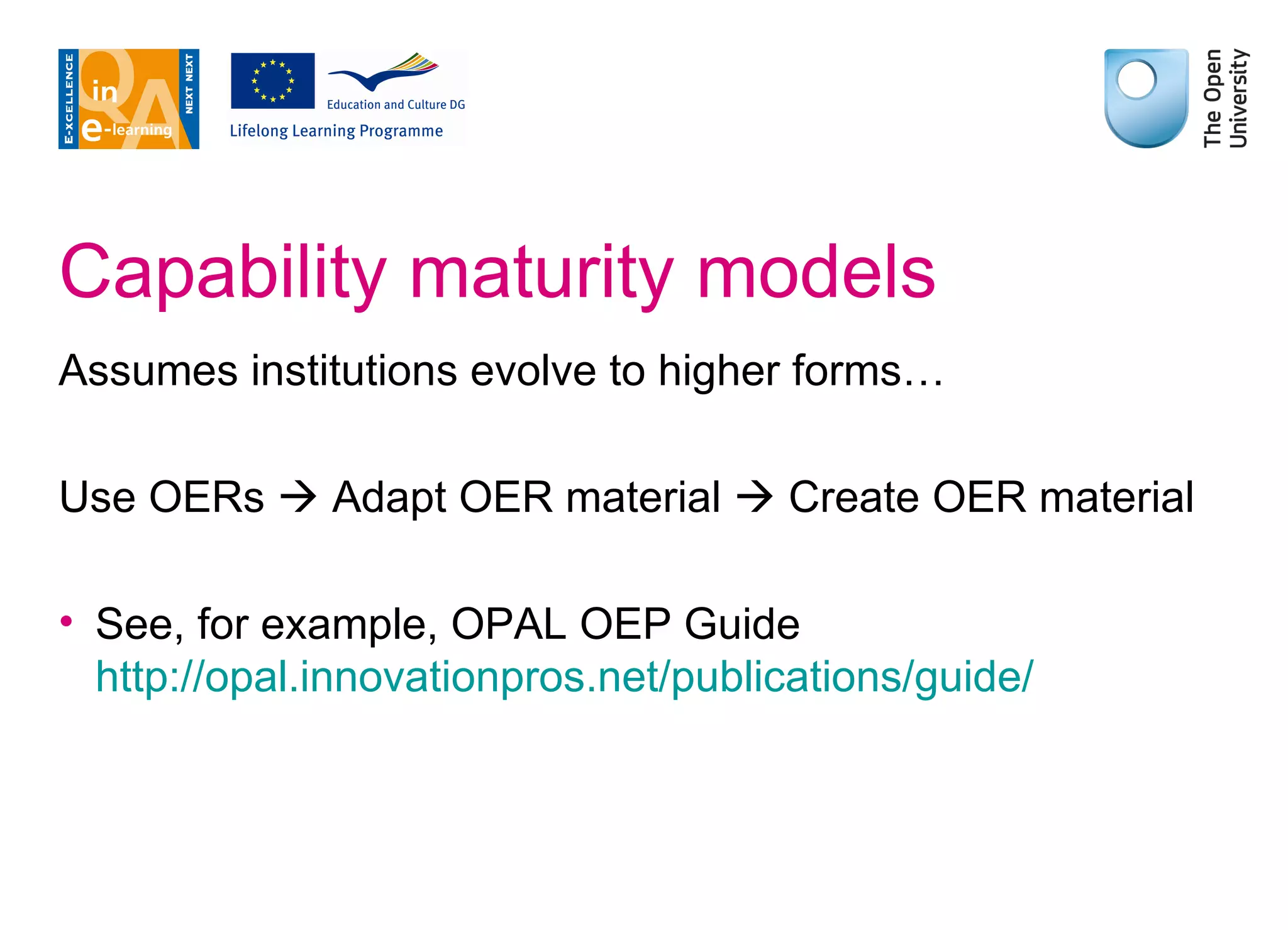

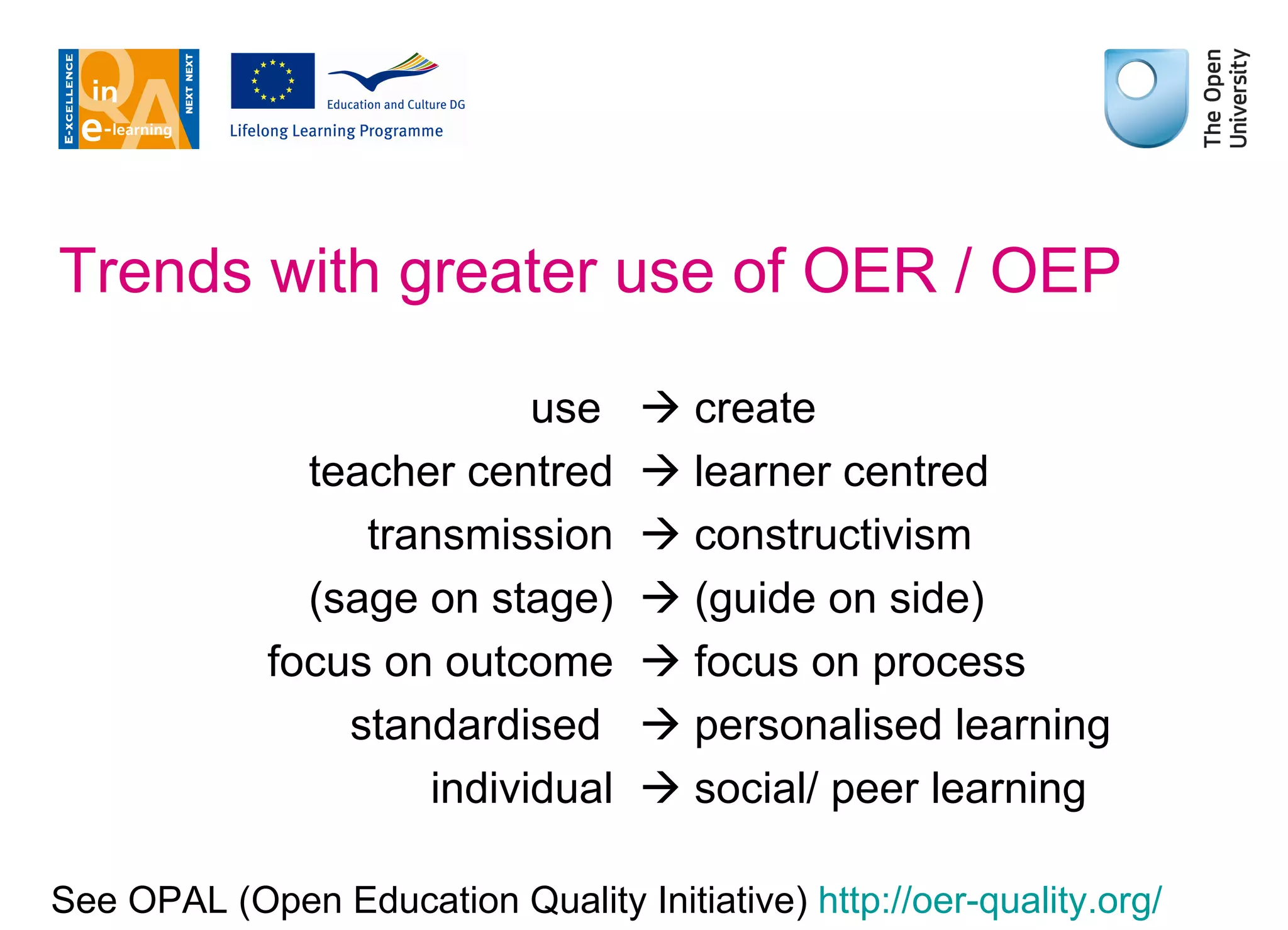

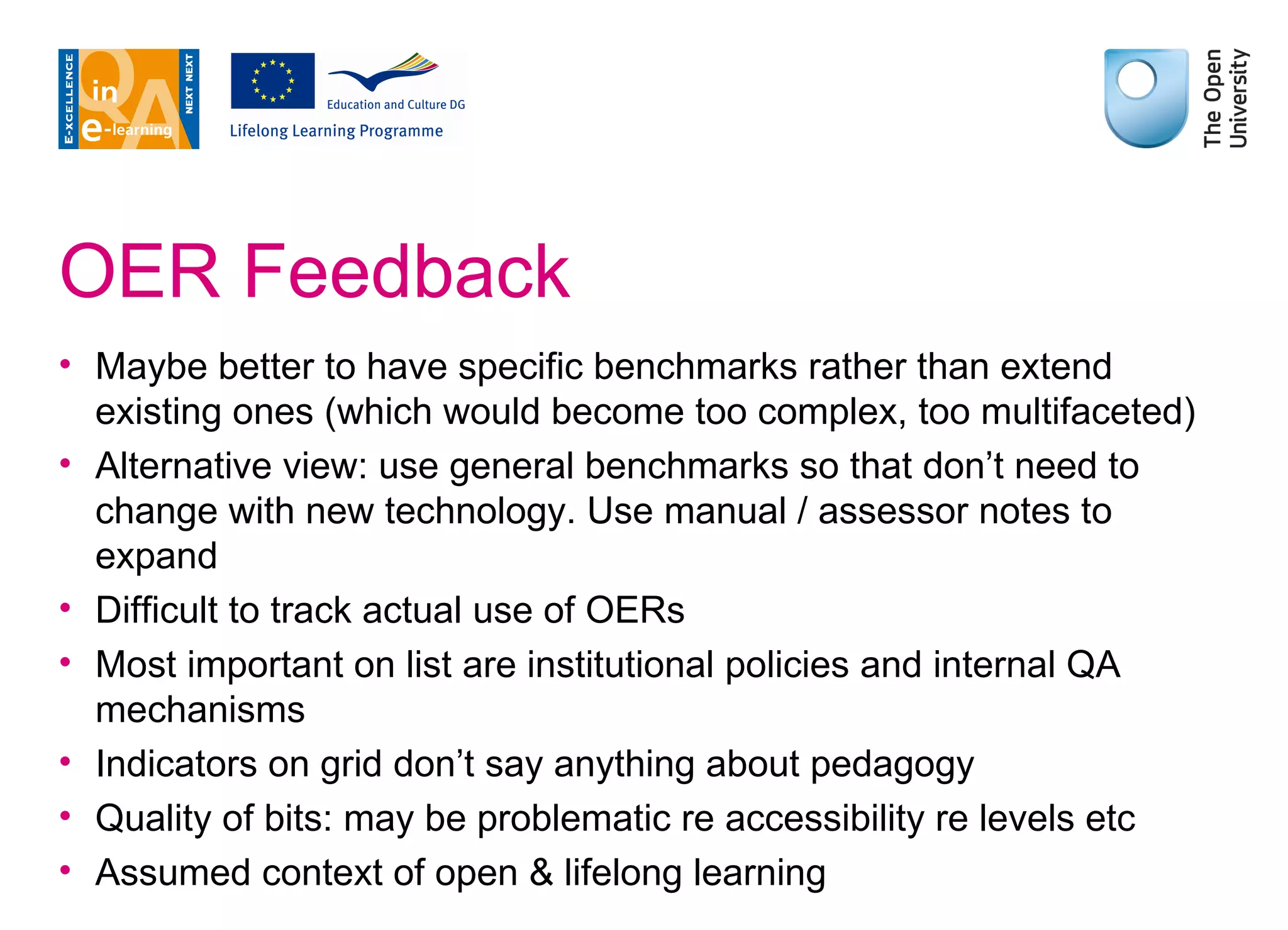

The document outlines the importance of quality assurance (QA) in Open Educational Resources (OER), which it defines as digital materials made freely available for education. It discusses the motivations for adopting OER, such as wider access and greater collaboration, and addresses challenges including licensing and quality evaluation. It argues that assessing OER quality requires specific benchmarks, and it presents case studies that illustrate both effective uses of OER and their potential risks in educational settings.

![Jon Rosewell & Giselle Ferreira The Open University [email_address] [email_address] www.open.ac.uk](https://image.slidesharecdn.com/oerintro-110623052343-phpapp02/75/QA-in-e-Learning-and-Open-Educational-Resources-OER-2-2048.jpg)

![Jon Rosewell & Giselle Ferreira The Open University [email_address] [email_address] www.open.ac.uk](https://image.slidesharecdn.com/oerintro-110623052343-phpapp02/75/QA-in-e-Learning-and-Open-Educational-Resources-OER-30-2048.jpg)