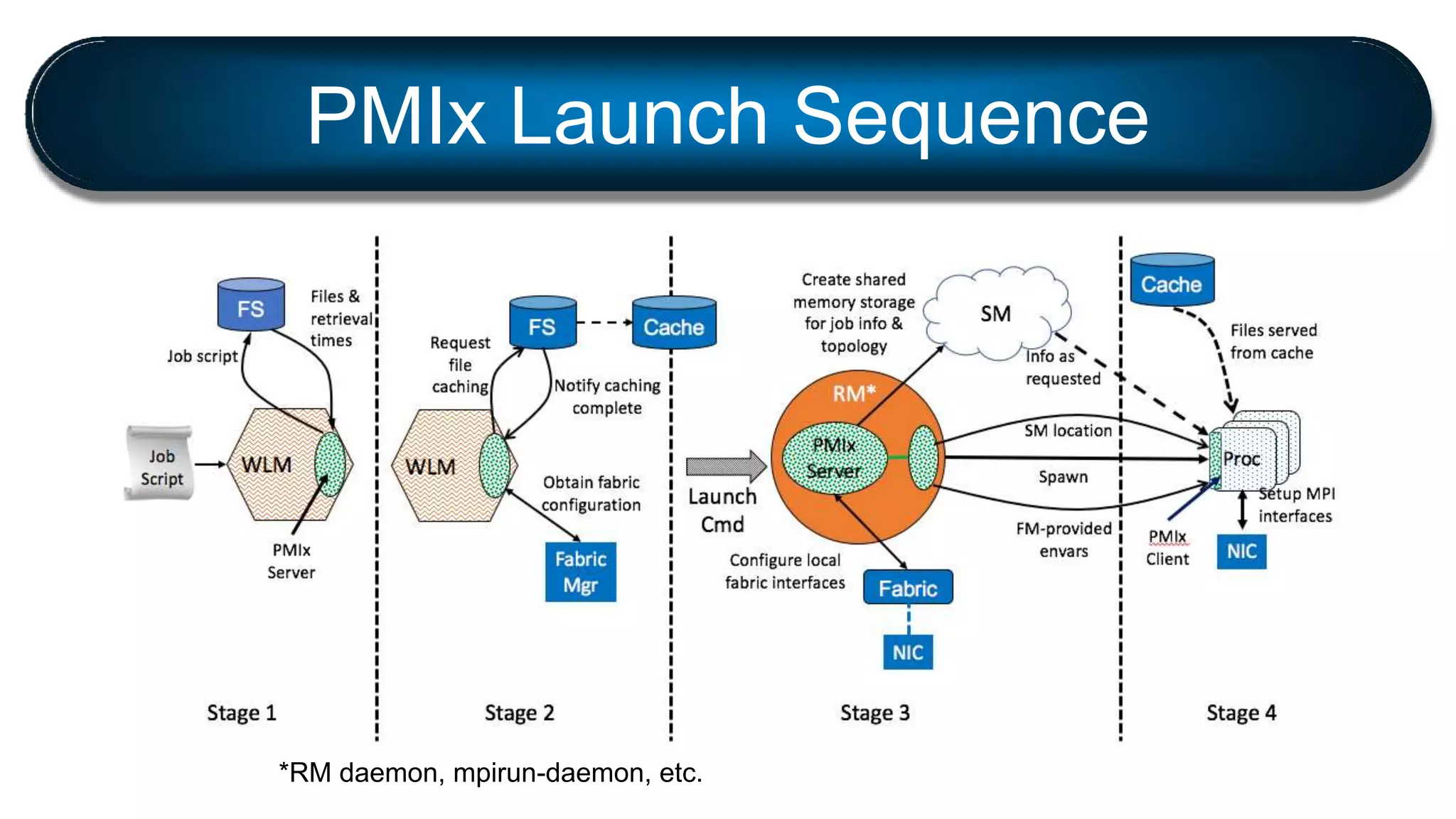

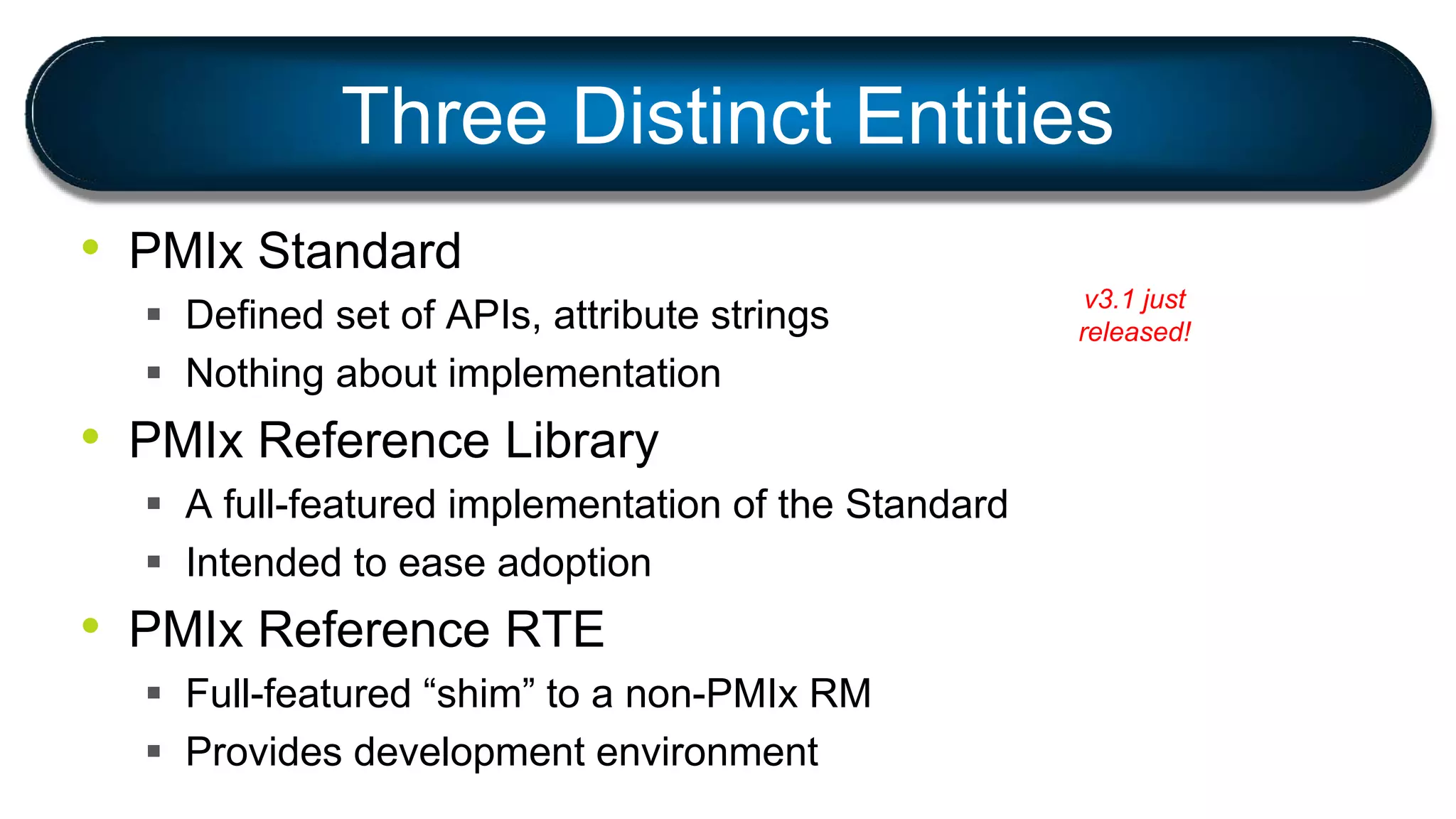

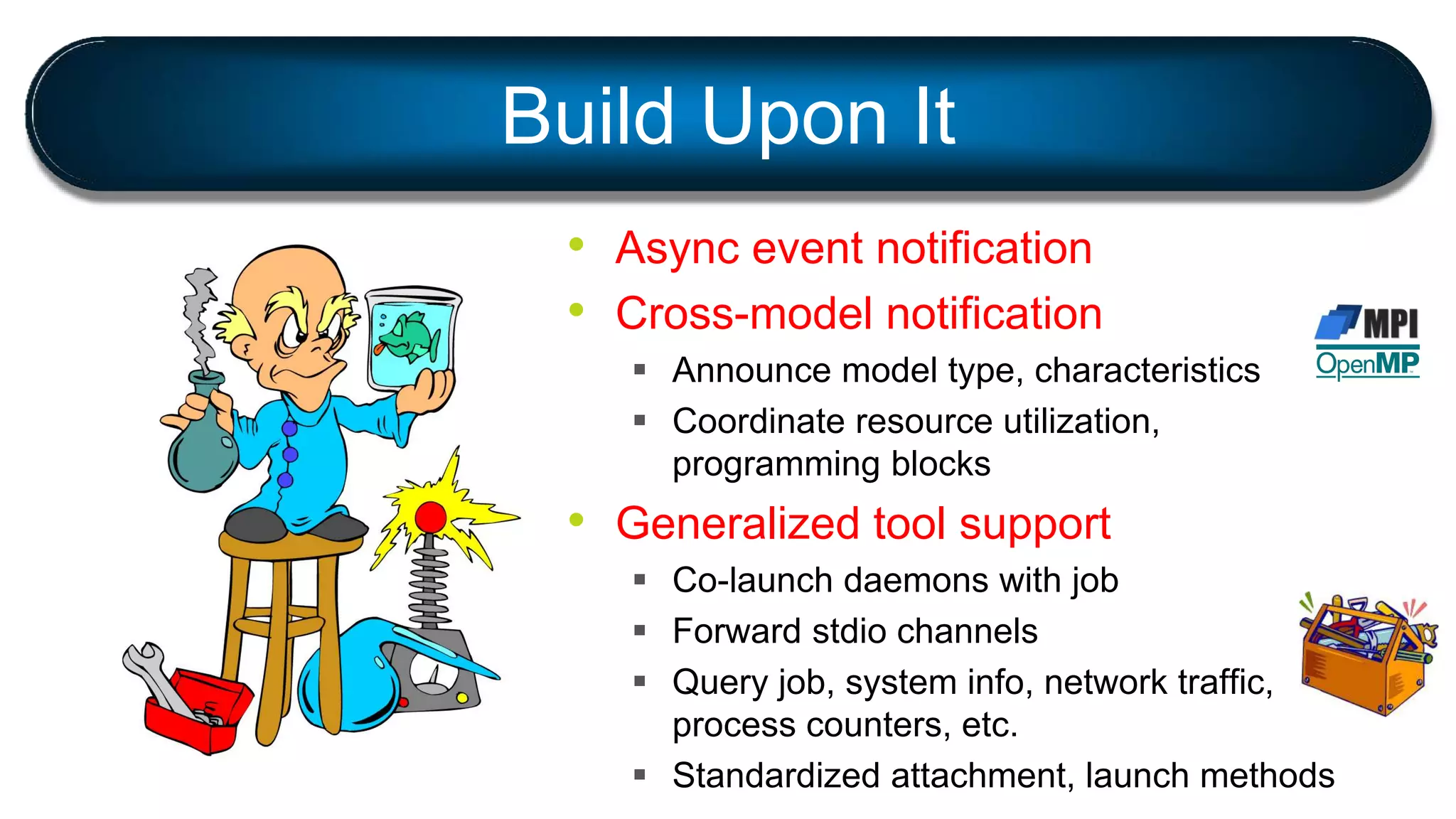

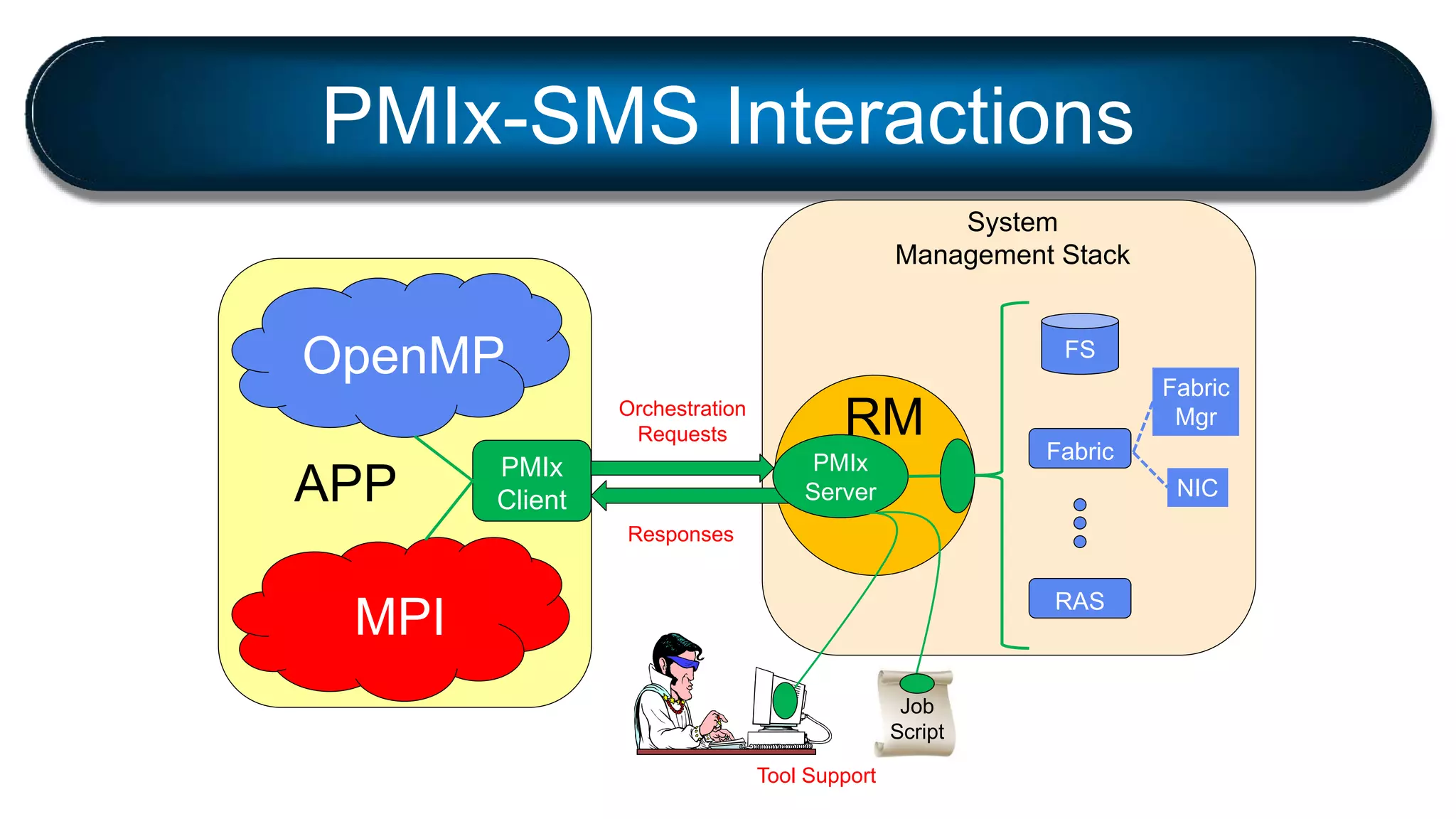

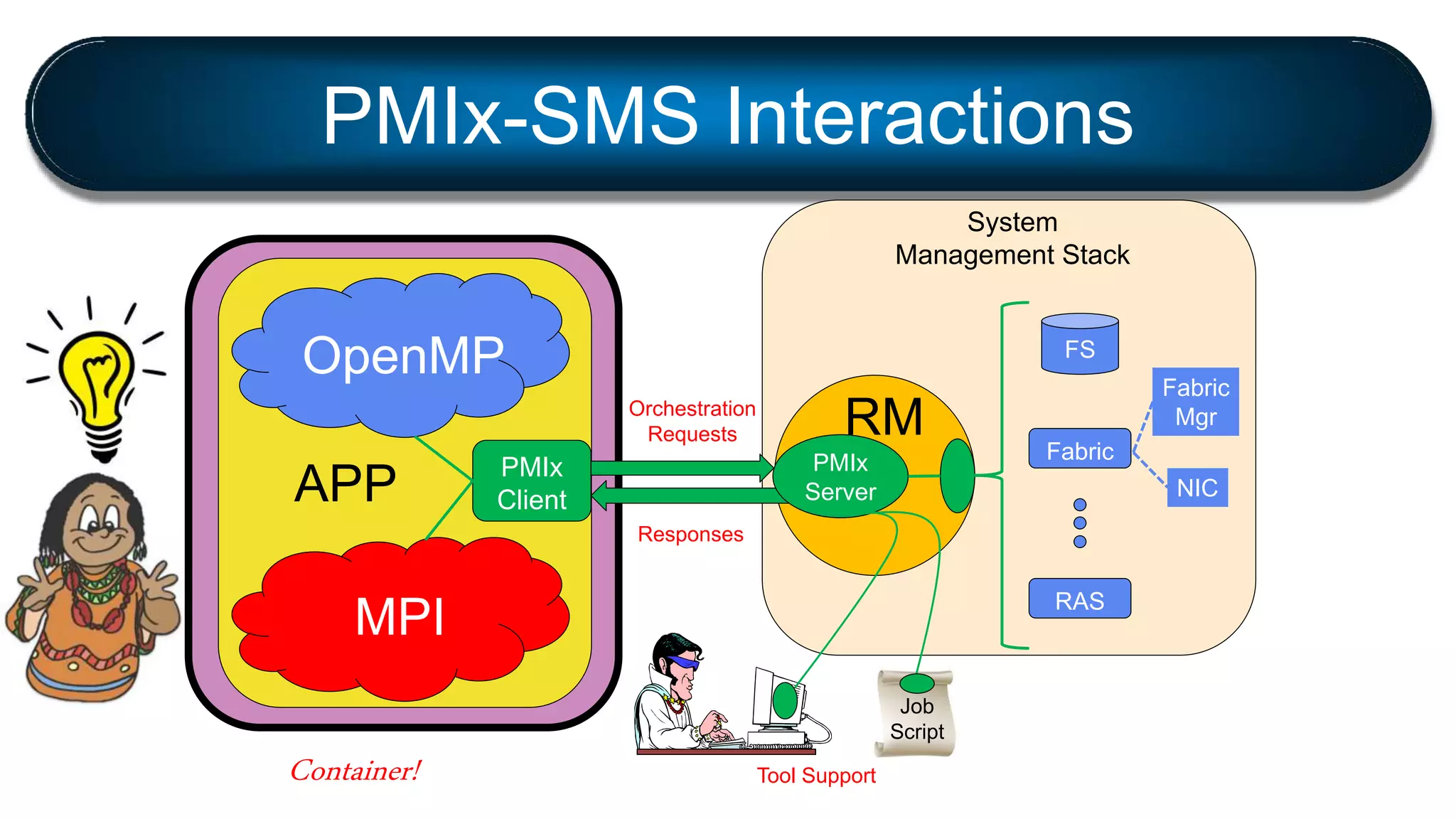

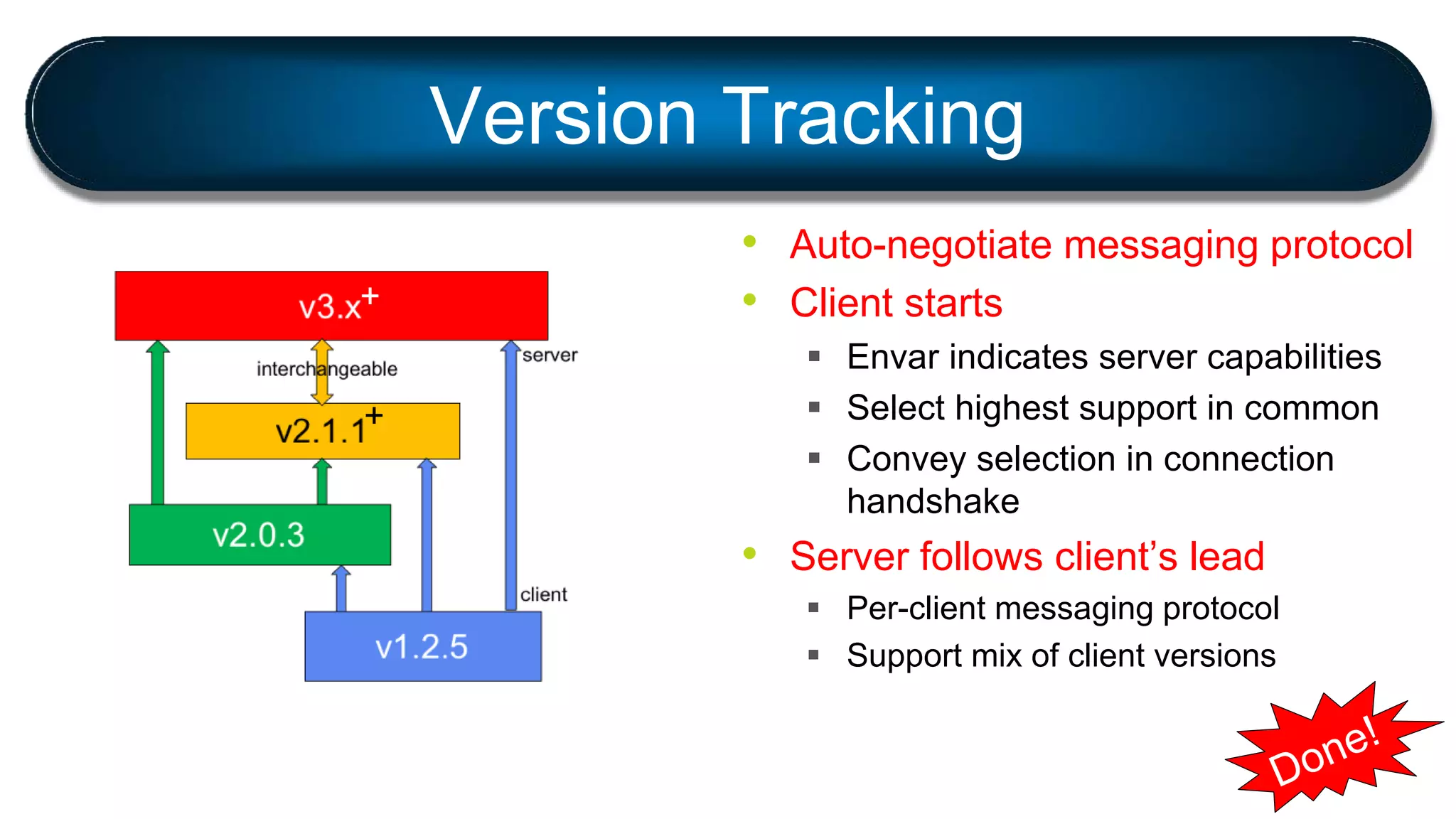

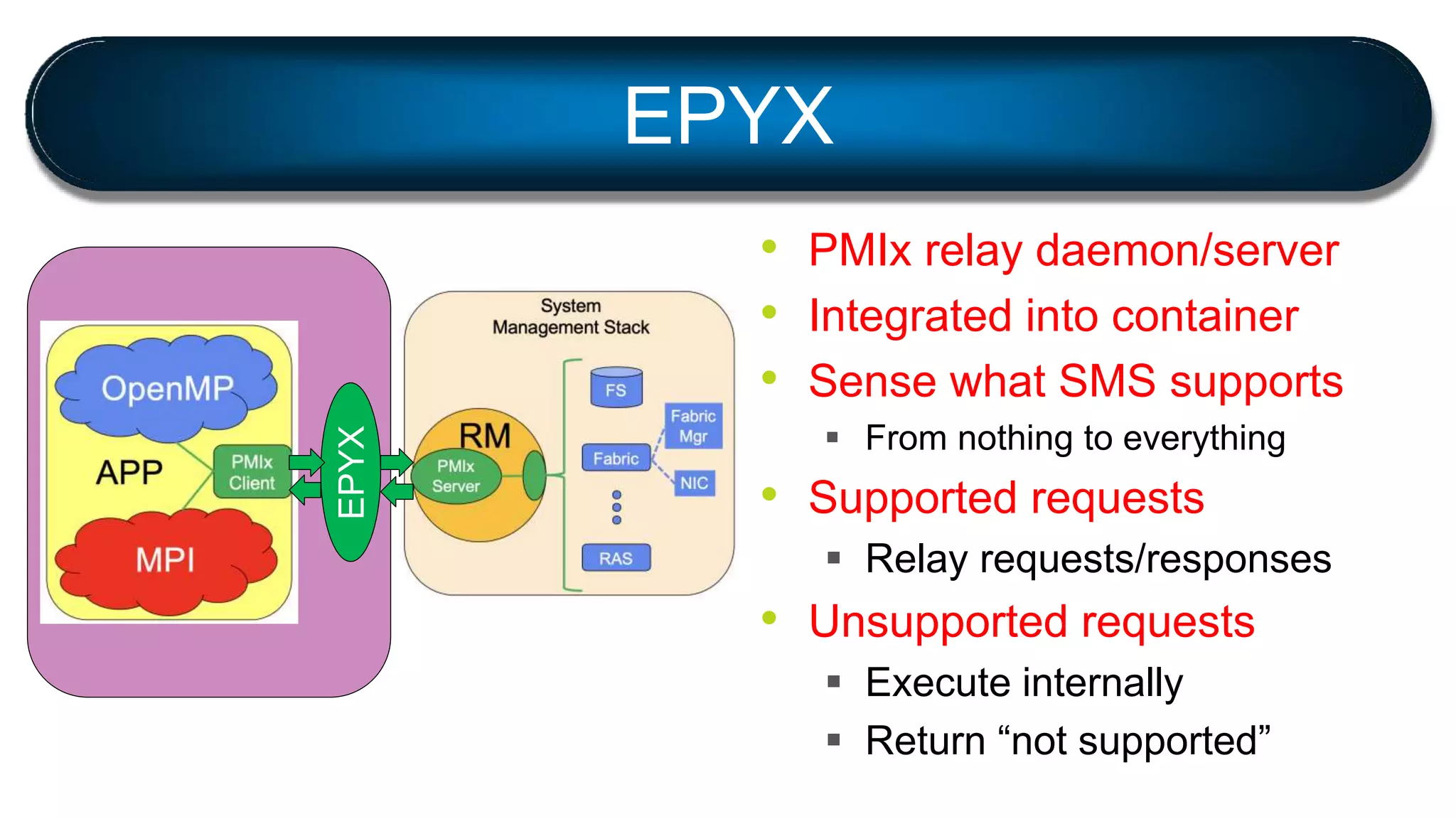

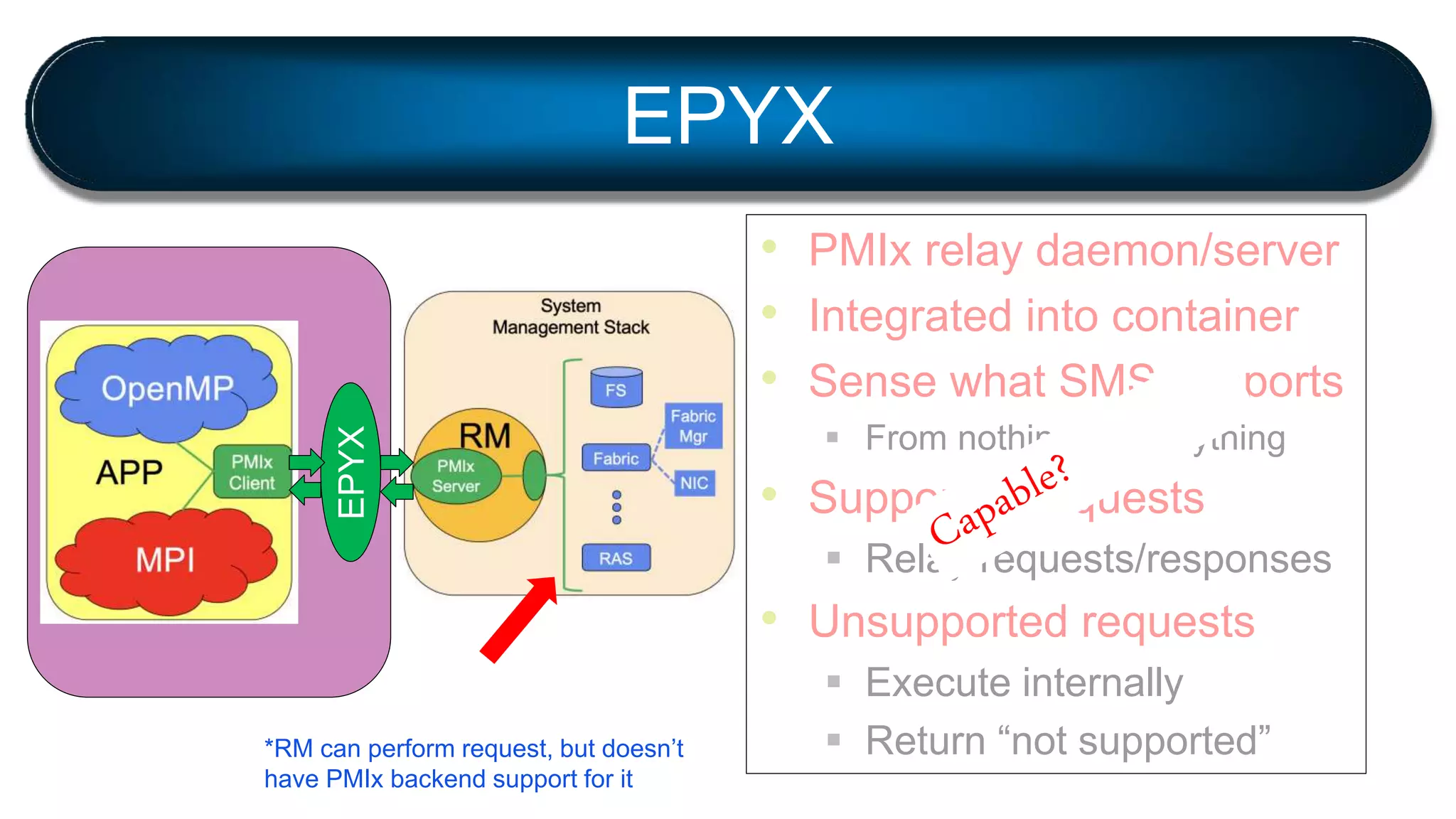

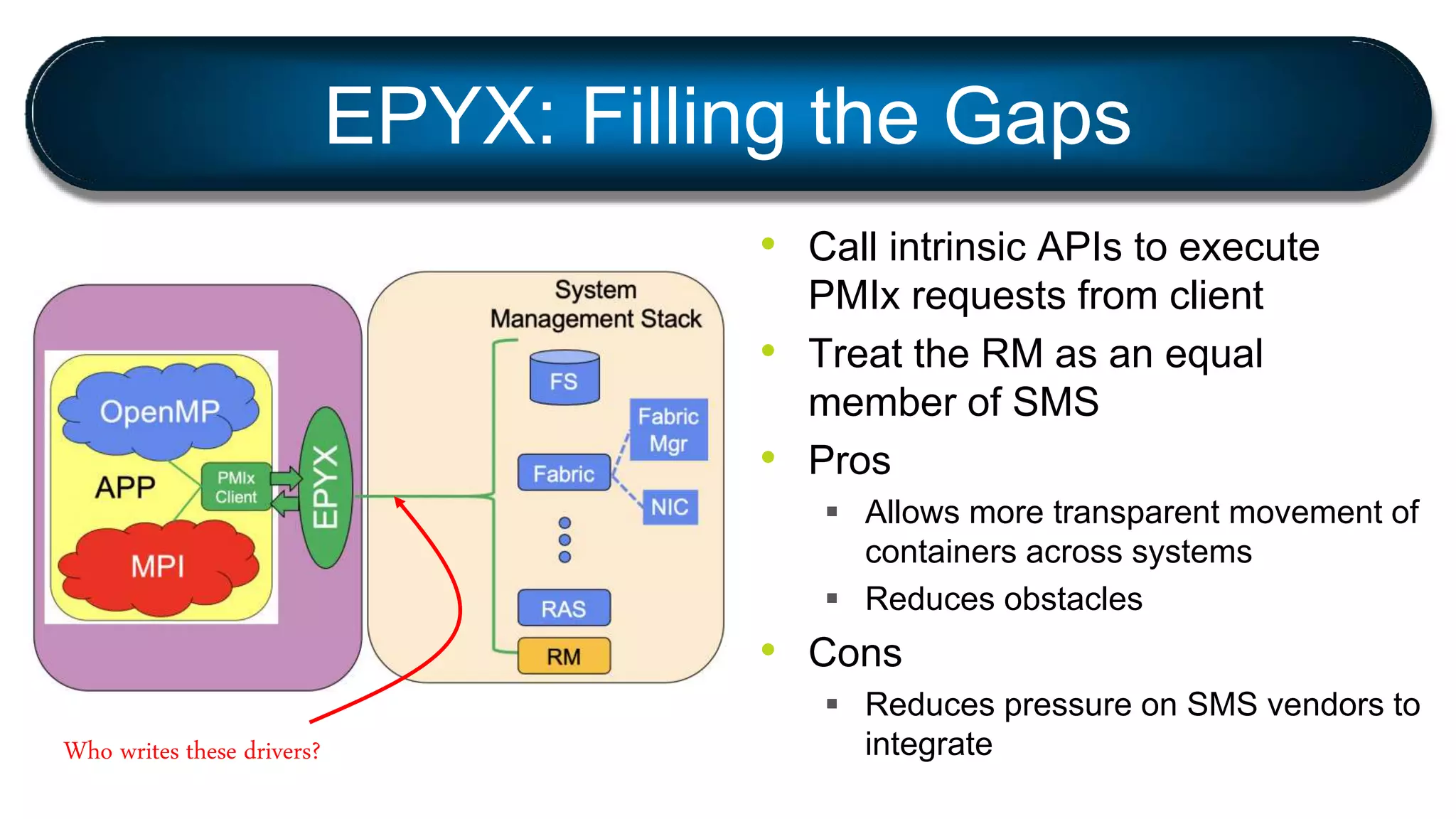

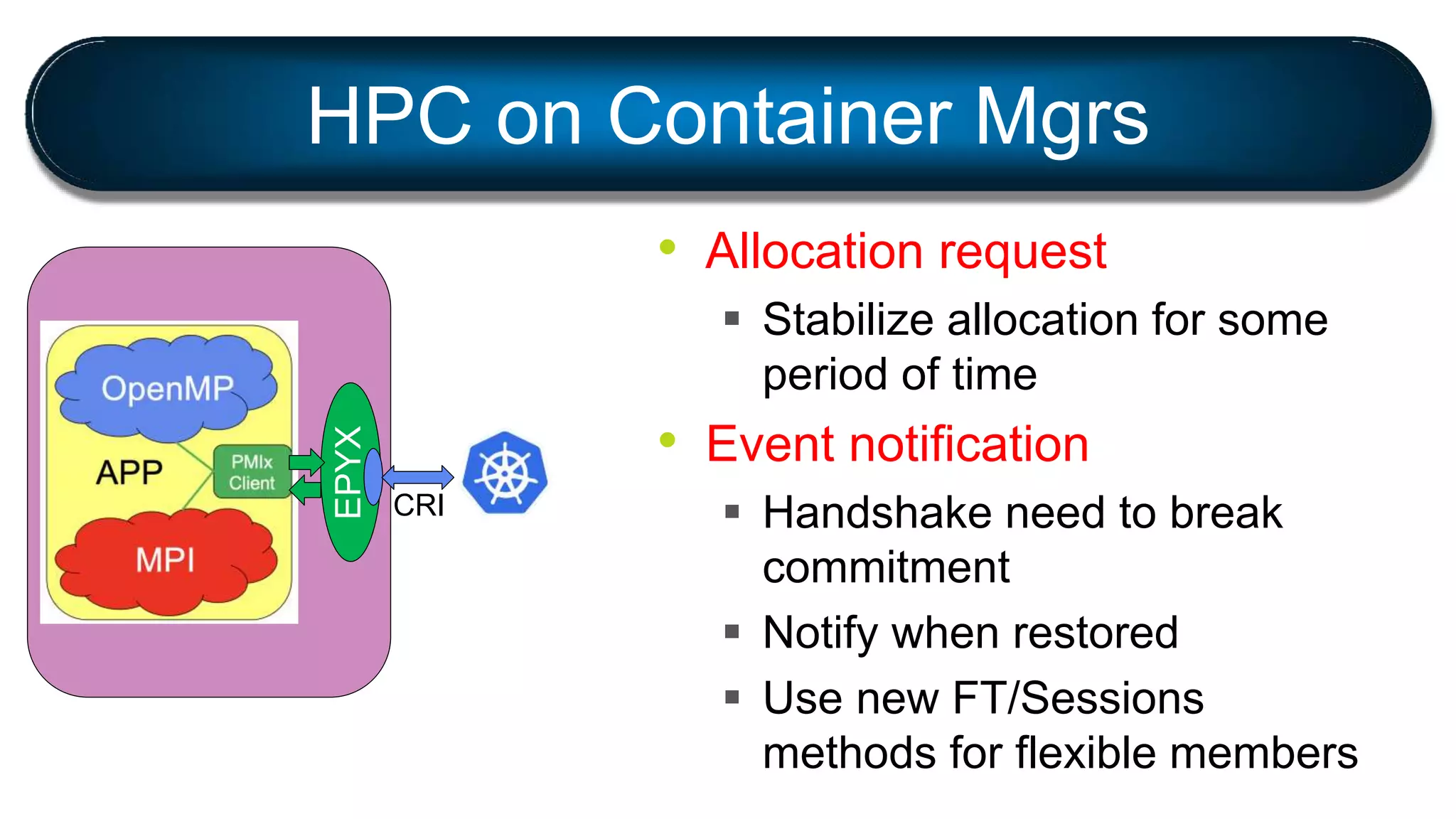

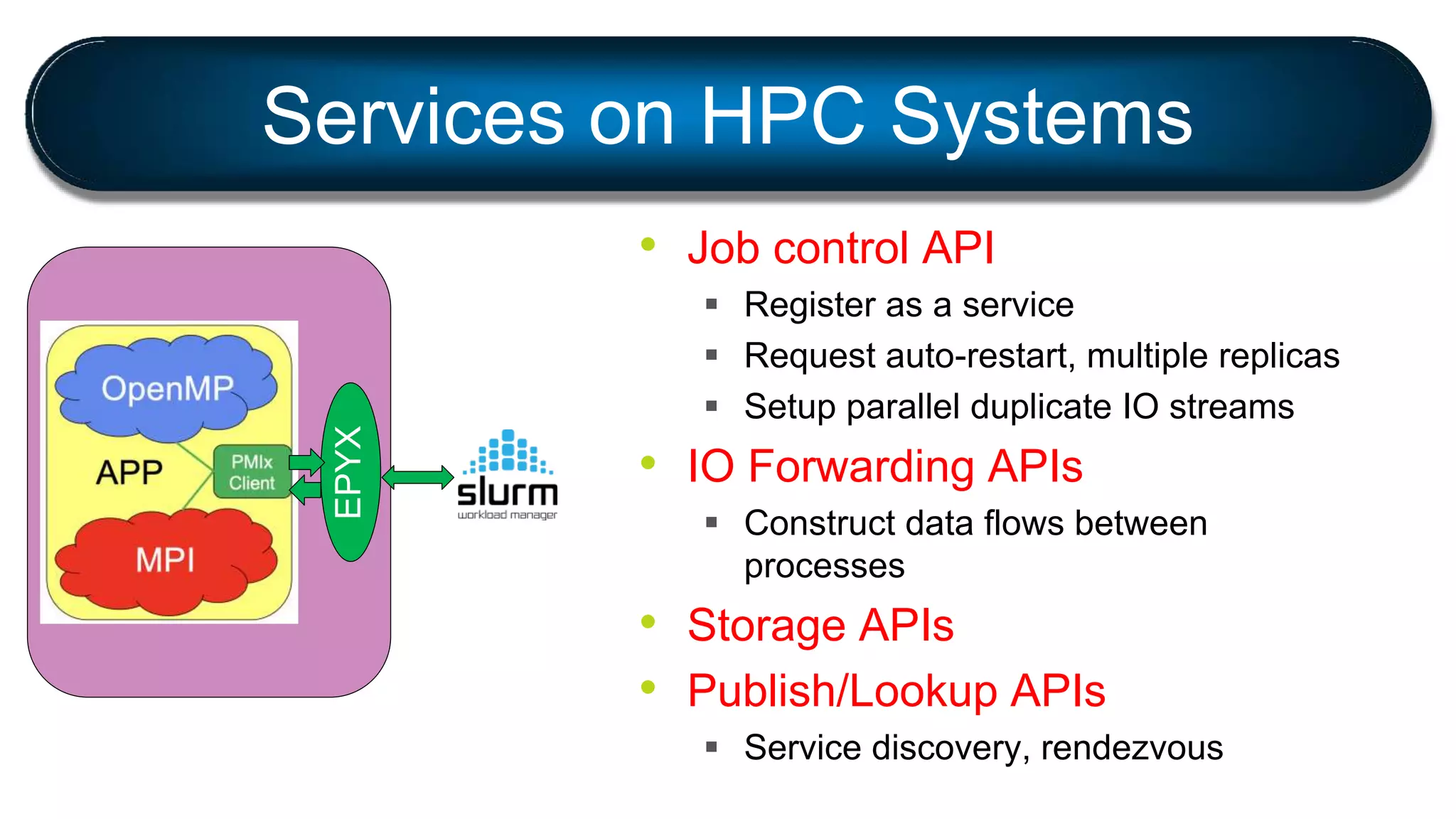

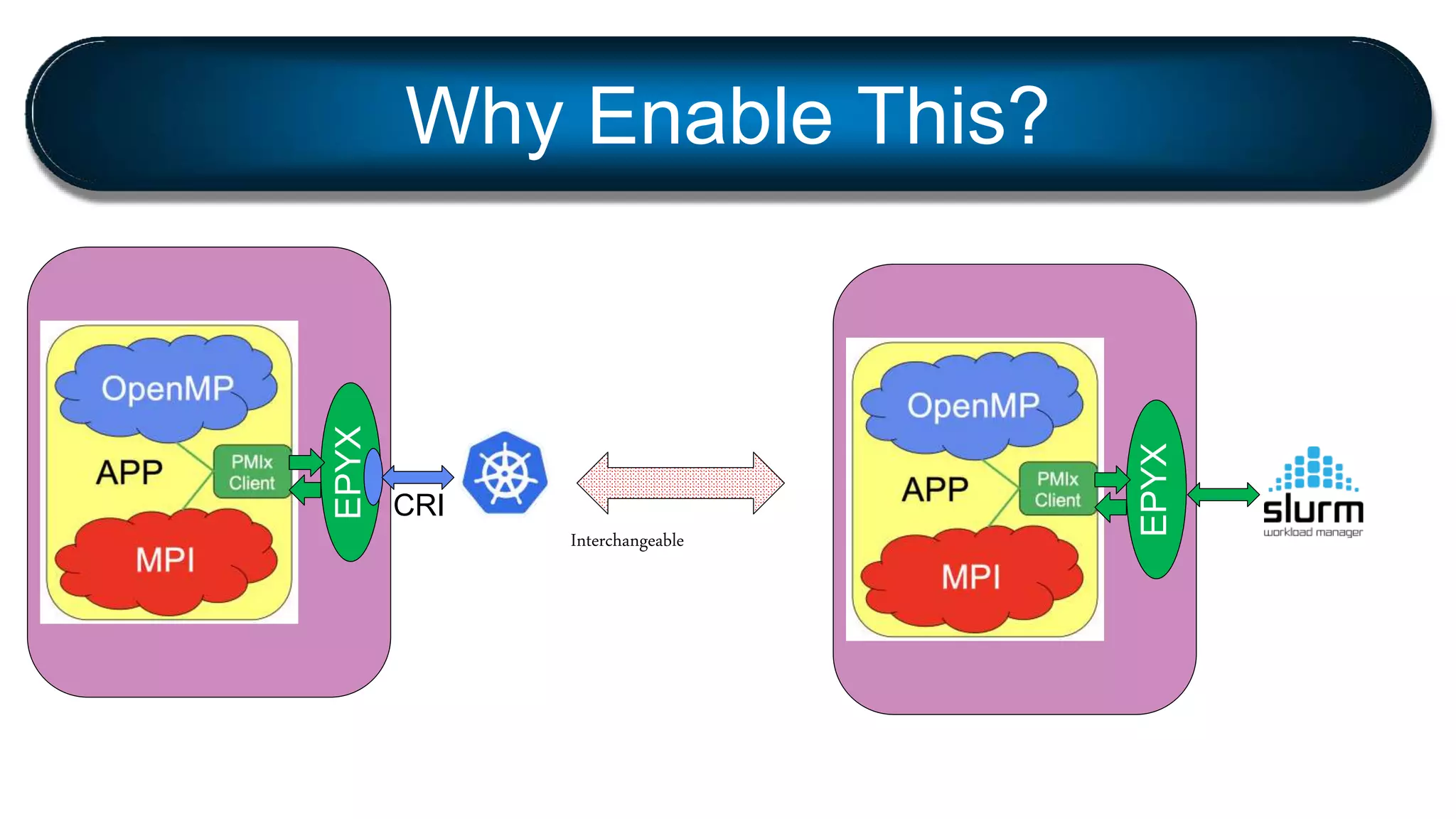

PMIx (Process Management Interface for Exascale) is a process management standard that aims to bridge the gap between containers and HPC environments. It defines APIs for resource management, launch coordination, and tool integration. The PMIx reference library implements the standard to ease adoption. Many HPC libraries and resource managers now use PMIx, and it enables new use cases such as container support. Future work may extend PMIx to dynamic resource allocation, failure handling, and integration with file systems and fabric managers.