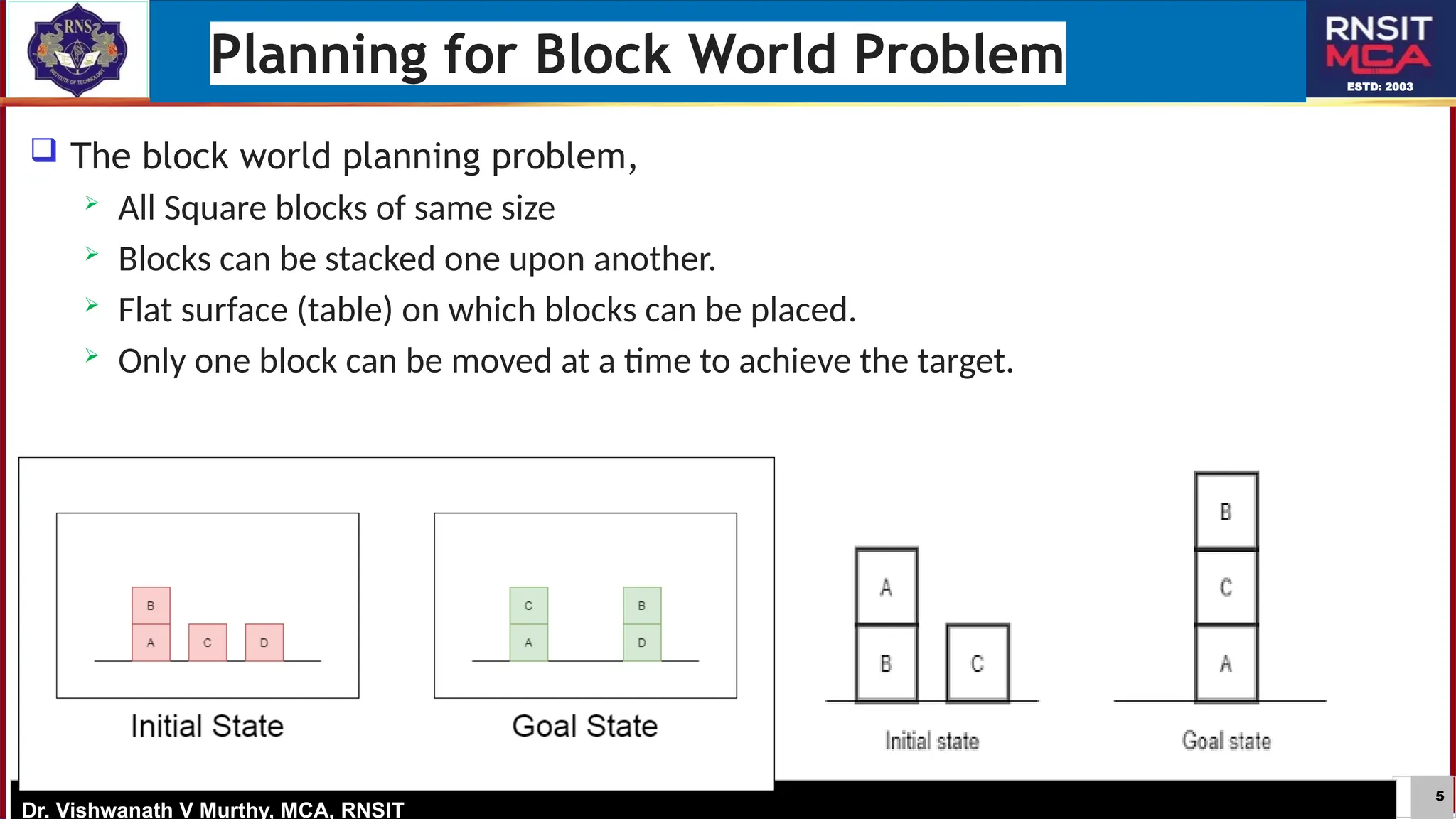

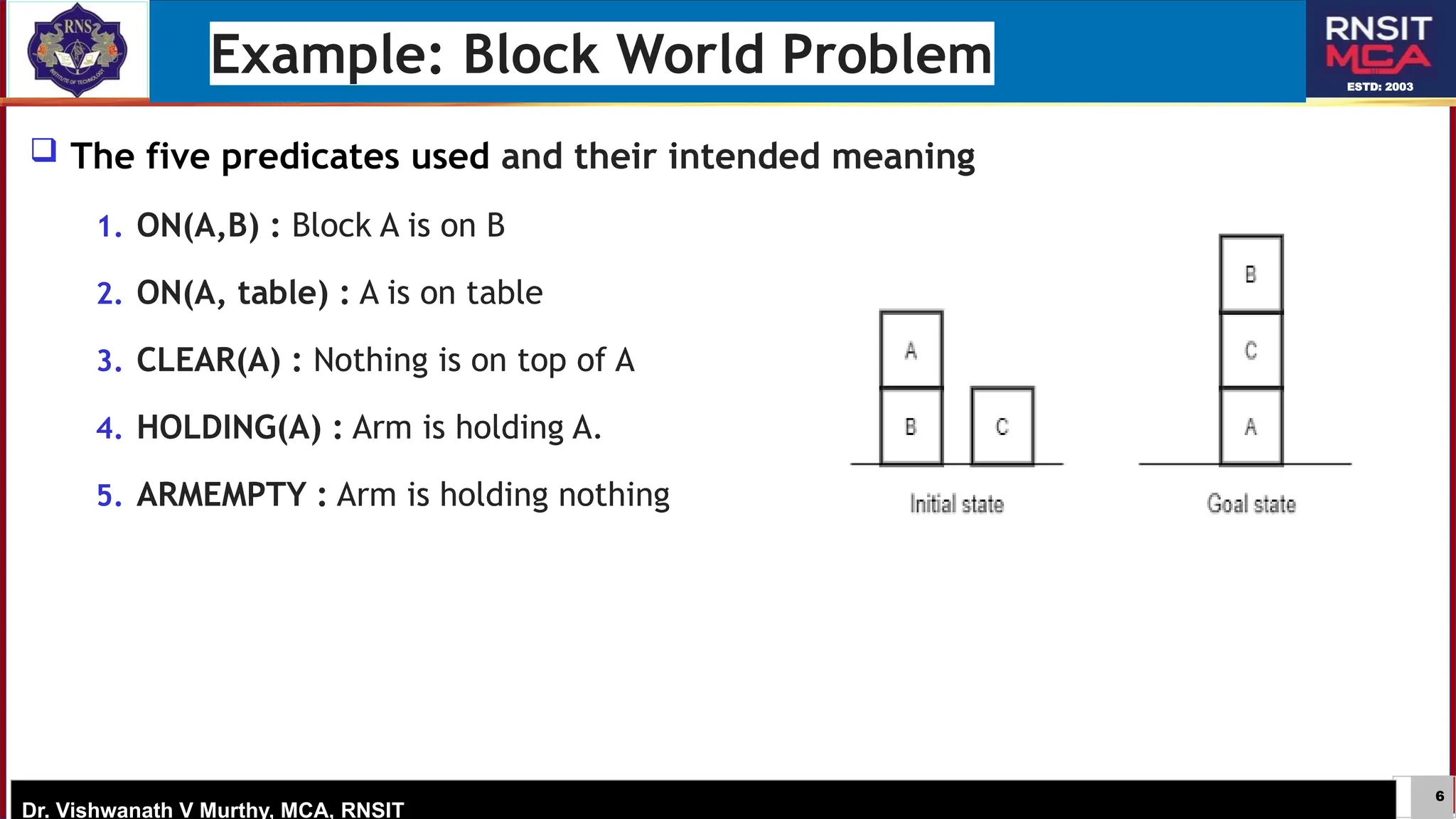

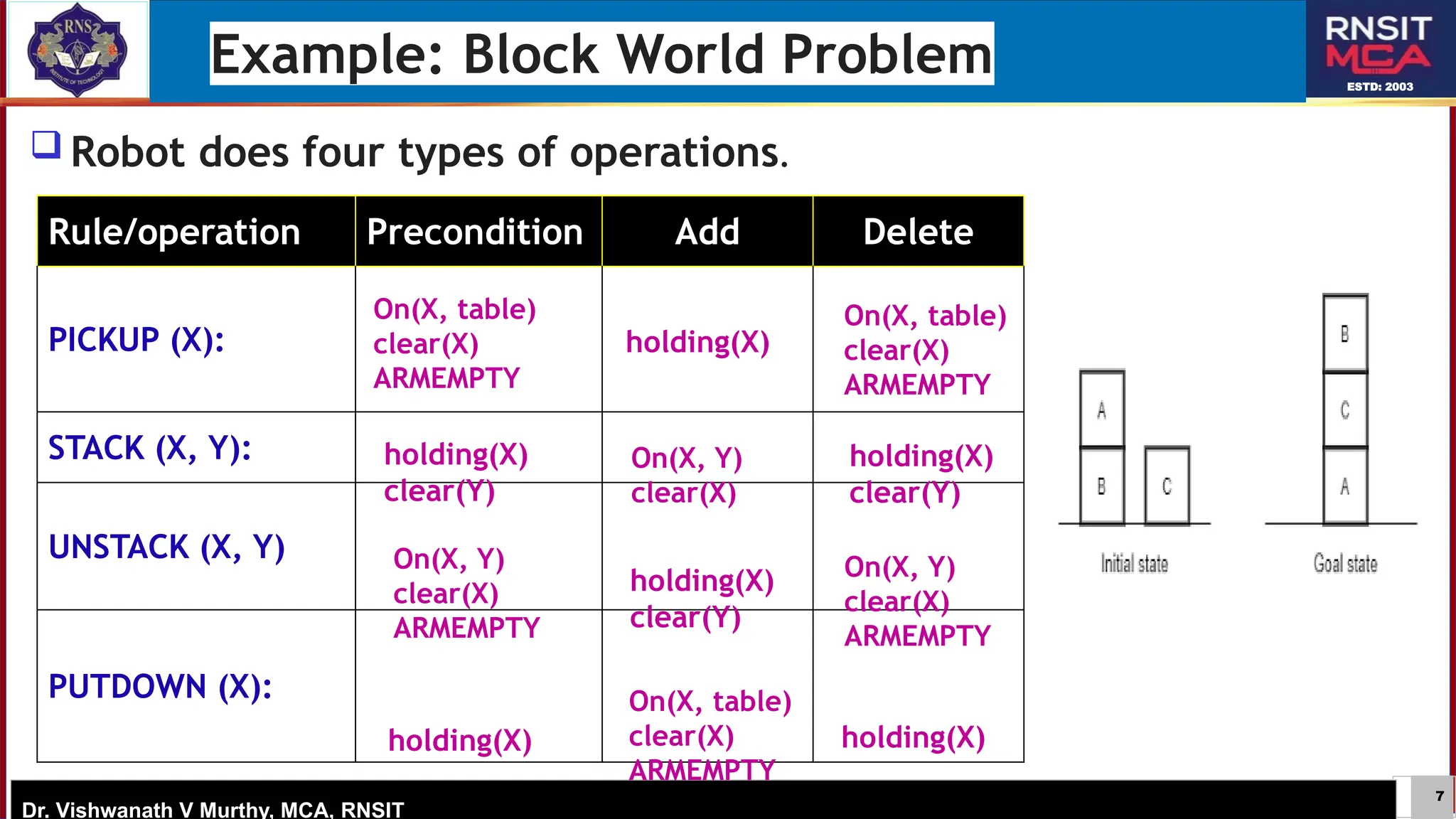

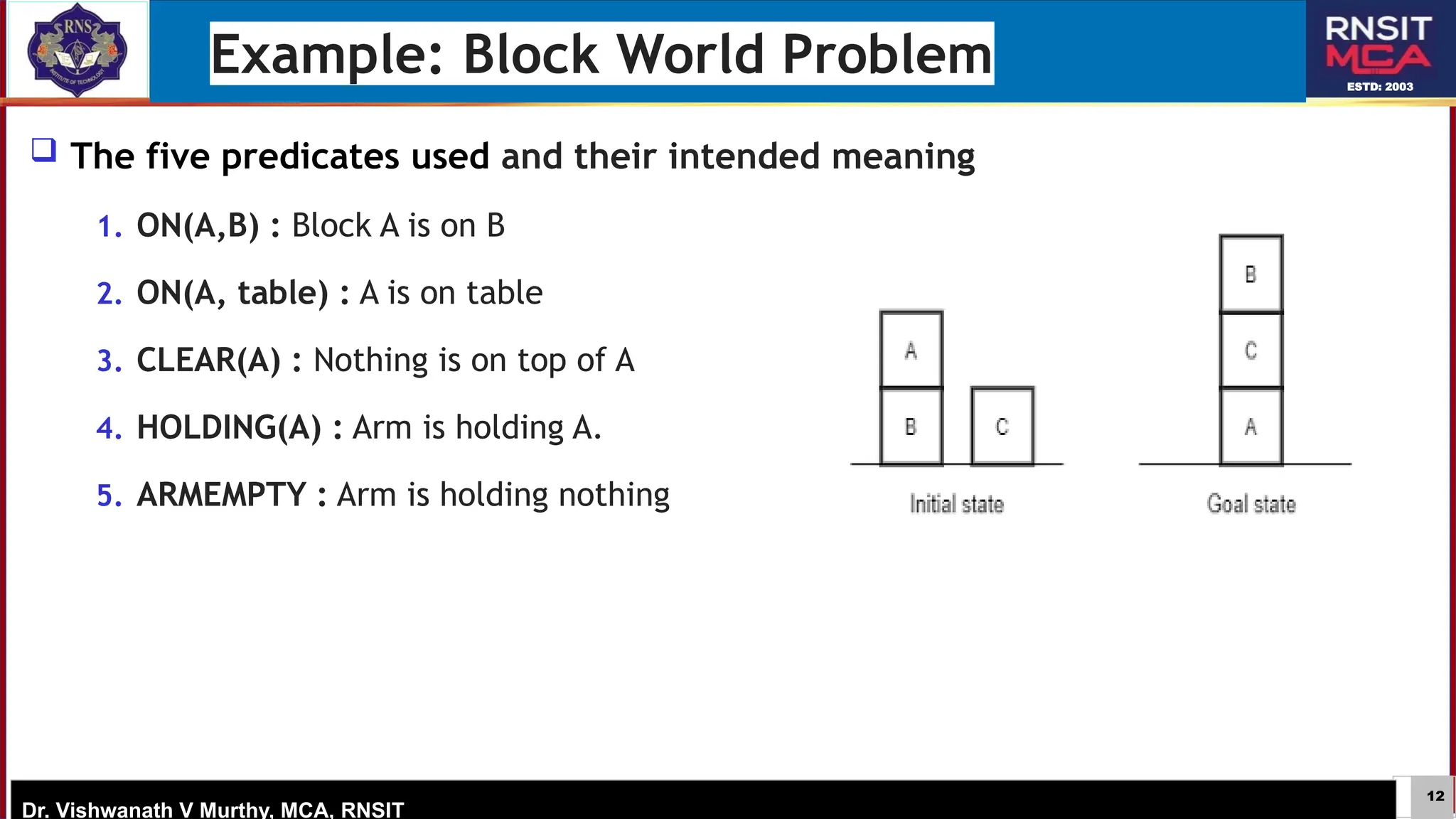

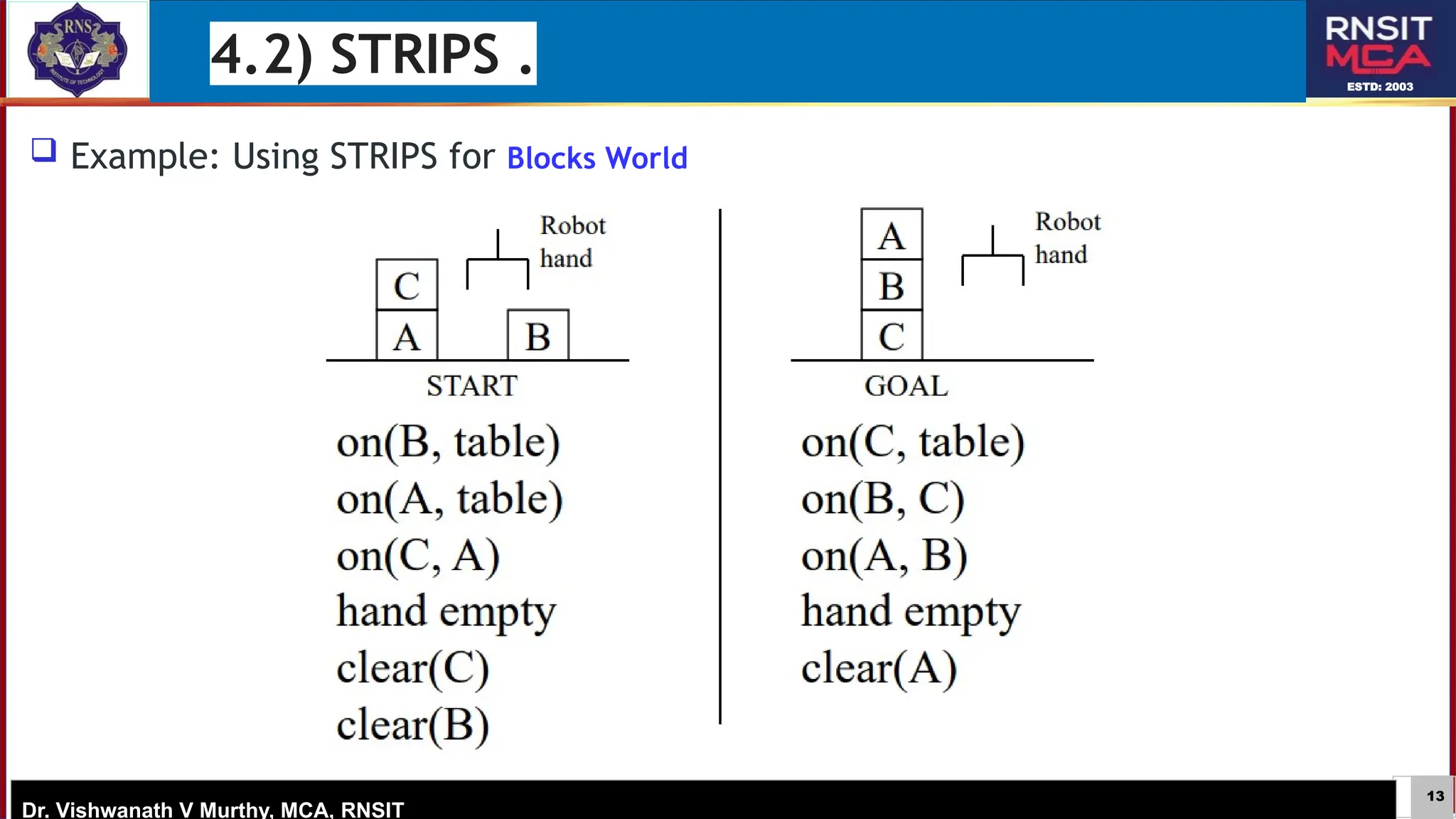

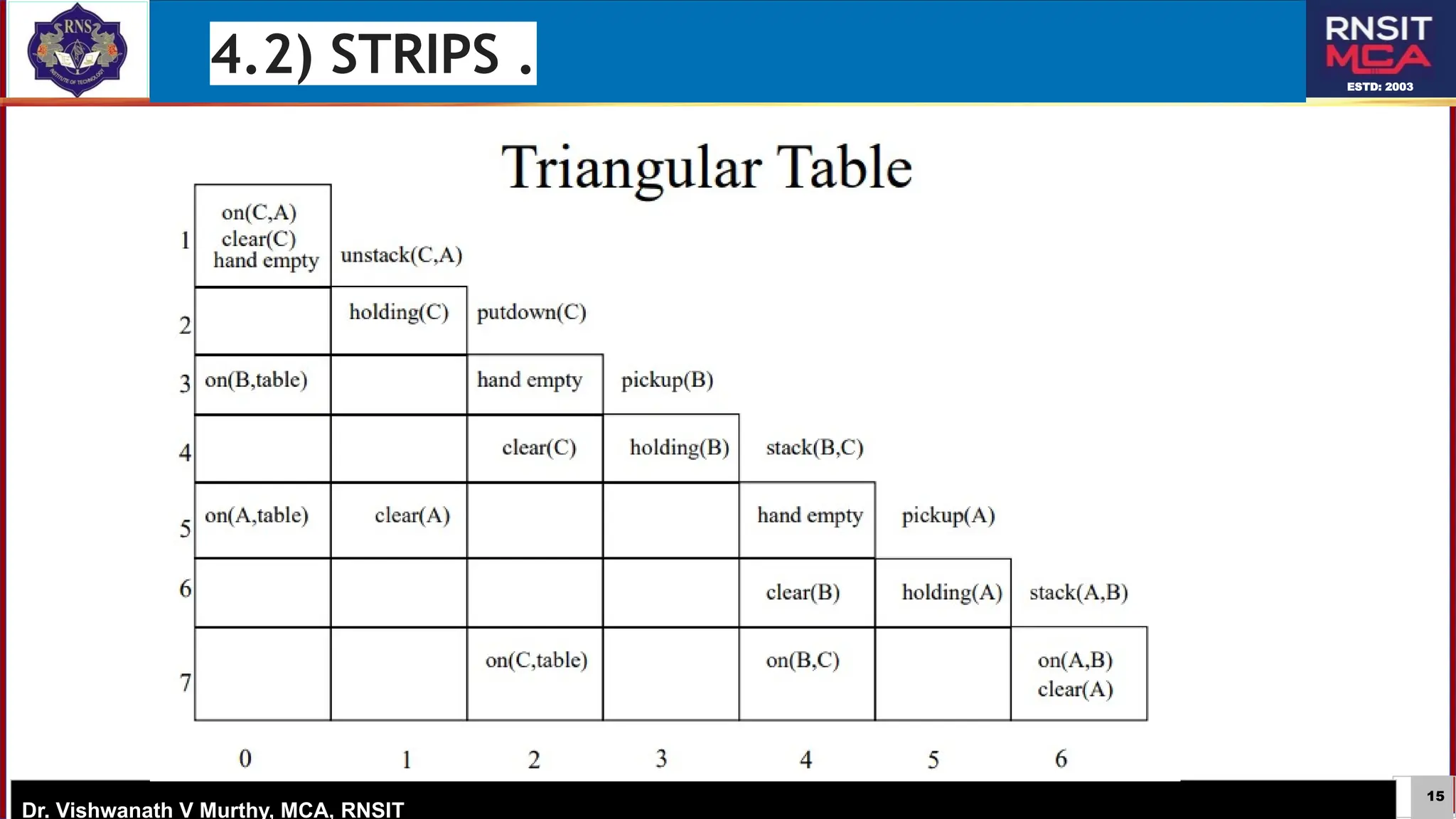

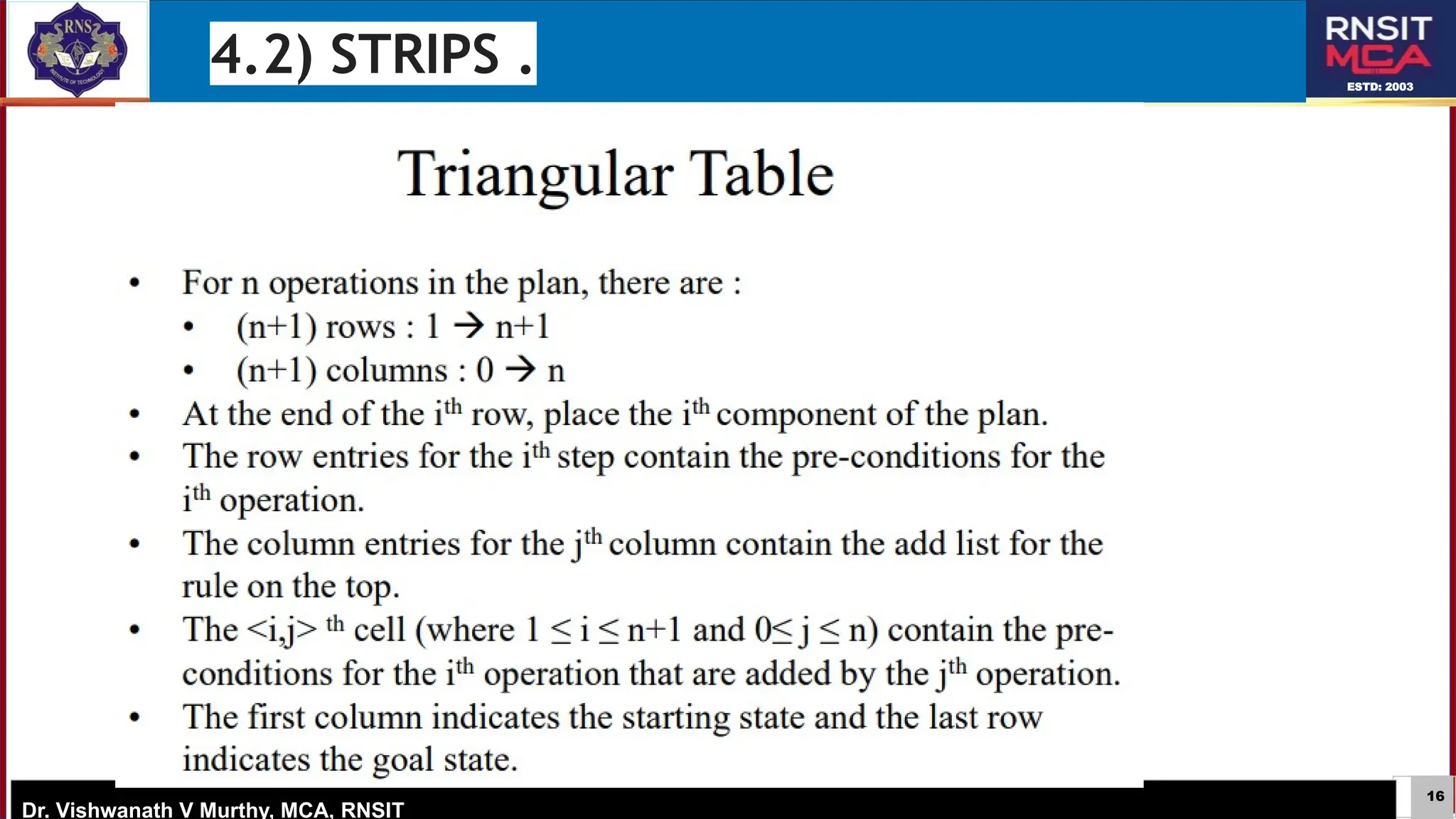

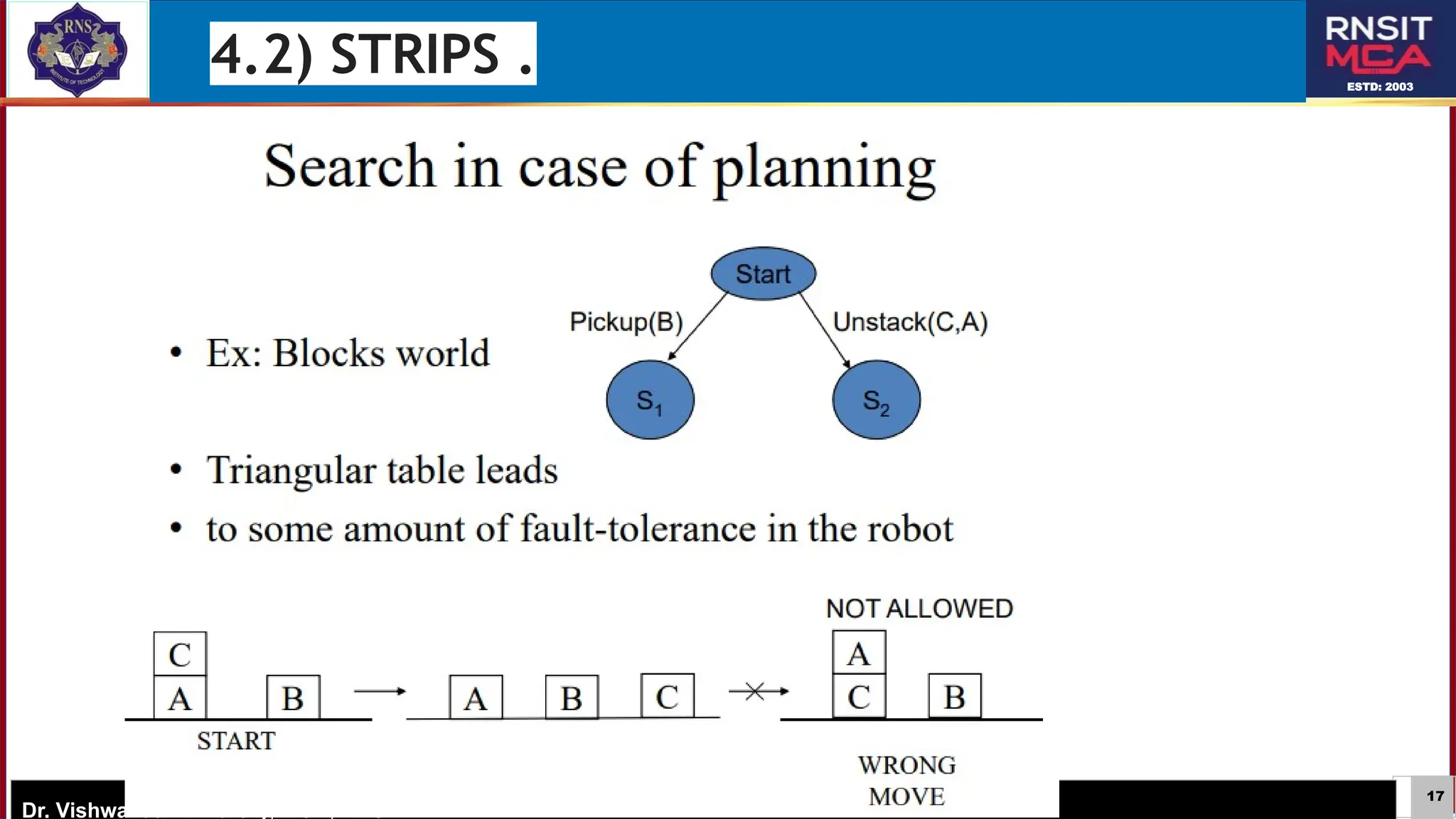

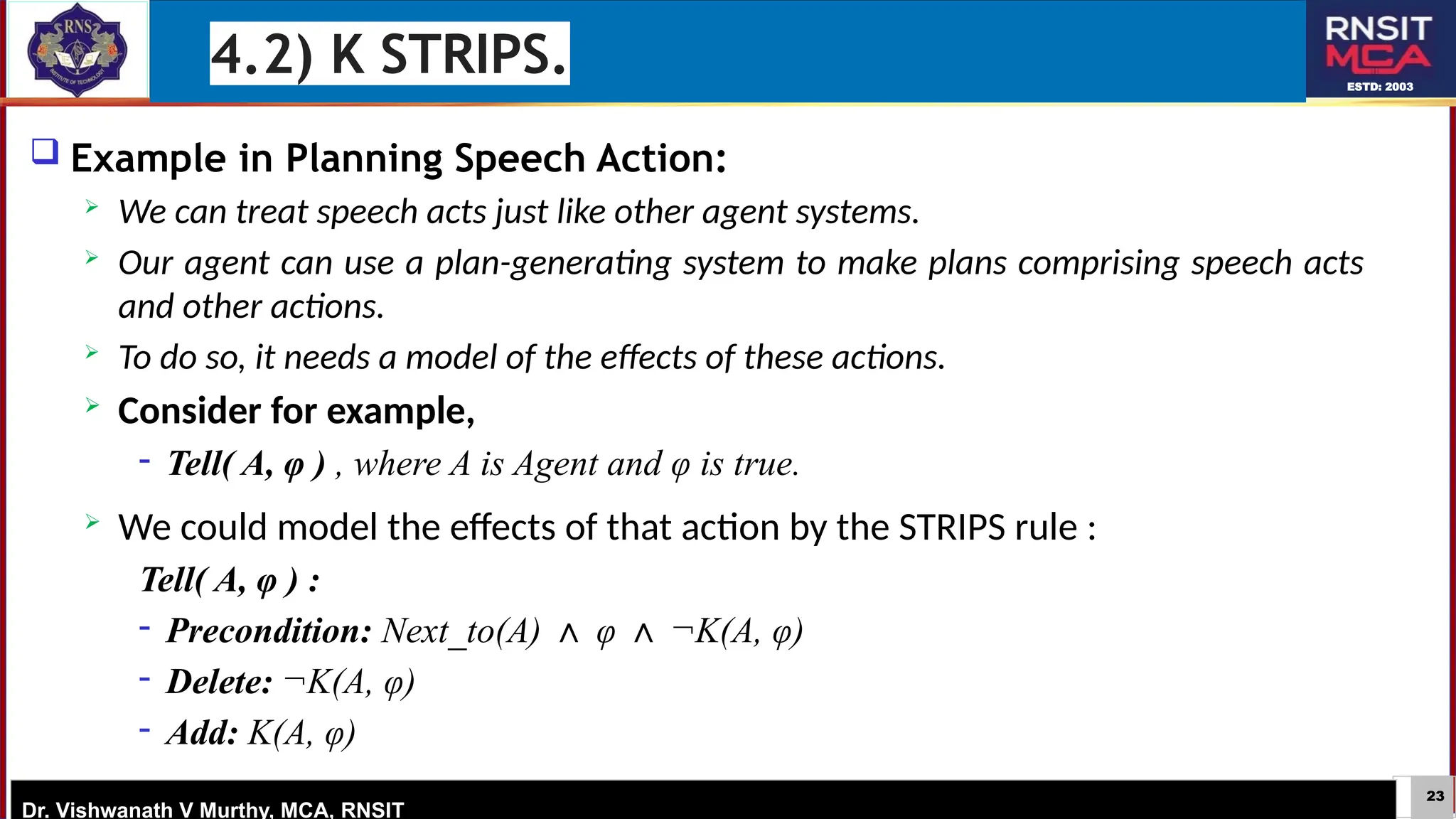

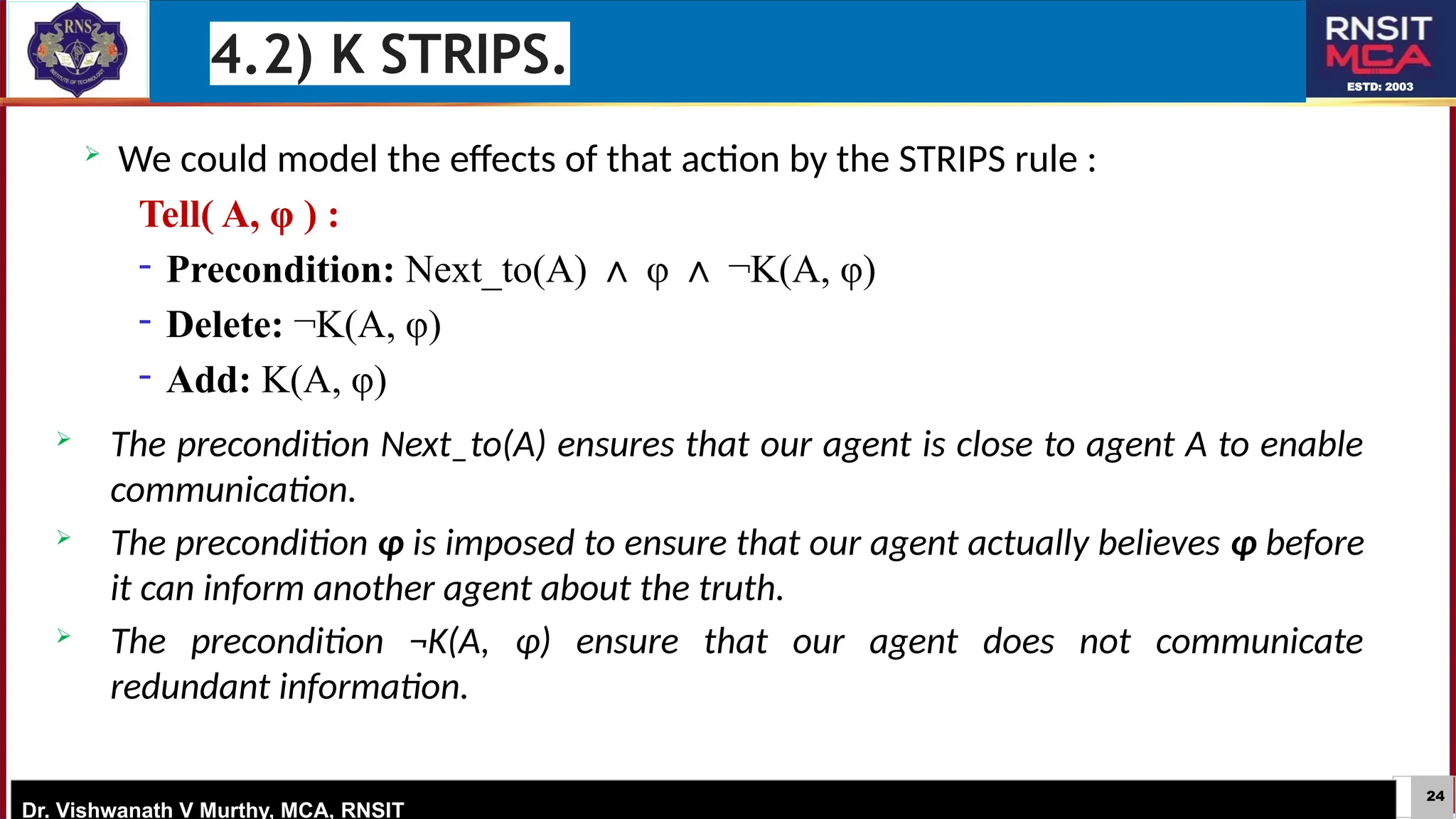

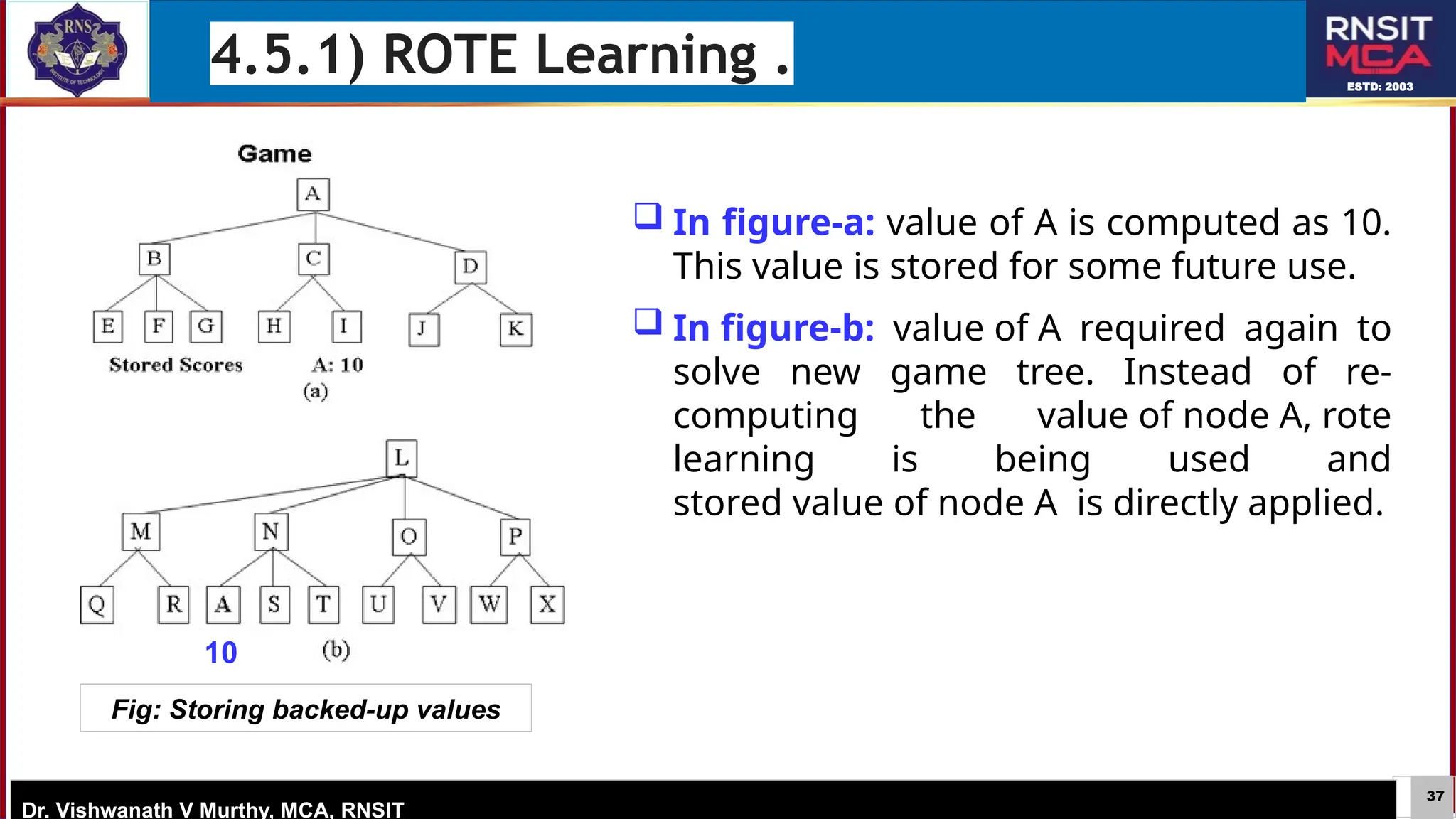

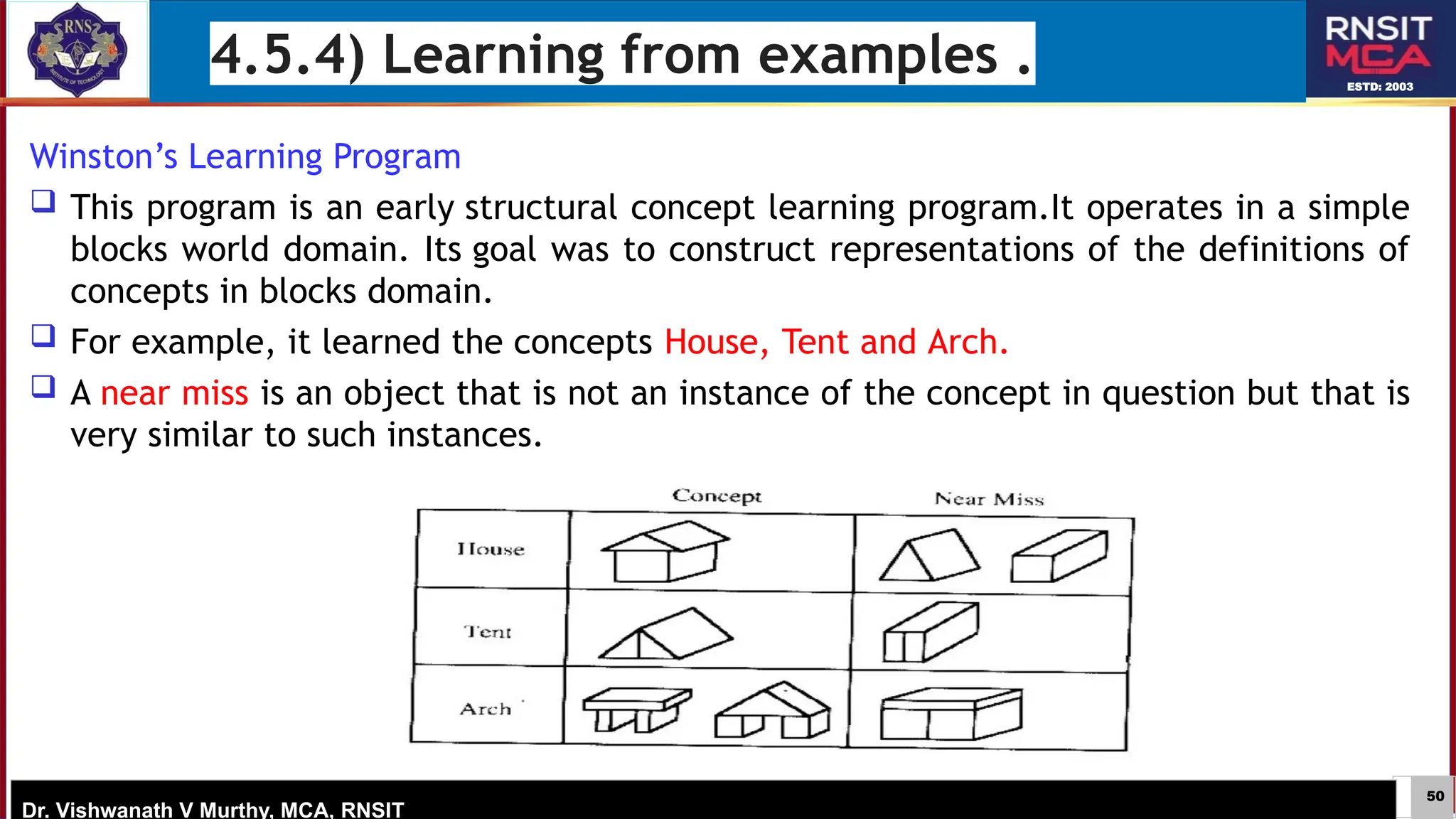

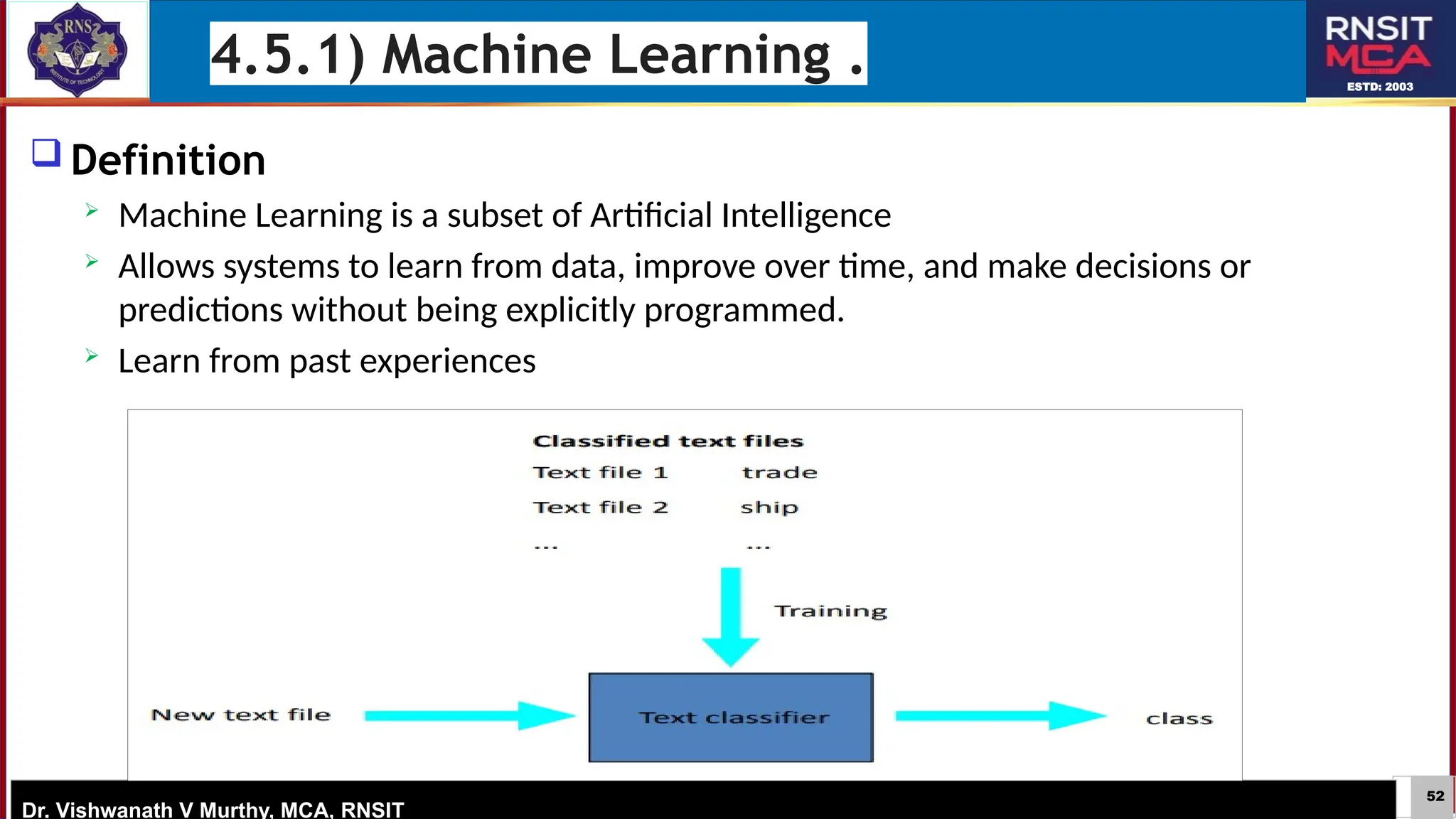

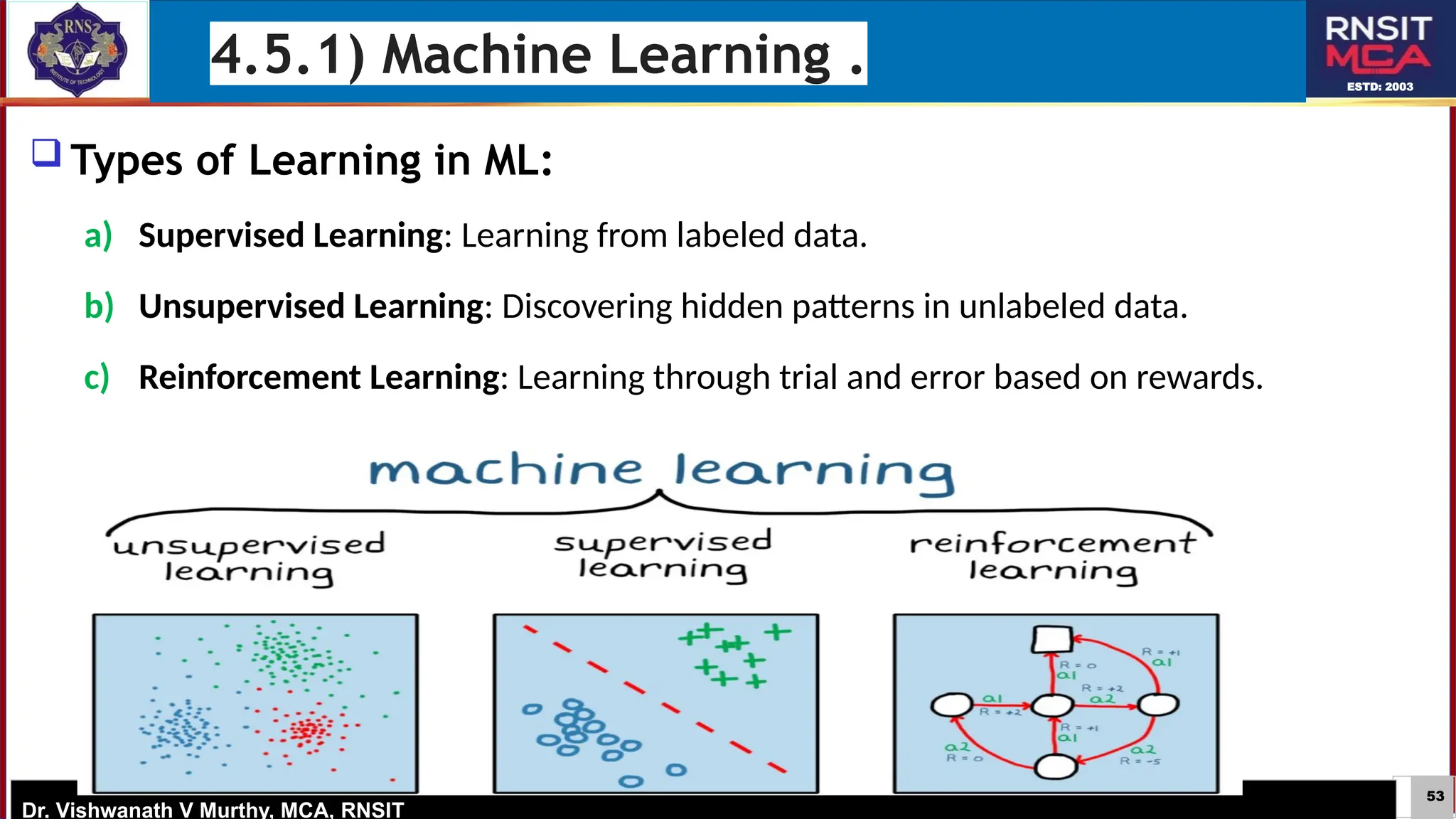

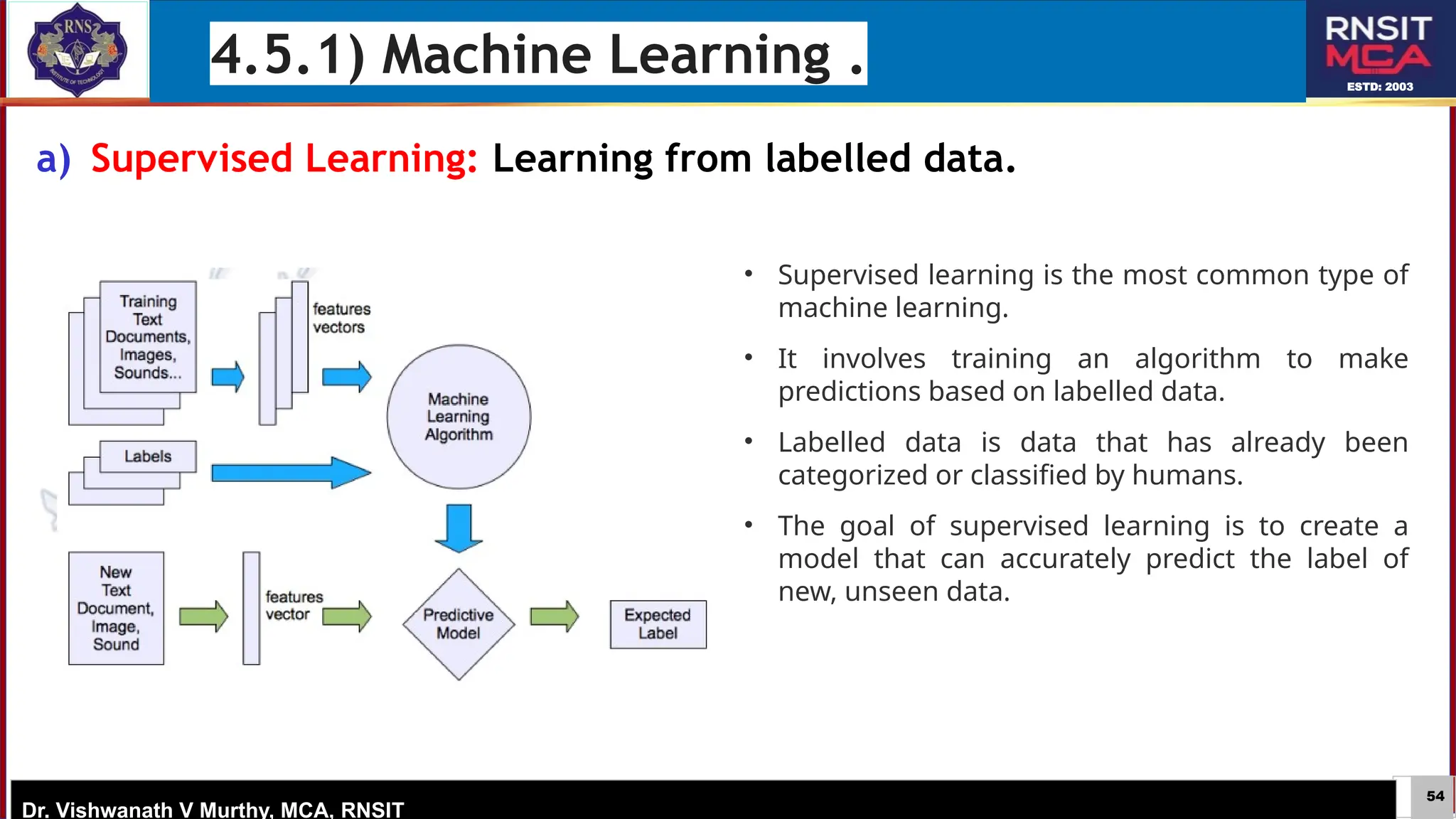

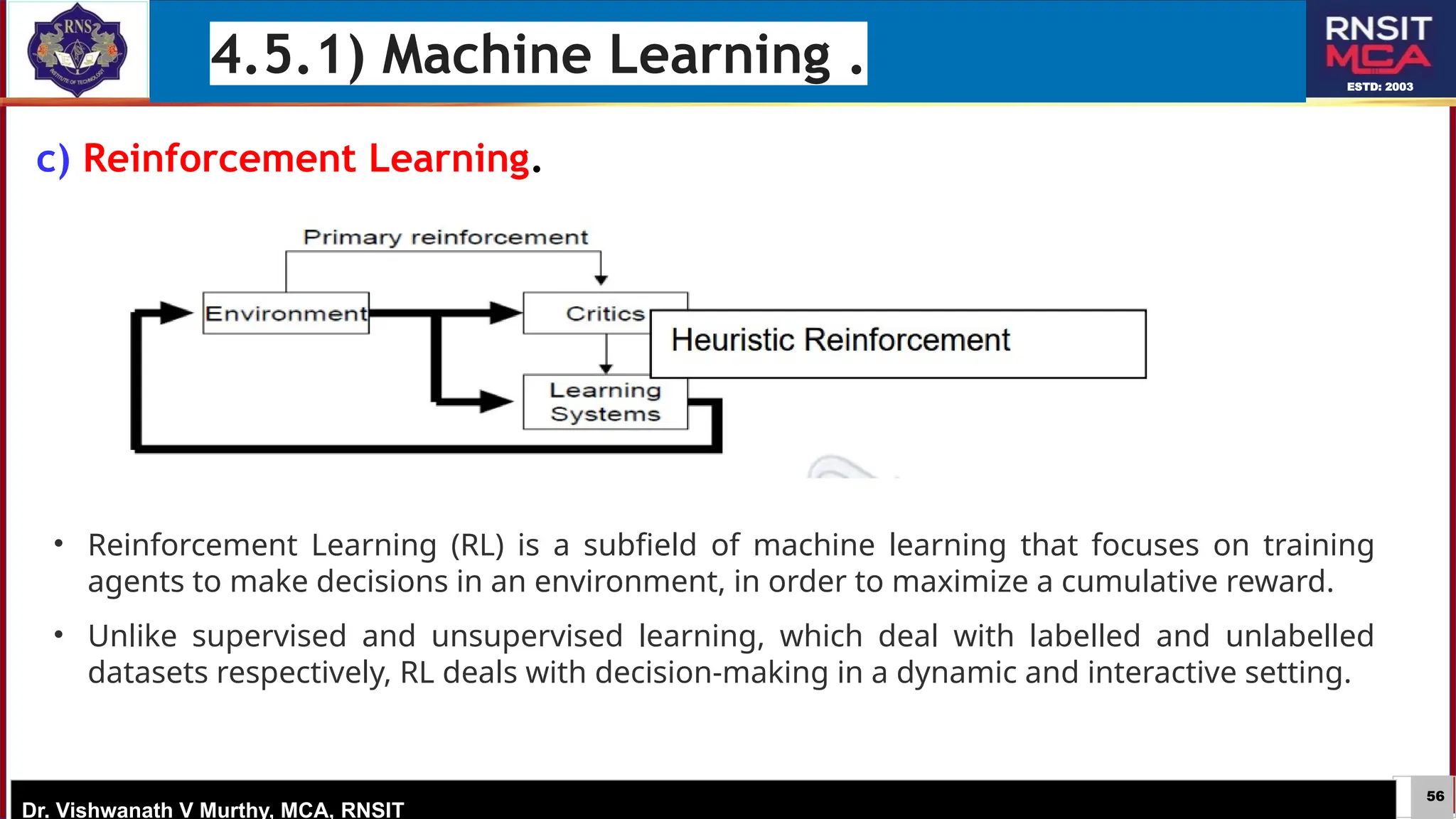

The document discusses artificial intelligence concepts, focusing on planning and machine learning. For planning, it covers systems such as STRIPS and K-STRIPS that automate the construction of plans, and it outlines their essential elements: predicates, which describe the state of the world, and actions, which change that state. It also presents a framework for explanations in AI, emphasizing transparency and the user's ability to understand AI decisions. Finally, it covers learning mechanisms in AI, highlighting methods such as rote learning and skill refinement that make a system more efficient.
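To make the STRIPS vocabulary concrete, here is a minimal sketch of a STRIPS-style representation. It assumes the usual formulation: a state is a set of ground predicates, and each action has a precondition list, an add list and a delete list. The blocks-world domain and its predicate names (`on(A,B)`, `clear(A)`, `handempty`, and so on) are hypothetical illustrations rather than examples taken from the slides. The planner is a plain breadth-first forward search swapped in for simplicity. It is not the means-ends analysis used by the original STRIPS system, and it does not model the knowledge-based extensions of K-STRIPS.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A ground STRIPS-style operator (illustrative, not a specific library's API)."""
    name: str
    preconditions: frozenset
    add_list: frozenset
    delete_list: frozenset

    def applicable(self, state: frozenset) -> bool:
        # An action can fire only if all of its preconditions hold in the state.
        return self.preconditions <= state

    def apply(self, state: frozenset) -> frozenset:
        # STRIPS update rule: remove the delete list, then add the add list.
        return (state - self.delete_list) | self.add_list


def plan(initial, goal, actions):
    """Breadth-first forward search; returns a list of action names, or None."""
    start = frozenset(initial)
    frontier = deque([(start, [])])
    visited = {start}
    while frontier:
        state, path = frontier.popleft()
        if goal <= state:  # every goal predicate is true in this state
            return path
        for action in actions:
            if action.applicable(state):
                successor = action.apply(state)
                if successor not in visited:
                    visited.add(successor)
                    frontier.append((successor, path + [action.name]))
    return None  # goal unreachable with the given actions


def fs(*predicates):
    return frozenset(predicates)


# Hypothetical blocks-world example: A sits on B; the goal is to put B on A.
actions = [
    Action("unstack(A,B)", fs("on(A,B)", "clear(A)", "handempty"),
           fs("holding(A)", "clear(B)"), fs("on(A,B)", "clear(A)", "handempty")),
    Action("putdown(A)", fs("holding(A)"),
           fs("ontable(A)", "clear(A)", "handempty"), fs("holding(A)")),
    Action("pickup(B)", fs("ontable(B)", "clear(B)", "handempty"),
           fs("holding(B)"), fs("ontable(B)", "clear(B)", "handempty")),
    Action("stack(B,A)", fs("holding(B)", "clear(A)"),
           fs("on(B,A)", "clear(B)", "handempty"), fs("holding(B)", "clear(A)")),
]
initial = fs("on(A,B)", "ontable(B)", "clear(A)", "handempty")
goal = fs("on(B,A)")

result = plan(initial, goal, actions)
print(result)
```

Running it prints the four-step plan `['unstack(A,B)', 'putdown(A)', 'pickup(B)', 'stack(B,A)']`. Representing states as frozen sets keeps the add/delete update a pair of set operations and lets visited states be stored in a hash set to avoid loops.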