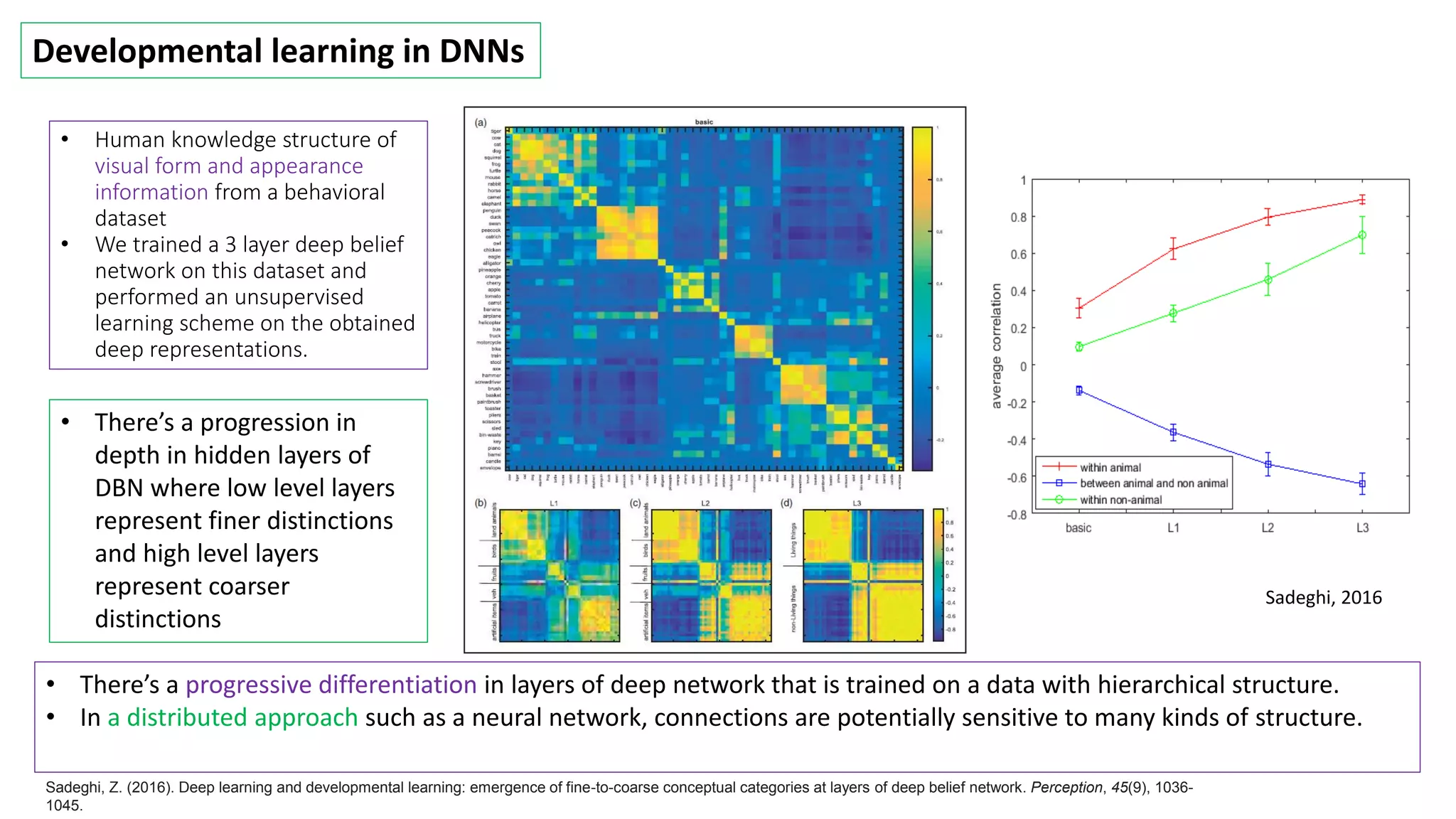

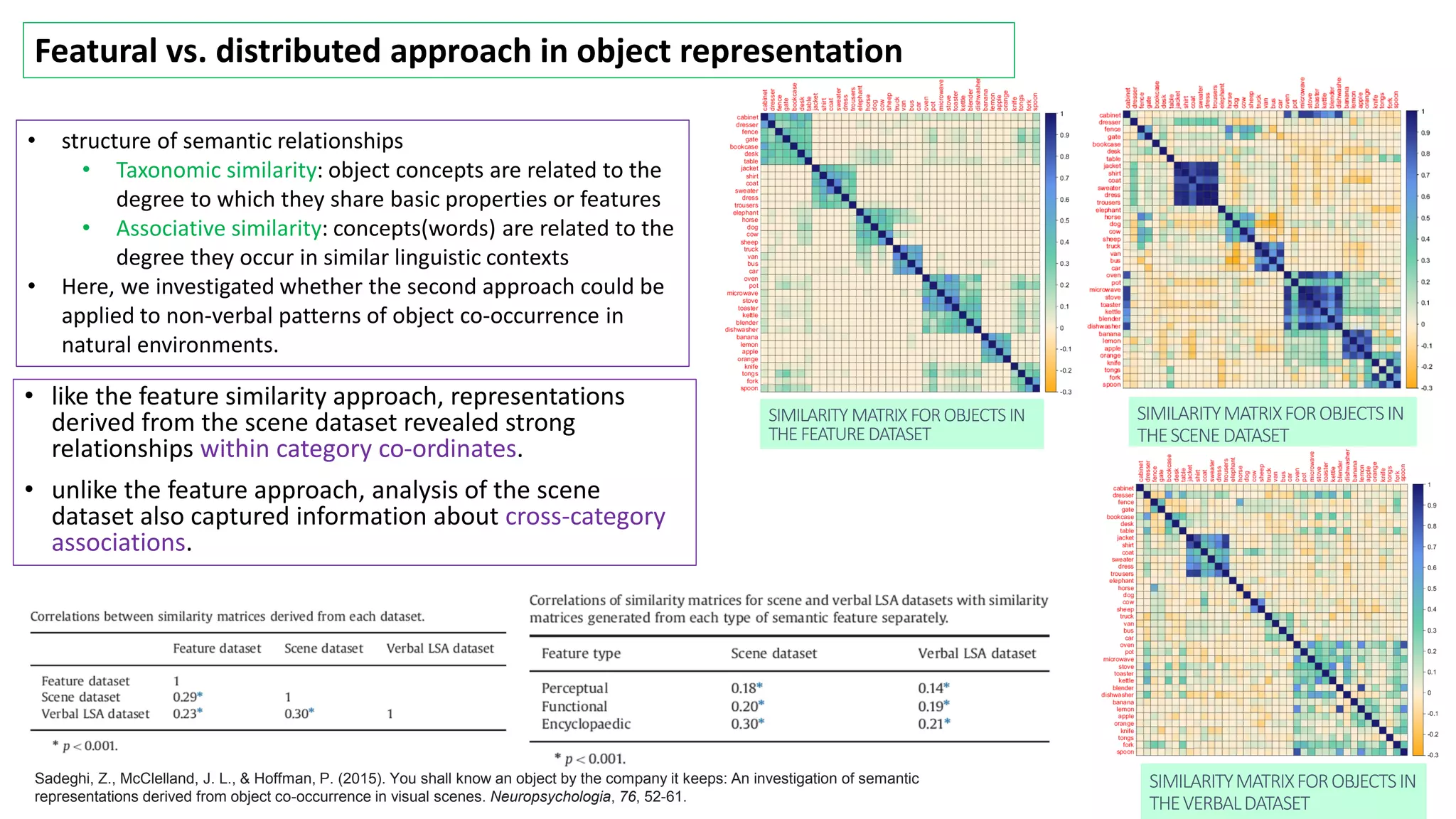

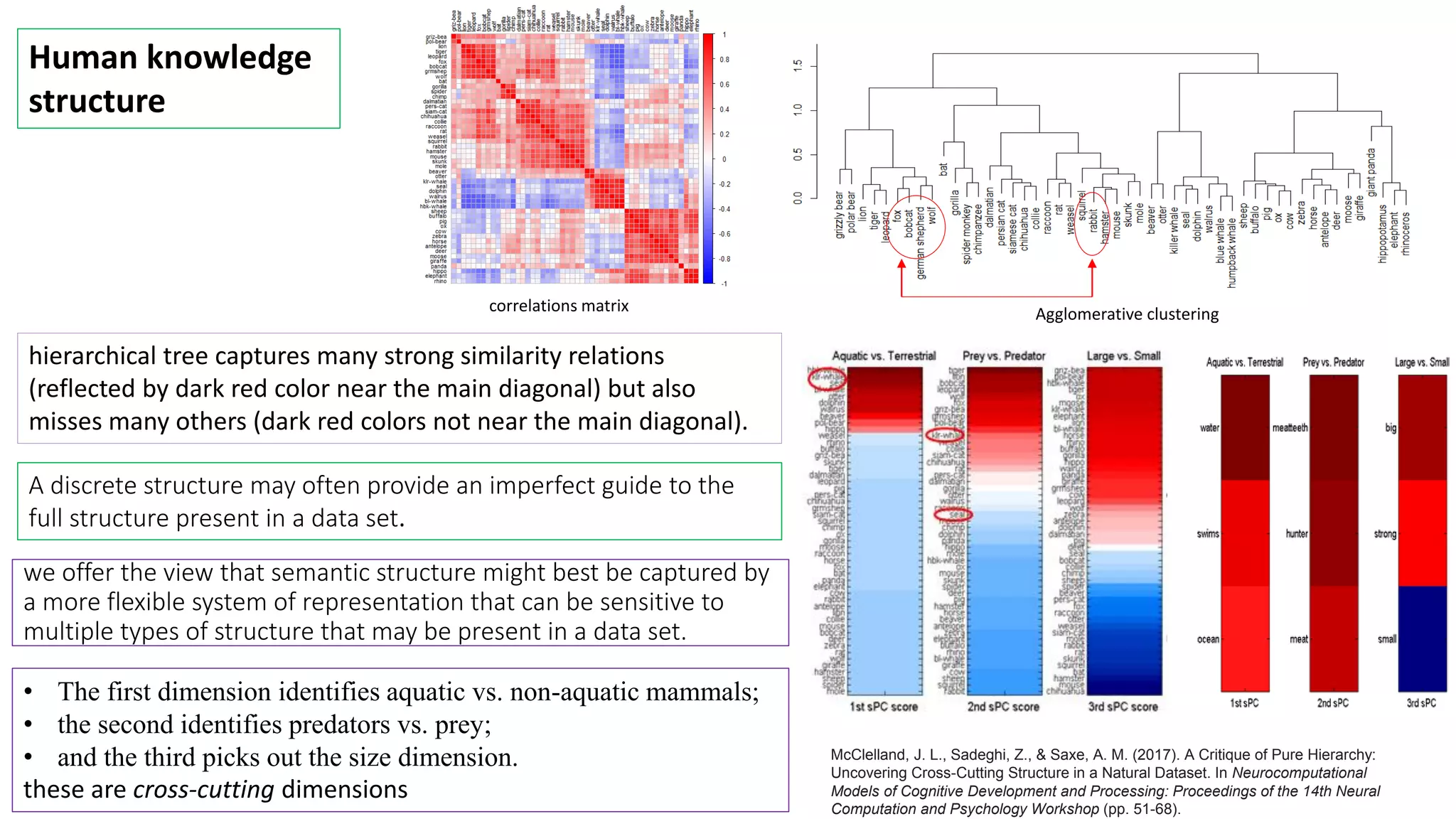

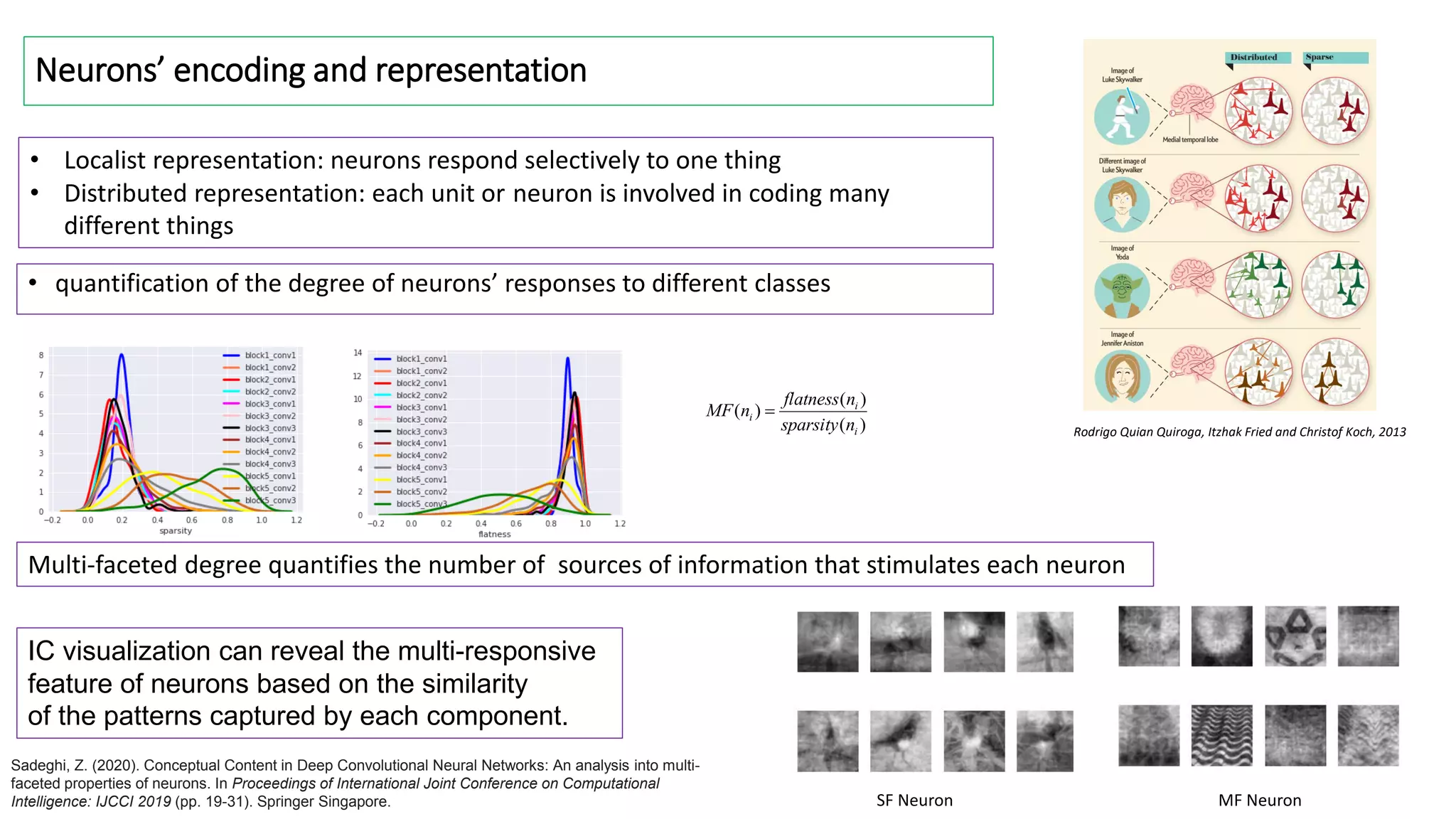

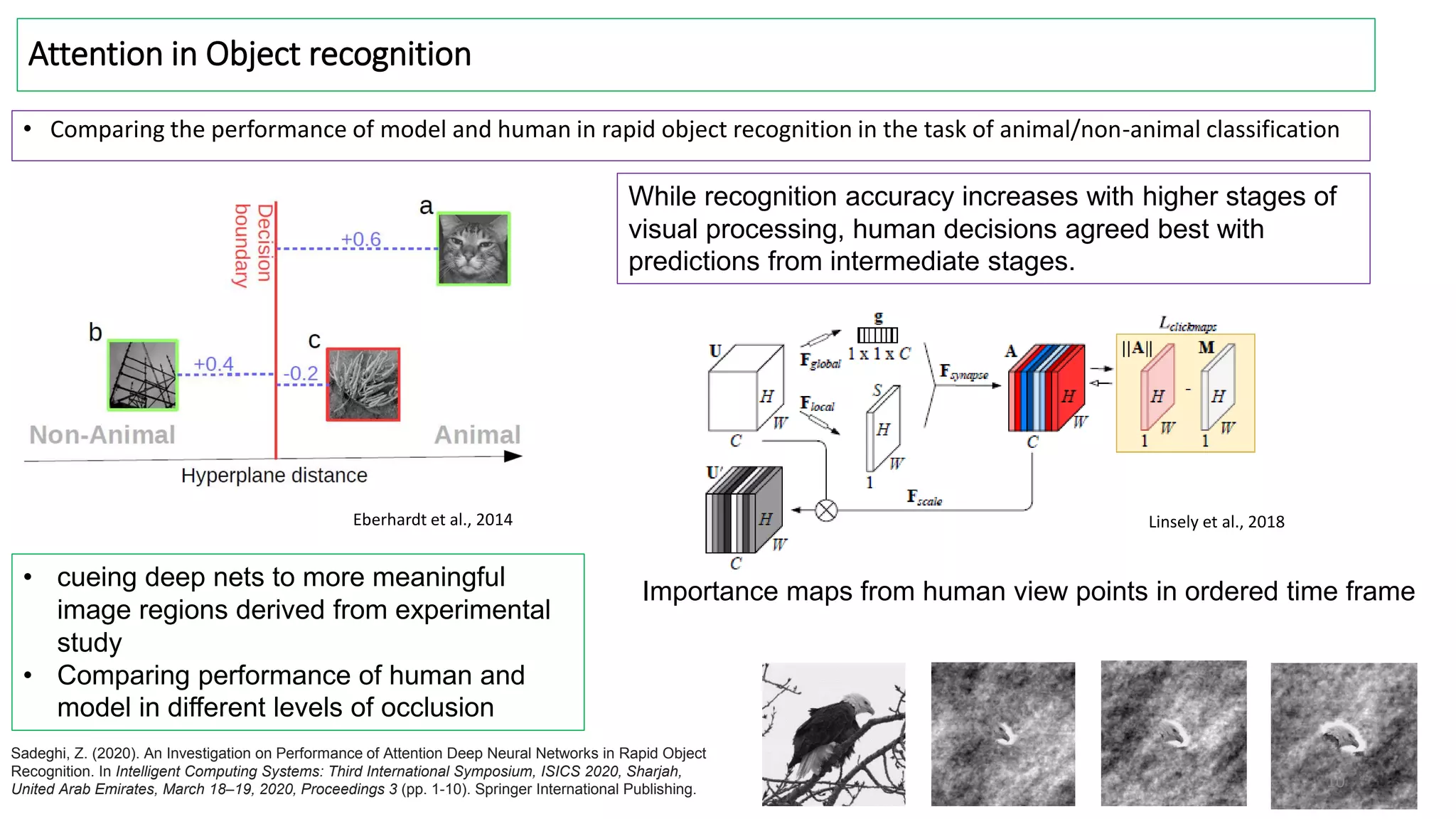

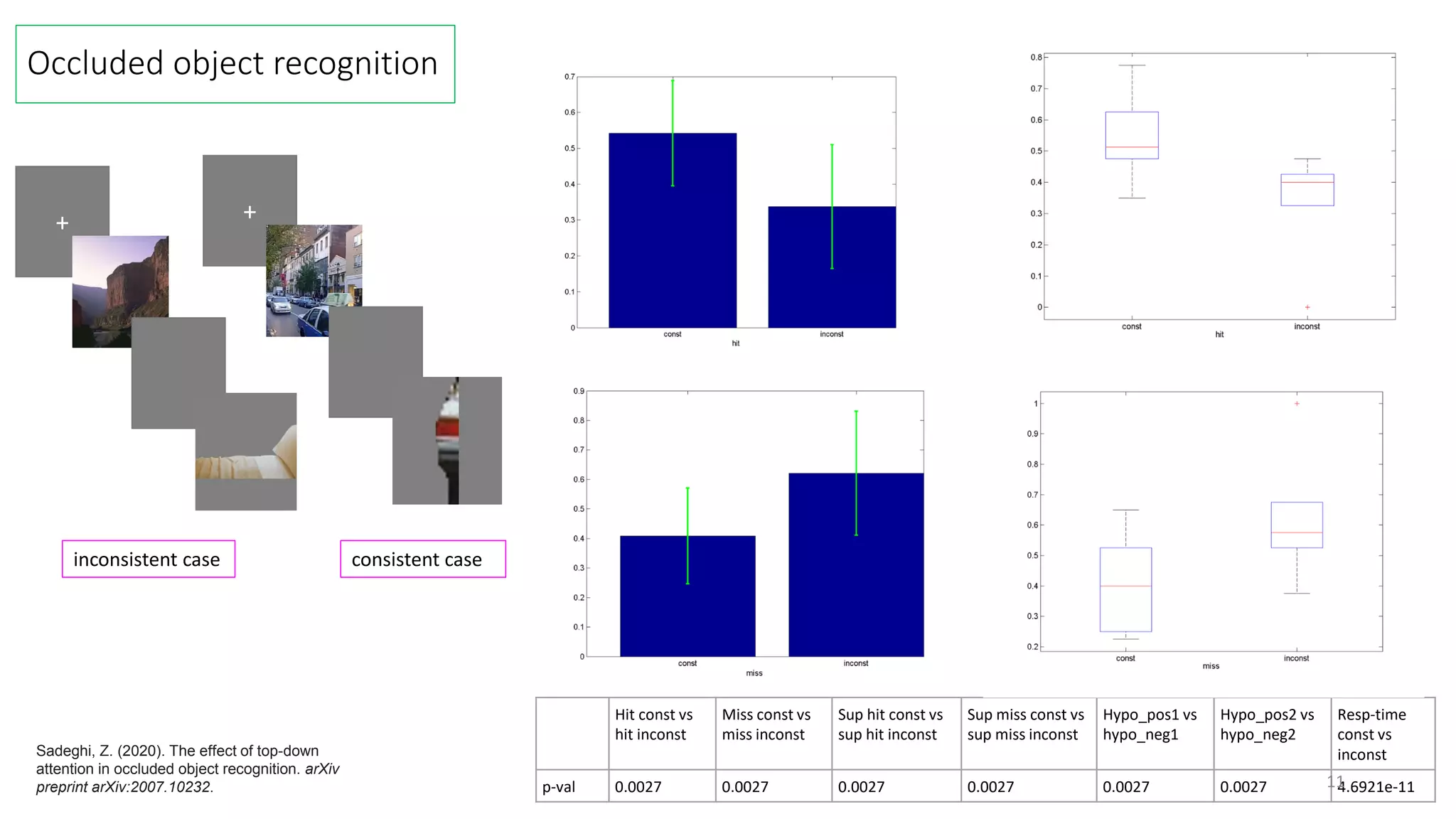

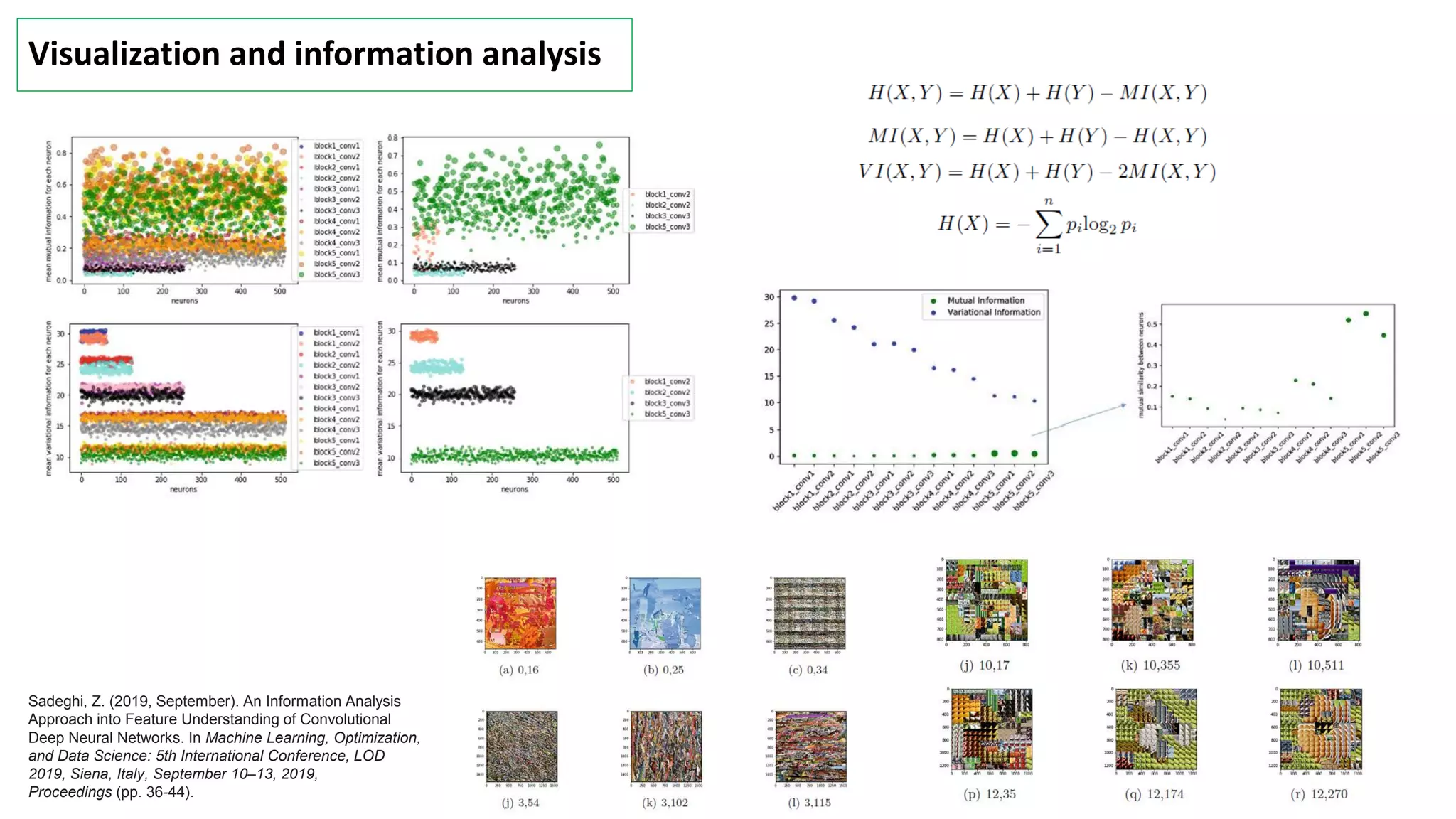

- The document covers the perception, representation, structure, and recognition of visual concepts, including taxonomic hierarchies, conceptual categories, and flexible knowledge structures.

- Different studies are mentioned that examine emerging conceptual categories at different layers of deep neural networks trained on visual datasets, as well as investigations into semantic representations derived from object co-occurrence in scenes.

- The analysis of neural network representations and human behavioral data suggests a more flexible representation of conceptual knowledge that captures cross-cutting relationships rather than a pure hierarchical structure.

![Basic (intermediate) level: Animal/Plant

0 50 100

0

50

100

vertical projection

0 50 100

0

50

horizontal projection

0 50 100

0

50

100

left profile

0 50 100

0

50

100

right profile

0 50 100

20

40

60

top profile

0 50 100

20

40

60

bottom profile

0 50 100

0

50

100

vertical projection

0 50 100

0

50

100

horizontal projection

0 50 100

0

50

100

left profile

0 50 100

0

50

right profile

0 50 100

0

50

100

top profile

0 50 100

0

50

100

bottom profile

raw 25 50 75 100

0.7

0.72

0.74

0.76

0.78

0.8

0.82

0.84

0.86

0.88

hidden units

accuracy

h

v

l

r

t

b

unsupervised h v l r t b [h,v,t,b]

P 68.57 69.17 60.94 59.08 67.77 65.07 70.21

R 59.98 59.70 53.70 58.87 58.02 55.68 60.39

F1-score 63.99 64.09 57.09 58.97 62.52 60.01 64.93

accuracy 60.57 60.93 52.86 52.17 59.37 56.66 61.91

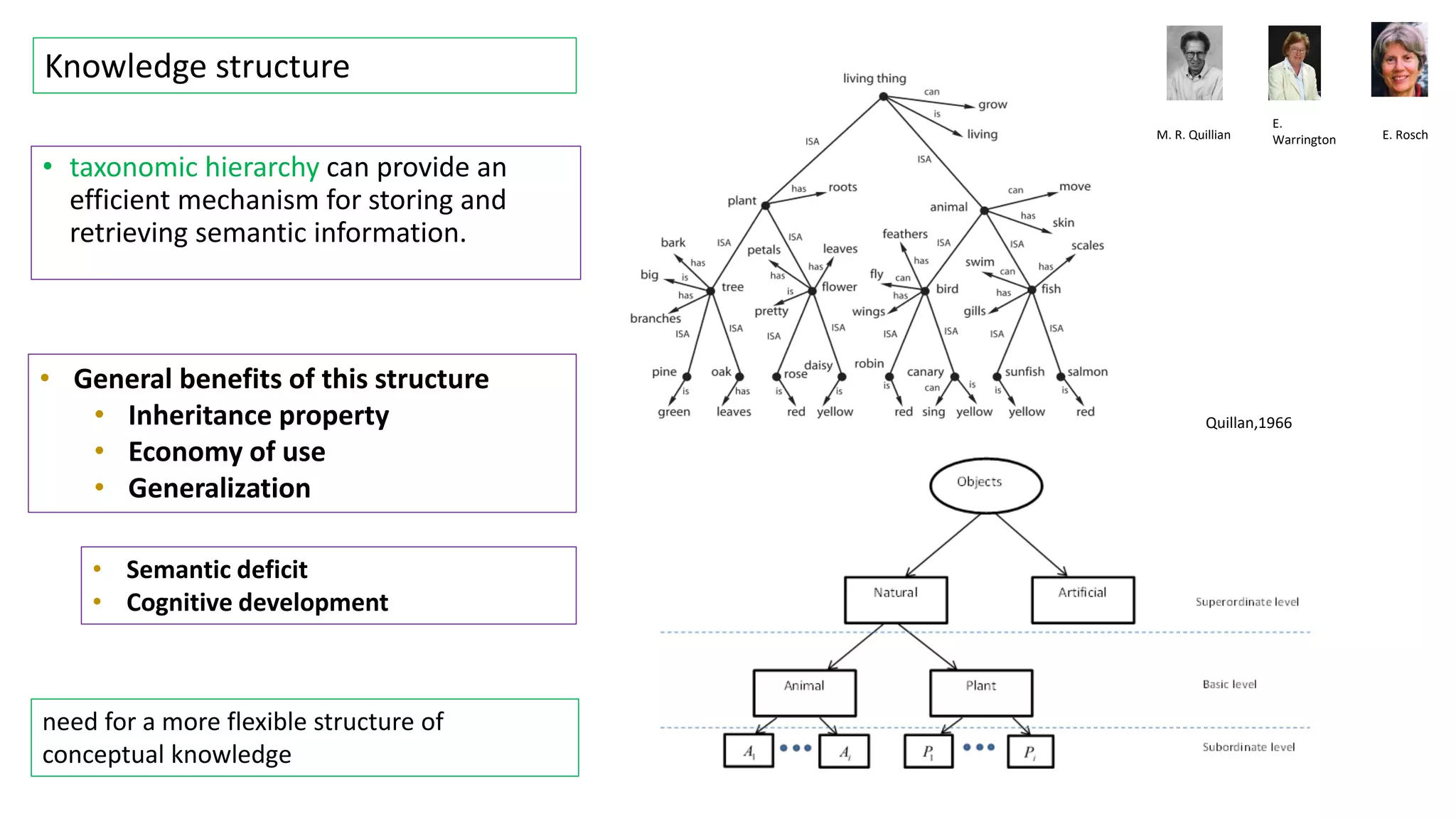

Shape descriptors: moment, profile an projection shape

descriptors

0

10

20

30

40

50

boys

girls

Children’s mental object representations: shape-concept

is the dominant strategy

Sadeghi, Z. (2019). Visual Categorization of Objects into Animal and Plant Classes Using Global Shape

Descriptors. arXiv preprint arXiv:1901.11398.](https://image.slidesharecdn.com/perceptionrepresentation-230630000941-4d9bdb59/75/Perception-representation-structure-and-recognition-4-2048.jpg)
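The projection and profile descriptors named on this slide can be illustrated with a minimal sketch. It is not the implementation from the cited paper: the function name `shape_descriptors`, the dictionary keys, and the exact definitions (projections as foreground-pixel counts per row or column, profiles as the distance from each image border to the first foreground pixel) are assumptions chosen for illustration.

```python
import numpy as np

def shape_descriptors(mask):
    """Compute simple projection and profile descriptors of a binary silhouette.

    Assumed definitions (illustrative, not taken from the cited paper):
    - projection: number of foreground pixels in each row / column
    - profile: distance from a border to the first foreground pixel;
      lines with no foreground get the full image width / height
    """
    mask = np.asarray(mask, dtype=bool)
    n_rows, n_cols = mask.shape
    row_has_fg = mask.any(axis=1)
    col_has_fg = mask.any(axis=0)

    return {
        # Foreground count per row and per column.
        "horizontal_projection": mask.sum(axis=1),
        "vertical_projection": mask.sum(axis=0),
        # argmax on a boolean array returns the index of the first True.
        "left_profile": np.where(row_has_fg, mask.argmax(axis=1), n_cols),
        "right_profile": np.where(row_has_fg, mask[:, ::-1].argmax(axis=1), n_cols),
        "top_profile": np.where(col_has_fg, mask.argmax(axis=0), n_rows),
        "bottom_profile": np.where(col_has_fg, mask[::-1, :].argmax(axis=0), n_rows),
    }

# Toy silhouette: a 2x3 rectangle inside a 4x5 image.
toy_mask = np.zeros((4, 5), dtype=bool)
toy_mask[1:3, 1:4] = True
toy_descriptors = shape_descriptors(toy_mask)
```

Each descriptor is a one-dimensional signature of the silhouette, so it can be fed to a classifier on its own or concatenated with others, as the `[h,v,t,b]` column in the slide's table suggests.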

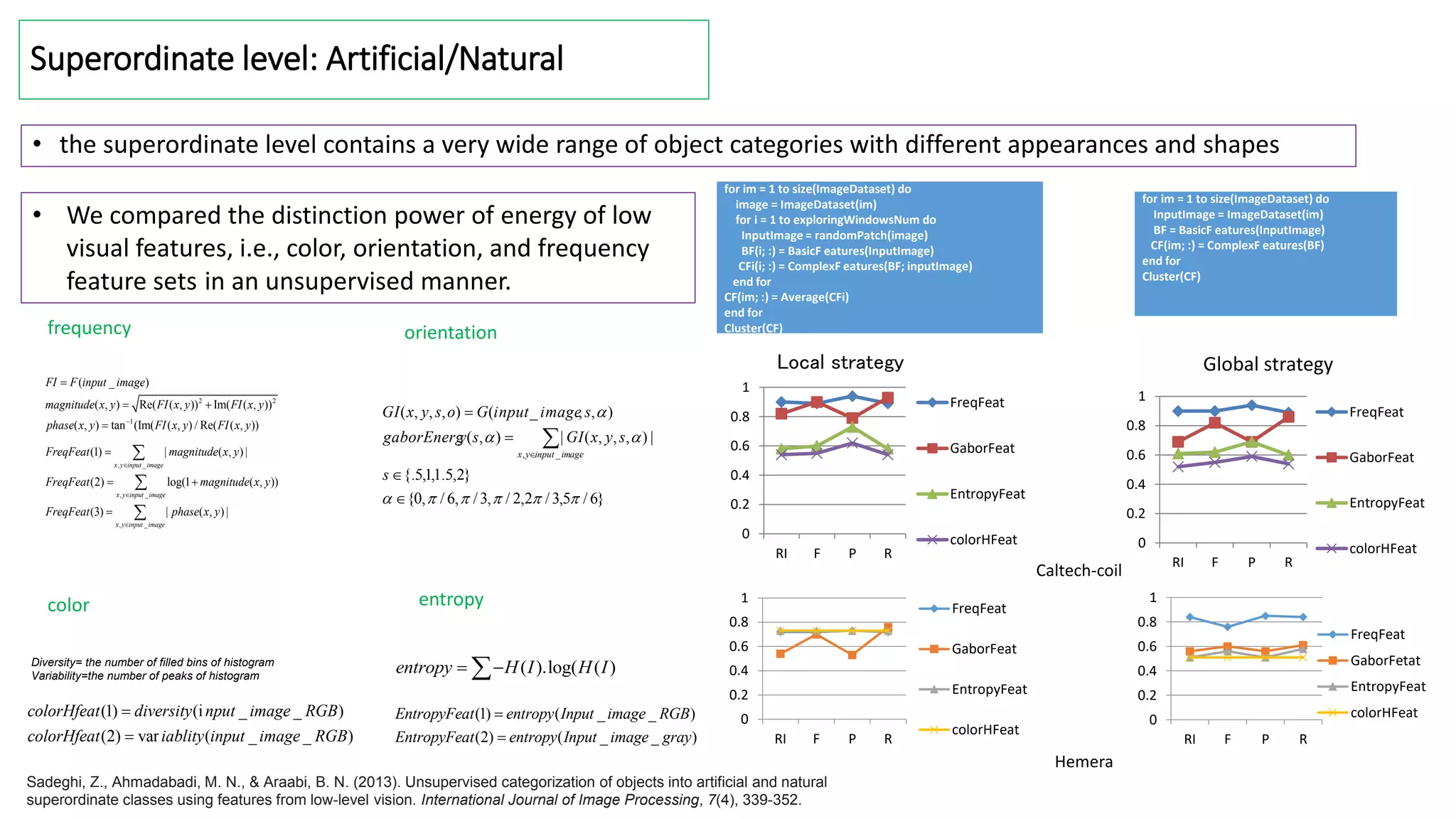

![Utilizing hierarchical information](https://image.slidesharecdn.com/perceptionrepresentation-230630000941-4d9bdb59/75/Perception-representation-structure-and-recognition-5-2048.jpg)

We defined a dictionary of features based on a PCA approach in two modes: flat and hierarchical subspaces. Our aim was to point out the effect of contextual prior information in improving recognition accuracy.

- Figure panels: per-category bar charts comparing the flat and conceptual modes, using the total and half the number of eigenvectors (#nt=20, #nt=18, #nt=30, #nt=24).
- Categories: cougar-body, elephant, flamingo, gerenuk, pigeon, rooster (animals); bonsai, joshua tree, lotus, strawberry, sunflower, water-lilly (plants).
- Pipeline diagram: eigenspaces (Animal subspace, Plant subspace), flat mode and hierarchical mode, SVM classification.

$$
\mathrm{subFeat}(S) =
\begin{cases}
u_{AP}^{T} S & \text{flat mode} \\
\left[\, u_{A}^{T} S,\; u_{P}^{T} S \,\right] & \text{conceptual mode}
\end{cases}
$$

Sadeghi, Z., Araabi, B. N., & Ahmadabadi, M. N. (2015). A computational approach towards visual object recognition at taxonomic levels of concepts. Computational Intelligence and Neuroscience, 2015, 72-72.
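The difference between the flat and hierarchical (conceptual) modes described on this slide can be sketched as follows. This is a hedged illustration rather than the authors' code: the helper names (`pca_basis`, `flat_features`, `conceptual_features`), the mean-centering step, the random toy data, and the choice of `k` are all assumptions. The resulting feature matrices would then be passed to a classifier such as the SVM the slide mentions.

```python
import numpy as np

def pca_basis(X, k):
    """Return the mean of X and its top-k principal directions as columns.

    X has shape (n_samples, n_dims). Centering is an assumption of this sketch.
    """
    mean = X.mean(axis=0)
    # Rows of vt are the principal directions, ordered by singular value.
    _, _, vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, vt[:k].T

def flat_features(X_train, X, k):
    """Flat mode: project onto one eigenspace learned from all training data."""
    mean, u = pca_basis(X_train, k)
    return (X - mean) @ u

def conceptual_features(X_animal, X_plant, X, k):
    """Conceptual mode: concatenate projections onto the Animal and Plant subspaces."""
    mean_a, u_a = pca_basis(X_animal, k)
    mean_p, u_p = pca_basis(X_plant, k)
    return np.hstack([(X - mean_a) @ u_a, (X - mean_p) @ u_p])

# Hypothetical toy data standing in for image feature vectors.
rng = np.random.default_rng(0)
X_animal = rng.normal(loc=0.0, size=(30, 16))
X_plant = rng.normal(loc=1.0, size=(30, 16))
X_all = np.vstack([X_animal, X_plant])

k = 4
flat = flat_features(X_all, X_all, k)                               # shape (60, 4)
conceptual = conceptual_features(X_animal, X_plant, X_all, k)       # shape (60, 8)
```

In this sketch the conceptual mode carries the superordinate (animal vs. plant) grouping into the features by learning a separate subspace for each group, which is one way to read the "contextual prior information" the slide refers to.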