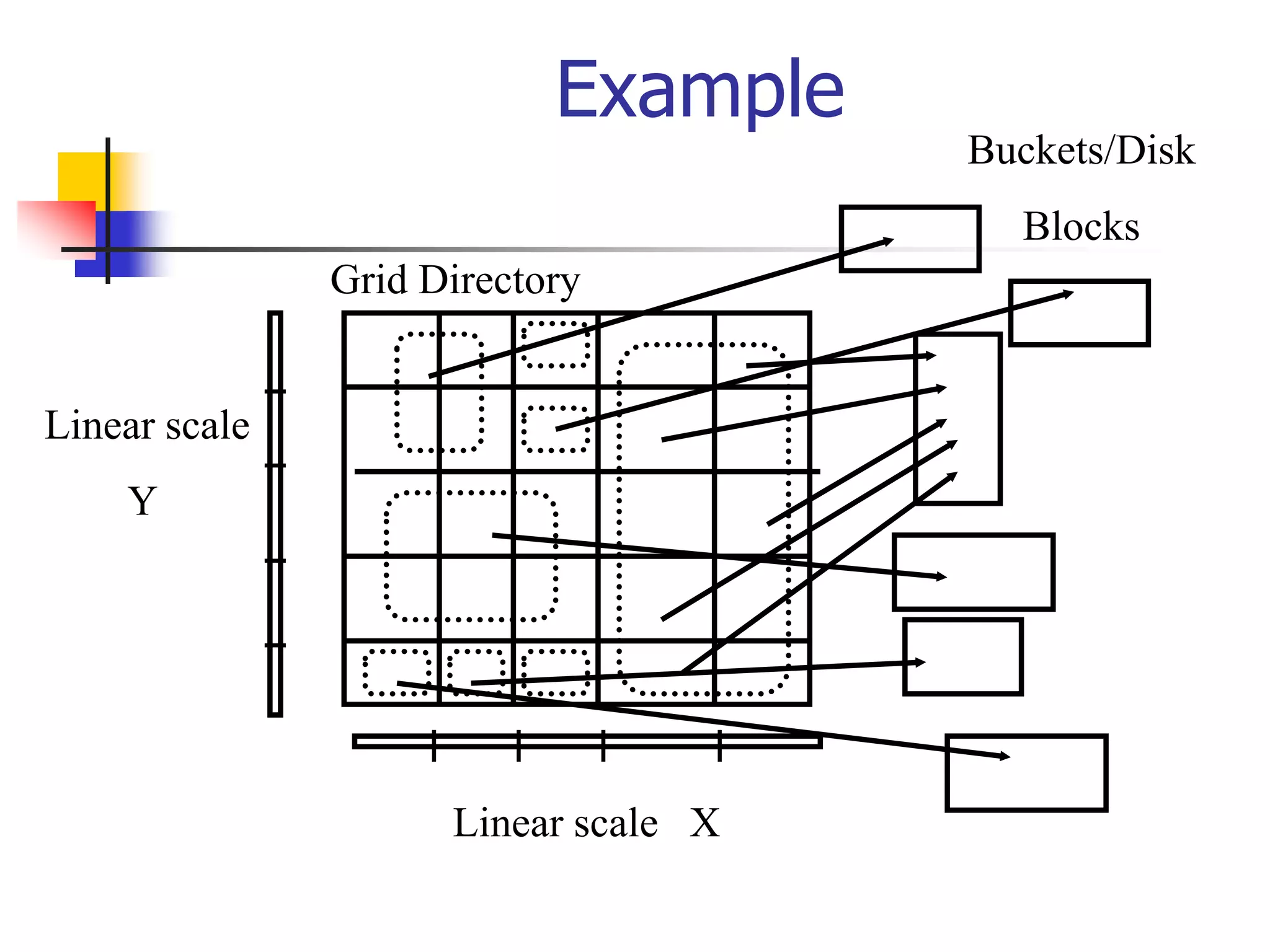

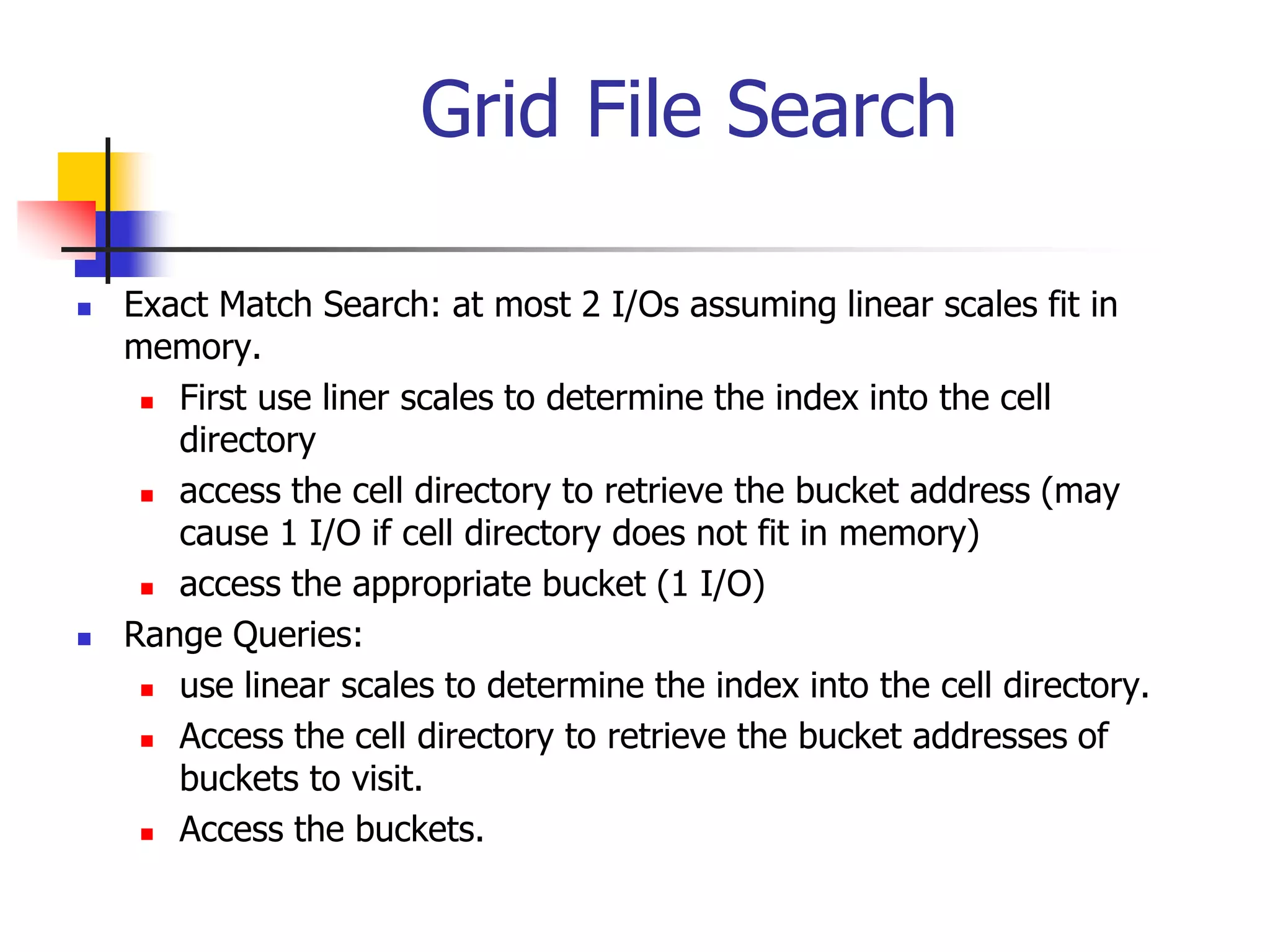

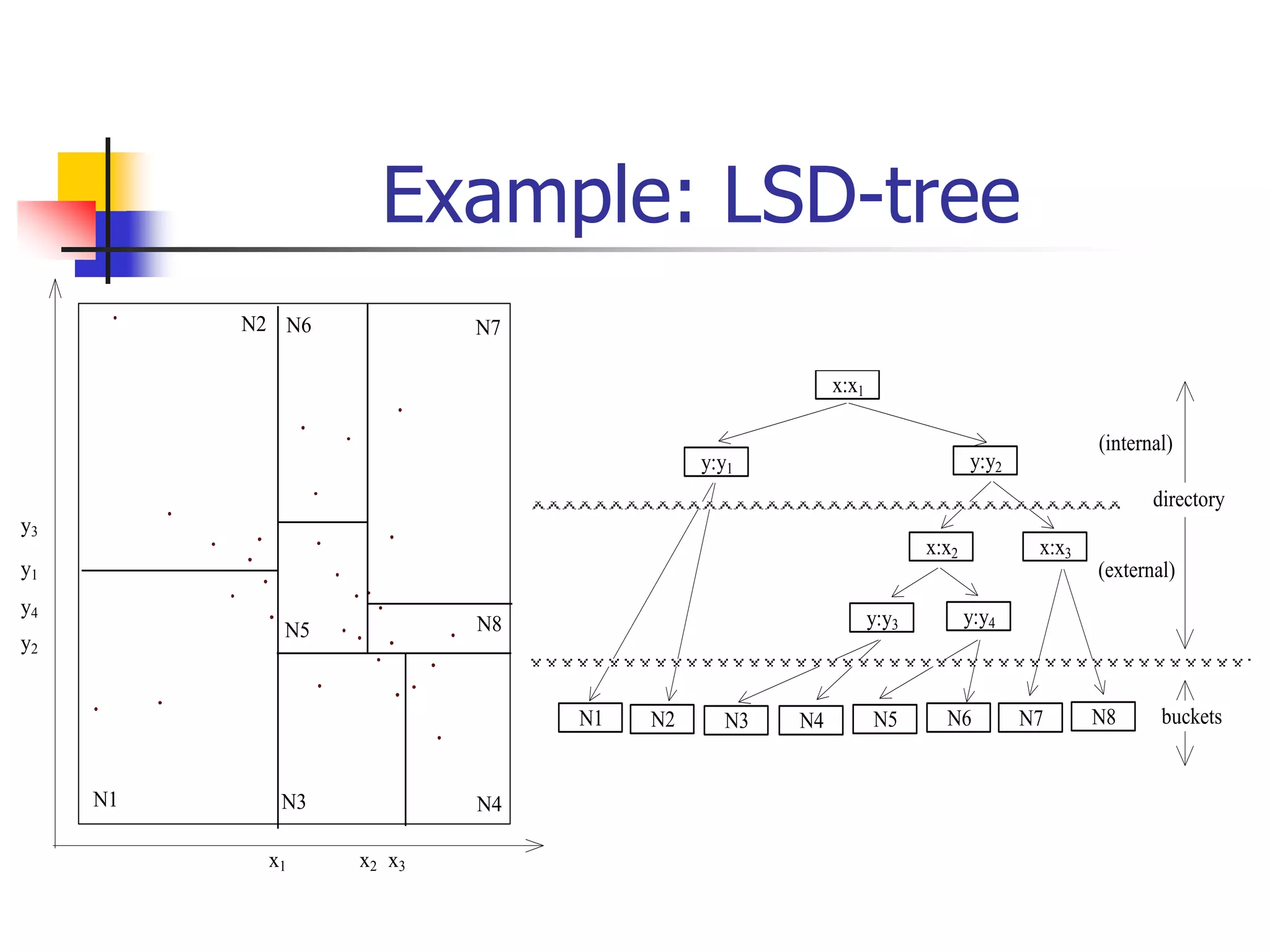

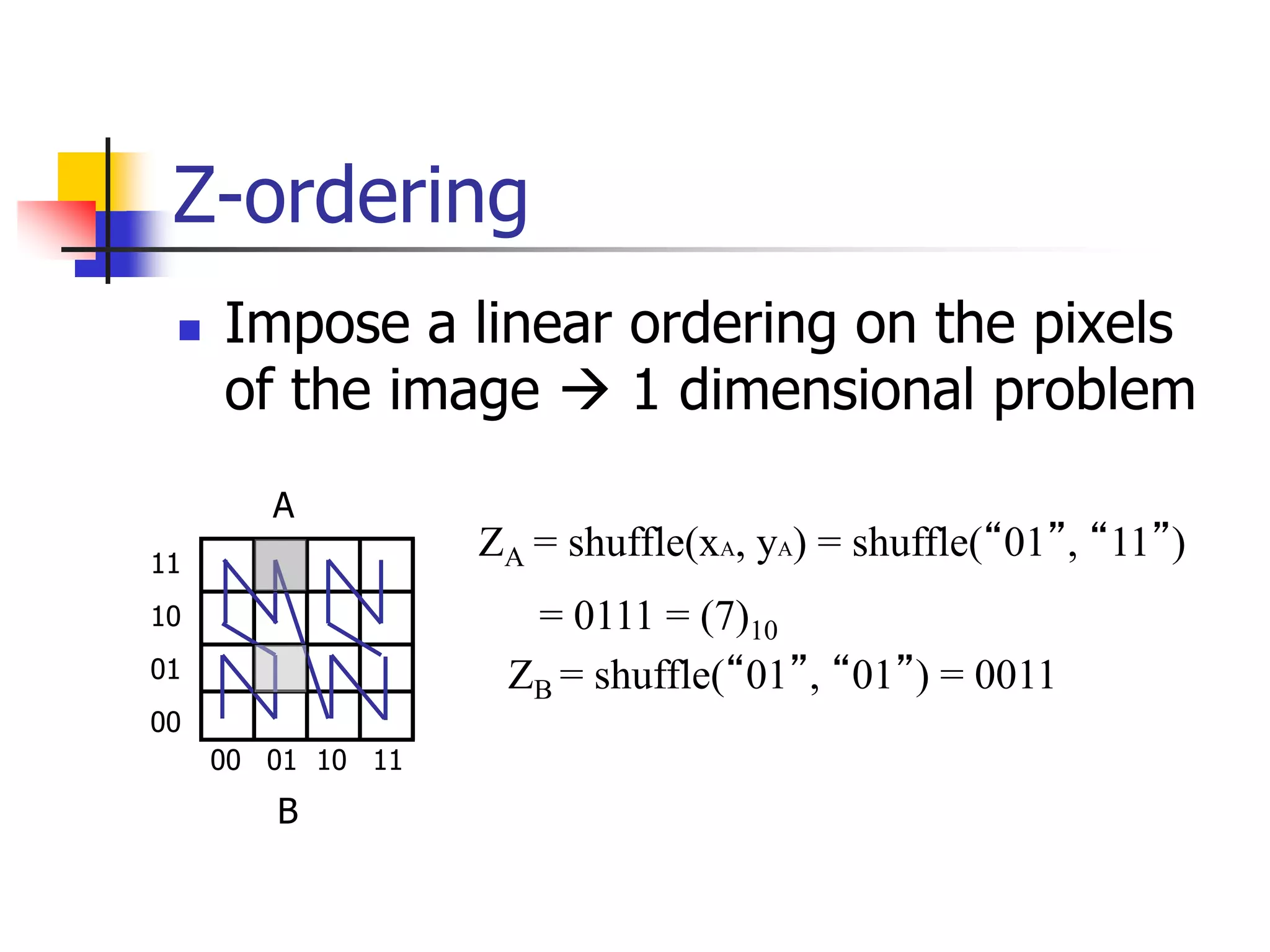

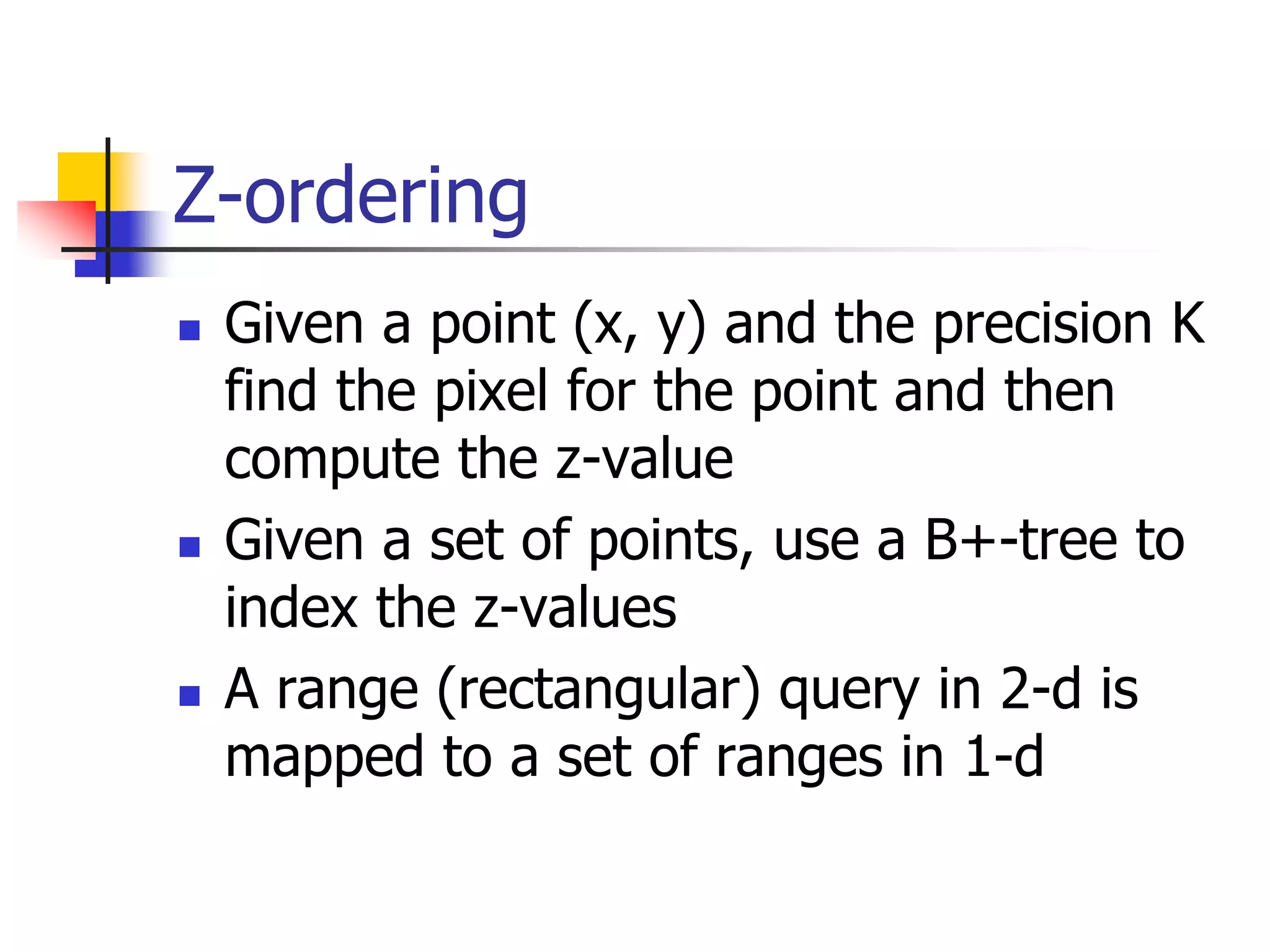

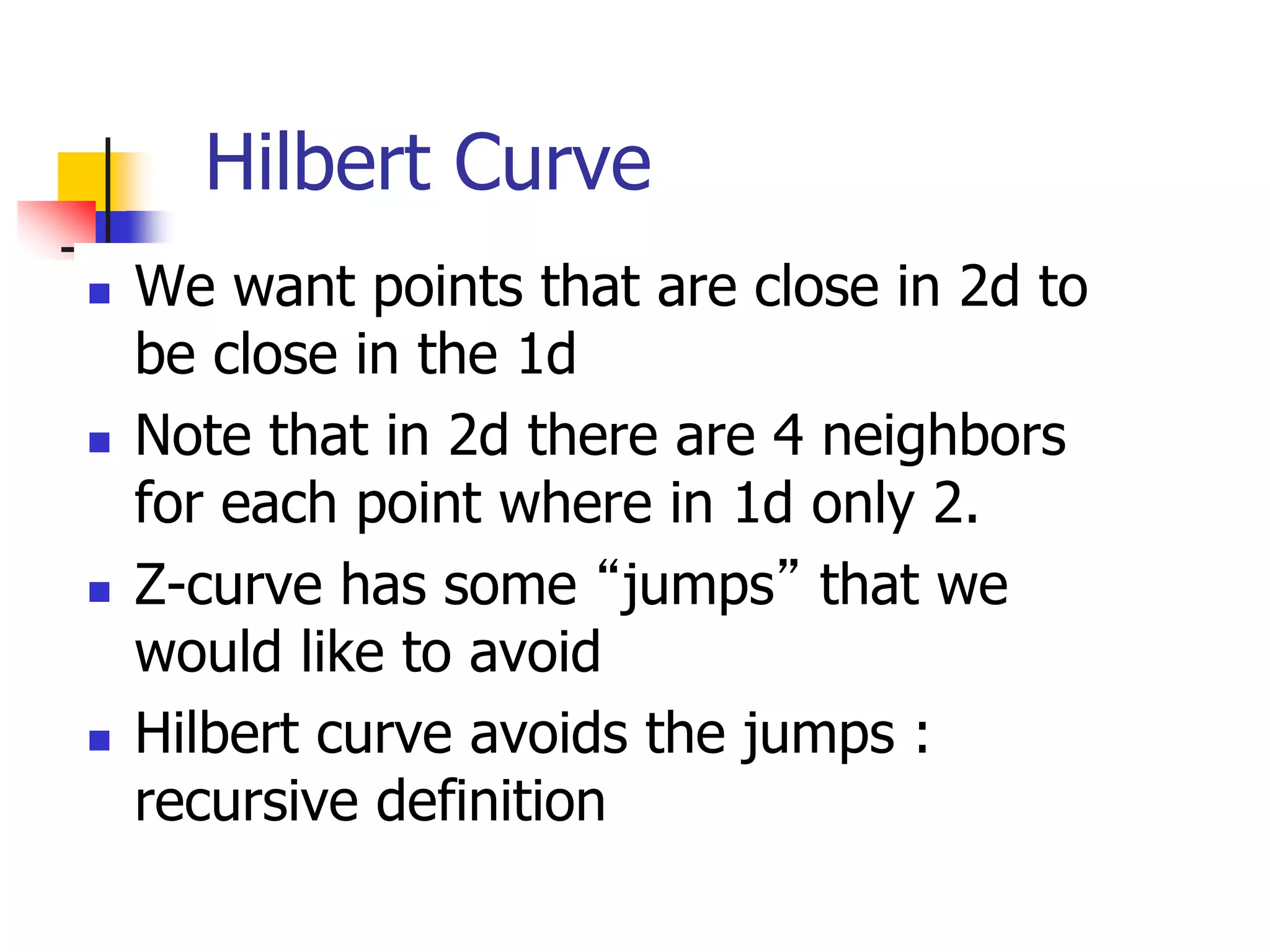

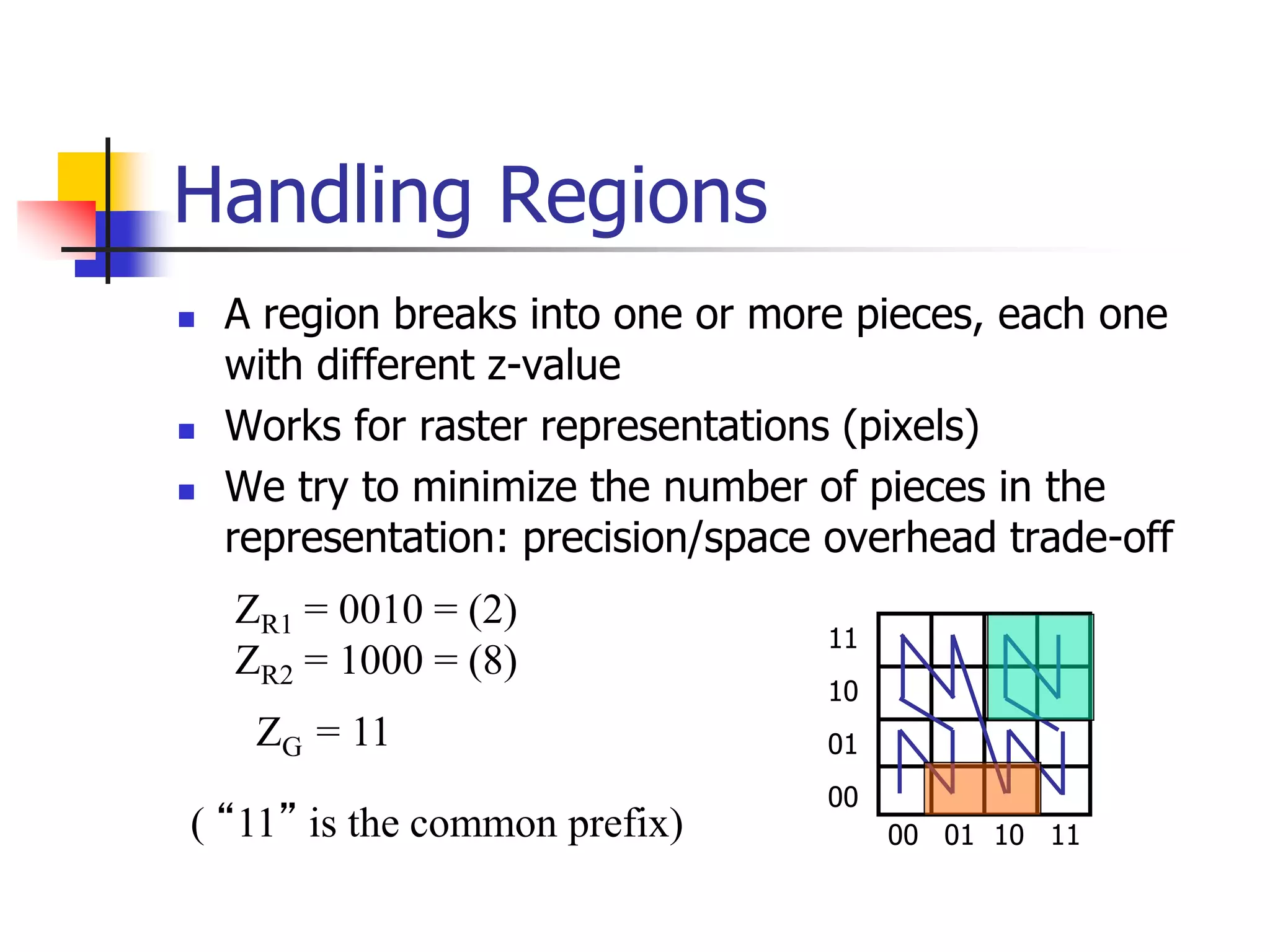

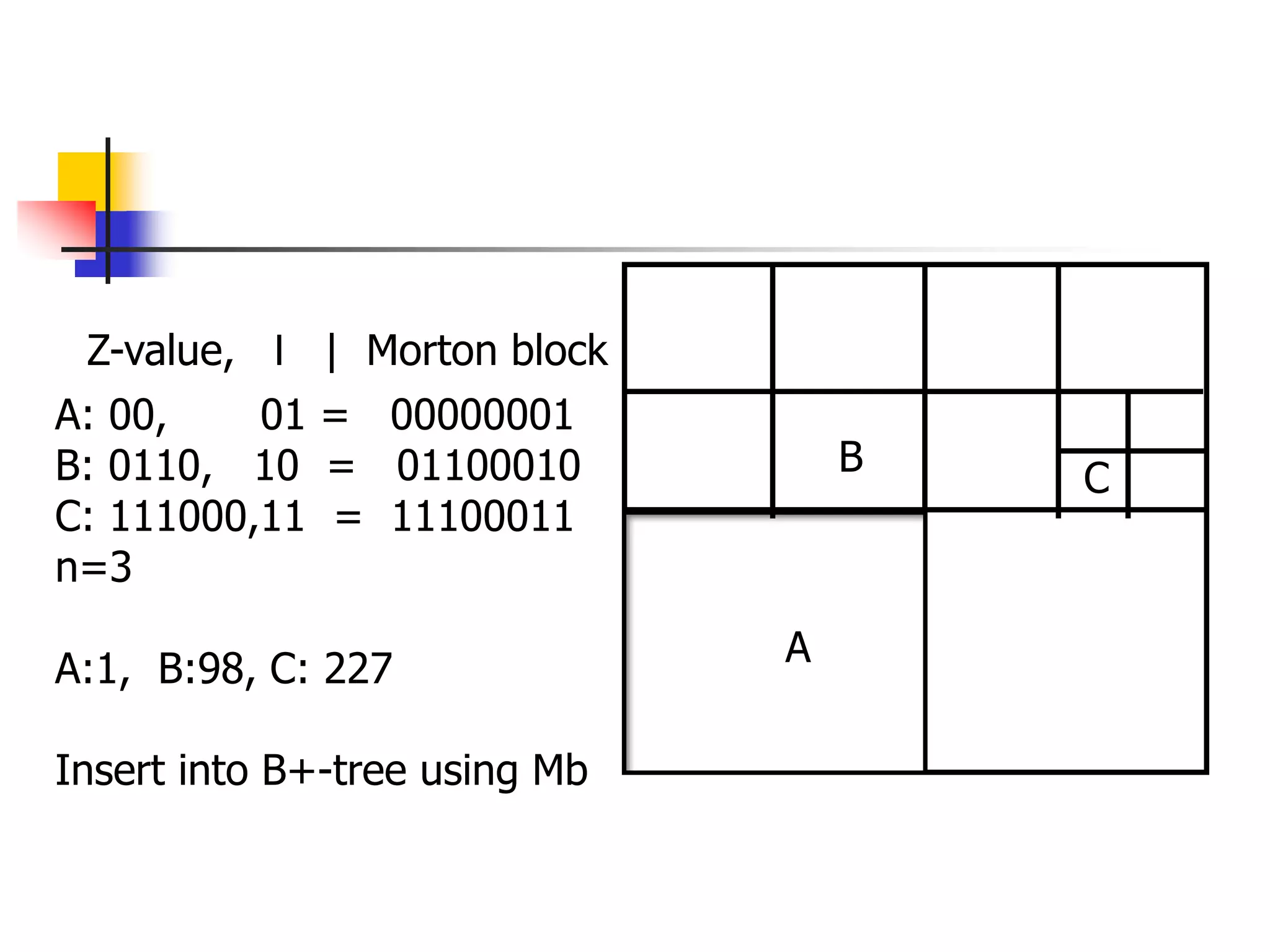

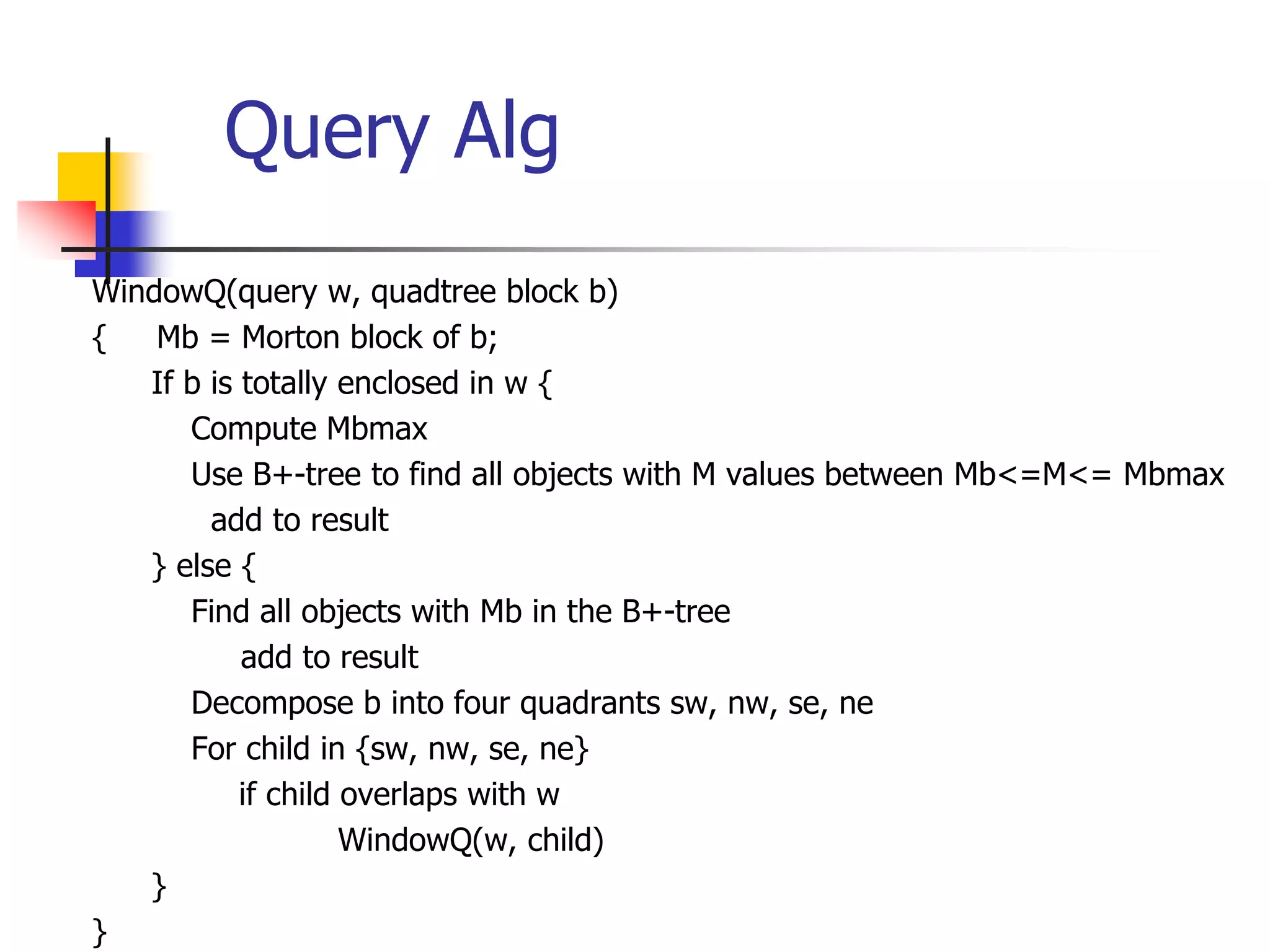

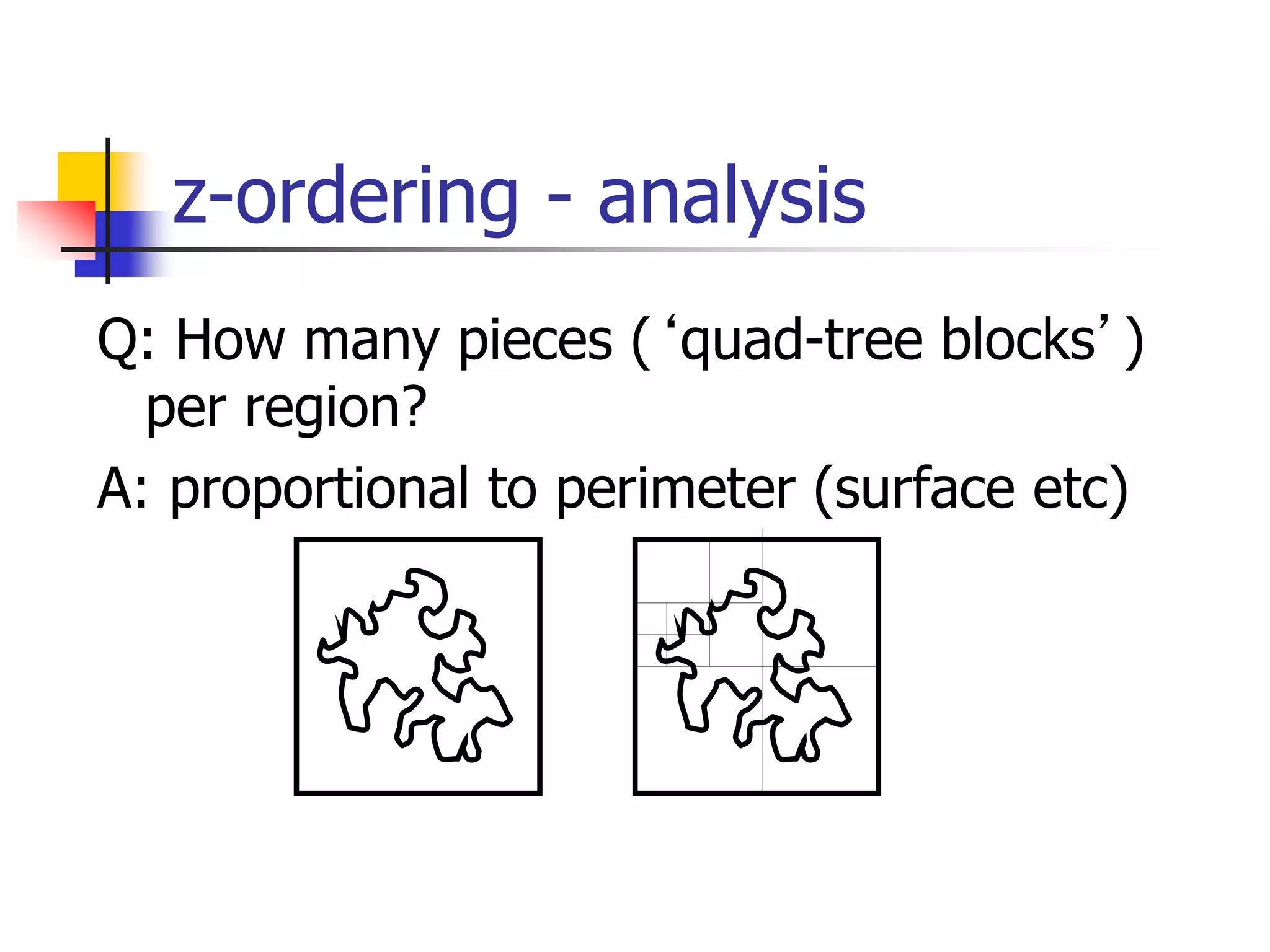

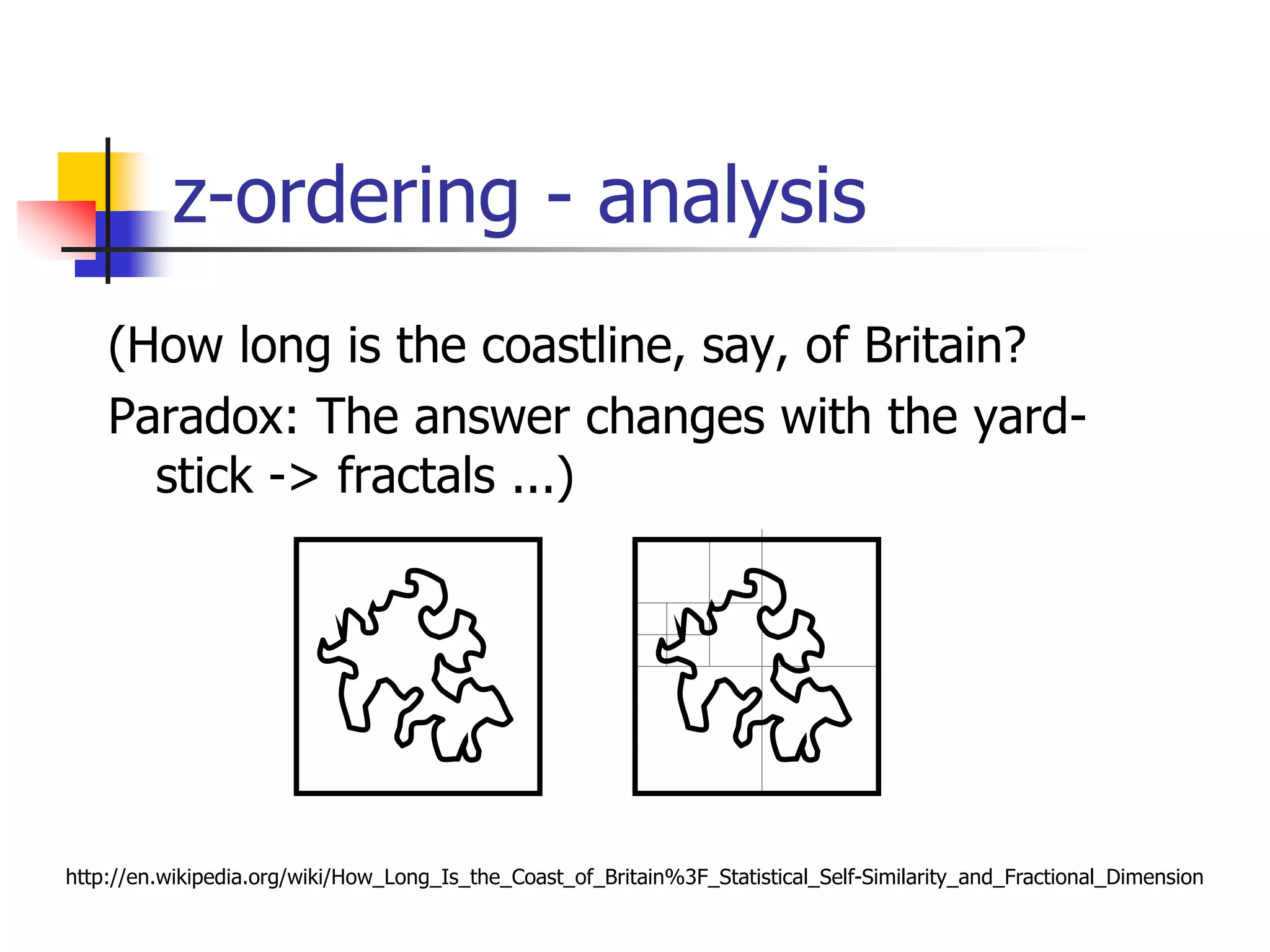

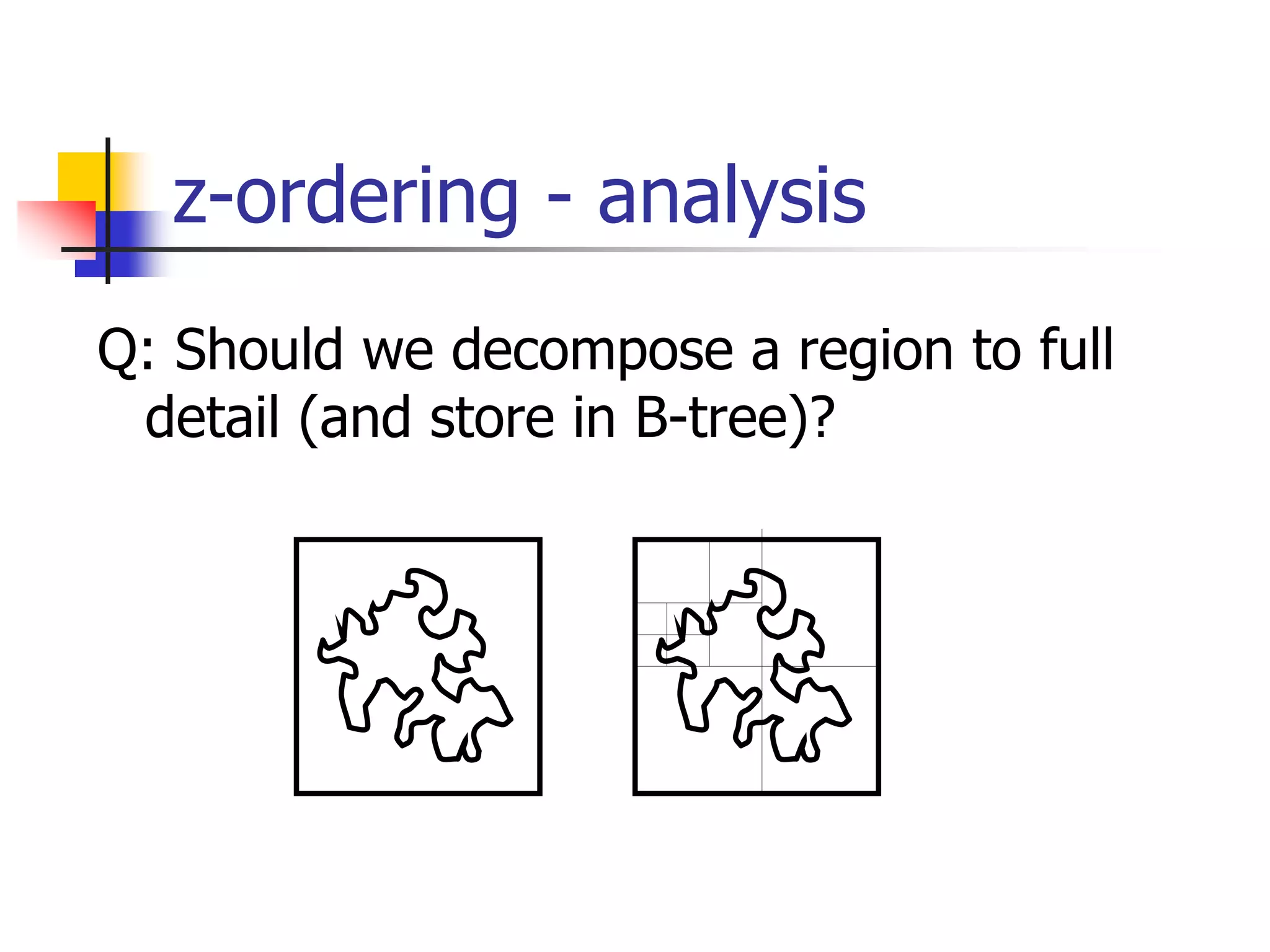

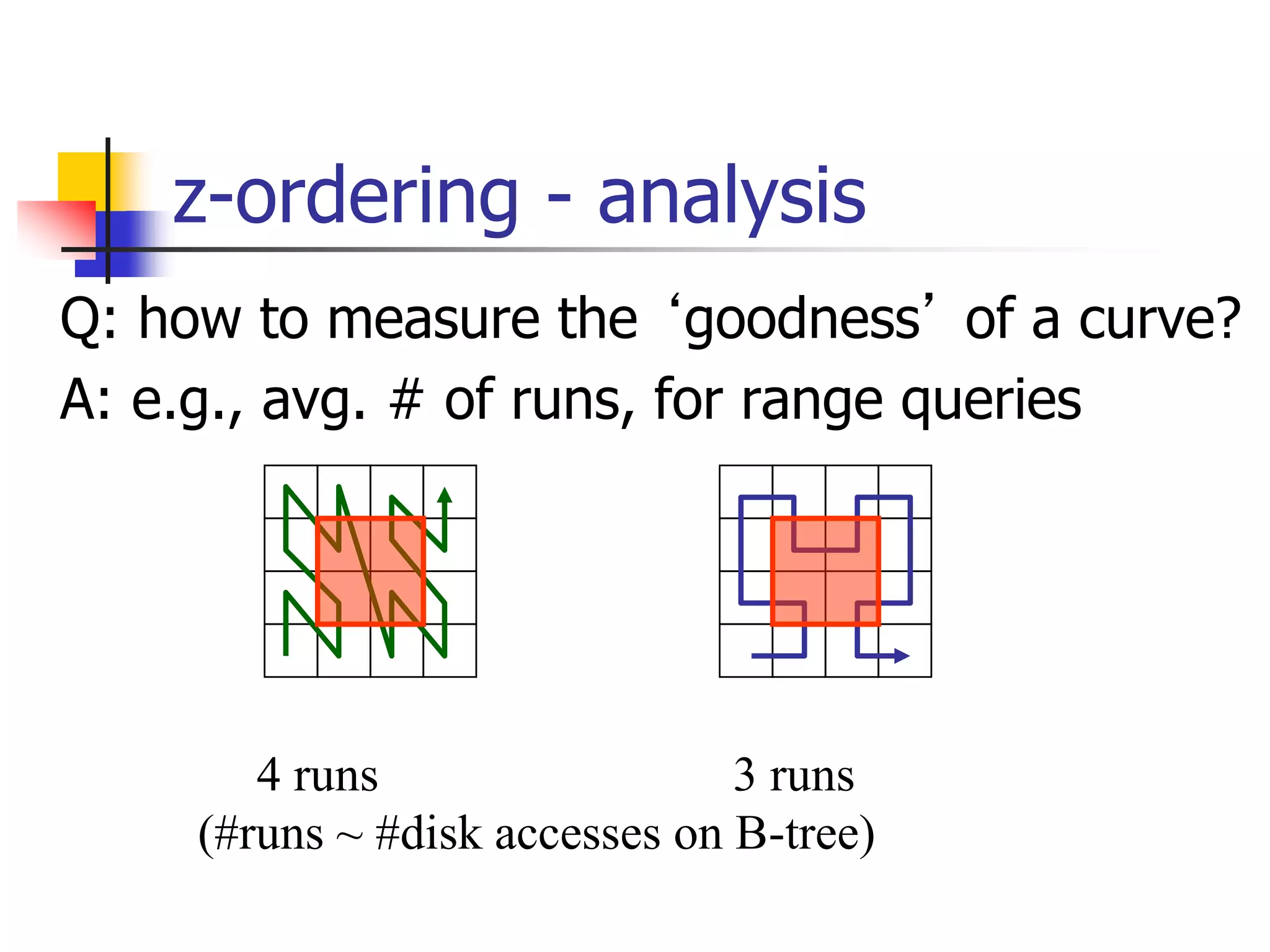

This document discusses spatial indexing techniques for multidimensional point data. It describes grid files which partition space into grid cells, each associated with a disk page. It also covers tree-based methods like the kd-tree which partitions space recursively based on dimension values. Z-ordering and space-filling curves like the Hilbert curve are presented as mapping multidimensional points to a linear ordering to enable range queries on a B-tree. The document compares techniques and analyzes properties like the number of disk accesses for range queries.

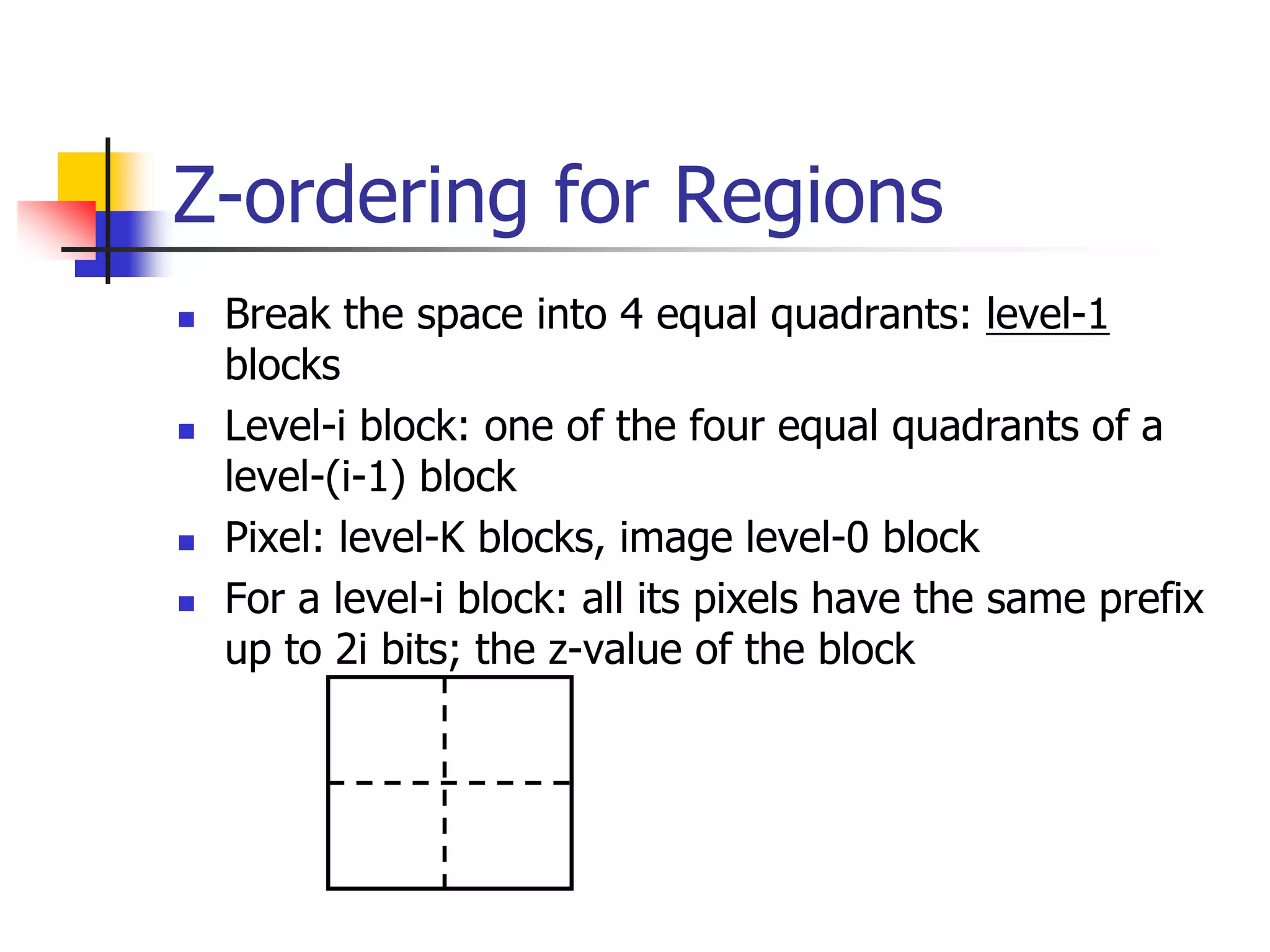

![Queries

Find the z-values that contained in the

query and then the ranges

00 01 10 11

00

01

10

11

QA range [4, 7]

QA

QB

QB ranges [2,3] and [8,9]](https://image.slidesharecdn.com/pam-220714041542-27bcdc38/75/PAM-ppt-35-2048.jpg)

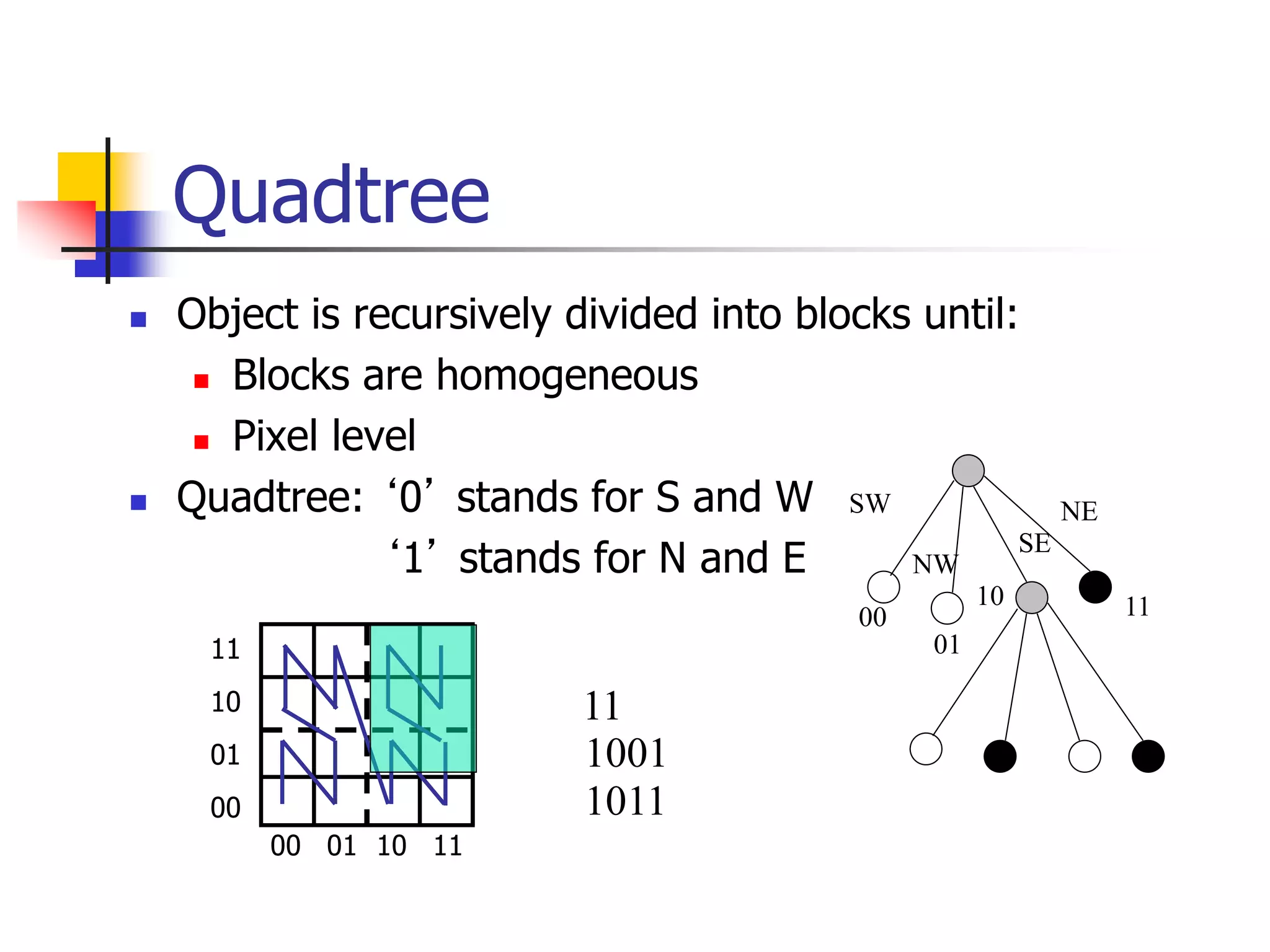

![Hilbert Curve- example

It has been shown that in general Hilbert is better

than the other space filling curves for retrieval

[Jag90]

Hi (order-i) Hilbert curve for 2ix2i array

H1

H2 ... H(n+1)](https://image.slidesharecdn.com/pam-220714041542-27bcdc38/75/PAM-ppt-37-2048.jpg)

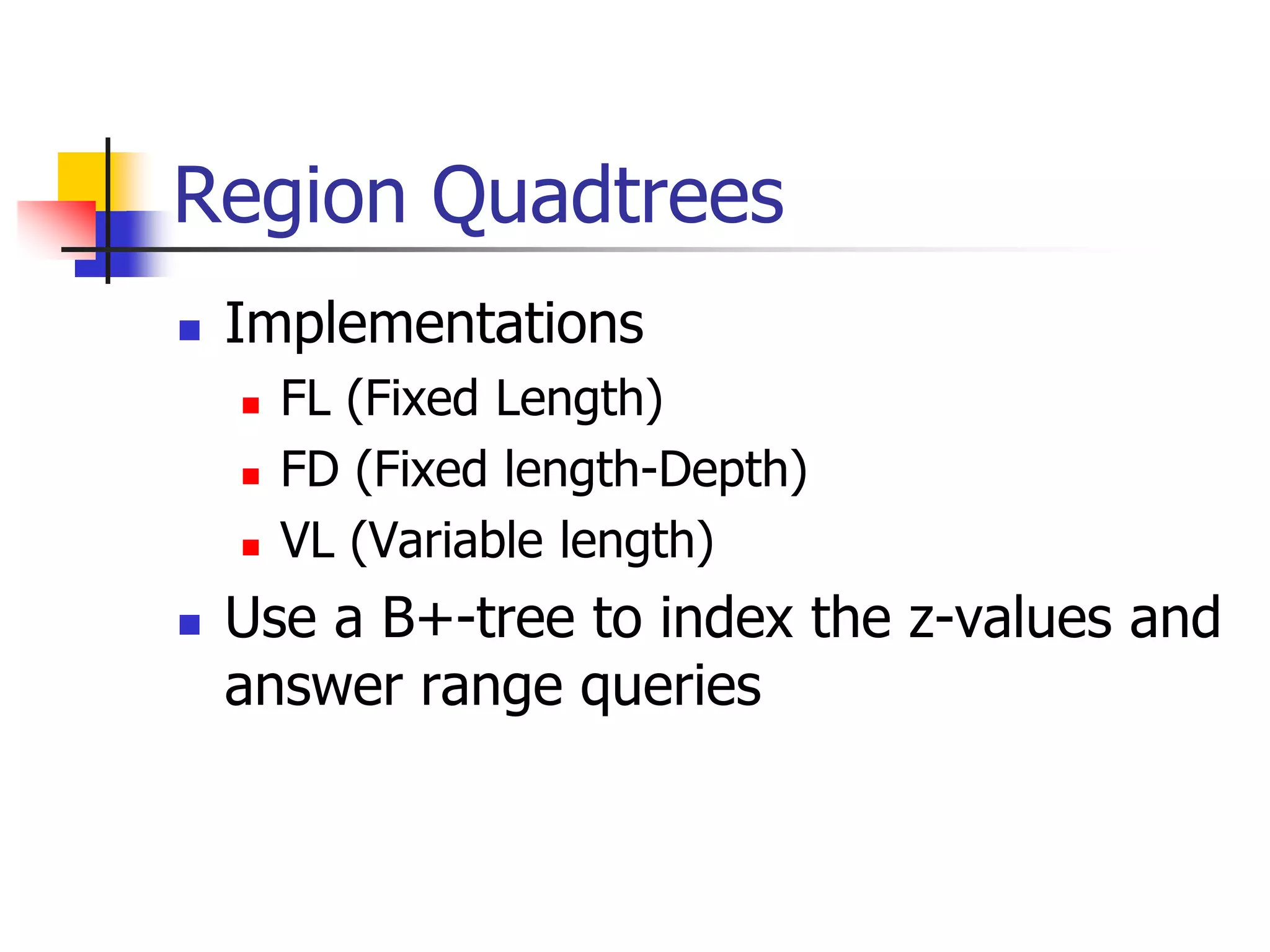

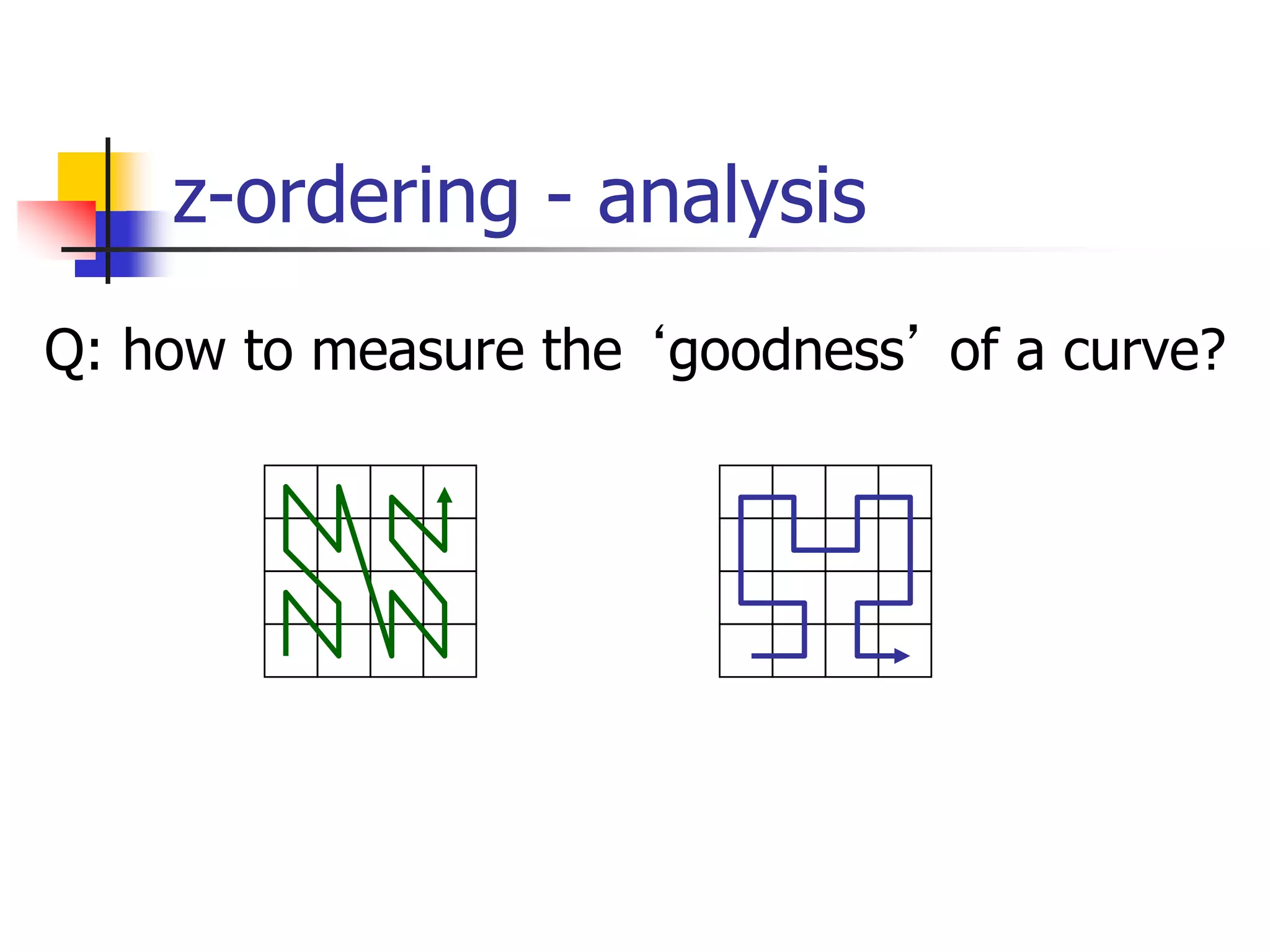

![z-ordering - analysis

Q: Should we decompose a region to full

detail (and store in B-tree)?

A: NO! approximation with 1-5 pieces/z-

values is best [Orenstein90]](https://image.slidesharecdn.com/pam-220714041542-27bcdc38/75/PAM-ppt-49-2048.jpg)

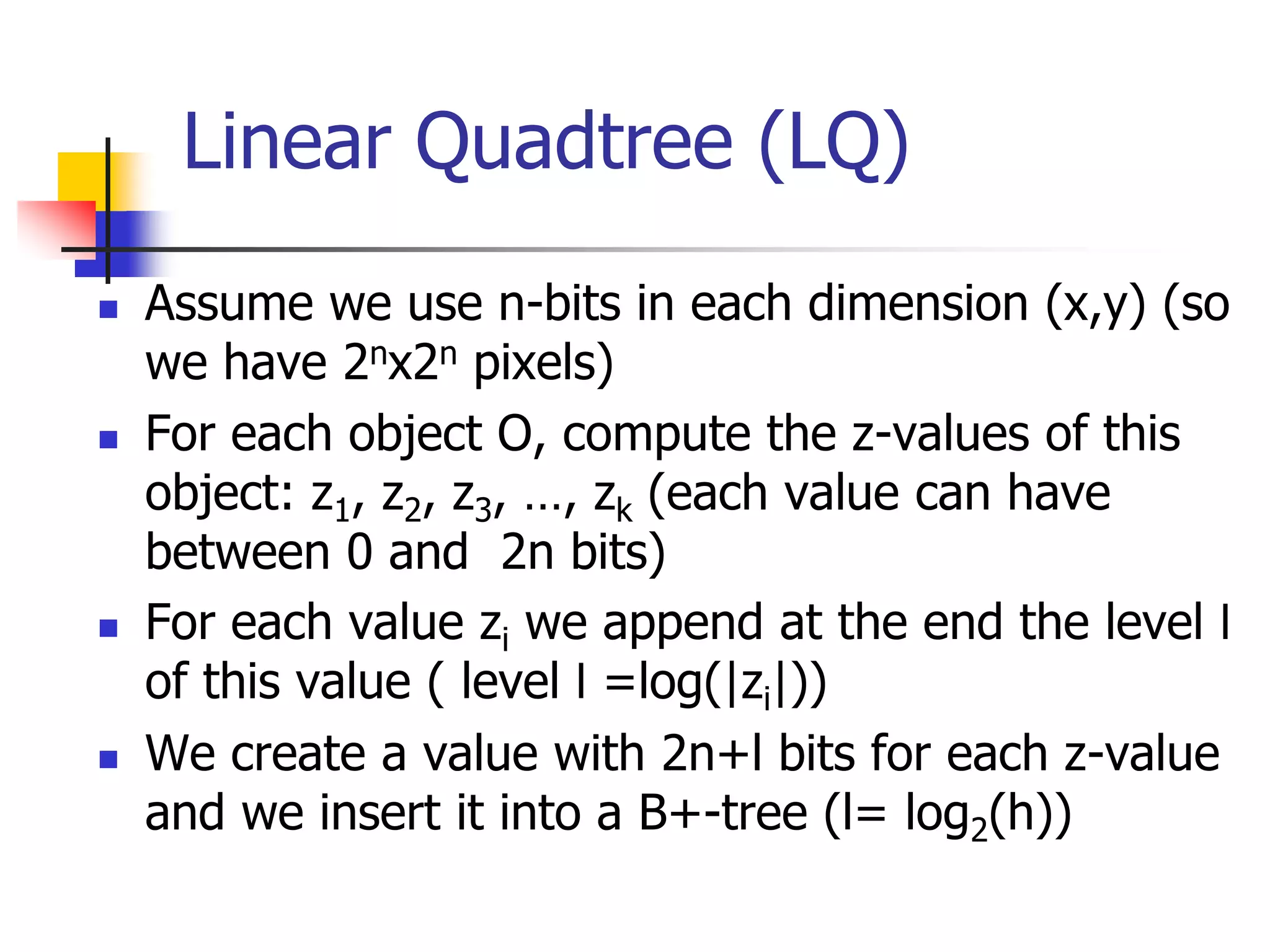

![z-ordering - analysis

Q: So, is Hilbert really better?

A: 27% fewer runs, for 2-d (similar for 3-d)

Q: are there formulas for #runs, #of

quadtree blocks etc?

A: Yes, see a paper by [Jagadish ’90]

H.V. Jagadish. Linear clustering of objects with multiple attributes.

SIGMOD 1990. http://www.cs.ucr.edu/~tsotras/cs236/W15/hilbert-curve.pdf](https://image.slidesharecdn.com/pam-220714041542-27bcdc38/75/PAM-ppt-52-2048.jpg)