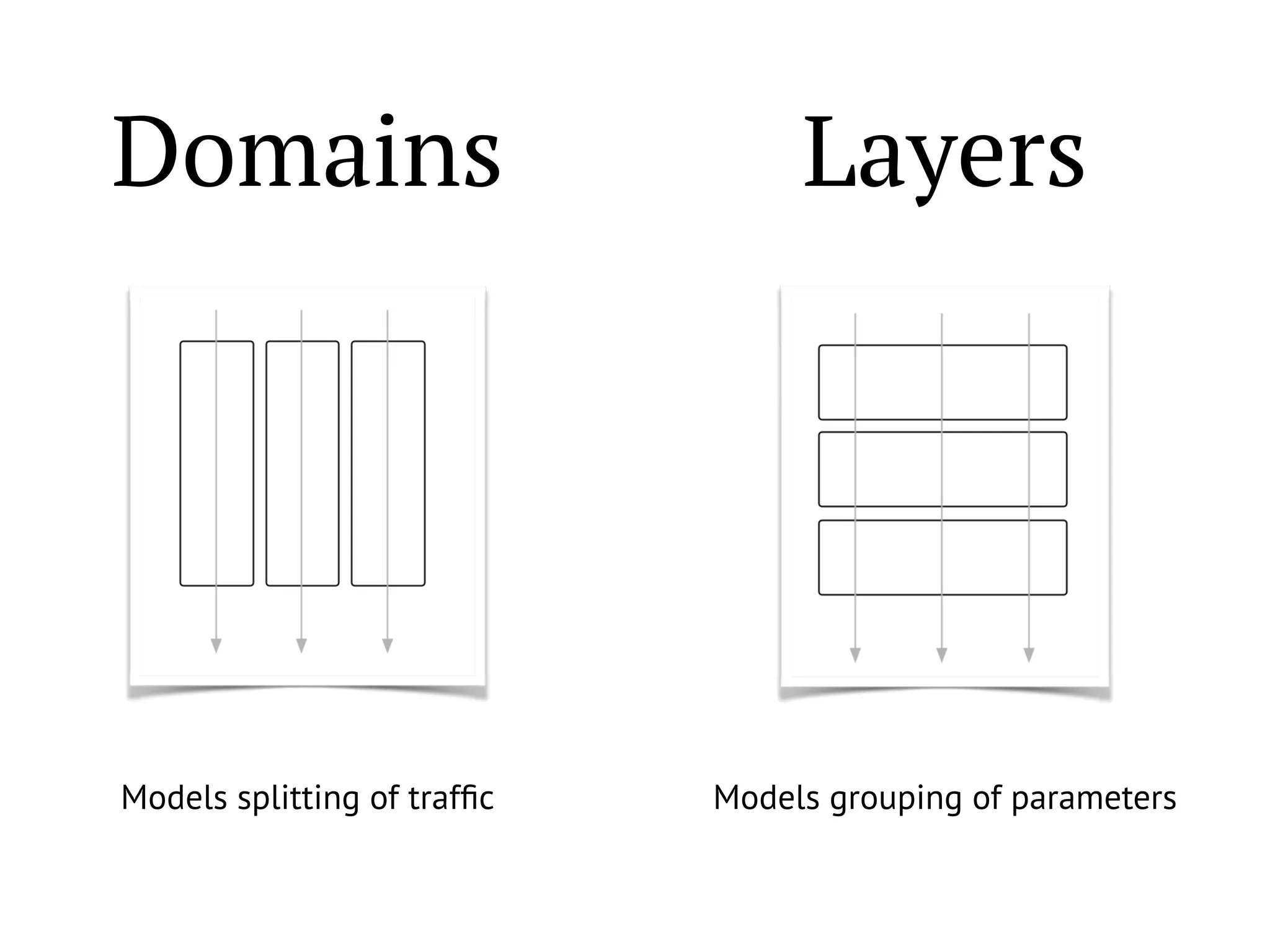

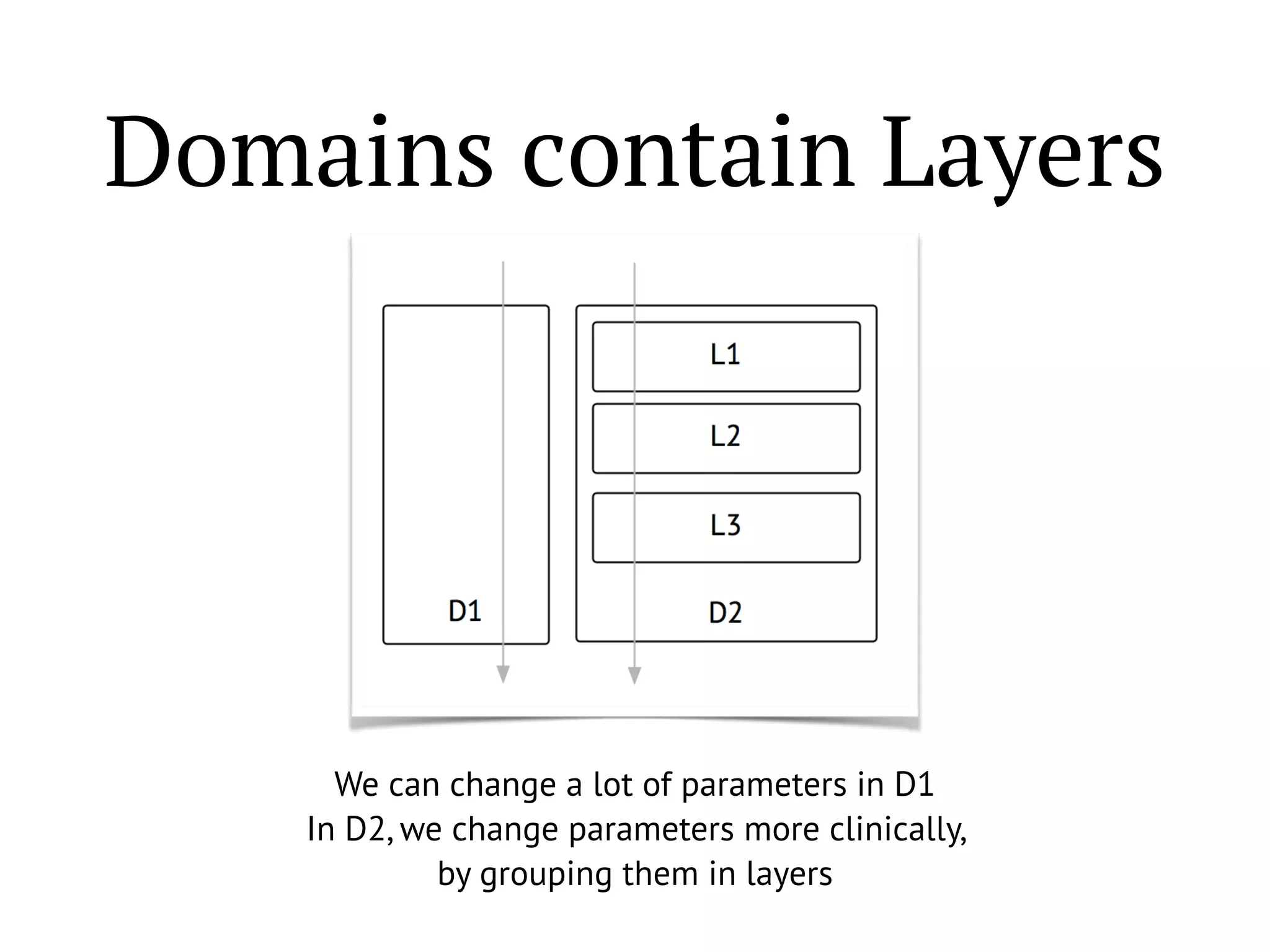

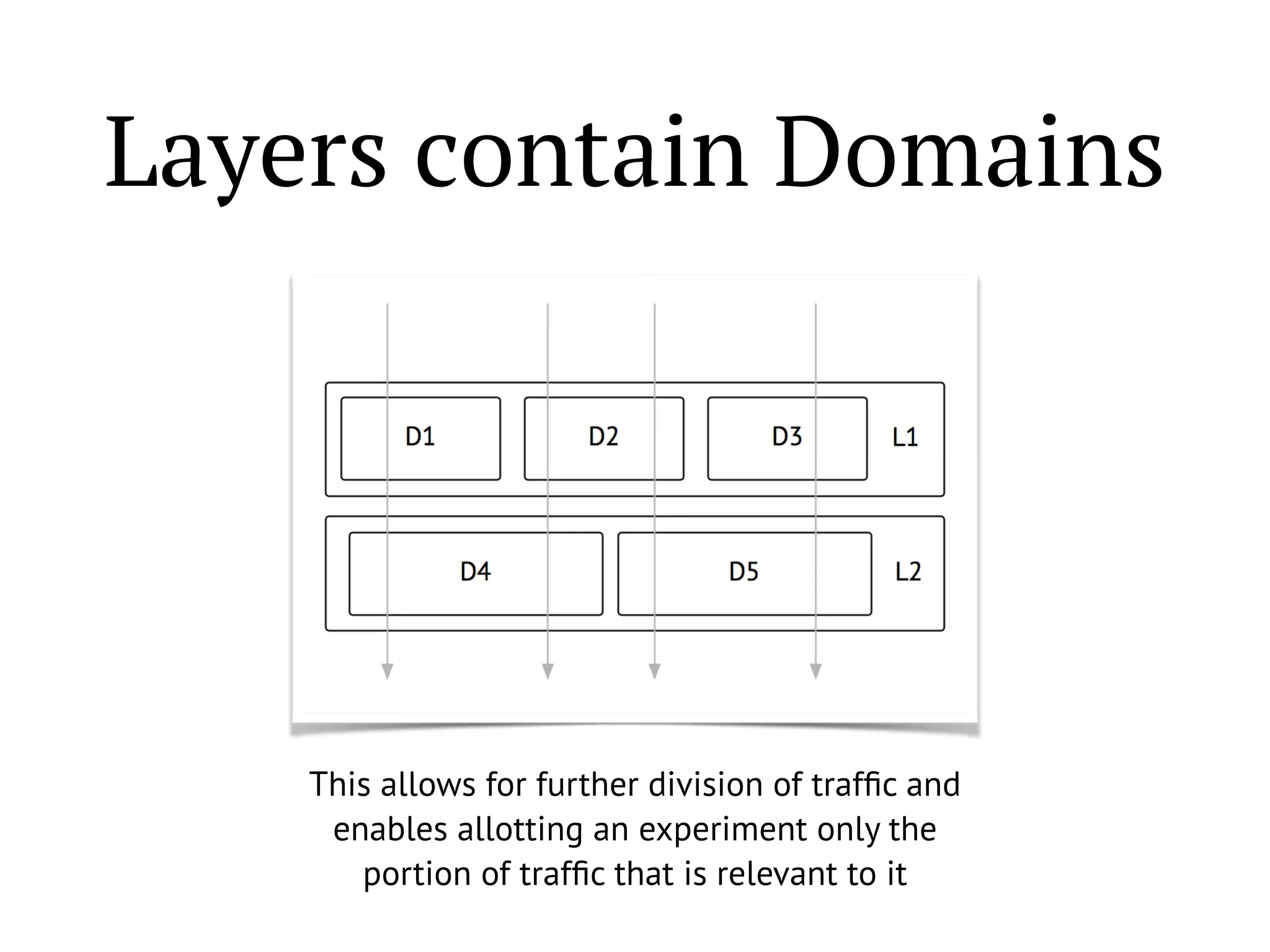

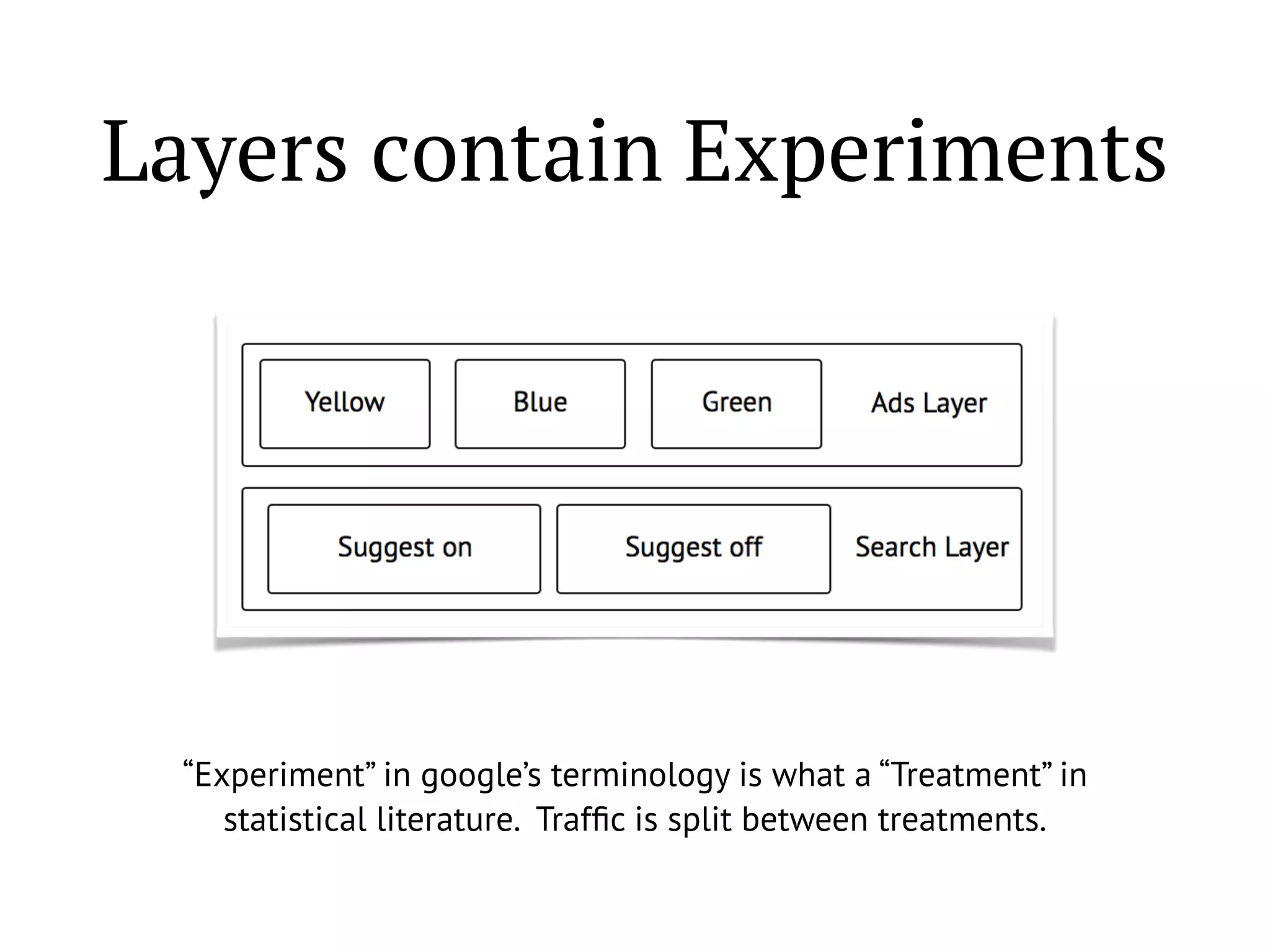

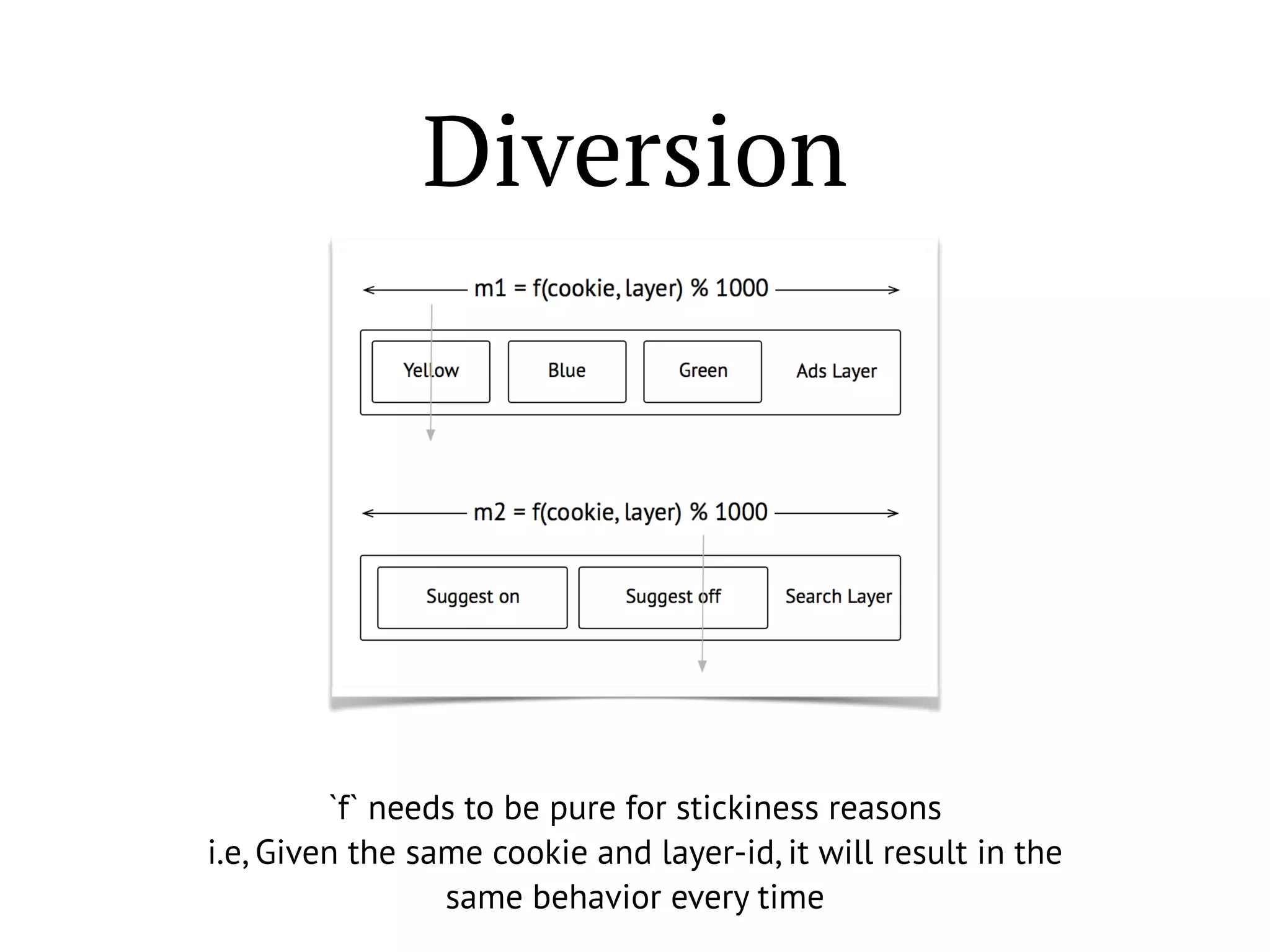

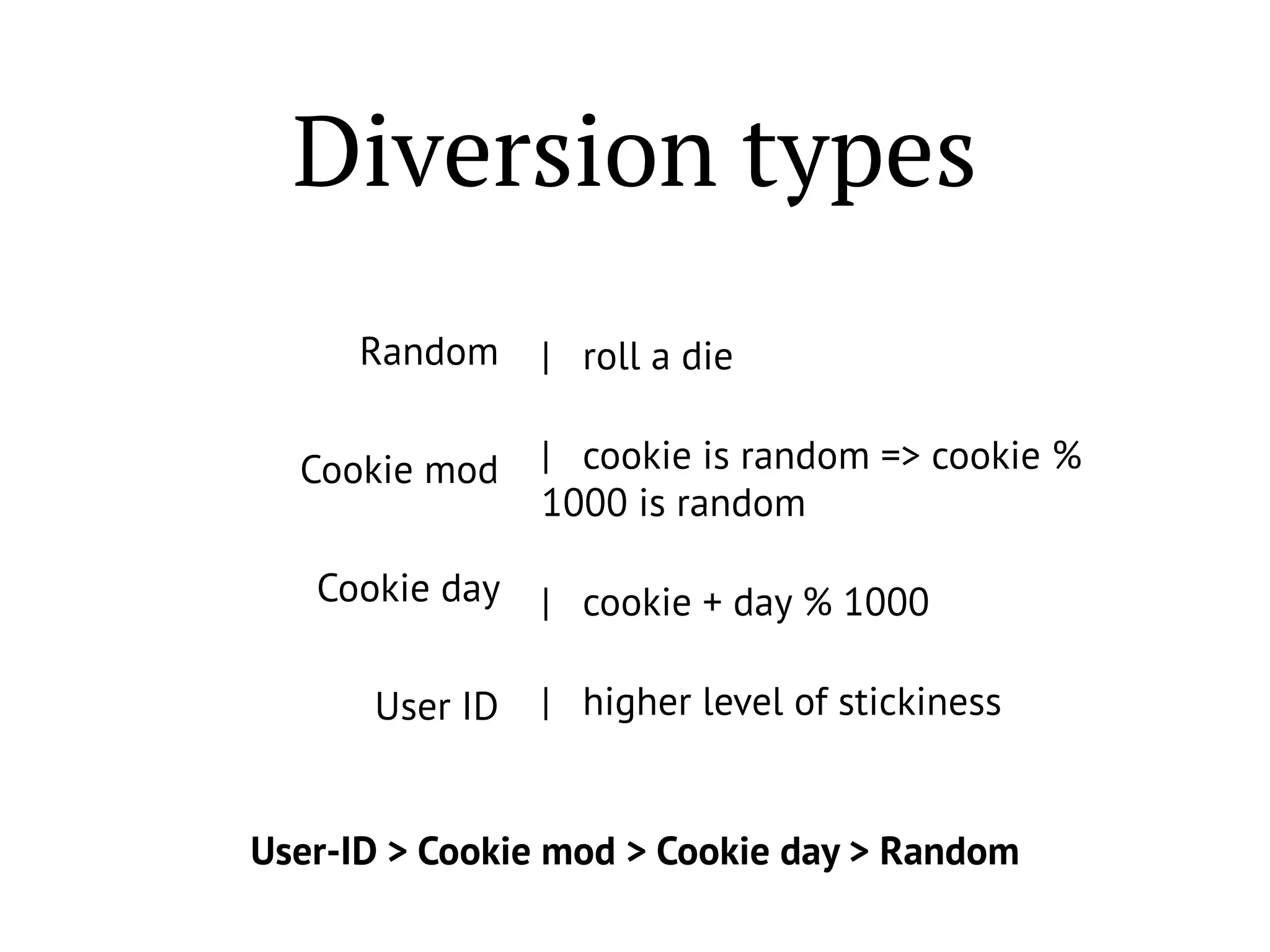

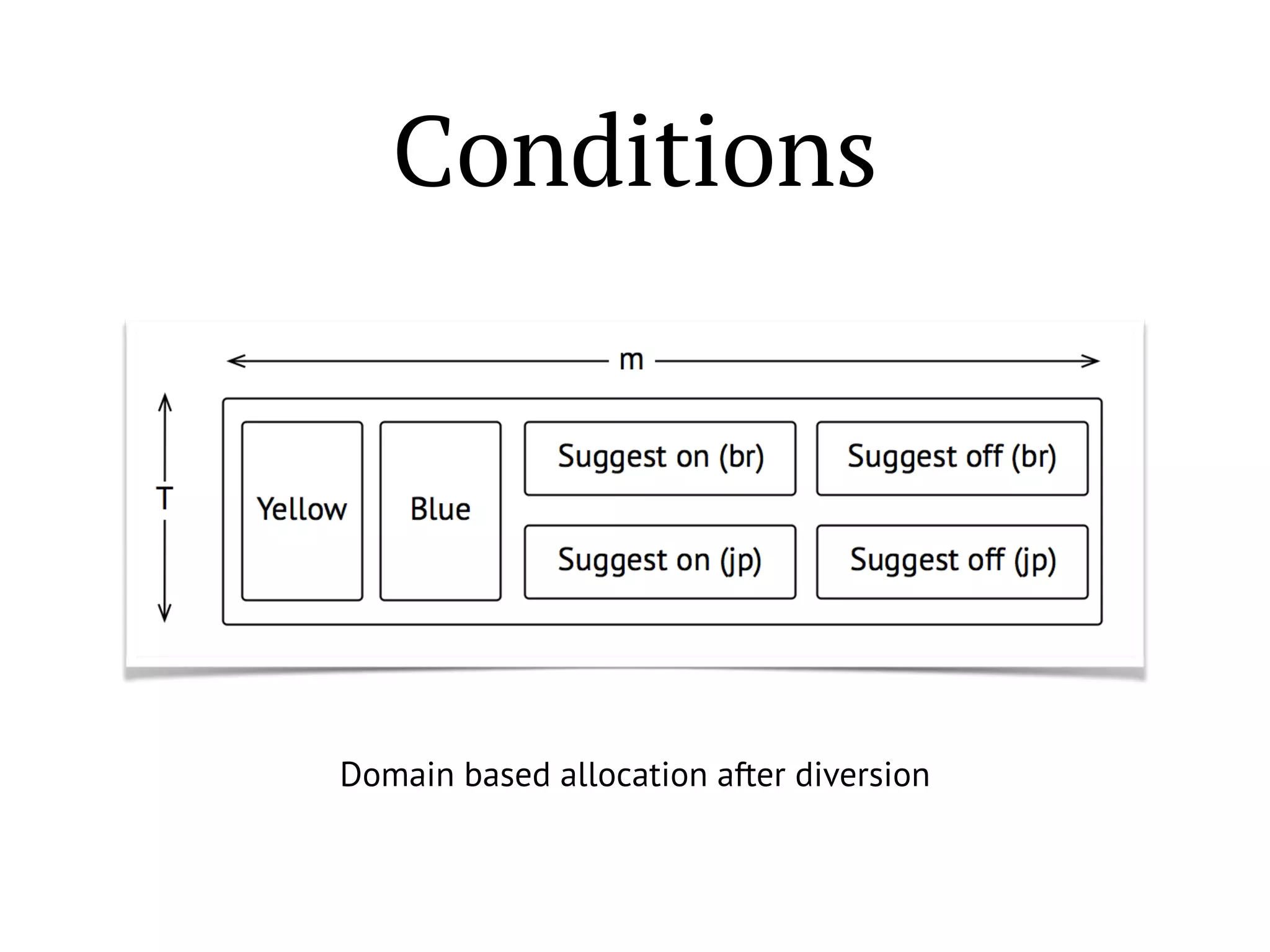

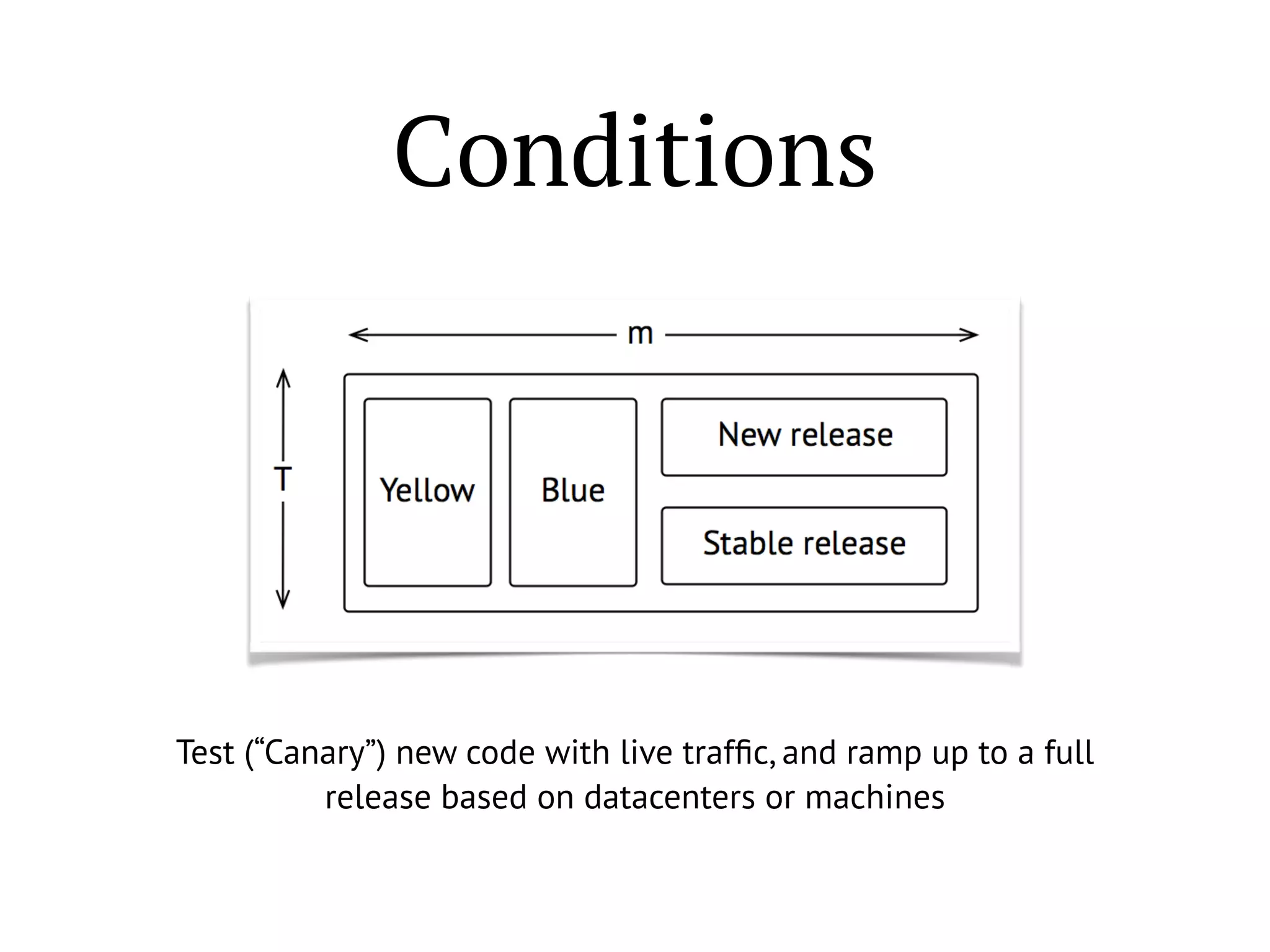

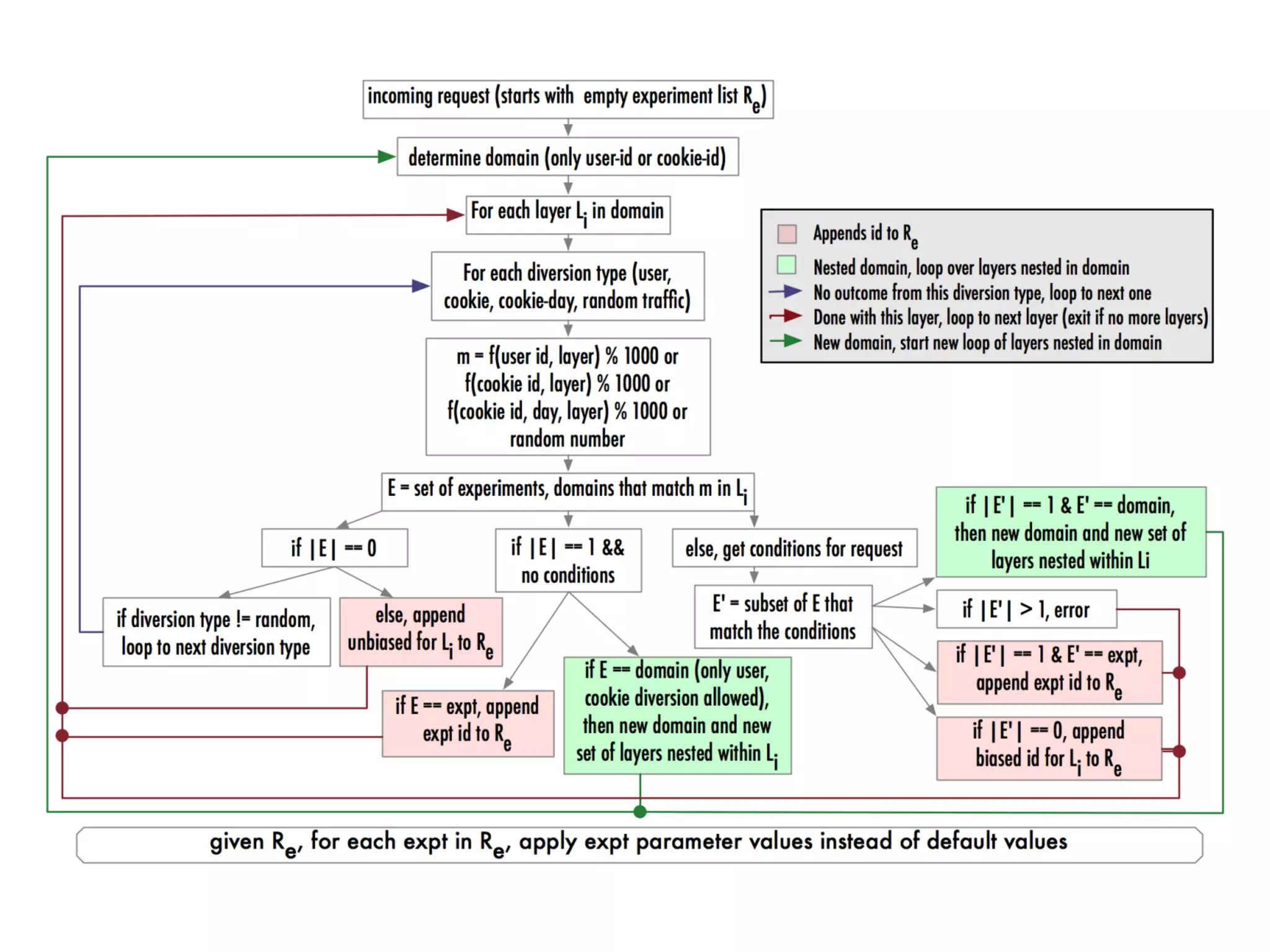

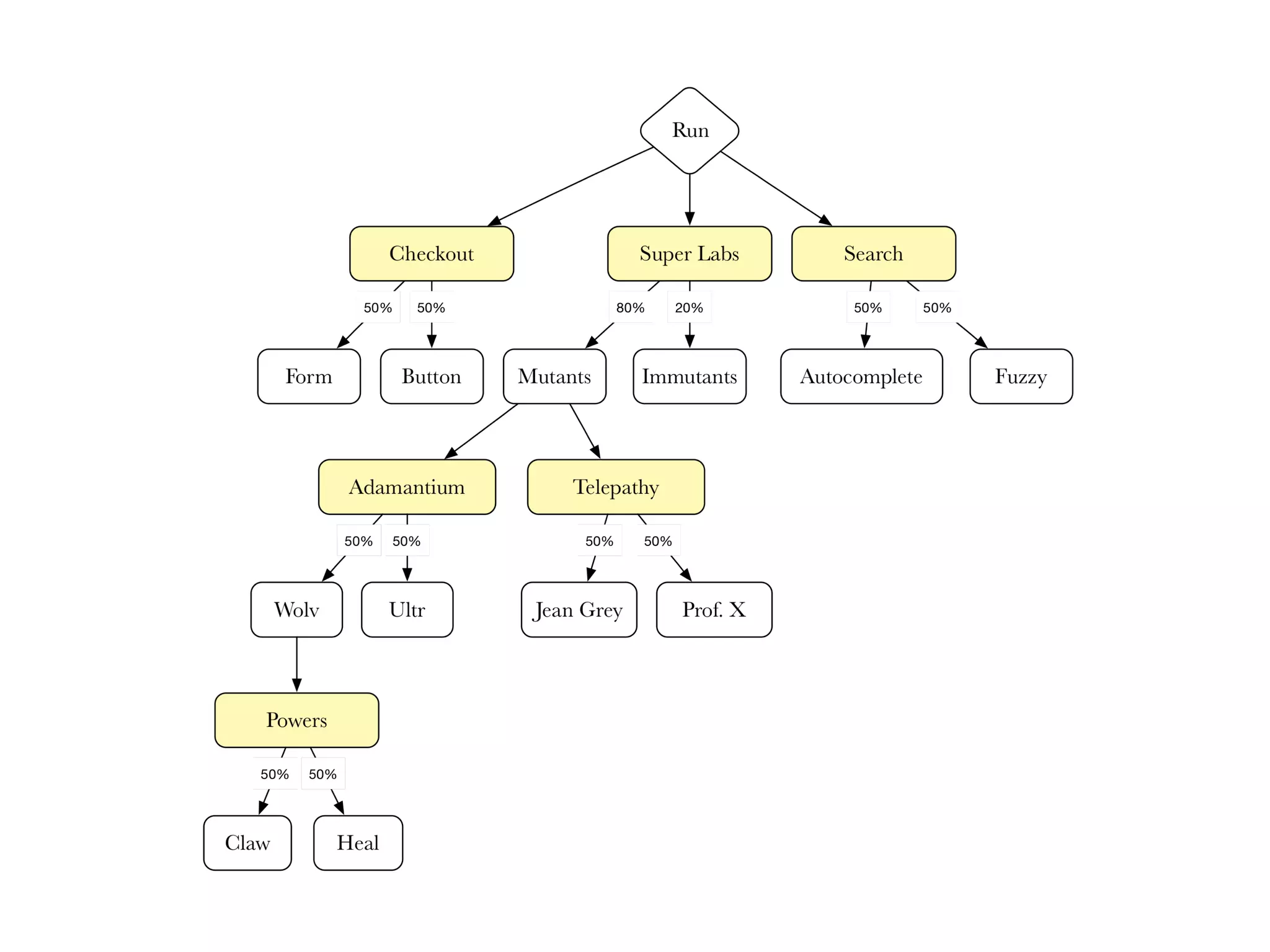

The document discusses Google's overlapping experiment infrastructure, highlighting the challenges and methodologies in conducting large-scale experiments to achieve statistically significant results. It details concepts such as layers, domains, and various experimentation types, including A/B testing and multivariable testing, while addressing the need for efficient traffic management. The paper emphasizes the importance of education, scheduling, coverage, and reporting in designing and executing robust experiments.
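To make the idea of layers concrete, here is a minimal sketch of how overlapping assignment might work, assuming each layer hashes a unit identifier independently into a fixed number of buckets. The bucket count, the hash scheme, the layer names, and the experiment names are all hypothetical choices for illustration, not details taken from the summary above.

```python
import hashlib

# Assumed bucket count, chosen only for illustration.
NUM_BUCKETS = 1000

# Hypothetical layers. Within a layer, experiments own disjoint bucket
# ranges, so a unit is in at most one experiment per layer.
LAYERS = {
    "ui": {"blue_button": range(0, 100), "large_font": range(100, 200)},
    "ranking": {"new_scorer": range(0, 500)},
}


def bucket(unit_id: str, layer: str) -> int:
    """Map a unit to a bucket, salting the hash with the layer name so
    that assignments in different layers are effectively independent."""
    digest = hashlib.sha256(f"{layer}:{unit_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % NUM_BUCKETS


def assign(unit_id: str) -> dict:
    """Return the experiment (or None) the unit falls into for each layer."""
    result = {}
    for layer, experiments in LAYERS.items():
        b = bucket(unit_id, layer)
        result[layer] = next(
            (name for name, buckets in experiments.items() if b in buckets),
            None,
        )
    return result


if __name__ == "__main__":
    # The same unit can be in one experiment per layer at the same time.
    print(assign("user-42"))
```

Because the layer name is part of the hash input, the same unit can take part in one experiment in every layer at once, which is what allows the same traffic to be reused across overlapping experiments.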