The document discusses an agenda for a meetup about Redis Labs and Kubernetes operators. Key points:

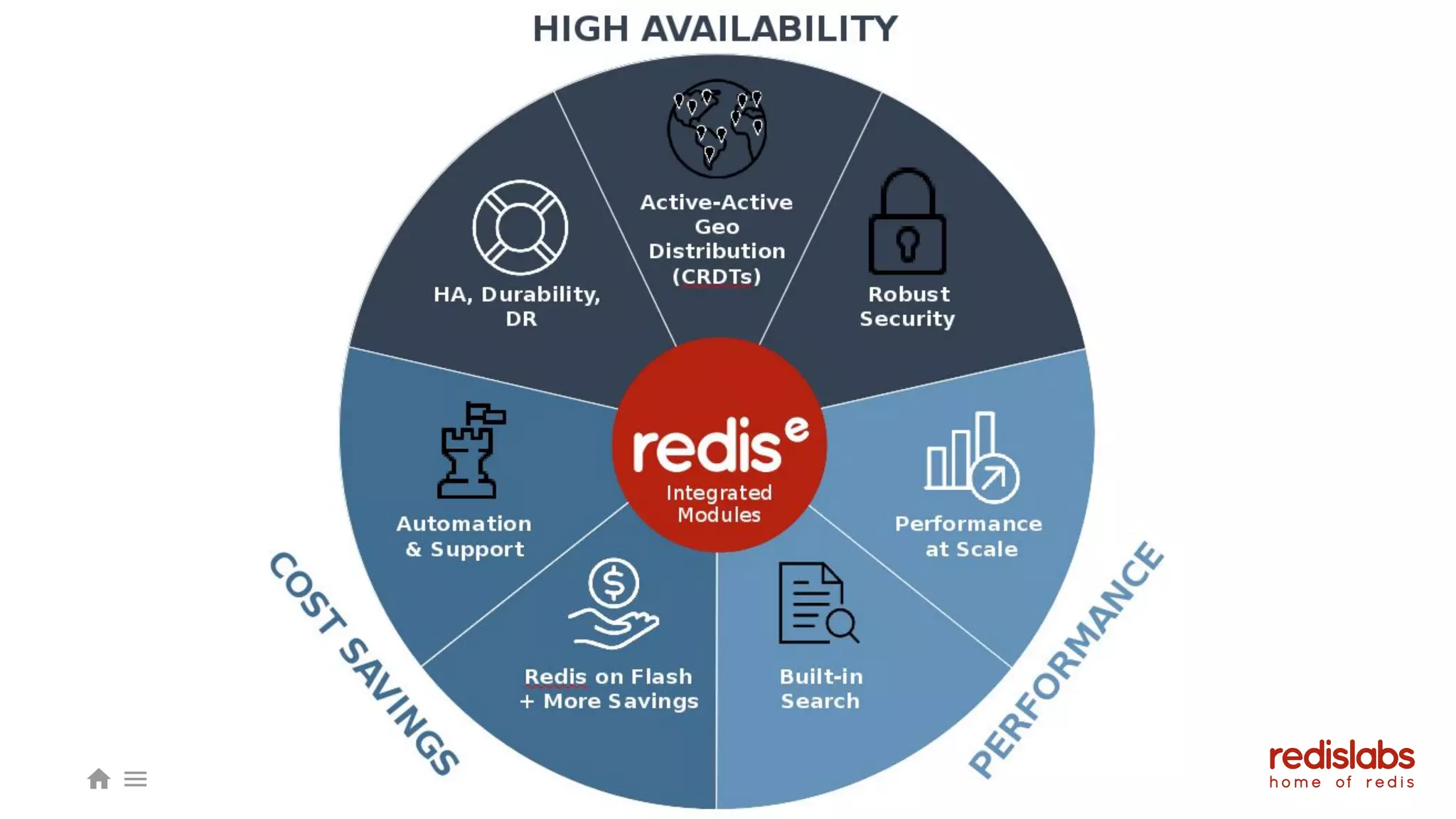

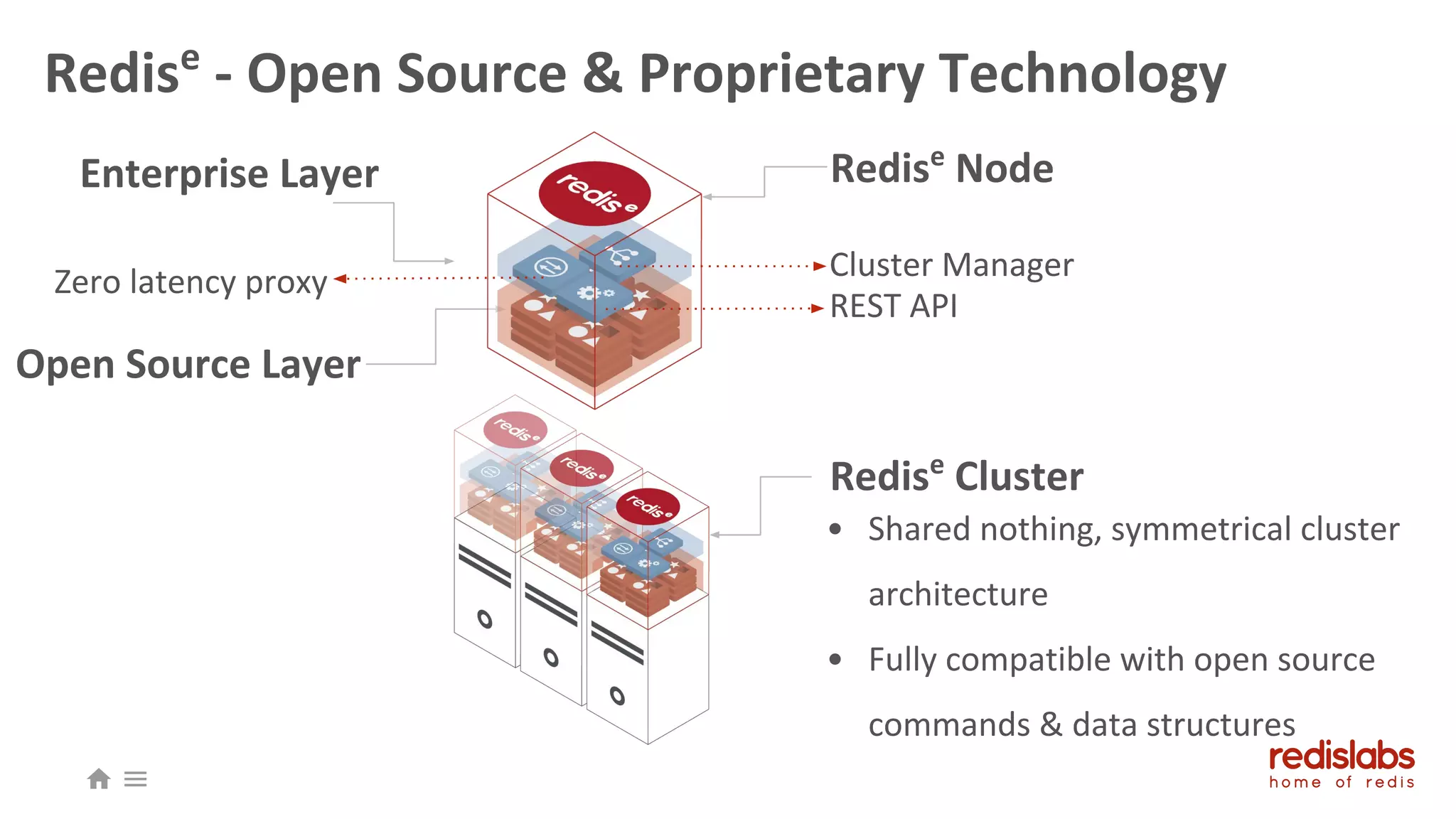

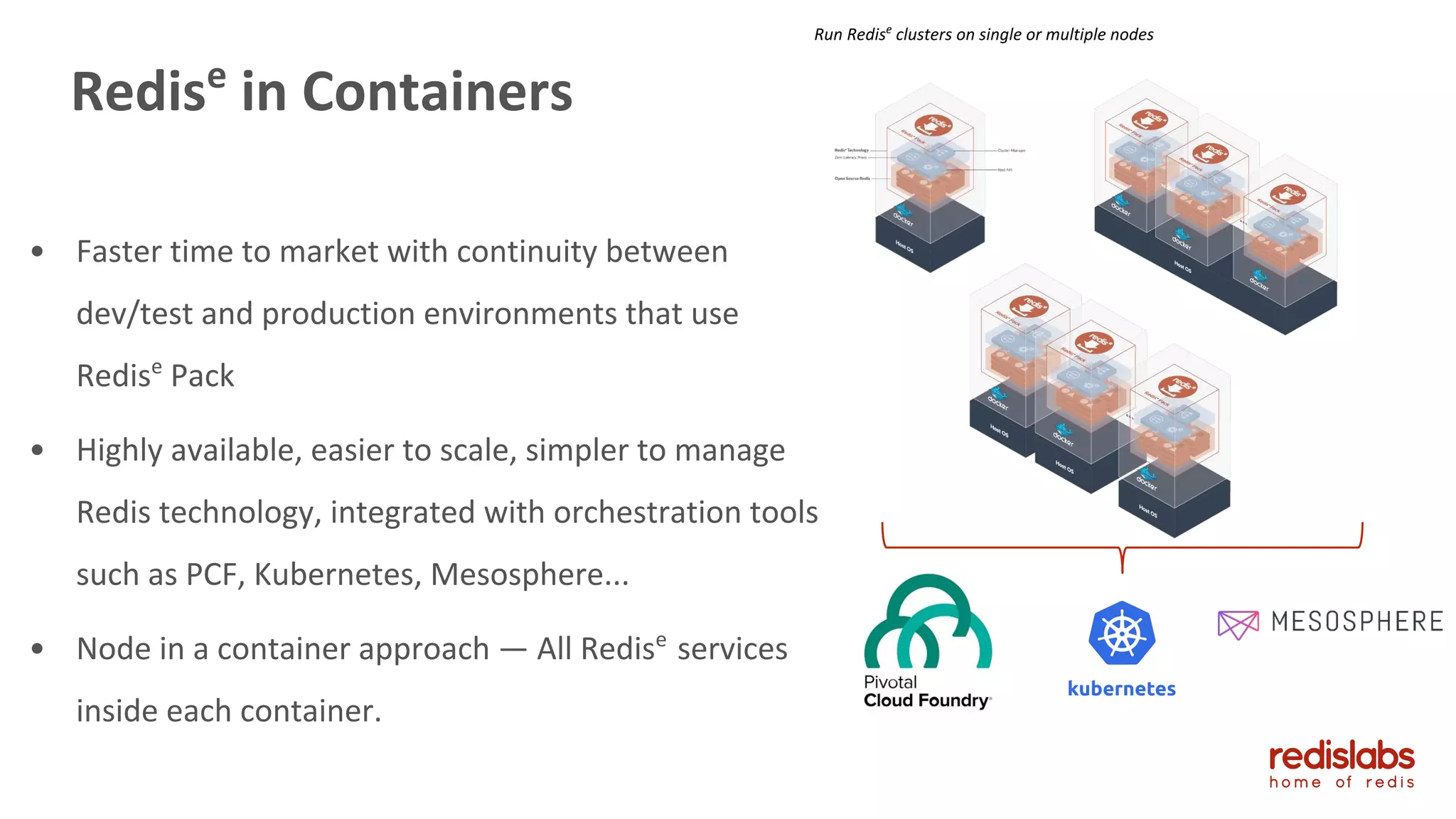

- An introduction to Redis Enterprise architecture and Redis Labs products.

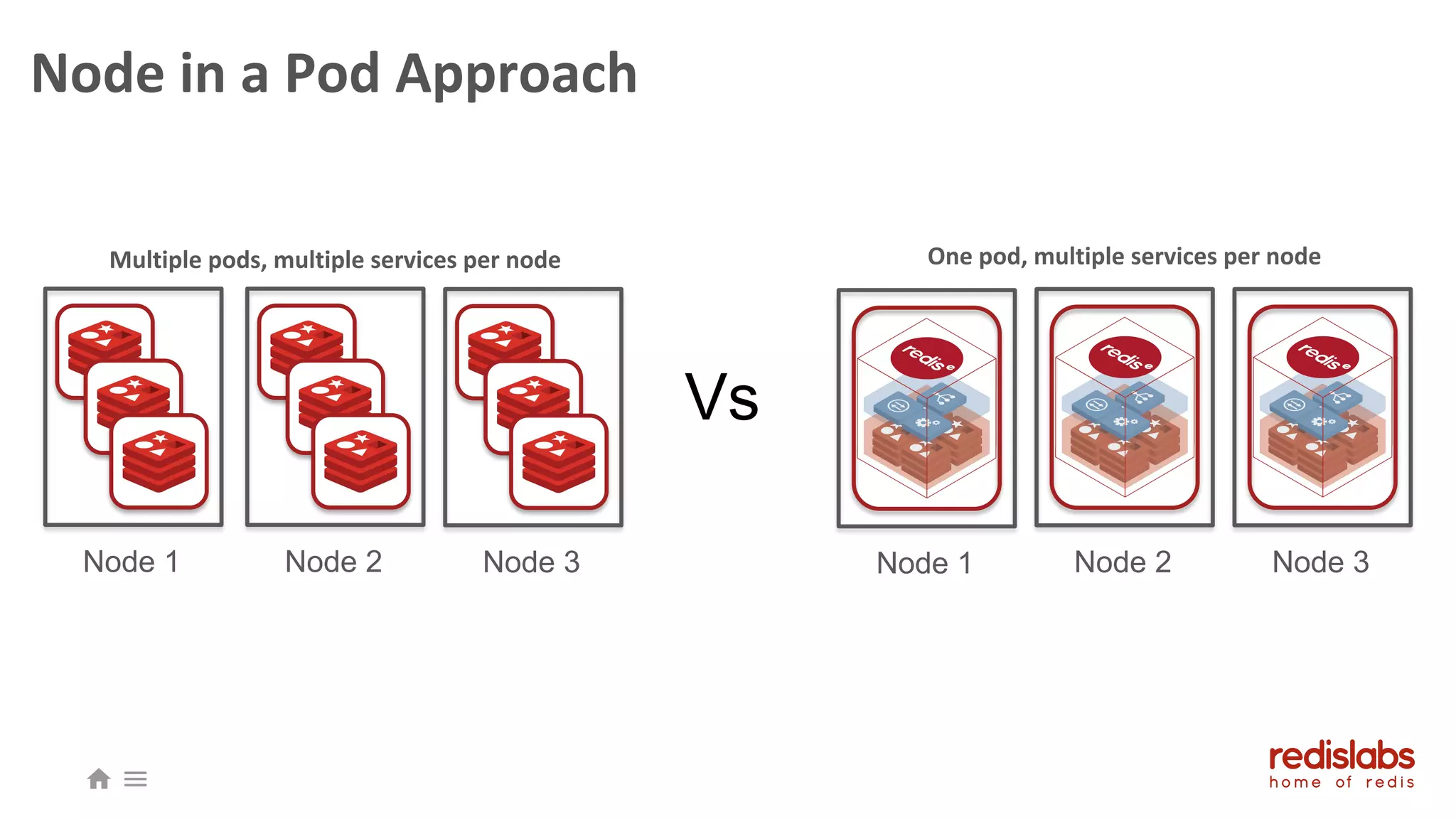

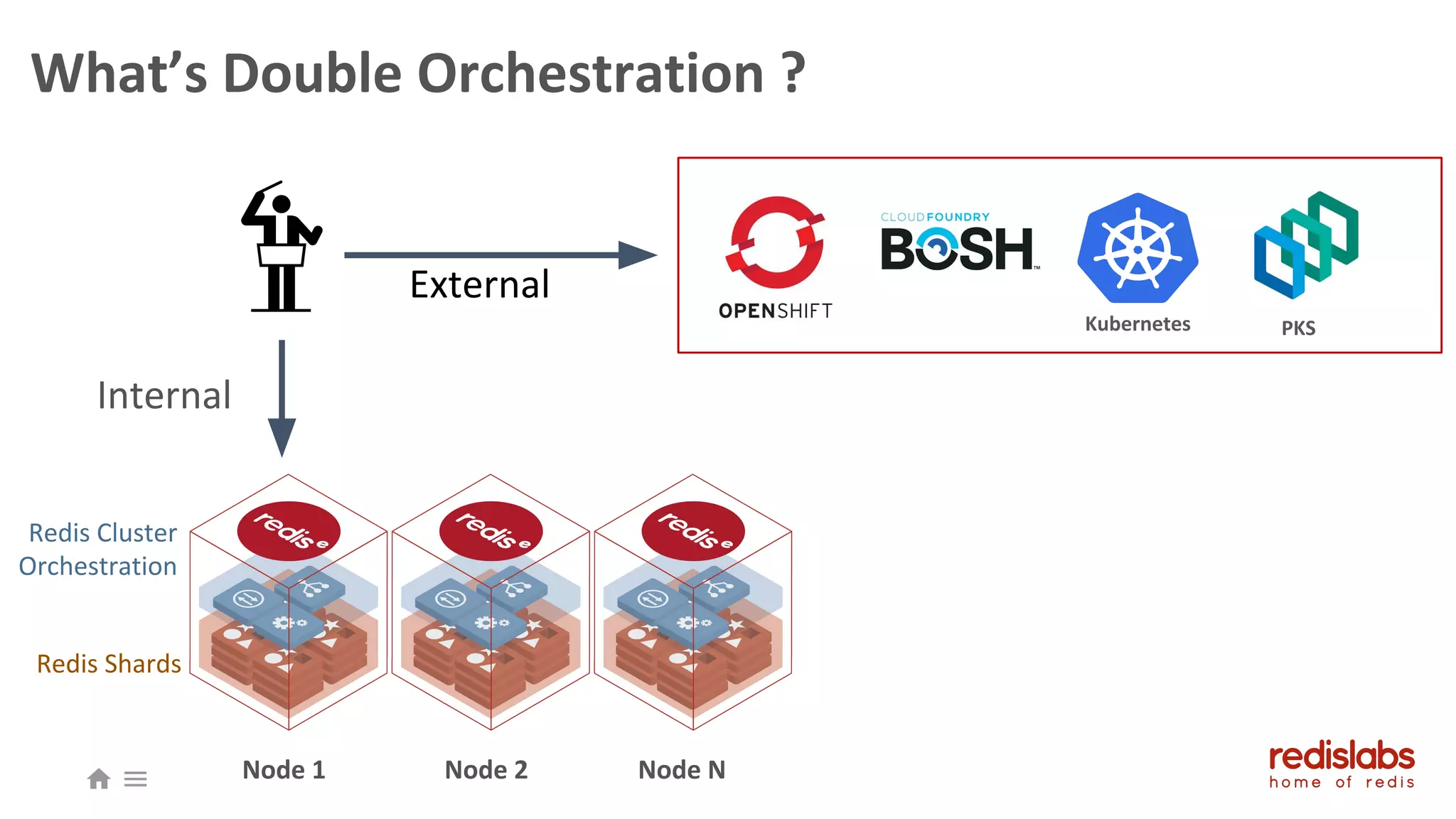

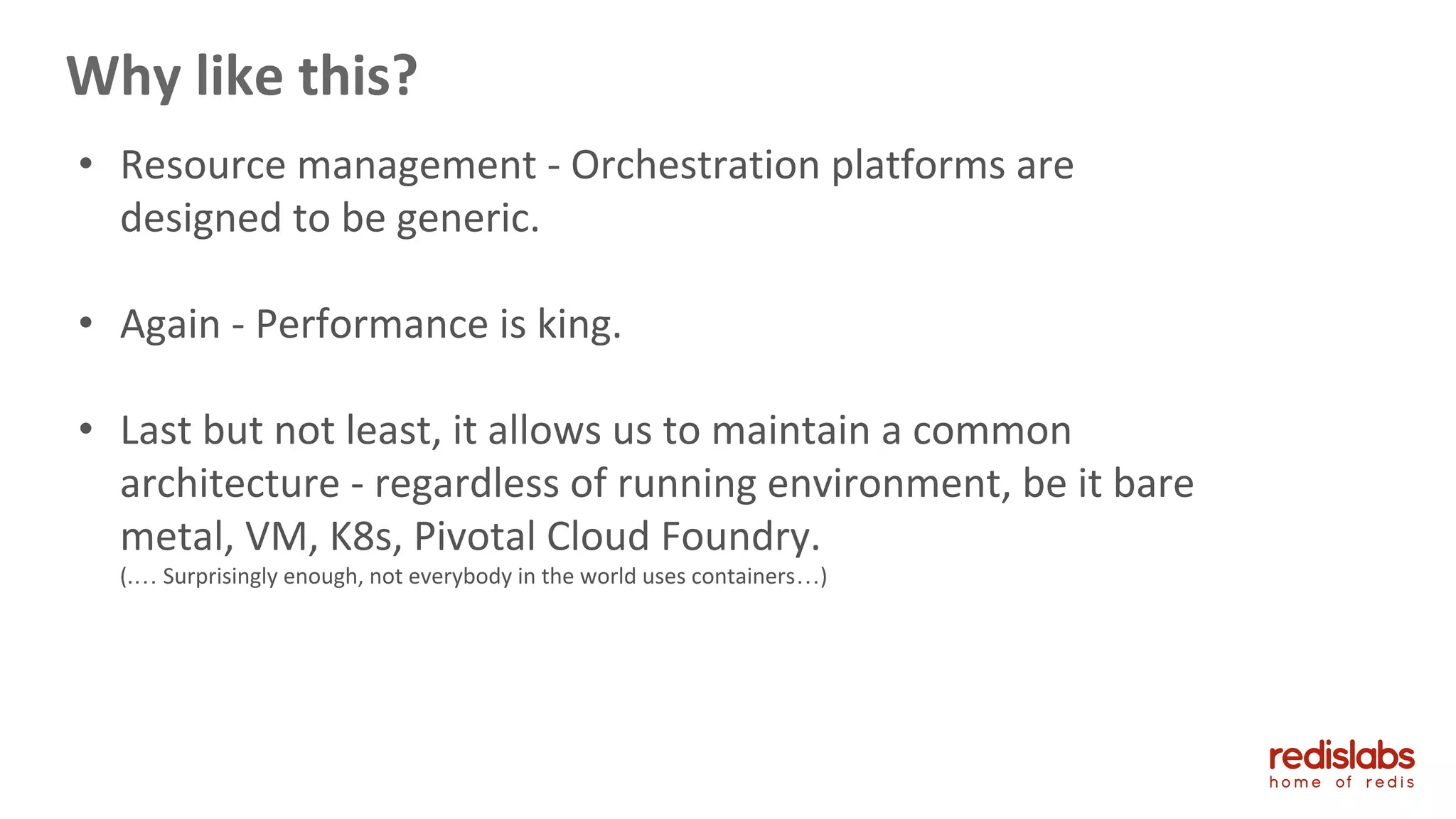

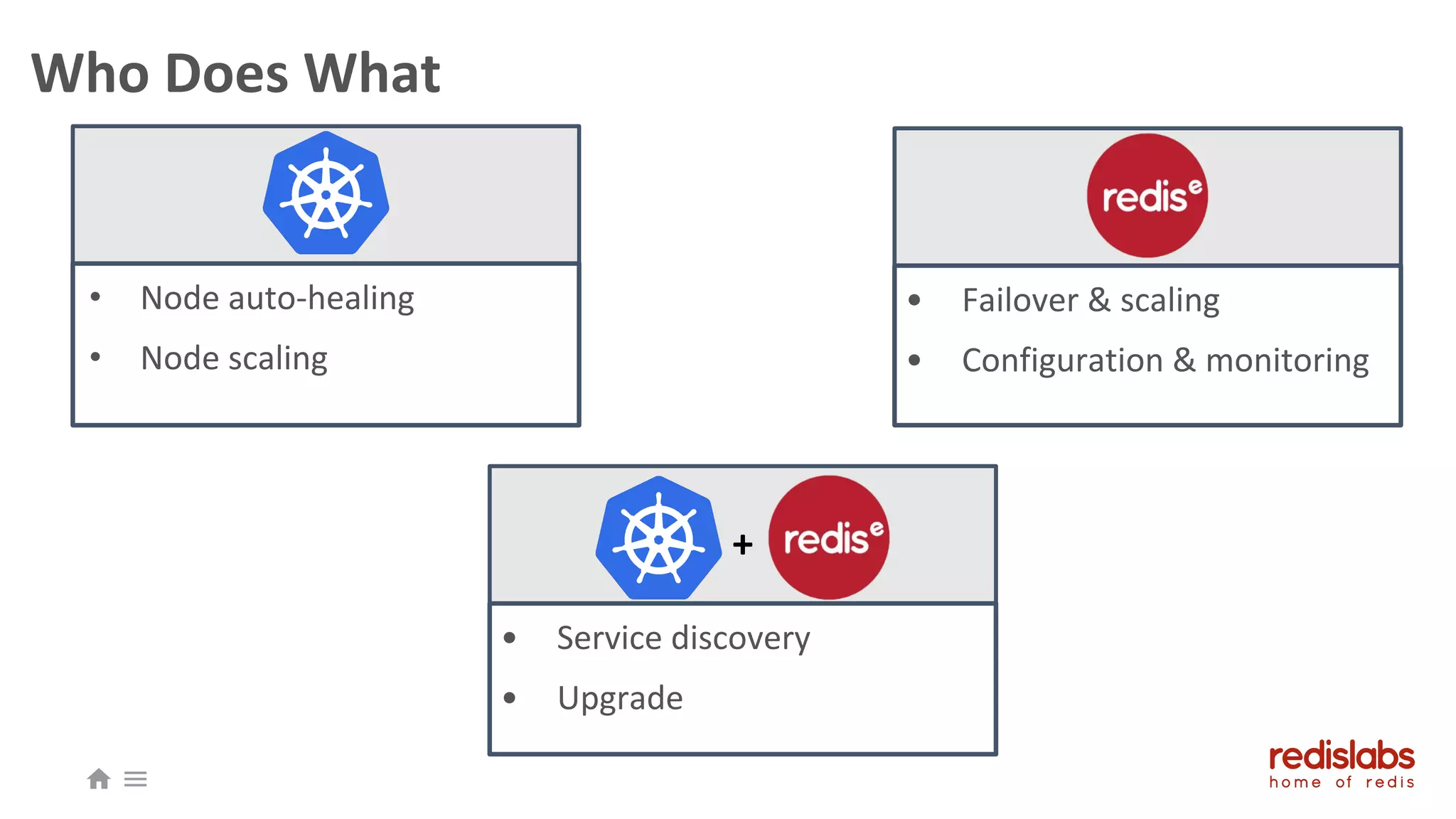

- A discussion of "double orchestration" using Kubernetes and PKS to manage Redis clusters for performance and resource management.

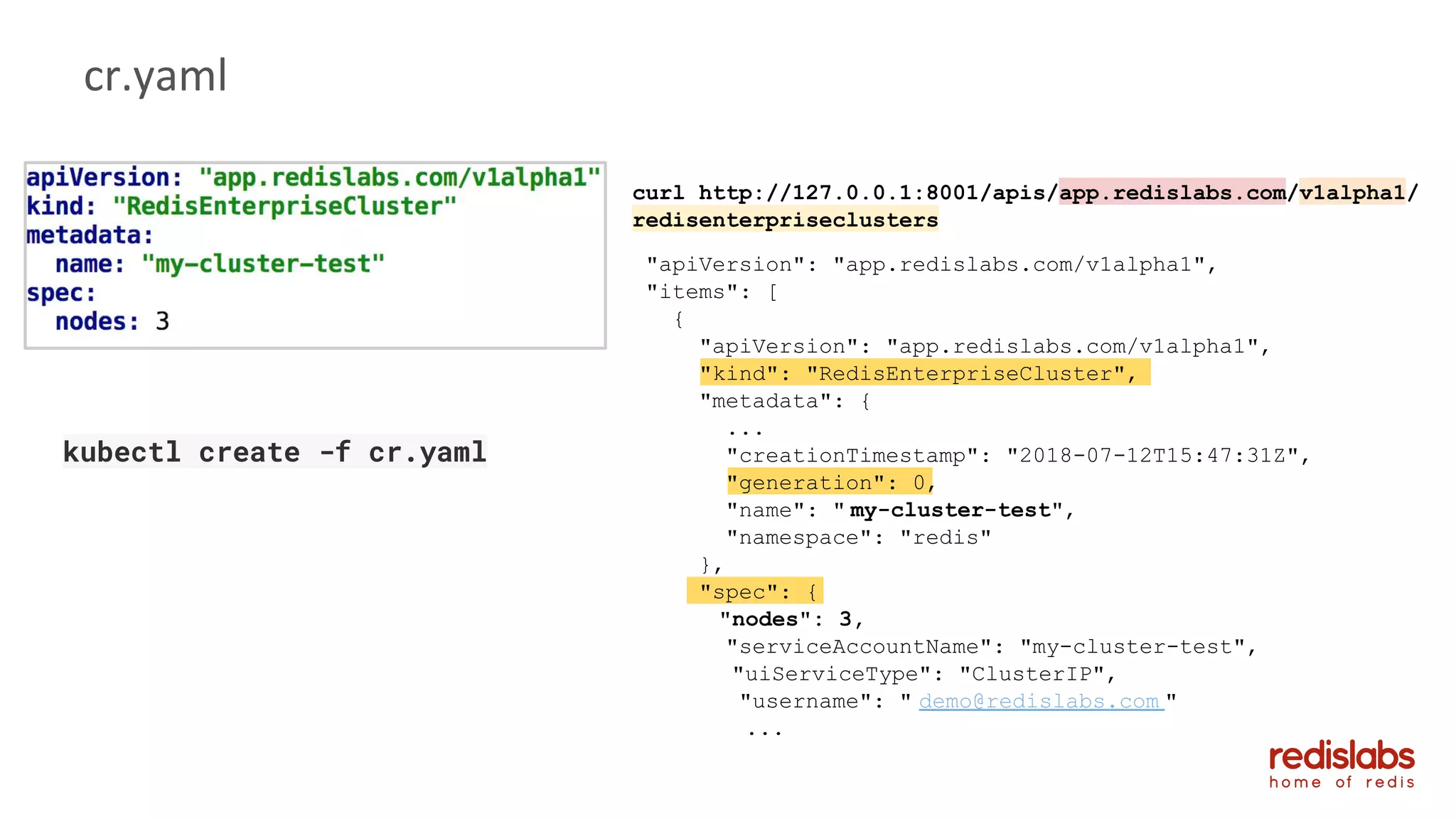

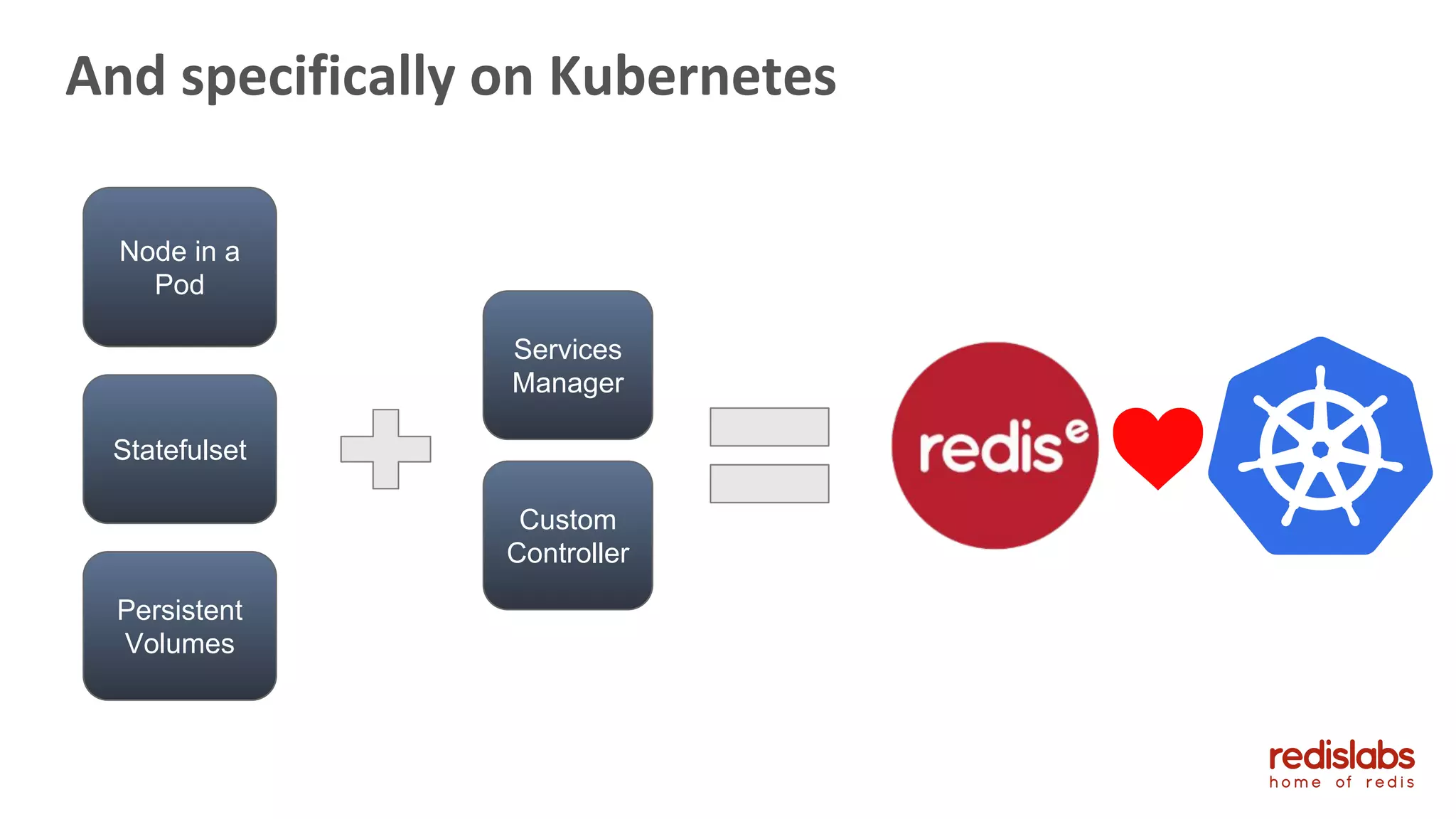

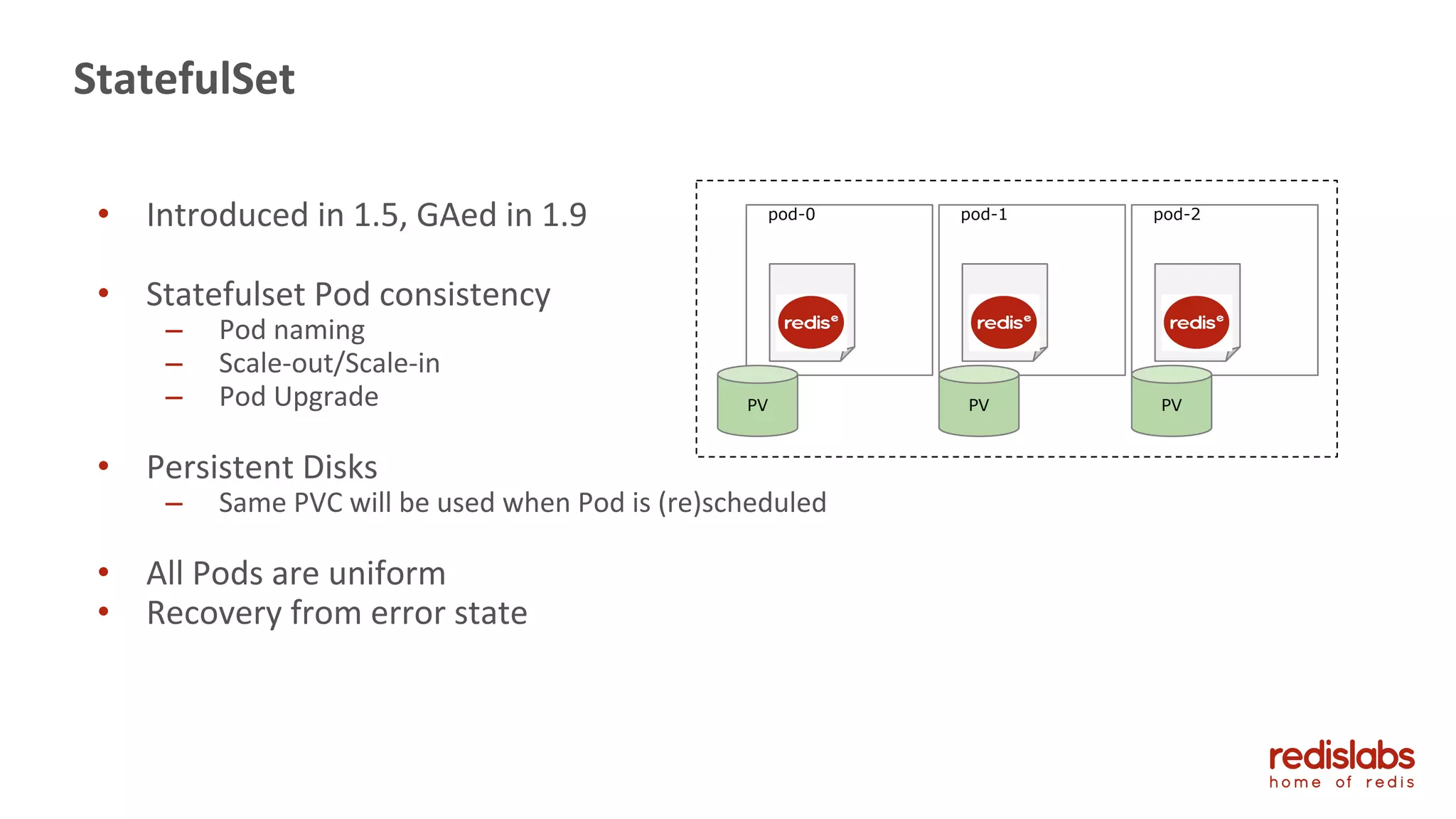

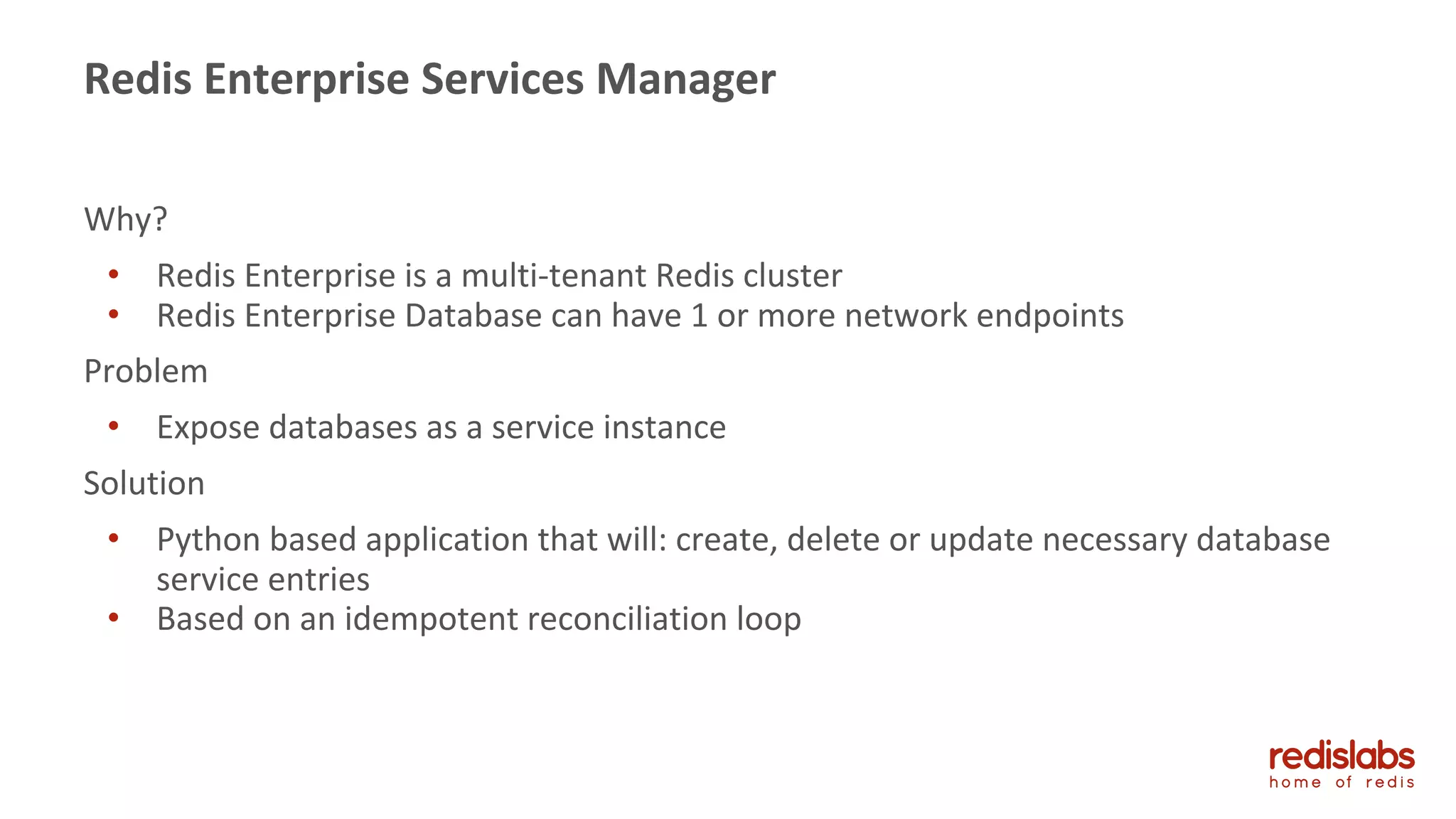

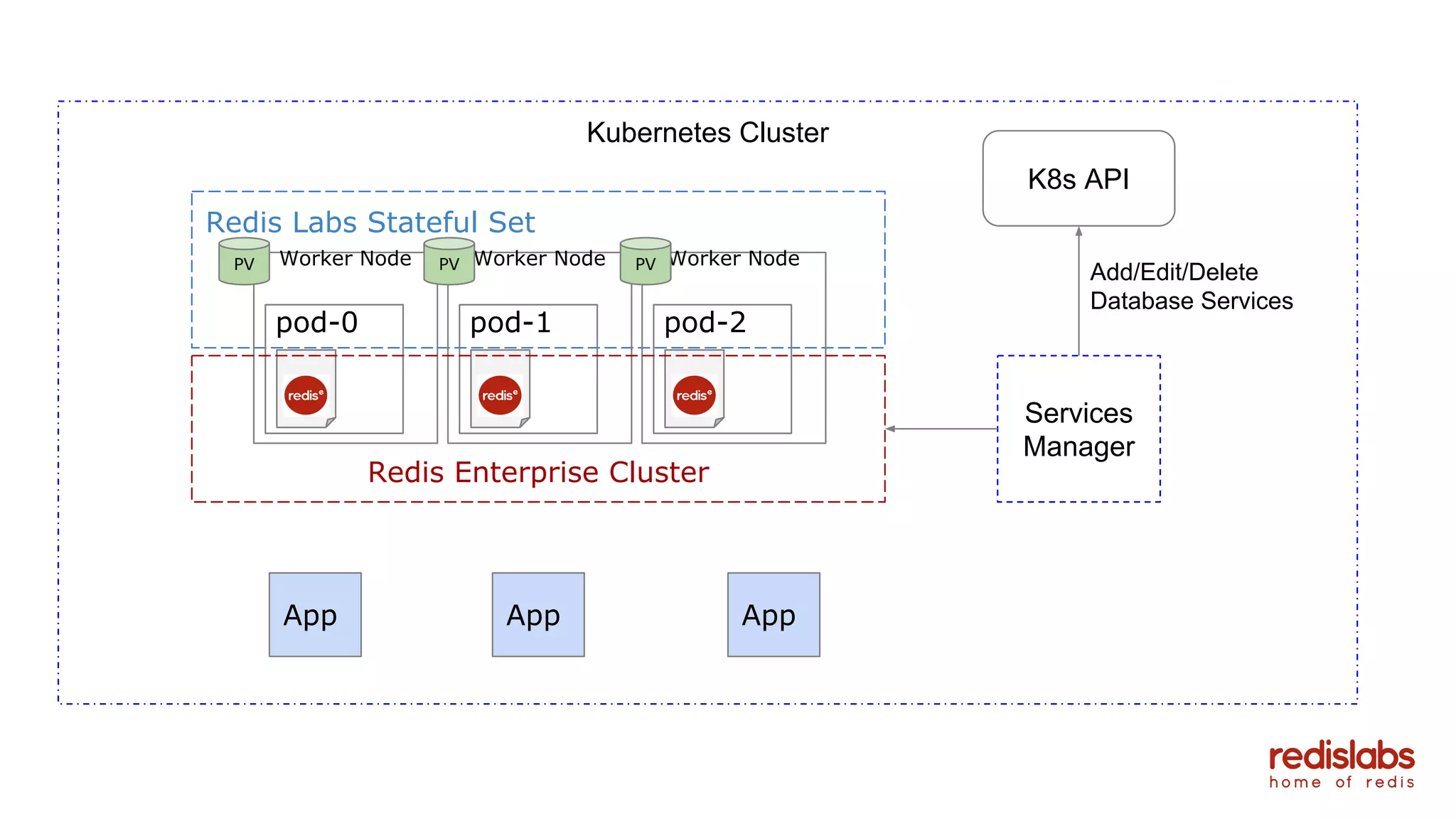

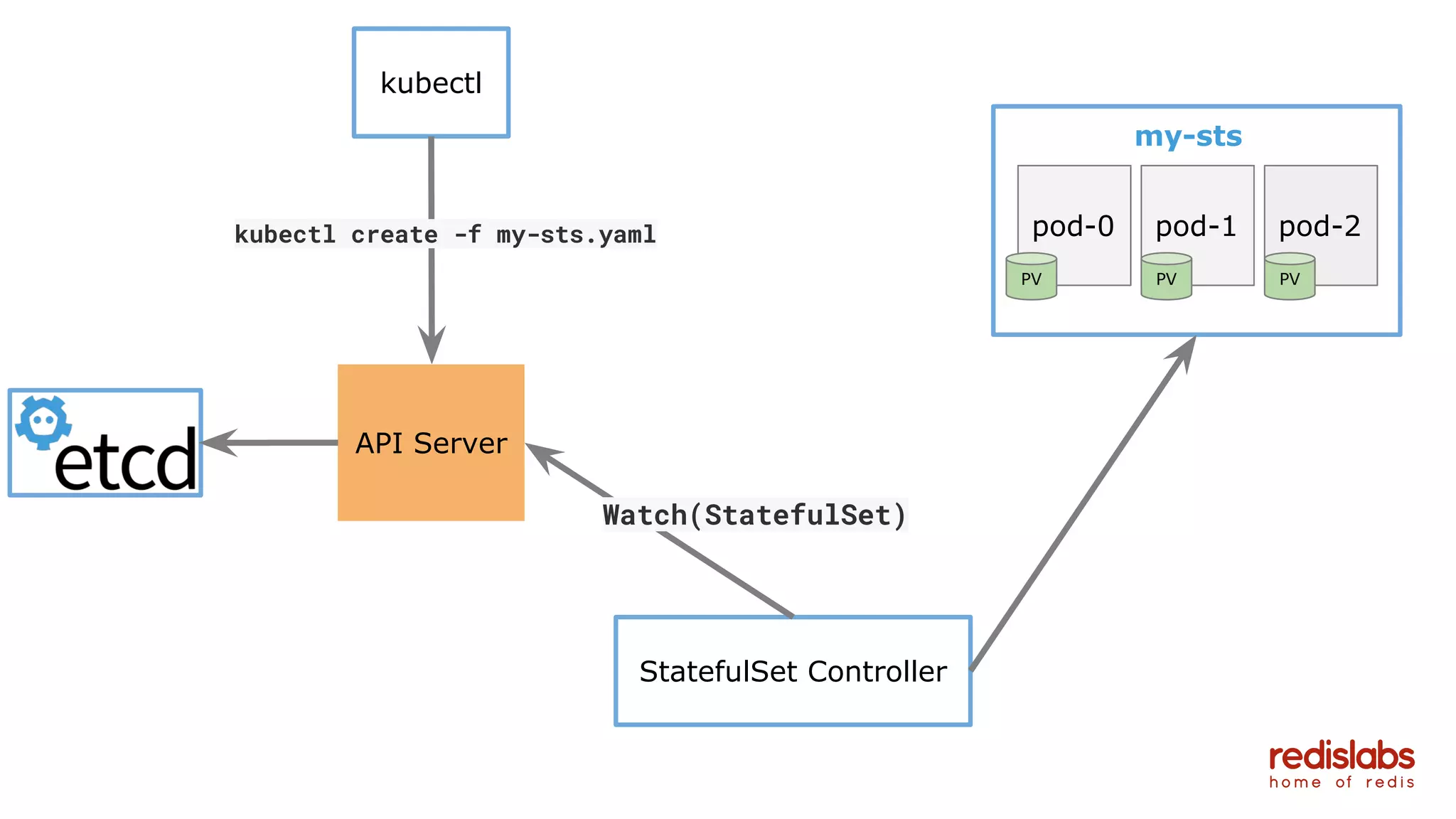

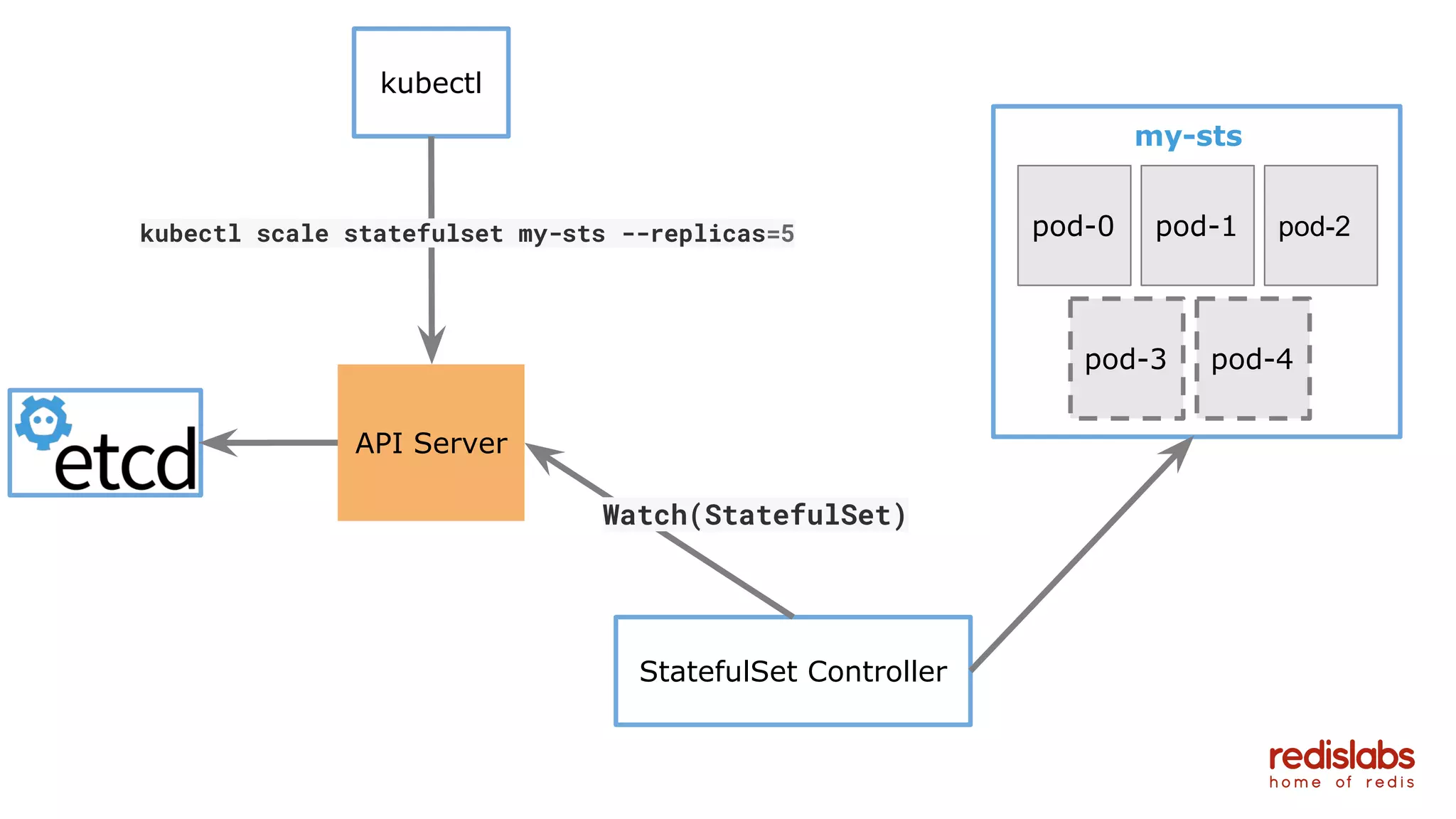

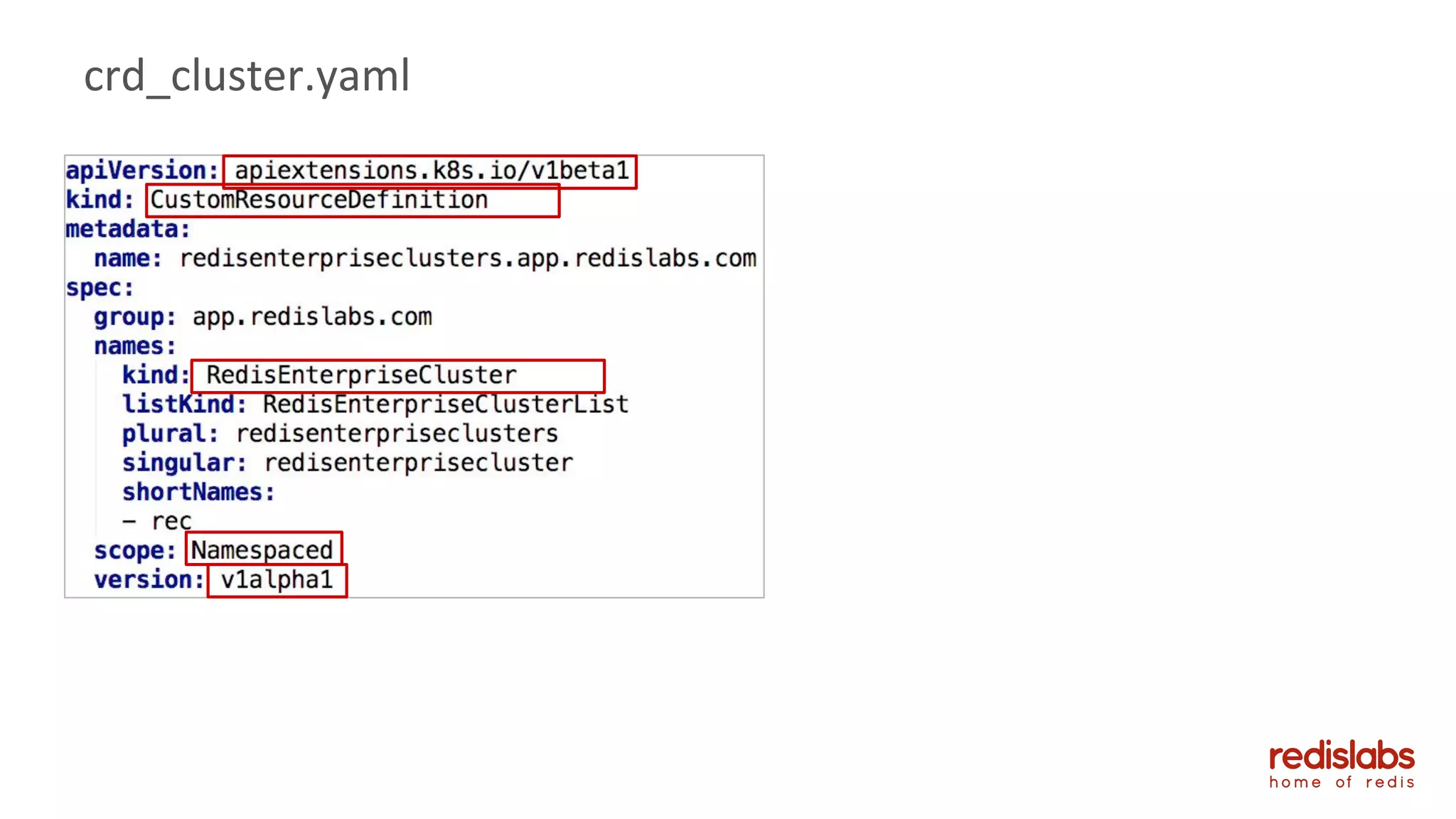

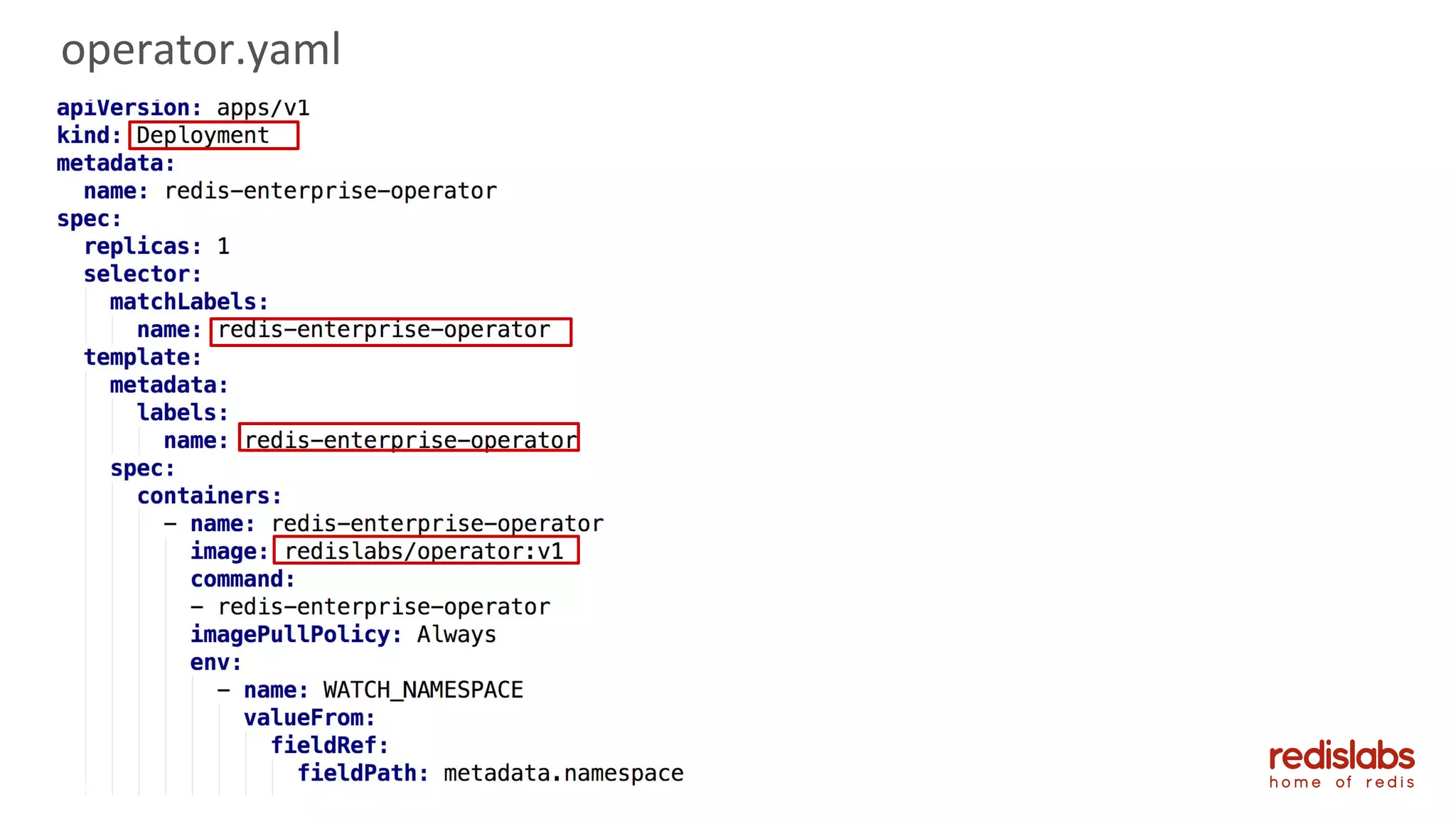

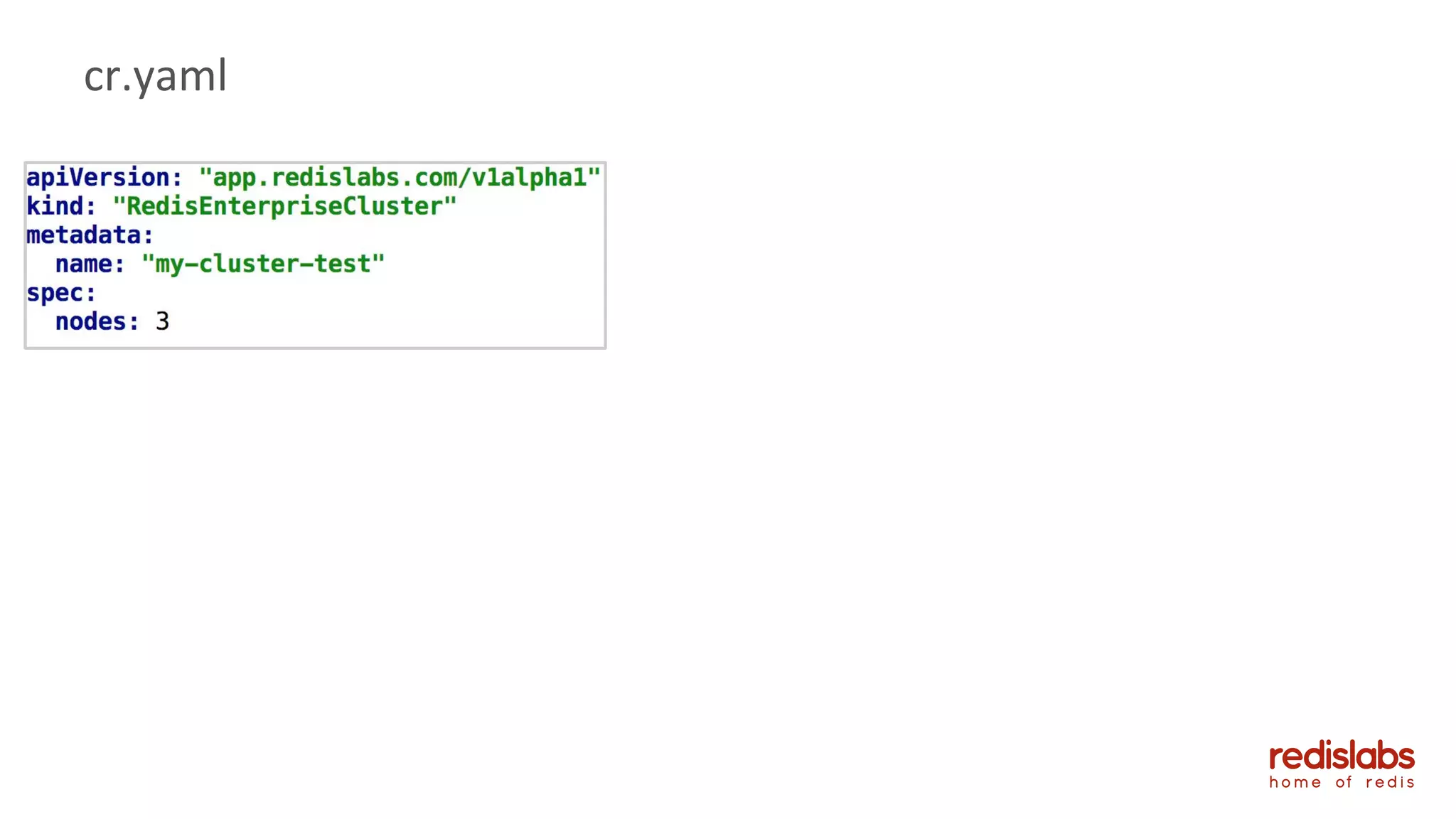

- An overview of Redis Labs' Kubernetes solution using StatefulSets, services, and a custom controller.

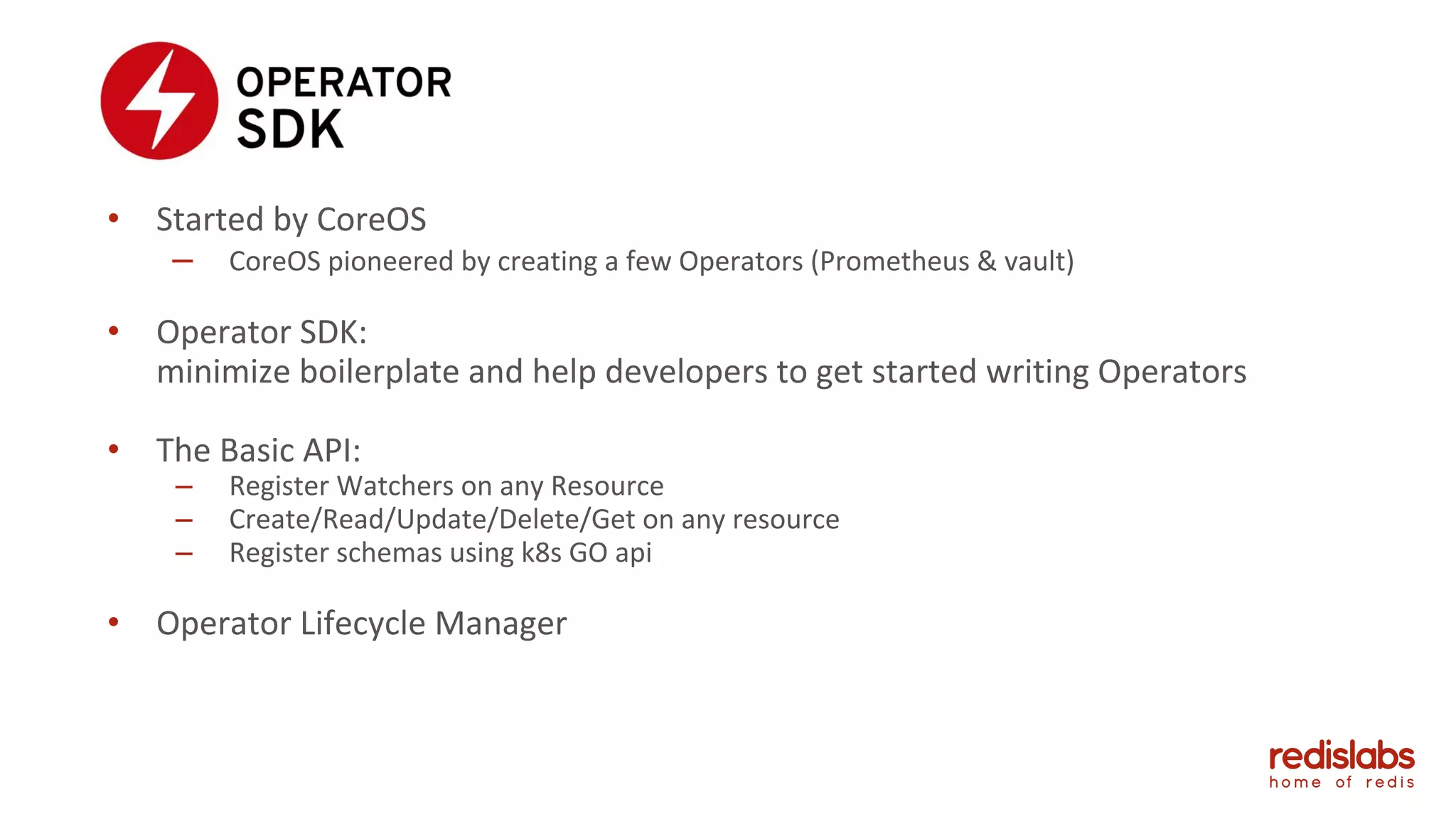

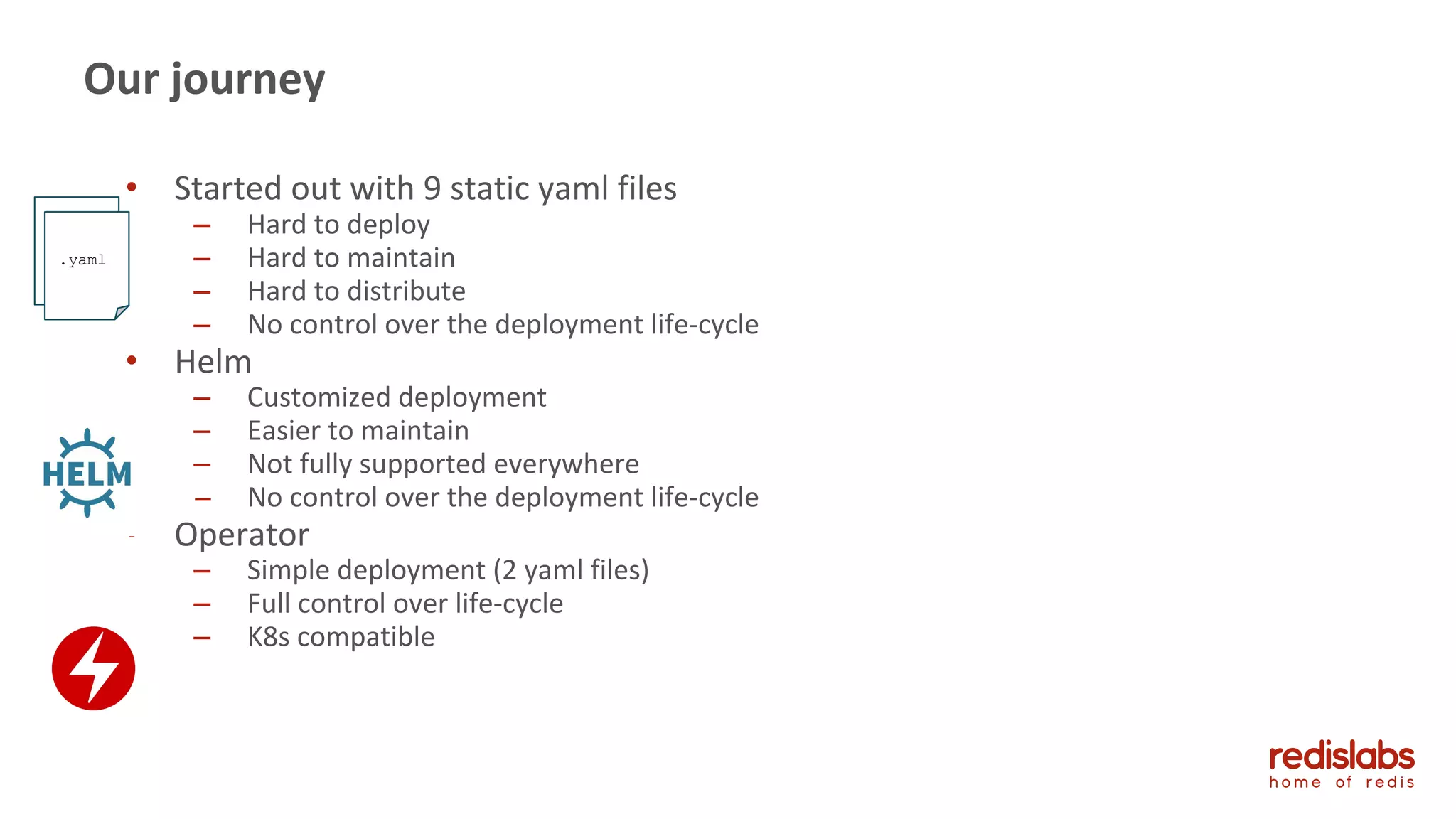

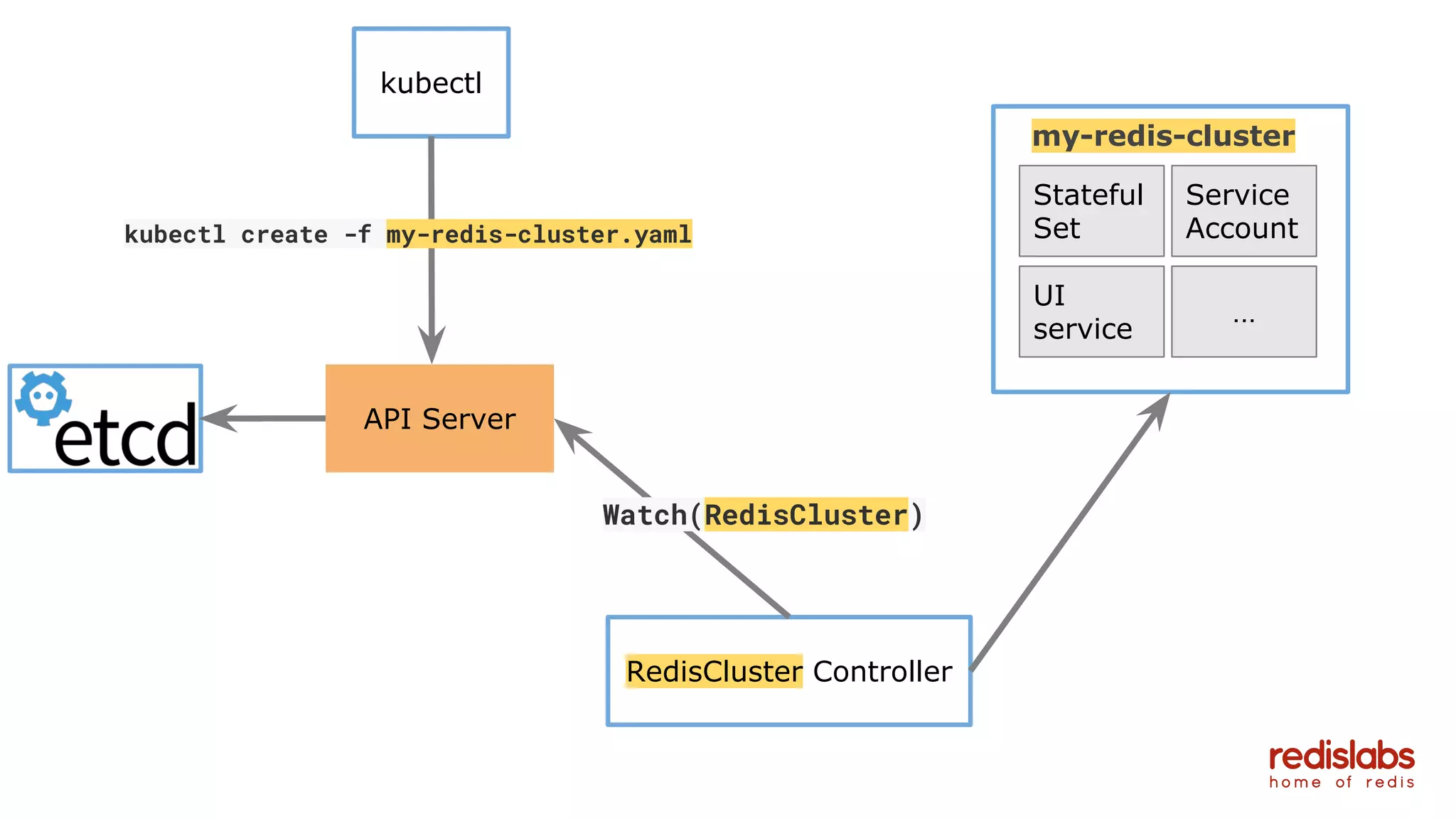

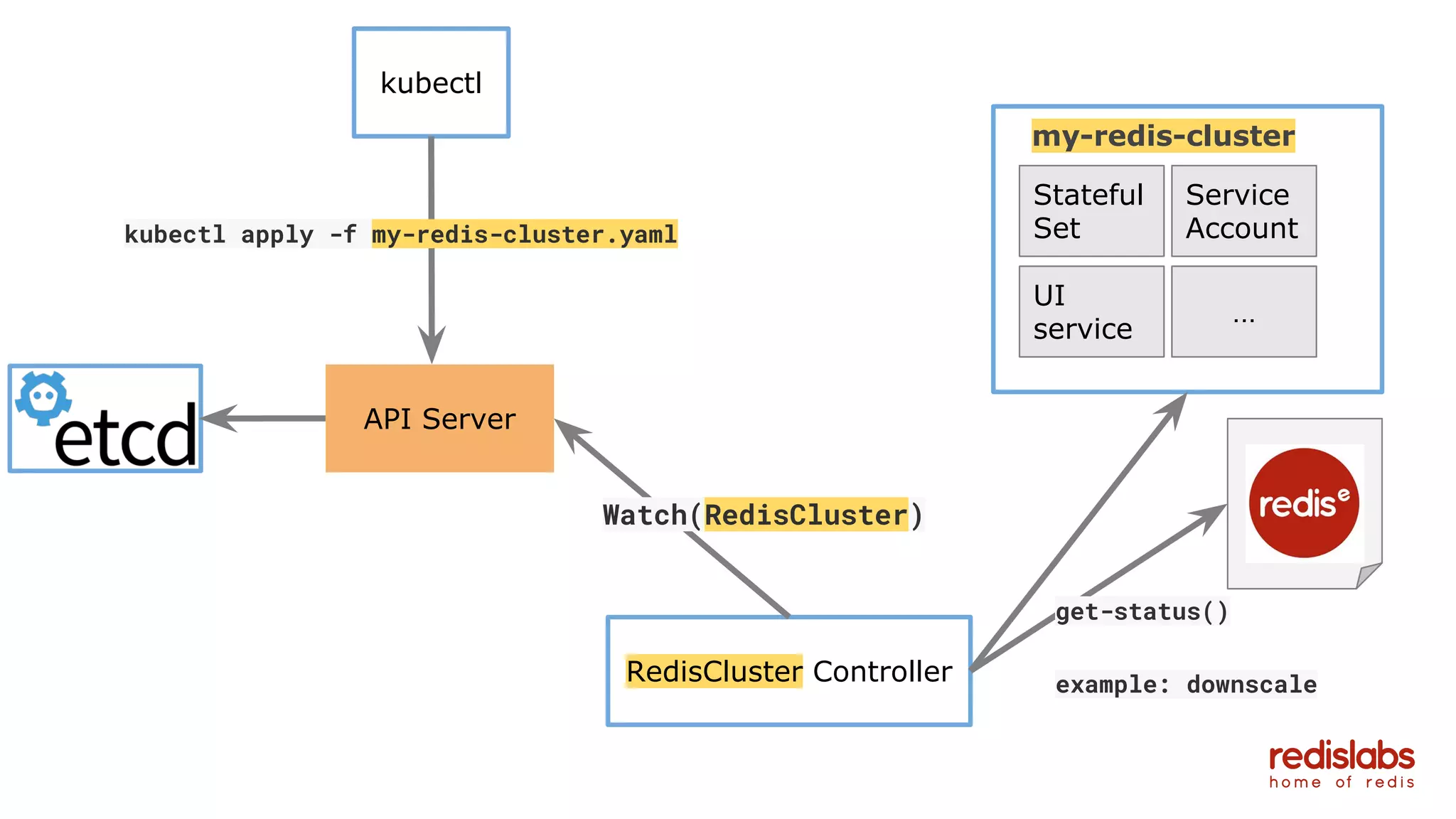

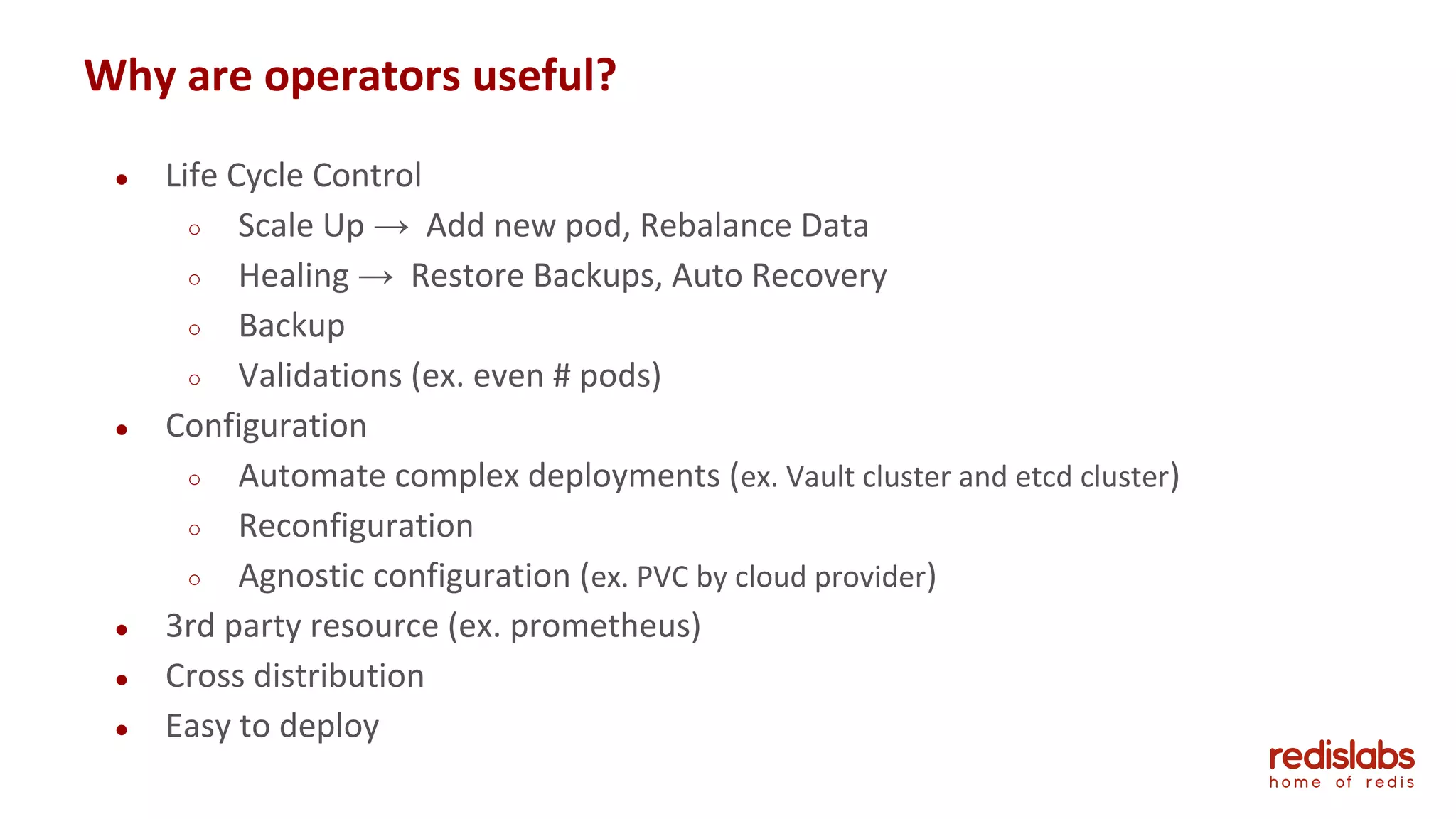

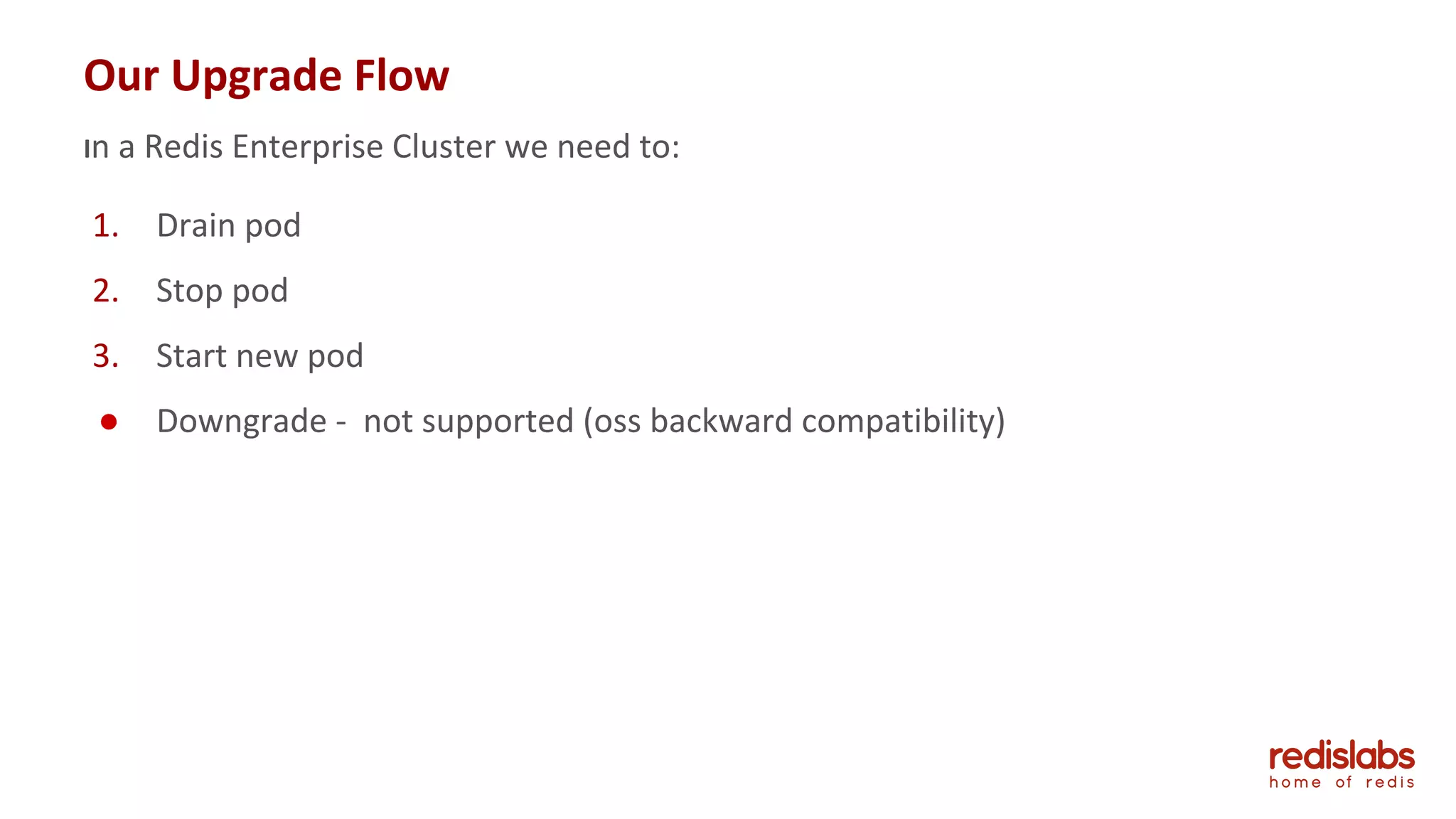

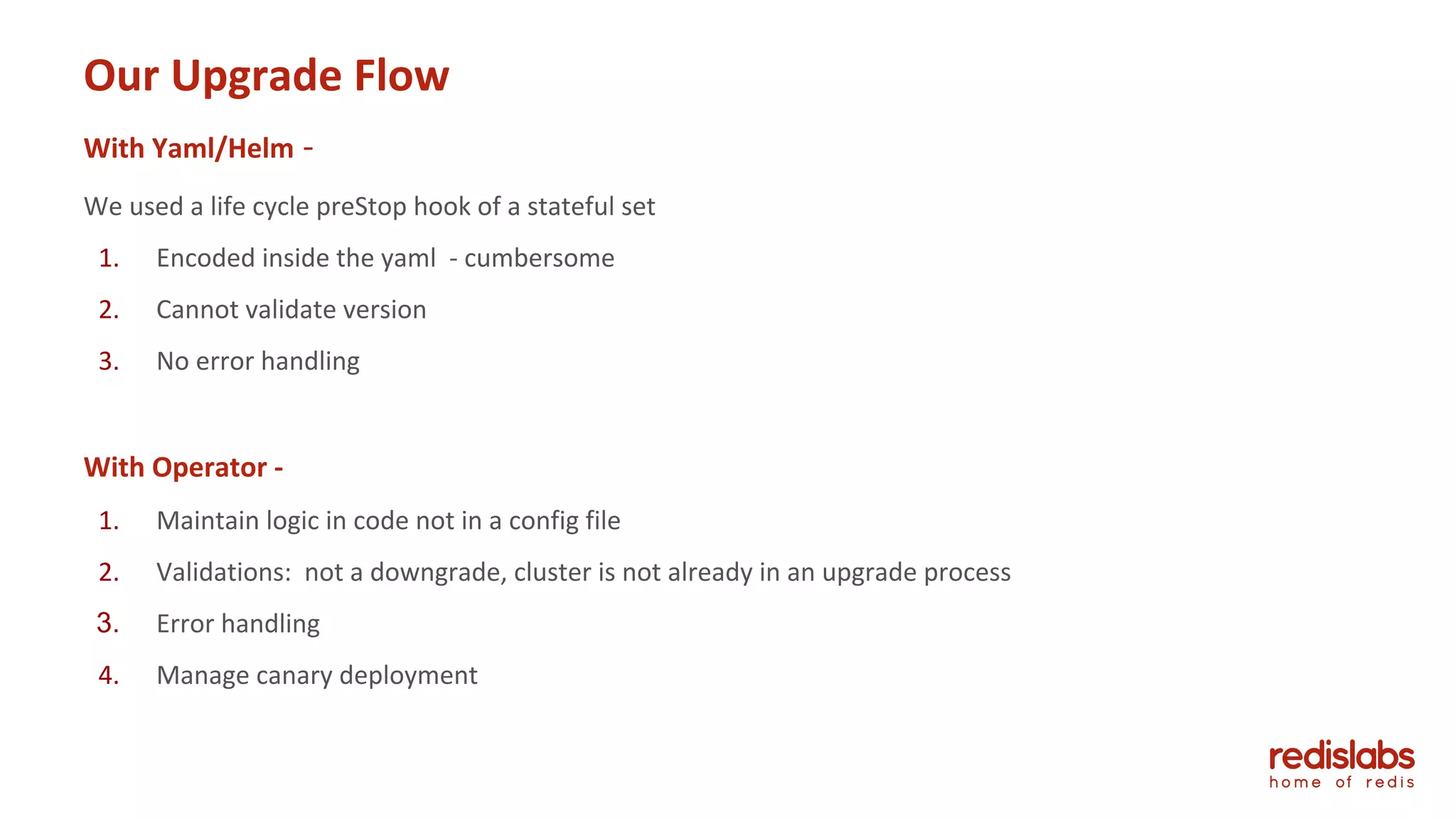
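To make the StatefulSet point above concrete: a StatefulSet names its pods `<statefulset>-<ordinal>`, and its governing headless service gives each pod a stable DNS record. The minimal sketch below only builds those names. The function name and the `redis-enterprise` and `default` values are hypothetical and not taken from the deck.

```python
from typing import List


def stateful_pod_dns_names(
    statefulset: str,
    service: str,
    namespace: str,
    replicas: int,
    cluster_domain: str = "cluster.local",
) -> List[str]:
    """Return the stable DNS name Kubernetes gives each StatefulSet pod.

    Pods are named <statefulset>-<ordinal>, and the governing headless
    service publishes each one under <service>.<namespace>.svc.
    """
    return [
        f"{statefulset}-{ordinal}.{service}.{namespace}.svc.{cluster_domain}"
        for ordinal in range(replicas)
    ]


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    for name in stateful_pod_dns_names("redis-enterprise", "redis-enterprise", "default", 3):
        print(name)
```

Because these names survive pod restarts, a restarted node can rejoin the cluster under the same address.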

- An introduction to operators, how they provide lifecycle management and simplify deployments compared to static YAML files or Helm.

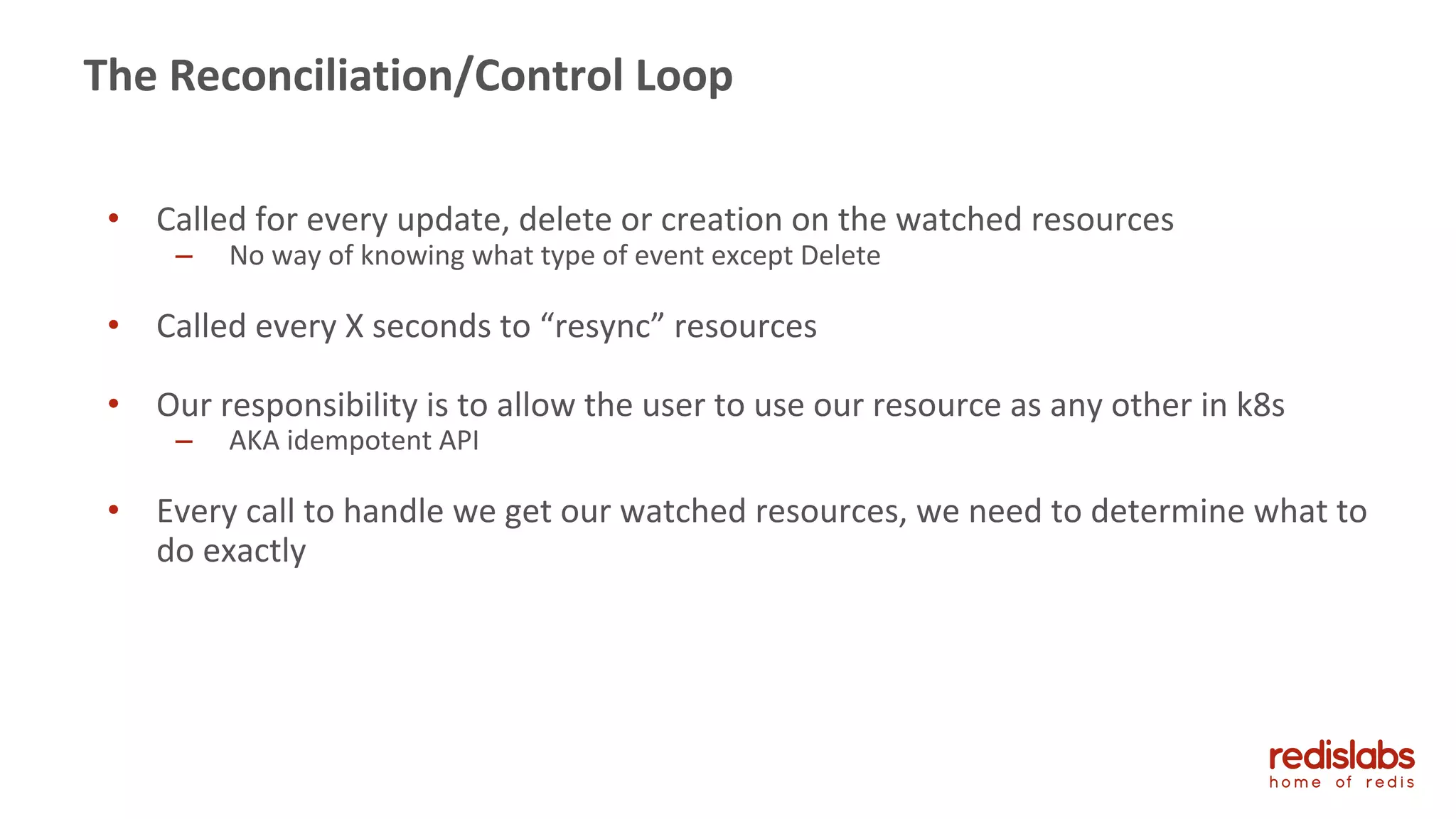

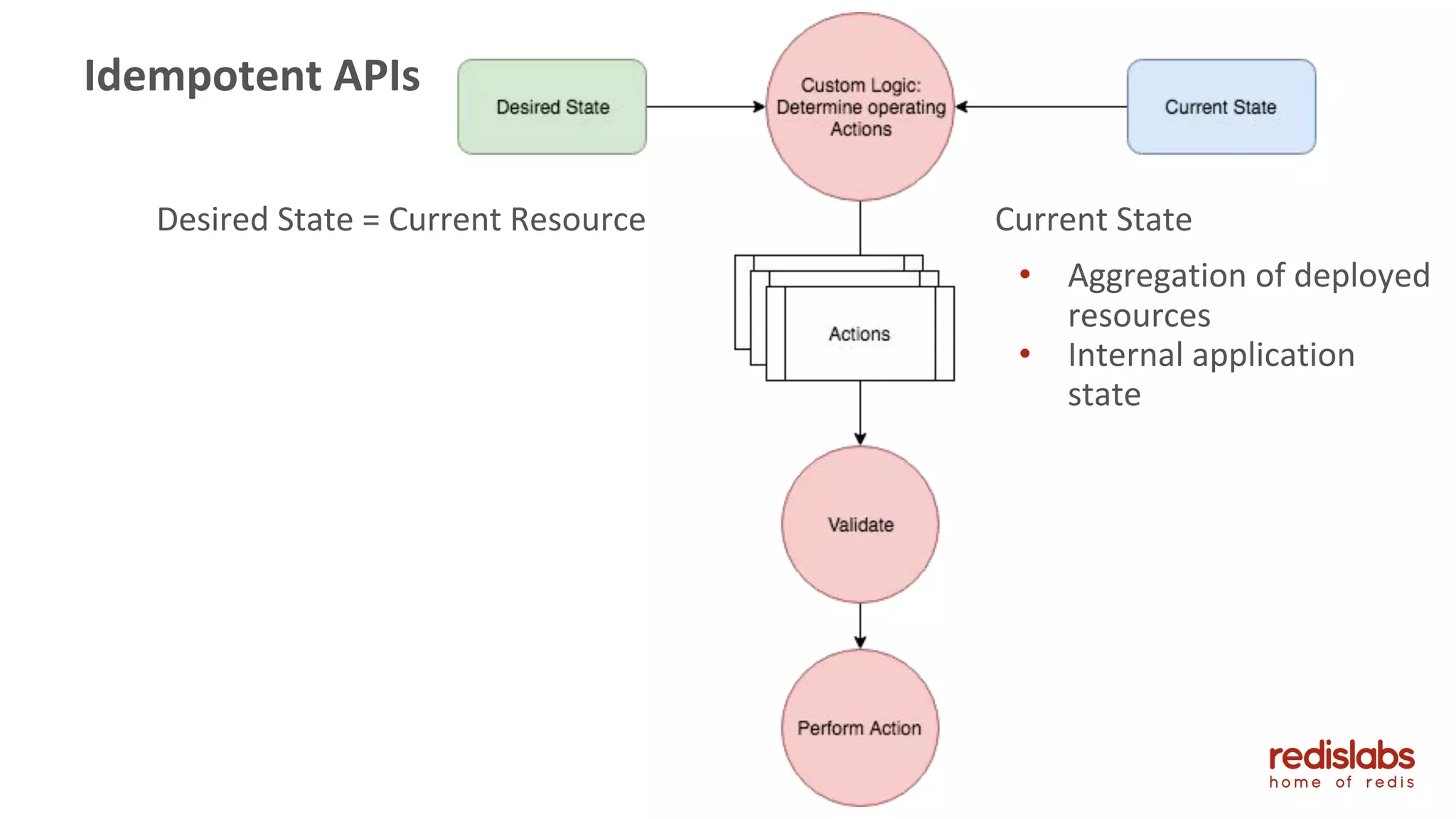

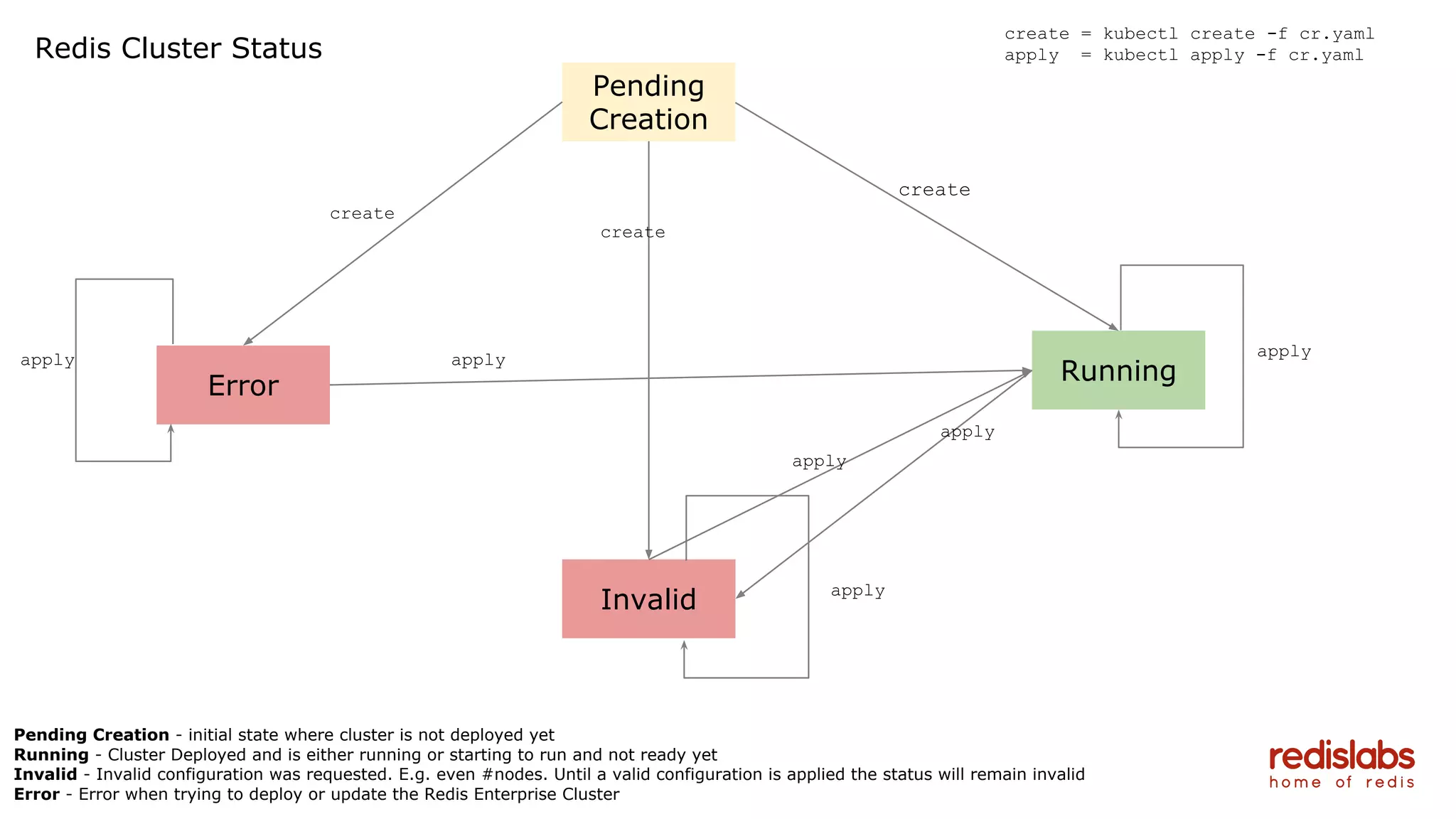

- Details on Redis Labs' operator development process and challenges in building idempotent APIs and handling state changes and validation in the reconciliation loop.
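As a rough illustration of the last point, a reconciliation loop compares the declared (desired) state with the observed state and acts only on the difference, so running it again is harmless. The sketch below is an assumption-laden toy, not Redis Labs' operator: `ClusterSpec`, `ClusterStatus`, their fields, and the validation rules are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ClusterSpec:
    """Desired state, as a user might declare it (hypothetical fields)."""
    replicas: int
    image_tag: str


@dataclass
class ClusterStatus:
    """Observed state, as an operator might read it back (hypothetical fields)."""
    replicas: int = 0
    image_tag: str = ""


def validate(spec: ClusterSpec) -> None:
    """Reject a spec that can never converge before acting on it."""
    if spec.replicas < 1:
        raise ValueError("replicas must be at least 1")
    if not spec.image_tag:
        raise ValueError("image_tag must not be empty")


def reconcile(spec: ClusterSpec, status: ClusterStatus) -> List[str]:
    """Move status toward spec and return the actions taken.

    Idempotent: once status matches spec, further calls return no actions.
    """
    validate(spec)
    actions: List[str] = []
    if status.image_tag != spec.image_tag:
        actions.append(f"roll image to {spec.image_tag}")
        status.image_tag = spec.image_tag  # stands in for a real rolling update
    if status.replicas != spec.replicas:
        actions.append(f"scale from {status.replicas} to {spec.replicas}")
        status.replicas = spec.replicas  # stands in for a real scale operation
    return actions


if __name__ == "__main__":
    spec = ClusterSpec(replicas=3, image_tag="5.0.2")
    status = ClusterStatus()
    print(reconcile(spec, status))  # first pass acts on the differences
    print(reconcile(spec, status))  # second pass finds nothing to do
```

Validating before acting keeps a bad spec from leaving the cluster half-changed, which is one way to read the "state changes and validation" challenge.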

![Slide 37: Helm template for the Redis Enterprise StatefulSet](https://image.slidesharecdn.com/k8smeetup-redisoperators-180718100601/75/Orchestrating-Redis-K8s-Operators-37-2048.jpg)

    apiVersion: apps/v1beta1
    kind: StatefulSet
    metadata:
      name: {{ template "redisenterprise.statefulsetName" . }}
      labels:
        app: {{ template "redisenterprise.name" . }}
        chart: {{ template "redisenterprise.chart" . }}
        release: {{ .Release.Name }}
        heritage: {{ .Release.Service }}
    spec:
      {{ if .Values.persistentVolume.enabled }}
      volumeClaimTemplates:
      - metadata:
          name: redisstorage
          labels:
            app: {{ template "redisenterprise.name" . }}
            chart: {{ template "redisenterprise.chart" . }}
            release: {{ .Release.Name }}
            heritage: {{ .Release.Service }}
        spec:
          accessModes: [ "ReadWriteOnce" ]
          resources:
            requests:
              storage: {{ .Values.persistentVolume.size | quote }}
          {{ if .Values.persistentVolume.storageClass }}
          {{ if (eq "" .Values.persistentVolume.storageClass) }}
          storageClassName: ""
          {{ else }}
          storageClassName: "{{ .Values.persistentVolume.storageClass }}"
          {{ end }}
          {{ end }}
      {{ end }}
      serviceName: {{ template "redisenterprise.fullname" . }}
      replicas: {{ .Values.replicas }}
      updateStrategy:
        type: "RollingUpdate"
      template:
        metadata:
          labels:
            redis.io/role: node
            app: {{ template "redisenterprise.name" . }}
            chart: {{ template "redisenterprise.chart" . }}
            release: {{ .Release.Name }}
            heritage: {{ .Release.Service }}
        spec:
          {{ with .Values.nodeSelector }}
          nodeSelector:
    {{ toYaml . | indent 8 }}
          {{ end }}
          affinity:
            podAntiAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
              - labelSelector:
                  matchExpressions:
                  - key: app
                    operator: In
                    values:
                    - {{ template "redisenterprise.name" . }}
                  - key: release
                    operator: In
                    values:
                    - {{ .Release.Name }}
                  - key: redis.io/role
                    operator: In
                    values:
                    - node
                topologyKey: kubernetes.io/hostname
          terminationGracePeriodSeconds: 31536000
          serviceAccountName: {{ template "redisenterprise.serviceAccountName" . }}
          {{ with .Values.imagePullSecrets }}
          imagePullSecrets:
    {{ toYaml . | indent 8 }}
          {{ end }}
          containers:
          - name: redis
            image: {{ .Values.redisImage.repository }}:{{ .Values.redisImage.tag }}
            imagePullPolicy: {{ .Values.redisImage.pullPolicy }}
            readinessProbe:
              exec:
                command:
                # check that the node is bootstrapped and that its connected and synced.
                - bash
                - -c
                - curl --silent localhost:8080/v1/bootstrap && /opt/redislabs/bin/rladmin status nodes | grep node:$(cat /etc/opt/redislabs/node.id) | grep OK
              initialDelaySeconds: 20
              timeoutSeconds: 5
            lifecycle:
              preStop:
                exec:
                  command:
                  # enslave the node, if this current node is master, change the master to the first slave node.
                  - bash
                  - -c
                  - /opt/redislabs/bin/rladmin node $(cat /etc/opt/redislabs/node.id) enslave && ((/opt/redislabs/bin/rladmin status nodes | grep node:$(cat /etc/opt/redislabs/node.id) | grep -q master) && /opt/redislabs/bin/rlutil change_master master=$(/opt/redislabs/bin/rladmin status nodes | grep slave | head -1 | cut -d " " -f 1| cut -d ":" -f2) && sleep 10) || /bin/true
            resources:
    {{ toYaml .Values.redisResources | indent 10 }}
            ports:
            - containerPort: 8001
            - containerPort: 8443
            - containerPort: 9443
            securityContext:
              capabilities:
                add:
                - SYS_RESOURCE
            {{ if .Values.persistentVolume.enabled }}
            volumeMounts:
            - mountPath: "/opt/persistent"
              name: redisstorage
            {{ end }}
            env:
            - name: K8S_ORCHASTRATED_DEPLOYMENT
              value: "yes"
            - name: JOIN_HOSTNAME
              value: {{ template "redisenterprise.fullname" . }}
            {{ if .Values.persistentVolume.enabled }}
            - name: PERSISTANCE_PATH
              value: /opt/persistent
            {{ end }}
            - name: K8S_SERVICE_NAME
              value: {{ template "redisenterprise.fullname" . }}
            - name: BOOTSTRAP_HANDLE_REDIRECTS
              value: "enabled"
            - name: BOOTSTRAP_CLUSTER_FQDN
              value: {{ template "redisenterprise.clusterDNS" . }}
            - name: BOOTSTRAP_DMC_THREADS
              value: "10"
            - name: BOOTSTRAP_USERNAME
              valueFrom:
                secretKeyRef:
                  name: {{ template "redisenterprise.fullname" . }}
                  key: username
            - name: BOOTSTRAP_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: {{ template "redisenterprise.fullname" . }}
                  key: password
            - name: BOOTSTRAP_LICENSE
              valueFrom:
                secretKeyRef:
                  name: {{ template "redisenterprise.fullname" . }}
                  key: license
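The `podAntiAffinity` rule in the slide 37 template, with `topologyKey: kubernetes.io/hostname`, keeps two Redis Enterprise node pods off the same host. The sketch below mimics that check in plain Python to show the effect. It is a simplified model, not the Kubernetes scheduler: it handles only `In` expressions, and the host names and label values are hypothetical.

```python
from typing import Dict, List

Labels = Dict[str, str]


def matches_selector(labels: Labels, match_expressions: List[dict]) -> bool:
    """True when the labels satisfy every expression (they are ANDed).

    Simplification: every expression is assumed to use the `In` operator,
    as in the template; a missing label never matches.
    """
    return all(labels.get(expr["key"]) in expr["values"] for expr in match_expressions)


def schedulable_hosts(
    pods_by_host: Dict[str, List[Labels]],
    match_expressions: List[dict],
) -> List[str]:
    """Return the hosts that run no pod matching the anti-affinity selector."""
    return [
        host
        for host, pods in pods_by_host.items()
        if not any(matches_selector(pod, match_expressions) for pod in pods)
    ]


if __name__ == "__main__":
    # Same three keys as the template; the values are hypothetical.
    selector = [
        {"key": "app", "operator": "In", "values": ["redis-enterprise"]},
        {"key": "release", "operator": "In", "values": ["demo"]},
        {"key": "redis.io/role", "operator": "In", "values": ["node"]},
    ]
    cluster = {
        "host-a": [{"app": "redis-enterprise", "release": "demo", "redis.io/role": "node"}],
        "host-b": [{"app": "web"}],
        "host-c": [],
    }
    print(schedulable_hosts(cluster, selector))
```

Because the rule is `requiredDuringScheduling`, a pod with no eligible host stays pending, so the cluster needs at least as many hosts as replicas.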