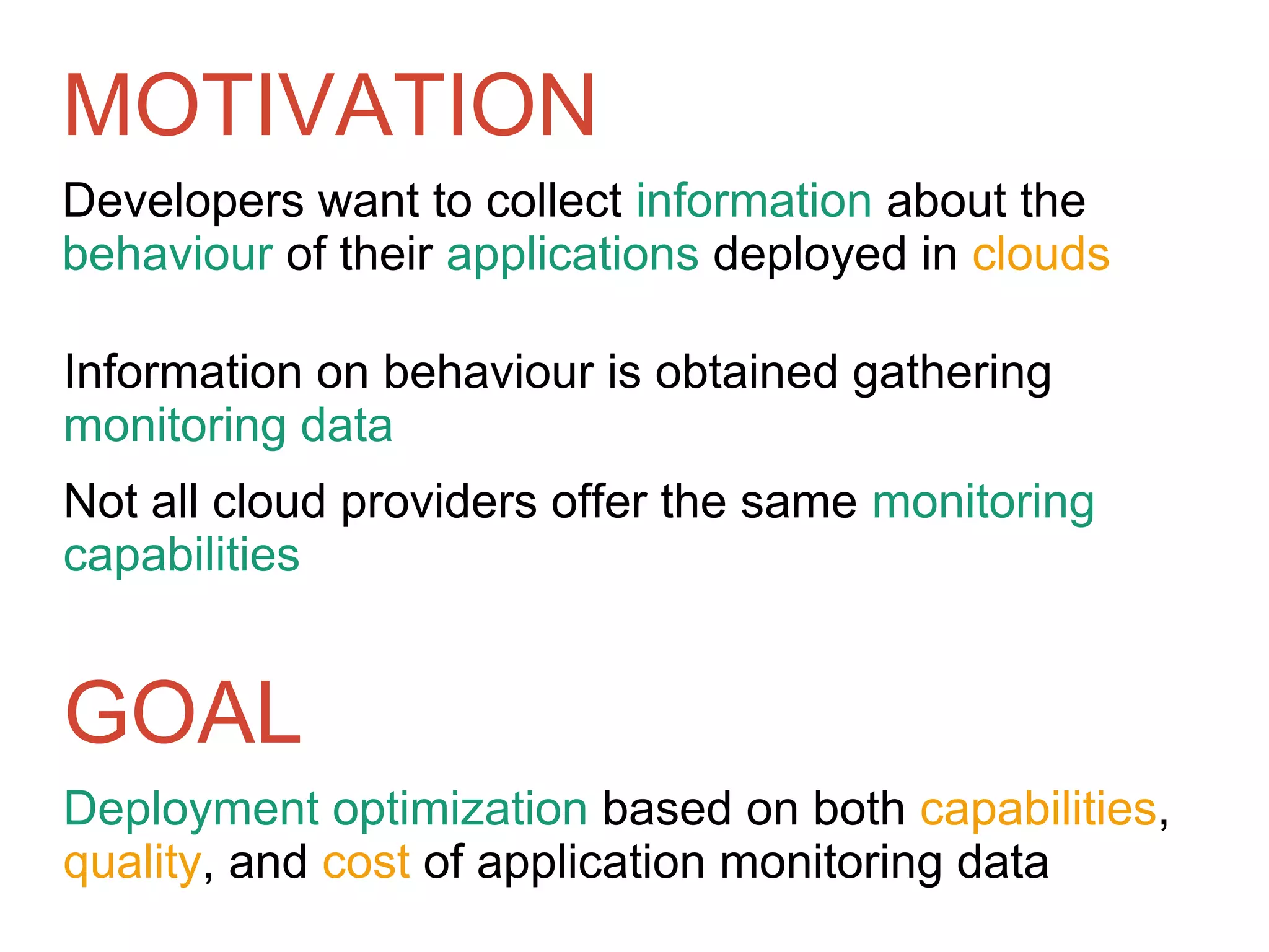

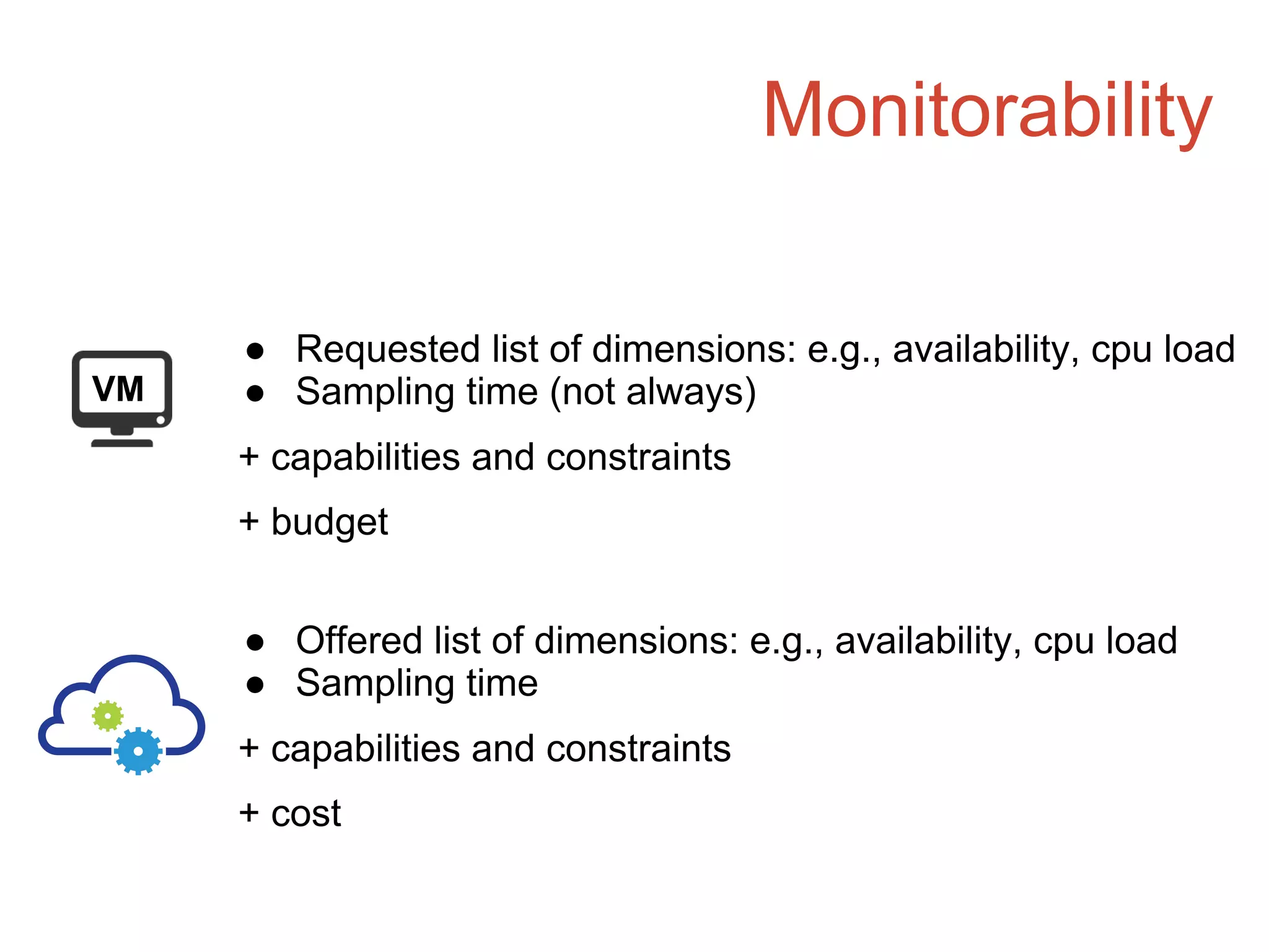

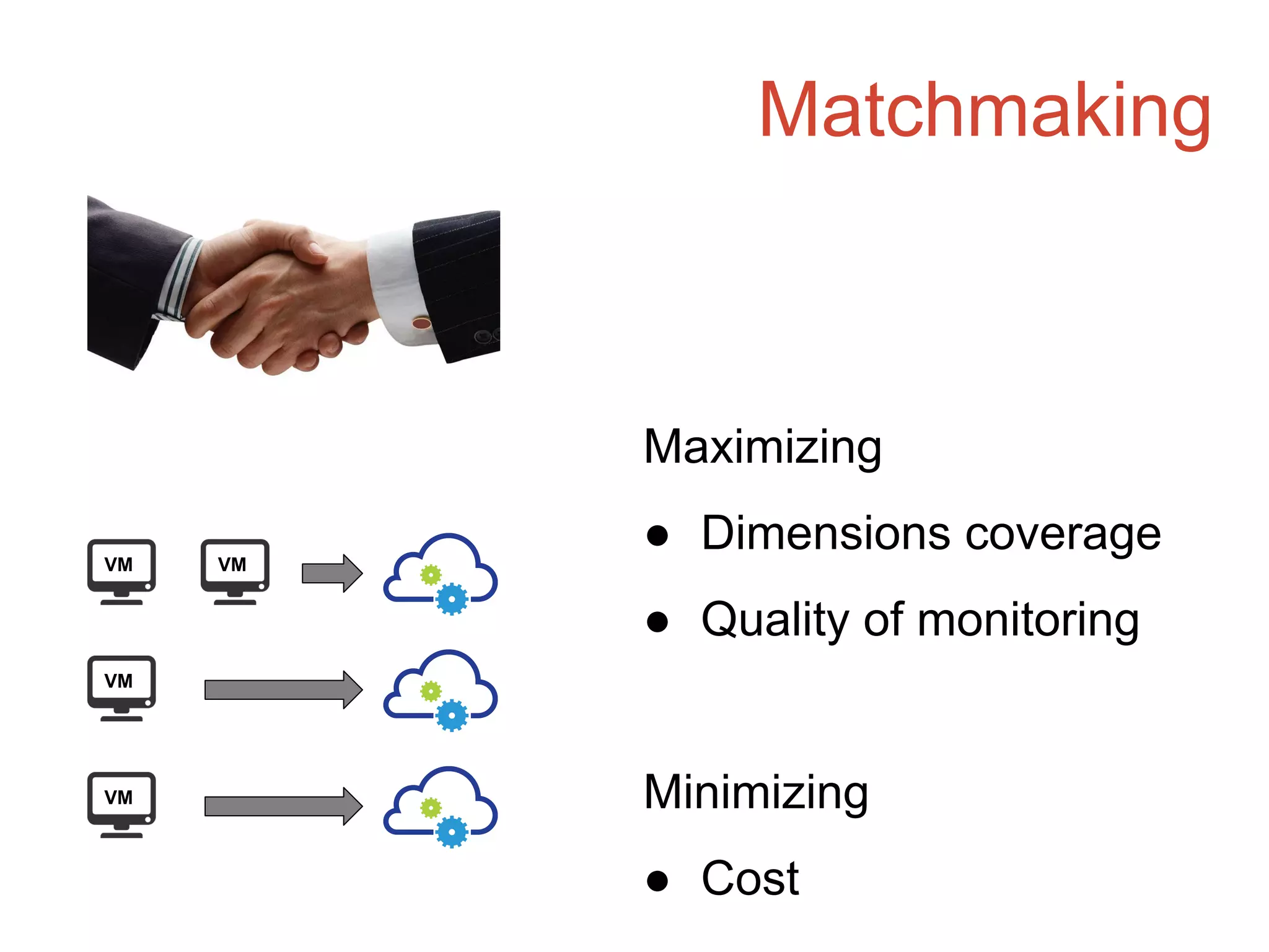

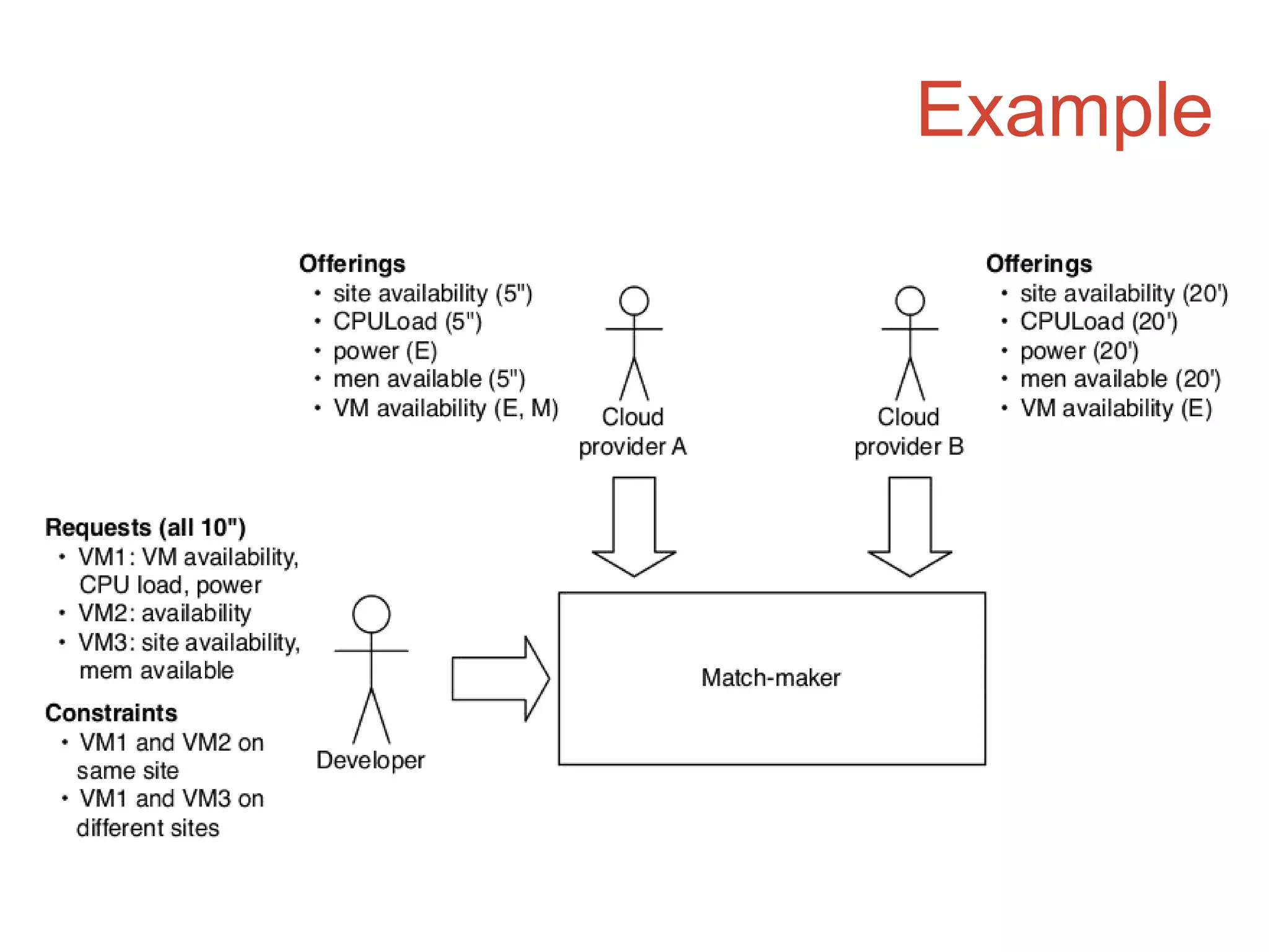

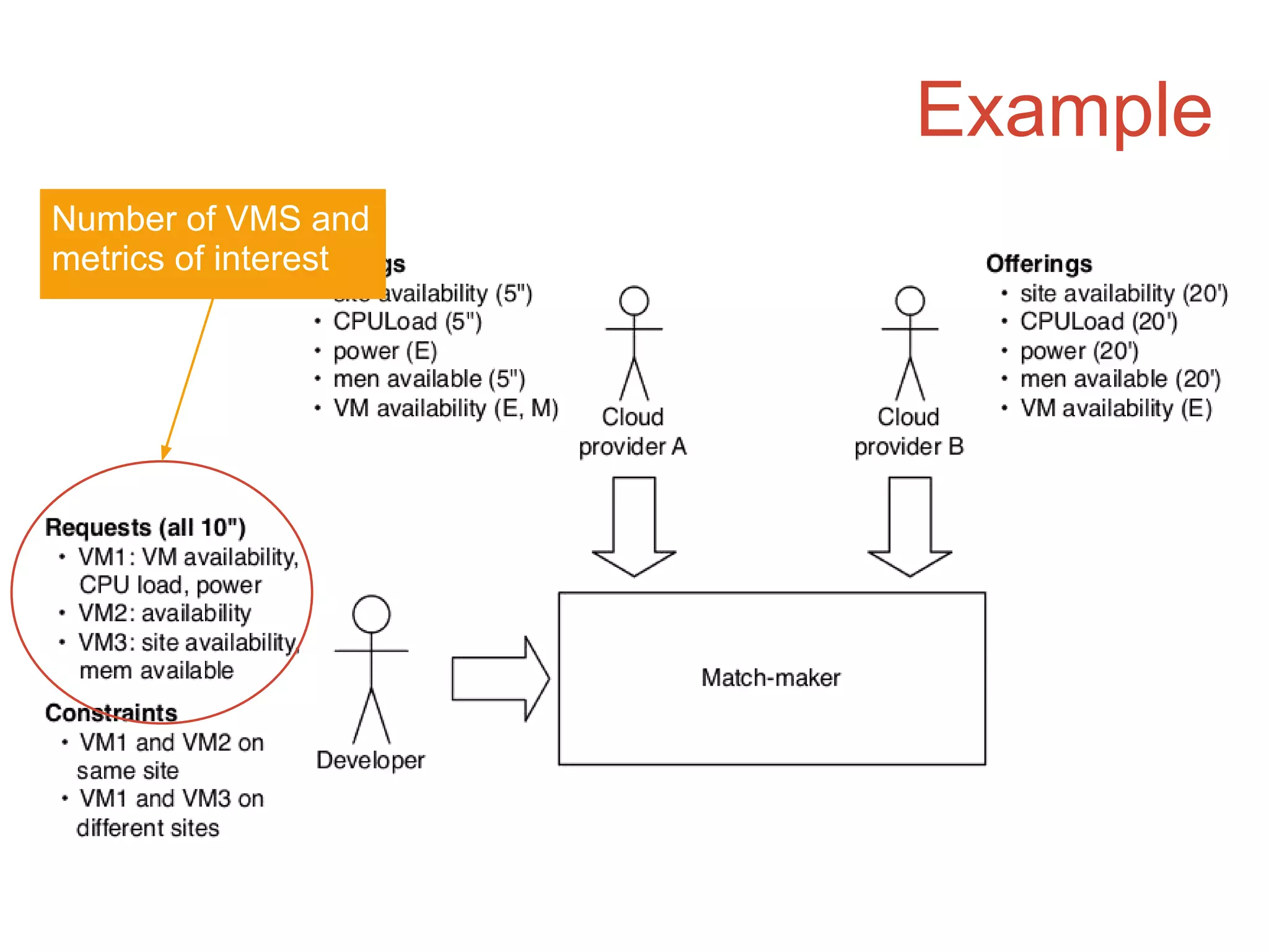

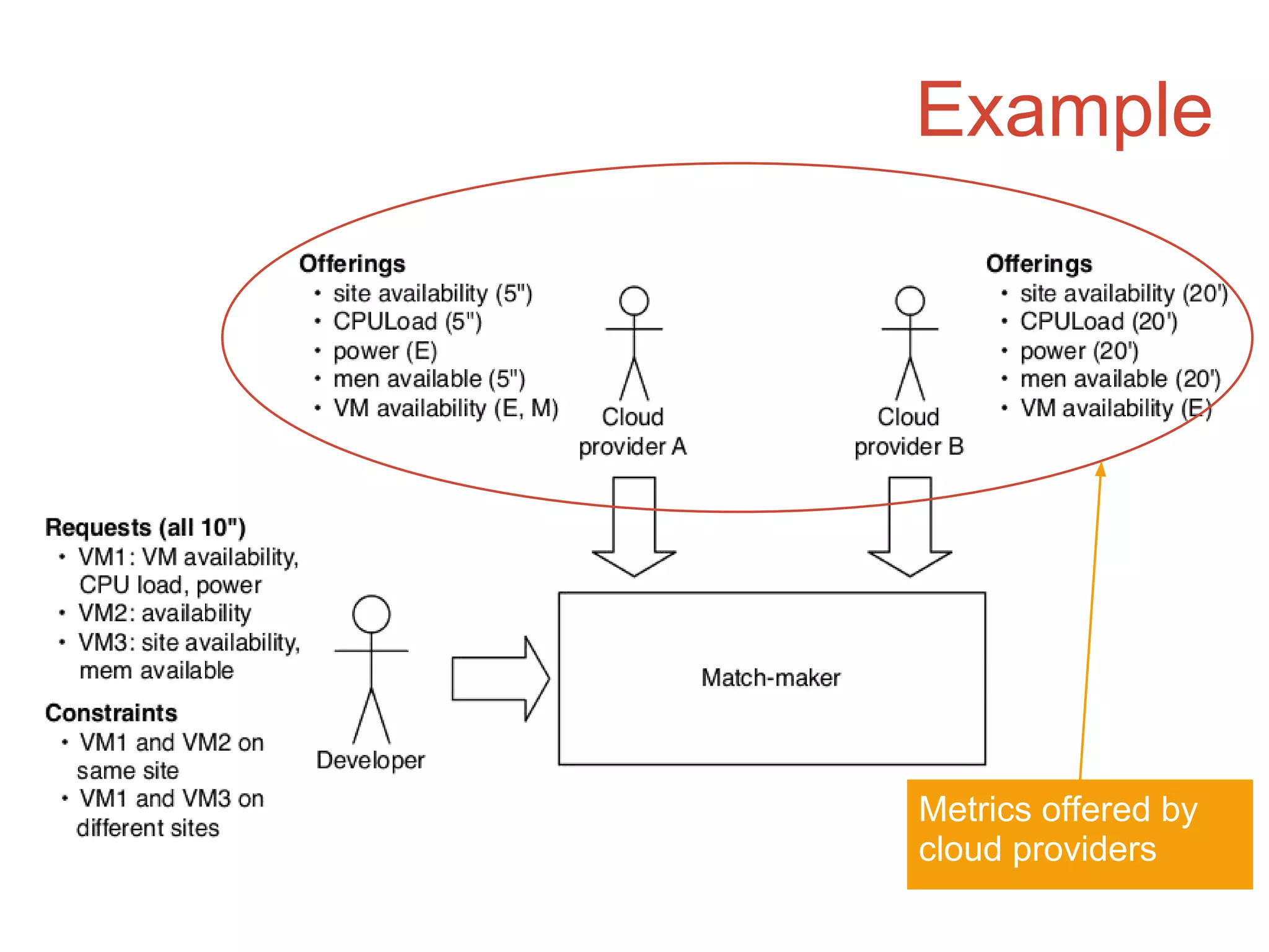

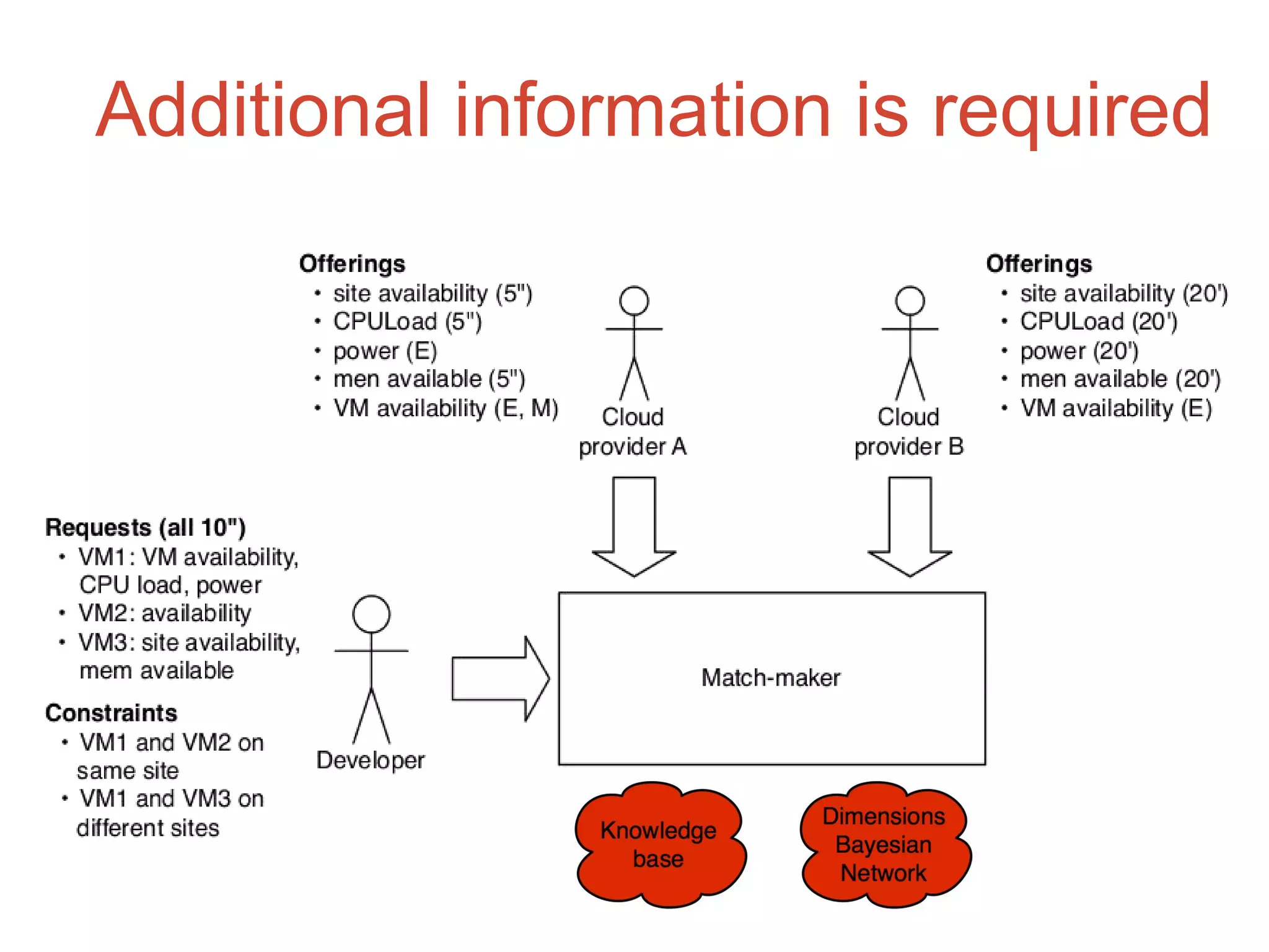

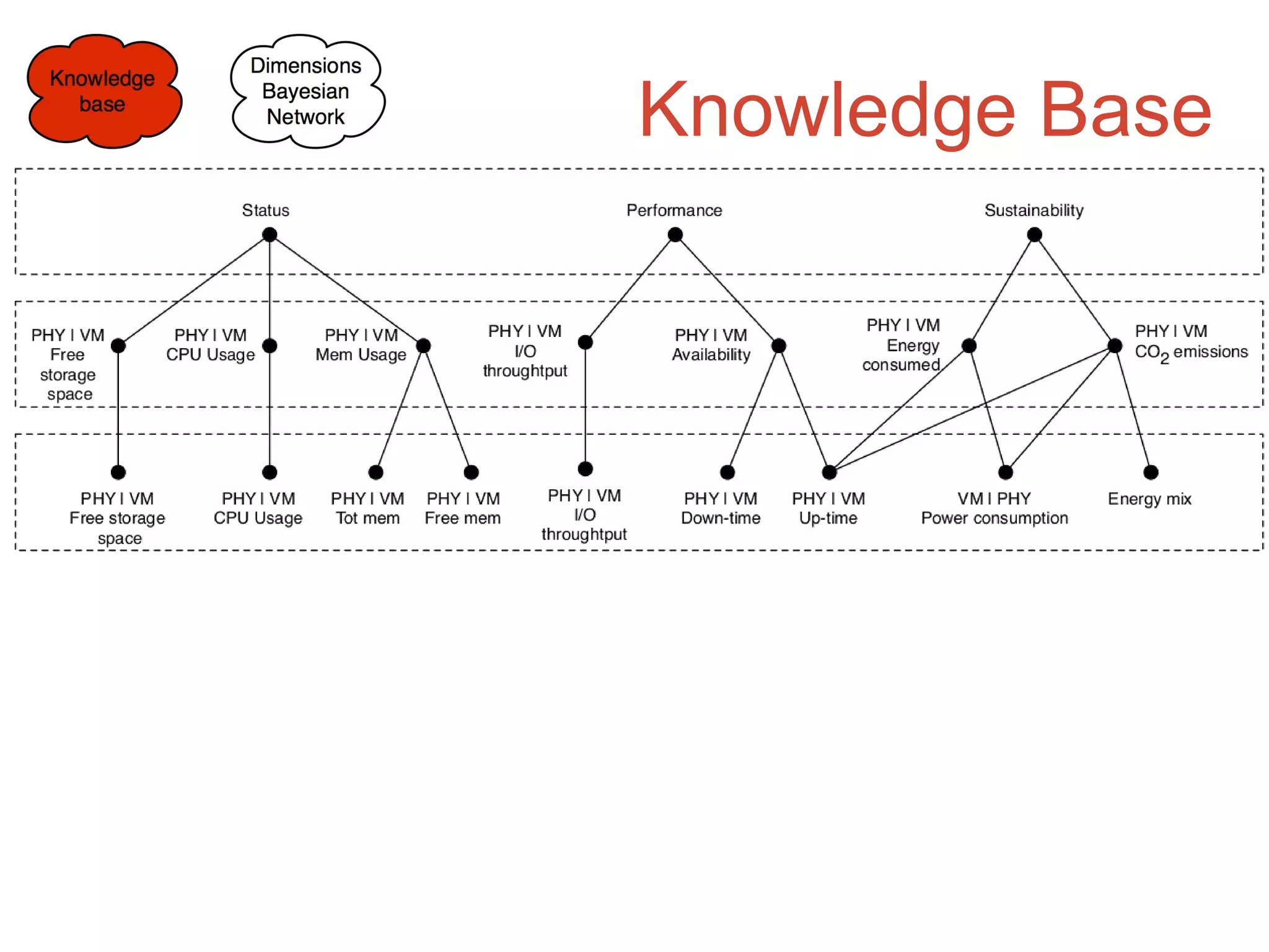

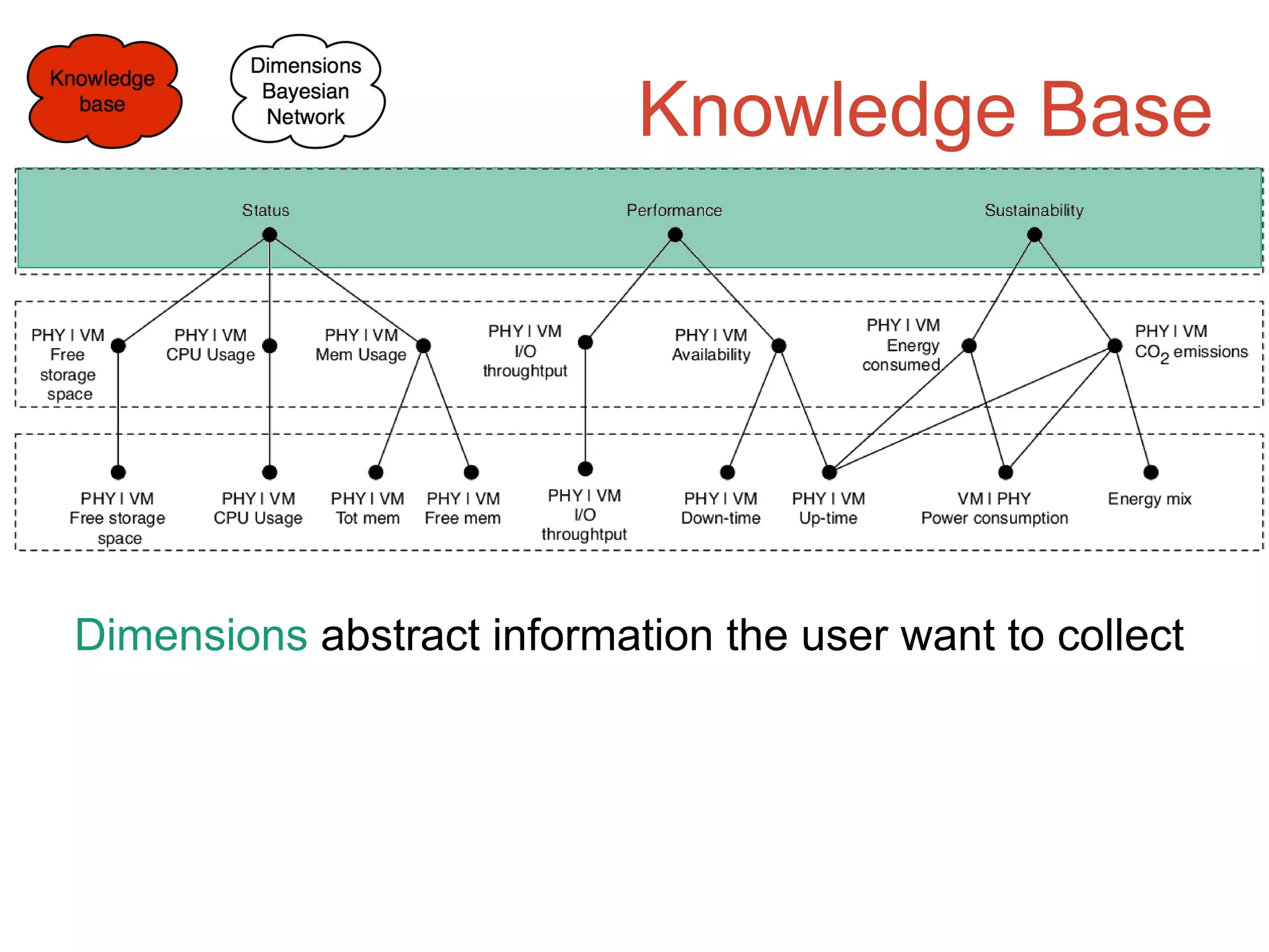

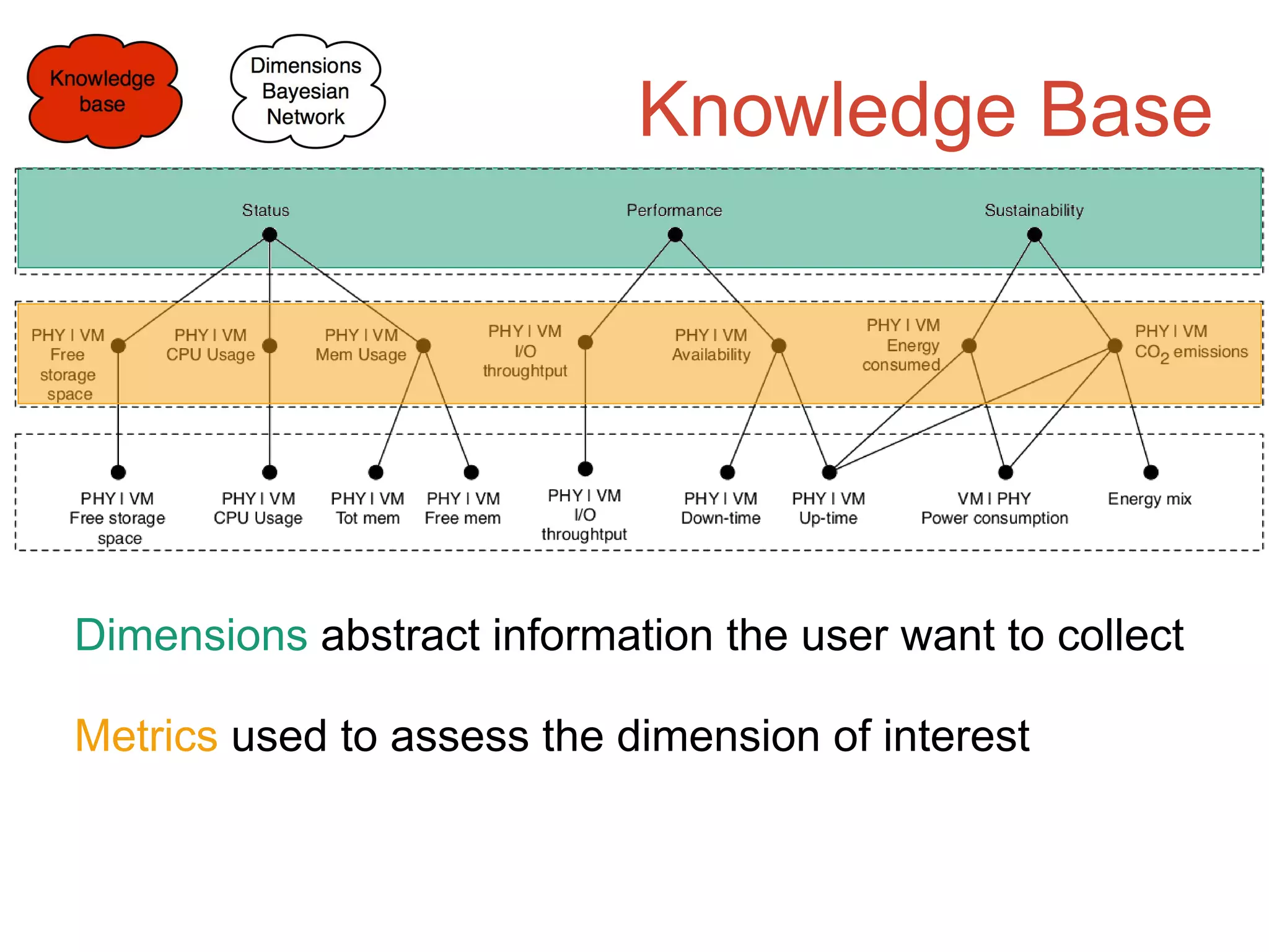

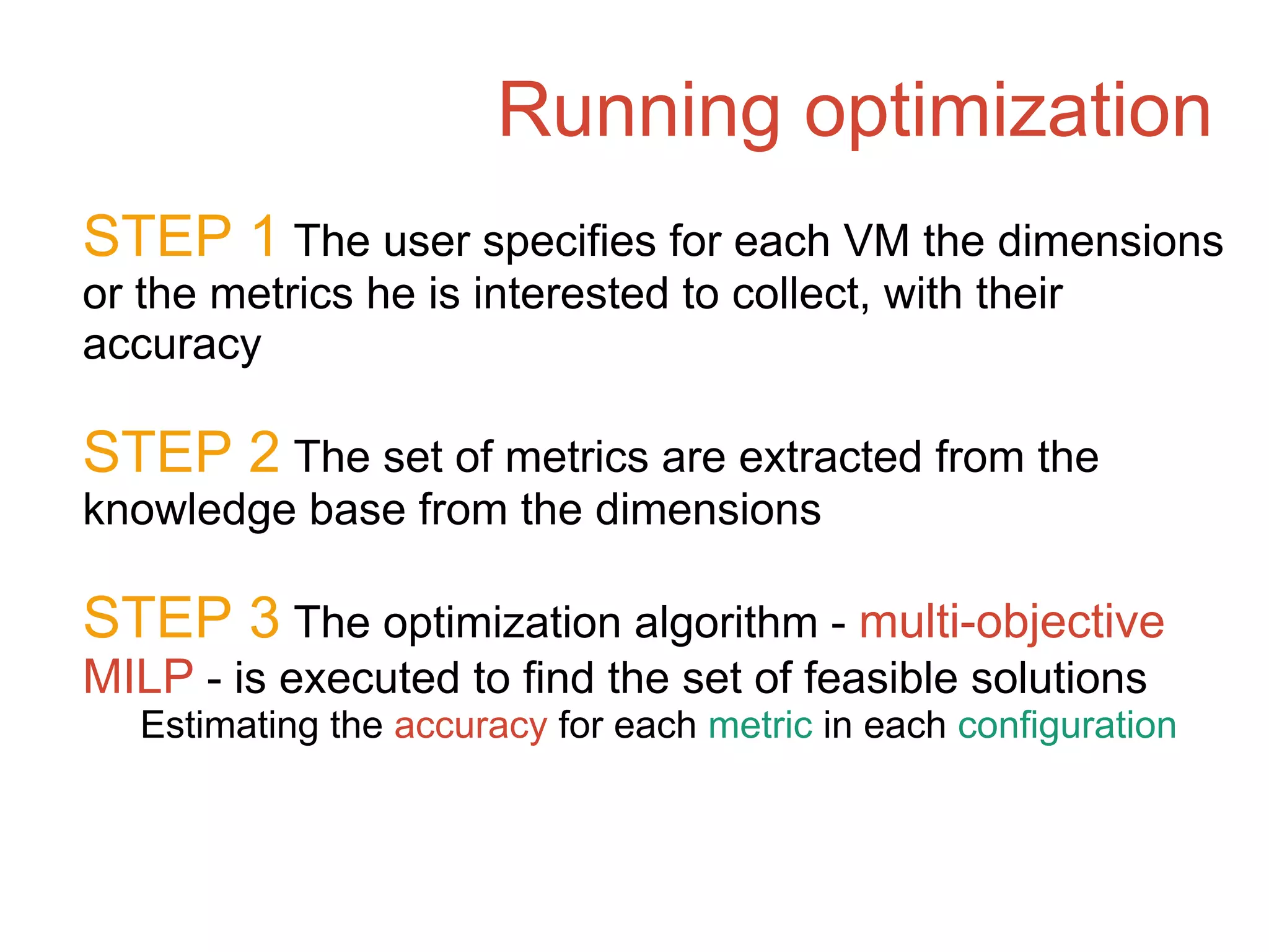

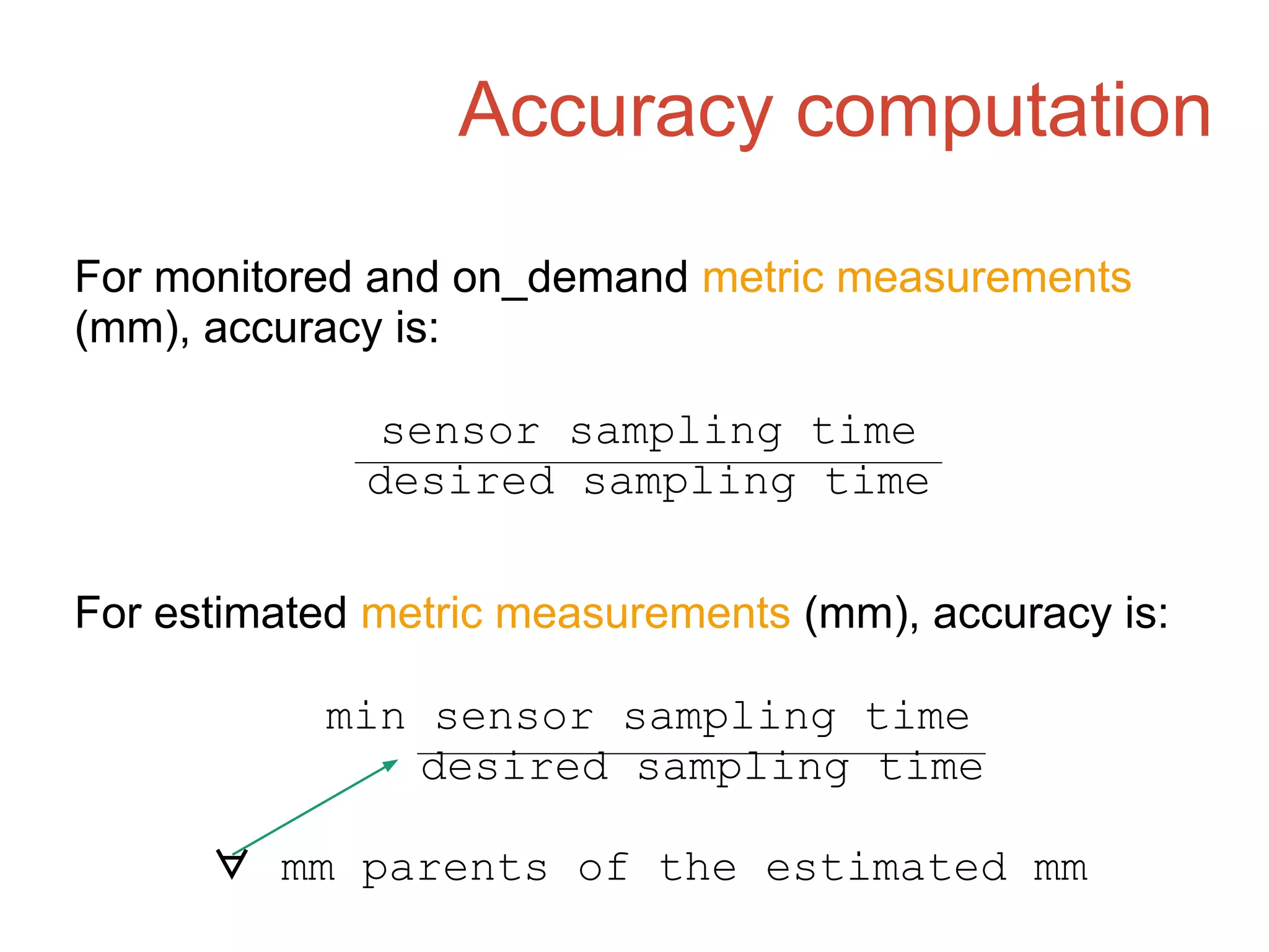

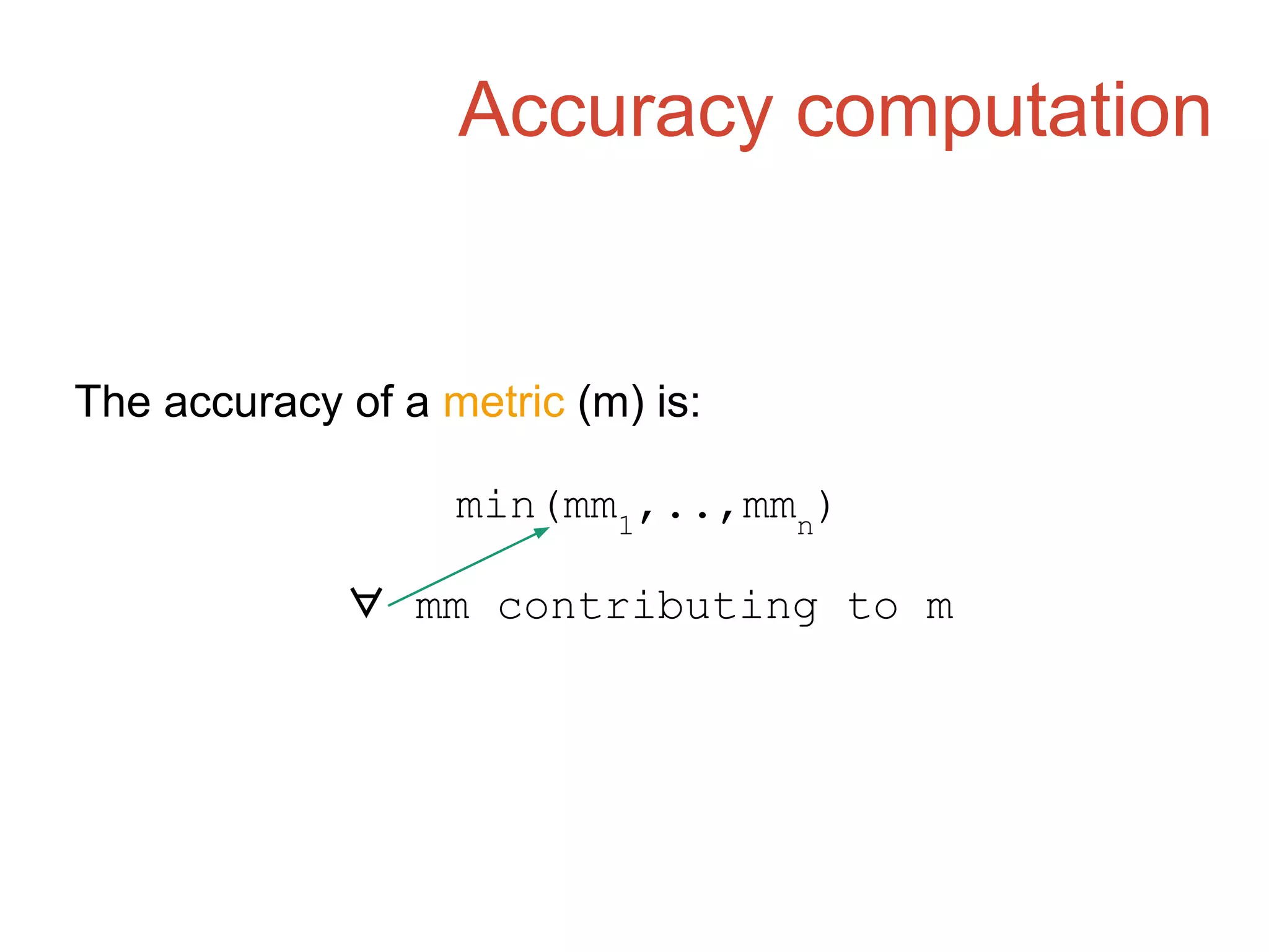

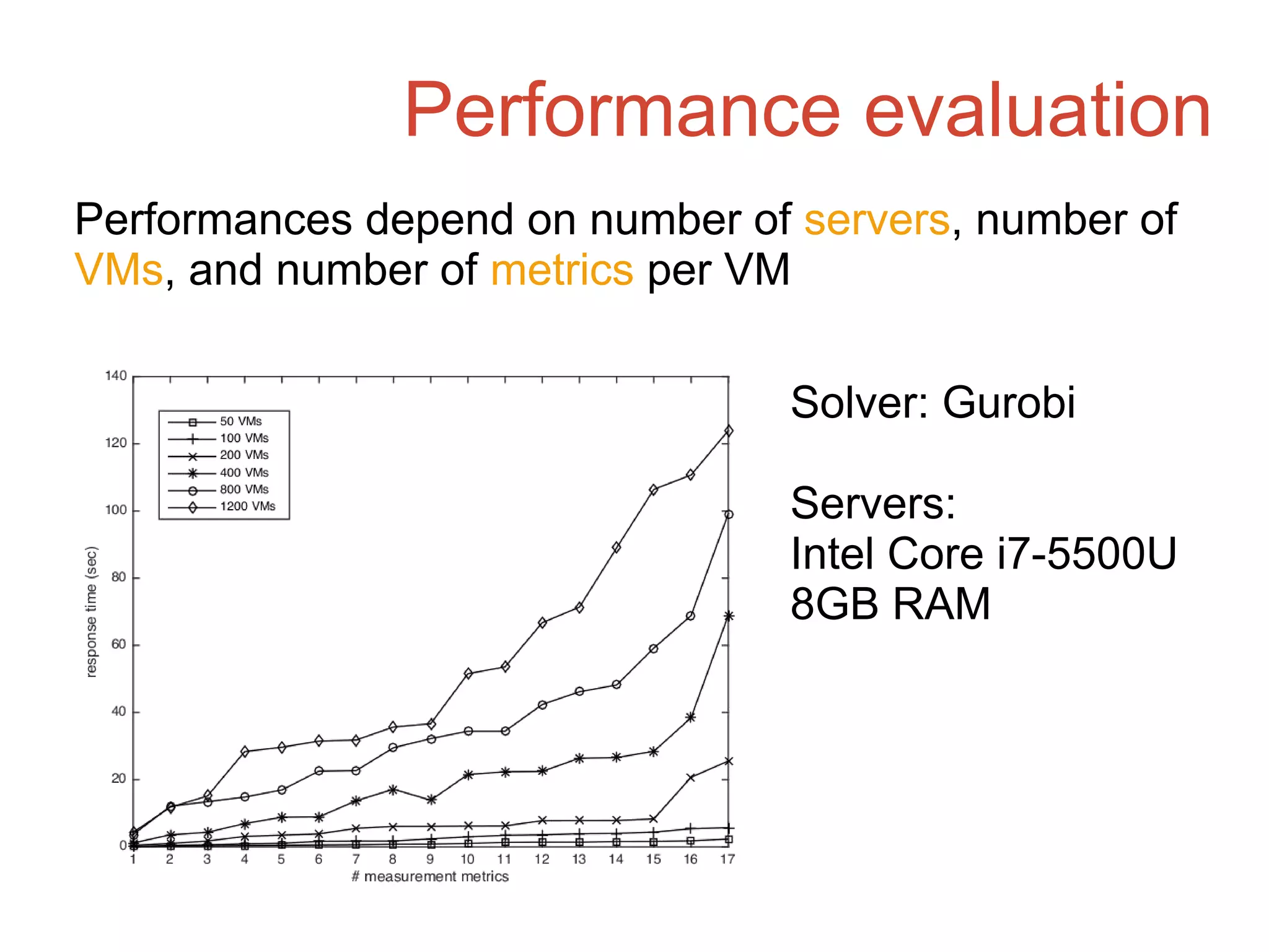

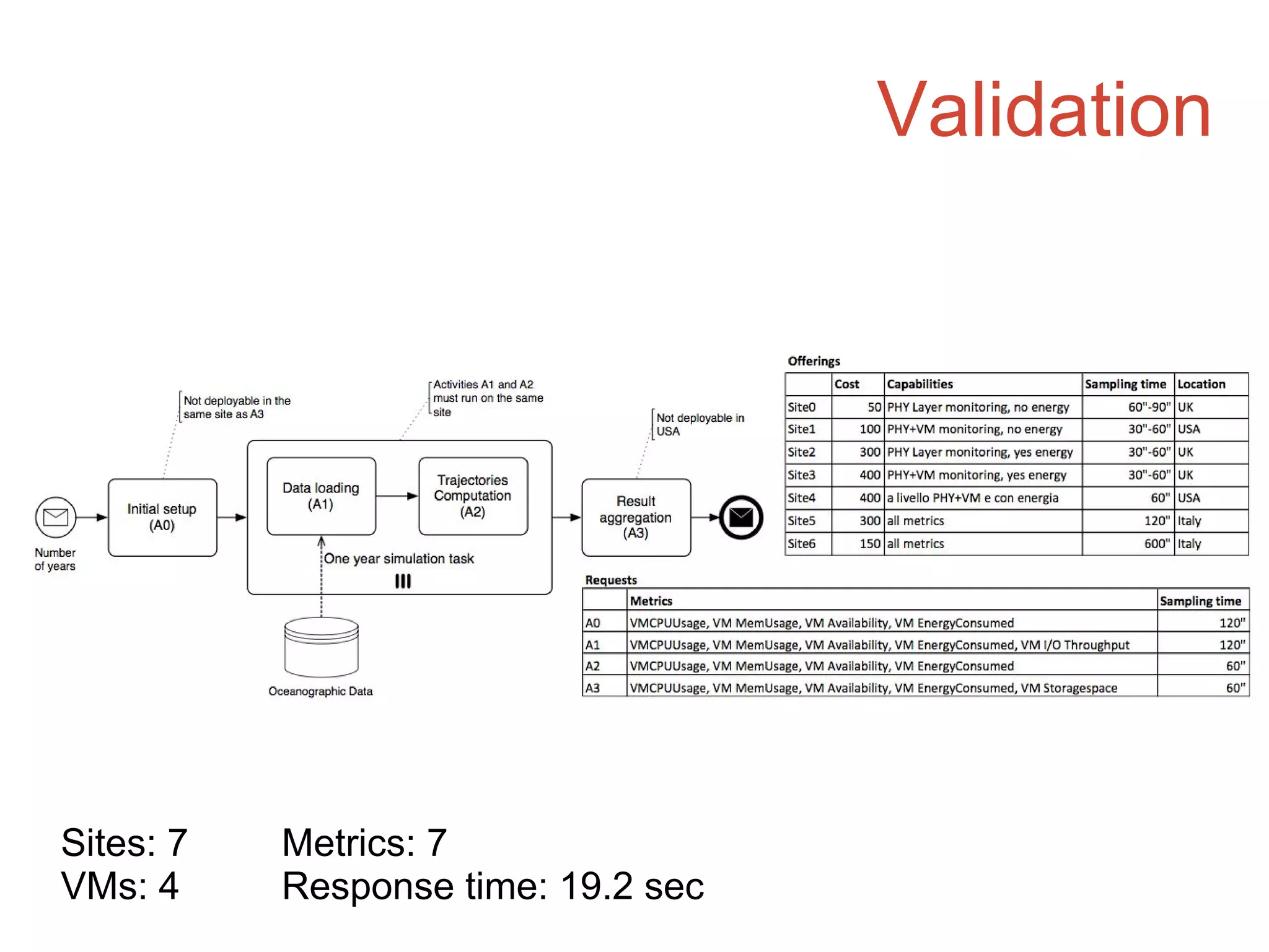

This document proposes an approach to optimizing the monitorability of applications deployed across multiple cloud providers. The goal is to maximize the number of application metrics that can be monitored while minimizing cost, given each provider's monitoring capabilities. A matchmaking algorithm selects the best deployment by weighing users' monitoring requirements against the metrics each provider offers and their costs, and by estimating metrics that cannot be measured directly. The approach was validated on a sample deployment, with response times of around 19 seconds for optimizing the monitoring of 4 VMs with 7 metrics each across 7 cloud providers.
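
To make the matchmaking idea concrete, the following is a minimal sketch and not the algorithm from the document itself. It assumes that each VM is placed independently, that a provider charges a flat price per metric, and that an unmeasurable metric can be estimated whenever all of its input metrics are offered by the same provider. The provider names, metric names, prices, and the `estimators` mapping are all hypothetical illustration data.

```python
def score(required, offered, estimators):
    """Return (covered_count, cost) for one VM on one provider.

    required   -- list of metric names the user wants monitored
    offered    -- dict: metric name -> price charged by the provider (assumed model)
    estimators -- dict: metric name -> set of input metrics it can be estimated from
    """
    direct = {m for m in required if m in offered}
    estimated = {
        m for m in required
        if m not in offered
        and m in estimators
        and estimators[m] <= set(offered)
    }
    # Inputs used for estimation have to be collected, so they are paid for too.
    billed = set(direct)
    for m in estimated:
        billed |= estimators[m]
    cost = sum(offered[m] for m in billed)
    return len(direct) + len(estimated), cost


def match(vm_requirements, providers, estimators):
    """Pick, per VM, the provider with the most covered metrics, then the lowest cost."""
    plan = {}
    for vm, required in vm_requirements.items():
        def rank(name):
            covered, cost = score(required, providers[name], estimators)
            return (-covered, cost, name)  # name breaks ties deterministically

        best = min(providers, key=rank)
        covered, cost = score(required, providers[best], estimators)
        plan[vm] = (best, covered, cost)
    return plan


# Hypothetical input data, for illustration only.
providers = {
    "A": {"cpu": 1.0, "memory": 1.0, "disk": 2.0},
    "B": {"cpu": 0.5, "memory": 0.5, "requests": 1.0, "latency": 1.0},
}
estimators = {"throughput": {"requests", "latency"}}
vm_requirements = {
    "vm1": ["cpu", "memory", "throughput"],
    "vm2": ["cpu", "disk"],
}

plan = match(vm_requirements, providers, estimators)
for vm, (provider, covered, cost) in plan.items():
    print(vm, provider, covered, cost)
```

In this sketch, coverage takes strict priority over cost. A weighted combination of the two would be an equally plausible design, and placement constraints that couple VMs together would require searching over joint assignments rather than choosing per VM.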