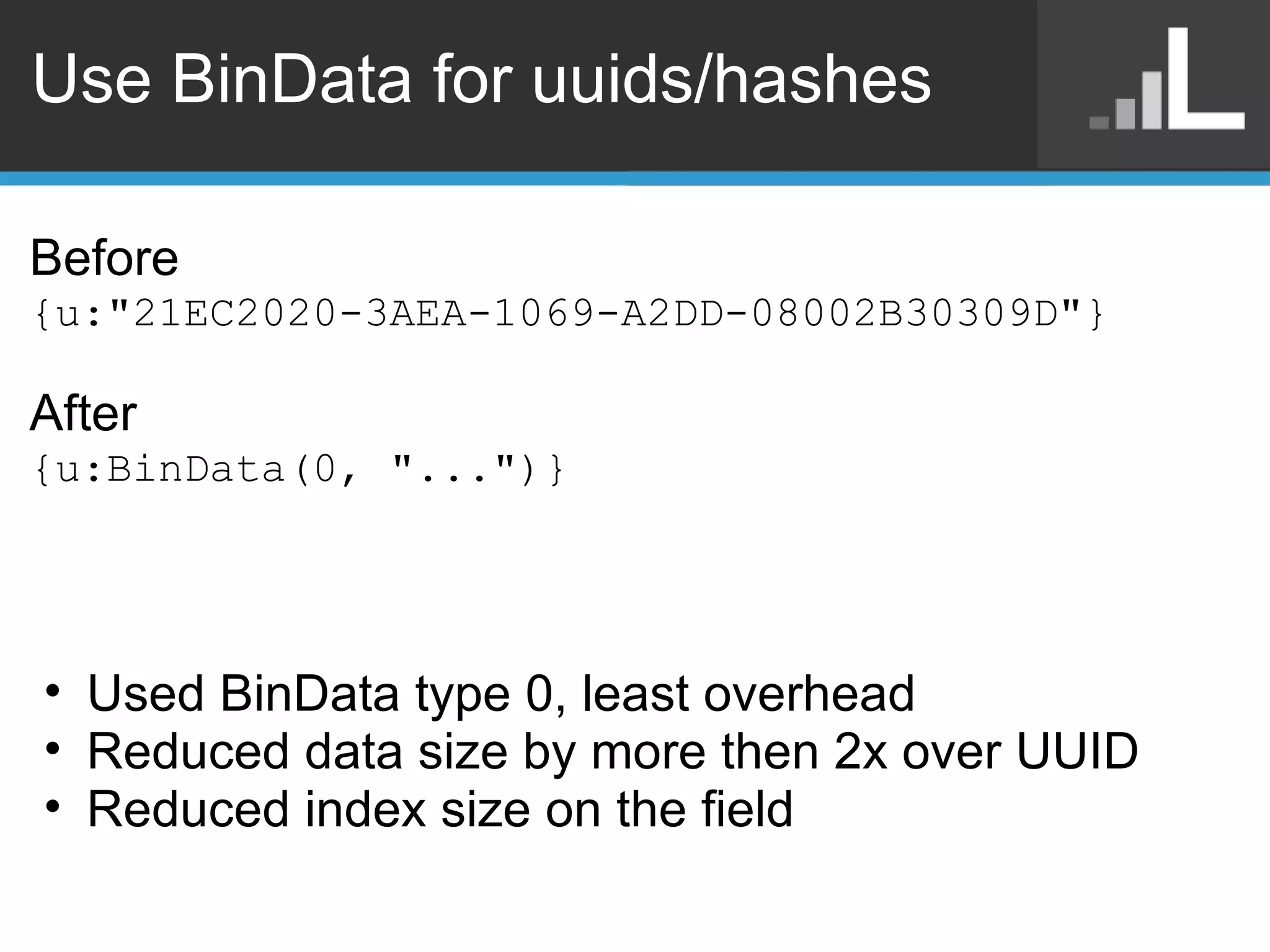

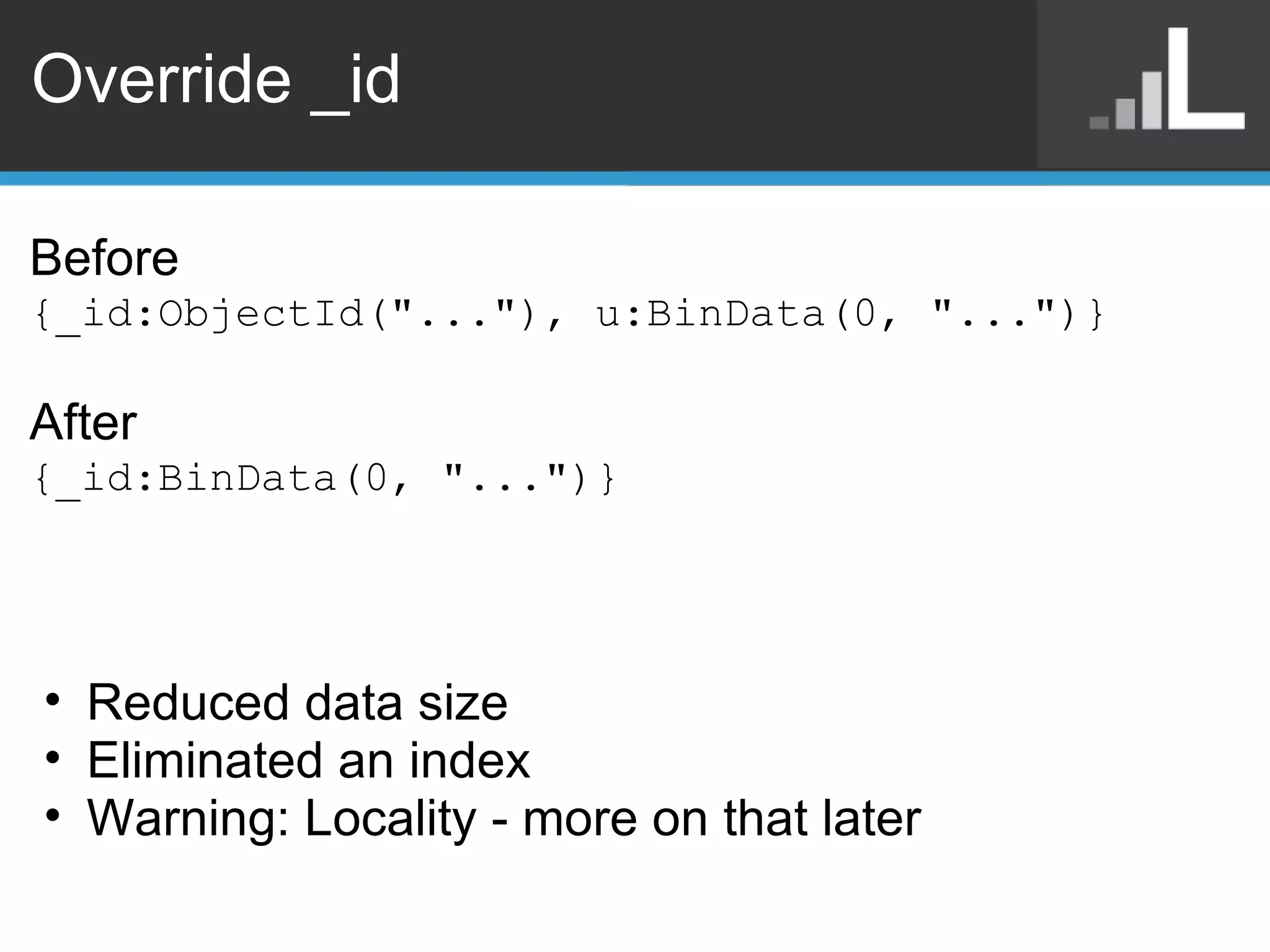

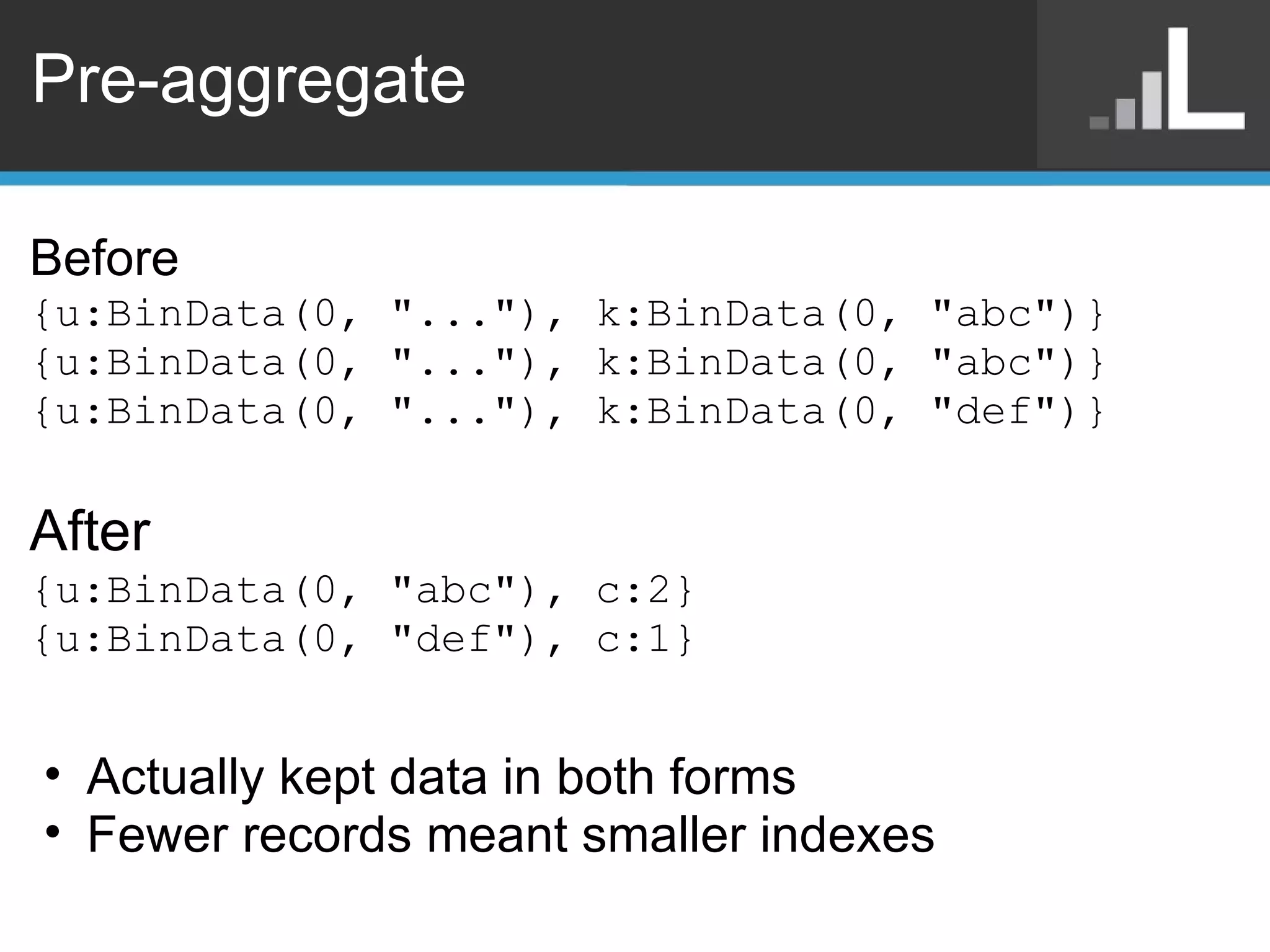

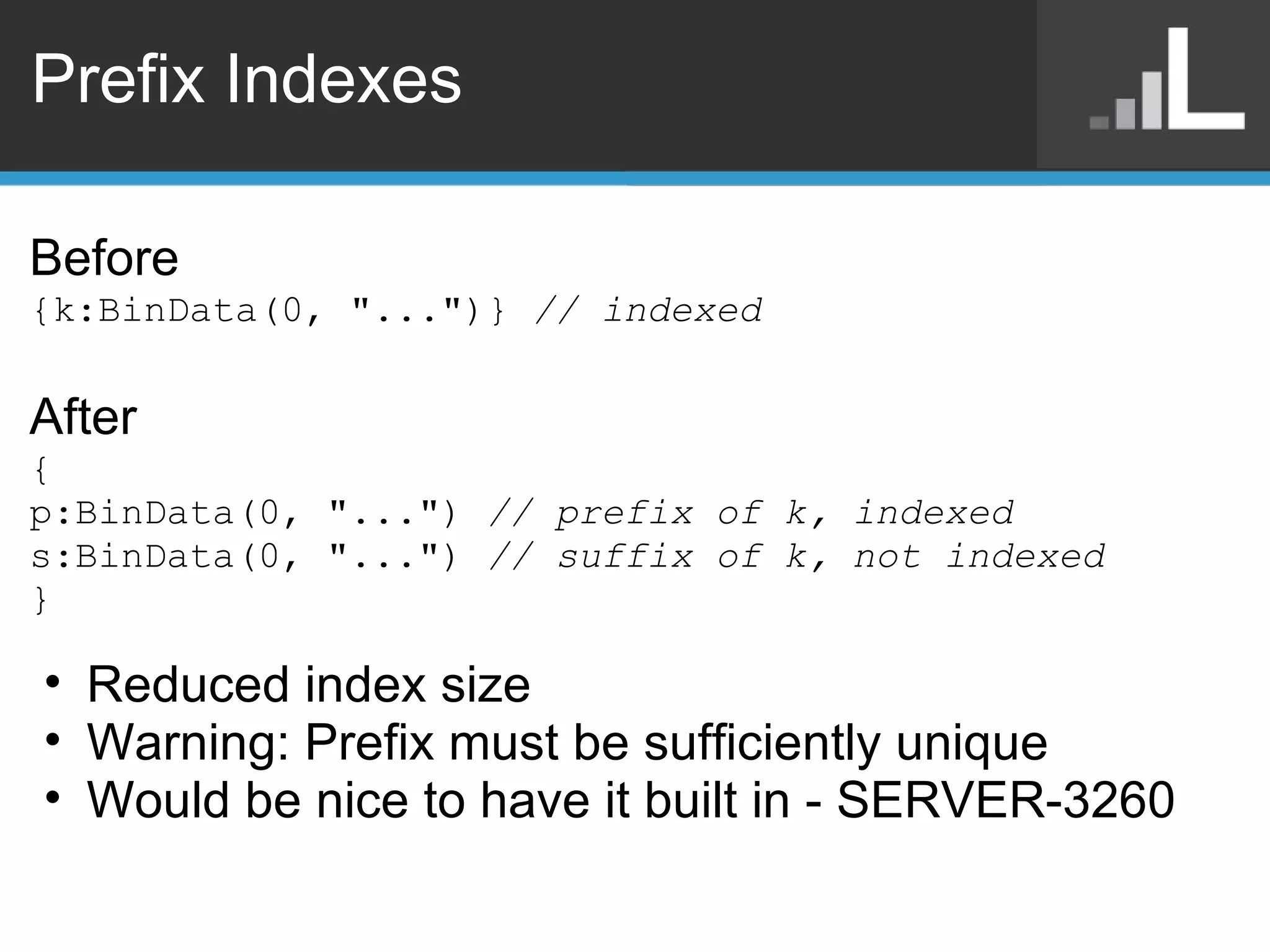

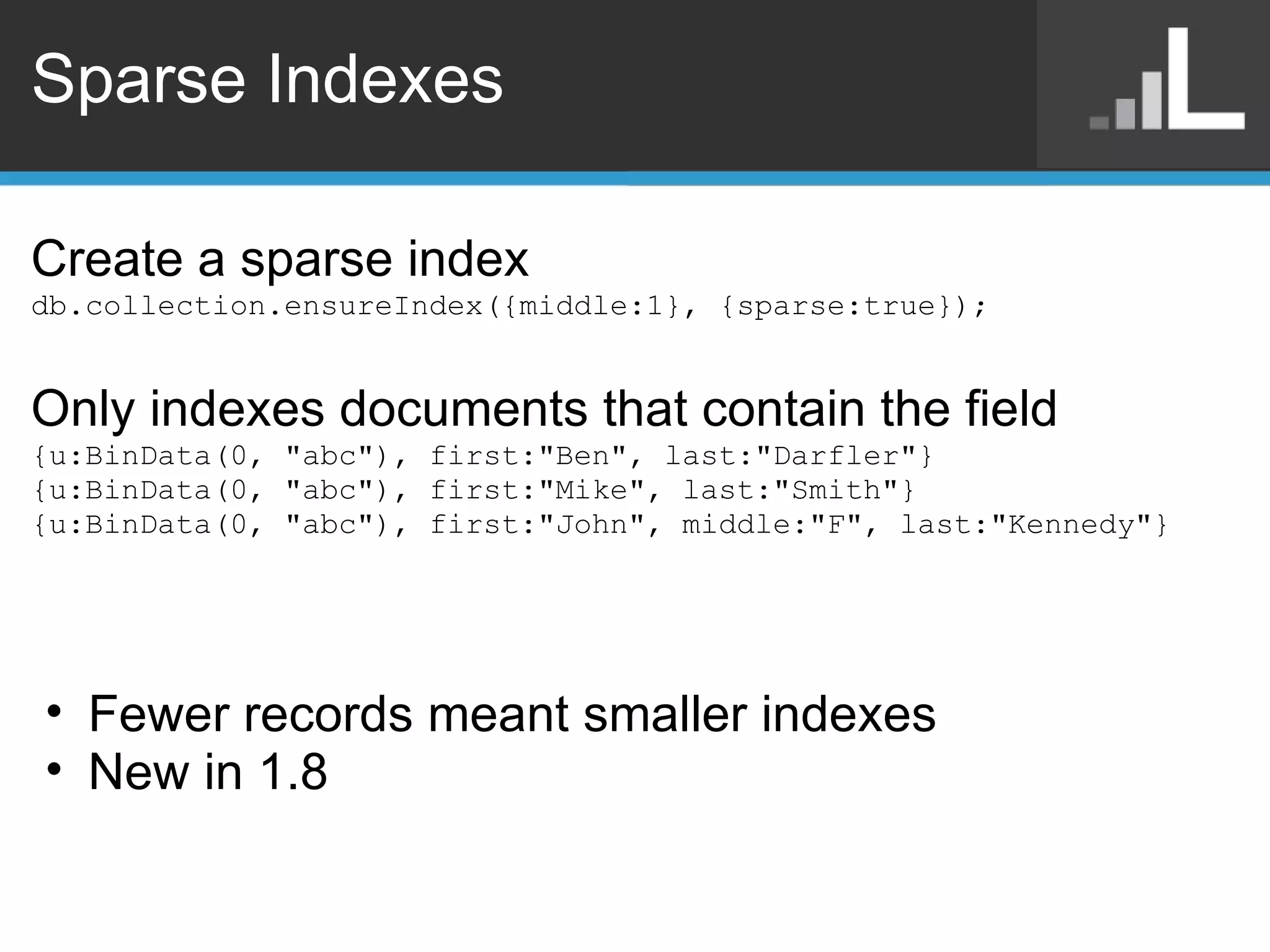

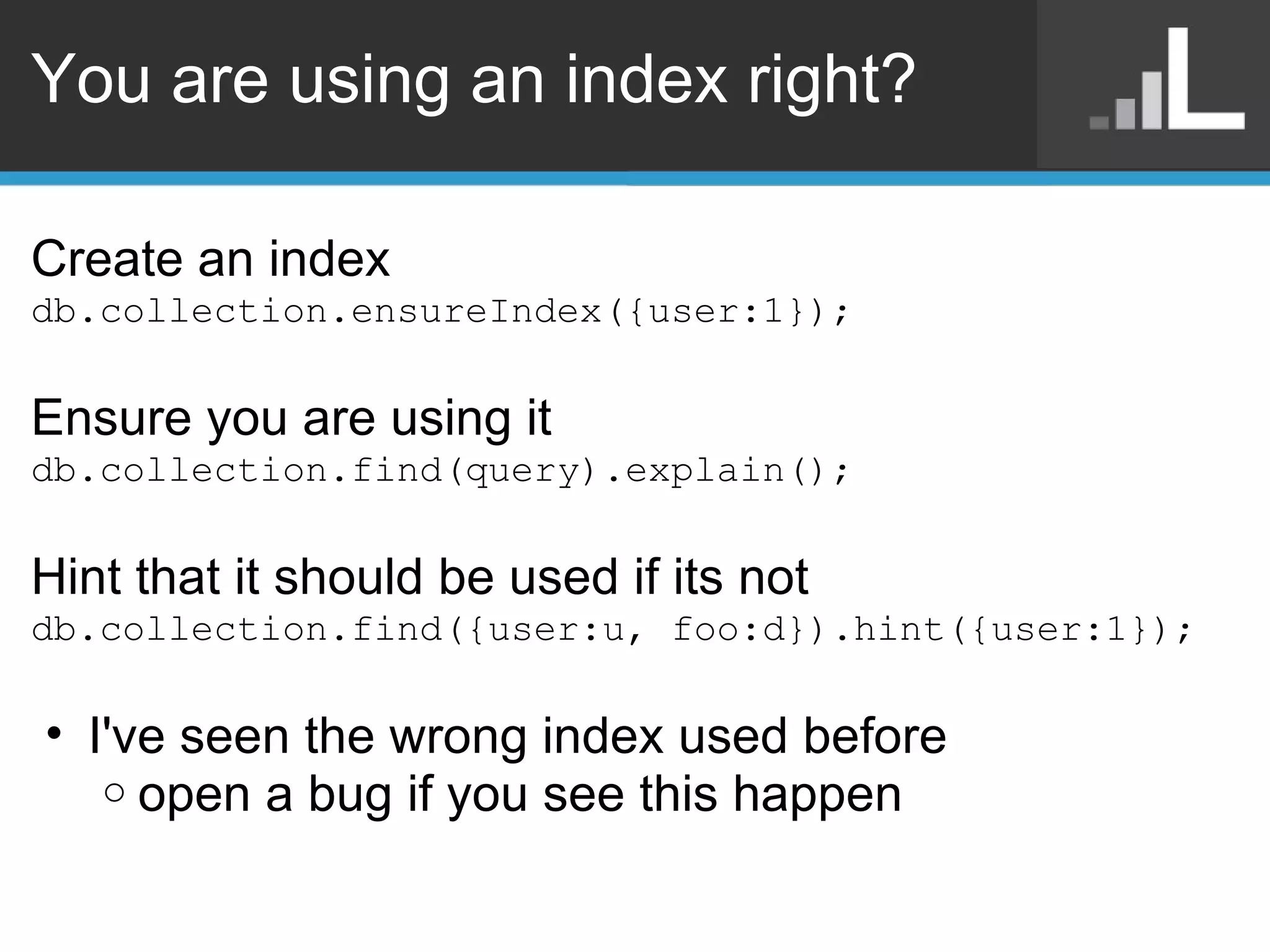

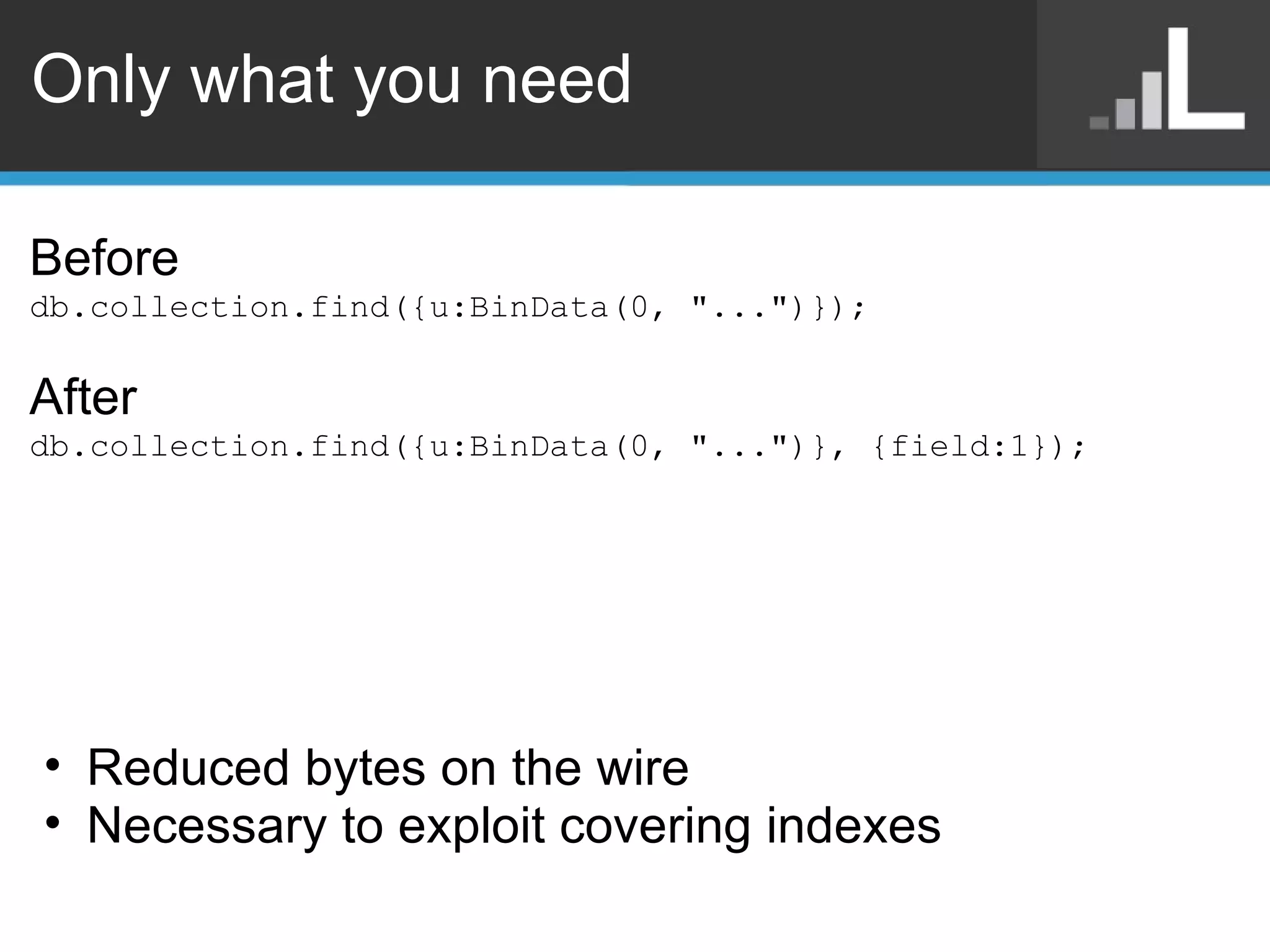

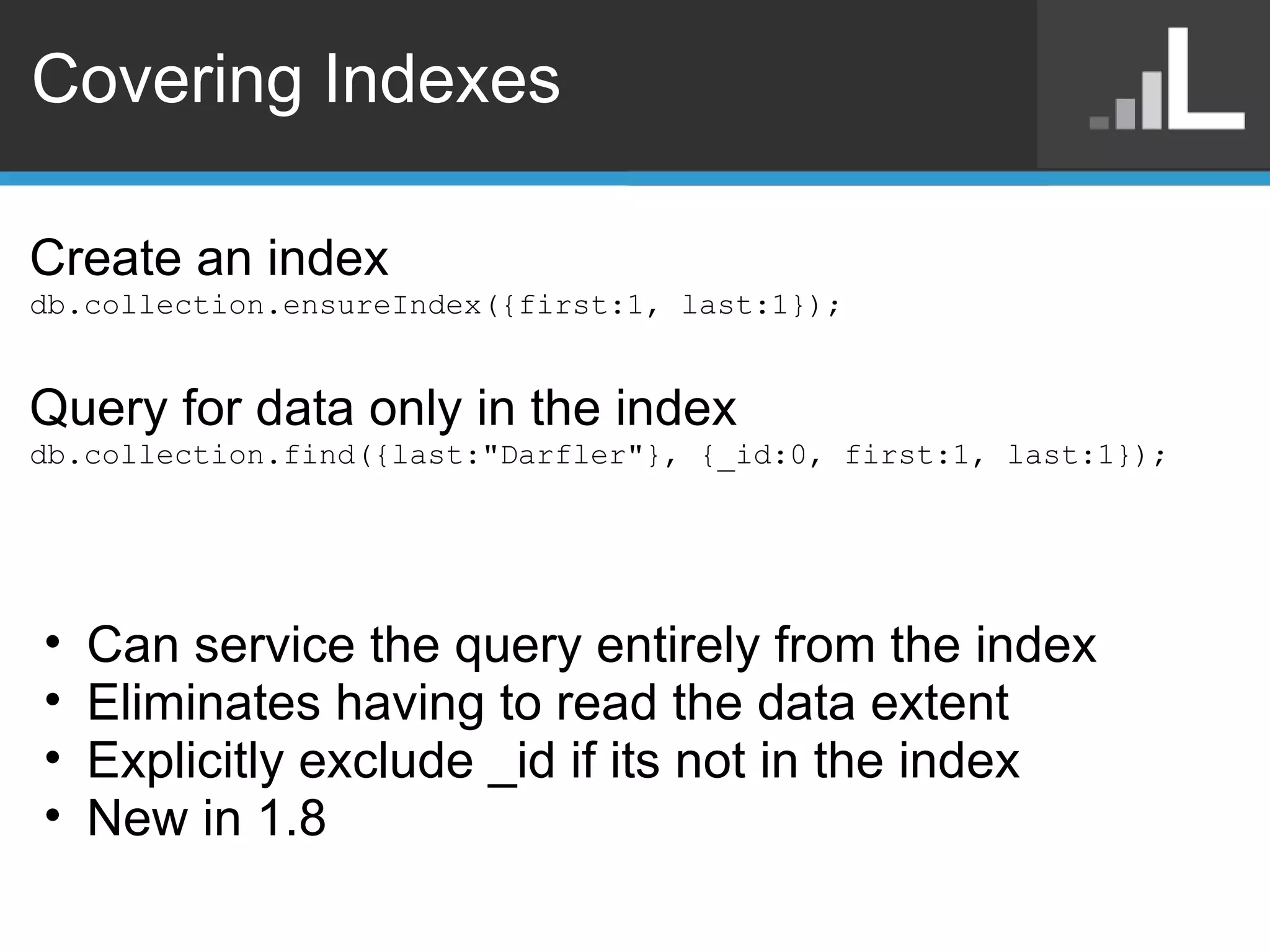

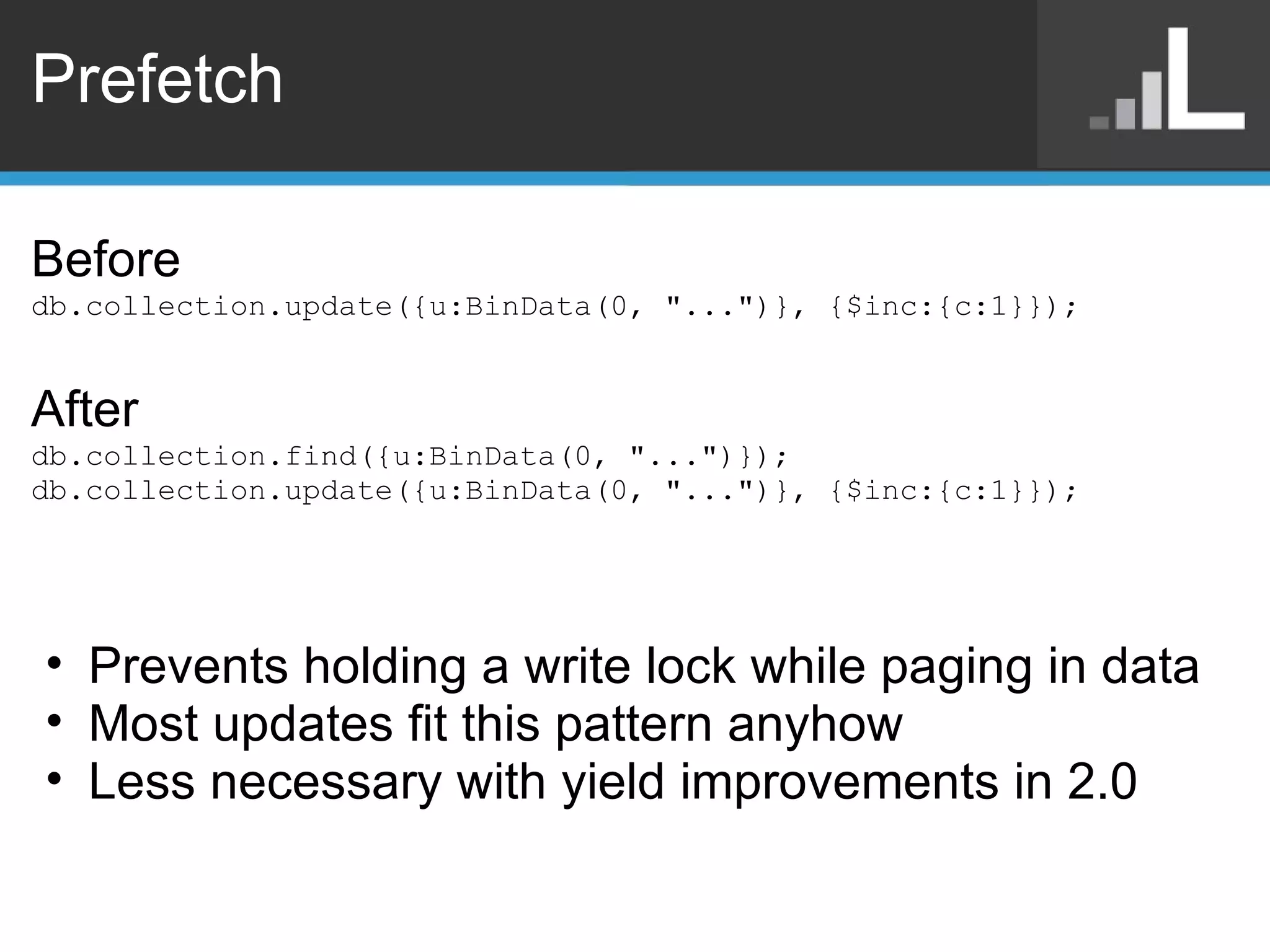

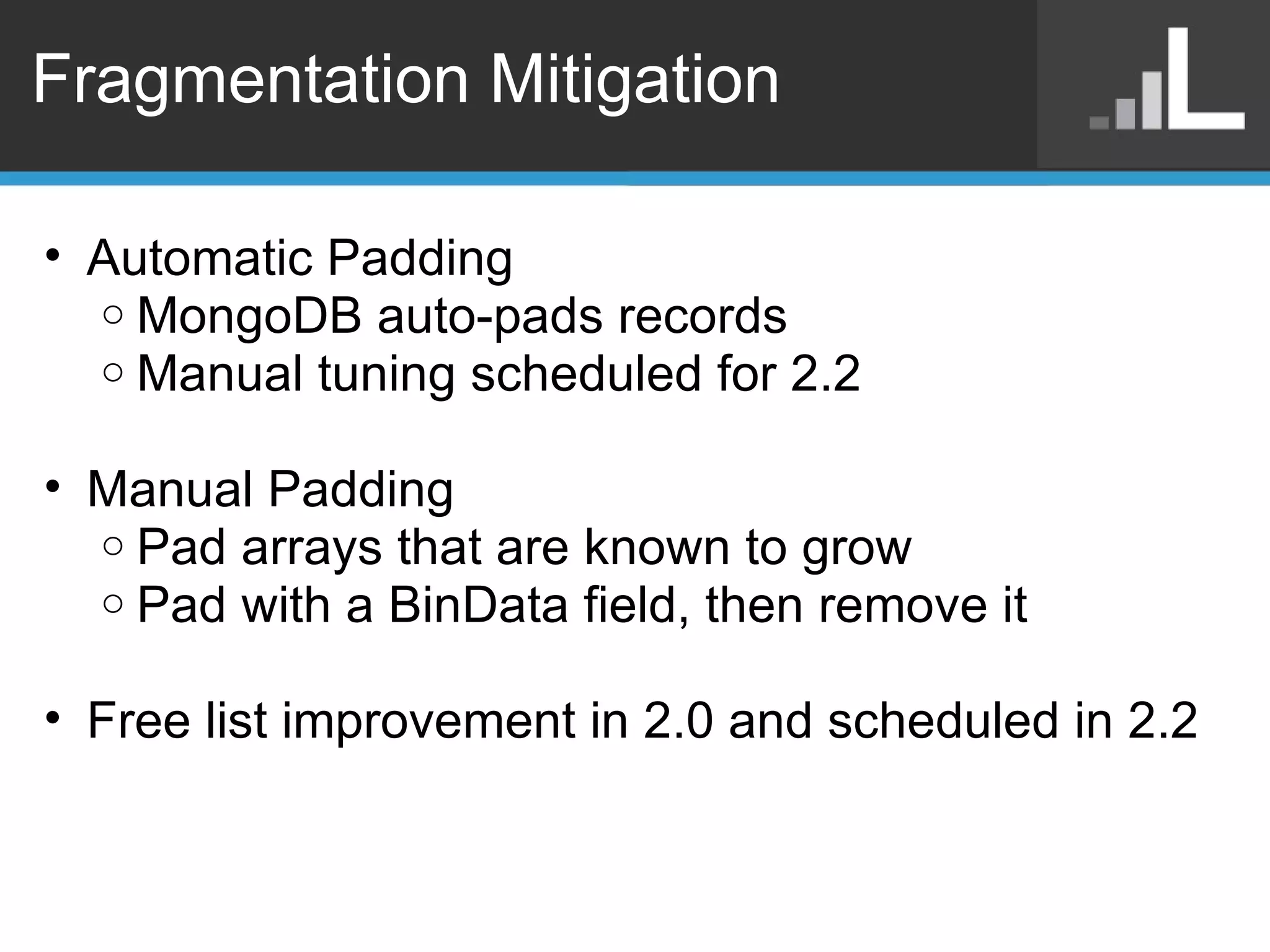

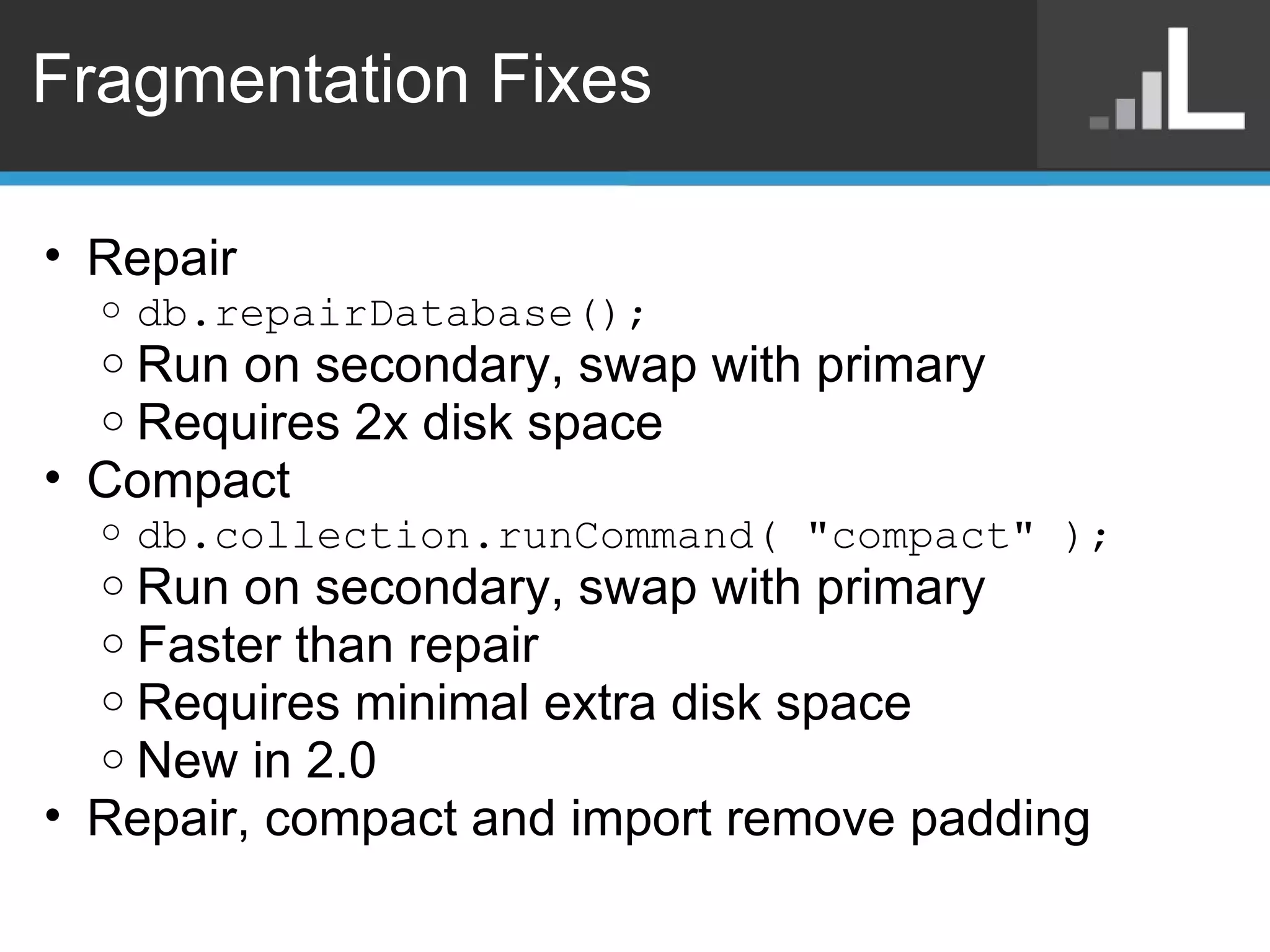

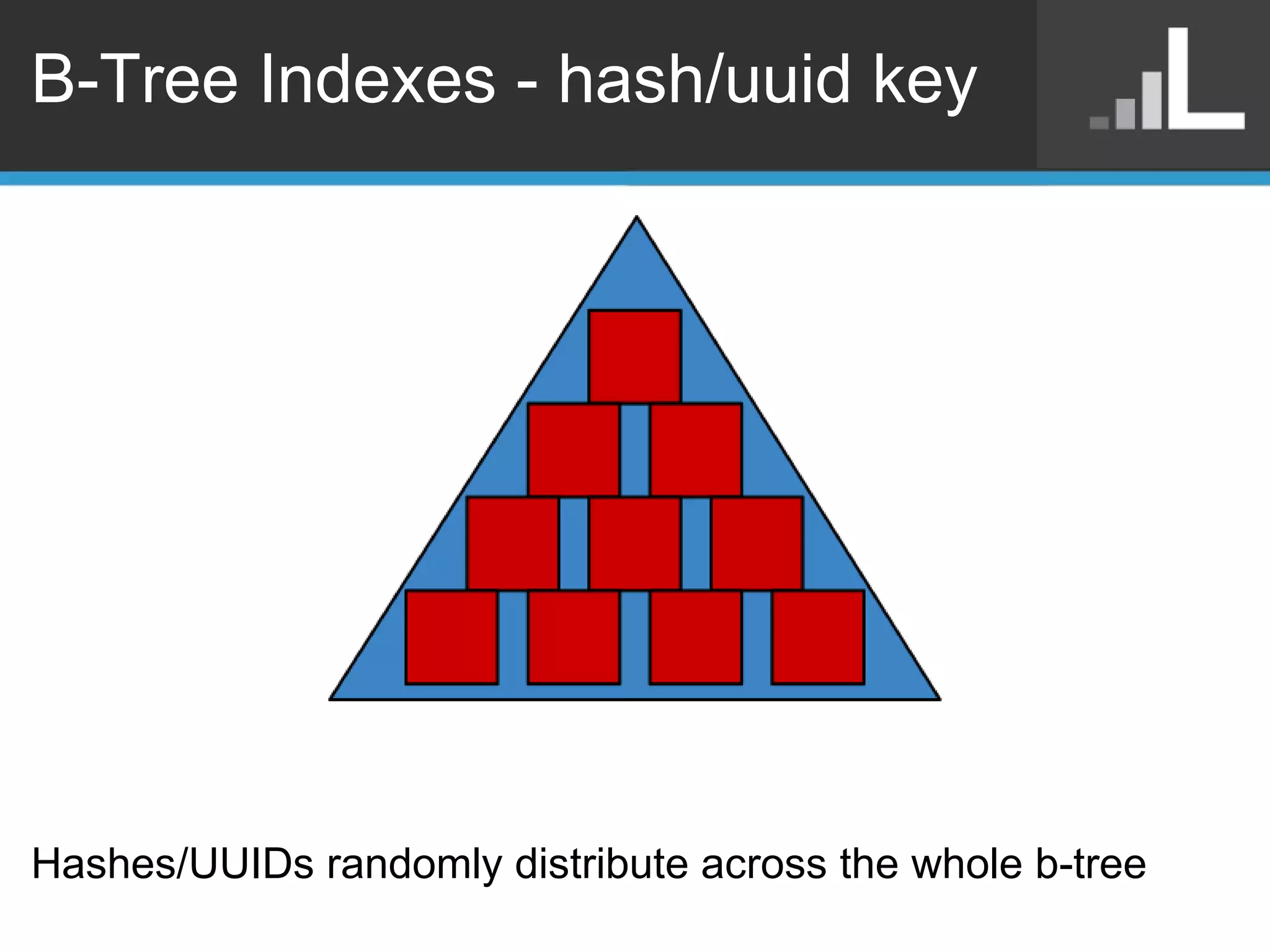

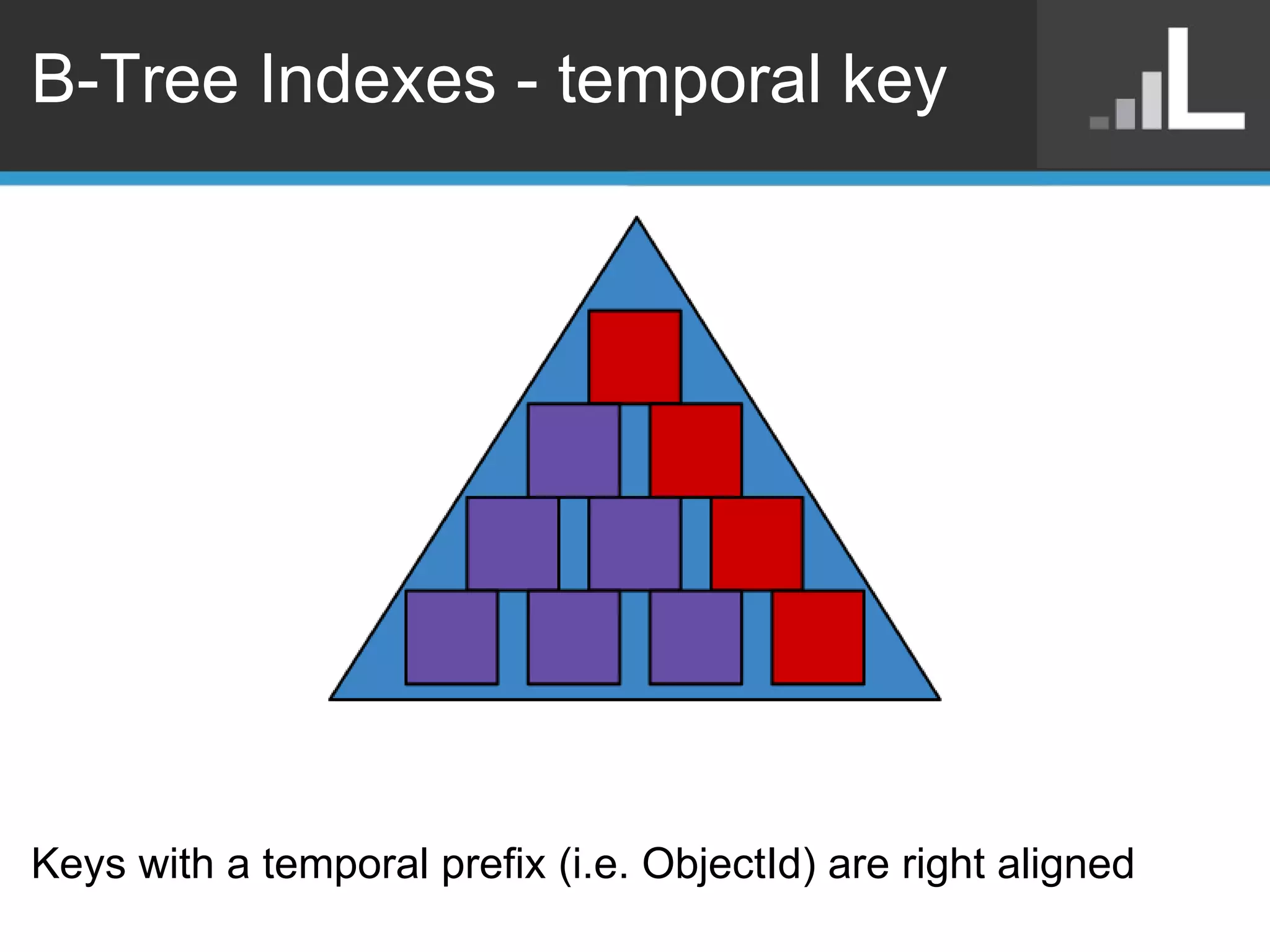

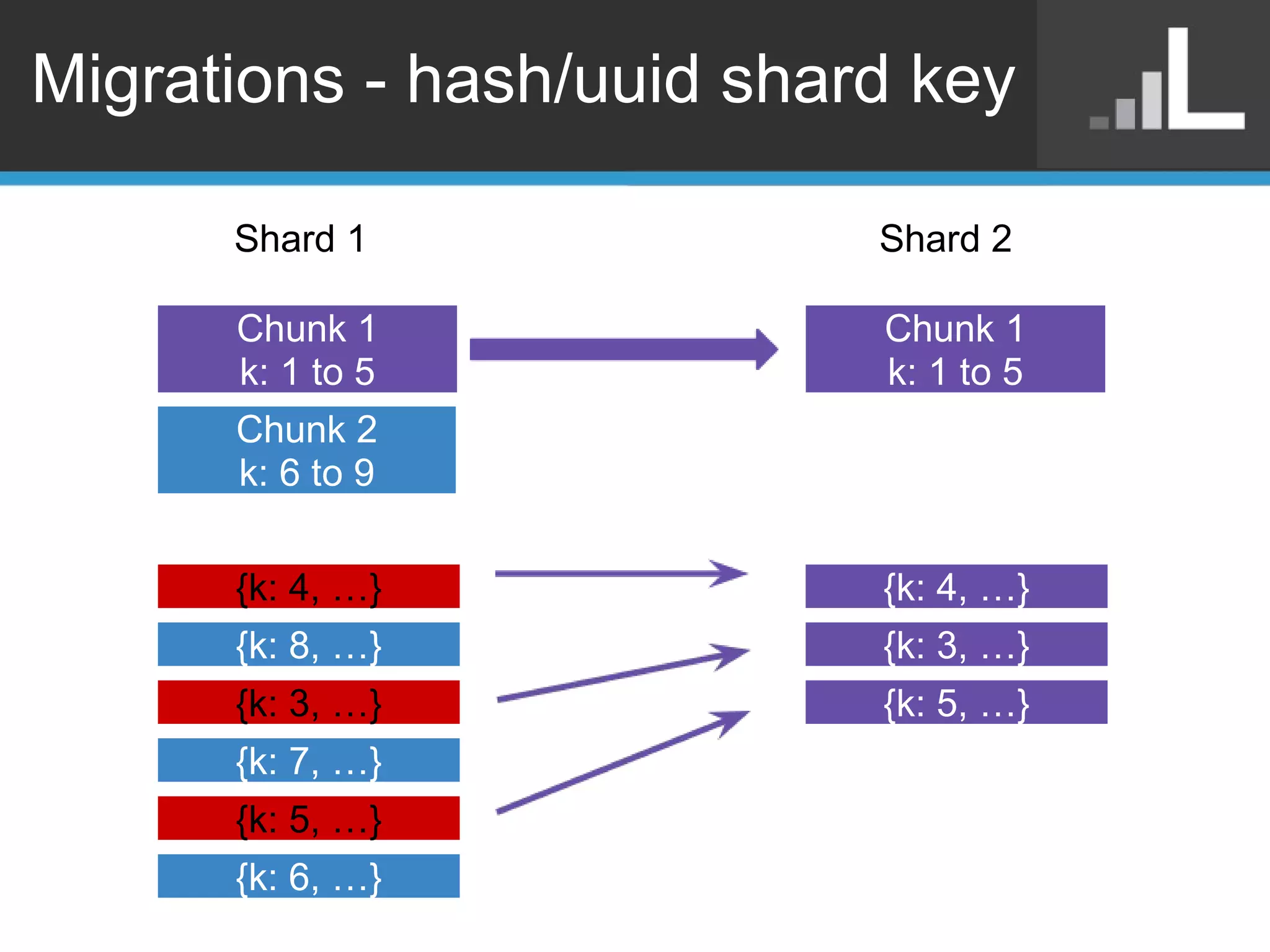

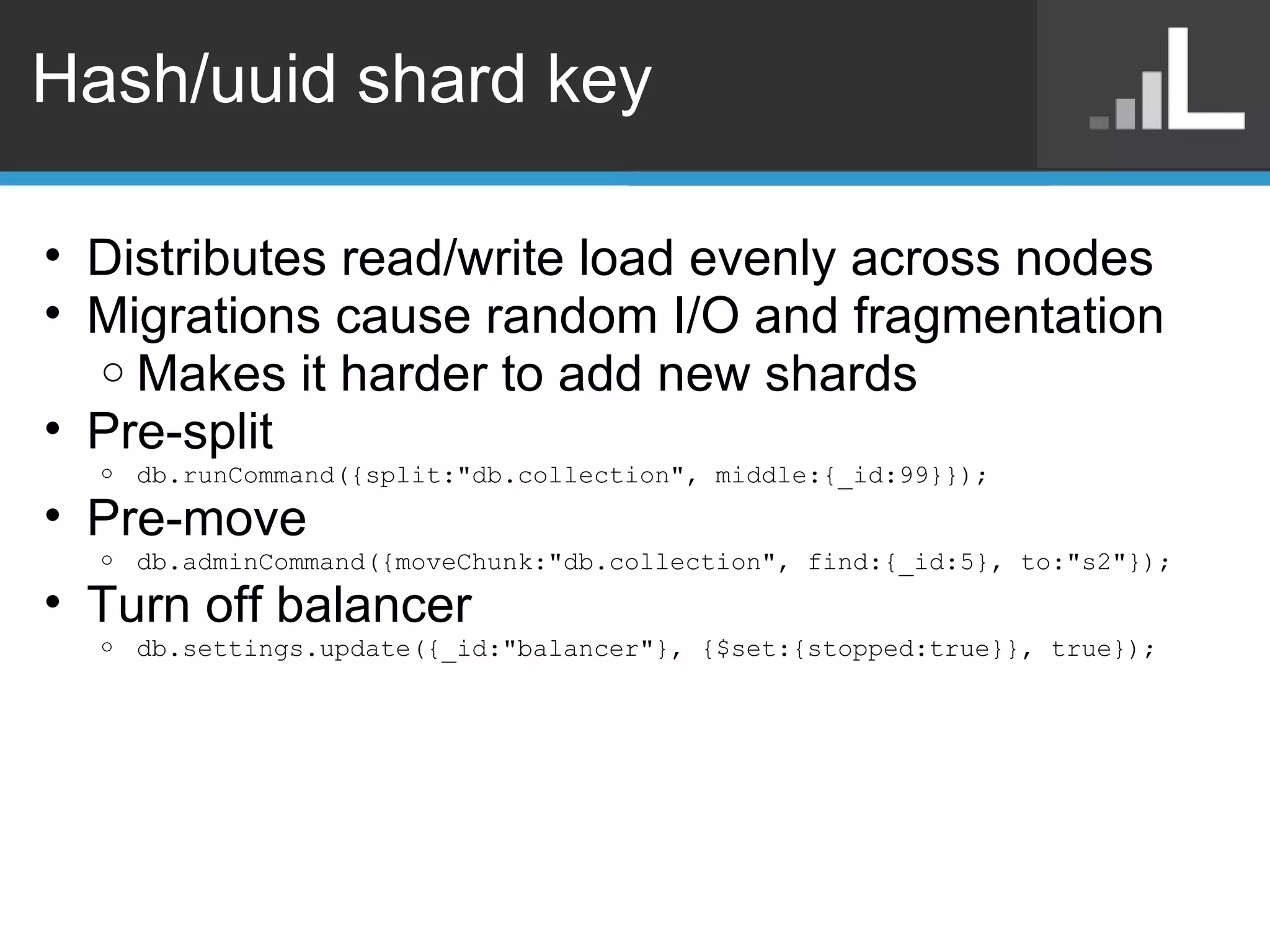

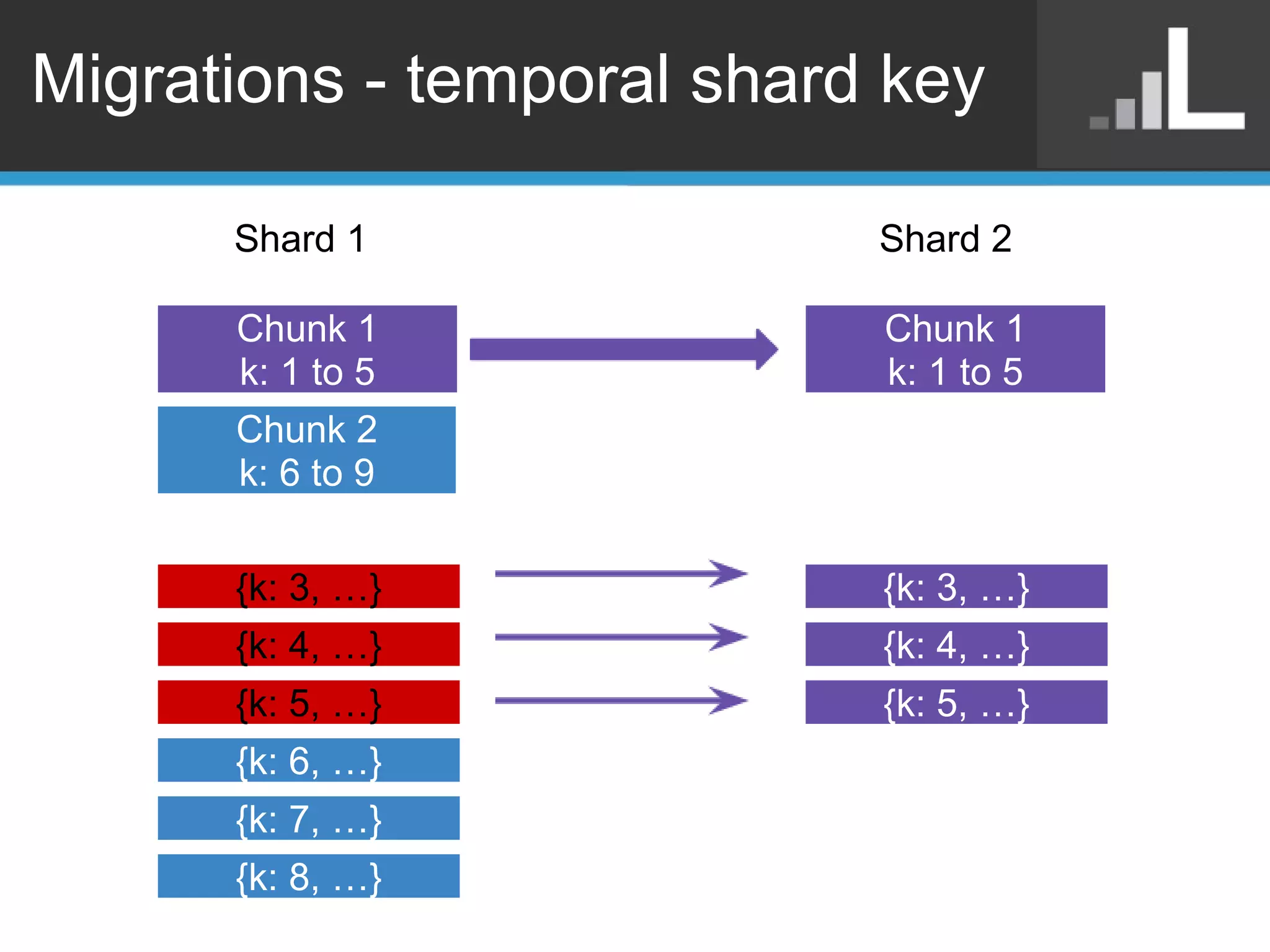

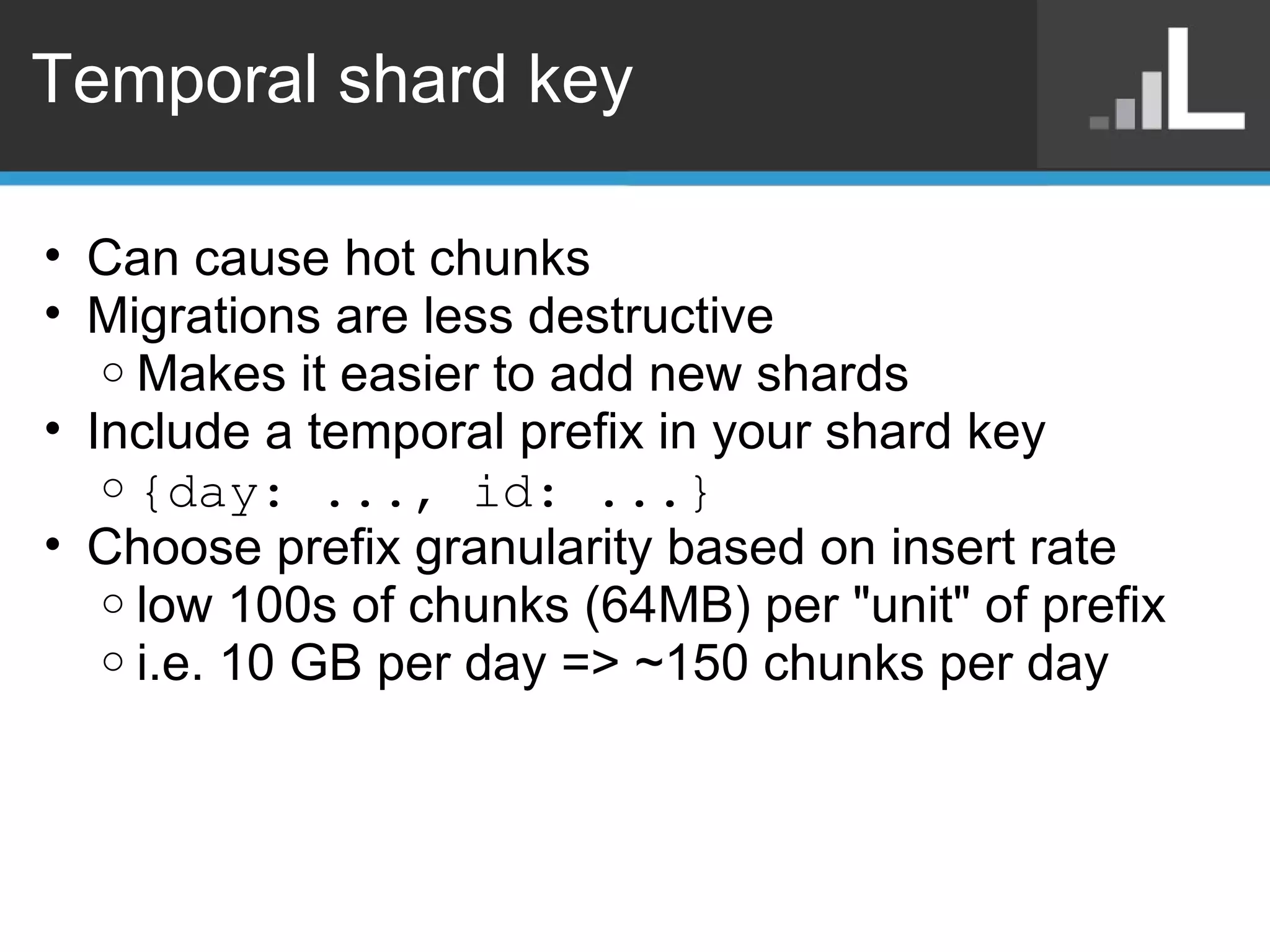

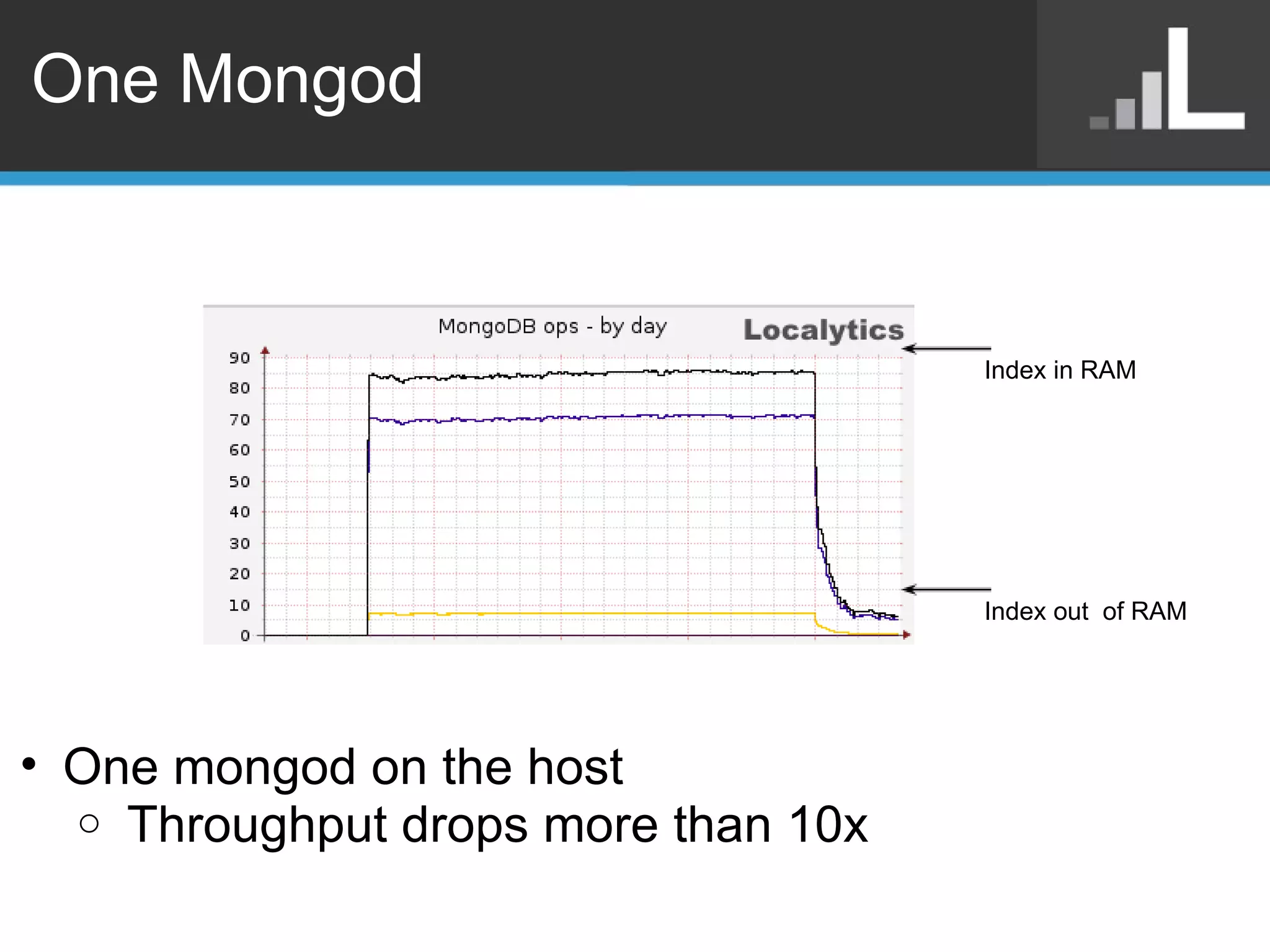

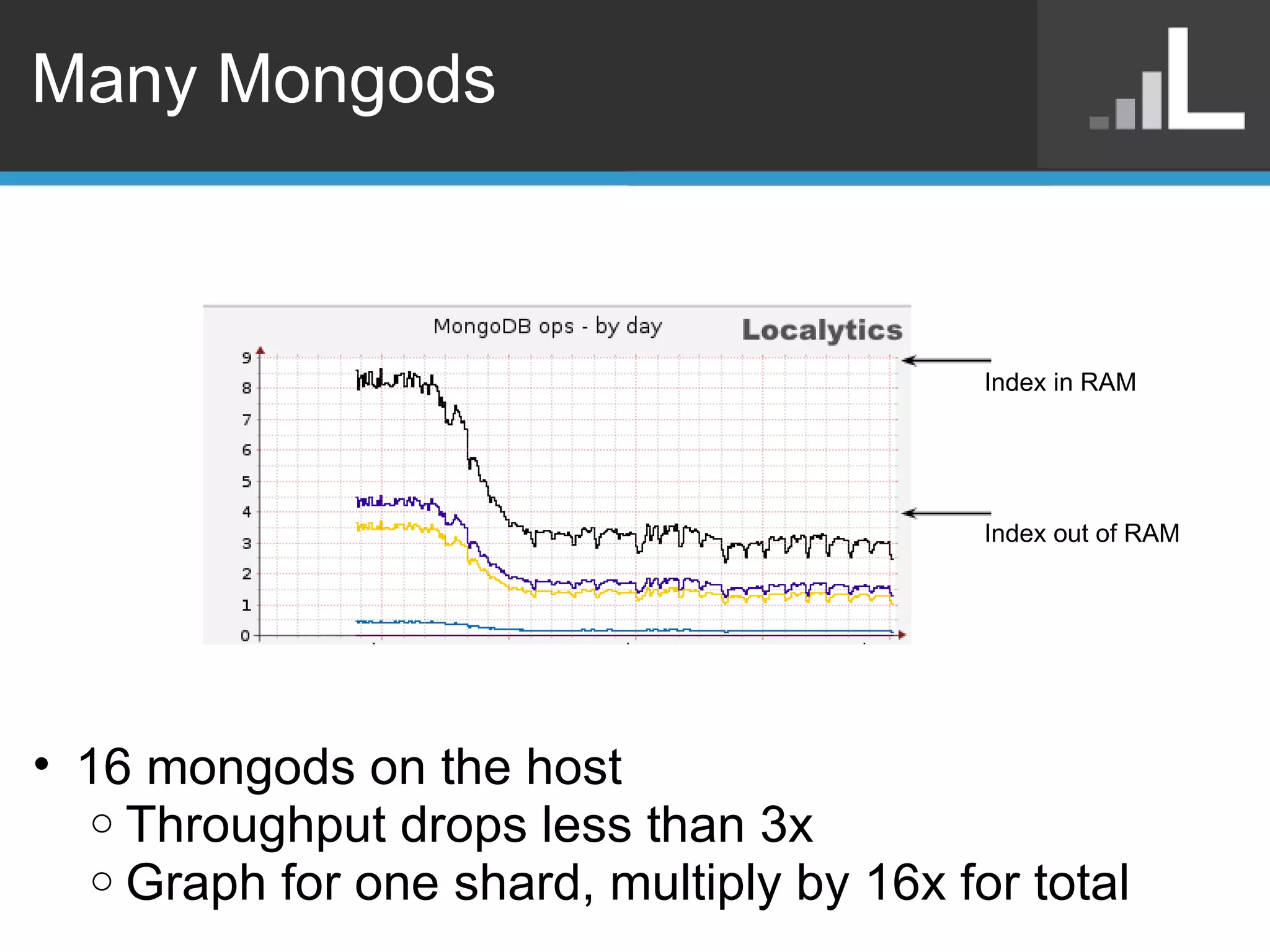

Benjamin Darfler presented lessons learned from optimizing MongoDB at Localytics to handle growing data volumes and query loads. Key optimizations included shortening field names (MongoDB stores every field name inside every document), storing IDs as binary data instead of strings, pre-aggregating data, creating covering indexes, and choosing a temporal field as the shard key. Hardware optimizations involved moving to larger EC2 instance types and RAIDing multiple large EBS volumes to reduce fragmentation during migrations. He also stressed testing every change thoroughly.
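The savings from shorter field names and binary IDs can be estimated from the BSON encoding rules. The sketch below is a simplified, stdlib-only size calculator. It is not the official `bson` library and handles only strings, integers, floats, booleans, raw bytes, and nested documents. The field names (`application_id`, `a`, and so on) and the UUID value are hypothetical illustrations, not taken from the talk.

```python
import uuid


def _cstring_len(s: str) -> int:
    # BSON cstring: UTF-8 bytes followed by a single 0x00 terminator
    return len(s.encode("utf-8")) + 1


def _value_len(value) -> int:
    """Encoded payload size of one BSON value (excludes type byte and key)."""
    if isinstance(value, bool):  # check bool first: bool is a subclass of int
        return 1
    if isinstance(value, int):
        # int32 when the value fits, otherwise int64 (as pymongo chooses)
        return 4 if -2**31 <= value < 2**31 else 8
    if isinstance(value, float):
        return 8  # IEEE 754 double
    if isinstance(value, str):
        # int32 length prefix + UTF-8 bytes + 0x00 terminator
        return 4 + len(value.encode("utf-8")) + 1
    if isinstance(value, (bytes, bytearray)):
        # binary: int32 length + 1-byte subtype + raw bytes
        return 4 + 1 + len(value)
    if isinstance(value, dict):
        return bson_size(value)  # embedded document
    raise TypeError(f"unsupported type: {type(value).__name__}")


def bson_size(doc: dict) -> int:
    """Approximate BSON-encoded size of a simple document, in bytes."""
    size = 4 + 1  # int32 total length prefix + trailing 0x00
    for key, value in doc.items():
        # each element: 1 type byte + key as cstring + encoded value
        size += 1 + _cstring_len(key) + _value_len(value)
    return size


# Hypothetical event document, stored verbosely and compactly.
app_id = uuid.UUID("12345678-1234-5678-1234-567812345678")
verbose = {"application_id": str(app_id), "event_name": "session_start", "count": 1}
compact = {"a": app_id.bytes, "e": "session_start", "c": 1}

print(bson_size(verbose), bson_size(compact))  # → 103 57
```

Because every document repeats its field names, a per-document saving like this one is multiplied across the whole collection. A UUID stored as 16 bytes of binary instead of a 36-character string also makes each entry smaller in any index built on that field.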