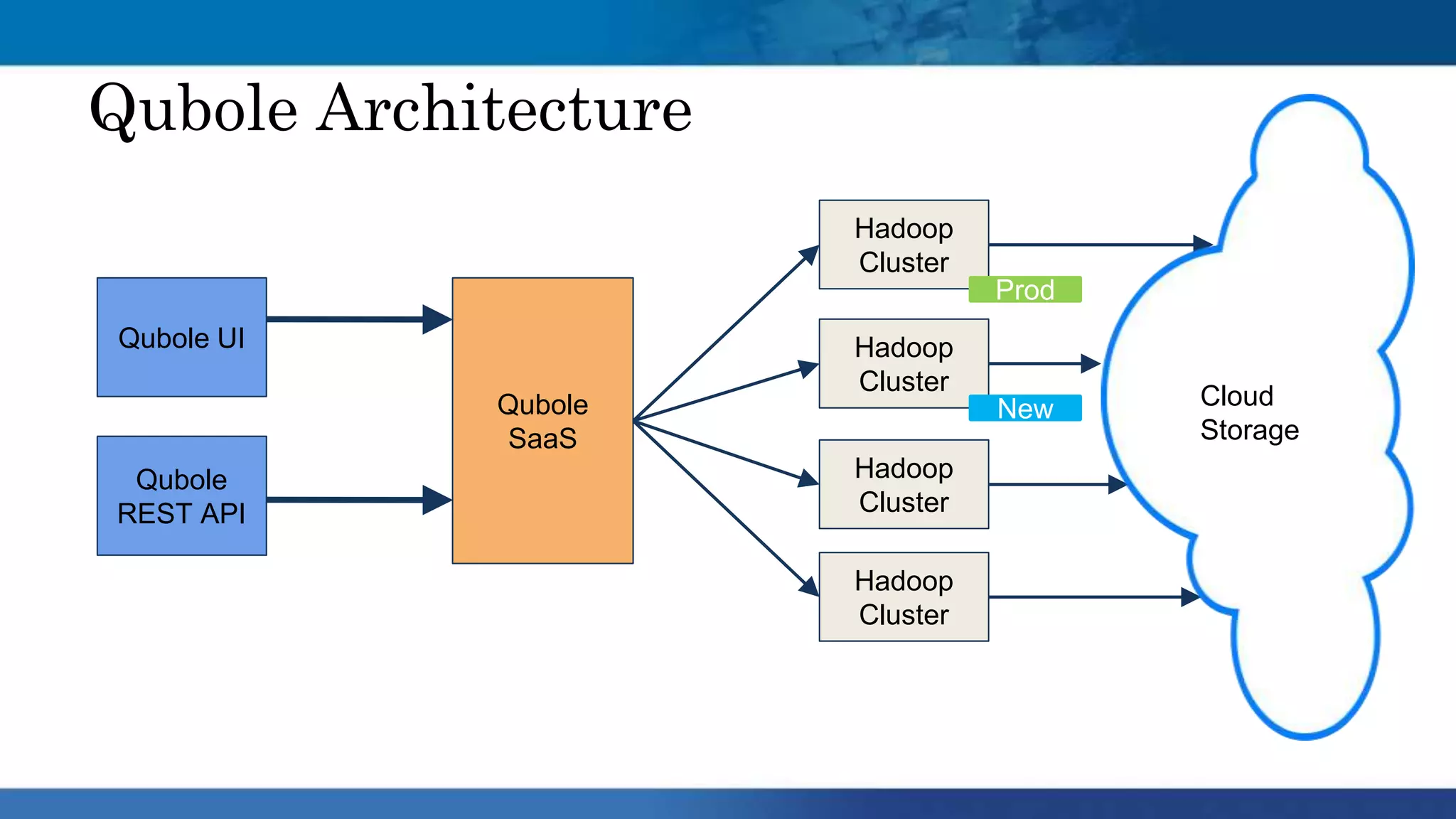

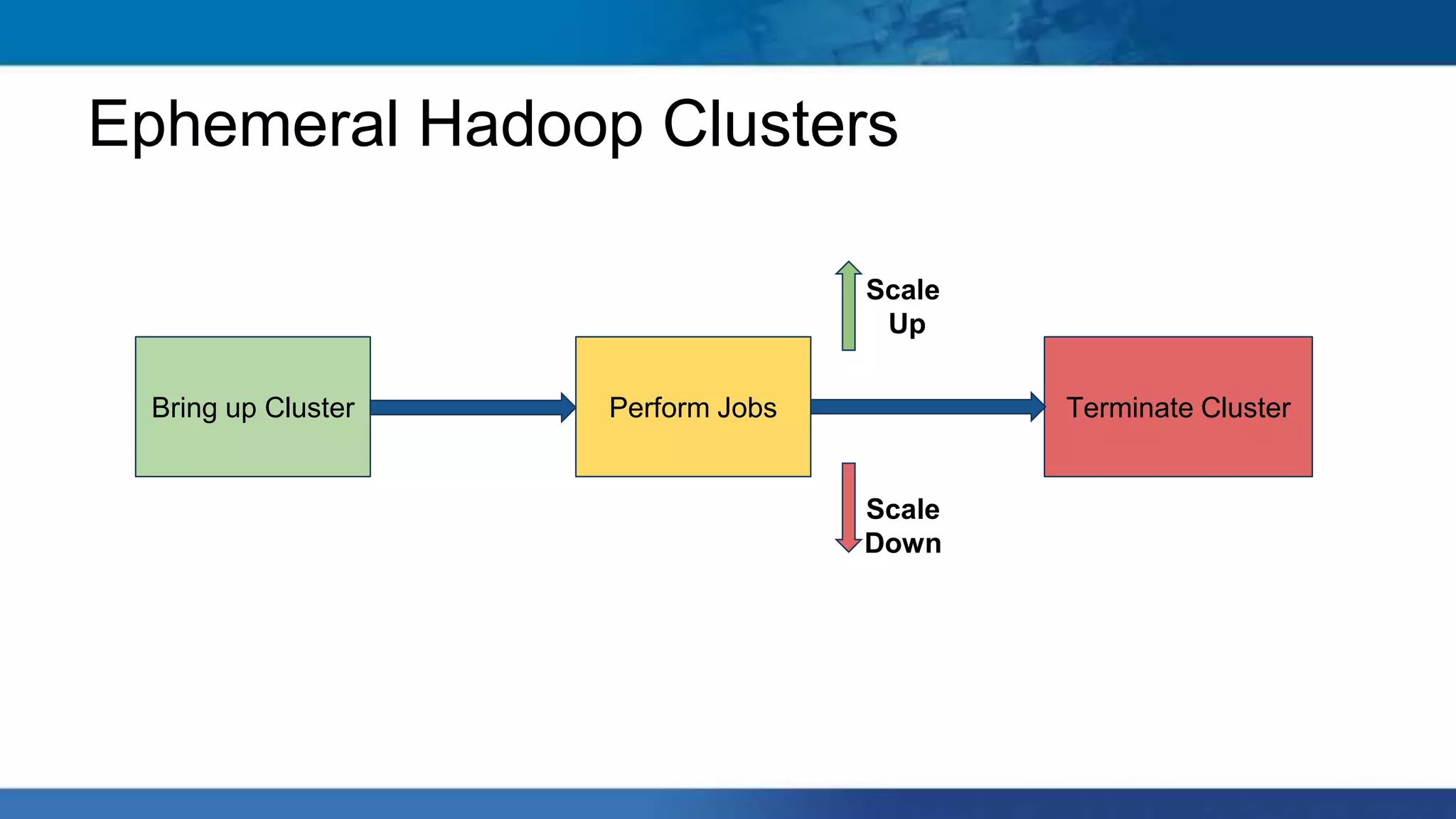

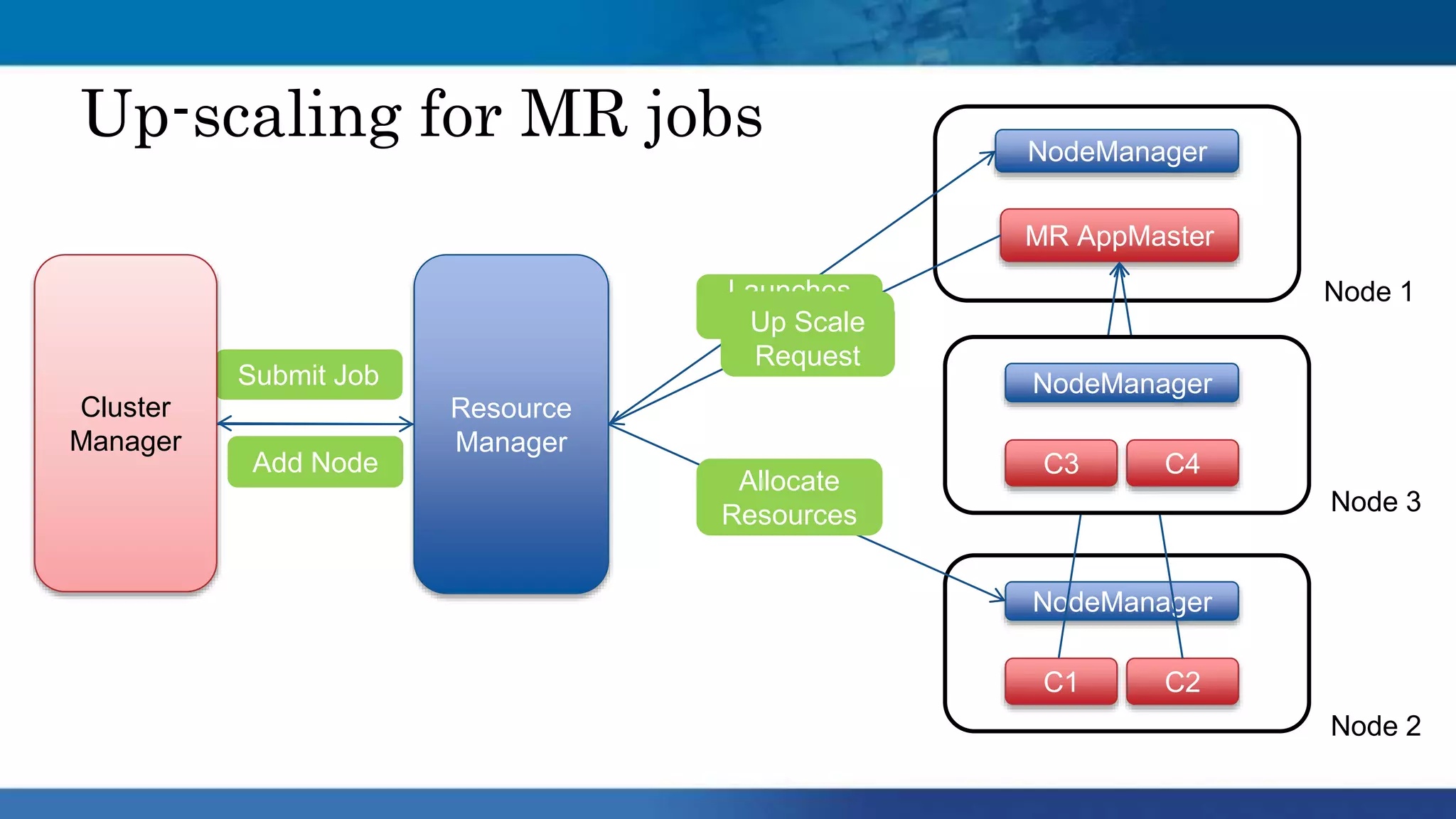

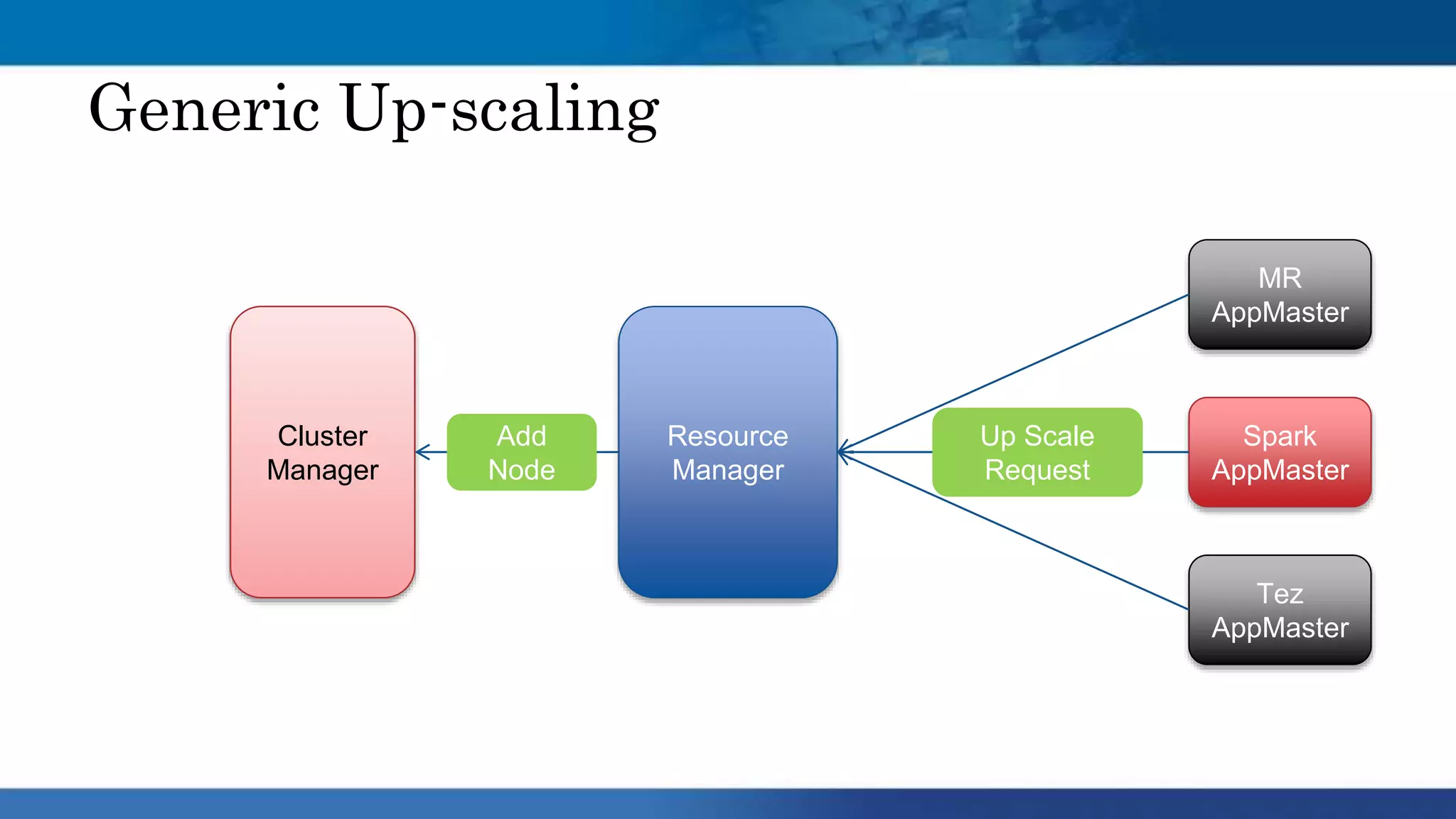

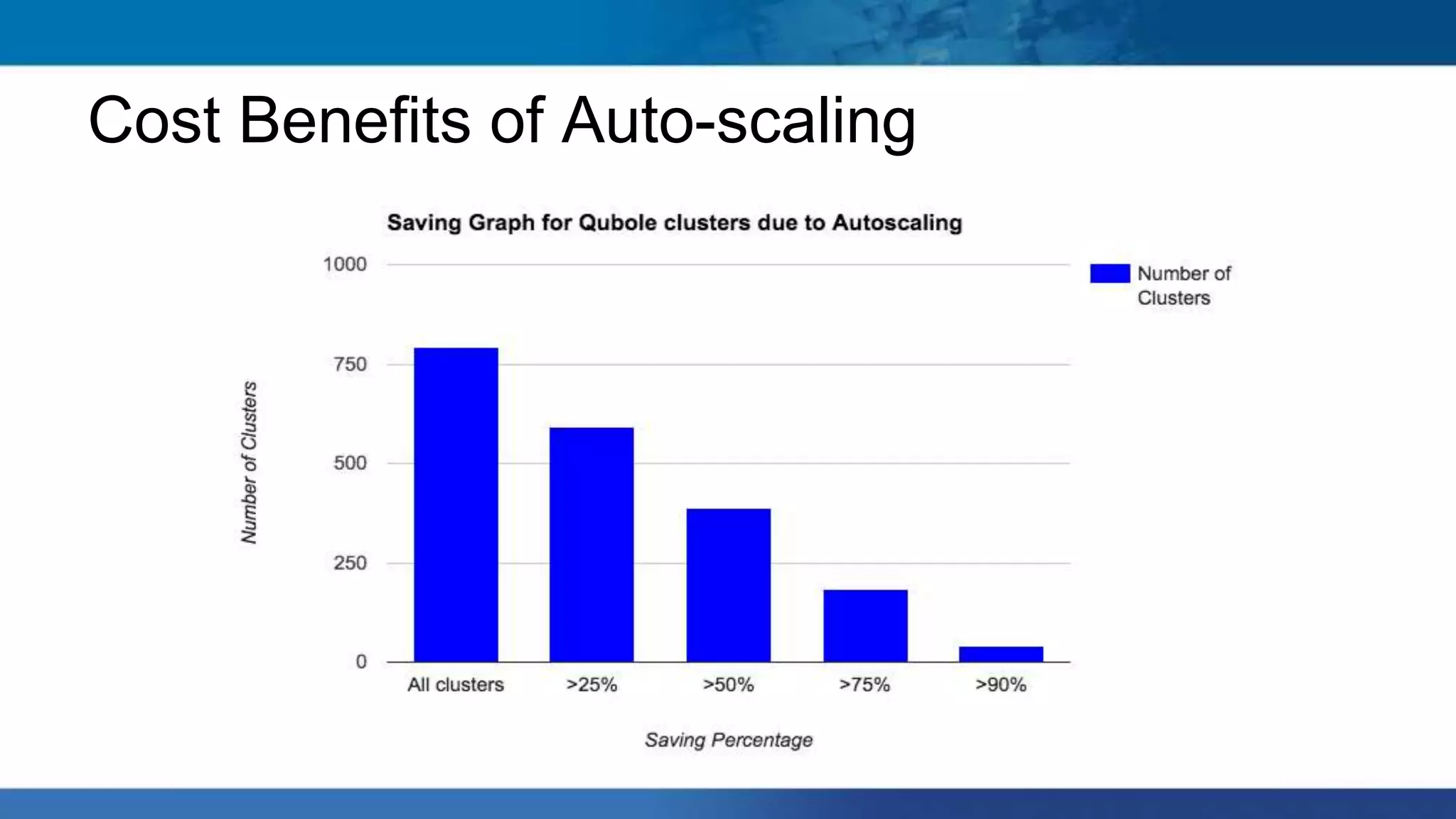

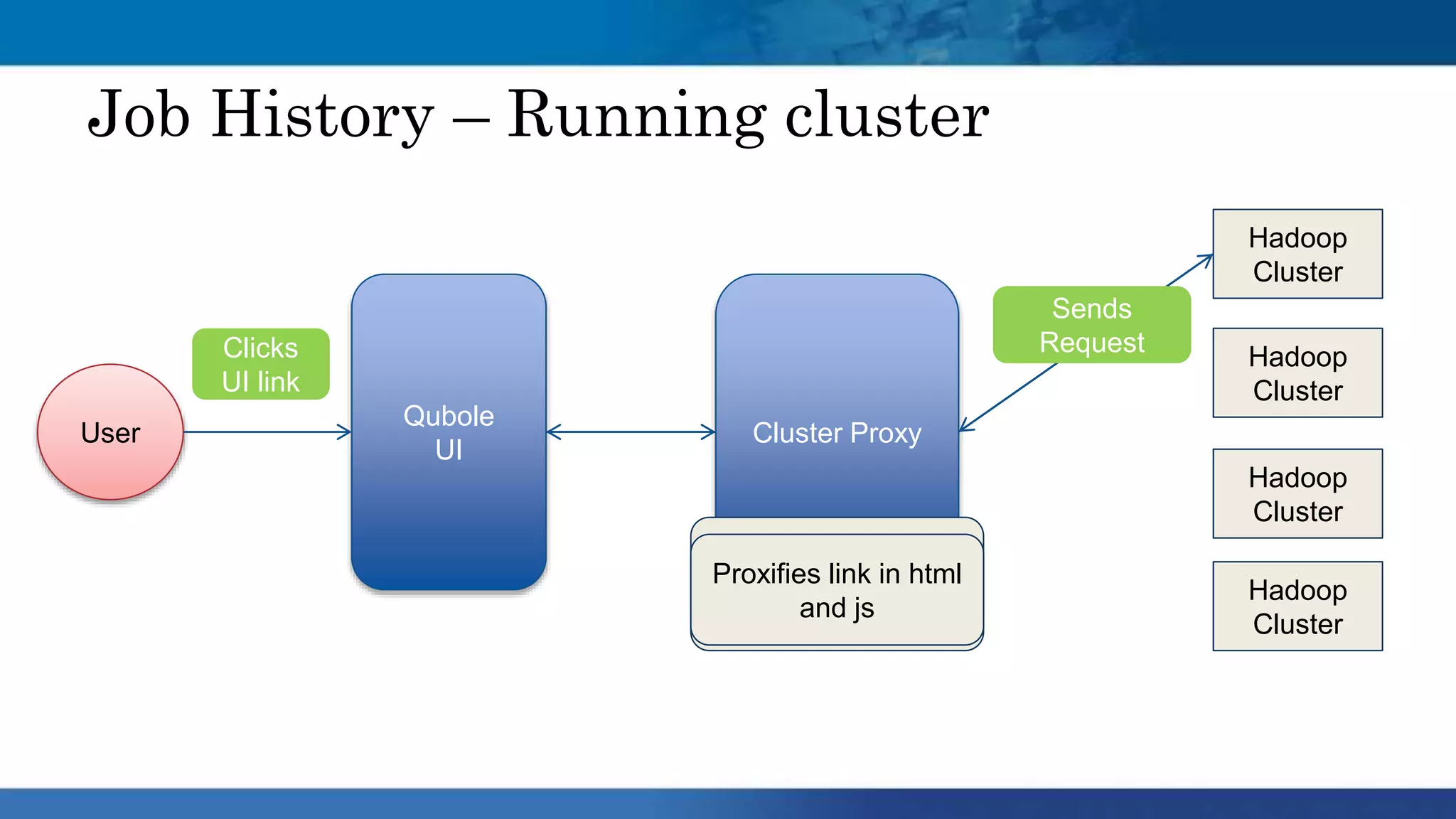

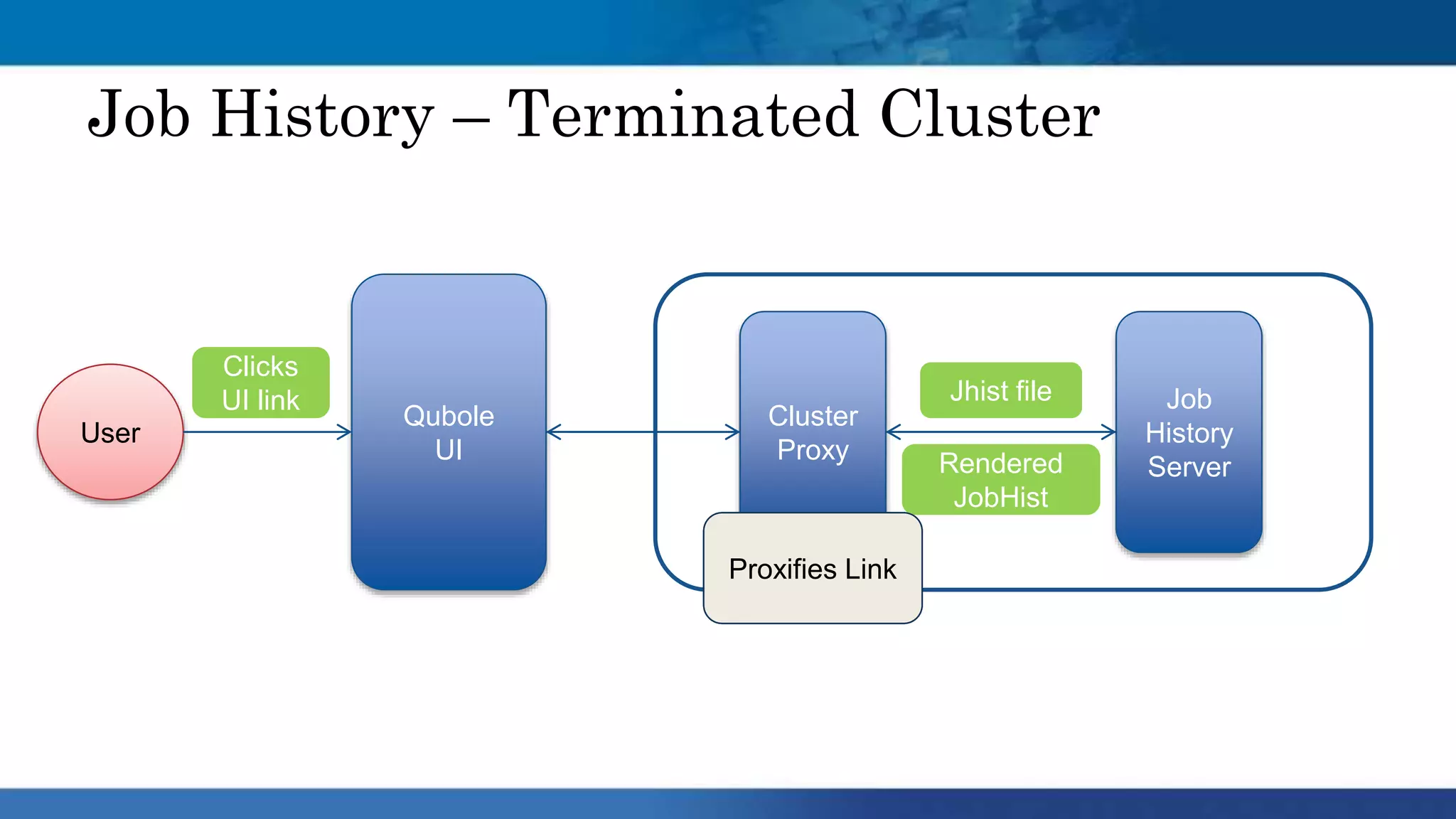

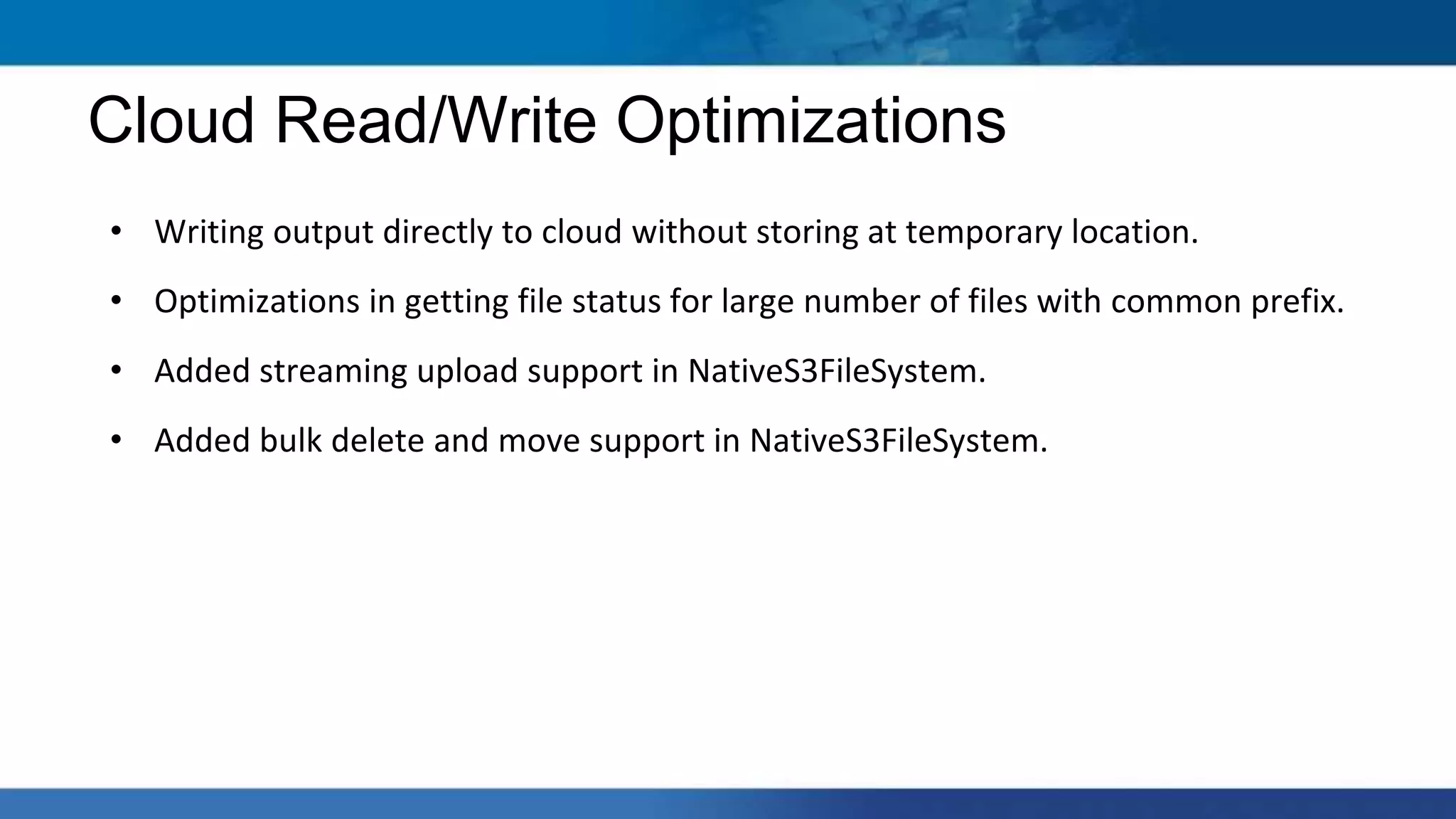

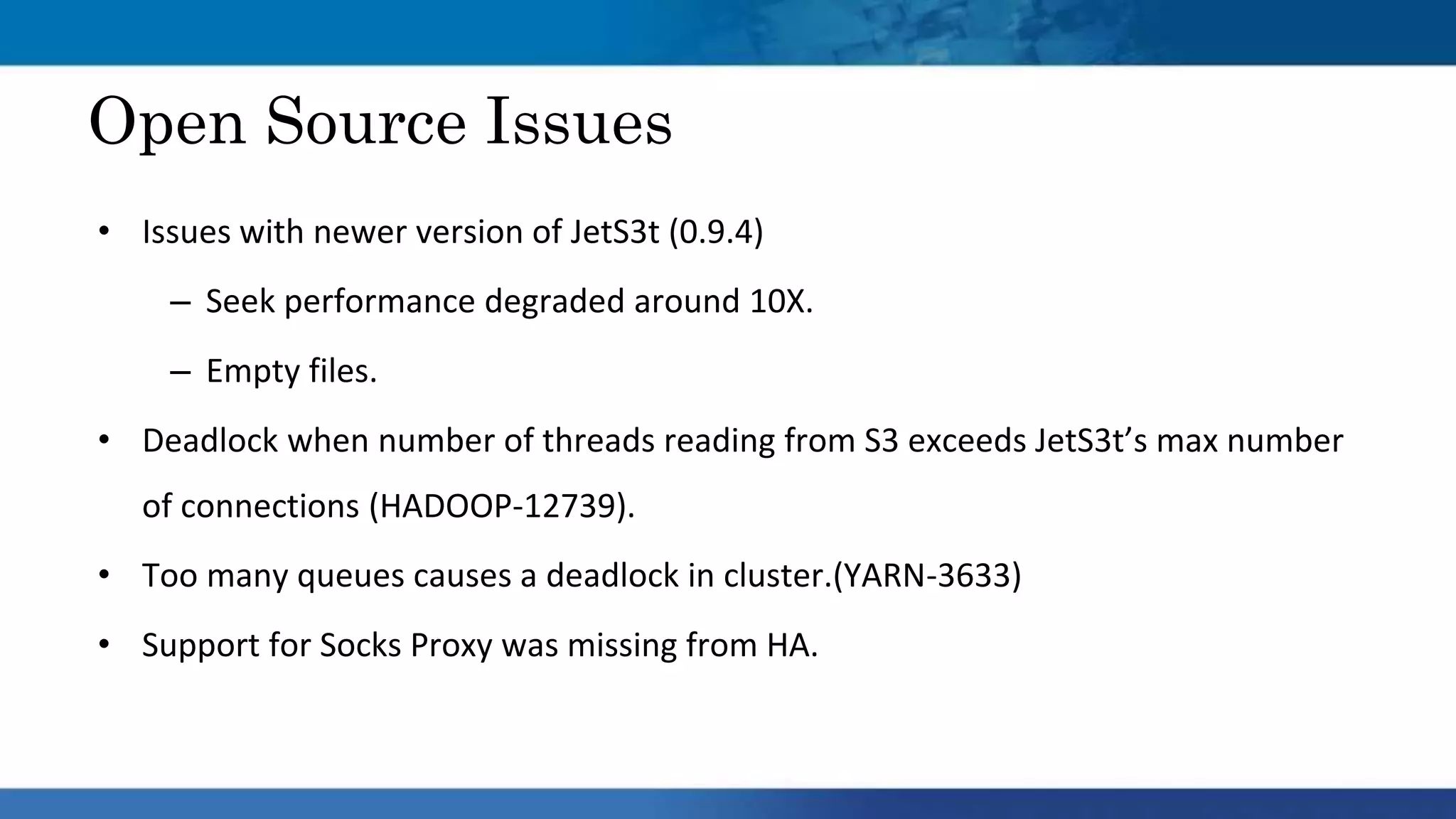

Qubole operates Hadoop clusters on an ephemeral basis in the cloud, processing over 300 petabytes of data per month across more than 100 customers. It addresses the challenges of ephemeral clusters by auto-scaling resources using YARN, optimizing performance for cloud storage, and storing job history remotely. It also leverages volatile, low-cost nodes through policies that keep data replicated even when those nodes fail.
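
The summary does not spell out how the replication policies work, so the following is only a minimal sketch of one plausible approach, not Qubole's actual implementation. It assumes that a policy of this kind keeps at least one replica of every block on a stable (non-volatile) node, so that losing all volatile nodes at once cannot lose data. The names `Node`, `place_replicas`, `replication`, and `min_stable` are hypothetical and chosen for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Node:
    name: str
    volatile: bool  # True for low-cost nodes that may be reclaimed at any time


def place_replicas(nodes: List[Node], replication: int = 3, min_stable: int = 1) -> List[Node]:
    """Pick `replication` distinct nodes, at least `min_stable` of them stable.

    Hypothetical policy for illustration only: it is an assumption, not a
    description of any real Hadoop or Qubole placement algorithm.
    """
    if min_stable > replication:
        raise ValueError("min_stable cannot exceed the replication factor")
    if len(nodes) < replication:
        raise ValueError("not enough nodes for the requested replication factor")

    stable = [n for n in nodes if not n.volatile]
    volatile = [n for n in nodes if n.volatile]
    if len(stable) < min_stable:
        raise ValueError("not enough stable nodes to satisfy the policy")

    # Reserve the required number of stable nodes first.
    chosen = stable[:min_stable]
    # Fill the remaining slots with volatile nodes first (cheaper), then stable ones.
    remaining = volatile + stable[min_stable:]
    chosen += remaining[: replication - len(chosen)]
    return chosen


if __name__ == "__main__":
    cluster = [
        Node("stable-1", volatile=False),
        Node("spot-1", volatile=True),
        Node("spot-2", volatile=True),
        Node("spot-3", volatile=True),
    ]
    for node in place_replicas(cluster):
        print(node.name, "volatile" if node.volatile else "stable")
```

With this placement, even if every volatile node disappears at the same moment, each block still has a surviving copy on a stable node from which it can be re-replicated.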