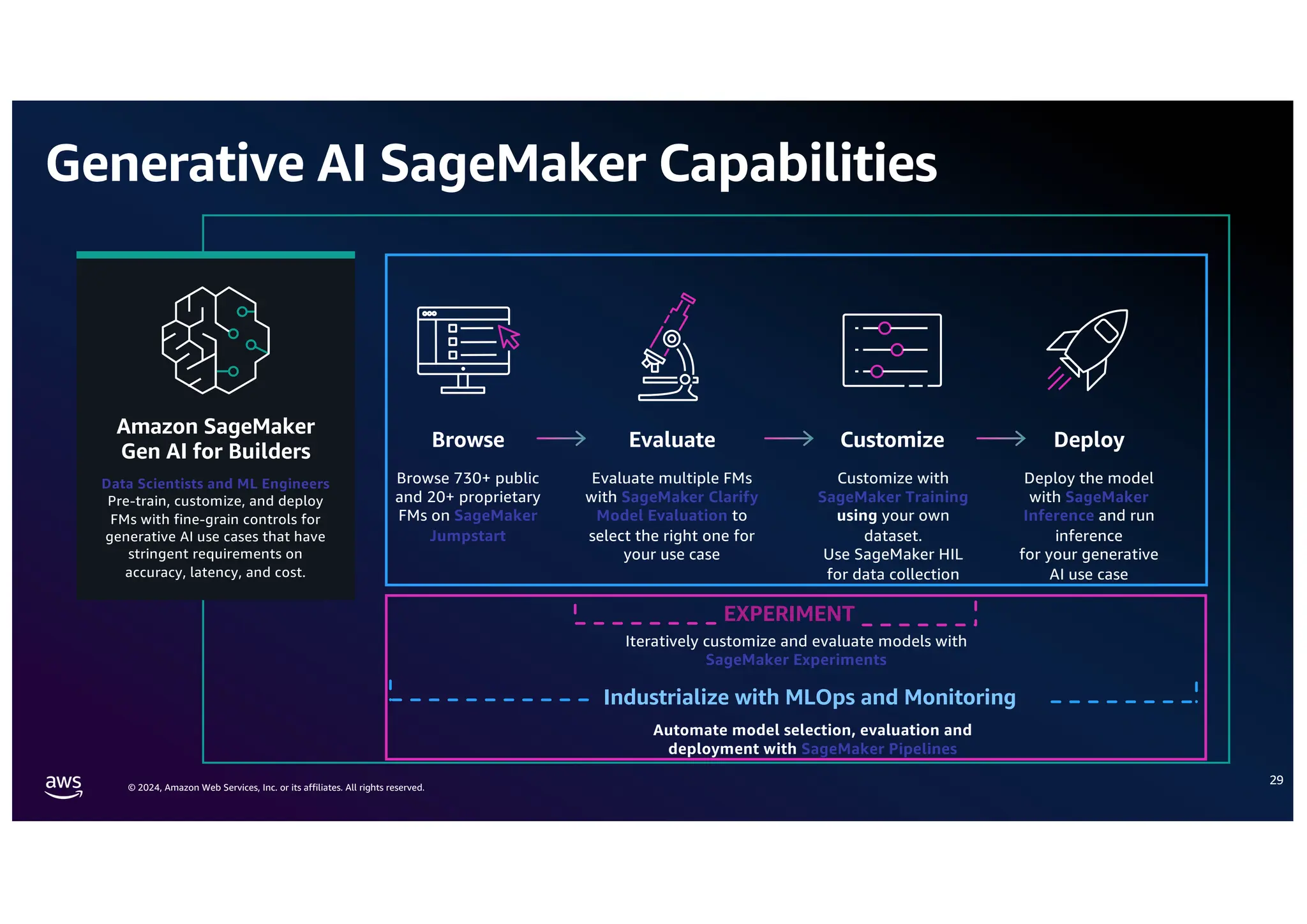

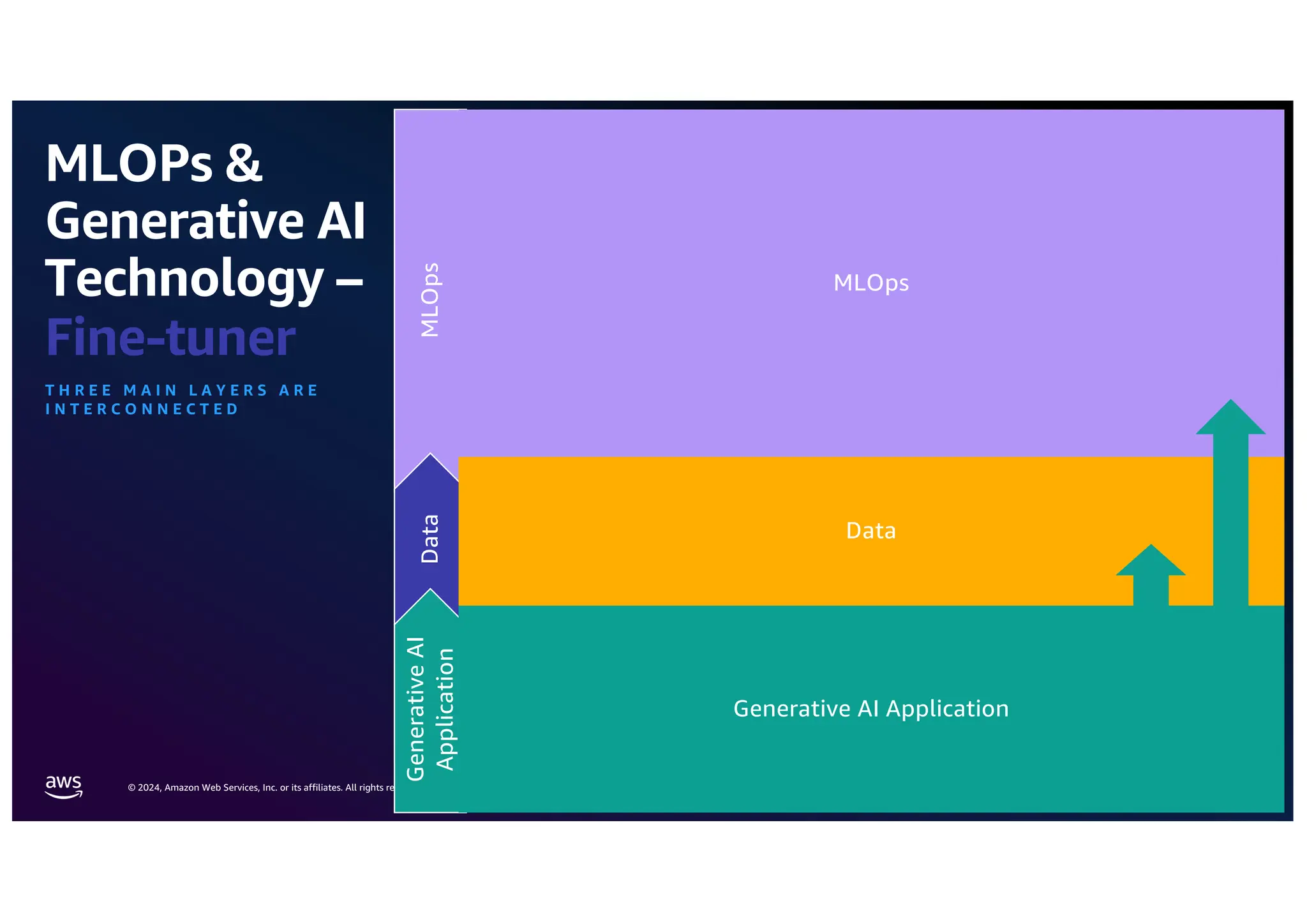

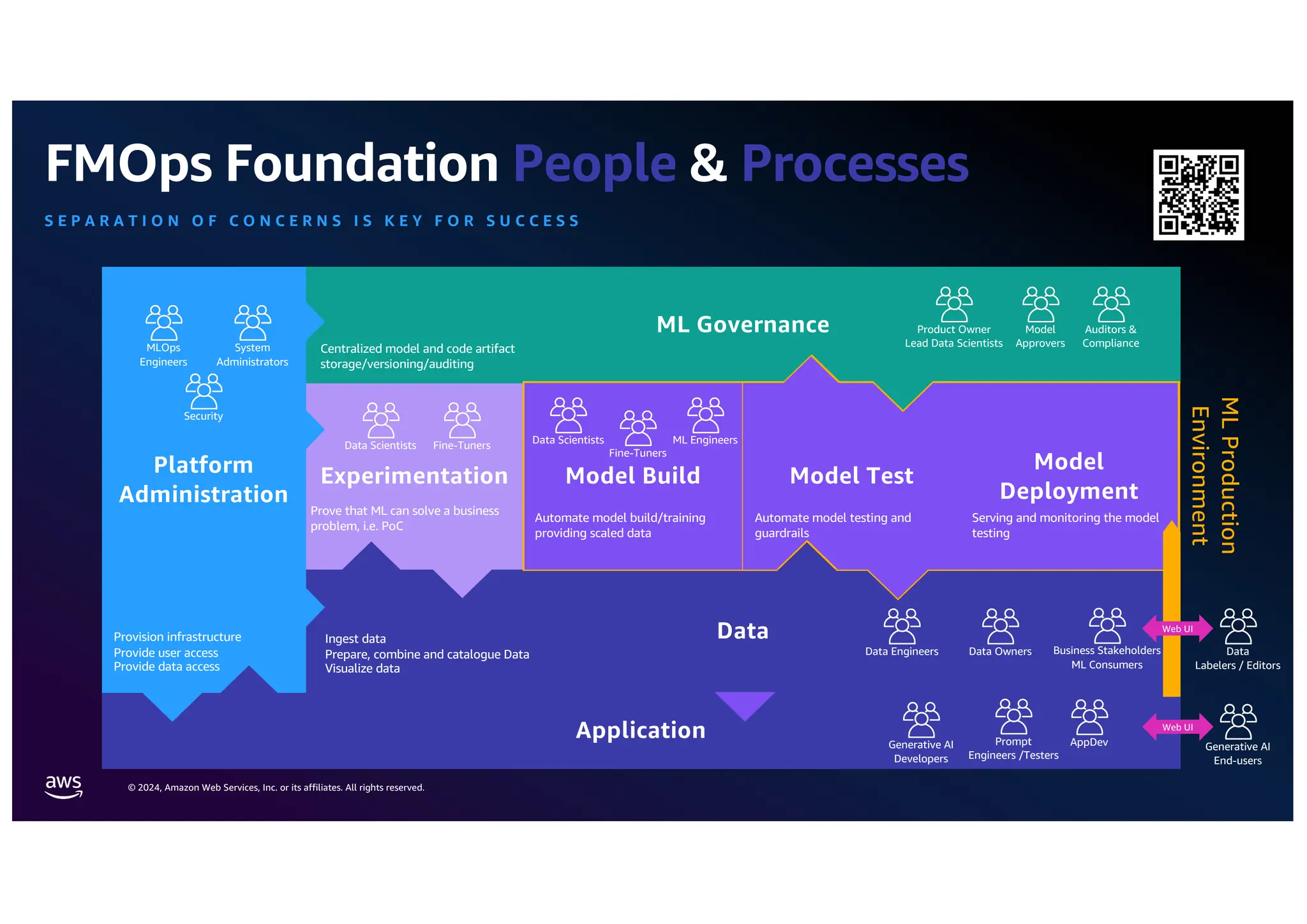

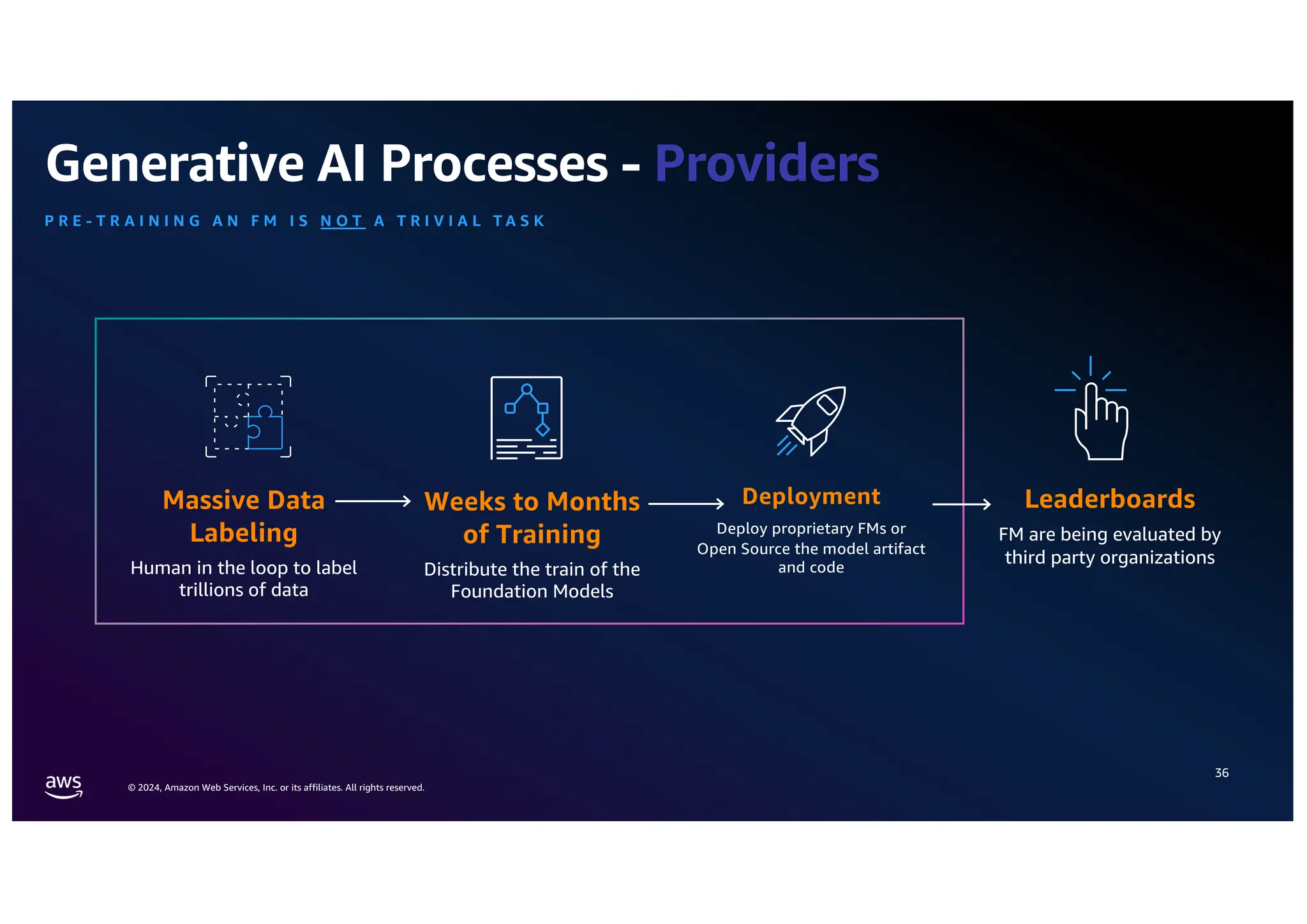

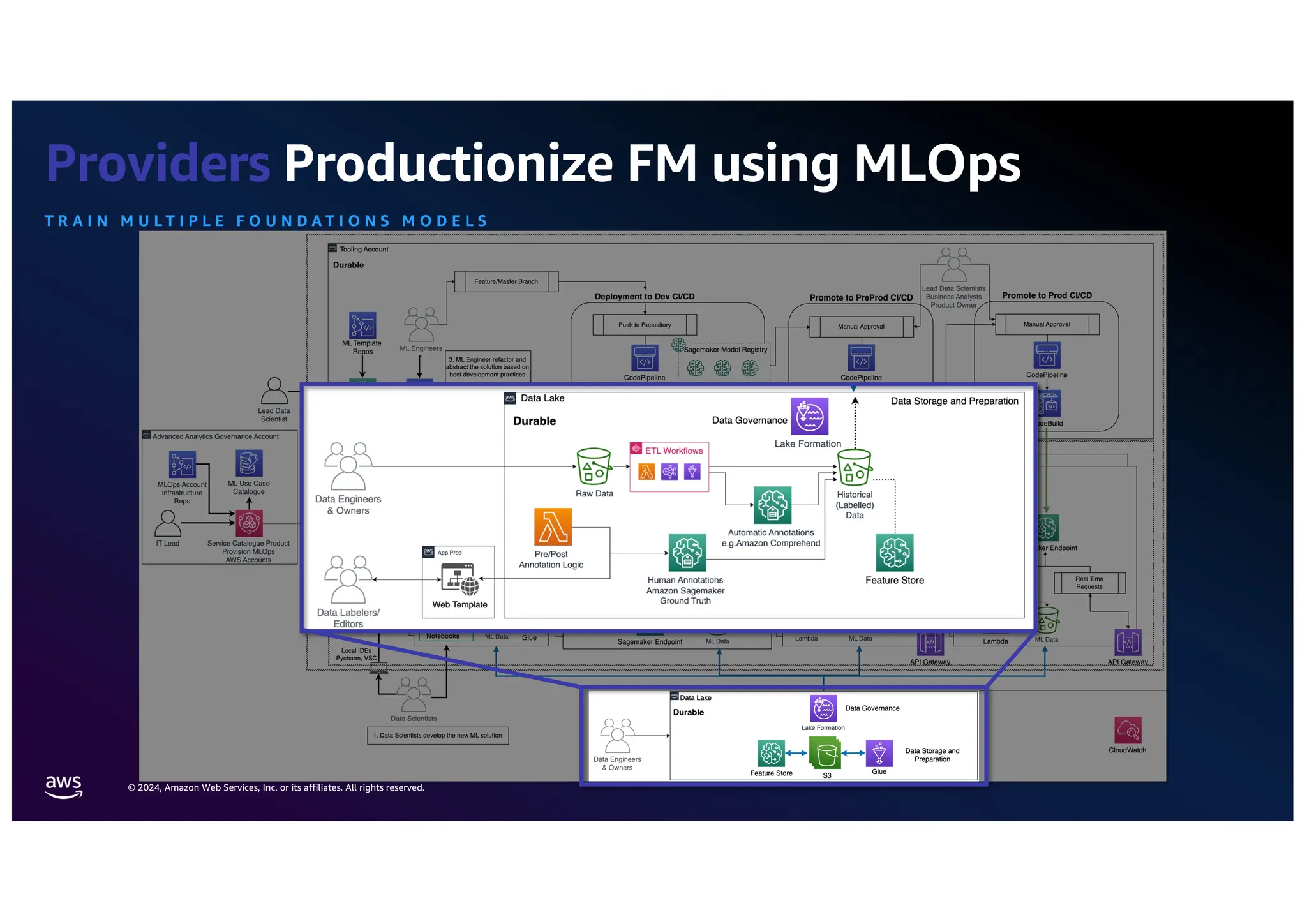

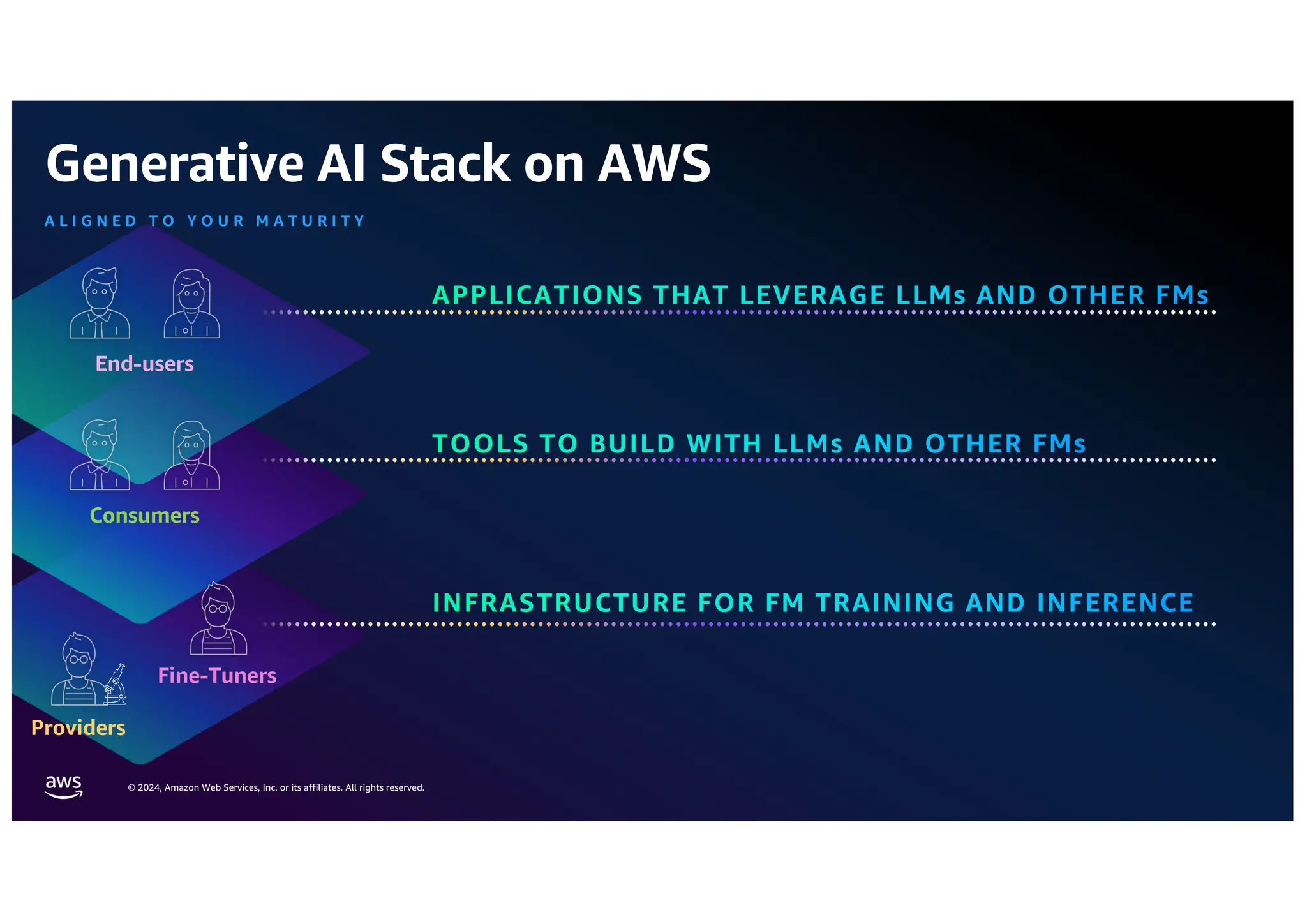

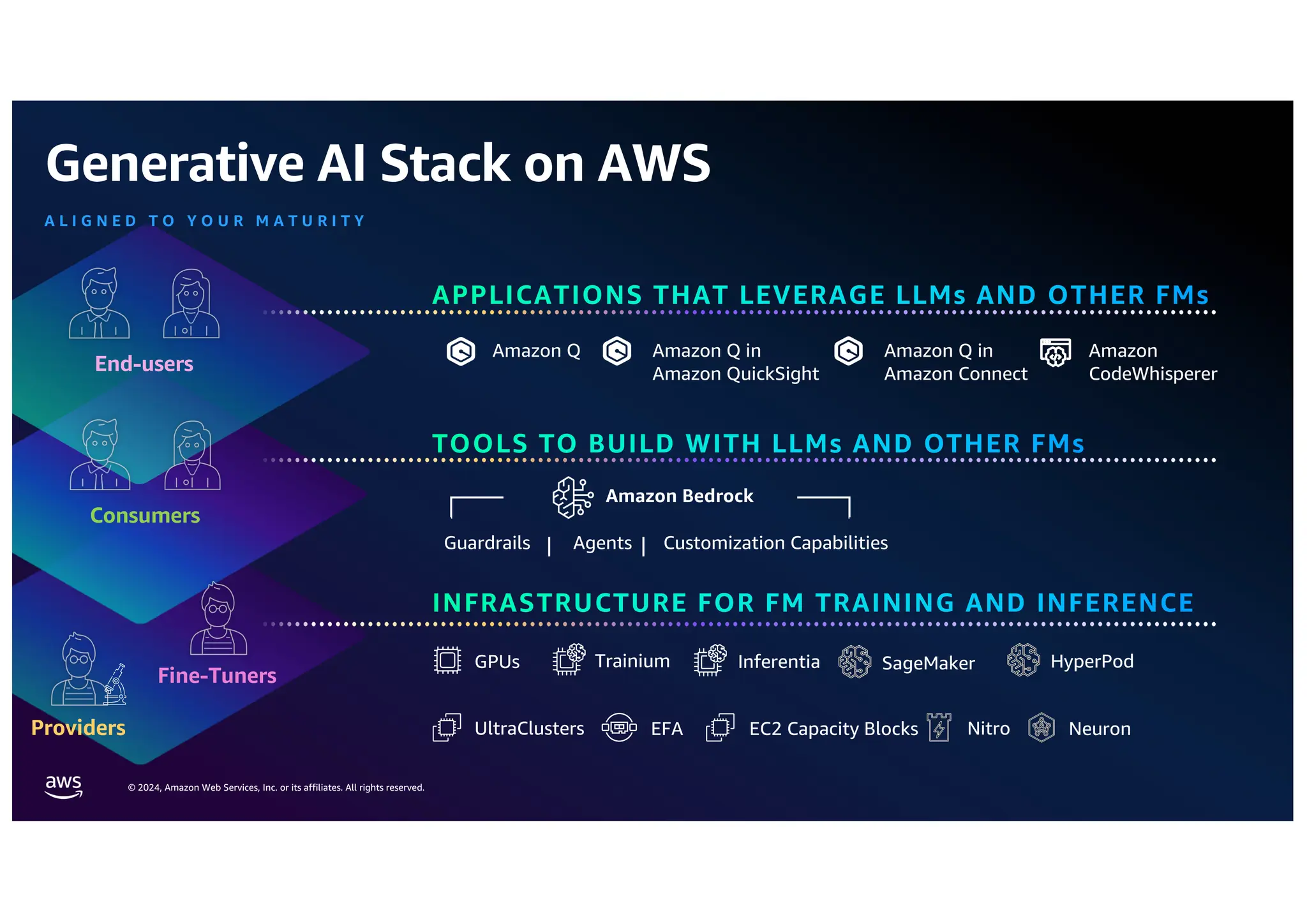

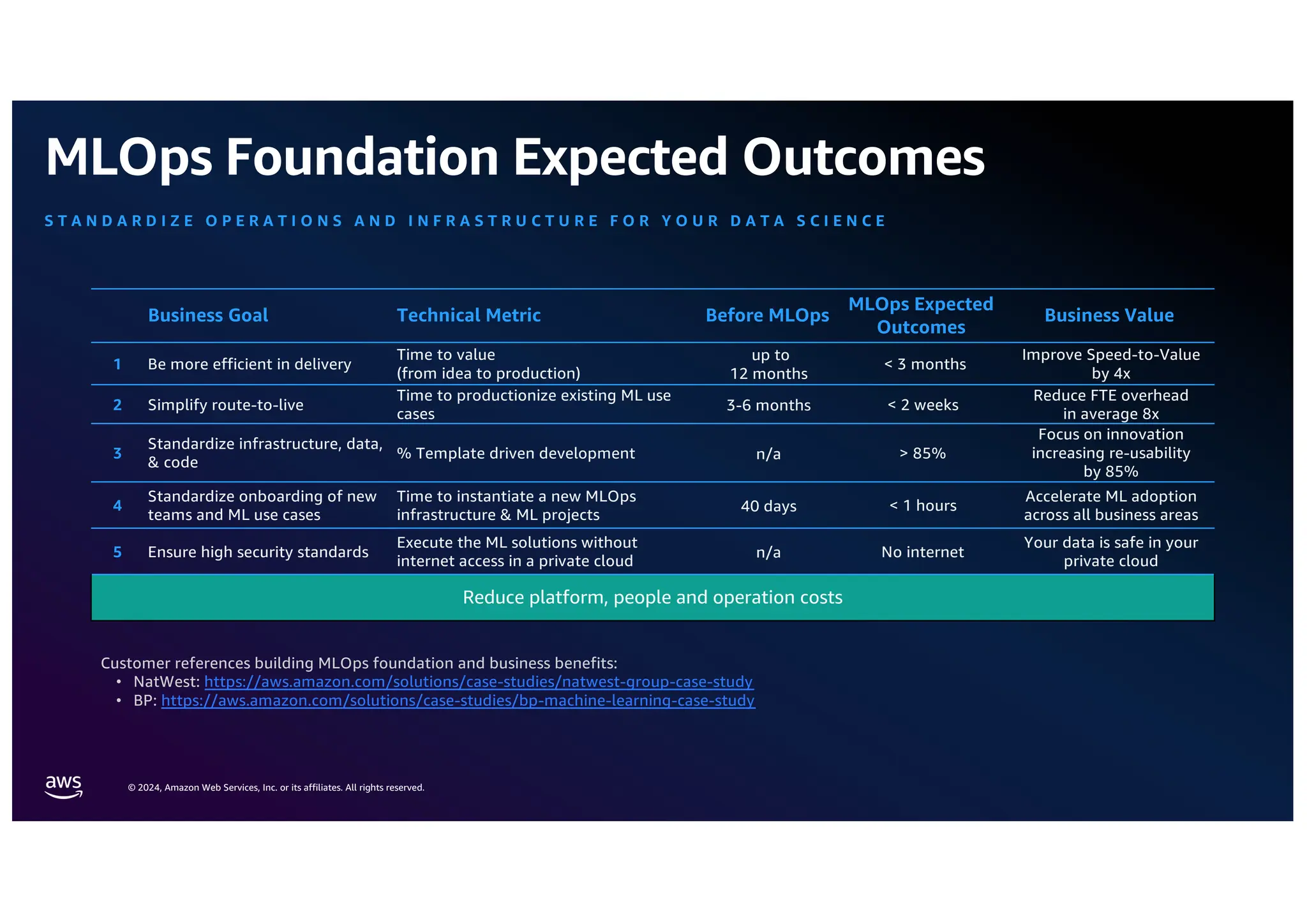

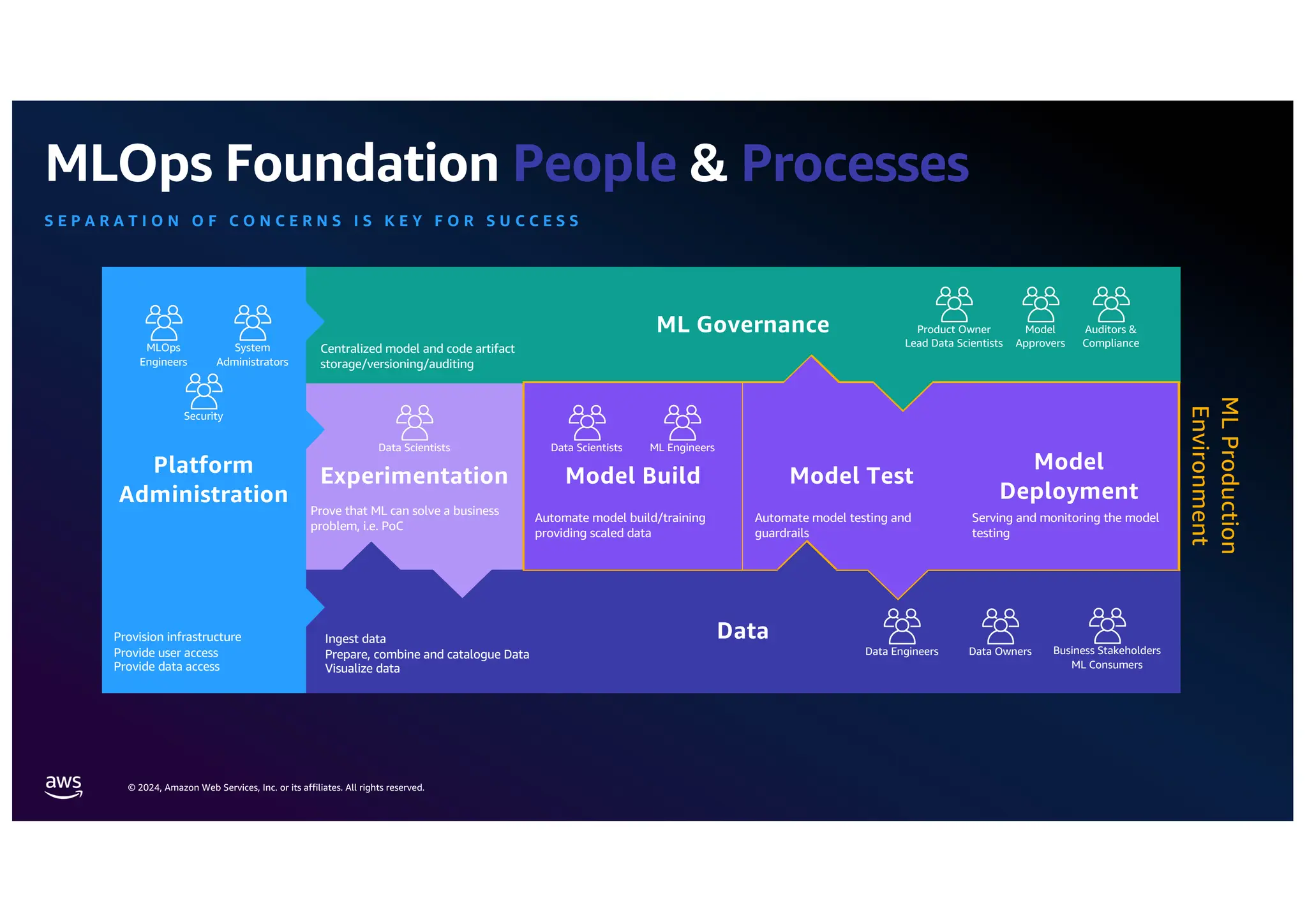

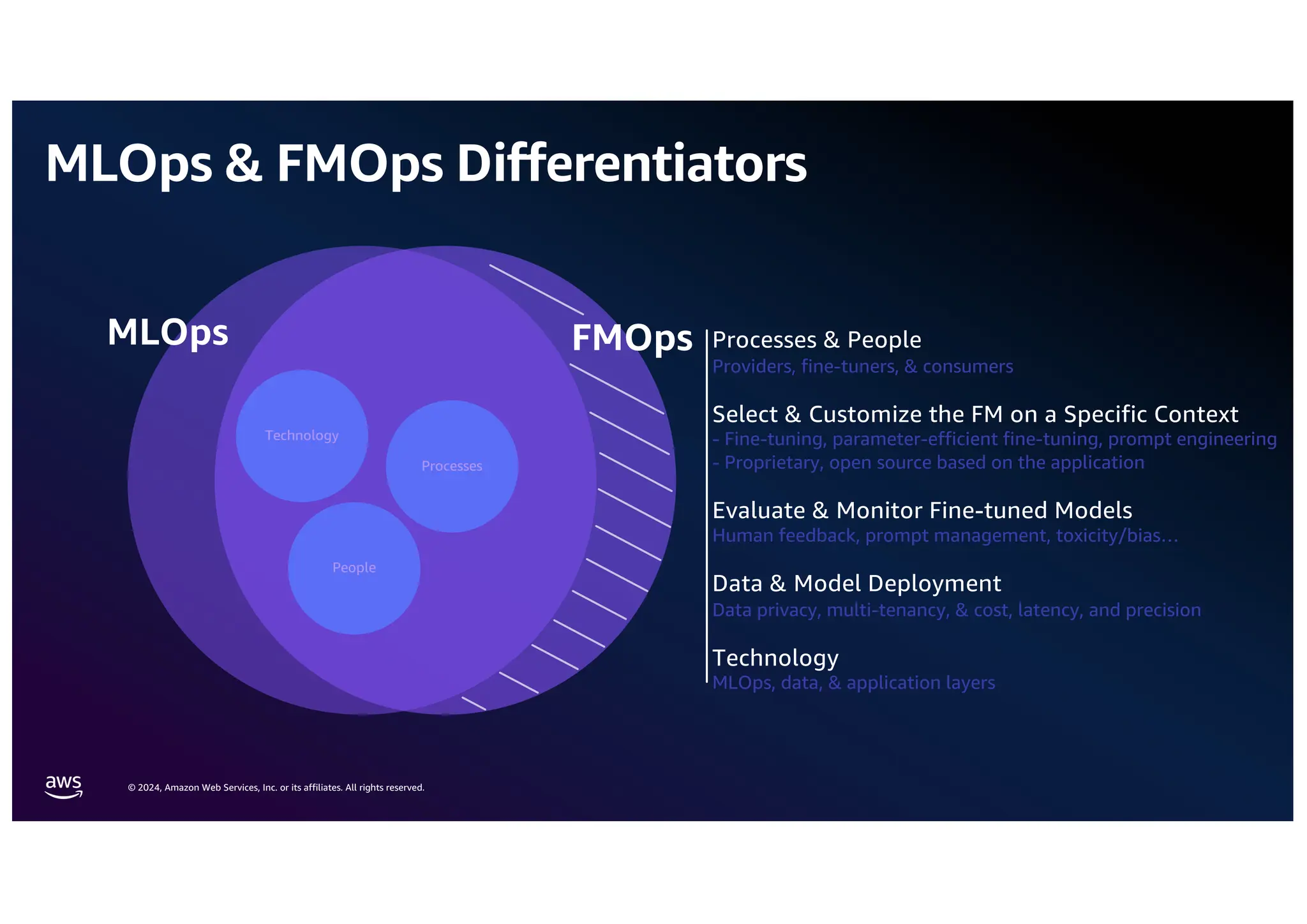

The document outlines MLOps (Machine Learning Operations) and how it makes deploying machine learning solutions more efficient by standardizing processes, minimizing delivery times, and ensuring security. It distinguishes MLOps from FM (Foundation Model) and LLM (Large Language Model) operations, which focus on generative AI applications, evaluation of model performance, and prompt engineering. It also discusses the roles of stakeholders in the MLOps lifecycle, including providers, fine-tuners, and consumers, and how platforms like Amazon SageMaker facilitate these processes.

![© 2024, Amazon Web Services, Inc. or its affiliates. All rights reserved.

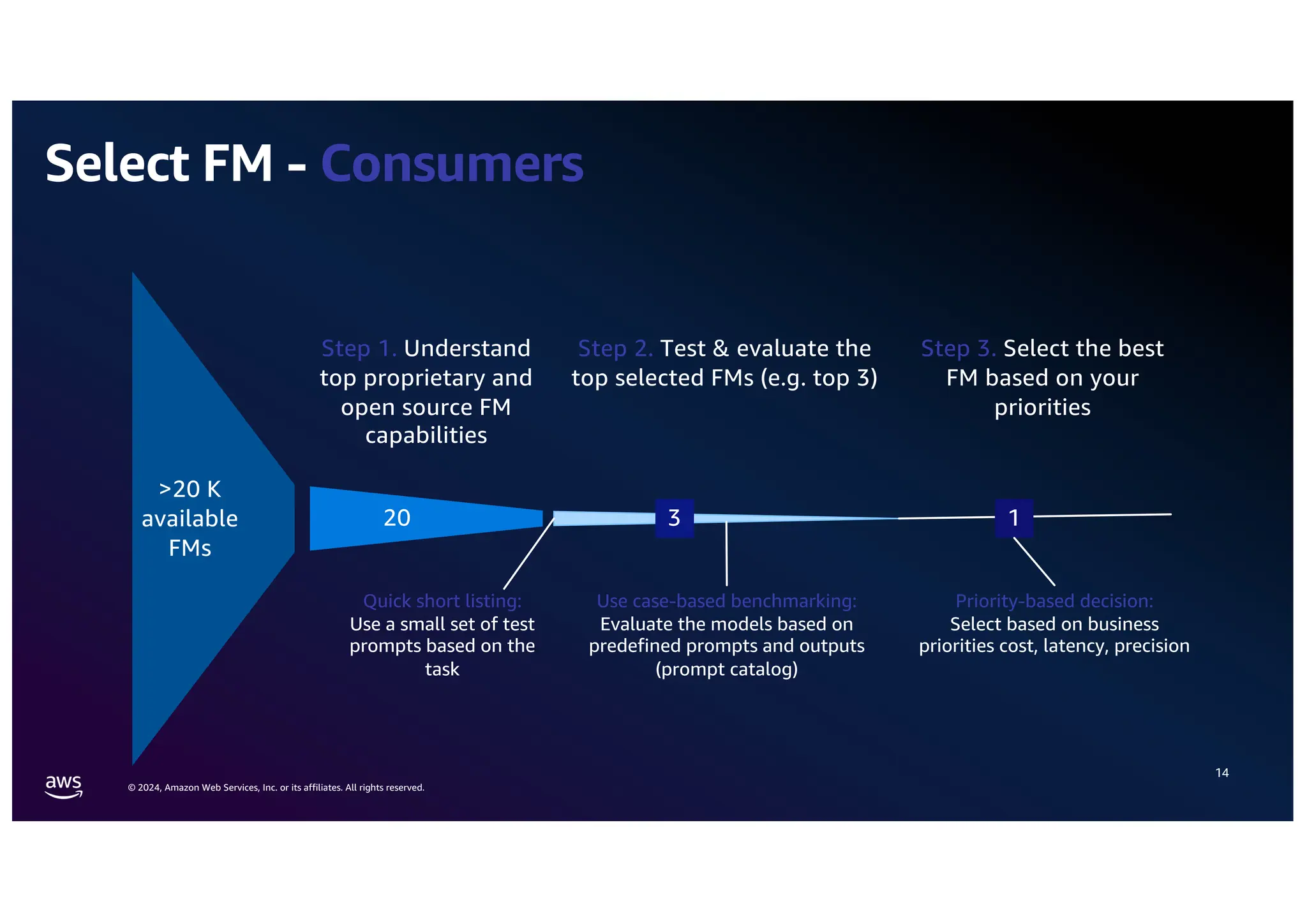

Step 2. Evaluate the top FMs

19

Does labelled data

exist?

Does the use case

provide discrete

outcomes/outputs?

Accuracy metrics

High evaluation precision but not

many use cases

Task-specific metrics

Medium evaluation precision that

might require human evaluation but

many use cases

Human in the Loop

(HIL)

High evaluation precision but costly

and time consuming

LLM

Unknown evaluation precision

(depend on the LLM) but automated

Should we automate

the test process?

Yes

Classic ML metrics e.g. precision,

…

Similarity: ROUGE, cosine similarity [0,1], …

Prompt Stereotyping: Cross-entropy loss

Toxicity: Detoxify, Toxigen

Factual Knowledge: HELM, LAMA Benchmark

Semantic Robustness: Word error rate

No Manually human feedback

based on predefined

assessment rules e.g. using

GroundTruth

Feed the outputs to a ”reliable”

LLM and instruct it to rate the

outcome with an score and

explanation

Yes

No

Yes

No](https://image.slidesharecdn.com/fmopsllmops-operationalisegenerativeai-240907090435-2081e4b5/75/Oleksii-Ivanchenko-FMOps-LLMOps-MLOps-UA-16-2048.jpg)
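The decision flow on this slide can be sketched in a few lines of Python. This is a minimal illustration, not code from the presentation: the function names `choose_evaluation` and `cosine_similarity`, their parameters, and the returned labels are all hypothetical, and the branch order is an assumption based on the slide's three questions. The similarity function uses a simple bag-of-words representation (a stand-in for embedding-based similarity), which keeps the score in the [0, 1] range the slide mentions.

```python
import math
from collections import Counter


def choose_evaluation(has_labelled_data: bool,
                      discrete_outputs: bool = False,
                      automate: bool = False) -> str:
    """Return the evaluation approach suggested by the slide's decision flow.

    All names and labels here are hypothetical; they only mirror the slide.
    """
    if has_labelled_data:
        # Labelled data exists: the choice depends on the kind of output.
        if discrete_outputs:
            return "accuracy metrics"       # classic ML metrics, e.g. precision
        return "task-specific metrics"      # e.g. ROUGE, cosine similarity
    # No labelled data: the choice depends on whether testing is automated.
    if automate:
        return "LLM"                        # a "reliable" LLM rates the output
    return "human in the loop"              # manual feedback against rules


def cosine_similarity(reference: str, candidate: str) -> float:
    """Bag-of-words cosine similarity between two texts.

    Word counts are non-negative, so the result lies in [0, 1].
    """
    ref_counts = Counter(reference.lower().split())
    cand_counts = Counter(candidate.lower().split())
    # Dot product over words in the reference; missing words count as 0.
    dot = sum(ref_counts[w] * cand_counts[w] for w in ref_counts)
    ref_norm = math.sqrt(sum(c * c for c in ref_counts.values()))
    cand_norm = math.sqrt(sum(c * c for c in cand_counts.values()))
    norm = ref_norm * cand_norm
    # Empty input has no direction; treat it as zero similarity.
    return dot / norm if norm else 0.0


if __name__ == "__main__":
    print(choose_evaluation(True, discrete_outputs=False))
    print(cosine_similarity("the cat sat on the mat", "the cat ran"))
```

In practice a summarization use case with reference summaries would land on the task-specific branch, where a similarity score like the one above (or ROUGE) compares each model output against its reference.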