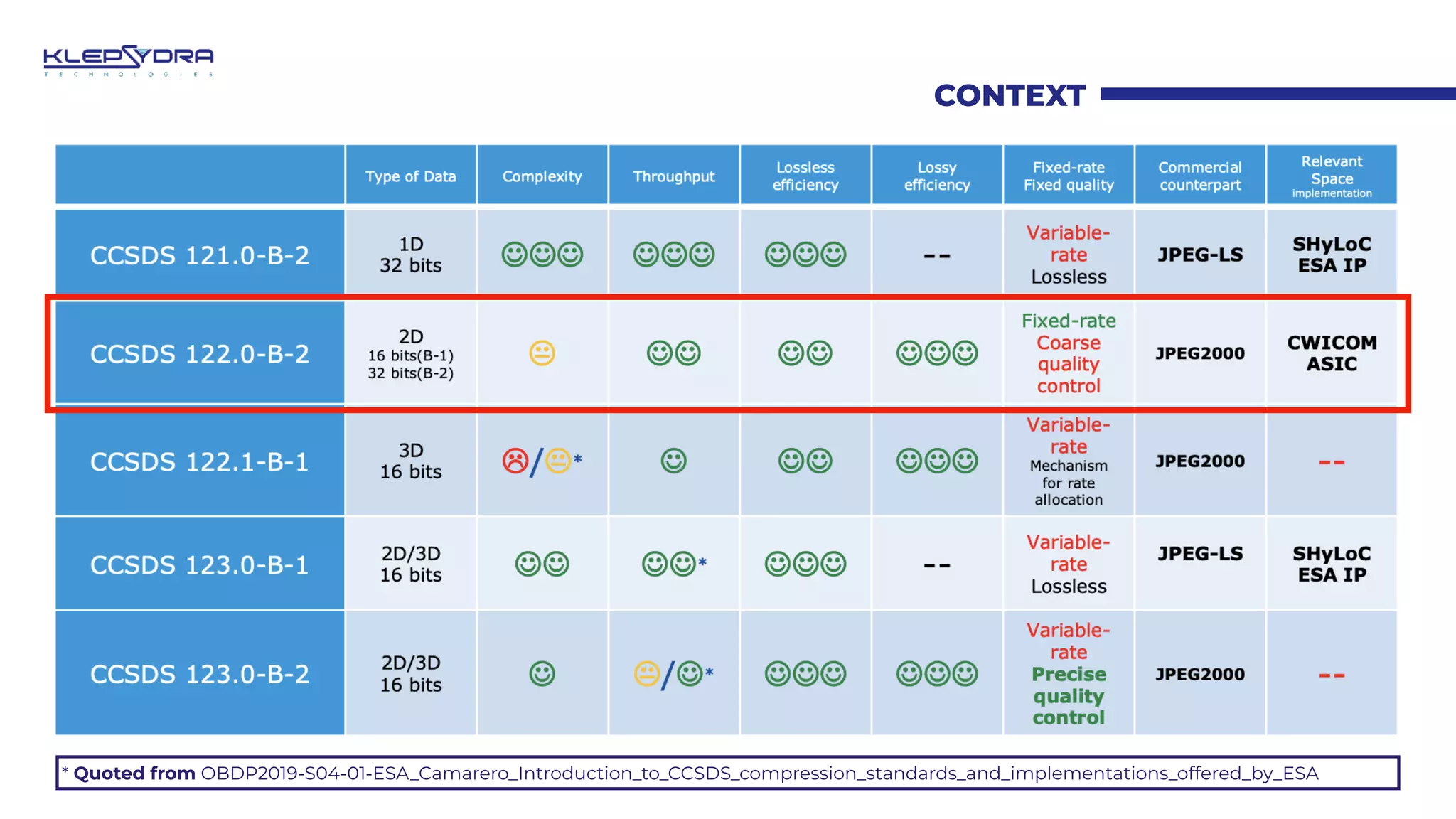

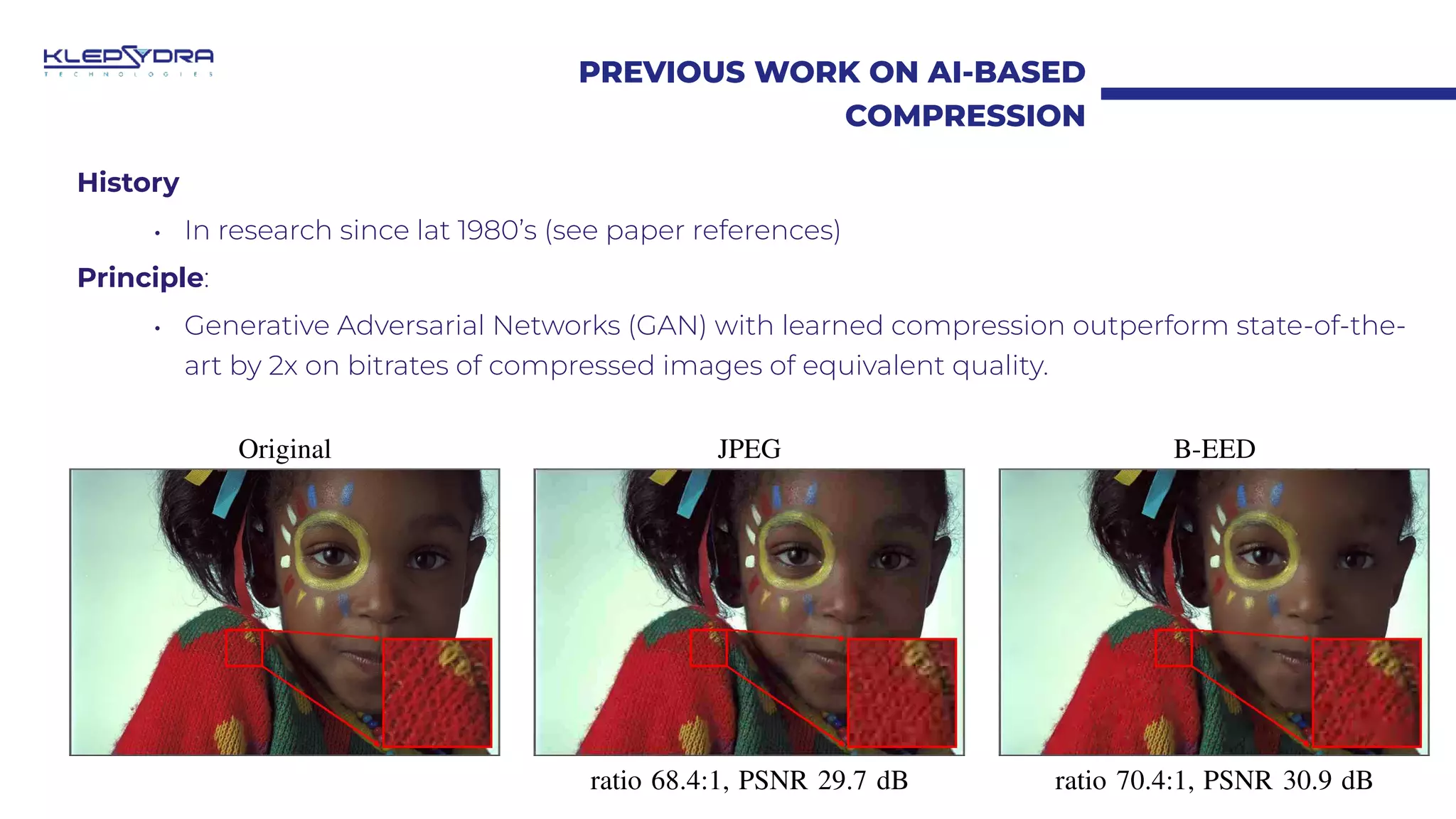

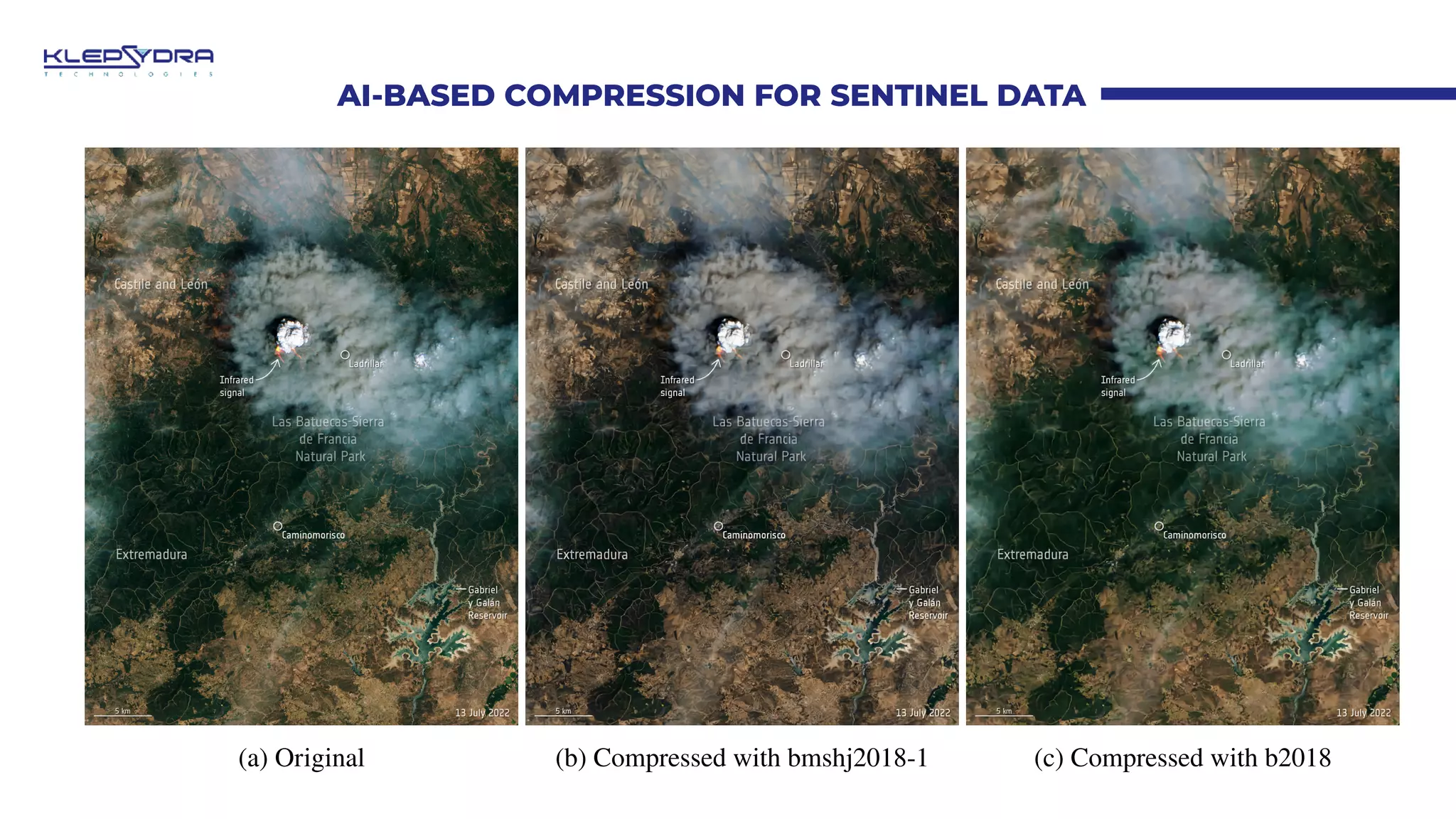

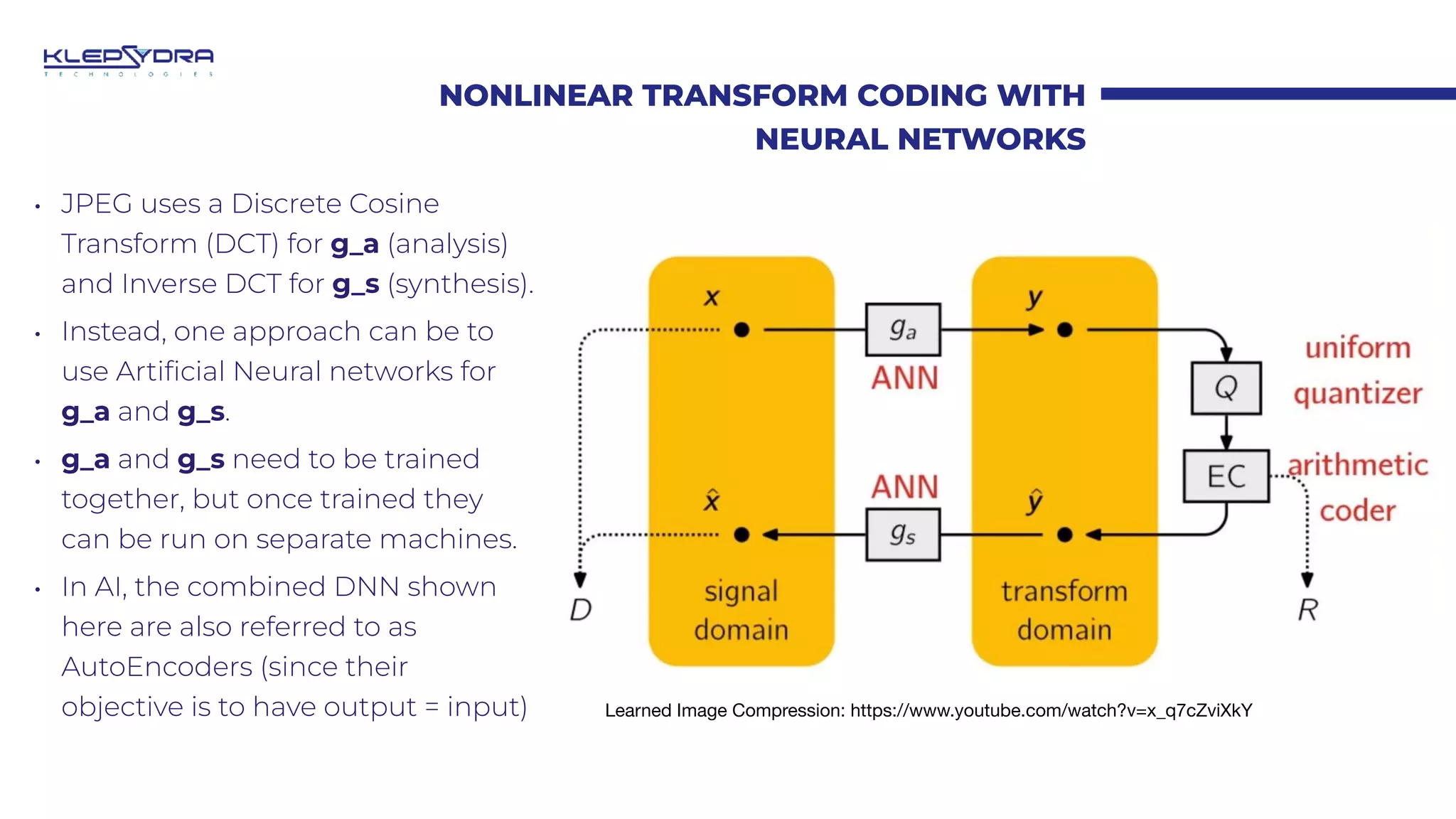

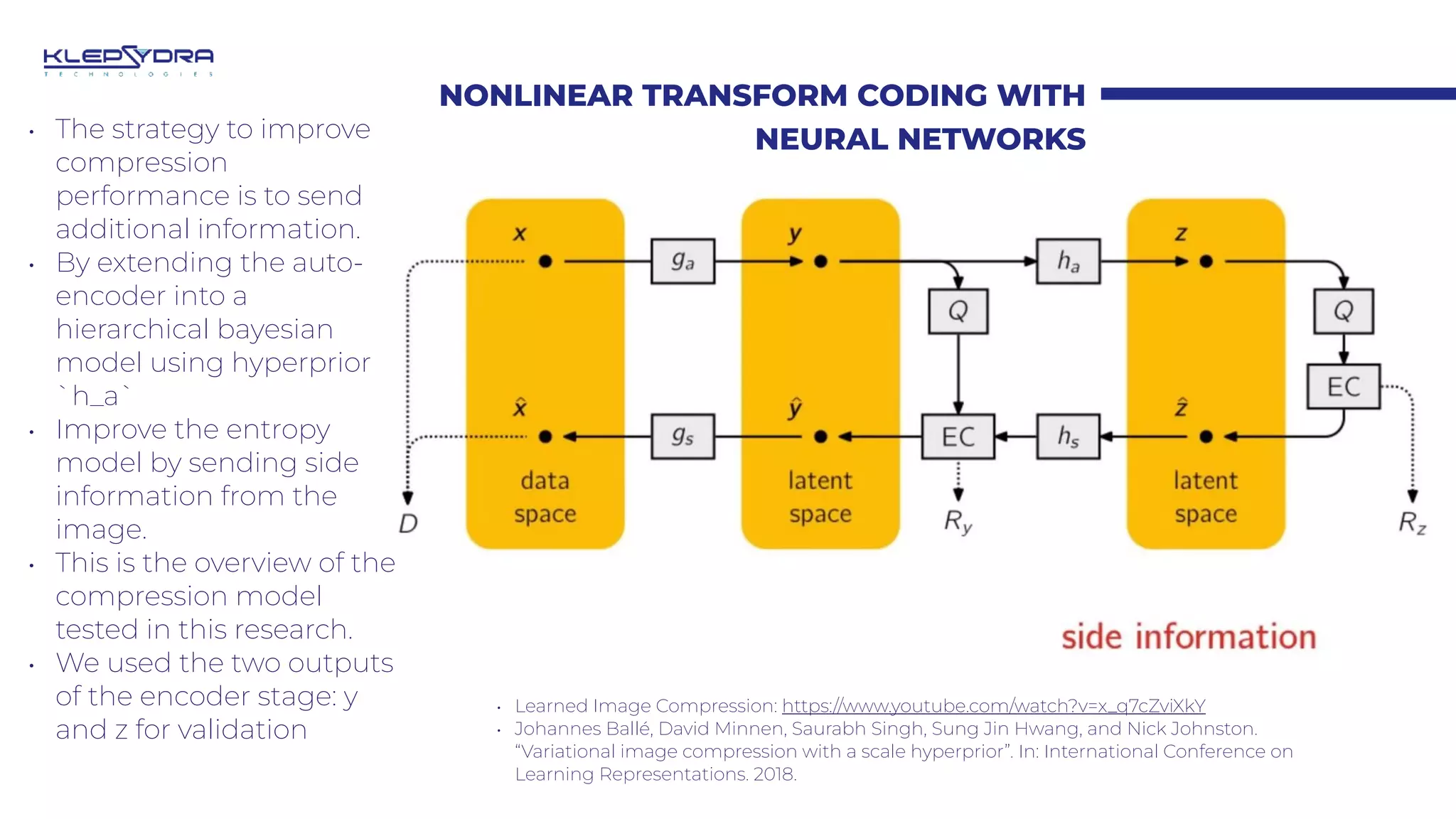

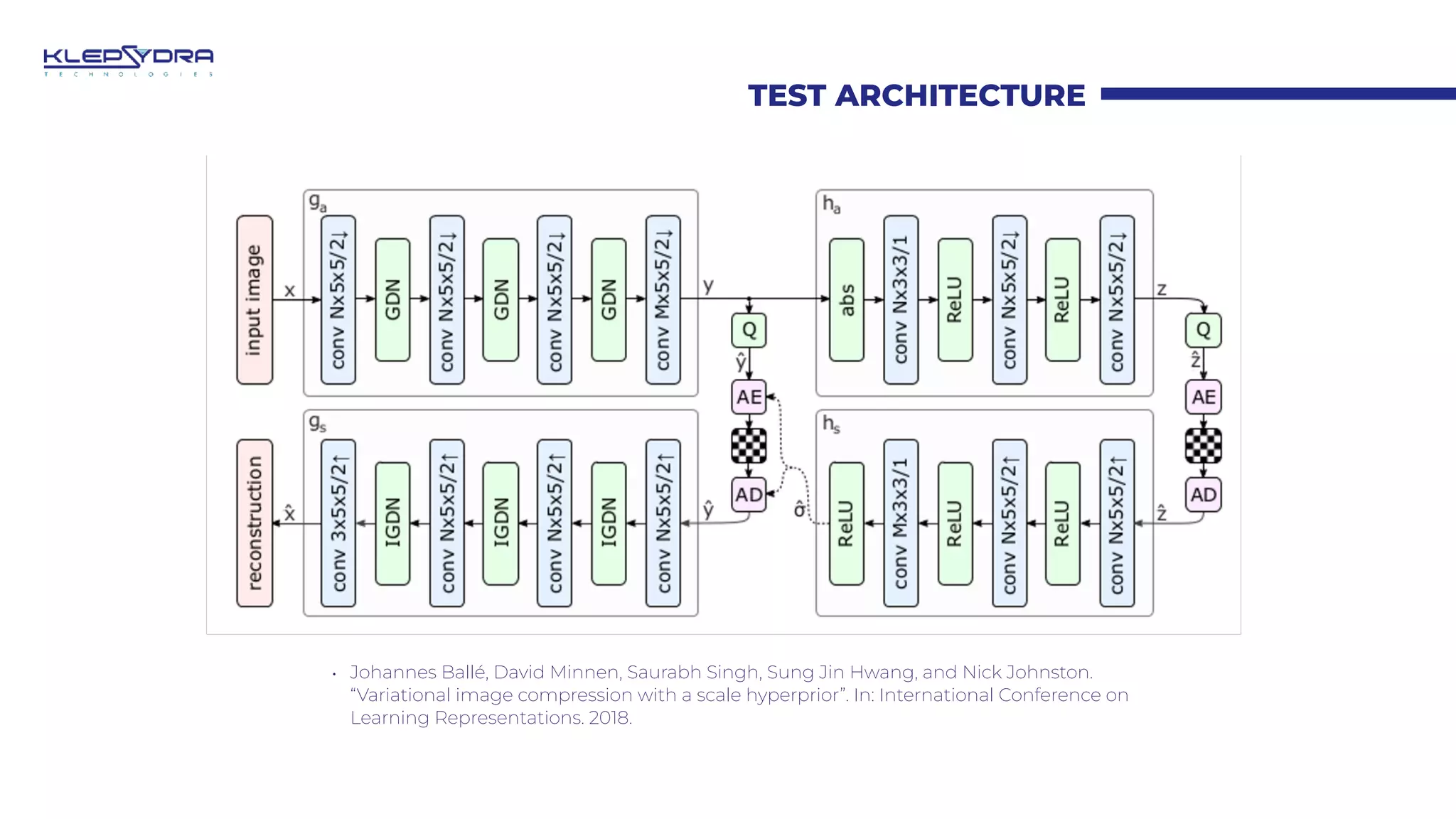

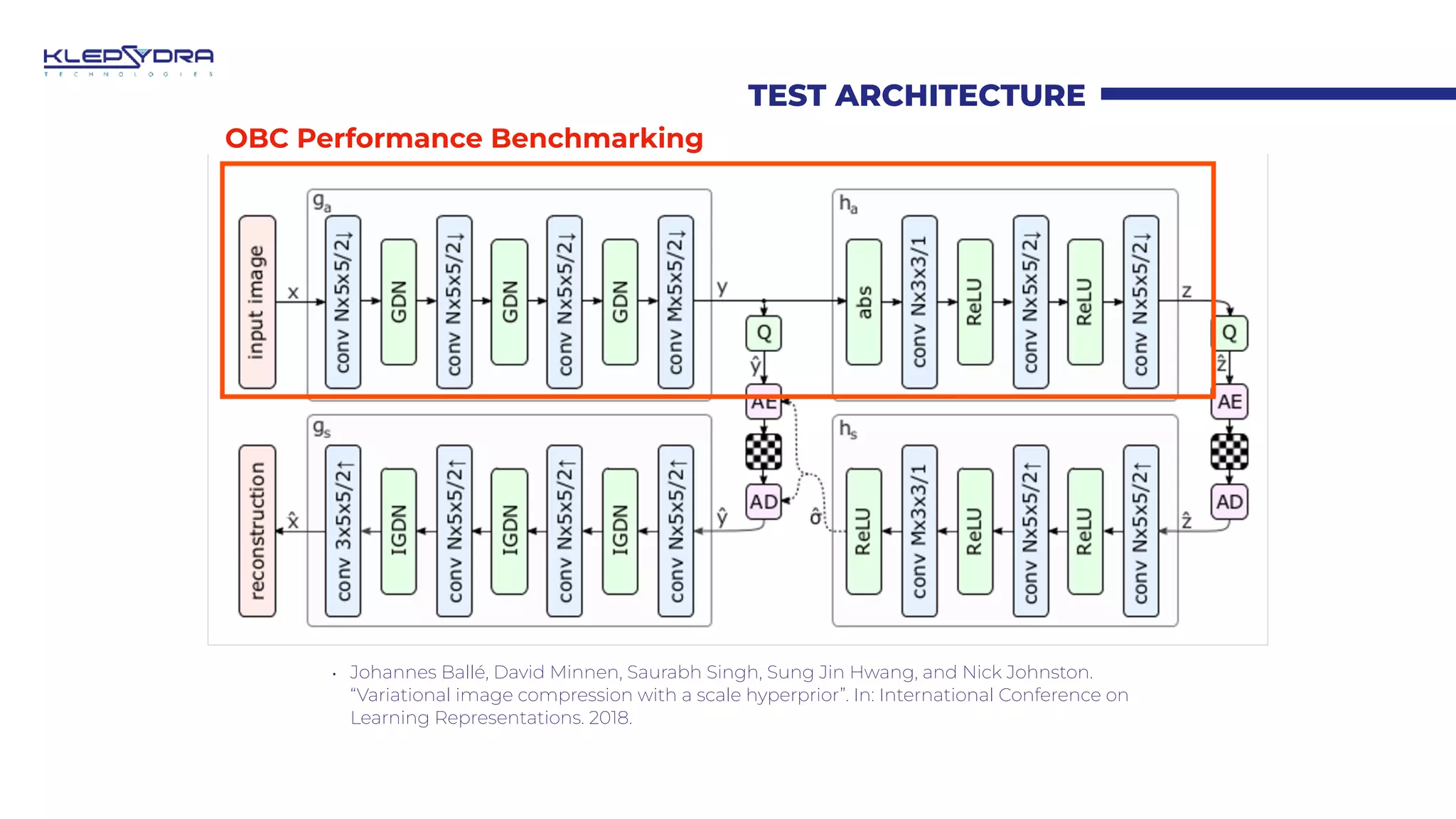

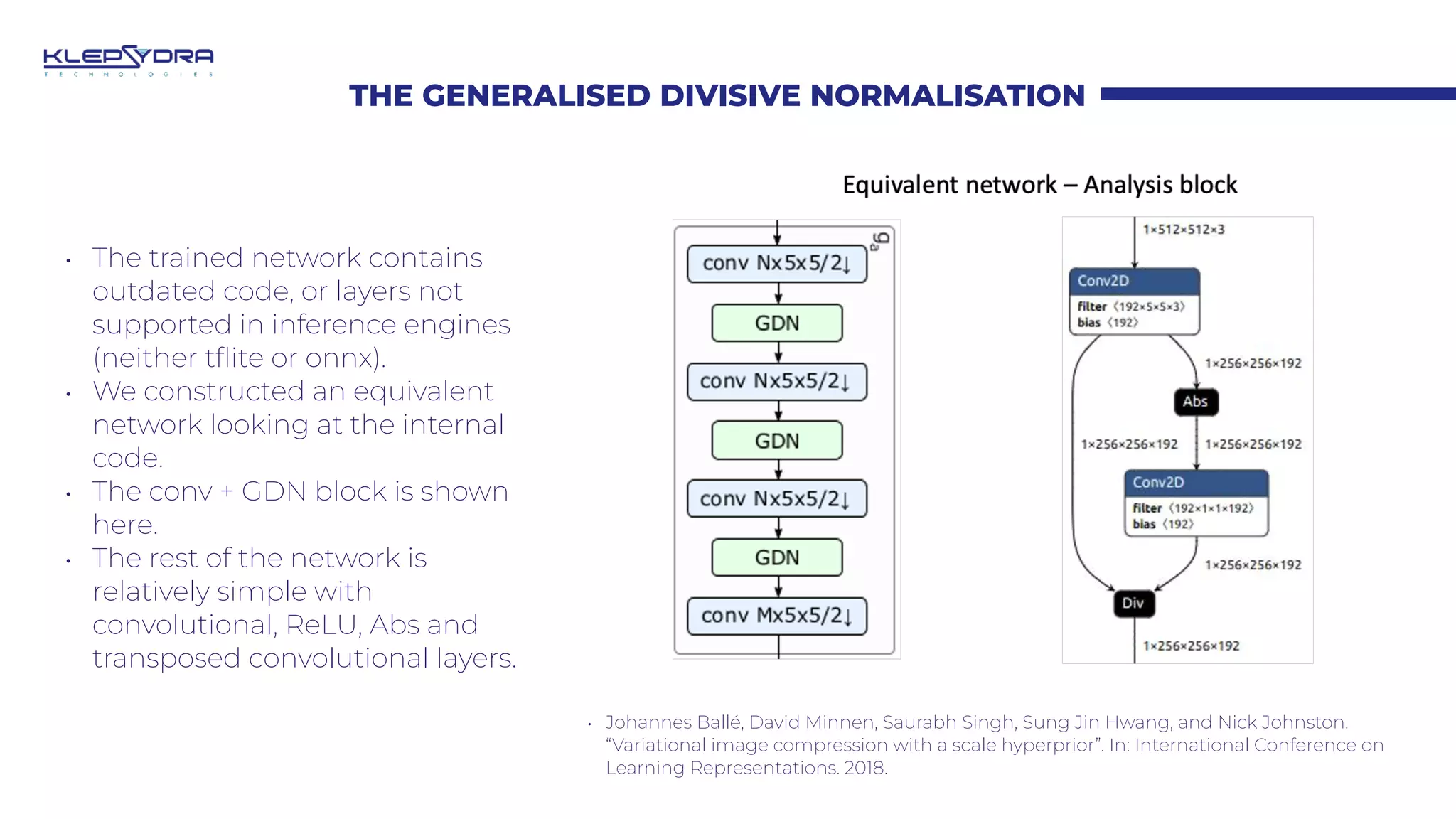

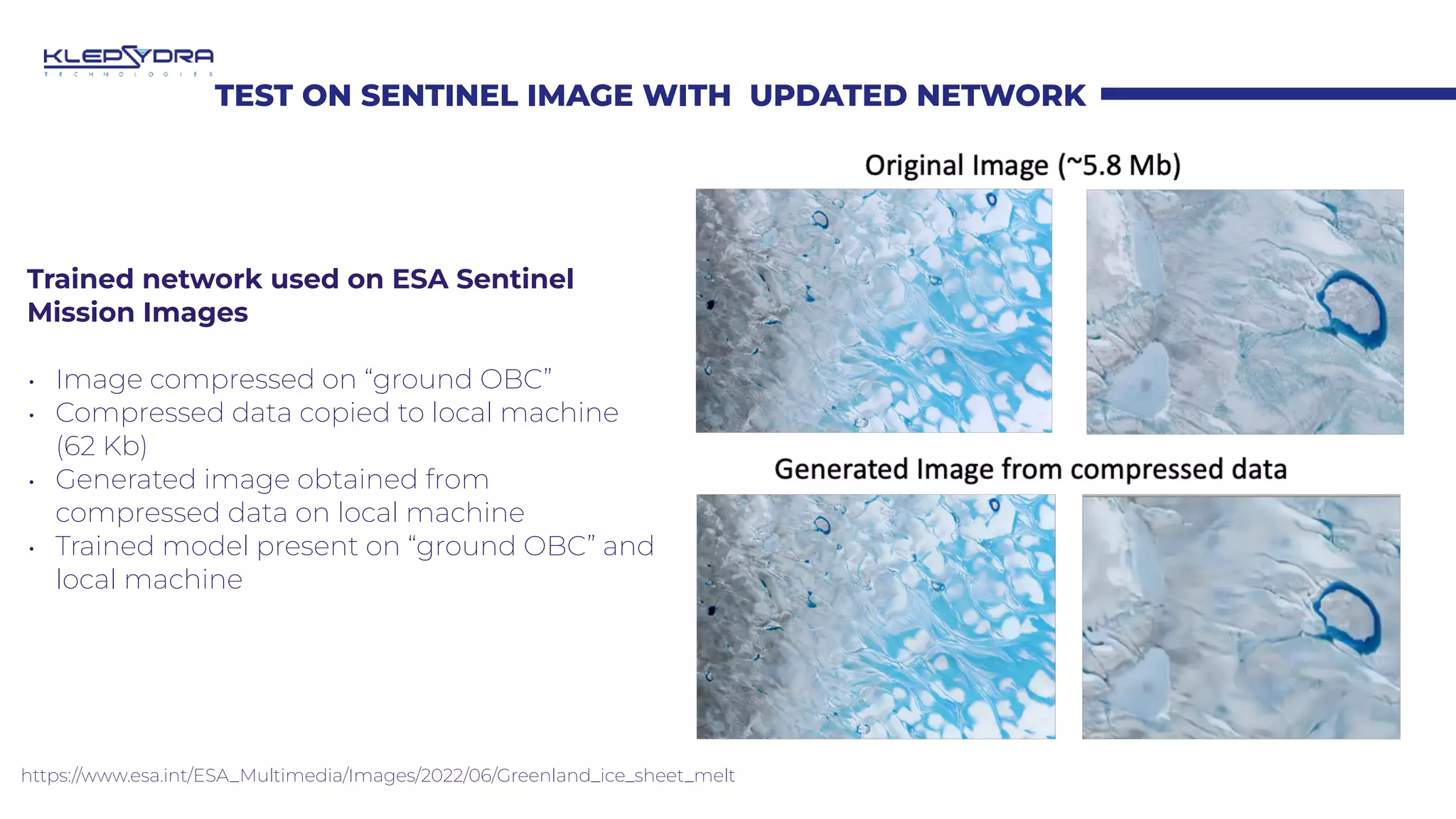

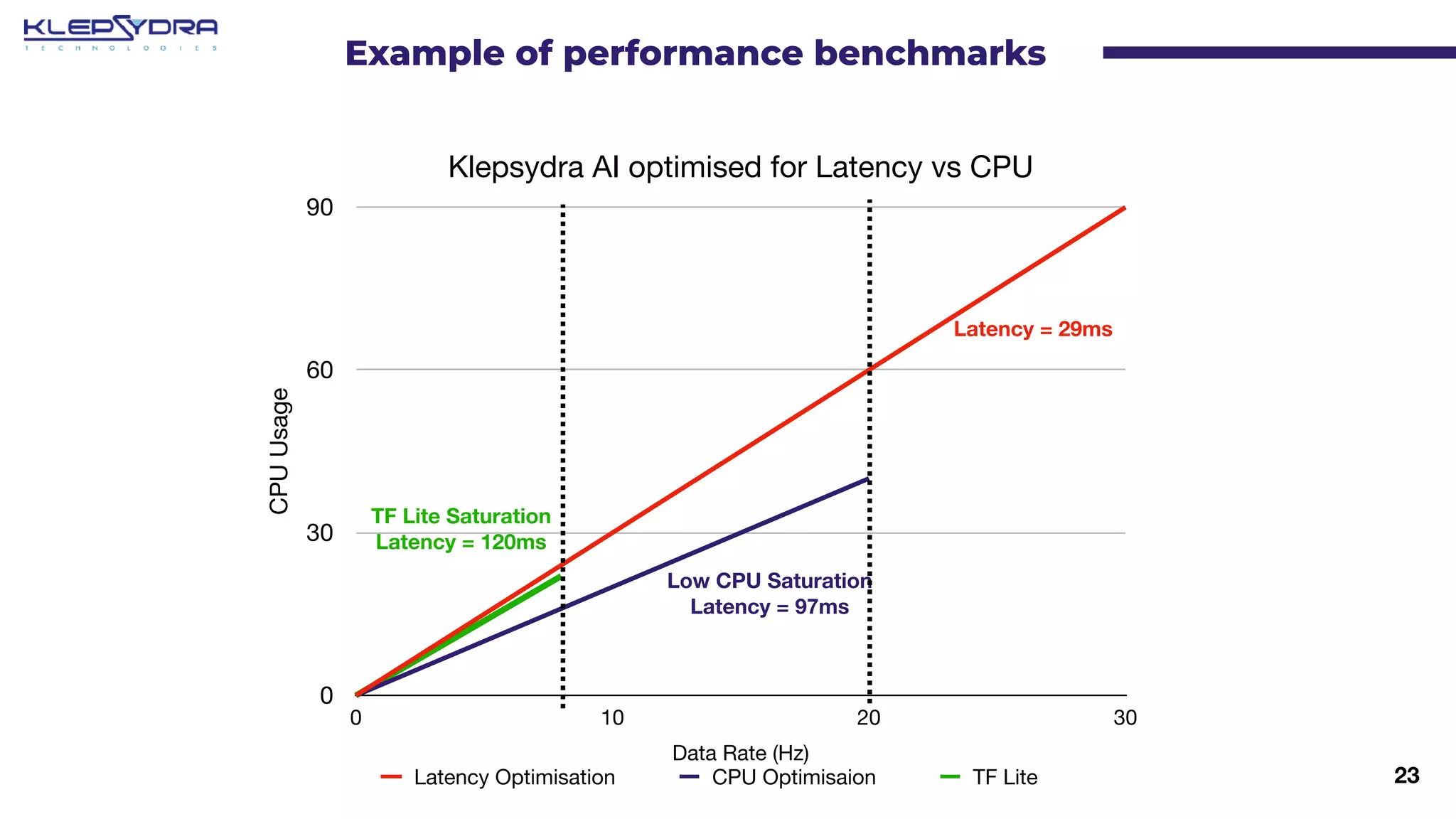

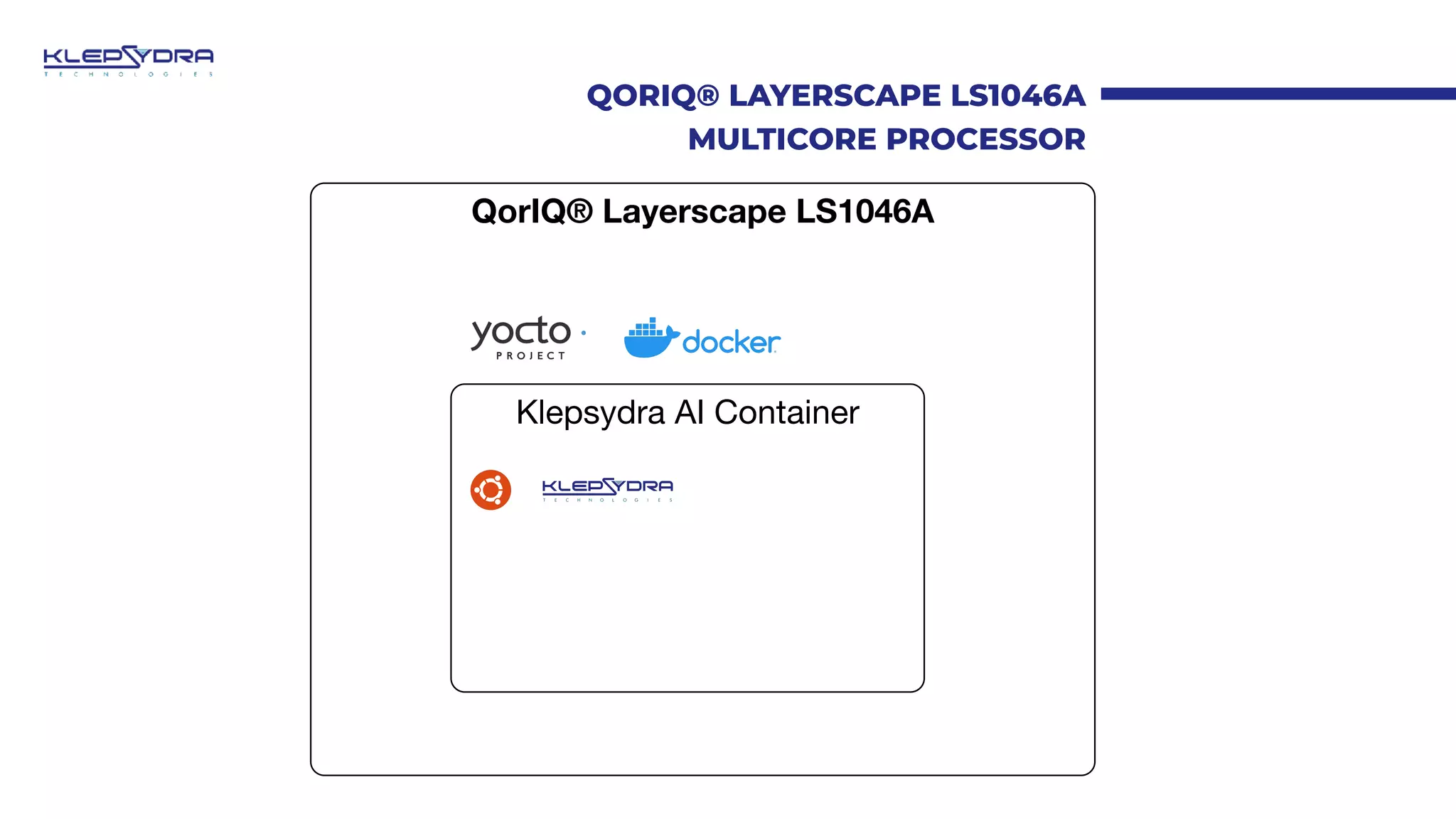

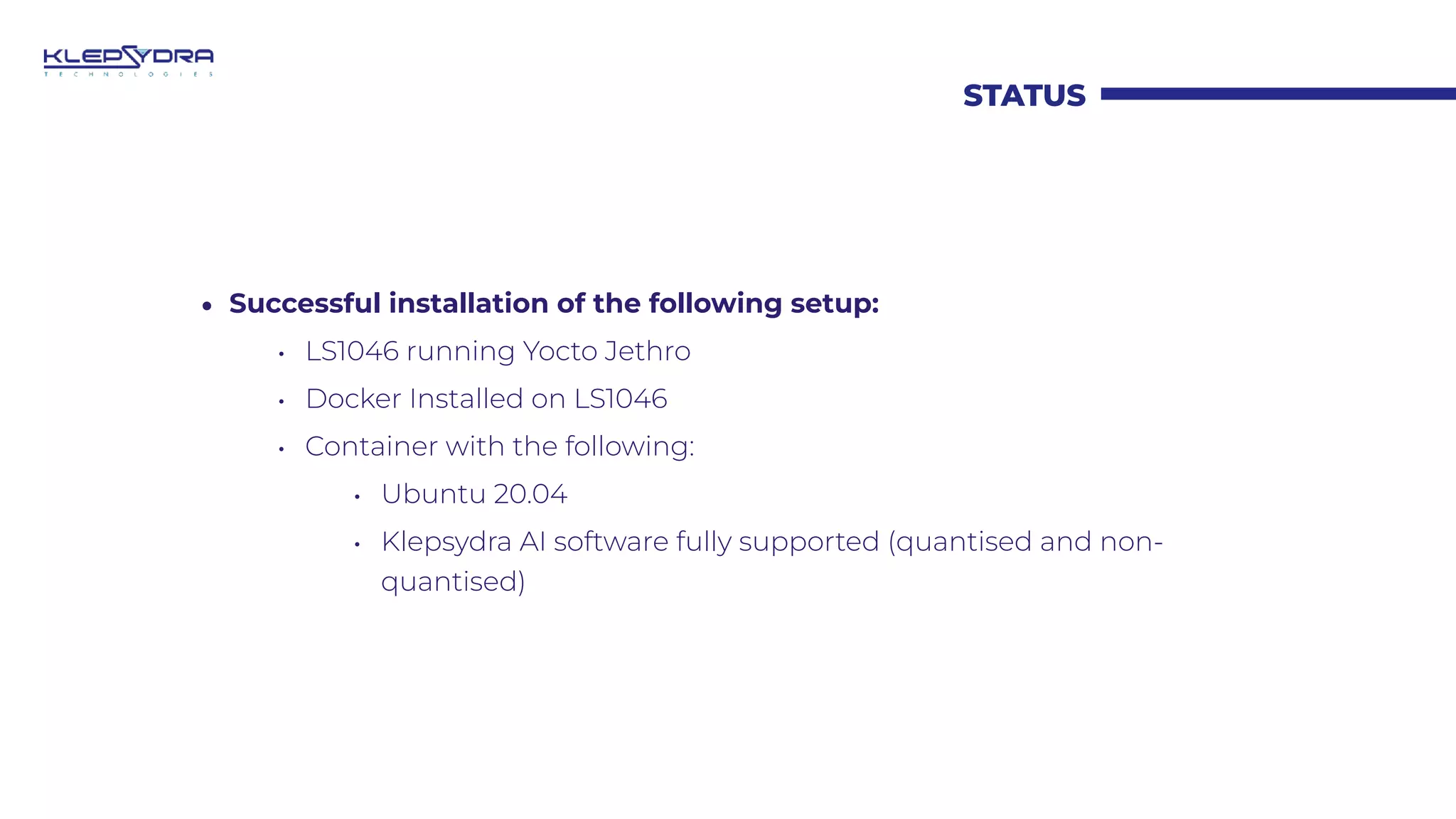

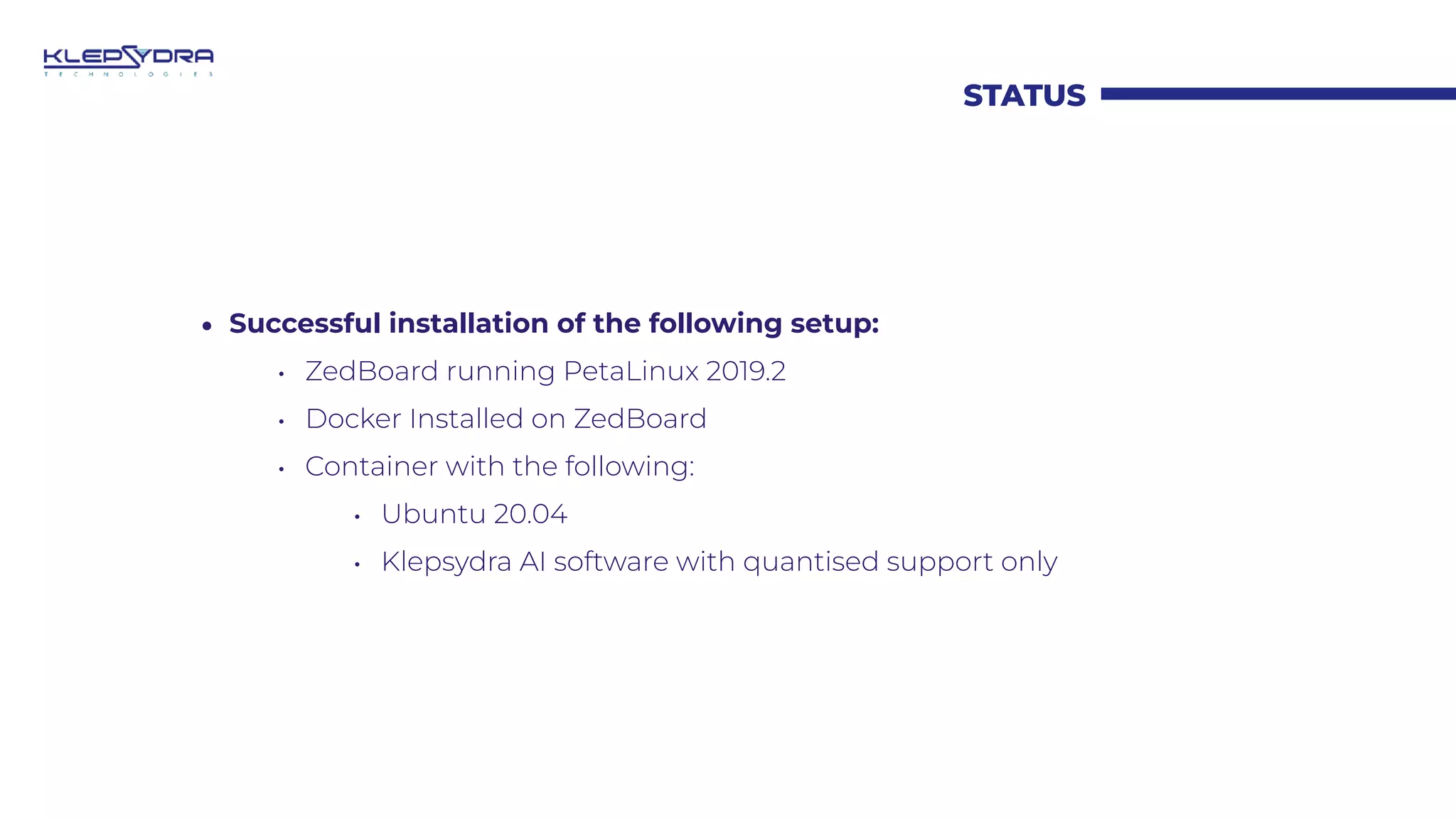

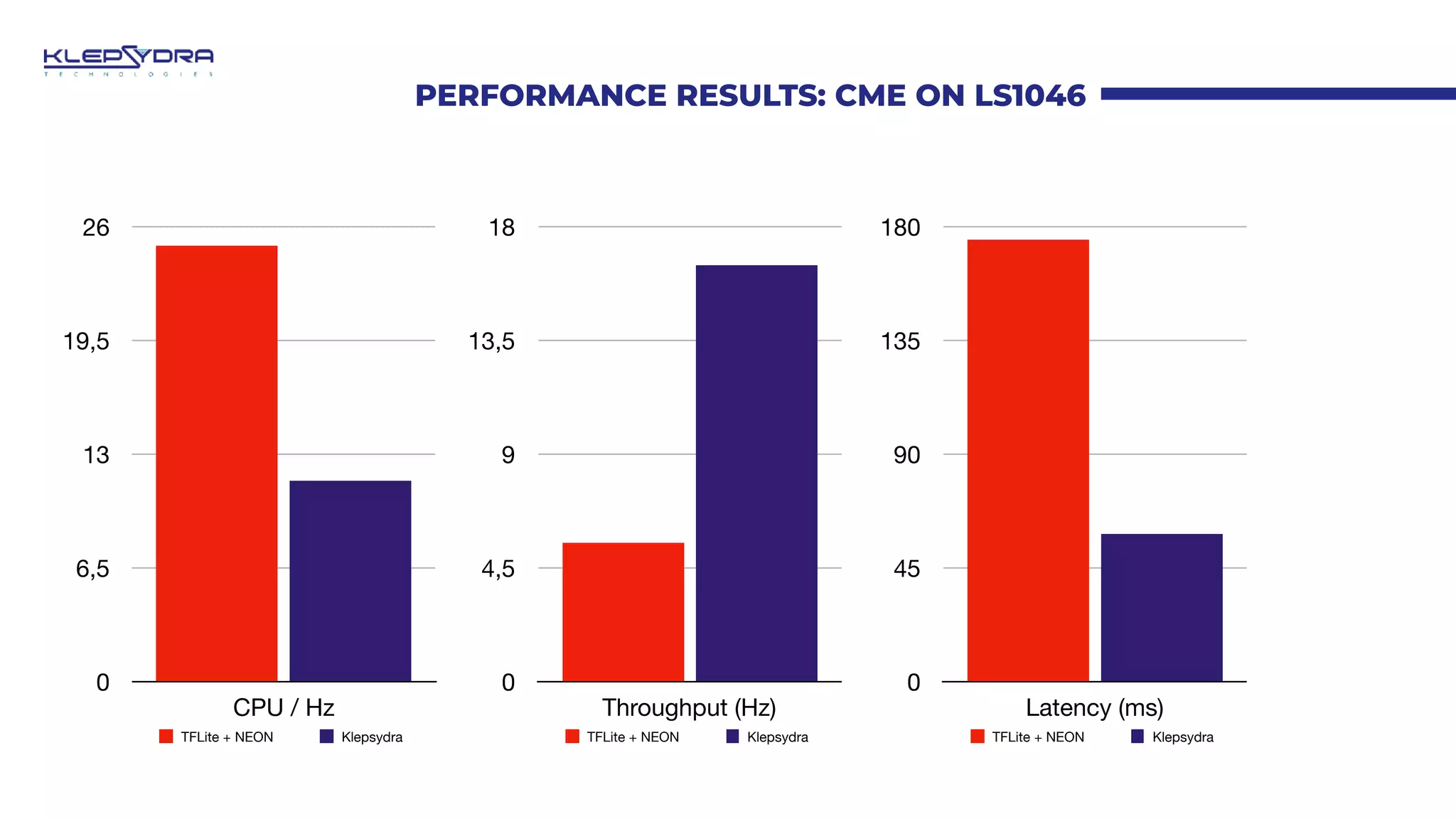

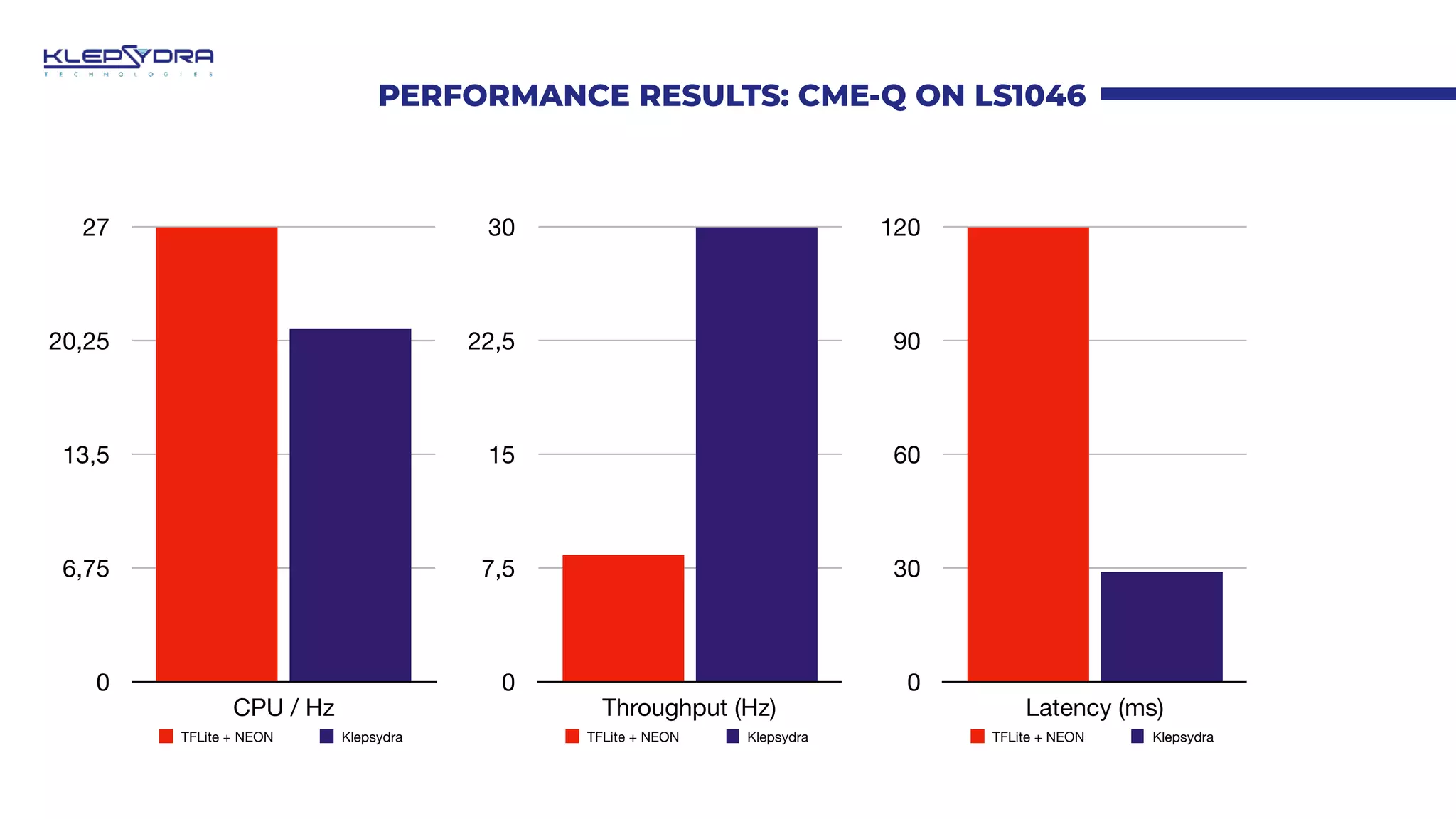

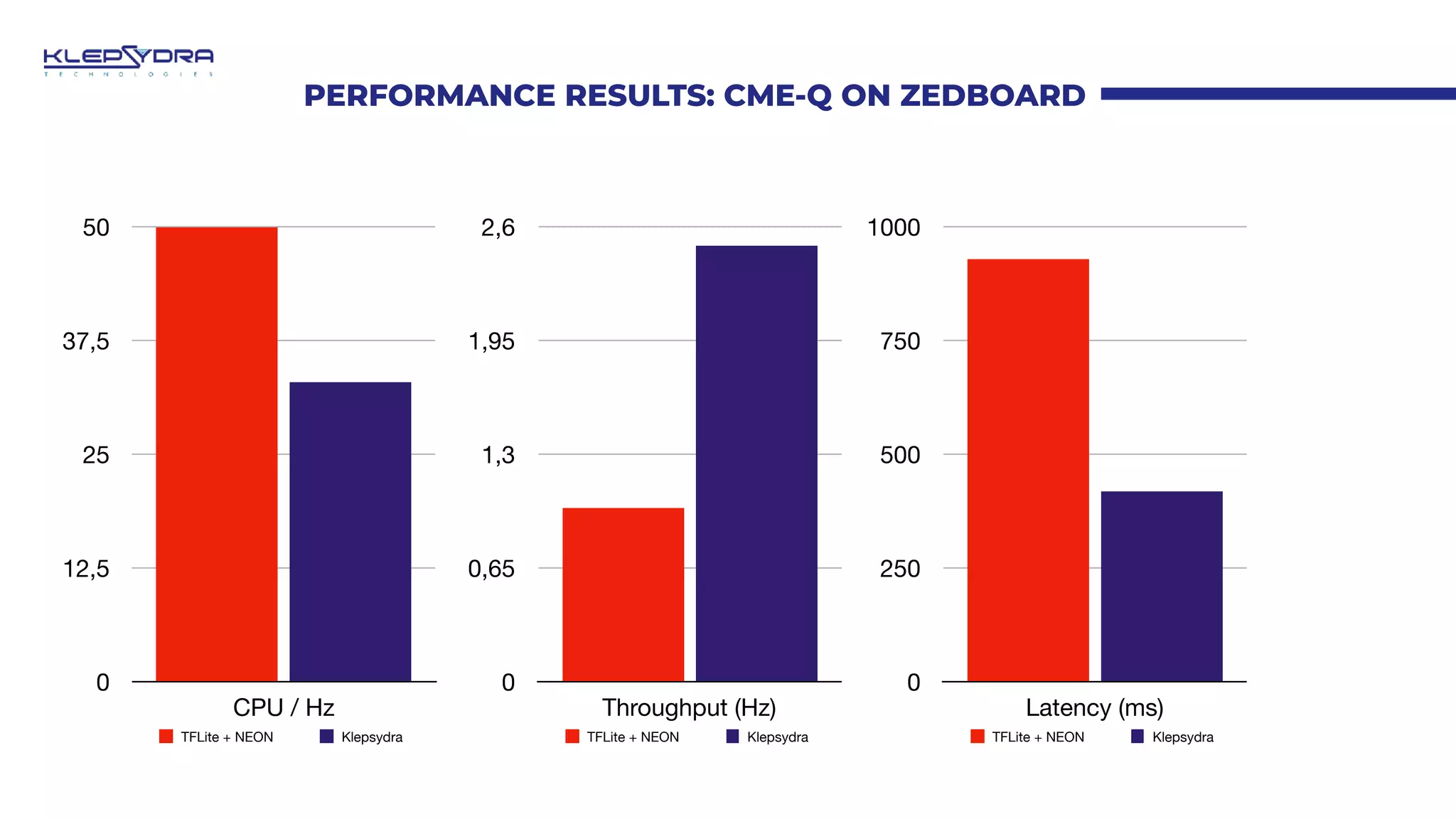

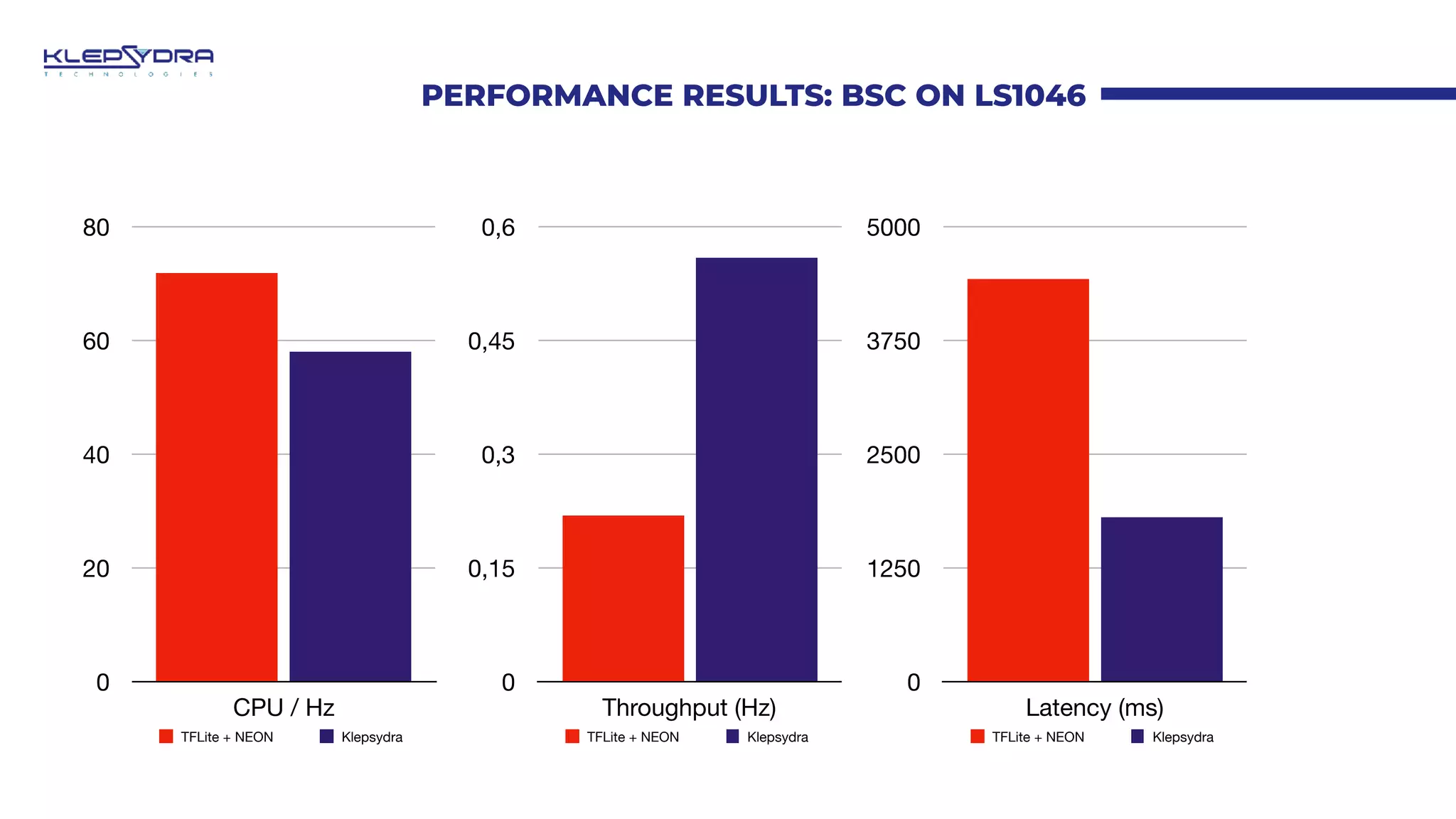

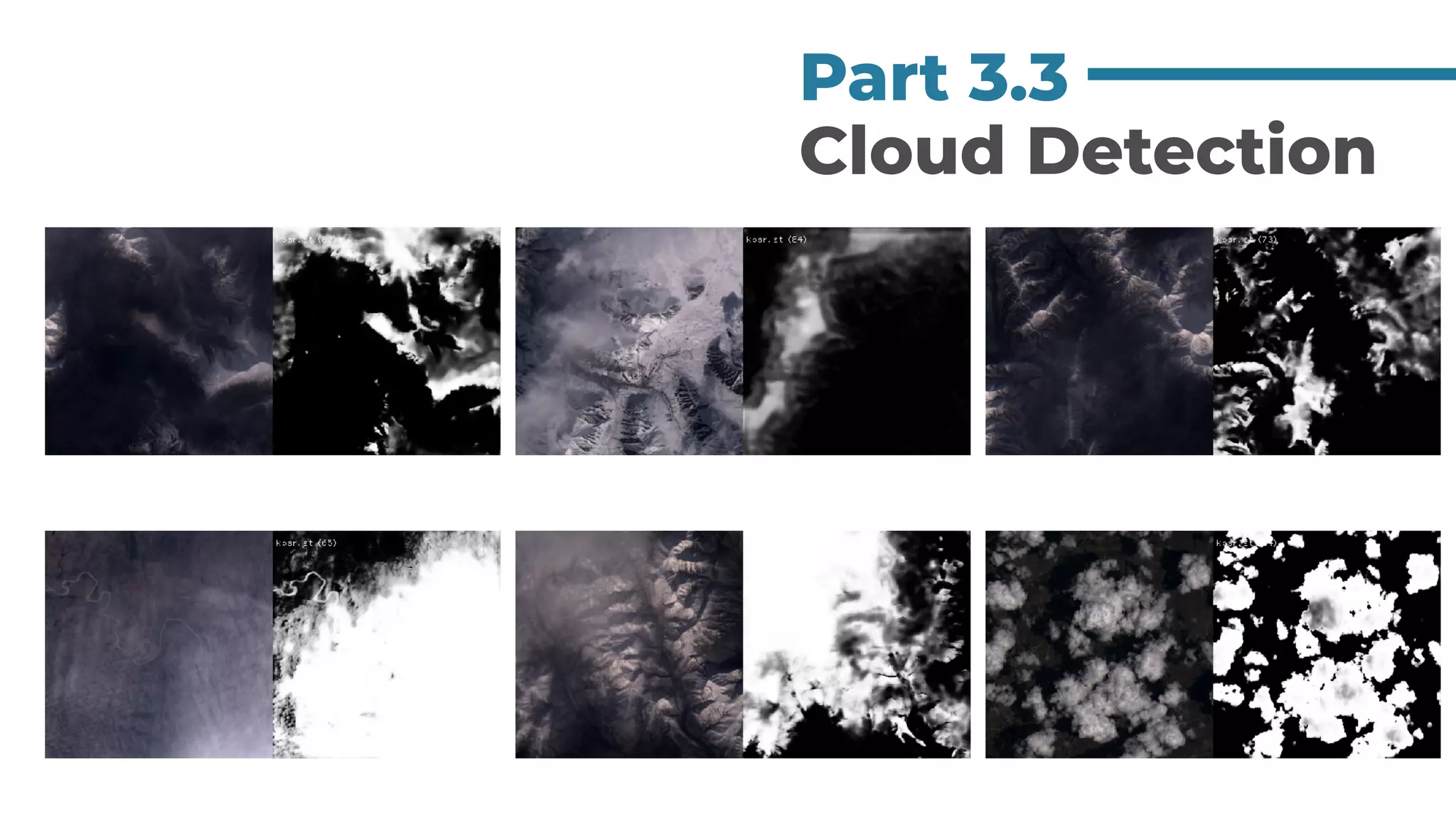

The document presents research on low-power onboard image compression using deep neural networks. It reports that AI-based compression outperforms traditional methods such as JPEG, achieving better compression ratios and image quality. It describes the architecture and performance benchmarks of the compression models deployed on onboard systems, including generative adversarial networks and hierarchical Bayesian models. The conclusions identify future work on further optimizing AI compression for space applications and improving performance on ARM architectures.
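
The summary compares codecs by compression ratio and image quality but does not say which quality metric the slides use. As a minimal sketch, assuming quality is measured with PSNR (an assumption, not confirmed by the source), the two quantities could be computed as follows. The function names `compression_ratio` and `psnr` are hypothetical and chosen only for illustration.

```python
import math


def compression_ratio(original_bytes, compressed_bytes):
    """Return original size divided by compressed size (higher is better)."""
    if compressed_bytes <= 0:
        raise ValueError("compressed_bytes must be positive")
    return original_bytes / compressed_bytes


def psnr(original, reconstructed, max_value=255):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences.

    PSNR is an assumed quality metric here; the source does not name one.
    """
    if len(original) != len(reconstructed) or len(original) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    # Mean squared error over all pixel values.
    mse = sum((a - b) ** 2 for a, b in zip(original, reconstructed)) / len(original)
    if mse == 0:
        return math.inf  # identical images: no reconstruction error
    return 10 * math.log10(max_value ** 2 / mse)
```

With these two numbers, a learned codec and JPEG can be compared at matched bitrates: at the same compression ratio, the codec with the higher PSNR reconstructs the image more faithfully under this metric.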