The document discusses several numerical methods for solving initial value problems for ordinary differential equations (ODEs), including:

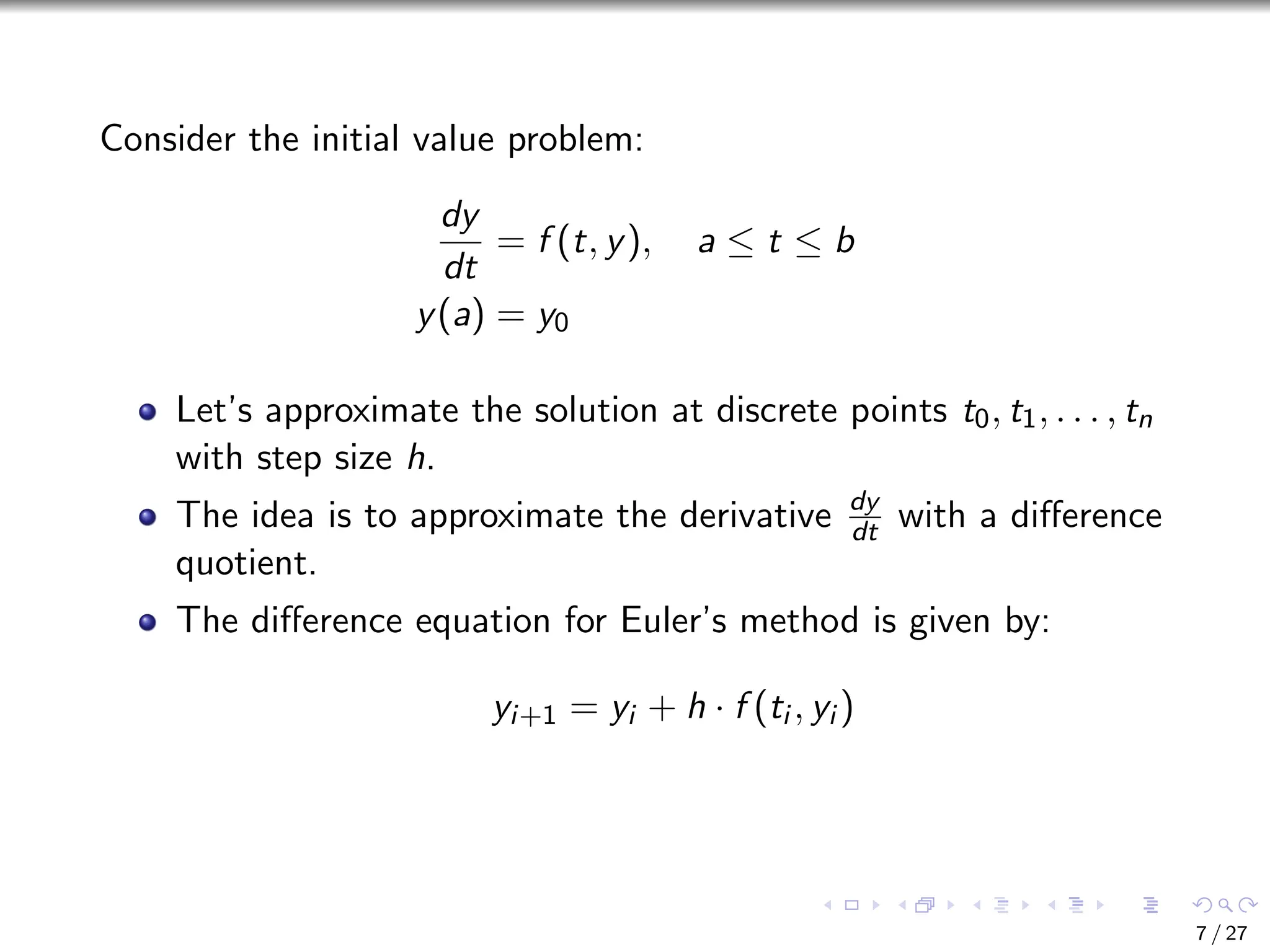

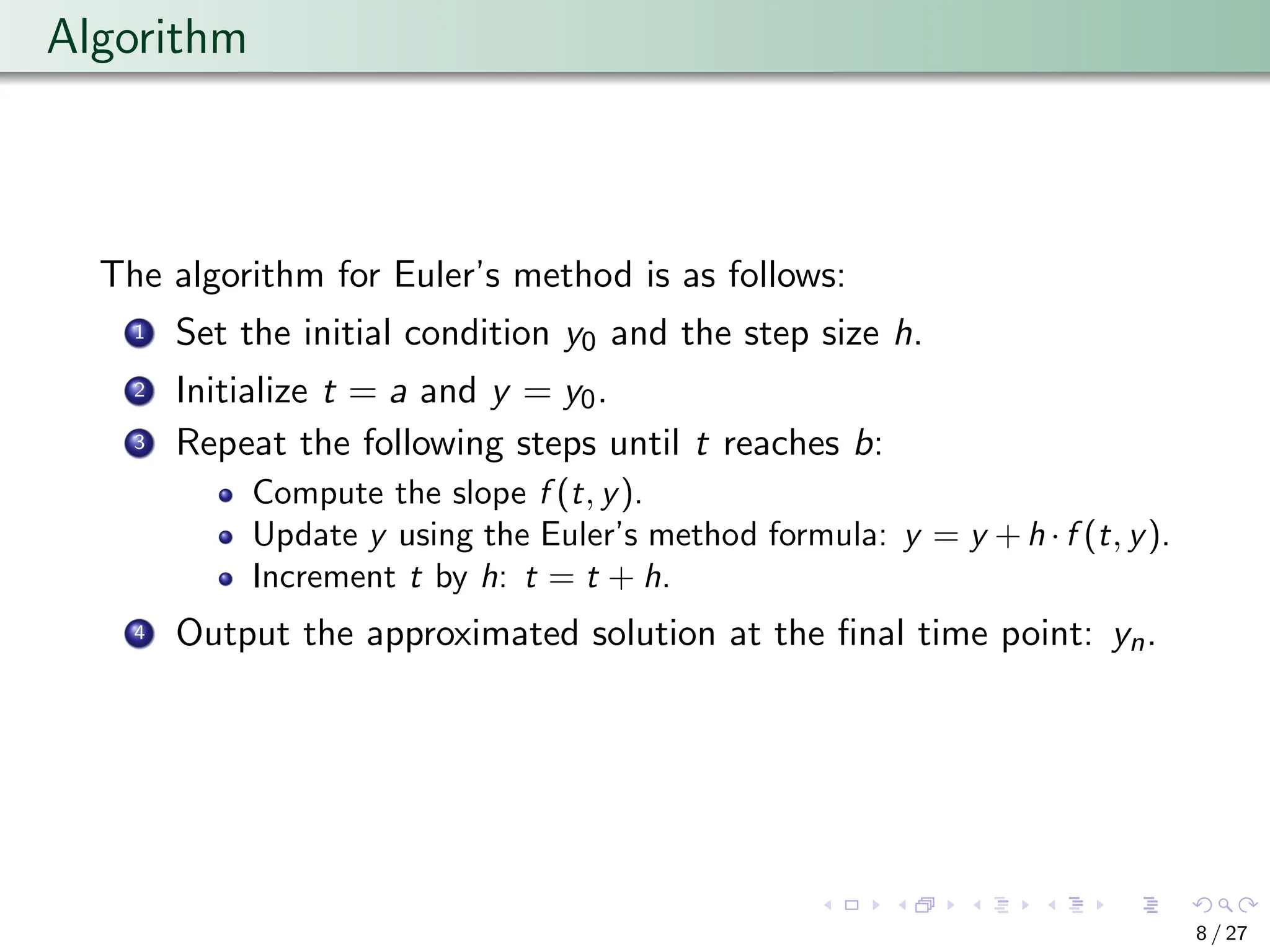

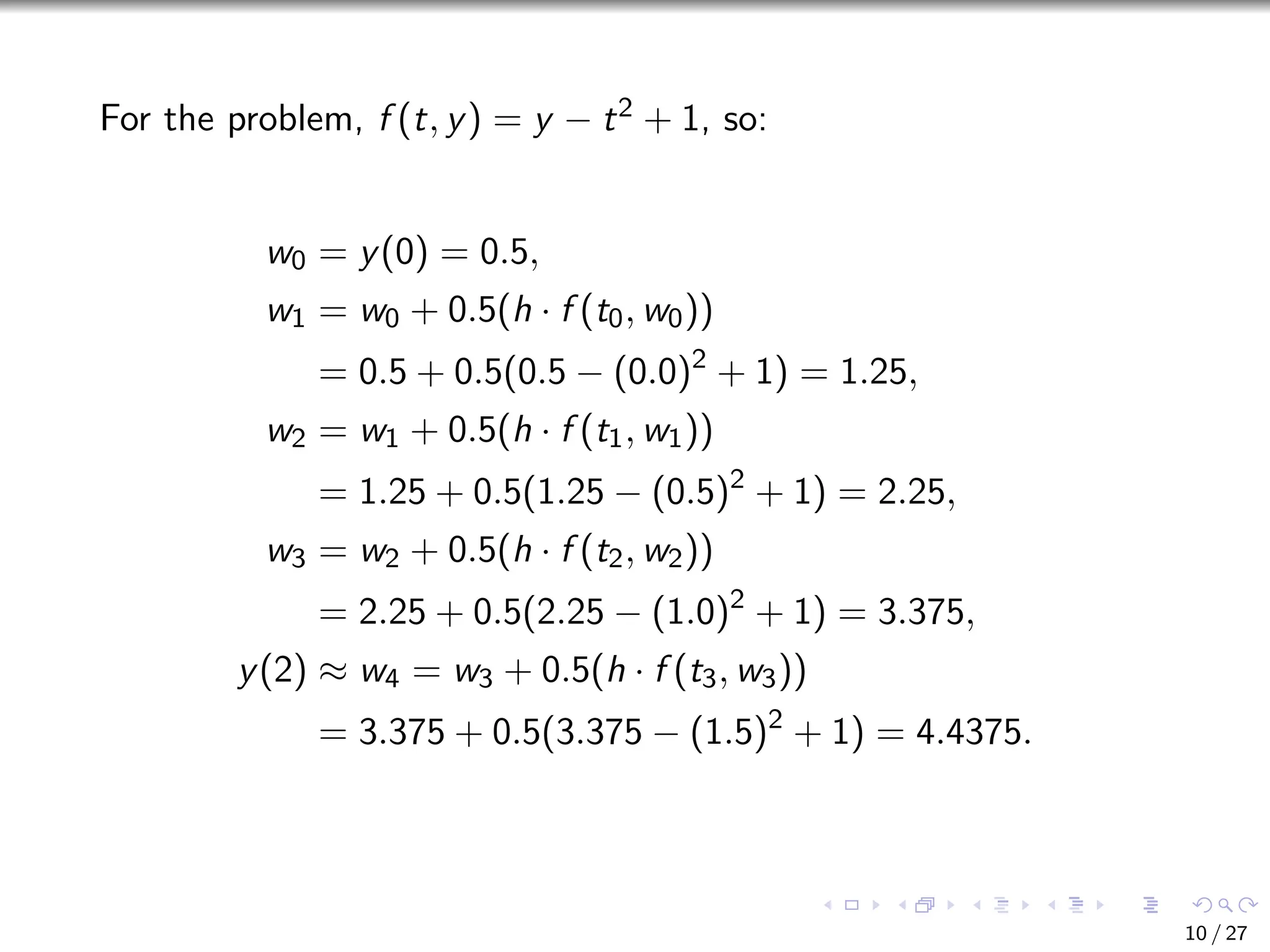

- Euler's method, which divides the interval into small steps and advances the solution across each step along the slope at the step's starting point, that is, the first-order truncation of the Taylor series.
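
As a rough illustration of the Euler update described above, the following minimal sketch advances a scalar problem y' = f(t, y) with a fixed step. The function name `euler`, its parameters, and the test problem y' = y, y(0) = 1 are assumptions chosen for illustration; they do not come from the document.

```python
import math


def euler(f, t0, y0, t_end, n):
    """Approximate y(t_end) for y' = f(t, y), y(t0) = y0, using n Euler steps."""
    h = (t_end - t0) / n          # fixed step size
    t, y = t0, y0
    for _ in range(n):
        y += h * f(t, y)          # follow the slope at the start of the step
        t += h
    return y


if __name__ == "__main__":
    # Illustrative test problem (an assumption): y' = y, y(0) = 1, exact y(1) = e.
    for n in (10, 100, 1000):
        approx = euler(lambda t, y: y, 0.0, 1.0, 1.0, n)
        print(n, approx, abs(approx - math.e))
```

Because the method is first order, halving the step size should roughly halve the error at a fixed endpoint.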

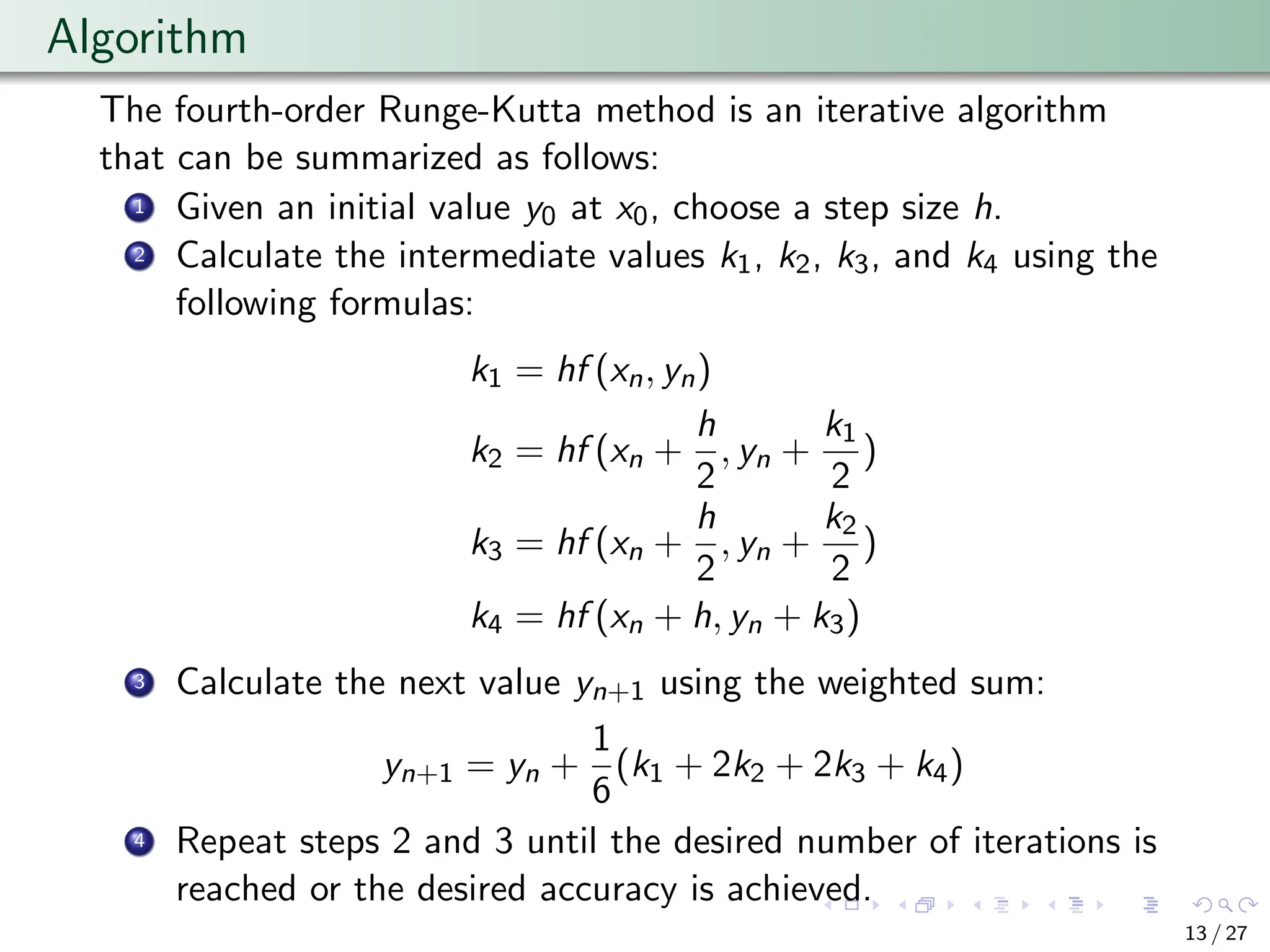

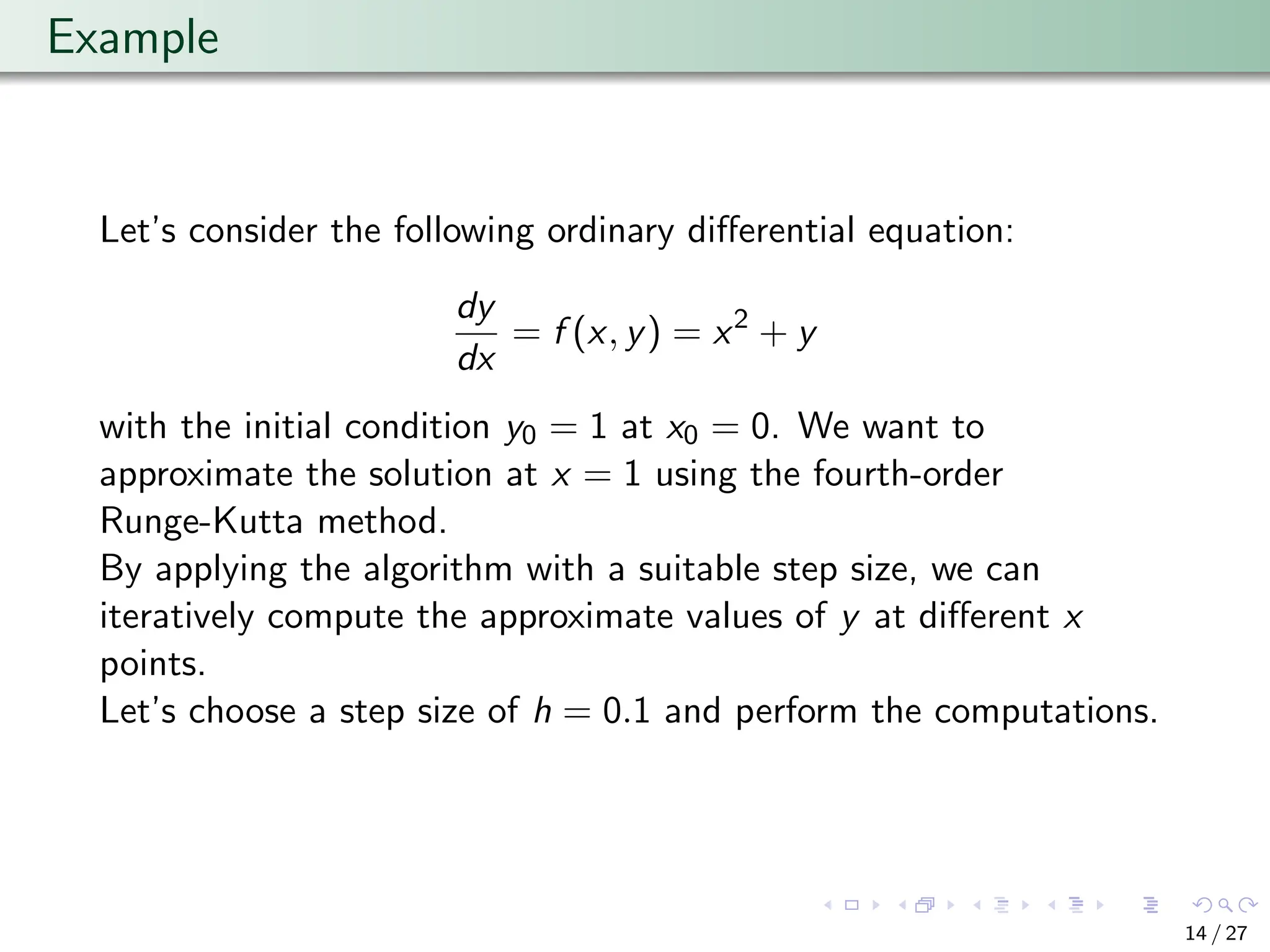

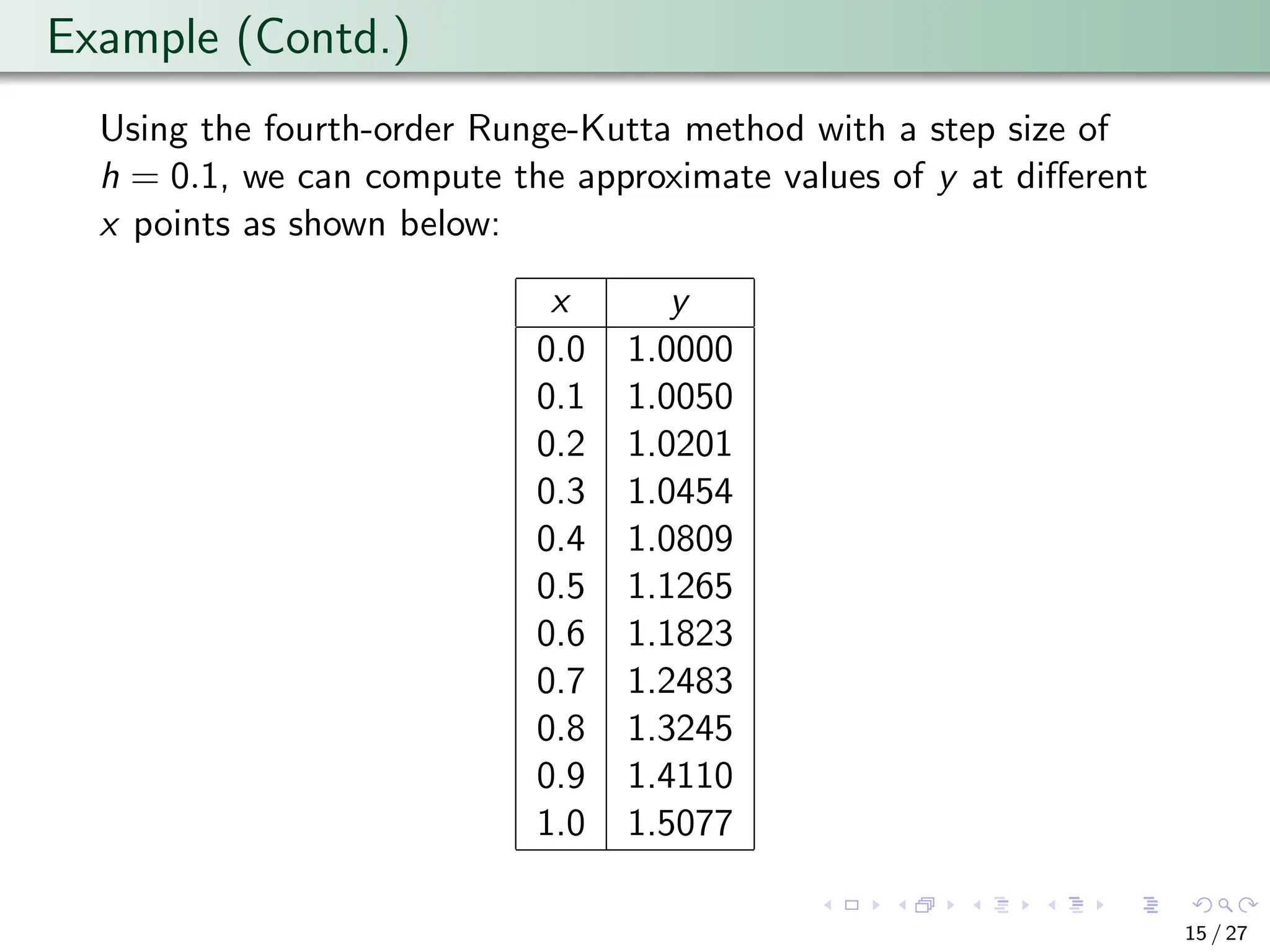

- The fourth-order Runge-Kutta method, which evaluates the slope at four points within each step and combines them in a weighted average to advance the solution from one mesh point to the next.
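
The classical fourth-order Runge-Kutta step can be sketched as follows. This is a minimal fixed-step version for a scalar equation; the name `rk4` and the test problem y' = y, y(0) = 1 are assumptions for illustration only.

```python
import math


def rk4(f, t0, y0, t_end, n):
    """Approximate y(t_end) for y' = f(t, y), y(t0) = y0, using n classical RK4 steps."""
    h = (t_end - t0) / n
    t, y = t0, y0
    for _ in range(n):
        k1 = f(t, y)                           # slope at the start of the step
        k2 = f(t + h / 2, y + h * k1 / 2)      # slope at the midpoint, using k1
        k3 = f(t + h / 2, y + h * k2 / 2)      # slope at the midpoint, using k2
        k4 = f(t + h, y + h * k3)              # slope at the end of the step
        y += h * (k1 + 2 * k2 + 2 * k3 + k4) / 6
        t += h
    return y


if __name__ == "__main__":
    # Illustrative test problem (an assumption): y' = y, y(0) = 1, exact y(1) = e.
    approx = rk4(lambda t, y: y, 0.0, 1.0, 1.0, 10)
    print(approx, abs(approx - math.e))
```

Each step costs four evaluations of `f`, which is the price paid for the higher order compared with a one-evaluation method.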

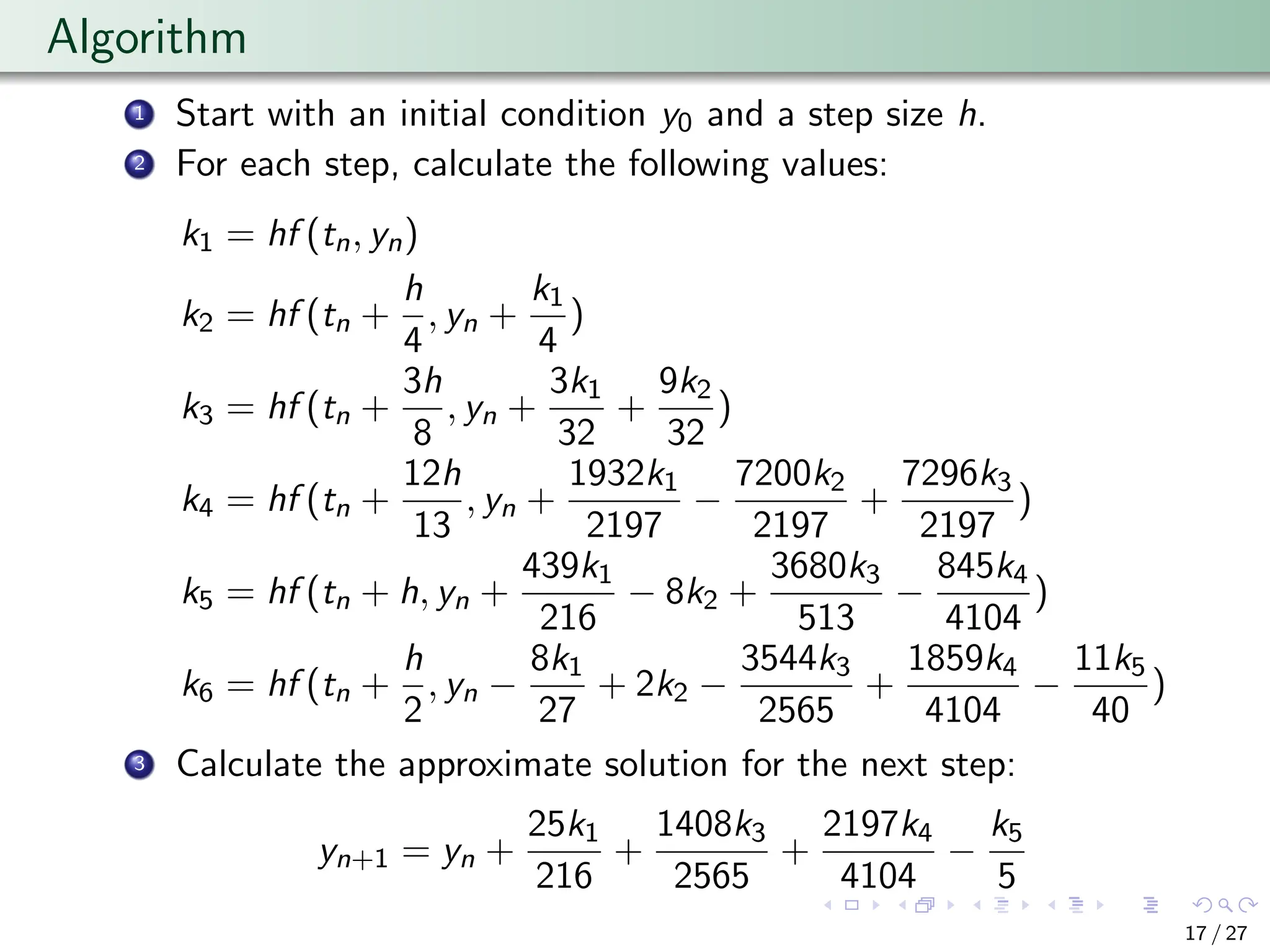

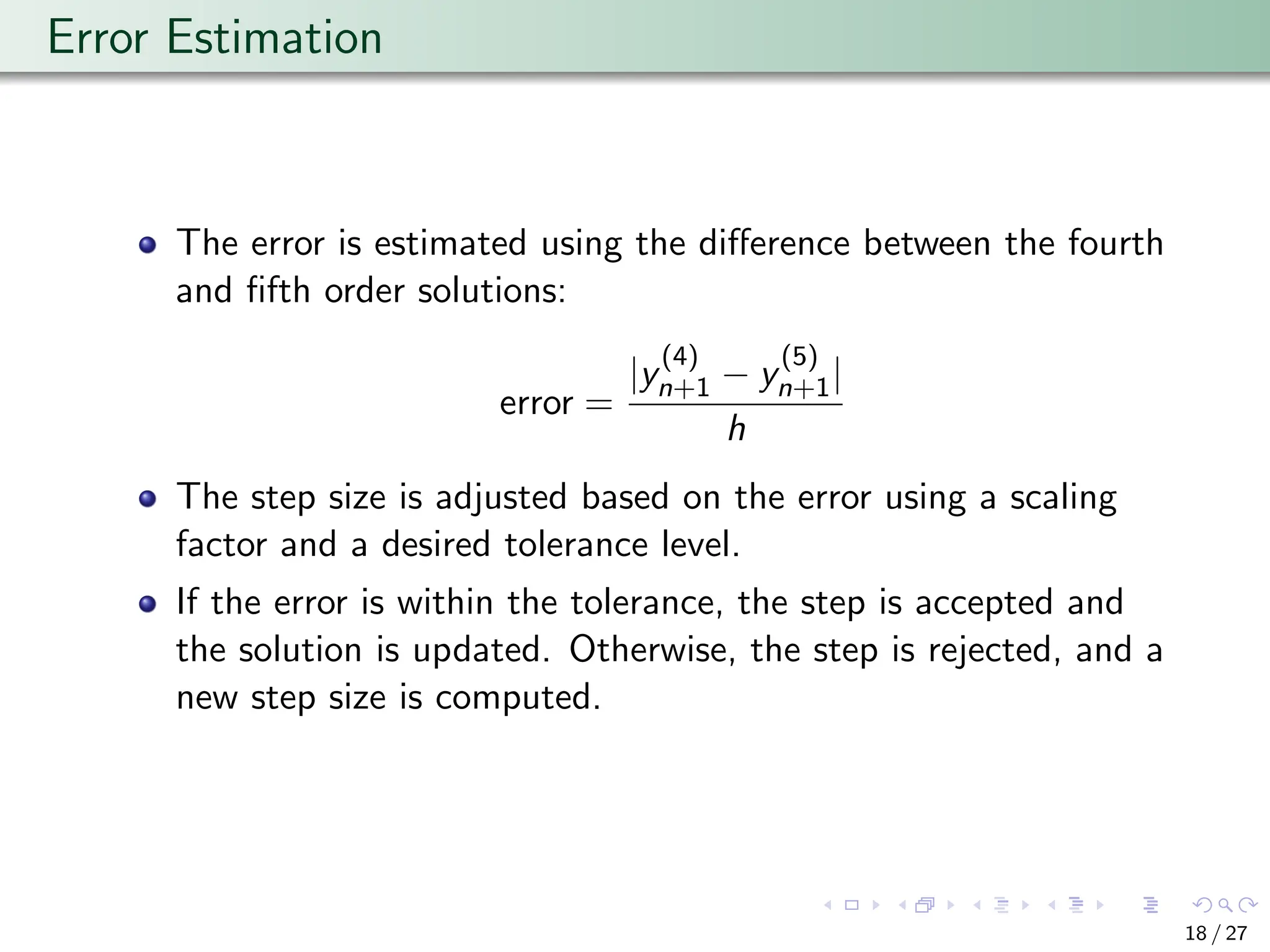

- The Runge-Kutta-Fehlberg method, an adaptive method that controls the error by comparing a fourth-order and a fifth-order estimate at each step and adjusting the step size accordingly.

- The Adams fourth-order predictor-corrector method, which predicts the next value with an explicit Adams-Bashforth formula and then refines it with an implicit Adams-Moulton formula for higher accuracy and stability.
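
To make the predictor-corrector idea concrete, here is a minimal sketch that pairs the four-step Adams-Bashforth predictor with the three-step Adams-Moulton corrector. The function names, the use of classical Runge-Kutta steps to generate the three starting values, and the test problem y' = y, y(0) = 1 are all assumptions made for illustration, not details taken from the document.

```python
import math


def rk4_step(f, t, y, h):
    """One classical RK4 step, used here only to generate starting values."""
    k1 = f(t, y)
    k2 = f(t + h / 2, y + h * k1 / 2)
    k3 = f(t + h / 2, y + h * k2 / 2)
    k4 = f(t + h, y + h * k3)
    return y + h * (k1 + 2 * k2 + 2 * k3 + k4) / 6


def adams_pc4(f, t0, y0, t_end, n):
    """Fixed-step Adams fourth-order predictor-corrector on n equal steps (n >= 4)."""
    if n < 4:
        raise ValueError("need at least 4 steps: the first 3 come from RK4")
    h = (t_end - t0) / n
    ts = [t0 + i * h for i in range(n + 1)]
    ys = [y0]
    for i in range(3):                       # starting values y1, y2, y3
        ys.append(rk4_step(f, ts[i], ys[i], h))
    fs = [f(ts[i], ys[i]) for i in range(4)]
    for i in range(3, n):
        # Predictor: explicit four-step Adams-Bashforth
        pred = ys[i] + h / 24 * (55 * fs[i] - 59 * fs[i - 1]
                                 + 37 * fs[i - 2] - 9 * fs[i - 3])
        # Corrector: implicit three-step Adams-Moulton, evaluated at the prediction
        corr = ys[i] + h / 24 * (9 * f(ts[i + 1], pred) + 19 * fs[i]
                                 - 5 * fs[i - 1] + fs[i - 2])
        ys.append(corr)
        fs.append(f(ts[i + 1], corr))
    return ts, ys


if __name__ == "__main__":
    # Illustrative test problem (an assumption): y' = y, y(0) = 1, exact y(1) = e.
    ts, ys = adams_pc4(lambda t, y: y, 0.0, 1.0, 1.0, 20)
    print(ys[-1], abs(ys[-1] - math.e))
```

After the start-up phase, each step needs only two new evaluations of `f` (one at the prediction, one at the corrected value), compared with four per step for classical Runge-Kutta.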