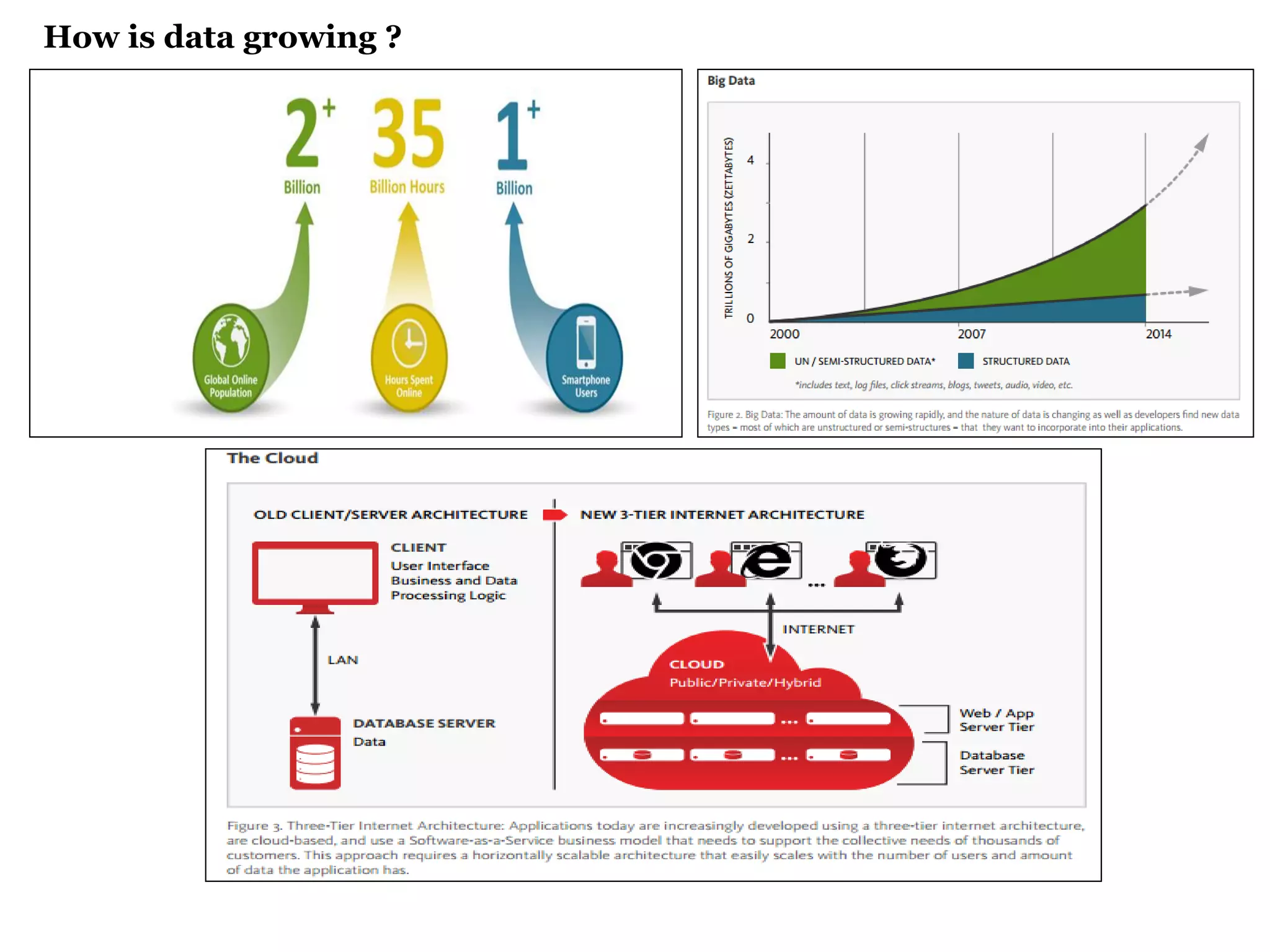

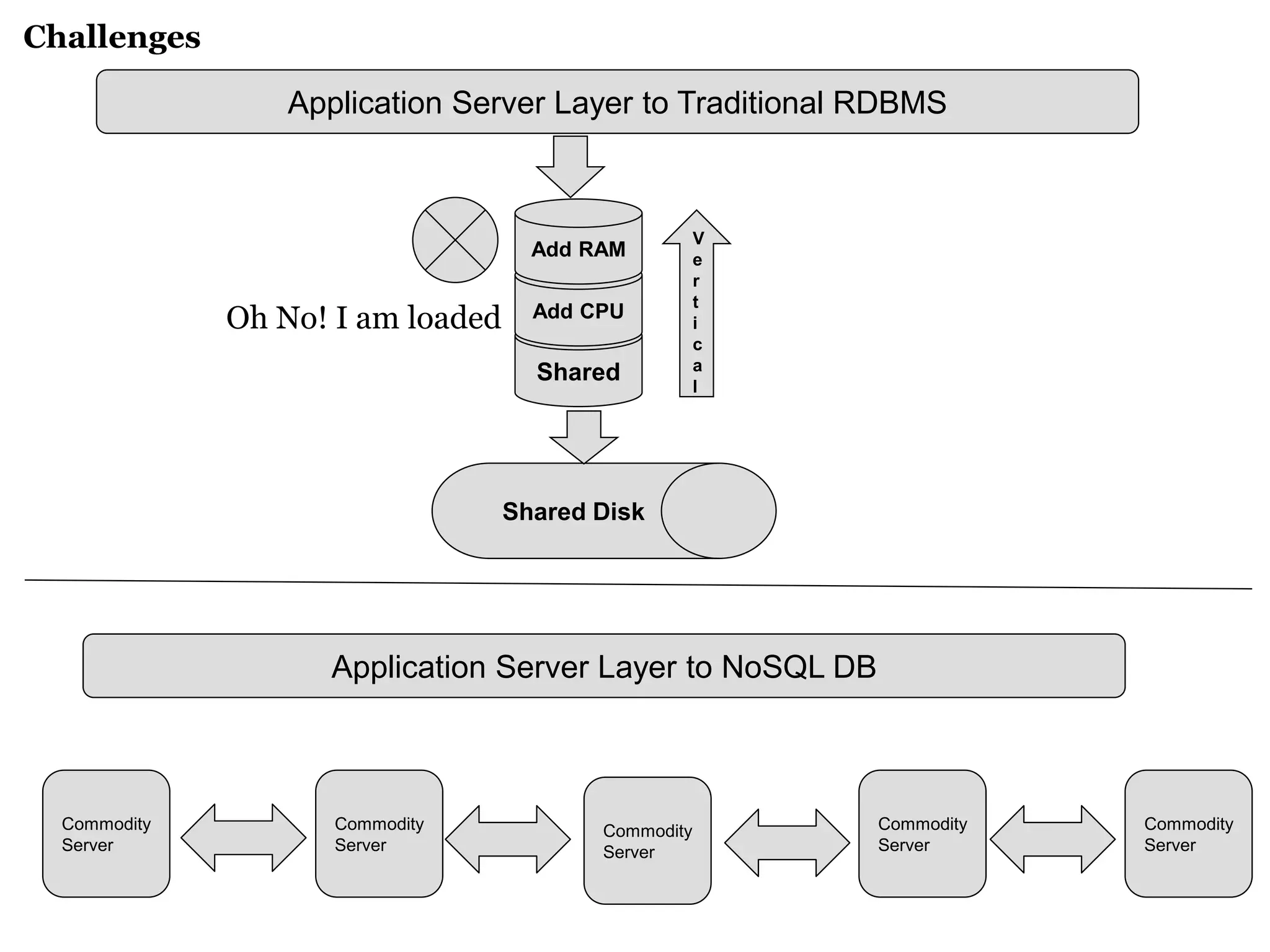

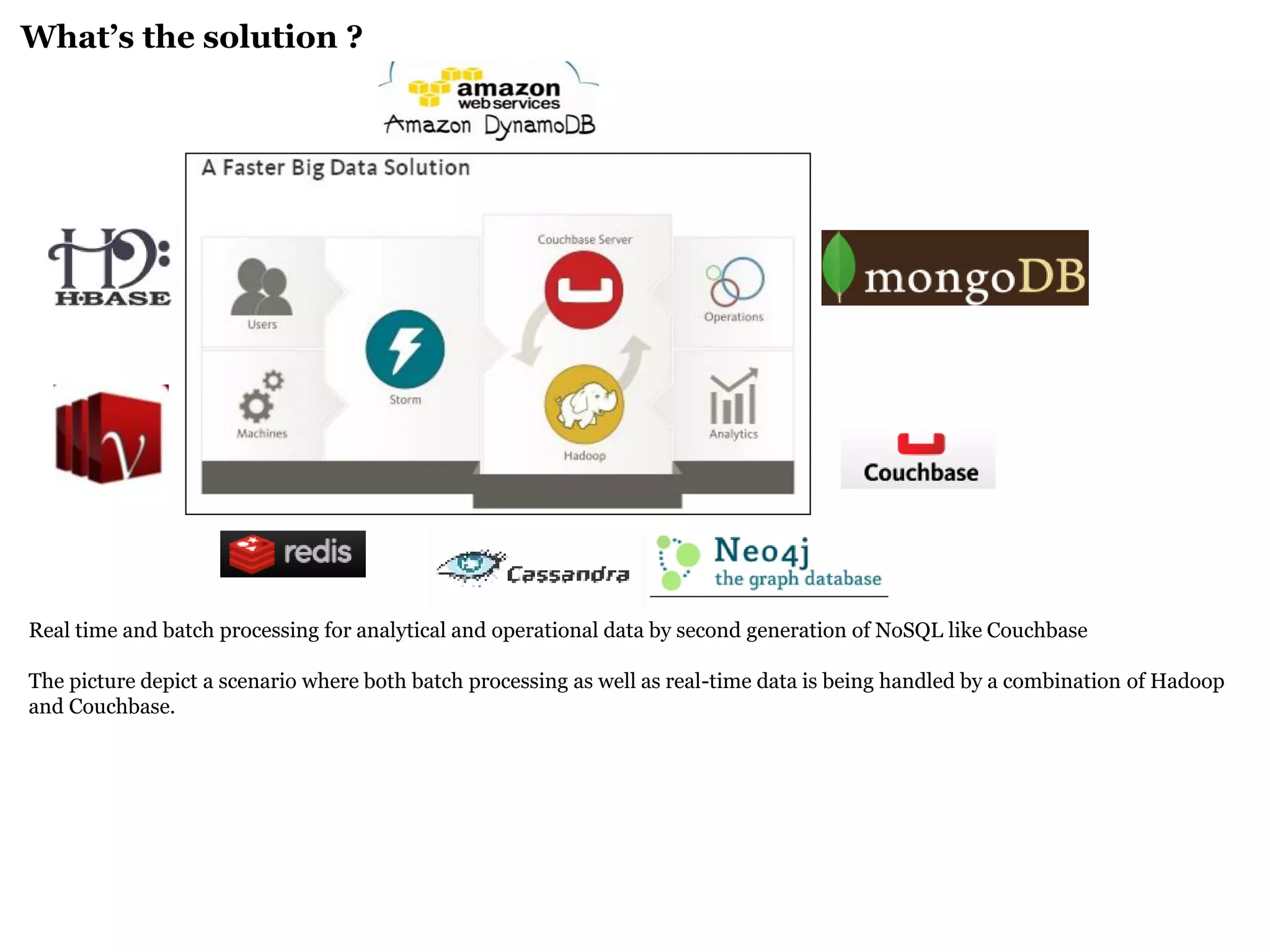

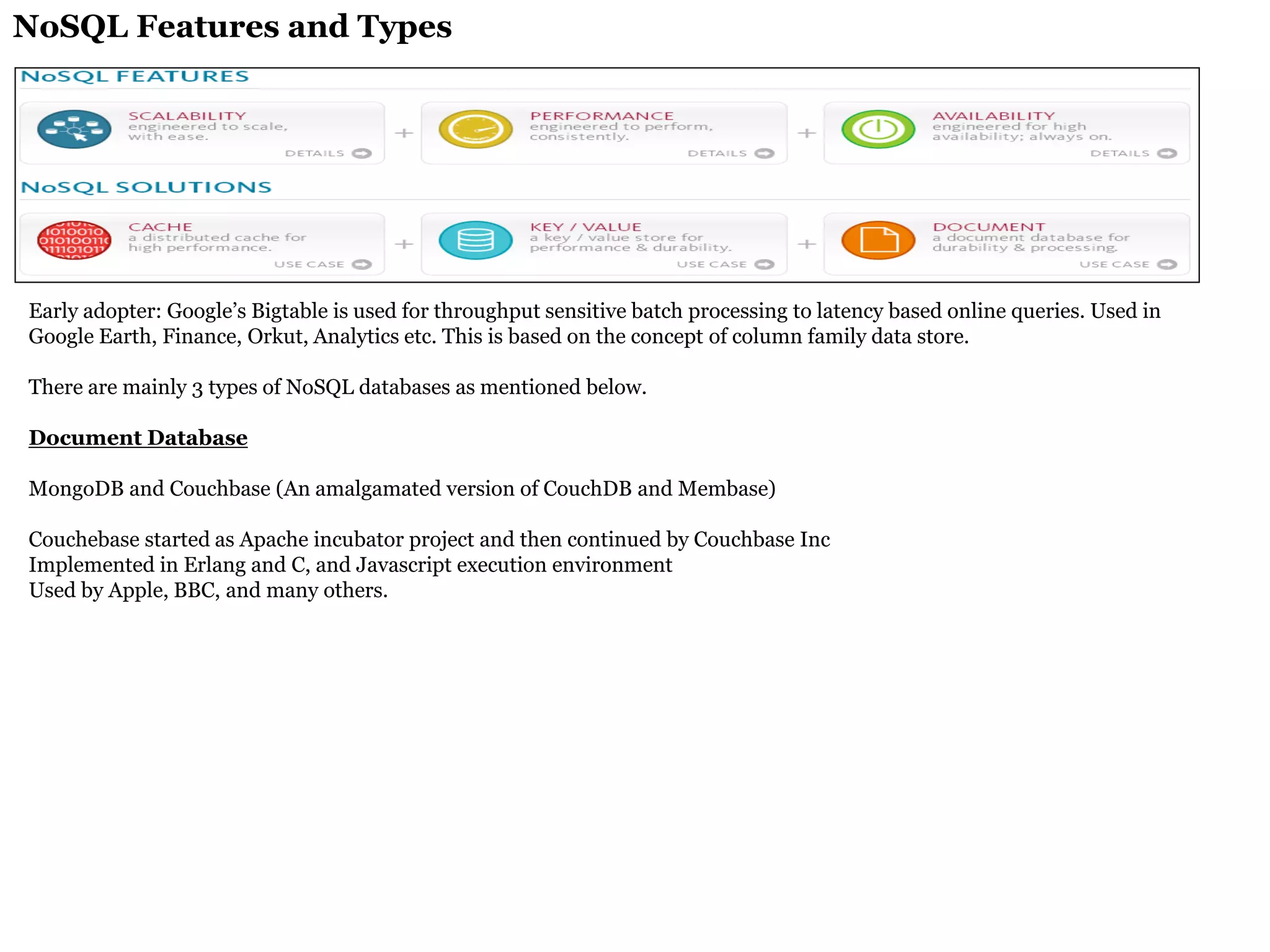

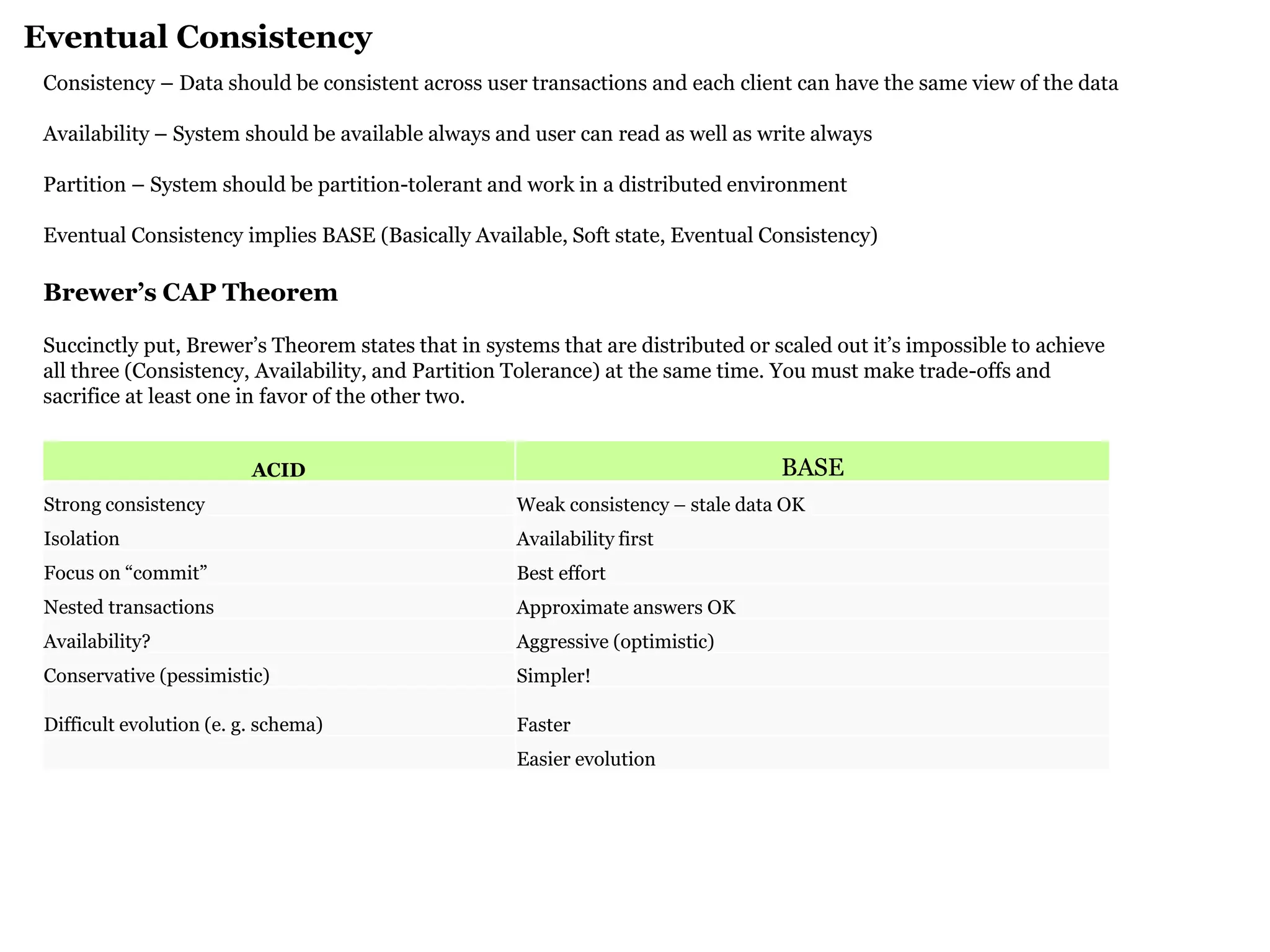

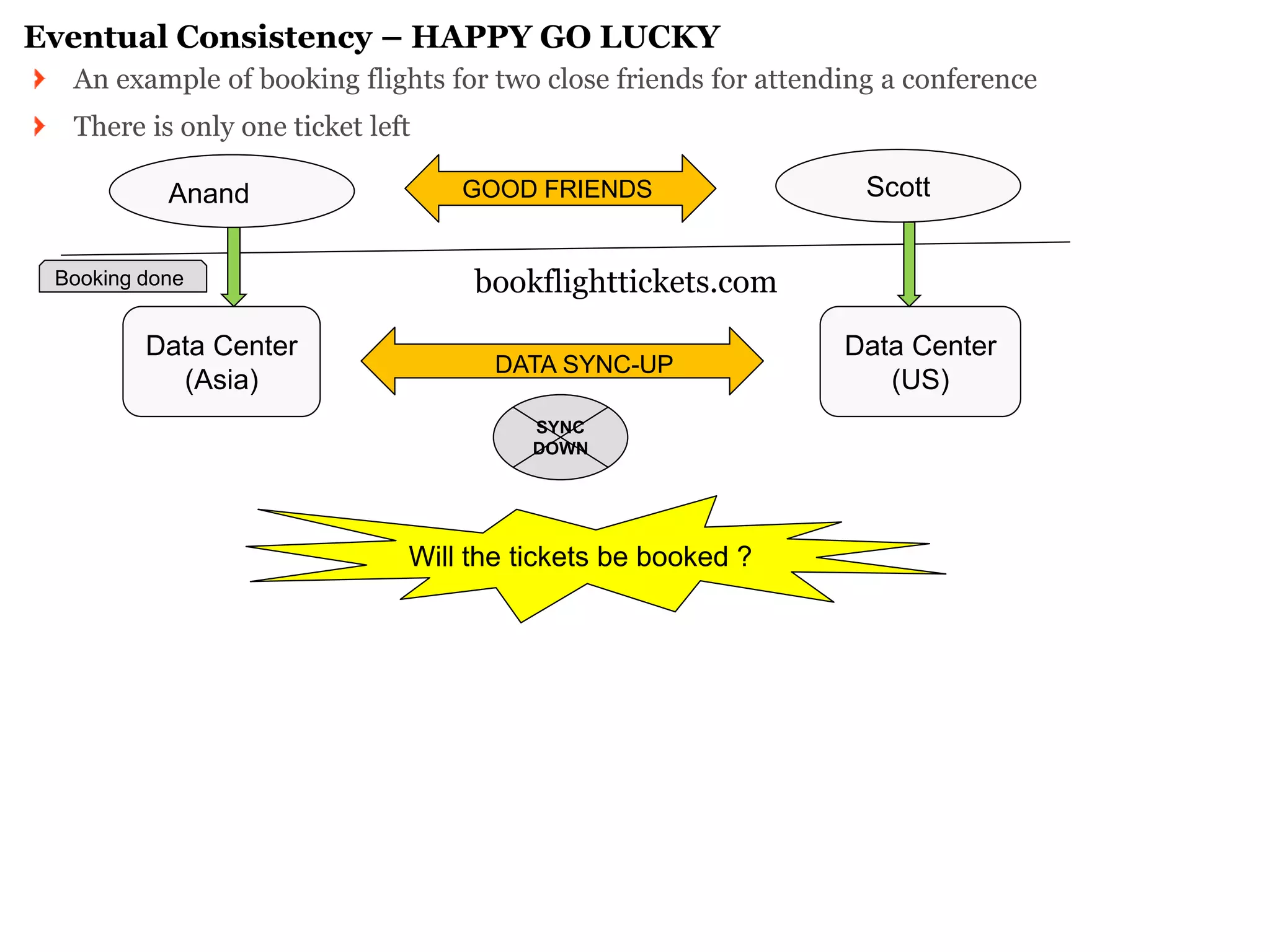

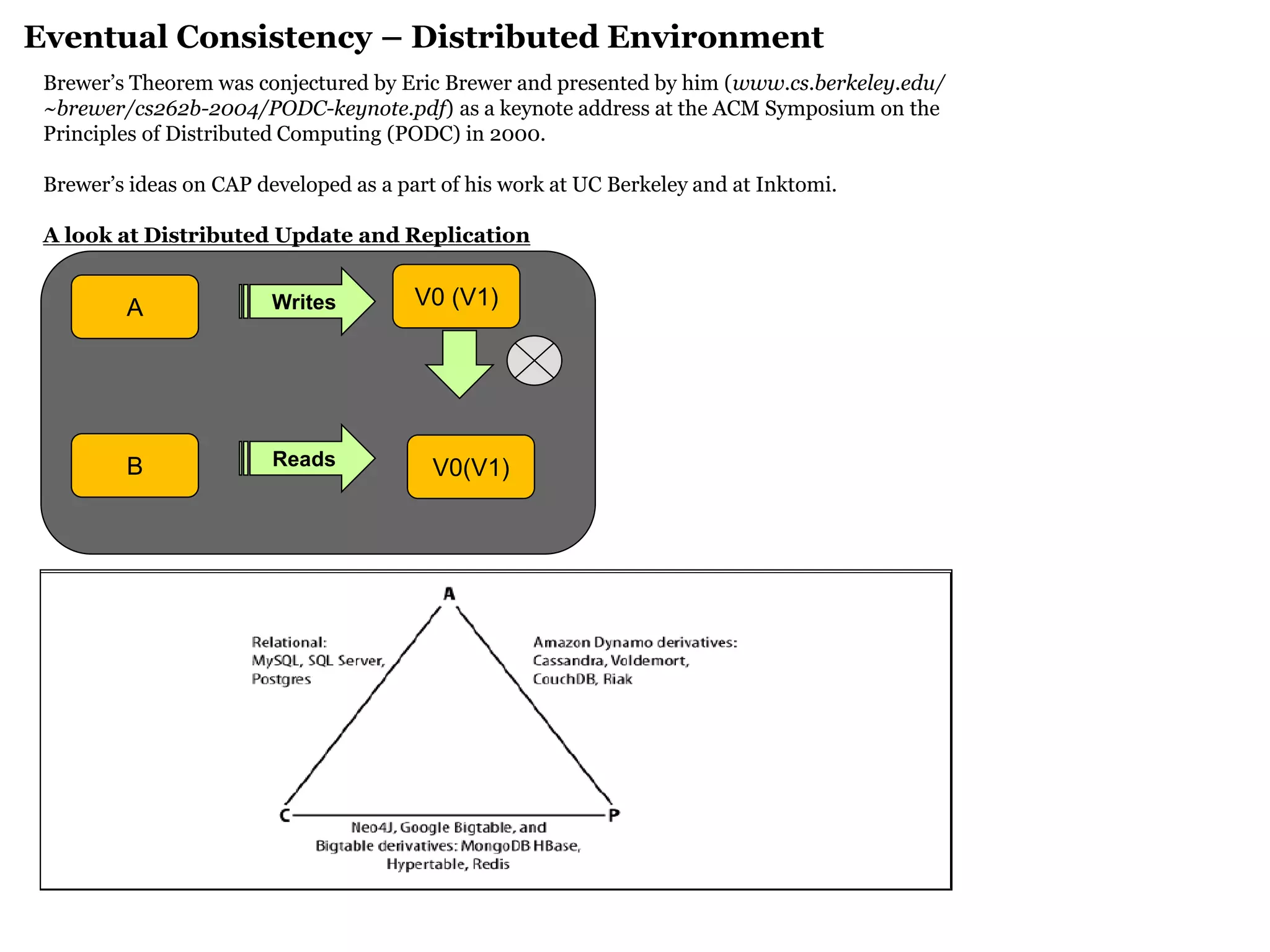

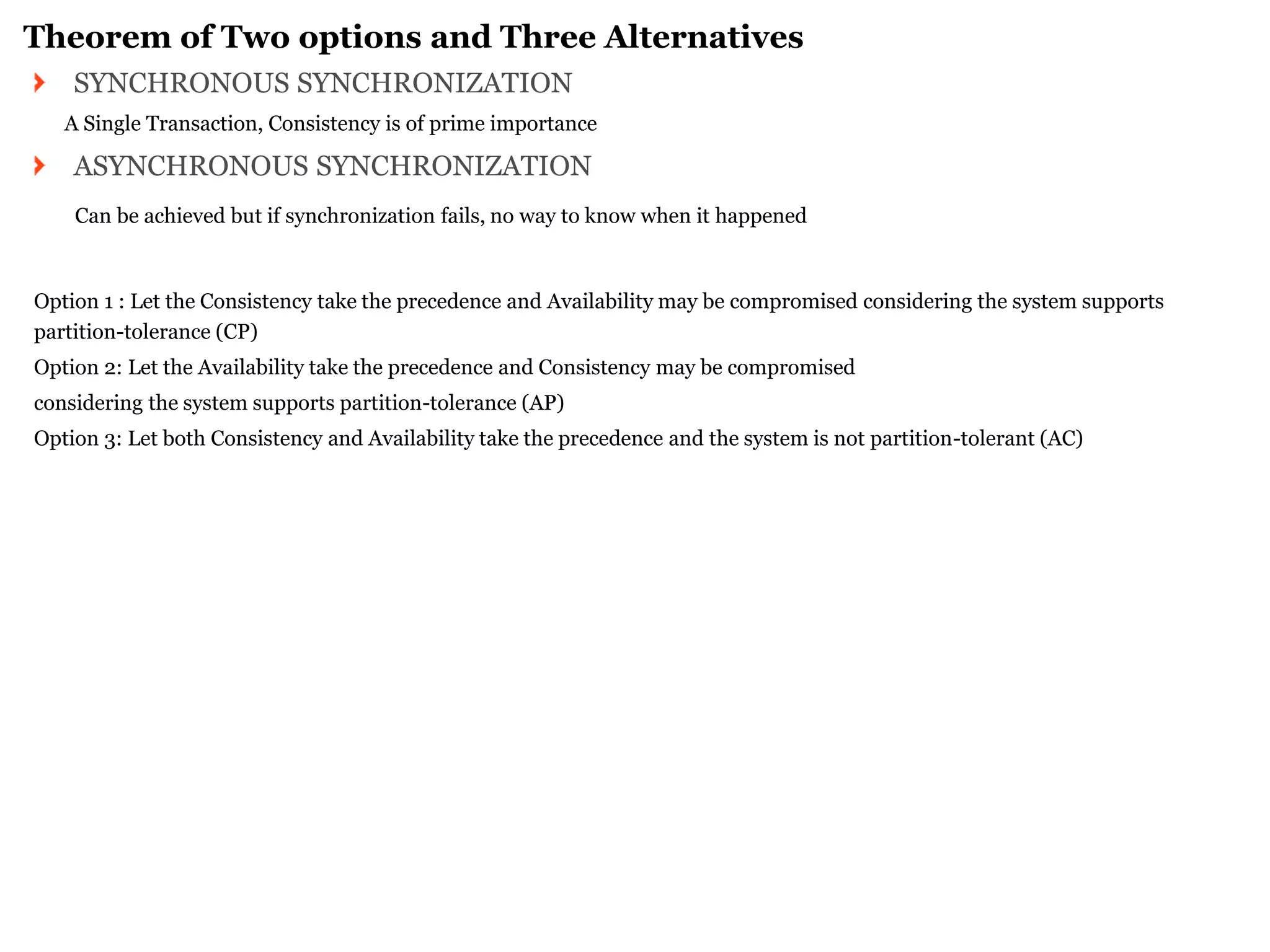

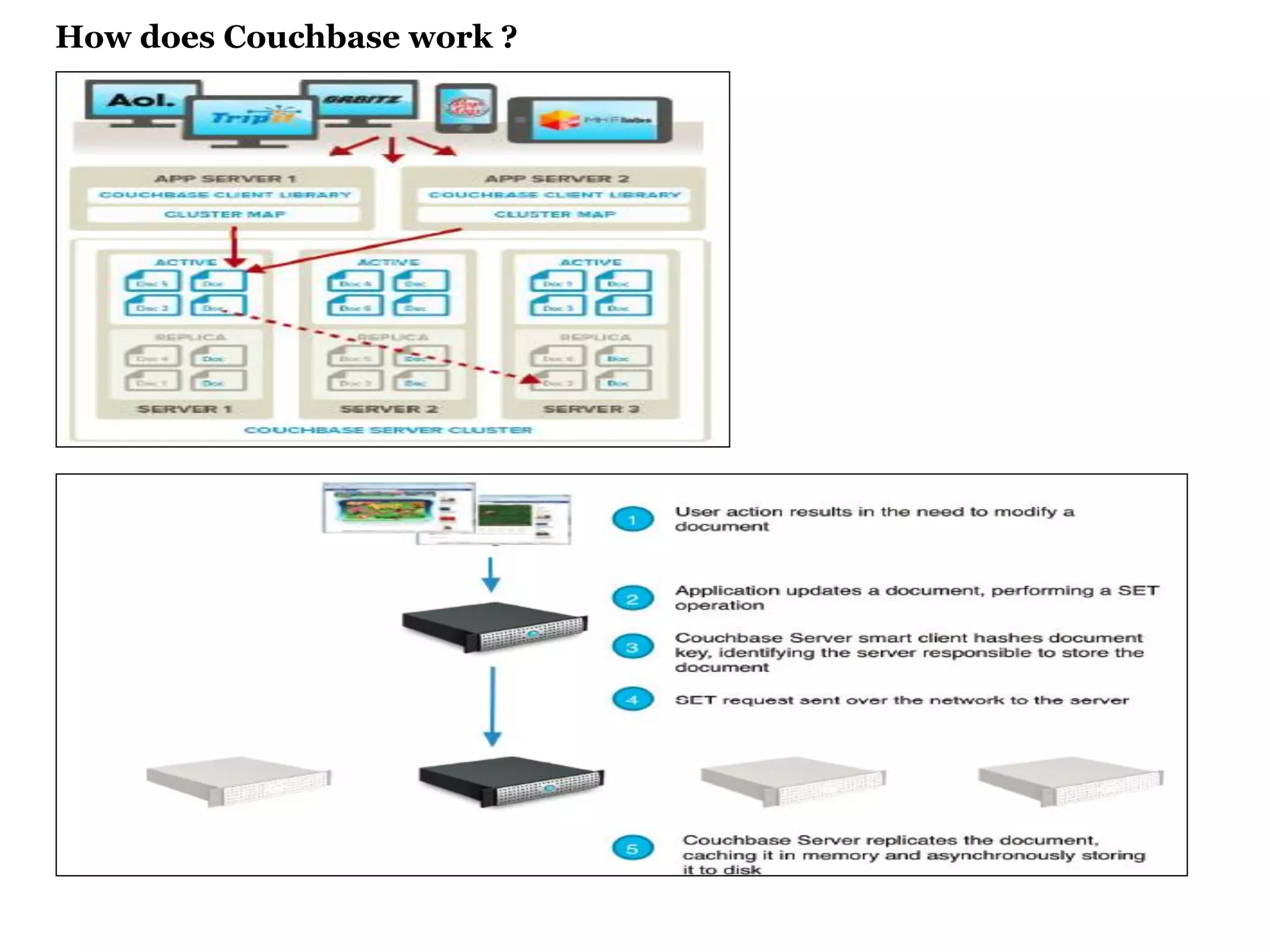

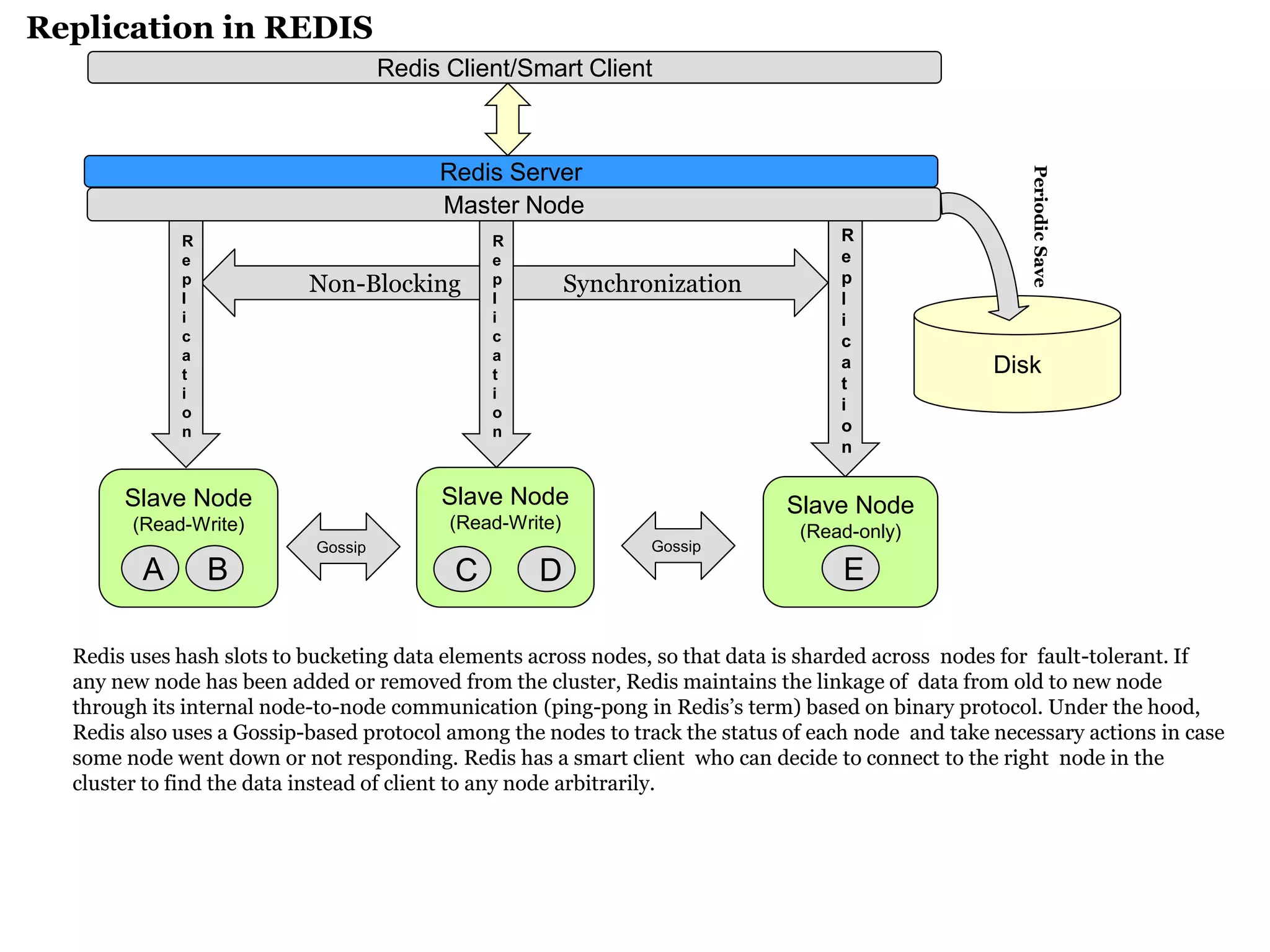

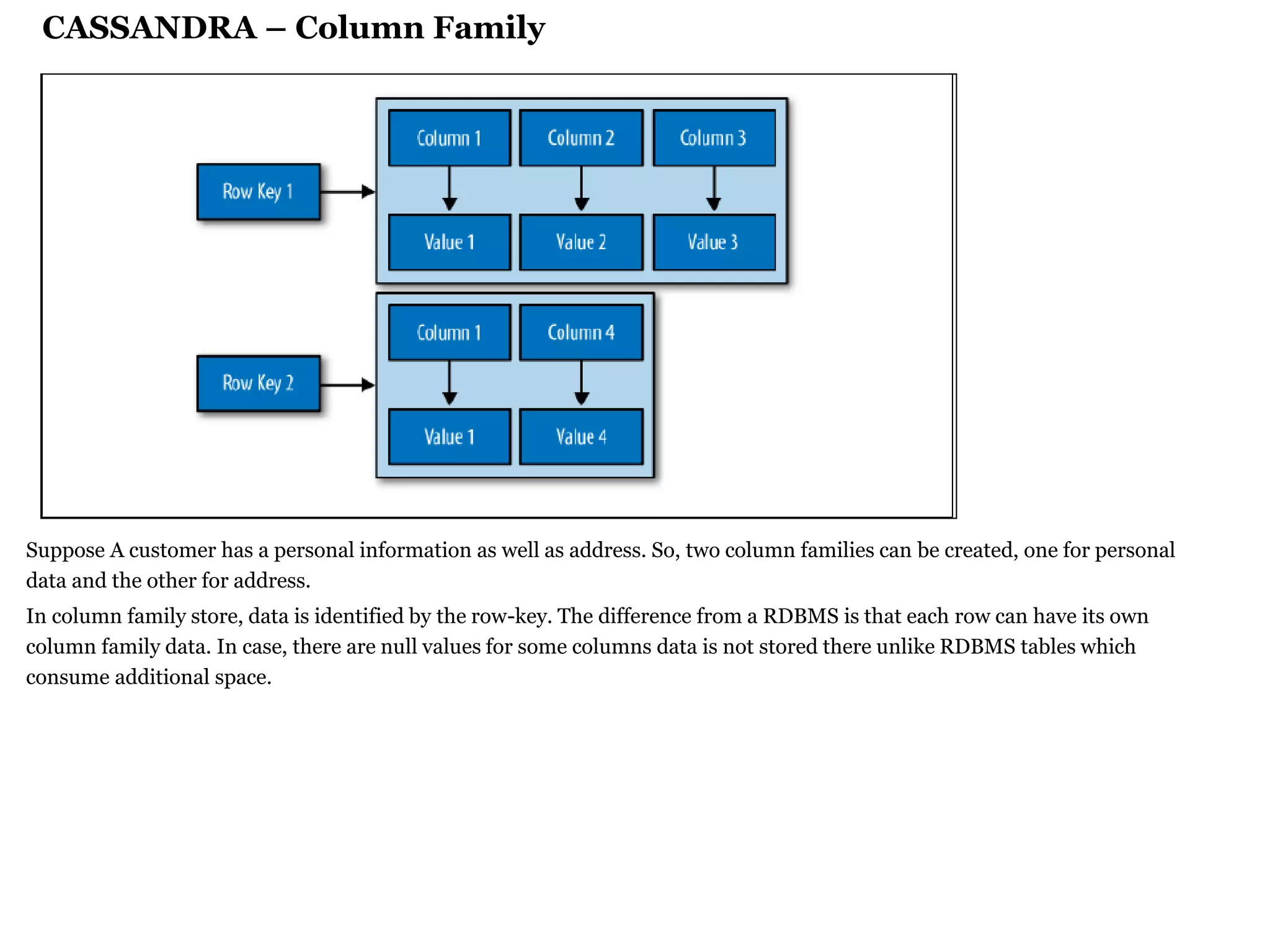

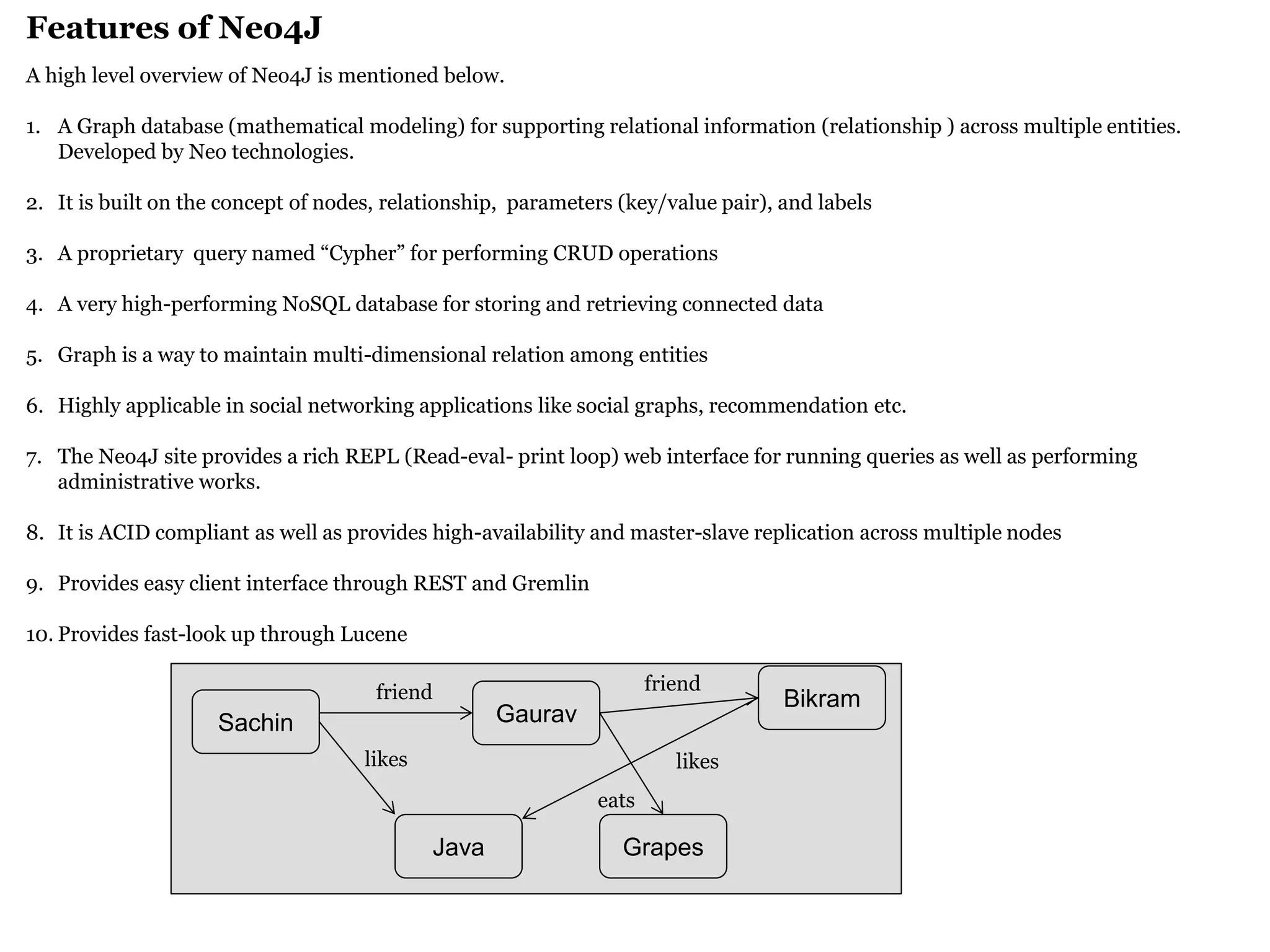

The document gives an overview of NoSQL databases, which are designed for high-volume data storage and retrieval and are particularly useful for unstructured data. It discusses the challenges posed by traditional relational databases, outlines the main NoSQL database types (such as key/value, document, and graph), and highlights features and applications of systems such as Couchbase, Redis, Cassandra, and Neo4j. It also emphasizes eventual consistency, scalability, and the evolving nature of data in modern cloud computing environments.