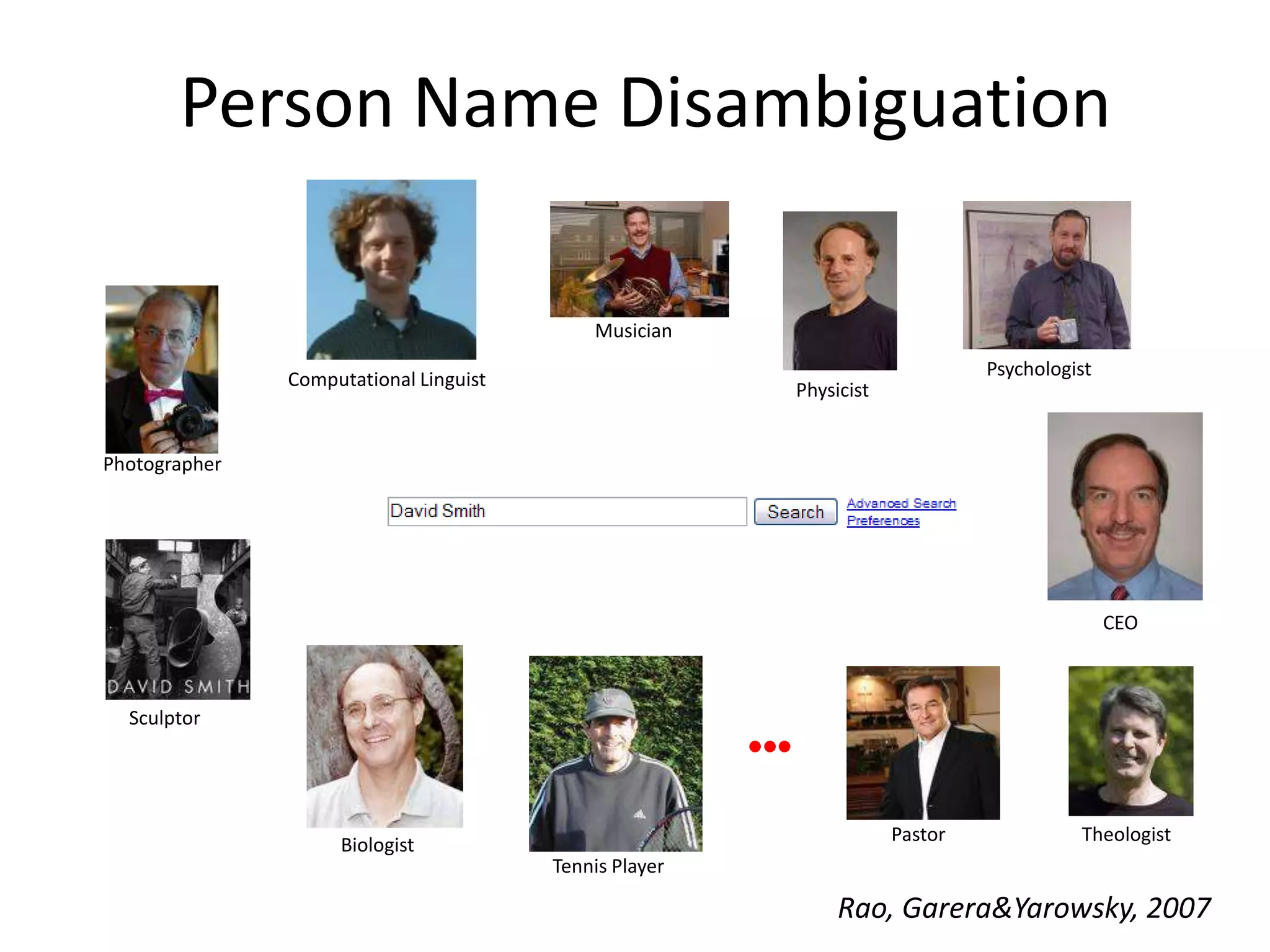

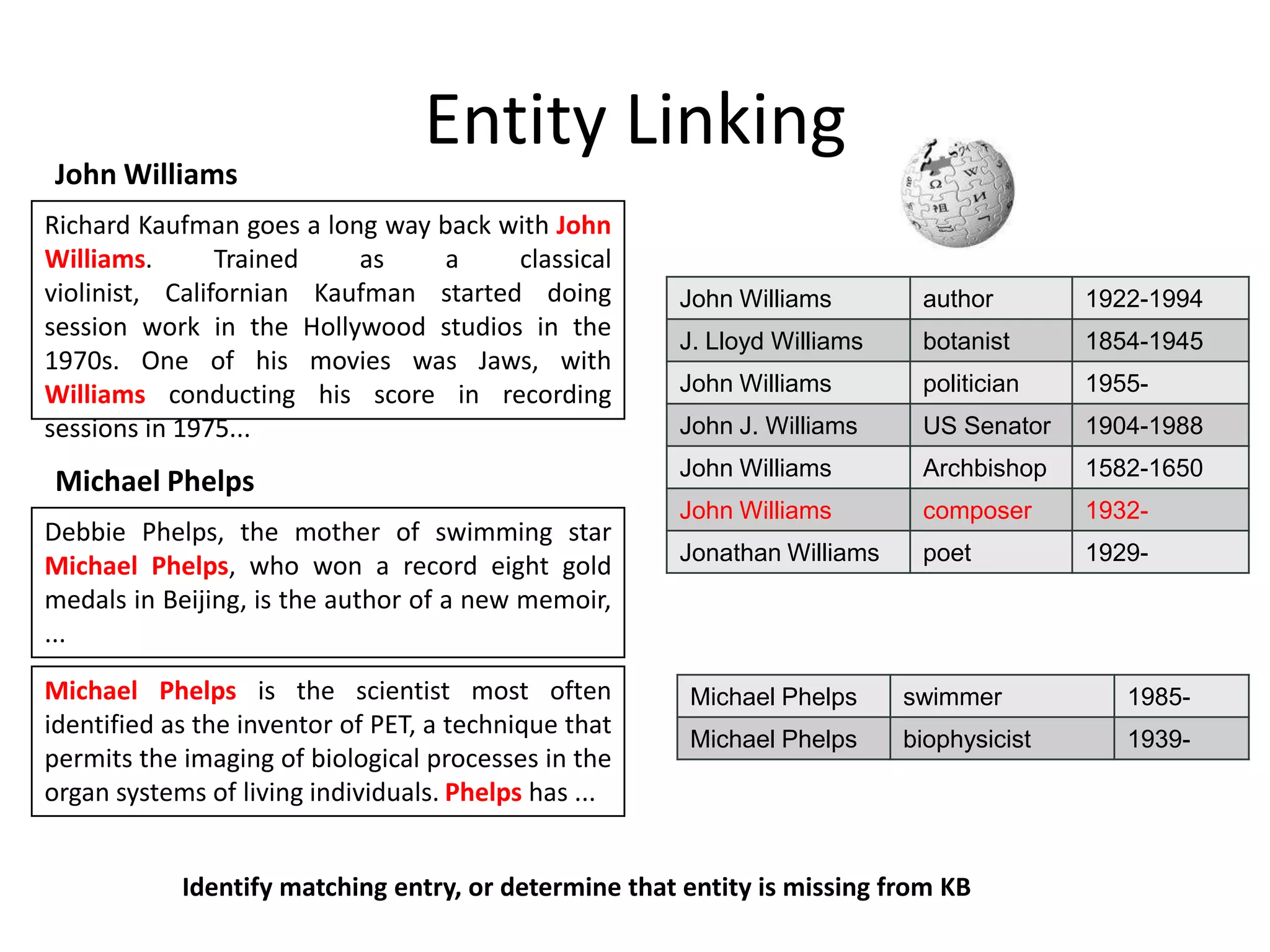

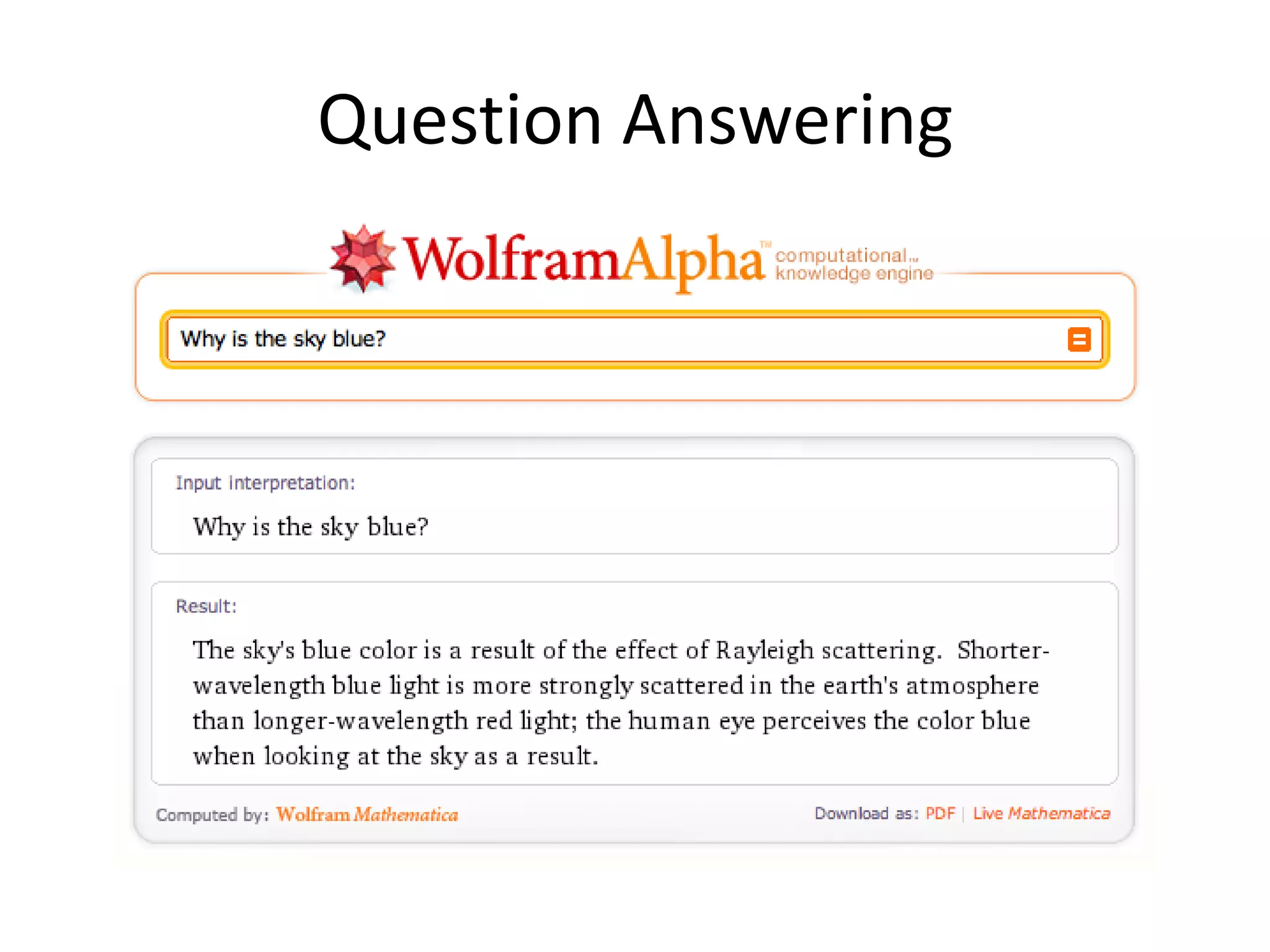

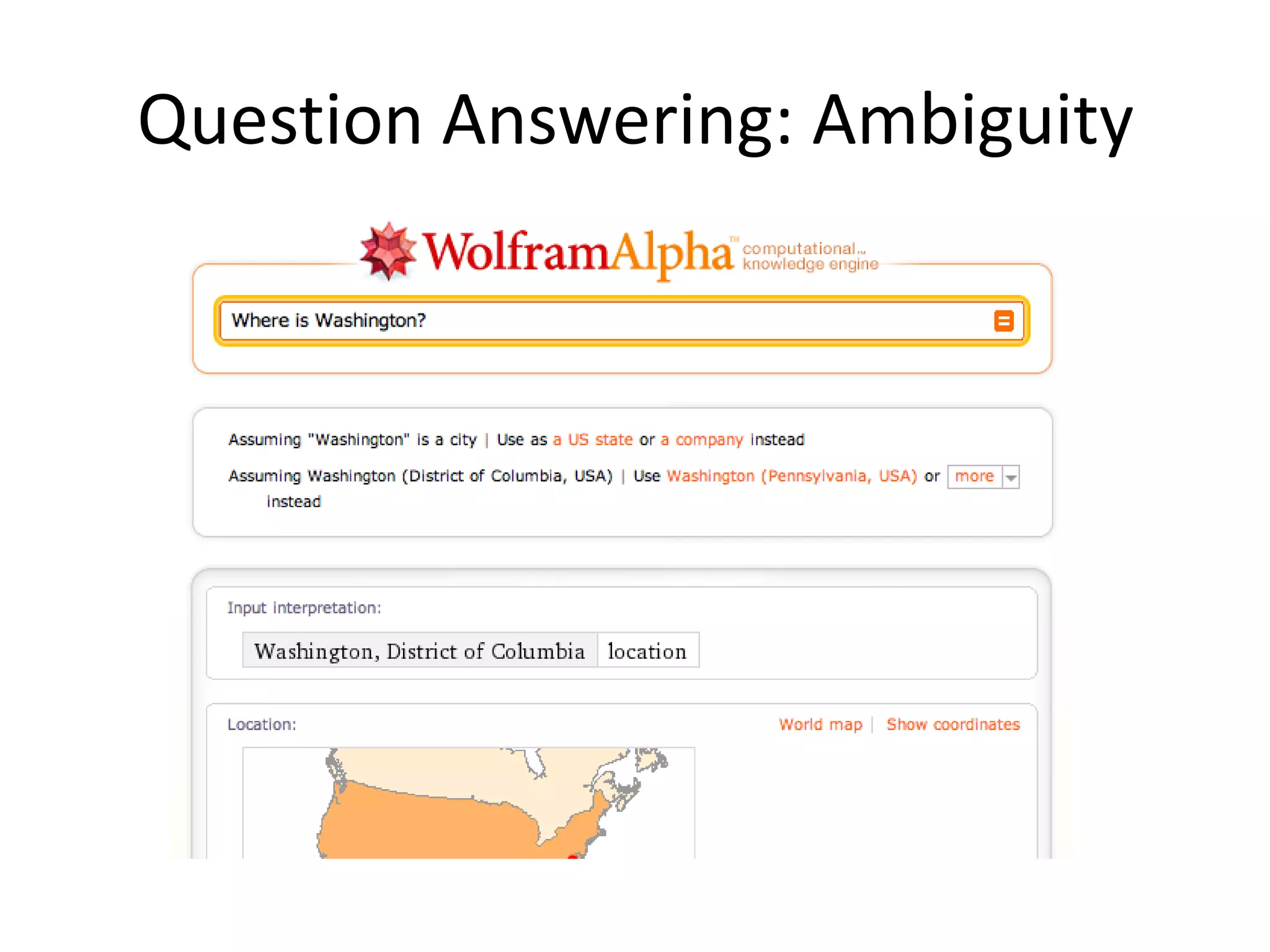

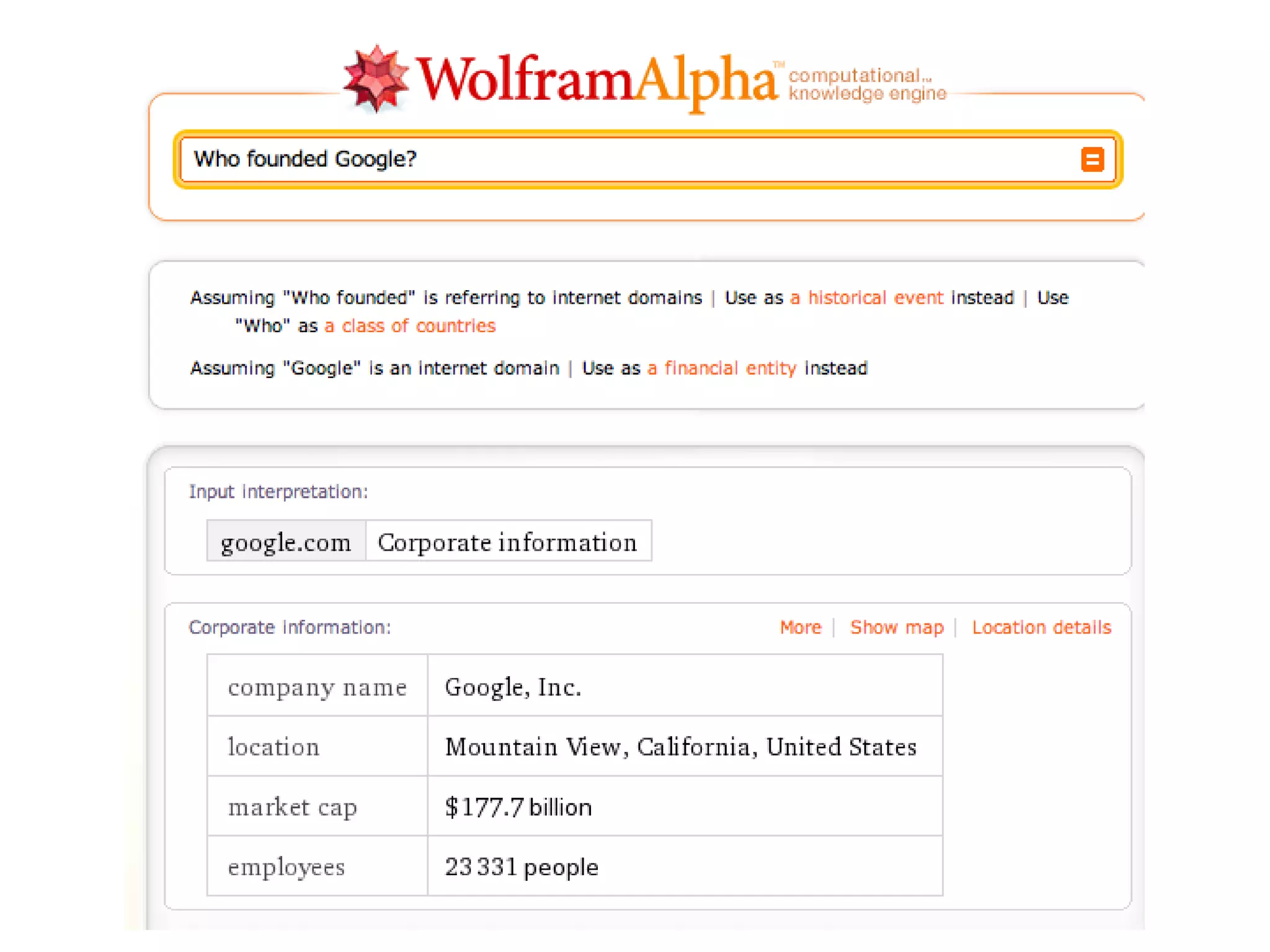

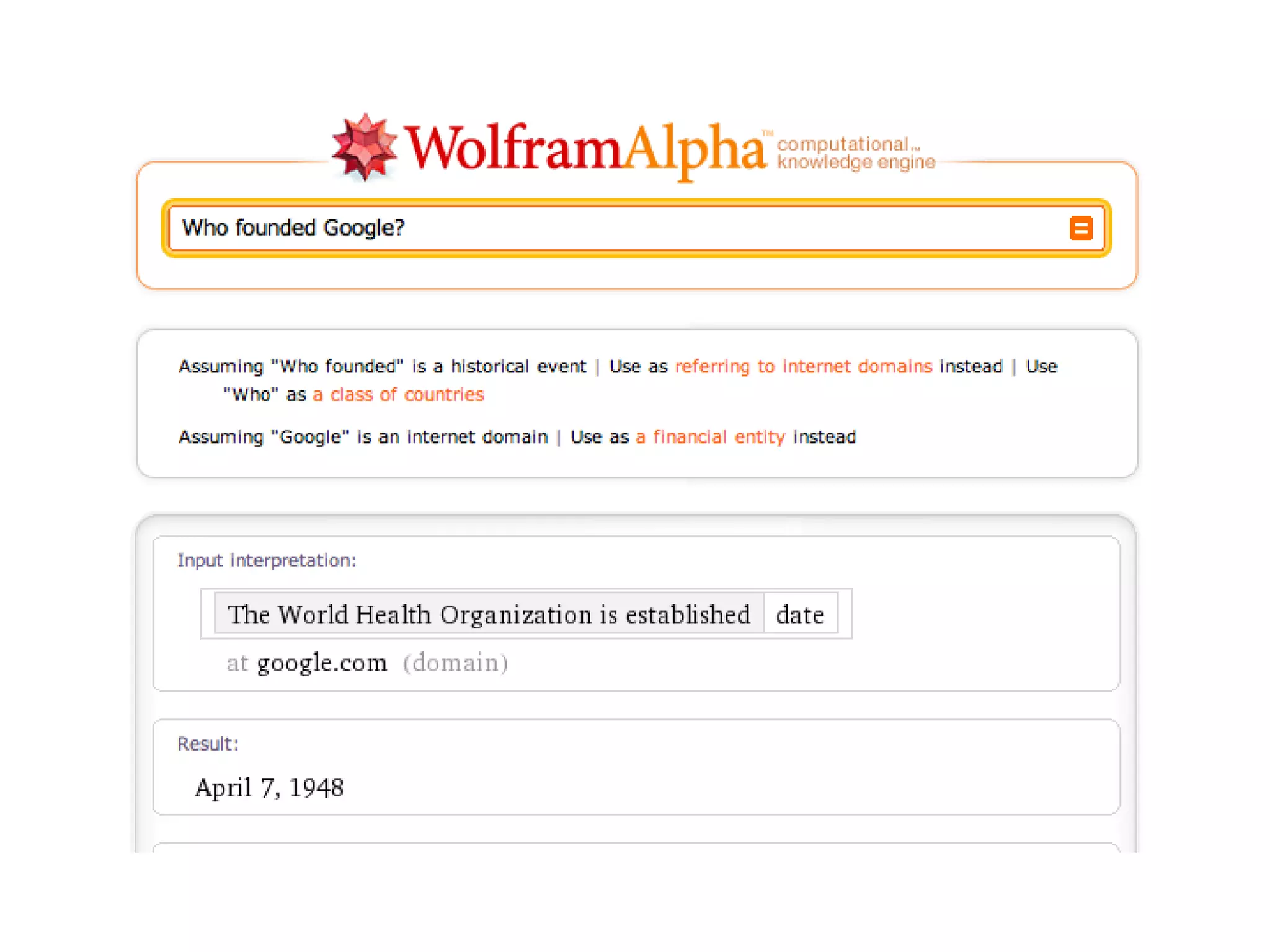

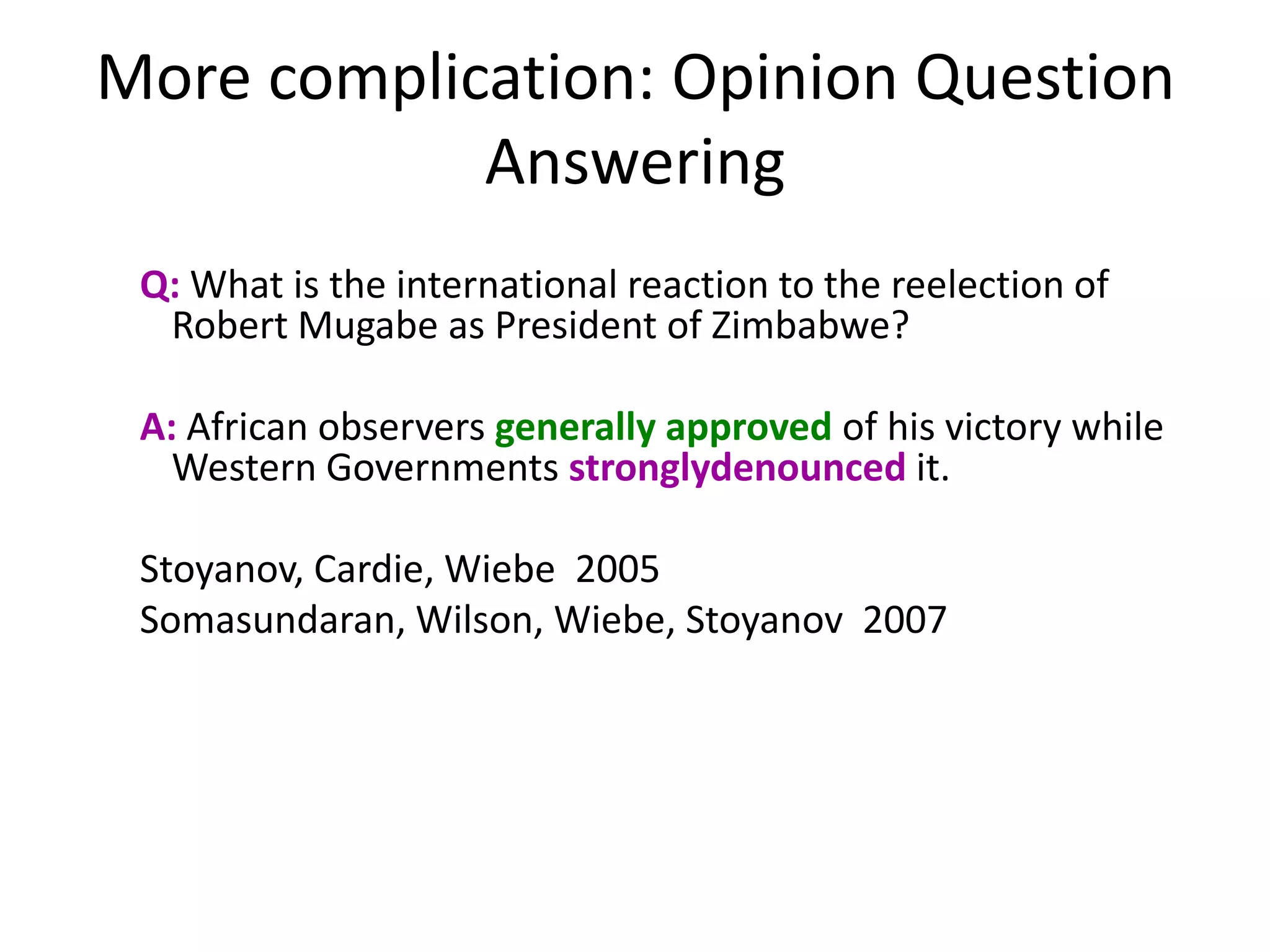

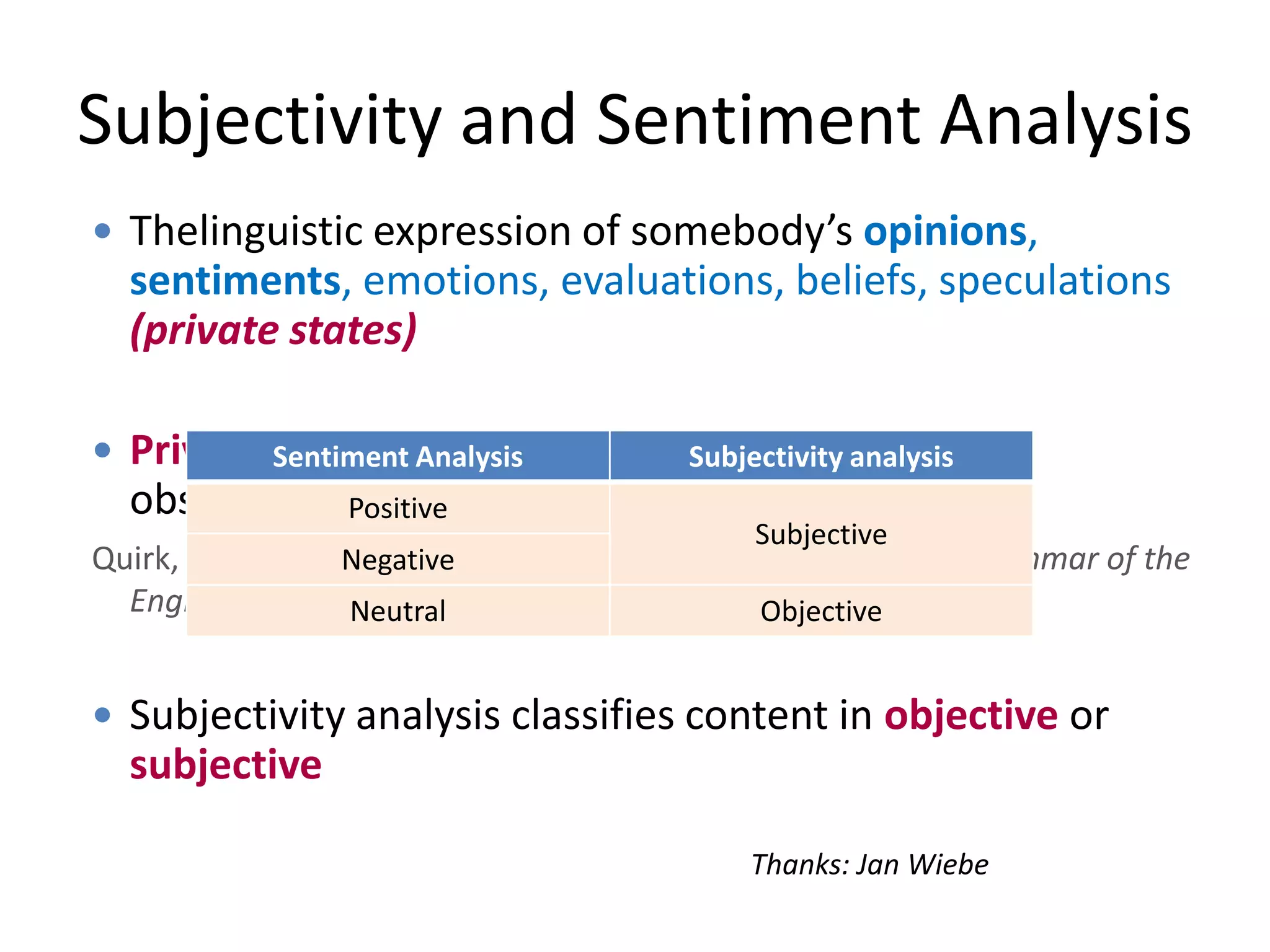

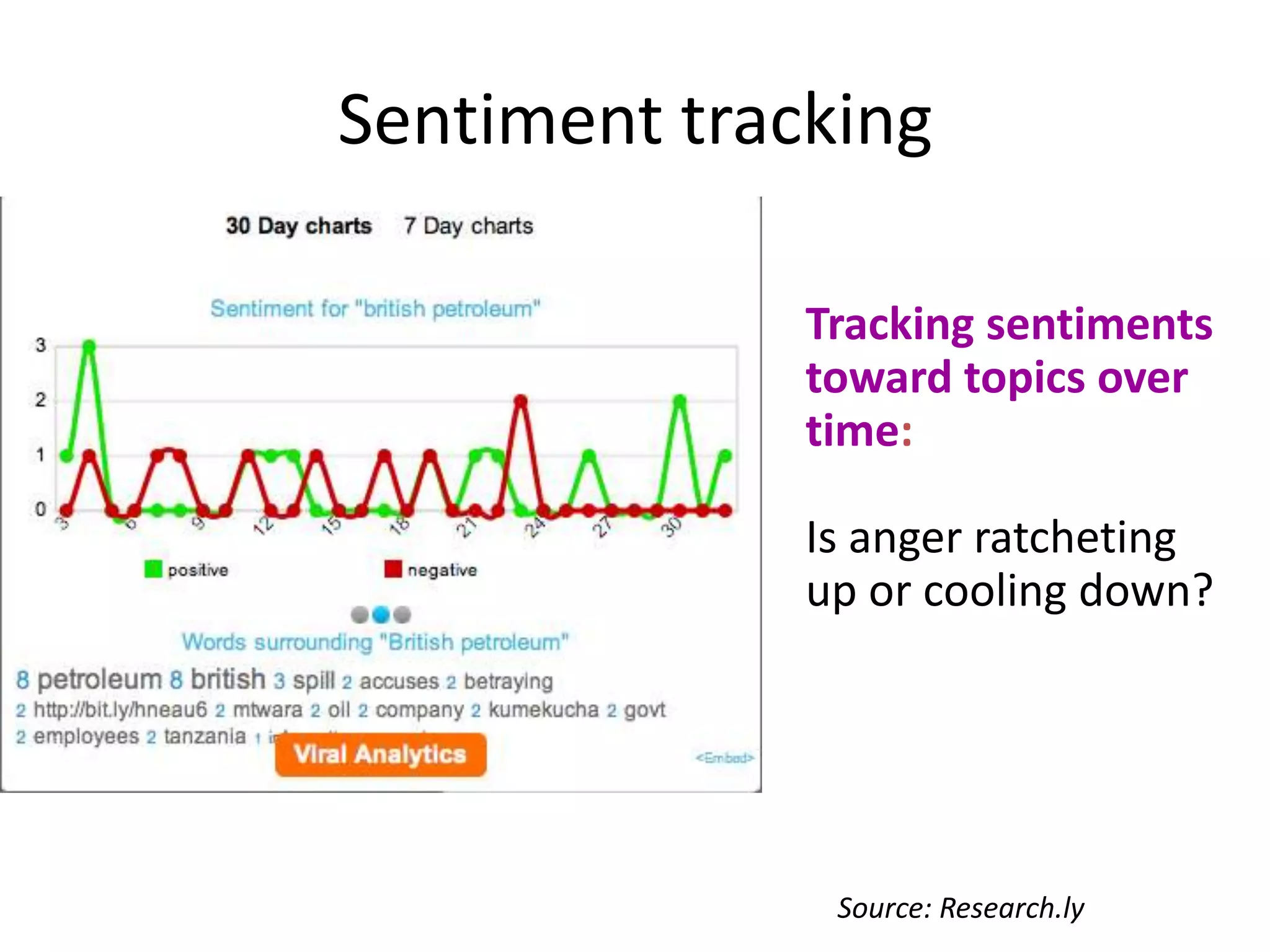

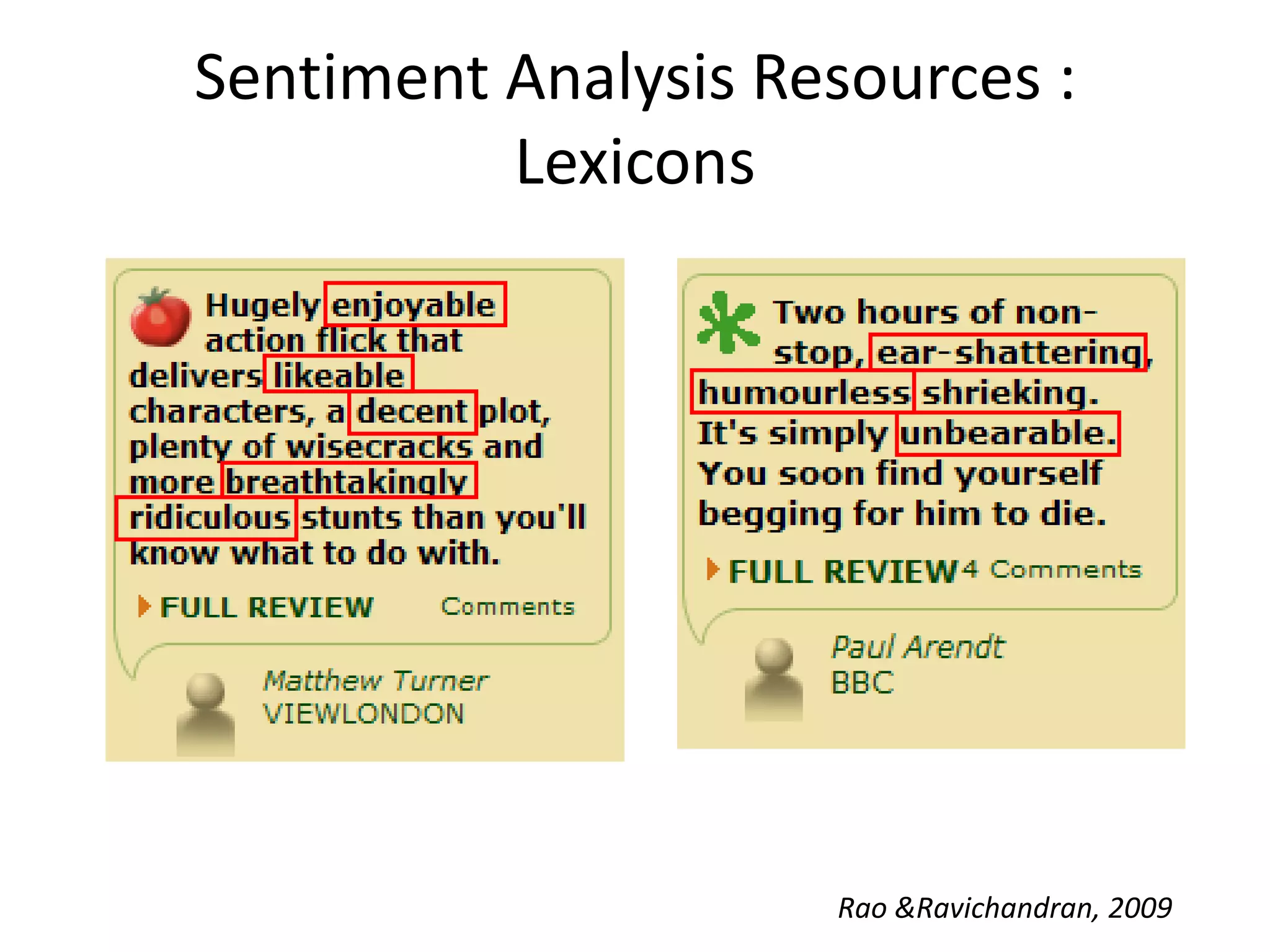

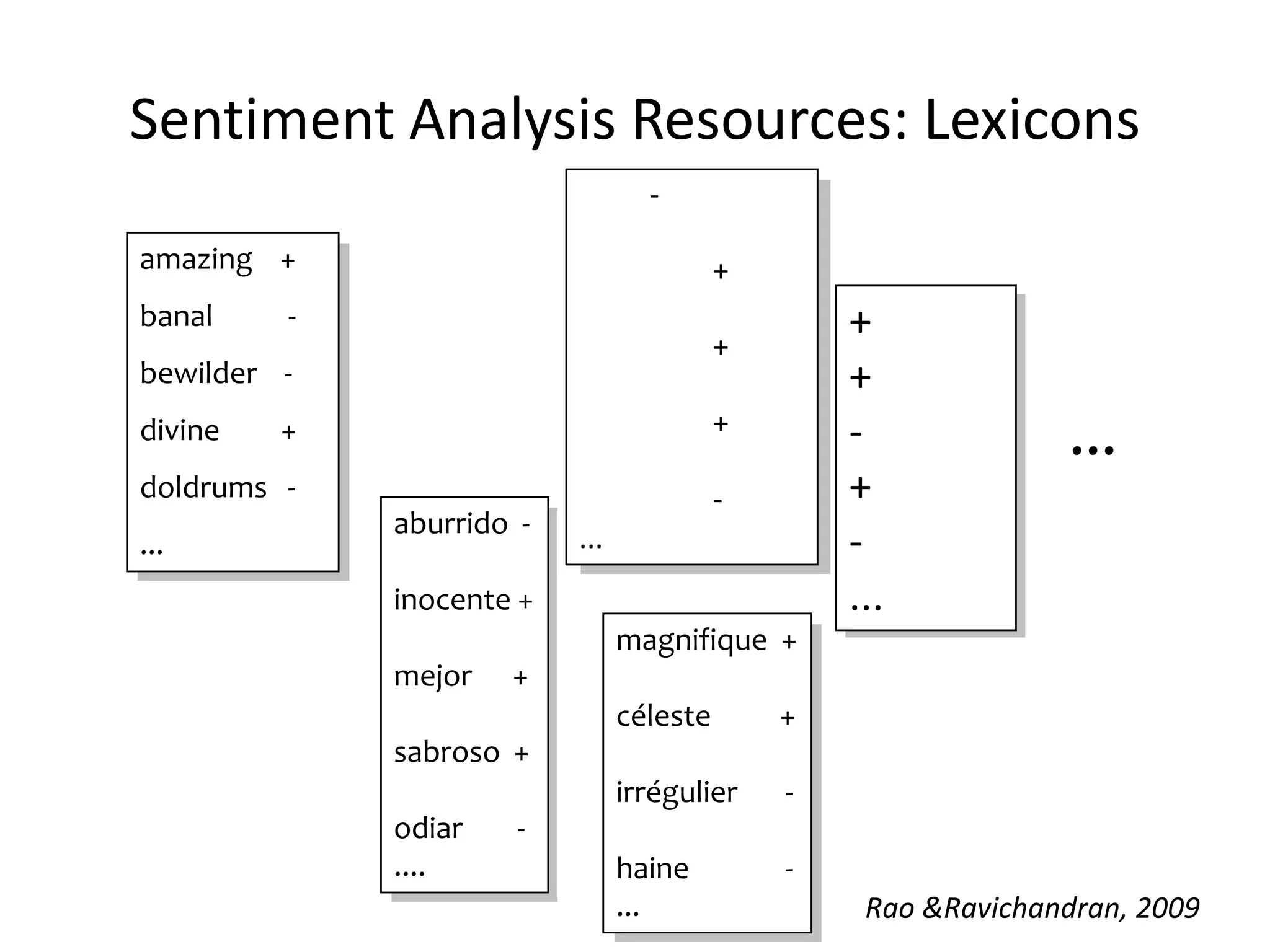

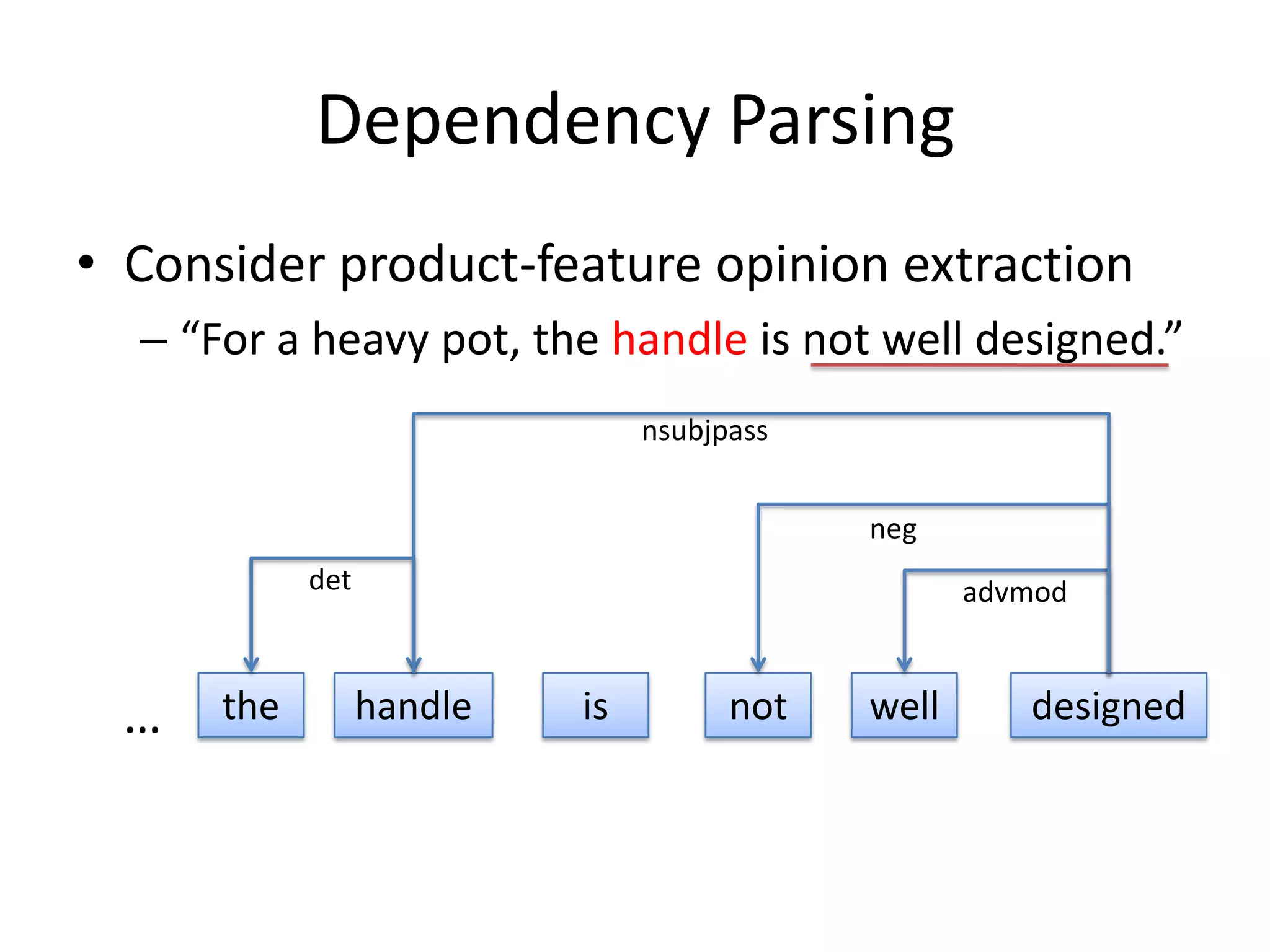

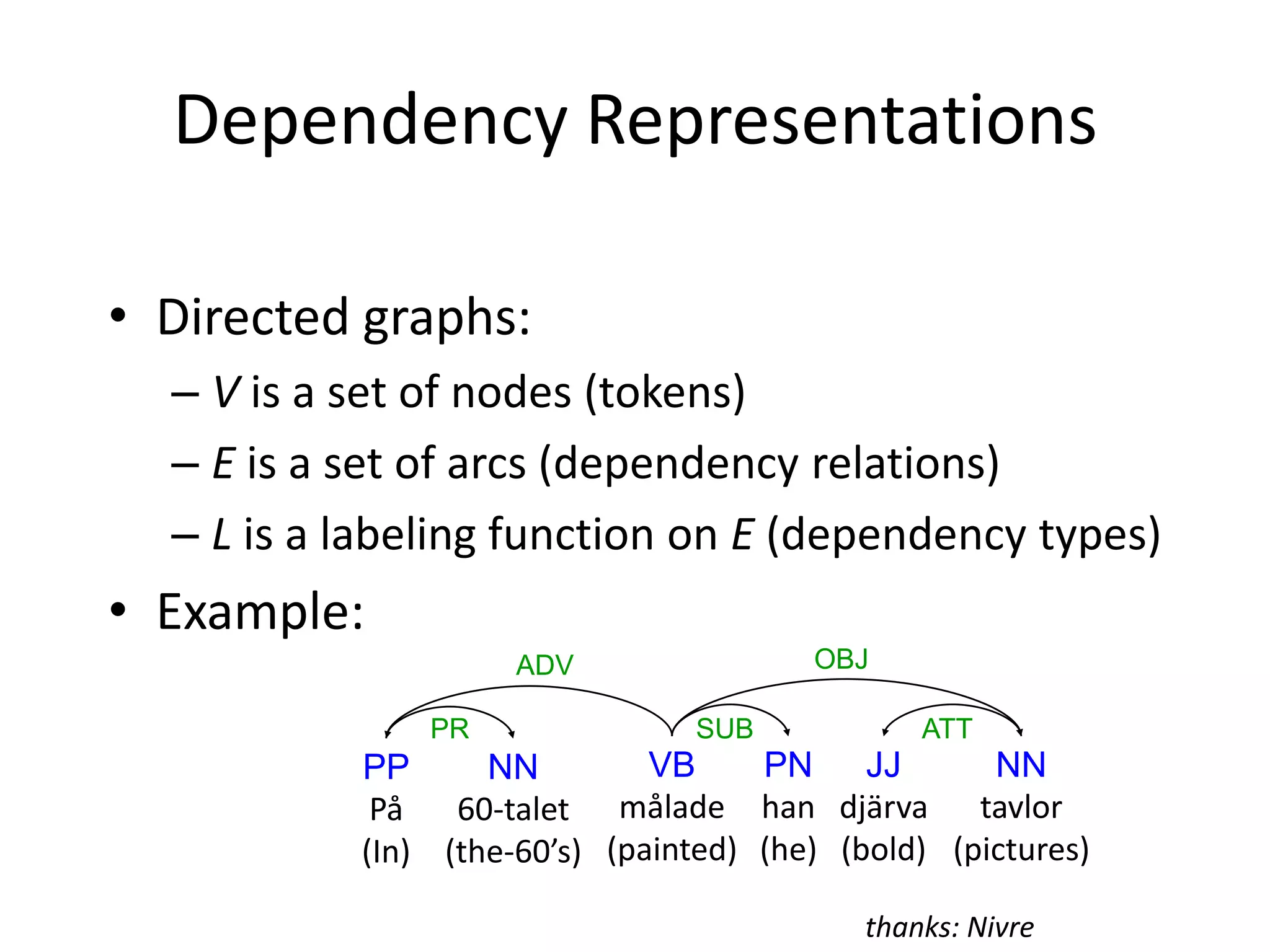

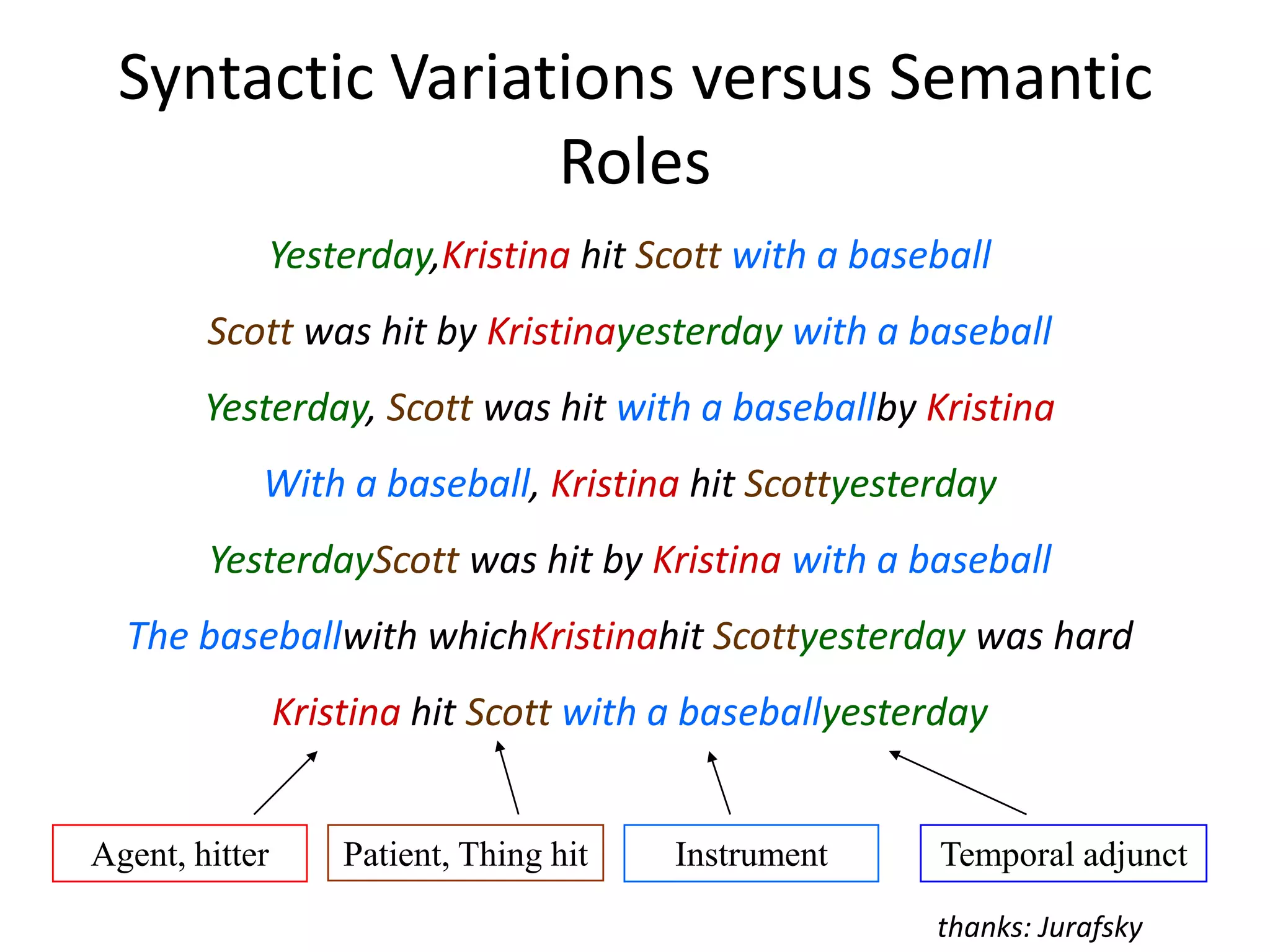

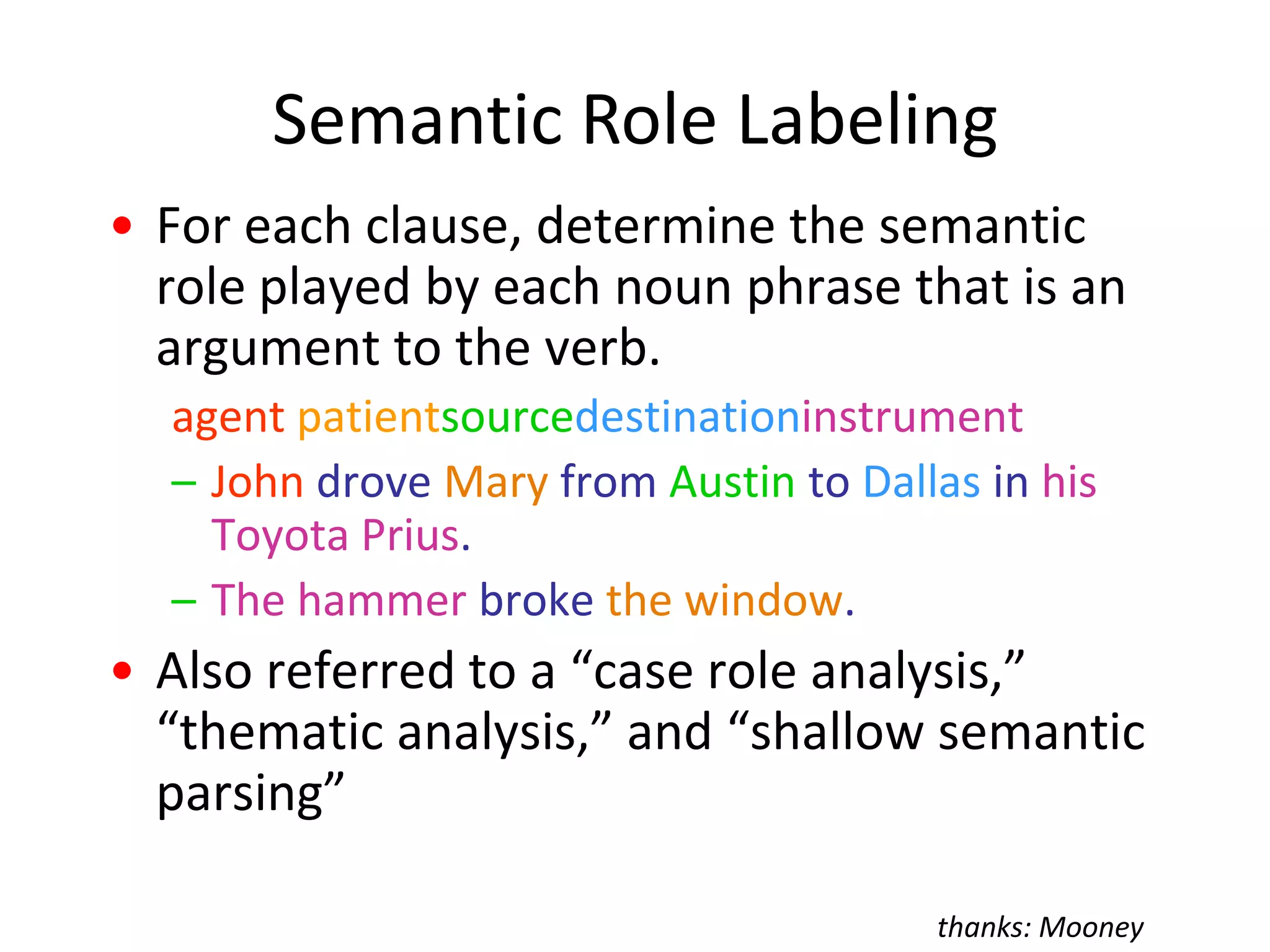

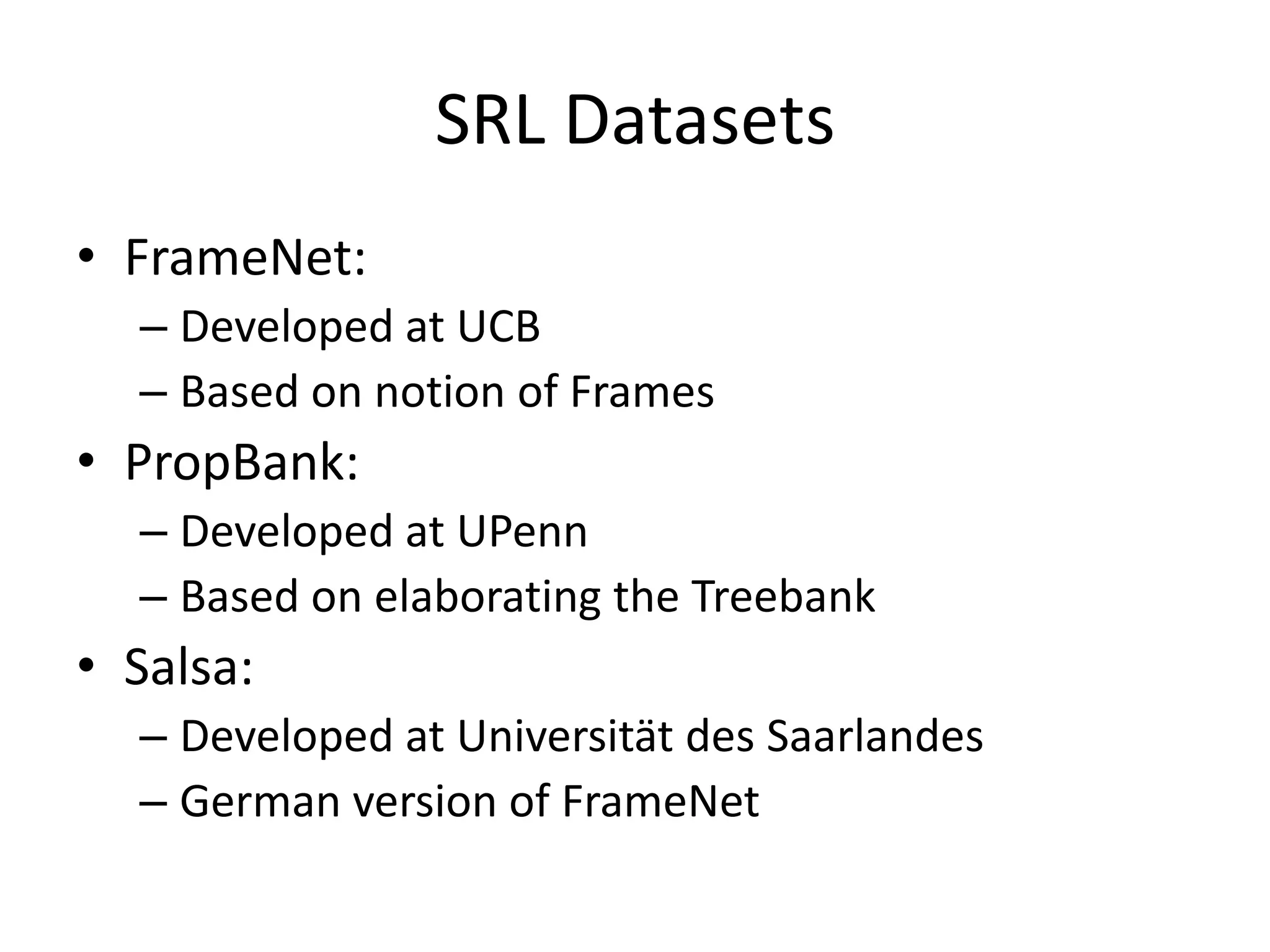

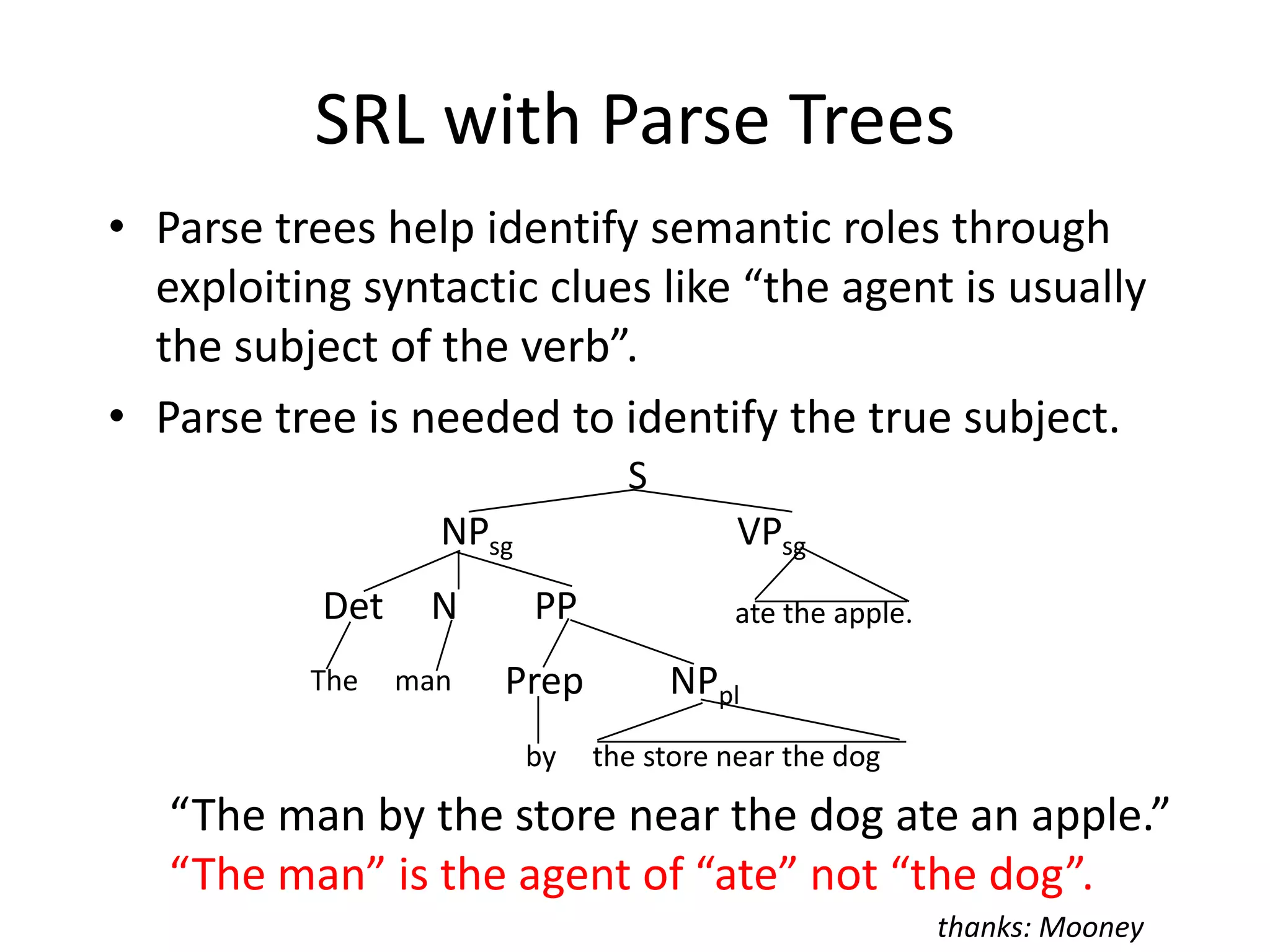

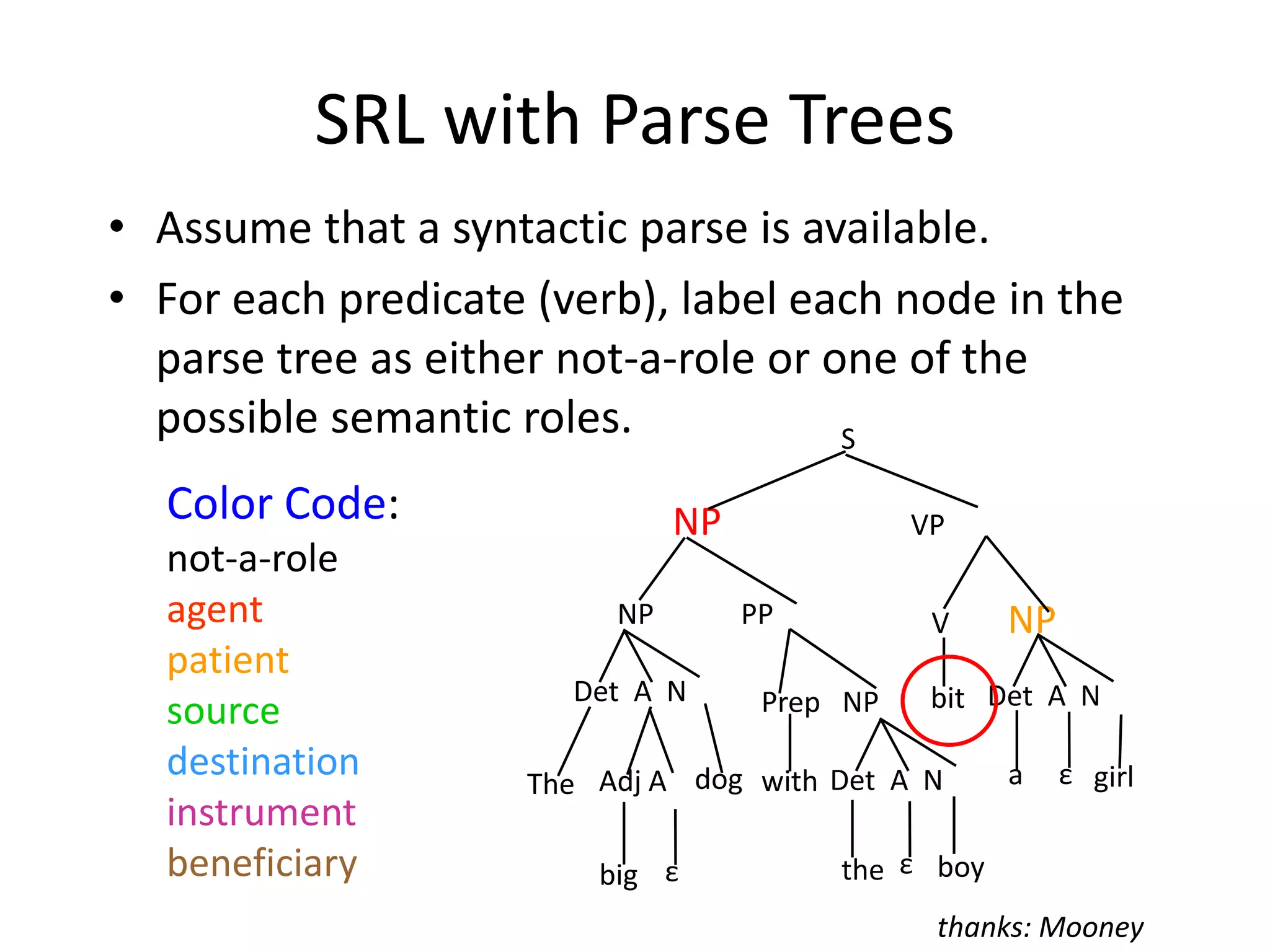

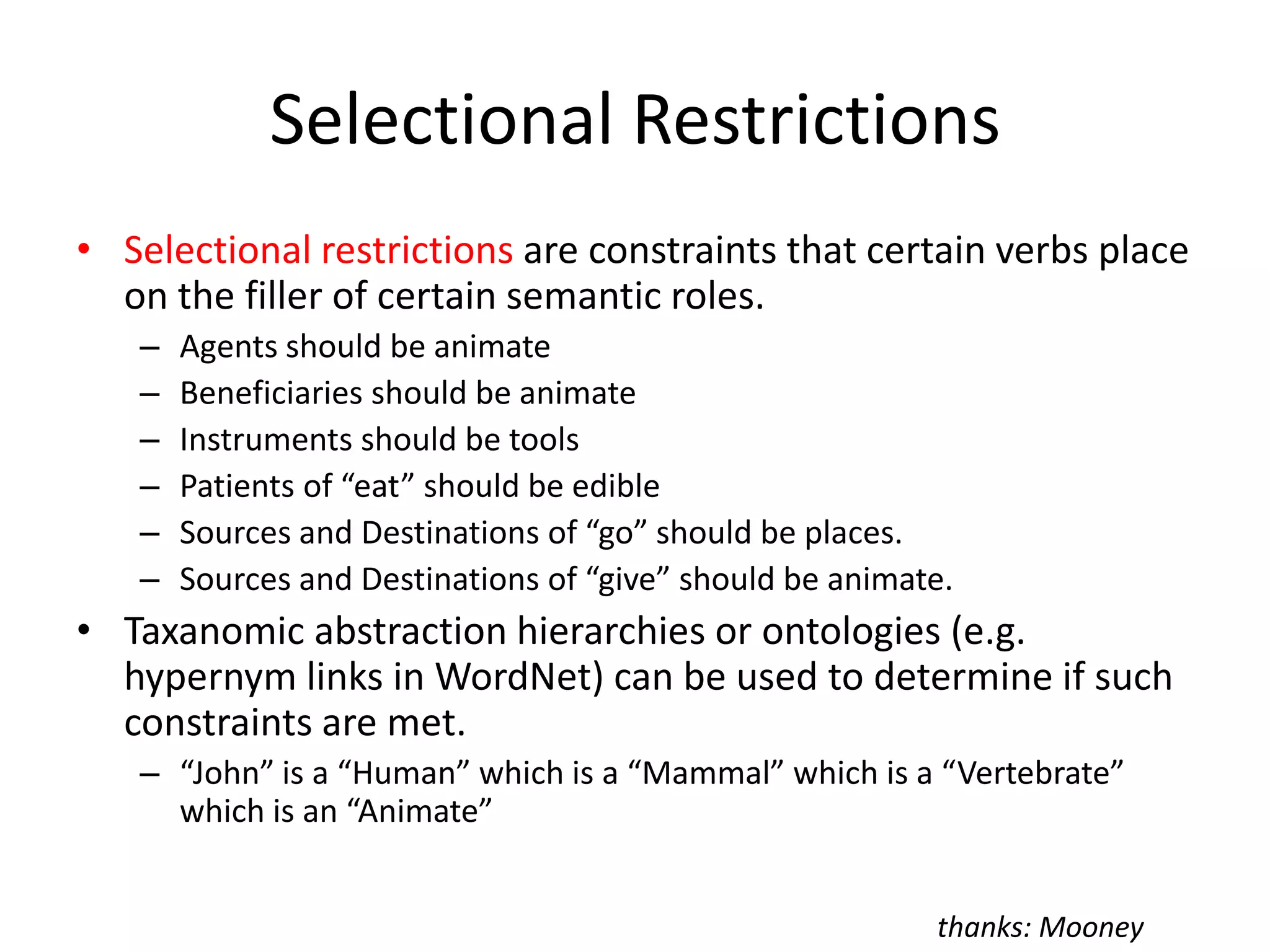

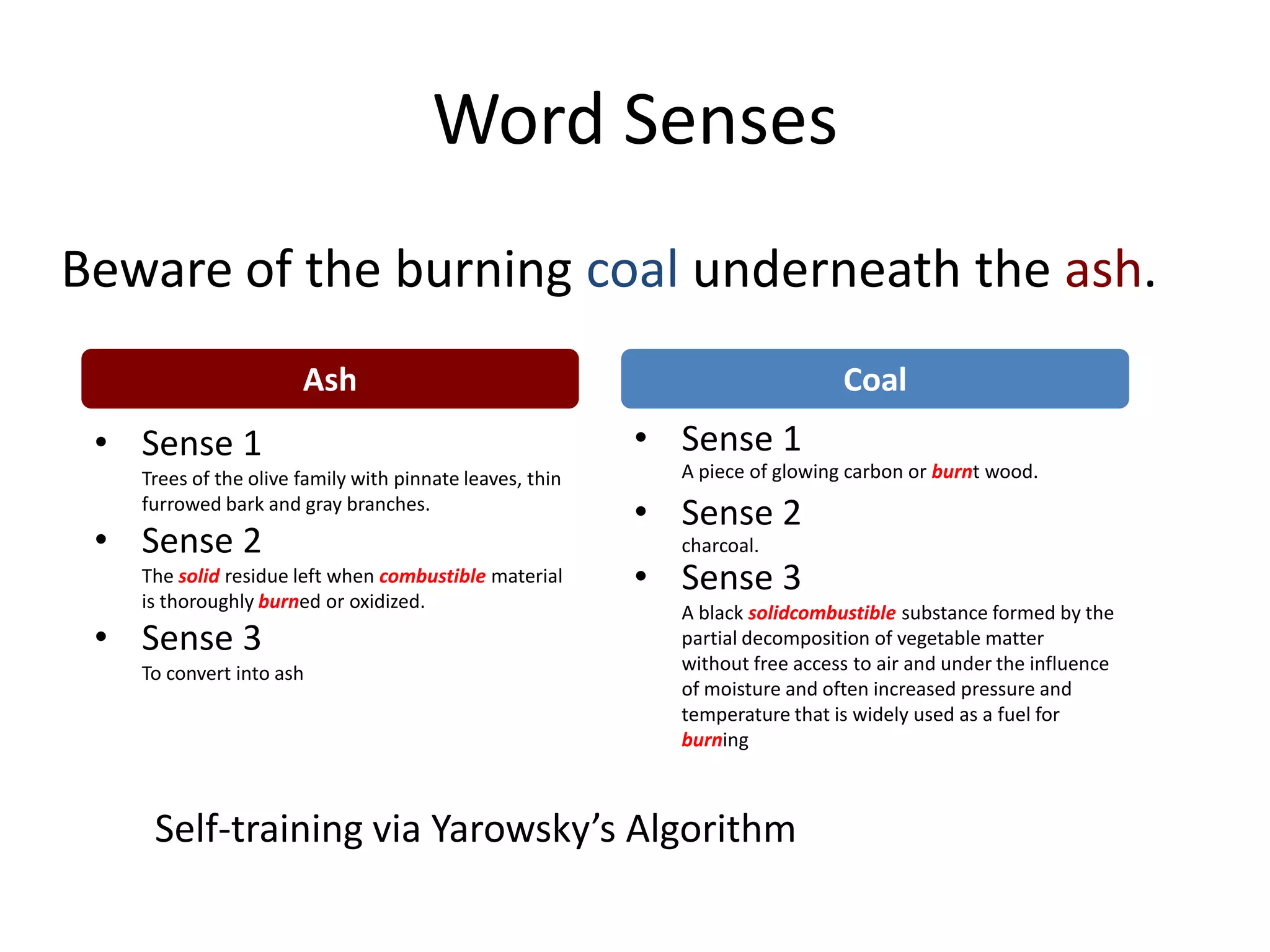

The document discusses several natural language processing (NLP) tasks: named entity recognition, entity linking, question answering, sentiment analysis, dependency parsing, and semantic role labeling. For each task, it gives examples, explains how the task can be approached, notes common challenges, and points to relevant datasets and resources.