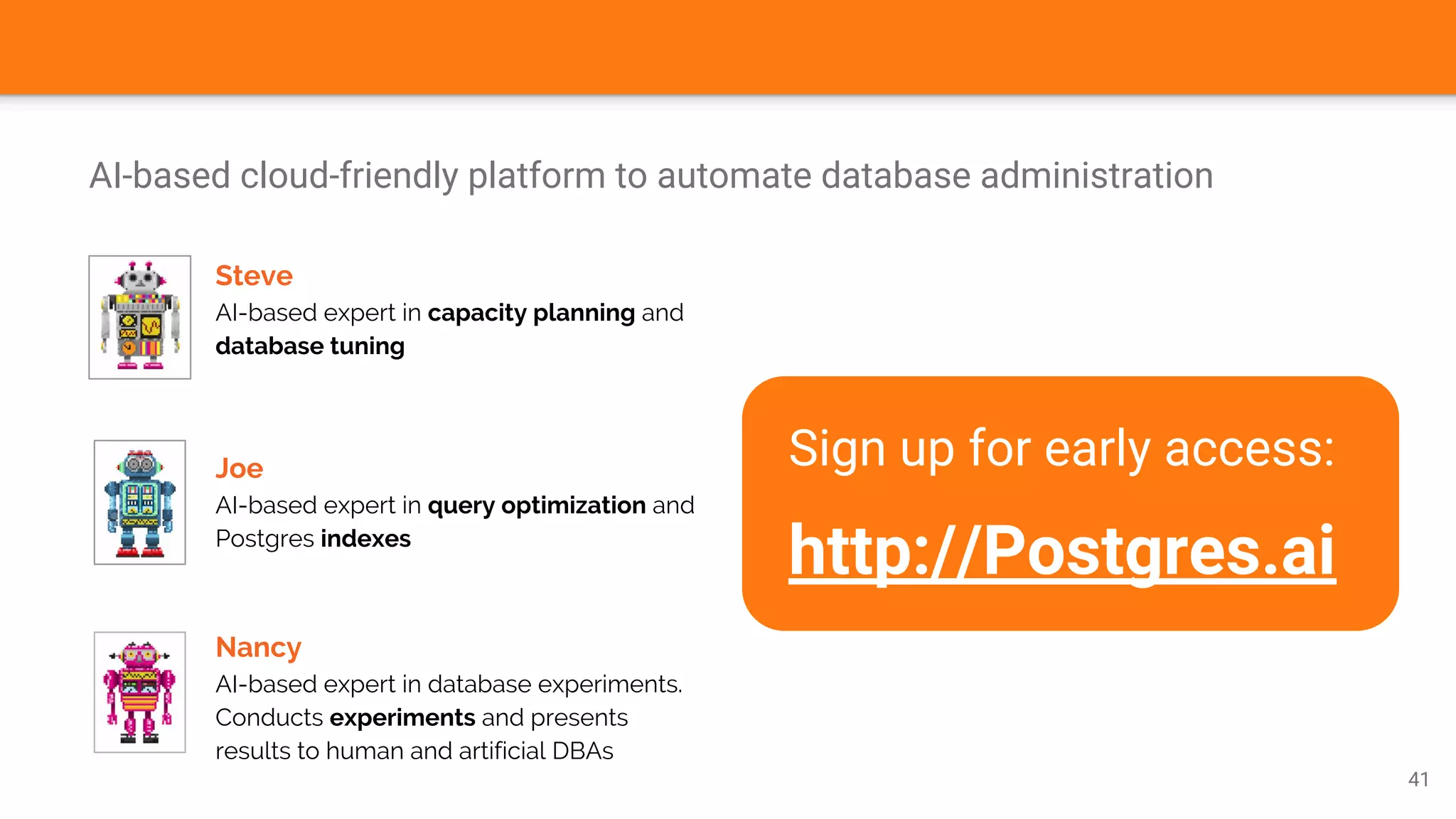

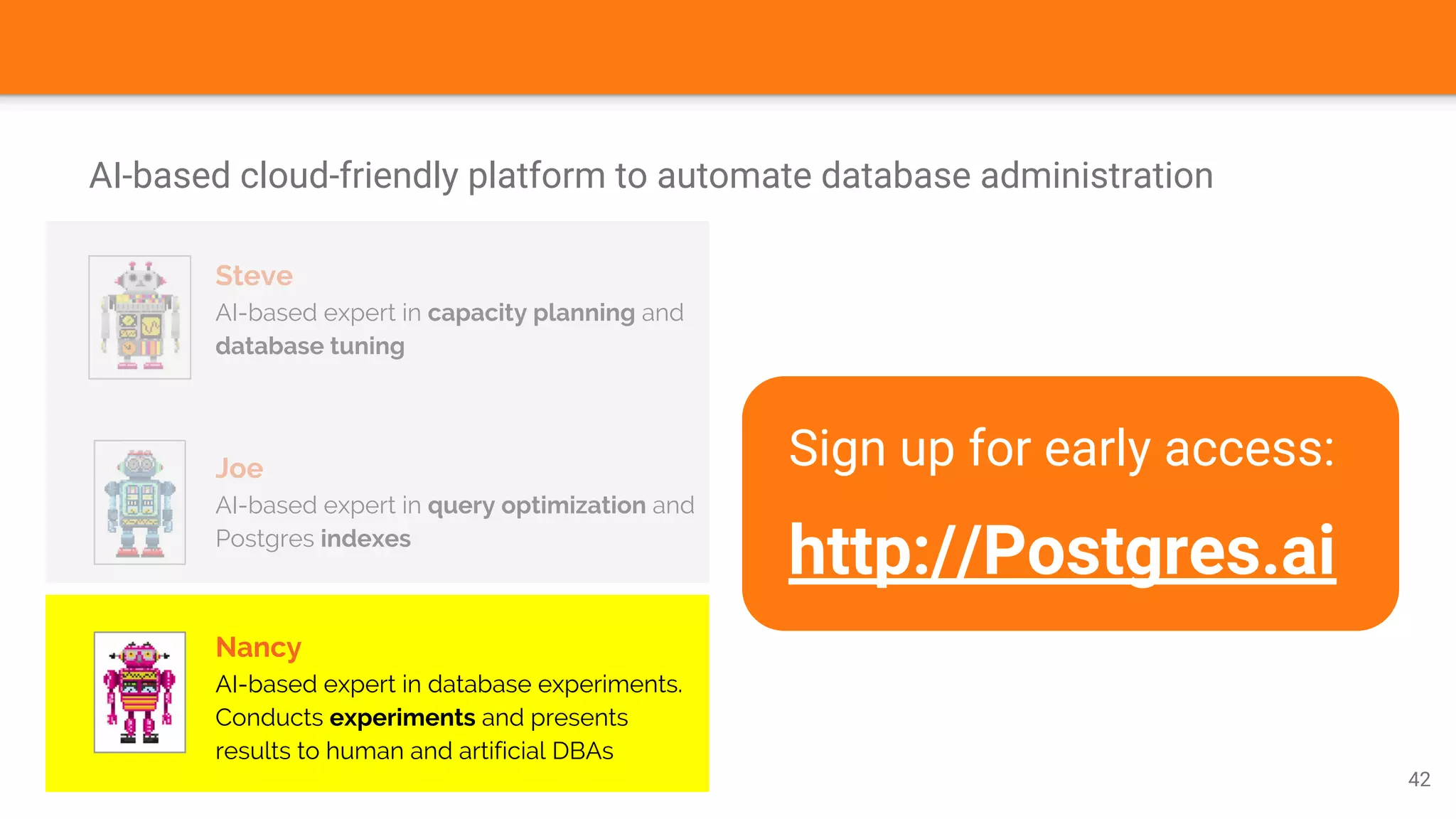

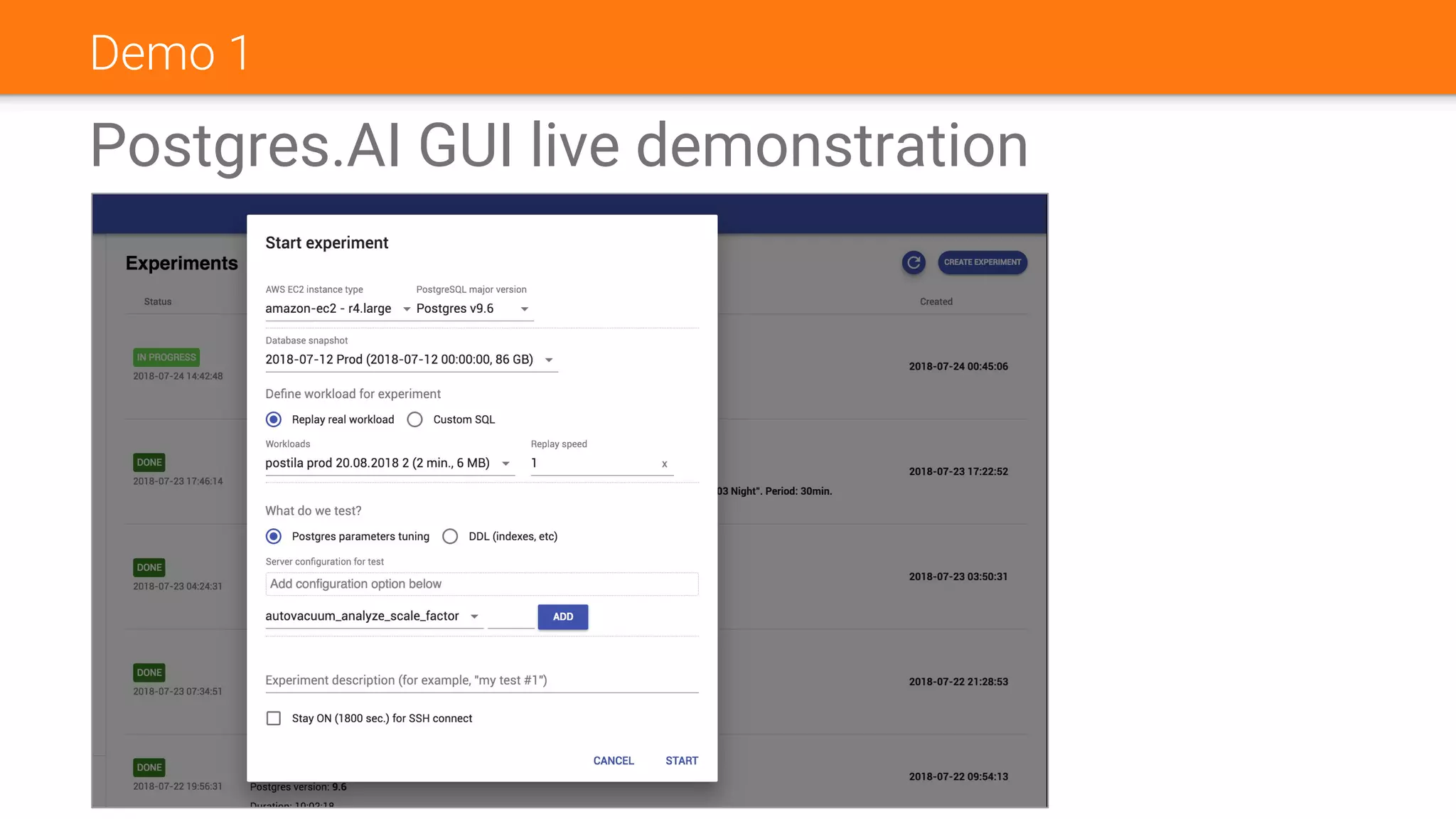

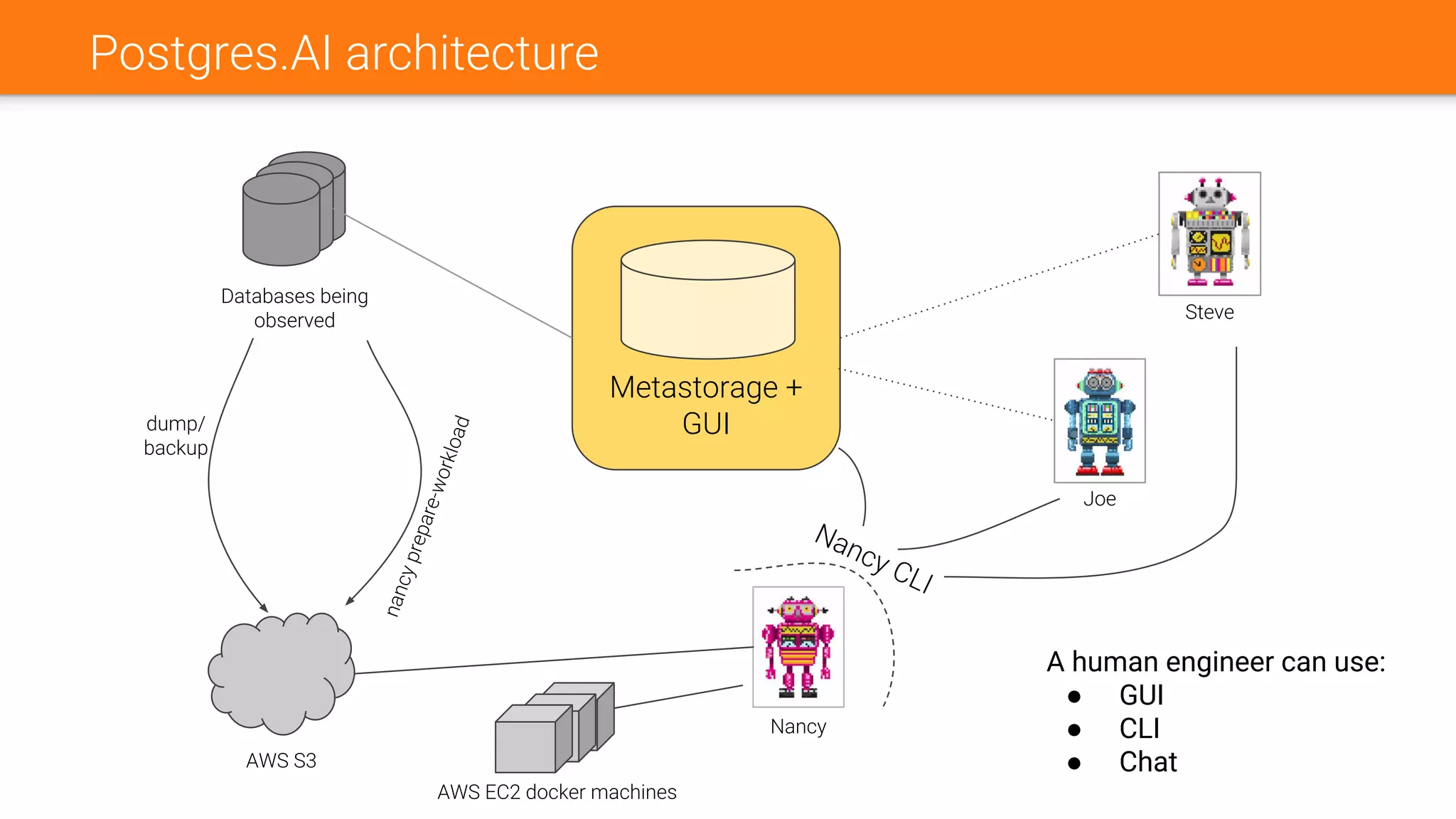

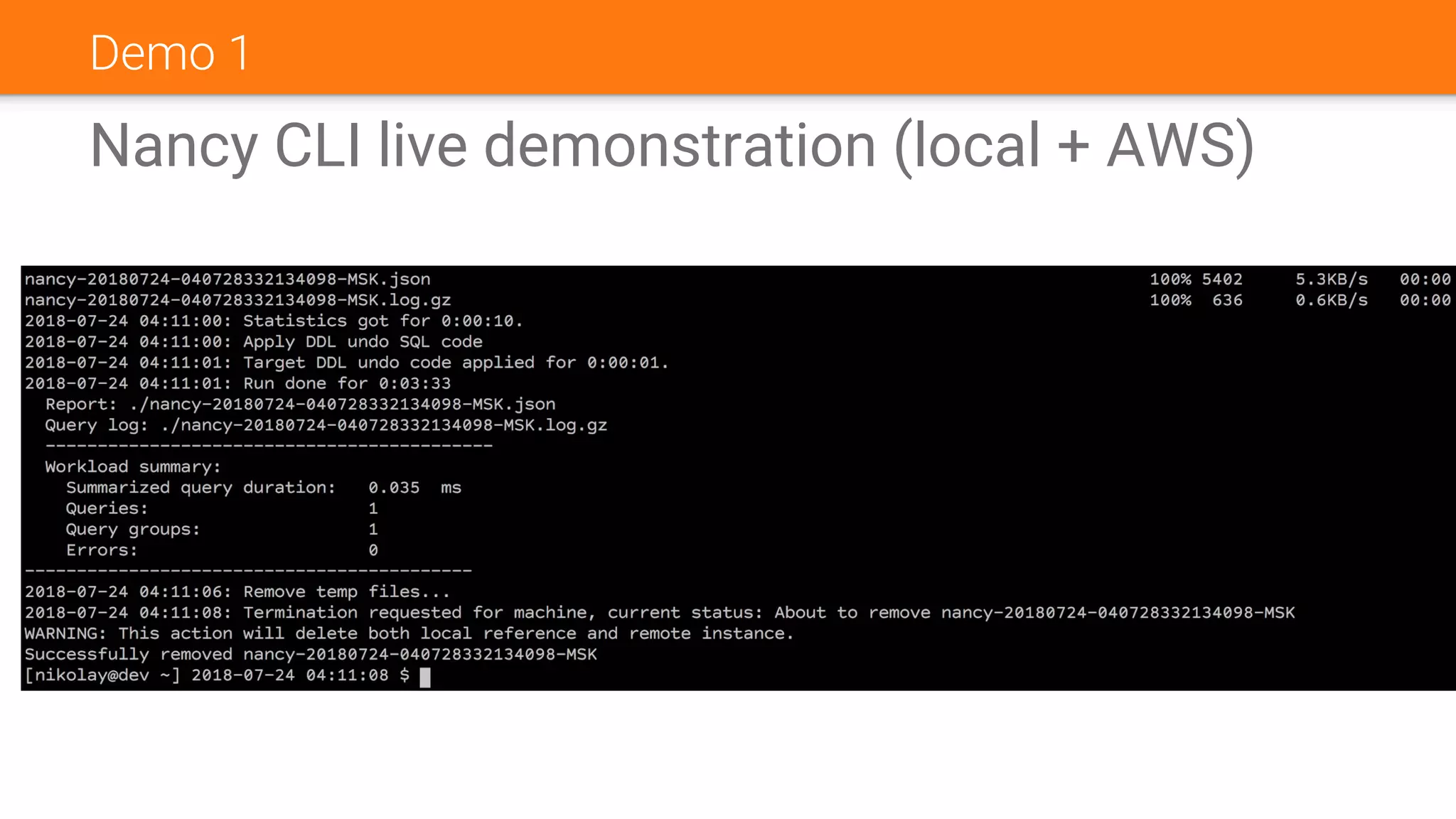

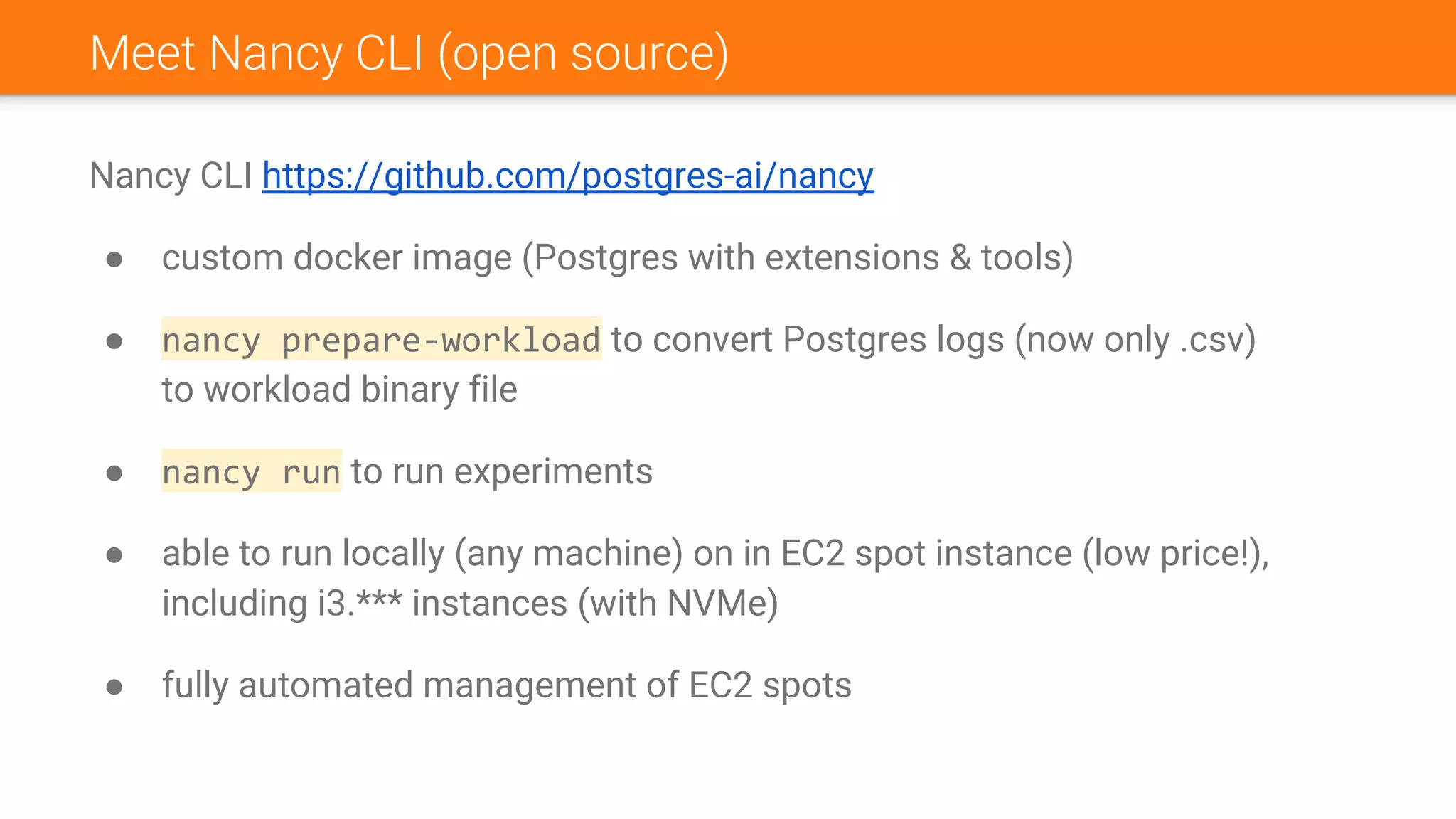

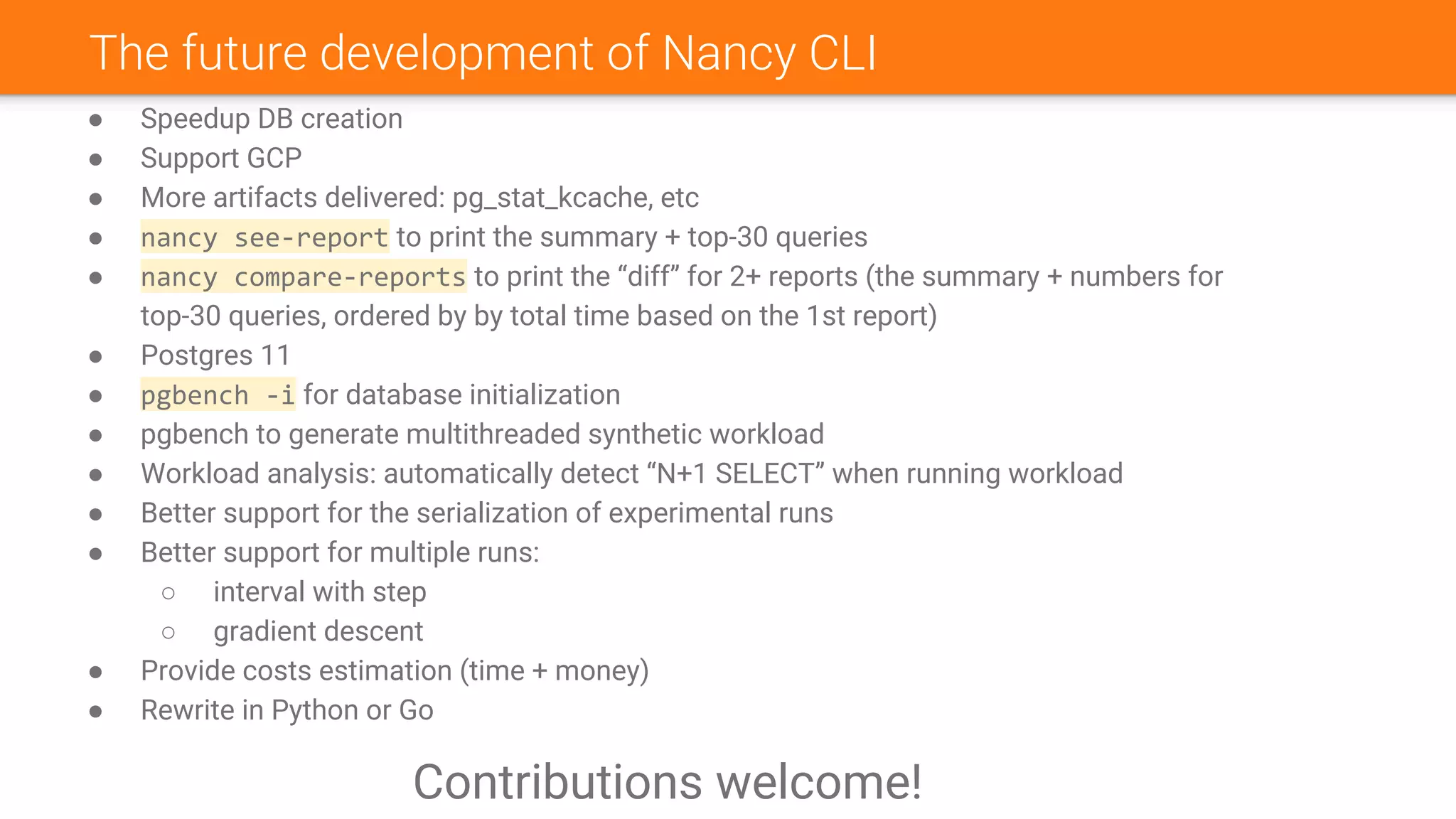

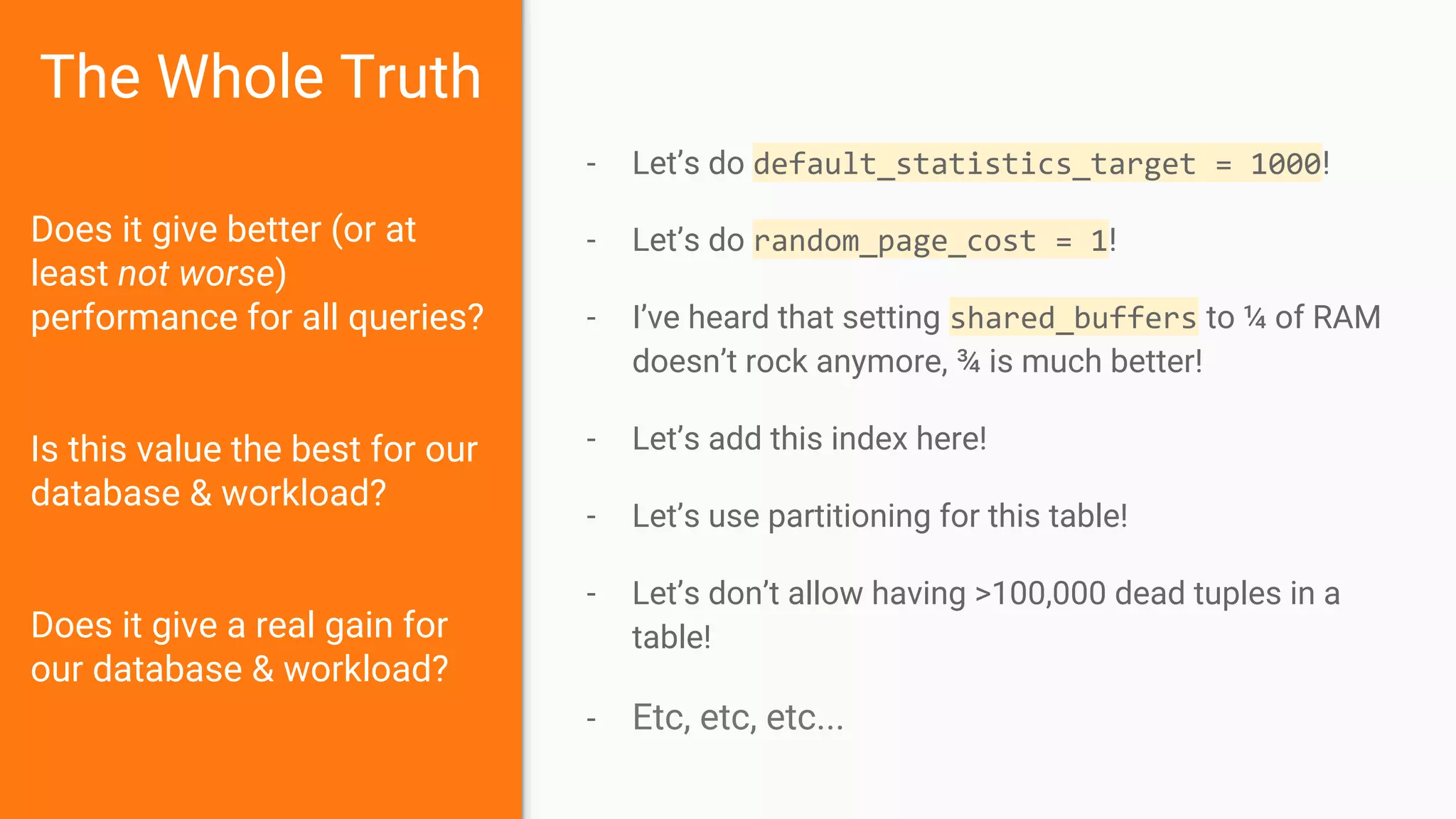

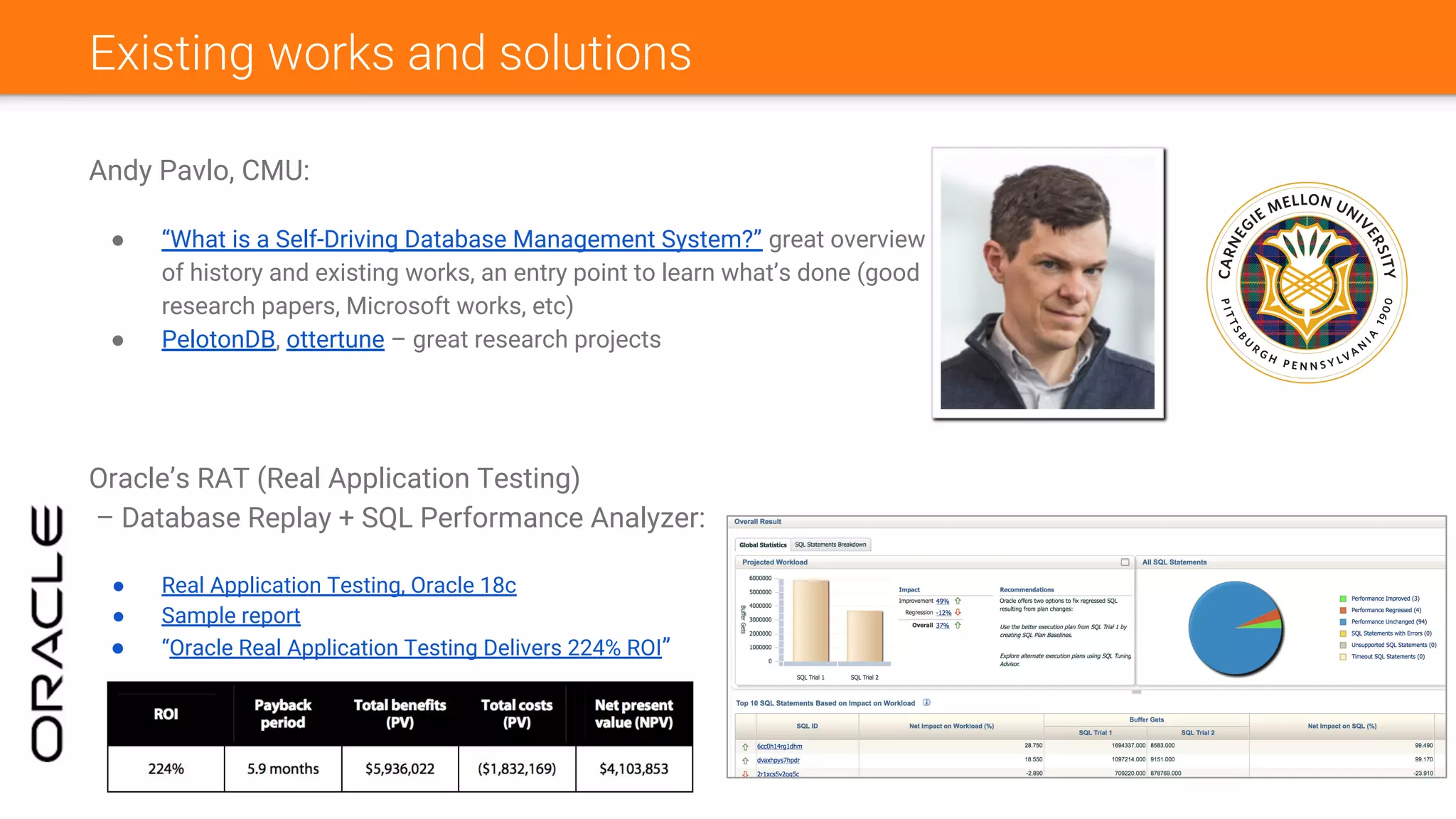

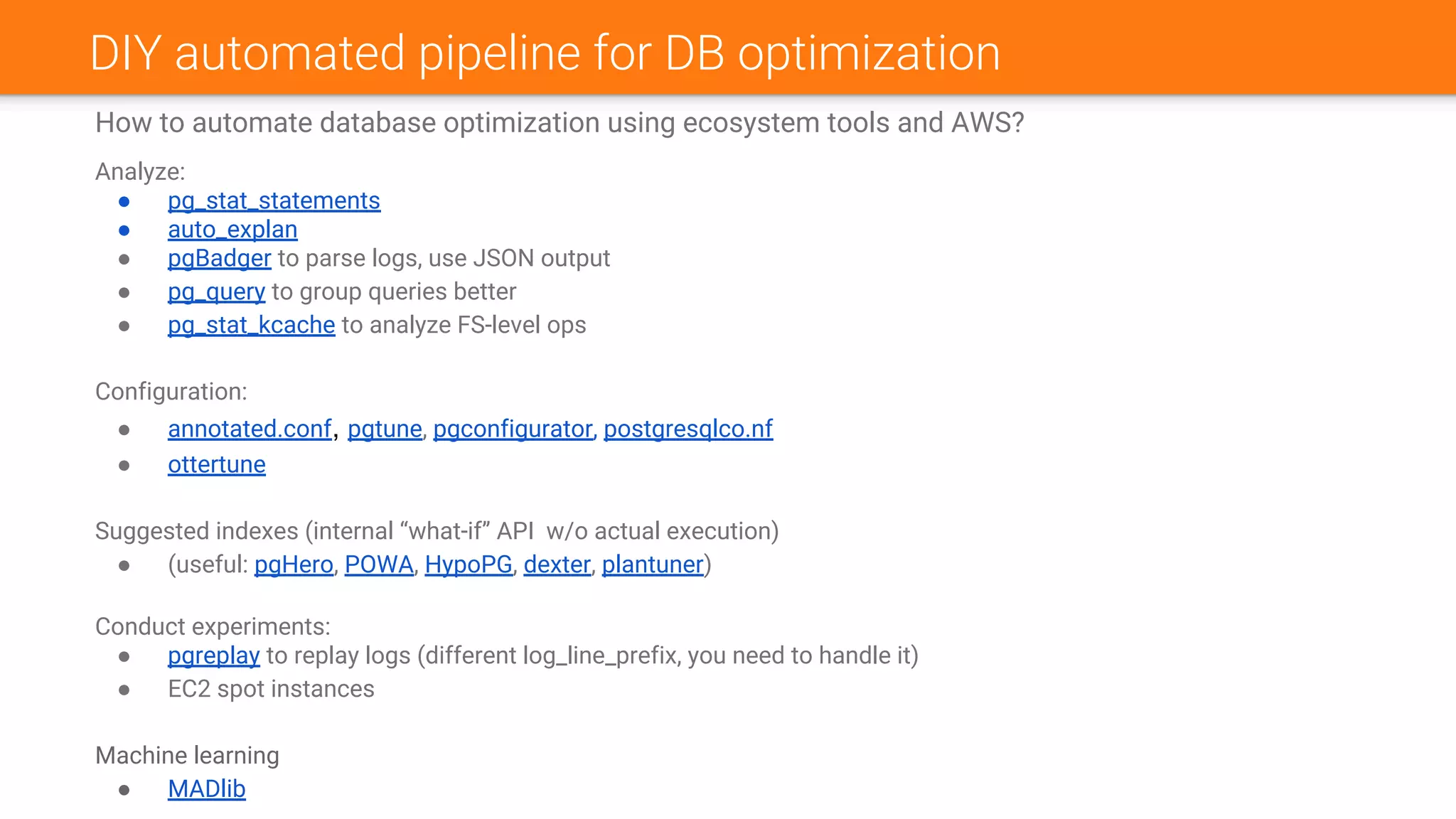

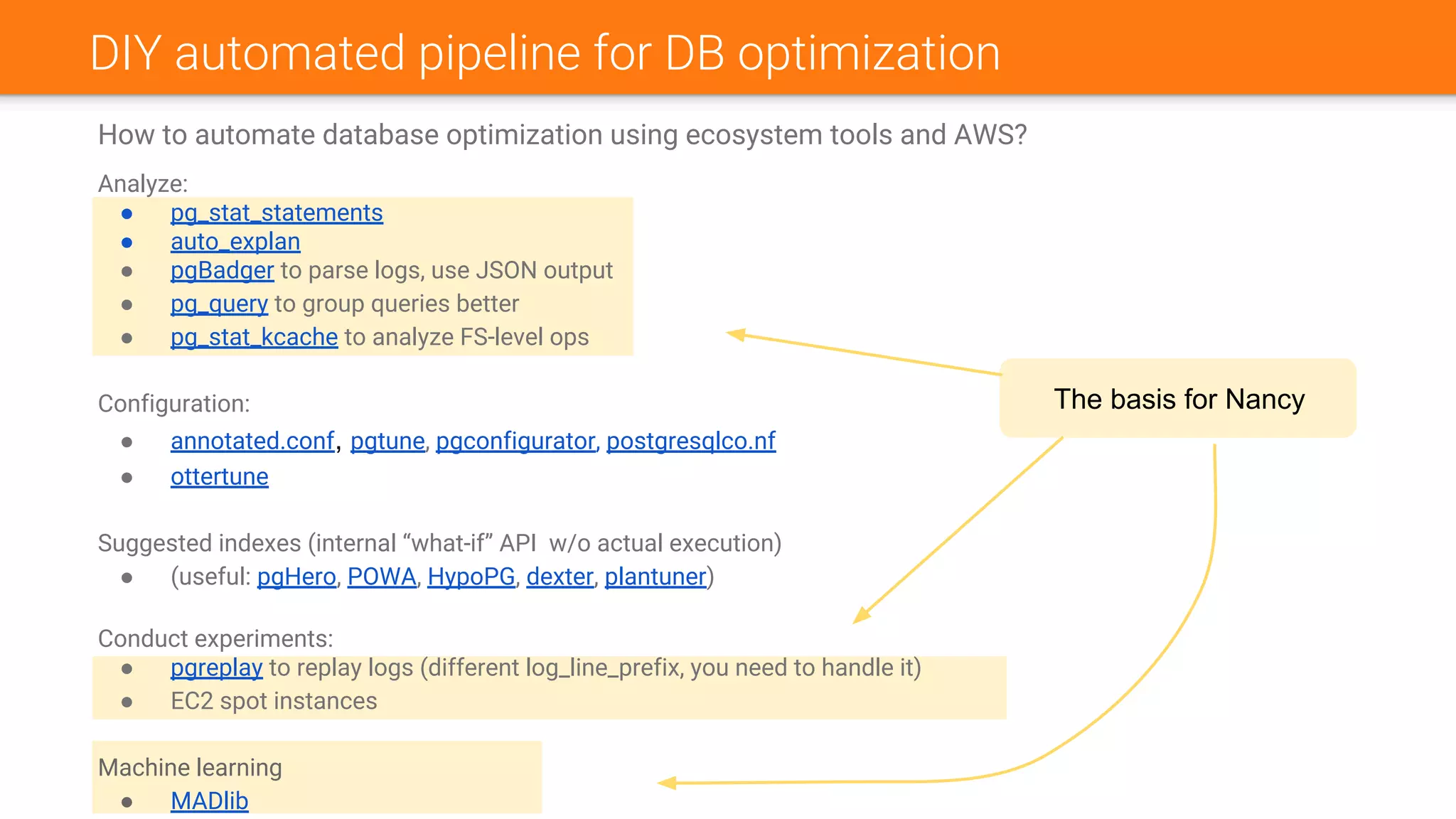

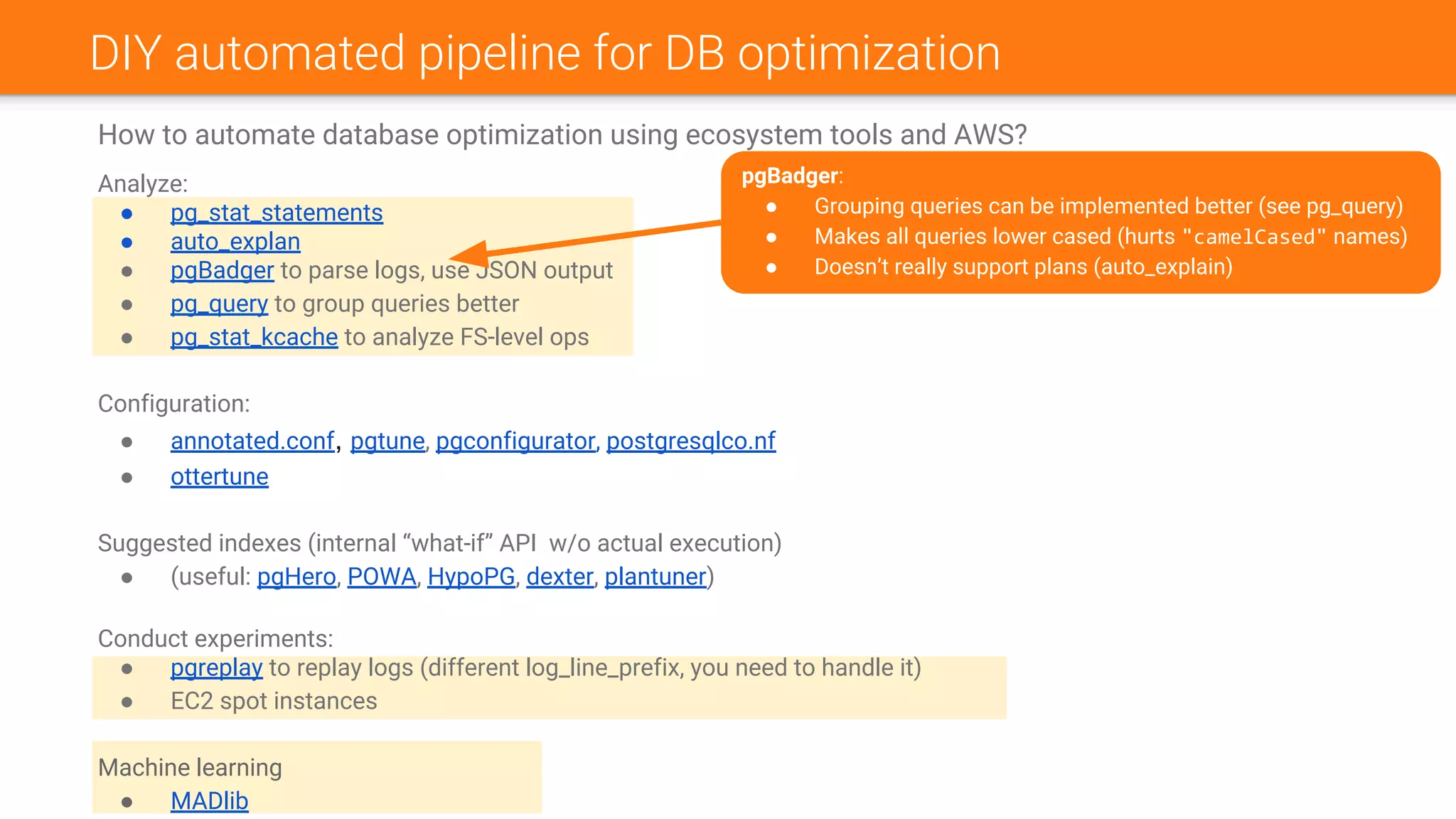

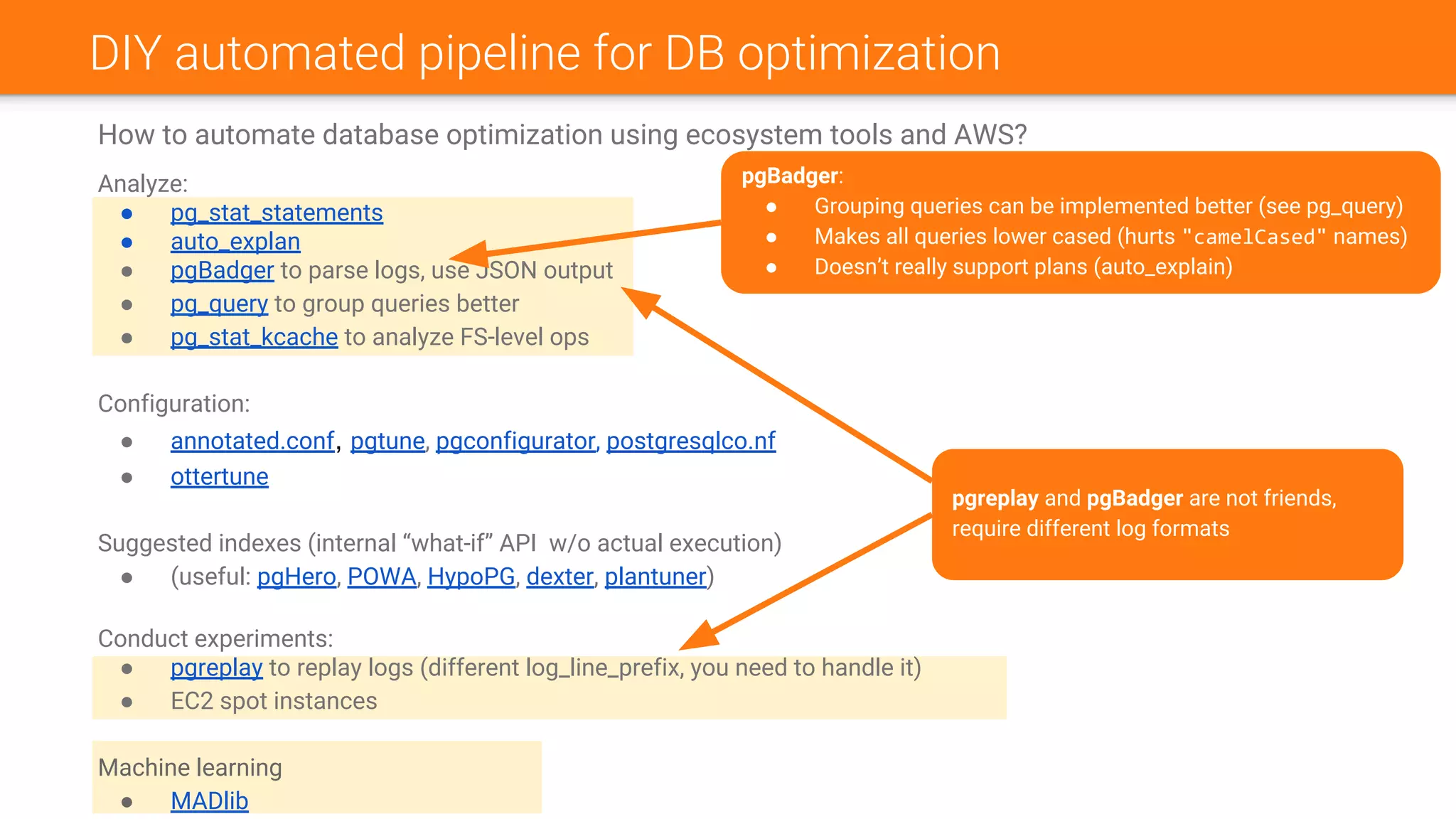

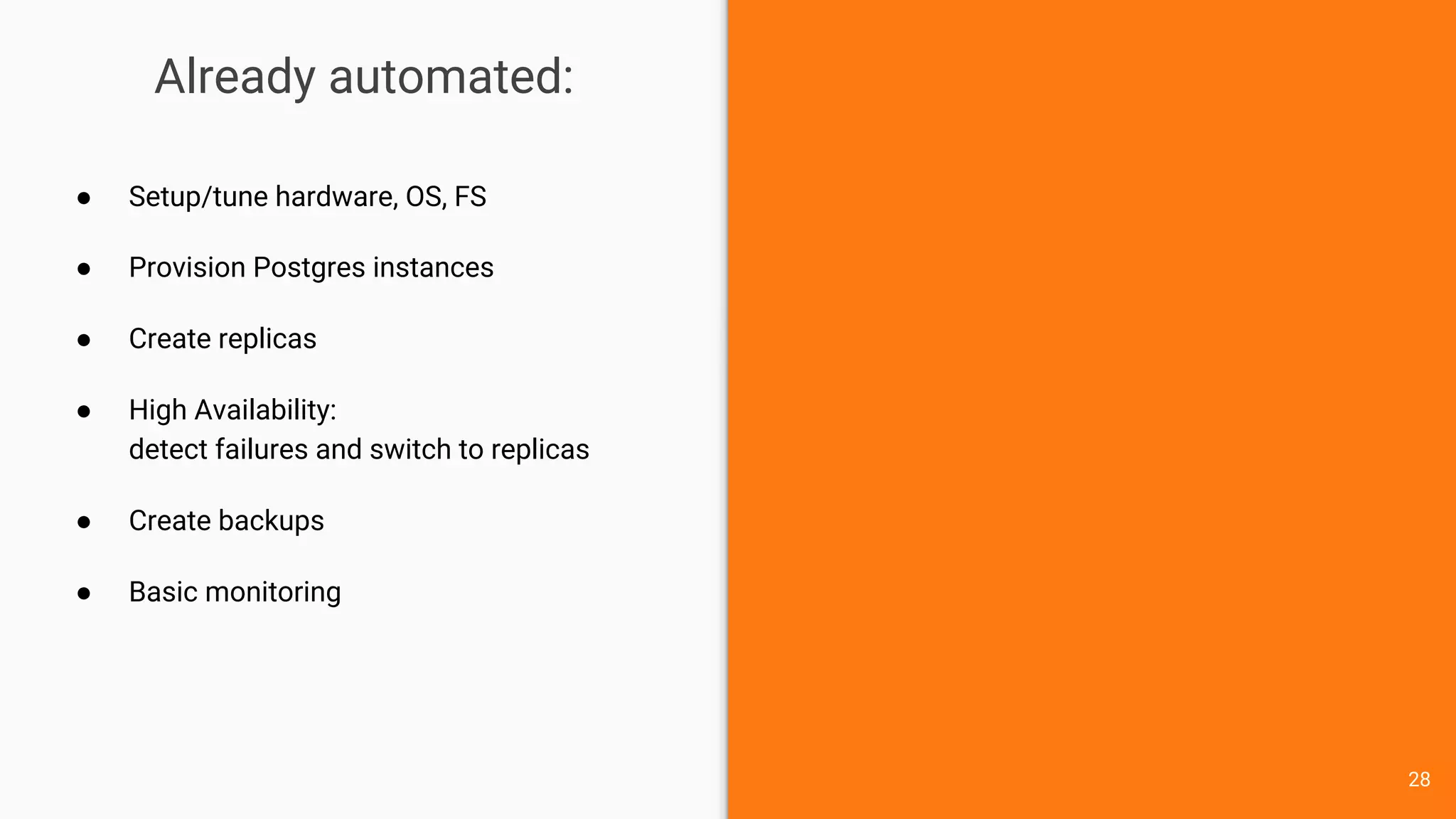

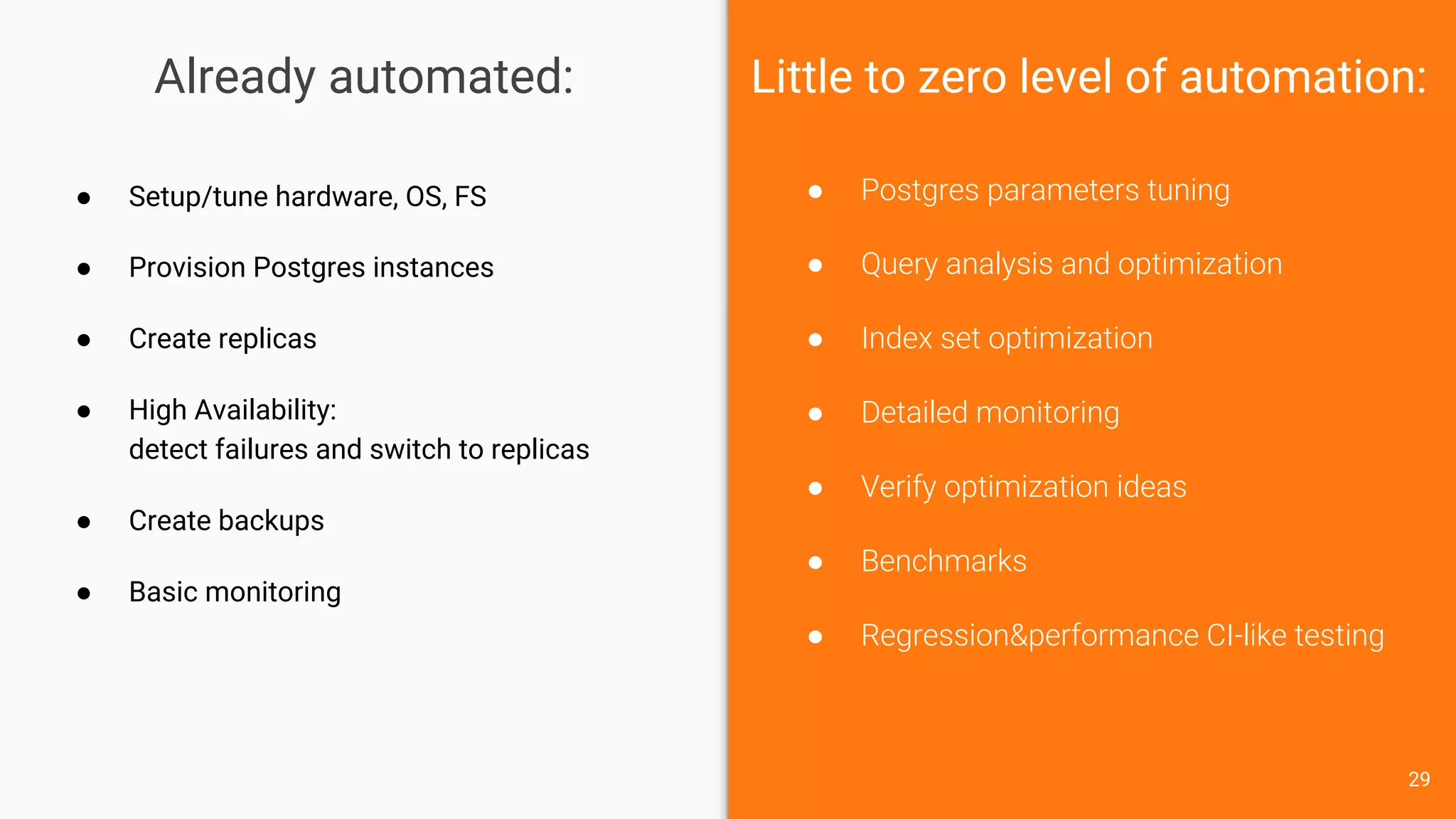

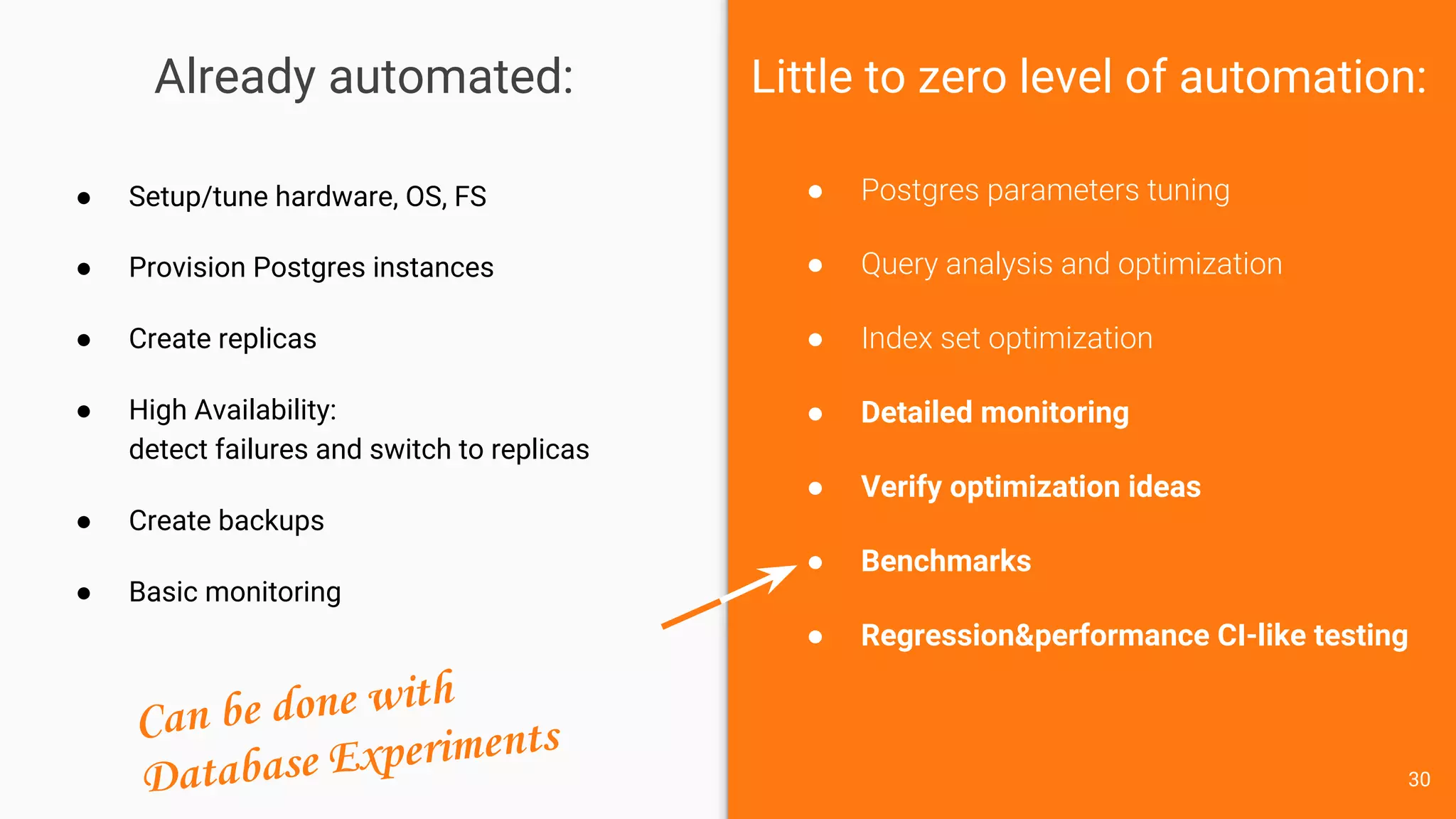

The document discusses Nancy CLI, an automated system for managing database experiments within PostgreSQL environments, highlighting its features including query performance analysis and workload management. It outlines the need for automation in database administration to improve performance and optimize configurations based on experimental data. Existing tools, the development pipeline, and future enhancements for Nancy CLI are also reviewed, emphasizing its potential in continuous database optimization and administration.

![What is the Database Experiment?

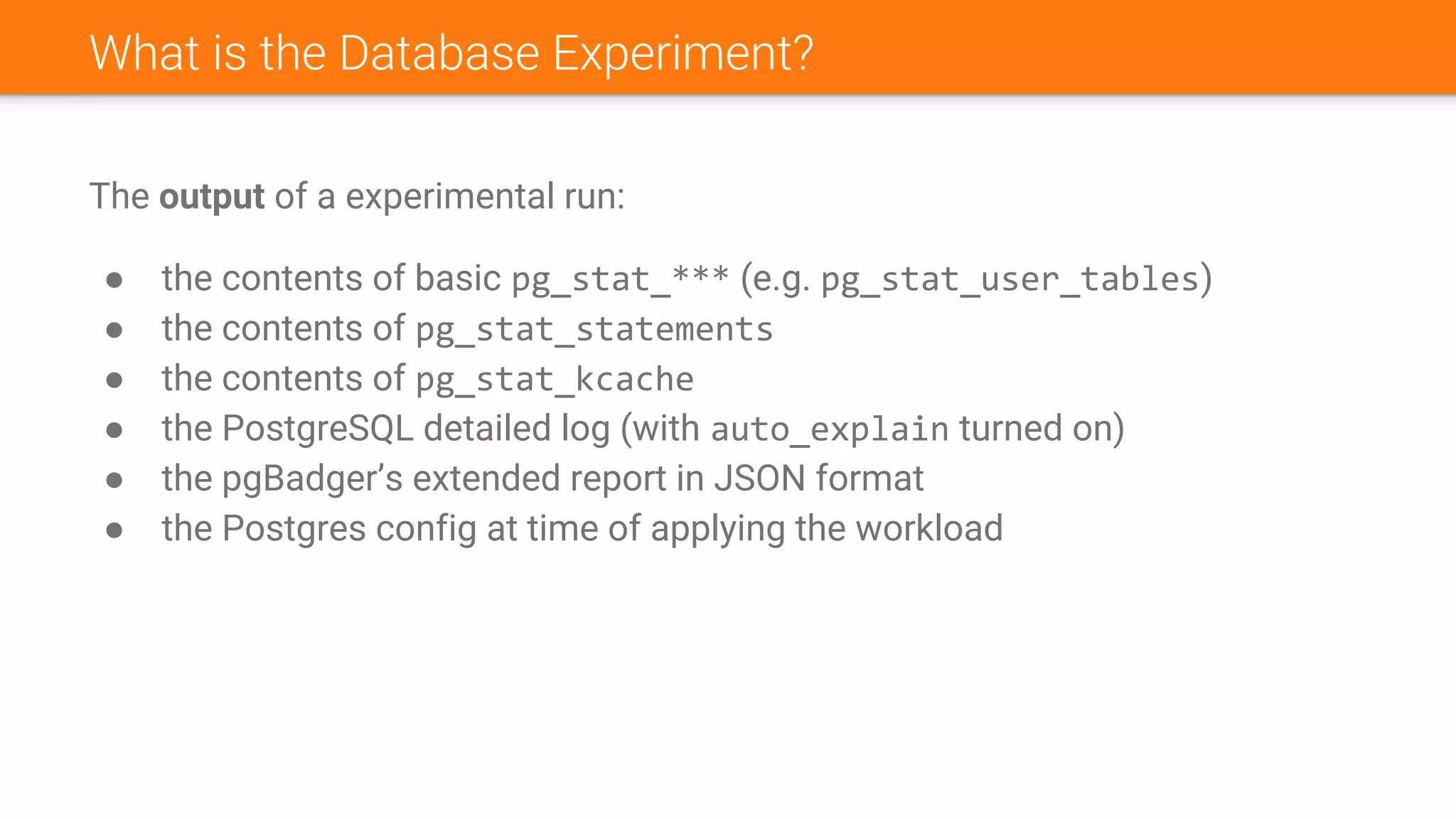

The input of a experimental run:

● Environment

○ Location (on-premise or GCP or AWS), hardware (CPU, RAM, disks)

○ System (OS, file system)

○ Postgres version

○ Postgres configuration

● Database snapshot. Can be:

○ A dump (regular or in directory format)

○ A physical archive (pg_basebackup or pgBackRest/WAL-E/WAL-G/…)

○ A replica promoted for experiments

○ Some synthetic one, a generated database (“create table as …”, pgbench -i, etc)

● Workload. Can be:

○ Synthetic (custom SQL), single-threaded

○ Synthetic (custom SQL), multi-threaded (with pgbench)

○ “Real workload” (based on logs)

● [Optional] Delta:

○ Configuration change(s) (e.g.: shared_buffers = 16GB)

○ Some DDL (e.g.: `create index …`). “Undo” DDL is required in this case `drop index …`) to enable

serialization of experiments](https://image.slidesharecdn.com/nancycli-180725050819/75/Nancy-CLI-Automated-Database-Experiments-39-2048.jpg)
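The four inputs listed above can be modelled as a small data structure. The following is only an illustrative sketch: the class and field names (`Environment`, `Delta`, `ExperimentRun`, `ddl_do`, `ddl_undo`) are hypothetical and are not taken from Nancy CLI itself. It mainly shows the rule that a DDL delta has to come with its "undo" statement so that runs can be serialized.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Environment:
    # All field names here are assumptions made for illustration.
    location: str              # "on-premise", "GCP" or "AWS"
    hardware: str              # CPU, RAM, disks
    system: str                # OS, file system
    postgres_version: str
    postgres_config: dict = field(default_factory=dict)


@dataclass
class Delta:
    # Optional change applied on top of the baseline run.
    config_changes: dict = field(default_factory=dict)
    ddl_do: Optional[str] = None     # e.g. "create index …"
    ddl_undo: Optional[str] = None   # e.g. "drop index …"

    def validate(self) -> None:
        # A DDL change without its undo cannot be rolled back before
        # the next experiment in the series, so reject it early.
        if self.ddl_do and not self.ddl_undo:
            raise ValueError("a DDL delta requires a matching undo DDL")


@dataclass
class ExperimentRun:
    environment: Environment
    snapshot: str              # dump, physical archive, promoted replica, or synthetic
    workload: str              # synthetic (single/multi-threaded) or log-based
    delta: Optional[Delta] = None

    def validate(self) -> None:
        if self.delta is not None:
            self.delta.validate()


env = Environment(
    location="AWS",
    hardware="CPU, RAM, disks",
    system="OS, file system",
    postgres_version="10",
    postgres_config={"shared_buffers": "4GB"},
)
run = ExperimentRun(
    environment=env,
    snapshot="pgbench -i",
    workload="synthetic, multi-threaded (pgbench)",
    delta=Delta(ddl_do="create index …", ddl_undo="drop index …"),
)
run.validate()  # passes: the DDL delta carries its undo statement
```

Keeping the delta separate from the environment makes it straightforward to run the same environment, snapshot, and workload with and without the change and compare the two results.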