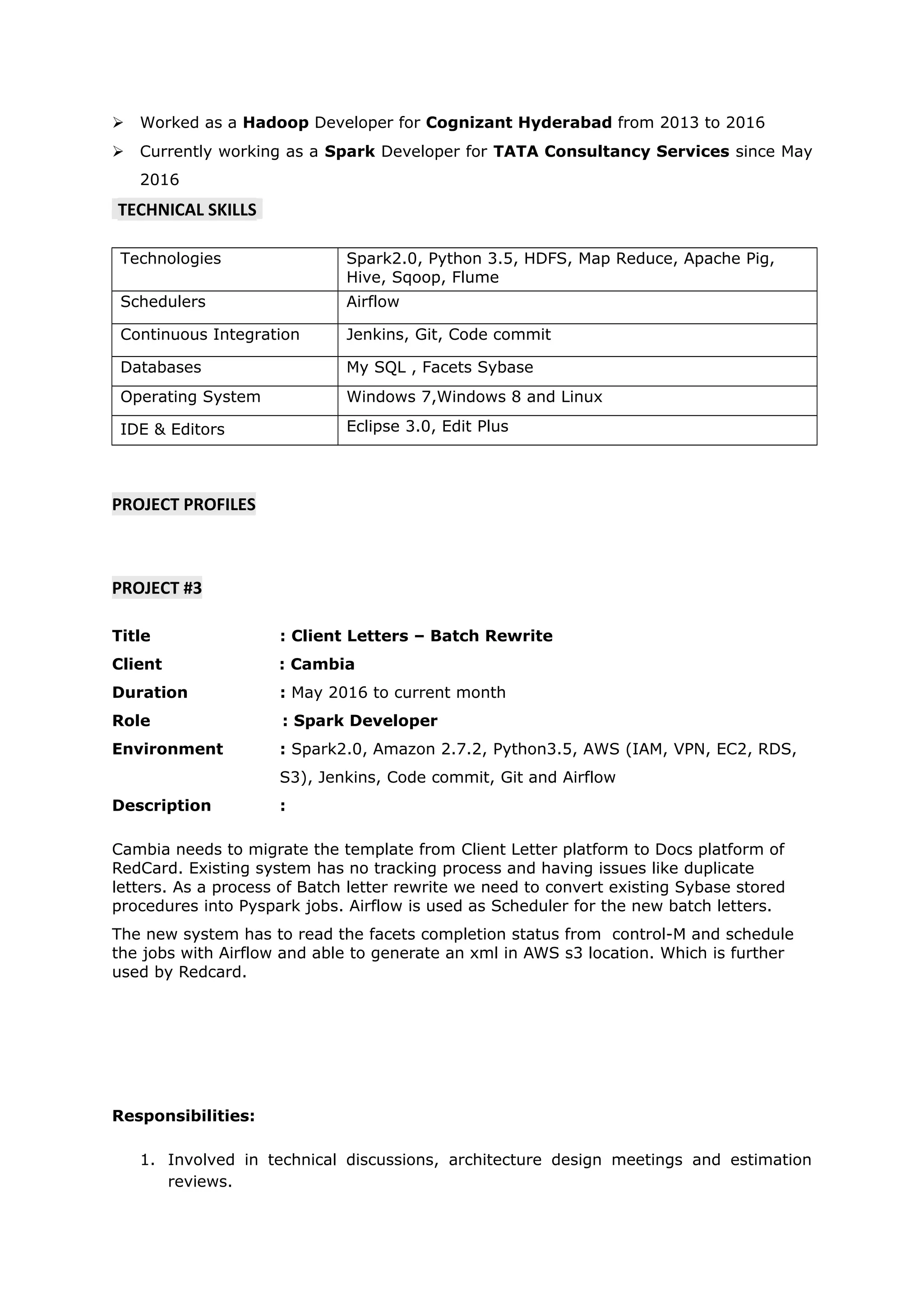

Nagarjuna Damarla is seeking a position that allows him to contribute fully and continue learning. He has four years of experience with big data tools such as Spark, AWS, and HDFS, as well as reporting tools. In his current role at Cambia Health, he develops Spark jobs to migrate templates from one platform to another. Previously, he worked on data analysis projects involving Hadoop, Hive, Pig, and SQL at Capital One and Great Eastern Life Insurance.