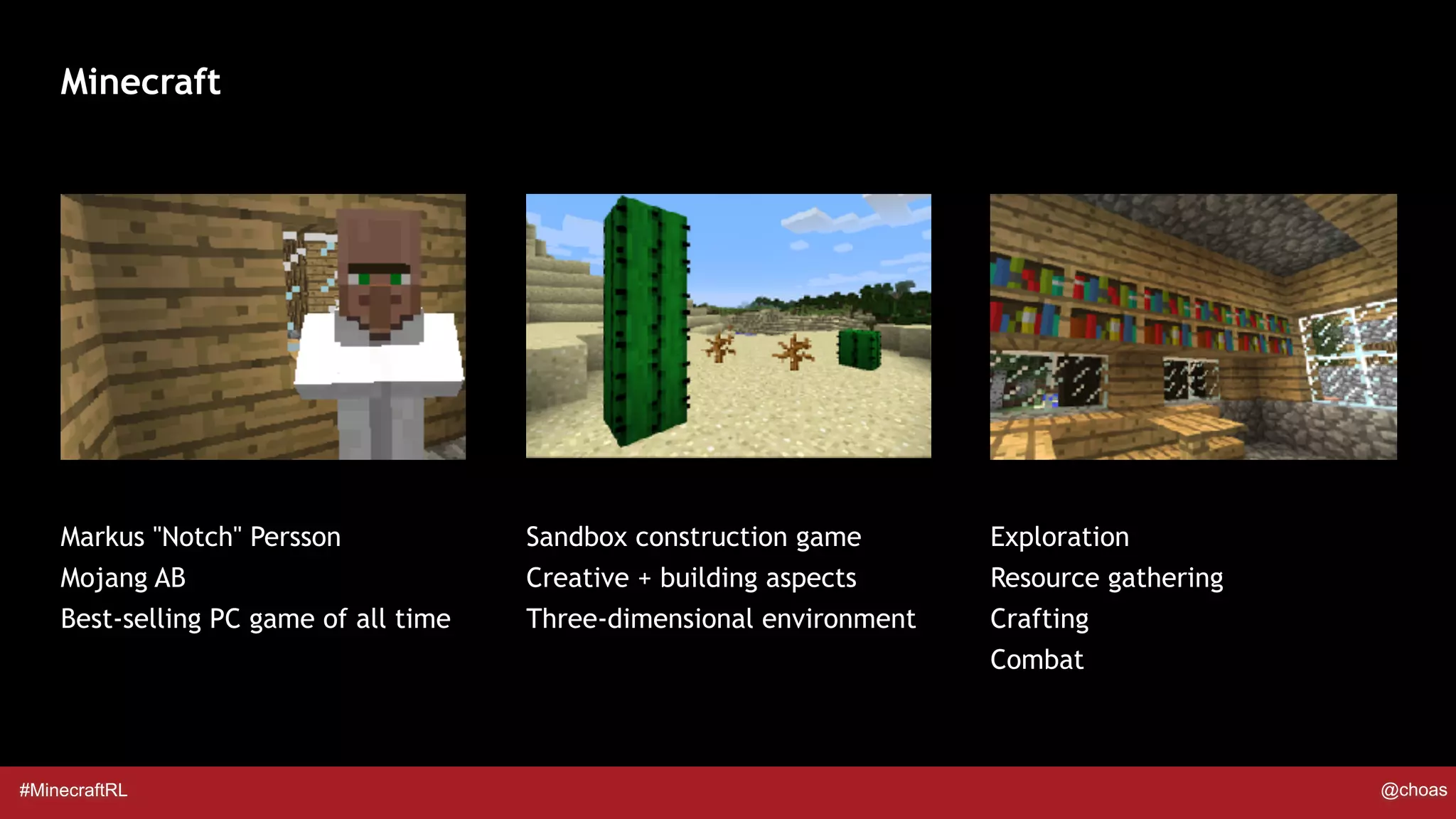

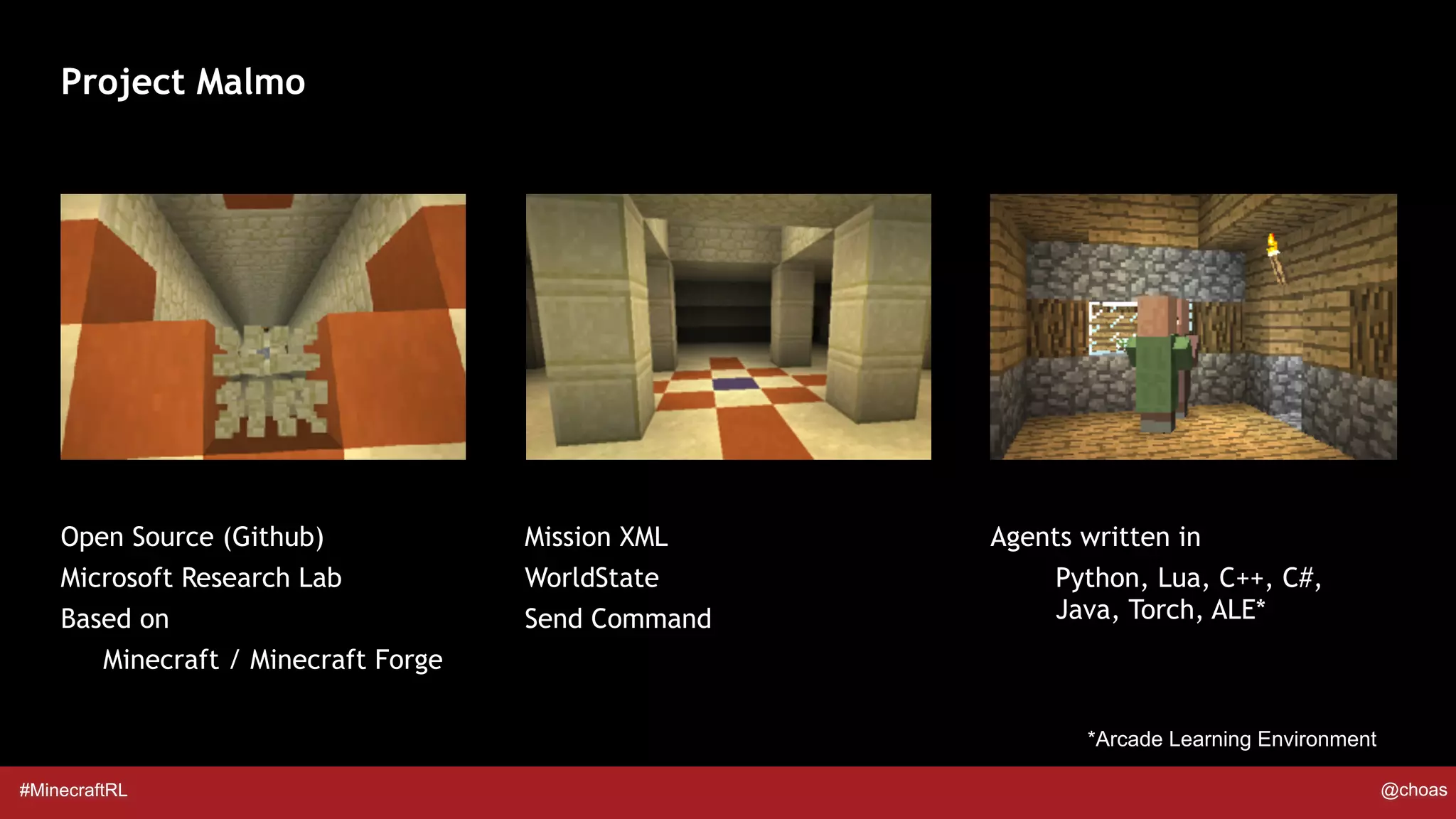

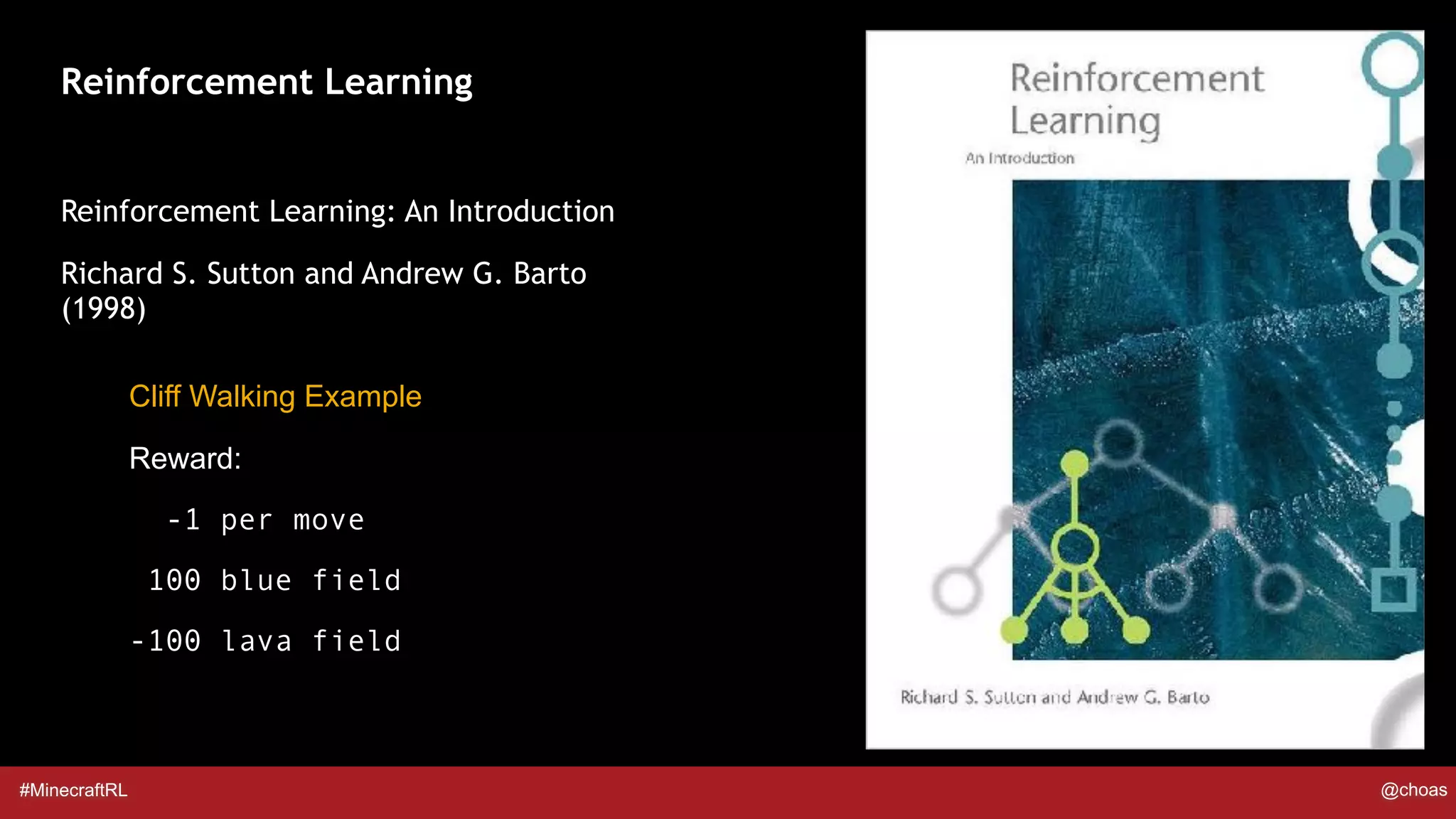

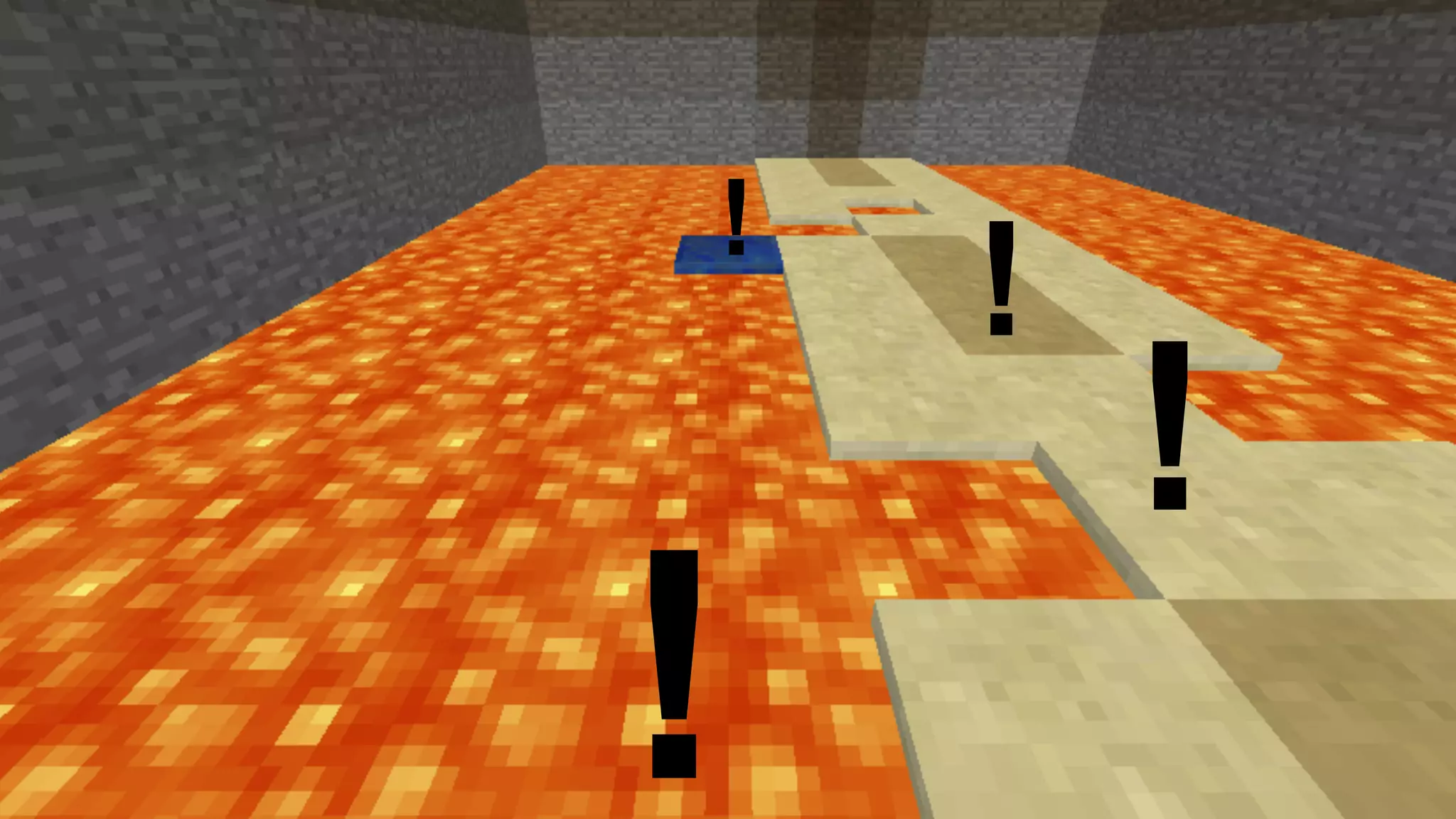

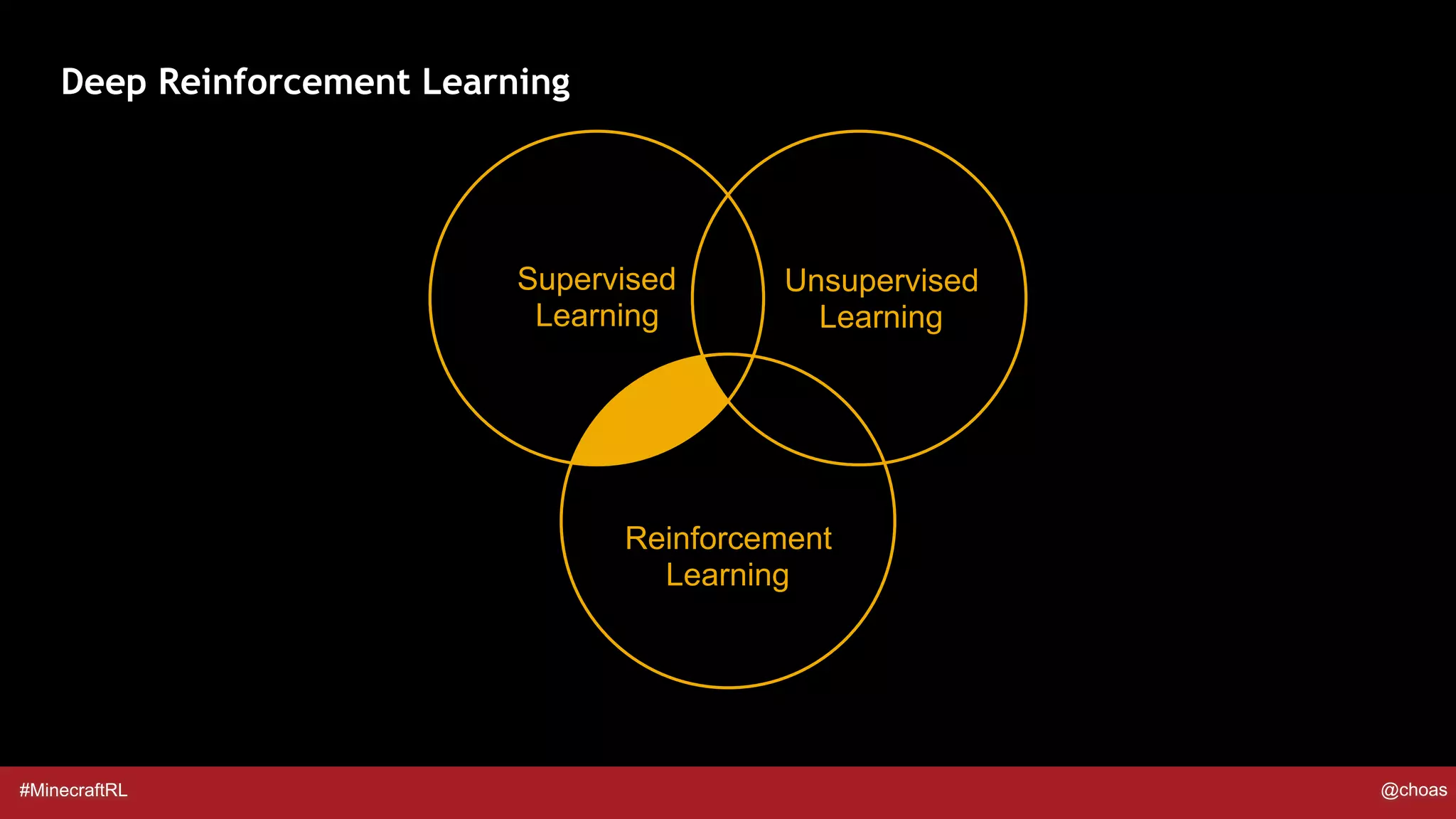

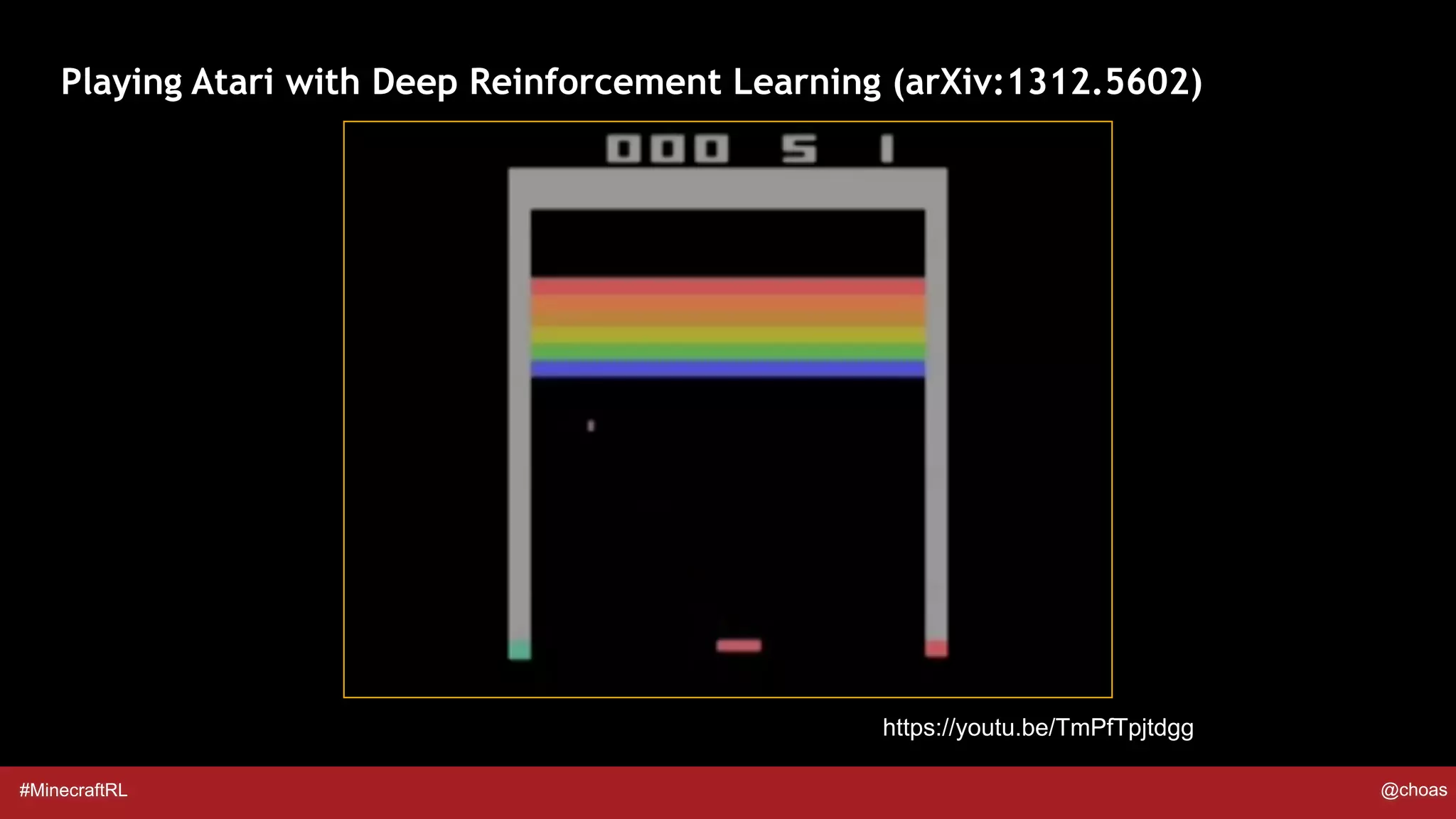

These slides introduce Project Malmo, an open-source platform for AI experimentation built on Minecraft that supports research in areas such as reinforcement learning, computer vision, and robotics. They focus on Q-learning: the update rule, its step-size (ALPHA) and discount-rate (GAMMA) parameters, and how a Q-table fills in for a Minecraft map with lava hazards. The deck closes with a Keras convolutional model based on arXiv:1312.5602.

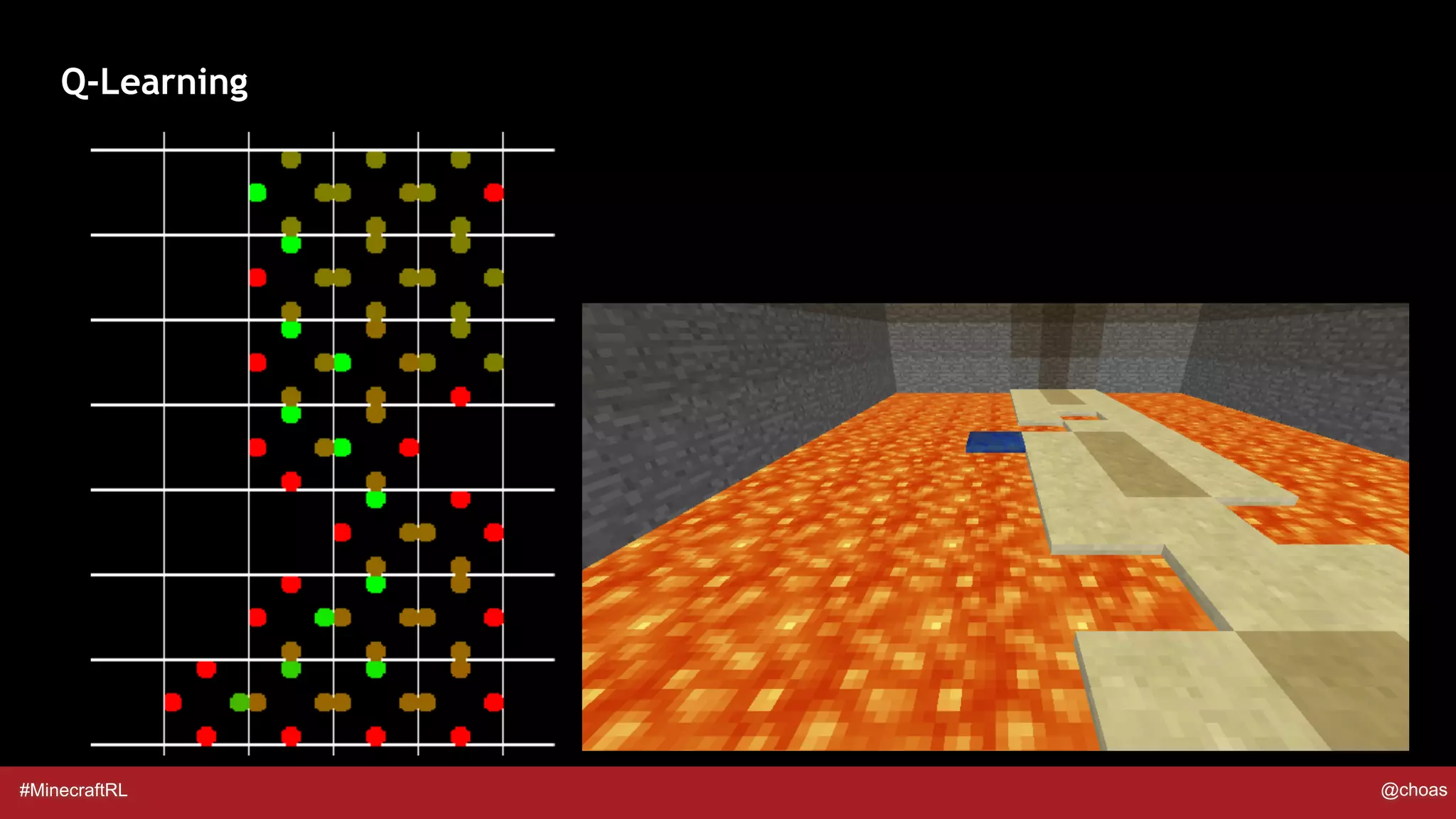

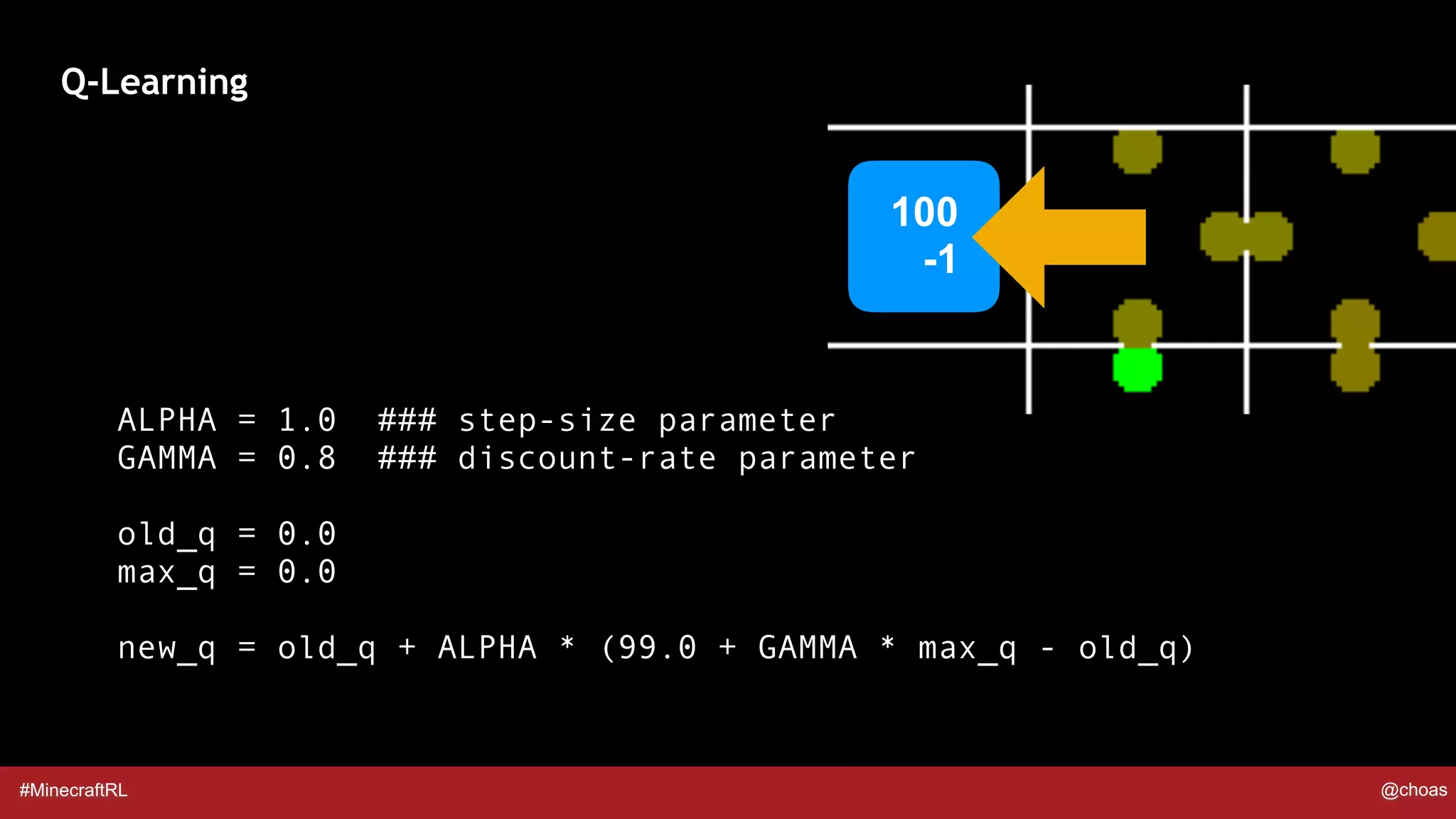

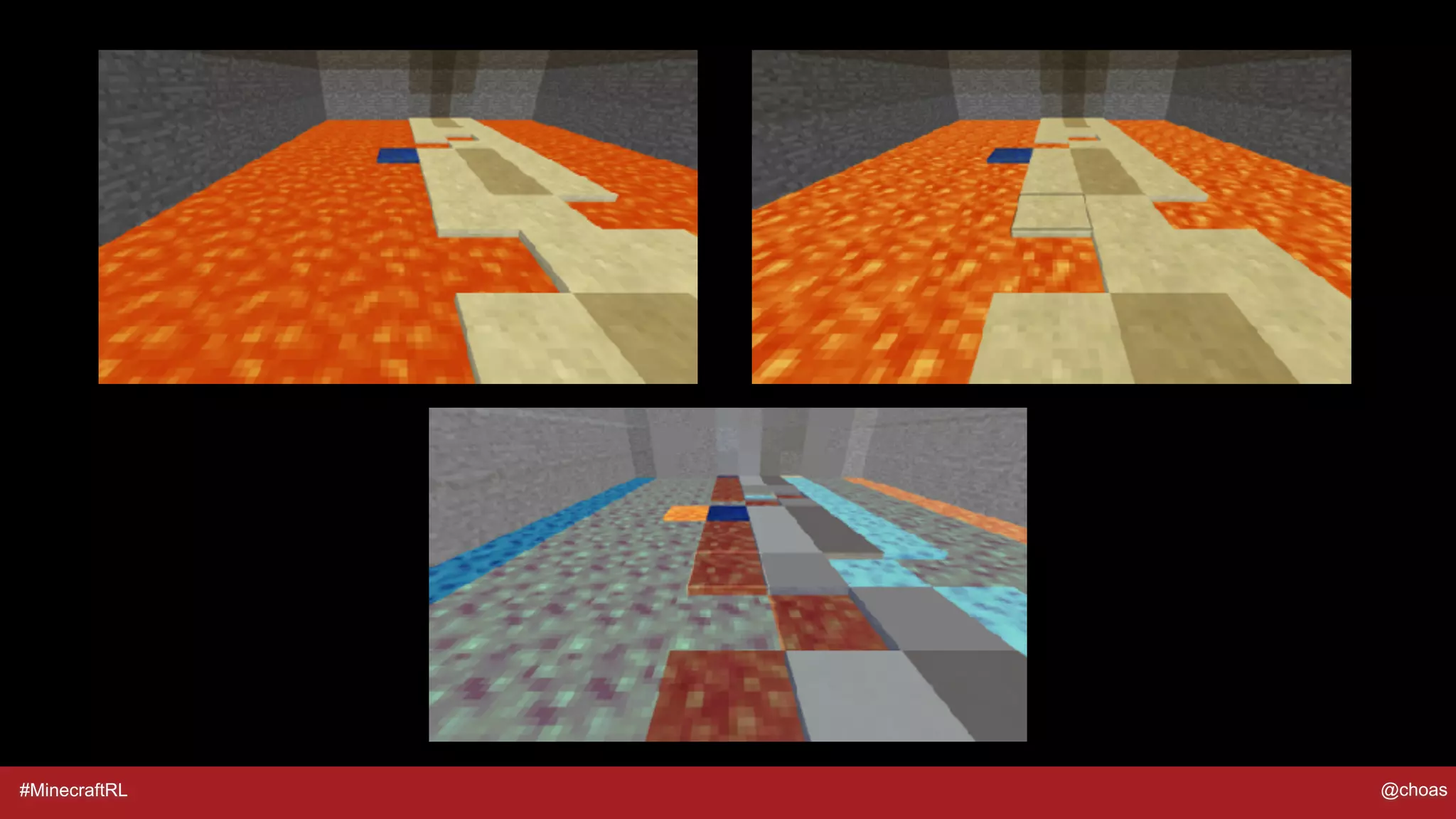

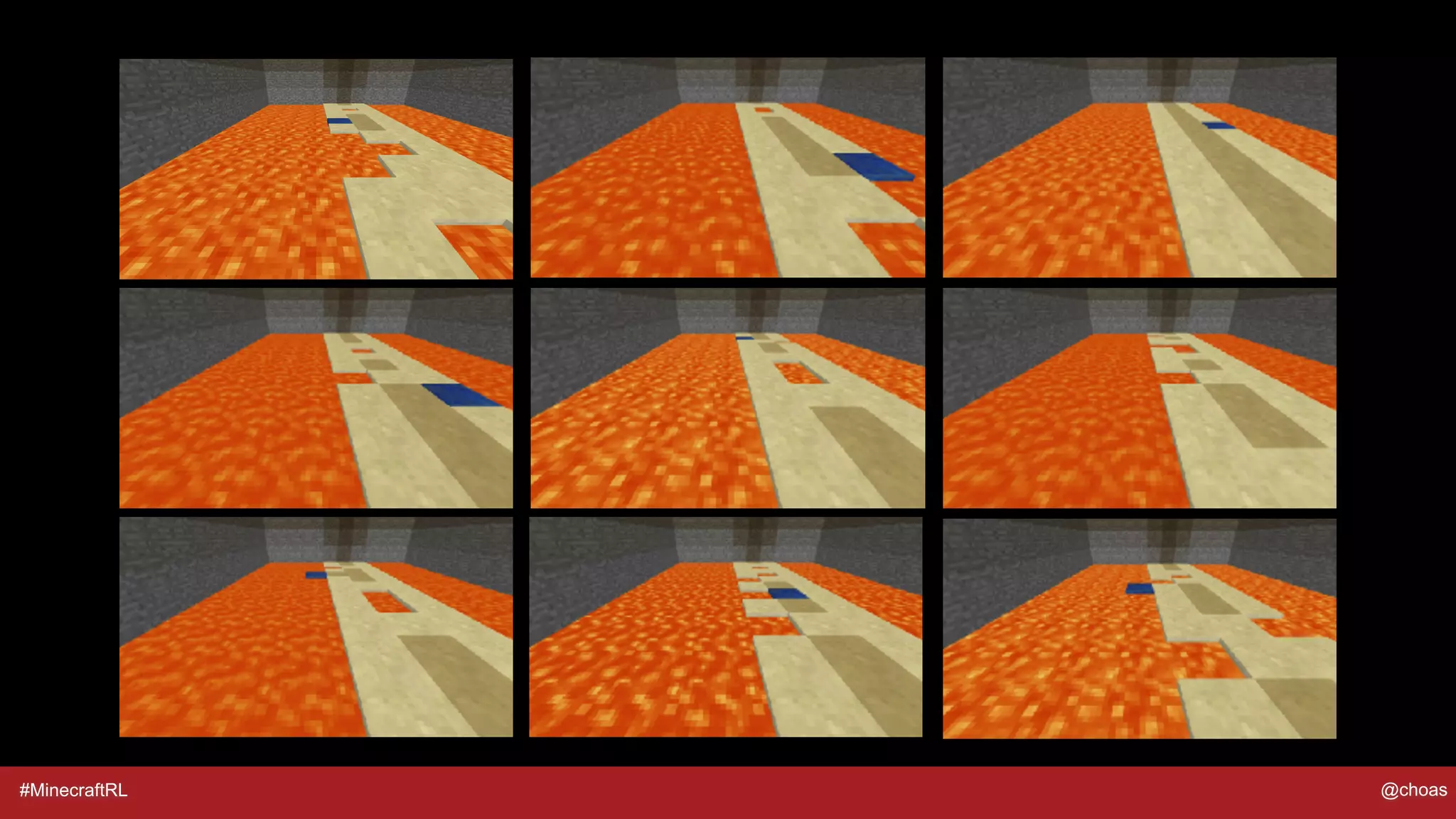

![#MinecraftRL @choas

Q-Learning

ALPHA = 1.0 ### step-size parameter

GAMMA = 0.8 ### discount-rate parameter

old_q = q_table[prev_state][prev_action]

max_q = max(q_table[current_state][:])

new_q = old_q + ALPHA * (reward + GAMMA * max_q - old_q)](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-16-2048.jpg)
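
The update on this slide can be wrapped in a small function. The sketch below assumes the Q-table is a dict that maps a state key to a list of per-action values; the state names, the number of actions, and the helper name `update_q` are illustrative and do not come from the slides.

```python
ALPHA = 1.0  # step-size parameter (value from the slide)
GAMMA = 0.8  # discount-rate parameter (value from the slide)


def update_q(q_table, prev_state, prev_action, current_state, reward,
             alpha=ALPHA, gamma=GAMMA):
    """Apply one Q-learning update in place and return the new value."""
    old_q = q_table[prev_state][prev_action]
    max_q = max(q_table[current_state])
    new_q = old_q + alpha * (reward + gamma * max_q - old_q)
    q_table[prev_state][prev_action] = new_q
    return new_q


# Hypothetical two-state table with four actions per state.
q_table = {"s0": [0.0, 0.0, 0.0, 0.0], "s1": [0.0, 10.0, 0.0, 0.0]}
new_value = update_q(q_table, "s0", 2, "s1", reward=-1.0)
print(new_value)
```

With ALPHA = 1.0 the old value cancels out, so the entry is simply overwritten with `reward + GAMMA * max_q`.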

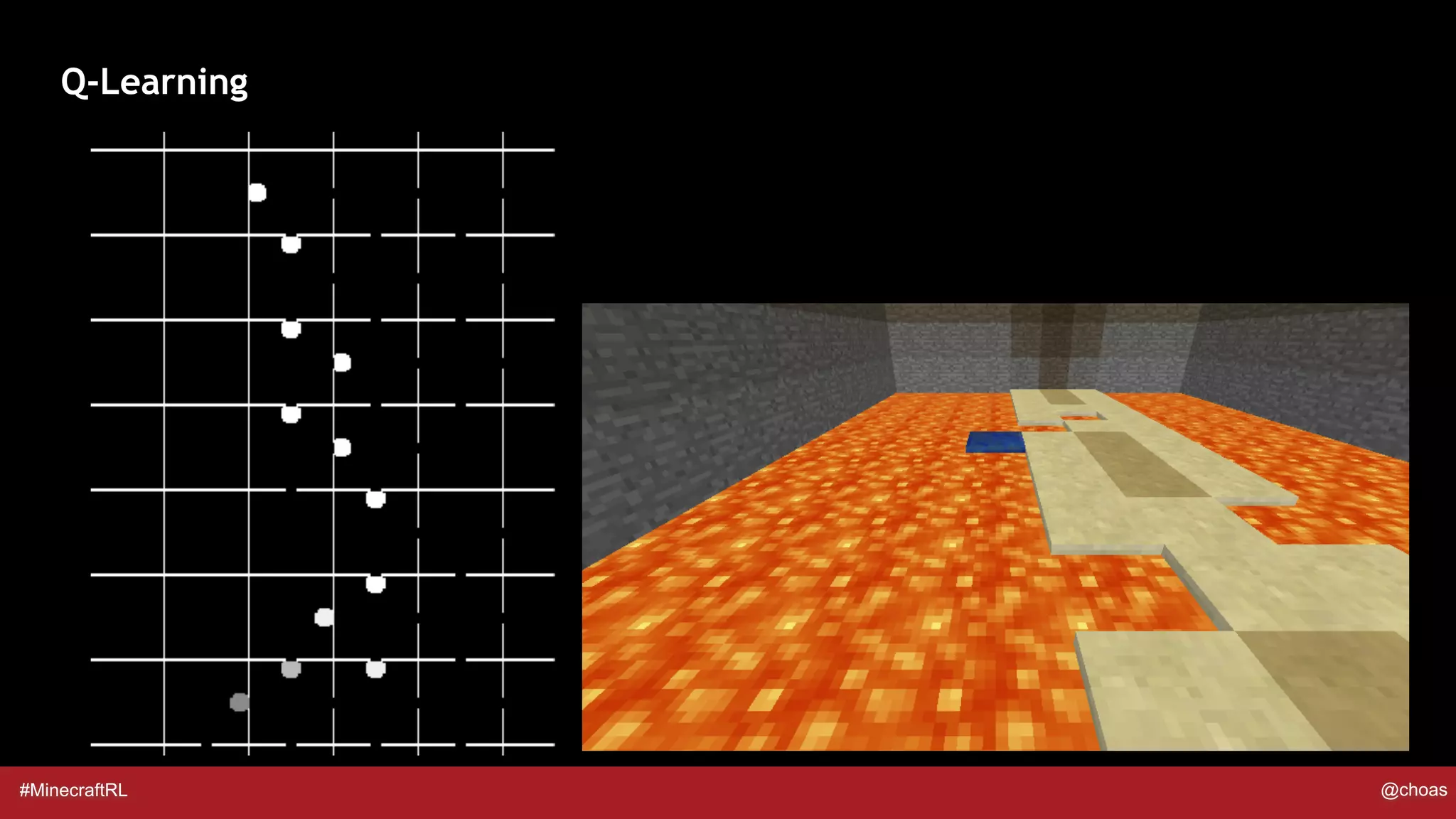

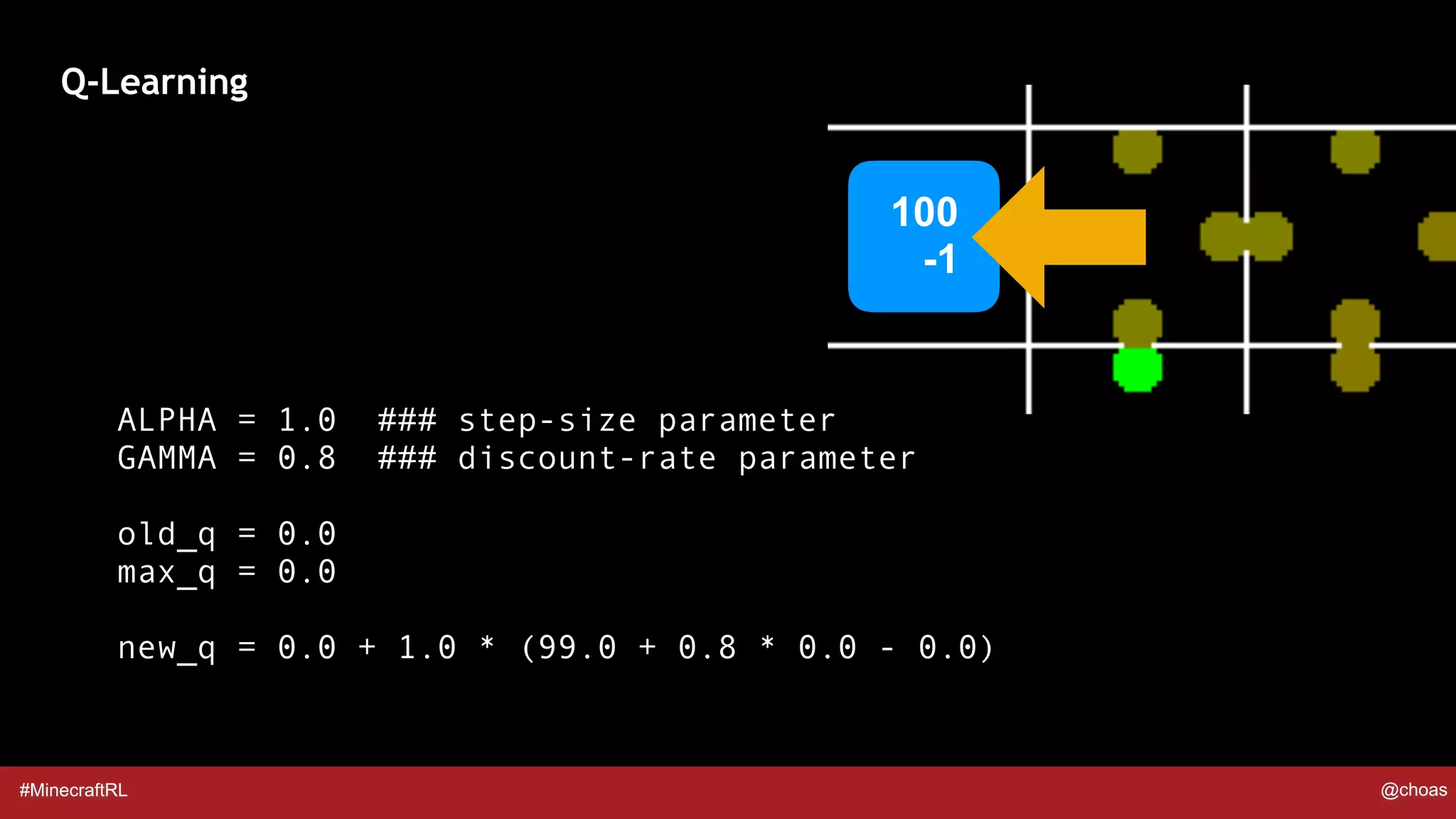

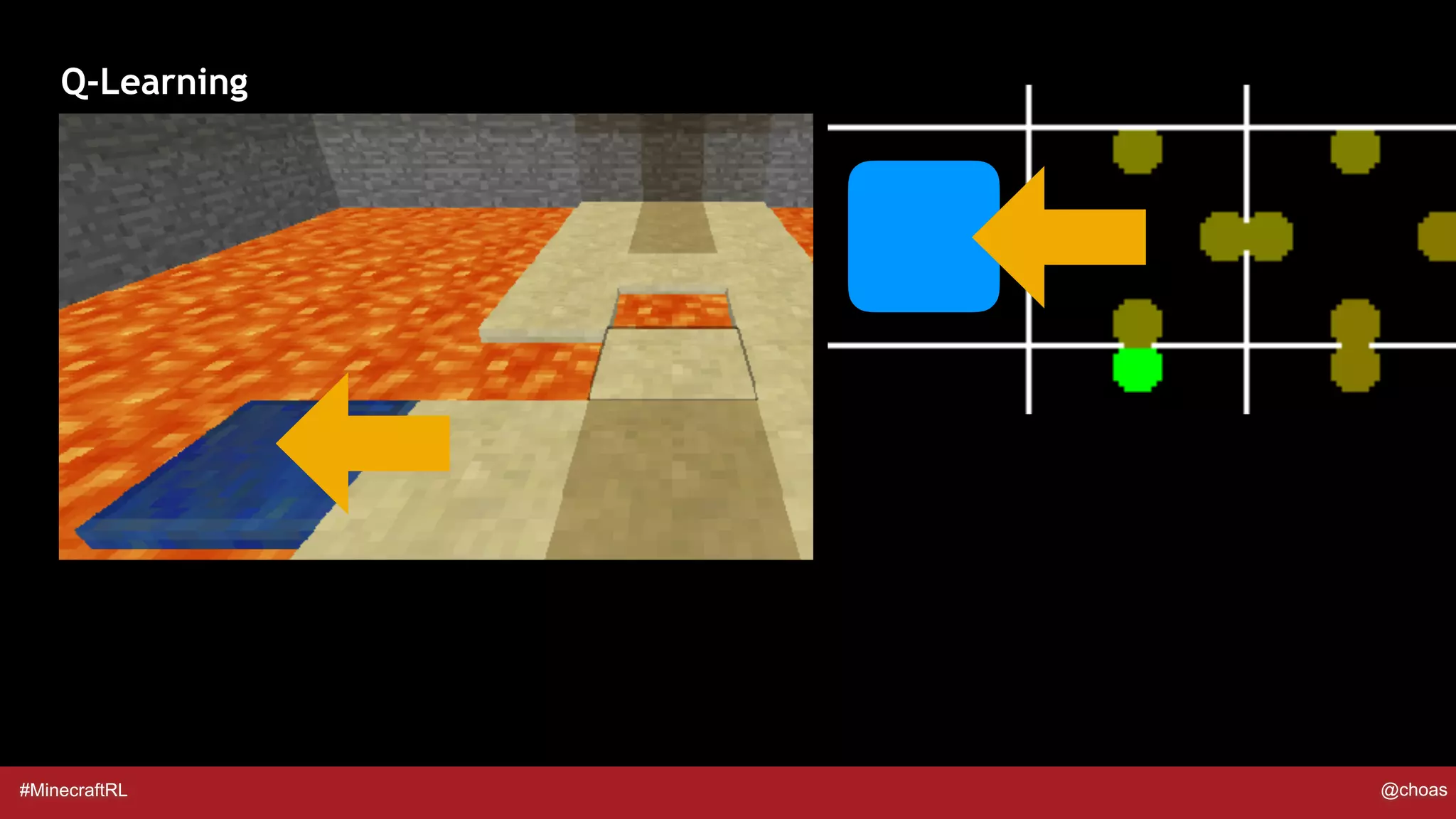

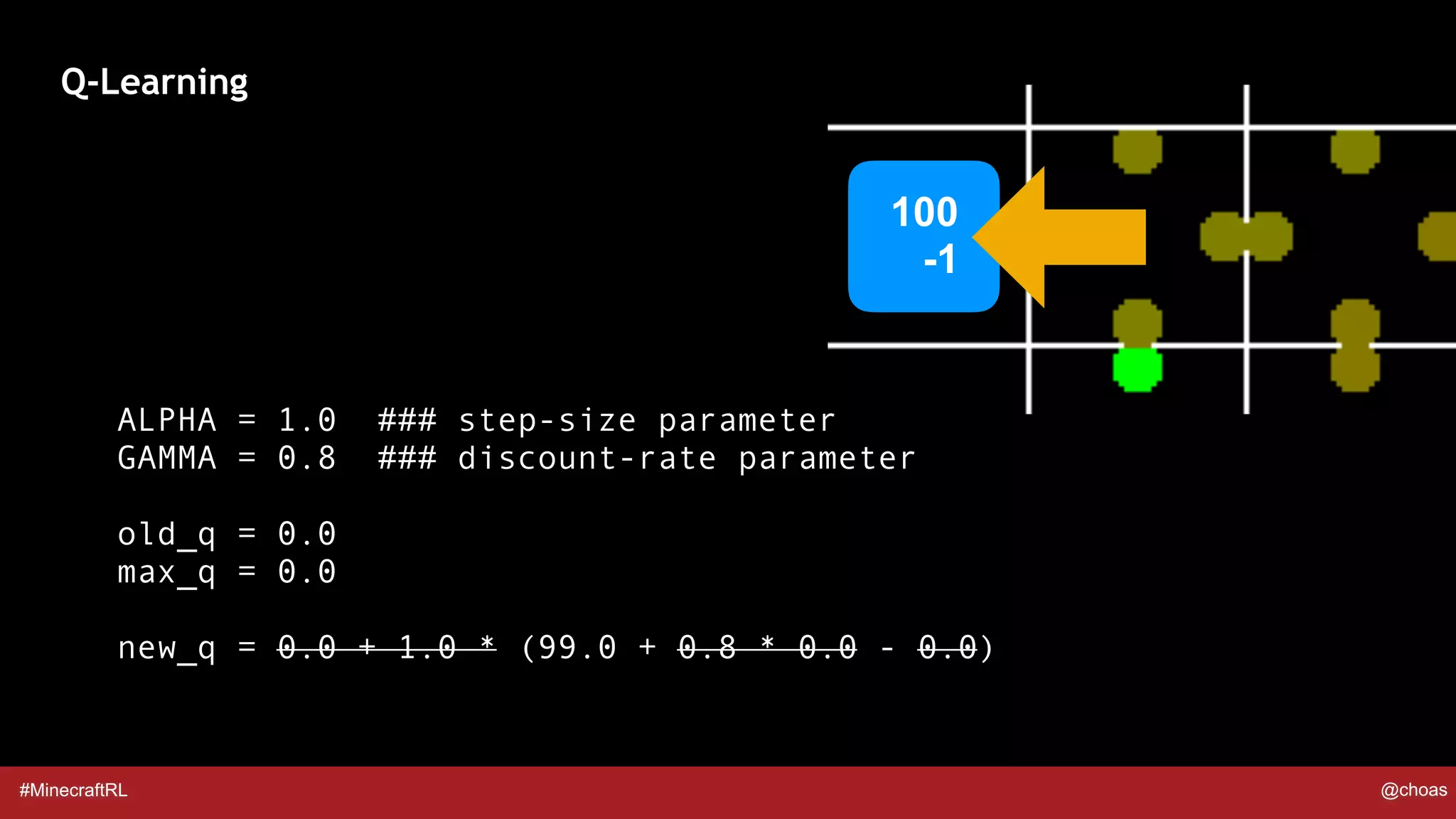

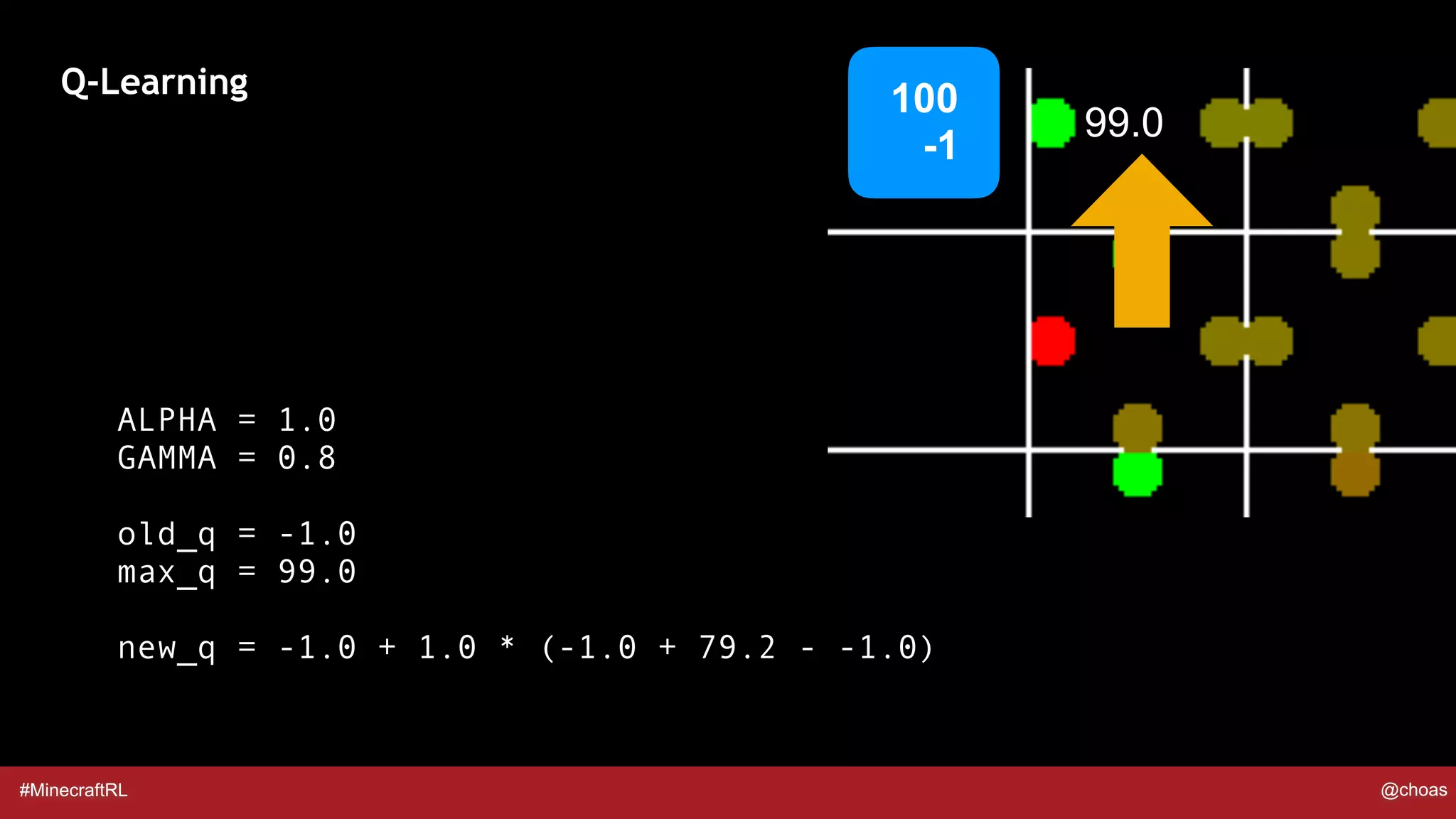

![#MinecraftRL @choas

Q-Learning

ALPHA = 1.0 ### step-size parameter

GAMMA = 0.8 ### discount-rate parameter

old_q = 0.0

max_q = max(q_table[current_state][:])

new_q = old_q + ALPHA * (reward + GAMMA * max_q - old_q)](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-19-2048.jpg)

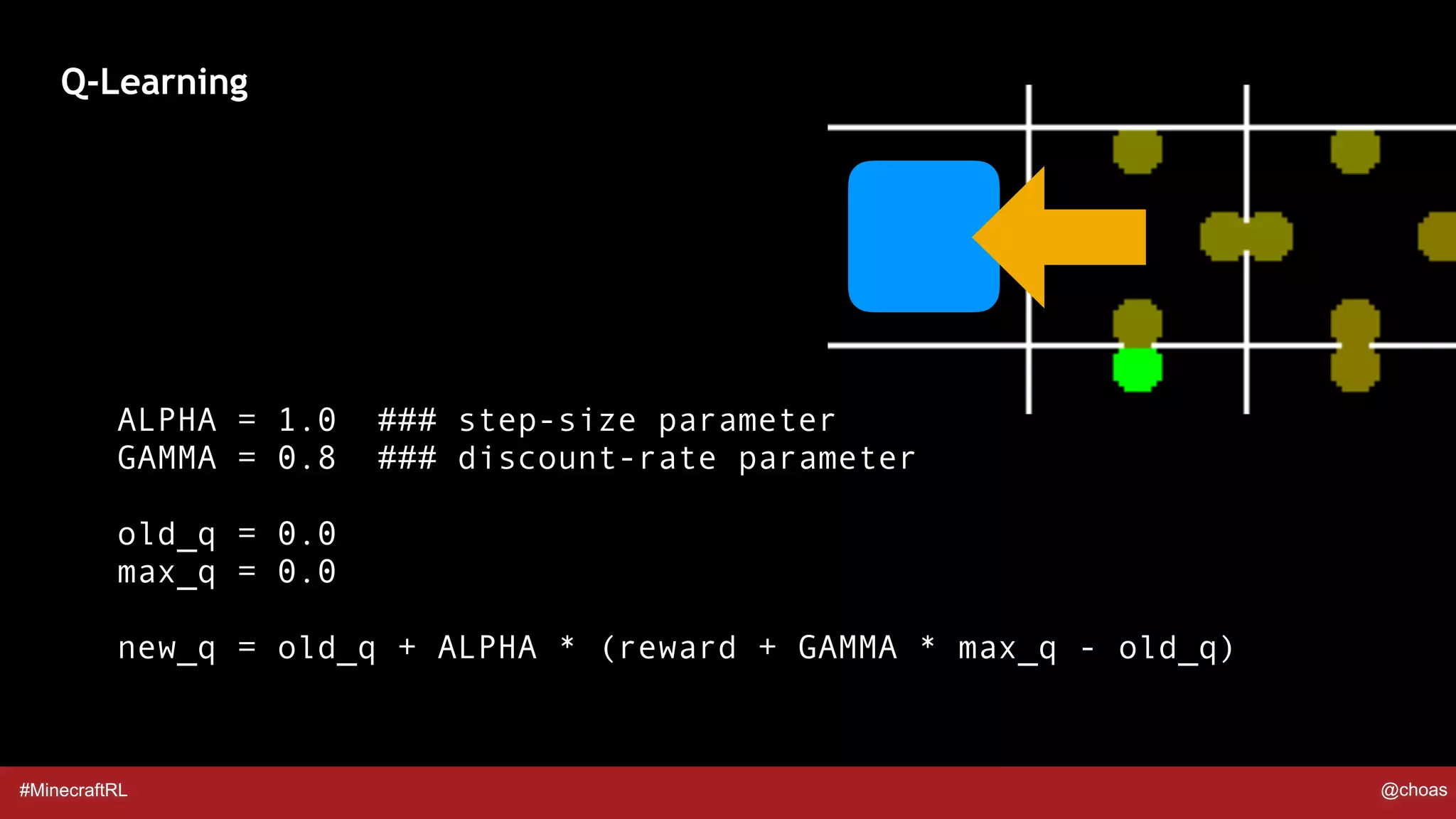

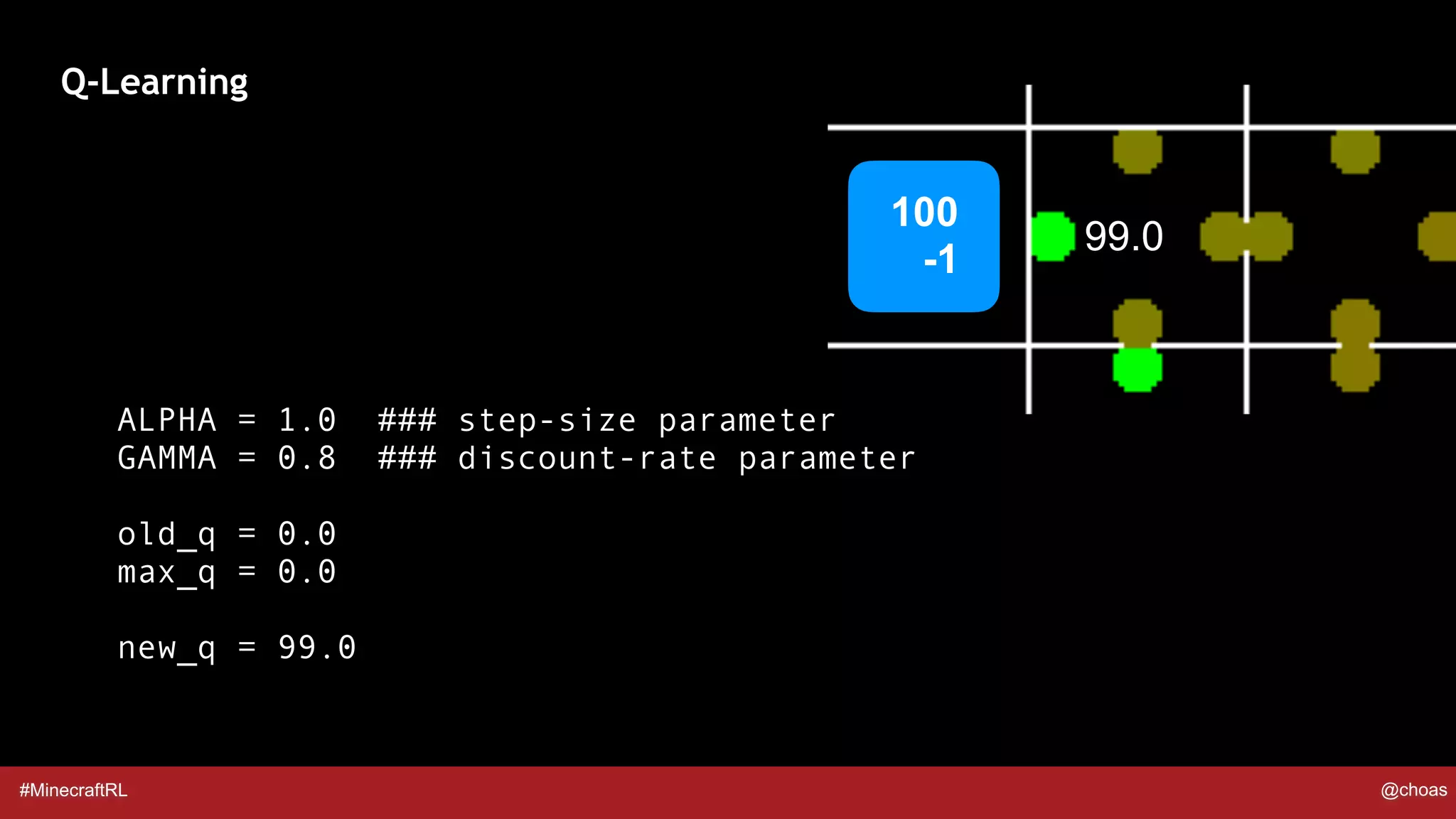

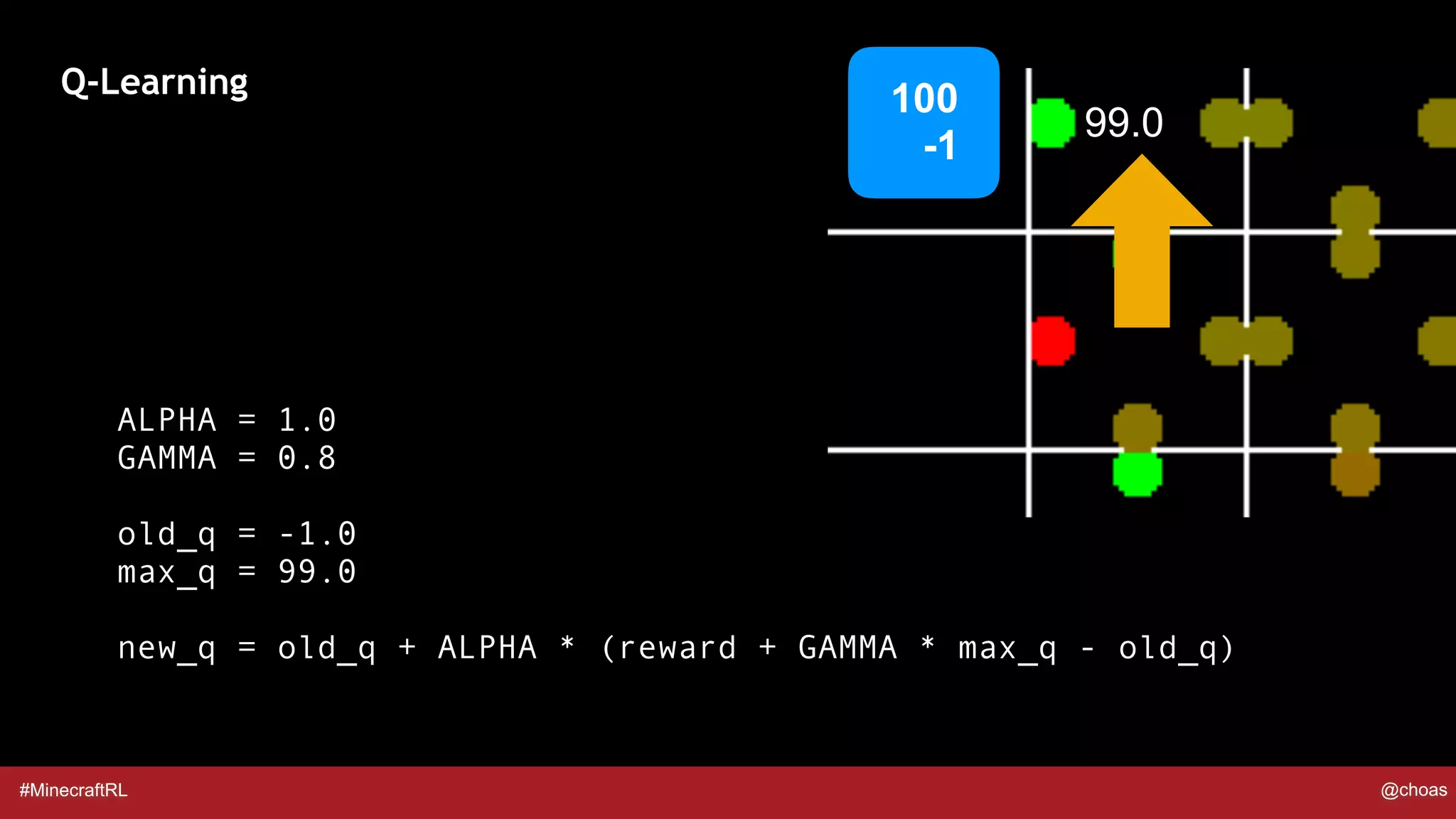

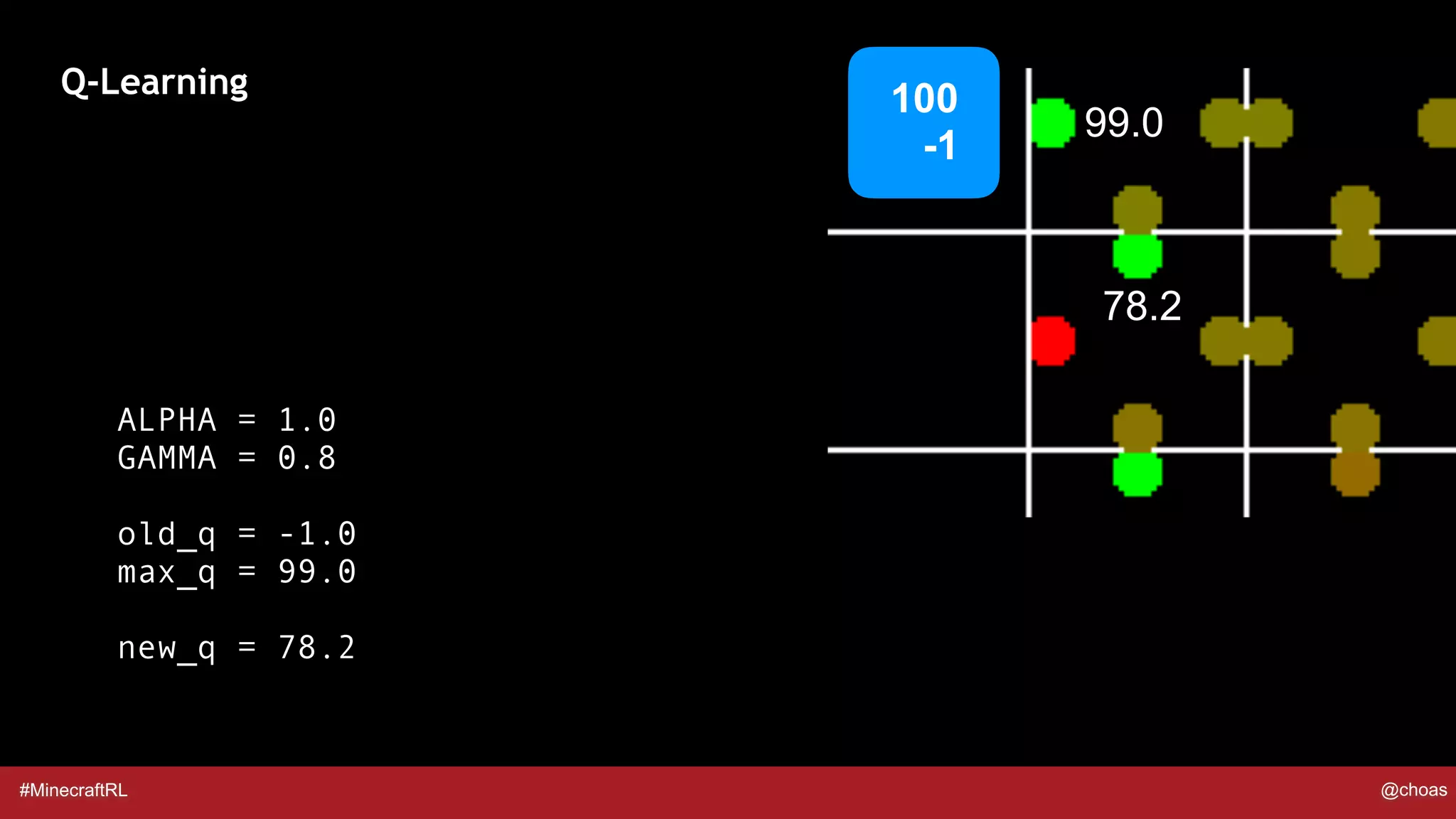

![#MinecraftRL @choas

Q-Learning

100

-1

99.0

ALPHA = 1.0

GAMMA = 0.8

old_q = q_table[prev_state][prev_action]

max_q = max(q_table[current_state][:])

new_q = old_q + ALPHA * (reward + GAMMA * max_q - old_q)](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-26-2048.jpg)

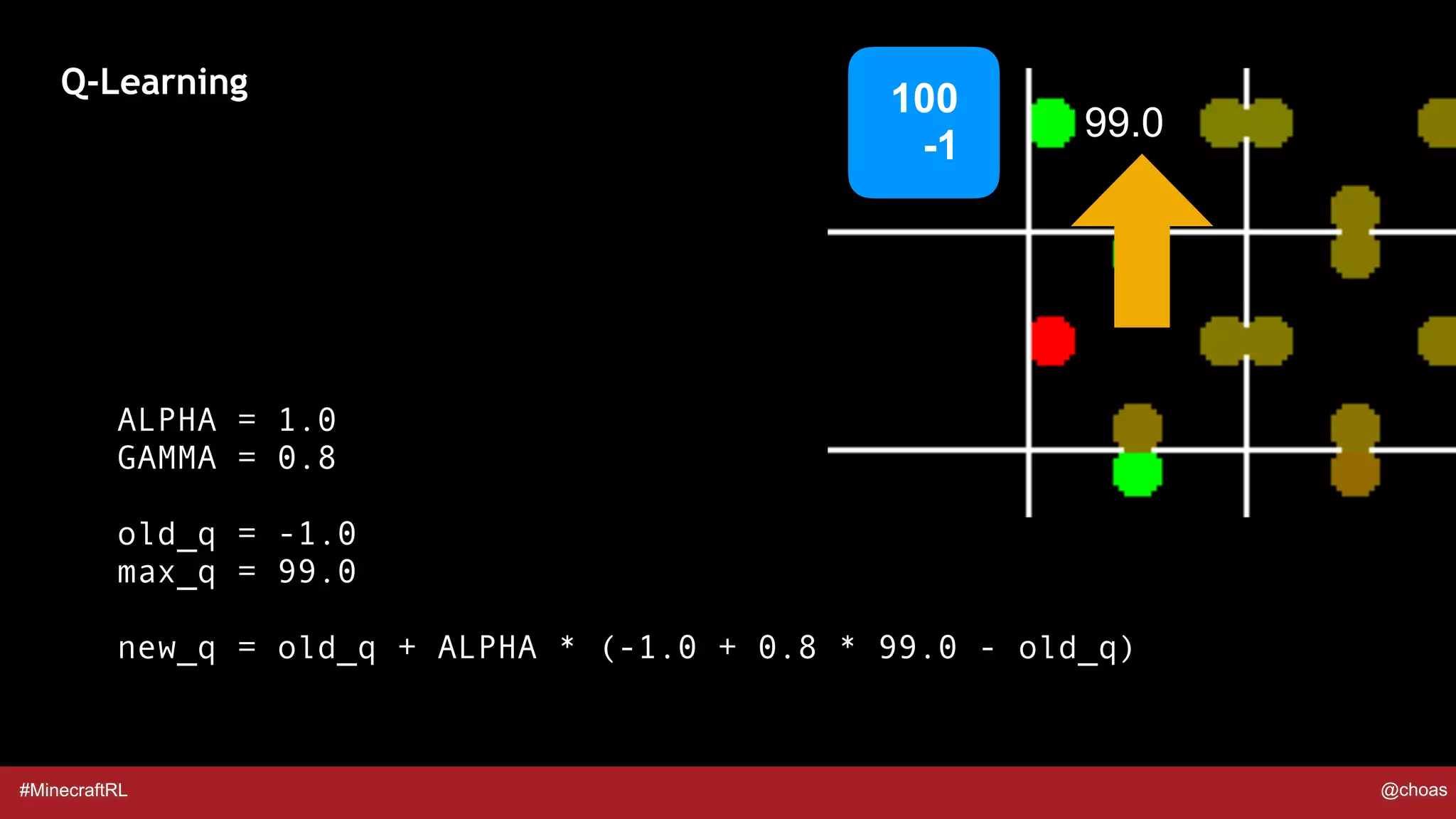

![#MinecraftRL @choas

Q-Learning

100

-1

99.0

ALPHA = 1.0

GAMMA = 0.8

old_q = -1.0

max_q = max(q_table[current_state][:])

new_q = old_q + ALPHA * (reward + GAMMA * max_q - old_q)](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-27-2048.jpg)
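
One way to read the numbers 100, -1 and 99.0 on these slides is as a goal reward, a per-move cost, and the resulting Q-value. The sketch below works through that reading; the interpretation of the three numbers and the assumption that `max_q` is still 0.0 for the goal state are mine, not stated on the slides.

```python
ALPHA = 1.0
GAMMA = 0.8

goal_reward = 100.0  # assumed: reward for reaching the goal
move_cost = -1.0     # assumed: cost charged for every move
reward = goal_reward + move_cost

max_q = 0.0  # assumed: nothing has been learned for the goal state yet

results = []
for old_q in (0.0, -1.0):  # the two starting values shown on the slides
    new_q = old_q + ALPHA * (reward + GAMMA * max_q - old_q)
    results.append(new_q)
    print(old_q, "->", new_q)
```

Because ALPHA is 1.0, both starting values lead to the same result under these assumptions.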

![#MinecraftRL @choas

[99 0 0 0] [ 0 -1 -1 0] [ 0 0 L 0]

[ L -1 -1 -1] [-1 -1 -1 -1] [-1 0 0 0]

[ L -1 -1 -1] [-1 -1 -1 -1] [-1 L 0 0]

[ L L -2 -1] [-2 -2 L -1]

[ L -2 -2 -2] [-2 -2 L L]

[ L -3 -2 L] [-2 -3 -2 -2] [-2 -3 L -2]

[ L L -3 L] [-3 L -3 -3] [-3 L -3 -3] [-2 L L -3]

Q Table

L = Lava

[ ← ↓ → ↑ ]](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-35-2048.jpg)

![#MinecraftRL @choas

[99 0 0 0] [ 0 -1 -1 0] [ 0 0 L 0]

[ L -1 -1 78] [-1 -1 -1 -1] [-1 0 0 0]

[ L -1 -1 -1] [-1 -1 -1 -1] [-1 L 0 0]

[ L L -2 -1] [-2 -2 L -1]

[ L -2 -2 -2] [-2 -2 L L]

[ L -3 -2 L] [-2 -3 -2 -2] [-2 -3 L -2]

[ L L -3 L] [-3 L -3 -3] [-3 L -3 -3] [-2 L L -3]

Q Table

L = Lava

[ ← ↓ → ↑ ]](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-36-2048.jpg)
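
Each bracketed group in the Q table holds one value per action in the order given by the legend, with `L` marking a move into lava. A minimal sketch of picking the greedy action from one such group follows; storing lava as `None` and the helper name `best_action` are assumptions made for illustration.

```python
ACTIONS = ["←", "↓", "→", "↑"]  # column order from the slide legend
LAVA = None  # assumption: moves into lava are stored as None


def best_action(row):
    """Return the non-lava action with the highest Q-value."""
    candidates = [(q, i) for i, q in enumerate(row) if q is not LAVA]
    best_q, best_index = max(candidates, key=lambda pair: pair[0])
    return ACTIONS[best_index]


row = [LAVA, -1, -1, 78]  # the cell that gains the value 78 on this slide
choice = best_action(row)
print(choice)
```

For this cell the greedy choice is the upward move, which leads toward the cell holding 99.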

![#MinecraftRL @choas

[99 0 0 0] [ 0 -1 -1 0] [ 0 0 L -1]

[ L -1 -1 78] [61 -1 -1 -1] [-1 -1 L -1]

[ L -2 -2 61] [-2 -1 -1 -1] [-1 L L -1]

[ L L -2 -2] [-2 -3 L -2]

[ L -2 -3 -2] [-3 -2 L L]

[ L -3 -3 L] [-3 -3 -3 -3] [-2 -3 L -3]

[ L L -3 L] [-3 L -3 -3] [-3 L -3 -3] [-3 L L -3]

Q Table

L = Lava

[ ← ↓ → ↑ ]](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-37-2048.jpg)

![#MinecraftRL @choas

[99 0 0 0] [ 0 -1 -1 0] [ 0 0 L -1]

[ L -1 -1 78] [61 -1 -1 -1] [-1 -1 L -1]

[ L -2 -2 61] [-2 -1 -1 -1] [-1 L L -1]

[ L L -2 48] [-2 -3 L -2]

[ L -2 -3 -2] [-3 -2 L L]

[ L -3 -3 L] [-3 -3 -3 -3] [-3 -3 L -3]

[ L L -3 L] [-3 L -3 -3] [-3 L -3 -3] [-3 L L -3]

Q Table

L = Lava

[ ← ↓ → ↑ ]](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-38-2048.jpg)

![#MinecraftRL @choas

[99 0 0 0] [78 -1 -1 0] [-1 -1 L -1]

[ L -1 -1 78] [61 -1 -1 -1] [48 -1 L -1]

[ L -2 -2 61] [-2 -2 -2 48] [-1 L L -1]

[ L L -2 48] [-2 -3 L 37]

[ L -3 -3 -2] [-3 -3 L L]

[ L -3 -3 L] [-3 -3 -3 -3] [-3 -3 L -3]

[ L L -3 L] [-3 L -3 -3] [-3 L -3 -3] [-3 L L -3]

Q Table

L = Lava

[ ← ↓ → ↑ ]](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-39-2048.jpg)

![#MinecraftRL @choas

[99 0 0 0] [78 -1 -1 0] [-1 -1 L -1]

[ L -1 -1 78] [61 -1 -1 -1] [48 -1 L -1]

[ L -2 -2 61] [-2 -2 -2 48] [-1 L L -1]

[ L L -2 48] [-2 -3 L 37]

[ L -3 -3 29] [-3 -3 L L]

[ L -4 -3 L] [-3 -3 -3 -3] [-3 -3 L -3]

[ L L -4 L] [-3 L -3 -3] [-3 L -3 -3] [-3 L L -3]

Q Table

L = Lava

[ ← ↓ → ↑ ]](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-40-2048.jpg)

![#MinecraftRL @choas

[99 0 0 0] [78 -1 -1 0] [-1 -1 L -1]

[ L -1 -1 78] [61 -1 -1 -1] [48 -1 L -1]

[ L -2 -2 61] [-2 -2 -2 48] [-1 L L -1]

[ L L -2 48] [-2 -3 L 37]

[ L -3 -3 29] [-3 -3 L L]

[ L -4 -3 L] [-3 -3 -3 22] [-3 -3 L -3]

[ L L -4 L] [-3 L -3 -3] [-3 L -3 -3] [-3 L L -3]

Q Table

L = Lava

[ ← ↓ → ↑ ]](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-41-2048.jpg)

![#MinecraftRL @choas

[99 0 0 0] [78 -1 -1 0] [-1 -1 L -1]

[ L -1 -1 78] [61 -1 -1 -1] [48 -1 L -1]

[ L -2 -2 61] [-2 -2 -2 48] [-1 L L -1]

[ L L -2 48] [-2 -3 L 37]

[ L -3 -3 29] [-3 -3 L L]

[ L -4 16 L] [-3 -3 -3 22] [-3 -3 L -3]

[ L L -4 L] [-4 L -3 -3] [-3 L -3 -3] [-3 L L -3]

Q Table

L = Lava

[ ← ↓ → ↑ ]](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-42-2048.jpg)

![#MinecraftRL @choas

[99 0 0 0] [78 -1 -1 0] [-1 -1 L -1]

[ L -1 -1 78] [61 -1 -1 -1] [48 -1 L -1]

[ L -2 -2 61] [-2 -2 -2 48] [-1 L L -1]

[ L L -2 48] [-2 -3 L 37]

[ L -3 -3 29] [-3 -3 L L]

[ L -4 16 L] [-3 -3 -3 22] [-3 -3 L -3]

[ L L -4 L] [-4 L -3 12] [-3 L -3 16] [-3 L L -3]

Q Table

L = Lava

[ ← ↓ → ↑ ]](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-43-2048.jpg)

![#MinecraftRL @choas

[99 0 0 0] [78 -1 -1 0] [-1 -1 L -1]

[ L -1 -1 78] [61 -1 -1 -1] [48 -1 L -1]

[ L -2 -2 61] [-2 -2 -2 48] [-1 L L -1]

[ L L -2 48] [-2 -3 L 37]

[ L -3 -3 29] [-3 -3 L L]

[ L -4 16 L] [-3 -3 -3 22] [-3 -3 L -3]

[ L L 8 L] [-4 L -3 12] [-3 L -3 16] [-3 L L -3]

Q Table

L = Lava

[ ← ↓ → ↑ ]](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-44-2048.jpg)

![#MinecraftRL @choas

[99 0 0 0] [78 -1 -1 0] [-1 -1 L -1]

[ L -1 -1 78] [61 -1 -1 -1] [48 -1 L -1]

[ L -2 -2 61] [-2 -2 -2 48] [-1 L L -1]

[ L L -2 48] [-2 -3 L 37]

[ L -3 -3 29] [-3 -3 L L]

[ L -4 16 L] [-3 -3 -3 22] [-3 -3 L -3]

[ L L 8 L] [-4 L -3 12] [-3 L -3 16] [-3 L L -3]

ALPHA = 1.0 GAMMA = 0.8](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-45-2048.jpg)
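
The positive values in this table (99, 78, 61, 48, ...) look like the goal value propagating backwards one cell at a time. The sketch below reproduces that chain, assuming a reward of -1 per move and that the slides drop the decimals when displaying values; both are inferences from the numbers, not statements from the deck.

```python
GAMMA = 0.8
MOVE_REWARD = -1.0  # assumed per-move reward

value = 99.0  # value of the move into the goal, taken from the table
chain = [value]
for _ in range(9):
    # With ALPHA = 1.0 the update reduces to reward + GAMMA * max_q.
    value = MOVE_REWARD + GAMMA * value
    chain.append(value)

displayed = [int(v) for v in chain]  # truncate decimals for display
print(displayed)
```

This prints `[99, 78, 61, 48, 37, 29, 22, 16, 12, 8]`, matching the positive entries along the learned path in the table.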

![#MinecraftRL @choas

[99 48 0 L] [48 0 0 0] [-1 0 L 0]

[ L 0 -1 97] [96 -1 -1 -1] [-1 -1 L -1]

[ L -1 -1 -1] [-1 -1 -1 92] [-1 L L -1]

[ L L -2 -1] [-2 -2 L 83]

[ L -3 -3 74] [-2 -4 L L]

[ L -5 -2 L] [-4 -4 -4 55] [-4 -4 L -4]

[ L L -1 L] [-6 L 11 -5] [-5 L -5 31] [-5 L L -4]

ALPHA = 0.5 GAMMA = 1.0 (40 moves)](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-46-2048.jpg)

![#MinecraftRL @choas

[99 48 0 L] [48 0 0 0] [-1 0 L 0]

[ L 0 -1 97] [96 -1 -1 -1] [-1 -1 L -1]

[ L -1 -1 47] [-2 -1 -1 95] [-1 L L -1]

[ L L -2 -1] [-2 45 L 94]

[ L -3 -3 93] [-2 -4 L L]

[ L -5 -2 L] [-4 -4 -4 92] [-4 -4 L -4]

[ L L 88 L] [-6 L 90 -5] [-5 L -5 91] [-5 L L -4]

ALPHA = 0.5 GAMMA = 1.0 (60 moves)](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-47-2048.jpg)
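
With ALPHA = 0.5 each update moves an entry only halfway toward its target, which is one plausible reason the table keeps changing between 40 and 60 moves. The sketch below repeats the update for a single entry whose neighbour holds 99; the starting value of 0.0, the per-move reward of -1, and holding the neighbour fixed are simplifying assumptions.

```python
ALPHA = 0.5
GAMMA = 1.0
MOVE_REWARD = -1.0  # assumed per-move reward

max_q = 99.0  # best value in the neighbouring cell, held fixed here
q = 0.0       # assumed starting value
history = []
for _ in range(6):
    q = q + ALPHA * (MOVE_REWARD + GAMMA * max_q - q)
    history.append(q)

print(history)
```

The values climb toward the target of 98 (`MOVE_REWARD + GAMMA * max_q`) without reaching it in a few steps, and with GAMMA = 1.0 there is no discounting, so converged values along the path would differ only by the per-move cost.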

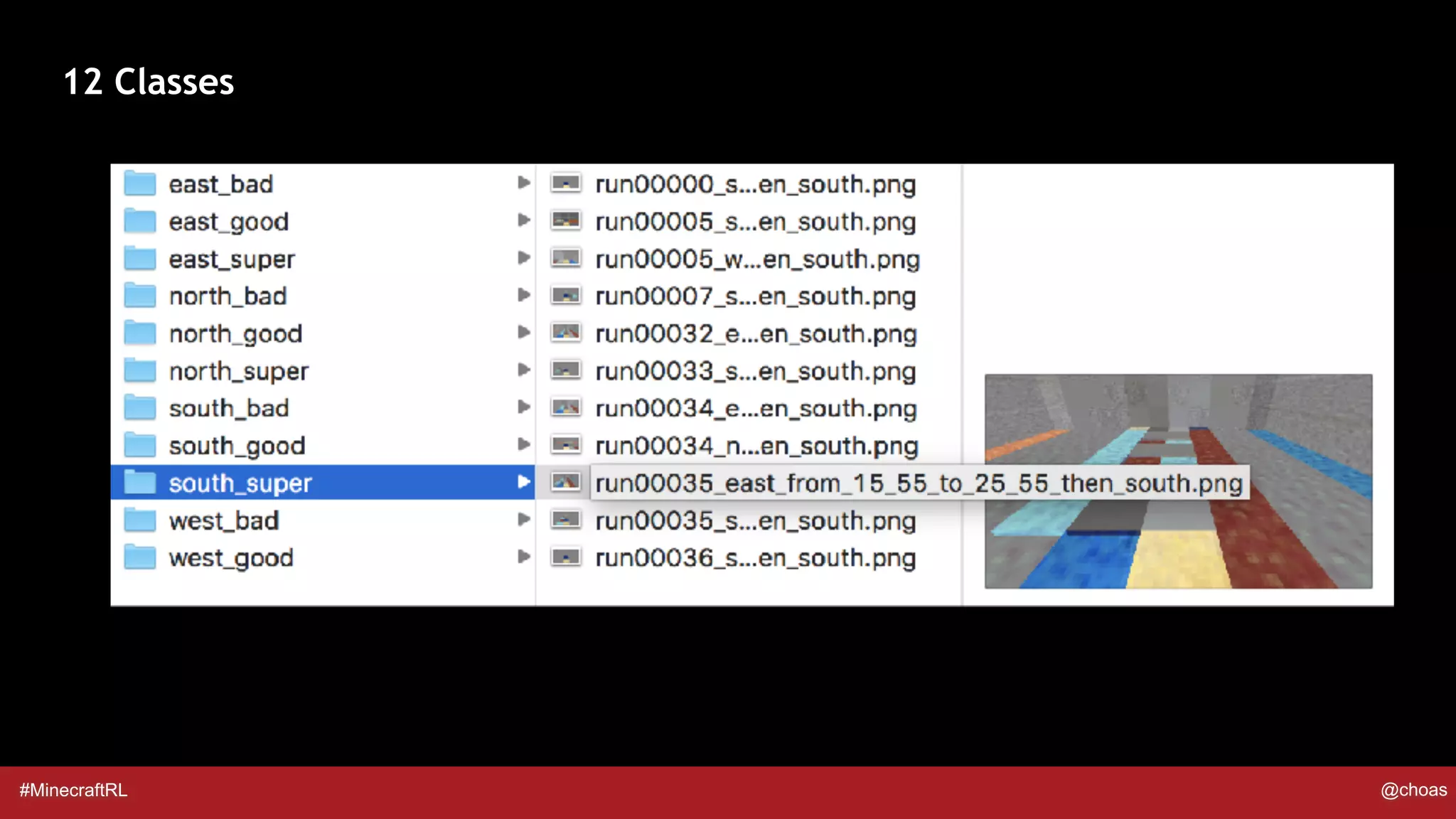

![#MinecraftRL @choas

### based on arXiv:1312.5602 (page 6)

model = Sequential()

model.add(Conv2D(16, (8, 8), strides=(4, 4), input_shape=input_shape))

model.add(Activation('relu'))

model.add(Conv2D(32, (4, 4), strides=(2, 2)))

model.add(Activation('relu'))

model.add(Flatten())

model.add(Dense(256))

model.add(Activation('relu'))

model.add(Dense(12, activation='sigmoid')) # 12 classes / actions

model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])

Keras Model](https://image.slidesharecdn.com/minecraftandreinforcementlearning-180511112429/75/Minecraft-and-Reinforcement-Learning-56-2048.jpg)
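
To see how the two convolution layers shrink the image before the `Dense(256)` layer, the sizes can be computed by hand. The sketch below assumes an 84 x 84 input, as used in arXiv:1312.5602, since the slide leaves `input_shape` unspecified; it also relies on the Keras `Conv2D` default of unpadded ("valid") convolutions.

```python
def conv_output_size(size, kernel, stride):
    """Output width/height of a 'valid' (unpadded) convolution."""
    return (size - kernel) // stride + 1


side = 84  # assumed input width and height; not given on the slide
after_first = conv_output_size(side, kernel=8, stride=4)          # Conv2D(16, (8, 8), strides=(4, 4))
after_second = conv_output_size(after_first, kernel=4, stride=2)  # Conv2D(32, (4, 4), strides=(2, 2))
flattened = after_second * after_second * 32  # 32 filters in the second layer
print(after_first, after_second, flattened)
```

Under these assumptions the feature maps are 20 x 20 after the first layer and 9 x 9 after the second, so `Flatten()` passes 2592 values to the dense layer.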