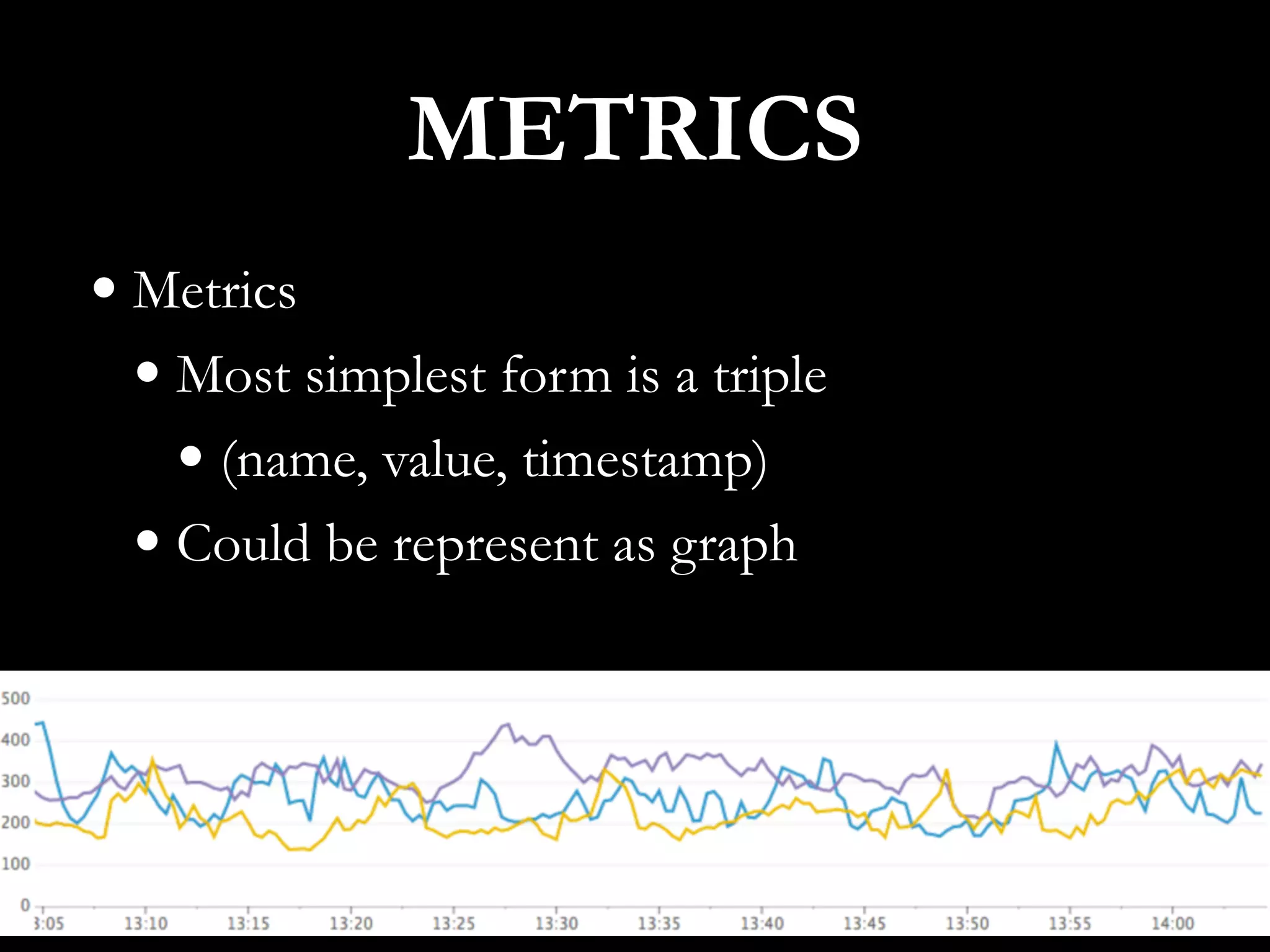

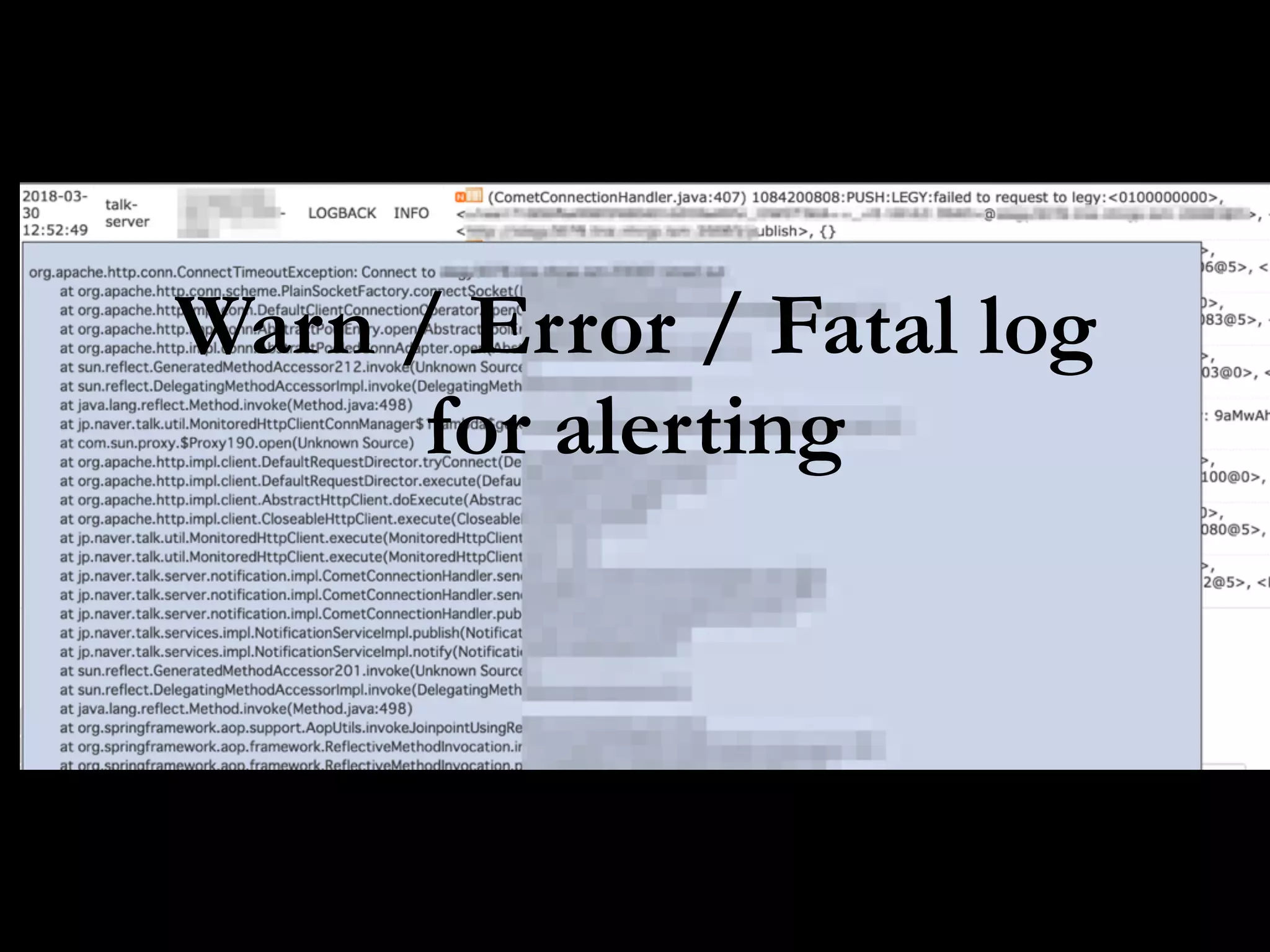

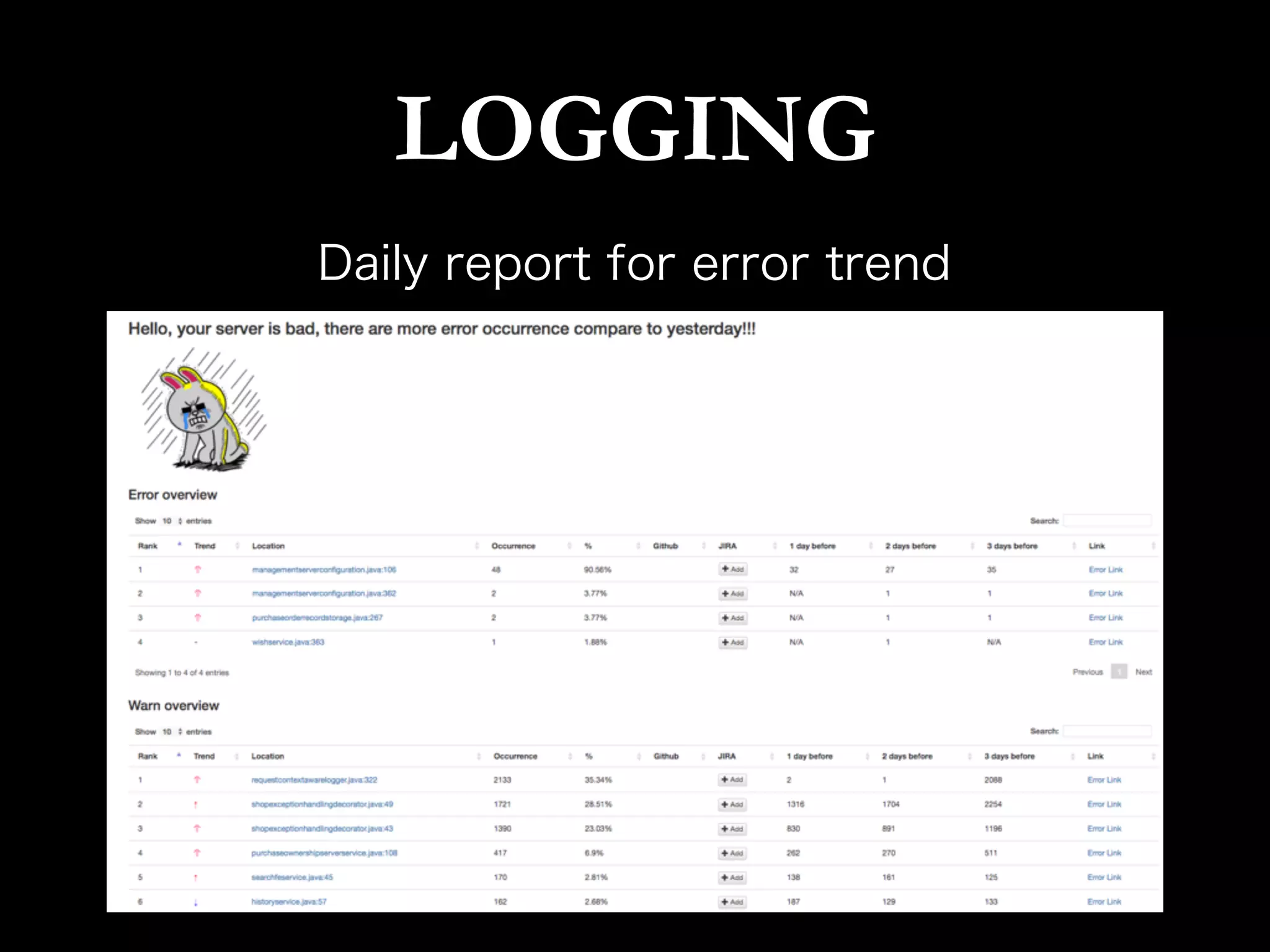

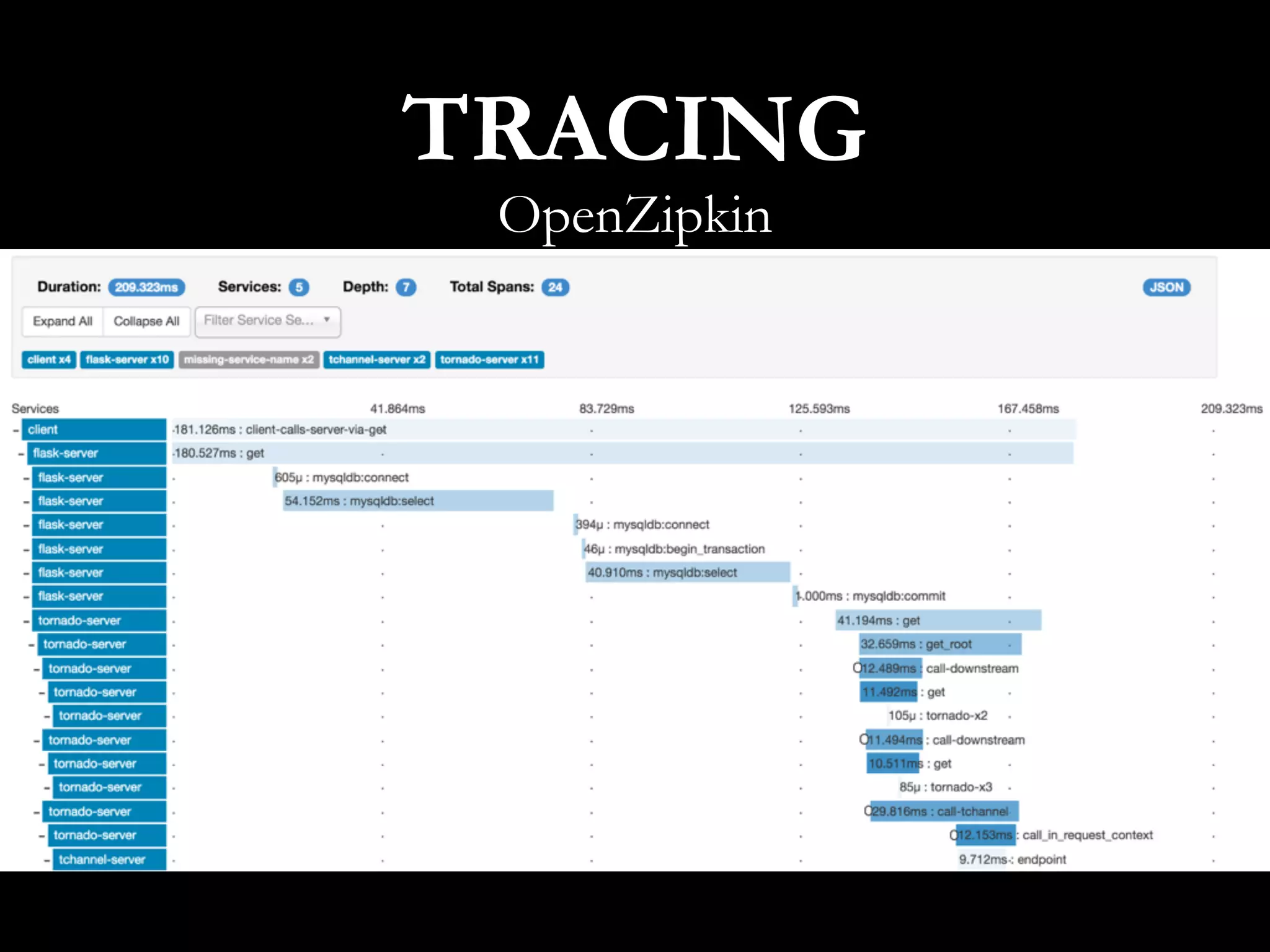

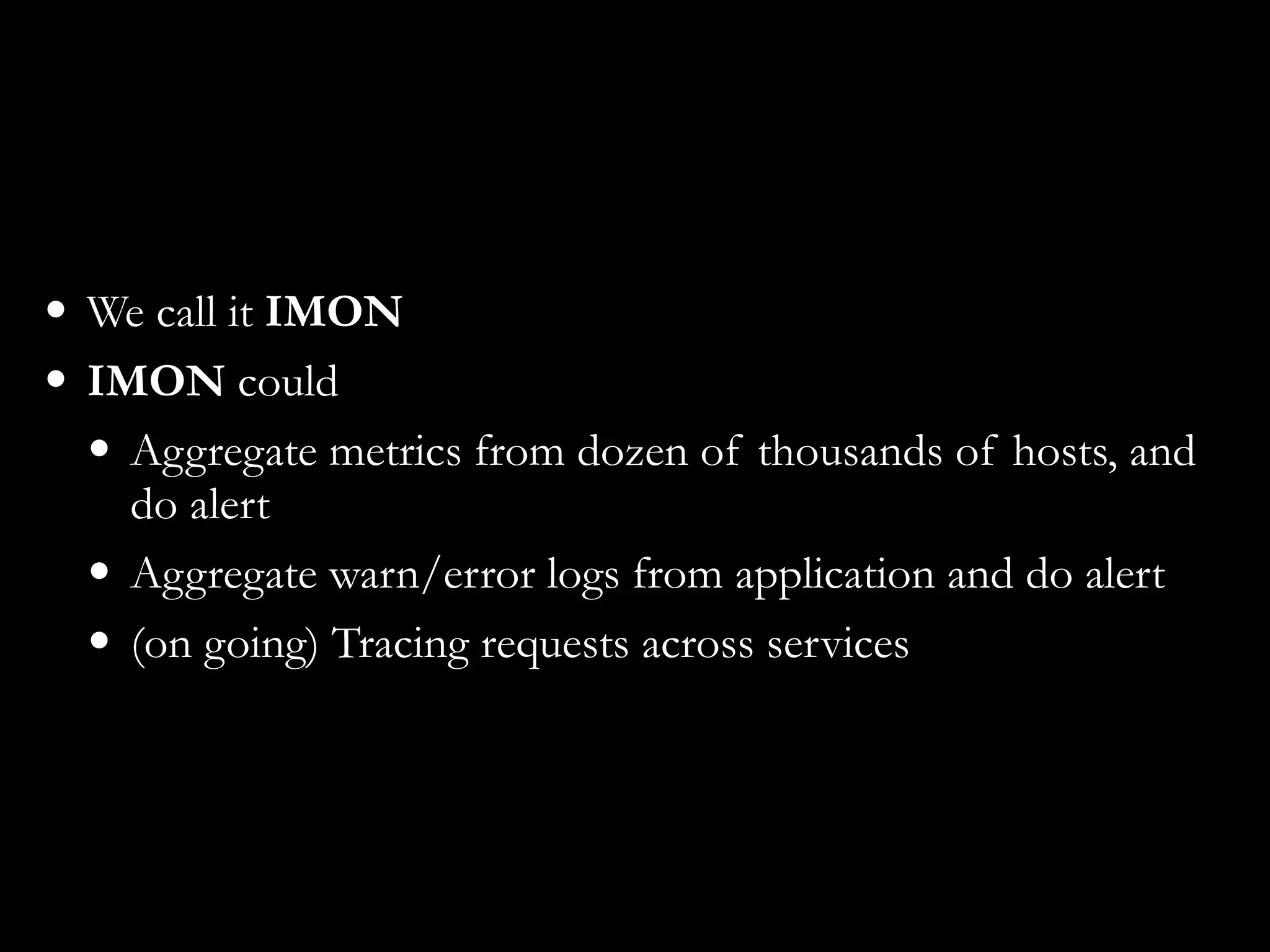

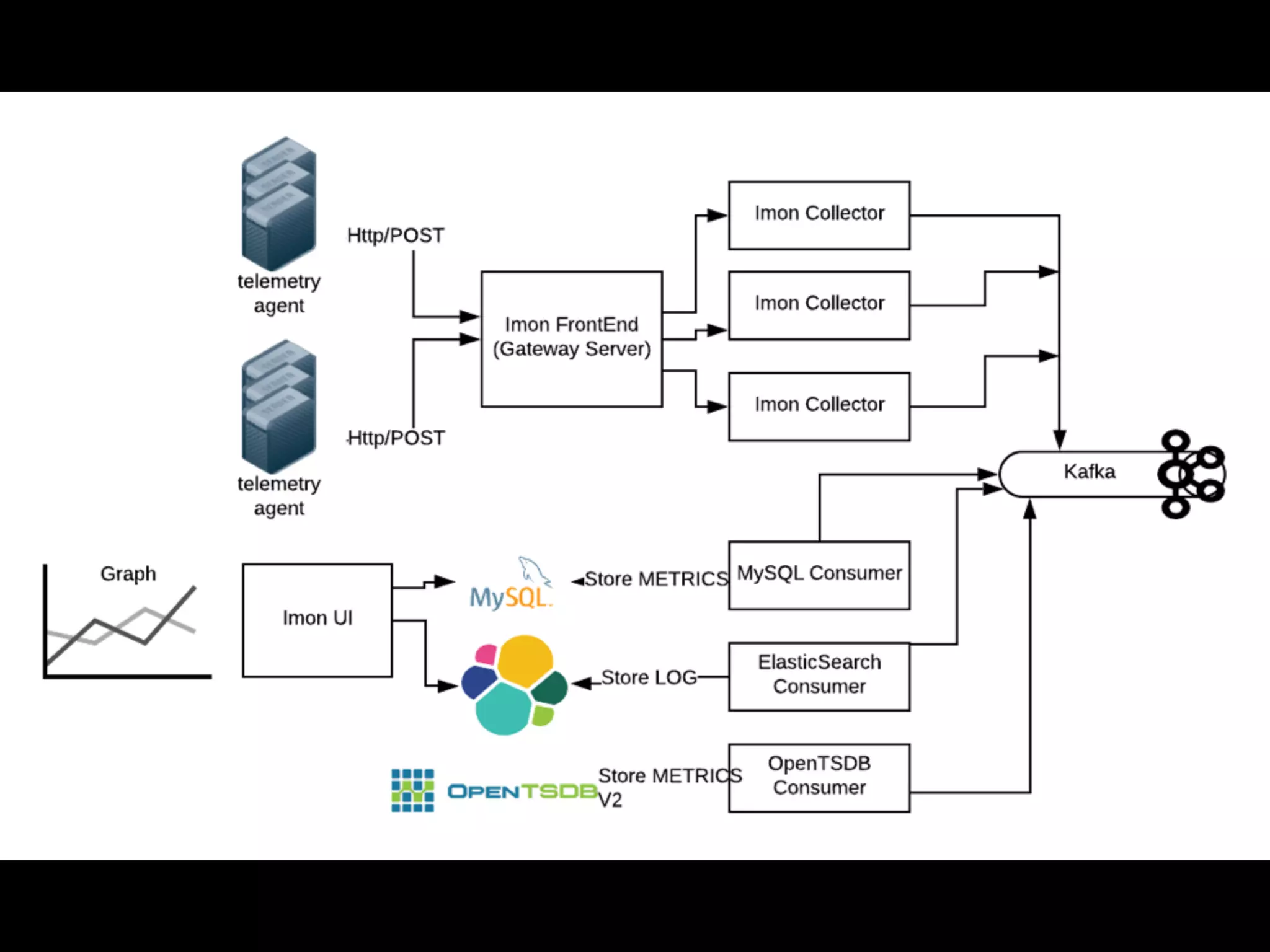

This document discusses metrics-driven development from an observability perspective at LINE Corp. It summarizes LINE's observability stack, which combines metrics, logging, and tracing to monitor user experience and reliability across the company's many services and 170M users. The stack, called IMON, aggregates millions of metrics and log entries per minute from thousands of servers. Engineers are responsible for monitoring their own applications and are required to take part in on-call rotations. Future work includes improving the telemetry system and fostering an autonomous, data-driven engineering culture focused on stability.