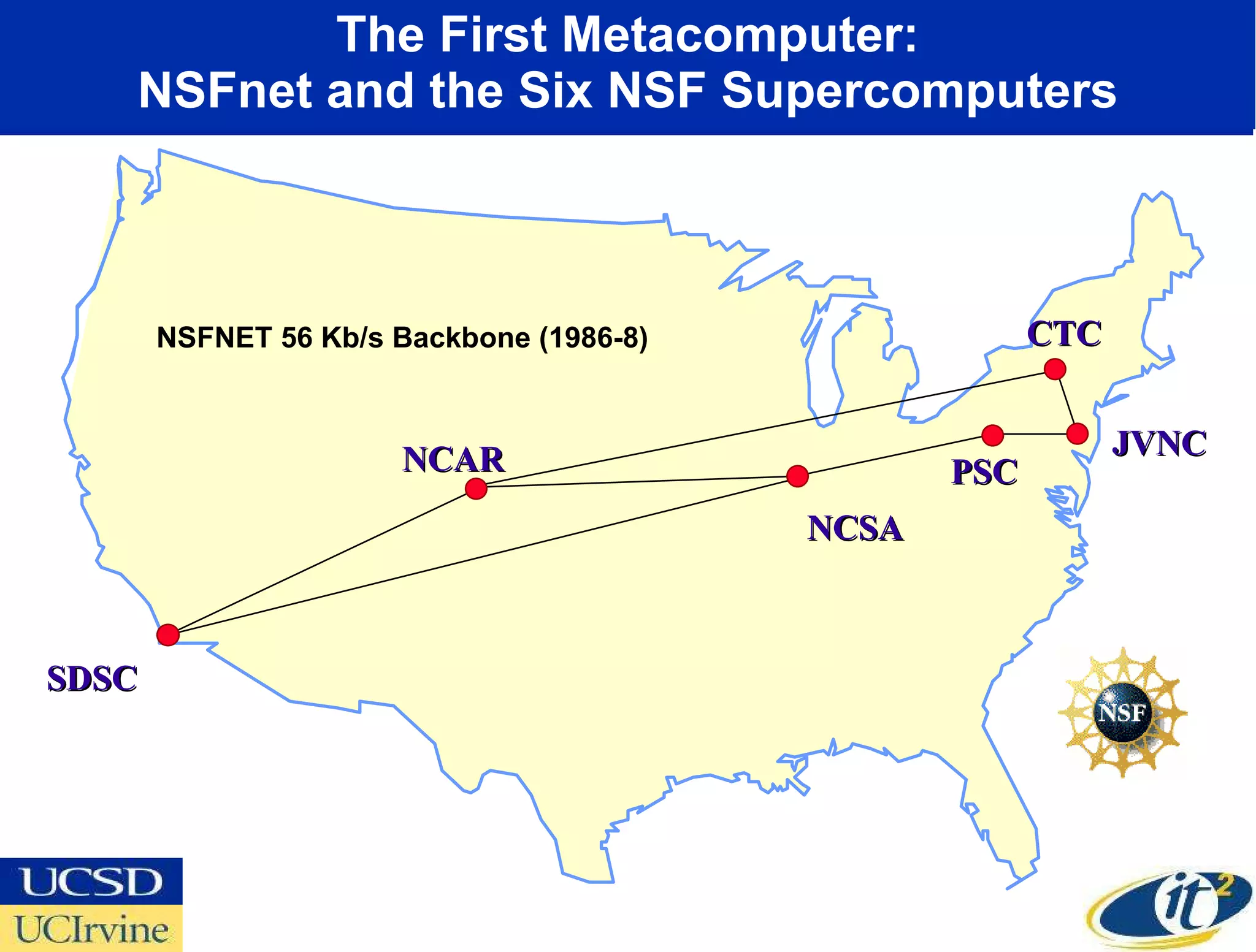

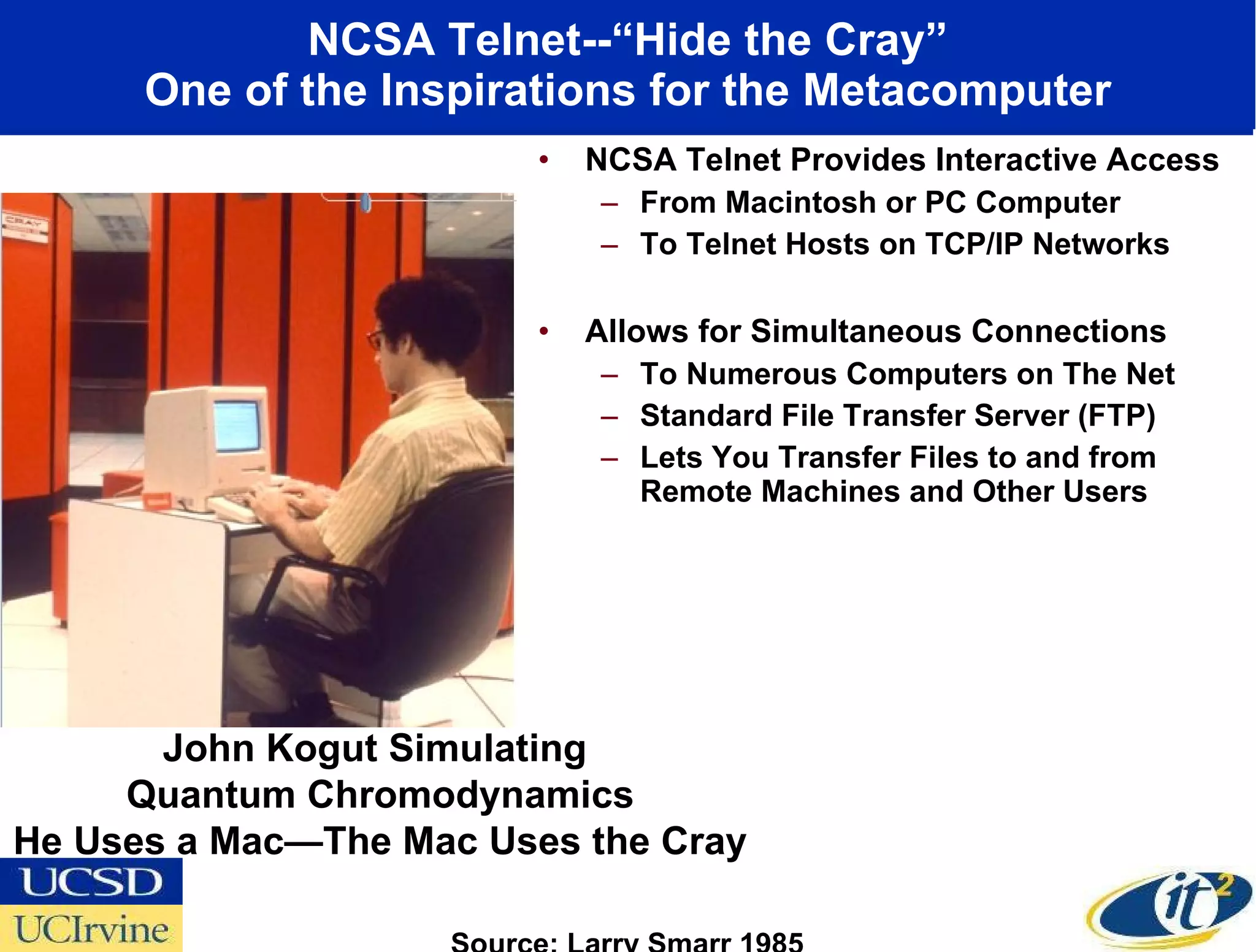

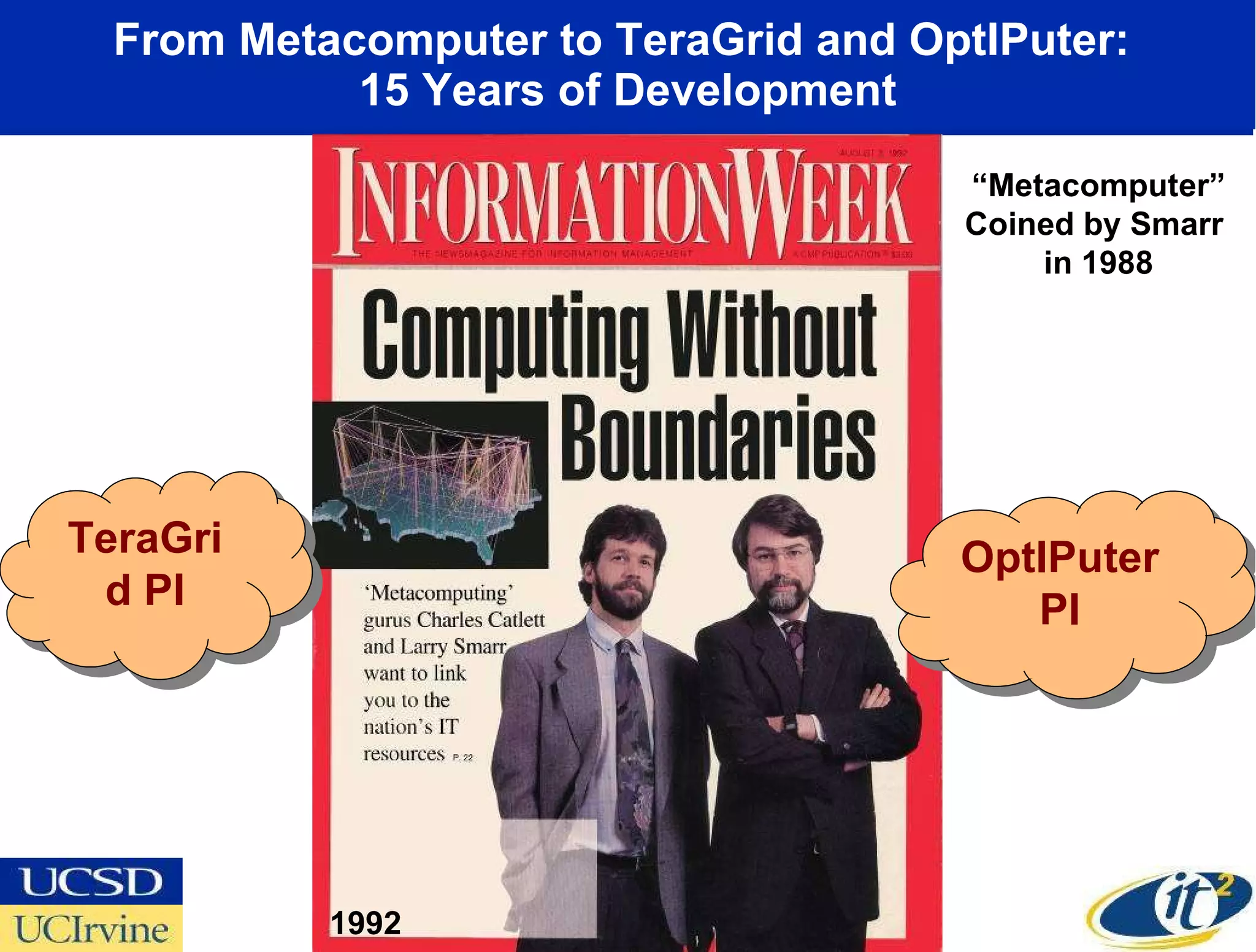

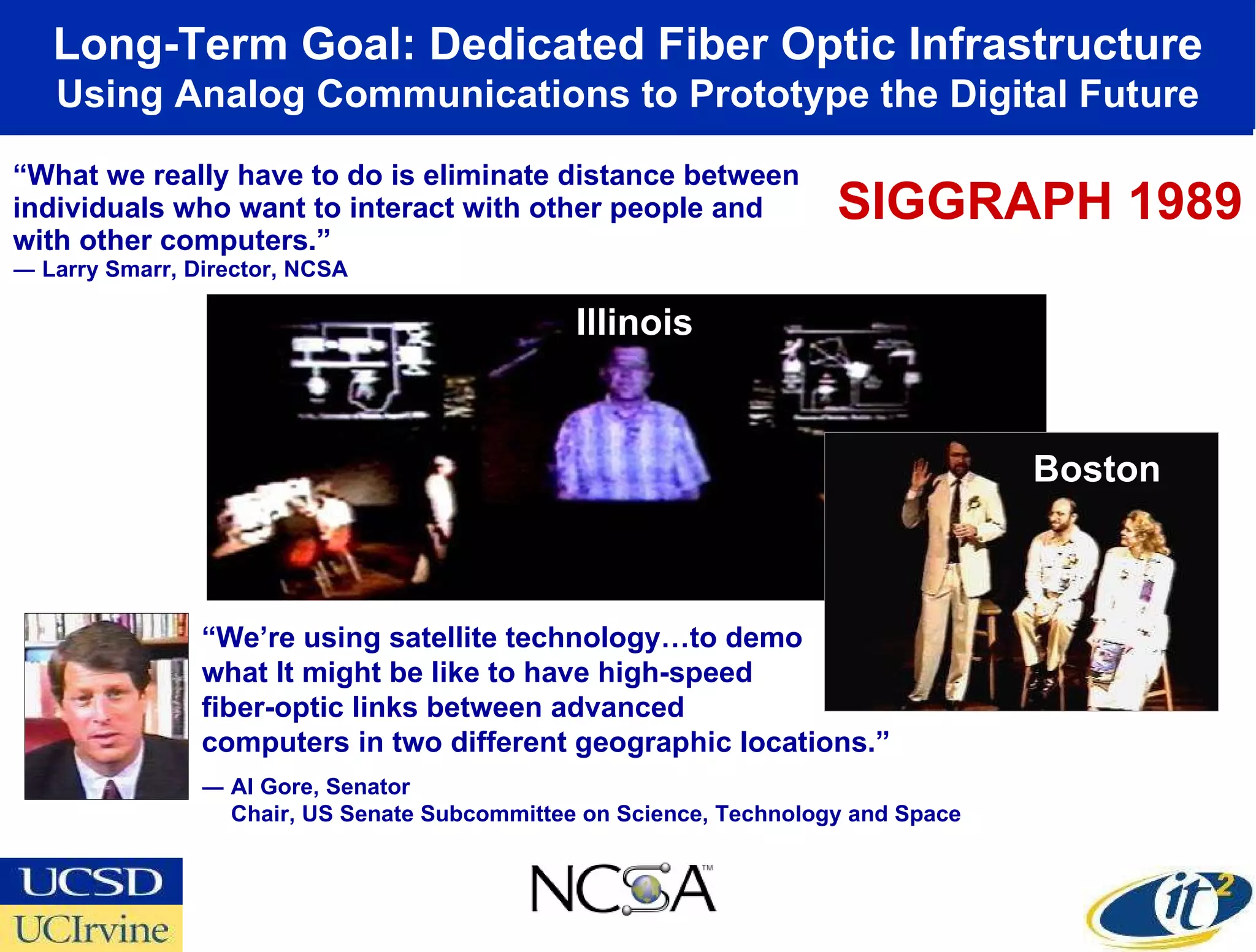

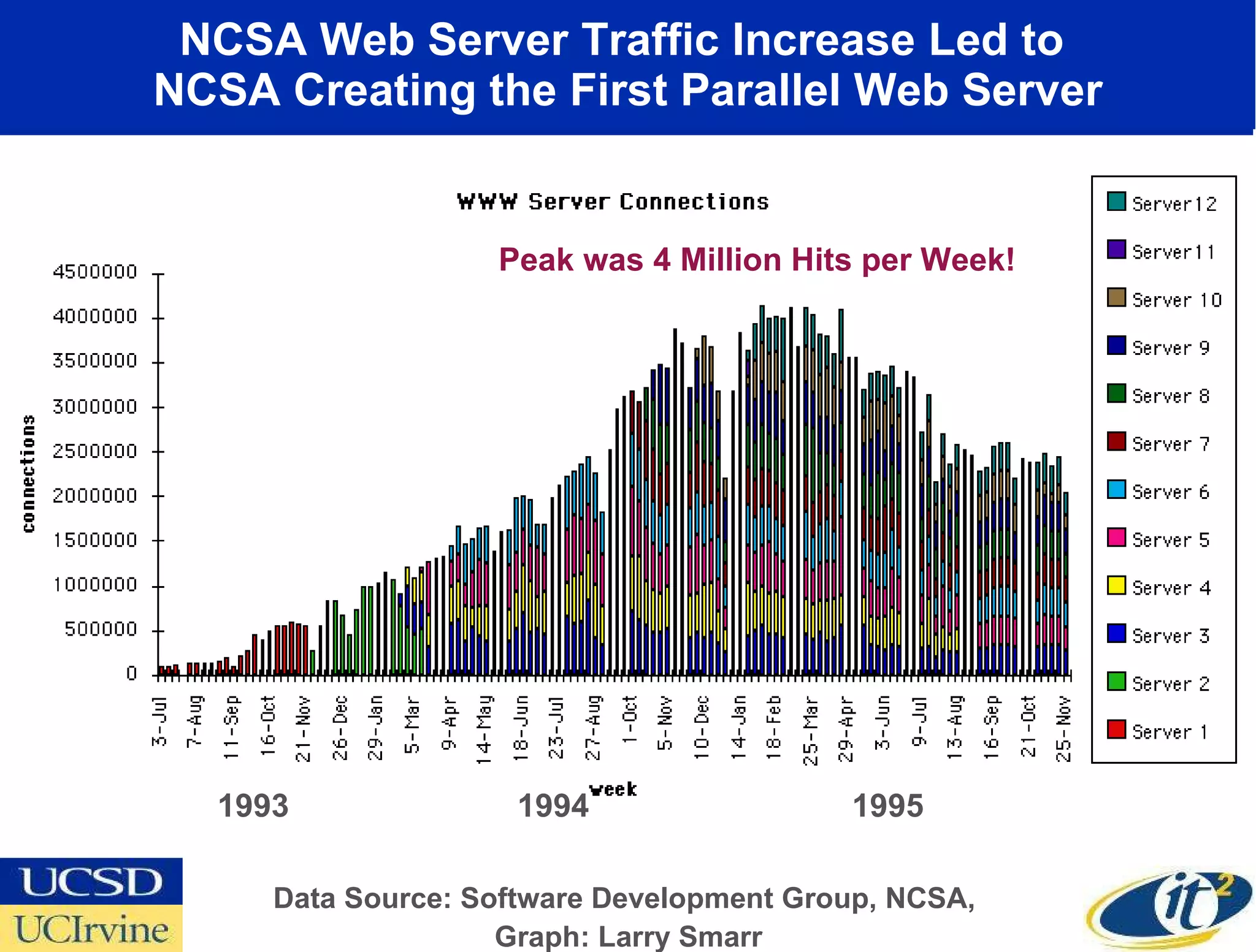

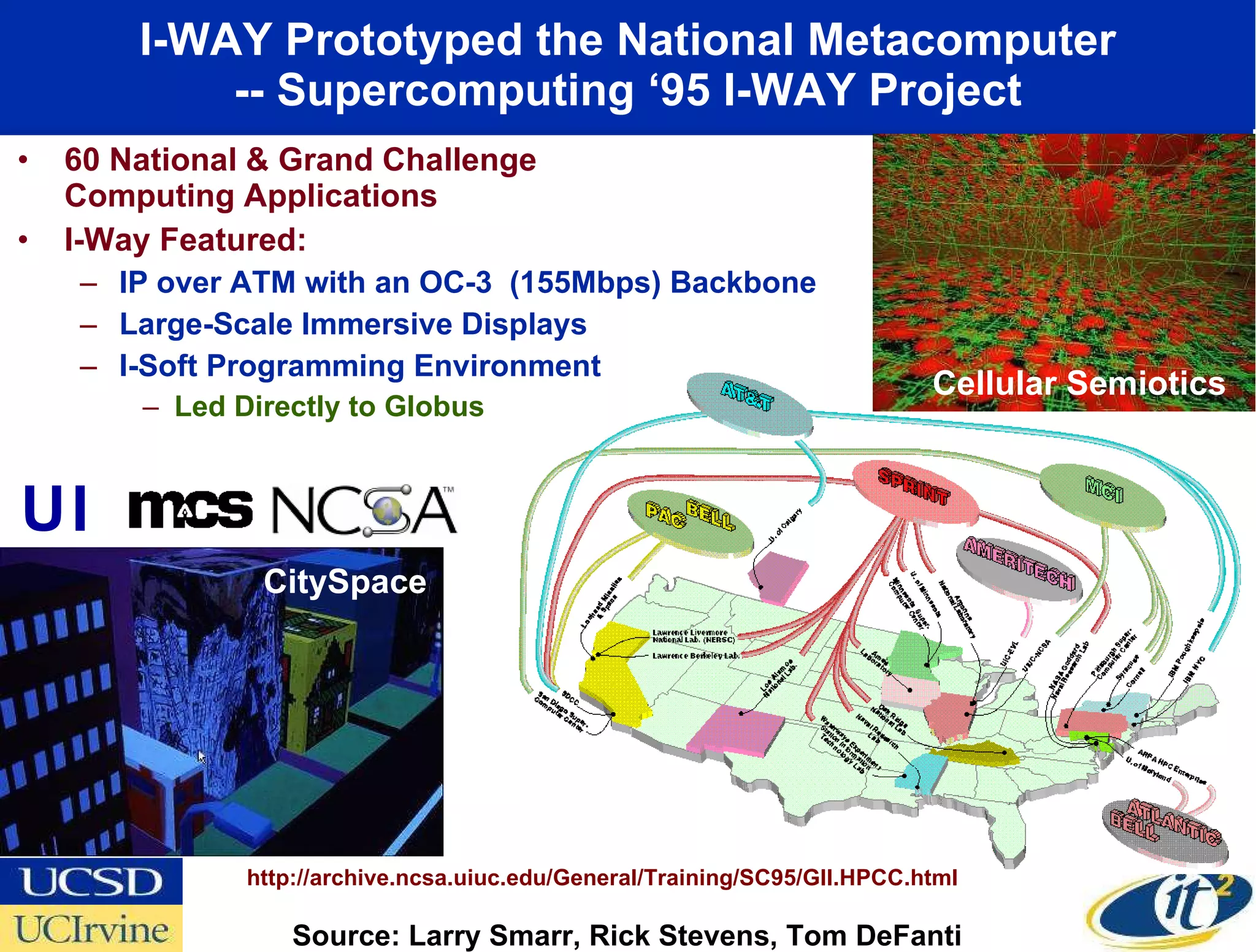

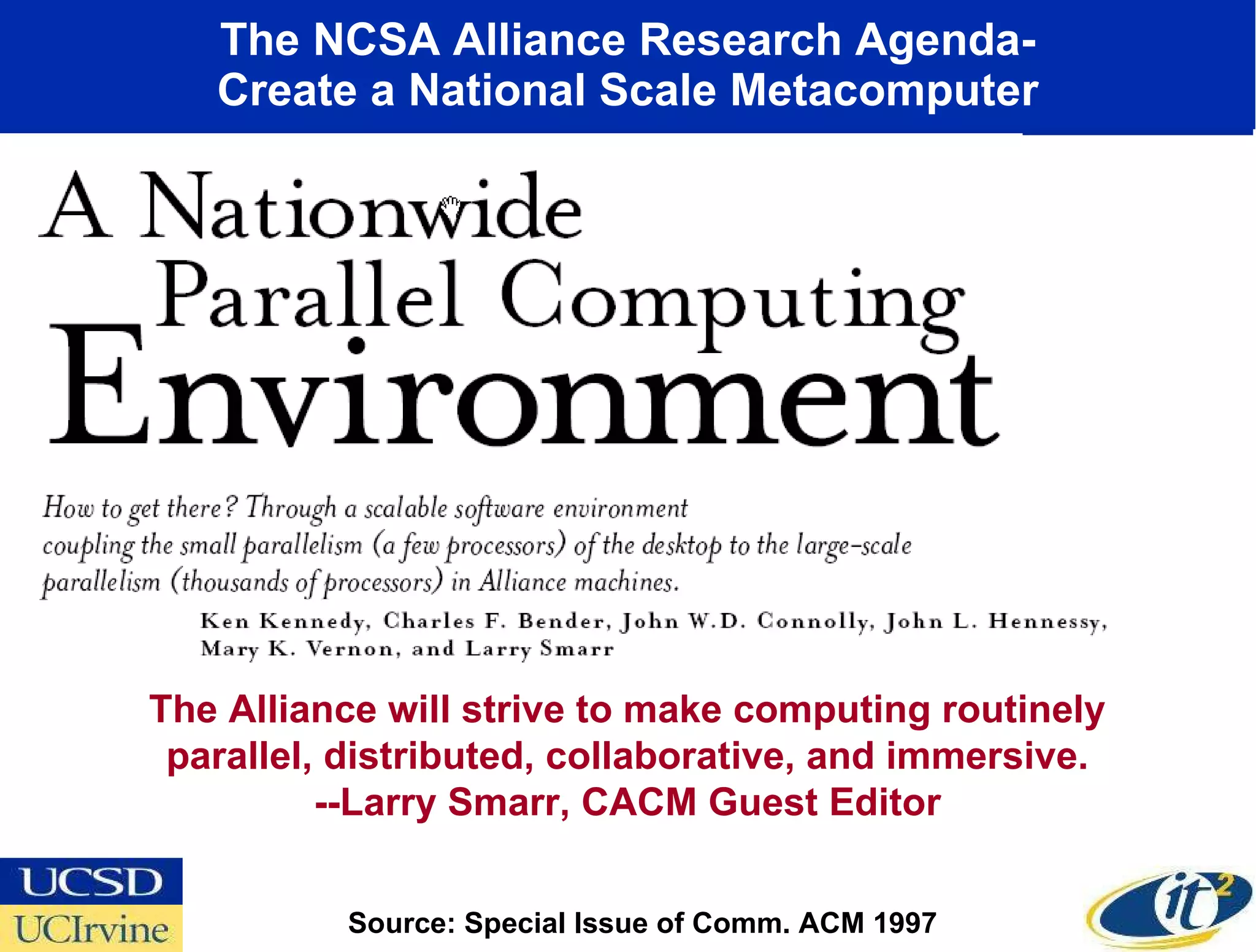

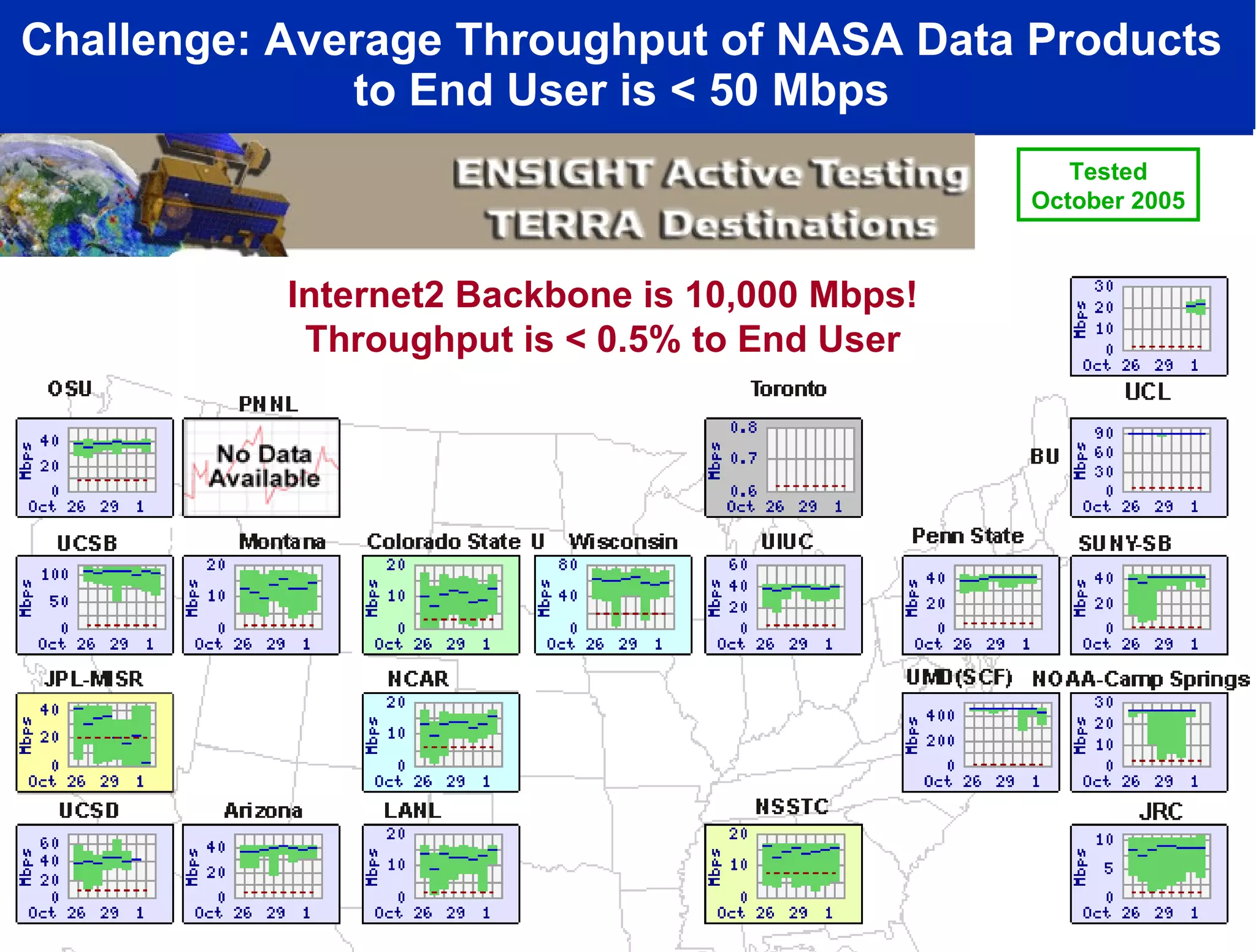

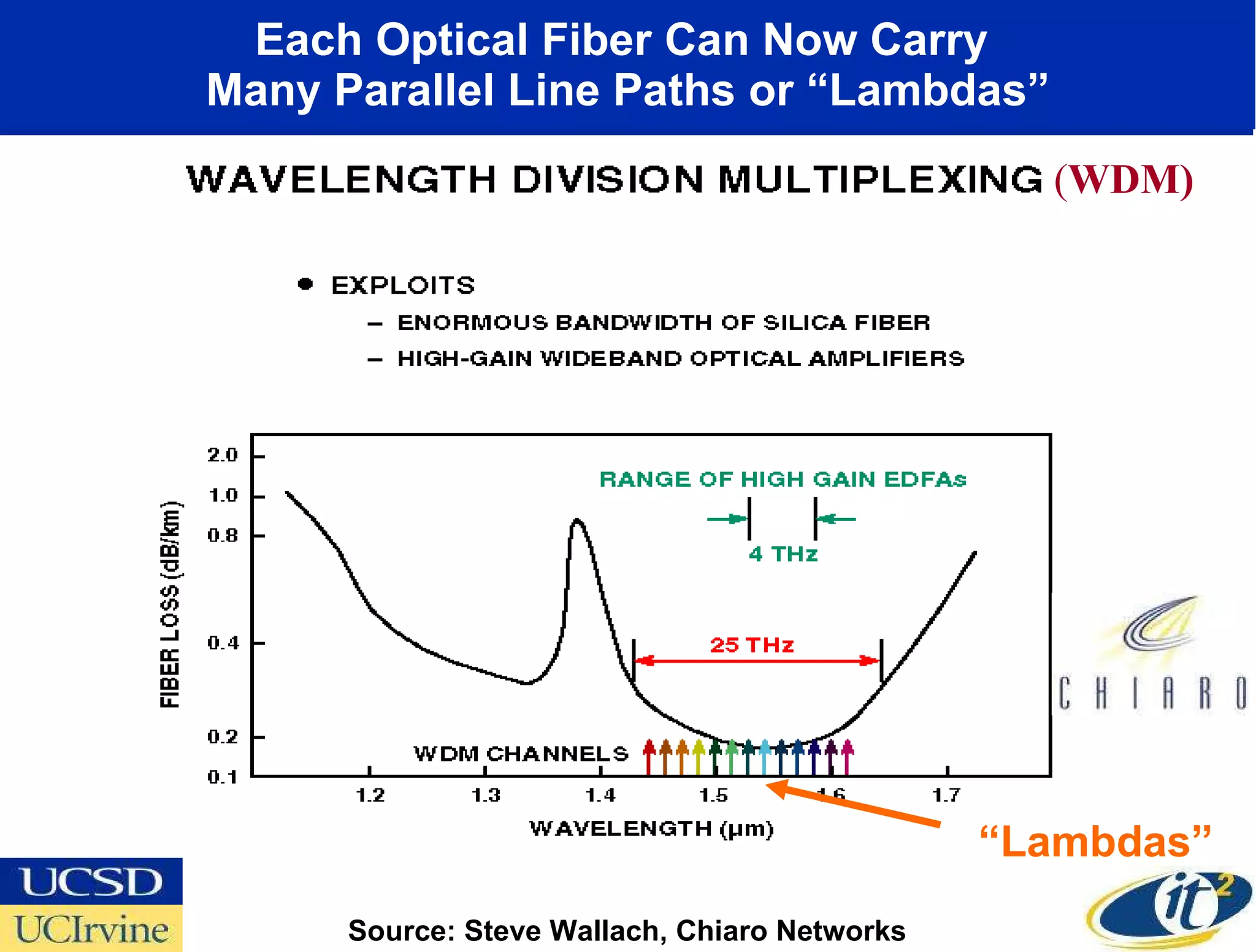

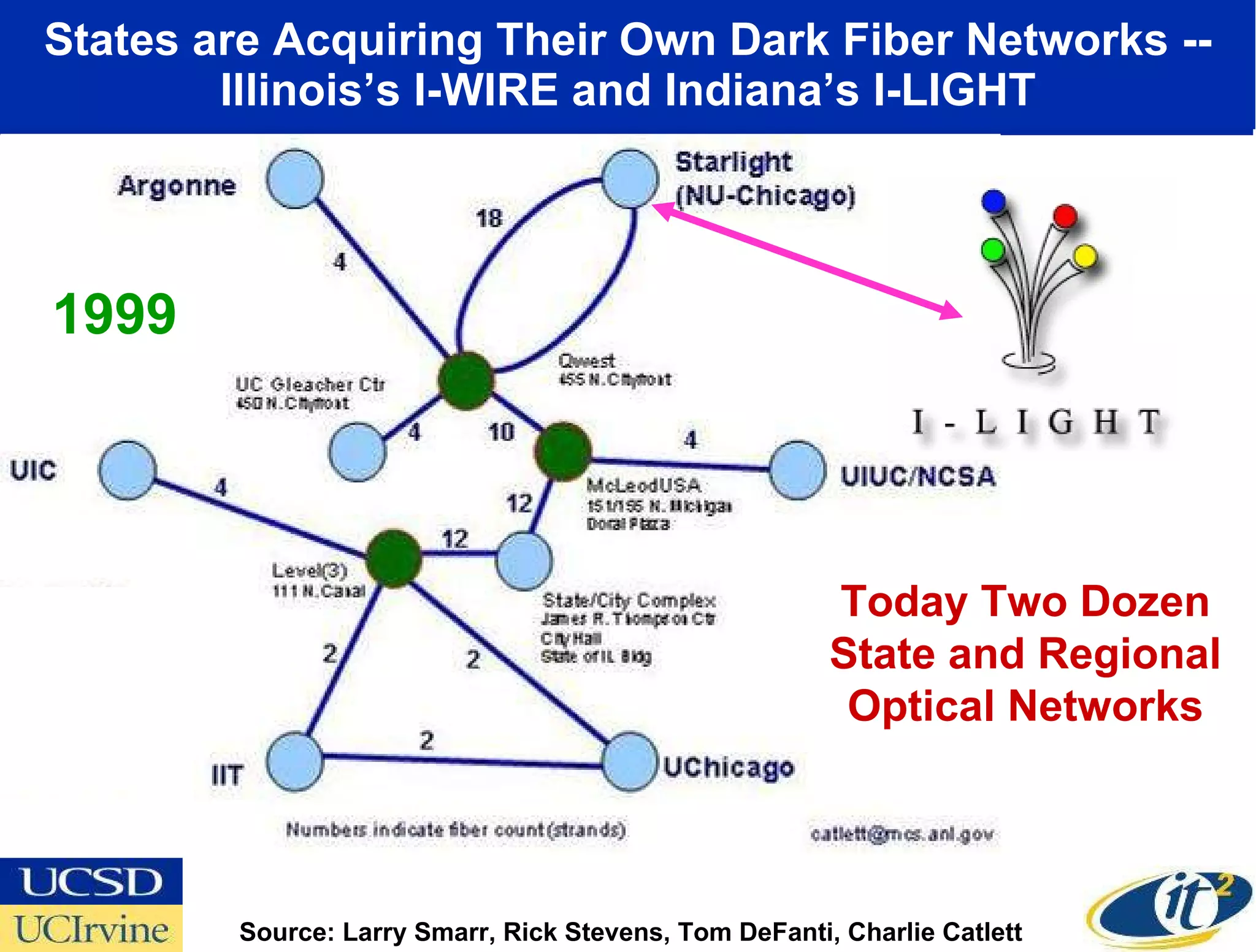

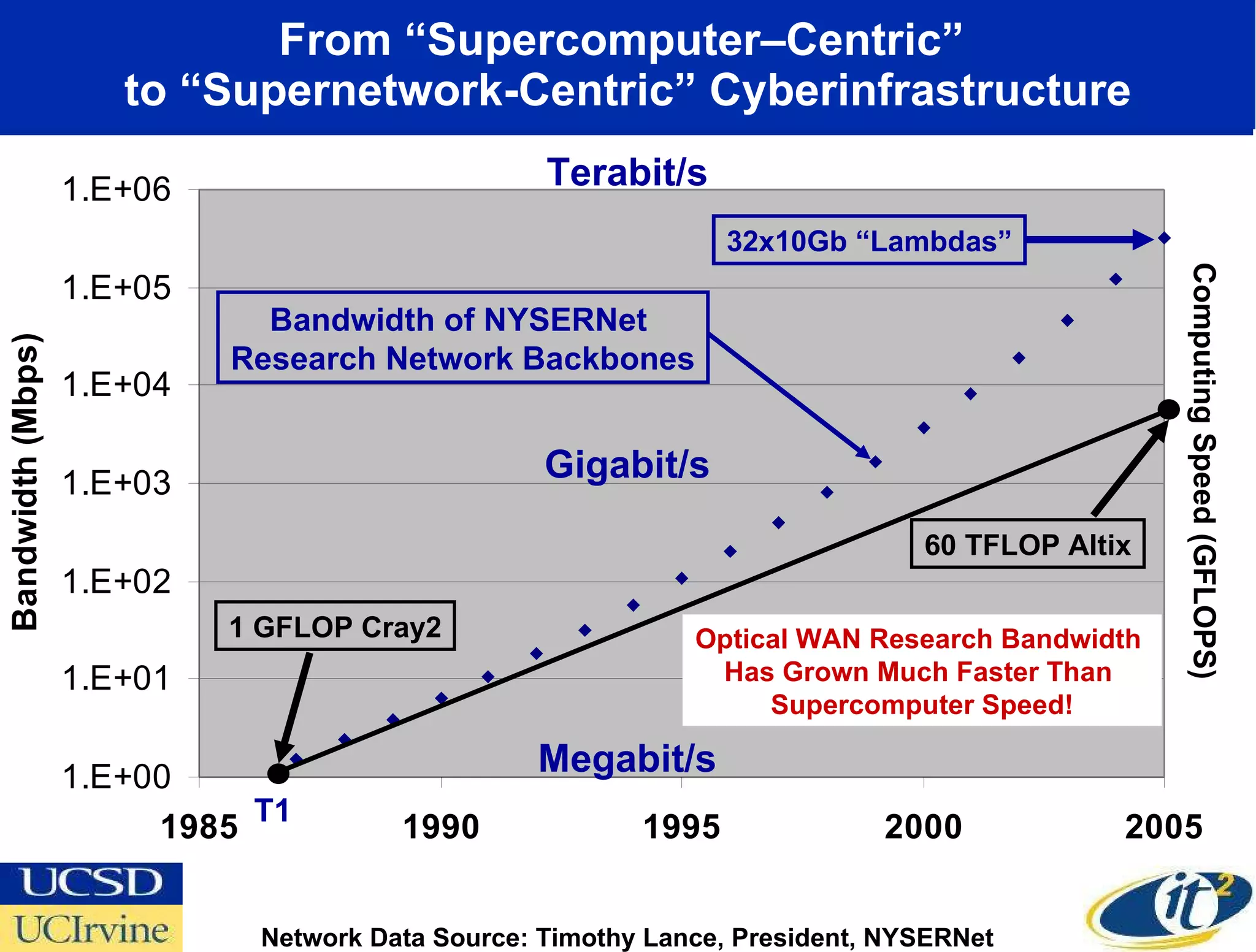

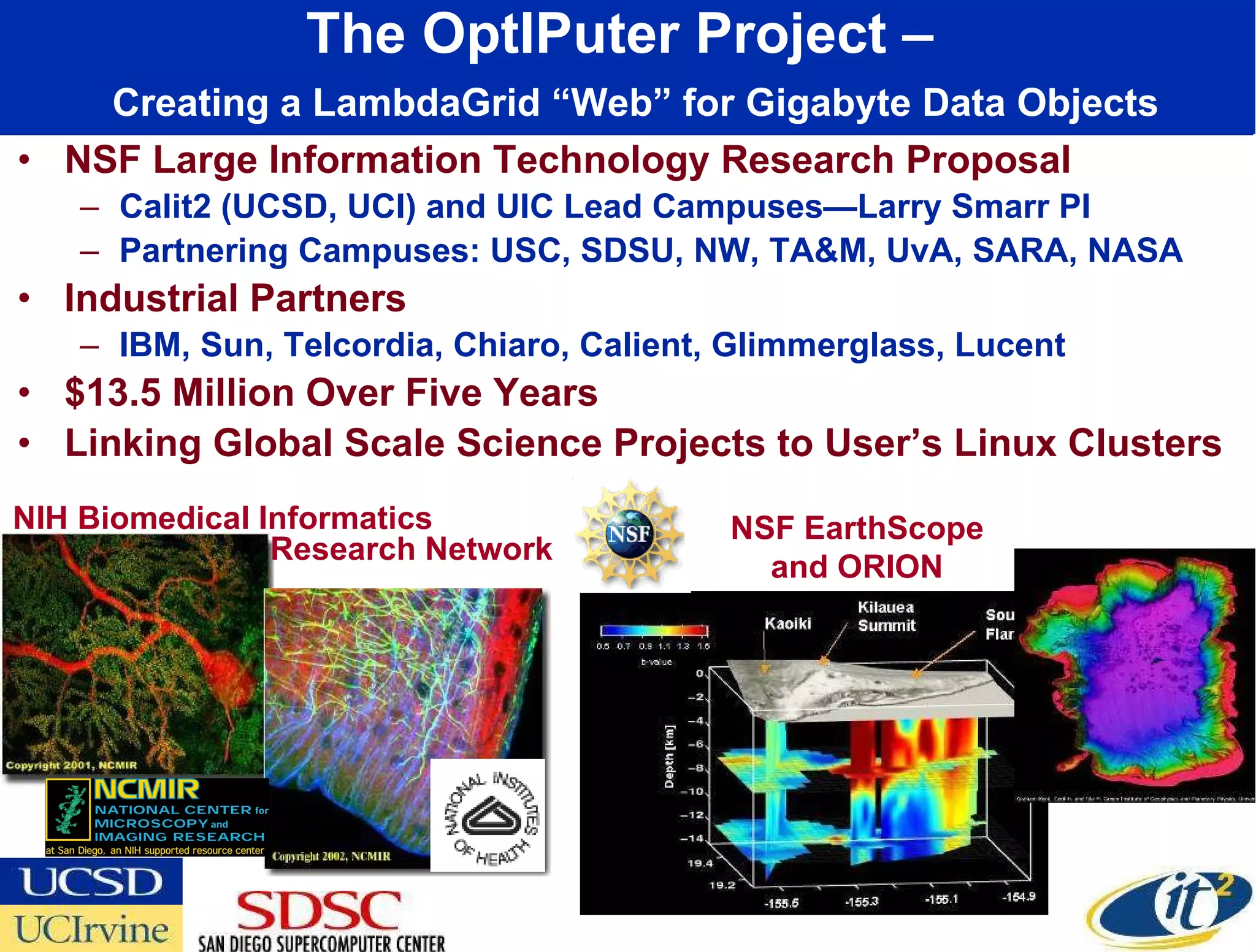

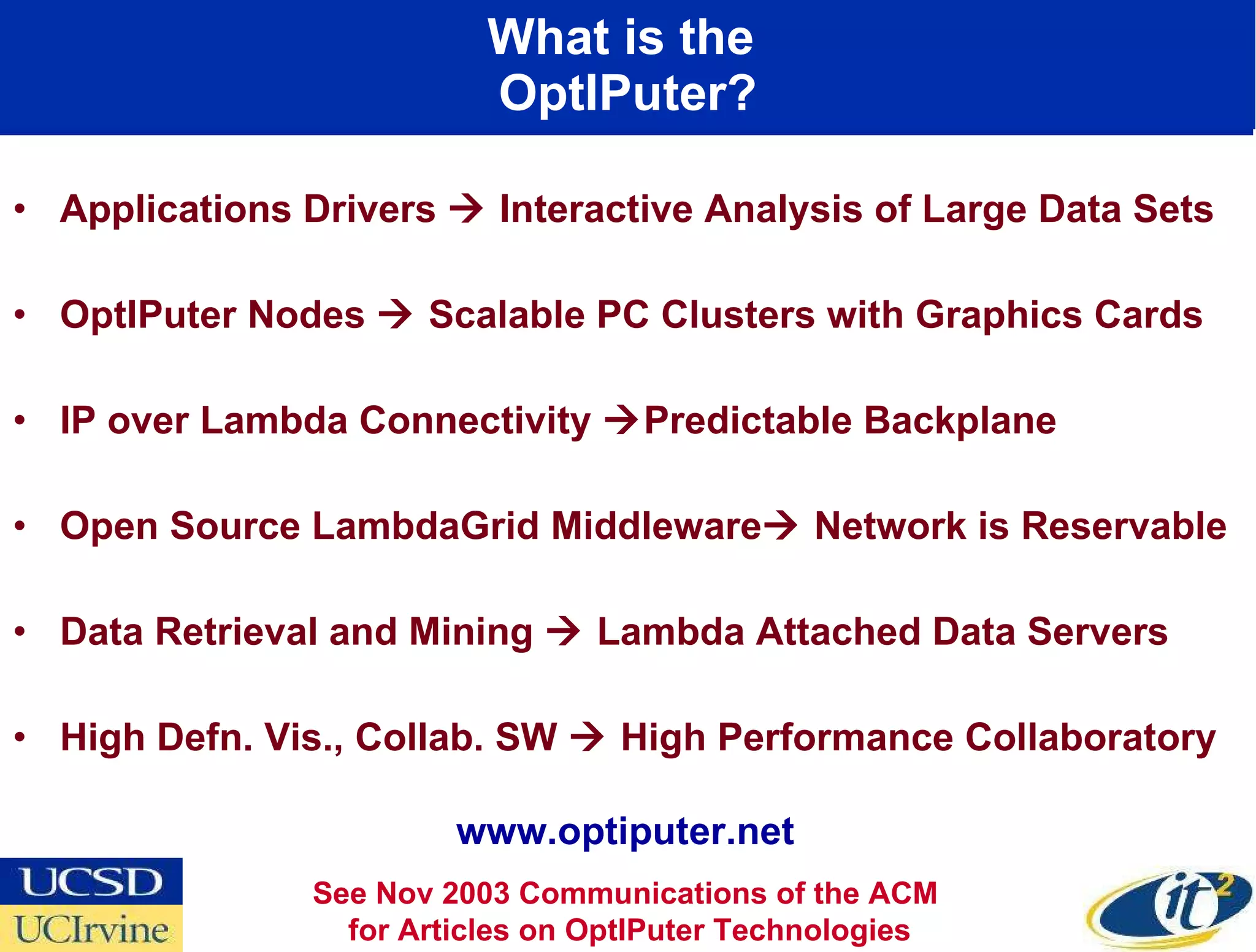

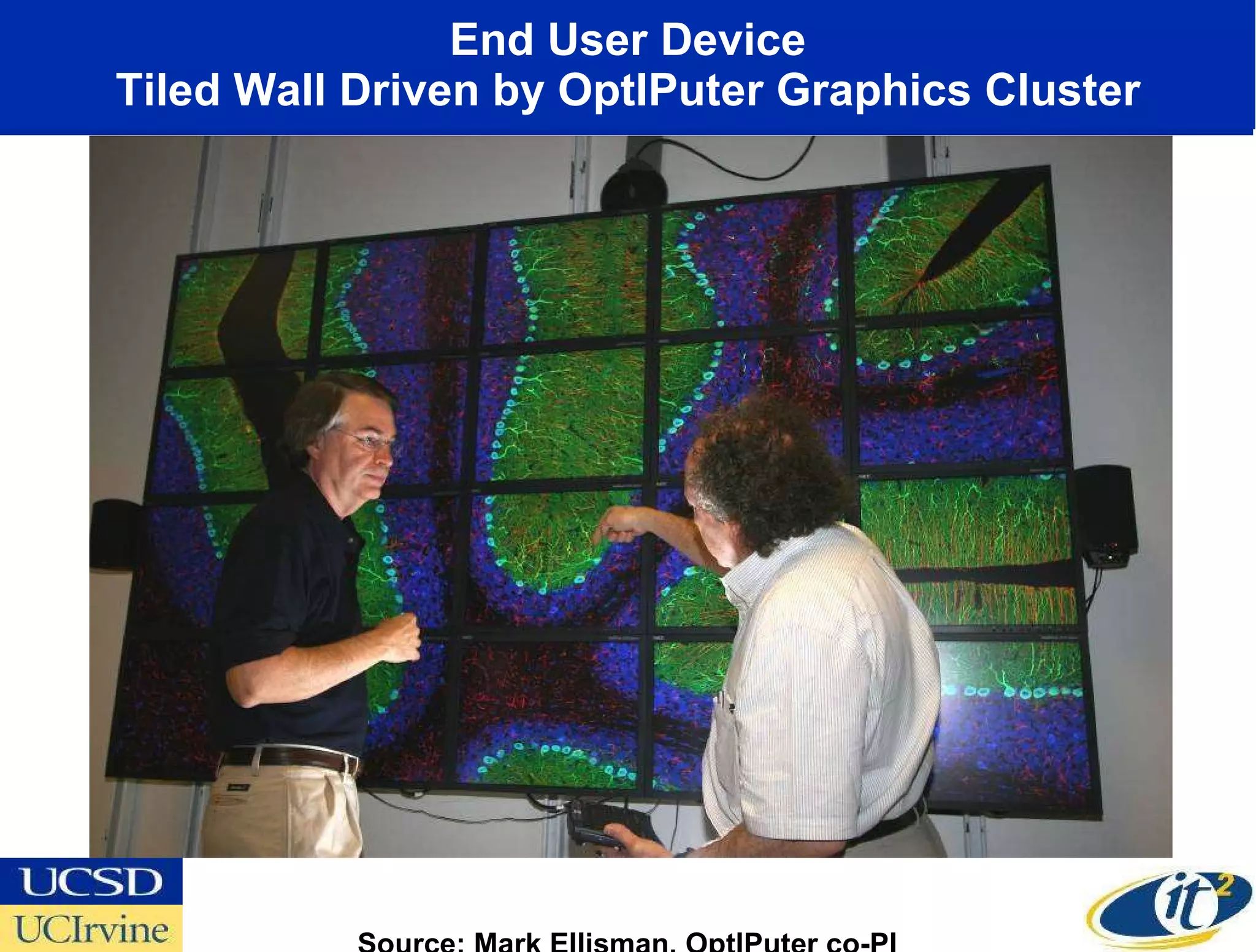

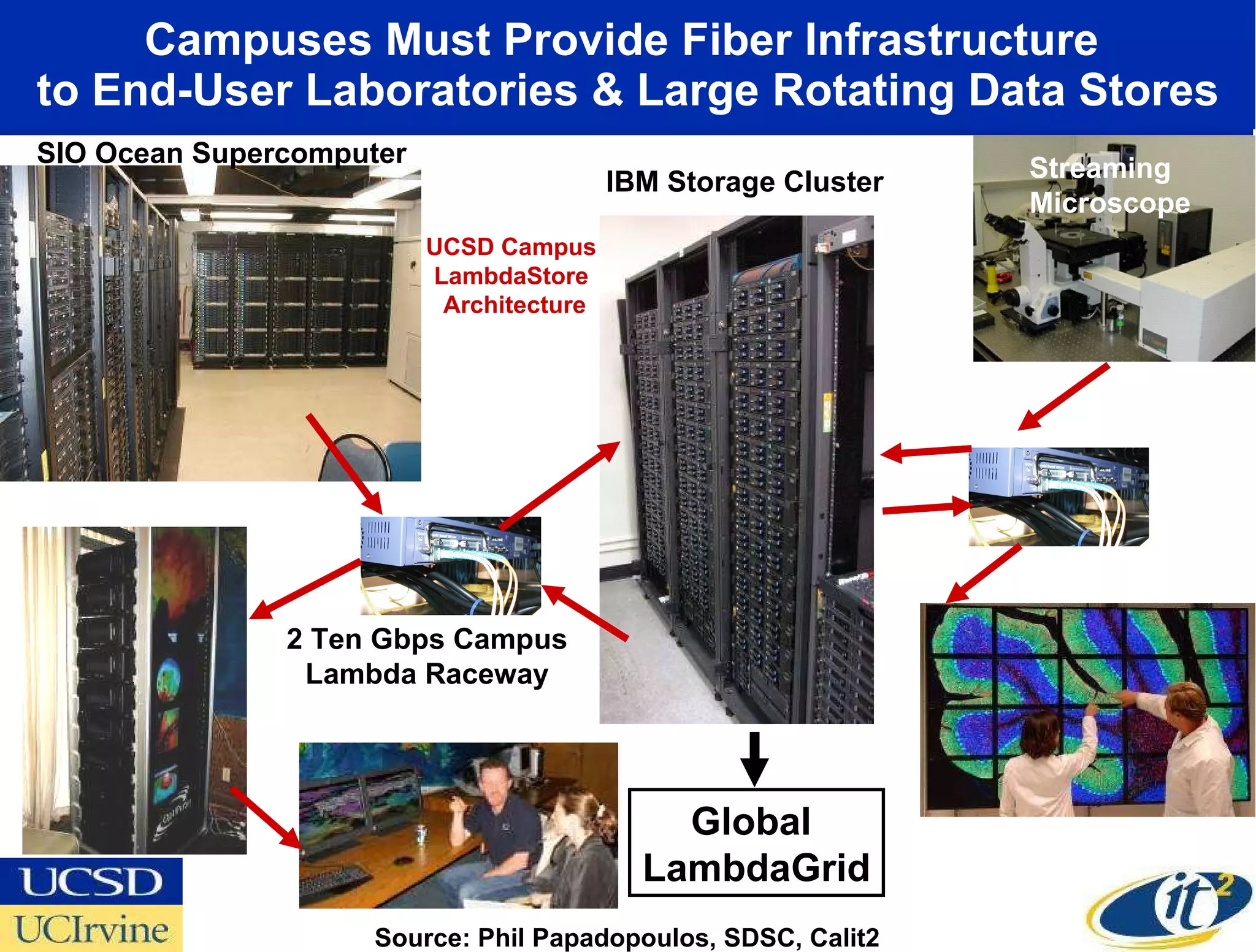

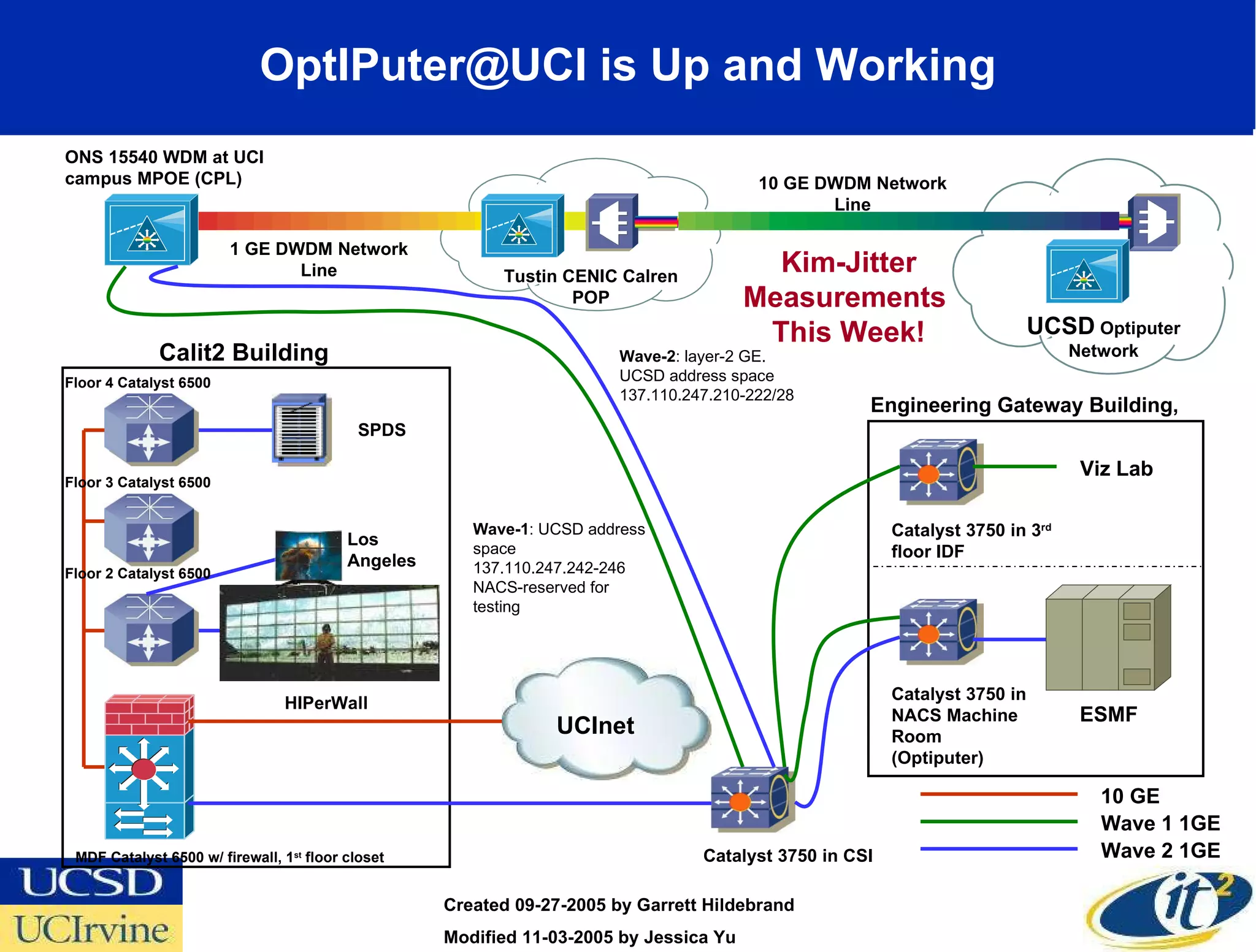

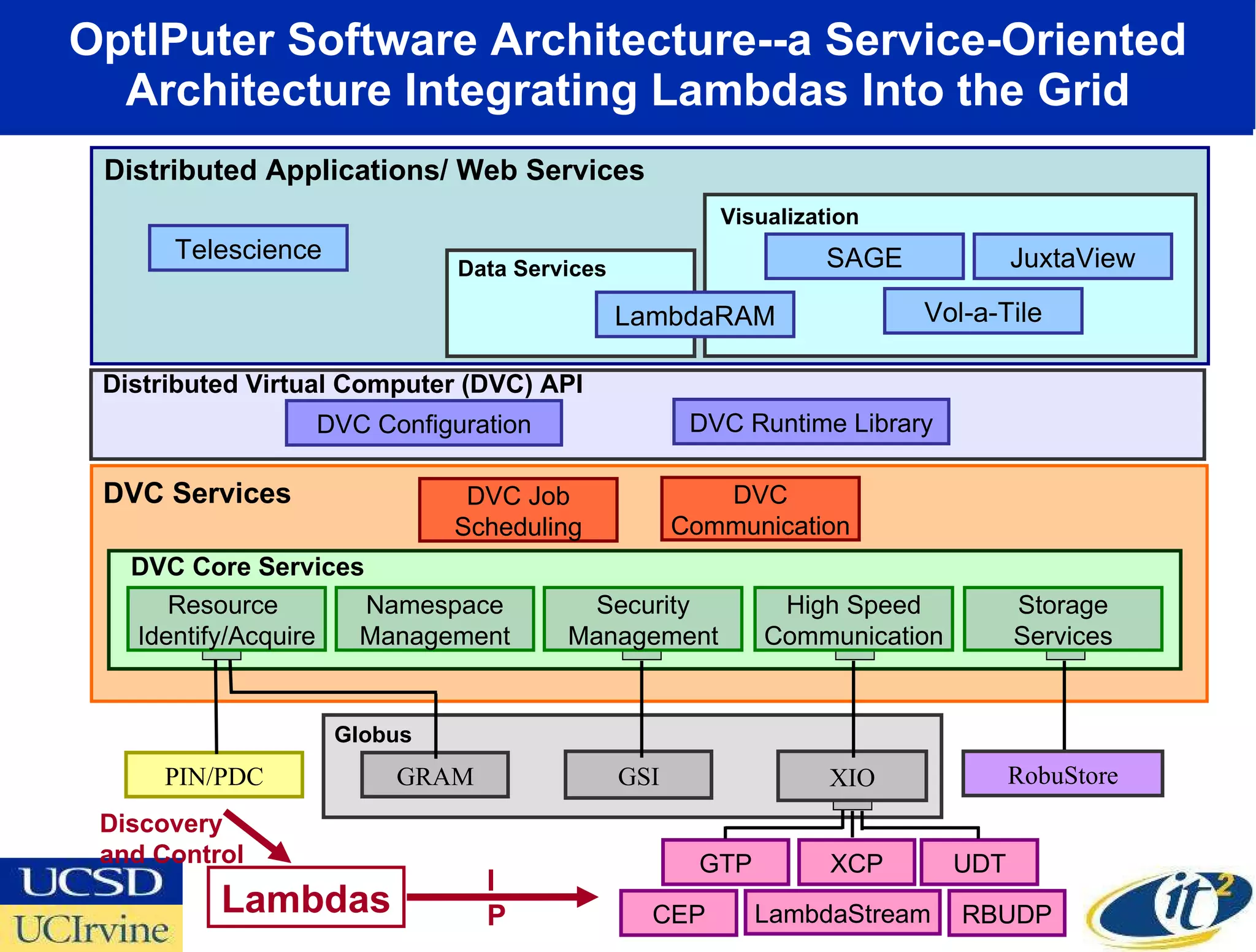

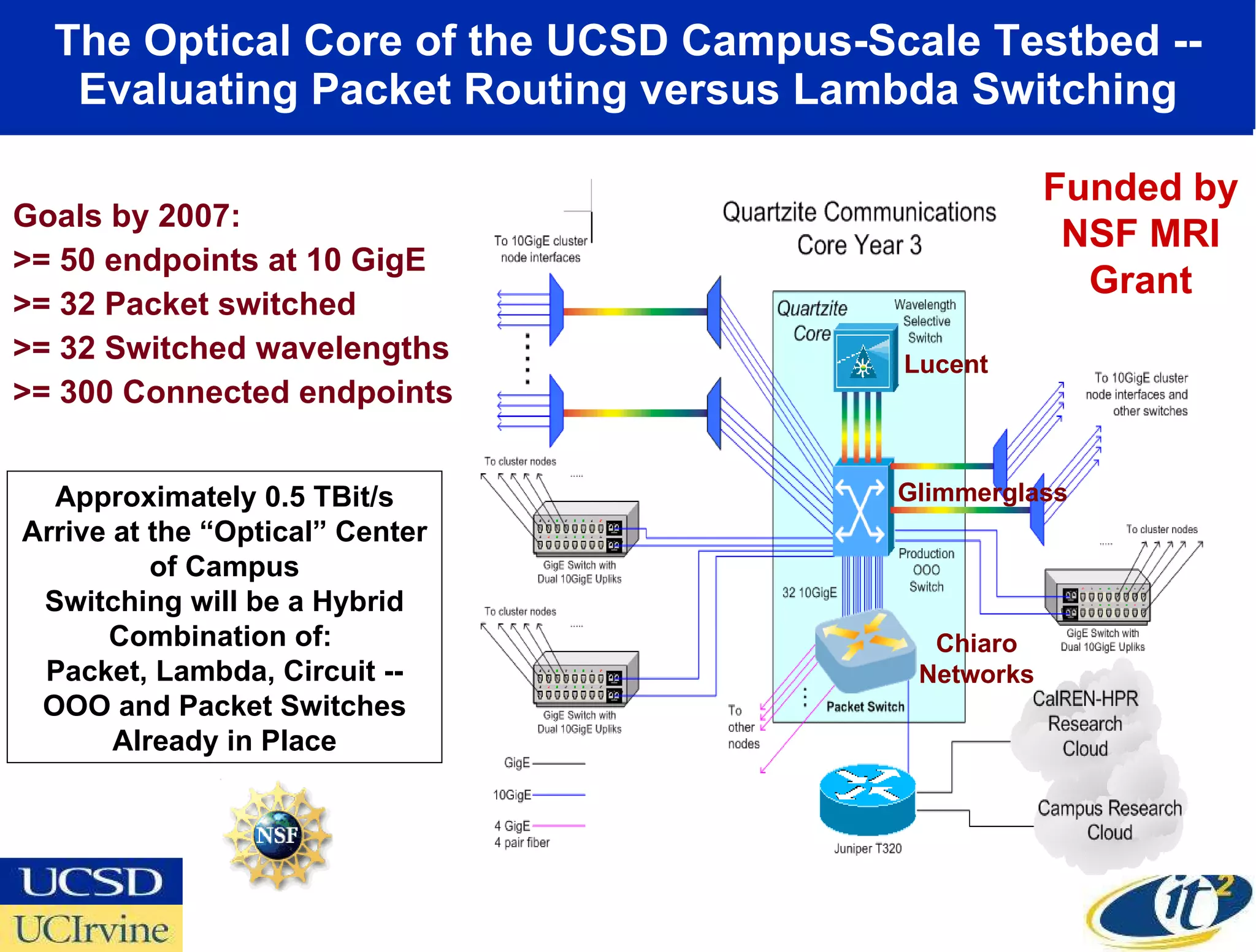

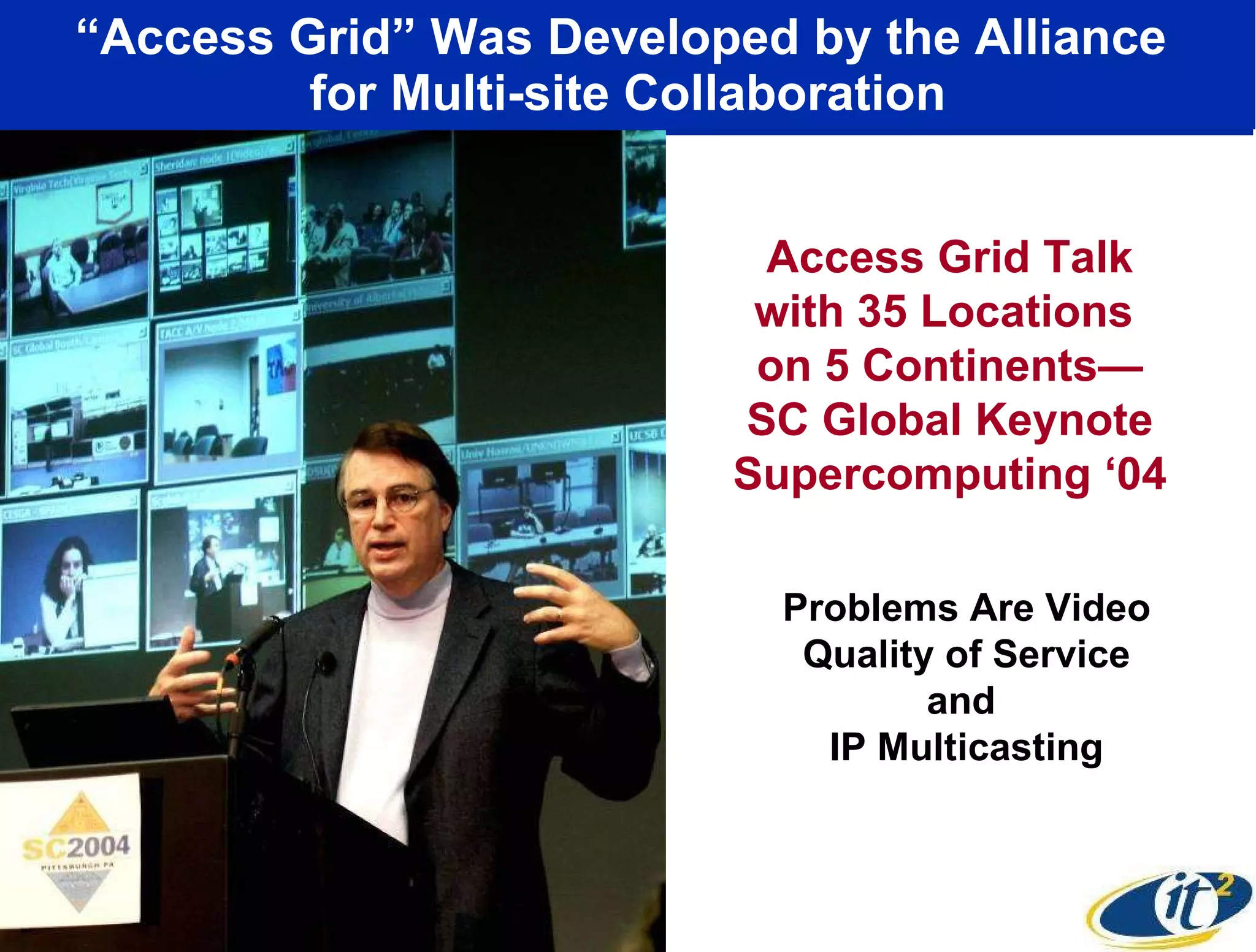

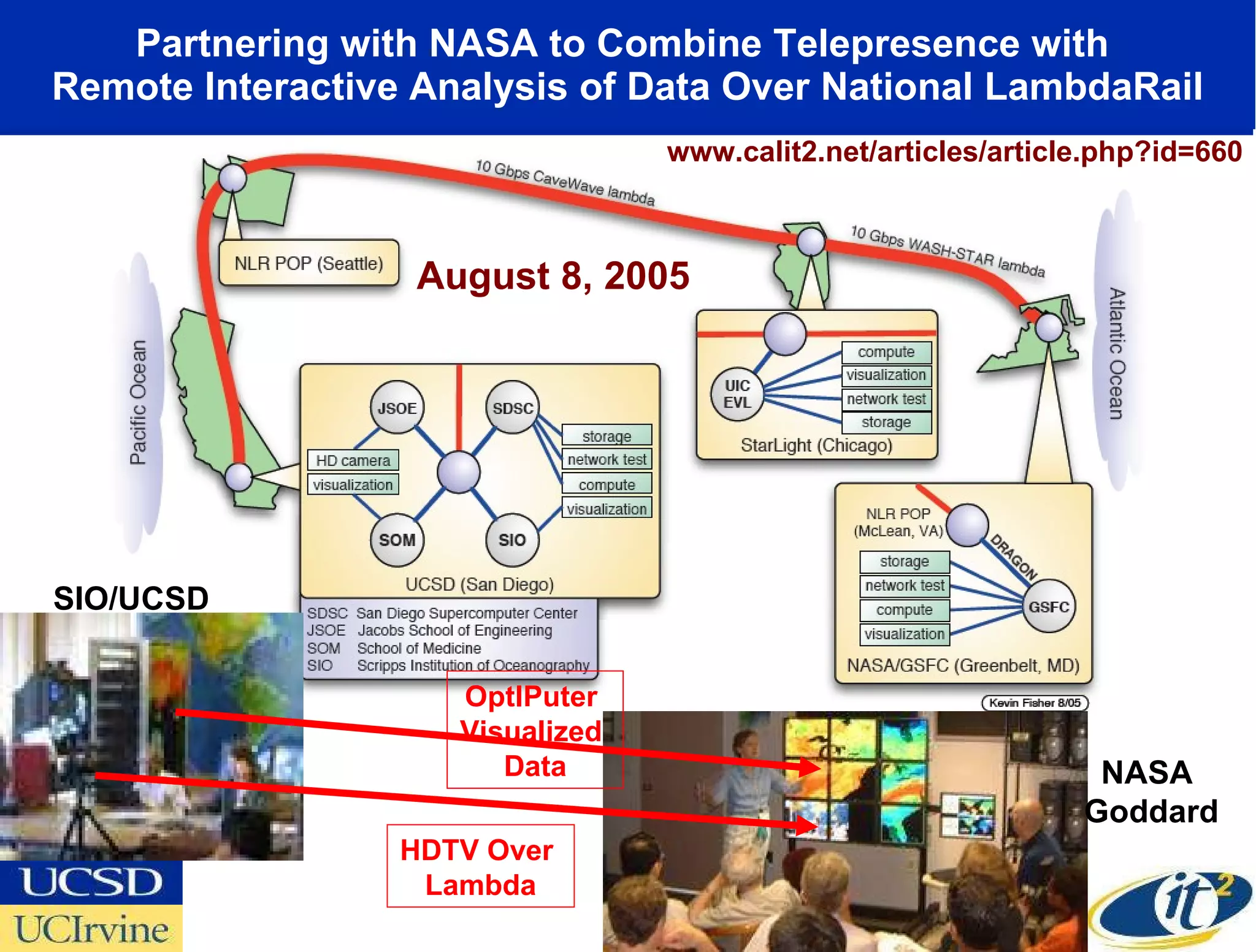

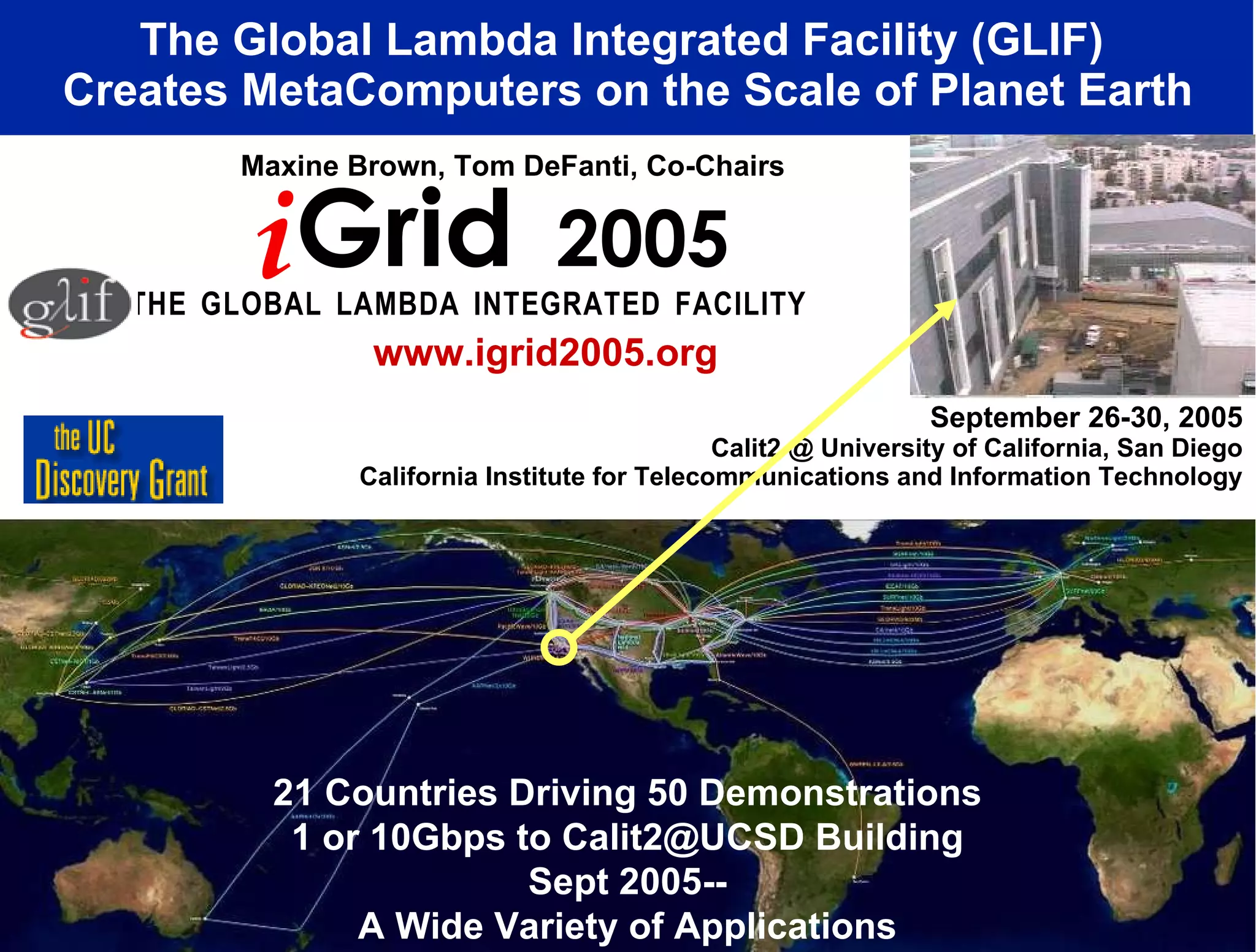

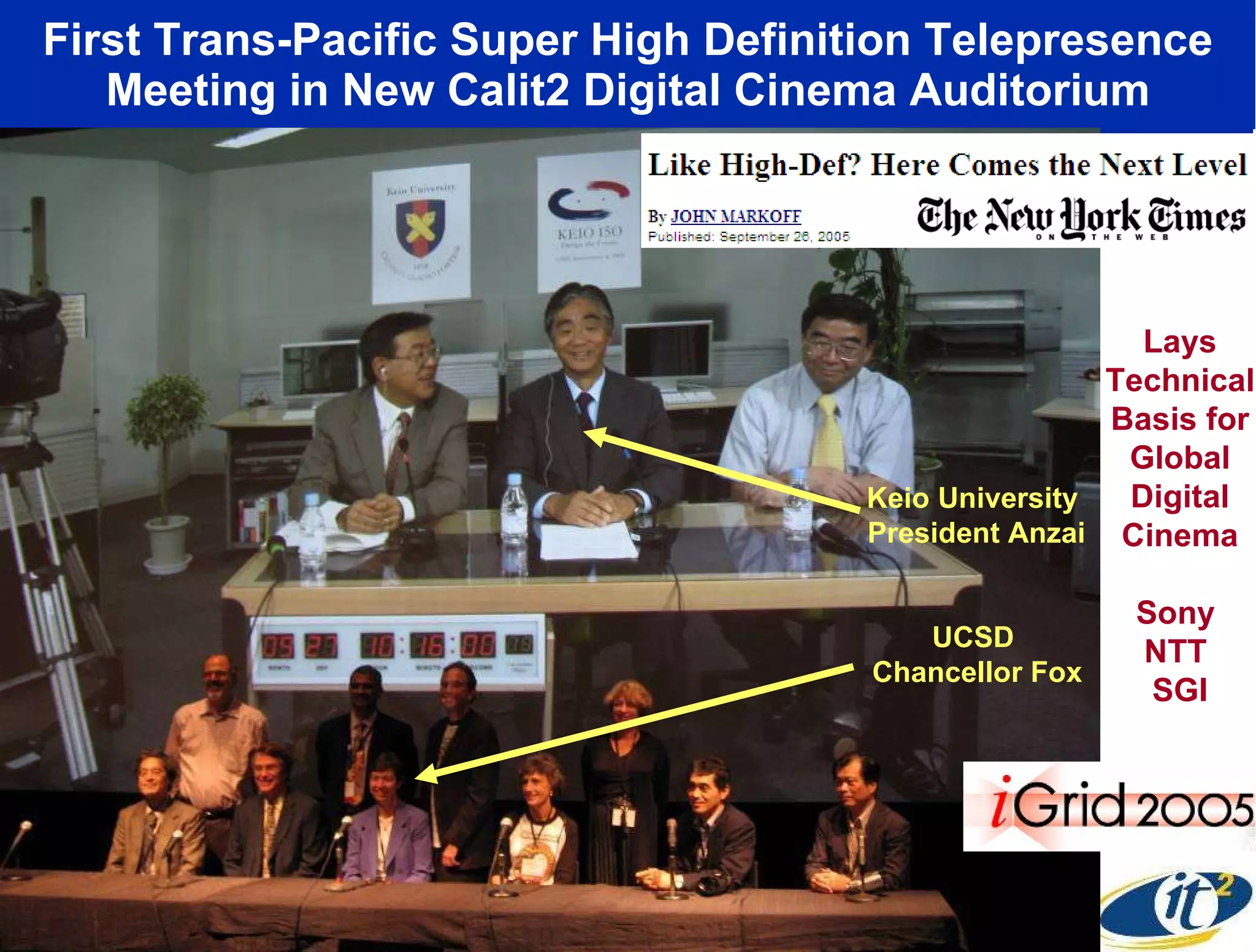

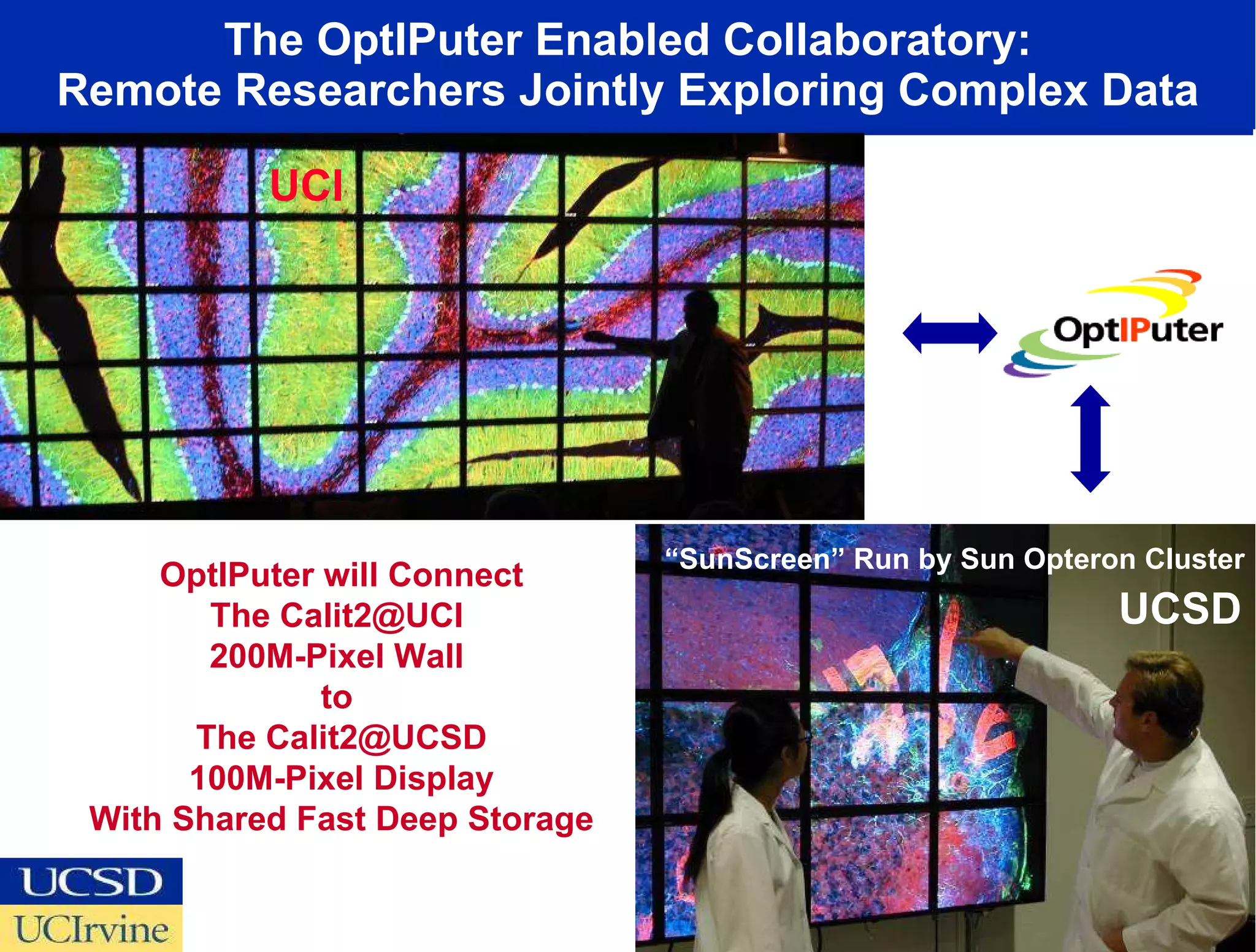

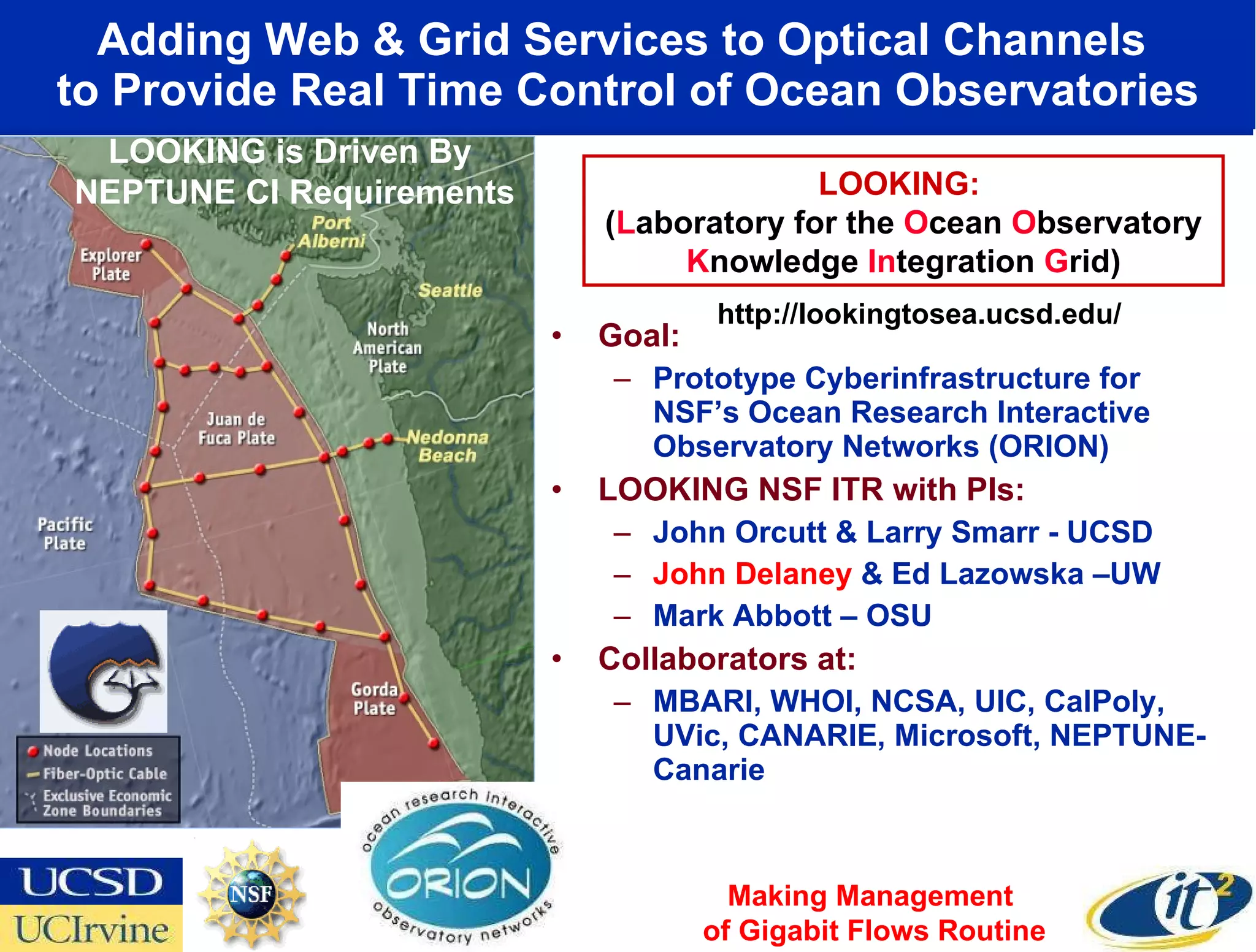

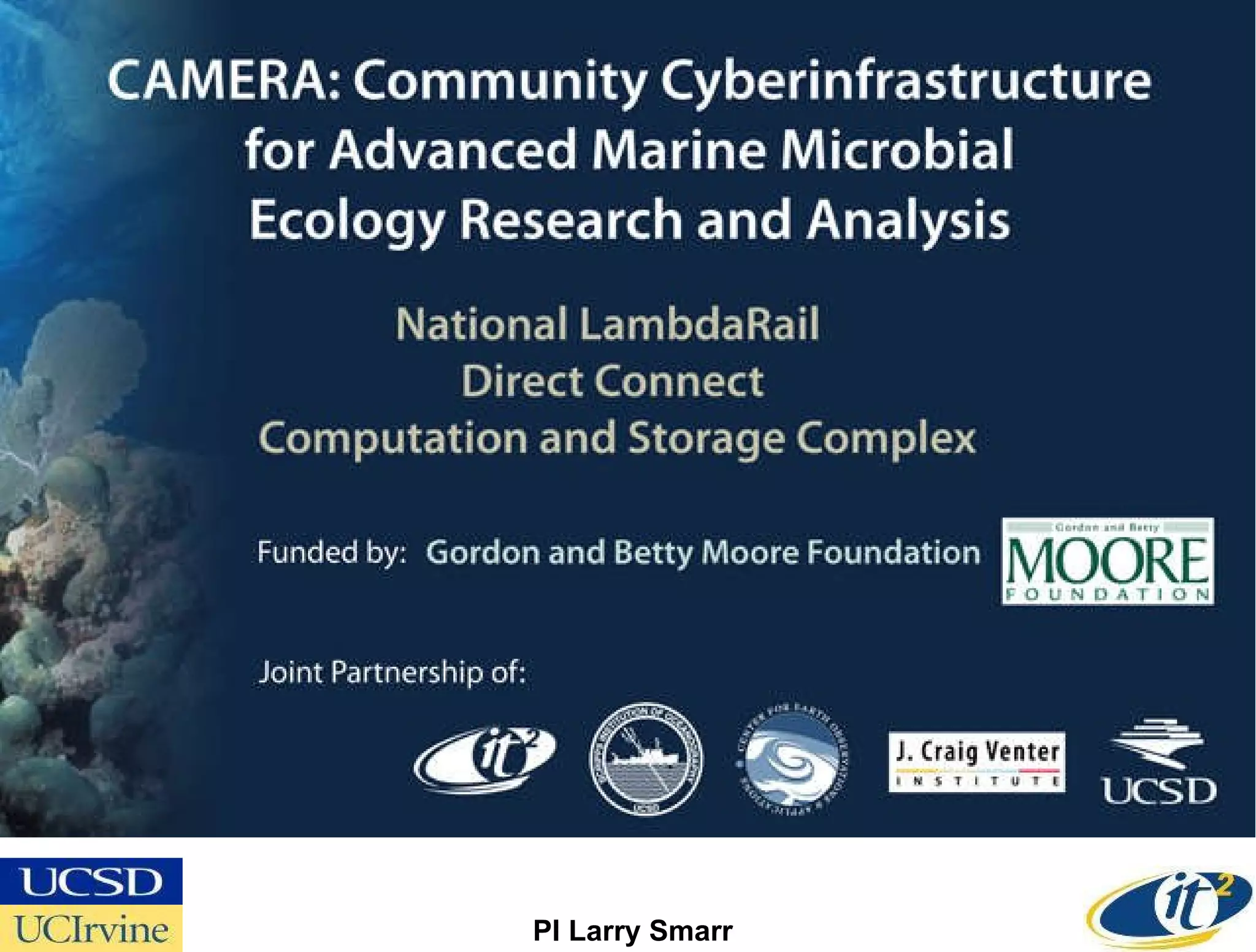

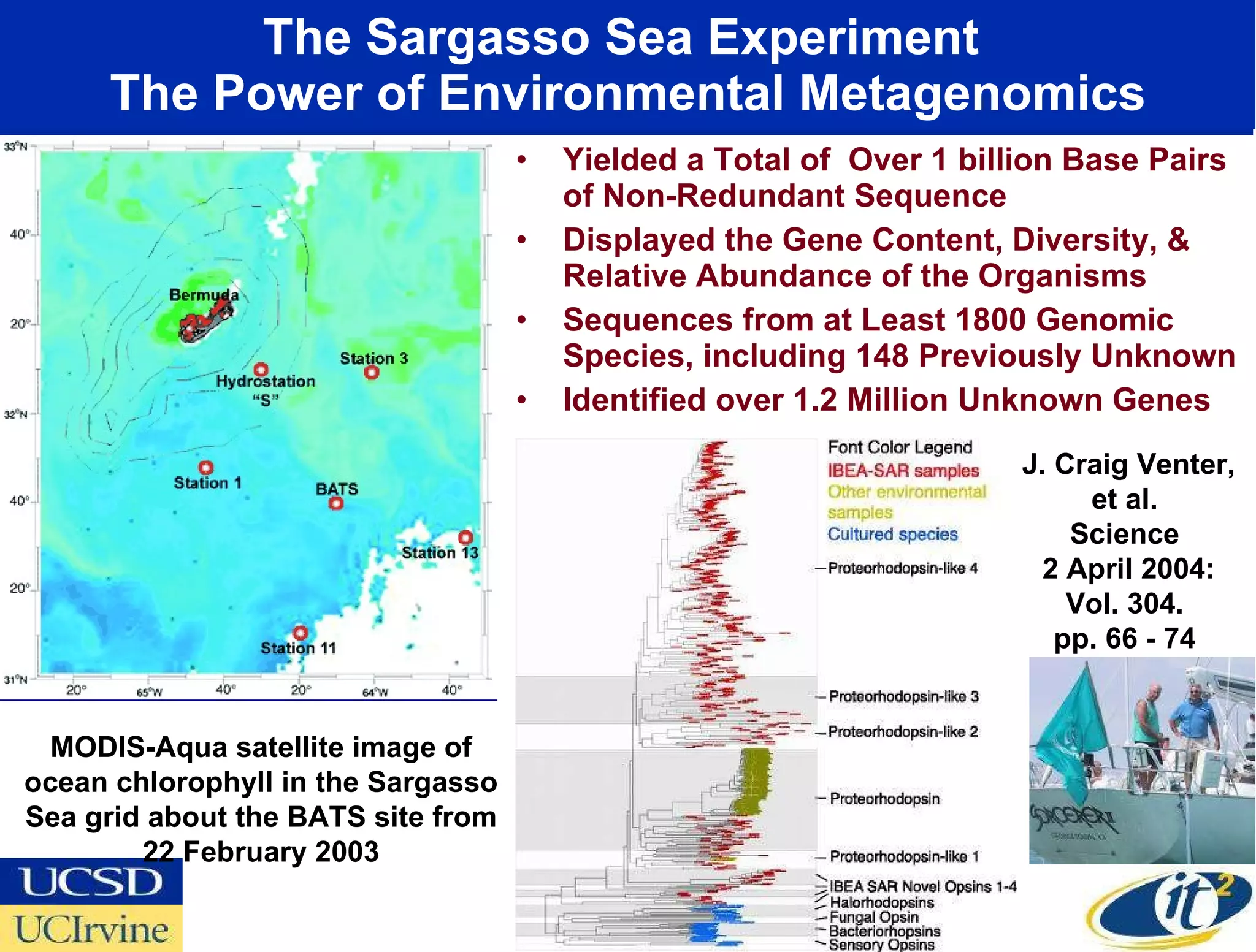

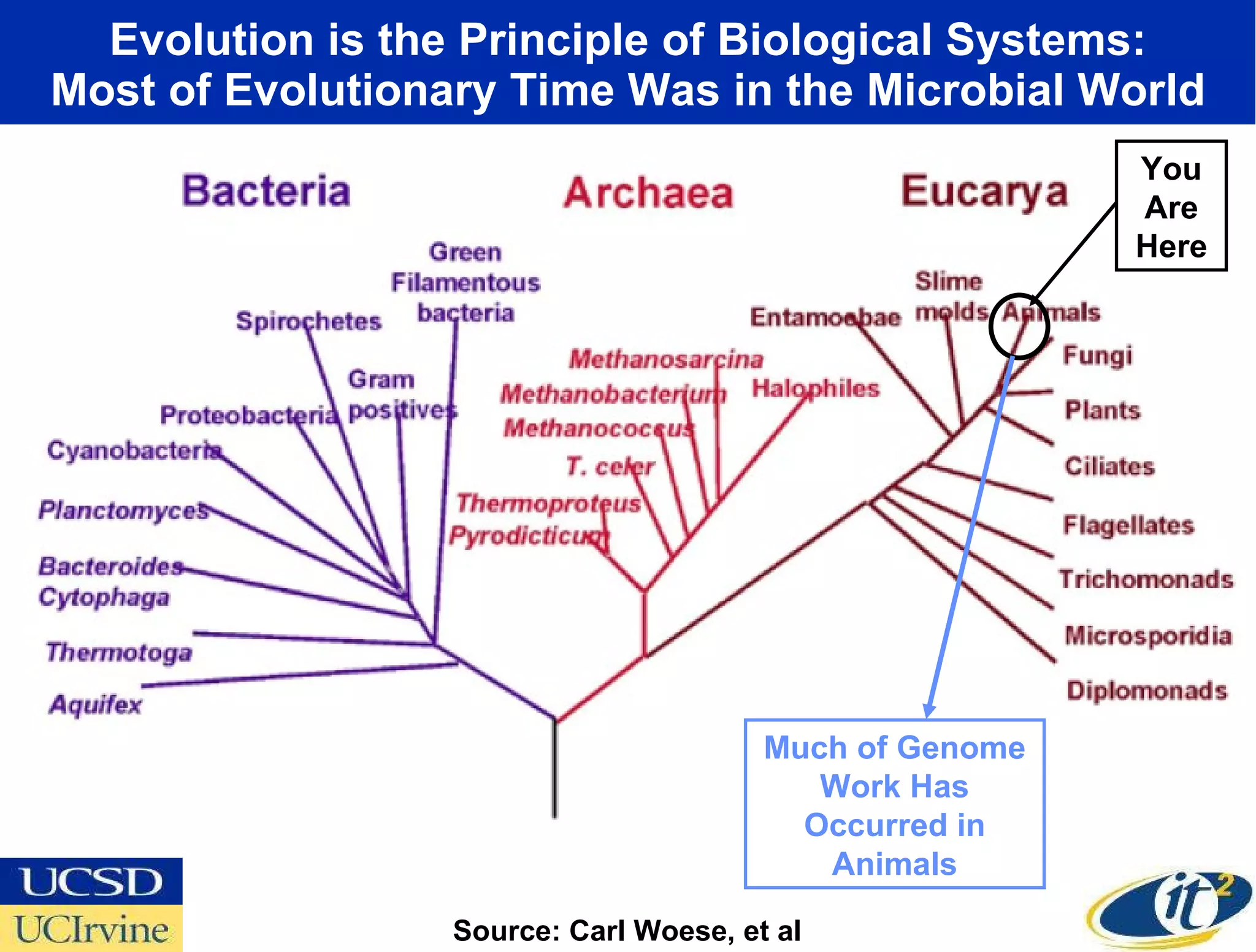

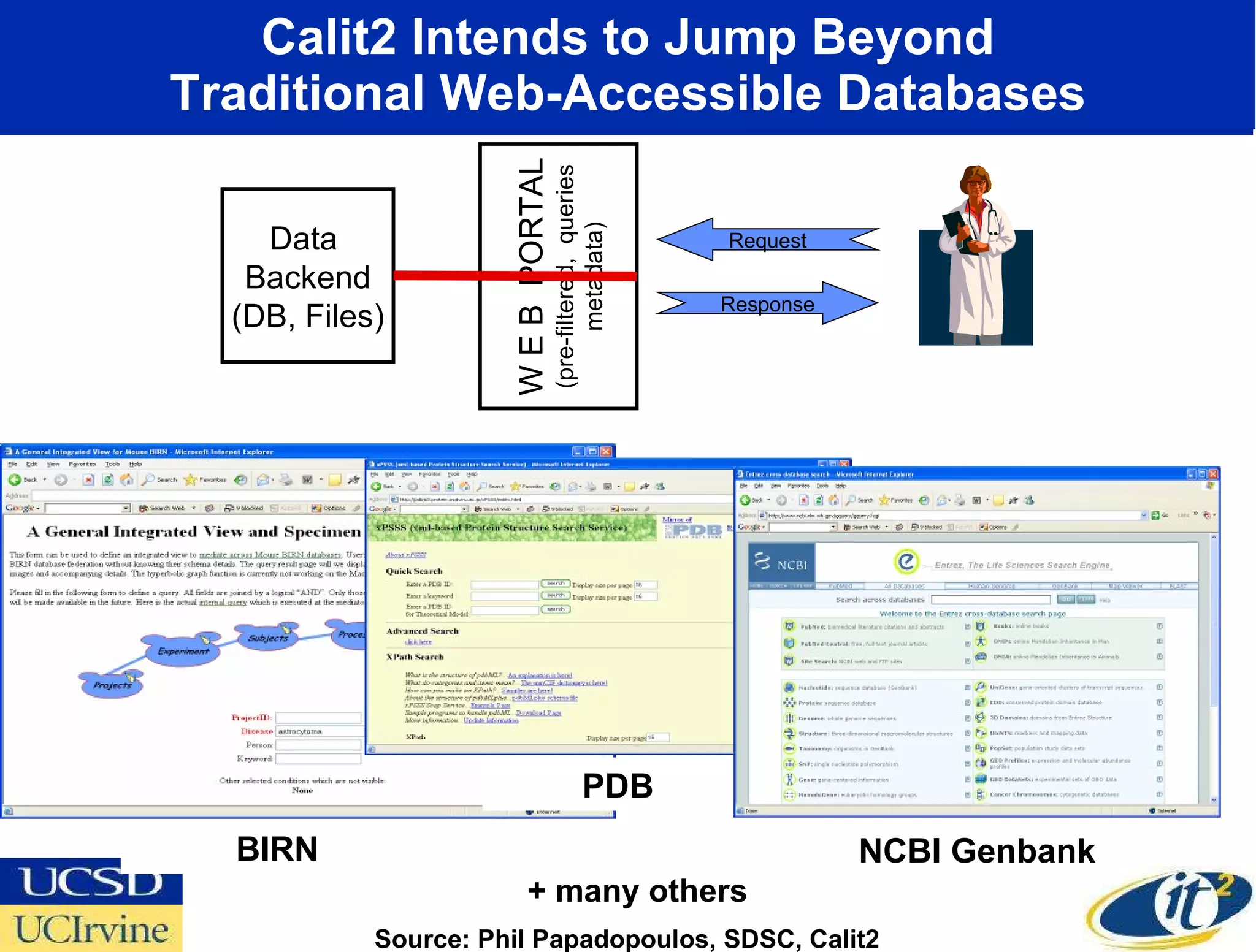

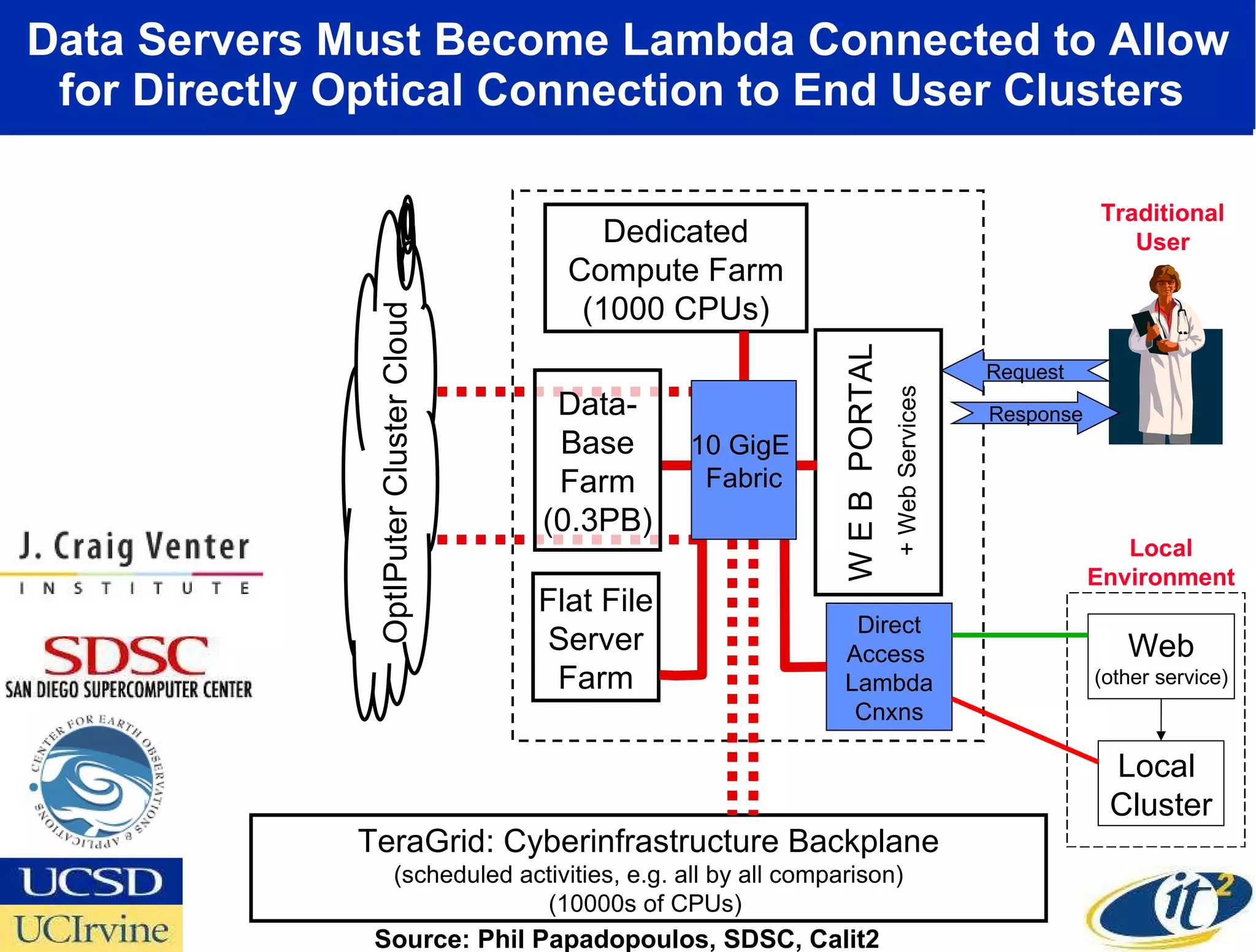

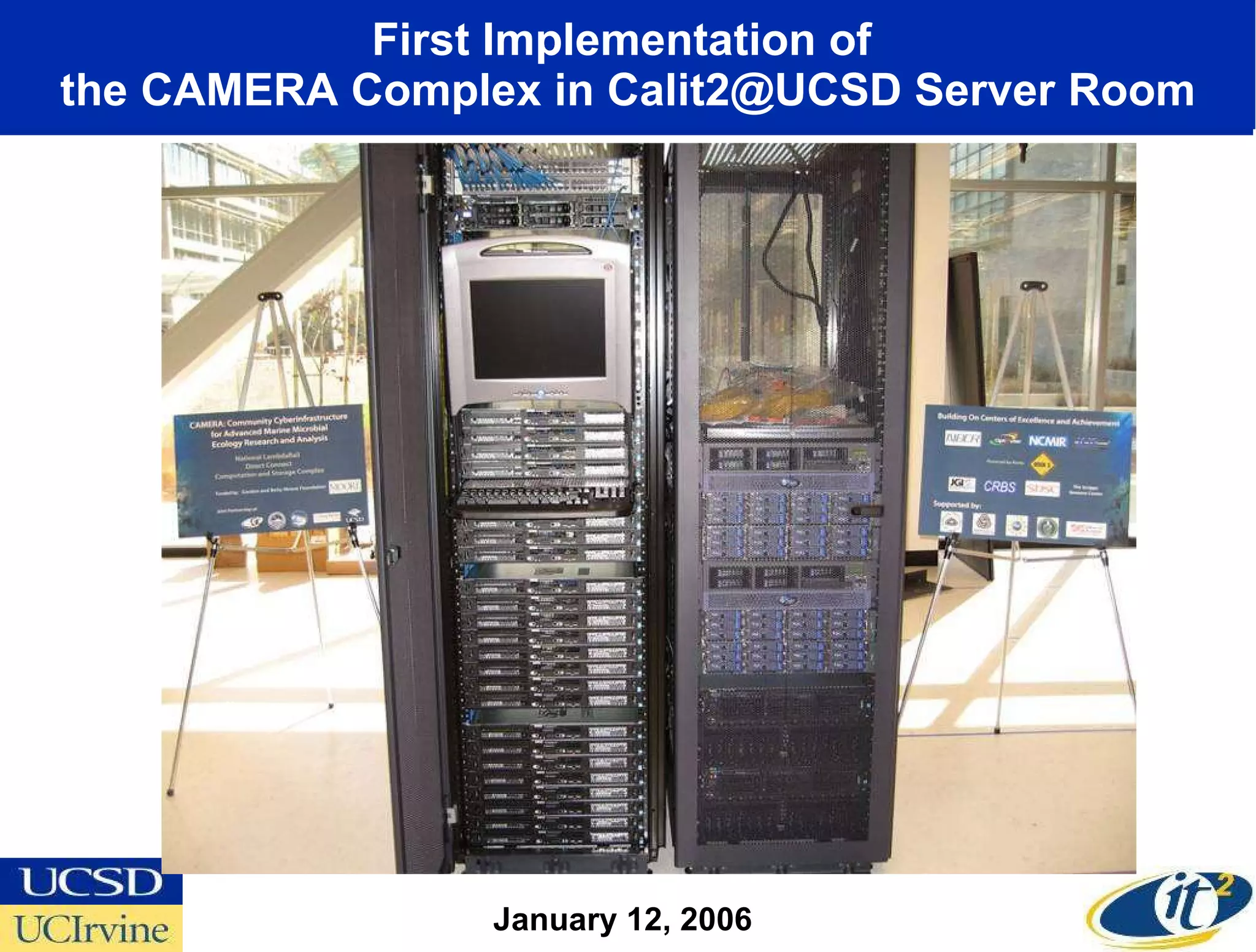

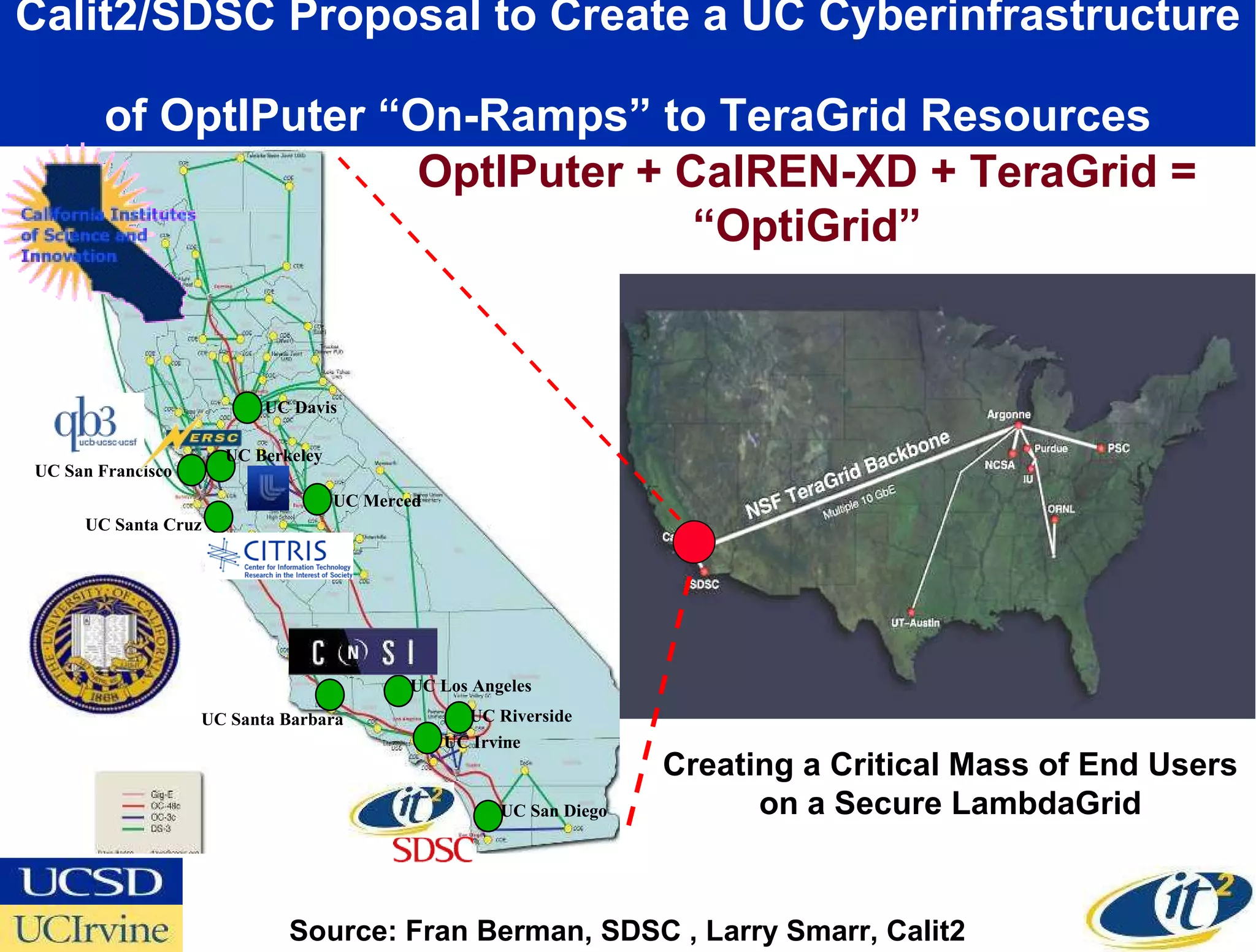

The document outlines Dr. Larry Smarr's research on metacomputer architecture, focusing on the evolution and implementation of global lambdagrids that integrate computing, storage, and network technologies. It traces the advances made since the early metacomputer concept of 1988 across four eras of development, and highlights the use of optical paths, or lambdas, to increase data throughput for scientific research. It also describes the grants and collaborative projects that apply this technology in fields such as biomedical imaging and oceanographic studies.