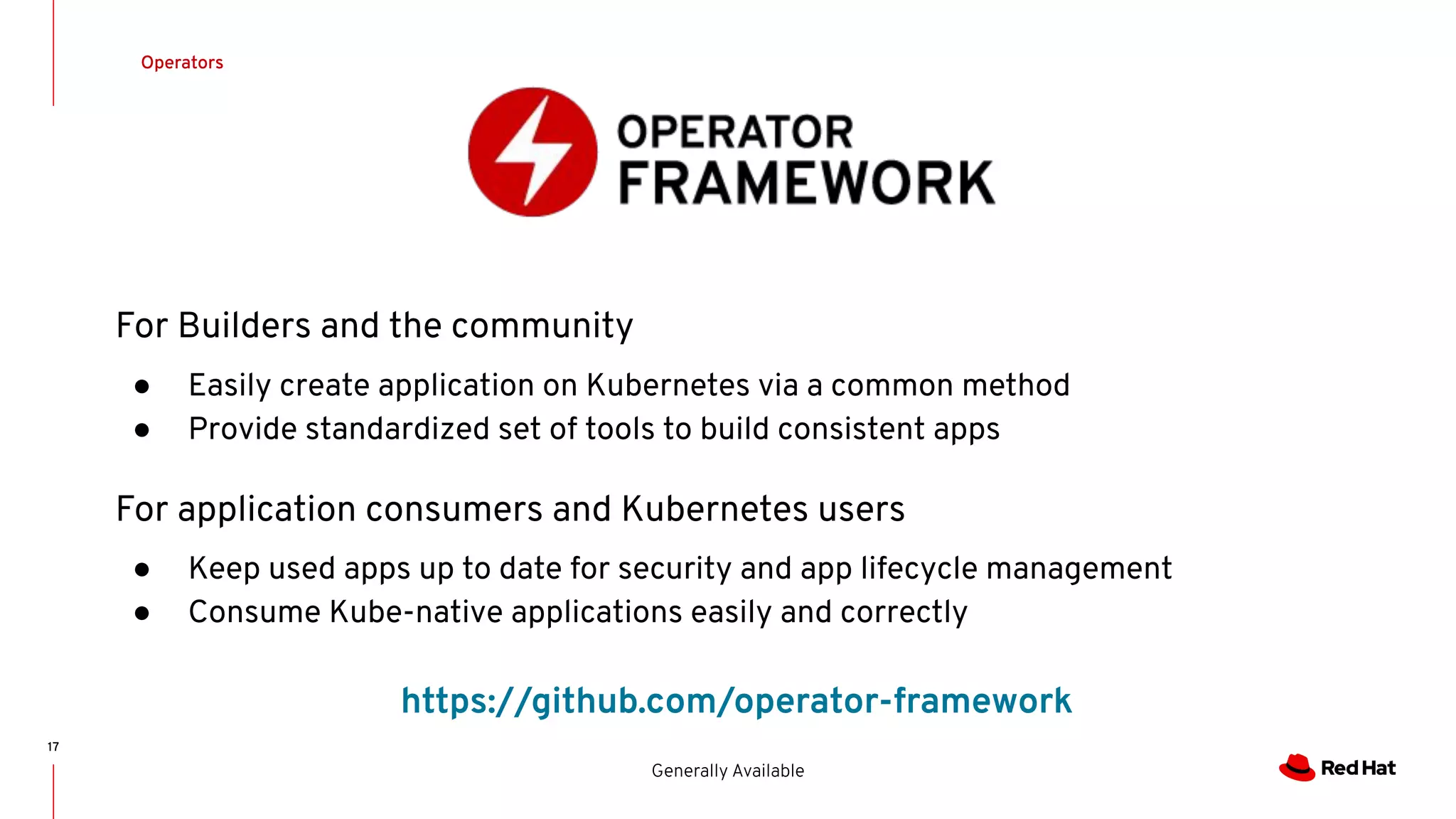

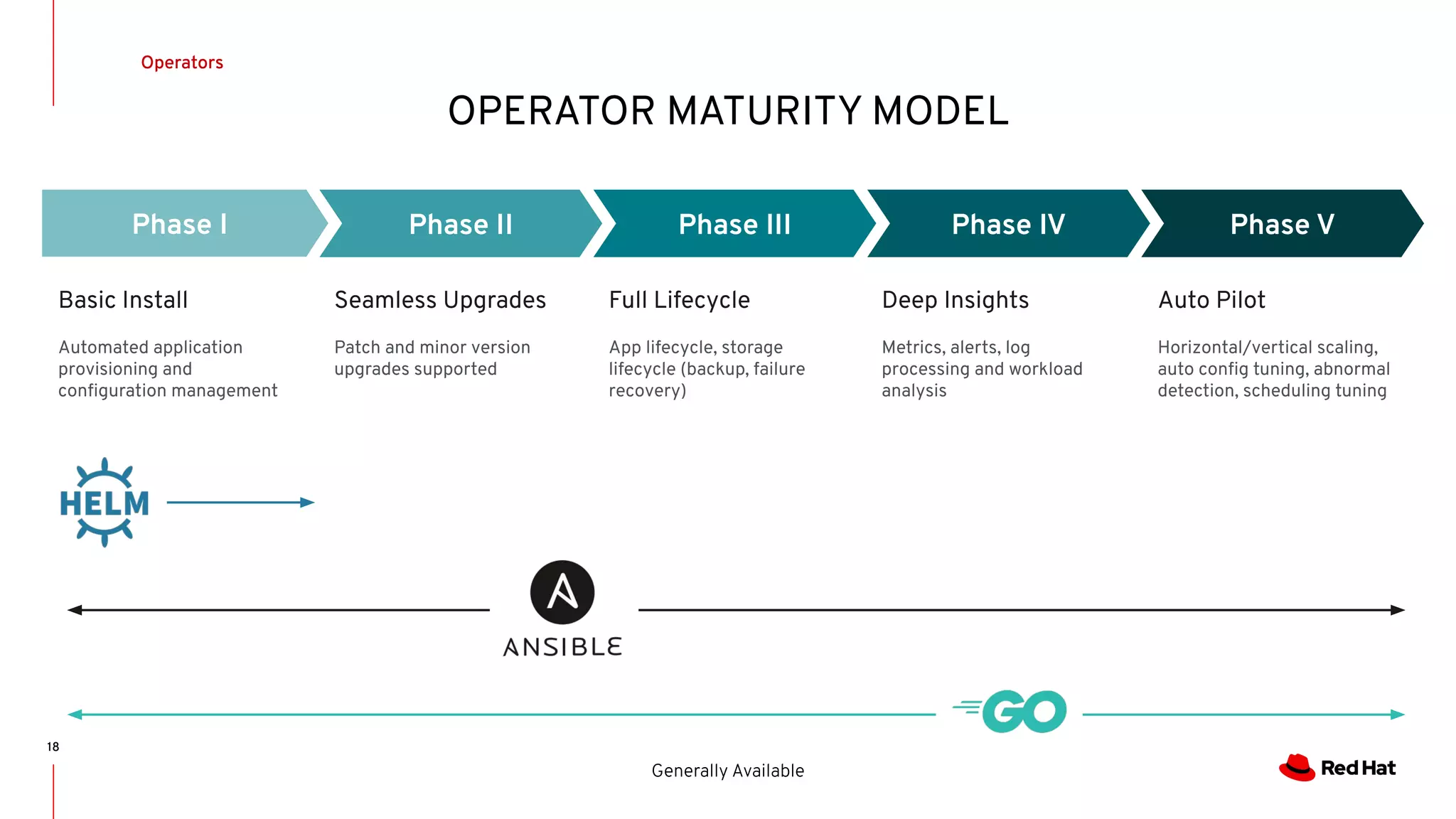

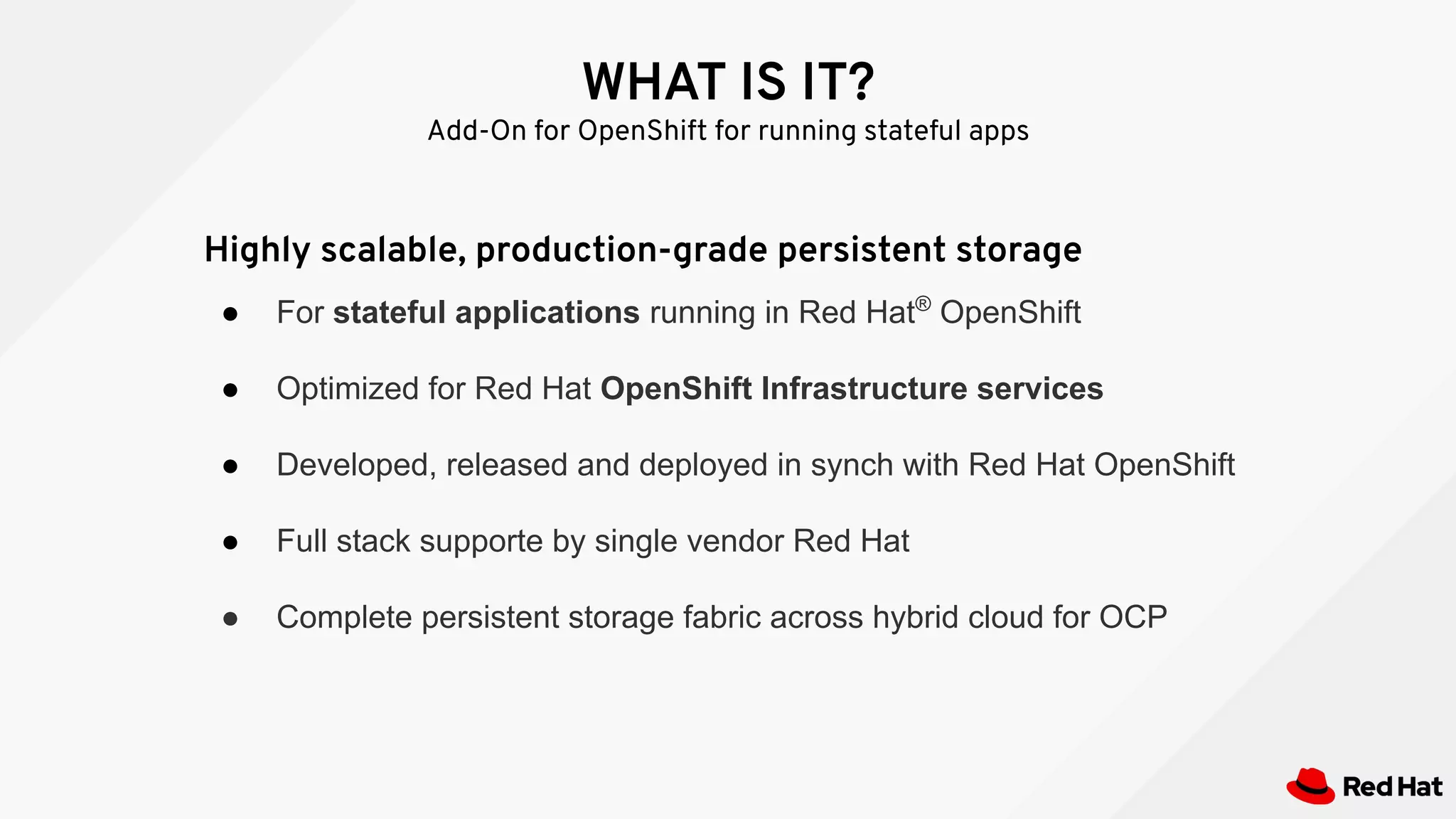

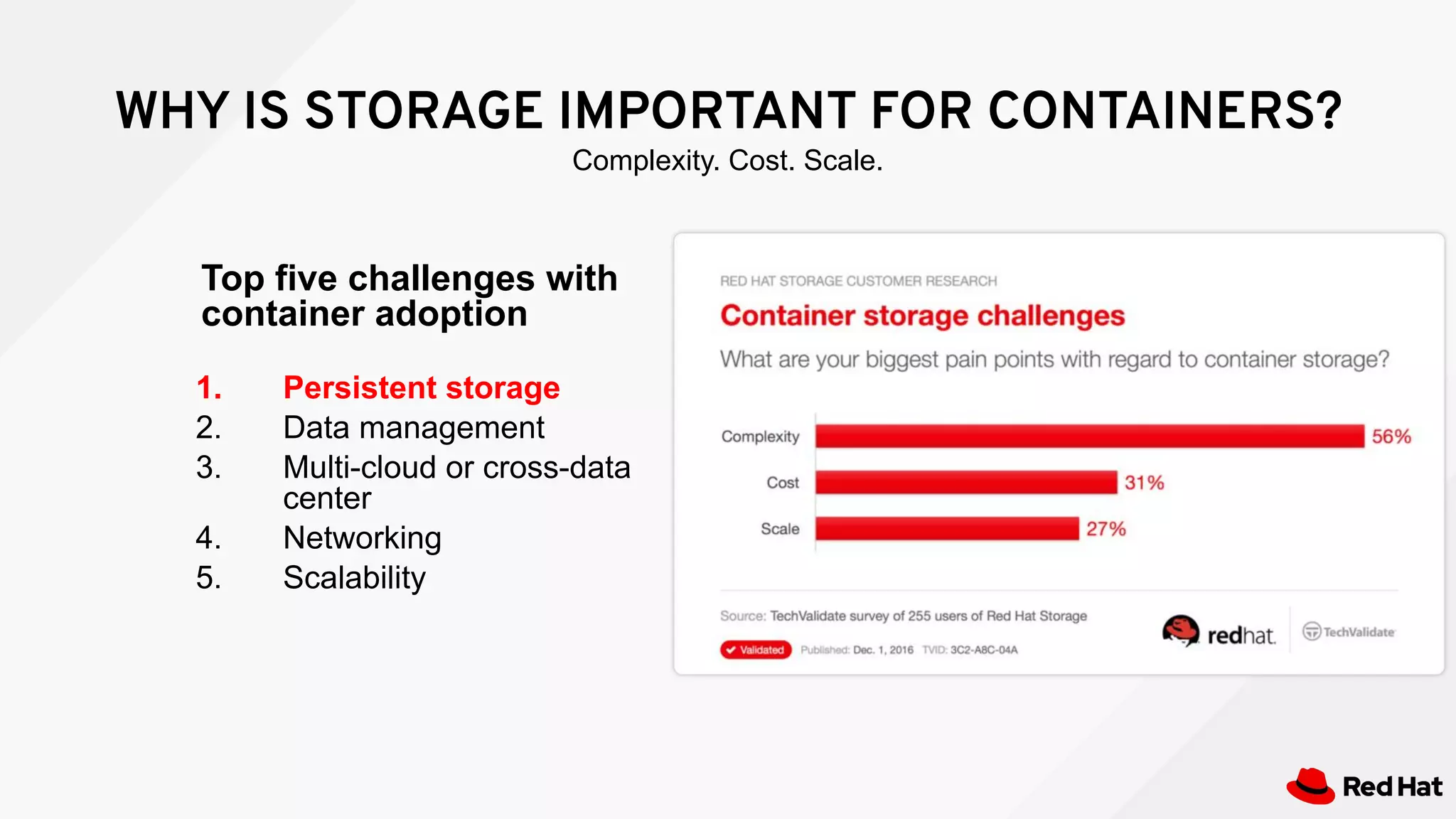

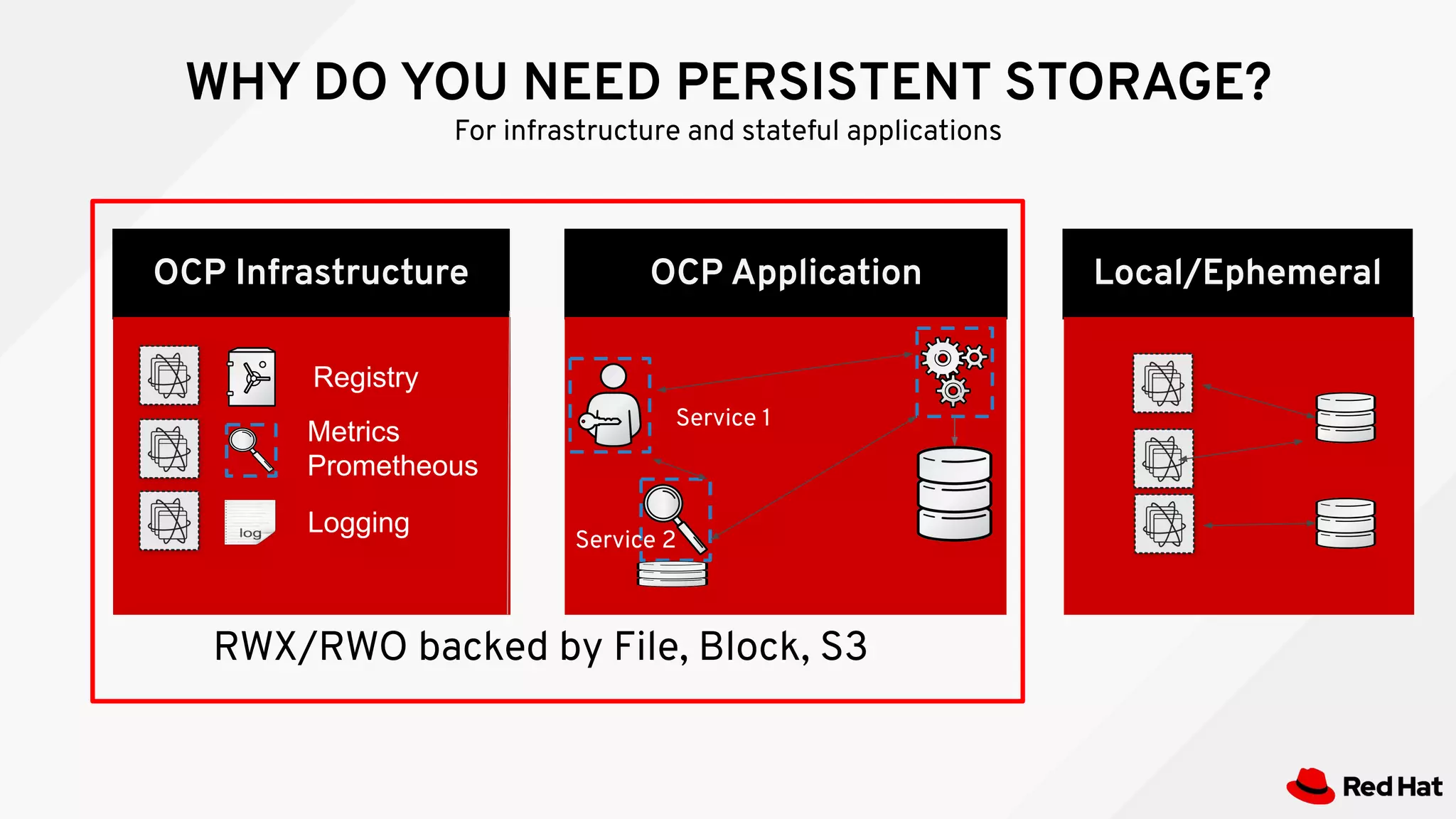

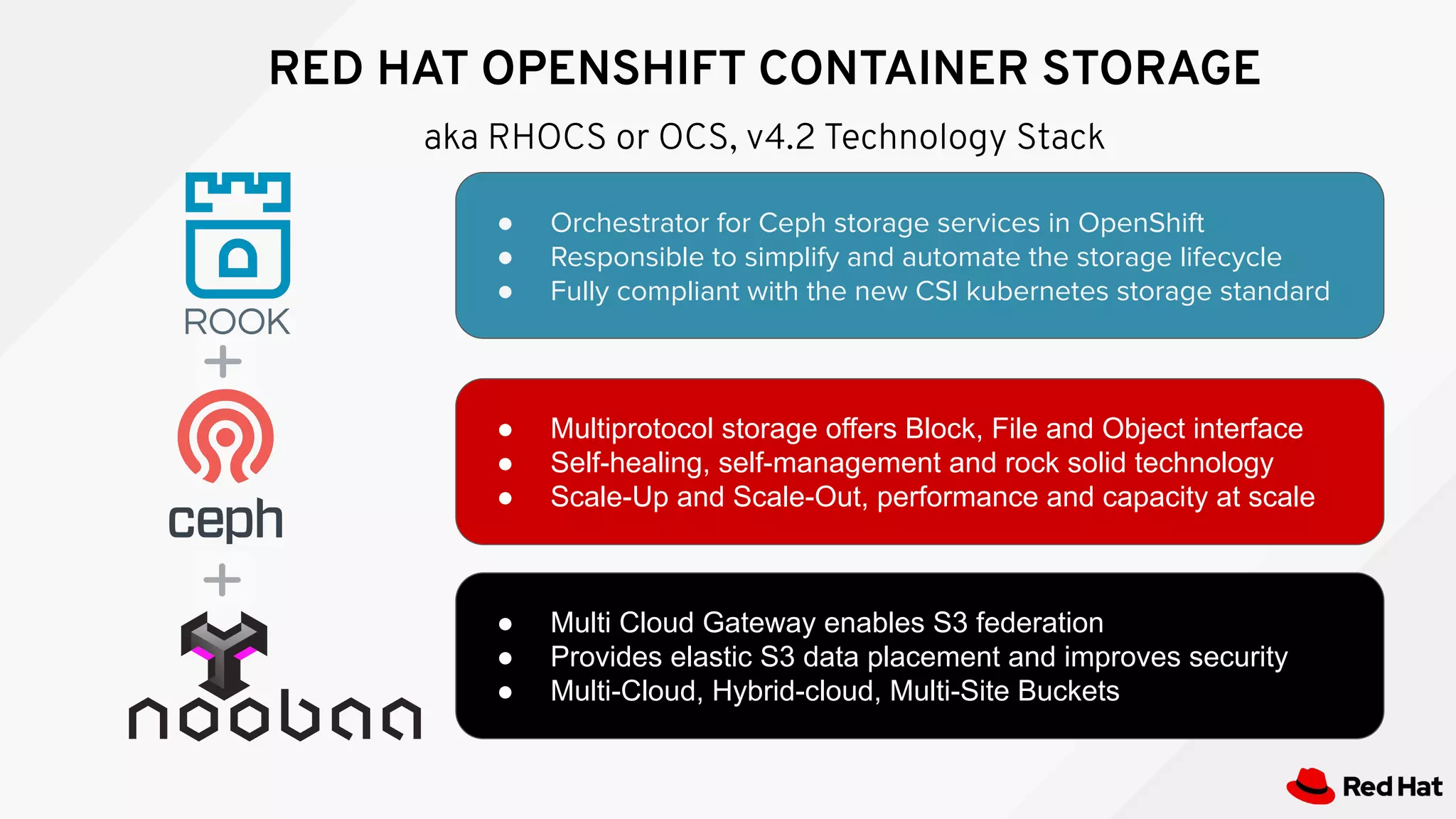

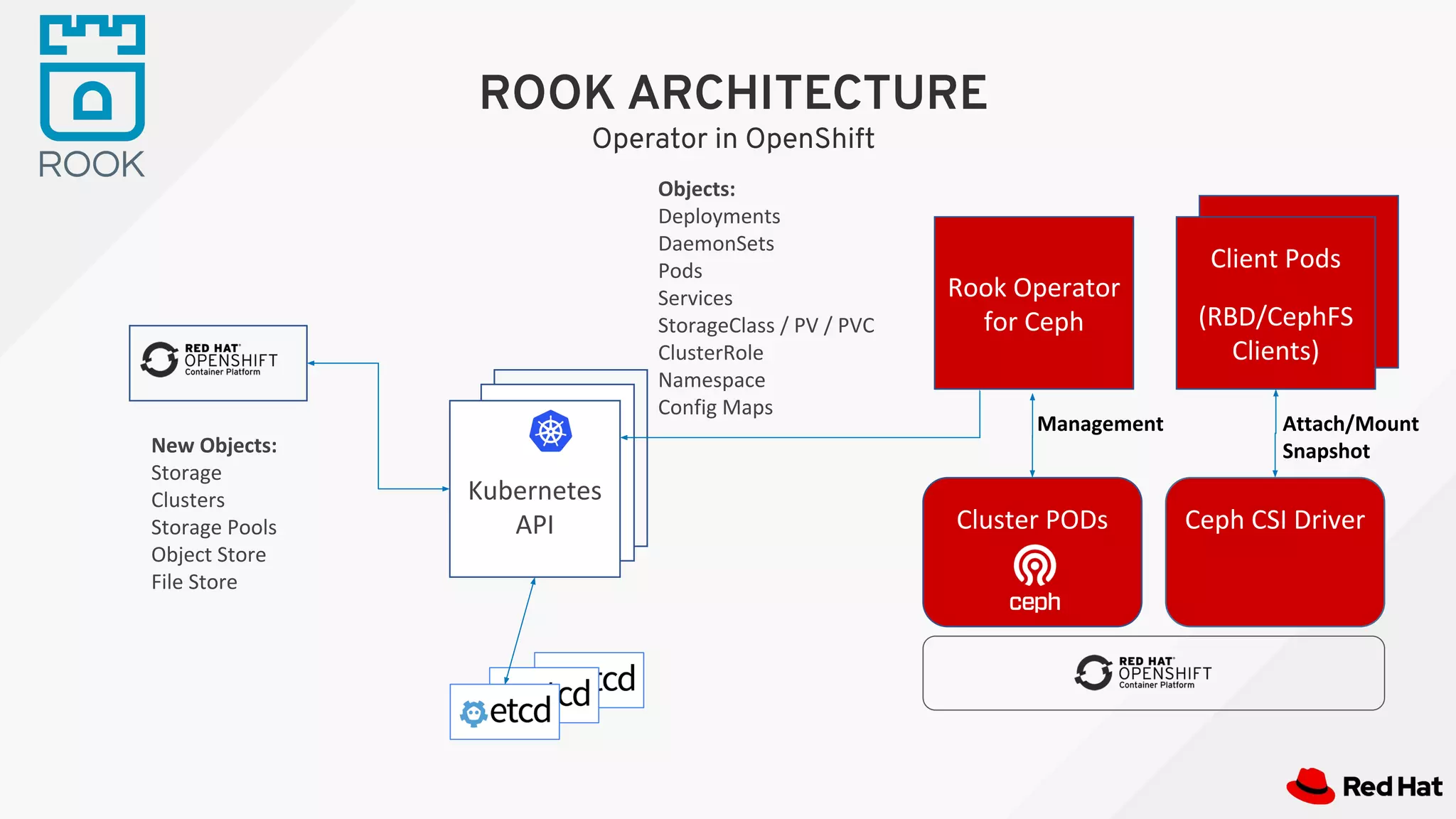

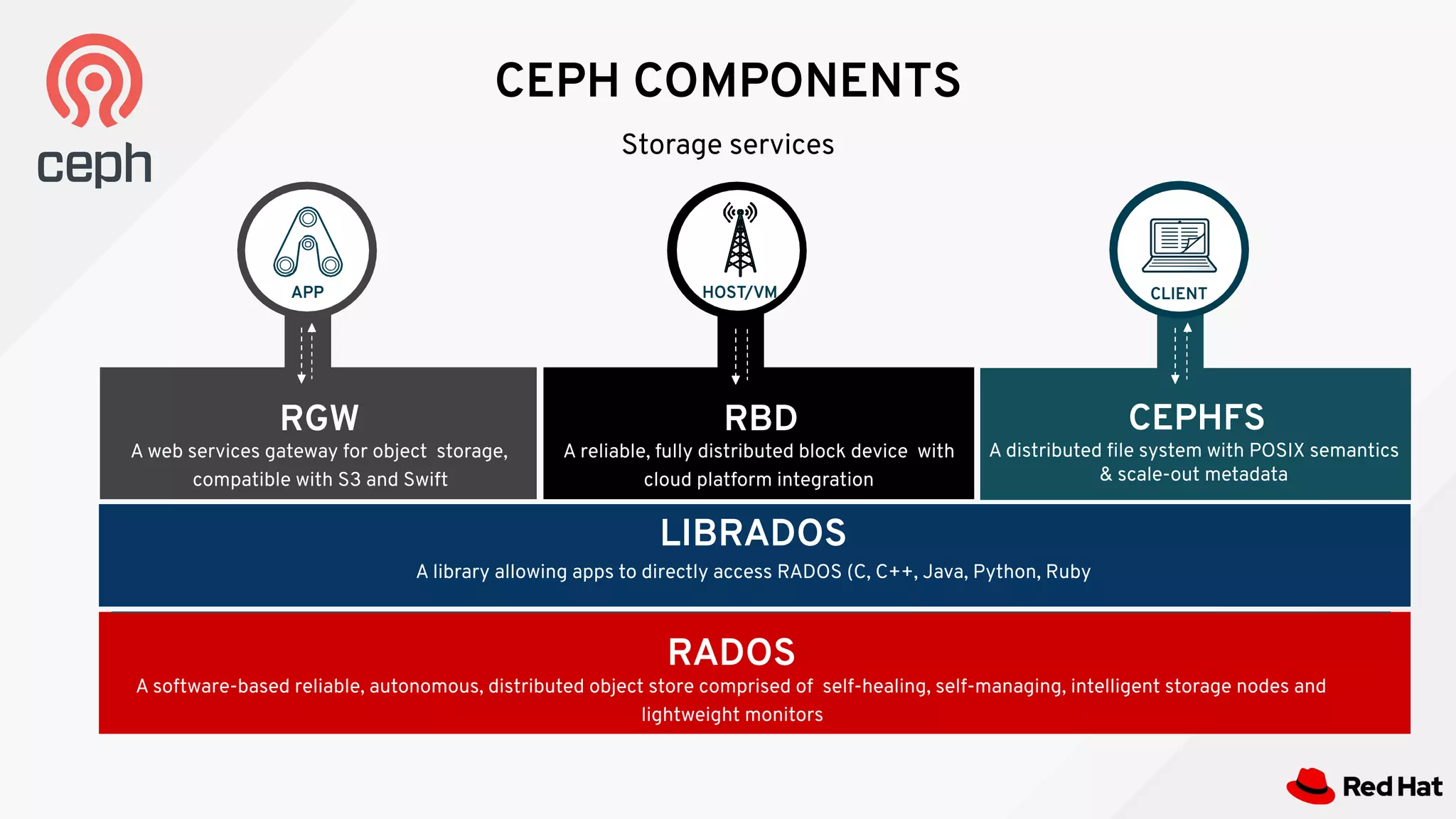

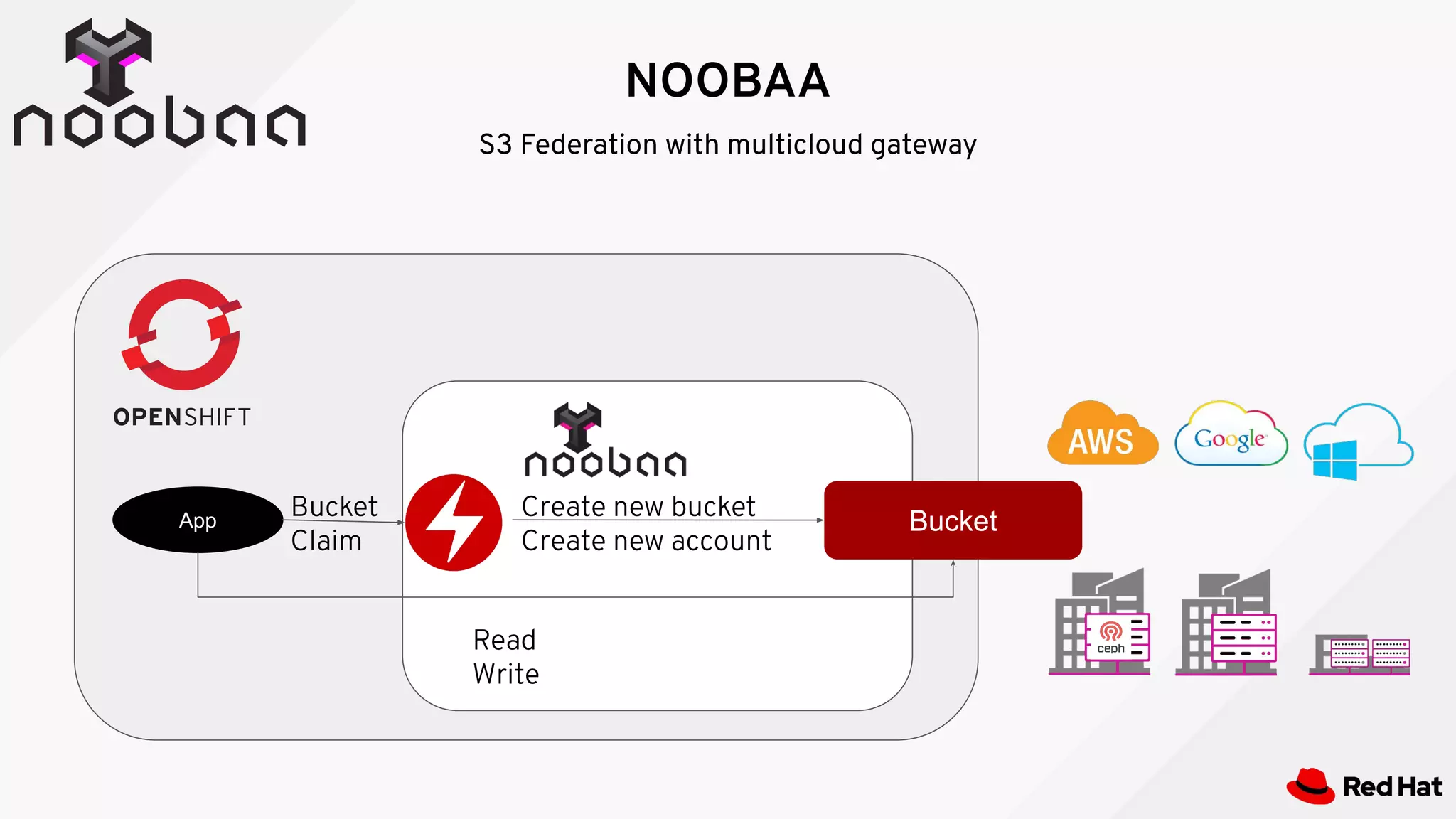

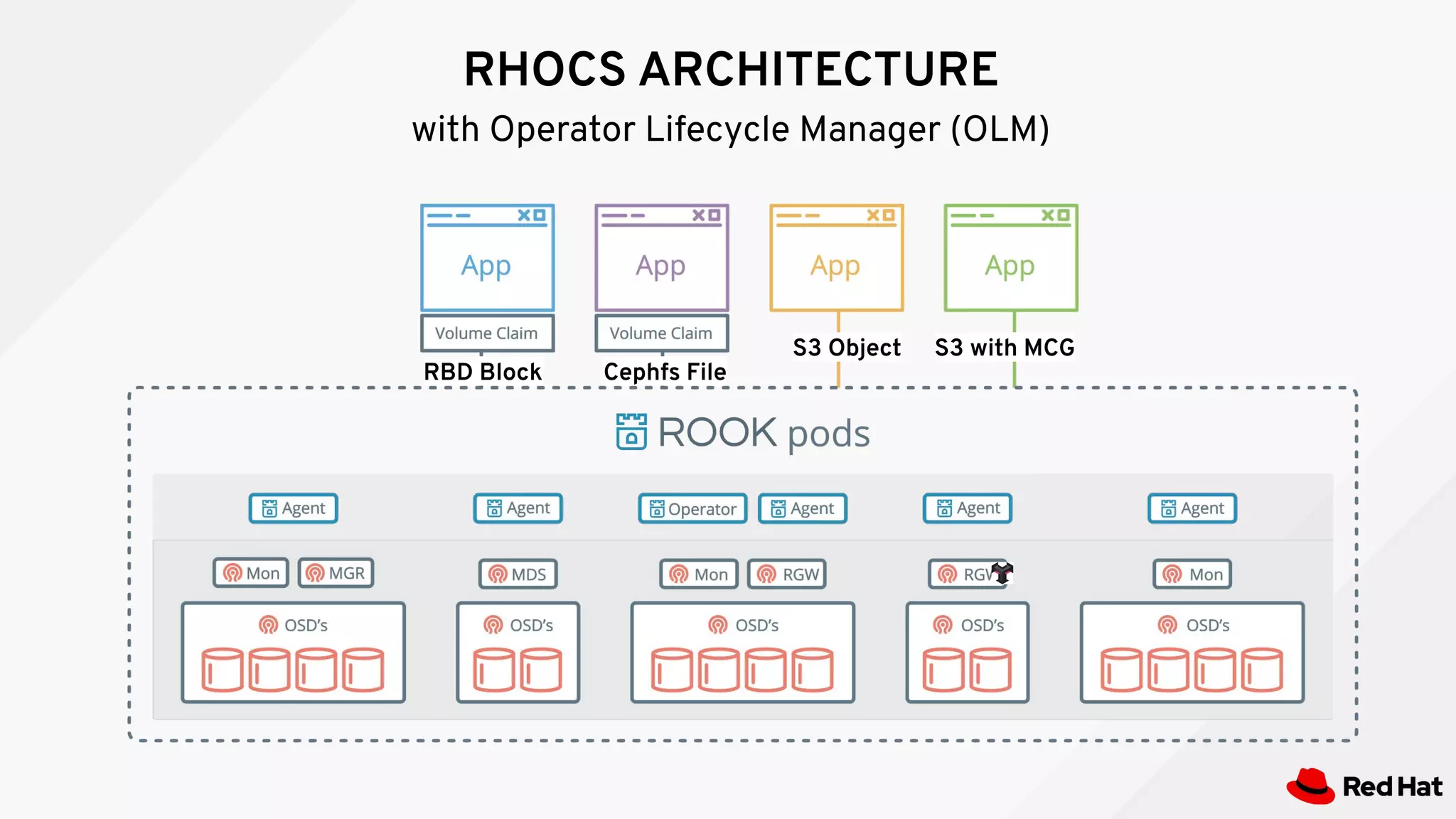

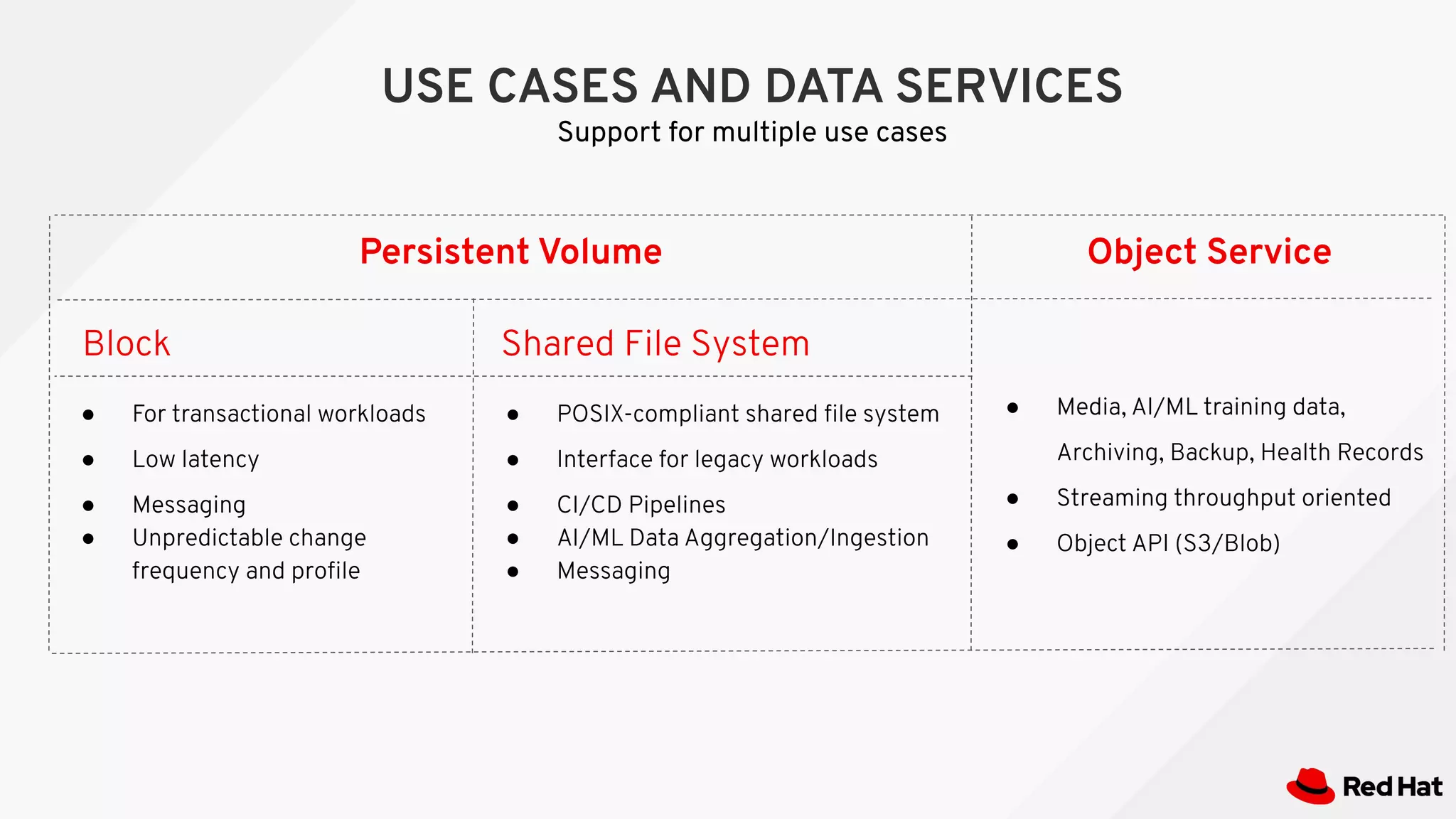

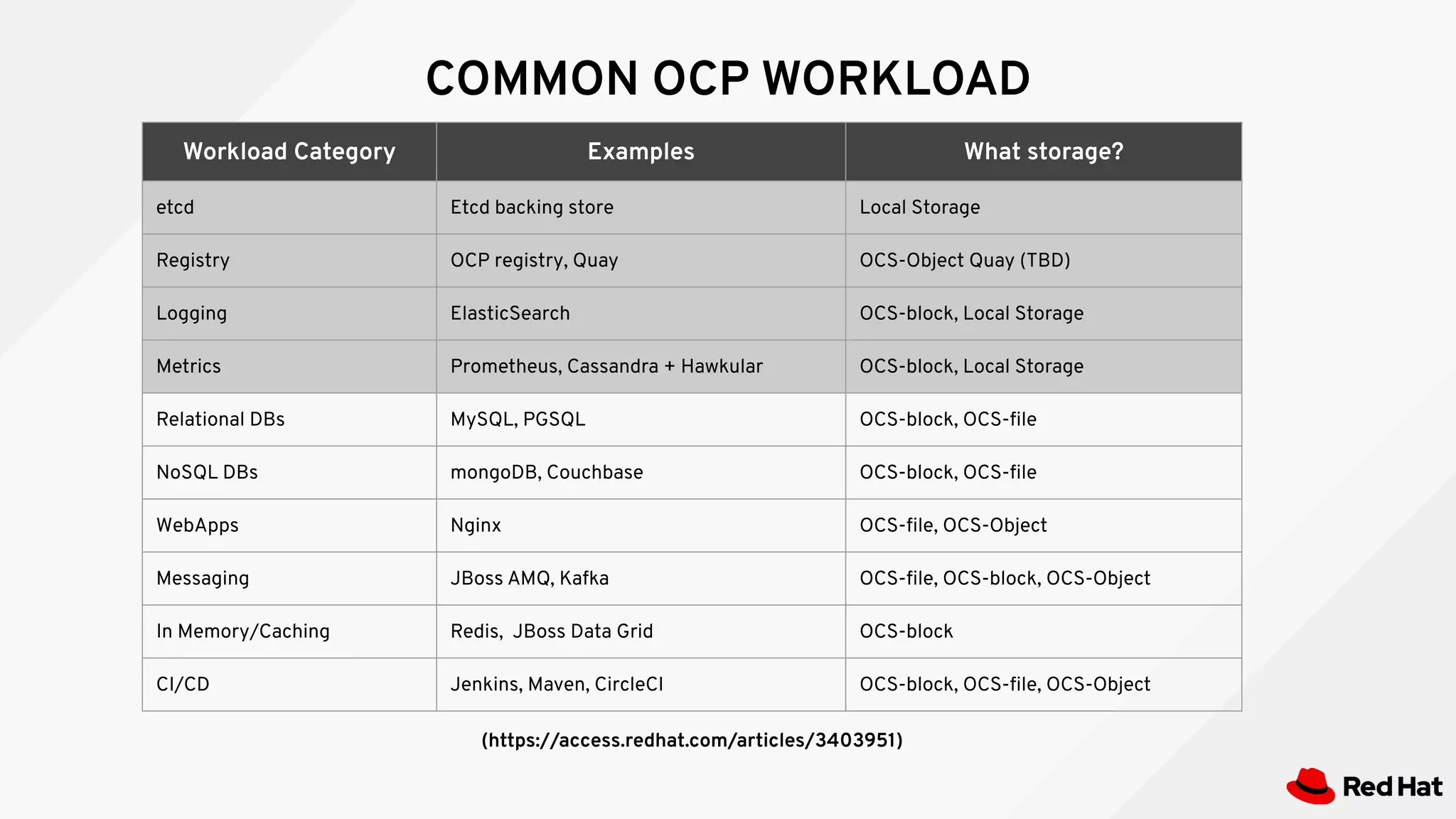

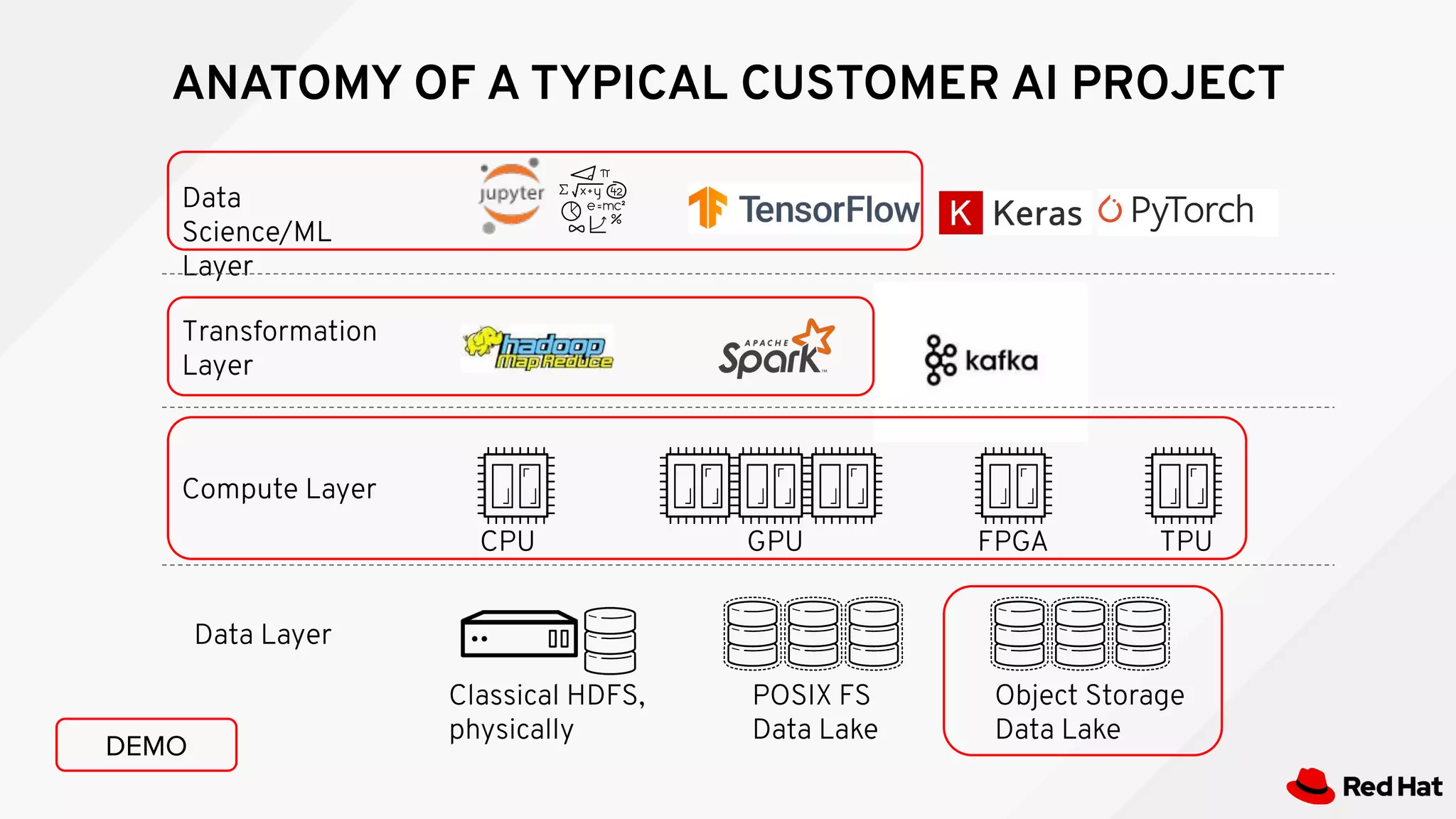

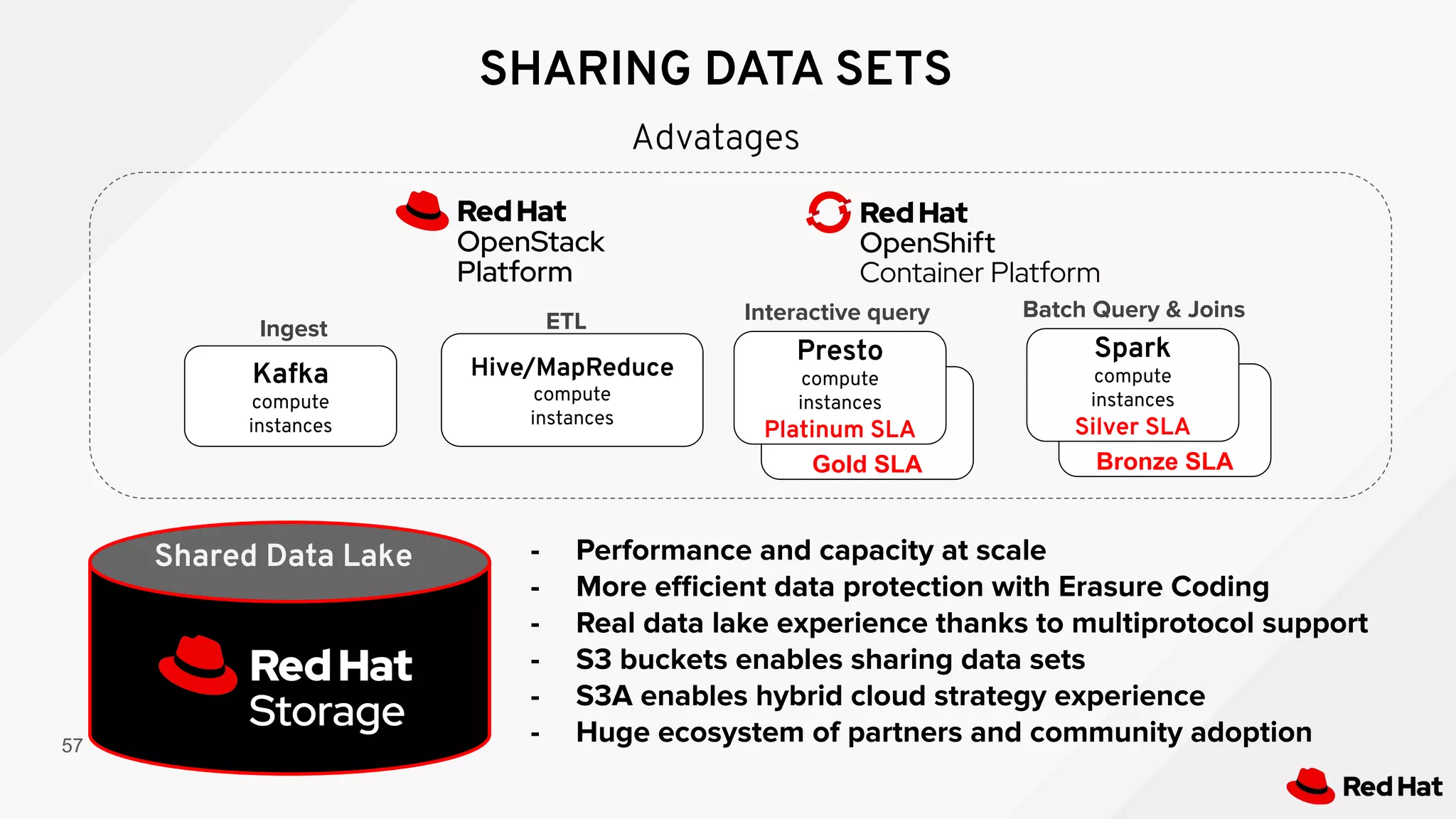

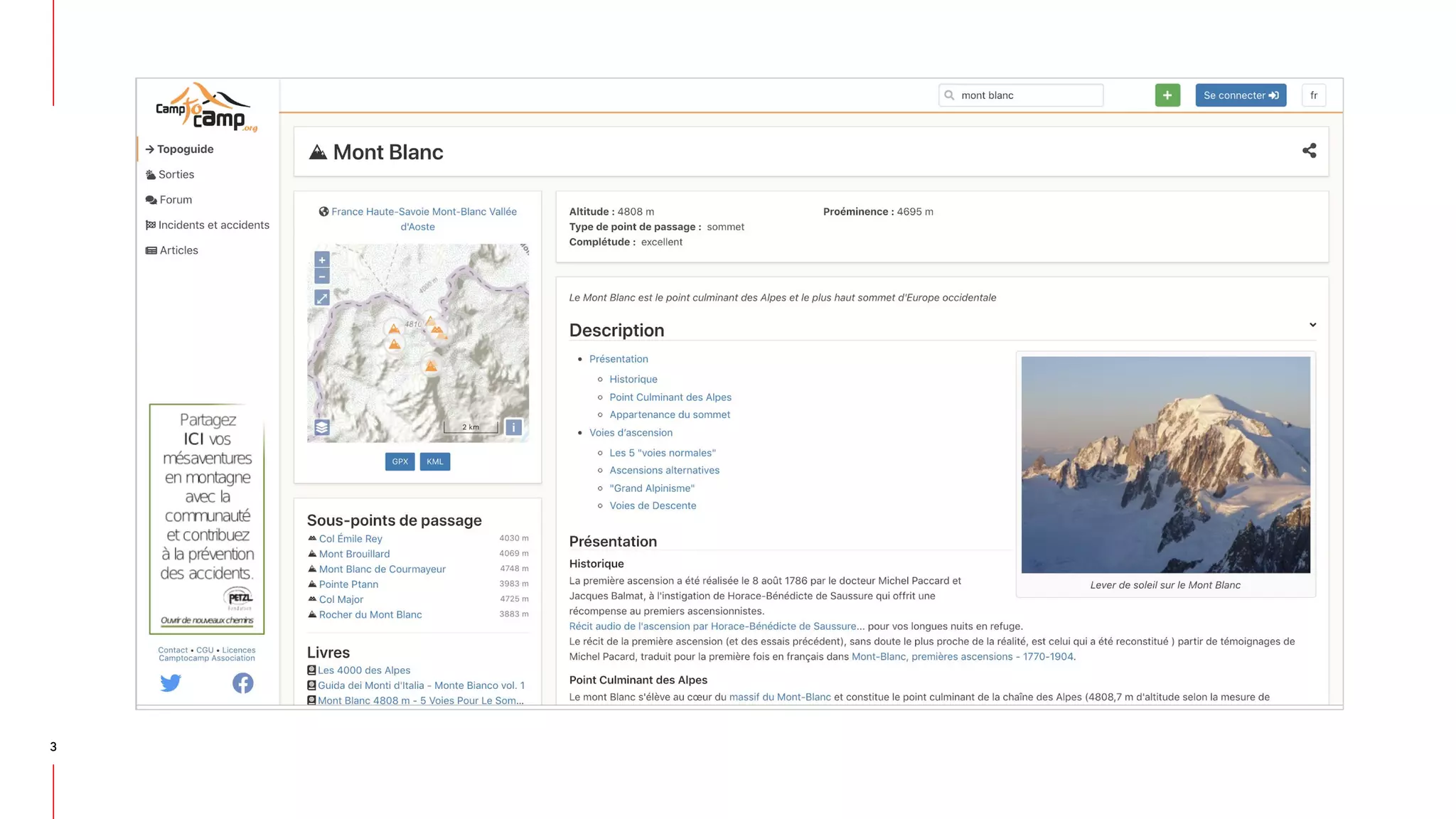

Operators automate the deployment, management, and operation of applications on Kubernetes clusters. They take over tasks such as application configuration, automated upgrades, and monitoring across the application lifecycle. The demo showed how an Operator can deploy Kafka on OpenShift with far less manual configuration than hand-written Kubernetes manifests. Rook provides storage services such as Ceph on OpenShift, giving stateful applications block storage, object storage, and a distributed file system. It offers high scalability and availability with support from Red Hat.
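The idea behind an Operator can be sketched as a reconcile loop: compare the state the user declared with the state actually observed, and take one corrective step at a time until the two match. The following is a minimal, in-memory illustration only. `KafkaSpec`, `ClusterState`, `reconcile`, and `run_until_stable` are hypothetical names invented for this sketch, the version string is an arbitrary example, and nothing here talks to a real cluster or reflects how the Operator in the demo is implemented.

```python
from dataclasses import dataclass, field


@dataclass
class KafkaSpec:
    """Desired state, as a user might declare it (hypothetical fields)."""
    replicas: int
    version: str


@dataclass
class ClusterState:
    """Observed state of the cluster (in-memory stand-in, not a real API)."""
    replicas: int = 0
    version: str = ""
    log: list = field(default_factory=list)


def reconcile(desired: KafkaSpec, observed: ClusterState) -> bool:
    """Take one step toward the desired state; return True if anything changed."""
    if observed.version != desired.version:
        observed.log.append(f"upgrade {observed.version or 'none'} -> {desired.version}")
        observed.version = desired.version
        return True
    if observed.replicas < desired.replicas:
        observed.replicas += 1
        observed.log.append(f"scale up to {observed.replicas}")
        return True
    if observed.replicas > desired.replicas:
        observed.replicas -= 1
        observed.log.append(f"scale down to {observed.replicas}")
        return True
    return False  # observed state already matches the declaration


def run_until_stable(desired: KafkaSpec, observed: ClusterState, max_steps: int = 100) -> int:
    """Call reconcile repeatedly until it reports no change; return the step count."""
    steps = 0
    while steps < max_steps and reconcile(desired, observed):
        steps += 1
    return steps


if __name__ == "__main__":
    state = ClusterState()
    run_until_stable(KafkaSpec(replicas=3, version="2.3.0"), state)
    for entry in state.log:
        print(entry)
```

The user only edits the declaration (`KafkaSpec` here); the loop decides which actions are needed, which is the manual work the slides below complain about.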

![Introduction to Operators

Generally Available

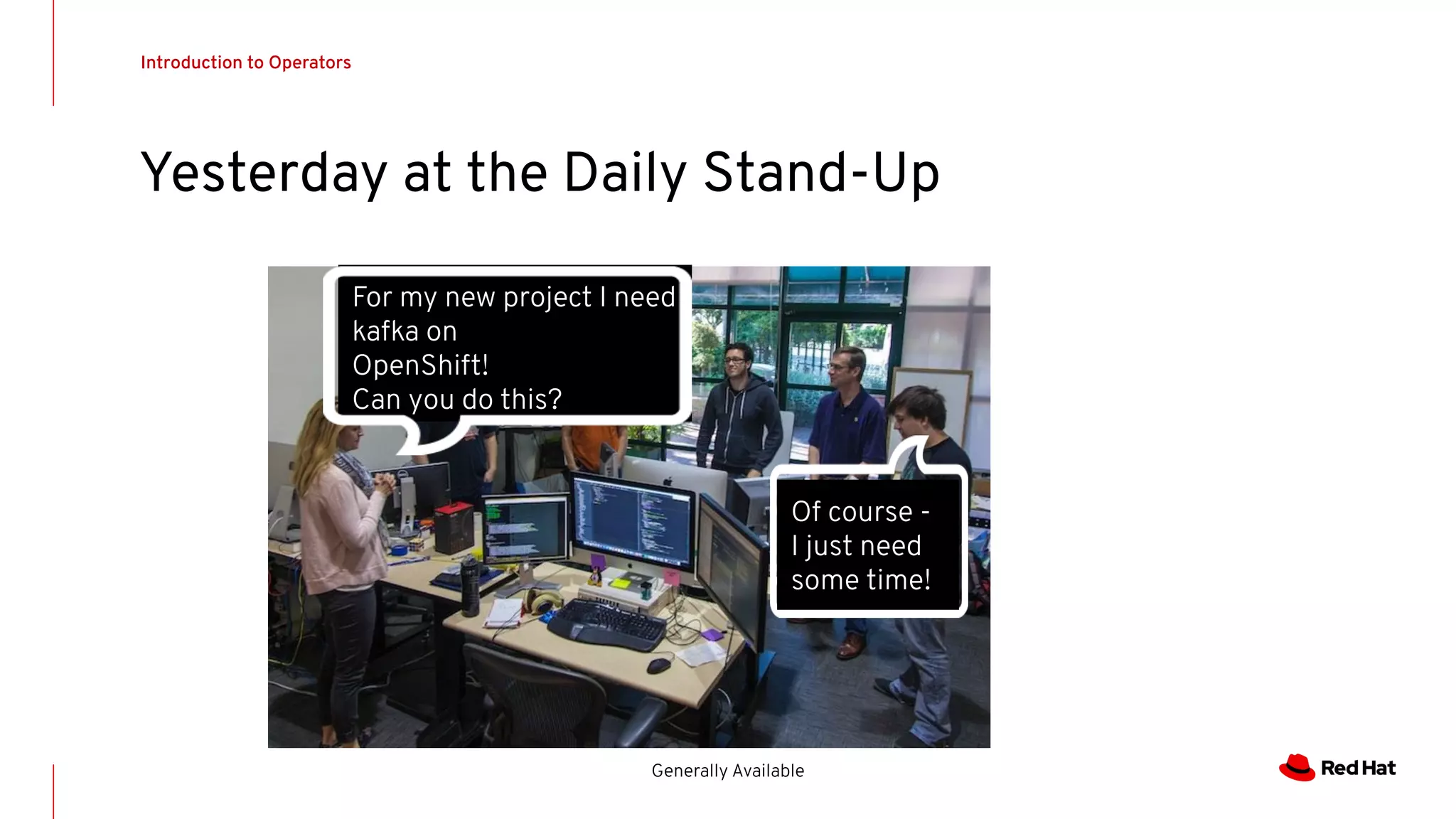

Spending hours bouncing back and forth in yml

apiVersion: v1

kind: Service

metadata:

name: kafka-service

spec:

selector:

app: kafka-app

ports:

- protocol: TCP

port: 80

targetPort: 8000

[root@ocp ~]# oc create -f kafka.yml

Error on line 1: v1 does not exist

[root@ocp ~]# oc create -f kafka.yml

Error on line 24: syntax error

[root@ocp ~]# oc create -f kafka.yml

Error on line 26: syntax error

[root@ocp ~]# oc create -f kafka.yml

Success ->

[root@ocp ~]#](https://image.slidesharecdn.com/meetup03101-191004125852/75/Meetup-Openshift-Geneva-03-10-9-2048.jpg)

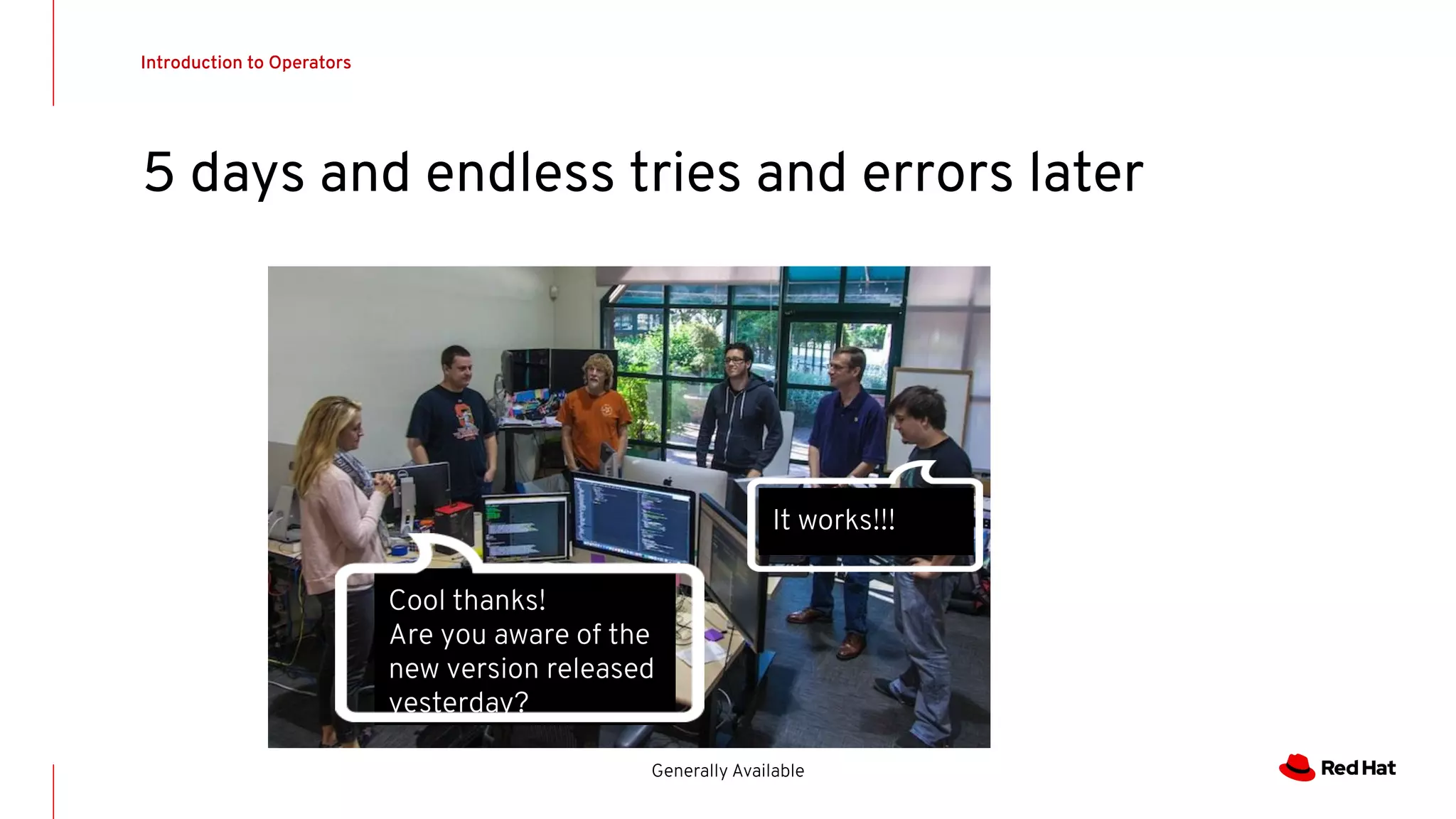

![Introduction to Operators

Generally Available

bouncing back and forth in yaml - AGAIN???

apiVersion: v1

kind: Service

metadata:

name:

example-service

spec:

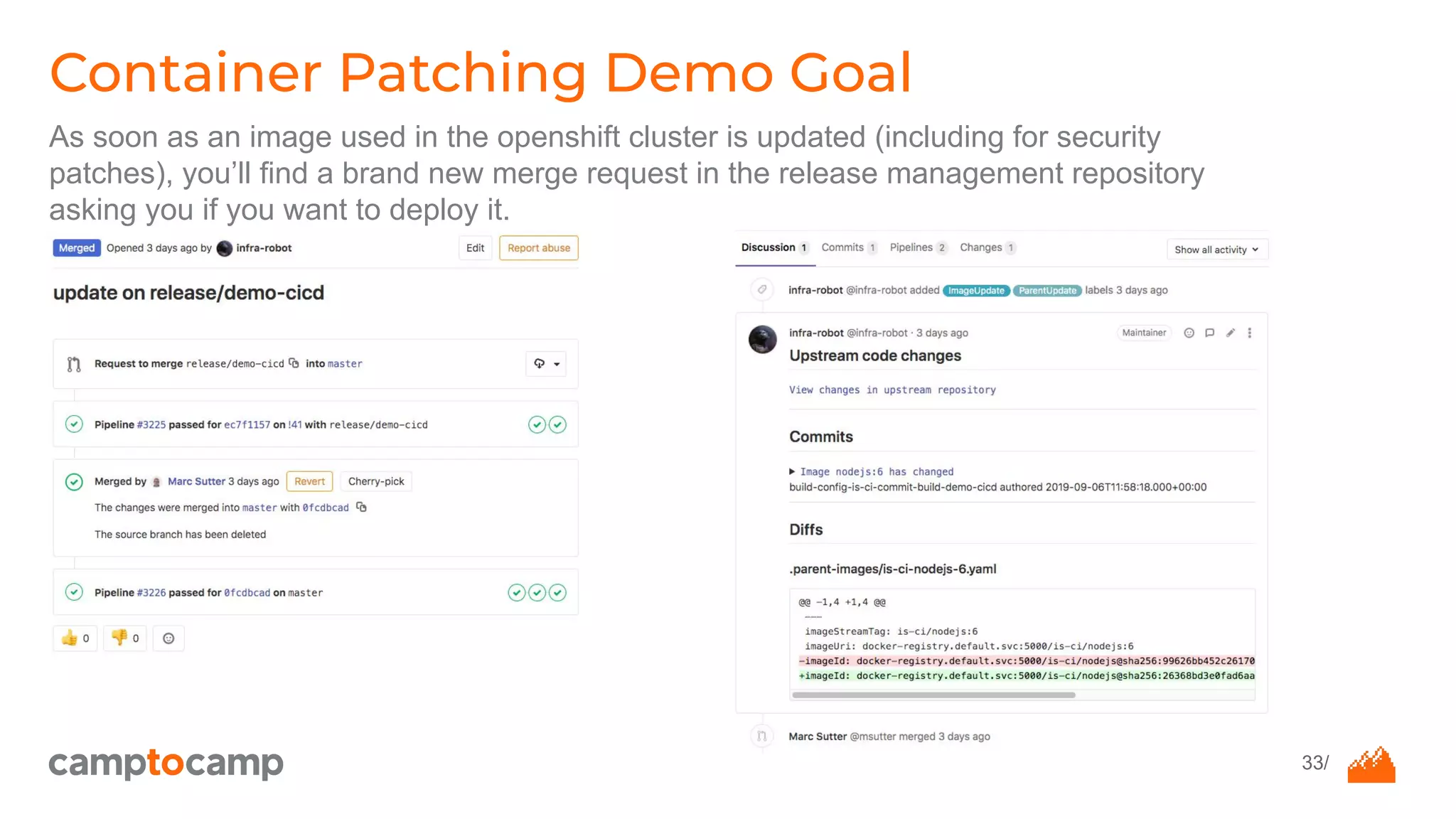

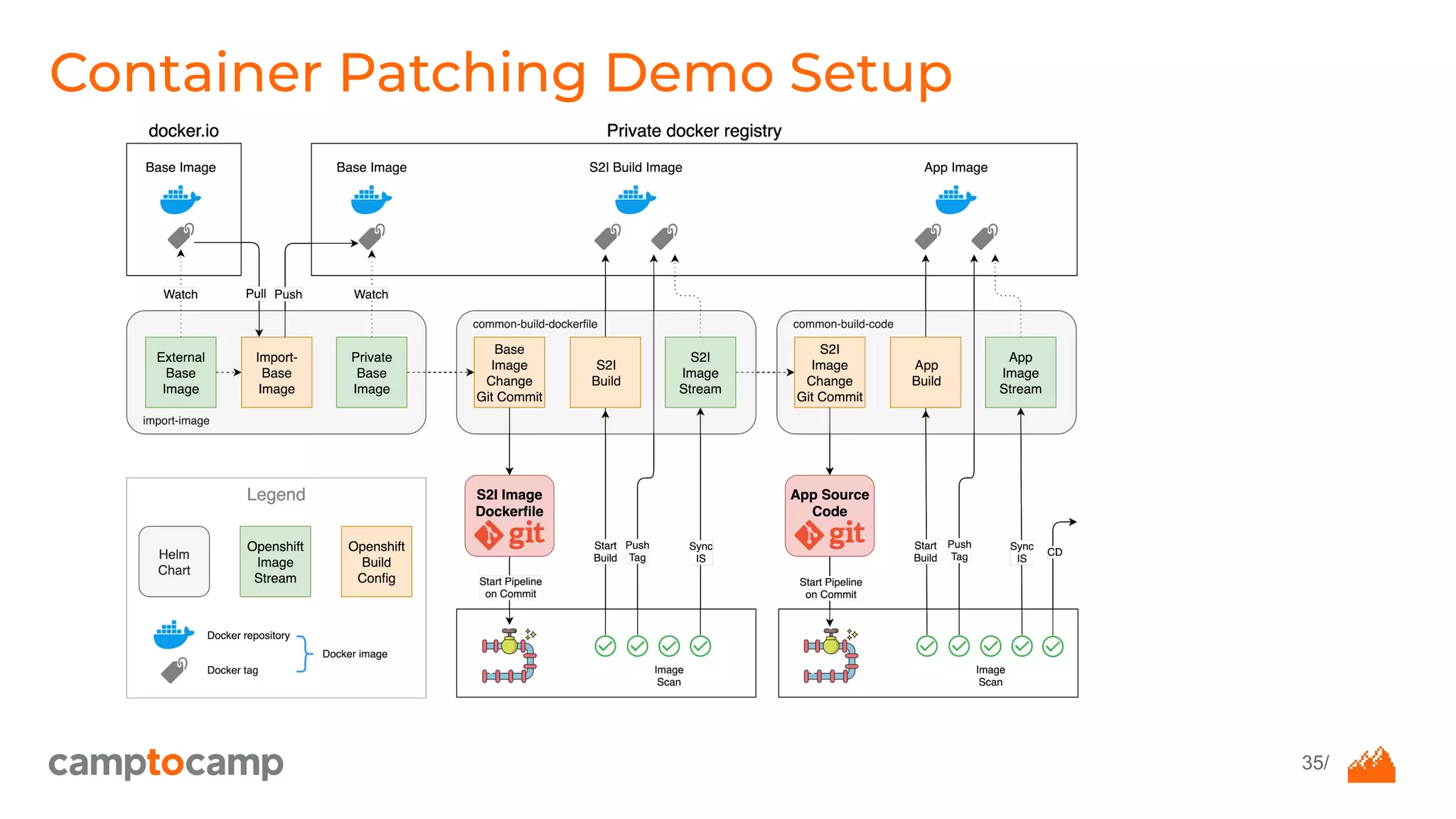

selector:

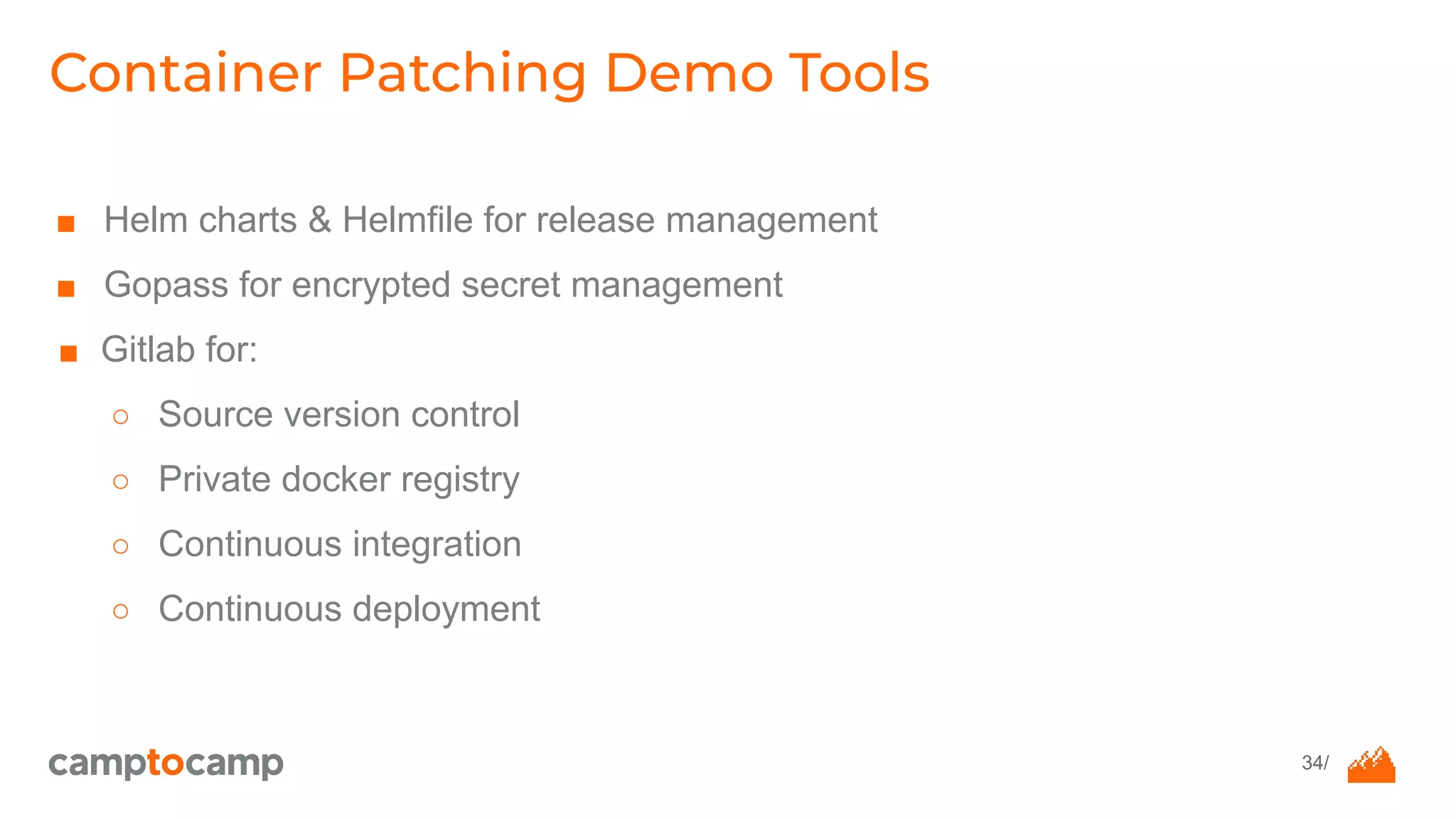

app: example-app

ports:

- protocol: TCP

port: 80

targetPort: 8000

[root@ocp ~]# oc create -f kafkav2.yml

Error on line 1: v1 does not exist

[root@ocp ~]# oc create -f kafkav2.yml

Error on line 24: syntax error

[root@ocp ~]# oc create -f kafkav2.yml

Error on line 26: syntax error

[root@ocp ~]# oc create -f kafkav2.yml

Success ->

[root@ocp ~]#](https://image.slidesharecdn.com/meetup03101-191004125852/75/Meetup-Openshift-Geneva-03-10-12-2048.jpg)