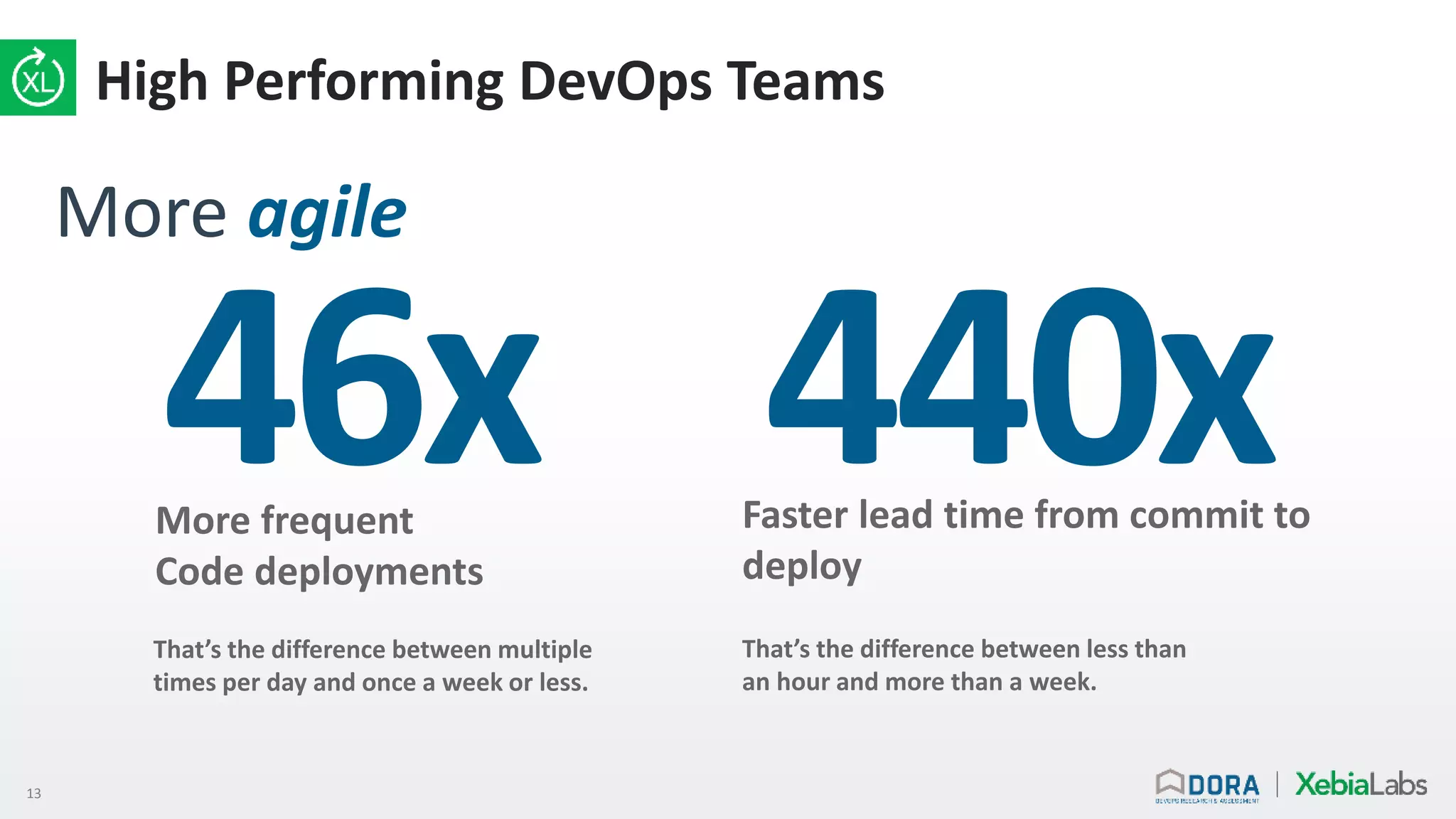

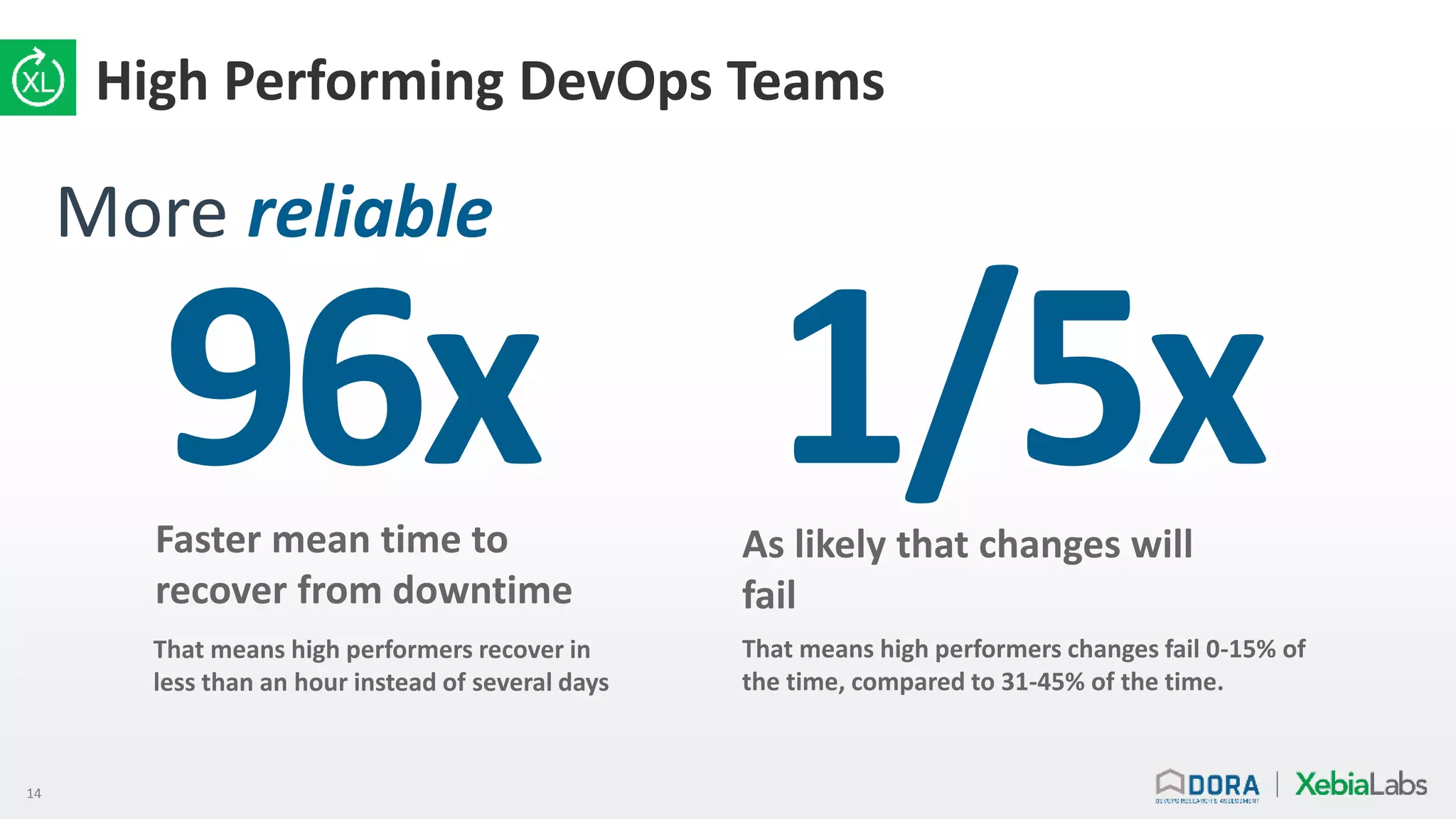

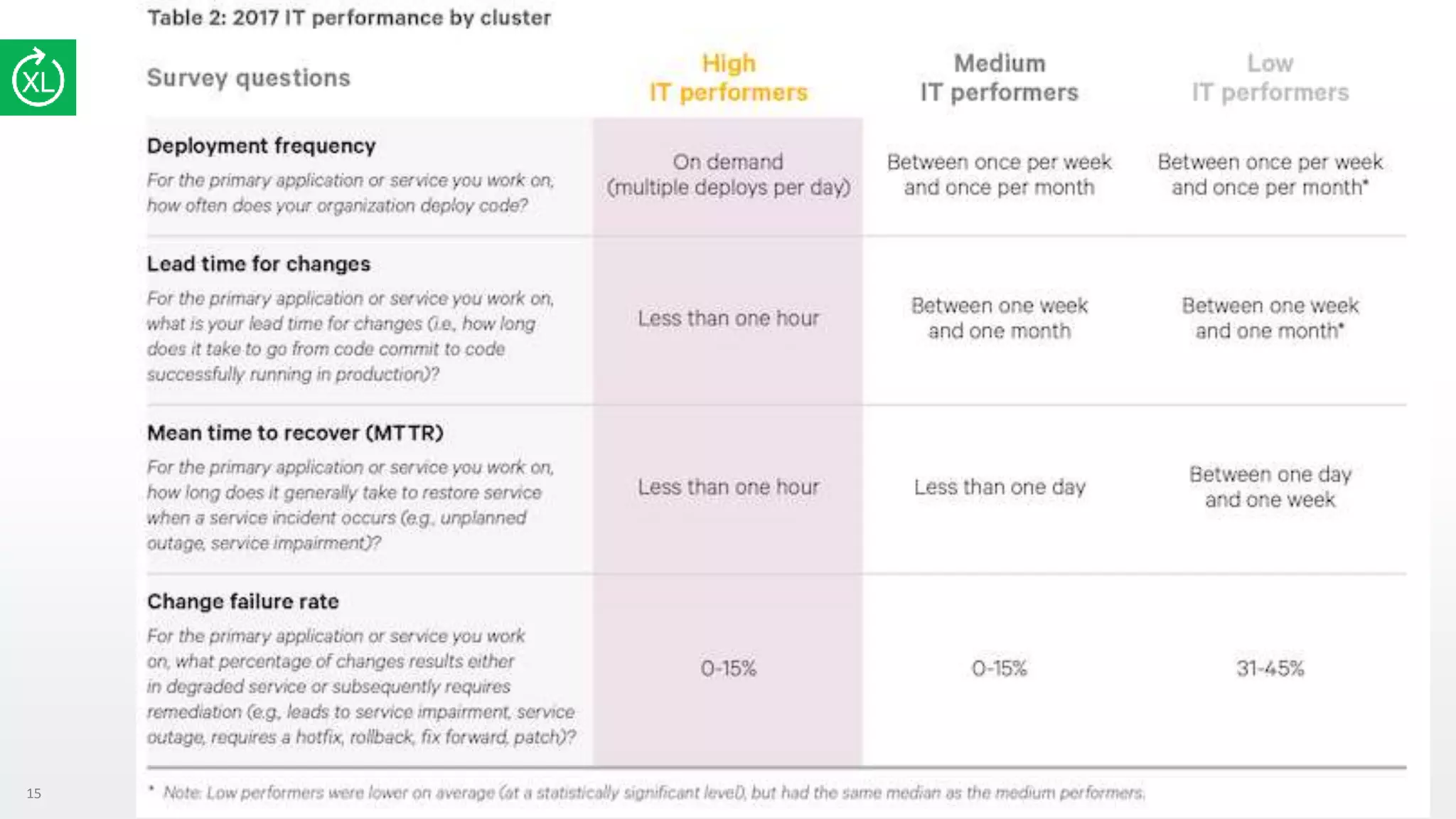

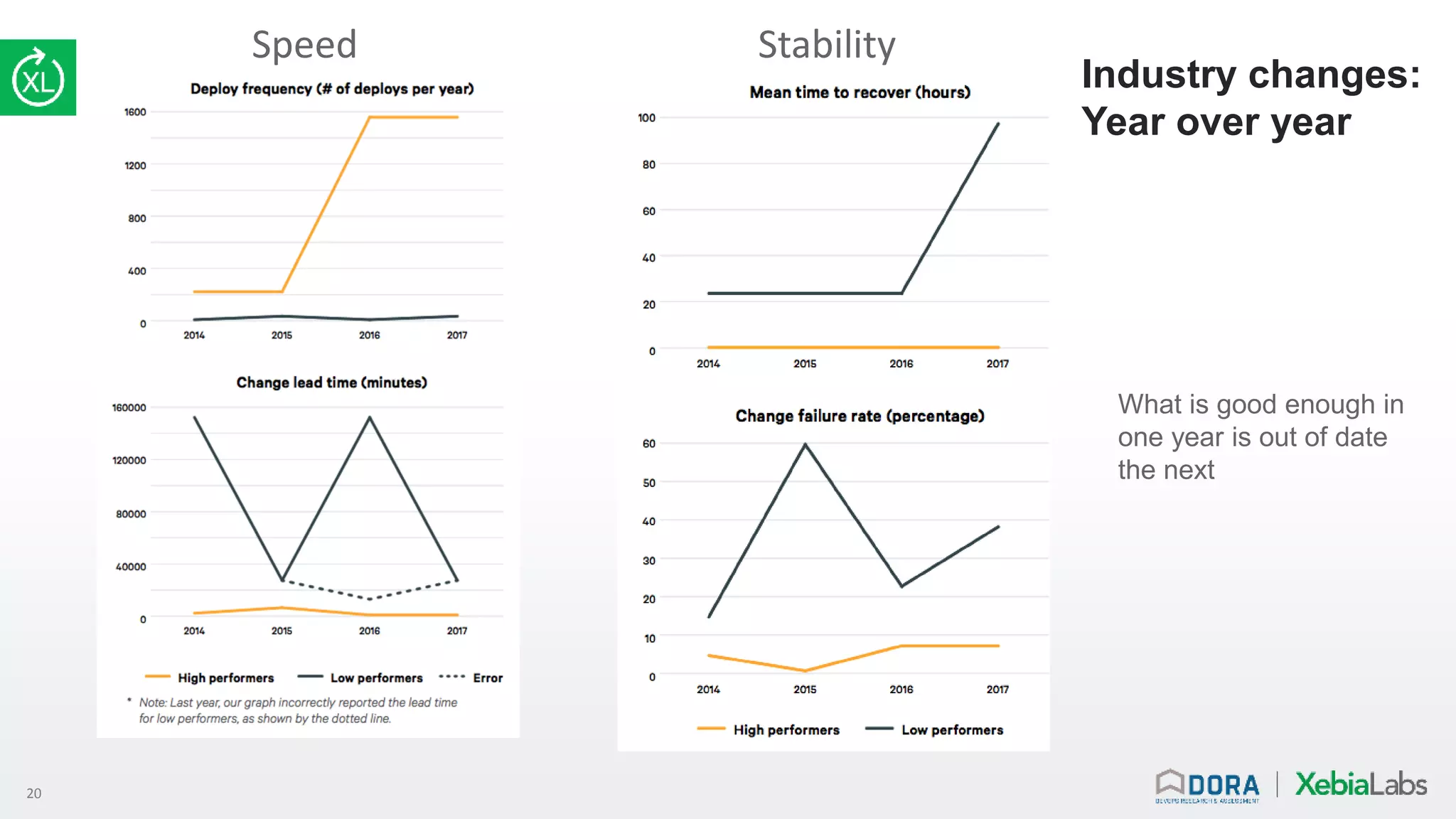

This webinar covers how to measure software delivery performance and how to improve it. It outlines common mistakes, such as measuring lines of code, velocity, and utilization, and recommends measuring outcomes instead: deploy frequency, lead time, mean time to recover from outages, and change fail rate. Maturity models are criticized for not accounting for constant change in the industry. The research presented shows that high-performing teams achieve significantly better outcomes. The presentation identifies key capabilities to improve in the areas of technology, process, measurement, and culture. It encourages leaders to start measuring outcomes, identify constraints, and iteratively improve capabilities to accelerate their performance journey.
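
As a rough illustration of the four outcome measures named above, the following sketch computes them from a list of deployment records. The summary does not give formulas, so everything here is an assumption: the `Deployment` record, its field names, the `delivery_metrics` function, and the choice of median for lead time and mean for recovery time are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean, median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause an outage or need remediation?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def _hours(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def delivery_metrics(deployments: list[Deployment], period_days: int) -> dict:
    """Compute the four outcome measures over a reporting period (assumed definitions)."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    lead_times = [_hours(d.committed_at, d.deployed_at) for d in deployments]
    failures = [d for d in deployments if d.failed]
    # Only failures with a recorded restore time contribute to recovery time.
    recoveries = [
        _hours(d.deployed_at, d.restored_at)
        for d in failures
        if d.restored_at is not None
    ]

    return {
        "deploys_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(lead_times),
        "mean_time_to_recover_hours": mean(recoveries) if recoveries else None,
        "change_fail_rate": len(failures) / len(deployments),
    }
```

Called with a team's deployment history and the length of the reporting period in days, `delivery_metrics` returns one value per measure, which can then be tracked over time to see whether capability improvements are moving the outcomes.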