![$ whois jamespage

[ ceph | ubuntu | debian| openstack | juju | charms ]](https://image.slidesharecdn.com/jamespage-managingcephoperationalcomplexityusingjuju-191105152834/75/Managing-Ceph-operational-complexity-with-Juju-2-2048.jpg)

![Operations

[ Demo ]](https://image.slidesharecdn.com/jamespage-managingcephoperationalcomplexityusingjuju-191105152834/75/Managing-Ceph-operational-complexity-with-Juju-16-2048.jpg)

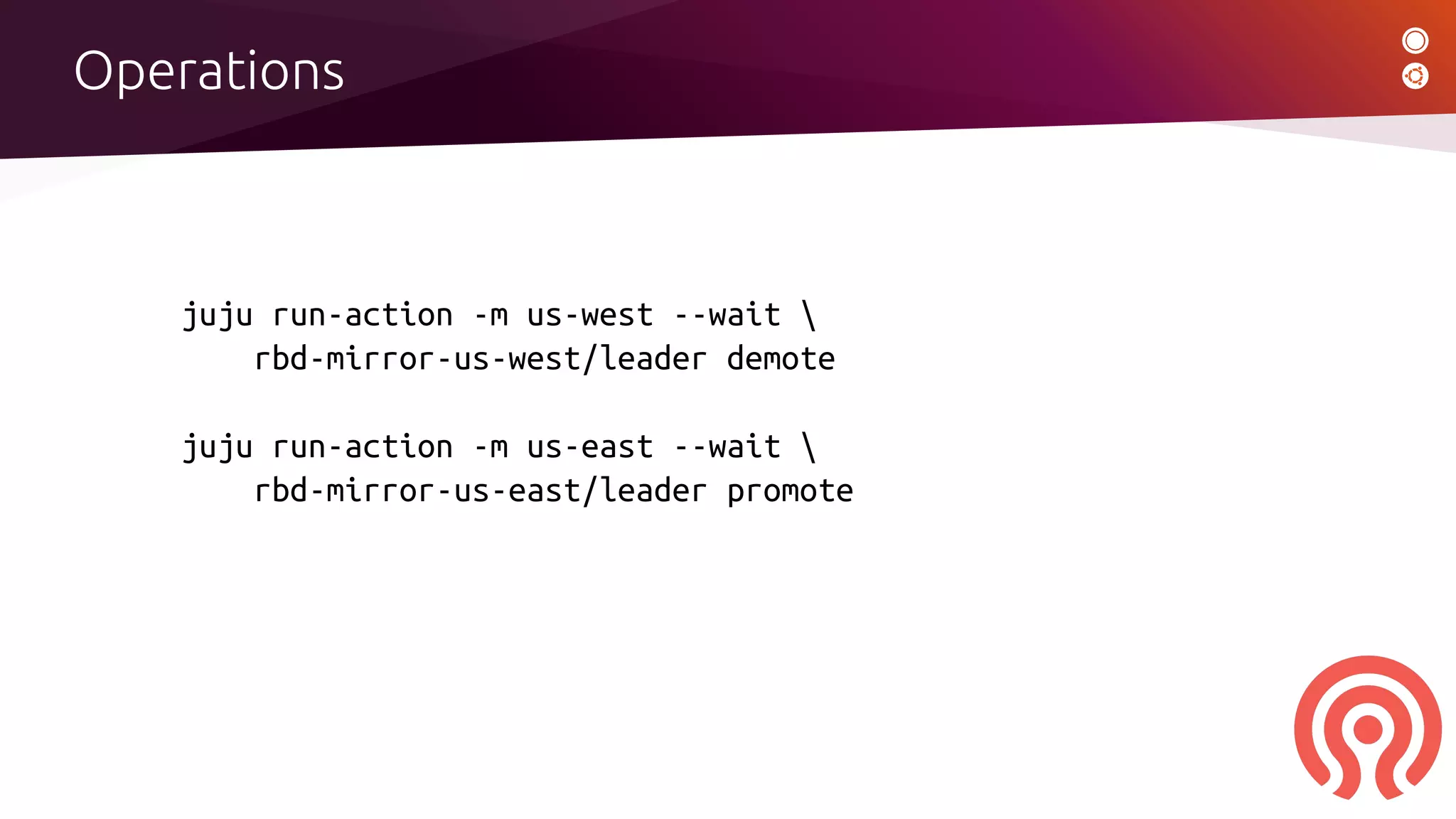

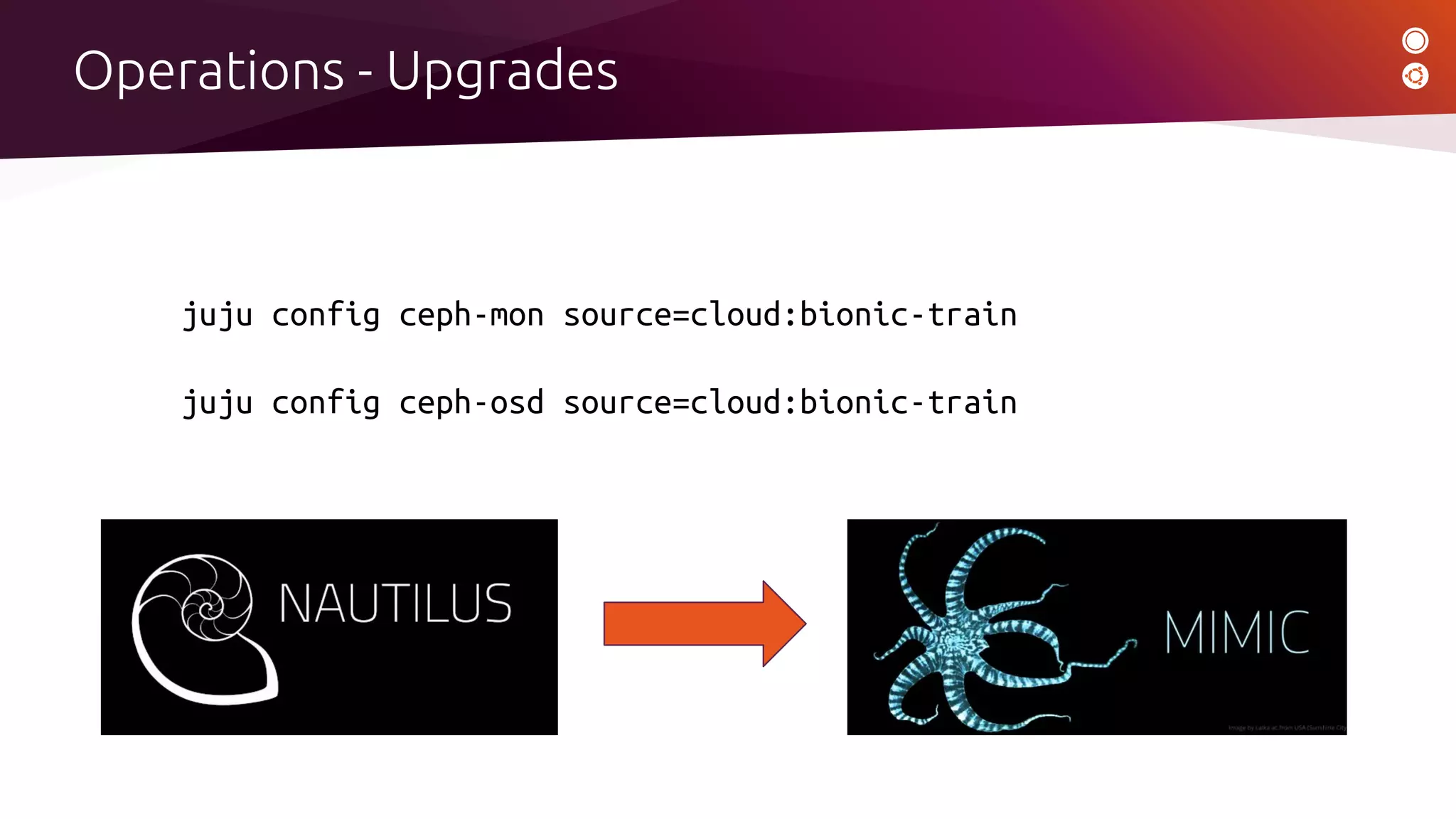

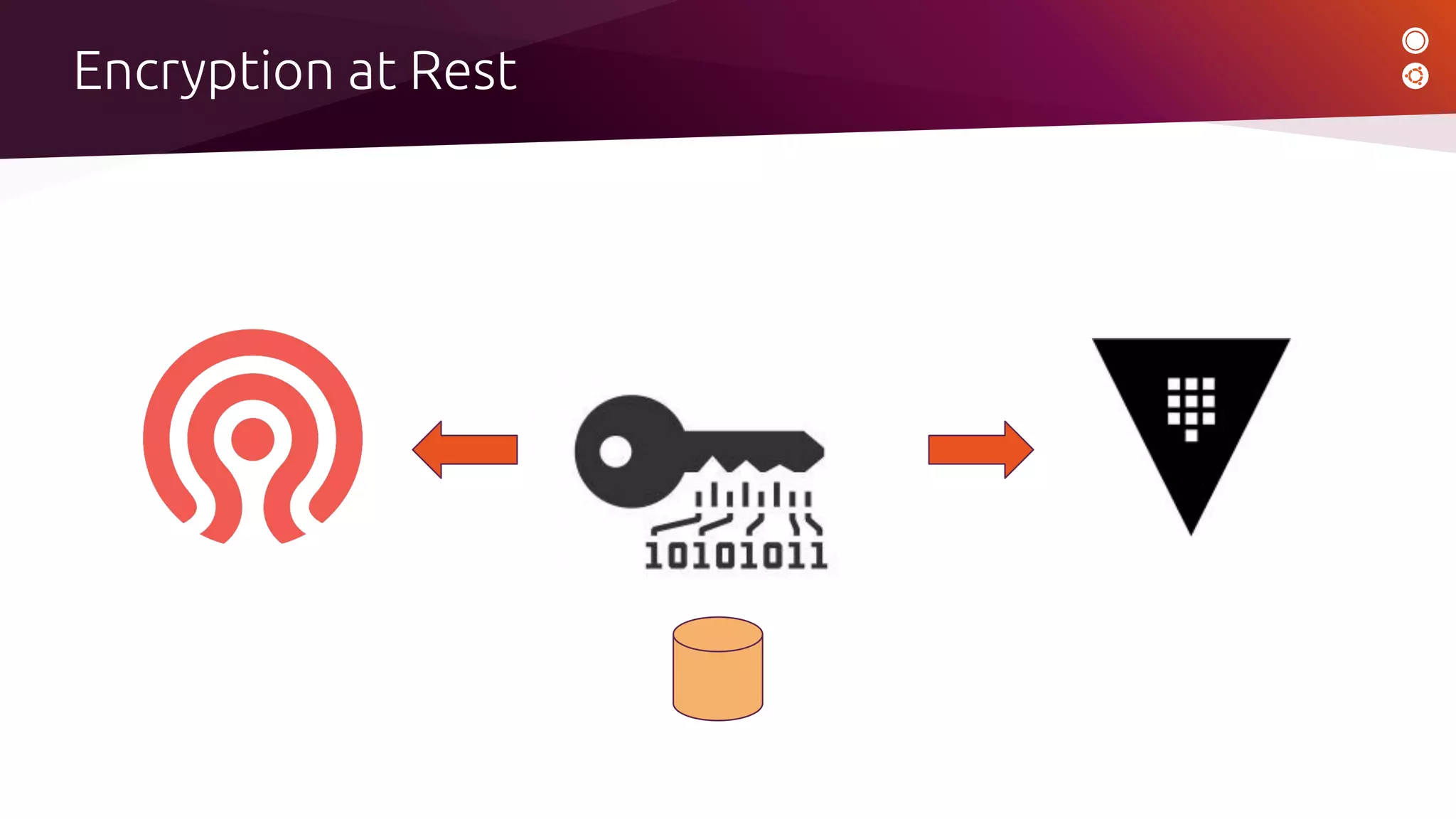

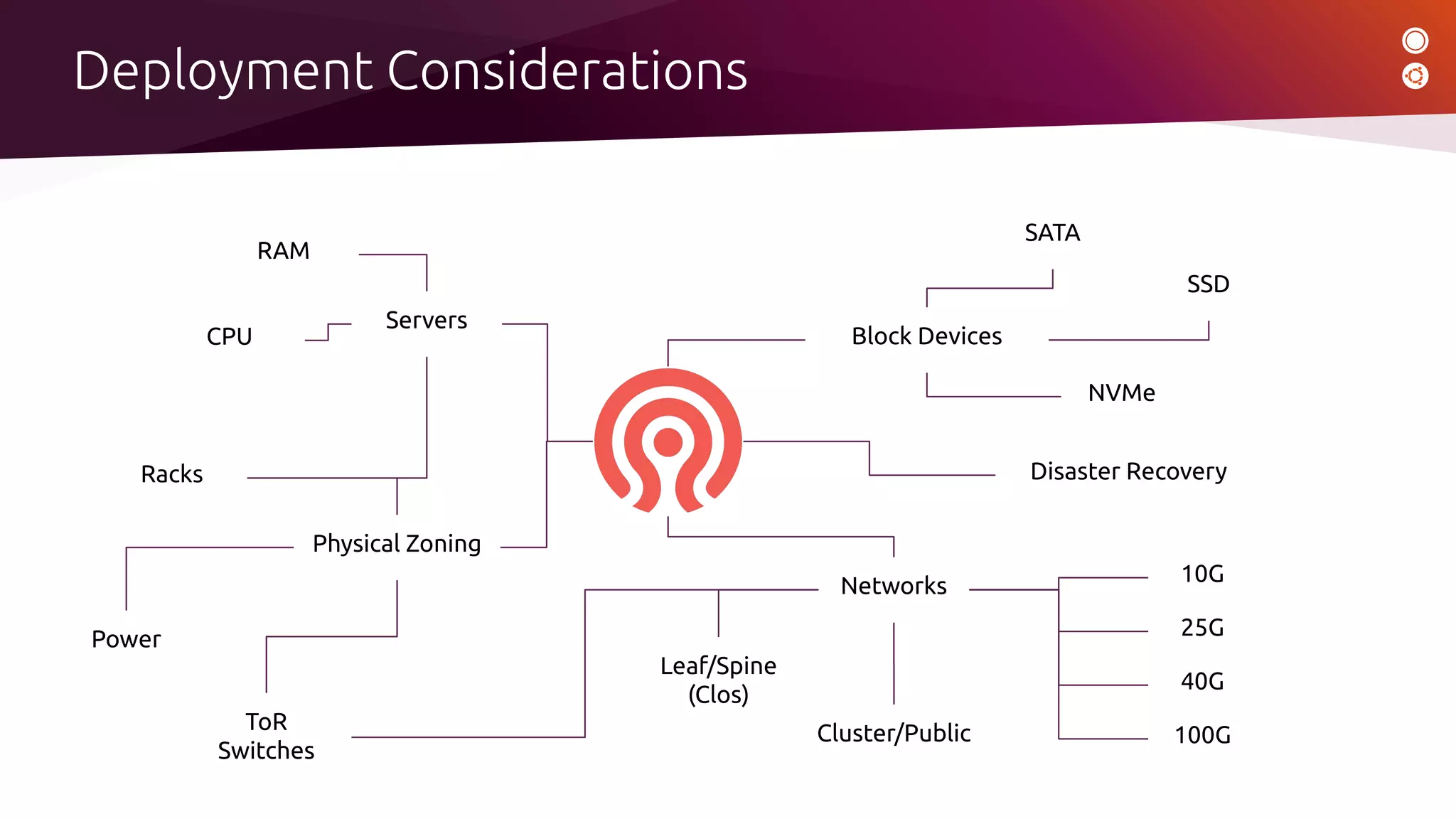

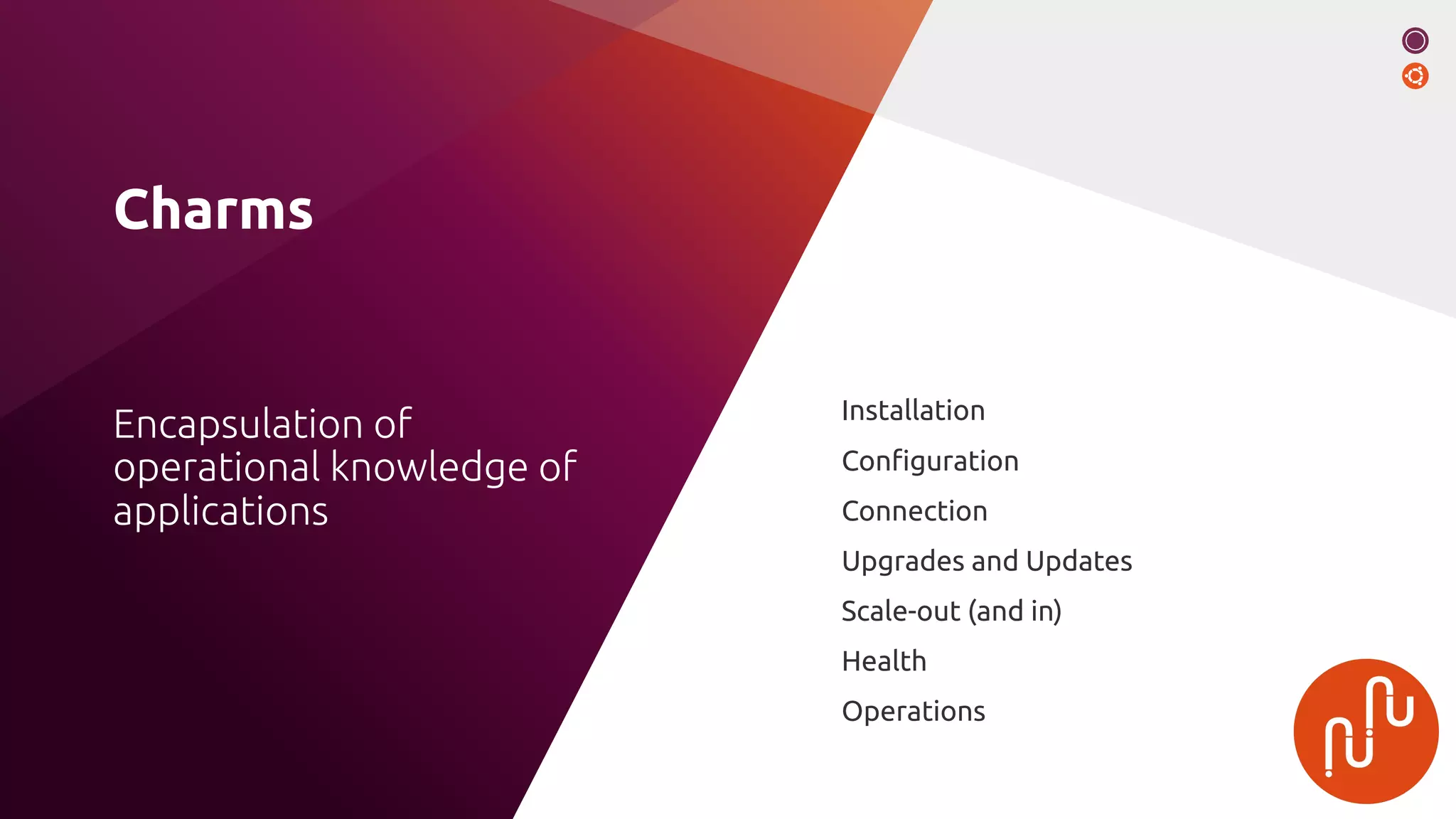

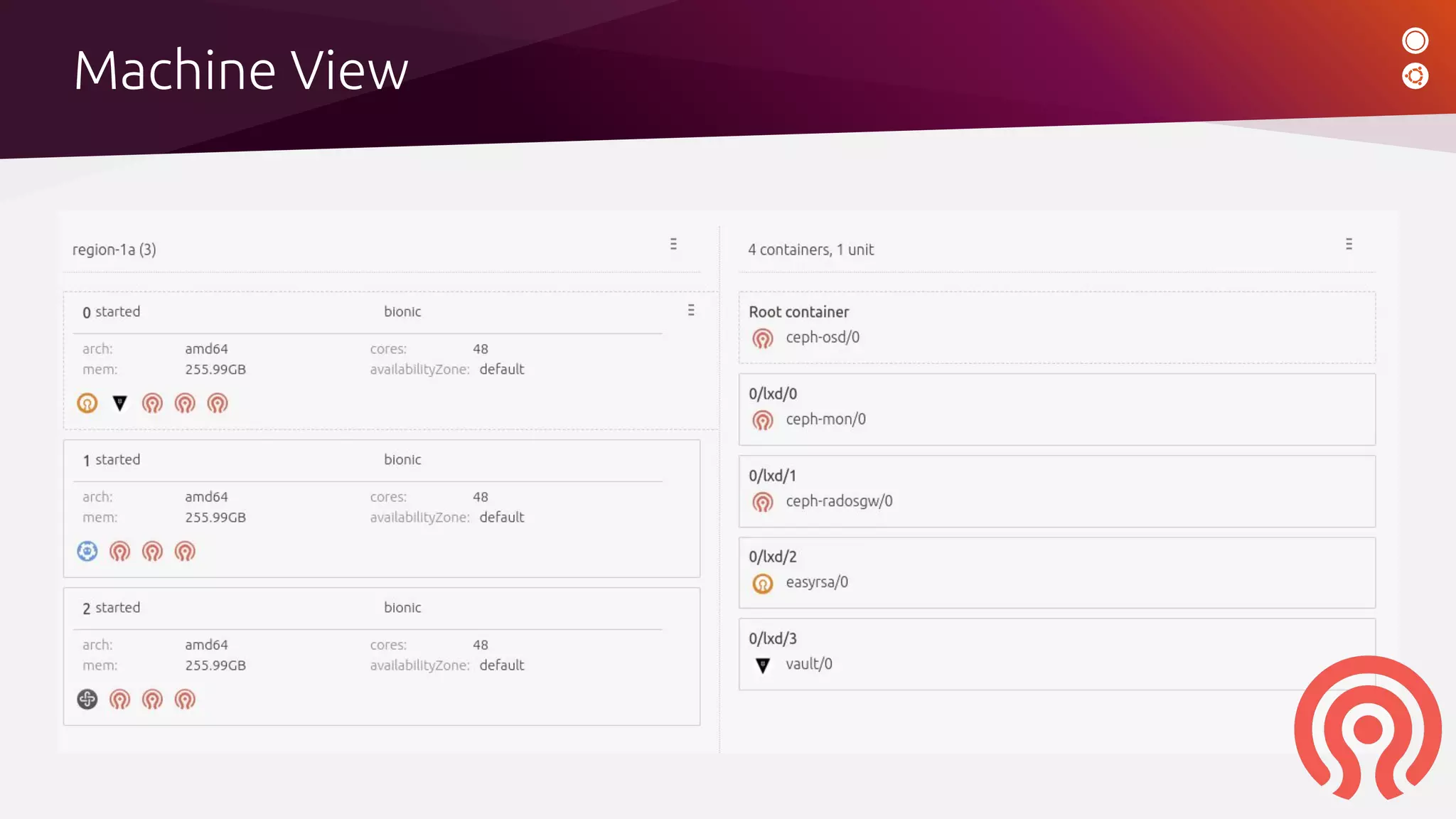

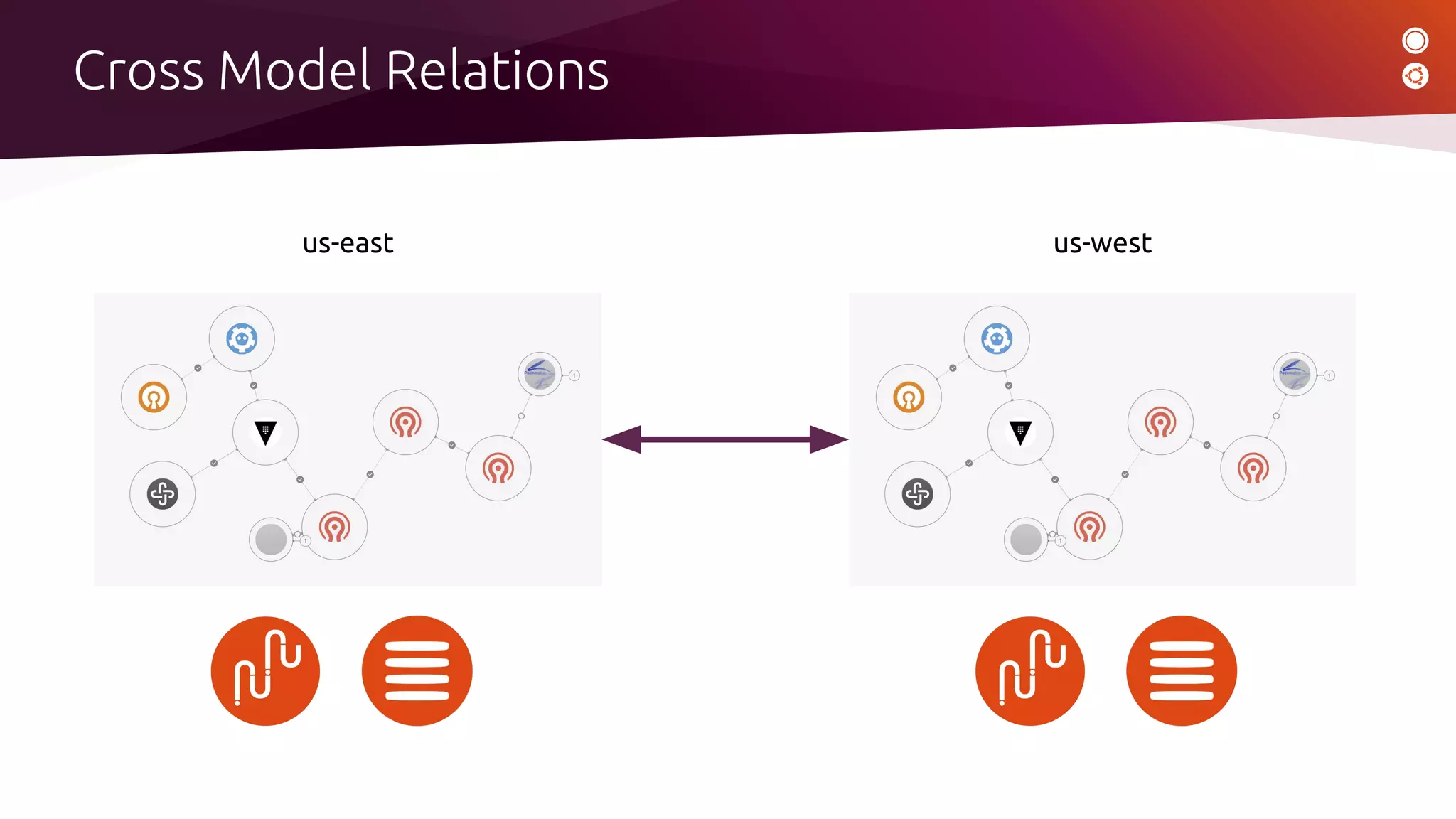

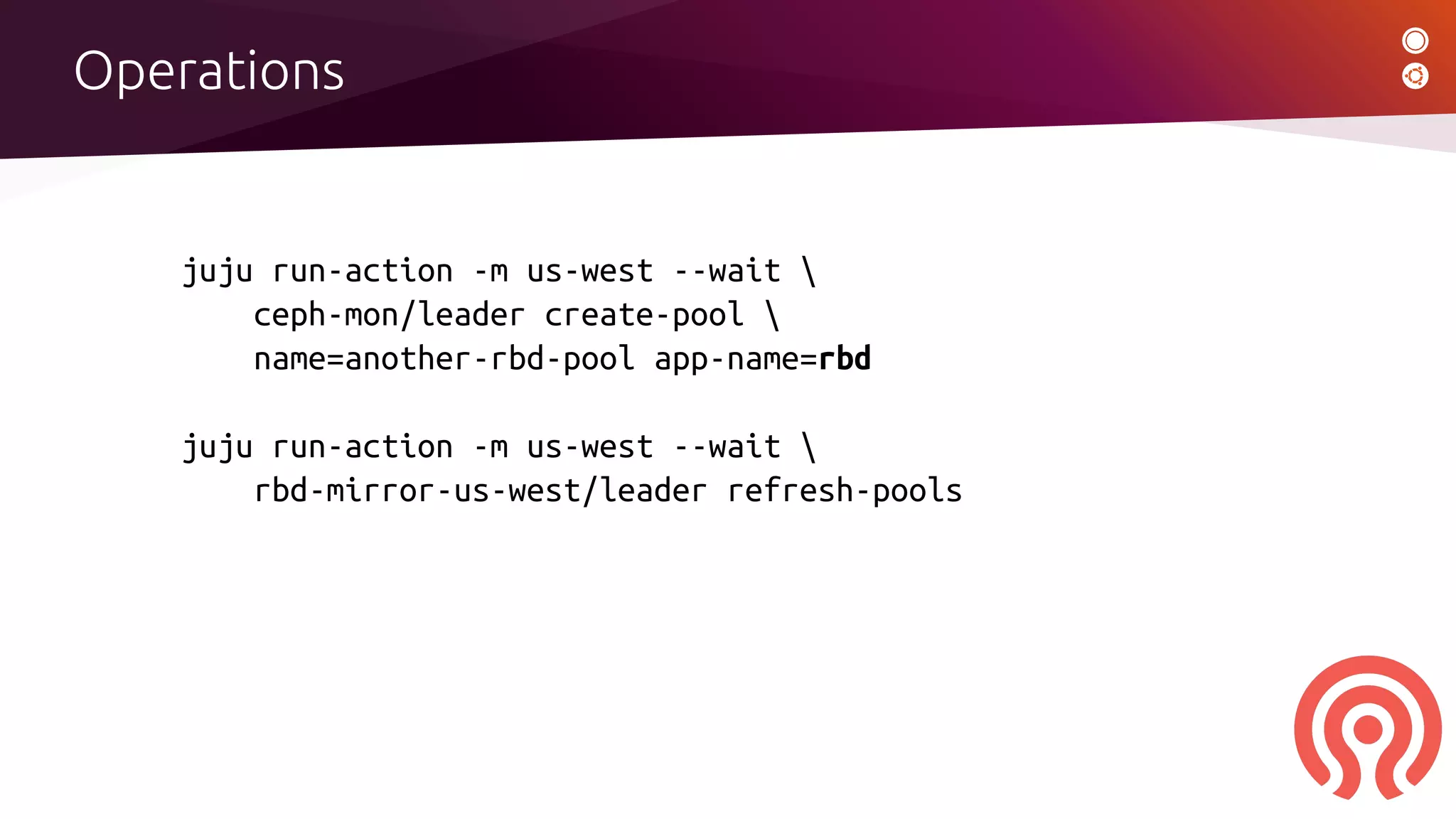

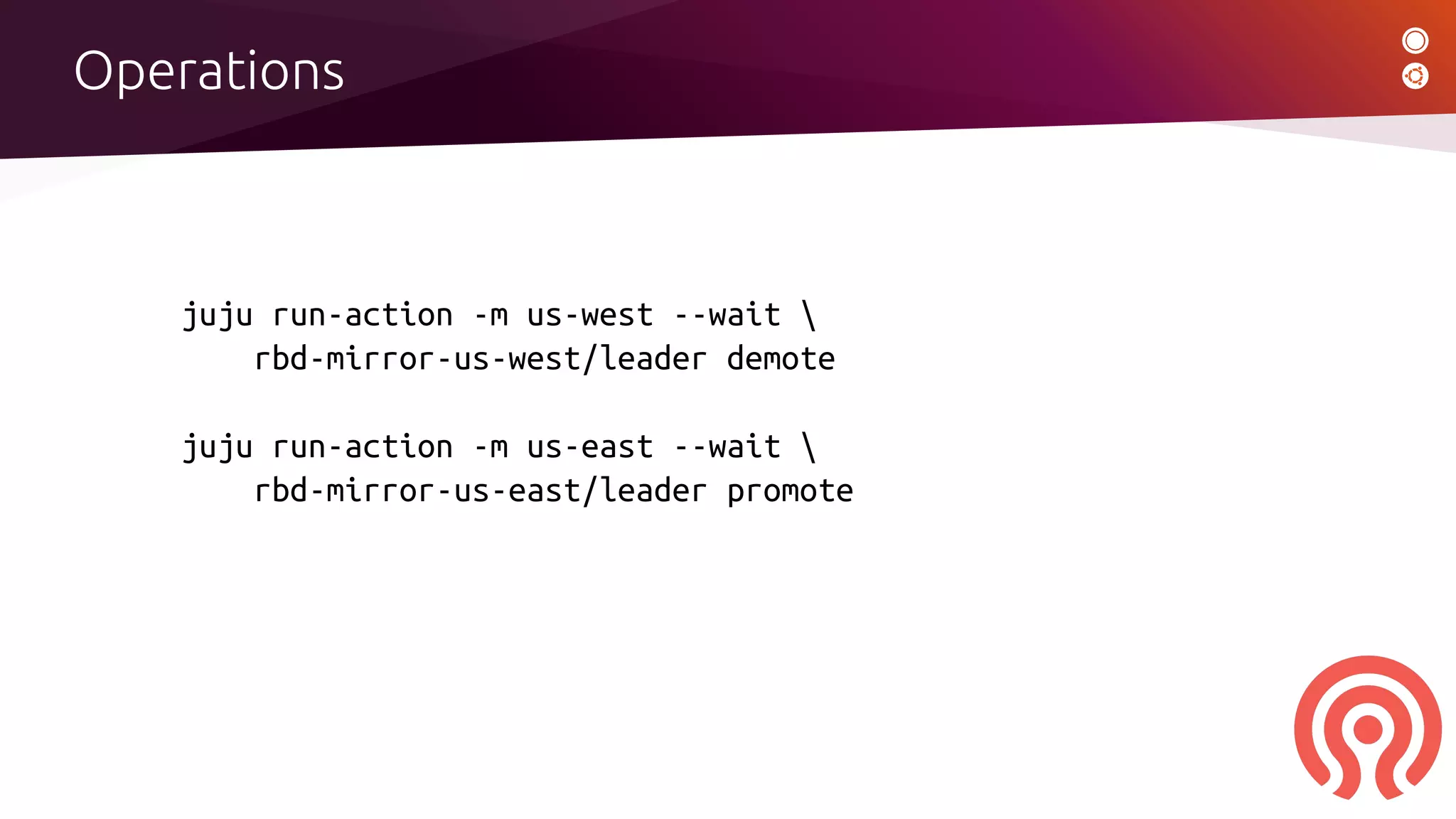

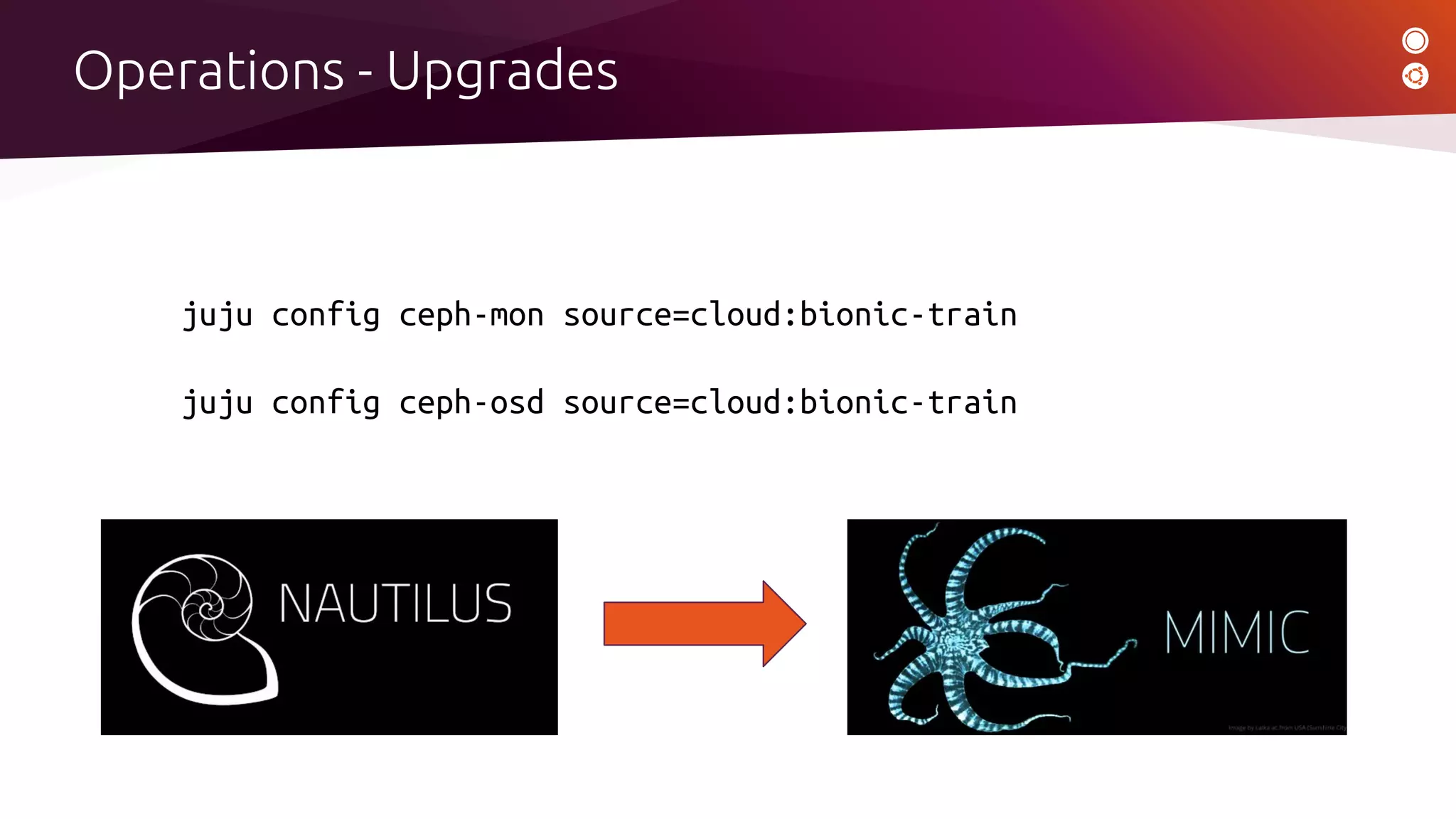

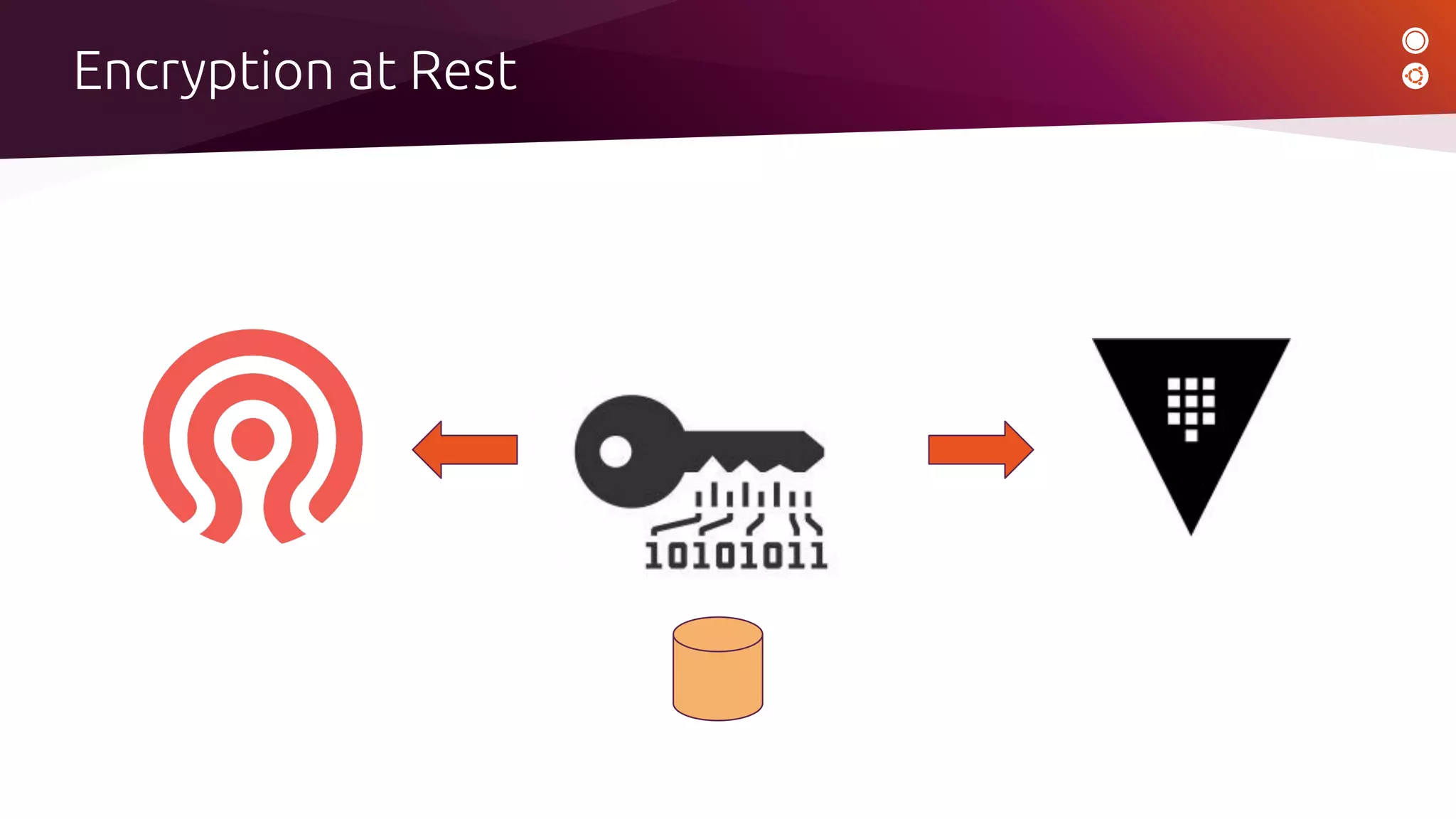

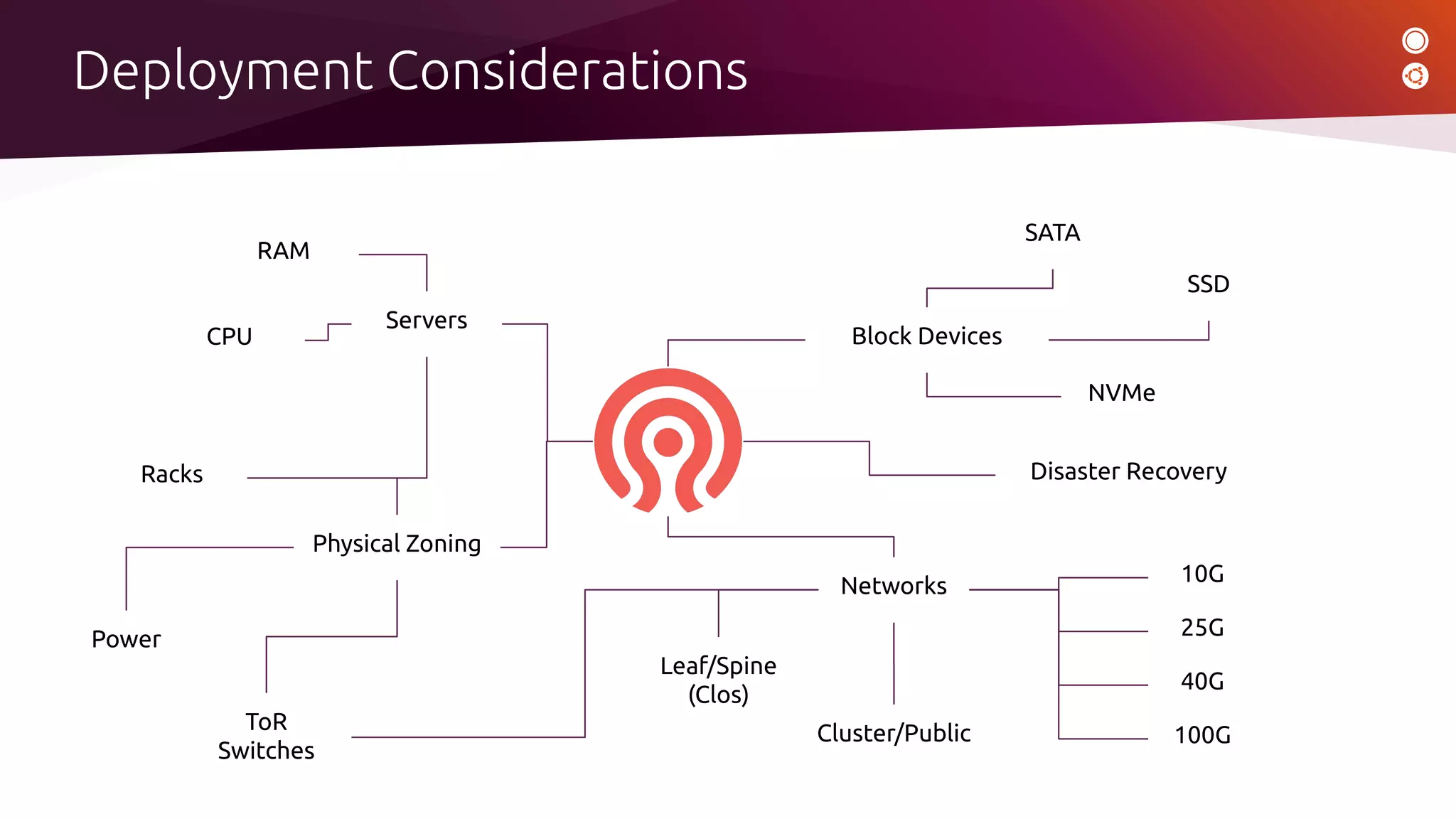

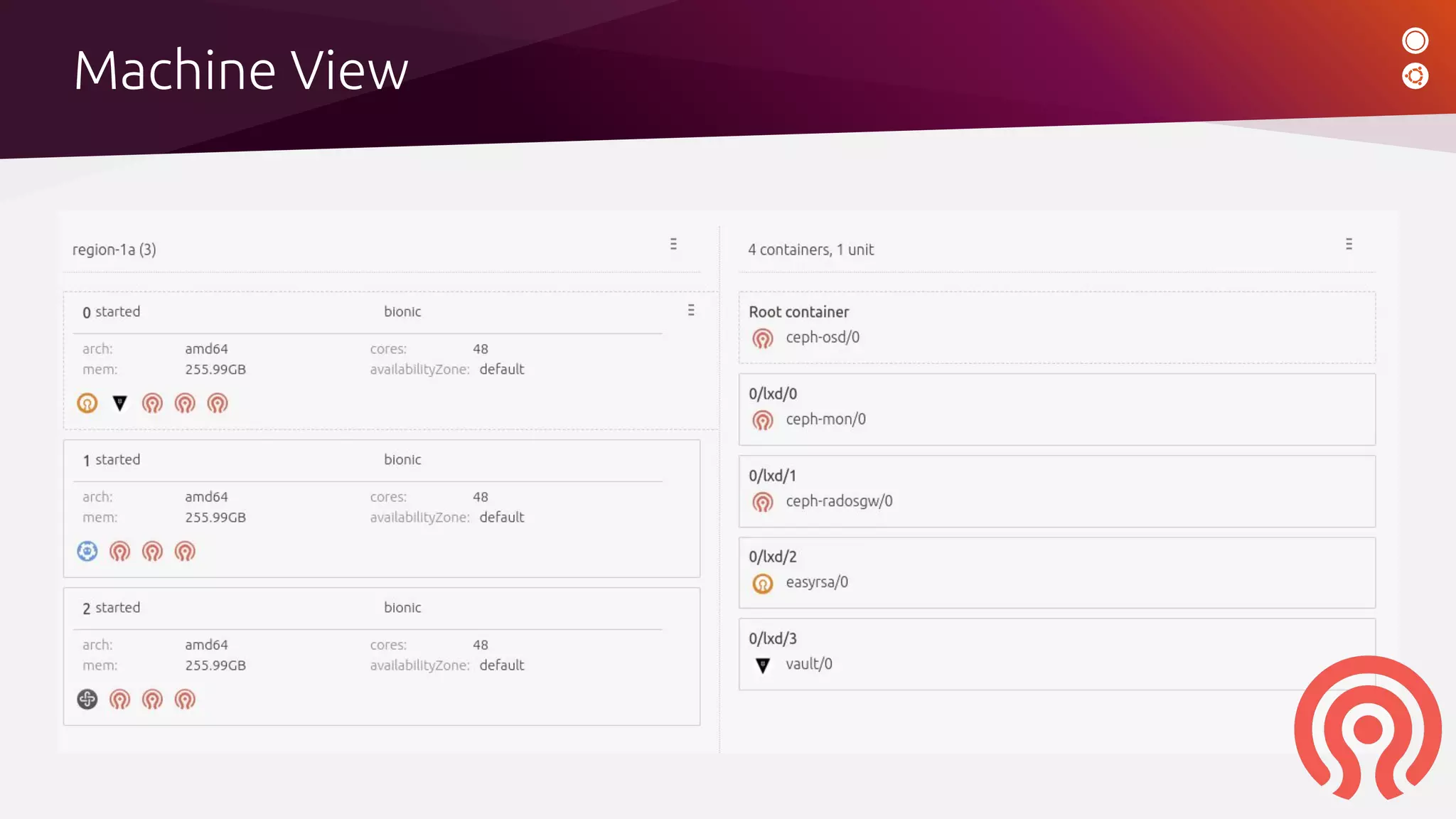

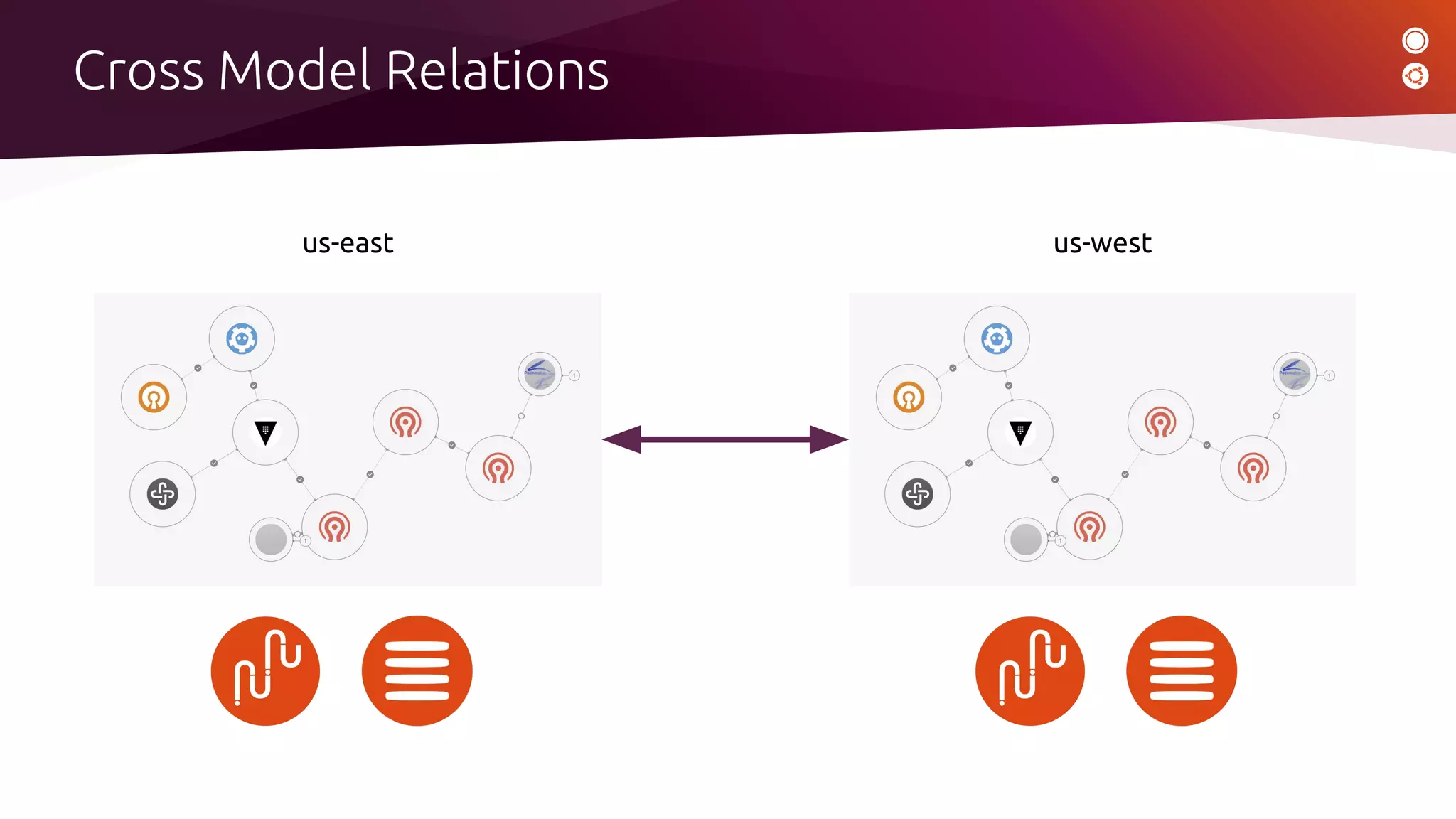

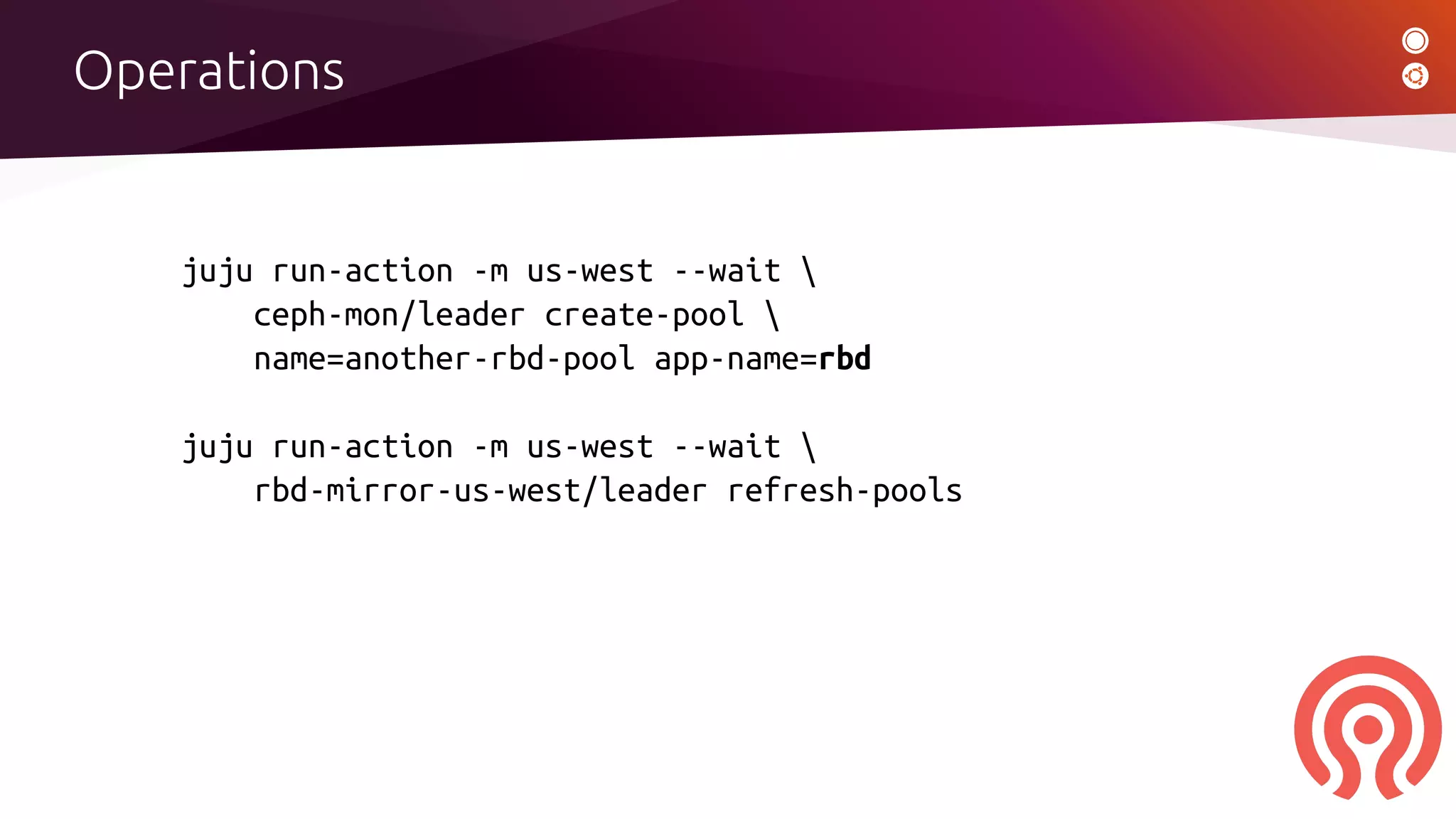

James Page presented on using Juju and charms to manage the operational complexity of Ceph deployments. Juju is a model-driven deployment and operations tool: the operator declares an application model, and Juju deploys Ceph along with related applications such as rbd-mirror, including across multiple data centers. The Ceph charms encapsulate operational knowledge for tasks such as installation, configuration, upgrades, scaling, and health monitoring. Juju lets the operator define the application model and relate applications across models, and it works with MAAS for server provisioning and LXD for containers. Demonstrations showed Juju actions being used for Ceph operations such as creating pools, refreshing mirrors, and upgrading versions across availability zones.
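The deploy, relate, and action workflow summarized above can be sketched as a small Python script that only composes `juju` command lines and prints them; it does not run anything. This is an illustration under stated assumptions, not the commands shown in the talk: it uses Juju 2.x command names (`add-relation`, `run-action`; Juju 3.x renamed these to `integrate` and `run`), the `ceph-mon` and `ceph-osd` charms with their `osd-devices` option and `create-pool` action, and hypothetical values for the unit count, device path `/dev/sdb`, and pool name `demo-pool`.

```python
import shlex


def deploy(charm, units=1, config=None):
    """Build a `juju deploy` command for `units` units of a charm."""
    cmd = ["juju", "deploy", "-n", str(units), charm]
    for key, value in (config or {}).items():
        cmd += ["--config", f"{key}={value}"]
    return cmd


def relate(app_a, app_b):
    """Build a `juju add-relation` command (Juju 2.x naming, assumed)."""
    return ["juju", "add-relation", app_a, app_b]


def run_action(unit, action, **params):
    """Build a `juju run-action` command (Juju 2.x naming, assumed)."""
    cmd = ["juju", "run-action", "--wait", unit, action]
    cmd += [f"{key}={value}" for key, value in params.items()]
    return cmd


def plan():
    """Hypothetical minimal model: three monitors, three OSD hosts, one pool."""
    return [
        deploy("ceph-mon", units=3),
        # The device path is a placeholder; real hosts will differ.
        deploy("ceph-osd", units=3, config={"osd-devices": "/dev/sdb"}),
        relate("ceph-osd", "ceph-mon"),
        # Pool name is illustrative only.
        run_action("ceph-mon/0", "create-pool", name="demo-pool"),
    ]


if __name__ == "__main__":
    # Print the commands for review instead of executing them.
    for cmd in plan():
        print(shlex.join(cmd))
```

Keeping the commands as argument lists makes the plan easy to review or to pass to `subprocess.run` later; check each charm's documented options and actions before running any of them against a real model.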
