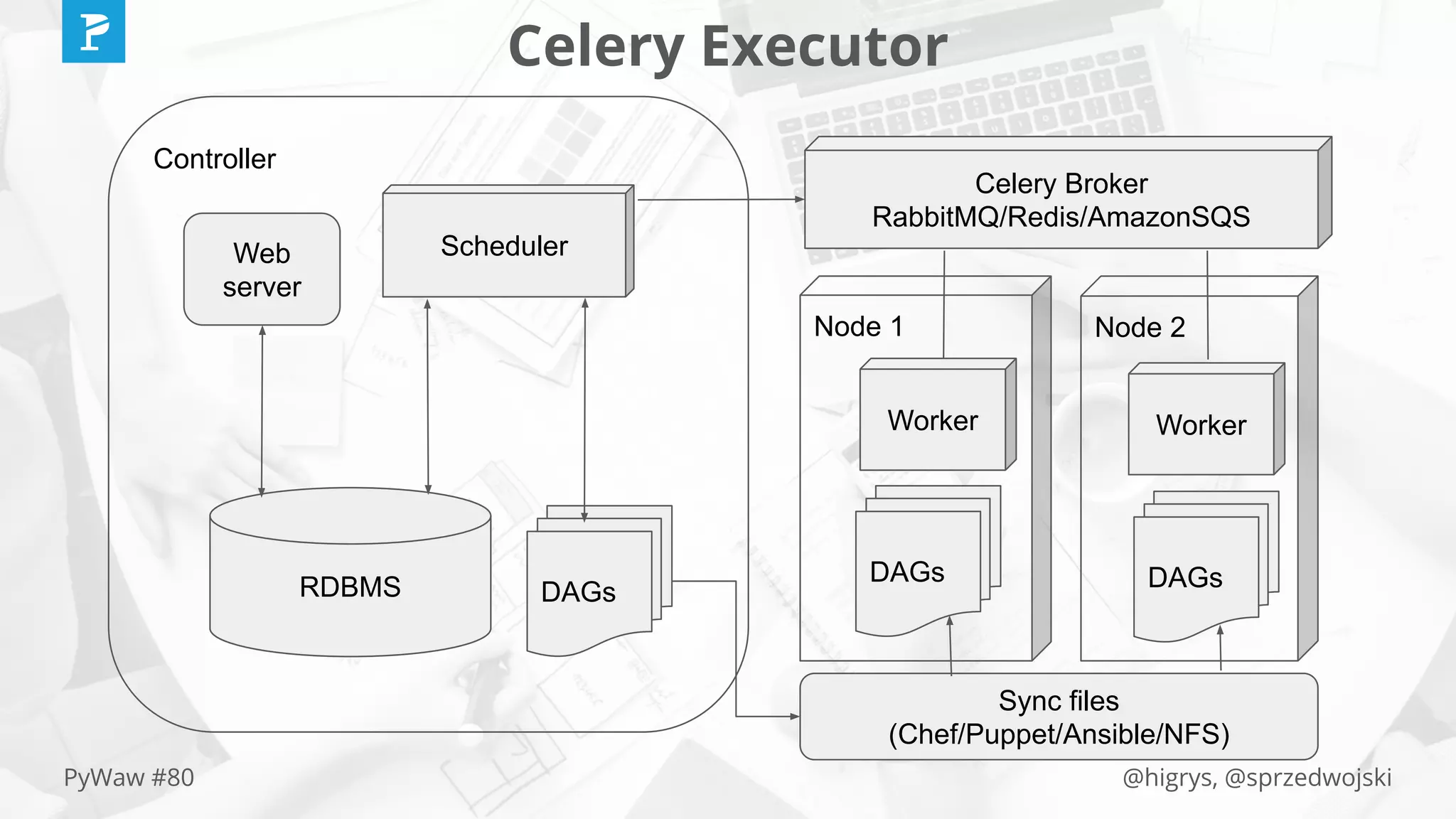

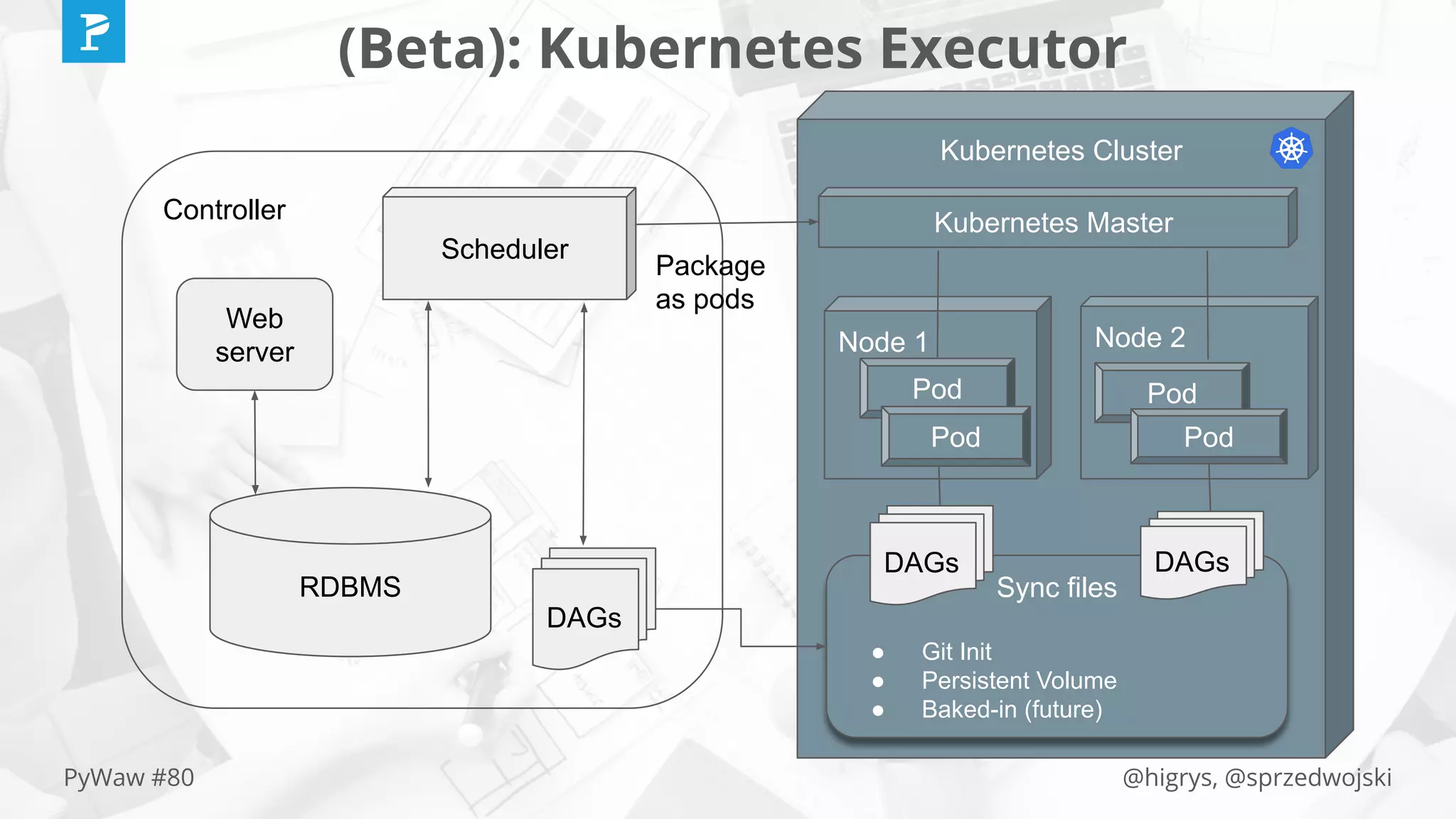

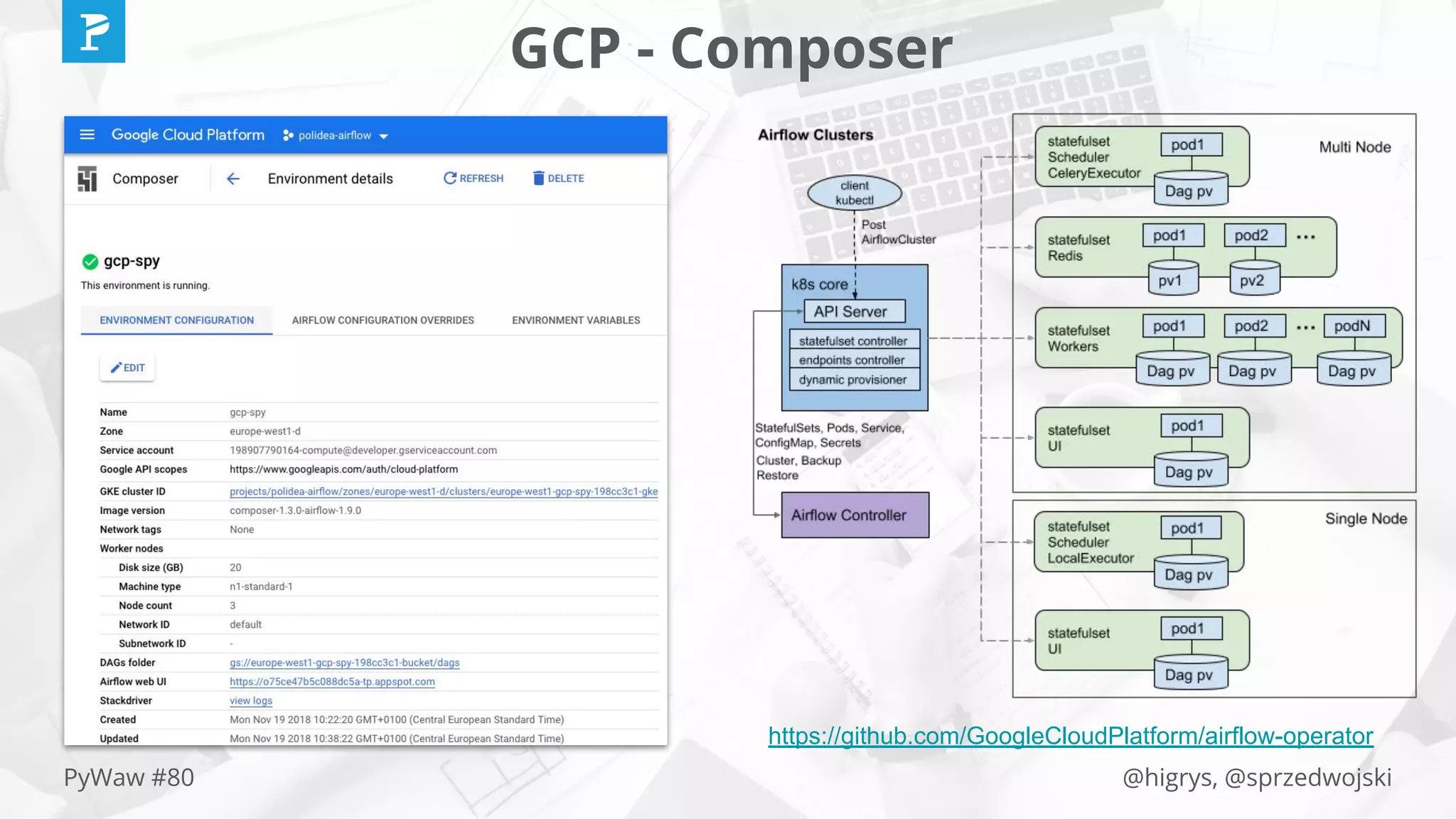

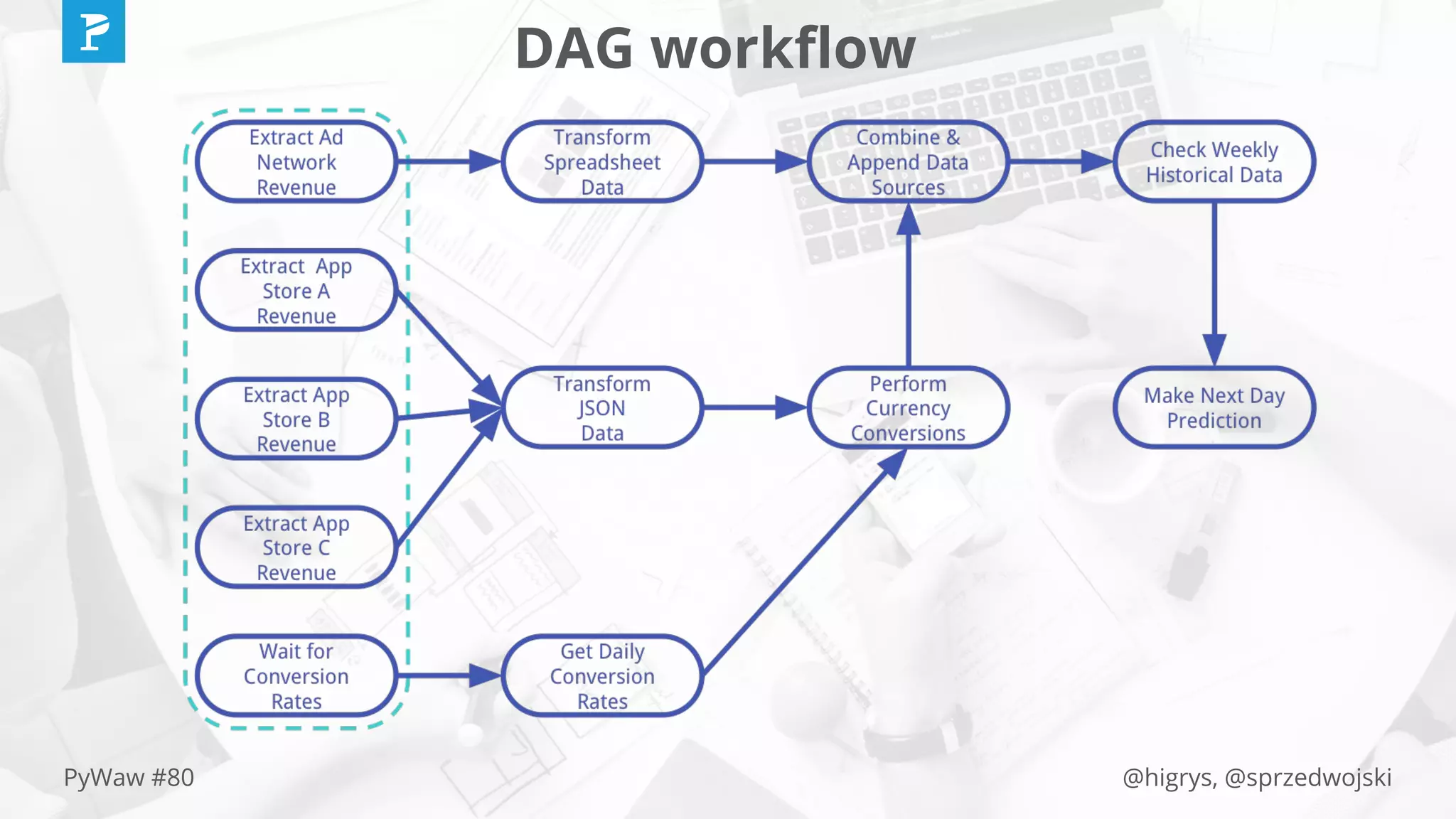

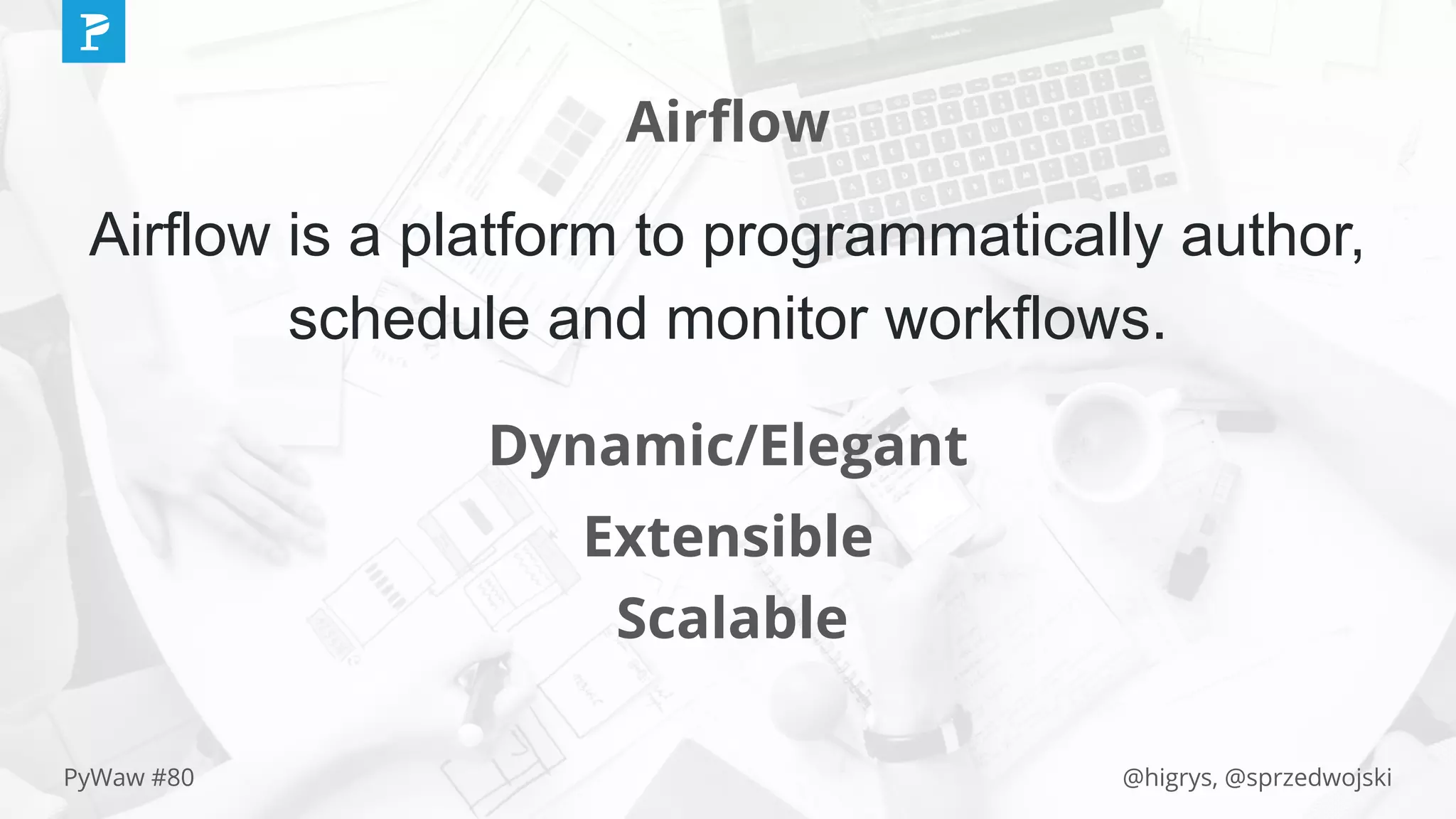

The document discusses Apache Airflow, a platform for creating and managing data pipelines through directed acyclic graphs (DAGs). It covers the comparison between Airflow and traditional cron jobs, use cases for Airflow such as ETL jobs and ML pipelines, and details about operators and task management. Additionally, it outlines example implementations, dependencies, and execution environments like local, Celery, and Kubernetes executors.

![List of instances (@higrys, @sprzedwojski, PyWaw #80)](https://image.slidesharecdn.com/manageabledatapipelineswithairflowandkubernetes-november271145am-pywaw-191231142836/75/Manageable-data-pipelines-with-airflow-and-kubernetes-november-27-11-45-am-py-waw-24-2048.jpg)

List of instances

    # BashOperator and dag are assumed to be imported/defined earlier in the DAG file.
    bash_task = BashOperator(
        xcom_push=True,  # push the last line of stdout to XCom for downstream tasks
        bash_command=
            "gcloud sql instances list | tail -n +2 | grep -oE '^[^ ]+' "
            "| tr '\n' ' '",
        task_id="gcp_service_list_instances_sql",
        dag=dag
    )
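The shell pipeline on this slide can be hard to read at a glance. The following is a minimal Python sketch of what it appears to do, assuming the last stage is meant to be `tr '\n' ' '`: drop the header row, keep the first column, and join the names on one line so that a single line of output carries the whole list. The sample output and the function name `first_column_as_one_line` are hypothetical and only serve the illustration; real `gcloud` output may have different columns.

```python
import re

# Hypothetical sample of `gcloud sql instances list` output; real columns may differ.
SAMPLE_OUTPUT = (
    "NAME        DATABASE_VERSION  STATUS\n"
    "db-prod     POSTGRES_11       RUNNABLE\n"
    "db-staging  MYSQL_5_7         RUNNABLE\n"
)


def first_column_as_one_line(text):
    """Mimic: tail -n +2 | grep -oE '^[^ ]+' | tr '\\n' ' '."""
    rows = text.splitlines()[1:]              # tail -n +2: skip the header row
    names = []
    for row in rows:
        match = re.match(r"[^ ]+", row)       # grep -oE '^[^ ]+': leading non-space run
        if match:
            names.append(match.group(0))
    # tr '\n' ' ': every newline becomes a space, so a trailing space remains
    return "".join(name + " " for name in names)


ONE_LINE = first_column_as_one_line(SAMPLE_OUTPUT)
print(repr(ONE_LINE))
```

Collapsing the list onto one line matters here because the operator is configured to push its output to XCom, and a downstream task then receives the names as a single space-separated string.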

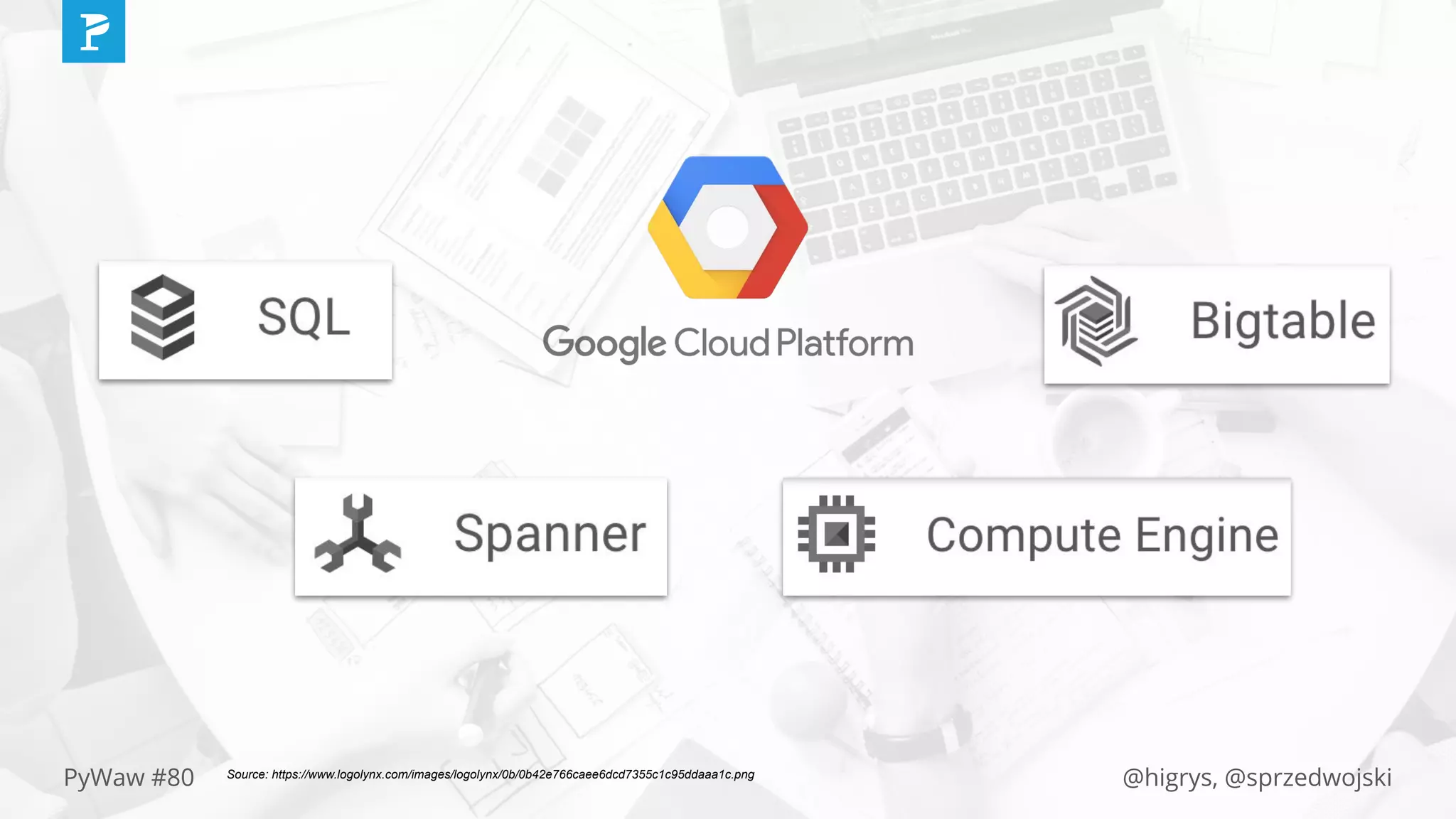

![All services](https://image.slidesharecdn.com/manageabledatapipelineswithairflowandkubernetes-november271145am-pywaw-191231142836/75/Manageable-data-pipelines-with-airflow-and-kubernetes-november-27-11-45-am-py-waw-25-2048.jpg)

All services

    # (gcloud command group, display name) pairs
    GCP_SERVICES = [
        ('sql', 'Cloud SQL'),
        ('spanner', 'Spanner'),
        ('bigtable', 'BigTable'),
        ('compute', 'Compute Engine'),
    ]

![@higrys, @sprzedwojskiPyWaw #80

List of instances - all services

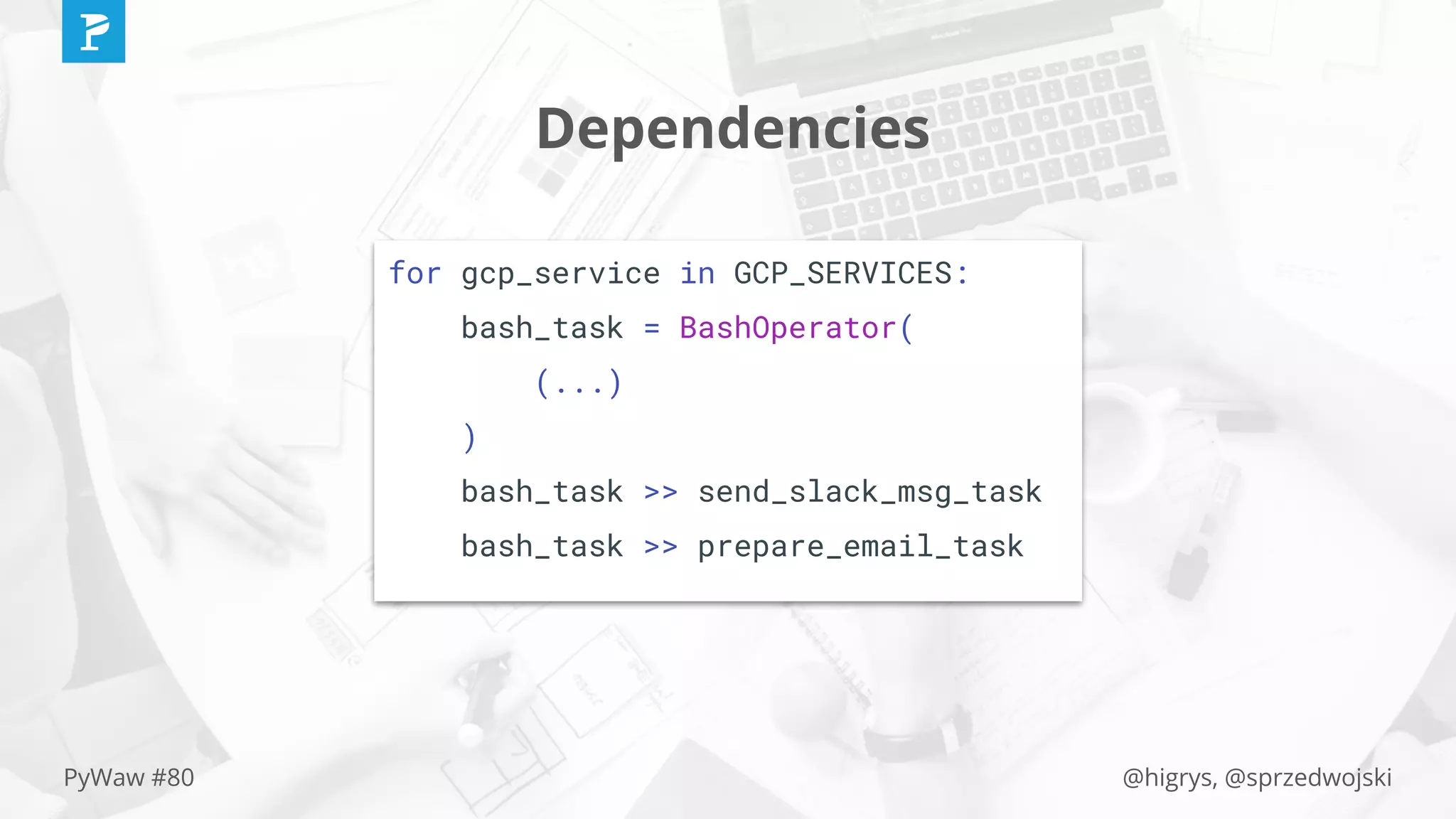

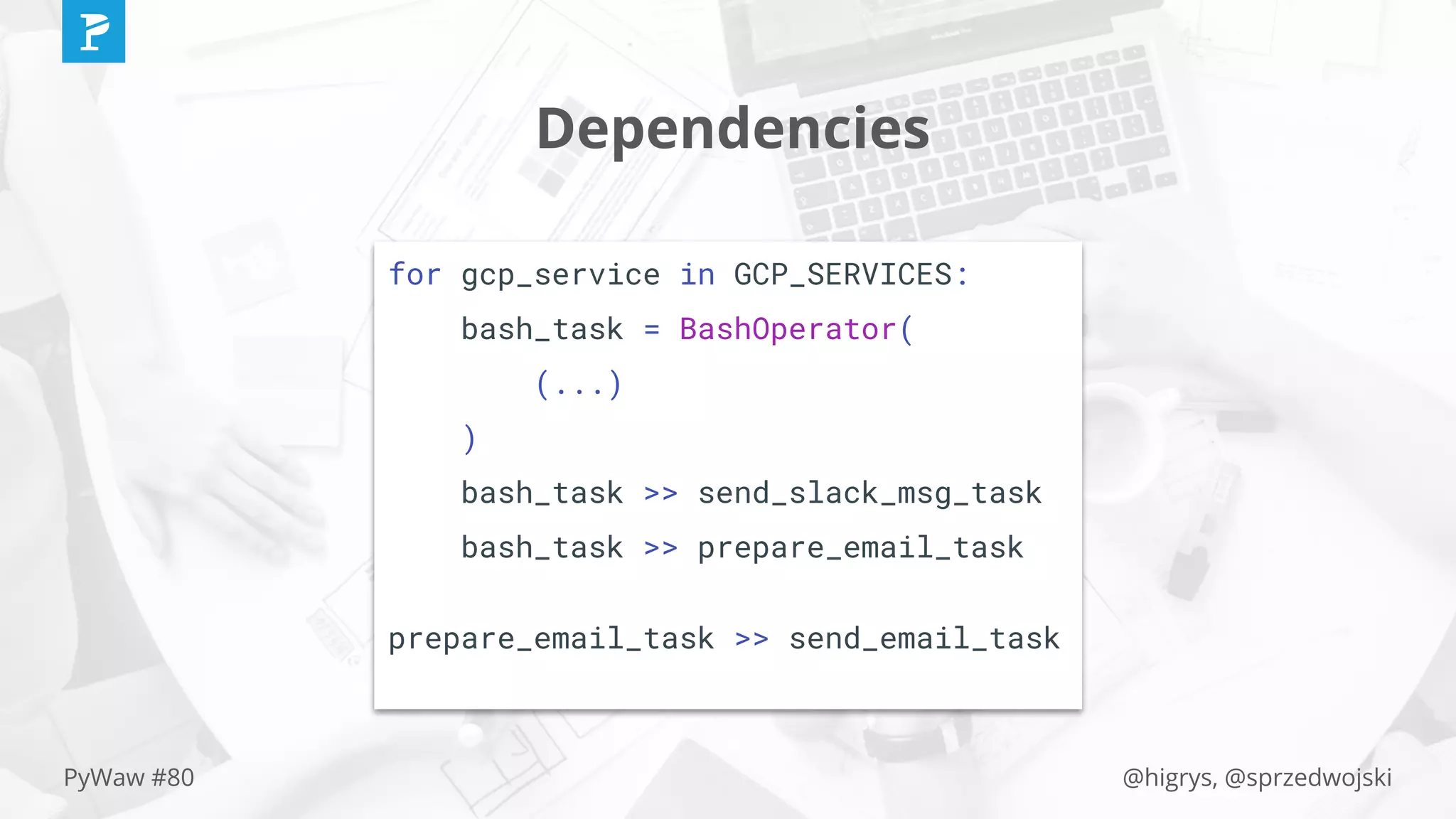

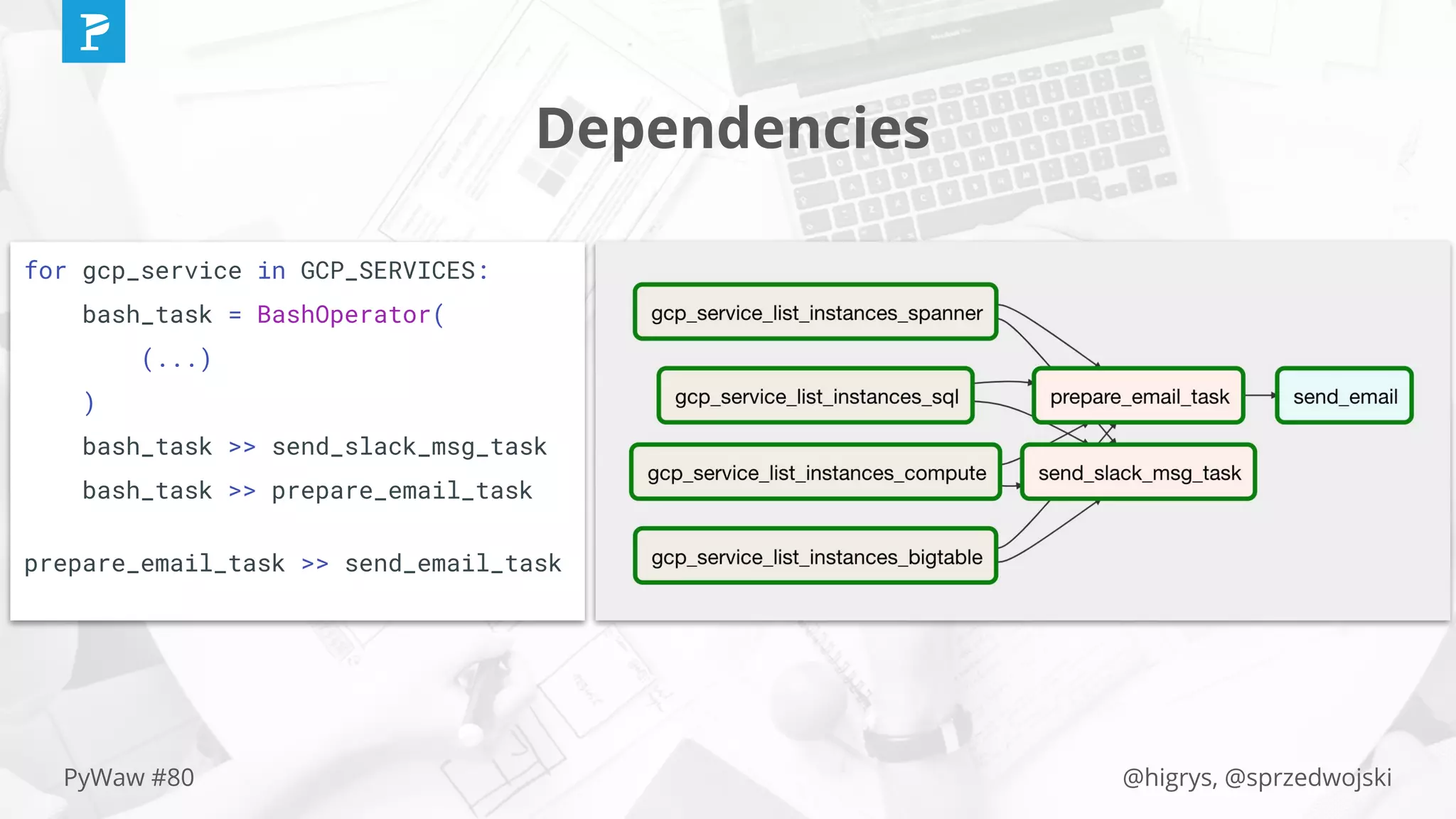

for gcp_service in GCP_SERVICES:

bash_task = BashOperator(

xcom_push=True,

bash_command=

"gcloud {} instances list | tail -n +2 | grep -oE '^[^ ]+' "

"| tr 'n' ' '".format(gcp_service[0]),

task_id="gcp_service_list_instances_{}".format(gcp_service[0]),

dag=dag

)](https://image.slidesharecdn.com/manageabledatapipelineswithairflowandkubernetes-november271145am-pywaw-191231142836/75/Manageable-data-pipelines-with-airflow-and-kubernetes-november-27-11-45-am-py-waw-26-2048.jpg)
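To see what the loop on this slide generates without running Airflow, the string logic can be pulled out on its own. This is only a sketch: `COMMAND_TEMPLATE` and `build_task_specs` are hypothetical names introduced here, and the operator itself is left out.

```python
# Stand-alone sketch: no Airflow import, only the string formatting from the loop.
GCP_SERVICES = [
    ("sql", "Cloud SQL"),
    ("spanner", "Spanner"),
    ("bigtable", "BigTable"),
    ("compute", "Compute Engine"),
]

# Adjacent string literals are joined before .format() is applied, so the
# placeholder in the first literal is filled even though .format() is
# written after the second one.
COMMAND_TEMPLATE = (
    "gcloud {} instances list | tail -n +2 | grep -oE '^[^ ]+' "
    "| tr '\\n' ' '"
)


def build_task_specs(services):
    """Return one (task_id, bash_command) pair per service."""
    specs = []
    for short_name, _display_name in services:
        task_id = "gcp_service_list_instances_{}".format(short_name)
        specs.append((task_id, COMMAND_TEMPLATE.format(short_name)))
    return specs


TASK_SPECS = build_task_specs(GCP_SERVICES)
for task_id, command in TASK_SPECS:
    print(task_id, "->", command)
```

Each service gets a distinct `task_id`, which is what lets a downstream task later pull each result by name.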

![@higrys, @sprzedwojskiPyWaw #80

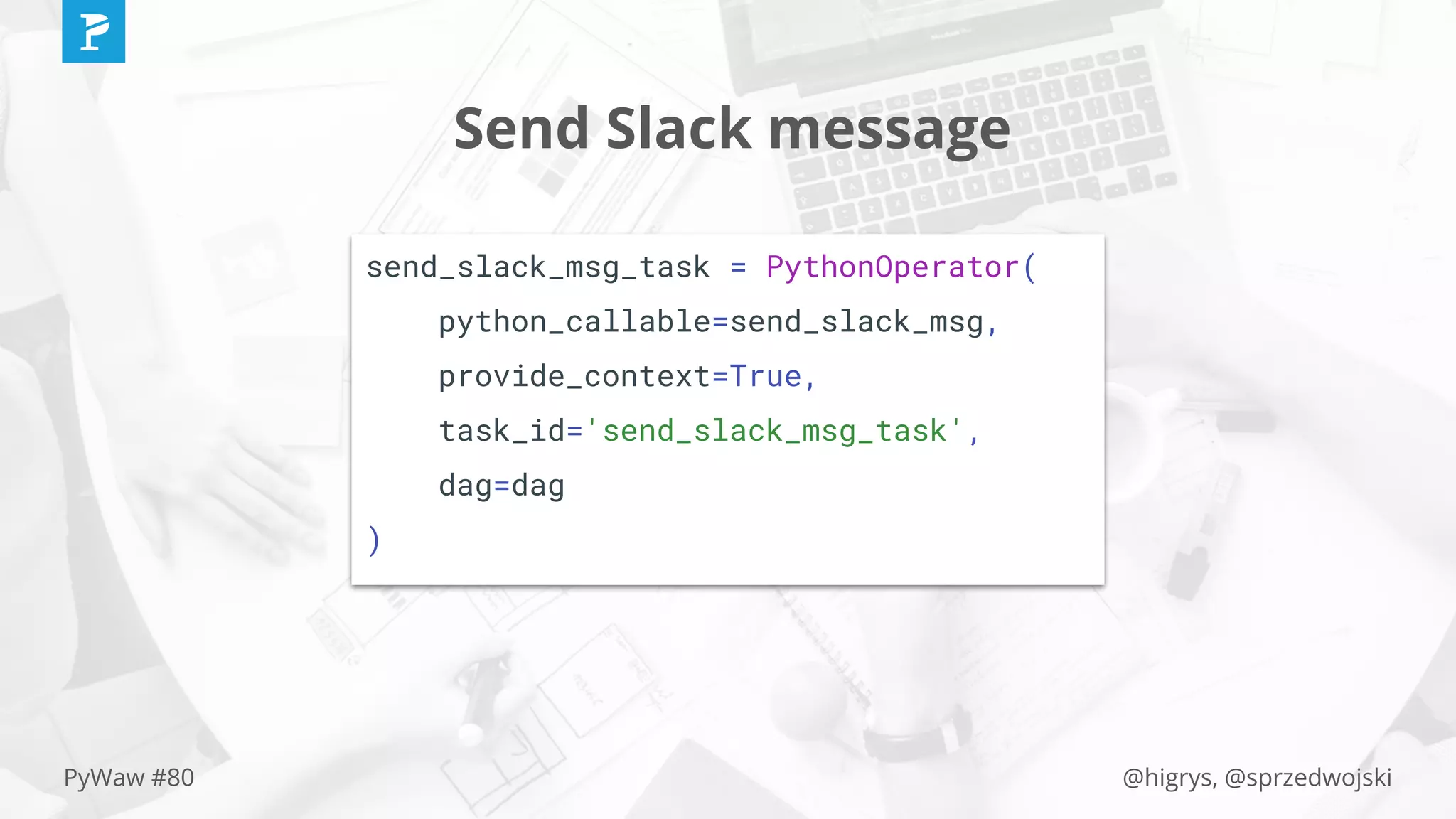

def send_slack_msg(**context):

(...)

for gcp_service in GCP_SERVICES:

result = context['task_instance'].

xcom_pull(task_ids='gcp_service_list_instances_{}'

.format(gcp_service[0]))

data = (...)

requests.post(

url=SLACK_WEBHOOK,

data=json.dumps(data),

headers={'Content-type': 'application/json'}

)](https://image.slidesharecdn.com/manageabledatapipelineswithairflowandkubernetes-november271145am-pywaw-191231142836/75/Manageable-data-pipelines-with-airflow-and-kubernetes-november-27-11-45-am-py-waw-28-2048.jpg)
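The slide elides how `data` is built. The sketch below shows one possible shape, not the speakers' actual code: it assumes the webhook accepts a JSON body with a `text` field (the usual form for Slack incoming webhooks) and uses a hypothetical `FakeTaskInstance` so the XCom pull pattern can be exercised locally. No network request is made.

```python
import json

GCP_SERVICES = [("sql", "Cloud SQL"), ("spanner", "Spanner")]


class FakeTaskInstance:
    """Hypothetical stand-in for Airflow's task instance, for local runs only."""

    def __init__(self, xcoms):
        self._xcoms = xcoms

    def xcom_pull(self, task_ids):
        return self._xcoms.get(task_ids)


def build_slack_payload(**context):
    """Collect each service's instance list into one message body (assumed format)."""
    lines = []
    for short_name, display_name in GCP_SERVICES:
        result = context["task_instance"].xcom_pull(
            task_ids="gcp_service_list_instances_{}".format(short_name)
        )
        instances = result.split() if result else []
        lines.append("{}: {}".format(display_name, ", ".join(instances) or "none"))
    return {"text": "\n".join(lines)}


fake_ti = FakeTaskInstance({
    "gcp_service_list_instances_sql": "db-prod db-staging ",
    "gcp_service_list_instances_spanner": "",
})
PAYLOAD = build_slack_payload(task_instance=fake_ti)
BODY = json.dumps(PAYLOAD)  # what would be passed as data= to requests.post
print(BODY)
```

Keeping the payload construction in its own function makes it testable without Airflow or a real webhook.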

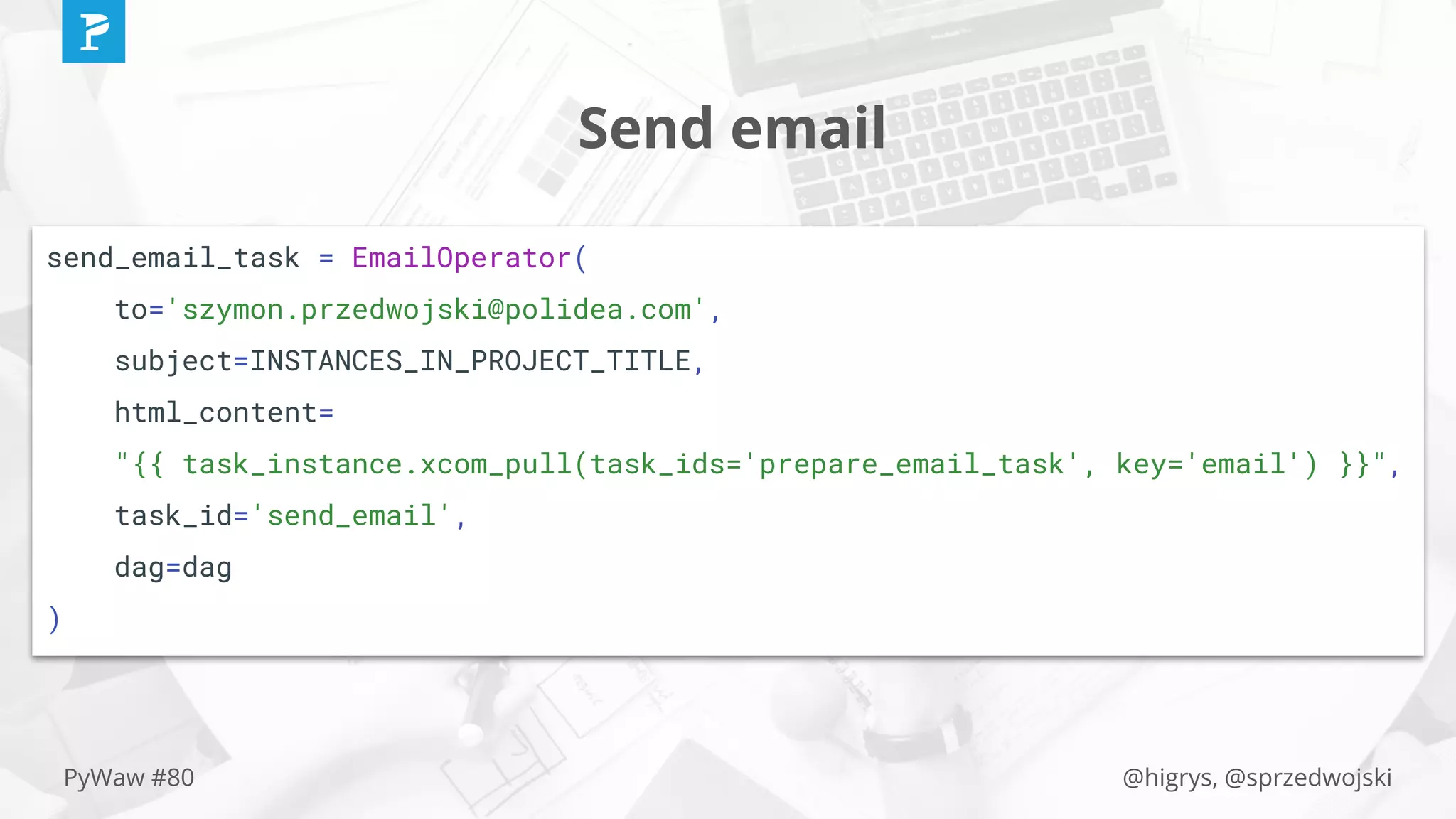

![@higrys, @sprzedwojskiPyWaw #80

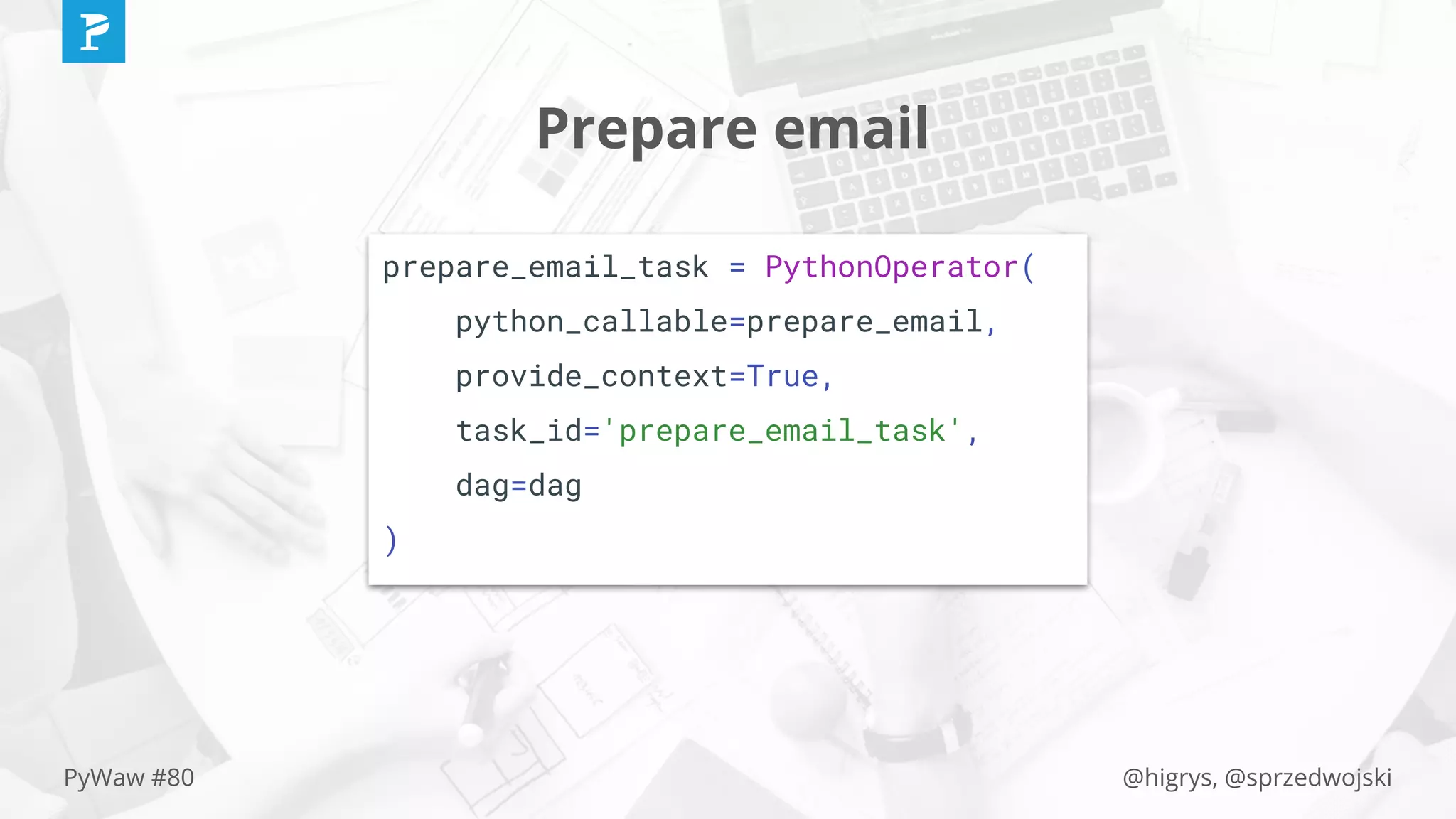

def prepare_email(**context):

(...)

for gcp_service in GCP_SERVICES:

result = context['task_instance'].

xcom_pull(task_ids='gcp_service_list_instances_{}'

.format(gcp_service[0]))

(...)

html_content = (...)

context['task_instance'].xcom_push(key='email', value=html_content)](https://image.slidesharecdn.com/manageabledatapipelineswithairflowandkubernetes-november271145am-pywaw-191231142836/75/Manageable-data-pipelines-with-airflow-and-kubernetes-november-27-11-45-am-py-waw-30-2048.jpg)
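How `html_content` is assembled is not shown on the slide. As a rough illustration of the pull-then-push pattern, the sketch below builds a small HTML list and pushes it under the `email` key. The HTML layout is an assumption, and `FakeTaskInstance` is a hypothetical stand-in that only mimics the two XCom methods used here.

```python
from html import escape

GCP_SERVICES = [("sql", "Cloud SQL"), ("compute", "Compute Engine")]


class FakeTaskInstance:
    """Hypothetical stand-in exposing only xcom_pull and xcom_push."""

    def __init__(self, pulled):
        self._pulled = pulled
        self.pushed = {}

    def xcom_pull(self, task_ids):
        return self._pulled.get(task_ids)

    def xcom_push(self, key, value):
        self.pushed[key] = value


def prepare_email(**context):
    ti = context["task_instance"]
    items = []
    for short_name, display_name in GCP_SERVICES:
        result = ti.xcom_pull(
            task_ids="gcp_service_list_instances_{}".format(short_name)
        )
        names = result.split() if result else []
        # Escape values before embedding them in HTML.
        items.append("<li>{}: {}</li>".format(
            escape(display_name), escape(", ".join(names) or "none")))
    html_content = "<ul>{}</ul>".format("".join(items))
    ti.xcom_push(key="email", value=html_content)


FAKE_TI = FakeTaskInstance({
    "gcp_service_list_instances_sql": "db-1 ",
    "gcp_service_list_instances_compute": "vm-a vm-b ",
})
prepare_email(task_instance=FAKE_TI)
EMAIL_HTML = FAKE_TI.pushed["email"]
print(EMAIL_HTML)
```

A downstream task could then pull the value stored under `email` and use it as the message body.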