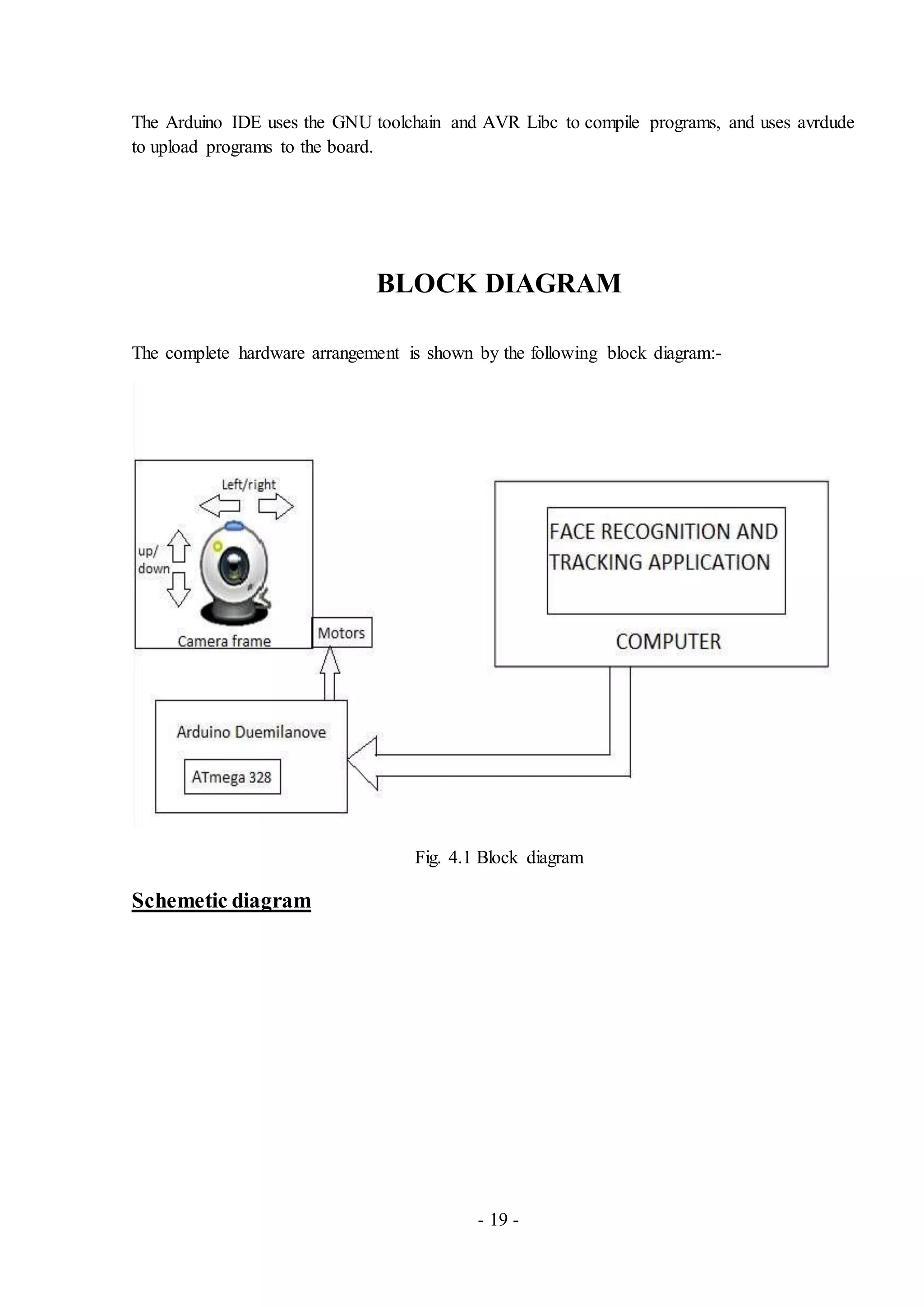

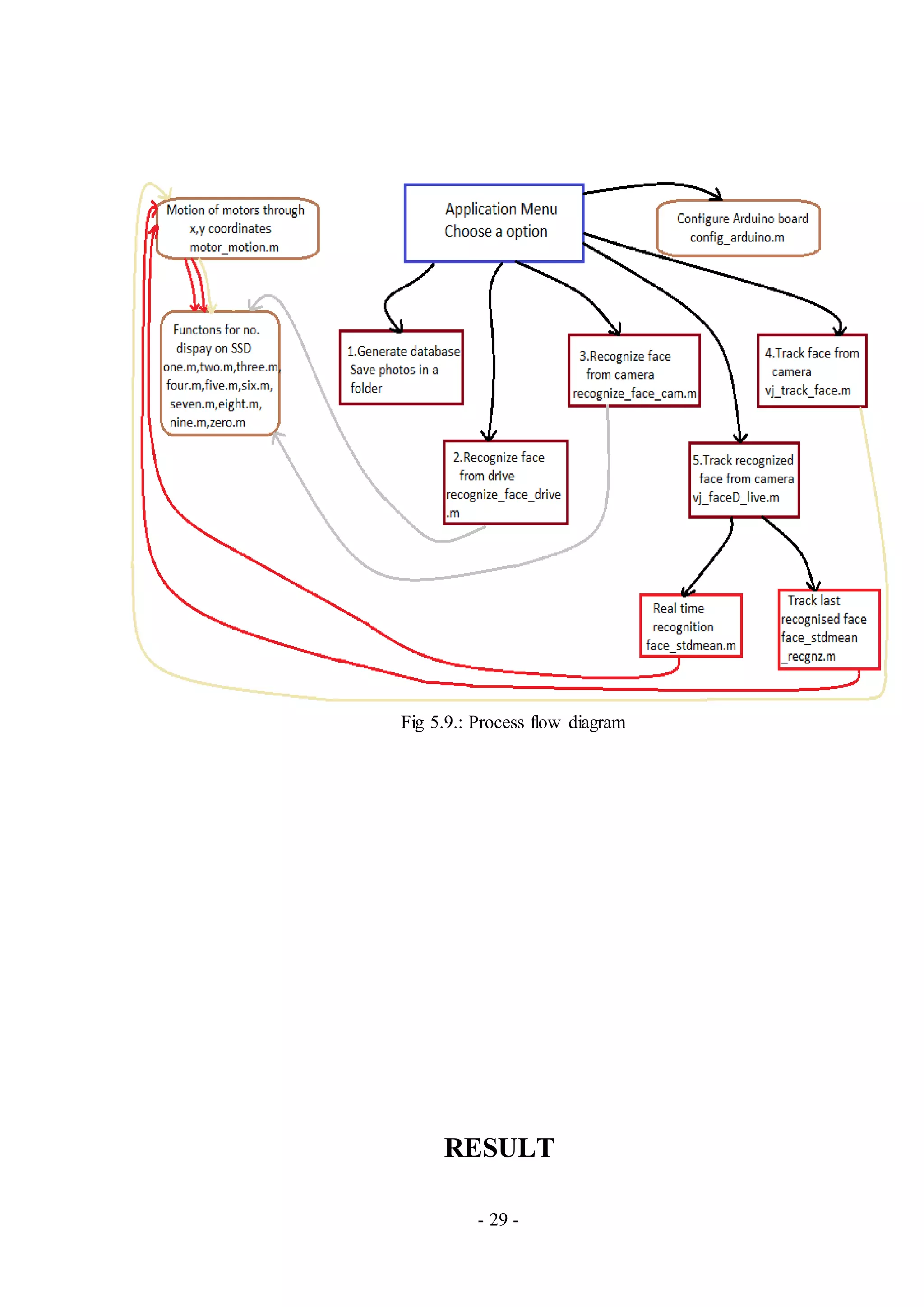

This document is a project report on a face recognition and tracking system. It includes an acknowledgements section thanking those who helped with the project, and an abstract describing the goal: a face recognition and tracking system built with the image processing and computer vision toolboxes in MATLAB. The document outlines its chapters, including introductions to image processing and to the hardware and software used (Arduino and MATLAB). It also provides block diagrams of the overall system design and of the hardware.

![- 18 -

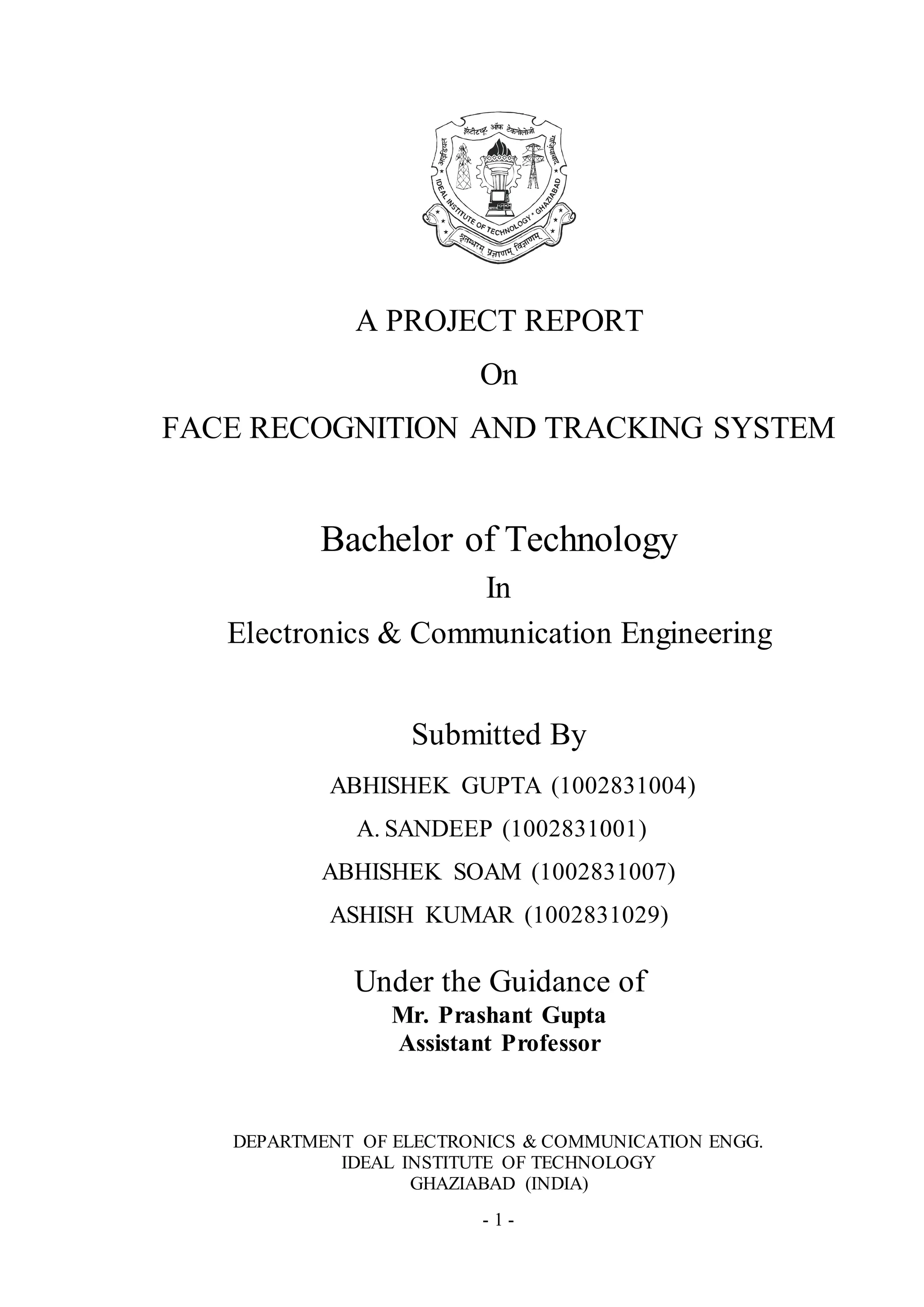

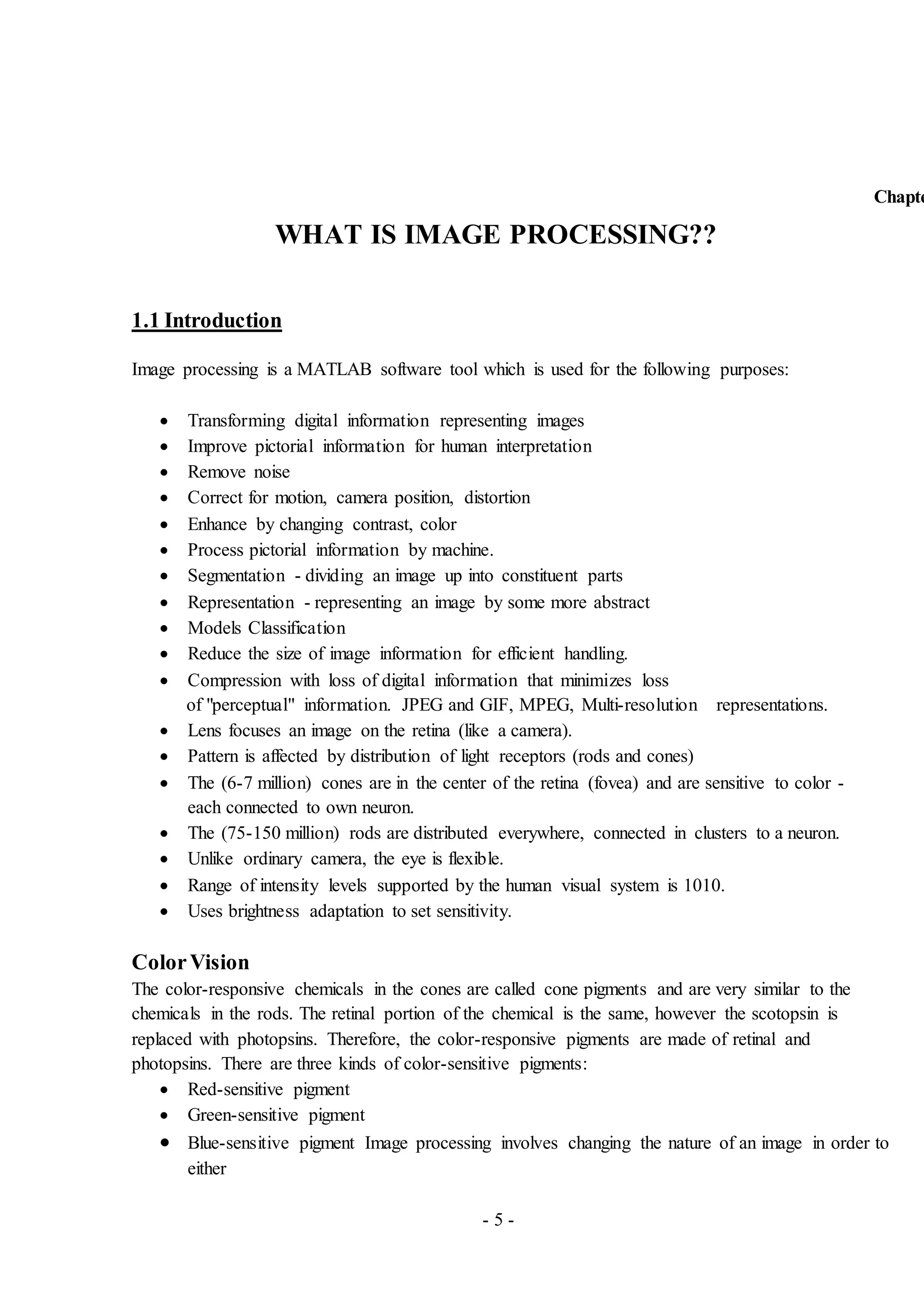

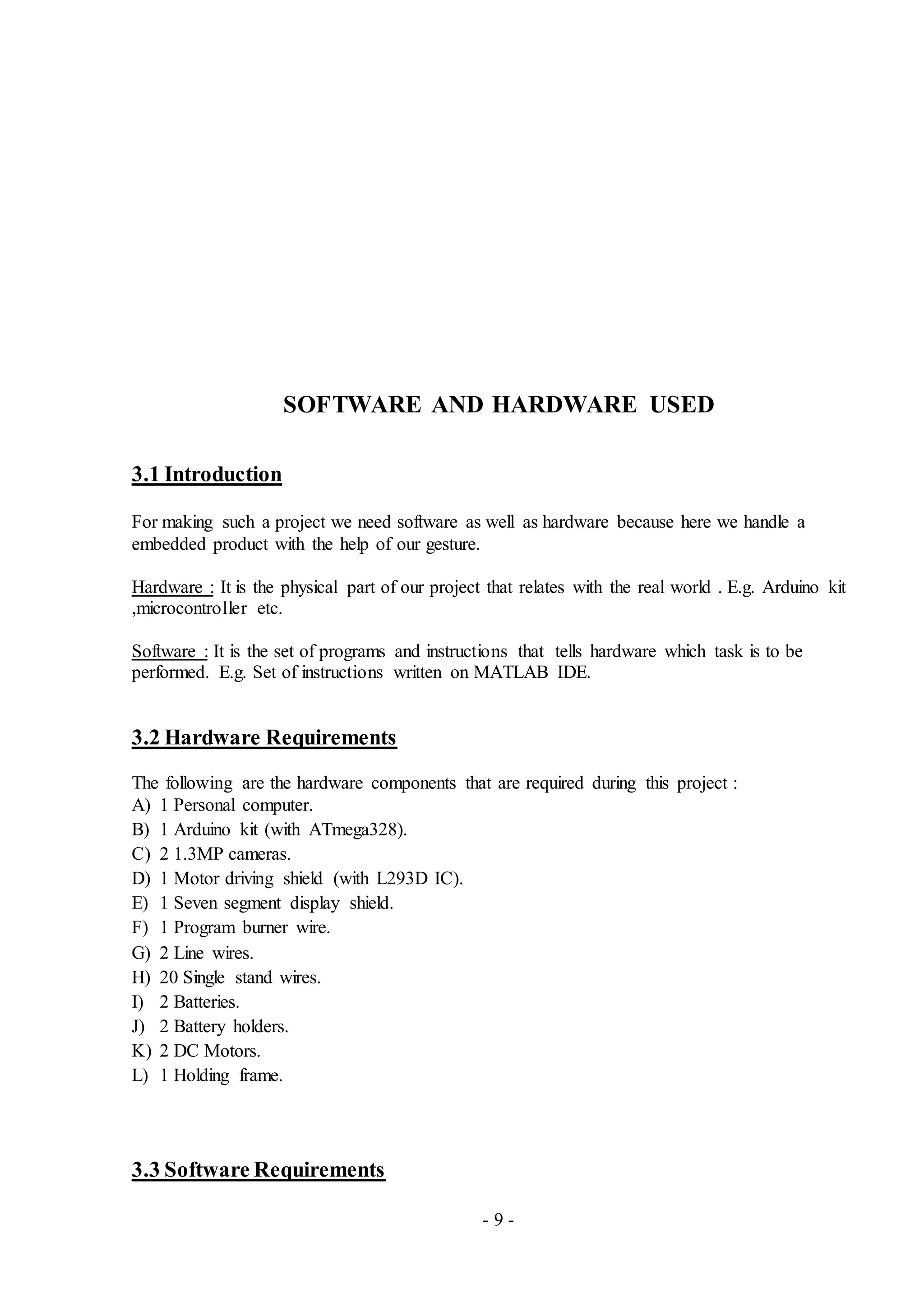

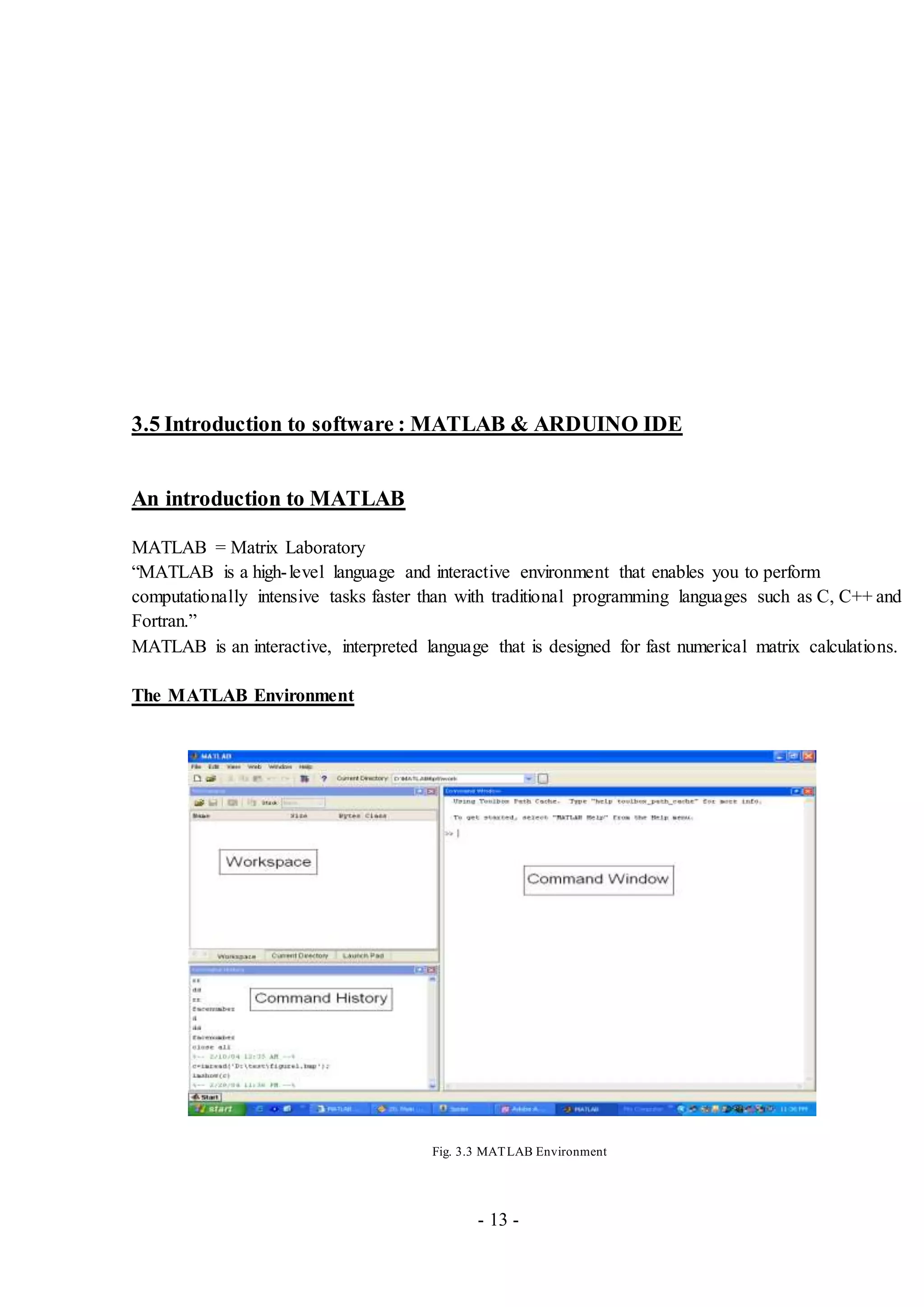

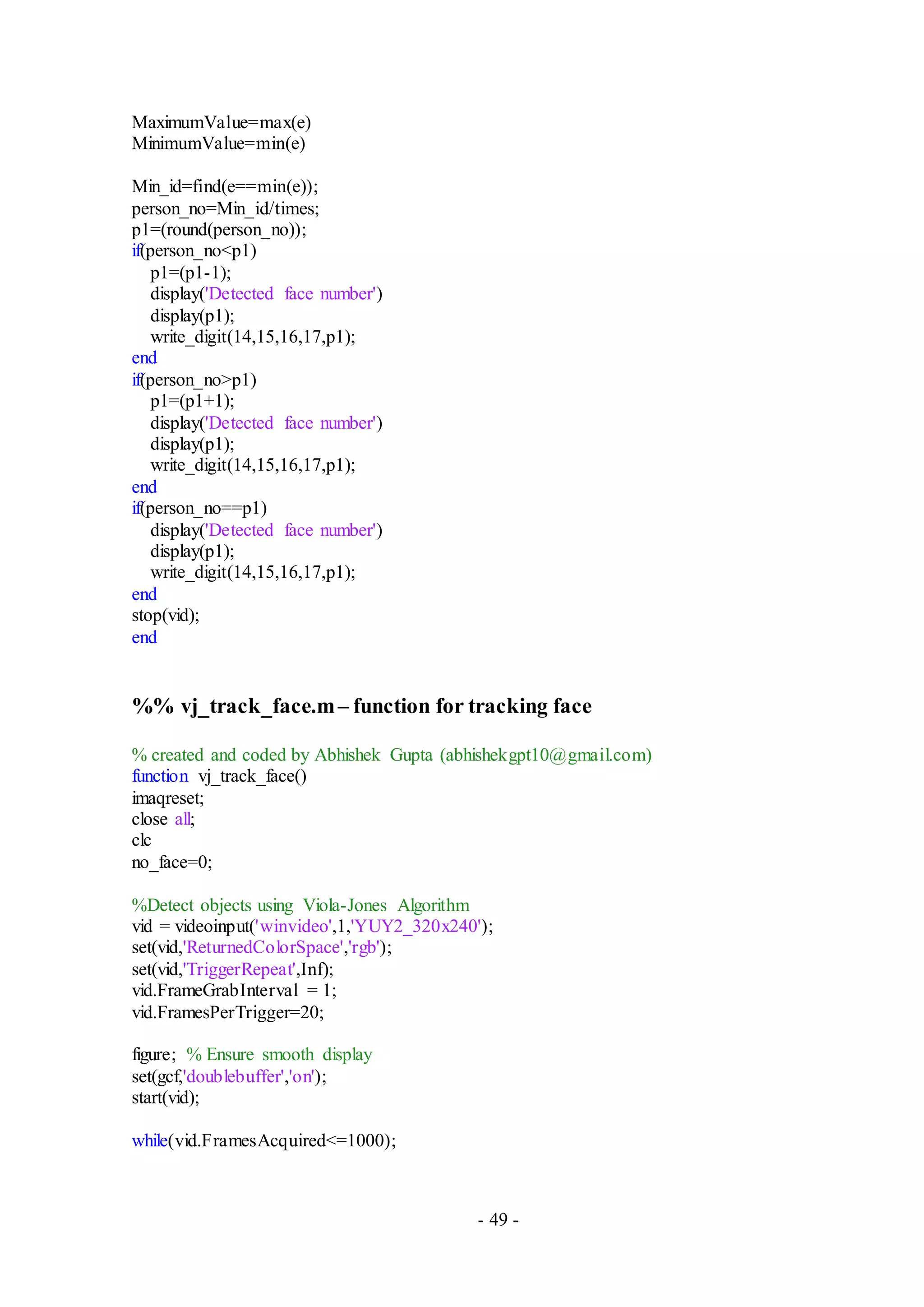

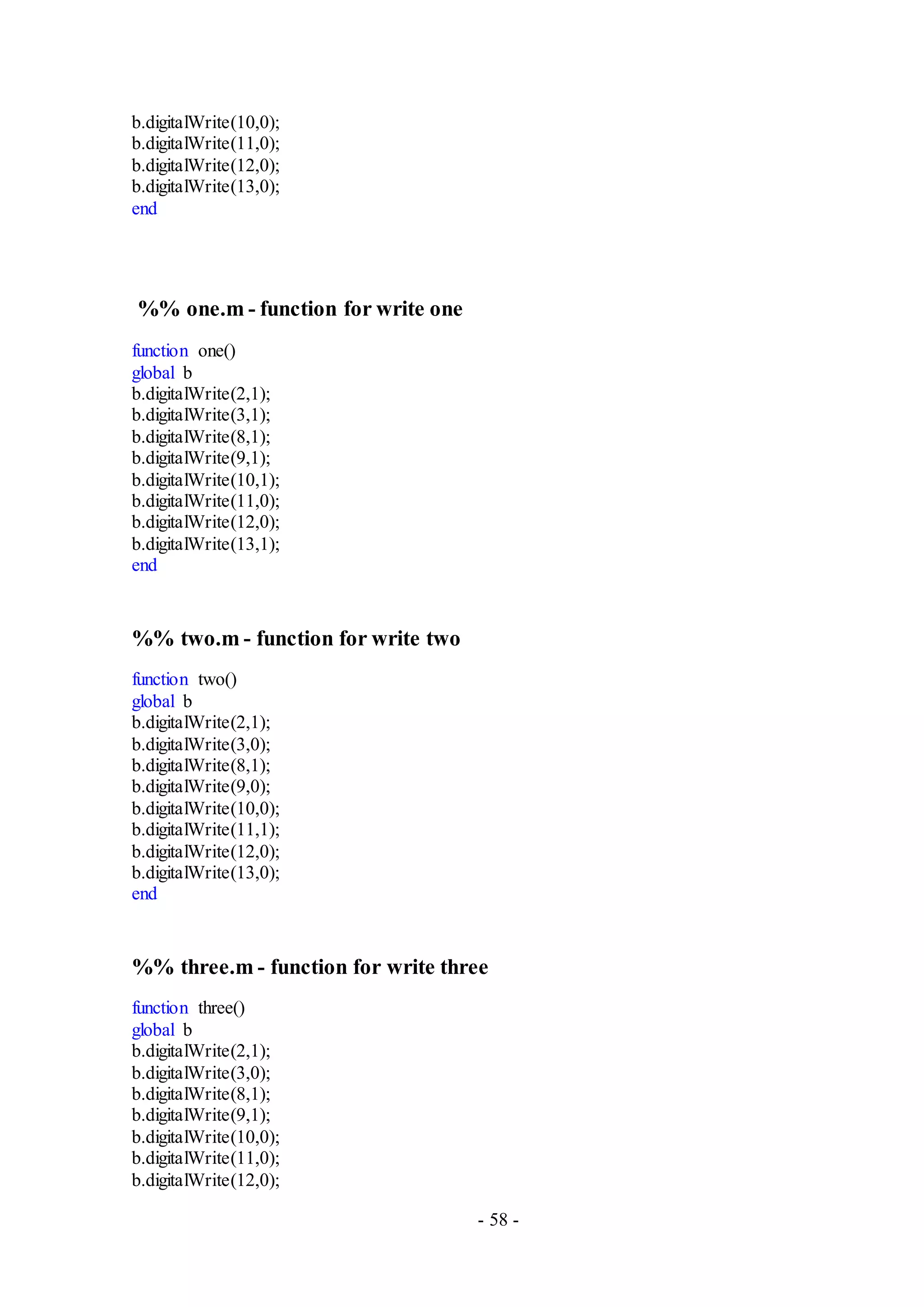

An introduction to the Arduino IDE

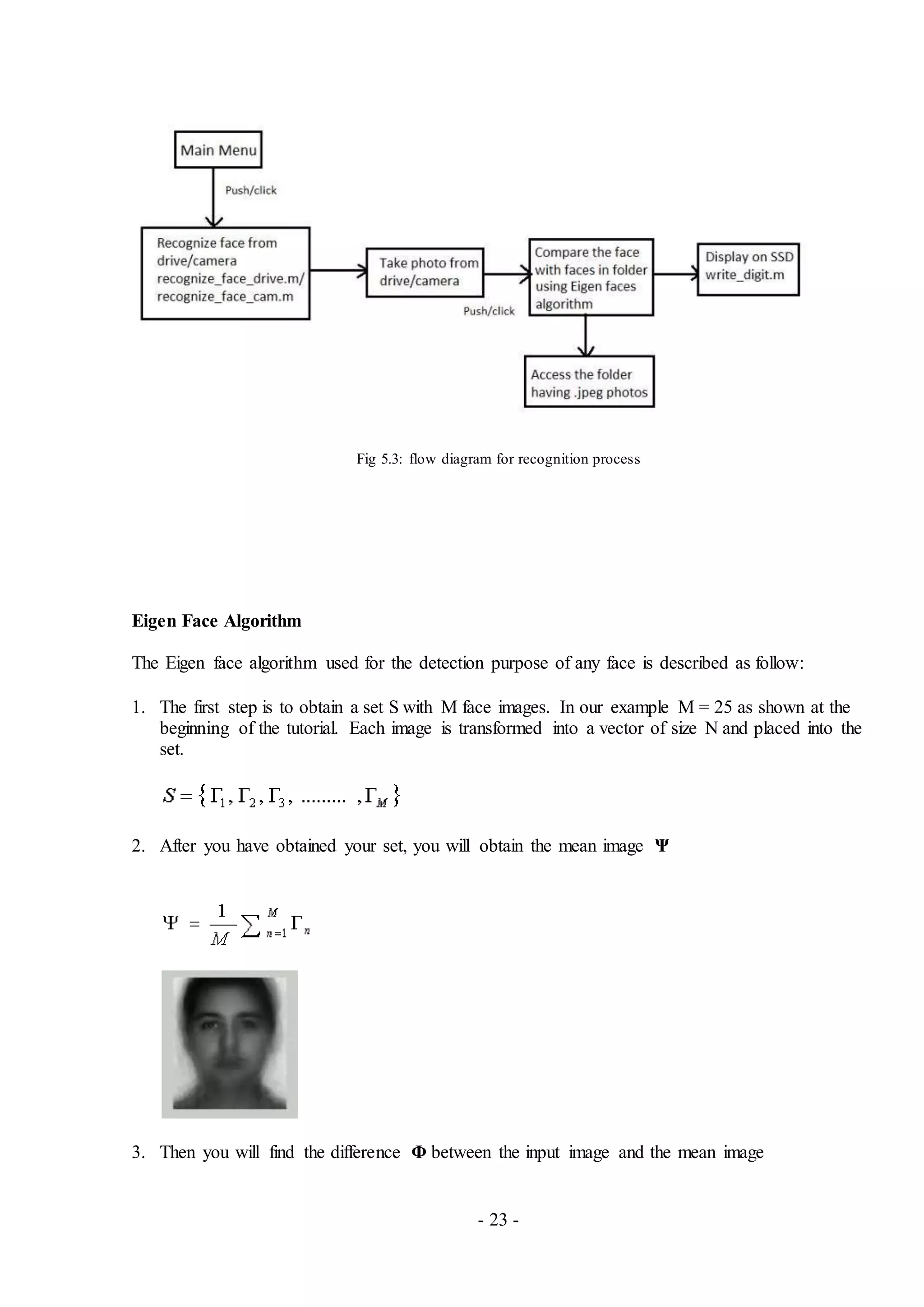

The Arduino integrated development environment (IDE) is a cross-platform application written in Java, and is derived from the IDE for the Processing programming language and the Wiring projects. It is designed to introduce programming to artists and other newcomers unfamiliar with software development. It includes a code editor with features such as syntax highlighting, brace matching, and automatic indentation, and is also capable of compiling and uploading programs to the board with a single click. A program or code written for Arduino is called a "sketch".

Arduino programs are written in C or C++. The Arduino IDE comes with a software library called "Wiring" from the original Wiring project, which makes many common input/output operations much easier. Users only need to define two functions to make a runnable cyclic executive program:

setup(): a function run once at the start of a program that can initialize settings

loop(): a function called repeatedly until the board powers off

For example, the following sketch switches an LED on pin 13 on and off at one-second intervals:

    #define LED_PIN 13

    void setup() {
      pinMode(LED_PIN, OUTPUT);     // Enable pin 13 for digital output
    }

    void loop() {
      digitalWrite(LED_PIN, HIGH);  // Turn on the LED
      delay(1000);                  // Wait one second (1000 milliseconds)
      digitalWrite(LED_PIN, LOW);   // Turn off the LED
      delay(1000);                  // Wait one second
    }

Most Arduino boards have an LED and load resistor connected between pin 13 and ground, a convenient feature for many simple tests.[9] A standard C++ compiler would not accept the previous code as a valid program. When the user clicks the "Upload to I/O board" button in the IDE, a copy of the code is therefore written to a temporary file with an extra include header at the top and a very simple main() function at the bottom, which makes it a valid C++ program.](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-18-2048.jpg)
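The wrapping step described above can be simulated on a PC. This is an illustration under stated assumptions, not the Arduino core's source:

- `pinMode`, `digitalWrite` and `delay` are hypothetical stubs that only record each call in `trace`.
- `runSketch` is a hypothetical stand-in for the generated `main()`, which calls `setup()` once and then `loop()` repeatedly.

On a real board the core's `main()` also initializes the hardware before calling `setup()` and never returns. Here the loop is bounded so the simulation ends.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical stand-ins for the Arduino API so the sketch builds on a PC;
// each one just records the call in `trace`.
const int OUTPUT = 1;
const int HIGH = 1;
const int LOW = 0;
std::vector<std::string> trace;

void pinMode(int pin, int mode) {
  (void)mode;
  trace.push_back("pinMode " + std::to_string(pin));
}
void digitalWrite(int pin, int value) {
  (void)pin;
  trace.push_back(value == HIGH ? "on" : "off");
}
void delay(unsigned long ms) { trace.push_back("wait " + std::to_string(ms)); }

// The blink sketch from the text (comments omitted).
#define LED_PIN 13
void setup() { pinMode(LED_PIN, OUTPUT); }
void loop() {
  digitalWrite(LED_PIN, HIGH);
  delay(1000);
  digitalWrite(LED_PIN, LOW);
  delay(1000);
}

// Roughly the shape of the generated main(): setup() once, then loop() repeatedly.
// The real one loops forever; this version stops after `iterations` passes.
int runSketch(int iterations) {
  setup();
  for (int i = 0; i < iterations; ++i) loop();
  return 0;
}
```

Calling `runSketch(2)` records one `pinMode` call followed by two on/wait/off/wait cycles. This is the cyclic-executive behaviour of `setup()` and `loop()` described earlier.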

![- 39 -

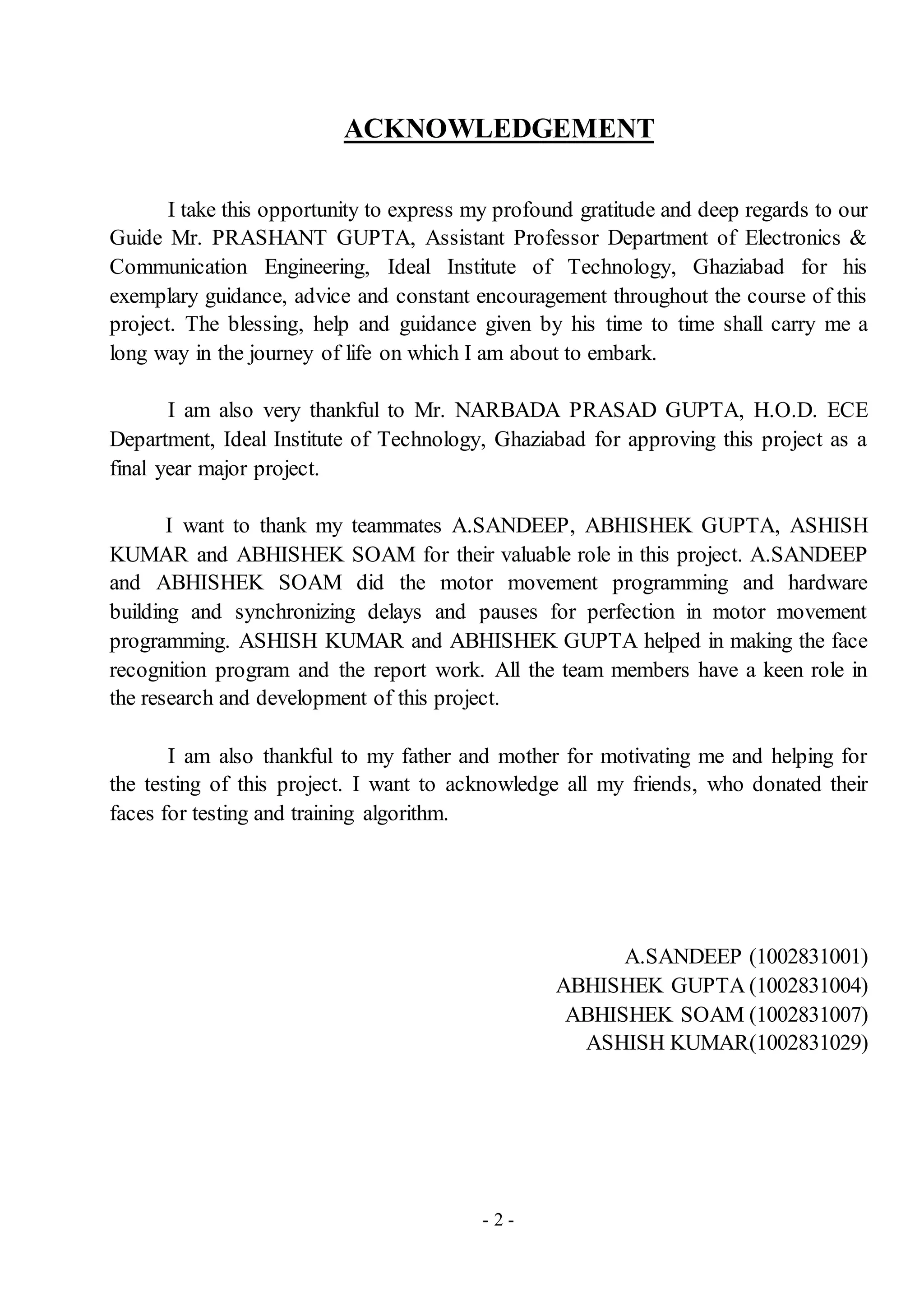

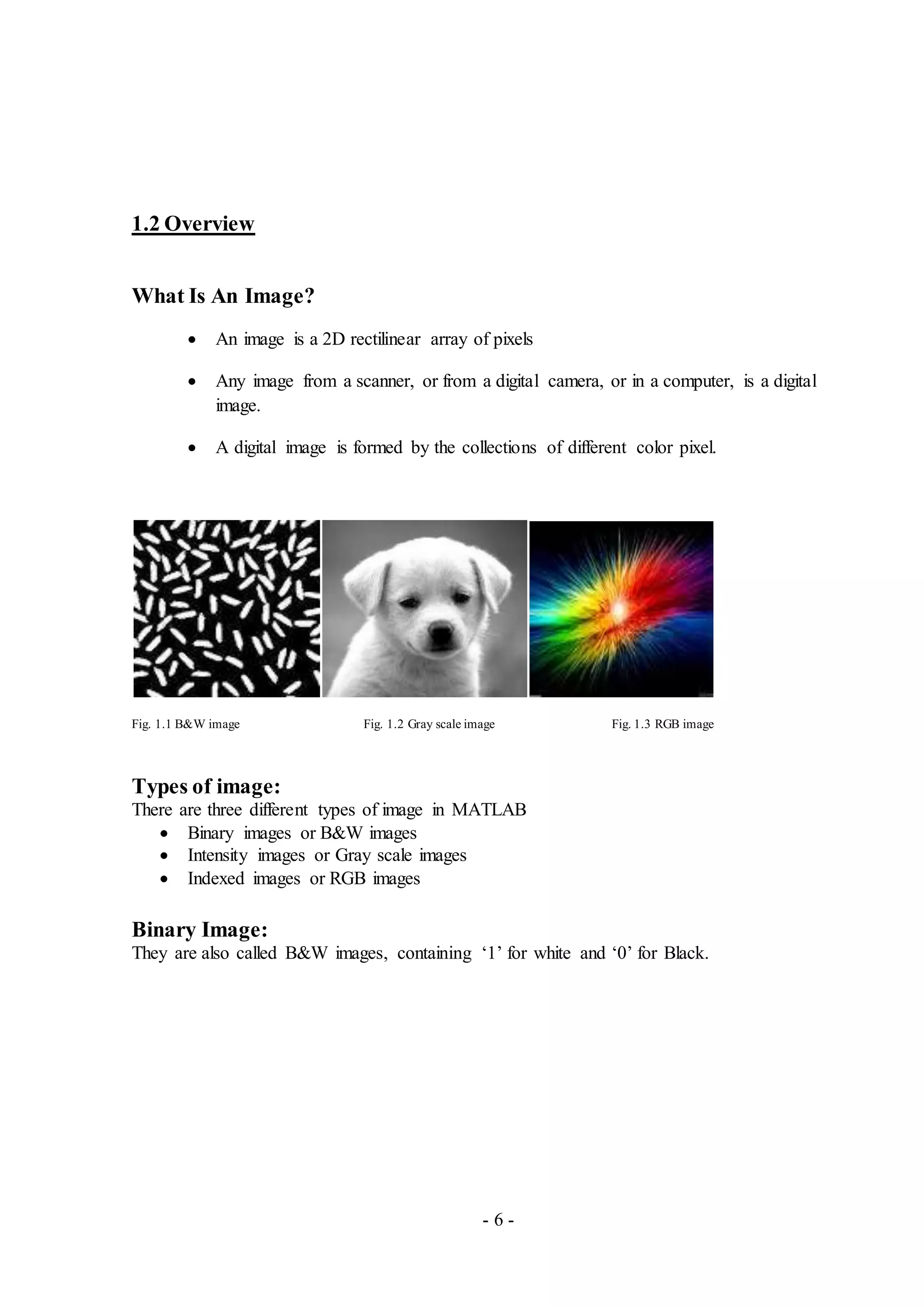

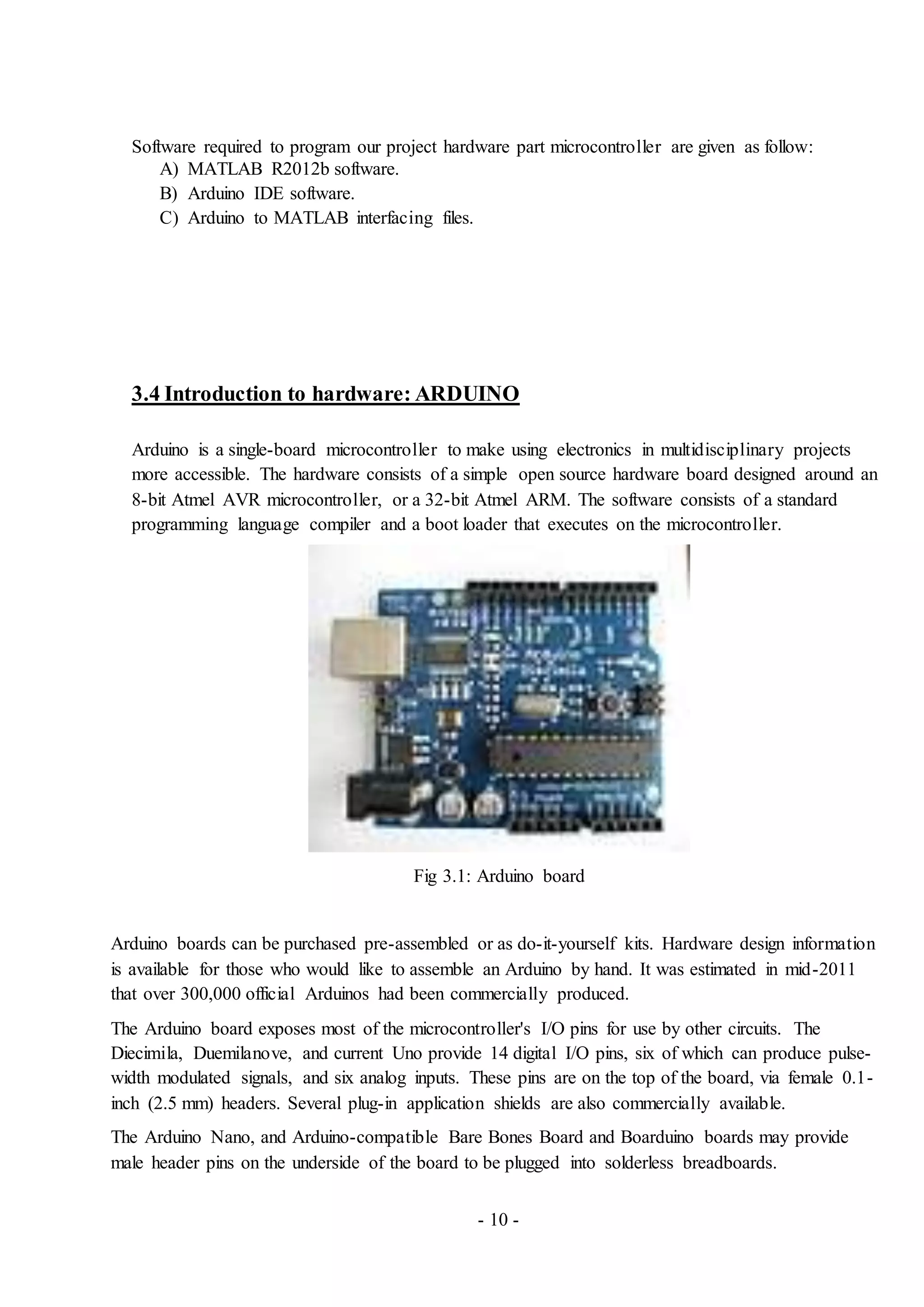

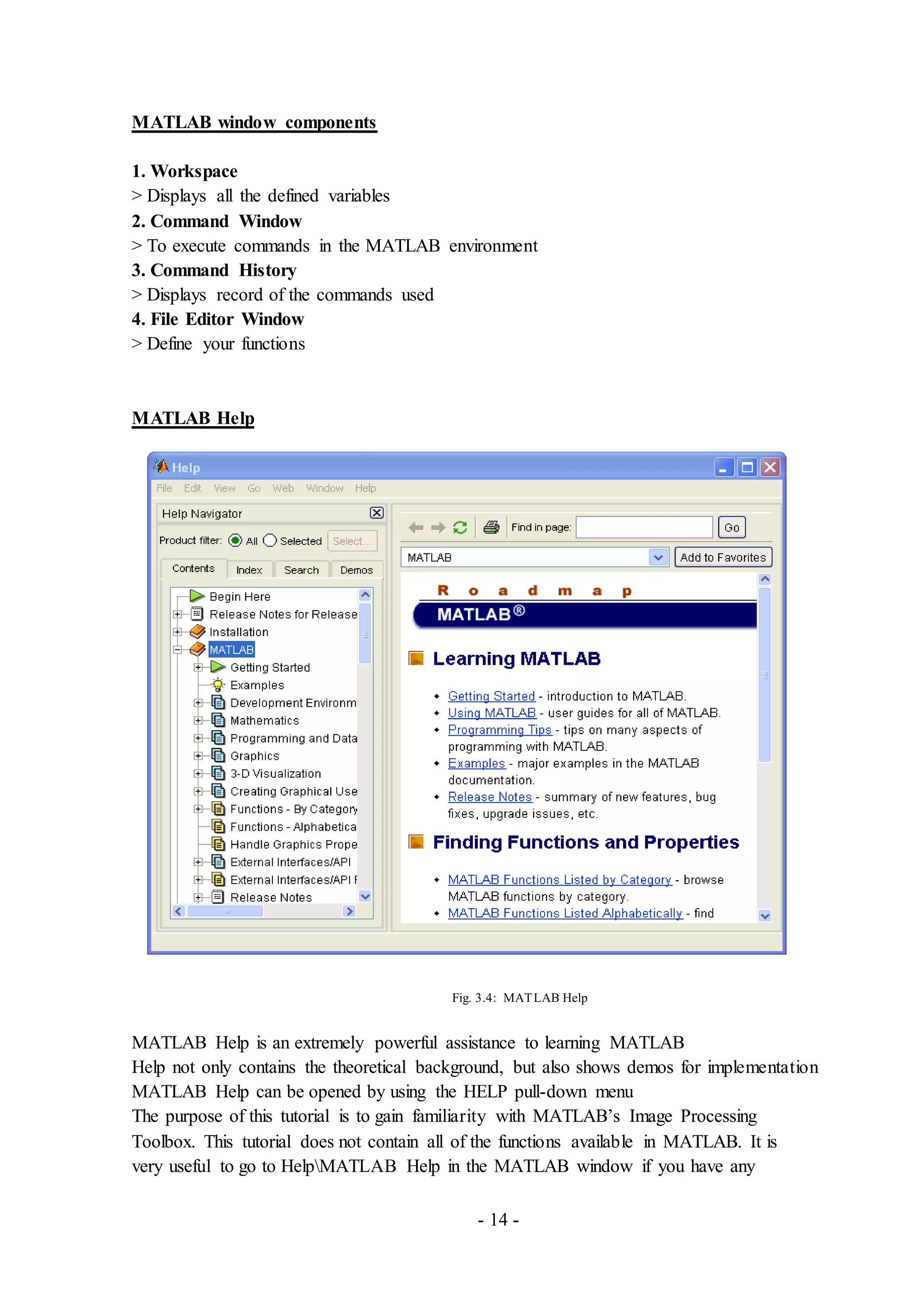

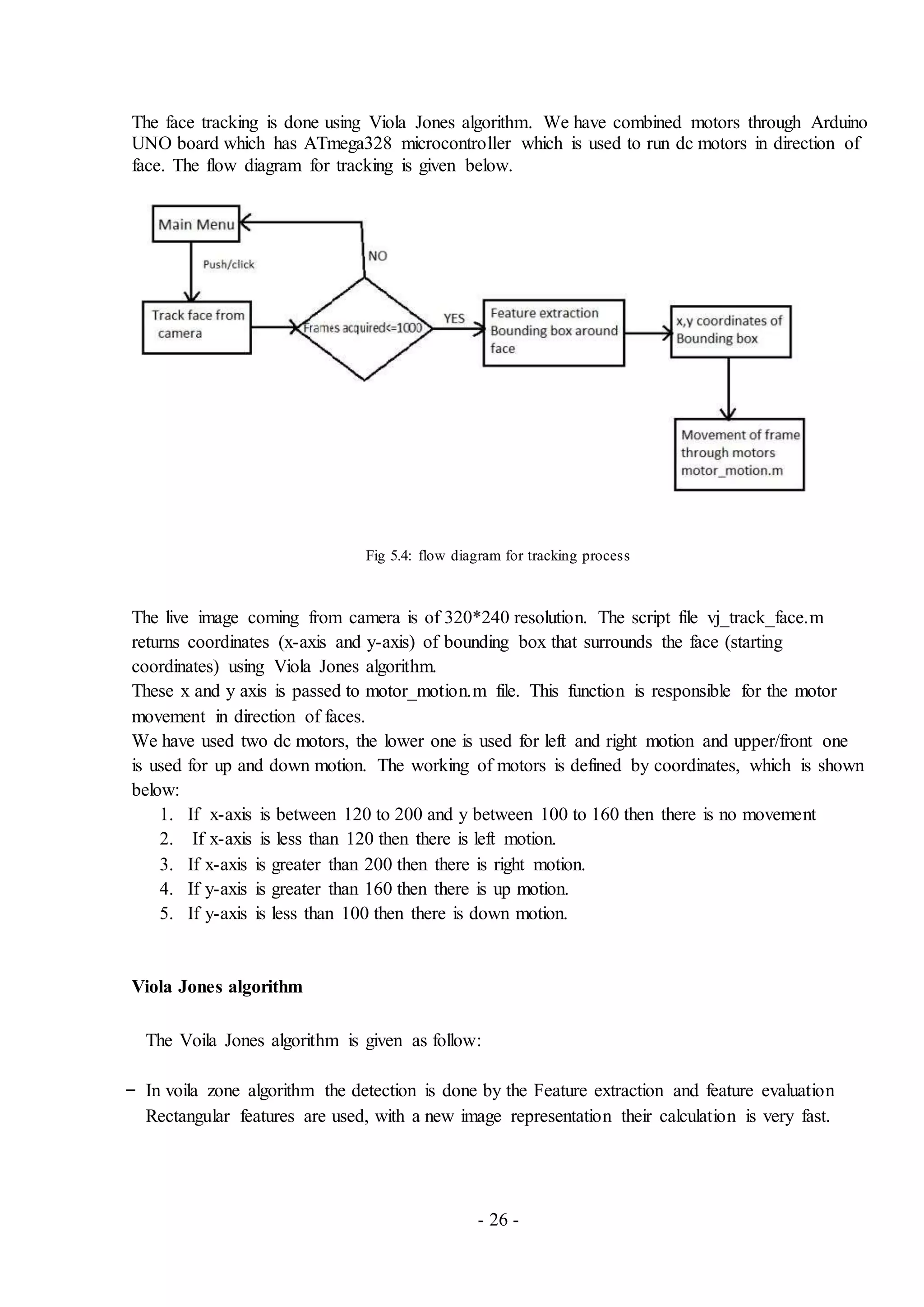

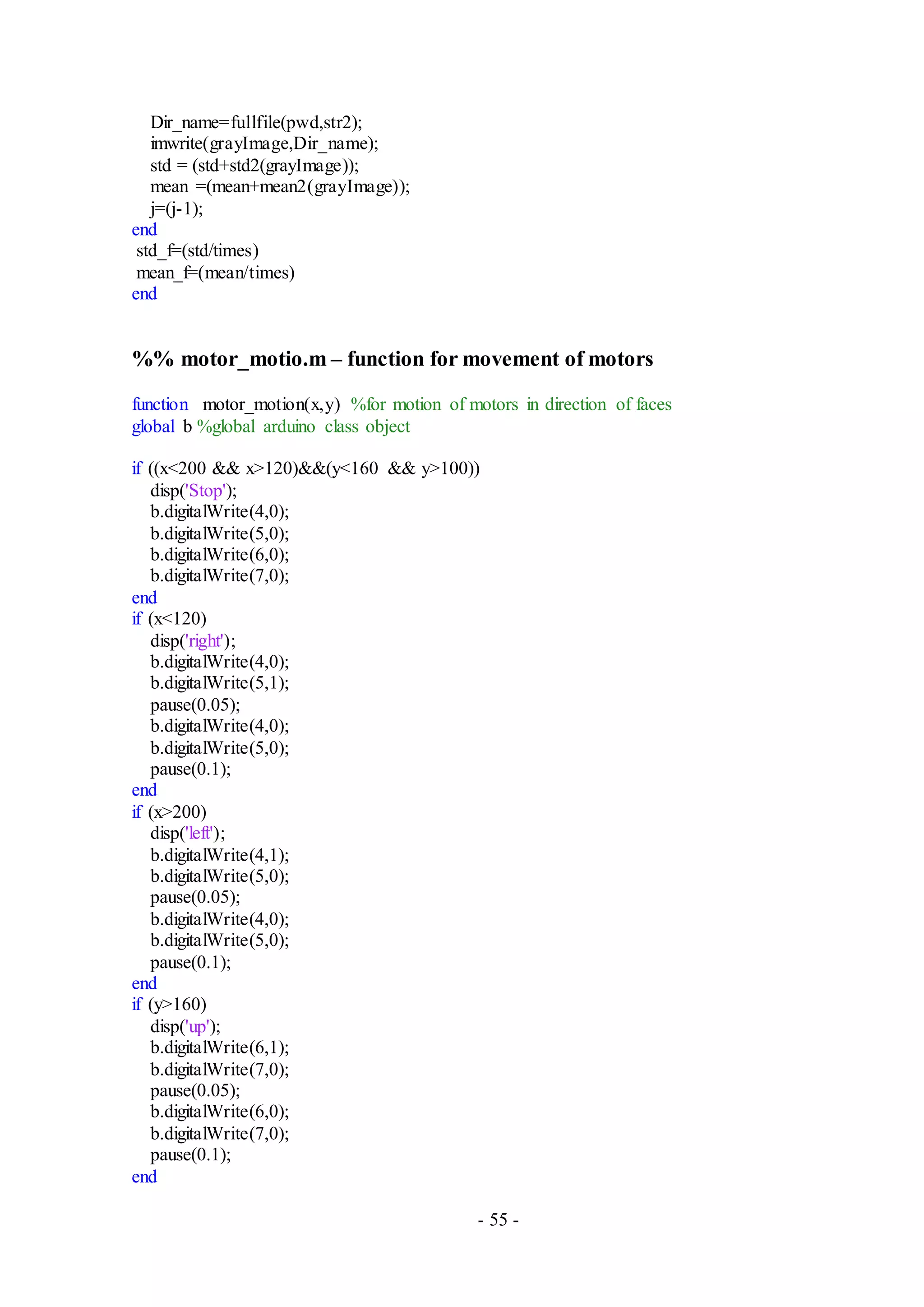

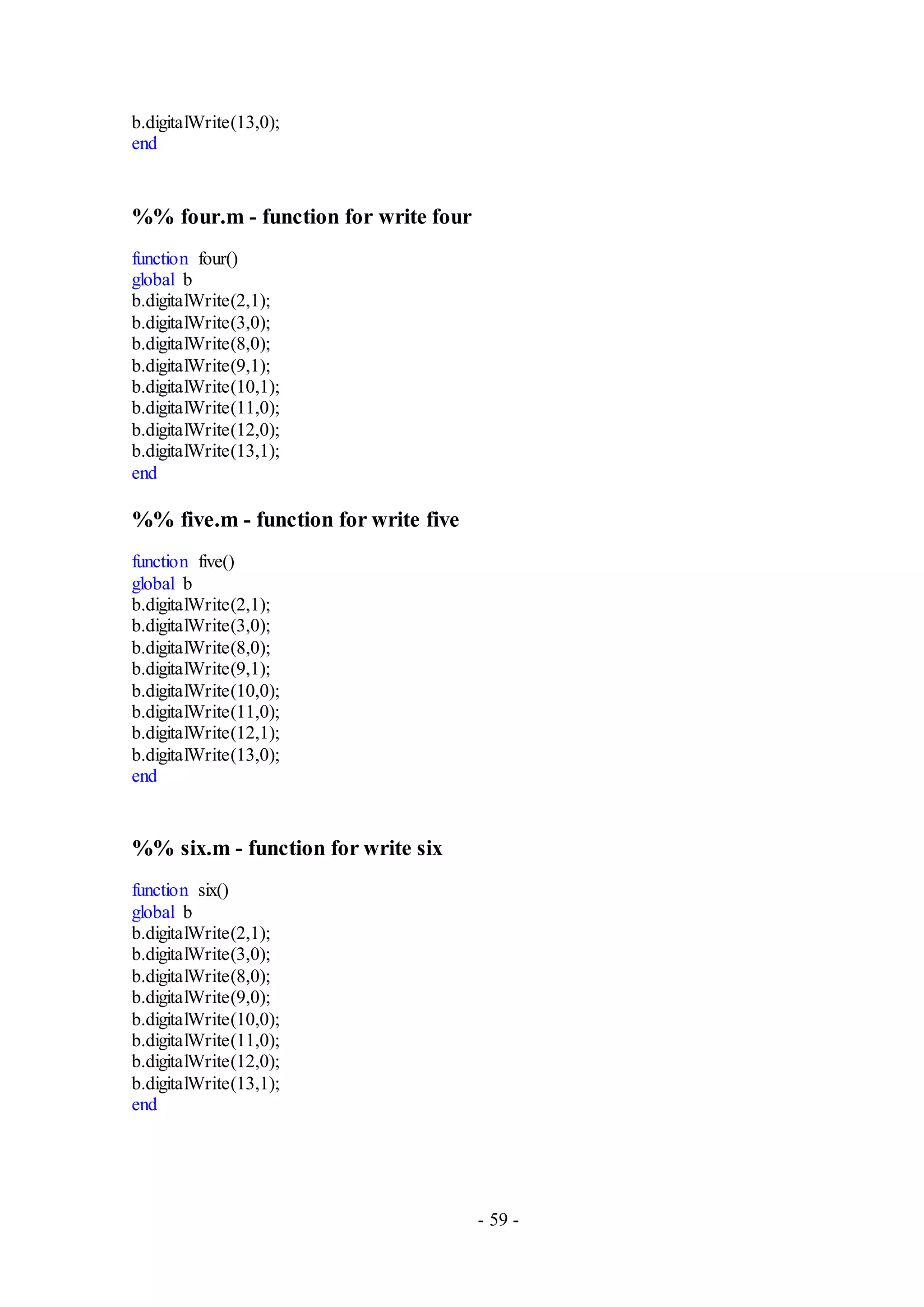

    %% config_arduino.m: configures the Arduino board
    function config_arduino()
    global b                      % global arduino object used by the other functions
    b=arduino('COM29');
    % pins 4 & 5 drive the motor right & left, pins 6 & 7 drive it up & down
    for pin=4:7
        b.pinMode(pin,'OUTPUT');
    end
    % pins 2, 3, 8, 9, 10, 11, 12, 13 drive the 8 LEDs of the seven-segment display
    for pin=[2 3 8:13]
        b.pinMode(pin,'OUTPUT');
    end
    % pins 14, 15, 16, 17 multiplex the 4 seven-segment displays
    for pin=14:17
        b.pinMode(pin,'OUTPUT');
    end
    end
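`config_arduino` only configures the pins. The `write_digit` function that drives the display is not part of this excerpt. As a hedged sketch of what such a function has to compute, the C++ below maps a decimal digit to the level of each of the eight segment pins. The following are all assumptions for illustration:

- the wiring order (segments a to g and the decimal point on pins 2, 3, 8, 9, 10, 11, 12, 13);
- the common-cathode polarity (a segment is lit when its pin is HIGH);
- the names `segmentLevels` and `kSegmentPins`.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Common-cathode patterns for 0-9: bit 0 = segment a, ..., bit 6 = segment g, bit 7 = dp.
constexpr std::array<std::uint8_t, 10> kDigitPatterns = {
    0x3F, 0x06, 0x5B, 0x4F, 0x66, 0x6D, 0x7D, 0x07, 0x7F, 0x6F};

// Assumed wiring: a, b, c, d, e, f, g, dp on the pins configured in config_arduino.
constexpr std::array<int, 8> kSegmentPins = {2, 3, 8, 9, 10, 11, 12, 13};

struct PinLevel {
  int pin;
  bool high;
};

// Level of each segment pin needed to show `digit` (0-9); other values throw std::out_of_range.
std::array<PinLevel, 8> segmentLevels(int digit) {
  const std::uint8_t pattern = kDigitPatterns.at(static_cast<std::size_t>(digit));
  std::array<PinLevel, 8> levels{};
  for (std::size_t s = 0; s < kSegmentPins.size(); ++s) {
    levels[s] = PinLevel{kSegmentPins[s], ((pattern >> s) & 1u) != 0};
  }
  return levels;
}
```

For multiplexing, a driver would work through the four digits in turn:

1. Enable one of the digit-select pins 14 to 17.
2. Set the segment pins for that digit.
3. Move on to the next digit.

Cycling fast enough makes all four digits appear lit at once.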

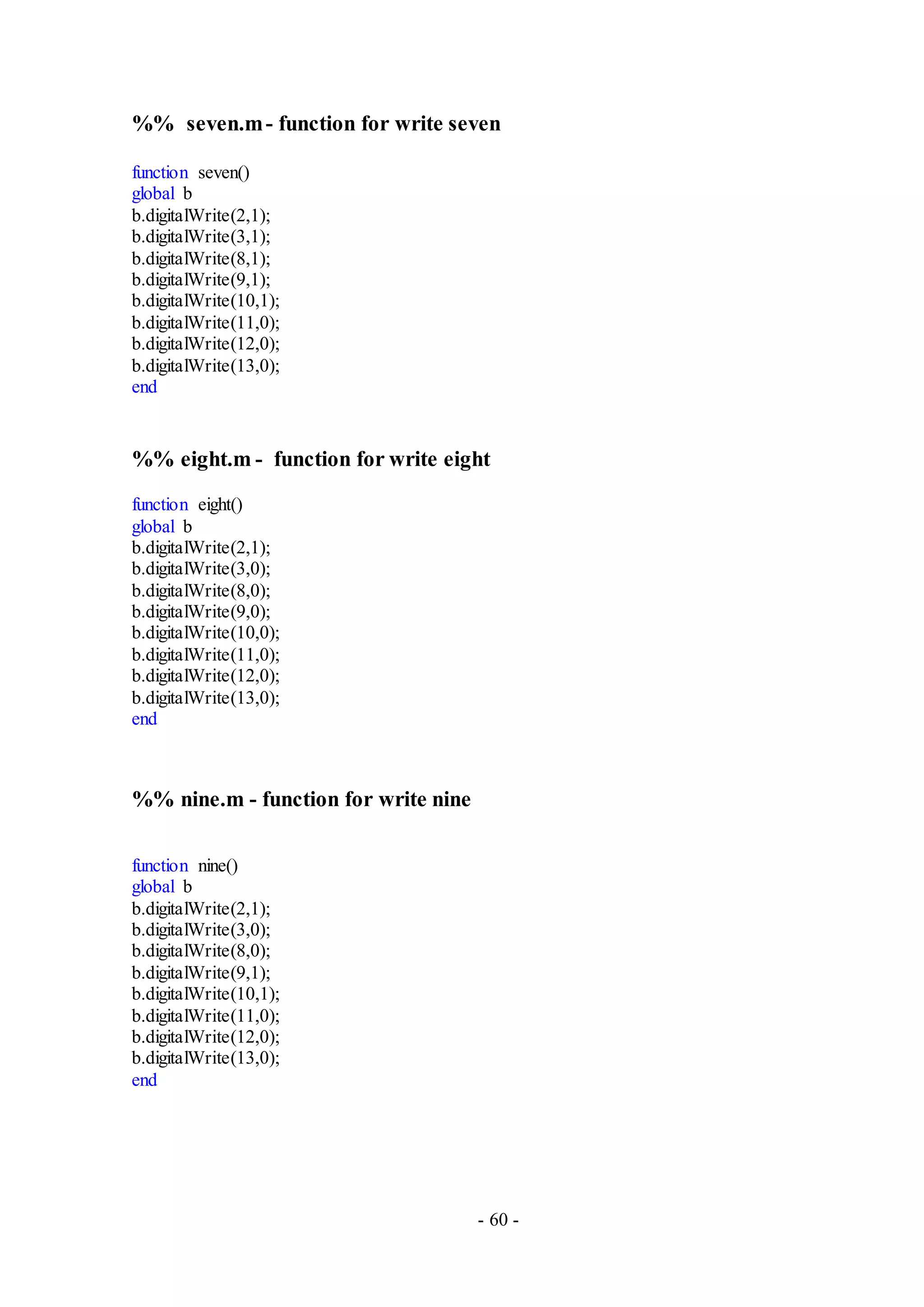

    %% recognize_face_drive.m: matches a face read from the hard drive using the Eigenface algorithm
    % Thanks to Santiago Serrano
    function Min_id = recognize_face_drive(M)
    % M: number of images in the training set
    close all
    clc
    % Chosen target mean and standard deviation for normalization.
    % Any values close to the std and mean of most of the images will do.
    um=100;
    ustd=80;
    person_no=0;
    times=5;                      % number of training images per person
    % read and show the training images 1.jpg ... M.jpg
    S=[];                         % image matrix
    figure(1);
    for i=1:M
        str=strcat(int2str(i),'.jpg');   % builds the file name of the image

eval('img=imread(str);');](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-39-2048.jpg)

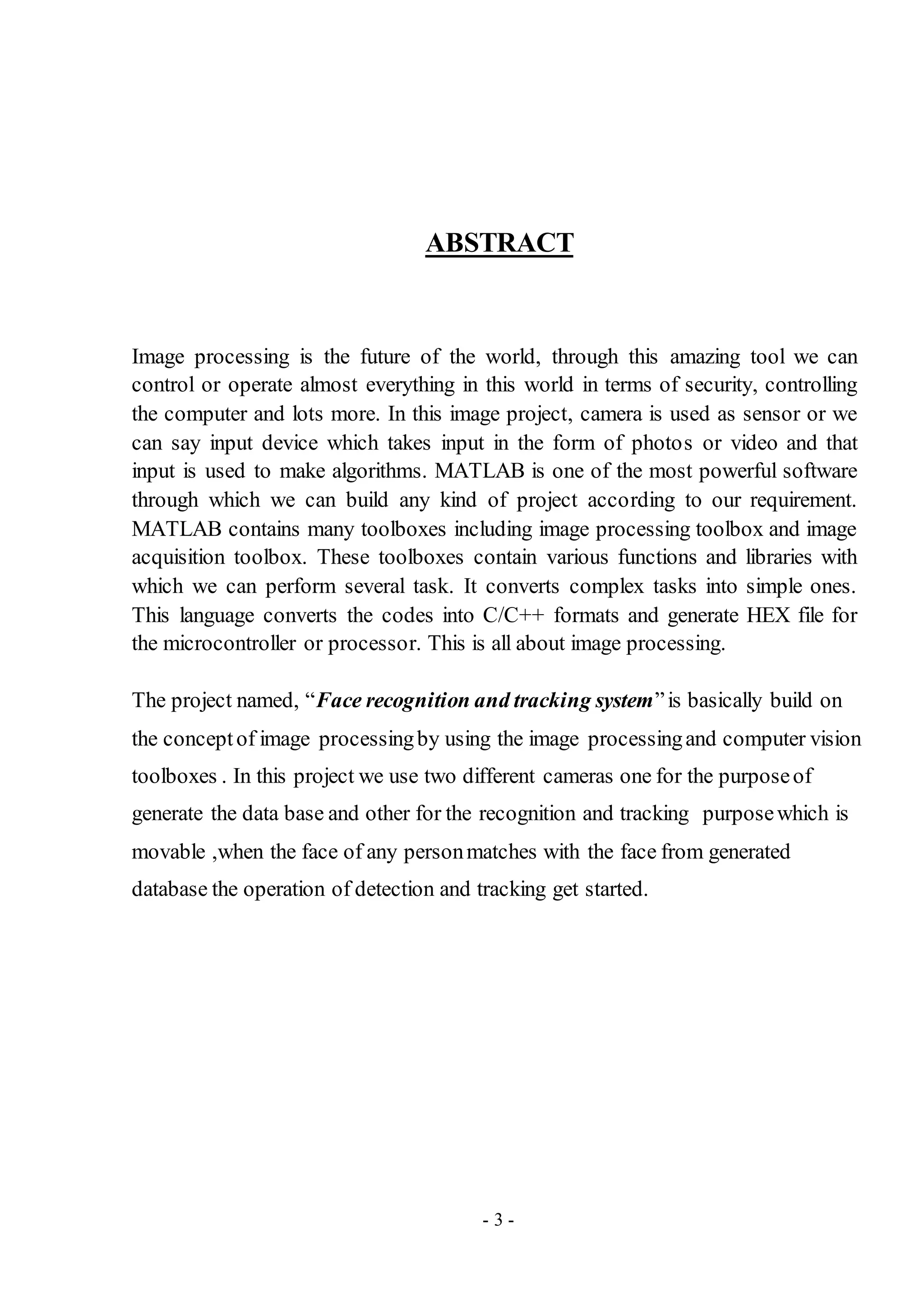

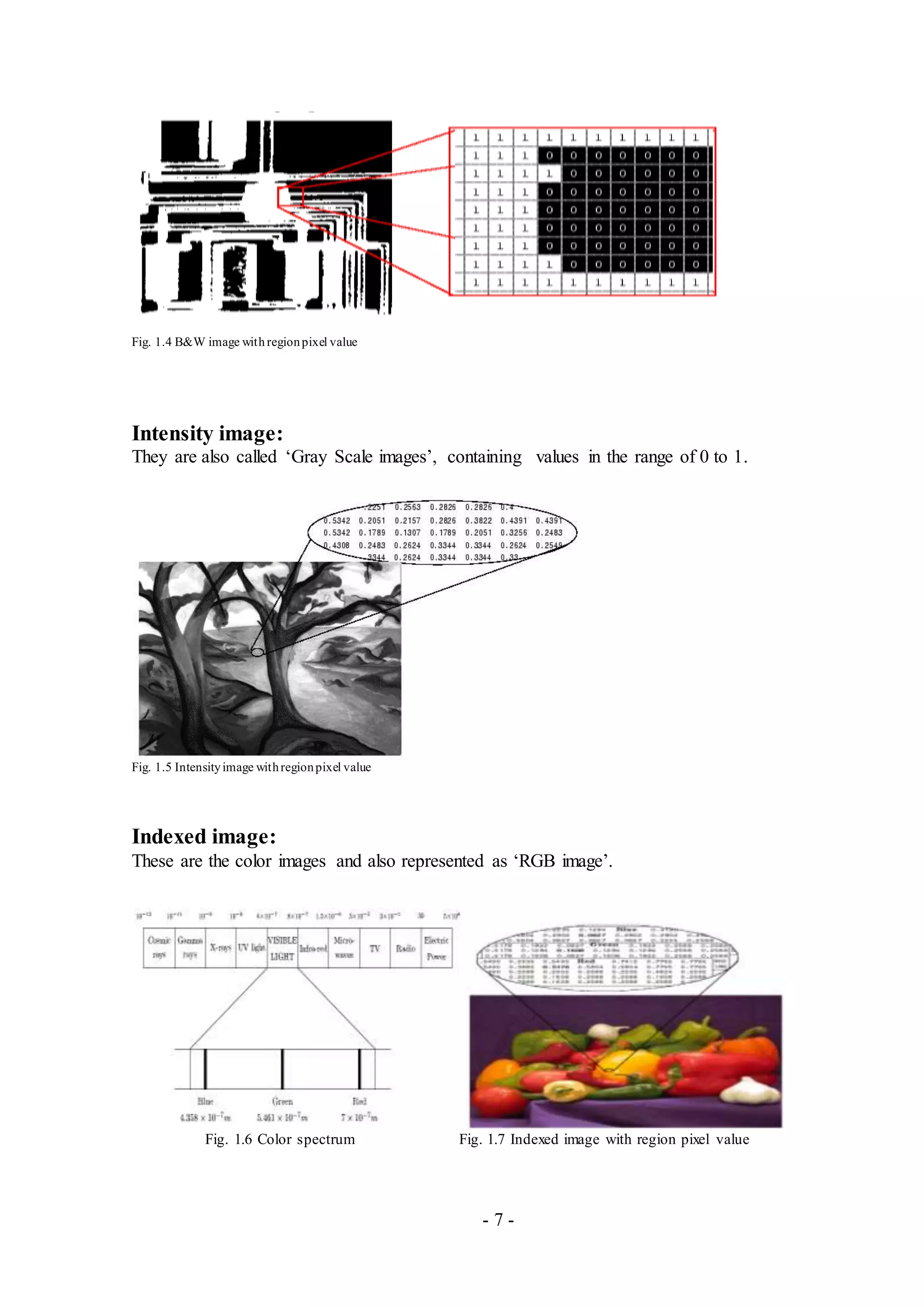

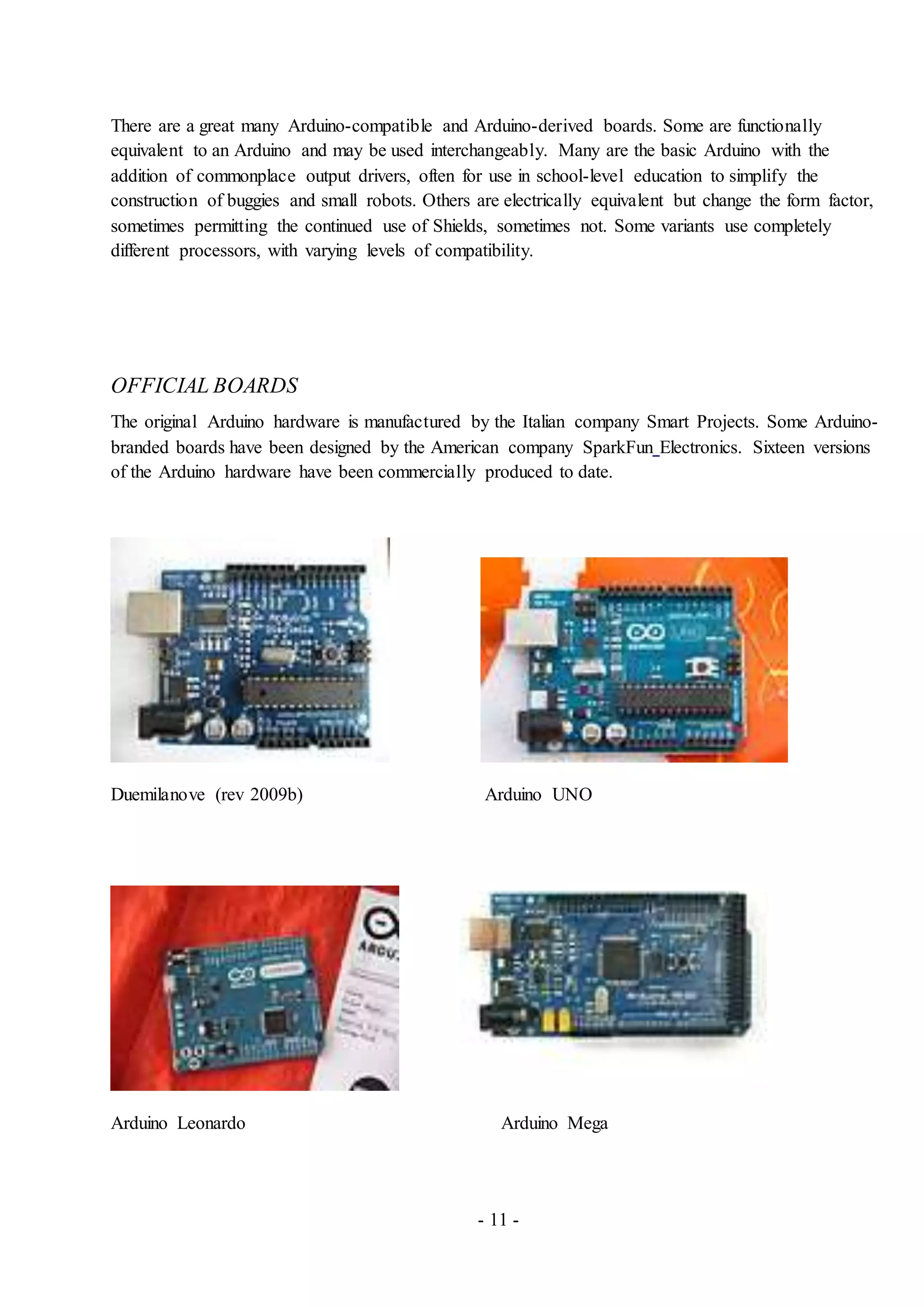

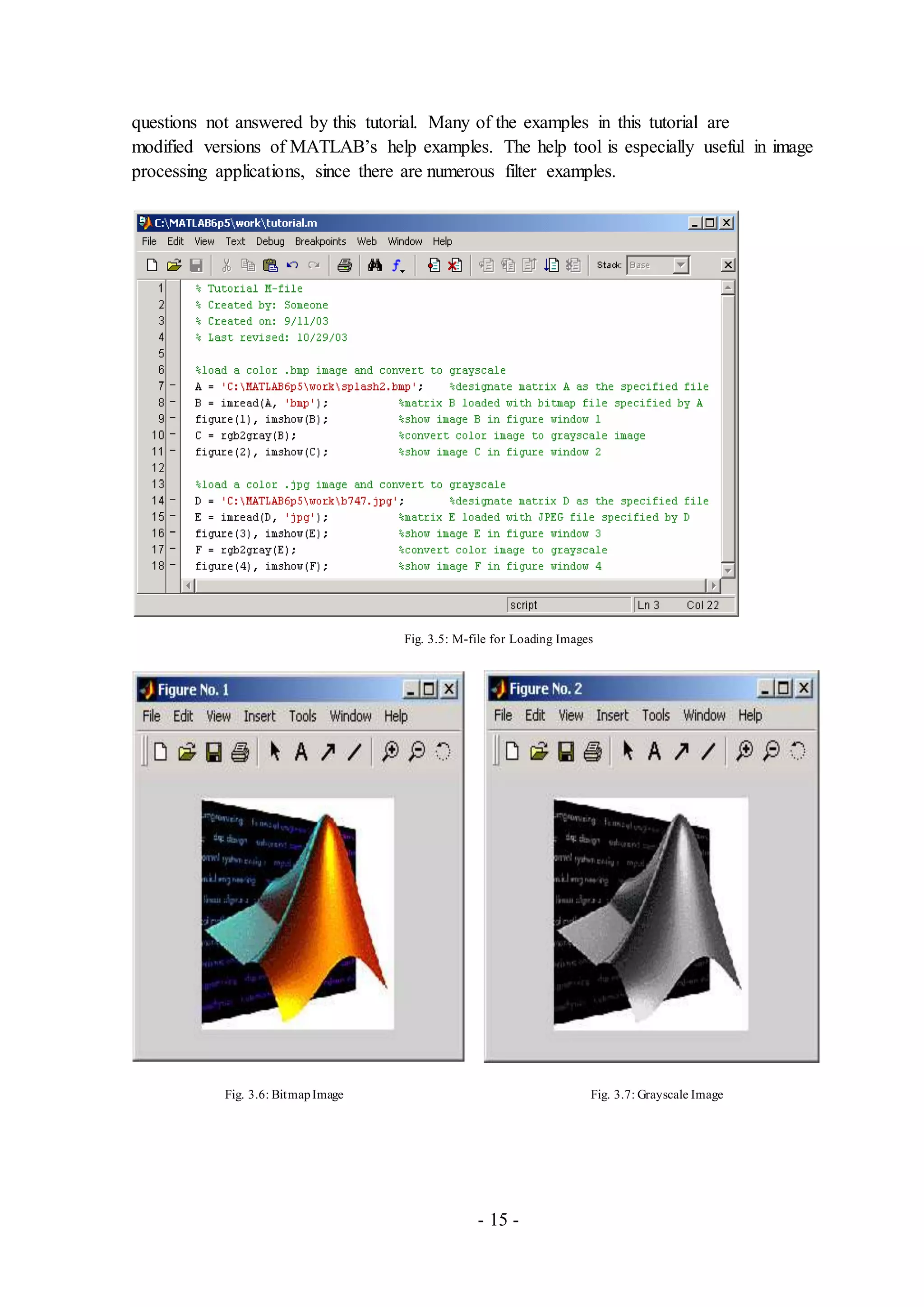

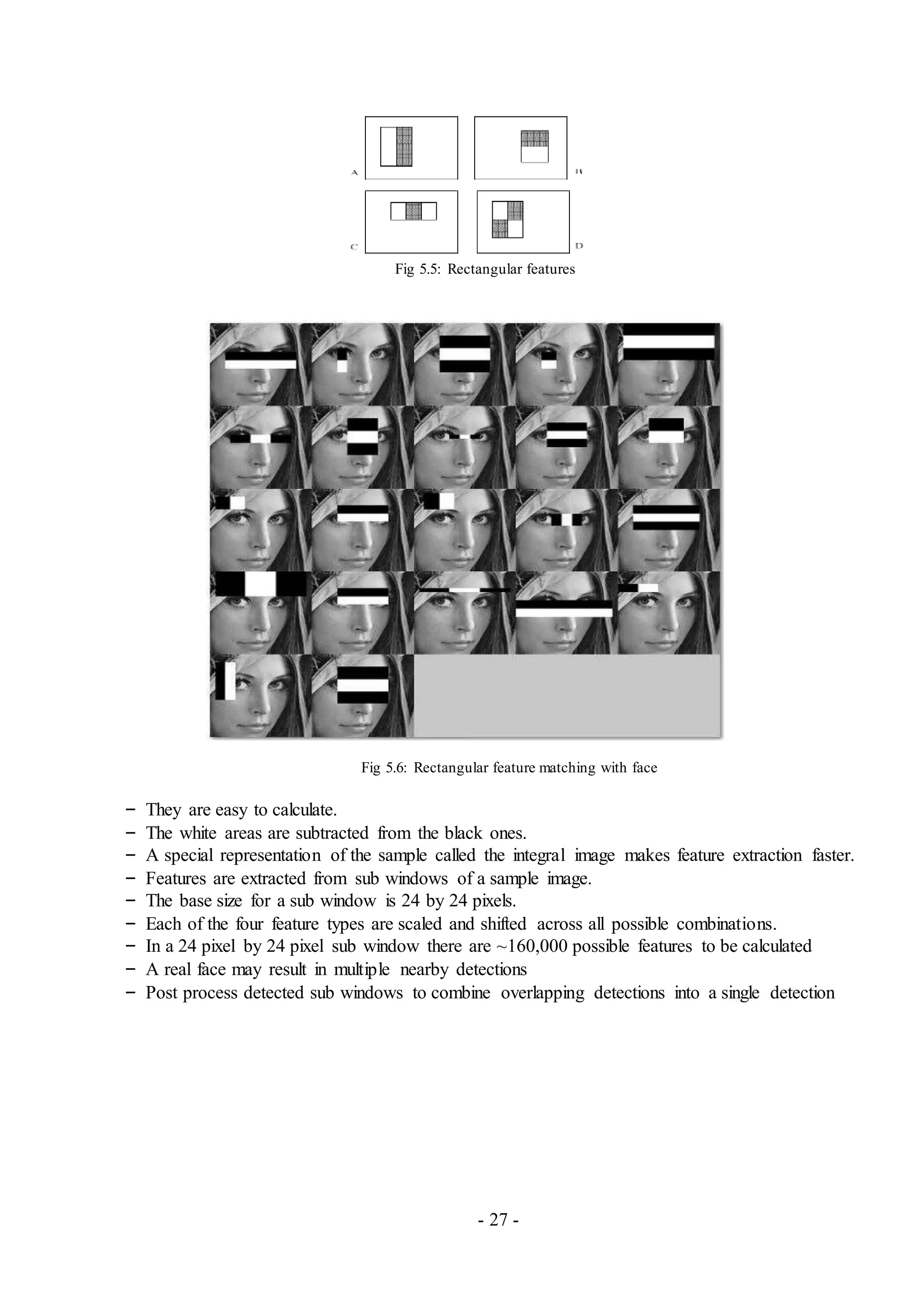

![- 40 -

        %img=rgb2gray(img);             % uncomment if the training images are RGB
        subplot(ceil(sqrt(M)),ceil(sqrt(M)),i)
        imshow(img)
        if i==3
            title('Training set','fontsize',18)
        end
        drawnow;
        [irow, icol]=size(img);          % number of rows (N1) and columns (N2)
        temp=reshape(img',irow*icol,1);  % creates an (N1*N2)x1 vector
        S=[S temp];                      % S is an (N1*N2)xM matrix once the loop finishes
    end
    % Change the mean and std of every image (normalize them) to reduce
    % the error caused by lighting conditions.
    for i=1:size(S,2)
        temp=double(S(:,i));
        m=mean(temp);
        st=std(temp);
        S(:,i)=(temp-m)*ustd/st+um;
    end
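The normalization loop above maps every image to the same mean (`um = 100`) and standard deviation (`ustd = 80`). As a result, a uniformly brighter or higher-contrast photo of the same face ends up with the same pixel values.

Below is a minimal C++ sketch of that formula. It assumes a flattened image stored as `std::vector<double>`, and the name `normalizeImage` is hypothetical. Like MATLAB's `std`, it uses the N-1 normalization. It keeps full double precision, whereas in the listing the results are written back into `S`; when the training images are 8-bit, that matrix is `uint8`, so the values are rounded and clipped to 0..255.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Rescales pixel values to mean `targetMean` and standard deviation `targetStd`,
// as in S(:,i)=(temp-m)*ustd/st+um. The std uses N-1, like MATLAB's std.
// Assumes at least two pixels that are not all equal.
std::vector<double> normalizeImage(const std::vector<double>& pixels,
                                   double targetMean = 100.0, double targetStd = 80.0) {
  const double n = static_cast<double>(pixels.size());
  double mean = 0.0;
  for (double p : pixels) mean += p;
  mean /= n;
  double ss = 0.0;
  for (double p : pixels) ss += (p - mean) * (p - mean);
  const double stdDev = std::sqrt(ss / (n - 1.0));
  std::vector<double> out;
  out.reserve(pixels.size());
  for (double p : pixels) out.push_back((p - mean) * targetStd / stdDev + targetMean);
  return out;
}
```

The formula subtracts the mean and divides by the standard deviation. Adding a constant to every pixel, or multiplying every pixel by a positive factor, therefore leaves the output unchanged.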

    % show the normalized images (imwrite overwrites 1.jpg ... M.jpg with them)
    figure(2);
    for i=1:M
        str=strcat(int2str(i),'.jpg');
        img=reshape(S(:,i),icol,irow);
        img=img';
        imwrite(img,str);
        subplot(ceil(sqrt(M)),ceil(sqrt(M)),i)
        imshow(img)
        drawnow;
        if i==3
            title('Normalized Training Set','fontsize',18)
        end
    end
    % mean image
    m=mean(S,2);                  % mean of each row, i.e. the average face
    tmimg=uint8(m);               % converts to unsigned 8-bit integers (0 to 255)
    img=reshape(tmimg,icol,irow); % turns the (N1*N2)x1 vector into an N2xN1 matrix
    img=img';                     % transposes it into an N1xN2 image
    figure(3);
    imshow(img);
    title('Mean Image','fontsize',18)
    % Convert the images to double for manipulation

dbx=[]; % A matrix](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-40-2048.jpg)

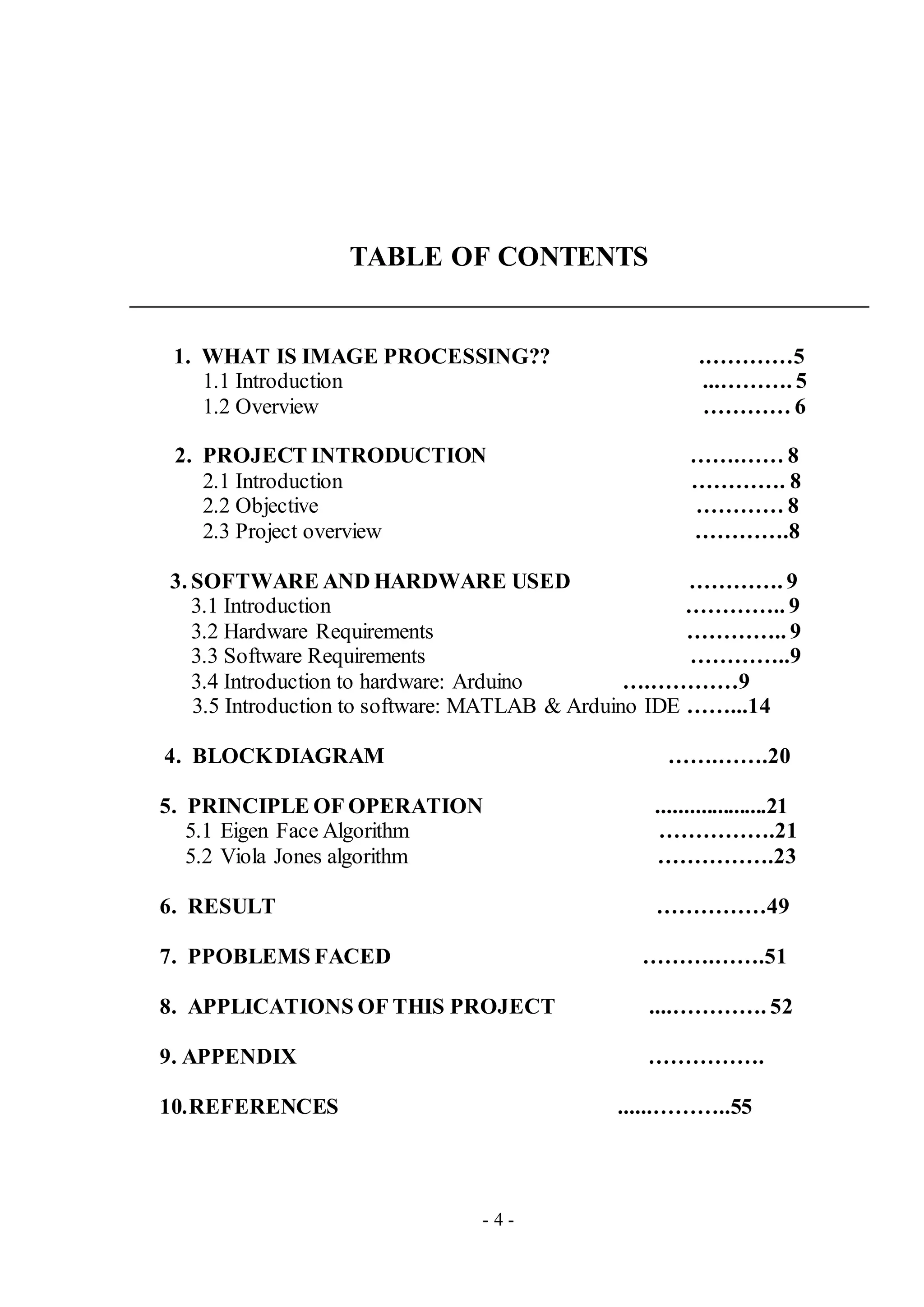

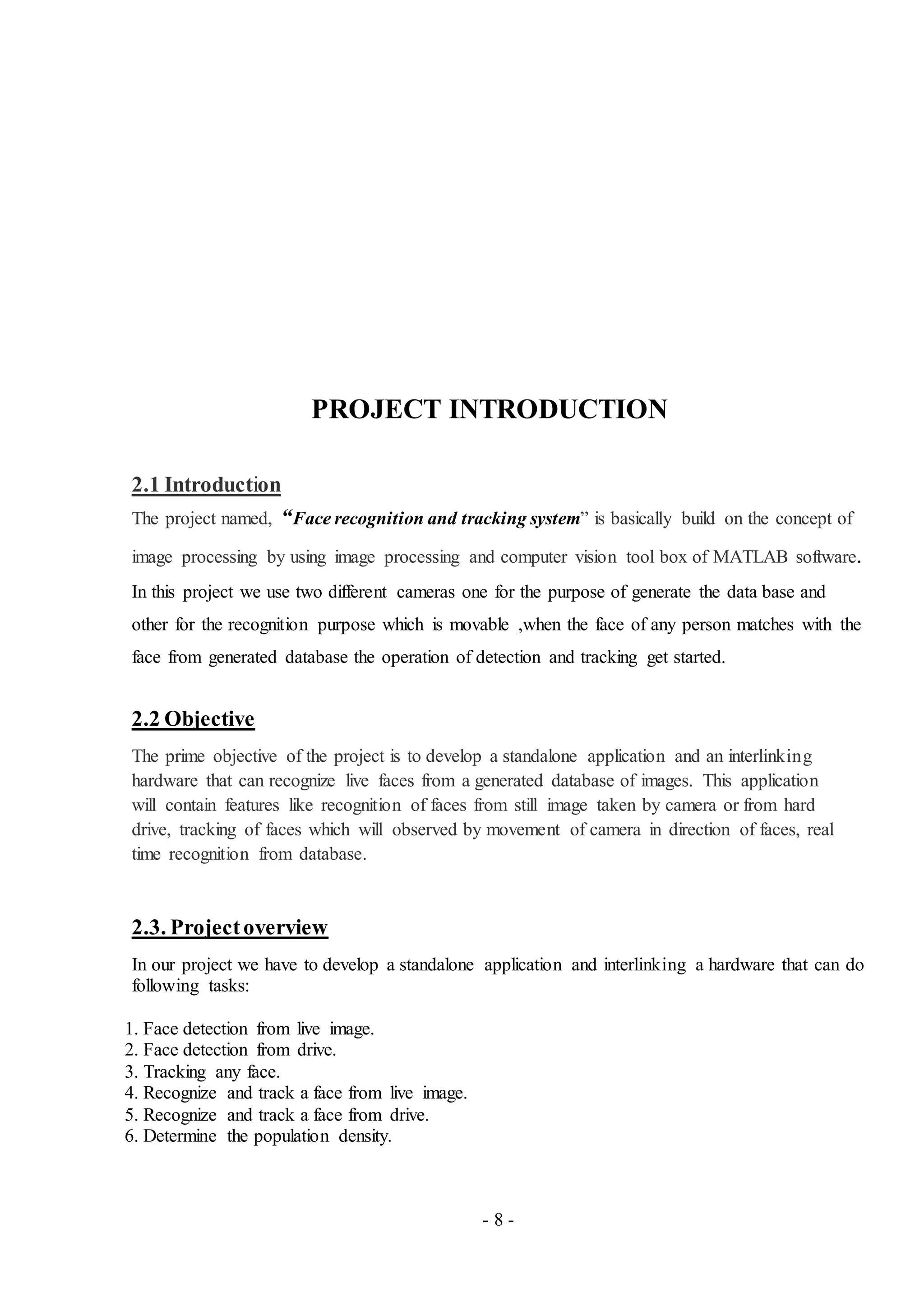

![- 41 -

    for i=1:M
        temp=double(S(:,i));
        dbx=[dbx temp];
    end
    % C = dbx*dbx' is (N1*N2)x(N1*N2). Instead, use the small MxM matrix
    % L = A*A' = dbx'*dbx, which has the same non-zero eigenvalues.
    A=dbx';
    L=A*A';
    % vv are the eigenvectors of L
    % dd holds the eigenvalues, shared by L=dbx'*dbx and C=dbx*dbx'
    [vv, dd]=eig(L);
    % Keep only the eigenvectors whose eigenvalue is (numerically) non-zero
    v=[];
    d=[];
    for i=1:size(vv,2)
        if(dd(i,i)>1e-4)
            v=[v vv(:,i)];
            d=[d dd(i,i)];
        end
    end
    % Sort the eigenvalues in descending order and reorder the eigenvectors to match
    [d, order]=sort(d,'descend');
    v=v(:,order);
    % Normalization of eigenvectors
    for i=1:size(v,2)             % access each column
        kk=v(:,i);
        temp=sqrt(sum(kk.^2));
        v(:,i)=v(:,i)./temp;
    end
    % Eigenvectors of the C matrix
    u=[];
    for i=1:size(v,2)
        temp=sqrt(d(i));
        u=[u (dbx*v(:,i))./temp];

end](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-41-2048.jpg)
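The step that builds `u` from `v` relies on a standard identity. It is written here with X = `dbx` (one training image per column), so that L = XᵀX (the code's `A*A'`) and C = XXᵀ:

$$
L\,v_i = X^{\top}X\,v_i = \lambda_i v_i
\;\Longrightarrow\;
C\,(X v_i) = X X^{\top} X\,v_i = X(\lambda_i v_i) = \lambda_i\,(X v_i),
\qquad
\lVert X v_i \rVert^{2} = v_i^{\top} X^{\top} X\, v_i = \lambda_i \lVert v_i \rVert^{2} = \lambda_i .
$$

Every eigenvector $v_i$ of the small $M \times M$ matrix $L$ therefore yields an eigenvector $X v_i$ of the large matrix $C$ with the same eigenvalue. Given $\lVert v_i \rVert = 1$, dividing by $\sqrt{\lambda_i}$ makes it unit length, which is what `(dbx*v(:,i))./sqrt(d(i))` computes; the later normalization loop only removes round-off.

In this listing `dbx` holds the normalized images without the mean face subtracted. $C$ is therefore a scatter matrix about the origin rather than the mean-centred covariance used in the textbook Eigenface method.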

![- 42 -

    % Normalization of eigenvectors
    for i=1:size(u,2)
        kk=u(:,i);
        temp=sqrt(sum(kk.^2));
        u(:,i)=u(:,i)./temp;
    end
    % show eigenfaces
    figure(4);
    for i=1:size(u,2)
        img=reshape(u(:,i),icol,irow);
        img=img';
        img=histeq(img,255);
        subplot(ceil(sqrt(M)),ceil(sqrt(M)),i)
        imshow(img)
        drawnow;
        if i==3
            title('Eigenfaces','fontsize',18)
        end
    end
    % Find the weight of each face in the training set.
    omega = [];
    for h=1:size(dbx,2)
        WW=[];
        for i=1:size(u,2)
            t = u(:,i)';
            WeightOfImage = dot(t,dbx(:,h)');
            WW = [WW; WeightOfImage];
        end
        omega = [omega WW];
    end
    % Acquire a new image
    % Note: the input image must be an RGB image with a .bmp or .jpg extension
    % and the same size as the images in the training set. It is read from drive E:.
    InputImage = input('Please enter the name of the image and its extension\n','s');
    InputImage = imread(strcat('E:',InputImage));
    figure(5)
    subplot(1,2,1)
    imshow(InputImage); colormap('gray'); title('Input image','fontsize',18)
    input_img=rgb2gray(InputImage);
    %imshow(input_img);
    InImage=reshape(double(input_img)',irow*icol,1);
    temp=InImage;

me=mean(temp);](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-42-2048.jpg)

![- 43 -

    st=std(temp);
    temp=(temp-me)*ustd/st+um;    % normalize like the training images
    NormImage = temp;
    % The training weights (omega) were computed without subtracting the mean
    % face, so the input image is projected the same way to keep them comparable.
    p = [];
    aa=size(u,2);
    for i = 1:aa
        pare = dot(NormImage,u(:,i));
        p = [p; pare];
    end
    ReshapedImage = u(:,1:aa)*p;  % reconstruction from the eigenface weights
    ReshapedImage = reshape(ReshapedImage,icol,irow);
    ReshapedImage = ReshapedImage';
    % show the reconstructed image
    subplot(1,2,2)
    imagesc(ReshapedImage); colormap('gray');
    title('Reconstructed image','fontsize',18)
    InImWeight = p;               % weights of the input face
    ll = 1:numel(InImWeight);
    figure(68)
    subplot(1,2,1)
    stem(ll,InImWeight)
    title('Weight of Input Face','fontsize',14)
    % Find the Euclidean distance to every training face
    e=[];
    for i=1:size(omega,2)
        q = omega(:,i);
        DiffWeight = InImWeight-q;
        mag = norm(DiffWeight);
        e = [e mag];
    end
    kk = 1:size(e,2);
    subplot(1,2,2)
    stem(kk,e)
    title('Euclidean distance of input image','fontsize',14)
    MaximumValue=max(e)
    MinimumValue=min(e)

Min_id=find(e==min(e));](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-43-2048.jpg)
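Recognition therefore reduces to a nearest-neighbour search. Each face is represented by its vector of eigenface weights, and the training image whose weights are closest in Euclidean distance wins.

The C++ below is a minimal sketch of that search. It assumes eigenfaces and images are stored as flattened `std::vector<double>`, and the names `projectOntoEigenfaces` and `nearestTrainingImage` are hypothetical. It returns a 1-based index to match `Min_id`. On ties it keeps the first match, whereas `find(e==min(e))` returns every tied index.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

using Vec = std::vector<double>;

// Weight k of an image is its dot product with eigenface k (as dot(t,dbx(:,h)') does).
Vec projectOntoEigenfaces(const std::vector<Vec>& eigenfaces, const Vec& image) {
  Vec weights;
  for (const Vec& face : eigenfaces) {
    double w = 0.0;
    for (std::size_t p = 0; p < image.size(); ++p) w += face[p] * image[p];
    weights.push_back(w);
  }
  return weights;
}

// 1-based index of the training weight vector nearest to `query` (Euclidean distance);
// the first one wins on ties.
std::size_t nearestTrainingImage(const std::vector<Vec>& trainingWeights, const Vec& query) {
  std::size_t best = 0;
  double bestDistance = std::numeric_limits<double>::infinity();
  for (std::size_t i = 0; i < trainingWeights.size(); ++i) {
    double squared = 0.0;
    for (std::size_t k = 0; k < query.size(); ++k) {
      const double diff = query[k] - trainingWeights[i][k];
      squared += diff * diff;
    }
    const double distance = std::sqrt(squared);  // norm(DiffWeight) in the listing
    if (distance < bestDistance) {
      bestDistance = distance;
      best = i + 1;
    }
  }
  return best;
}
```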

![- 44 -

    % Each person has times consecutive training images, so image Min_id
    % belongs to person ceil(Min_id/times).
    person_no=Min_id/times;
    p1=ceil(person_no);
    display('Detected face number')
    display(p1)
    write_digit(14,15,16,17,p1);
    end
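The mapping from the best-matching image number to a person number is a ceiling division. Below is a hedged C++ sketch of both directions; all function names are hypothetical.

- `personFromImageId` corresponds to `ceil(Min_id/times)`.
- The inverse gives the image range `(i-1)*times+1` to `i*times`. `face_stdmean_recgnz` starts from this range (`j=i*times`, `k=j-times`), assuming `j` is decremented in the part of its loop not shown here.

```cpp
#include <cassert>

// Training images are numbered 1..M and stored `imagesPerPerson` at a time
// (times = 5 in the listing): images 1-5 are person 1, 6-10 person 2, ...
// Integer form of ceil(imageId / imagesPerPerson) for positive ids.
int personFromImageId(int imageId, int imagesPerPerson) {
  return (imageId + imagesPerPerson - 1) / imagesPerPerson;
}

// Inverse mapping: first and last image number belonging to `person`.
int firstImageOfPerson(int person, int imagesPerPerson) {
  return (person - 1) * imagesPerPerson + 1;
}
int lastImageOfPerson(int person, int imagesPerPerson) {
  return person * imagesPerPerson;
}
```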

    %% recognize_face_cam.m: matches a face captured by the camera using the Eigenface algorithm
    % Thanks to Santiago Serrano
    function Min_id = recognize_face_cam(M)
    % M: number of images in the training set
    imaqreset;
    close all
    clc
    vid = videoinput('winvideo',1,'YUY2_320x240');
    % Chosen target mean and standard deviation for normalization.
    % Any values close to the std and mean of most of the images will do.
    um=100;
    ustd=80;
    person_no=0;
    times=5;                      % number of training images per person
    % read and show the training images 1.jpg ... M.jpg
    S=[];                         % image matrix
    figure(1);
    for i=1:M
        str=strcat(int2str(i),'.jpg');   % builds the file name of the image
        img=imread(str);

%eval('img=rgb2gray(image);');](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-44-2048.jpg)

![- 45 -

        subplot(ceil(sqrt(M)),ceil(sqrt(M)),i)
        imshow(img)
        if i==3
            title('Training set','fontsize',18)
        end
        drawnow;
        [irow, icol]=size(img);          % number of rows (N1) and columns (N2)
        temp=reshape(img',irow*icol,1);  % creates an (N1*N2)x1 vector
        S=[S temp];                      % S is an (N1*N2)xM matrix once the loop finishes
    end
    % Change the mean and std of every image (normalize them) to reduce
    % the error caused by lighting conditions.
    for i=1:size(S,2)
        temp=double(S(:,i));
        m=mean(temp);
        st=std(temp);
        S(:,i)=(temp-m)*ustd/st+um;
    end
    % show the normalized images (imwrite overwrites 1.jpg ... M.jpg with them)
    figure(2);
    for i=1:M
        str=strcat(int2str(i),'.jpg');
        img=reshape(S(:,i),icol,irow);
        img=img';
        imwrite(img,str);
        subplot(ceil(sqrt(M)),ceil(sqrt(M)),i)
        imshow(img)
        drawnow;
        if i==3
            title('Normalized Training Set','fontsize',18)
        end
    end
    % mean image
    m=mean(S,2);                  % mean of each row, i.e. the average face
    tmimg=uint8(m);               % converts to unsigned 8-bit integers (0 to 255)
    img=reshape(tmimg,icol,irow); % turns the (N1*N2)x1 vector into an N2xN1 matrix
    img=img';                     % transposes it into an N1xN2 image
    figure(3);
    imshow(img);
    title('Mean Image','fontsize',18)
    % Convert the images to double for manipulation
    dbx=[];                       % A matrix

for i=1:M](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-45-2048.jpg)

![- 46 -

        temp=double(S(:,i));
        dbx=[dbx temp];
    end
    % C = dbx*dbx' is (N1*N2)x(N1*N2). Instead, use the small MxM matrix
    % L = A*A' = dbx'*dbx, which has the same non-zero eigenvalues.
    A=dbx';
    L=A*A';
    % vv are the eigenvectors of L
    % dd holds the eigenvalues, shared by L=dbx'*dbx and C=dbx*dbx'
    [vv, dd]=eig(L);
    % Keep only the eigenvectors whose eigenvalue is (numerically) non-zero
    v=[];
    d=[];
    for i=1:size(vv,2)
        if(dd(i,i)>1e-4)
            v=[v vv(:,i)];
            d=[d dd(i,i)];
        end
    end
    % Sort the eigenvalues in descending order and reorder the eigenvectors to match
    [d, order]=sort(d,'descend');
    v=v(:,order);
    % Normalization of eigenvectors
    for i=1:size(v,2)             % access each column
        kk=v(:,i);
        temp=sqrt(sum(kk.^2));
        v(:,i)=v(:,i)./temp;
    end
    % Eigenvectors of the C matrix
    u=[];
    for i=1:size(v,2)
        temp=sqrt(d(i));
        u=[u (dbx*v(:,i))./temp];
    end

%Normalization of eigenvectors](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-46-2048.jpg)

![- 47 -

    for i=1:size(u,2)
        kk=u(:,i);
        temp=sqrt(sum(kk.^2));
        u(:,i)=u(:,i)./temp;
    end
    % show eigenfaces
    figure(4);
    for i=1:size(u,2)
        img=reshape(u(:,i),icol,irow);
        img=img';
        img=histeq(img,255);
        subplot(ceil(sqrt(M)),ceil(sqrt(M)),i)
        imshow(img)
        drawnow;
        if i==3
            title('Eigenfaces','fontsize',18)
        end
    end
    % Find the weight of each face in the training set.
    omega = [];
    for h=1:size(dbx,2)
        WW=[];
        for i=1:size(u,2)
            t = u(:,i)';
            WeightOfImage = dot(t,dbx(:,h)');
            WW = [WW; WeightOfImage];
        end
        omega = [omega WW];
    end
    % Acquire a new image from the camera
    preview(vid);
    choice=menu('Push CAM button for taking pic',...
        'CAM');
    if(choice==1)
        g=getsnapshot(vid);
    end
    rgbImage=ycbcr2rgb(g);        % the YUY2 snapshot is in YCbCr
    imwrite(rgbImage,'camshot.jpg');
    closepreview(vid);
    InputImage = imread('camshot.jpg');
    figure(5)
    subplot(1,2,1)
    imshow(InputImage); colormap('gray'); title('Input image','fontsize',18)

input_img=rgb2gray(InputImage);](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-47-2048.jpg)
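The camera is opened in the `YUY2_320x240` format. The snapshot is passed through `ycbcr2rgb` before saving, and then through `rgb2gray`.

As a hedged per-pixel sketch of those two conversions, the C++ below uses the common ITU-R BT.601 limited-range equations (Y in 16..235, Cb and Cr centred on 128). That these match `ycbcr2rgb` exactly is an assumption. The grey weights 0.2989, 0.5870 and 0.1140 are the ones documented for `rgb2gray`. The names `ycbcrToRgb`, `rgbToGray` and `toByte` are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>

struct Rgb {
  std::uint8_t r, g, b;
};

// Round and clip to 0..255, as when storing into an 8-bit image.
std::uint8_t toByte(double v) {
  return static_cast<std::uint8_t>(std::lround(std::min(255.0, std::max(0.0, v))));
}

// BT.601 limited-range YCbCr (Y 16..235, Cb/Cr centred on 128) to full-range RGB.
Rgb ycbcrToRgb(std::uint8_t y, std::uint8_t cb, std::uint8_t cr) {
  const double luma = 1.164 * (y - 16.0);
  const double dCb = cb - 128.0;
  const double dCr = cr - 128.0;
  return Rgb{toByte(luma + 1.596 * dCr),
             toByte(luma - 0.392 * dCb - 0.813 * dCr),
             toByte(luma + 2.017 * dCb)};
}

// Weighted sum of R, G and B with the weights documented for MATLAB's rgb2gray.
std::uint8_t rgbToGray(const Rgb& p) {
  return toByte(0.2989 * p.r + 0.5870 * p.g + 0.1140 * p.b);
}
```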

![- 48 -

    %imshow(input_img);
    InImage=reshape(double(input_img)',irow*icol,1);
    temp=InImage;
    me=mean(temp);
    st=std(temp);
    temp=(temp-me)*ustd/st+um;    % normalize like the training images
    NormImage = temp;
    % The training weights (omega) were computed without subtracting the mean
    % face, so the input image is projected the same way to keep them comparable.
    p = [];
    aa=size(u,2);
    for i = 1:aa
        pare = dot(NormImage,u(:,i));
        p = [p; pare];
    end
    ReshapedImage = u(:,1:aa)*p;  % reconstruction from the eigenface weights
    ReshapedImage = reshape(ReshapedImage,icol,irow);
    ReshapedImage = ReshapedImage';
    % show the reconstructed image
    subplot(1,2,2)
    imagesc(ReshapedImage); colormap('gray');
    title('Reconstructed image','fontsize',18)
    InImWeight = p;               % weights of the input face
    ll = 1:numel(InImWeight);
    figure(68)
    subplot(1,2,1)
    stem(ll,InImWeight)
    title('Weight of Input Face','fontsize',14)
    % Find the Euclidean distance to every training face
    e=[];
    for i=1:size(omega,2)
        q = omega(:,i);
        DiffWeight = InImWeight-q;
        mag = norm(DiffWeight);
        e = [e mag];
    end
    kk = 1:size(e,2);
    subplot(1,2,2)
    stem(kk,e)

title('Euclidean distance of input image','fontsize',14)](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-48-2048.jpg)

![- 50 -

        FDetect = vision.CascadeObjectDetector;   % Viola-Jones face detector
        I = getsnapshot(vid);                     % read the input frame
        BB = step(FDetect,I);                     % one [x y width height] box per detected face
        hold on
        figure(1),imshow(I);
        title('Face Detection');
        for i = 1:size(BB,1)
            no_face=size(BB,1);
            write_digit(14,15,16,17,no_face);     % show the number of detected faces
            rectangle('Position',BB(i,:),'LineWidth',2,'LineStyle','-','EdgeColor','y');
            display(BB(1,1));
            display(BB(1,2));
            motor_motion(BB(1,1),BB(1,2));        % x and y of the first face's box
            hold off;
            flushdata(vid);
        end
    end
    stop(vid);
    end
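`motor_motion` itself is not listed, and it receives only the top-left corner of a bounding box. The following C++ is a hypothetical sketch of one way to turn a detection into pan and tilt commands. It compares the centre of the box with the centre of the 320x240 frame and ignores offsets smaller than a dead band, so the motors do not jitter around a centred face.

The pin numbers follow the comment in `config_arduino` (4 right, 5 left, 6 up, 7 down). The following are all illustrative assumptions:

- the dead-band width;
- the use of the full box rather than only its corner;
- that moving "right" brings a face on the right of the image towards the centre (this depends on how the camera is mounted);
- the names `decideMotion` and `MotorCommand`.

```cpp
#include <cassert>

// Hypothetical command: which direction pin to pulse on each axis (0 = stay still).
struct MotorCommand {
  int panPin;
  int tiltPin;
};

// Box is [x y width height] in pixels, as returned by the face detector.
MotorCommand decideMotion(double x, double y, double width, double height,
                          double frameWidth = 320.0, double frameHeight = 240.0,
                          double deadBand = 20.0) {
  const double dx = (x + width / 2.0) - frameWidth / 2.0;    // > 0: face right of centre
  const double dy = (y + height / 2.0) - frameHeight / 2.0;  // > 0: face below centre
  MotorCommand cmd{0, 0};
  if (dx > deadBand) cmd.panPin = 4;        // right (pin 4, per config_arduino)
  else if (dx < -deadBand) cmd.panPin = 5;  // left (pin 5)
  if (dy < -deadBand) cmd.tiltPin = 6;      // up (pin 6); image y grows downwards
  else if (dy > deadBand) cmd.tiltPin = 7;  // down (pin 7)
  return cmd;
}
```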

    %% vj_faceD_live.m: tracks the recognized face
    function vj_faceD_live(std,mean)
    % note: the arguments std and mean shadow MATLAB's std and mean functions here
    imaqreset;
    close all
    clc
    detect=0;
    std_2=0;
    mean_2=0;
    tlrnce=7;                     % tolerance on std and mean for a face to count as a match
    while (1==1)
        choice=menu('Face Recognition',...
            'Real time recognition',...
            'Track last recognised face',...
            'Exit');
        if (choice==1)
            [std,mean]= face_stdmean();
            % Detect objects using the Viola-Jones algorithm
            vid = videoinput('winvideo',1,'YUY2_320x240');
            set(vid,'ReturnedColorSpace','rgb');
            set(vid,'TriggerRepeat',Inf);
            vid.FrameGrabInterval = 1;

vid.FramesPerTrigger=20;](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-50-2048.jpg)

![- 52 -

            stop(vid);
        end
        if(choice==2)
            [std,mean]= face_stdmean_recgnz();
            % Detect objects using the Viola-Jones algorithm
            vid = videoinput('winvideo',1,'YUY2_320x240');
            set(vid,'ReturnedColorSpace','rgb');
            set(vid,'TriggerRepeat',Inf);
            vid.FrameGrabInterval = 1;
            vid.FramesPerTrigger=20;
            figure;                       % ensure smooth display
            set(gcf,'doublebuffer','on');
            start(vid);
            while(vid.FramesAcquired<=600)
                FDetect = vision.CascadeObjectDetector;   % to detect faces
                I = getsnapshot(vid);                     % read the input frame
                BB = step(FDetect,I);                     % one bounding box per detected face
                hold on
                if(size(BB,1) == 1)
                    % exactly one face: measure the std and mean of its grayscale crop
                    I2=imcrop(I,BB);
                    gray_face=rgb2gray(I2);
                    std_2 = std2(gray_face);
                    mean_2 = mean2(gray_face);
                    %figure(1),imshow(gray_face);
                end
                figure(1),imshow(I);
                title('Face Recognition');
                display(std);
                display(mean);
                display(std_2);
                display(mean_2);
                for i = 1:size(BB,1)
                    % match when both the std and the mean lie within tlrnce of the stored values
                    if (std_2<=std+tlrnce) && (std_2>=std-tlrnce) && ...
                            (mean_2<=mean+tlrnce) && (mean_2>=mean-tlrnce)
                        rectangle('Position',BB(i,:),'LineWidth',4,'LineStyle','-','EdgeColor','g');
                        display('DETECTED');
                        detect=(detect+1)
                        if(detect==2)
                            display('tracking....');
                            detect=0;

motor_motion(BB(i,1),BB(i,2)); % move the motors in the direction of the face](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-52-2048.jpg)

![- 53 -

                        end
                    else
                        rectangle('Position',BB(i,:),'LineWidth',4,'LineStyle','-','EdgeColor','r');
                        display('NOT DETECTED');
                    end
                    hold off;
                    flushdata(vid);
                end
            end
            stop(vid);
        end
        if(choice==3)
            return
        end
    end
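The recognition test in `vj_faceD_live` does not use eigenfaces. A detected face is accepted when the standard deviation and the mean of its grayscale crop both lie within `tlrnce` (7 grey levels) of the stored values. The motors move on every second accepted frame.

A small C++ sketch of that logic follows. `matchesStoredFace` and `MatchCounter` are hypothetical names, and the `fabs` form expresses the same band test as the four comparisons in the listing. Because it compares only two brightness statistics, two different faces with similar overall brightness and contrast can both pass it.

```cpp
#include <cassert>
#include <cmath>

// Band test from the listing: |std2 - std| <= tlrnce and |mean2 - mean| <= tlrnce.
bool matchesStoredFace(double faceStd, double faceMean, double storedStd,
                       double storedMean, double tolerance = 7.0) {
  return std::fabs(faceStd - storedStd) <= tolerance &&
         std::fabs(faceMean - storedMean) <= tolerance;
}

// The `detect` counter: every second accepted frame triggers motor_motion.
struct MatchCounter {
  int detect = 0;
  bool onMatch() {  // call on each accepted frame; true means "move the motors now"
    ++detect;
    if (detect == 2) {
      detect = 0;
      return true;
    }
    return false;
  }
};
```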

    %% face_stdmean.m: returns the standard deviation and mean for real-time recognition
    function [std_f,mean_f] = face_stdmean()
    imaqreset;
    close all;
    clc;
    i=1;
    global std
    global mean
    global times                  % not assigned here; must already hold the number of photos
    std=0;mean=0;
    vid = videoinput('winvideo',1,'YUY2_320x240');
    while (1==1)
        choice=menu('Face Recognition',...
            'Taking photos for recognition',...
            'Exit');
        if (choice==1)
            FDetect = vision.CascadeObjectDetector;
            preview(vid);
            while(i<(times+1))
                choice2=menu('Face Recognition',...
                    'Capture');
                if(choice2==1)
                    g=getsnapshot(vid);
                    % save the RGB image in the specified folder
                    rgbImage=ycbcr2rgb(g);
                    str=strcat(int2str(i),'f.jpg');
                    fullImageFileName = fullfile('E:New Folder',str);

imwrite(rgbImage,fullImageFileName);](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-53-2048.jpg)

![- 54 -

                    BB = step(FDetect,rgbImage);
                    I2=imcrop(rgbImage,BB);
                    % save the grayscale face crop in the current directory
                    grayImage=rgb2gray(I2);
                    Dir_name=fullfile(pwd,str);
                    imwrite(grayImage,Dir_name);
                    % accumulate the std and mean of every capture
                    std = (std+std2(grayImage));
                    mean =(mean+mean2(grayImage));
                    i=(i+1);
                end
                std_f=(std/times)
                mean_f=(mean/times)
            end
        end
        closepreview(vid);
        if (choice==2)
            std_f=(std/times)
            mean_f=(mean/times)
            return;
        end
    end
    end
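`face_stdmean` accumulates `std2` and `mean2` of each face crop over `times` captures and divides by `times`. The stored values are therefore simple averages of the per-capture statistics.

Below is a hedged C++ sketch of that computation. It assumes each crop is a flattened `std::vector<double>` of grey levels. `grayStats` and `averageStats` are hypothetical names, and the N-1 normalization mirrors MATLAB's `std2`.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct FaceStats {
  double stdDev;
  double mean;
};

// mean2/std2 of one grayscale crop; the standard deviation uses N-1 like std2.
// Assumes the crop has at least two pixels.
FaceStats grayStats(const std::vector<double>& pixels) {
  const double n = static_cast<double>(pixels.size());
  double mean = 0.0;
  for (double p : pixels) mean += p;
  mean /= n;
  double ss = 0.0;
  for (double p : pixels) ss += (p - mean) * (p - mean);
  return {std::sqrt(ss / (n - 1.0)), mean};
}

// Average of the per-capture statistics, as in std_f=(std/times) and mean_f=(mean/times).
FaceStats averageStats(const std::vector<std::vector<double>>& captures) {
  FaceStats sum{0.0, 0.0};
  for (const auto& crop : captures) {
    const FaceStats s = grayStats(crop);
    sum.stdDev += s.stdDev;
    sum.mean += s.mean;
  }
  const double times = static_cast<double>(captures.size());
  return {sum.stdDev / times, sum.mean / times};
}
```

Averaging the per-capture standard deviations is not the same as taking the standard deviation of all pixels pooled together. The sketch follows the listing and averages.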

    %% face_stdmean_recgnz.m: returns the standard deviation and mean of the last recognized face
    function [std_f,mean_f] = face_stdmean_recgnz()
    close all;
    clc;
    global face_id
    global std
    global mean
    global times
    std=0;mean=0;
    %i=face_id;
    i=input('Enter face id for live recognition: ');
    FDetect = vision.CascadeObjectDetector;
    % person i owns images (i-1)*times+1 ... i*times
    j=(i*times);
    k=(j-times);
    while(j>=(k+1))
        str=strcat(int2str(j),'.jpg');
        fullImageFileName = fullfile('E:New Folder',str);
        I=imread(fullImageFileName);
        BB = step(FDetect,I);
        I2=imcrop(I,BB);
        grayImage=rgb2gray(I2);

str2=strcat(int2str(j),'f.jpg');](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-54-2048.jpg)

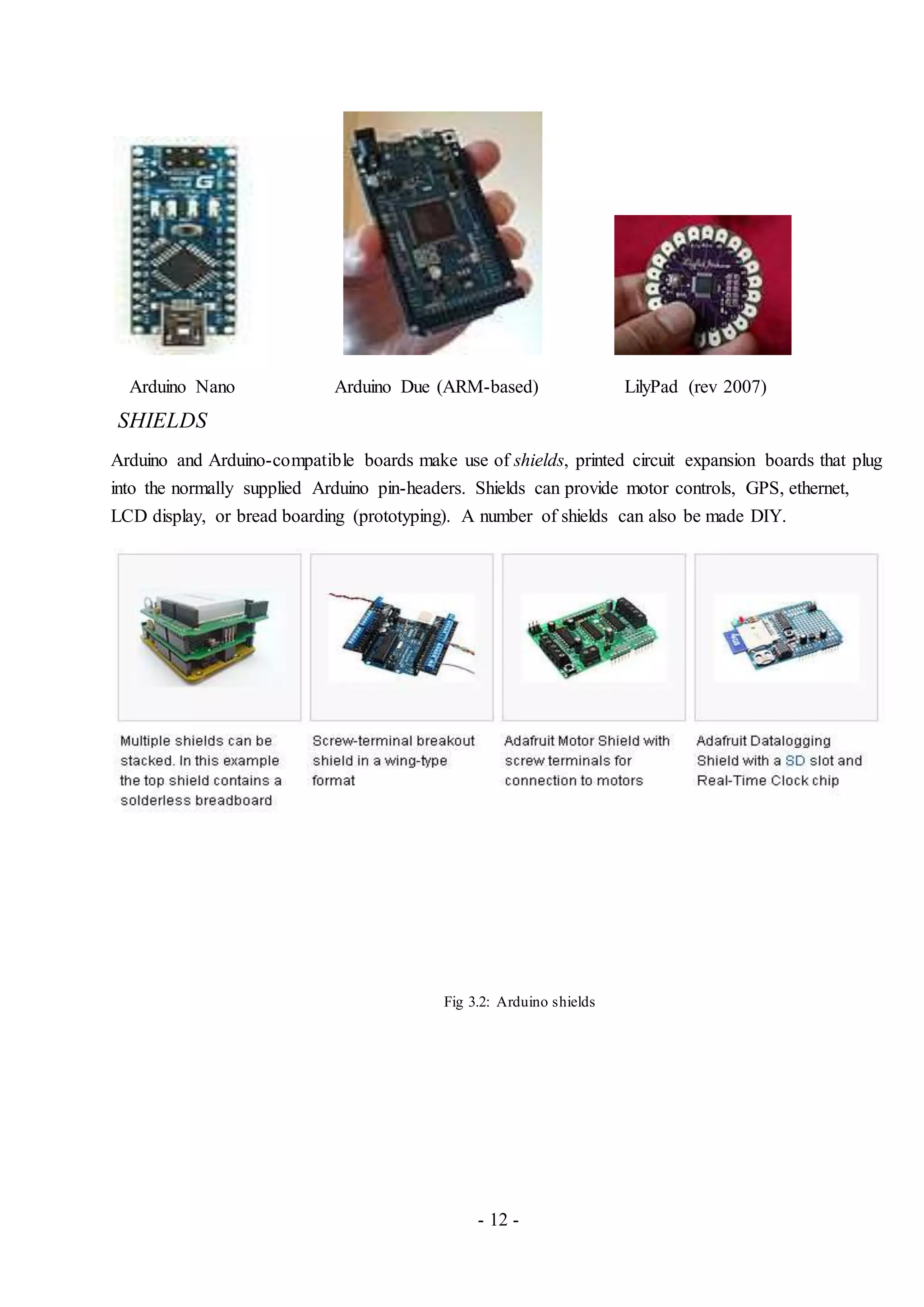

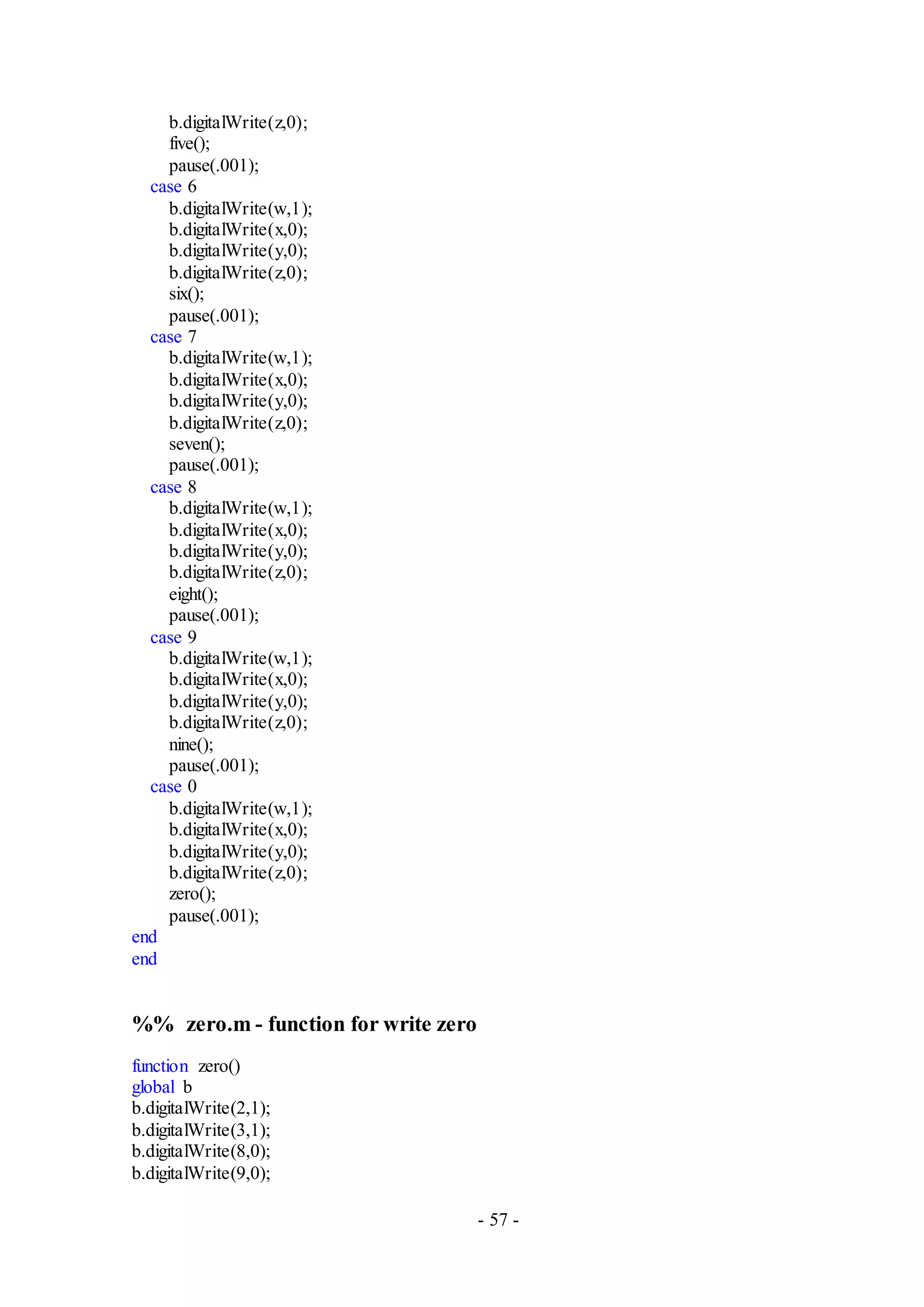

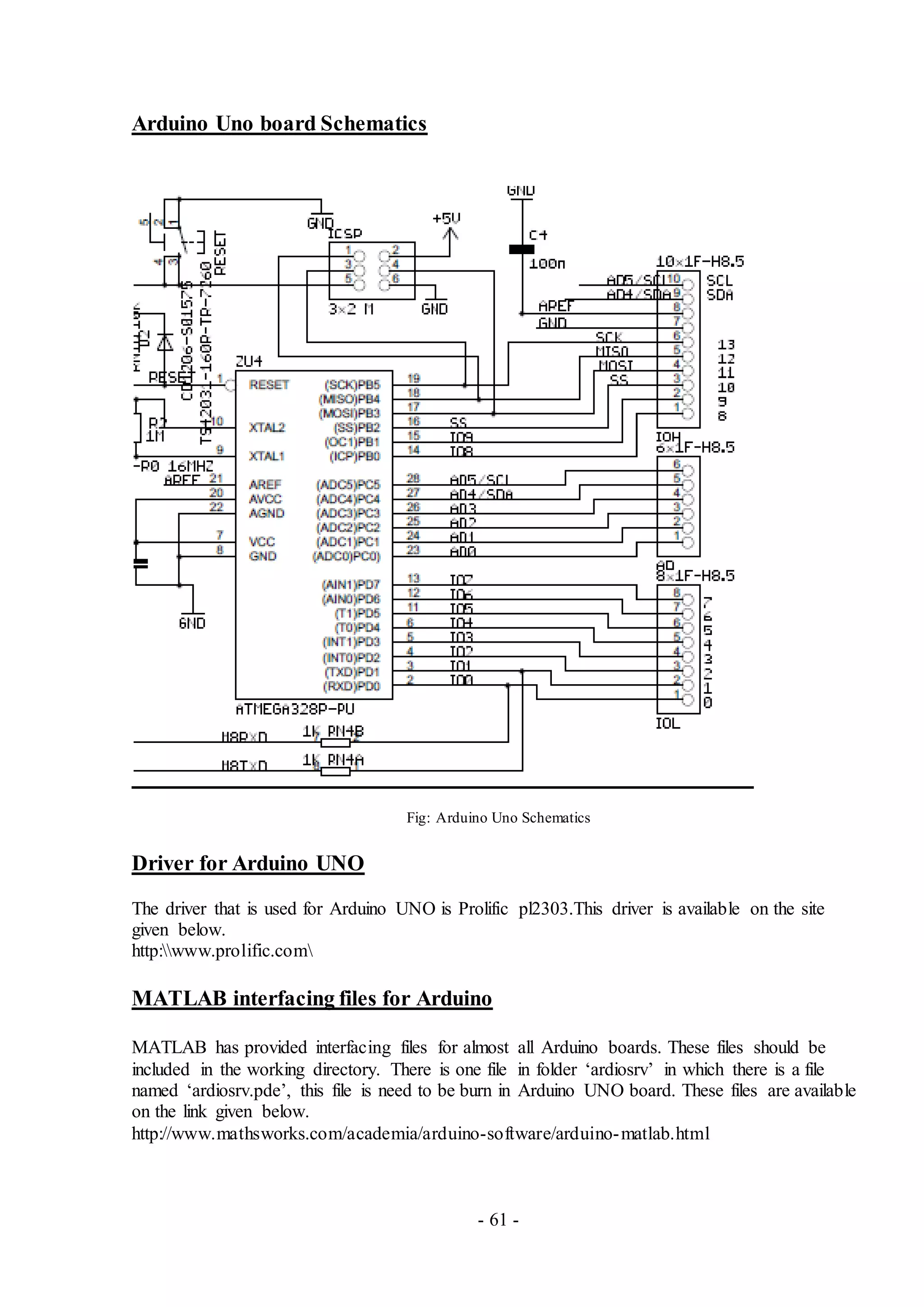

![- 62 -

REFERENCES

The following references were used for the topics in this project:

[1] Santiago Serrano. Eigenface tutorial. Drexel University.

[2] Padhraic Smyth. Face detection using the Viola-Jones method. Department of Computer Science, University of California, Irvine.

[3] Abboud, F. Davoine, and M. Dang. Facial expression recognition and synthesis based on an appearance model. Signal Process., Image Commun., 2004.

[2] J. Ahlberg. Candide-3 – an updated parametrised face. Technical report, Linköping University, 2001.

[4] J. Ahlberg and R. Forchheimer. Face tracking for model-based coding and face animation. International Journal of Imaging Systems and Technology, 2003.

[5] A. Azarbayejani and A. Pentland. Recursive estimation of motion, structure, and focal length. IEEE PAMI, 1995.

[6] V. Belle, T. Deselaers, and S. Schiffer. Randomized trees for real-time one-step face detection and recognition. 2008.

[7] M. J. Black and Y. Yacoob. Recognizing facial expressions in image sequences using local parameterized models of image motion. IJCV, 1997.

[8] S. Basu, I. Essa, and A. Pentland. Motion regularization for model-based head tracking. In CVPR, 1996.

[9] M. La Cascia, S. Sclaroff, and V. Athitsos. Fast, reliable head tracking under varying illumination: An approach based on registration of texture-mapped 3D models. IEEE PAMI, 2000.](https://image.slidesharecdn.com/ccd30660-5031-44f9-a236-c4de61b893b7-150308124157-conversion-gate01/75/MAJOR-PROJECT-62-2048.jpg)