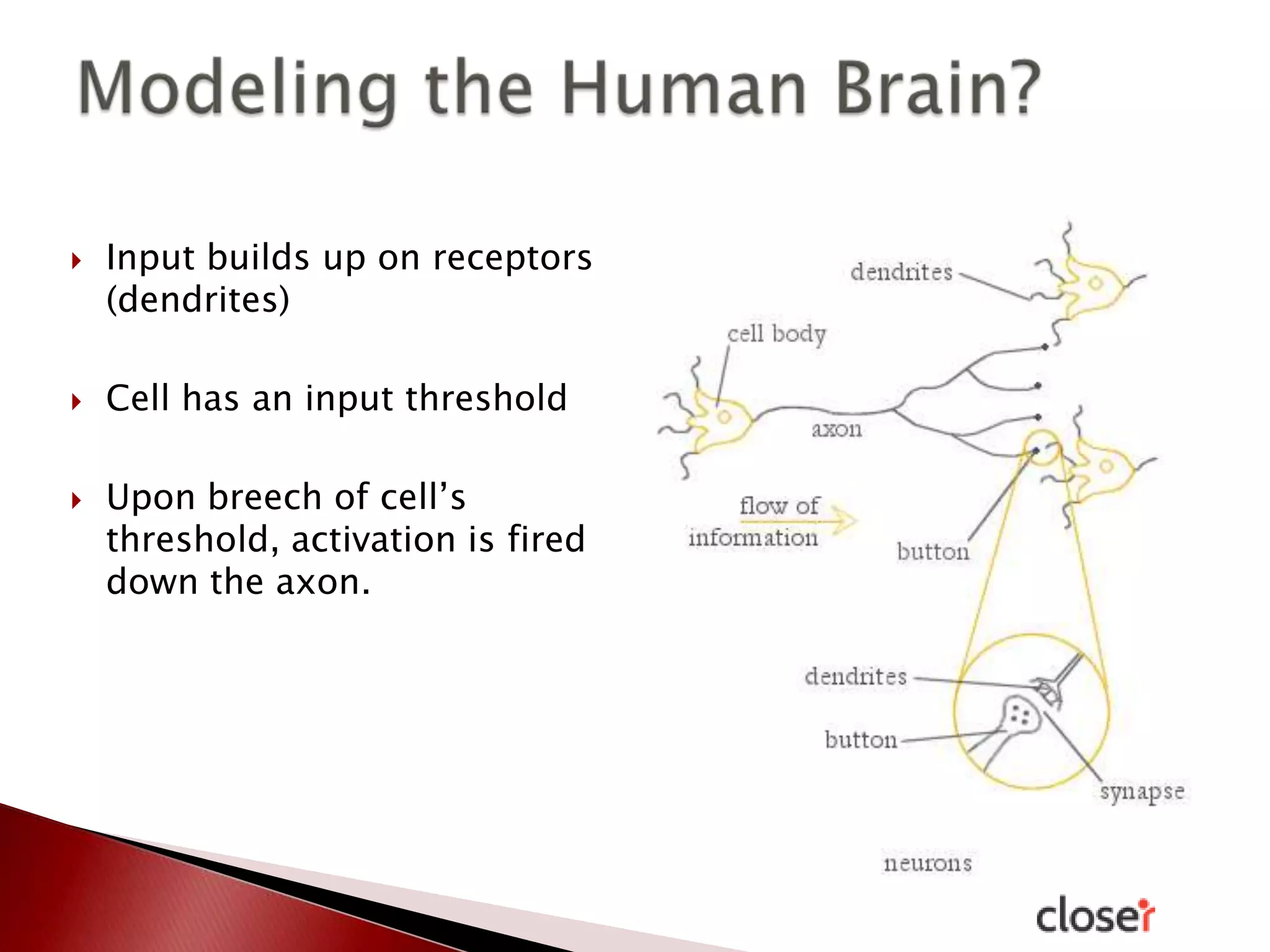

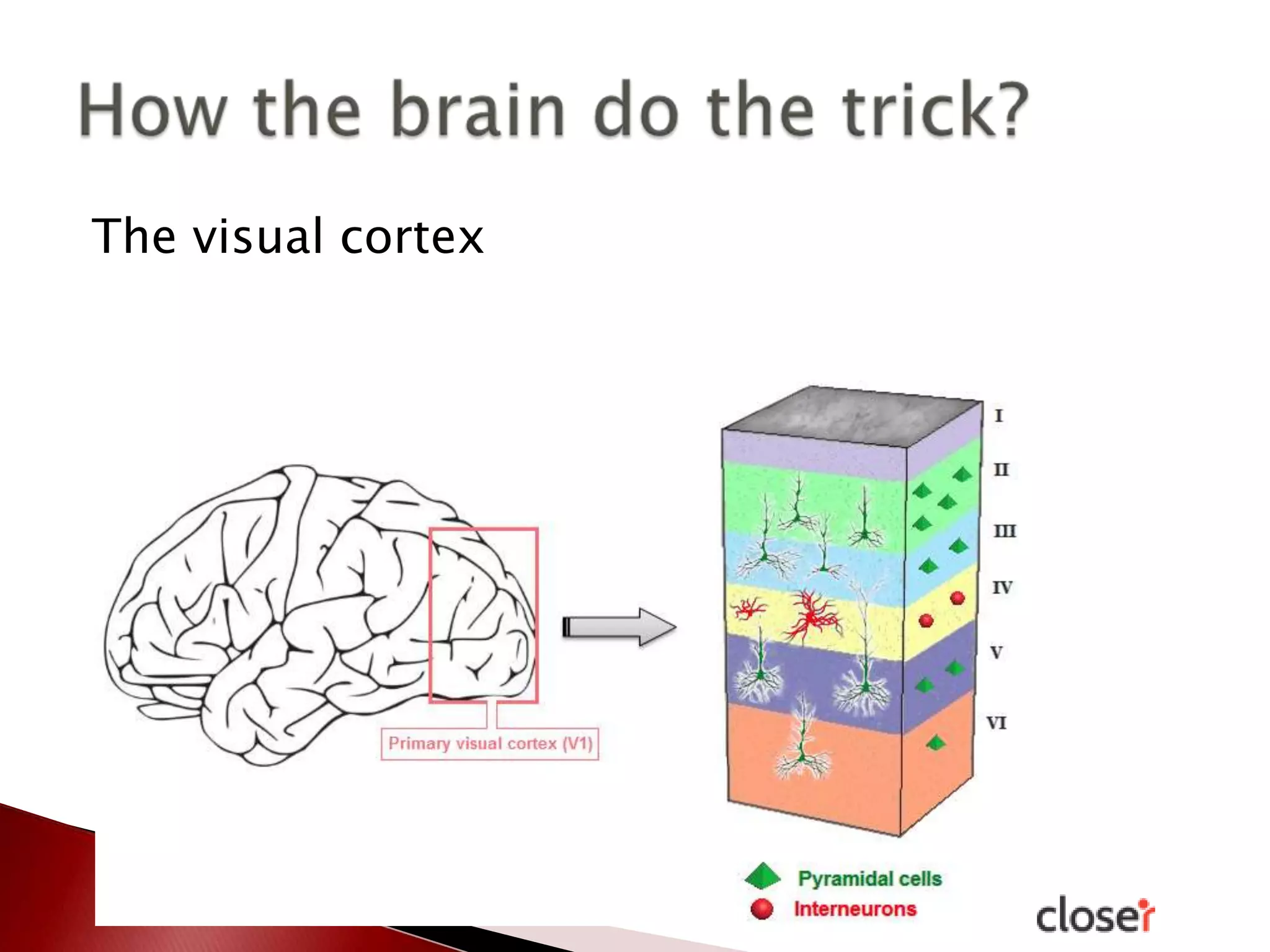

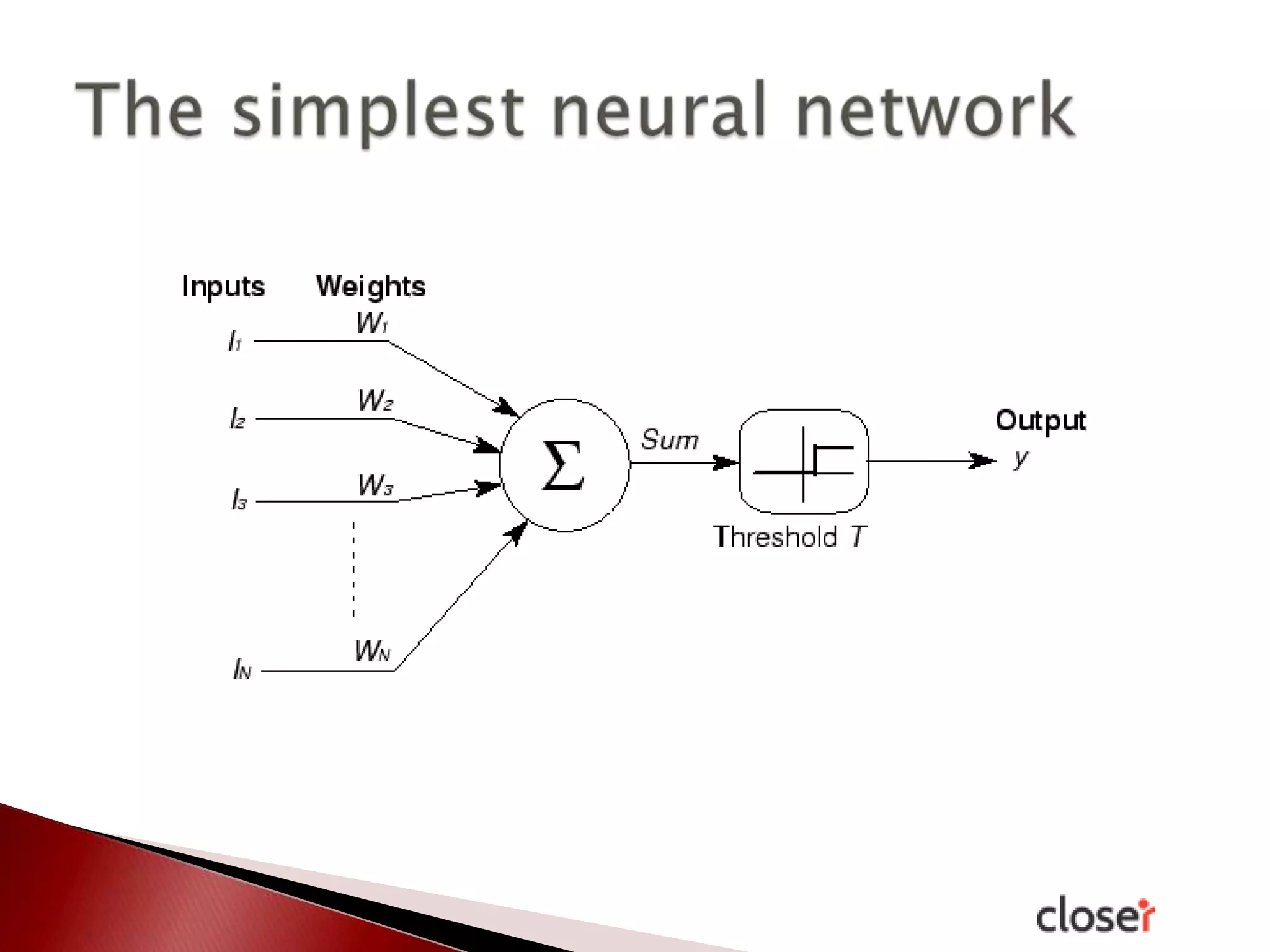

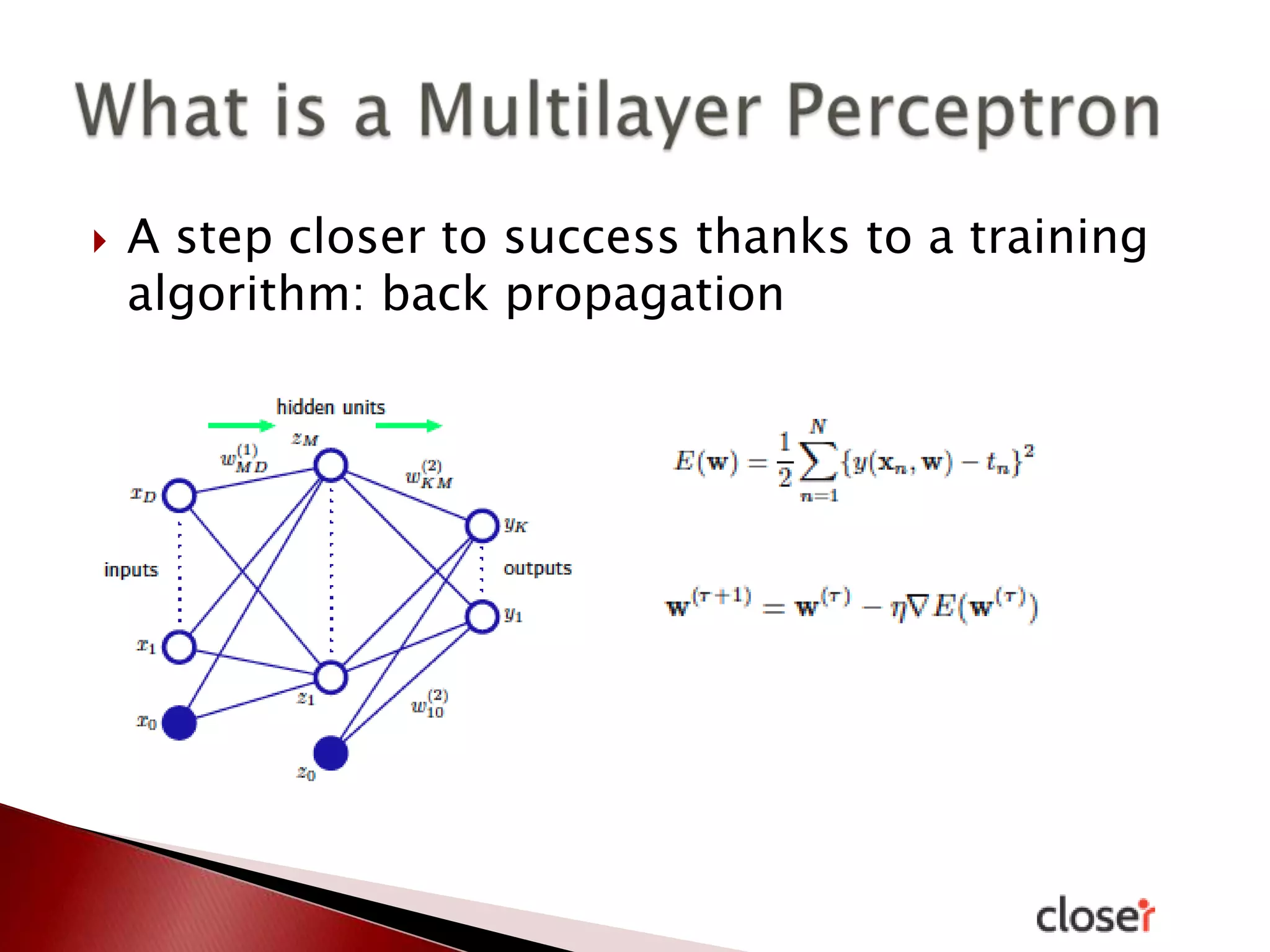

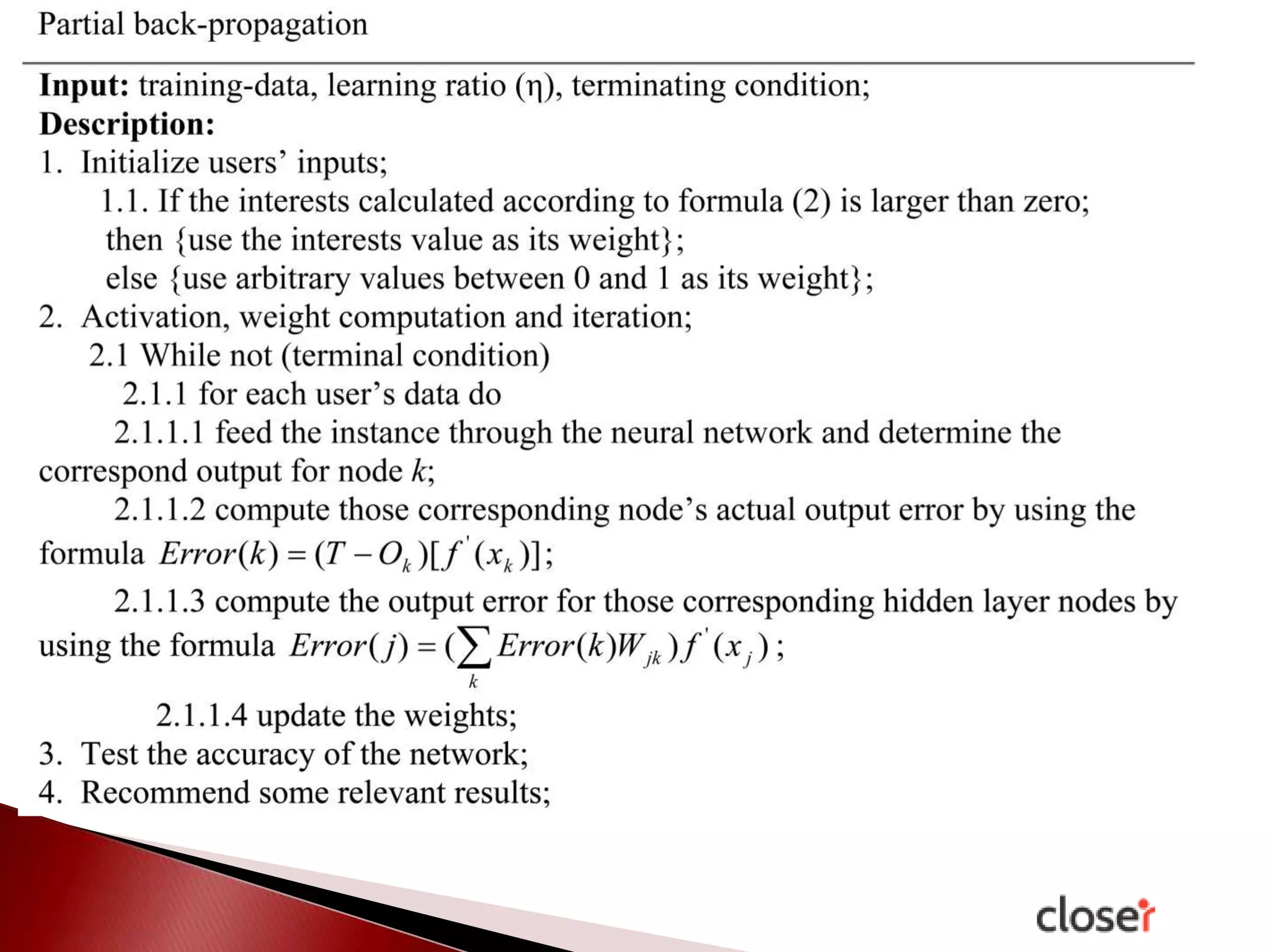

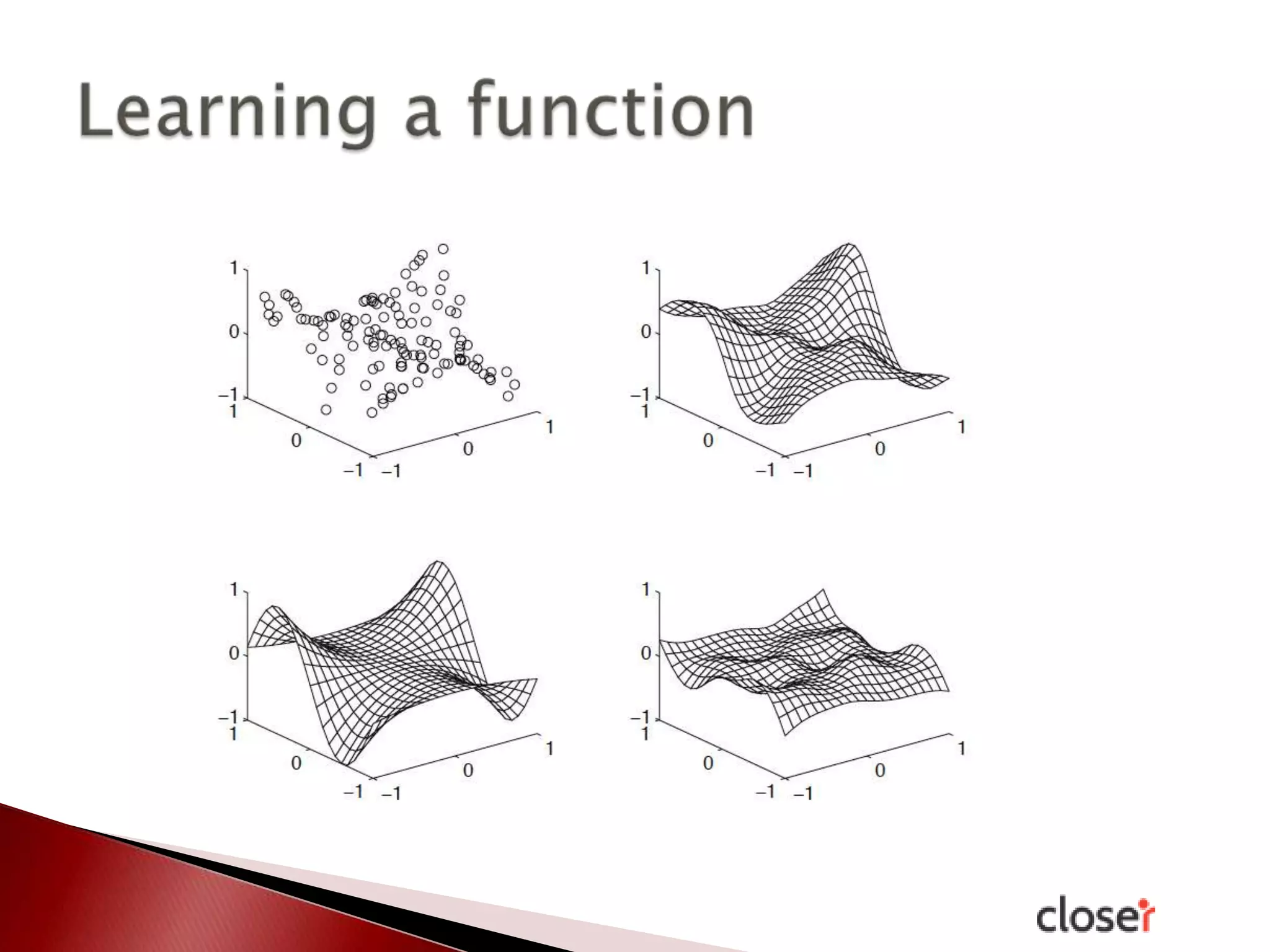

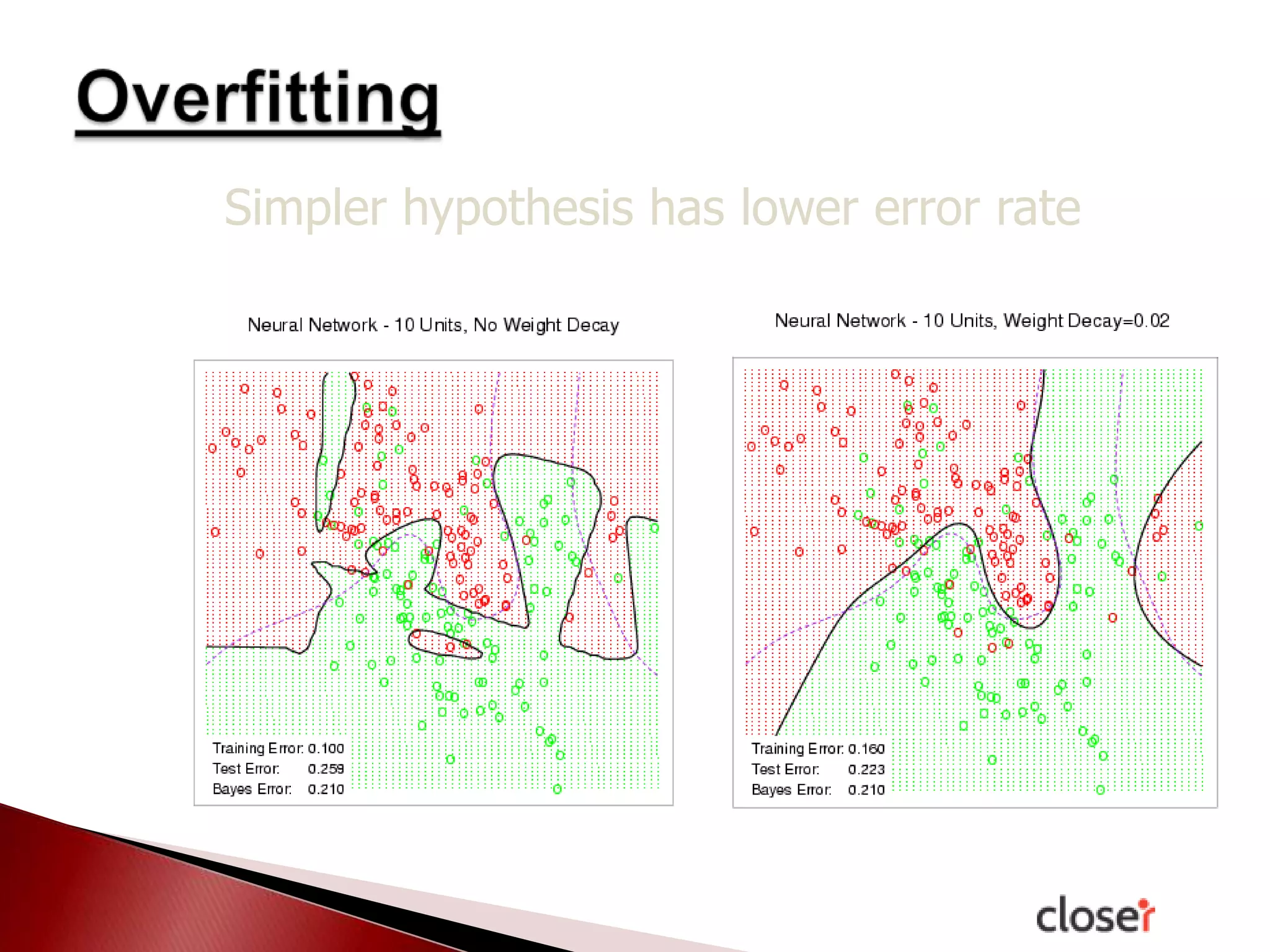

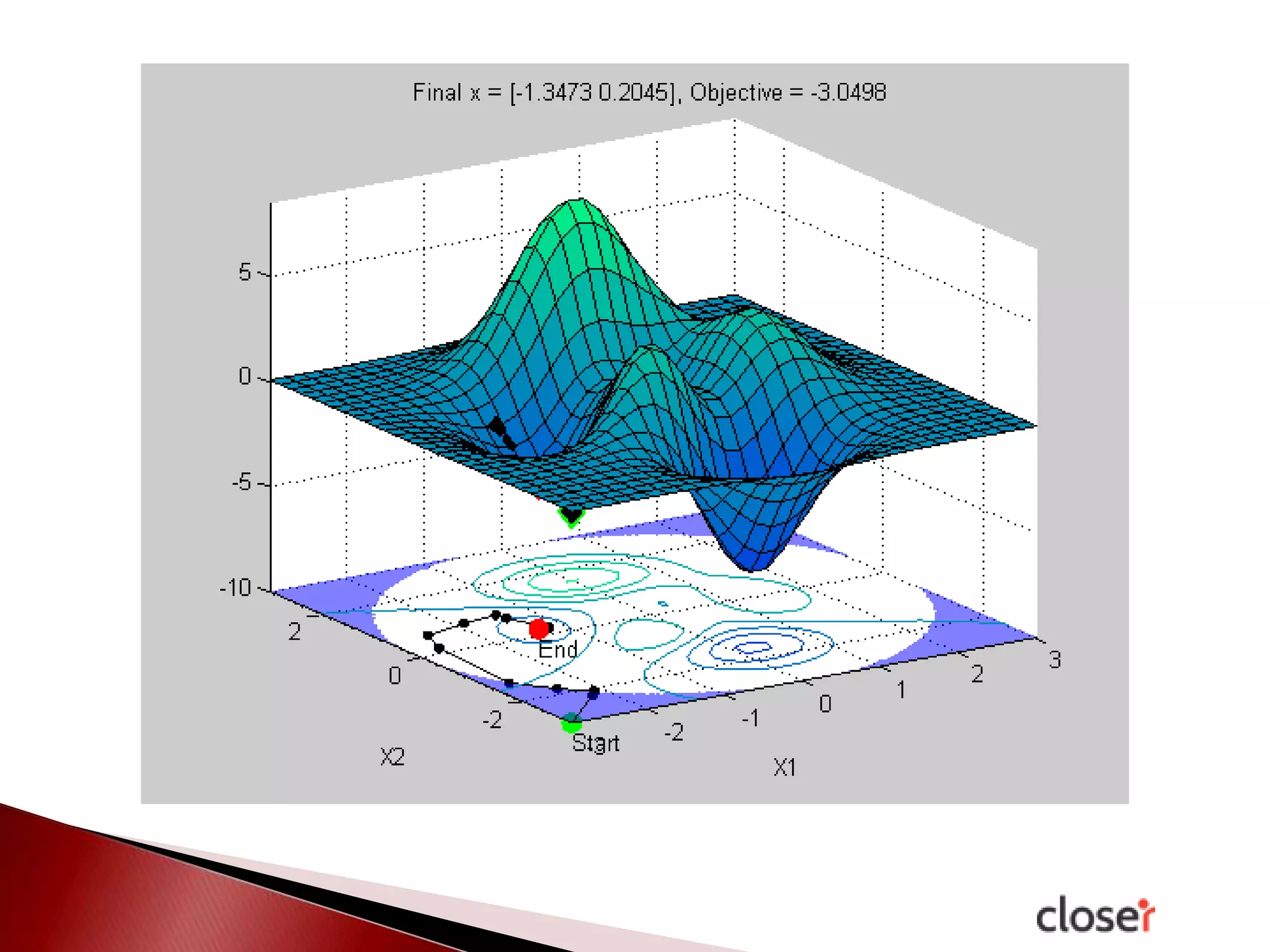

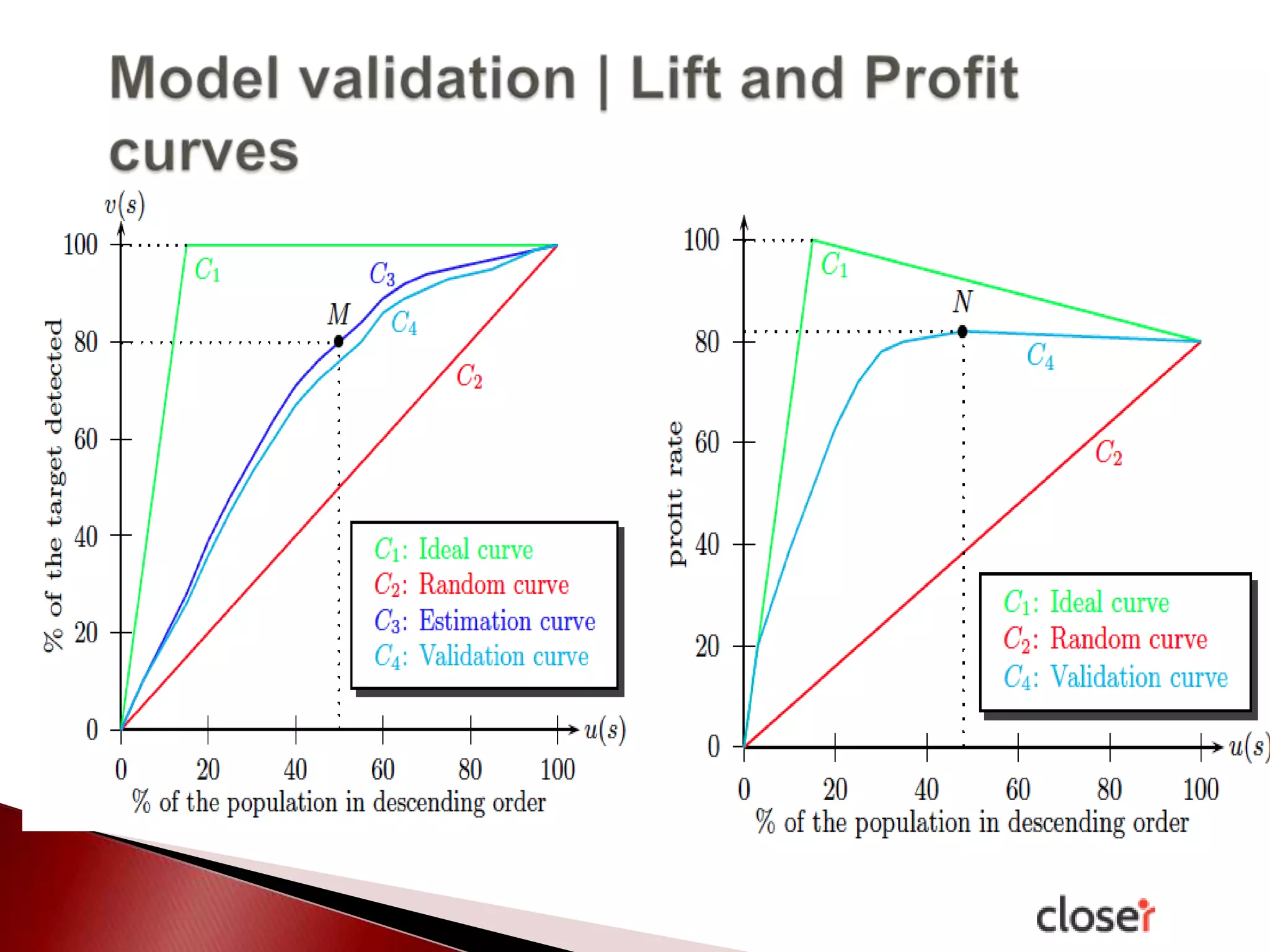

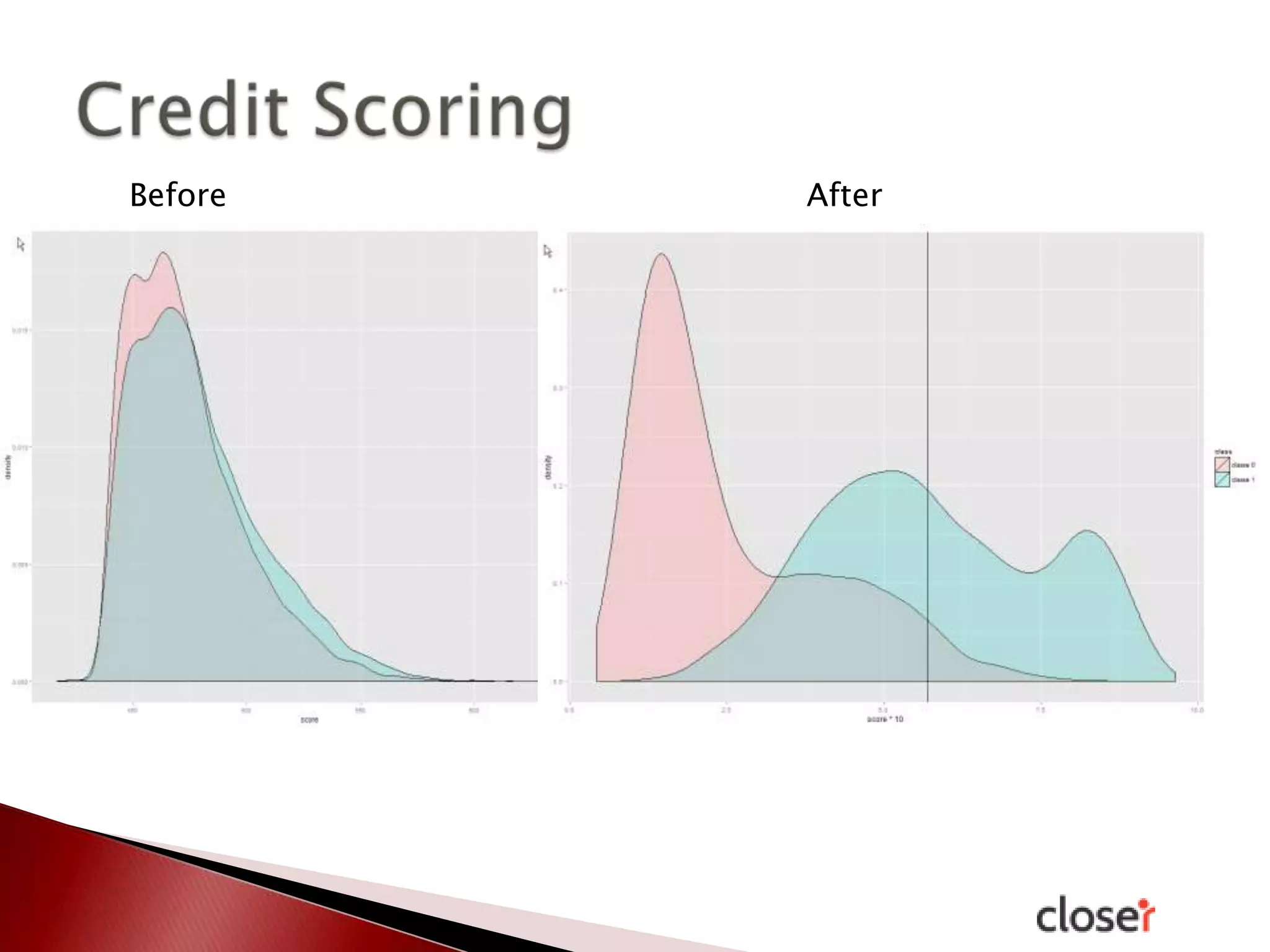

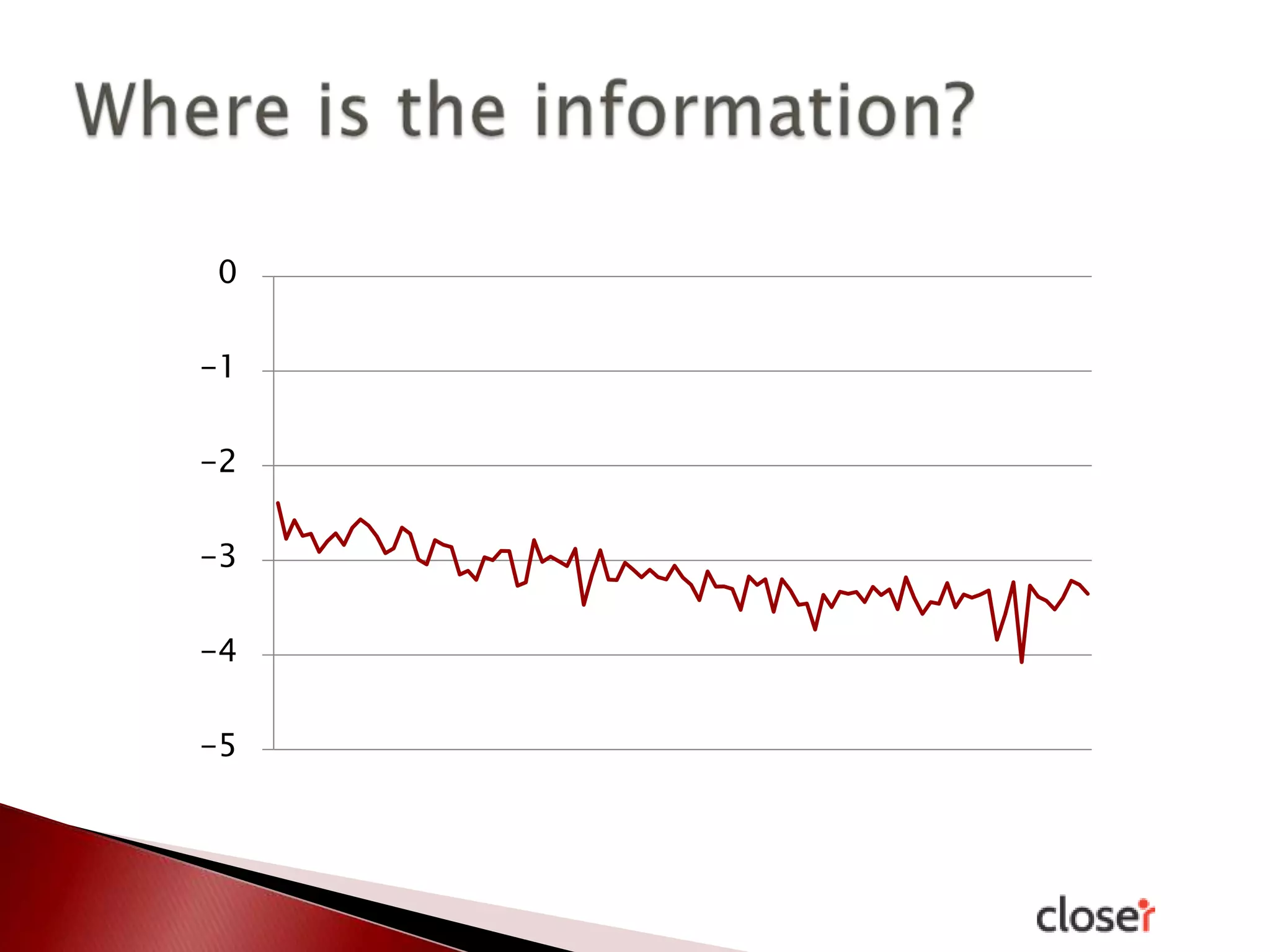

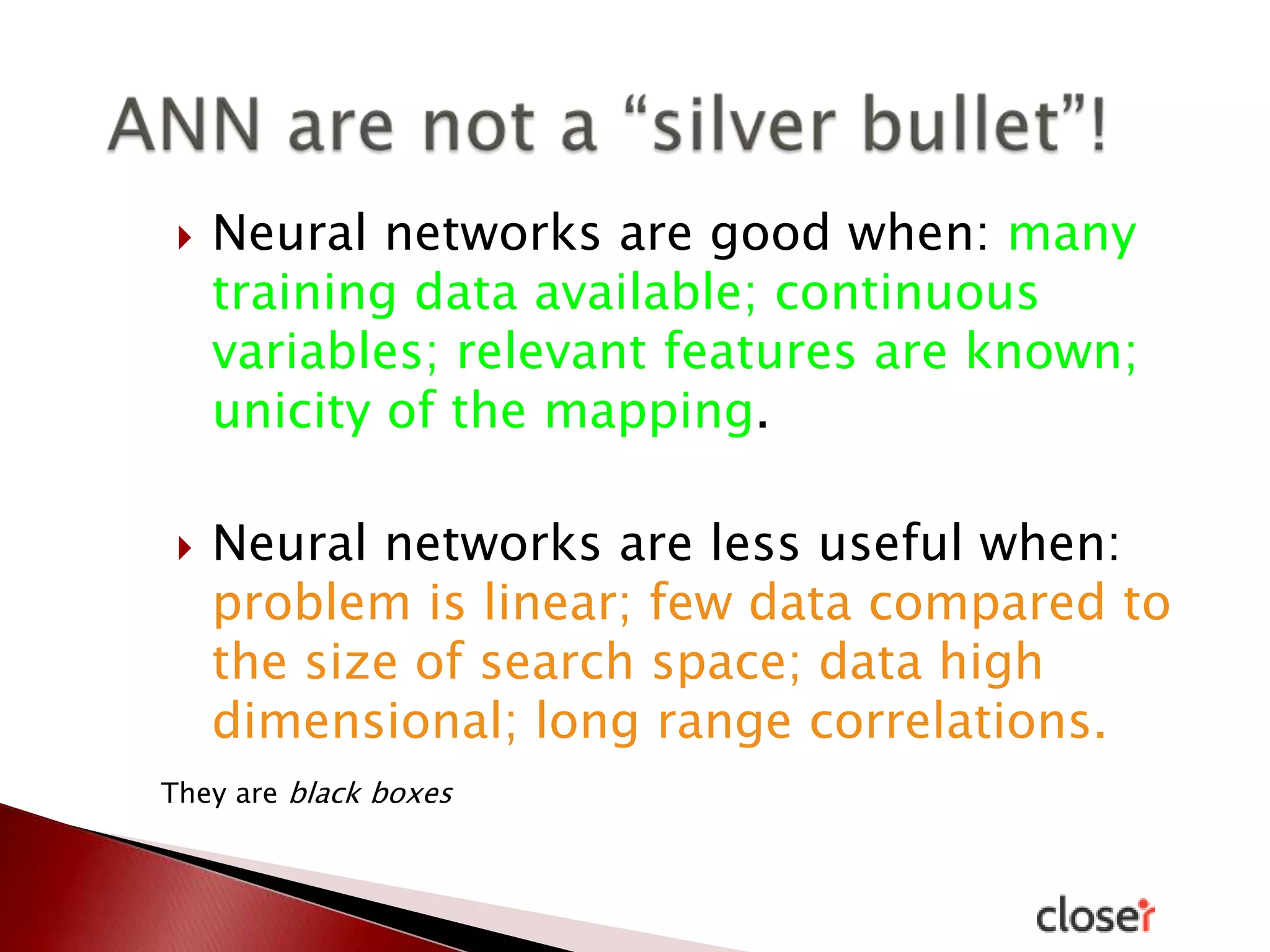

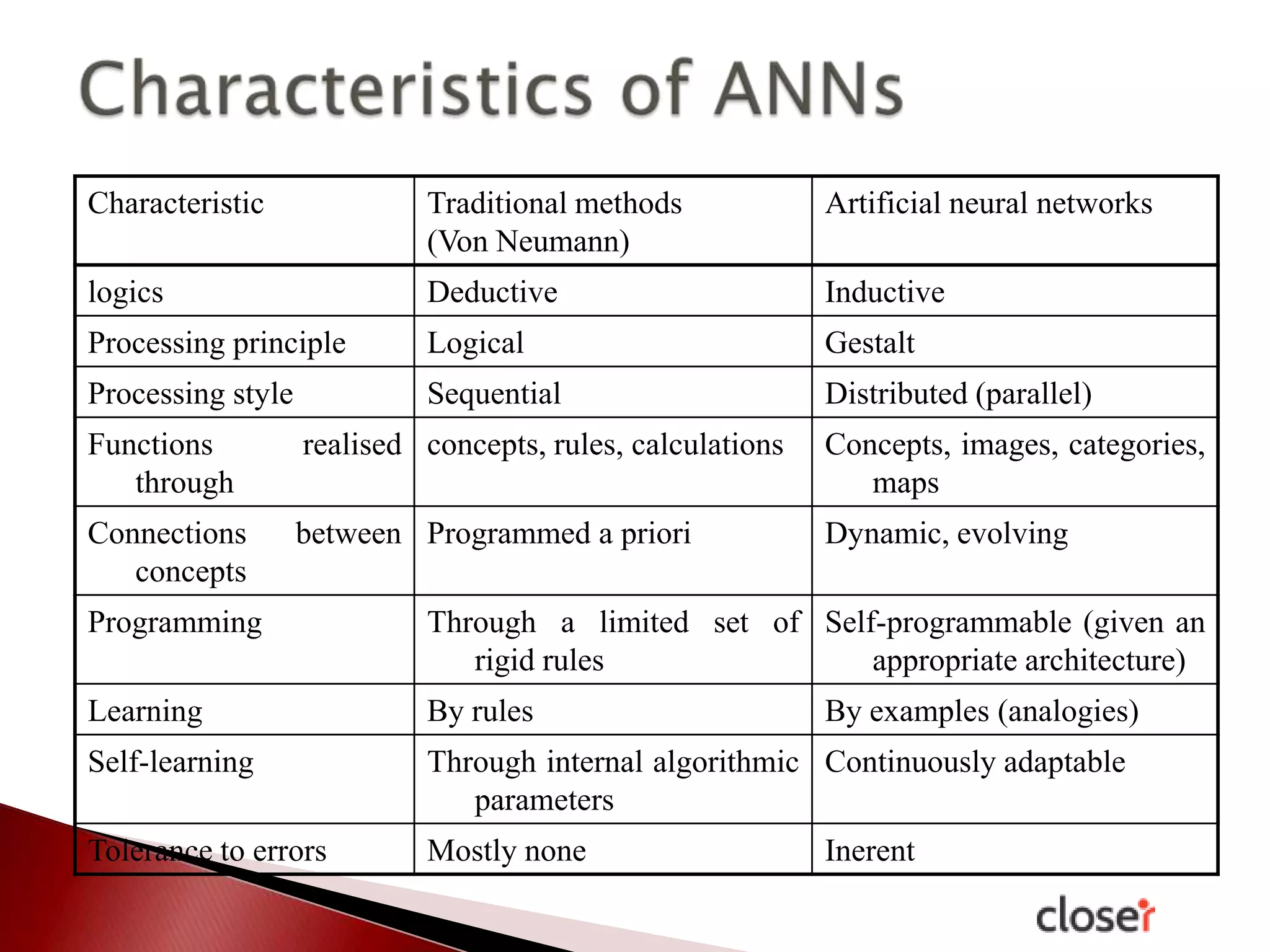

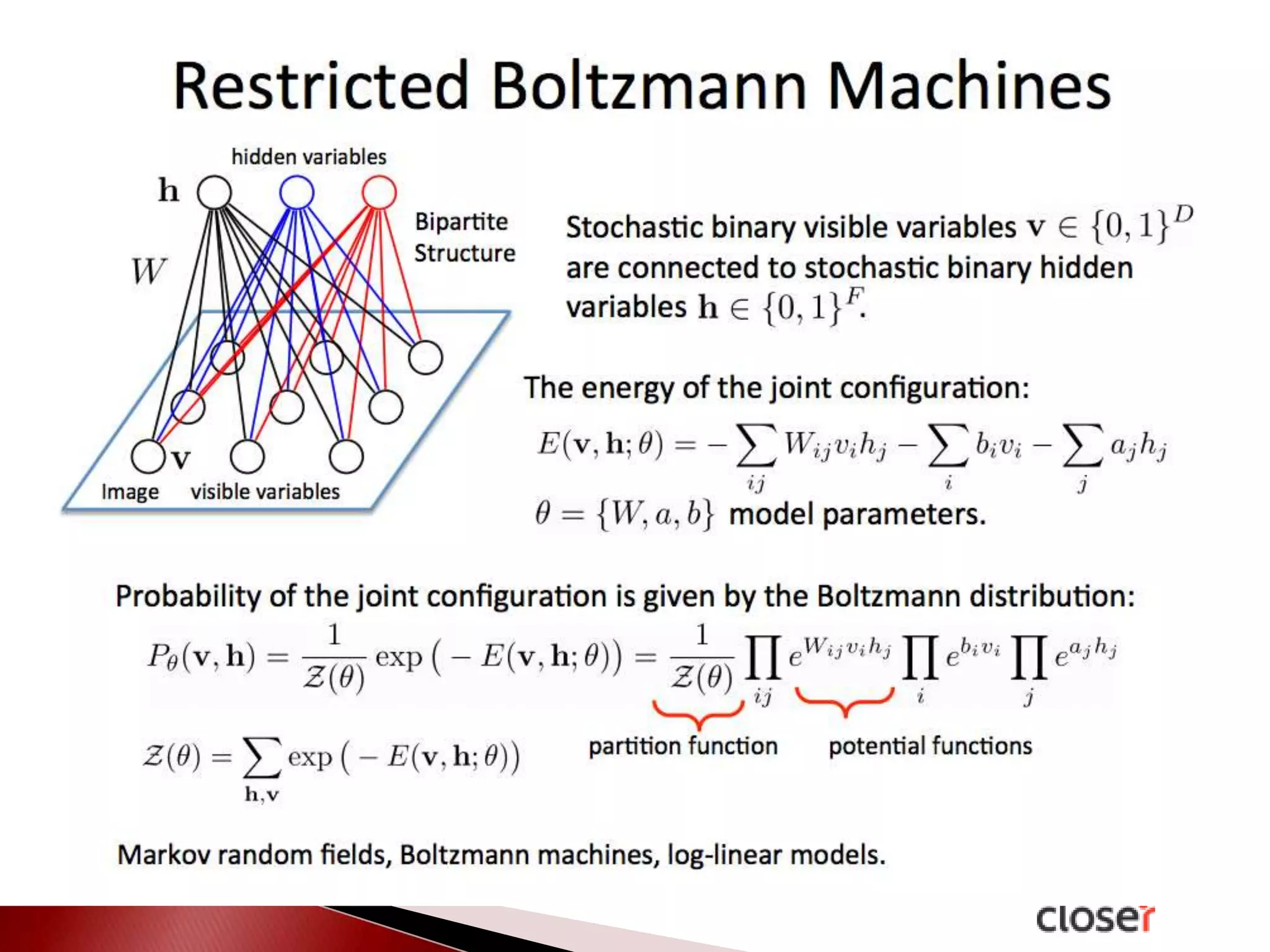

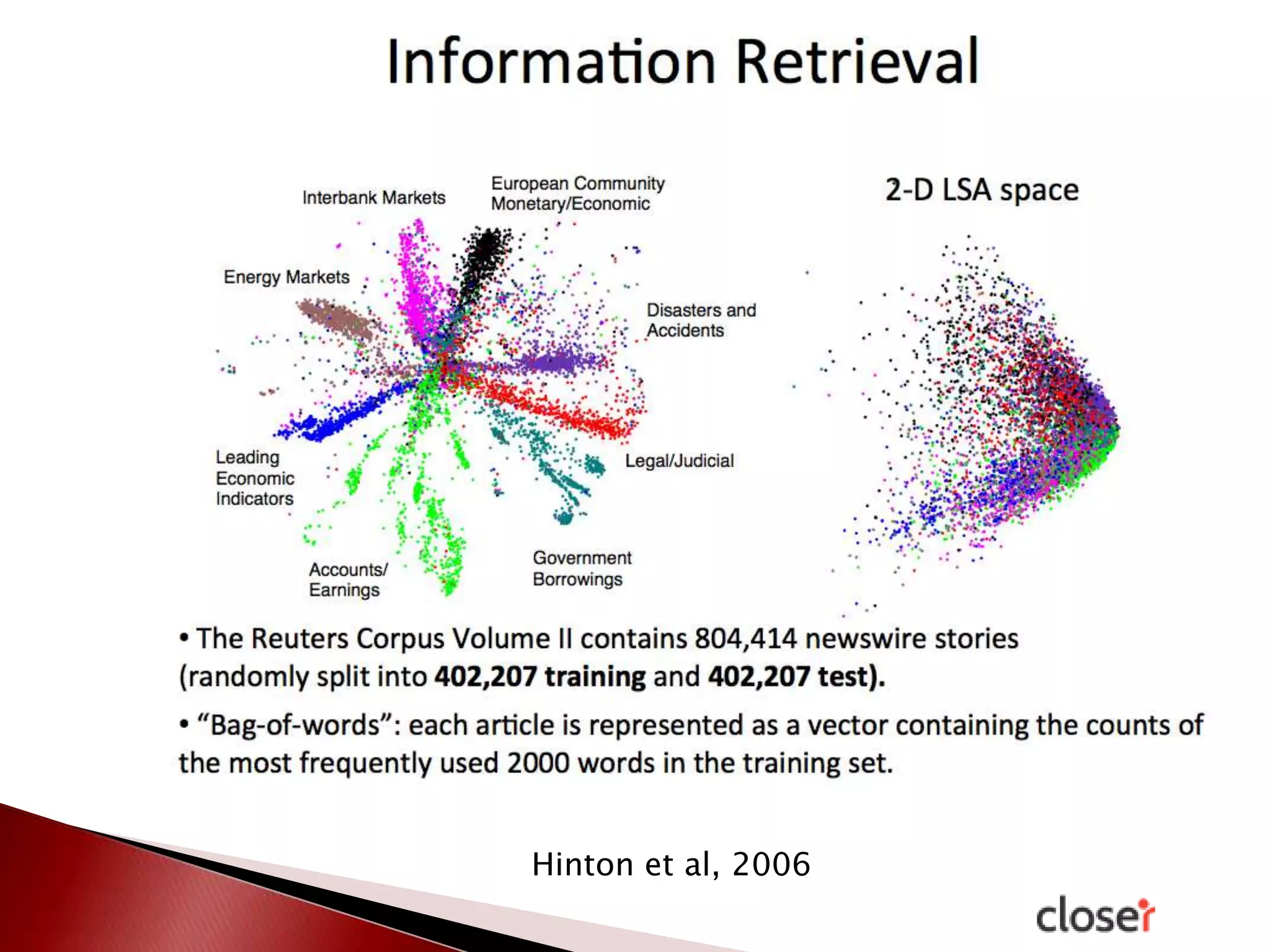

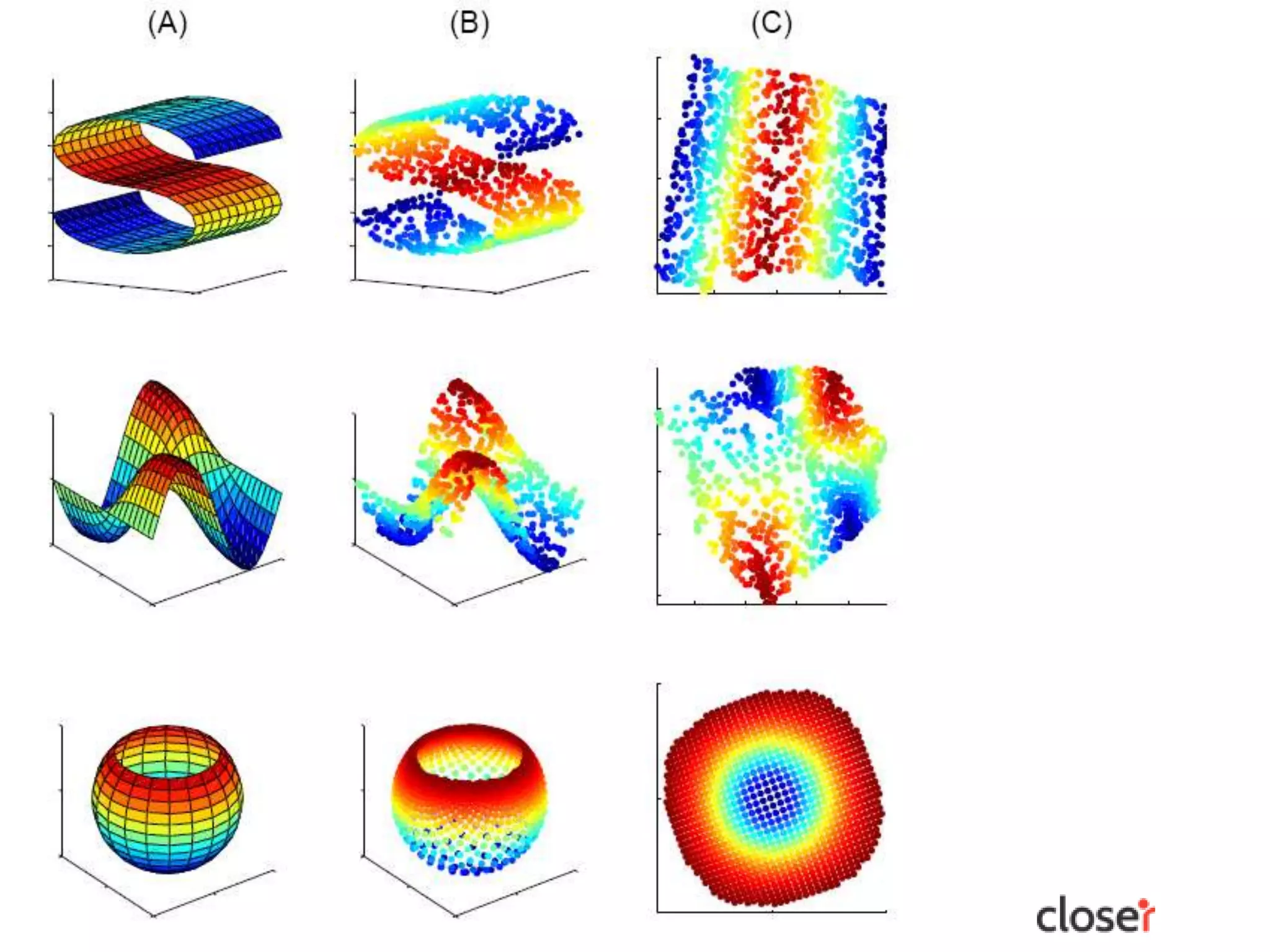

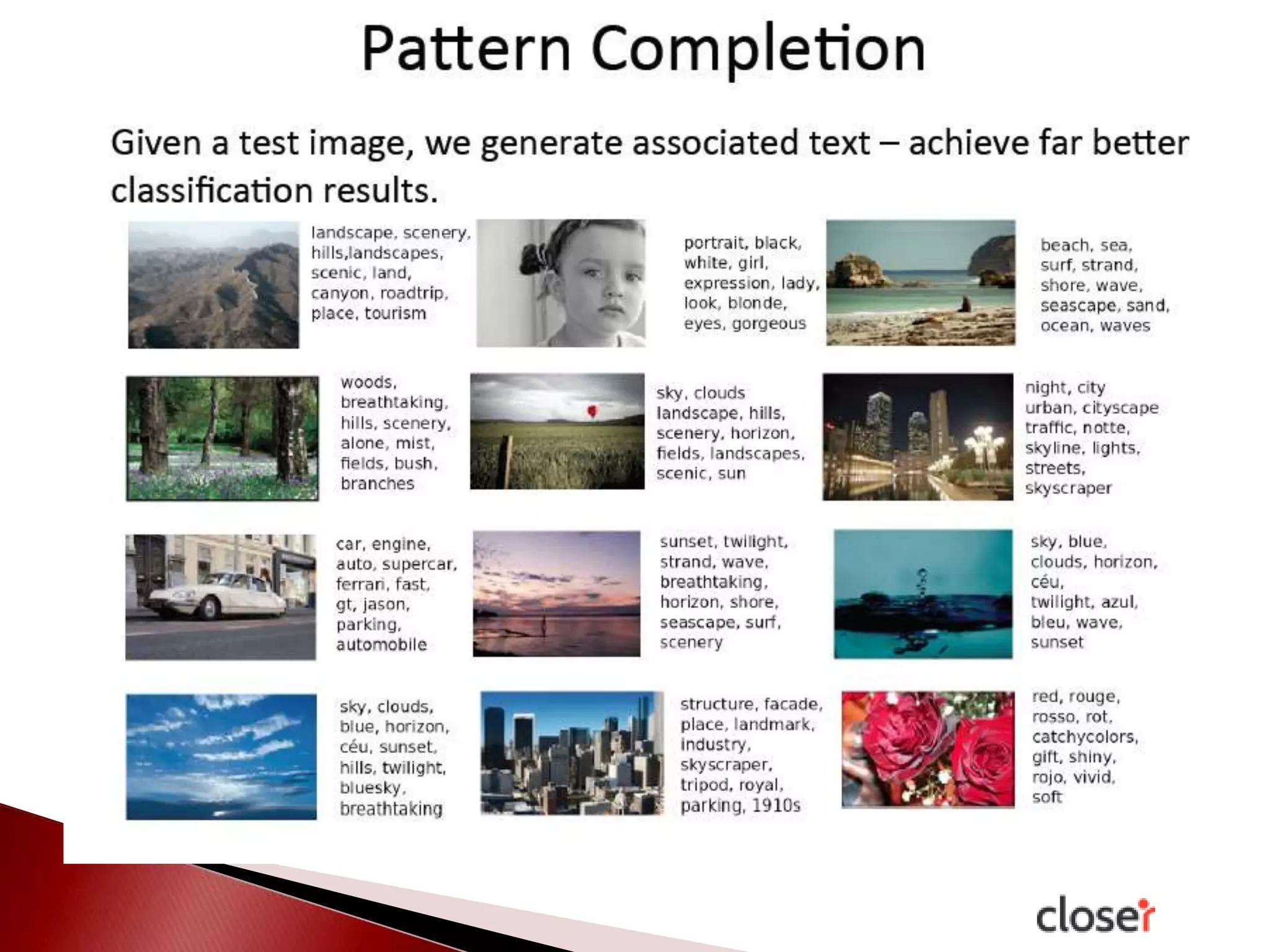

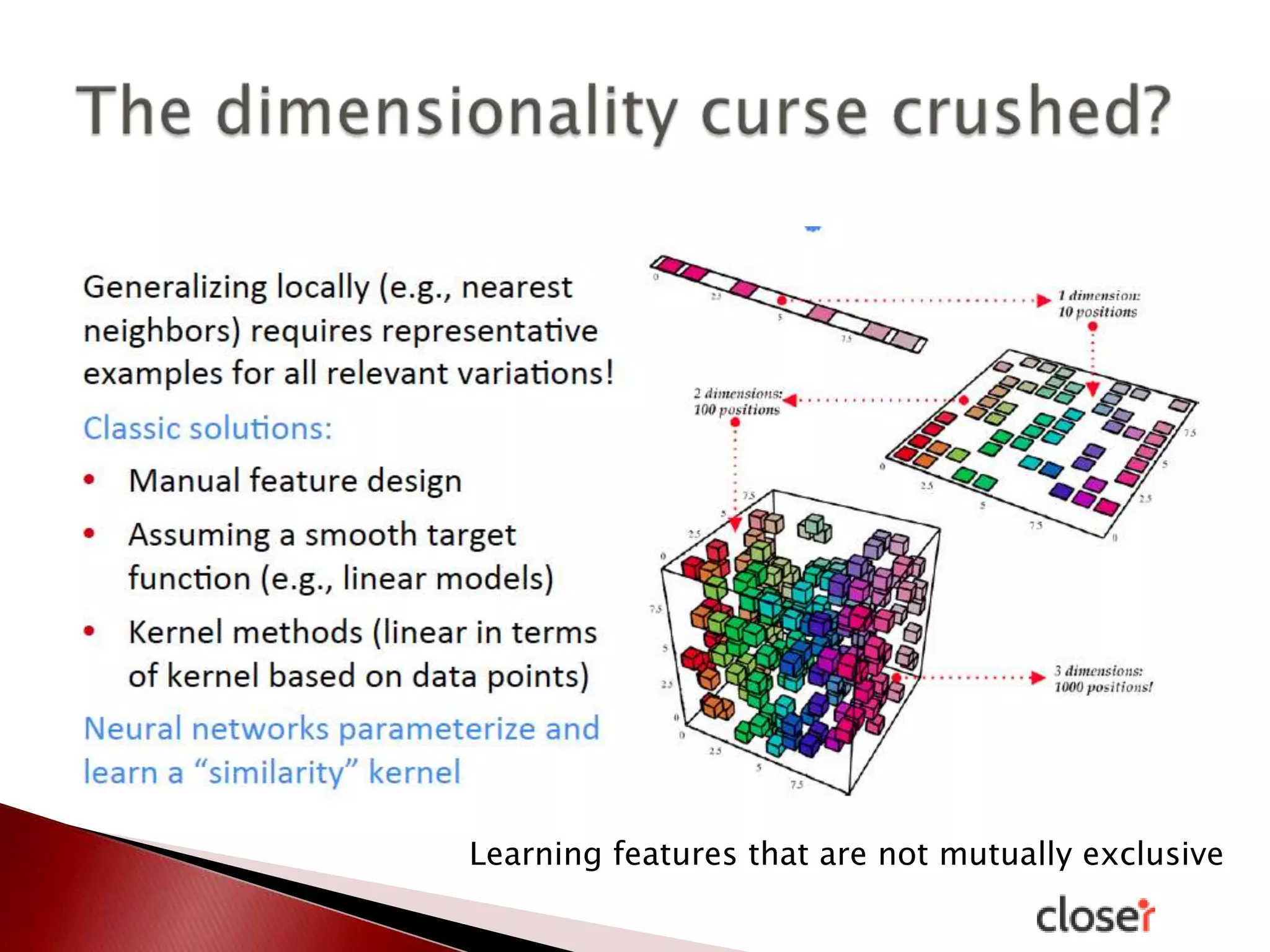

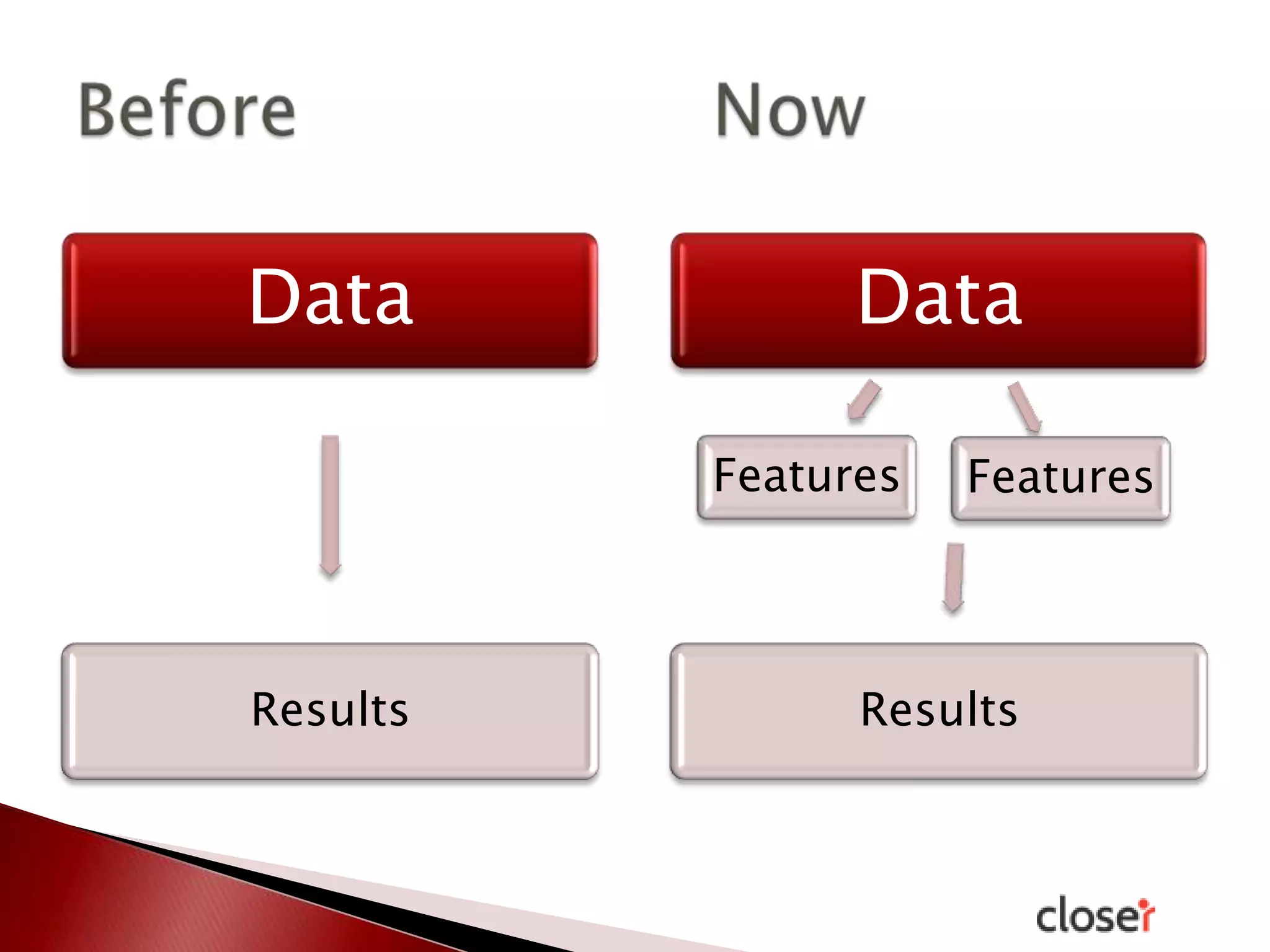

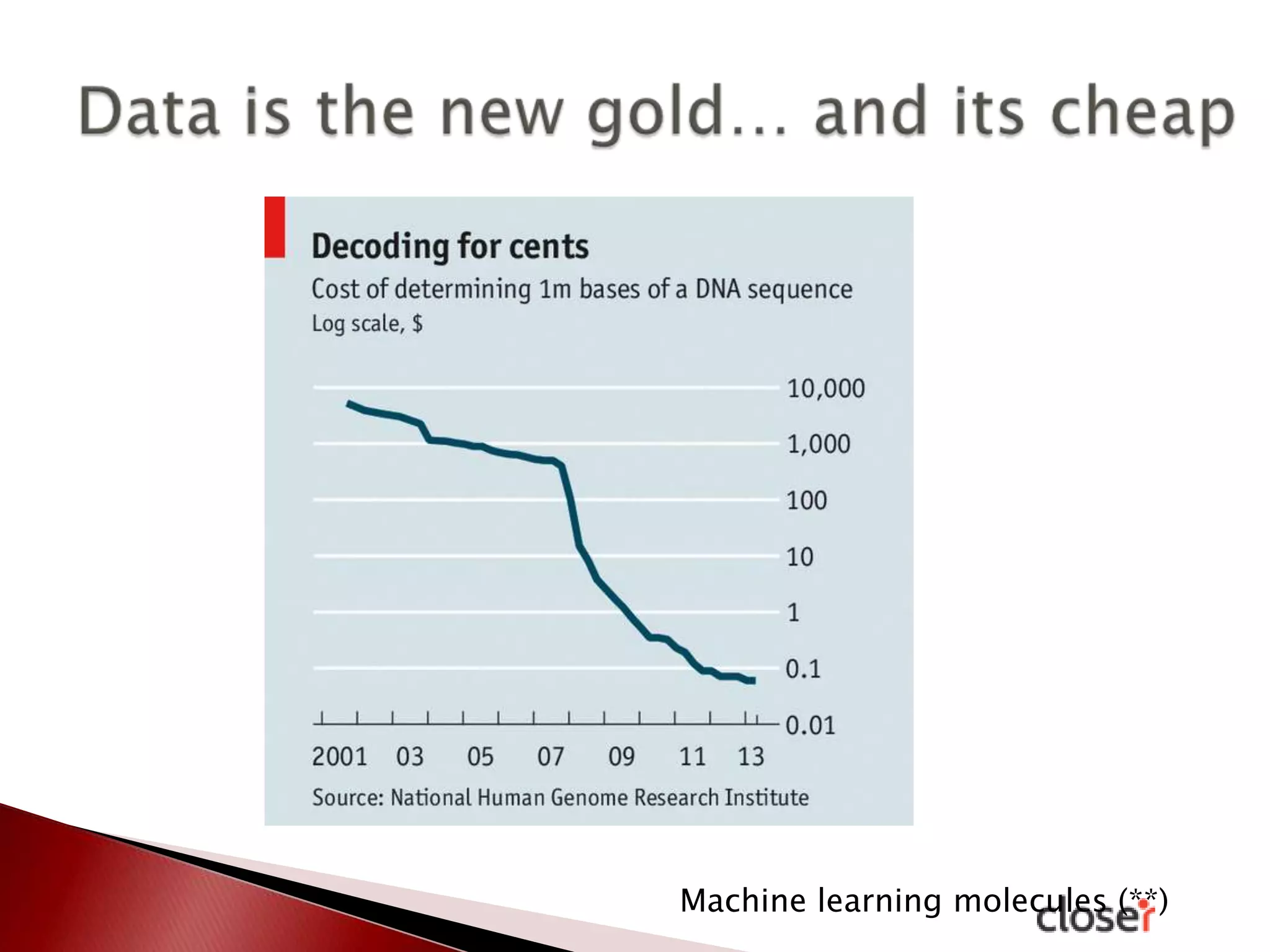

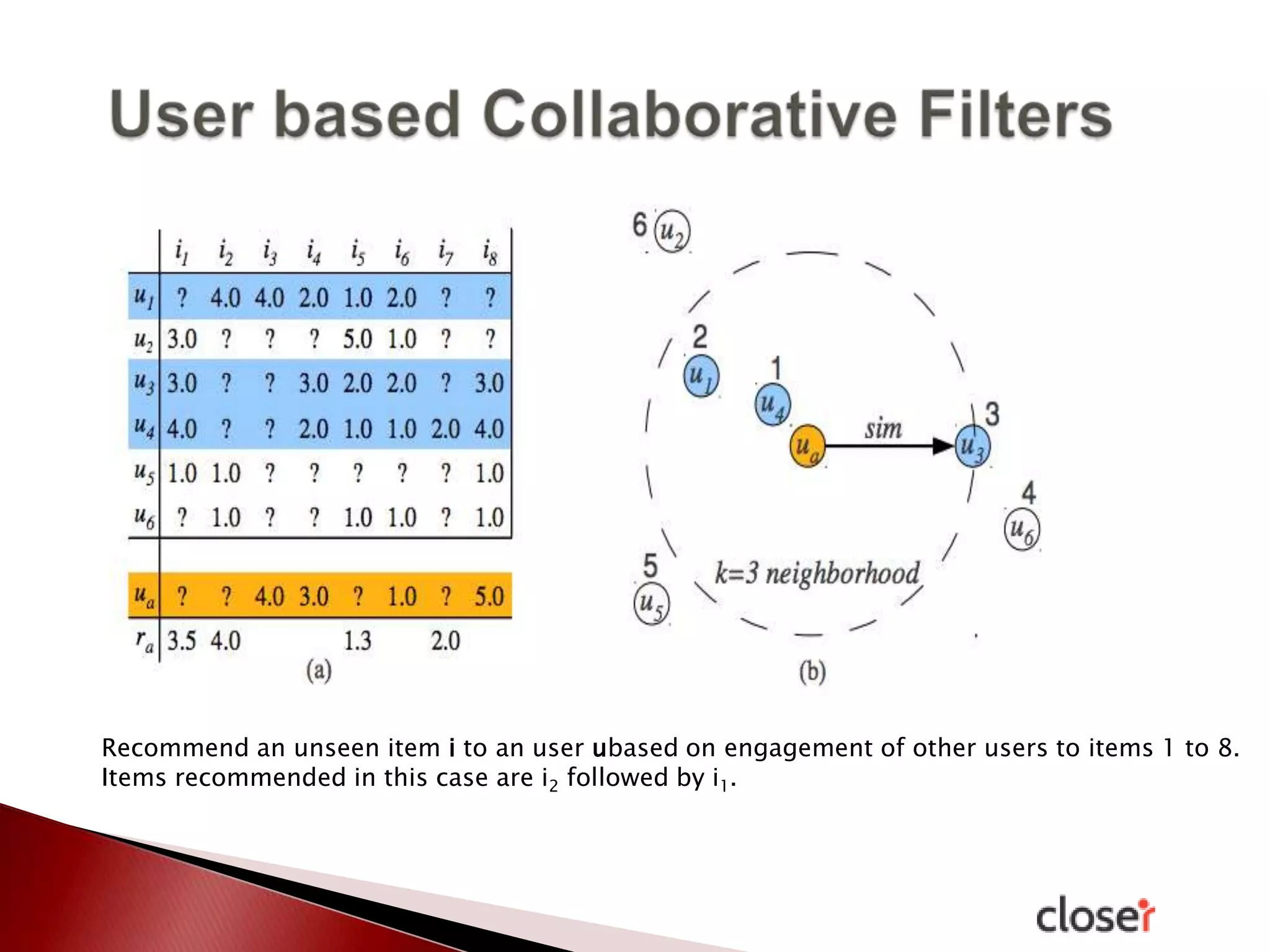

The document discusses the development and applications of machine learning, with a focus on neural networks and deep learning. It covers the challenges of working with neural networks, the importance of data in training models, and the capabilities and limitations of these algorithms. It also emphasizes the role of big data in transforming industries and the need to interpret machine learning outputs carefully.