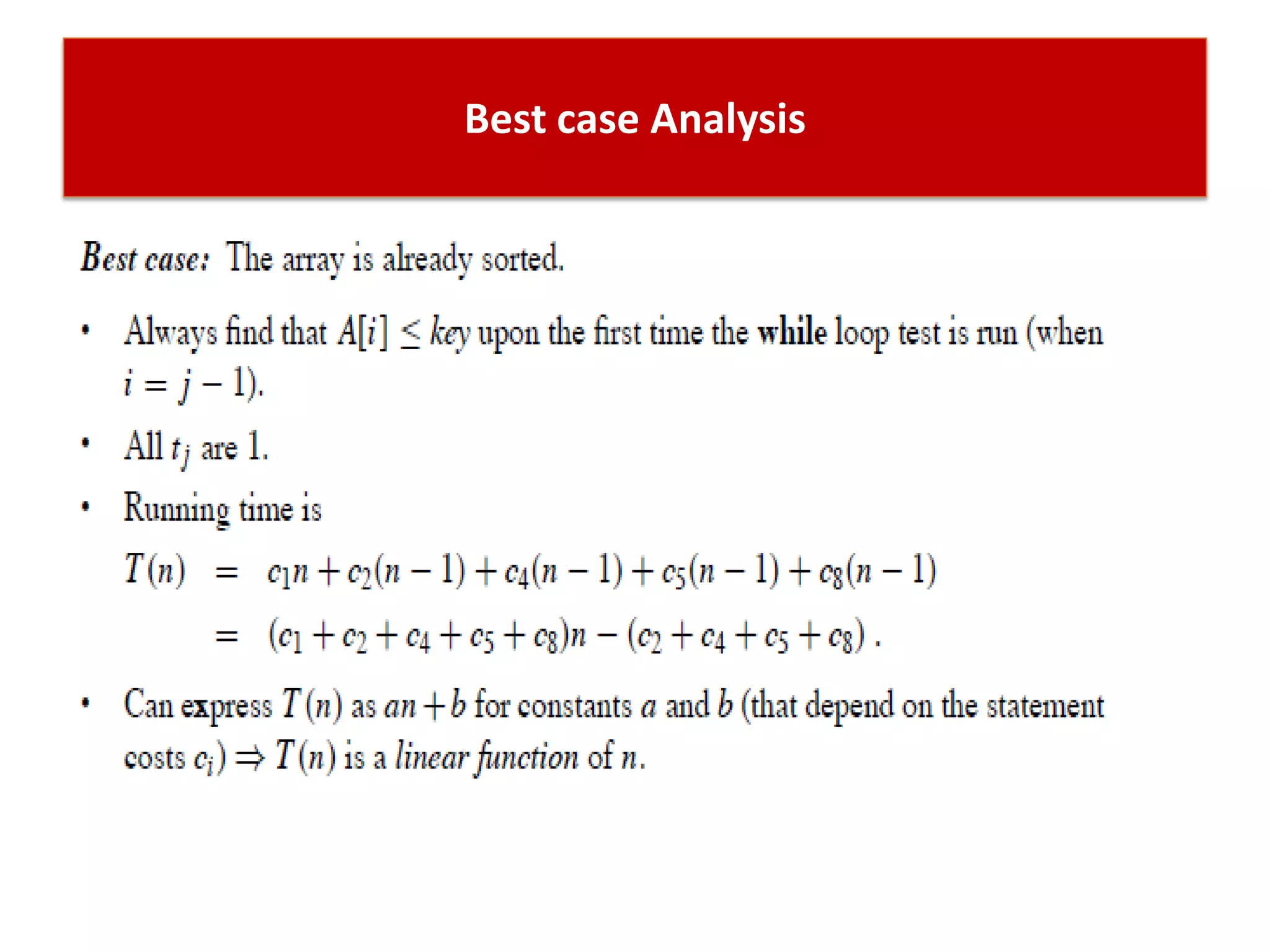

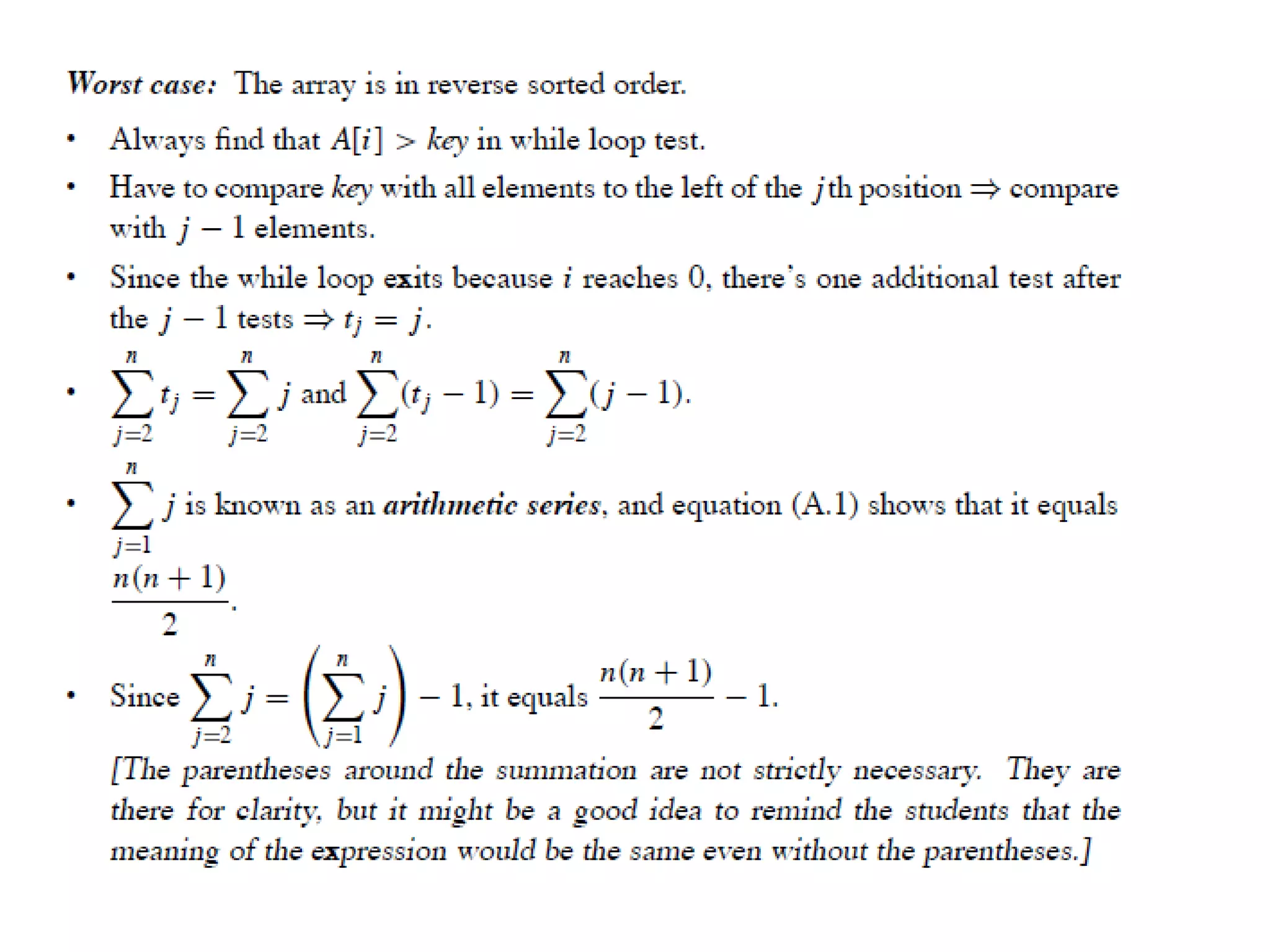

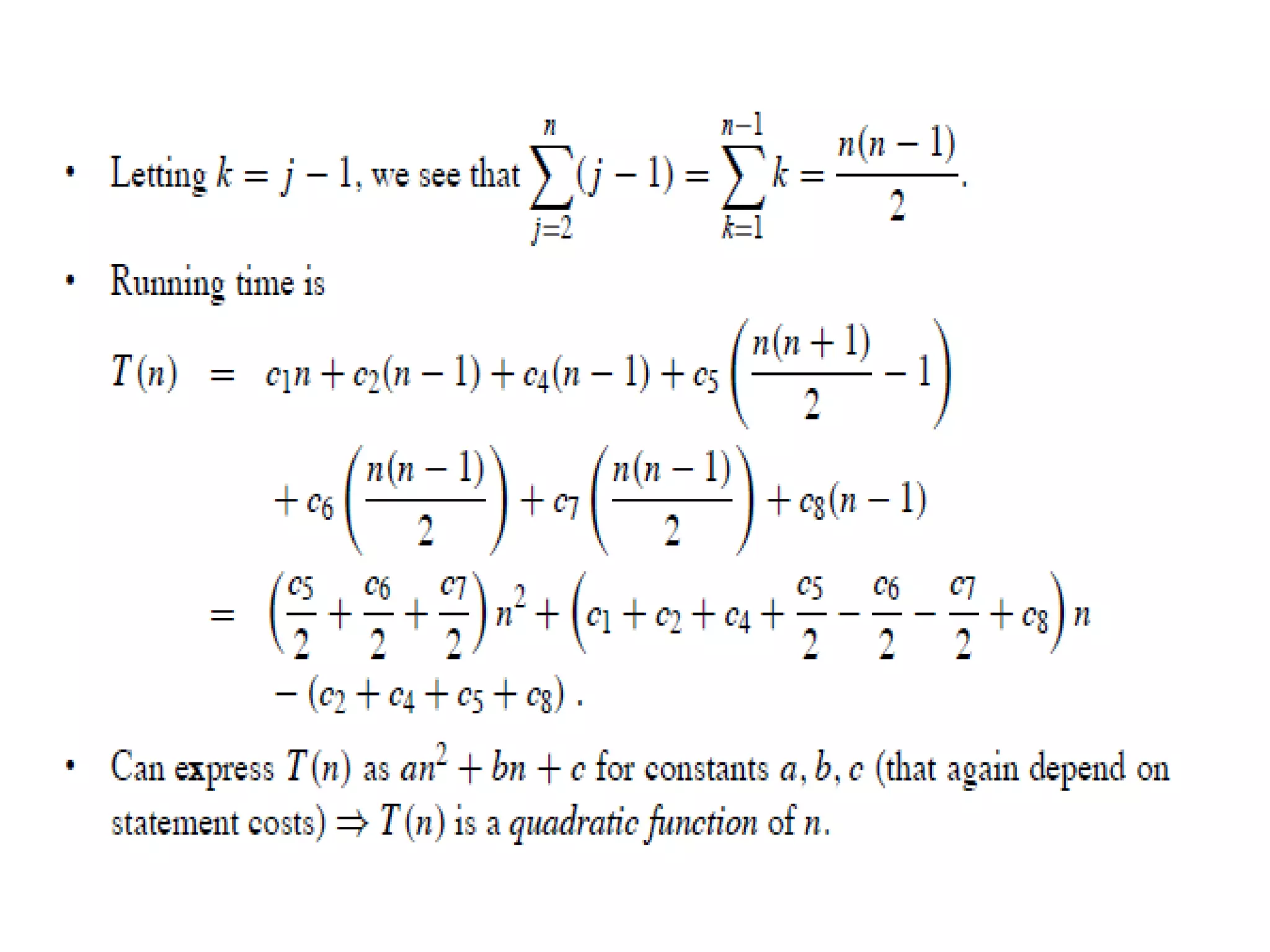

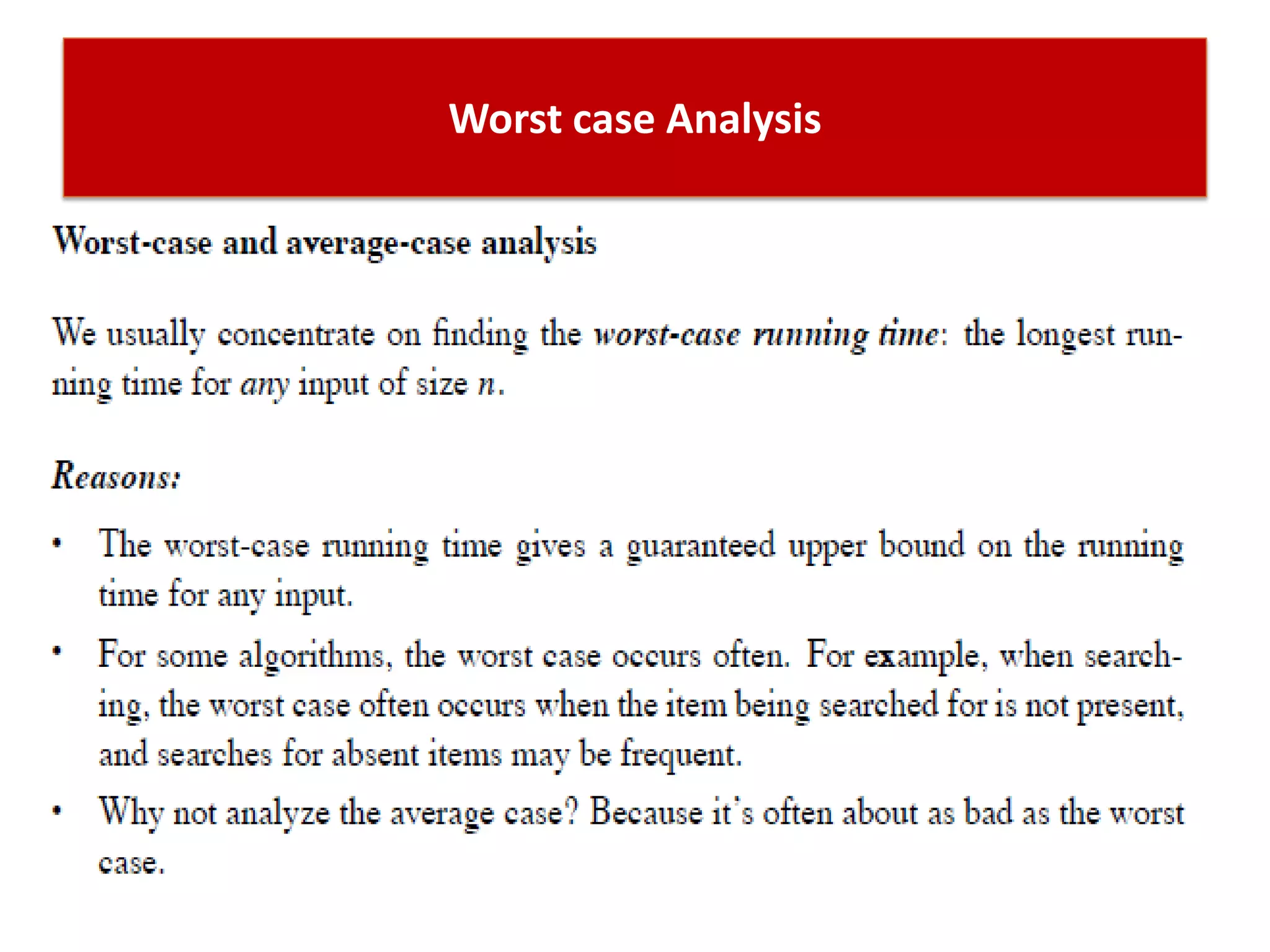

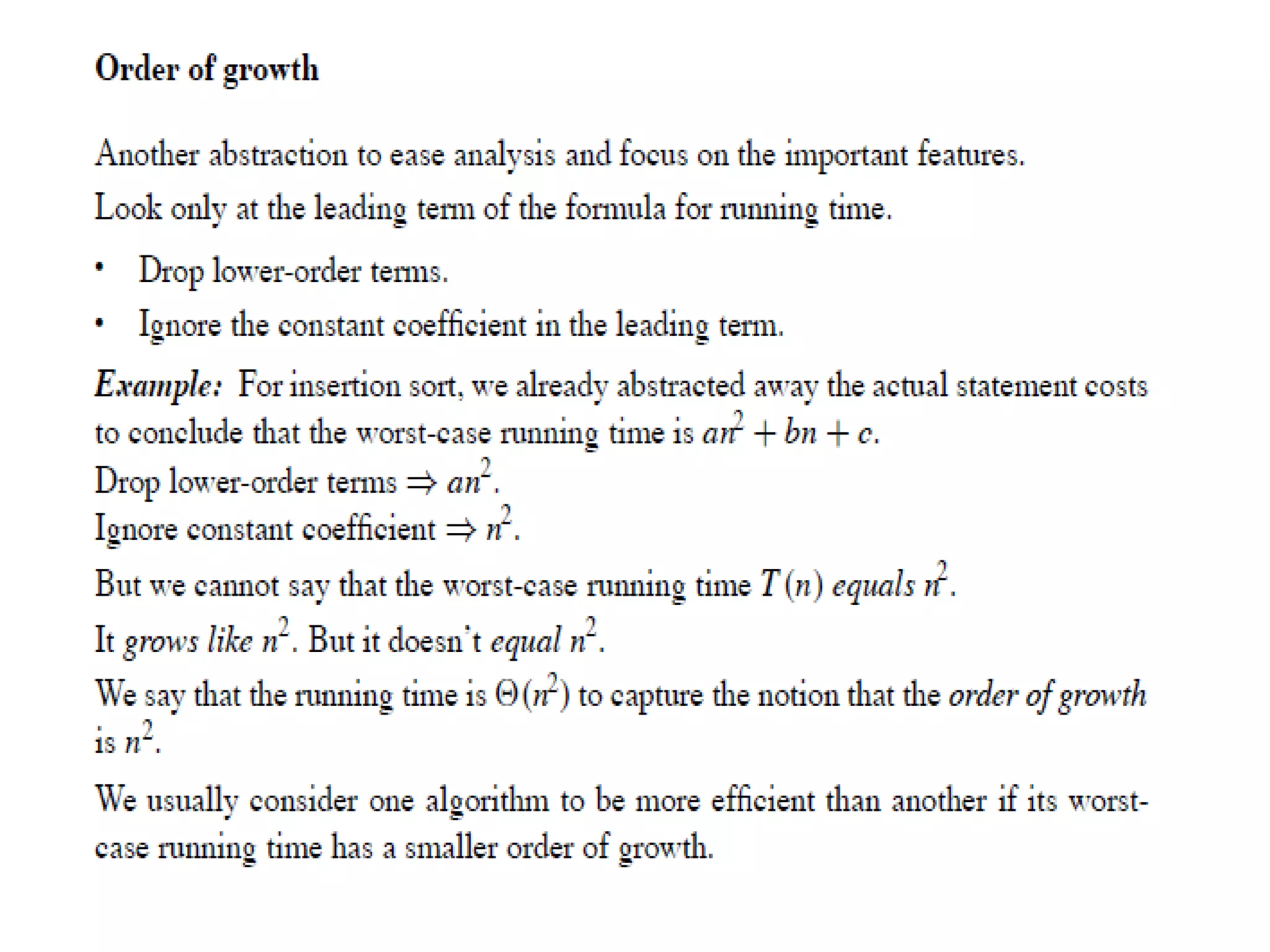

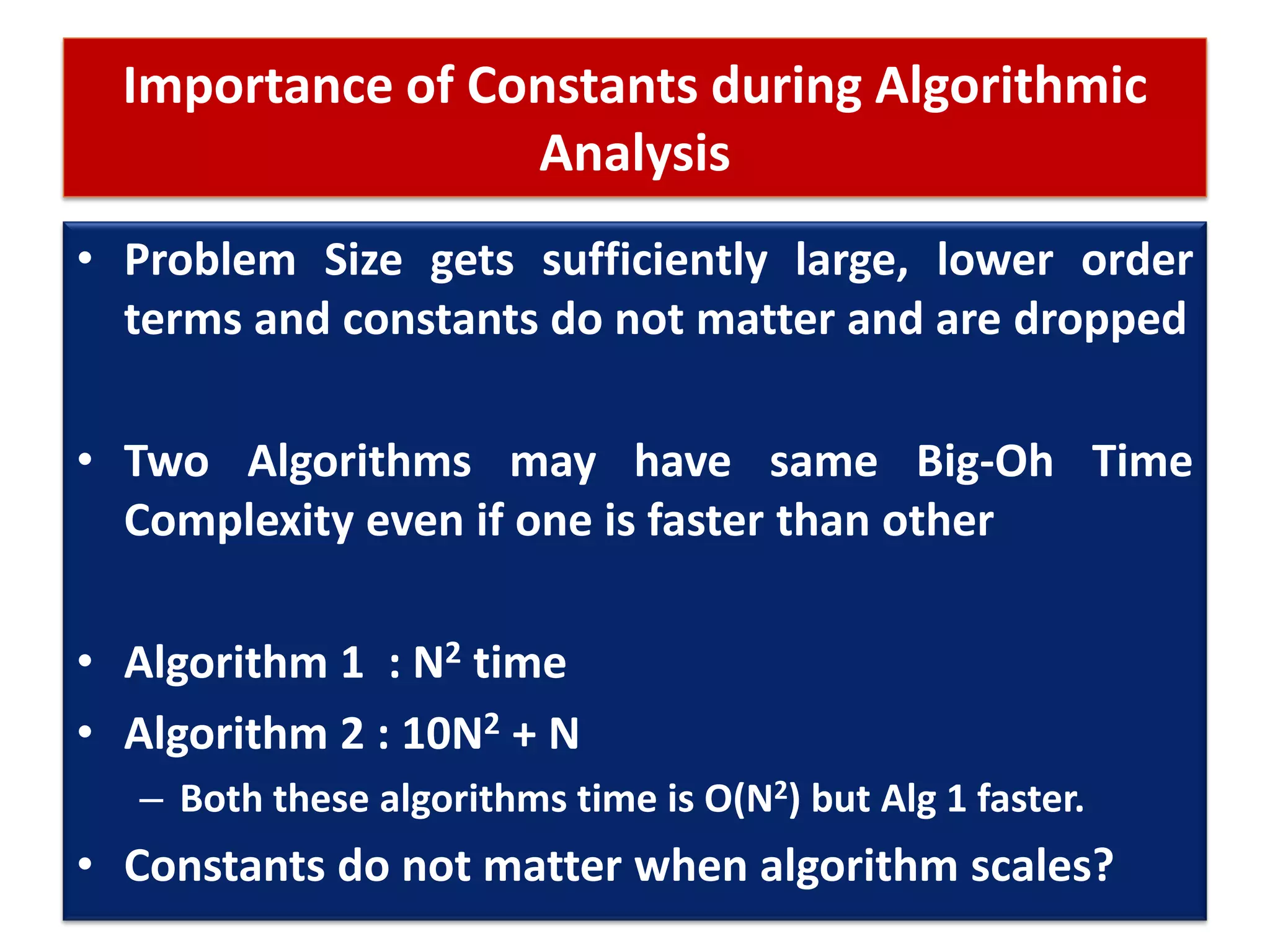

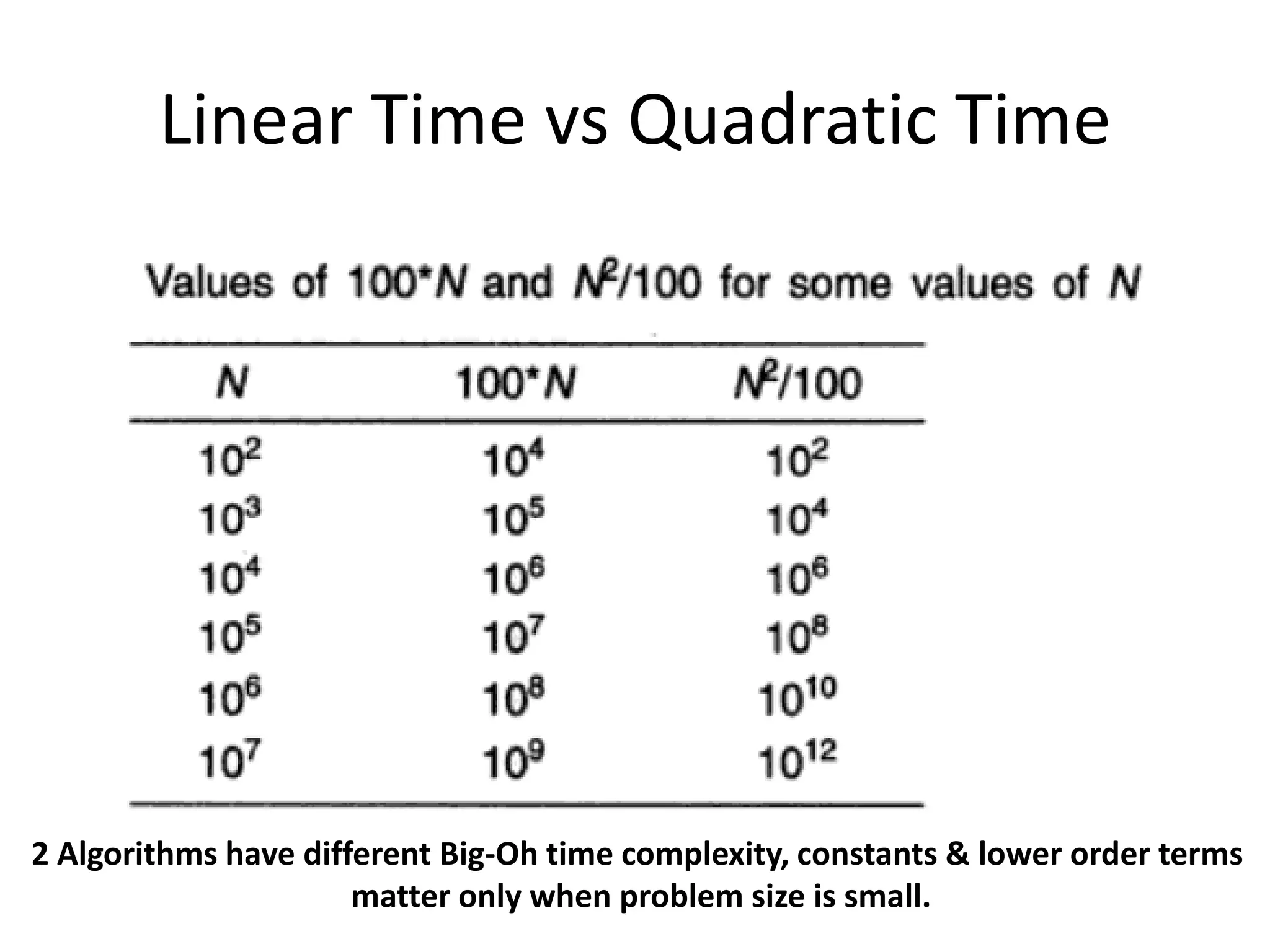

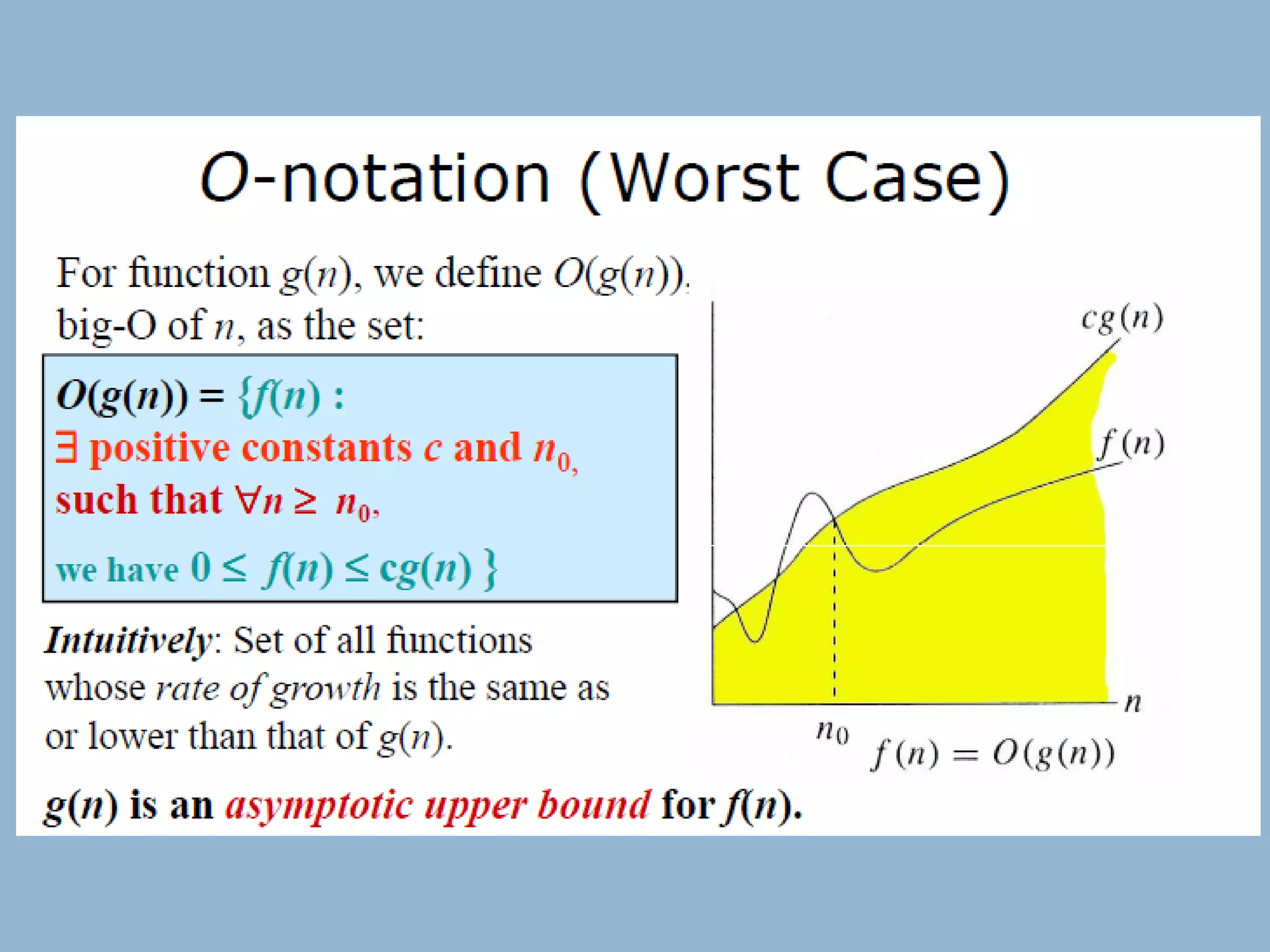

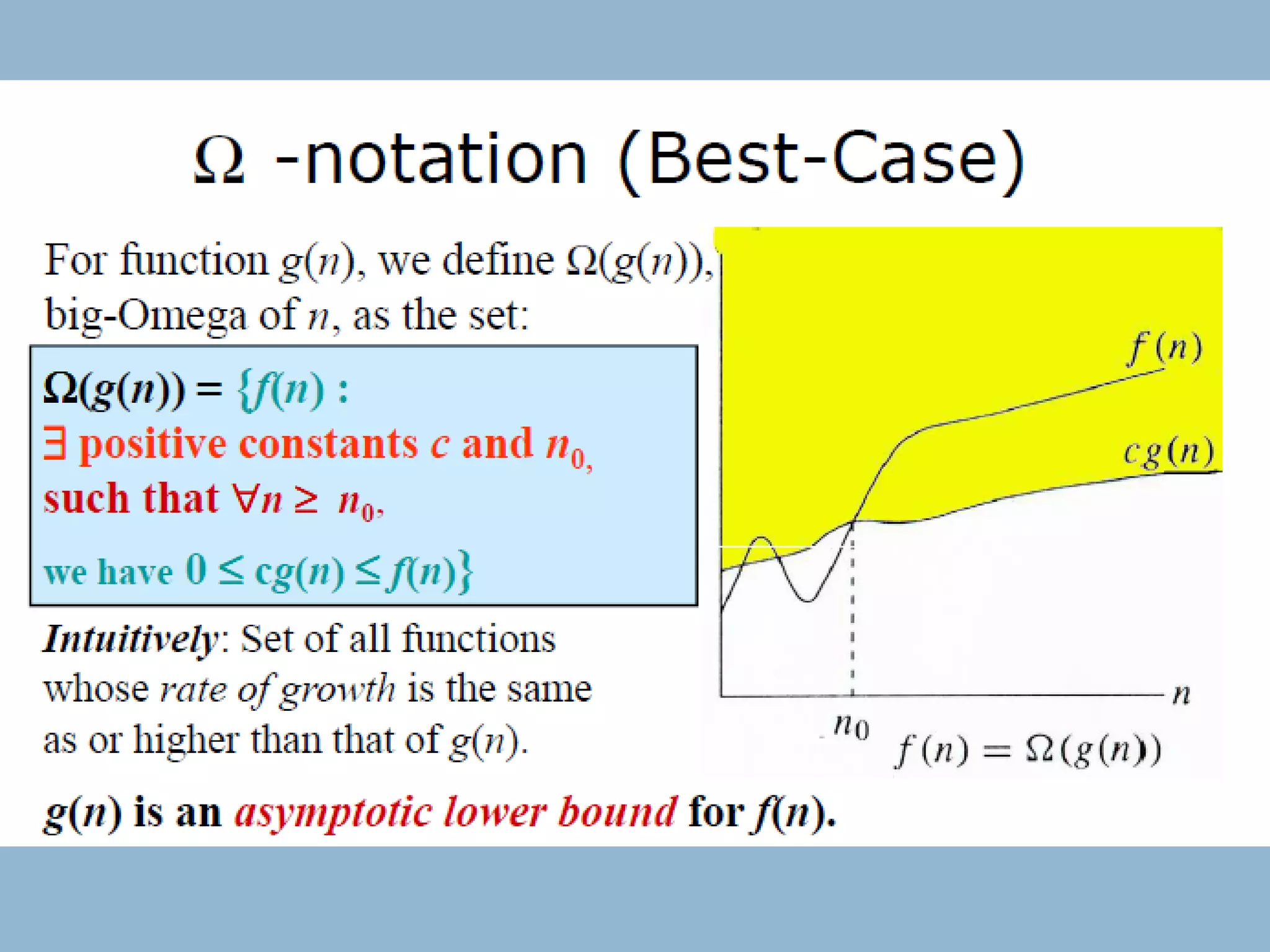

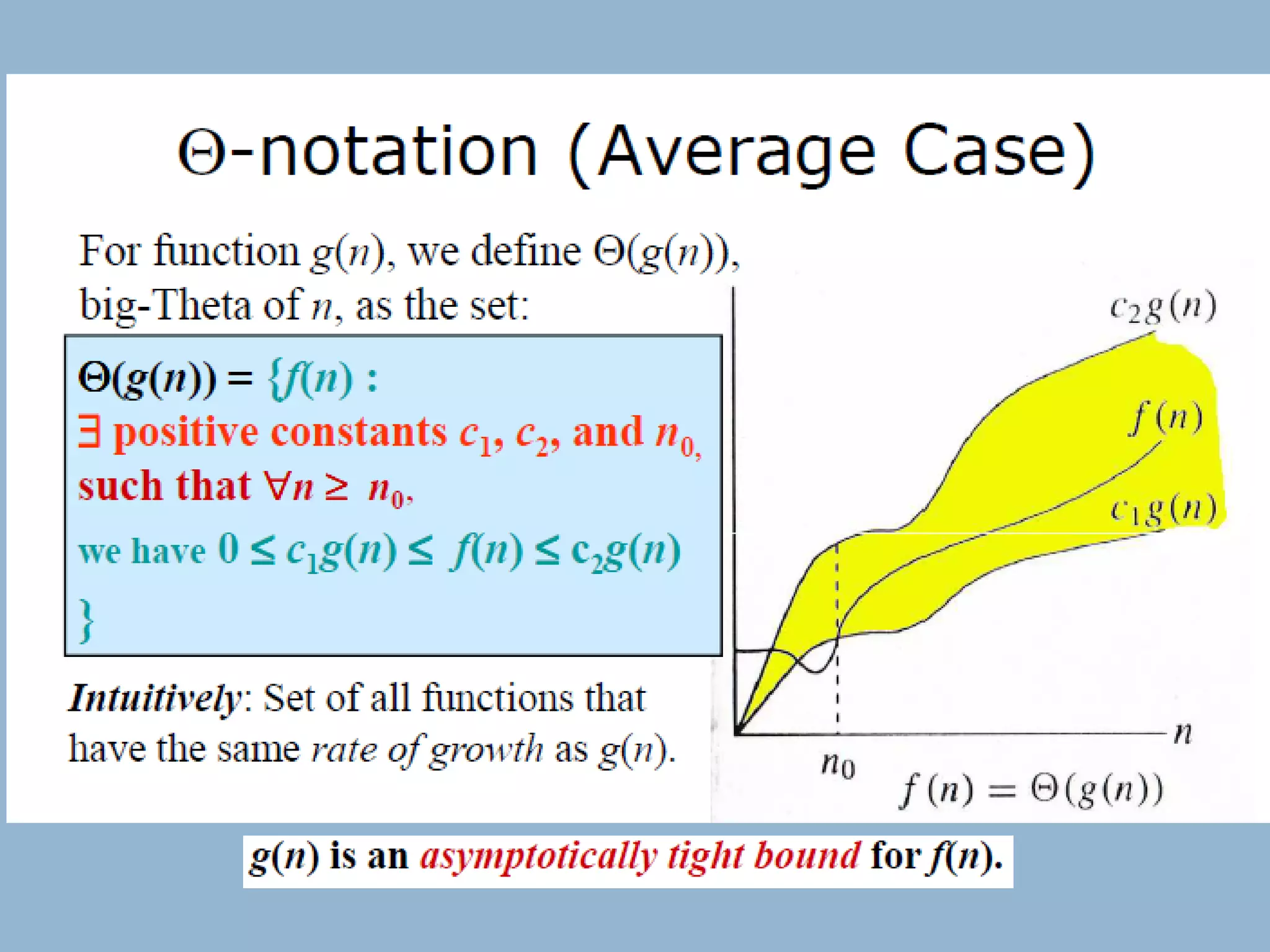

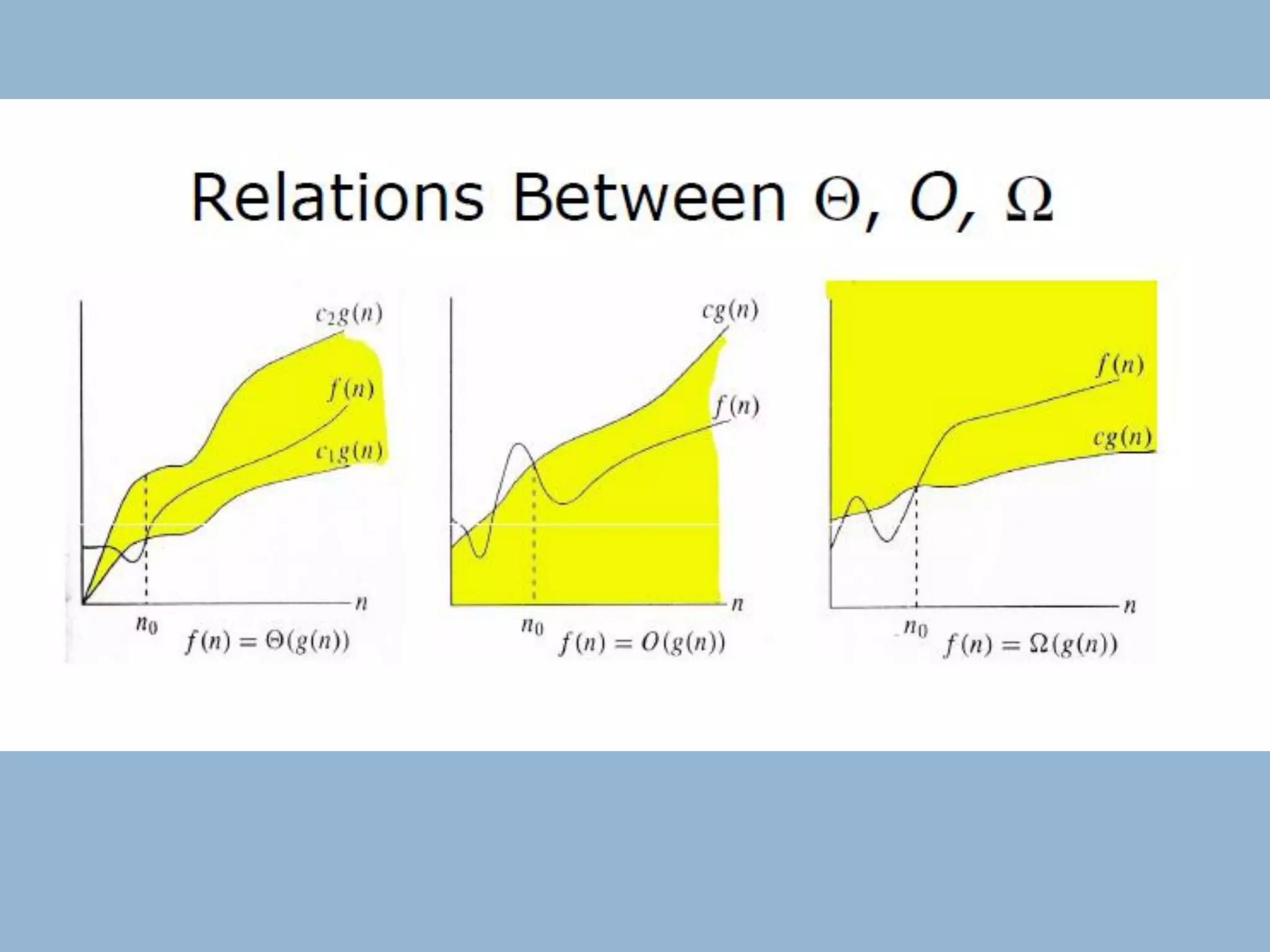

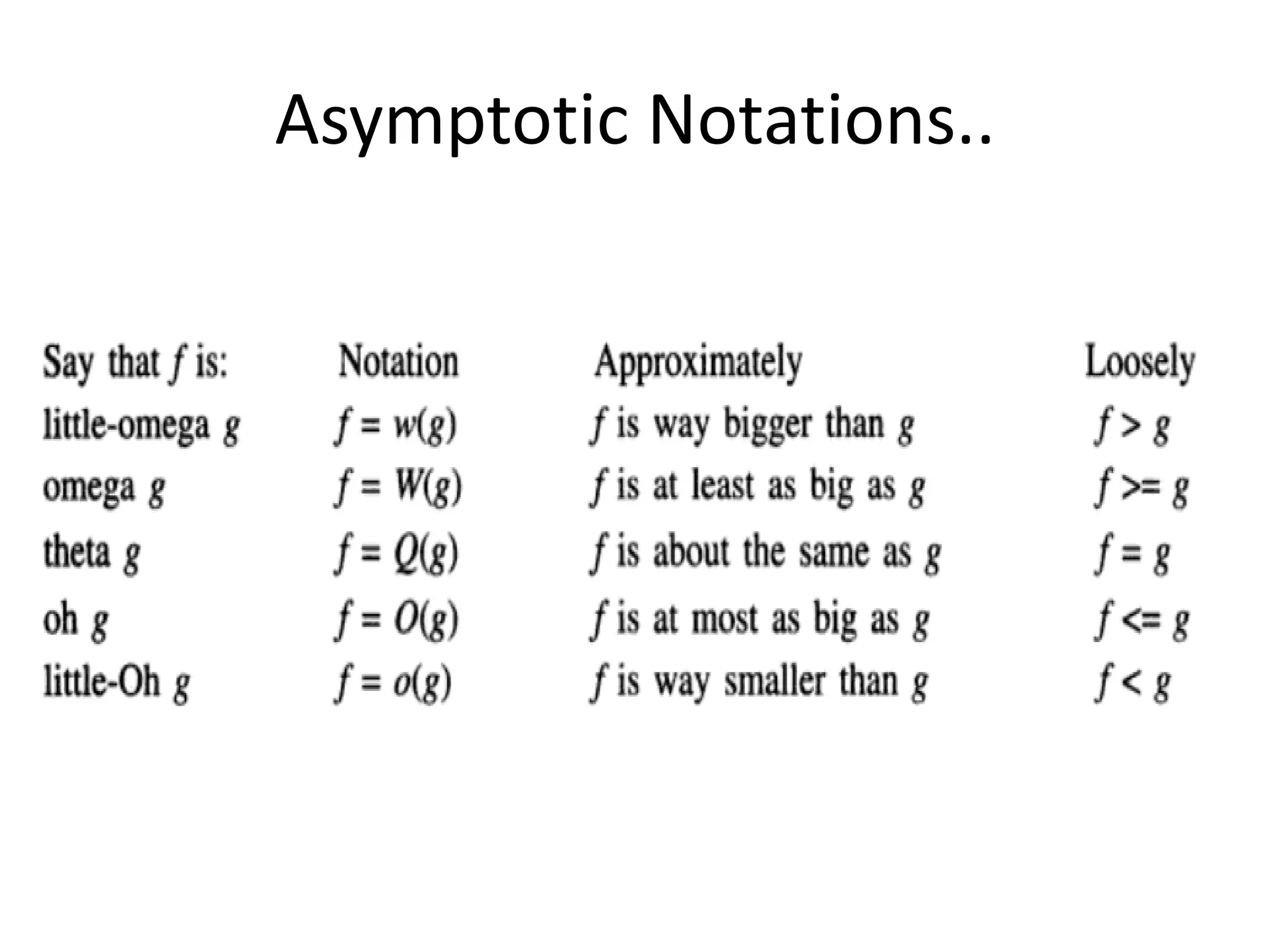

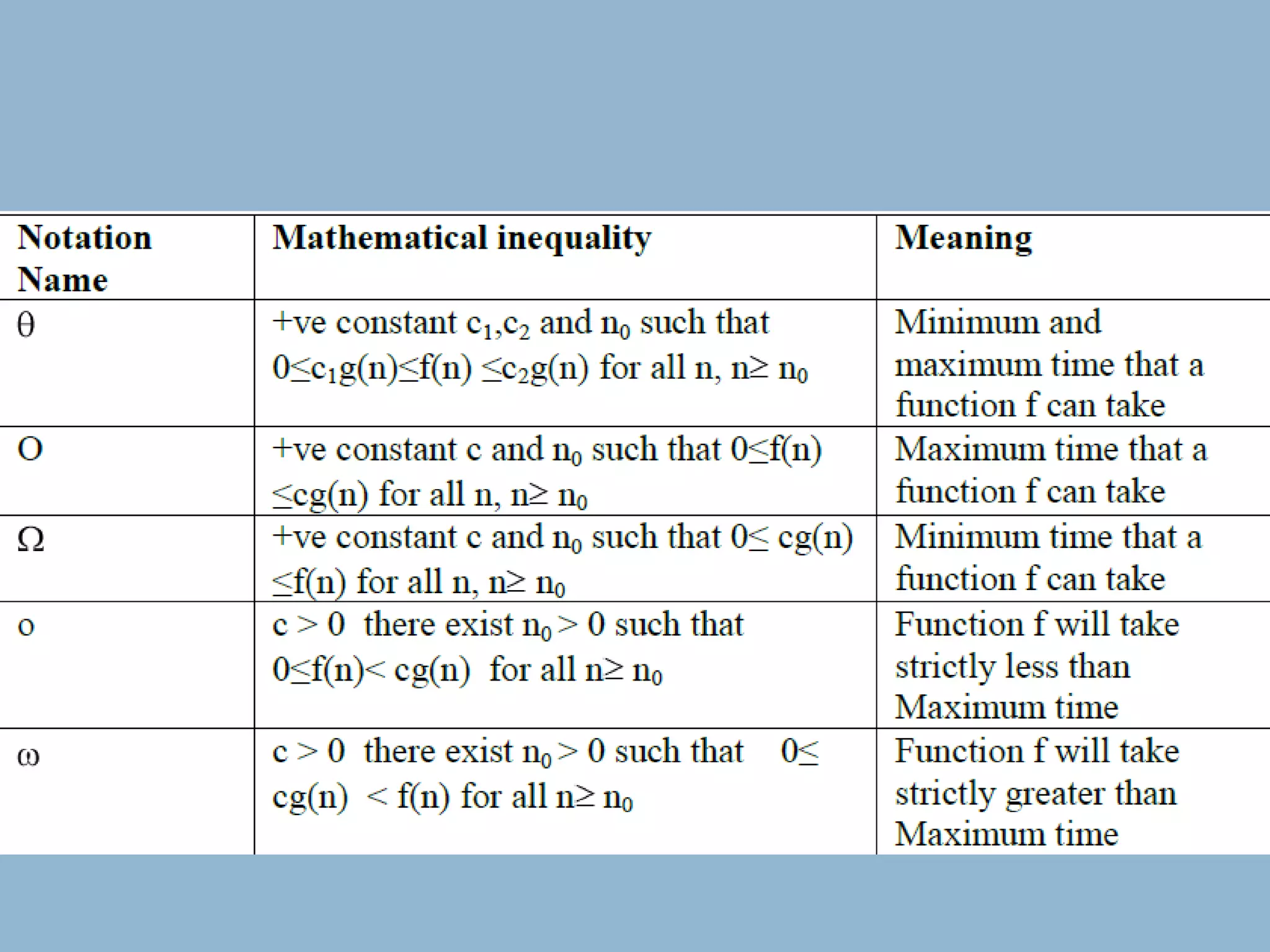

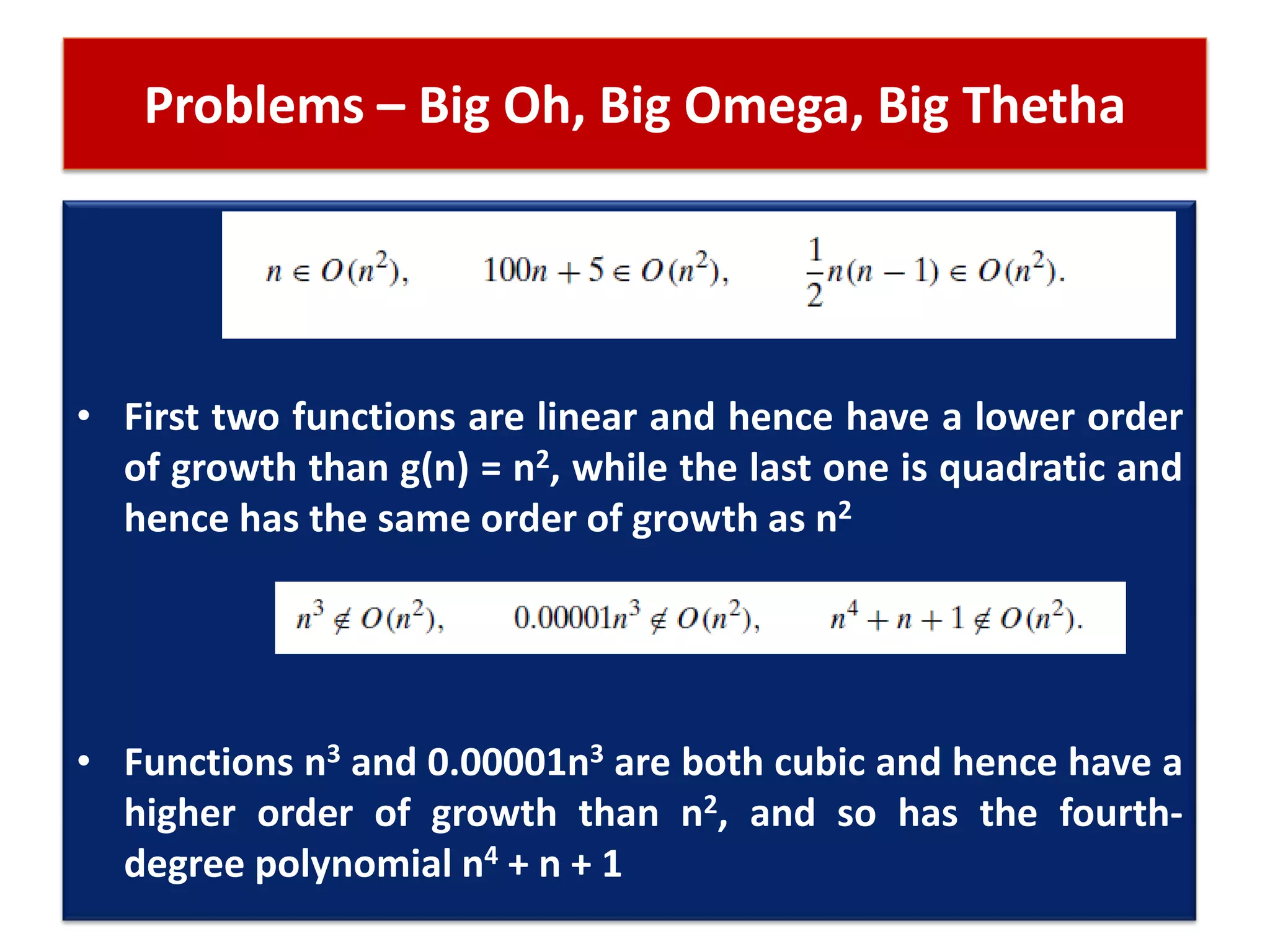

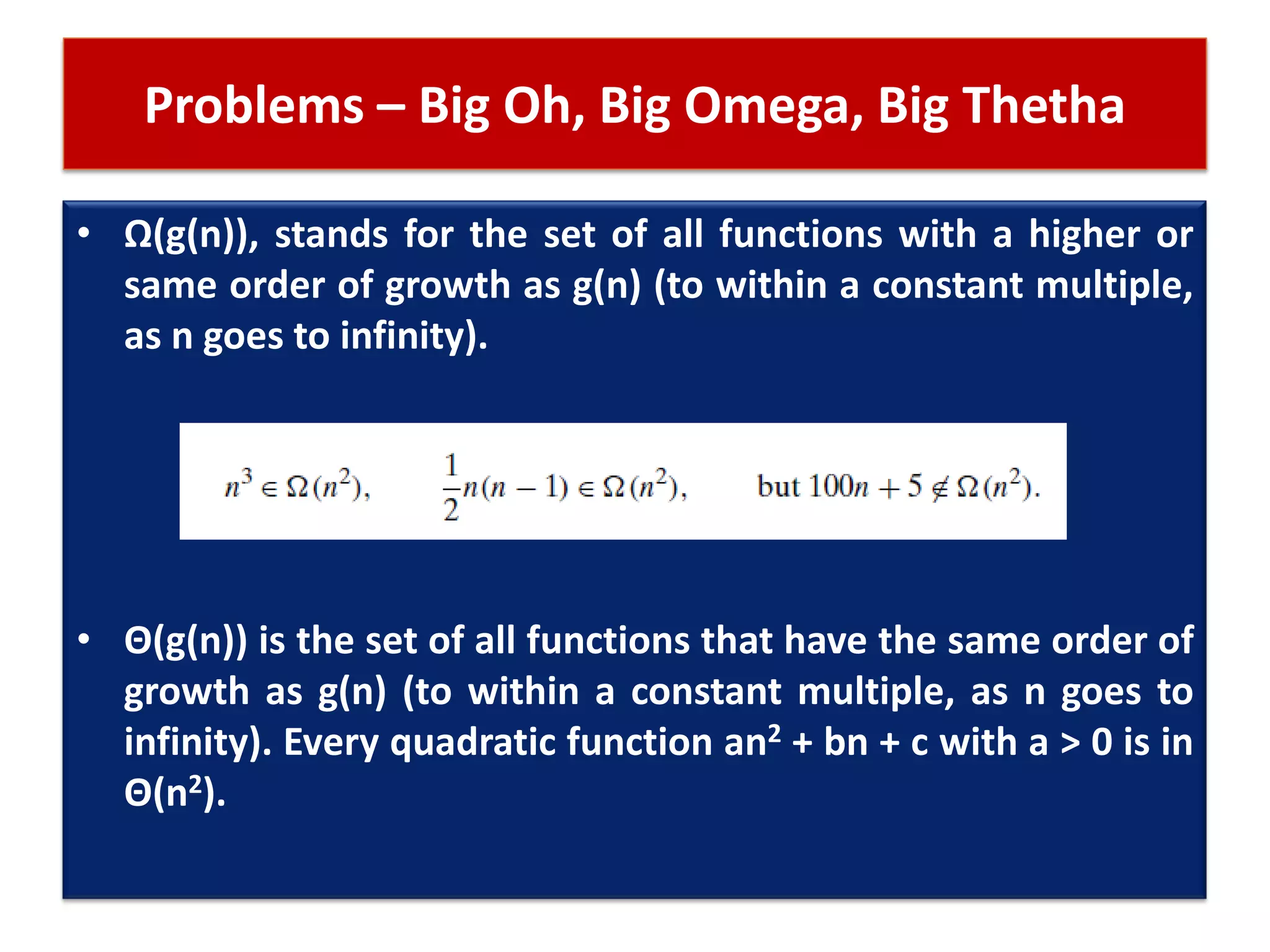

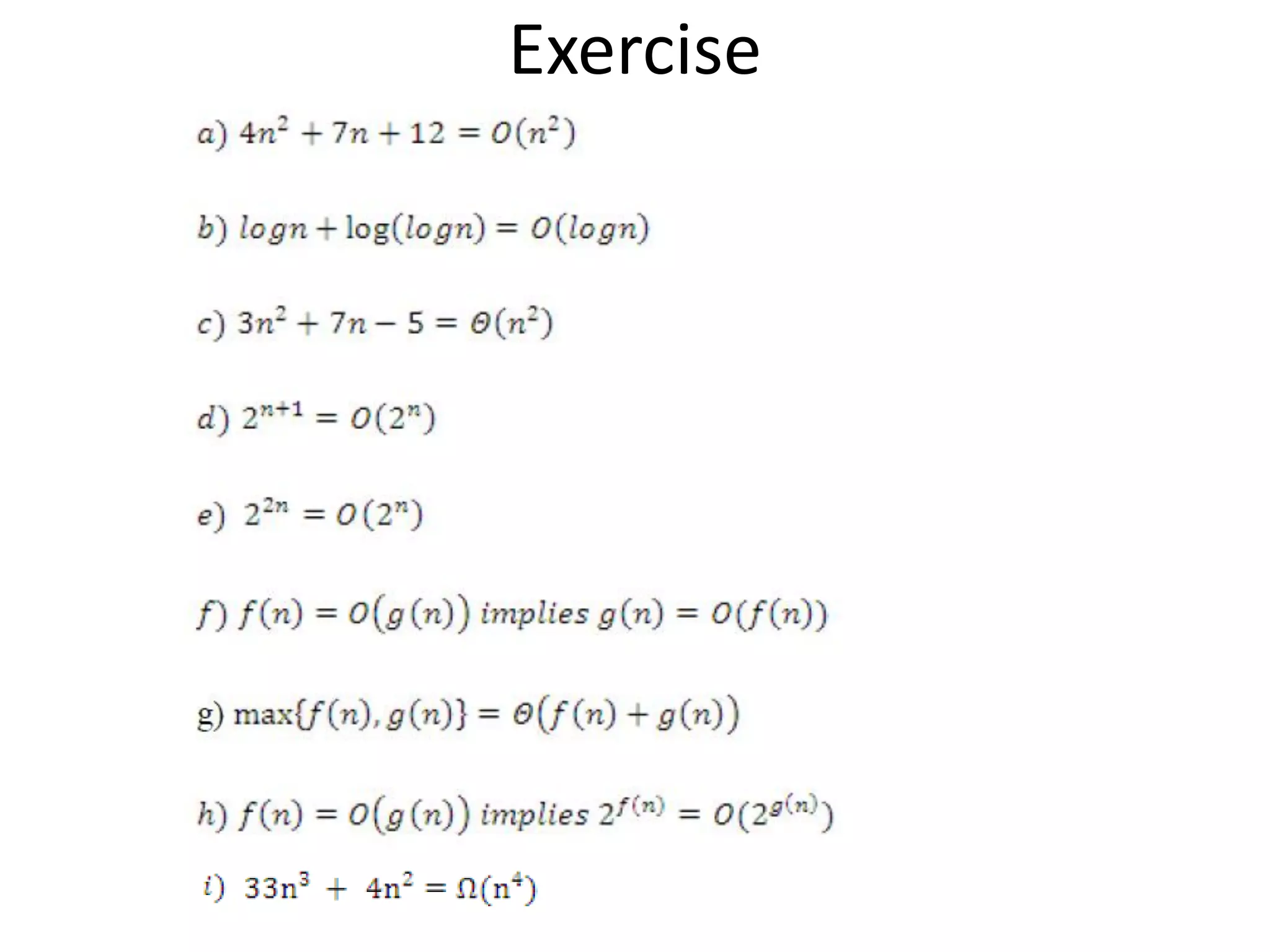

This document discusses asymptotic notations and their use in analyzing the time complexity of algorithms. It introduces the Big-O, Big-Omega, and Big-Theta notations, which describe the asymptotic upper bound, lower bound, and tight bound on an algorithm's running time. Asymptotic notations let us compare algorithms by ignoring constant factors and lower-order terms and keeping only the highest-order term, which dominates as the input size grows. Examples illustrate the different orders of growth and the notations used to describe them.
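For reference, the three notations named above can be stated formally. The block below uses the standard textbook definitions; the symbols $f$, $g$, $c$, and $n_0$ are chosen here for illustration and do not come from the slides.

```latex
\begin{align*}
f(n) = O(g(n))      &\iff \exists\, c > 0,\ n_0 > 0 :\ 0 \le f(n) \le c\, g(n) \ \text{for all } n \ge n_0 \\
f(n) = \Omega(g(n)) &\iff \exists\, c > 0,\ n_0 > 0 :\ 0 \le c\, g(n) \le f(n) \ \text{for all } n \ge n_0 \\
f(n) = \Theta(g(n)) &\iff f(n) = O(g(n)) \ \text{and}\ f(n) = \Omega(g(n))
\end{align*}

% Worked example with f(n) = 3n^2 + 4n + 2 and g(n) = n^2:
% for n >= 1 we have 4n <= 4n^2 and 2 <= 2n^2, hence
\[
3n^2 \;\le\; 3n^2 + 4n + 2 \;\le\; 9n^2 \qquad (n \ge 1),
\]
% so c = 9, n_0 = 1 witnesses O(n^2); c = 3, n_0 = 1 witnesses \Omega(n^2);
% together they give \Theta(n^2).
```

The constants are not unique: any larger $c$ or $n_0$ works equally well, which is why the notation discards them.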

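![How to calculate running time then?](https://image.slidesharecdn.com/lecture4-asymptoticnotations-160326043439/75/Lecture-4-asymptotic-notations-2-2048.jpg)

    for (i = 0; i < n; i++)        // 1 ; n+1 ; n times
    {
        for (j = 0; j < n; j++)    // n ; n(n+1) ; n(n)
        {
            c[i][j] = a[i][j] + b[i][j];
        }
    }

Each comment lists how many times the initialization, the test, and the increment of that loop run. Adding the n·n executions of the assignment gives 1 + (n+1) + n + n + n(n+1) + n² + n² = 3n² + 4n + 2 = O(n²).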
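The count on the slide can be checked mechanically. The sketch below is not from the lecture: `count_steps`, `closed_form`, and `print_comparison` are hypothetical helpers, and the cost model (one step per initialization, test, increment, and assignment) is an assumption taken from the slide's comments. The loops are written with an explicit test so that the final, failing comparison is counted as well.

```c
#include <stdio.h>

/* Hypothetical helper: walks the same nested loop as the slide and
 * counts one step per initialization, test, increment, and assignment. */
long count_steps(int n)
{
    long steps = 0;
    int i, j;

    steps++;                          /* i = 0           : 1 time         */
    for (i = 0; ; i++) {
        steps++;                      /* test i < n      : n + 1 times    */
        if (!(i < n))
            break;
        steps++;                      /* j = 0           : n times        */
        for (j = 0; ; j++) {
            steps++;                  /* test j < n      : n(n + 1) times */
            if (!(j < n))
                break;
            steps++;                  /* assignment body : n * n times    */
            steps++;                  /* j++             : n * n times    */
        }
        steps++;                      /* i++             : n times        */
    }
    return steps;
}

/* Closed form stated on the slide: 3n^2 + 4n + 2. */
long closed_form(int n)
{
    return 3L * n * n + 4L * n + 2L;
}

/* Prints the counted and predicted step totals side by side. */
void print_comparison(int n)
{
    printf("n = %d: counted = %ld, formula = %ld\n",
           n, count_steps(n), closed_form(n));
}
```

Calling `print_comparison(3)` from a `main` function shows 41 for both values, matching 3·9 + 4·3 + 2. Doubling n roughly quadruples the count, which is the behavior that O(n²) summarizes.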