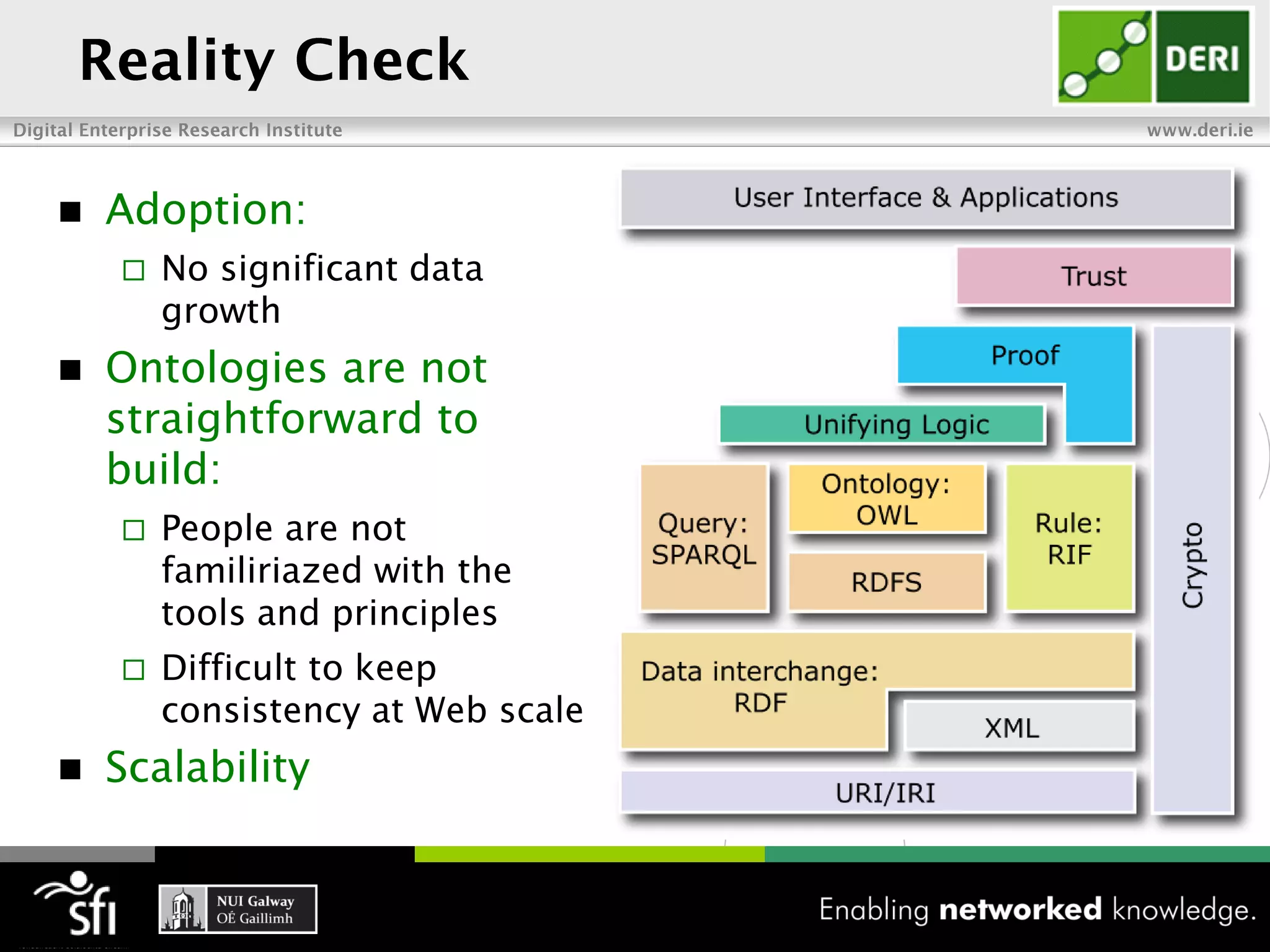

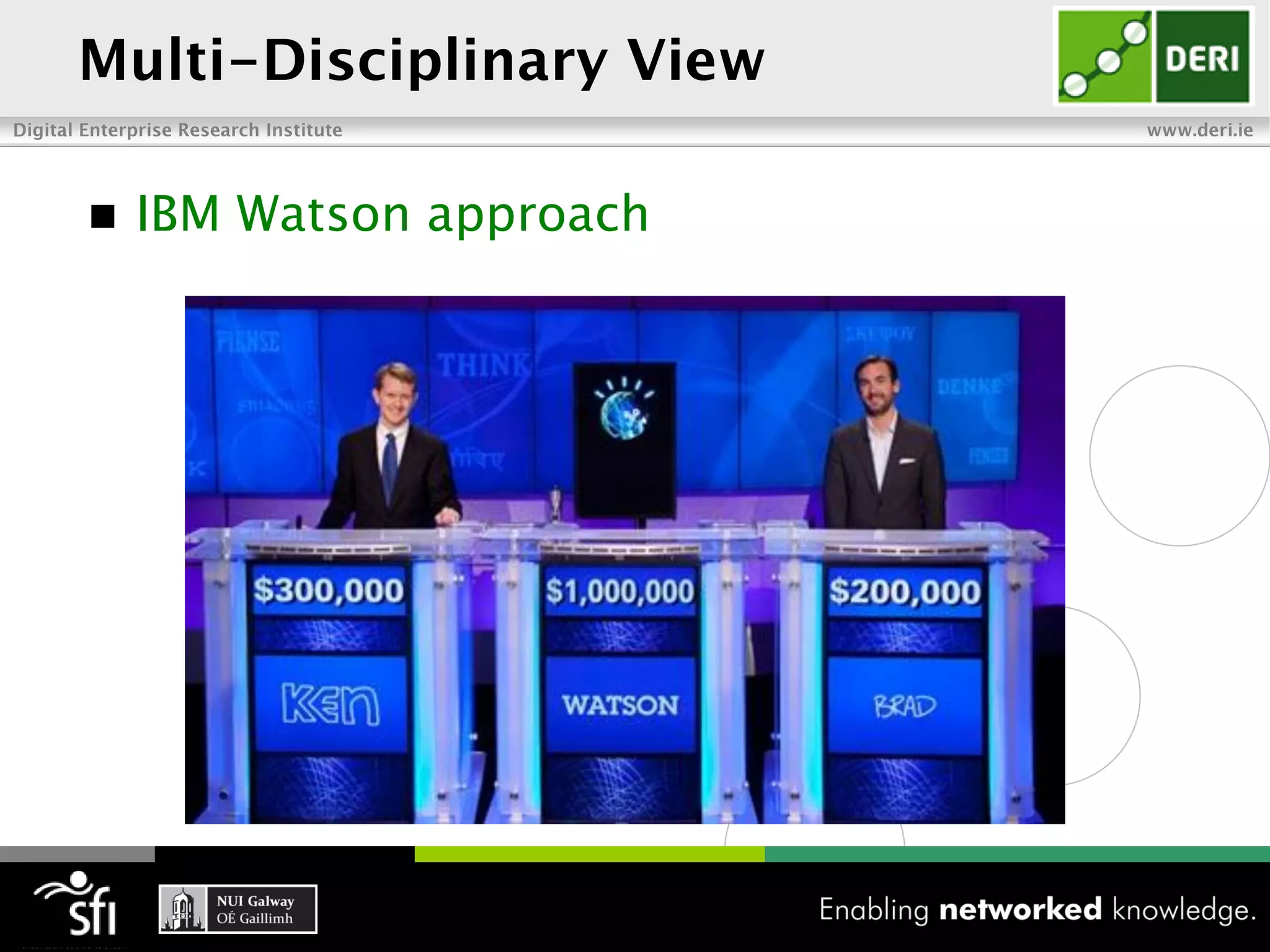

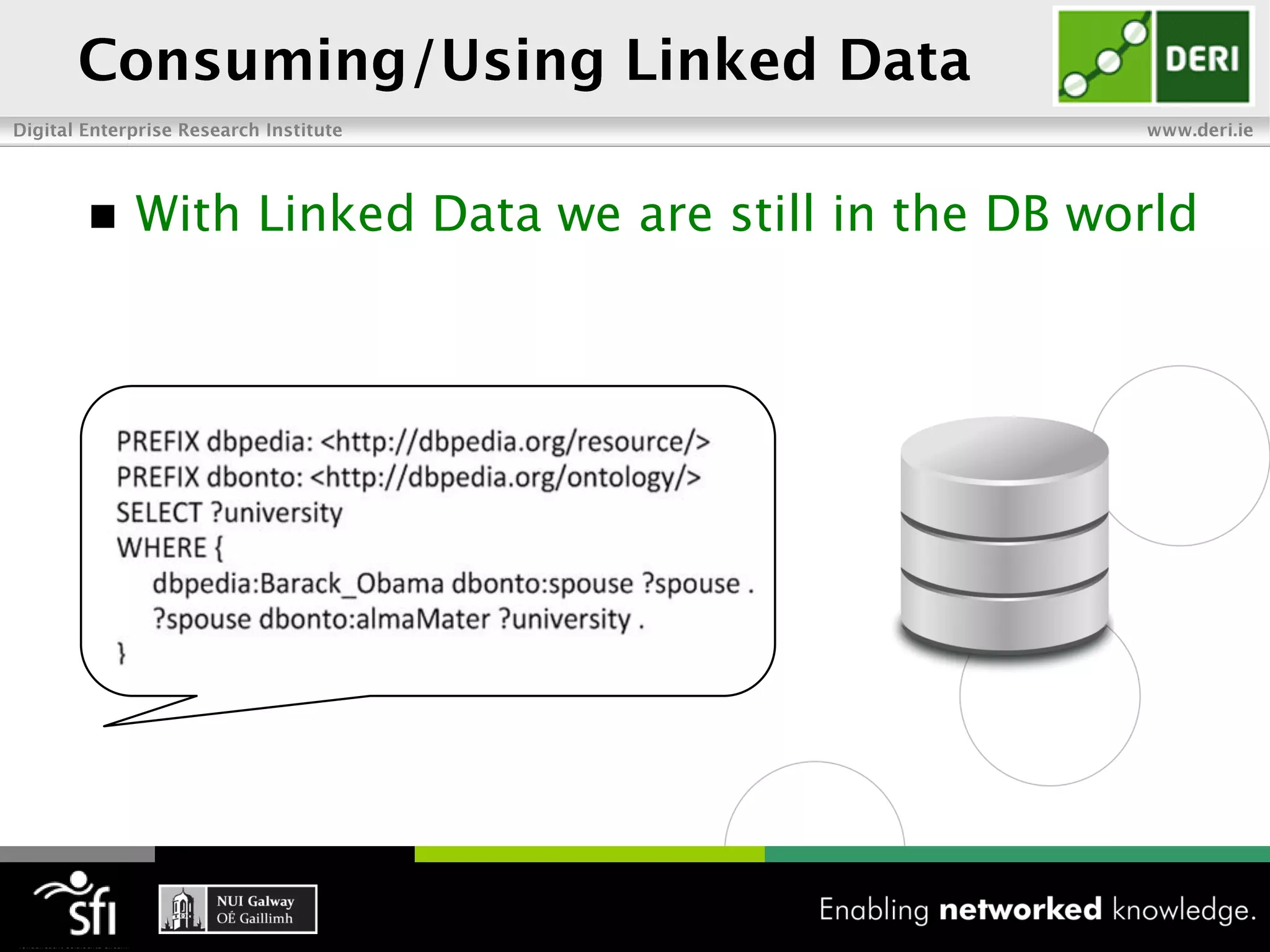

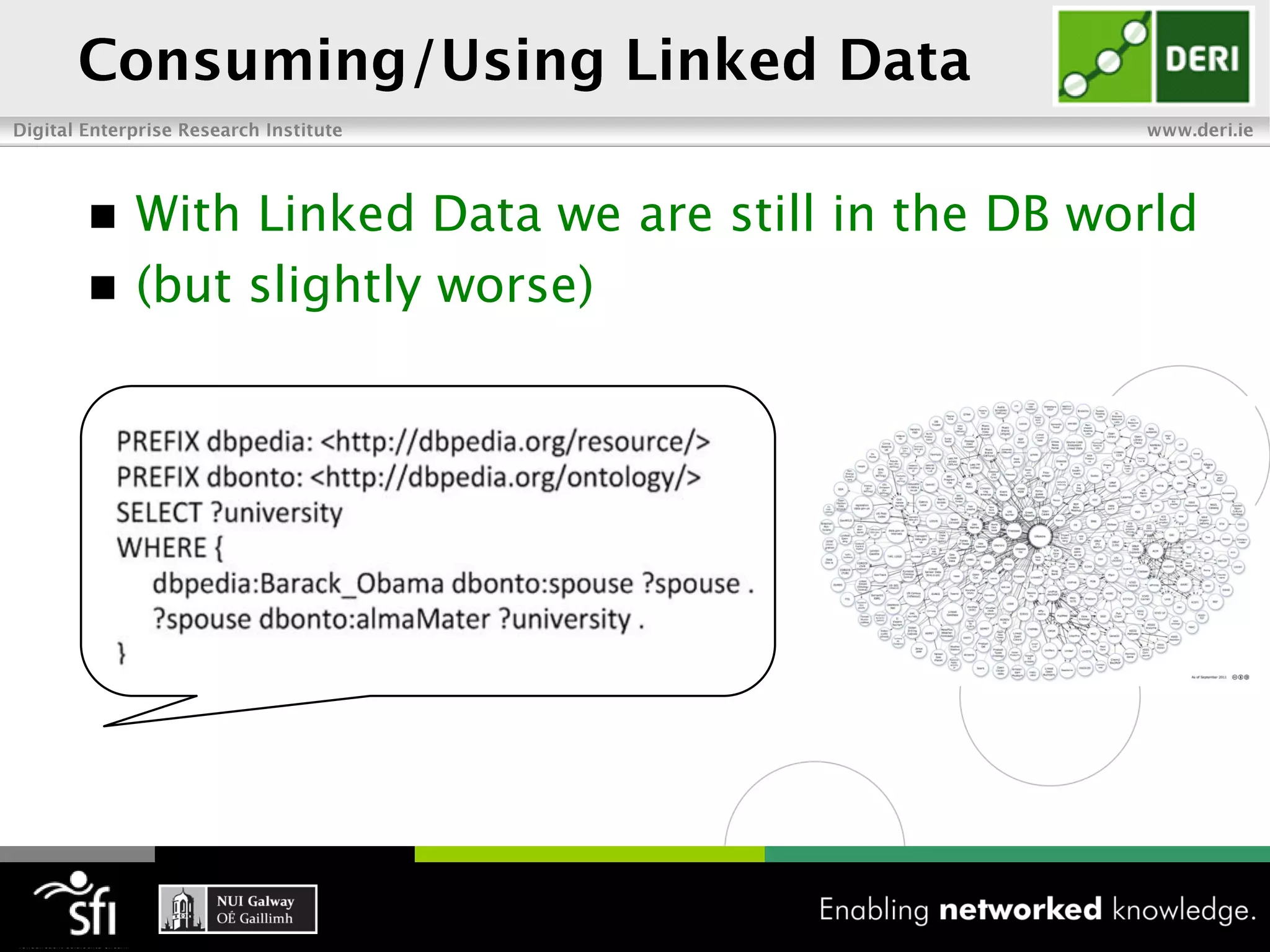

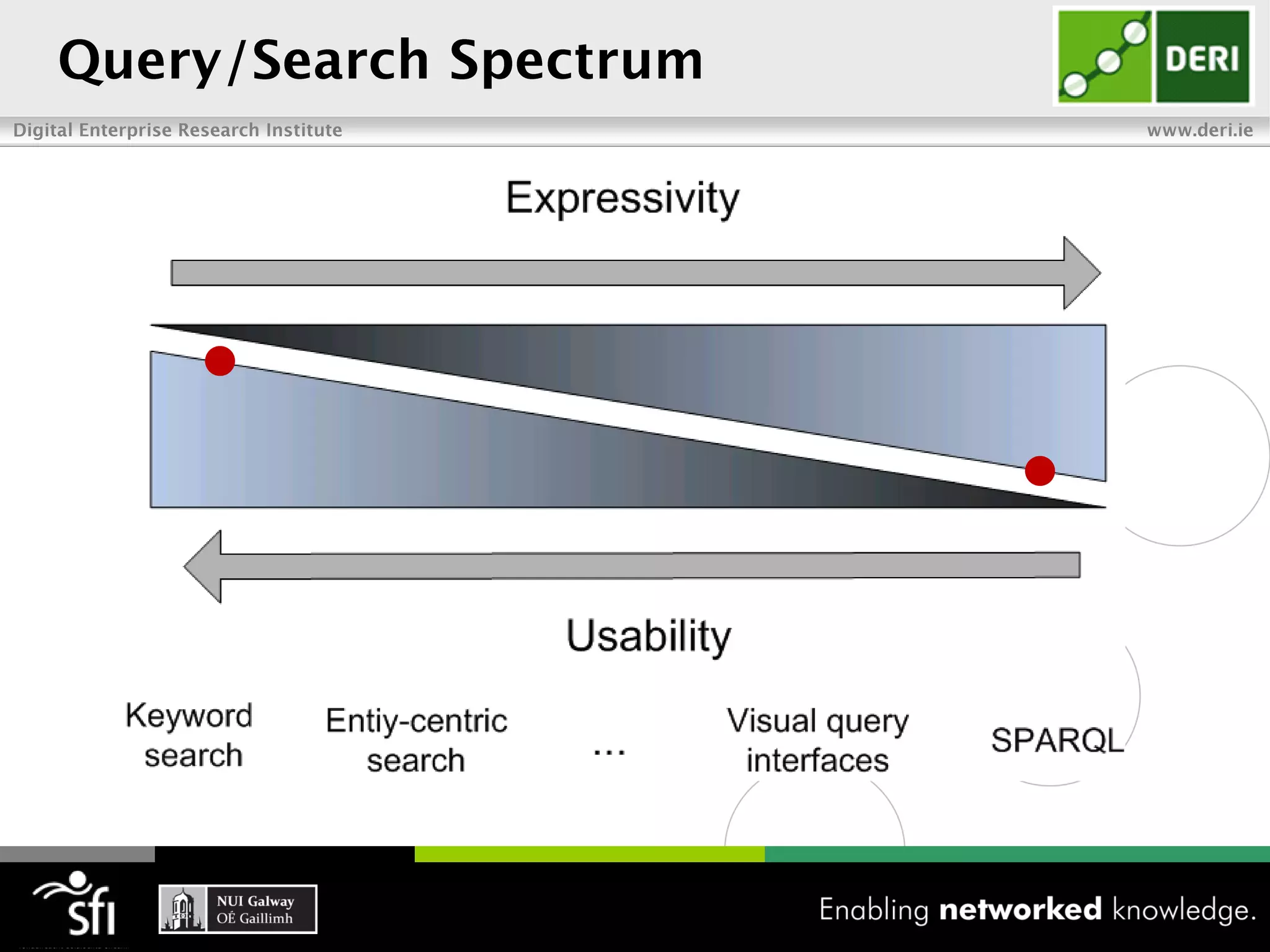

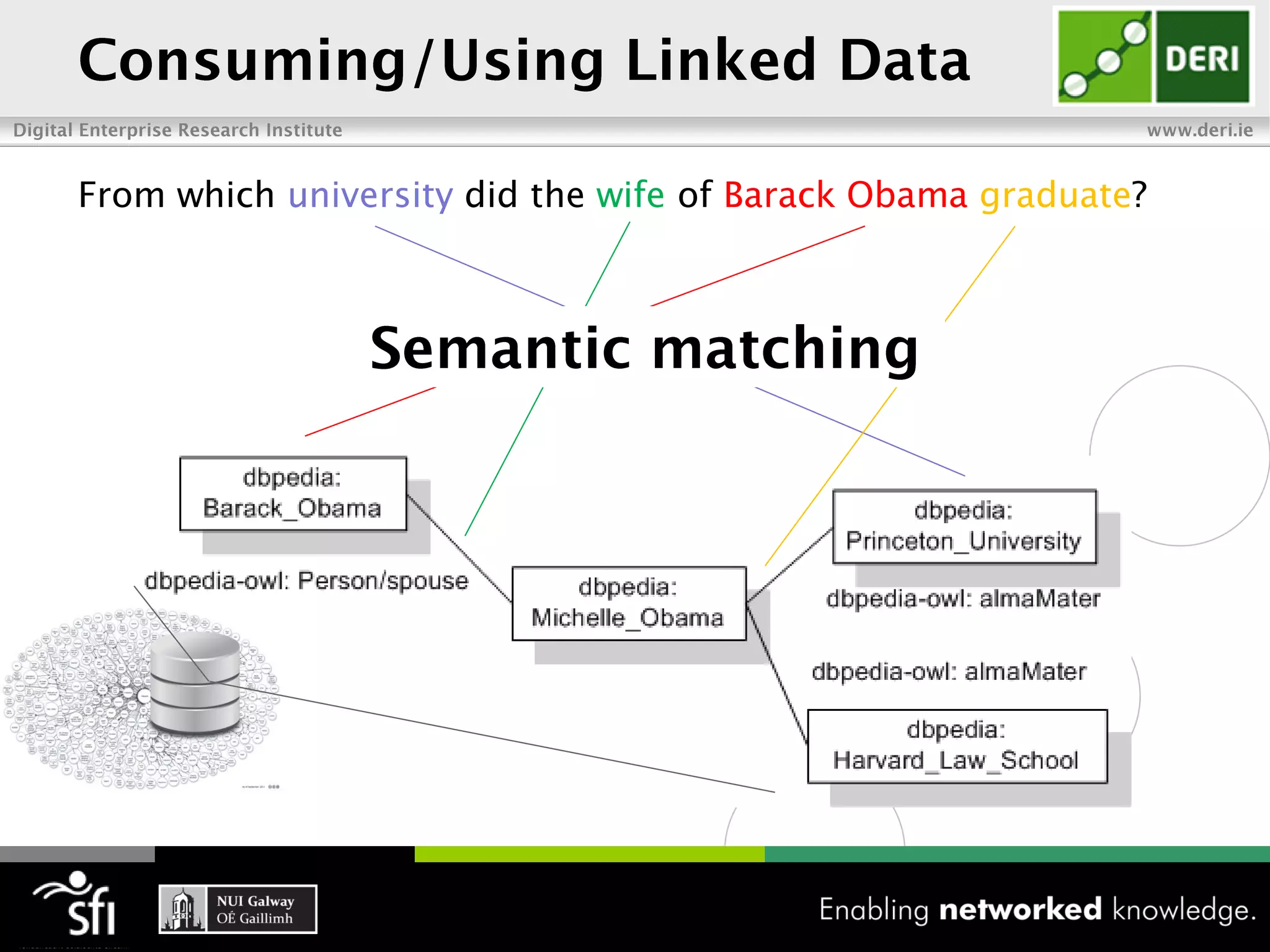

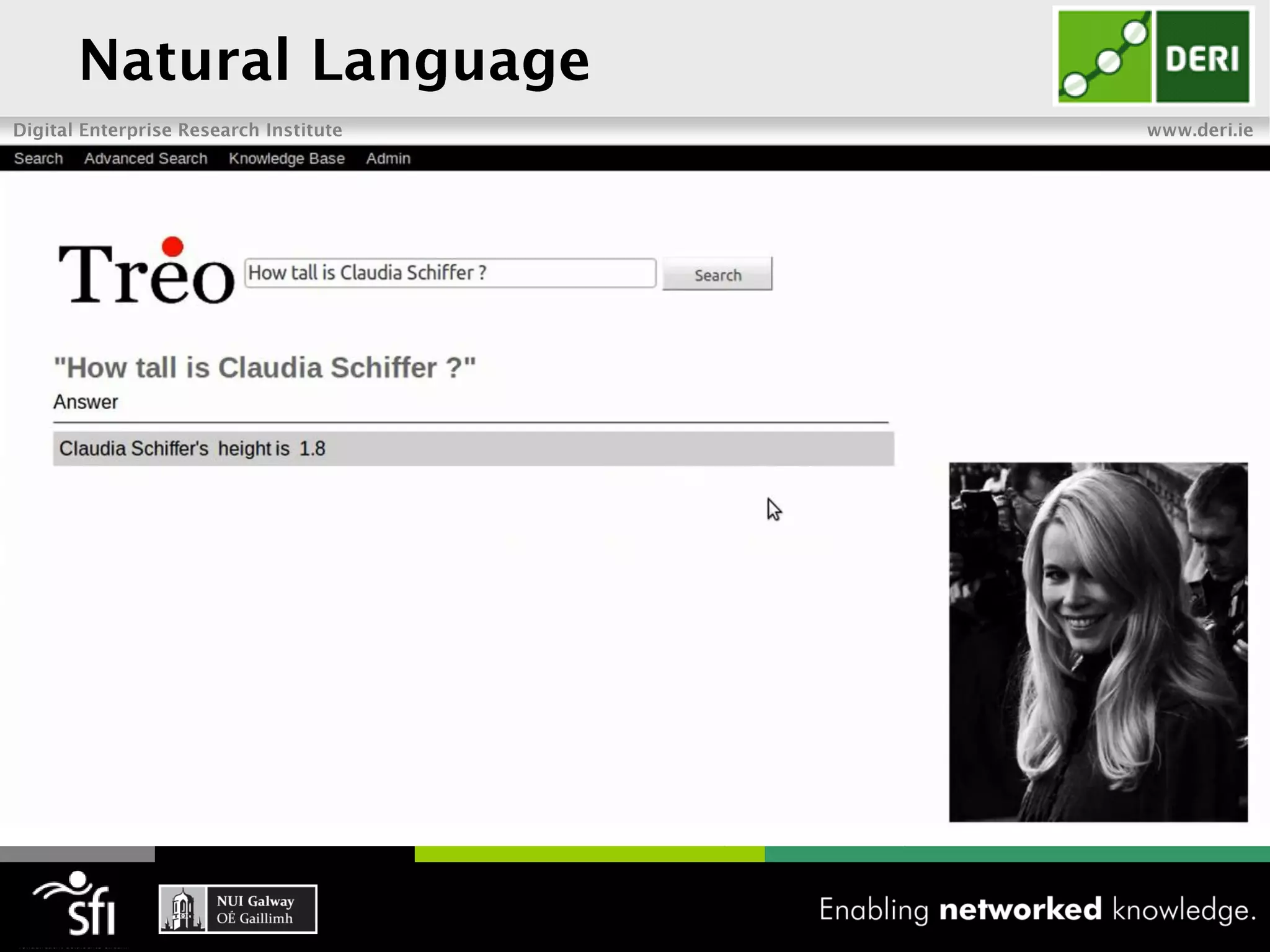

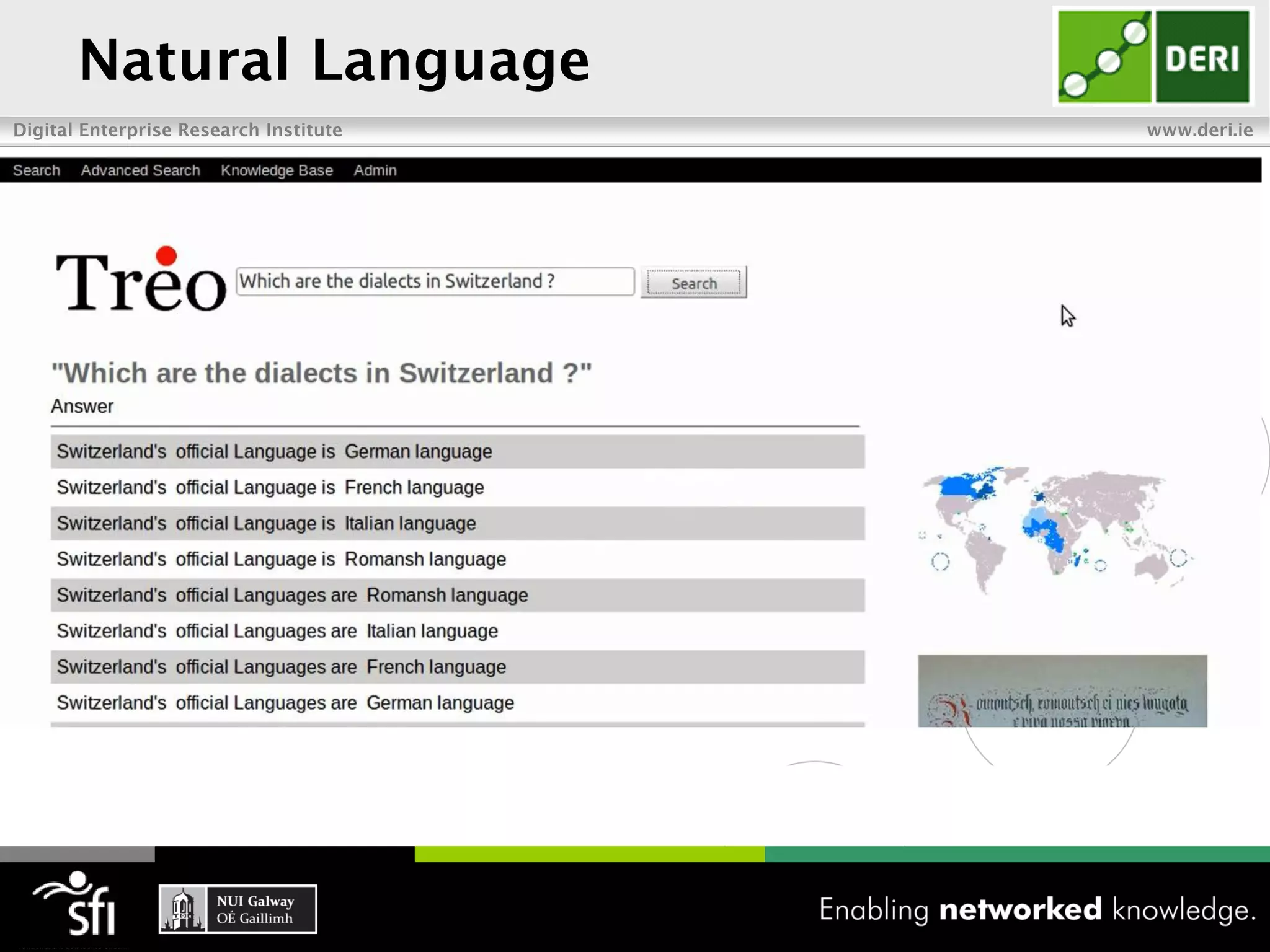

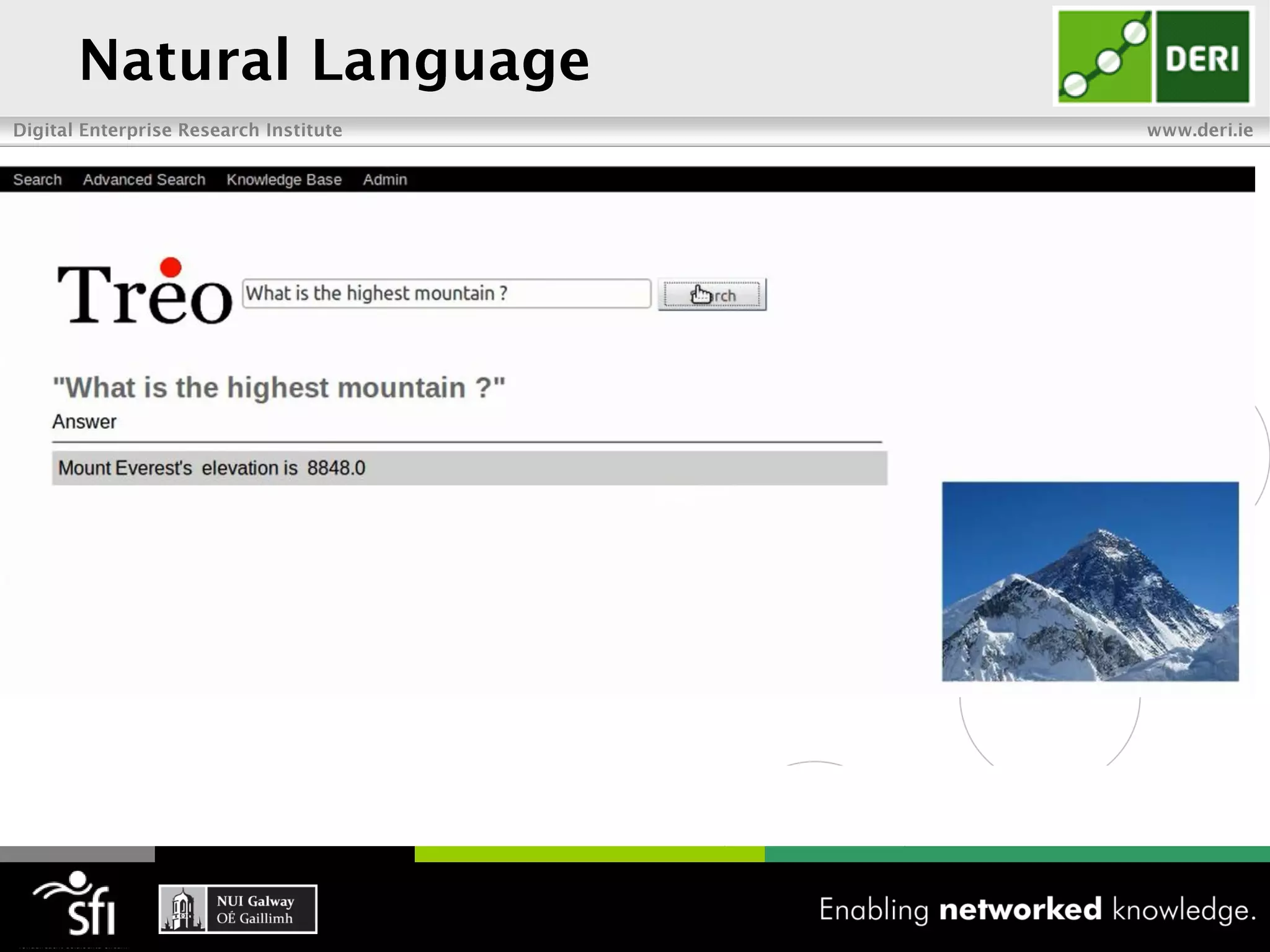

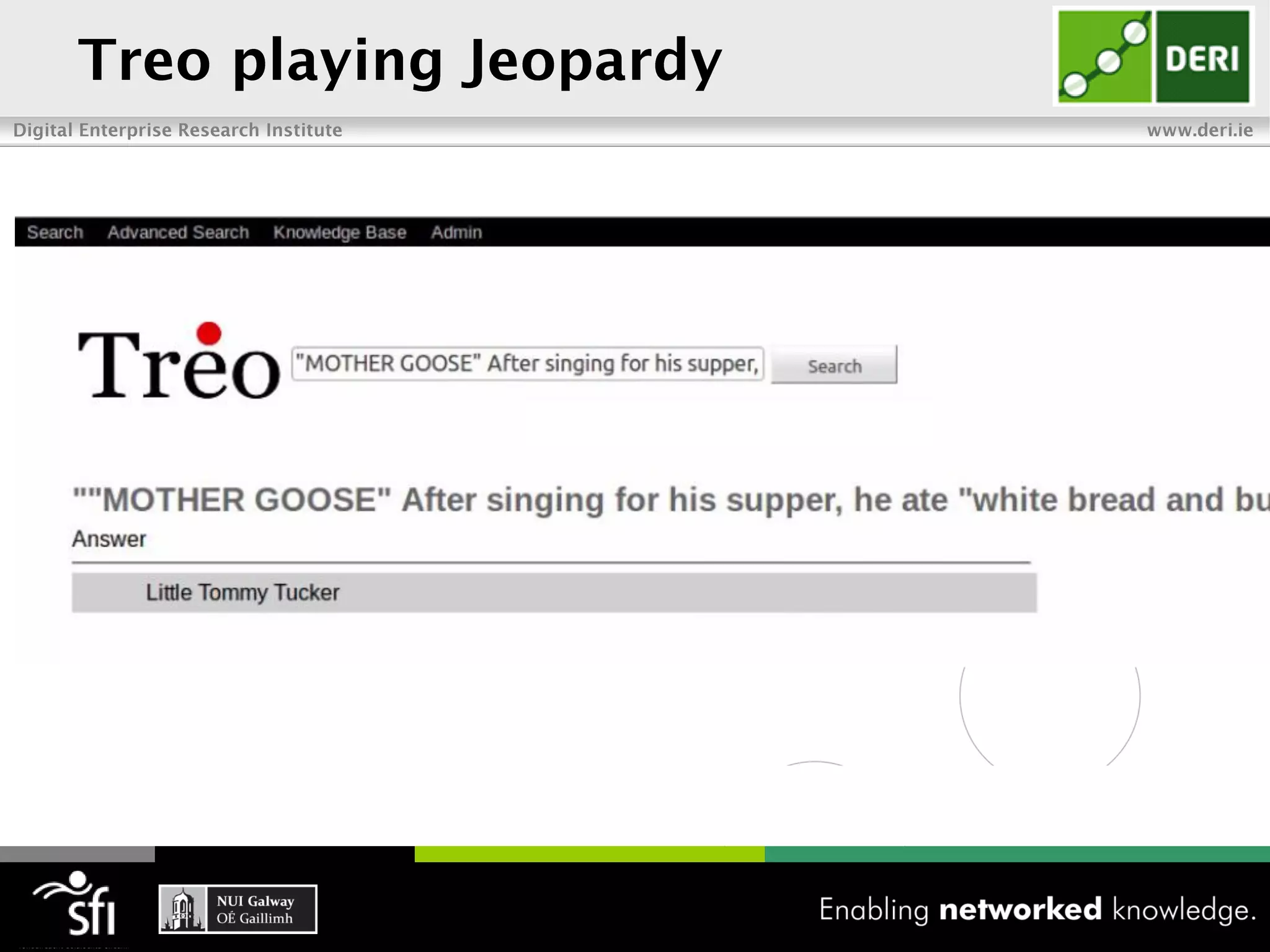

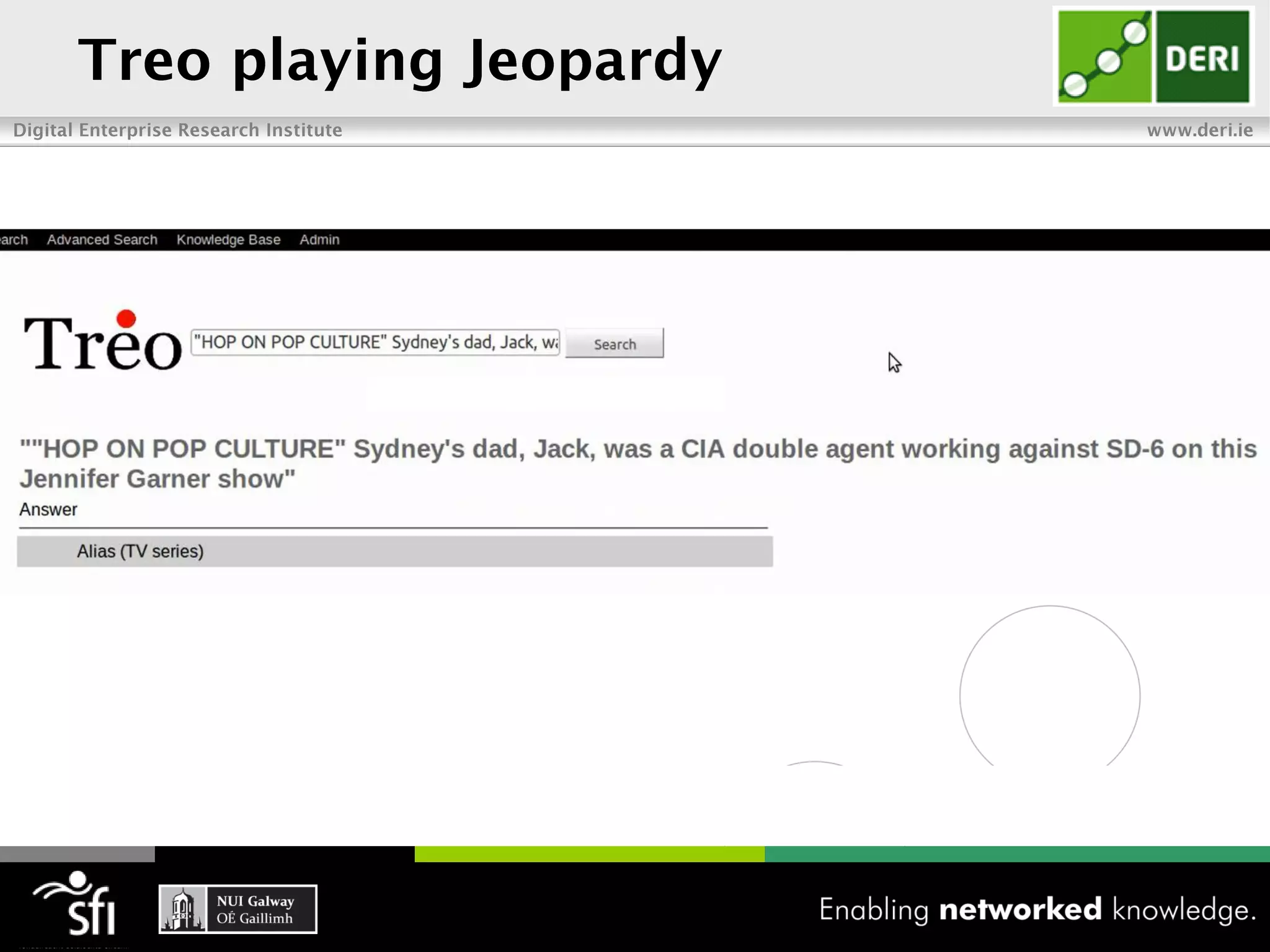

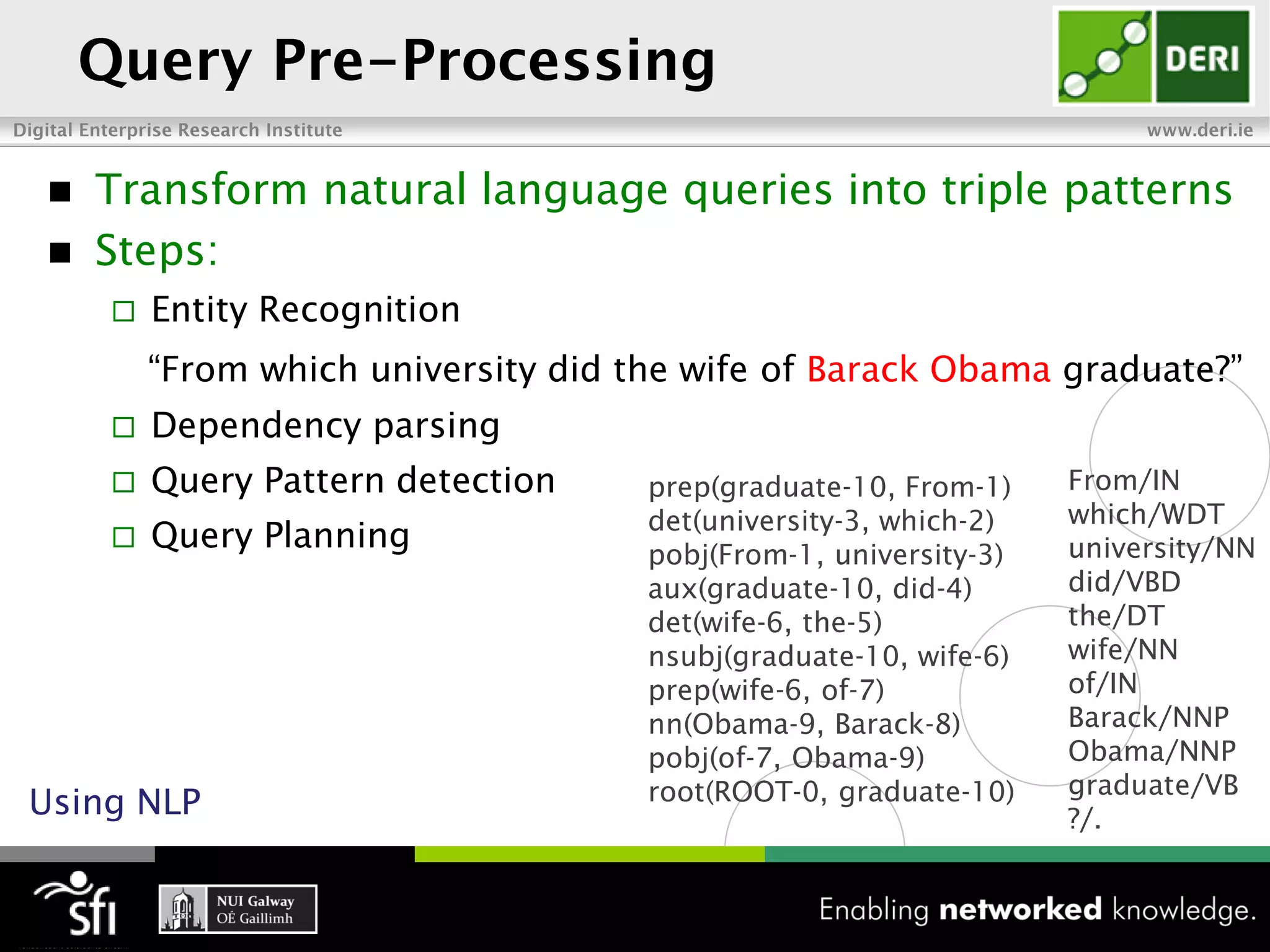

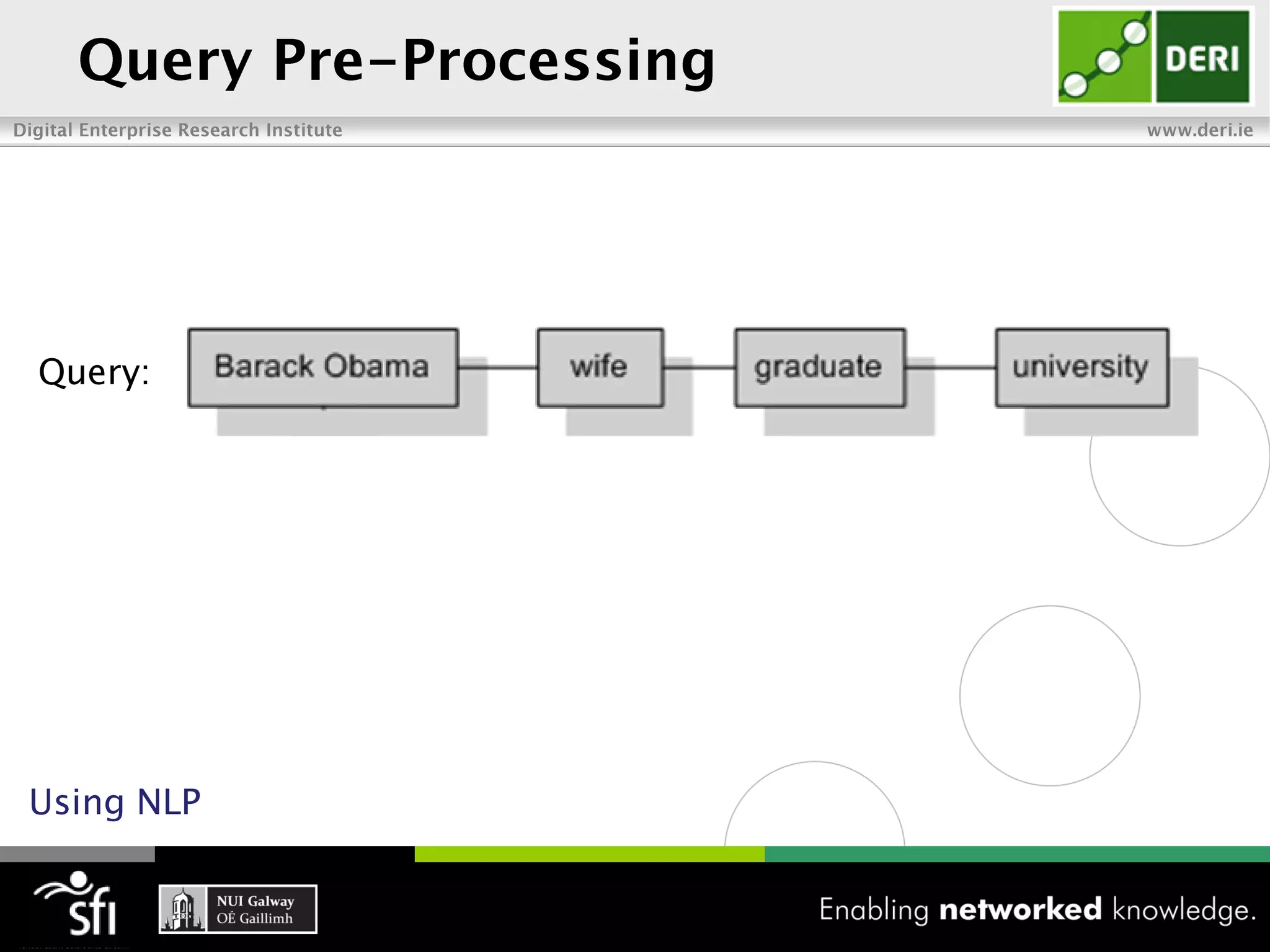

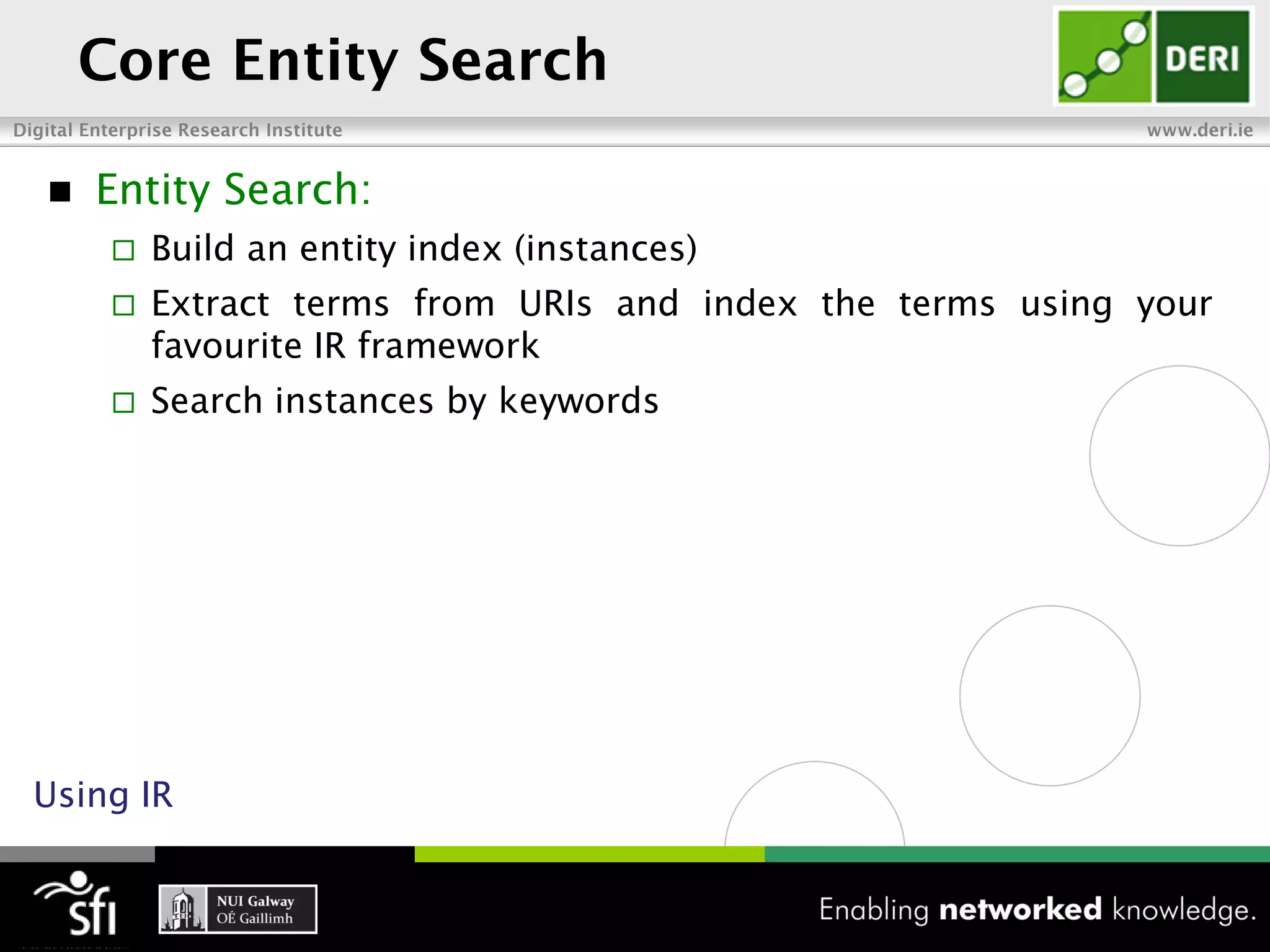

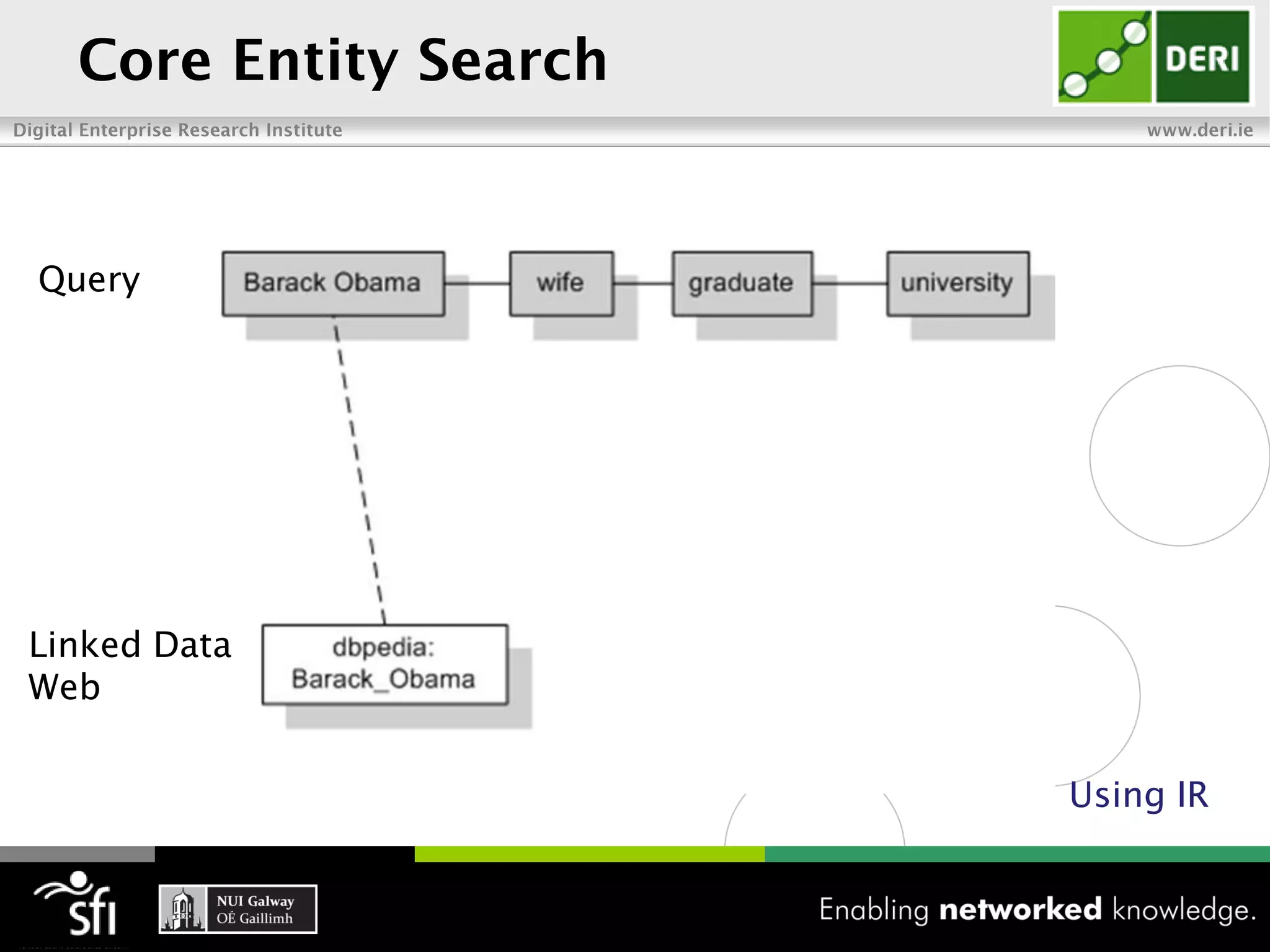

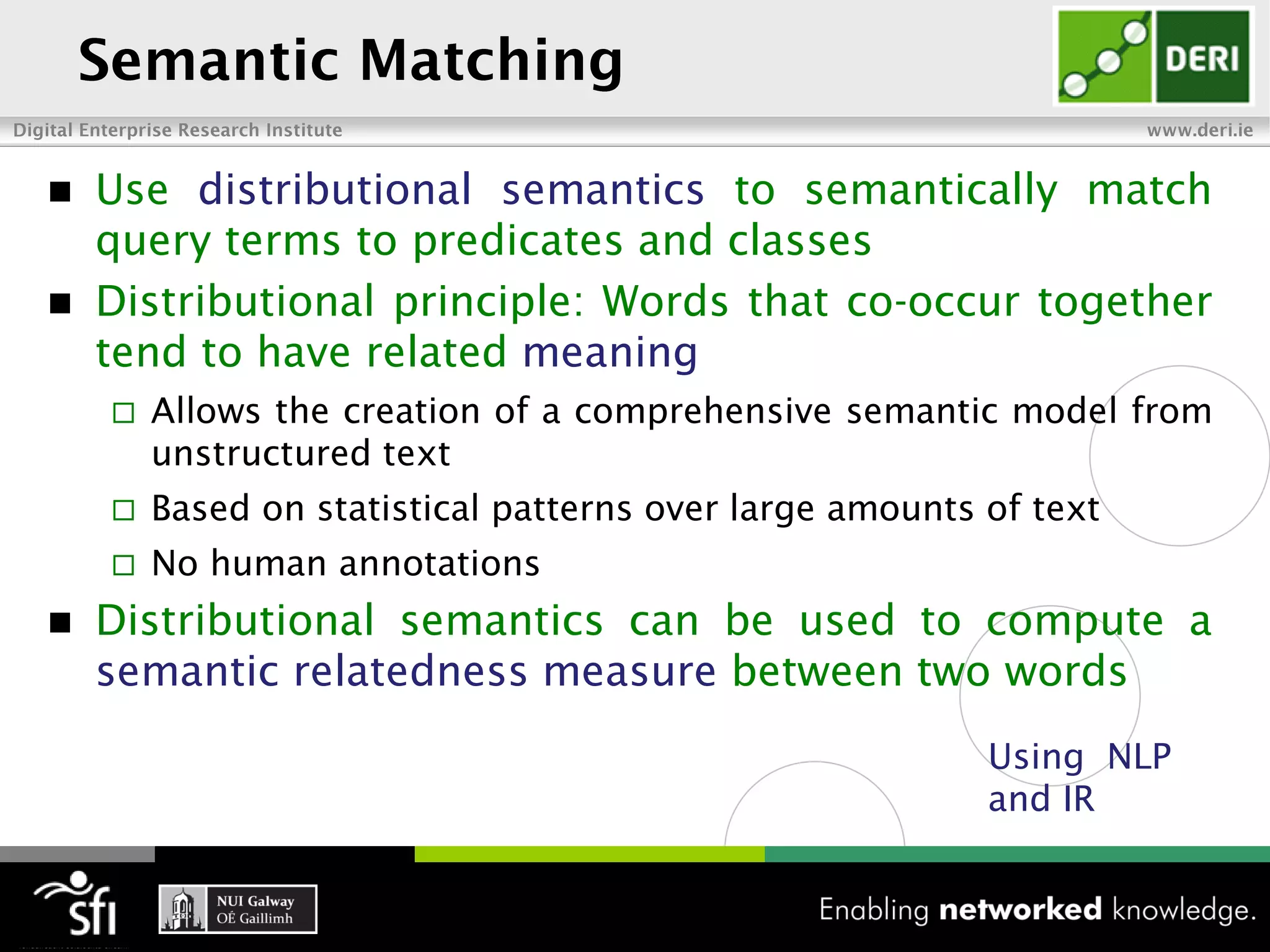

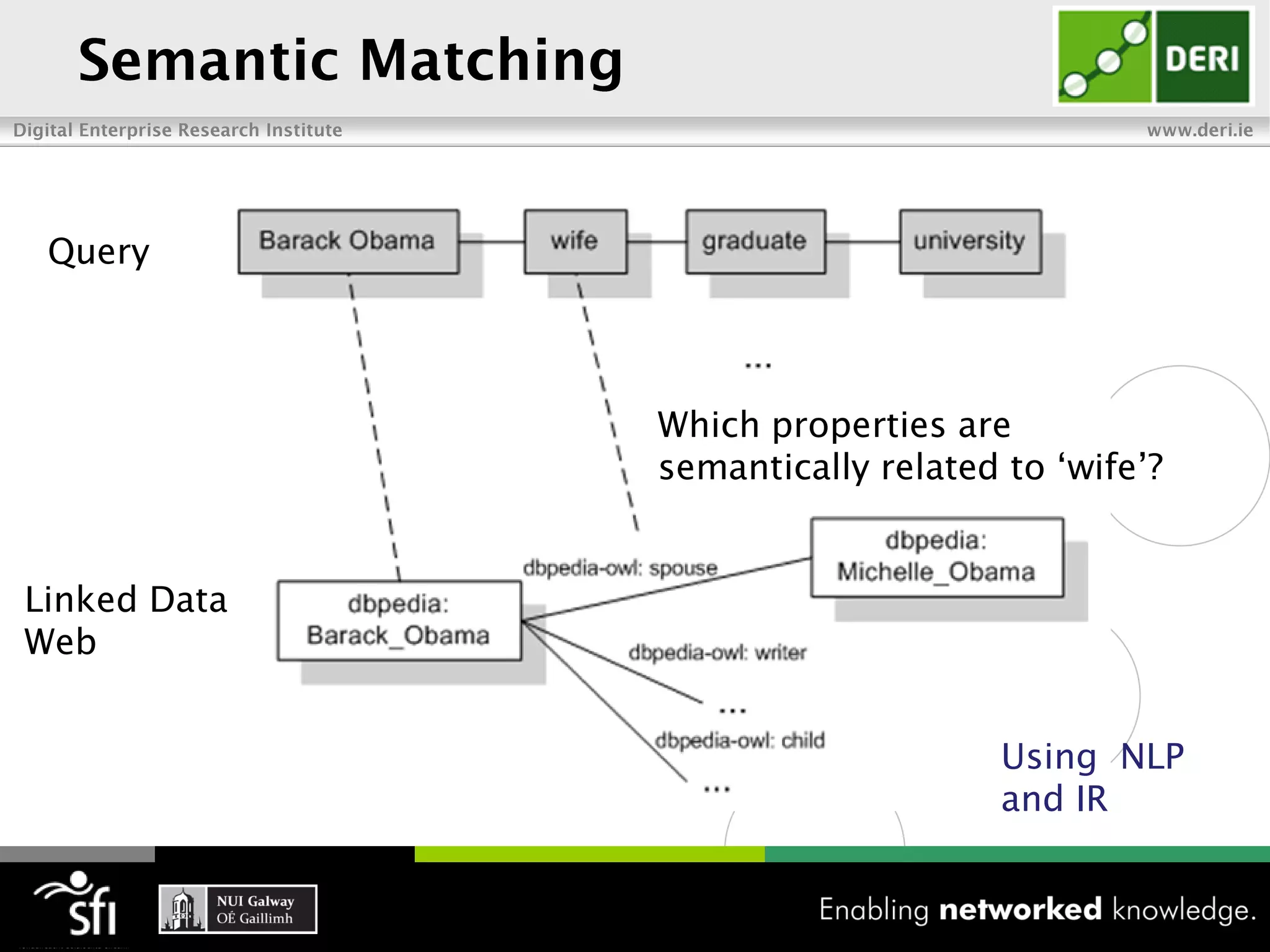

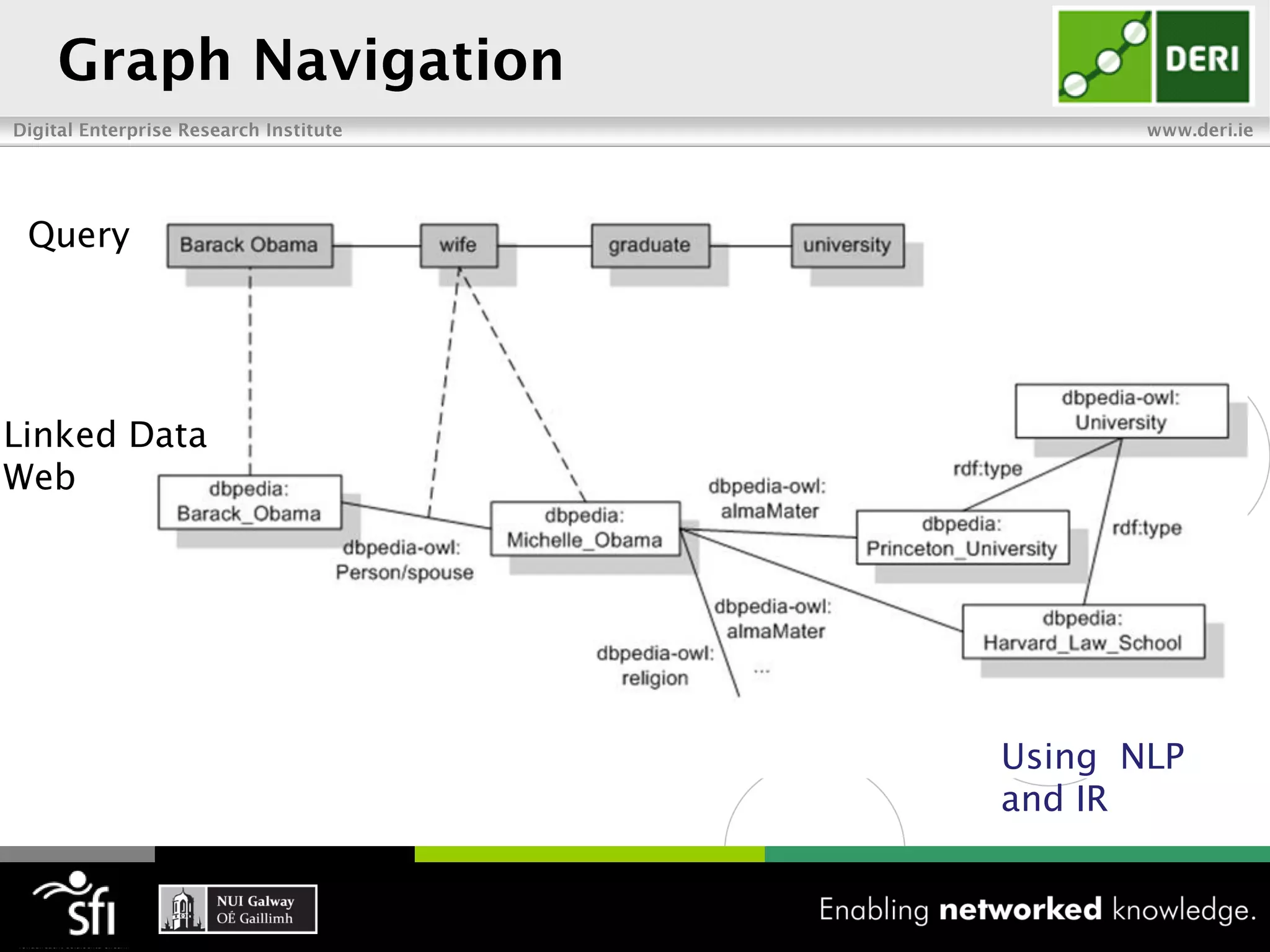

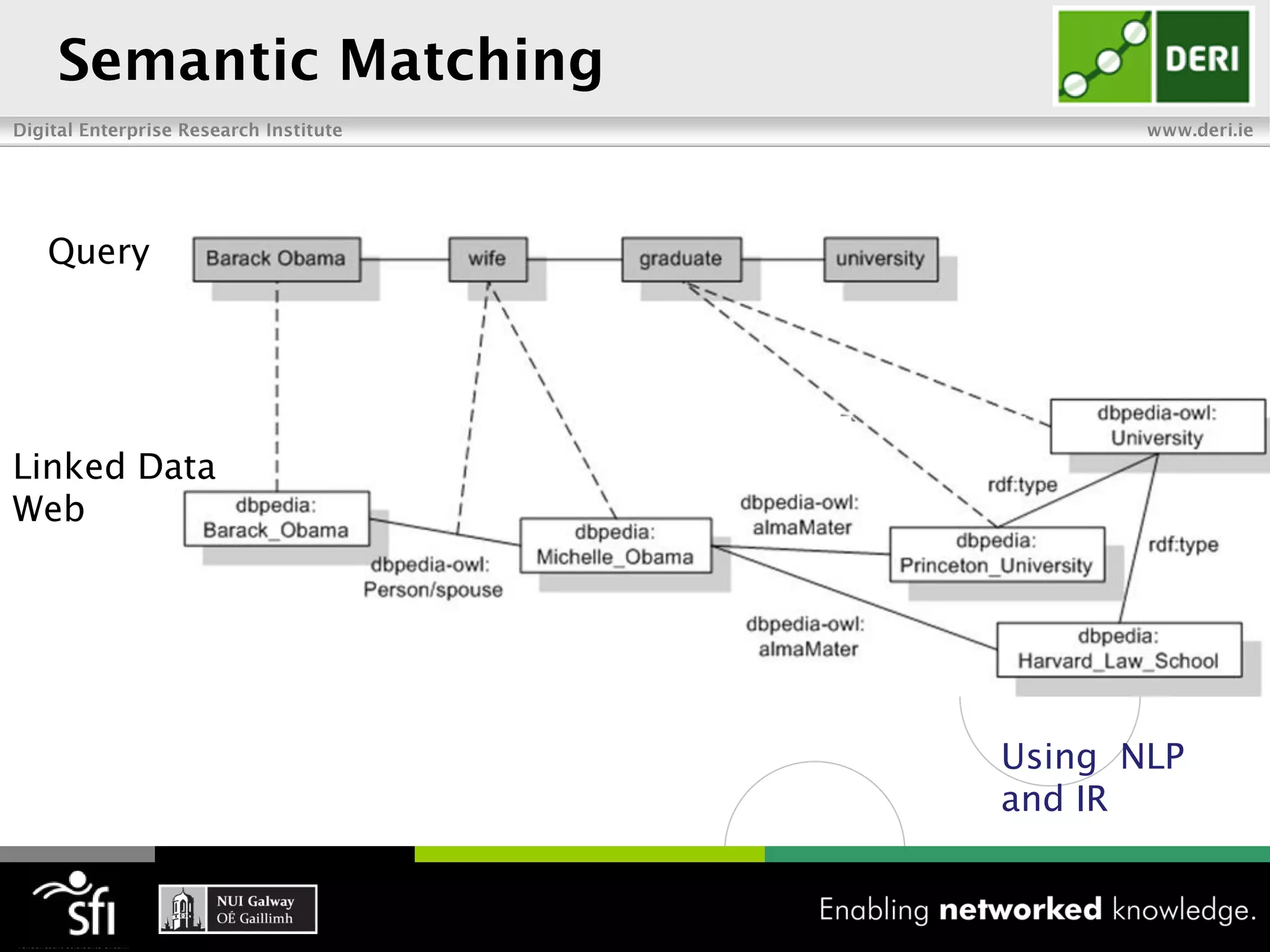

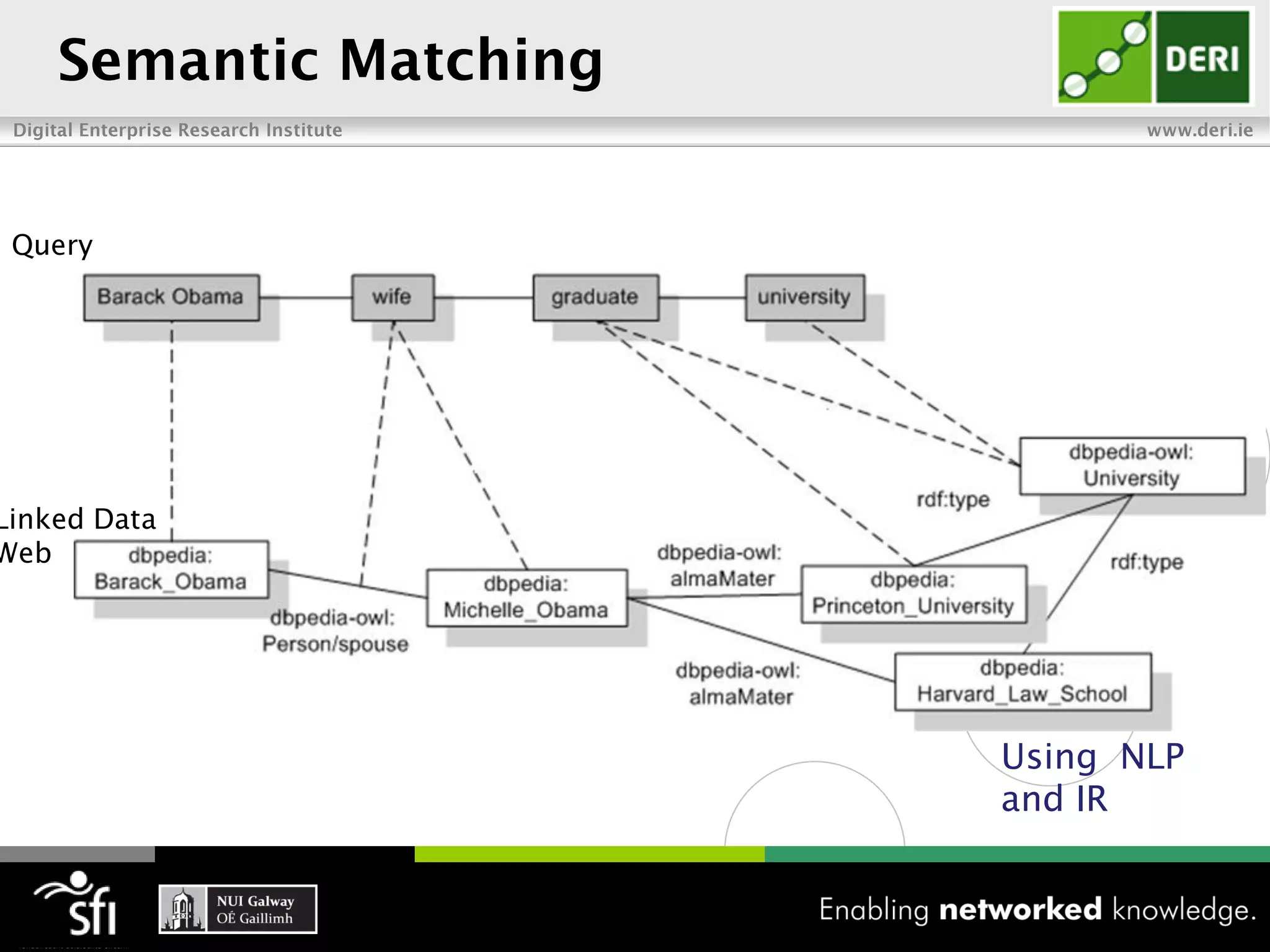

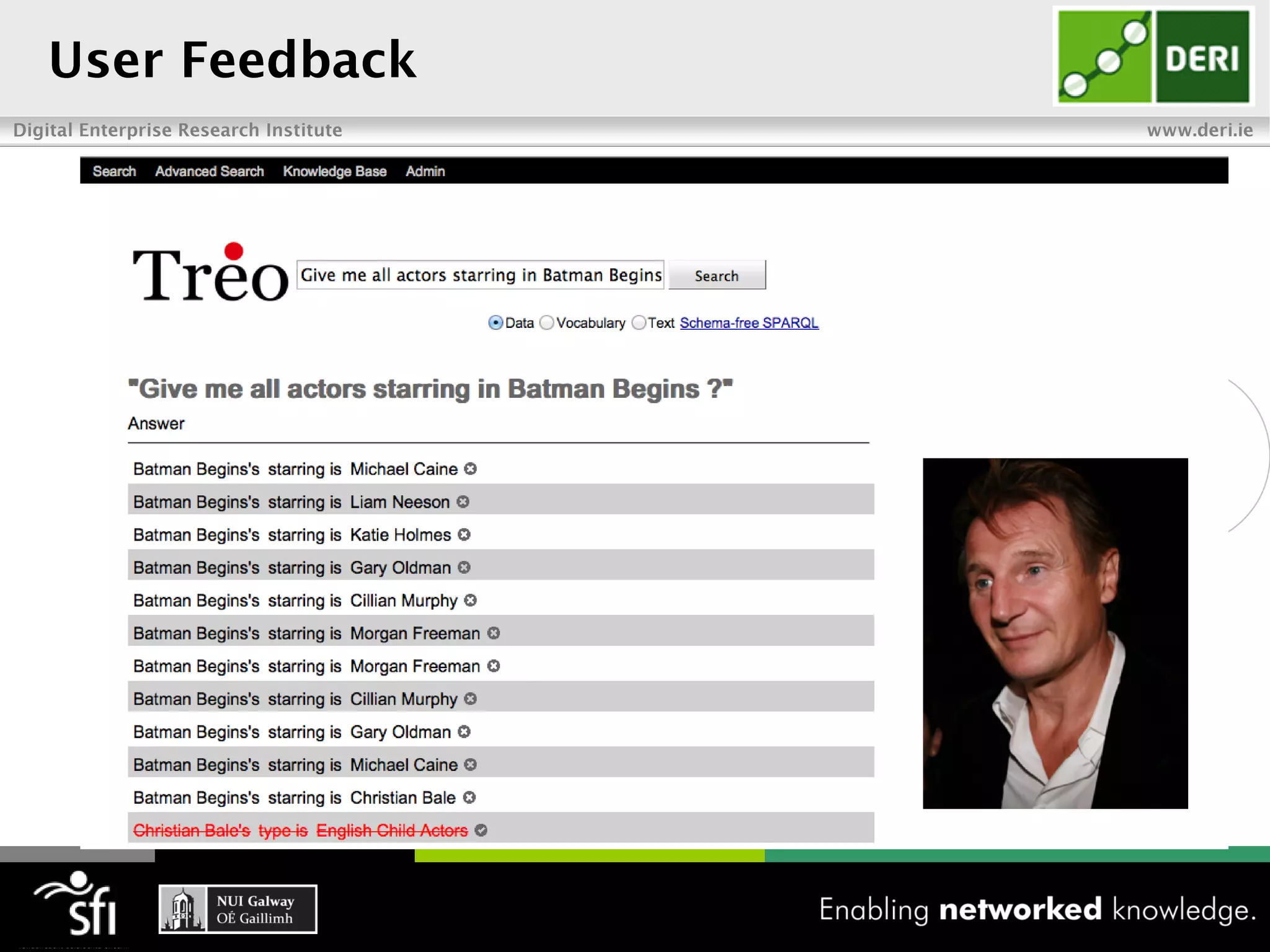

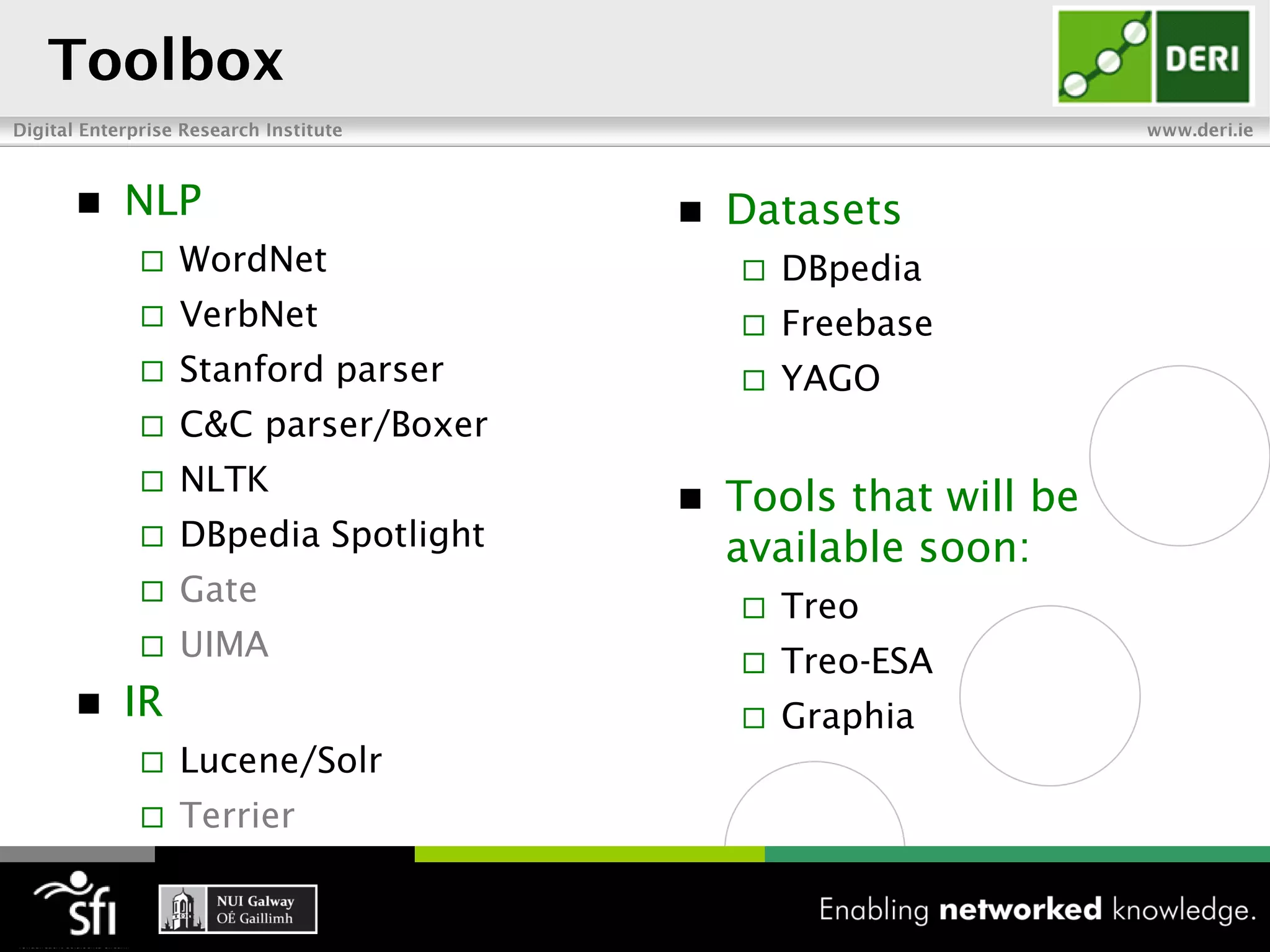

The document reviews the vision of the Semantic Web and how it has evolved since 2001. It describes how Linked Data helped address earlier problems with consistency and scalability by providing a structured data representation at web scale. It then discusses how natural language processing, information retrieval, and distributional semantics can bridge the gap between structured Linked Data and flexible natural language by semantically matching queries to knowledge graphs. It concludes by outlining semantic application patterns for building intelligent systems: maximizing knowledge, allowing dynamic databases, and incorporating user feedback.
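As a rough illustration of what semantically matching a query to a knowledge graph could look like, the sketch below ranks knowledge-graph predicates by cosine similarity to a query term in a distributional vector space. It is a minimal sketch under stated assumptions, not the method used in the document: the vectors are hand-made toy values rather than vectors learned from a corpus, and the names `TOY_VECTORS`, `cosine`, and `best_predicate` are hypothetical.

```python
import math

# Hypothetical toy vectors, hand-written for illustration only.
# A real system would derive them from a large text corpus.
TOY_VECTORS = {
    "married":    [0.9, 0.1, 0.0],   # query term
    "spouse":     [0.8, 0.2, 0.1],   # knowledge-graph predicate
    "birthPlace": [0.1, 0.9, 0.2],   # knowledge-graph predicate
    "almaMater":  [0.0, 0.2, 0.9],   # knowledge-graph predicate
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def best_predicate(query_term, predicates, vectors=TOY_VECTORS):
    """Return the (predicate, score) pair most similar to the query term."""
    query_vec = vectors[query_term]
    scored = [(p, cosine(query_vec, vectors[p])) for p in predicates]
    return max(scored, key=lambda pair: pair[1])


if __name__ == "__main__":
    predicate, score = best_predicate(
        "married", ["spouse", "birthPlace", "almaMater"]
    )
    print(predicate, round(score, 3))
```

With these toy values, the query term "married" is closest to the predicate "spouse", even though the two strings share no characters. That is the kind of vocabulary gap between natural language and structured data that the summary refers to.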