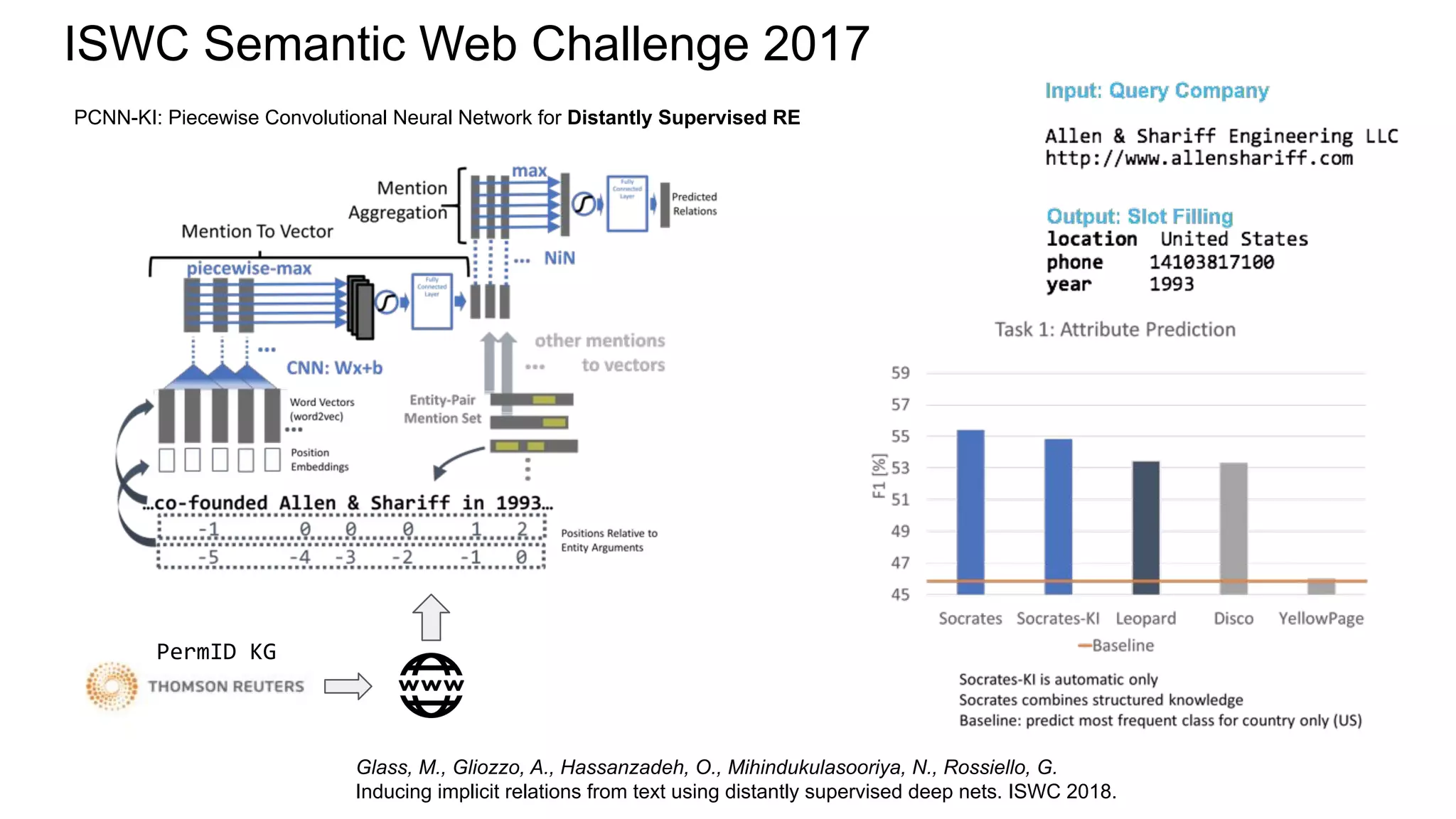

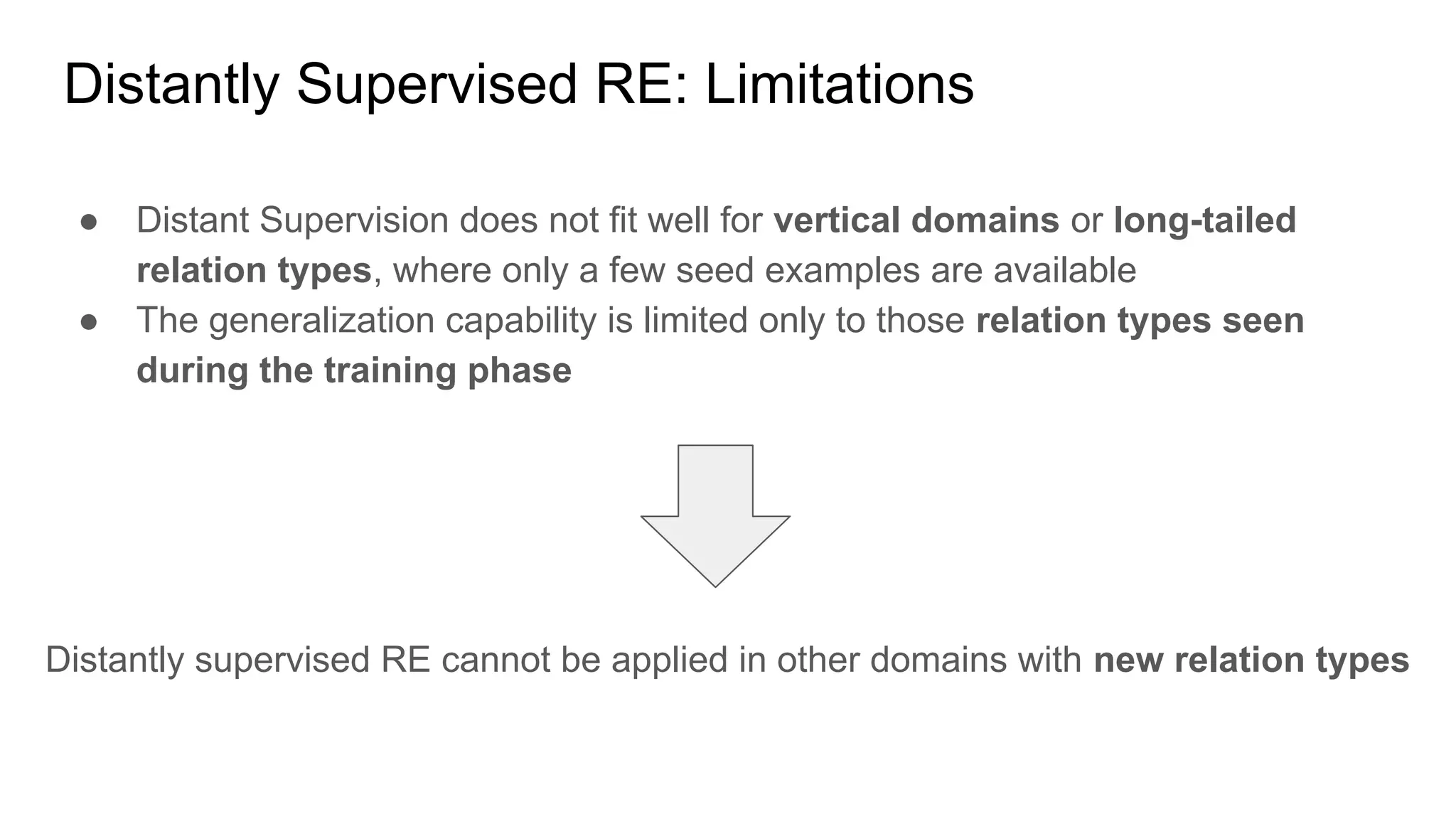

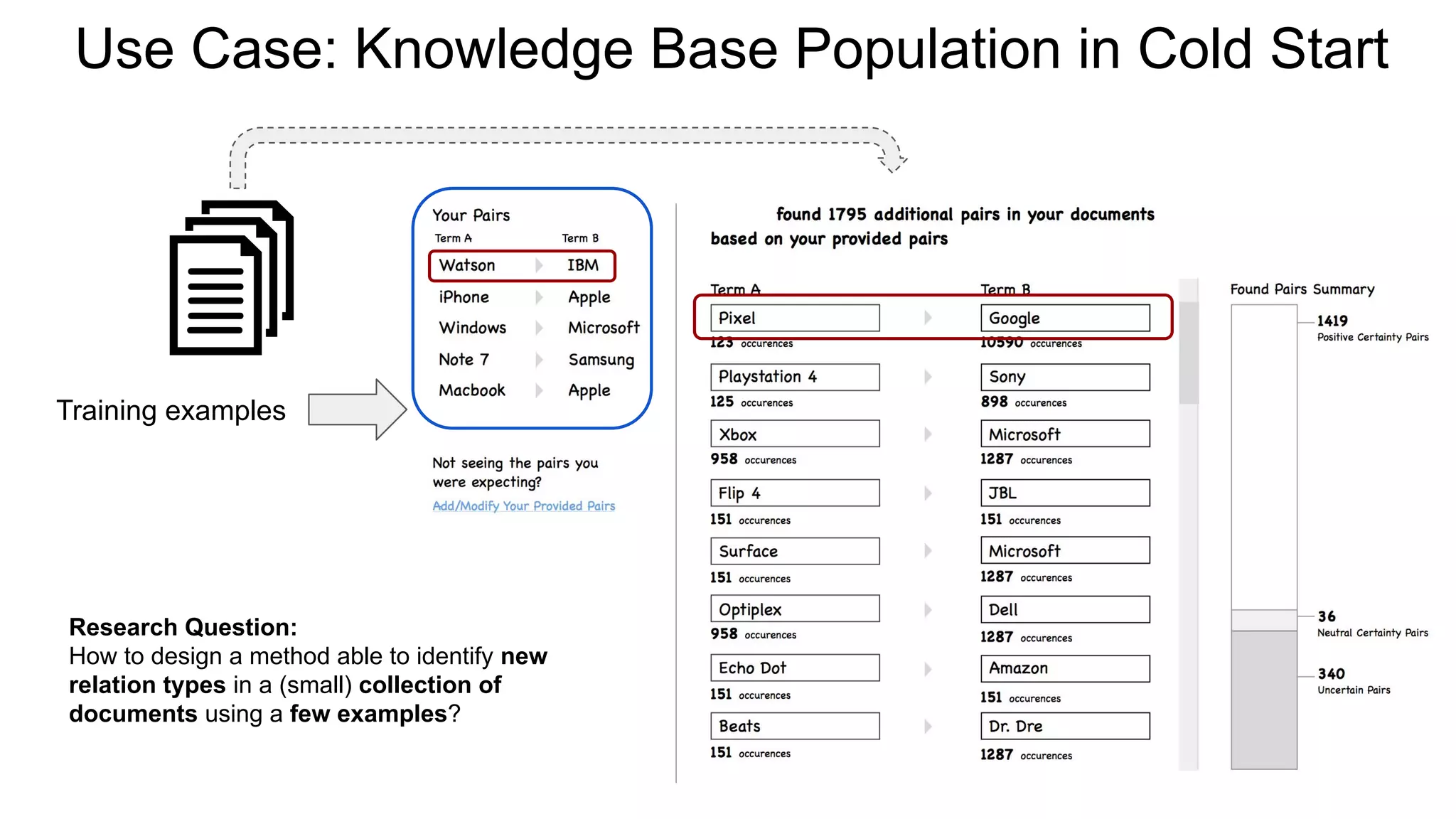

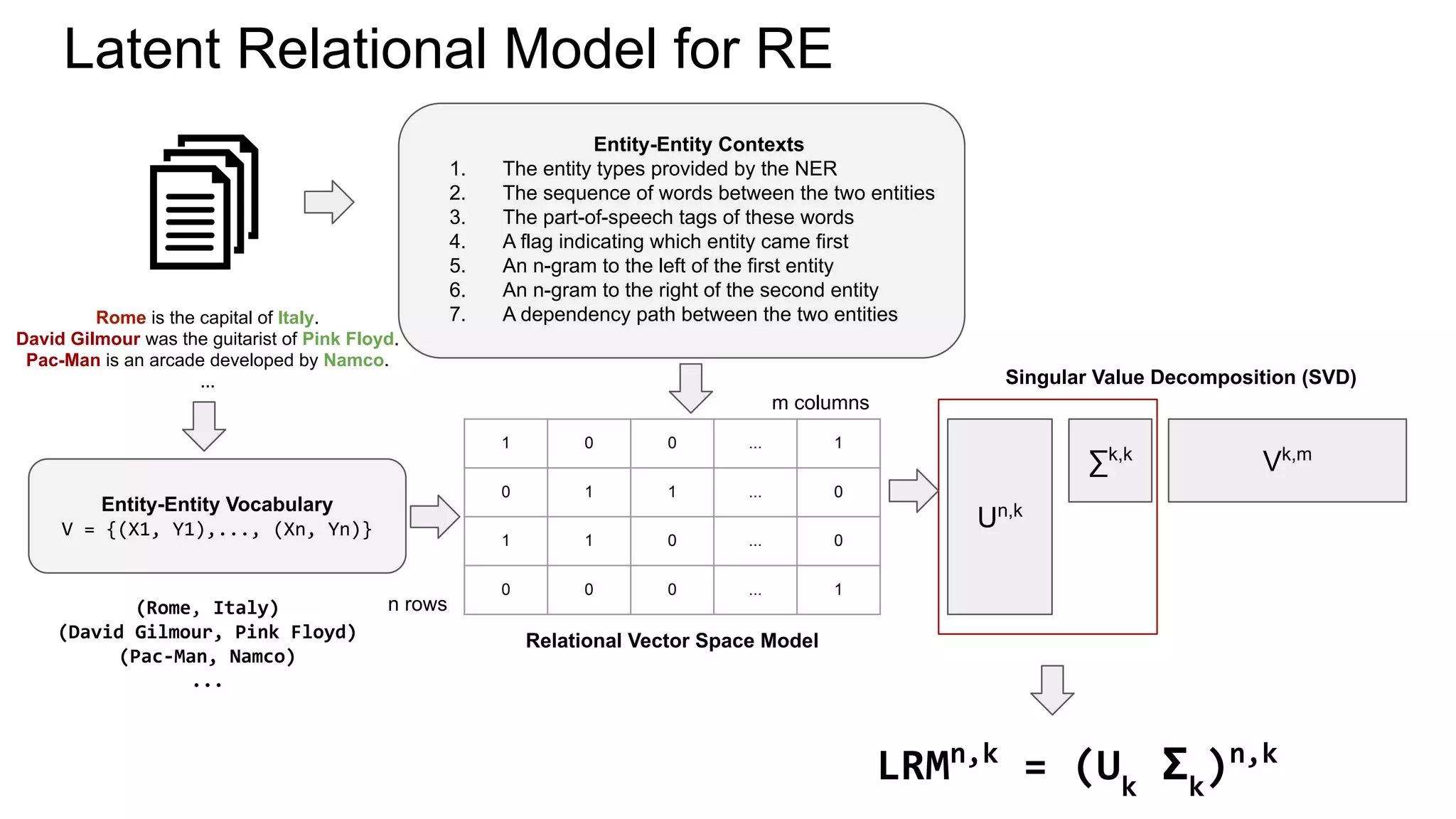

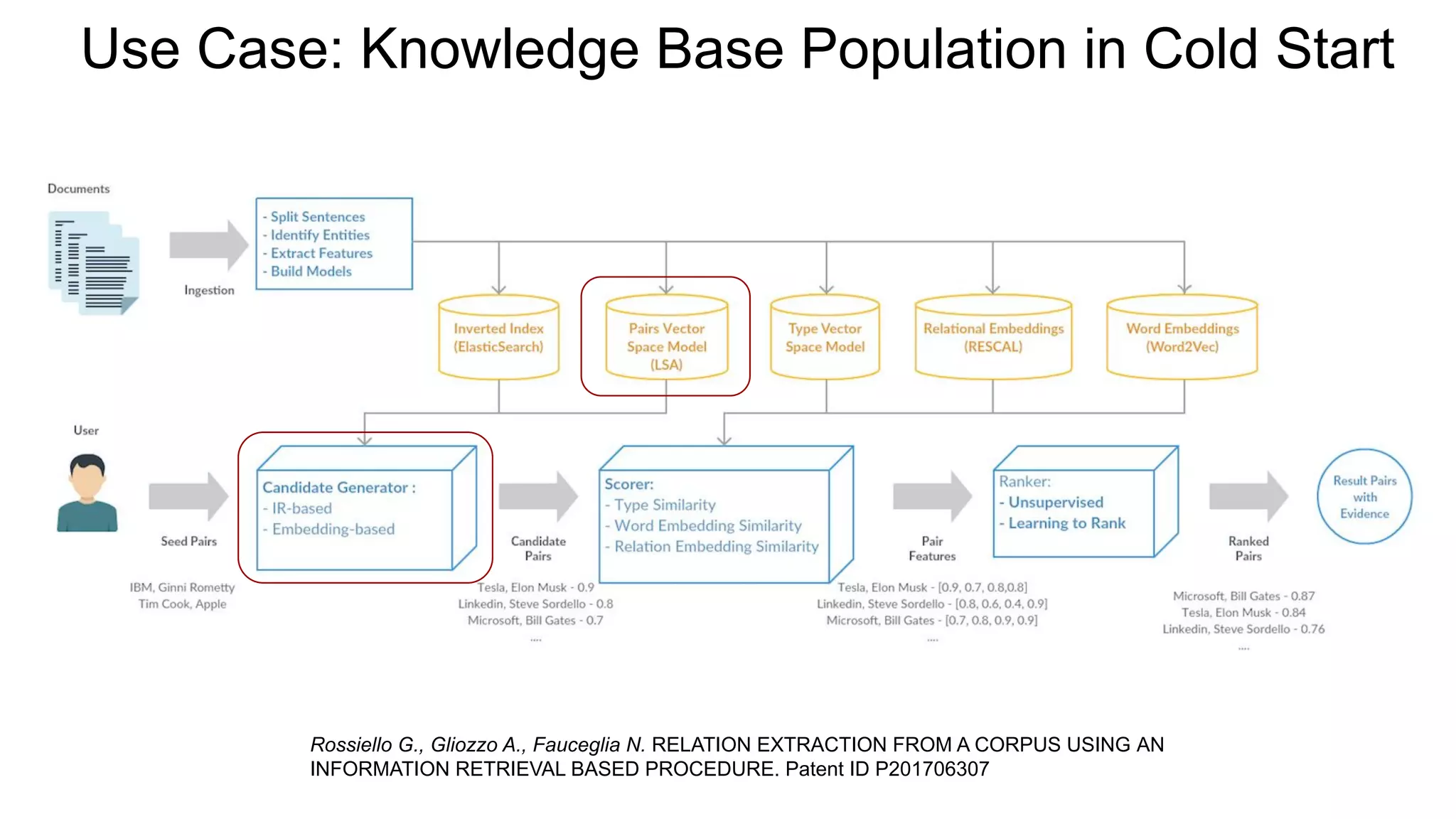

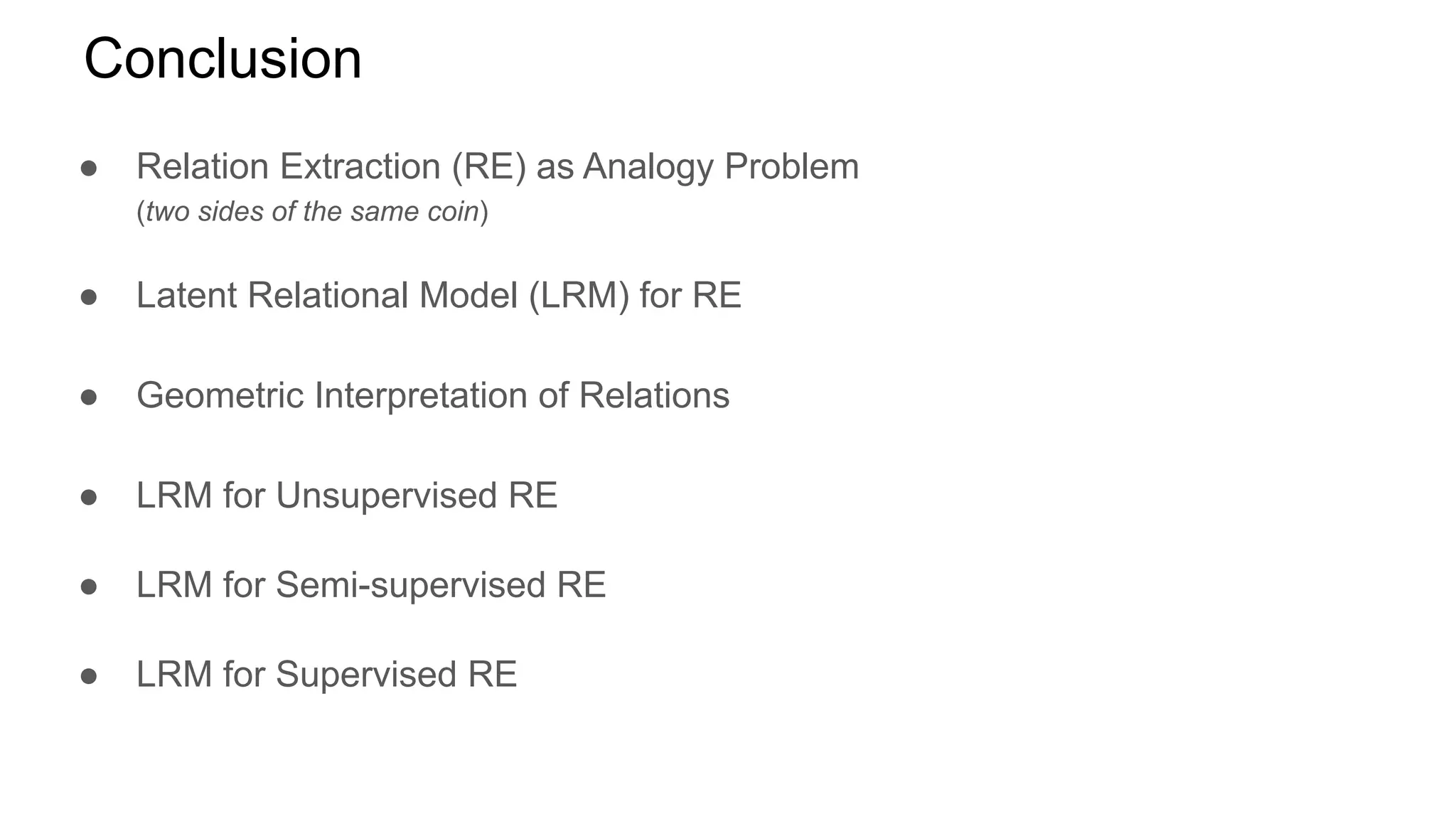

This presentation describes a Latent Relational Model (LRM) for relation extraction, the task of transforming unstructured text into structured knowledge. It surveys approaches to relation extraction, including distant supervision, reviews word-analogy methods and their limitations, and notes applications such as knowledge base population. The authors propose a geometric interpretation of relations as regions in a relational vector space and outline future work on scaling the model and on relations it cannot yet represent.

![Relation Extraction Approaches

● Pattern-based [Hearst, 1992]

○ Hand-crafted rules

● Bootstrapping [Agichtein, 2000]

○ Semantic drift

● OpenIE [Banko, 2007; Fader, 2011; Mausam, 2012]

○ Lexicalized relations not in a canonical form

● Supervised [Jiang, 2007; Sun, 2014; Nguyen, 2015]

○ Manually annotated training examples

● Distant Supervision [Mintz, 2009; Lin, 2016; Glass, 2018]

○ An existing KB is used to generate training examples

○ Advantages from both bootstrapping and supervised RE](https://image.slidesharecdn.com/eswc2019gaetanorossiello-190607071835/75/Latent-Relational-Model-for-Relation-Extraction-4-2048.jpg)

![Word Analogy using Distributional Semantic Models

Vector offset with Word Embeddings

man : king = woman : ?

vec(king) - vec(man) + vec(woman) ≈ vec(queen)

vec(king) - vec(man) ≈ vec(queen) - vec(woman)

Mikolov, T., Chen, K., Corrado, G., & Dean, J. Efficient estimation of word representations in vector space. ICLR 2013.

Pennington, J., Socher, R., & Manning, C. D. Glove: Global vectors for word representation. EMNLP 2014.

Levy, O., Goldberg, Y., & Dagan, I. Improving distributional similarity with lessons learned from word embeddings. TACL 2015.

Limitations:

● Handling multi-word entities (e.g., Tom Hanks) with pre-trained word embedding models

● Handling unseen words/entities

● Not effective on SAT Analogy Questions [Church, 2017]](https://image.slidesharecdn.com/eswc2019gaetanorossiello-190607071835/75/Latent-Relational-Model-for-Relation-Extraction-9-2048.jpg)
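
The vector-offset rule on the slide above can be sketched in a few lines. This is a minimal illustration, not the setup used in the cited papers: the 3-dimensional vectors in `EMB` are invented toy values, and `solve_analogy` is a hypothetical helper name. A real experiment would load pre-trained embeddings instead.

```python
import numpy as np

# Toy 3-d embeddings, invented for illustration only (not from a trained model).
EMB = {
    "man":   np.array([1.0, 0.0, 0.0]),
    "woman": np.array([1.0, 1.0, 0.0]),
    "king":  np.array([1.0, 0.0, 1.0]),
    "queen": np.array([1.0, 1.0, 1.0]),
    "apple": np.array([0.0, 0.2, -1.0]),
}

def cosine(u, v):
    """Cosine similarity between two vectors."""
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))

def solve_analogy(a, b, c, emb=EMB):
    """Solve a : b = c : ? by ranking words against vec(b) - vec(a) + vec(c)."""
    target = emb[b] - emb[a] + emb[c]
    # The three query words are excluded from the candidates, as is common practice.
    candidates = [w for w in emb if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(target, emb[w]))

answer = solve_analogy("man", "king", "woman")
print(answer)
```

Note that the lookup `emb[b]` fails for any word missing from the vocabulary, which is the "unseen words/entities" limitation listed on the slide.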

![SAT Analogy Questions Dataset

● SAT = Scholastic Aptitude Test [Turney, 2003]

● 374 multiple-choice analogy questions; 5 choices per question

● Human performance: 81.5%

● SOTA - Latent Relational Analysis (LRA): 56.1%

Turney, P.D., and Littman, M.L. Corpus-based learning of analogies and semantic relations. Machine Learning. 2005.

Turney, P.D. Similarity of semantic relations. Computational Linguistics. 2006.

LRA

r1 = vec(mason:stone)

r2 = vec(carpenter:wood)

sim = cosine(r1, r2)](https://image.slidesharecdn.com/eswc2019gaetanorossiello-190607071835/75/Latent-Relational-Model-for-Relation-Extraction-10-2048.jpg)
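
The LRA scoring step on the slide above (compare the relation vector of the stem pair with that of each choice and keep the most similar) can be sketched as follows. The vectors in `PAIR_VEC` are invented placeholders and every word pair other than mason:stone and carpenter:wood is a made-up distractor. In LRA itself the pair vectors are derived from corpus statistics, which this sketch does not reproduce.

```python
import numpy as np

# Hypothetical relation vectors for word pairs; all values are invented.
PAIR_VEC = {
    ("mason", "stone"):    np.array([0.9, 0.1, 0.0]),
    ("carpenter", "wood"): np.array([0.8, 0.2, 0.1]),
    ("teacher", "chalk"):  np.array([0.1, 0.9, 0.0]),
    ("soldier", "gun"):    np.array([0.0, 0.1, 0.9]),
    ("bird", "nest"):      np.array([0.2, 0.2, 0.9]),
    ("river", "bank"):     np.array([-0.5, 0.5, 0.5]),
}

def cosine(u, v):
    """Cosine similarity between two vectors."""
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))

def answer_question(stem, choices, pair_vec=PAIR_VEC):
    """Return the choice whose relation vector is most similar to the stem's."""
    sims = [cosine(pair_vec[stem], pair_vec[c]) for c in choices]
    return choices[int(np.argmax(sims))]

stem = ("mason", "stone")
choices = [
    ("teacher", "chalk"),
    ("carpenter", "wood"),
    ("soldier", "gun"),
    ("bird", "nest"),
    ("river", "bank"),
]
best = answer_question(stem, choices)
print(best)
```

With five choices per question, random guessing would score 20%, which puts the 56.1% reported for LRA and the 81.5% human score in context.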

![Geometric Interpretation of Relations

“A semantic relation R is a region in a relational vector space LRM_{n,k} that outlines the boundaries among those entity-pair vectors that are analogous to each other.”

Dataset: NYT-FB [Riedel, 2010]

New York, Brooklyn

Bill Gates, Microsoft

A:B = C:D ⇔ dist(r(A,B), r(C,D)) < t](https://image.slidesharecdn.com/eswc2019gaetanorossiello-190607071835/75/Latent-Relational-Model-for-Relation-Extraction-14-2048.jpg)
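
The threshold rule on the slide above, A:B = C:D when the distance between the two entity-pair vectors falls below t, can be sketched as below. The slide does not say which distance or threshold is used, so cosine distance and `t=0.2` are assumptions. The 2-dimensional vectors are invented, and the pair (Larry Page, Google) is added only as a hypothetical example.

```python
import numpy as np

def cosine_distance(u, v):
    """1 - cosine similarity; an assumed choice for dist(., .)."""
    return 1.0 - float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))

def are_analogous(r_ab, r_cd, t=0.2):
    """A:B = C:D iff dist(r(A,B), r(C,D)) < t. The threshold value is illustrative."""
    return cosine_distance(r_ab, r_cd) < t

# Invented 2-d entity-pair vectors, for illustration only.
R = {
    ("New York", "Brooklyn"):    np.array([0.9, 0.1]),
    ("Bill Gates", "Microsoft"): np.array([0.1, 0.9]),
    ("Larry Page", "Google"):    np.array([0.2, 0.8]),  # hypothetical extra pair
}

same_relation = are_analogous(R[("Bill Gates", "Microsoft")], R[("Larry Page", "Google")])
different_relation = are_analogous(R[("New York", "Brooklyn")], R[("Bill Gates", "Microsoft")])
print(same_relation, different_relation)
```

Under this reading, a relation corresponds to a neighbourhood of mutually close pair vectors, which matches the "region in a relational vector space" wording of the quote.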

![LRM for Distantly Supervised Relation Extraction

Dataset: NYT-FB [Riedel, 2010]

Corpus: New York Times (2005-2007)

KG: Freebase

Relations/classes: 51

Training positive: 4700

Training negative: 63569

Test positive: 1950

Test negative: 94917

LRM: SVD [Halko, 2011] k=2000

Classifier: SVM one-vs-rest

ARES (Ours) = LRM + SVM](https://image.slidesharecdn.com/eswc2019gaetanorossiello-190607071835/75/Latent-Relational-Model-for-Relation-Extraction-15-2048.jpg)
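
The "LRM + SVM" recipe on the slide above can be sketched with scikit-learn. Several things here are assumptions rather than details from the slides: the input is taken to be an entity-pair by textual-pattern count matrix (in the spirit of LRA), the counts and the two relation labels are invented, and `n_components=2` stands in for the k=2000 used on the real corpus. `TruncatedSVD` with `algorithm="randomized"` uses the randomized solver of Halko et al., and `LinearSVC` trains one-vs-rest classifiers by default in the multiclass case.

```python
import numpy as np
from sklearn.decomposition import TruncatedSVD
from sklearn.svm import LinearSVC

# Rows: entity pairs. Columns: counts of hypothetical textual patterns
# observed between the two entities. All values are invented.
X_train = np.array([
    [3, 1, 0, 0],
    [2, 2, 0, 0],
    [1, 3, 0, 0],
    [0, 0, 2, 1],
    [0, 0, 1, 2],
    [0, 0, 3, 3],
], dtype=float)
y_train = ["founder"] * 3 + ["contains"] * 3  # distant-supervision labels from a KB

# Step 1: latent relational space via truncated (randomized) SVD.
svd = TruncatedSVD(n_components=2, algorithm="randomized", random_state=0)
Z_train = svd.fit_transform(X_train)

# Step 2: linear SVM on the latent entity-pair vectors.
clf = LinearSVC(C=1.0, random_state=0)
clf.fit(Z_train, y_train)

# Unseen entity pairs are projected into the same space before classification.
X_new = np.array([[4, 2, 0, 0], [0, 0, 2, 2]], dtype=float)
pred = clf.predict(svd.transform(X_new))
print(Z_train.shape, list(pred))
```

On the real dataset the negative examples outnumber the positives by more than ten to one (63569 versus 4700 in training), so class weighting or sampling would likely need attention. This toy sketch ignores that.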

![Limitations of LRM / Future Work

● NLP pipeline and SVD do not scale on very large corpora

○ Learning Relational Representations by Analogy using Hierarchical Siamese Networks [Rossiello et al., NAACL 2019]

○ Variational Autoencoders

● LRM is not able to model the directionality of relations

○ founder(Person, Company) - OK

○ competitor(Company, Company) - OK

○ supplyTo(Company, Company) - KO!

● One entity-entity embedding encodes many relations

○ Contextual Relational Embeddings, like ELMo [Peters, 2018] and BERT [Devlin, 2018]

○ Lookup tensor: [entity-entity, mention, vector]

● Extract n-ary Relations

○ Towards Unsupervised Semantic/Frame Parsing](https://image.slidesharecdn.com/eswc2019gaetanorossiello-190607071835/75/Latent-Relational-Model-for-Relation-Extraction-17-2048.jpg)
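
One way to see why directionality is a problem for same-type arguments such as supplyTo(Company, Company) is sketched below. It assumes, purely for illustration, that an entity pair is represented by an order-insensitive bag of the words between its two mentions. This is not necessarily how LRM builds its features, and the sentence, the company names, and `pair_features` are hypothetical.

```python
from collections import Counter

def pair_features(sentence, e1, e2):
    """Bag of words between two entity mentions, ignoring which comes first.

    Illustrative assumption only: a direction-blind pair representation.
    """
    tokens = sentence.split()
    i, j = sorted((tokens.index(e1), tokens.index(e2)))
    return Counter(tokens[i + 1 : j])

sentence = "AcmeCorp supplies components to Globex"

ab = pair_features(sentence, "AcmeCorp", "Globex")
ba = pair_features(sentence, "Globex", "AcmeCorp")

# Both argument orders yield identical features, so supplyTo(AcmeCorp, Globex)
# and supplyTo(Globex, AcmeCorp) cannot be told apart.
same = ab == ba
print(same)
```

For founder(Person, Company) the argument types already settle the direction, and competitor(Company, Company) is symmetric, which is consistent with the OK/KO marks on the slide.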