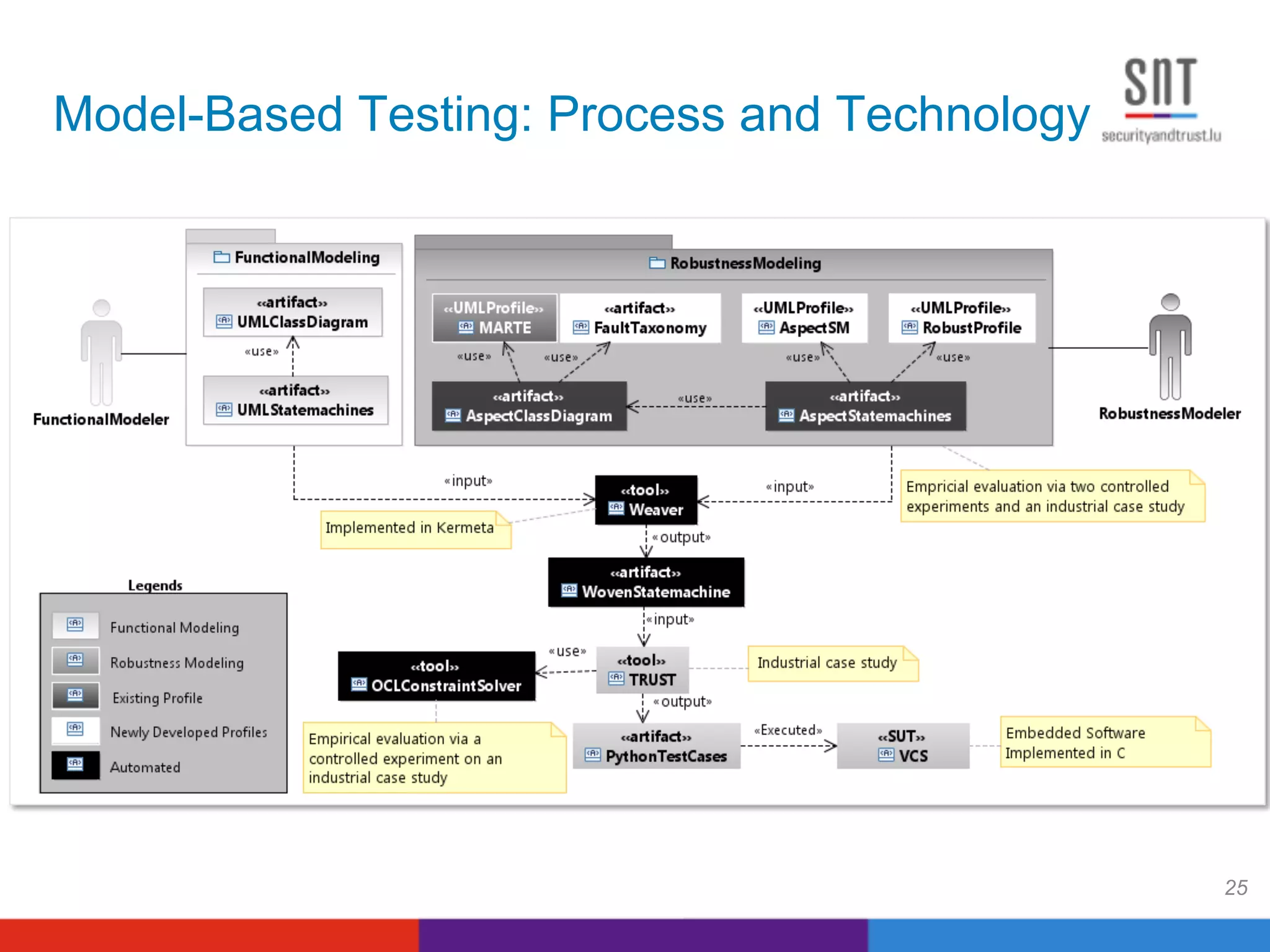

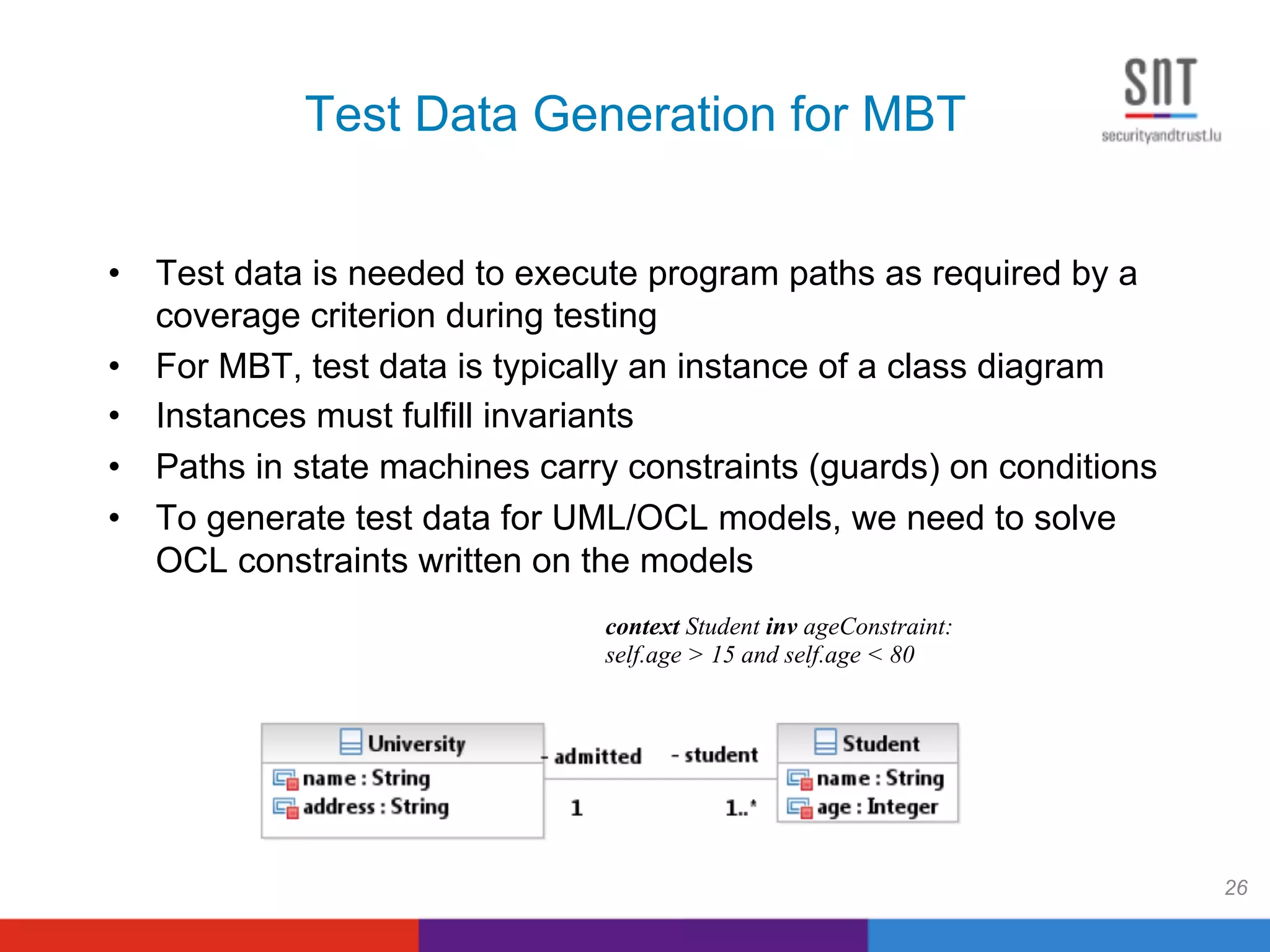

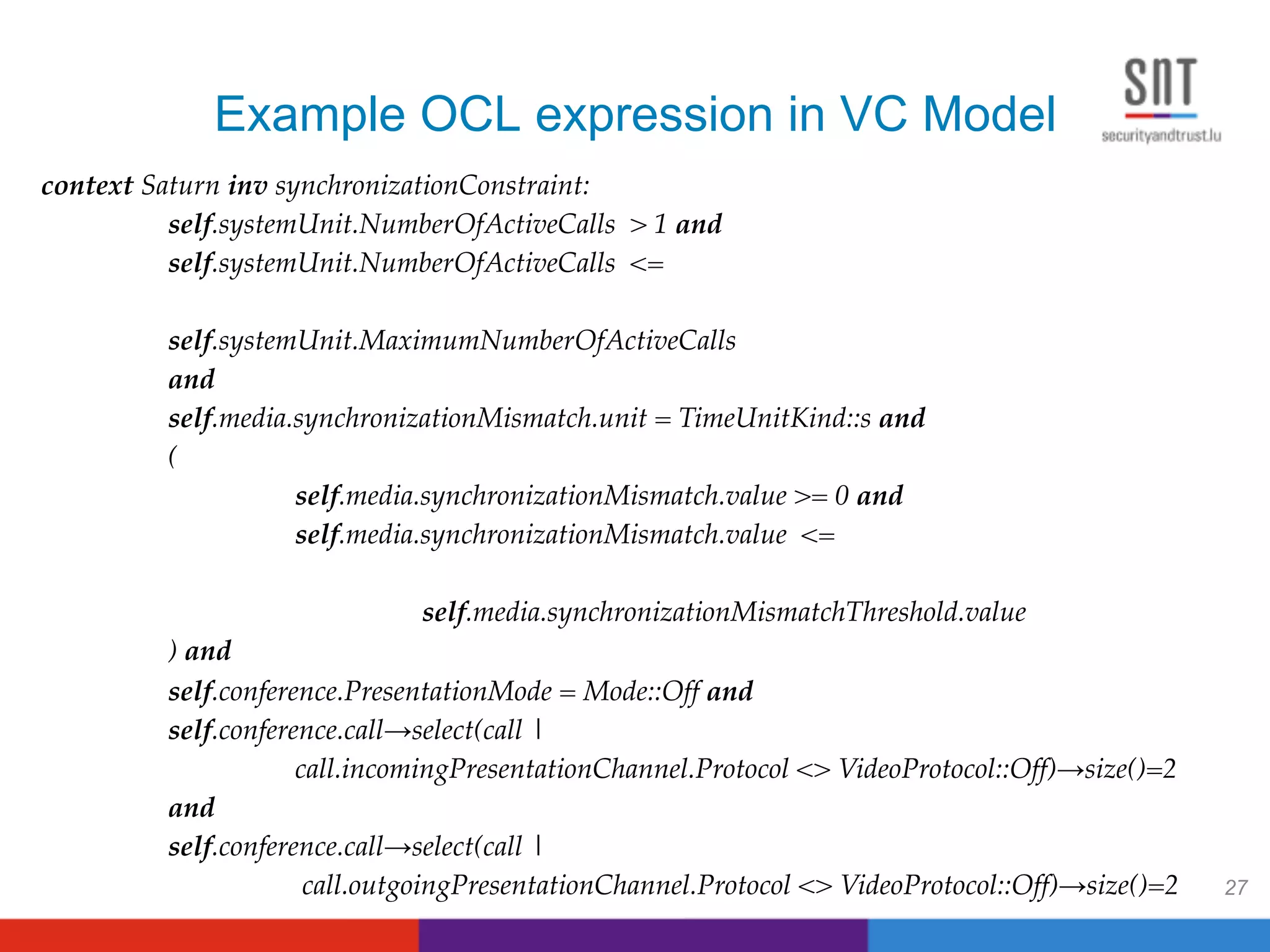

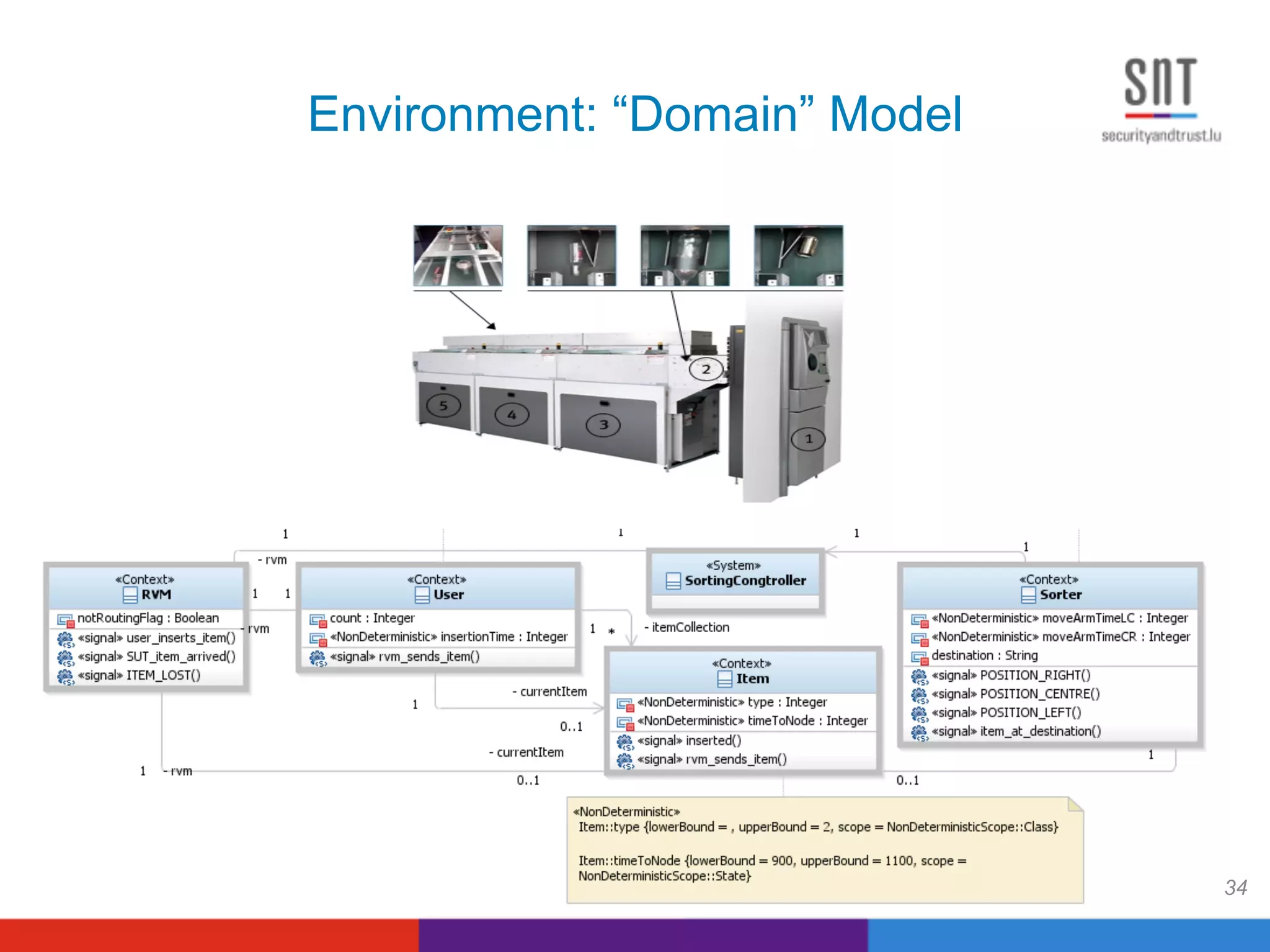

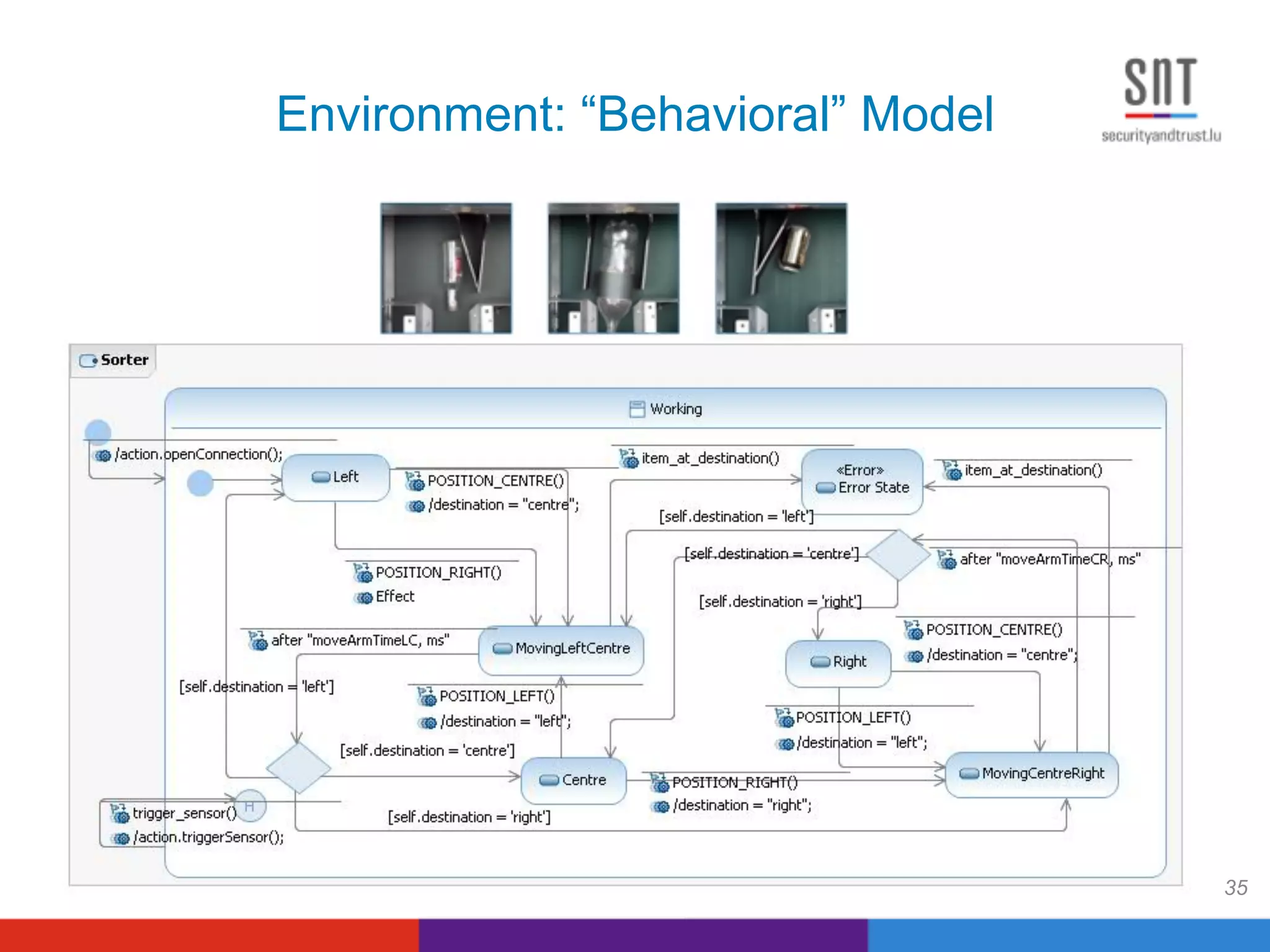

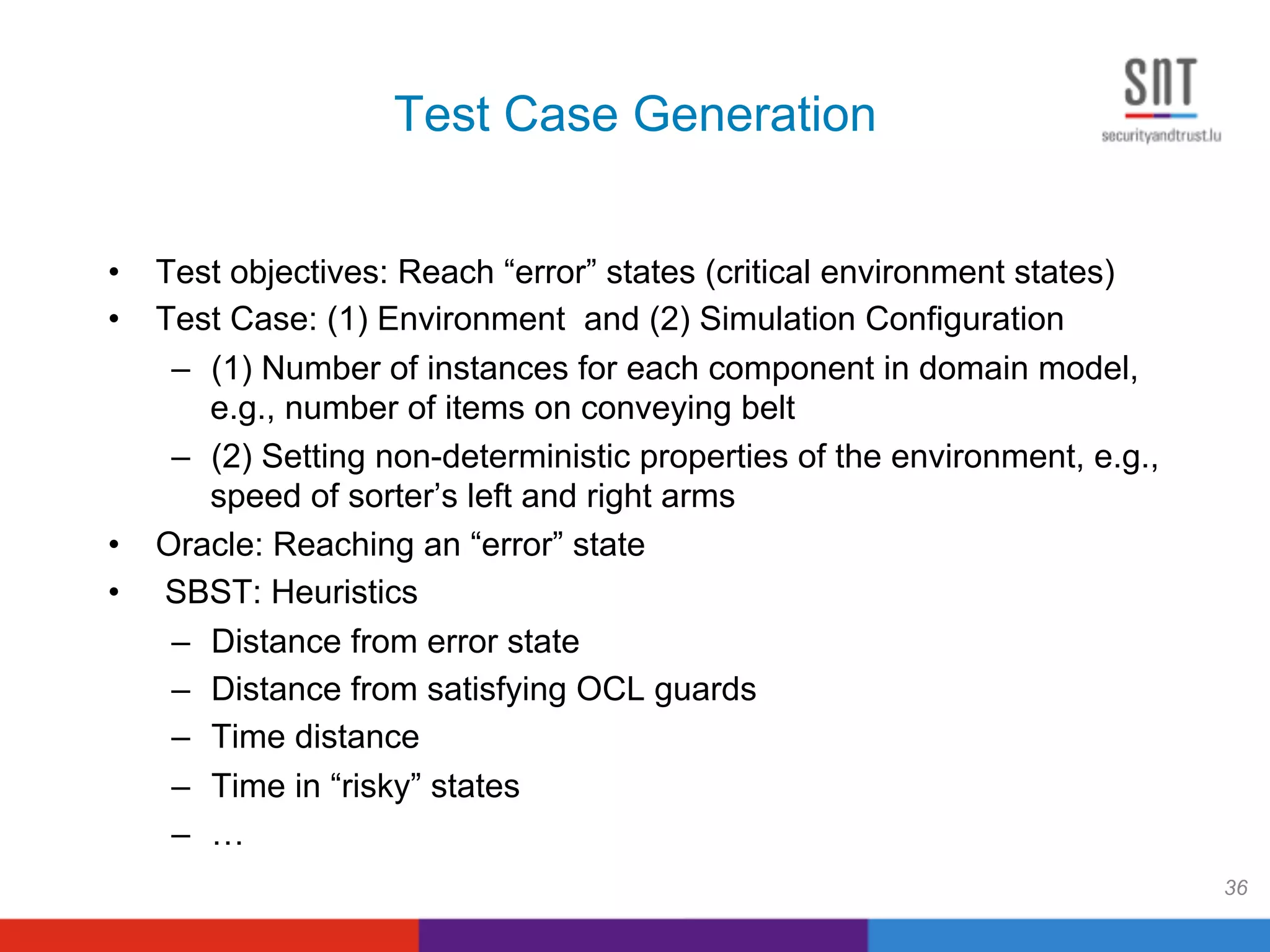

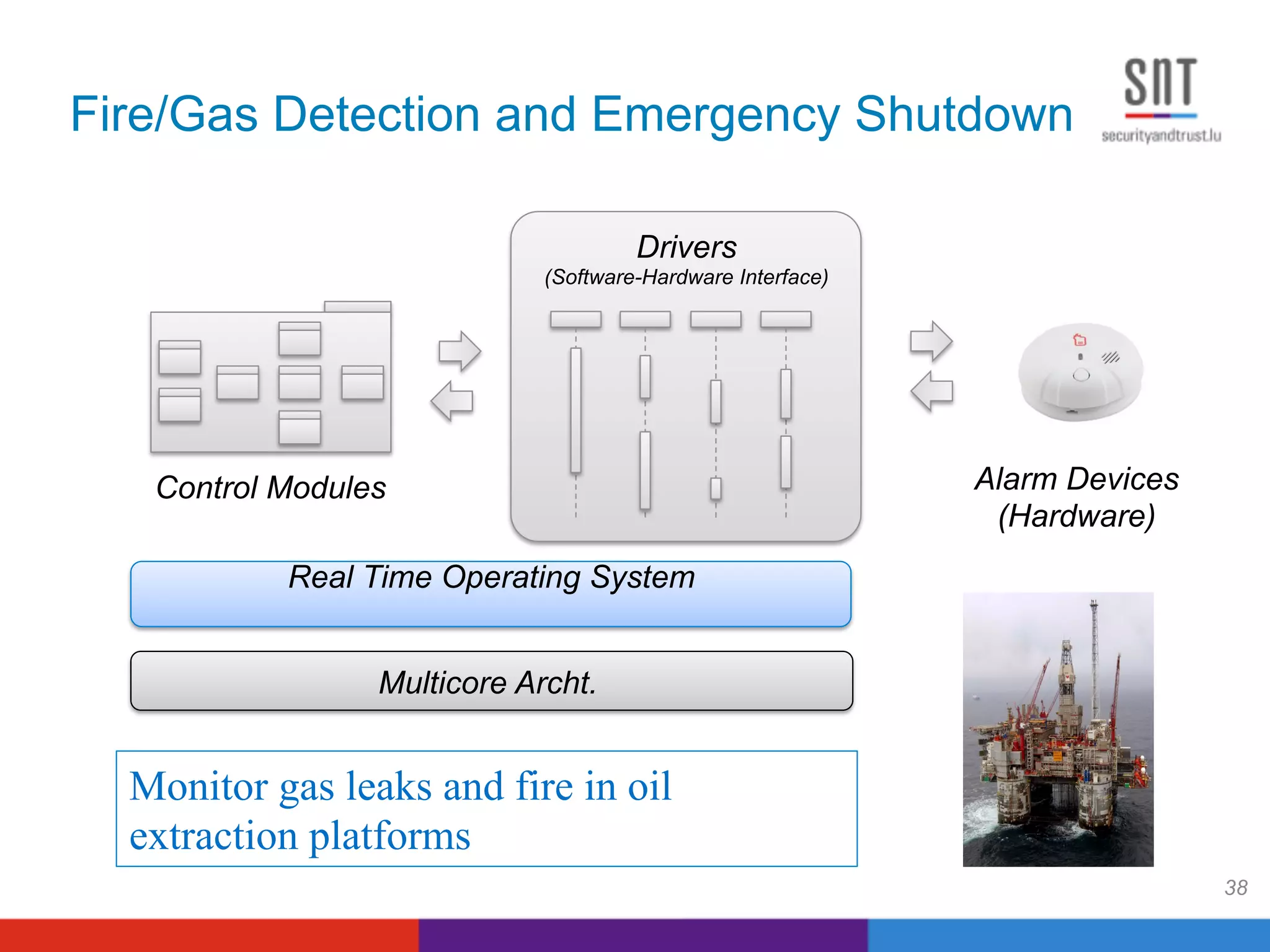

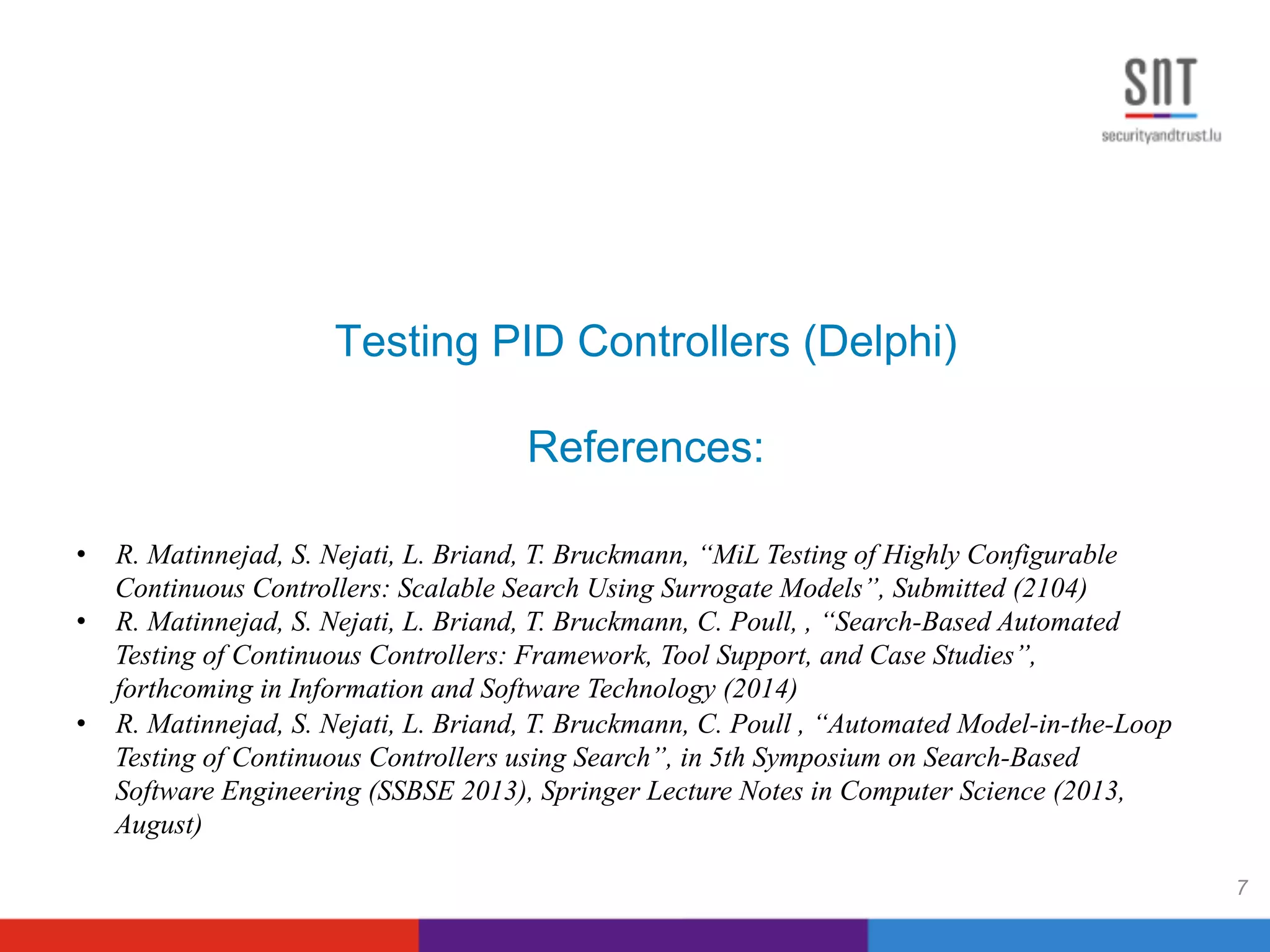

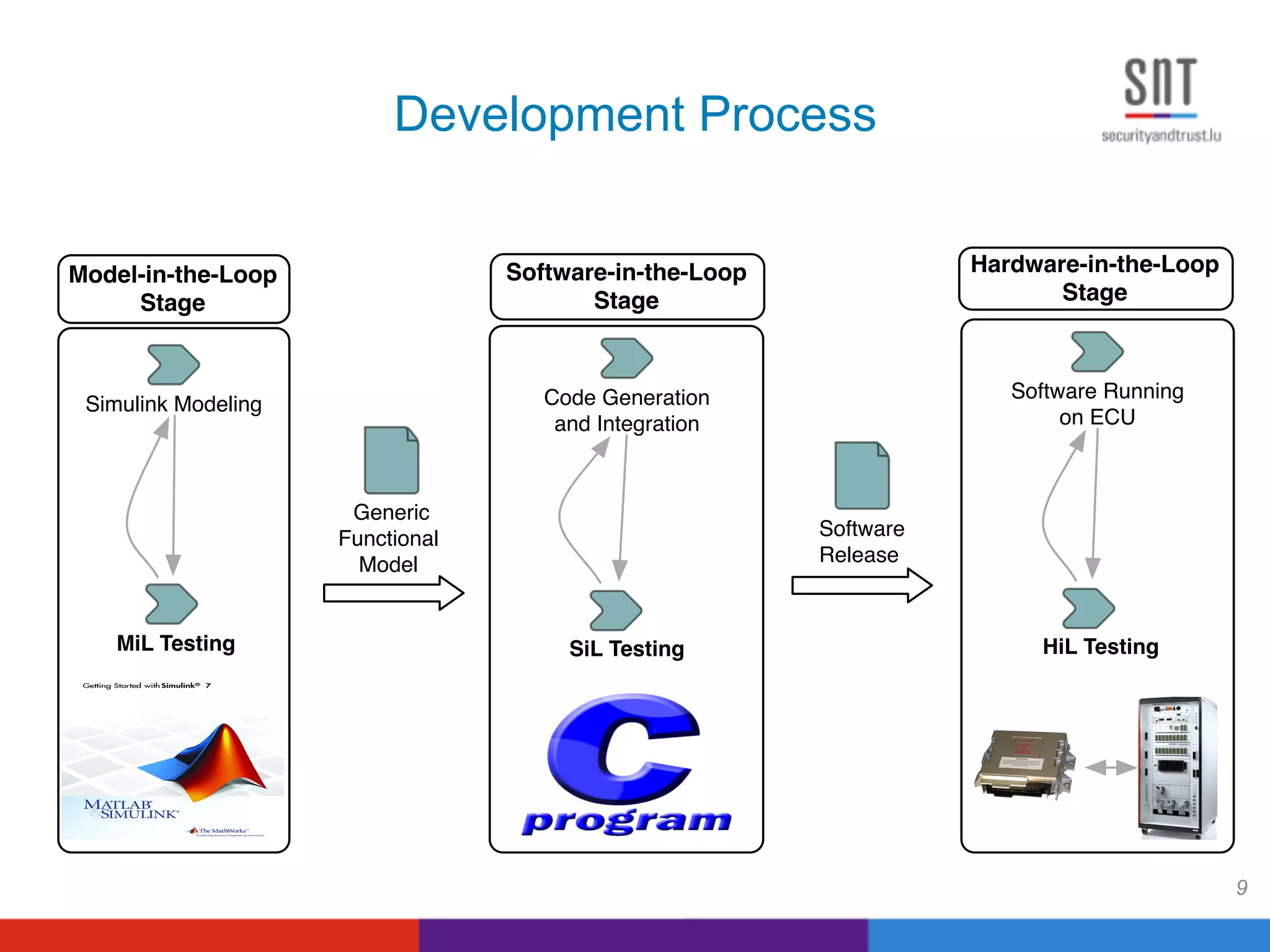

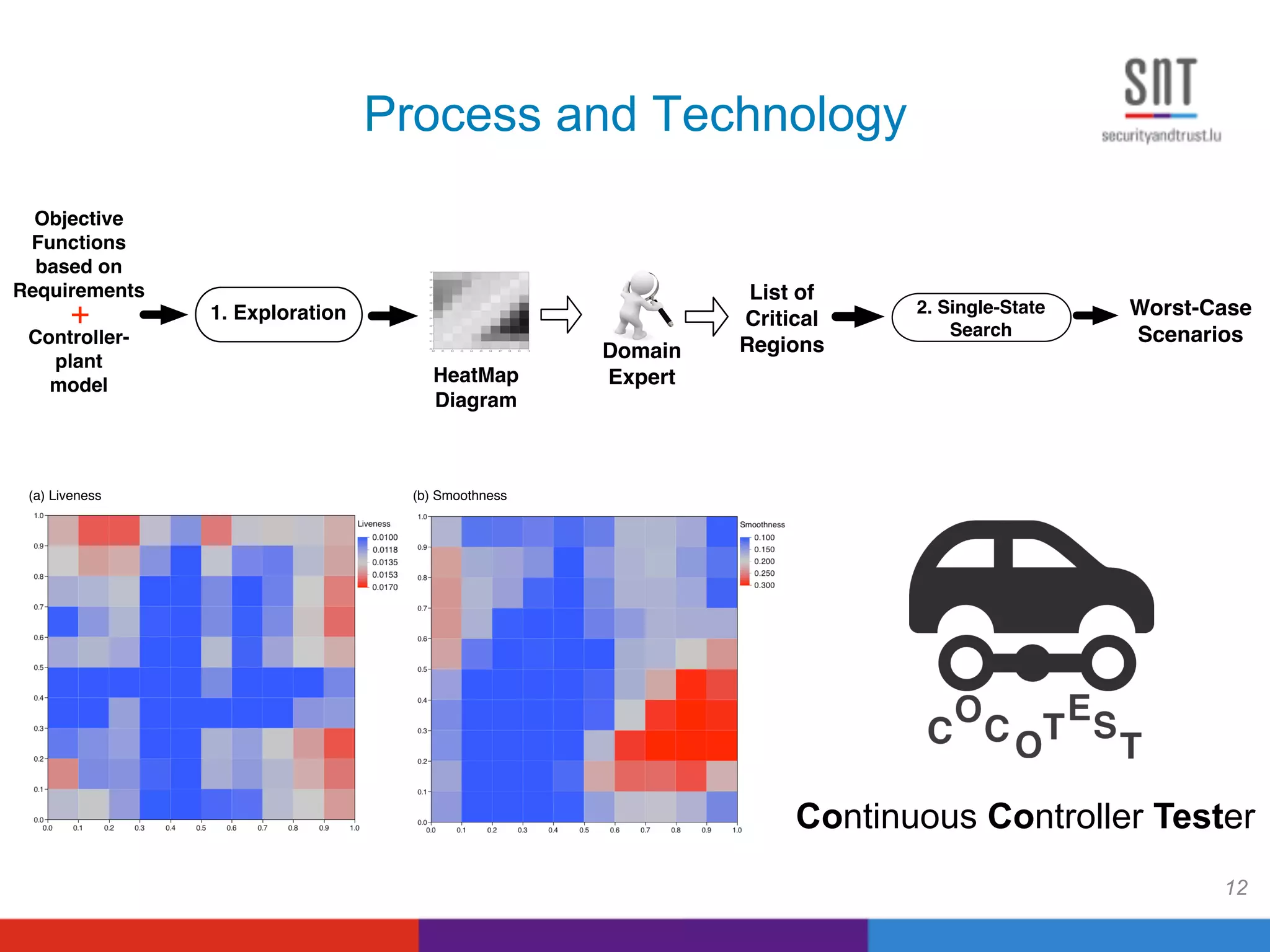

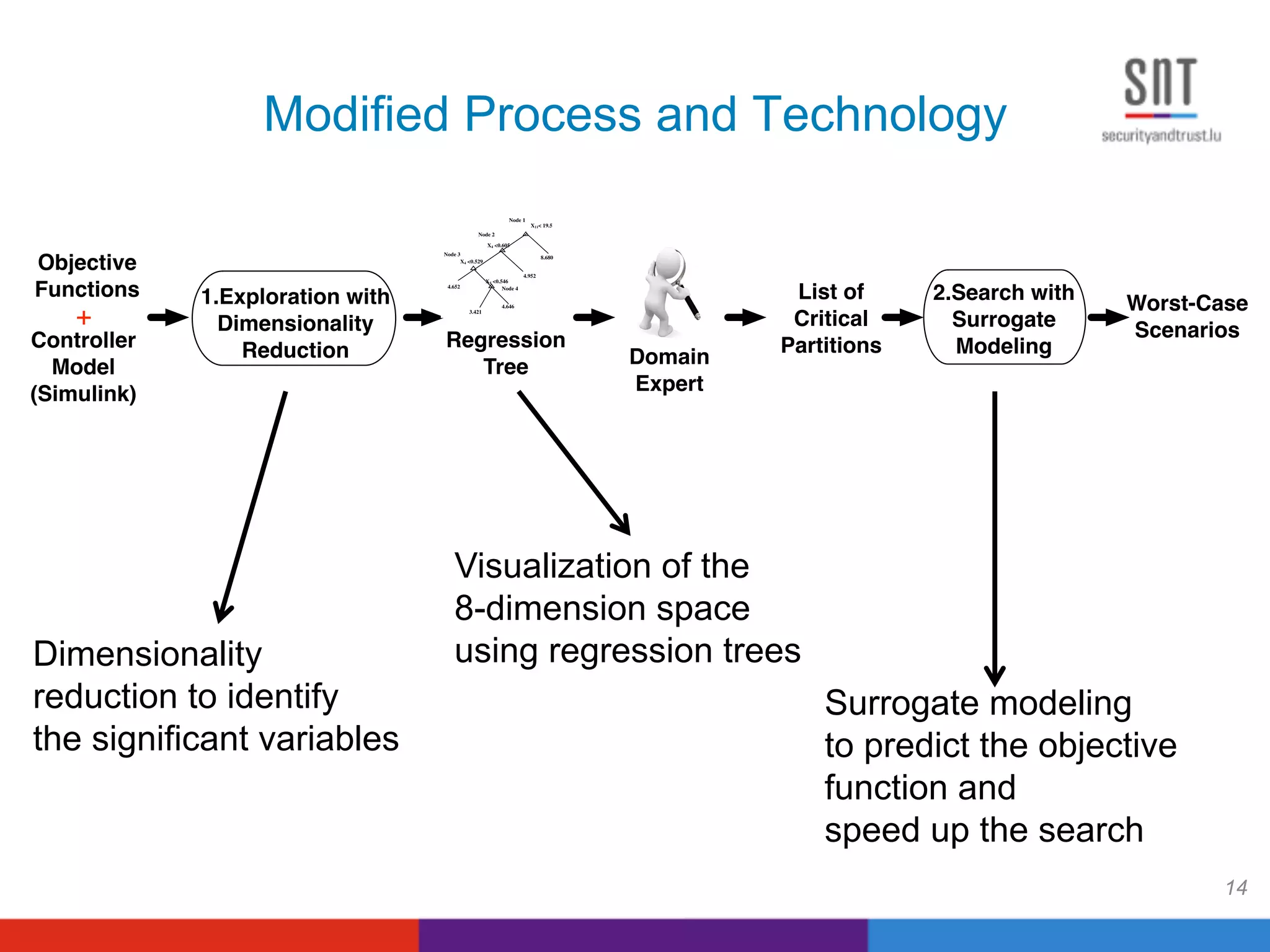

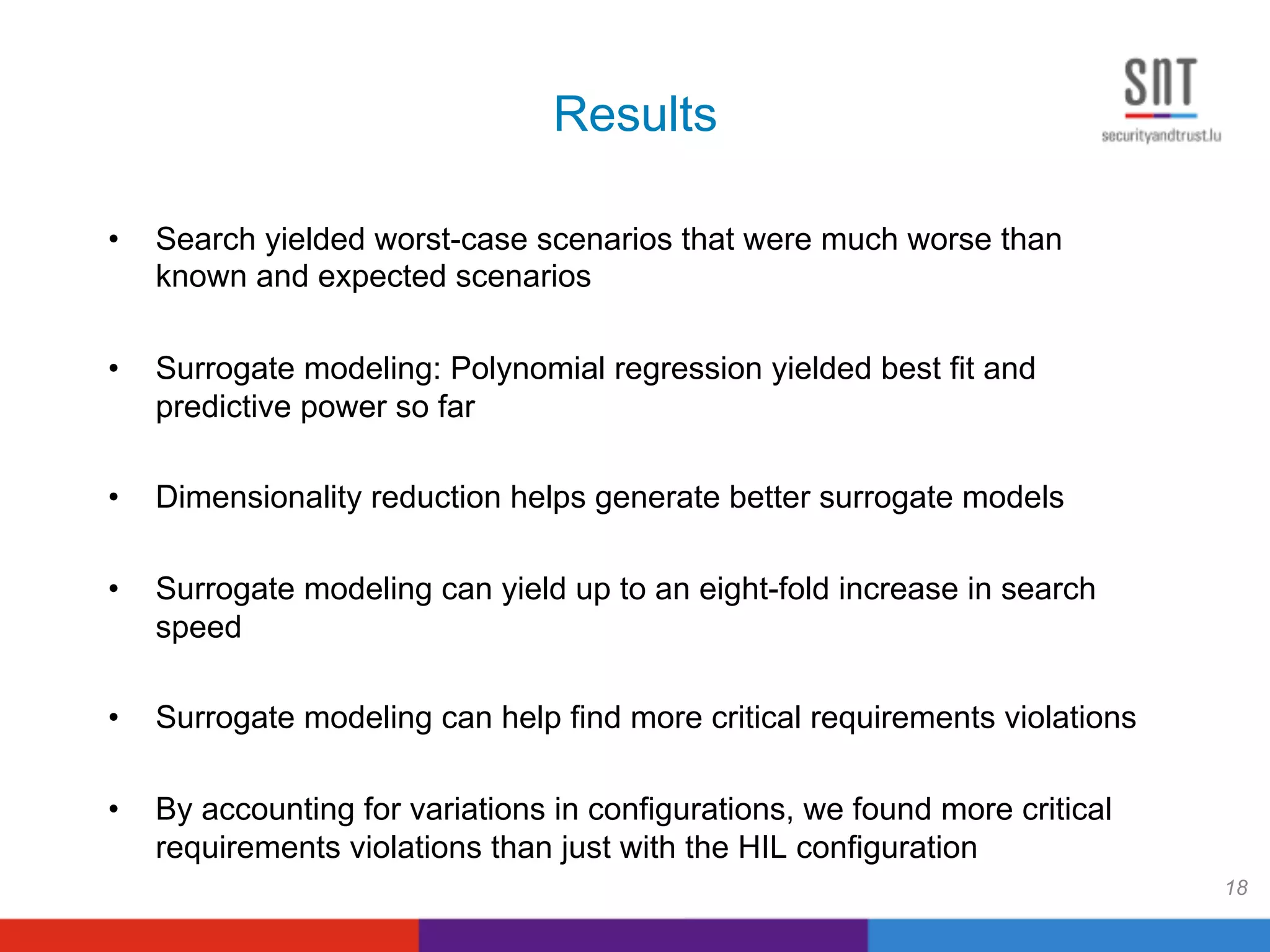

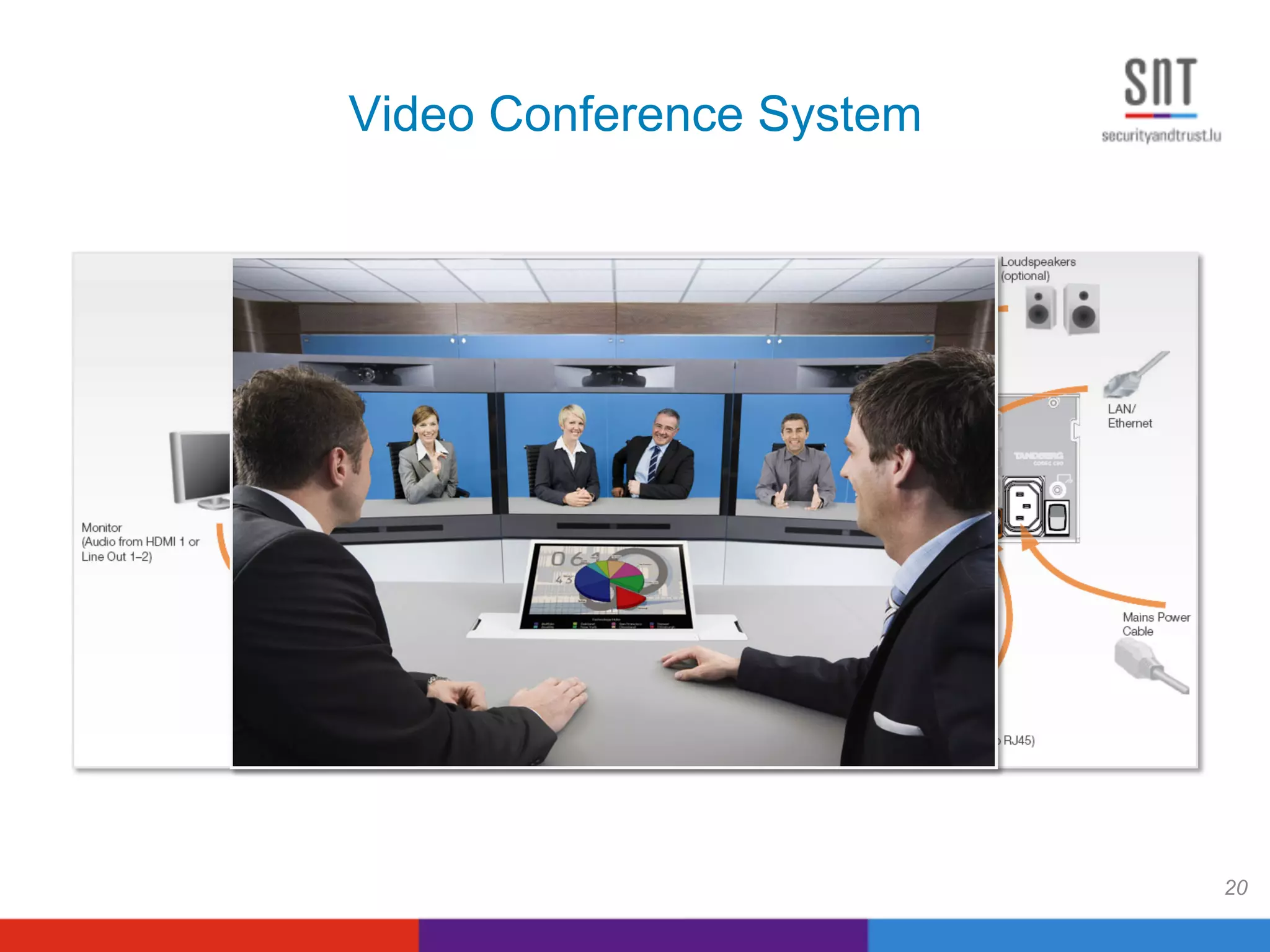

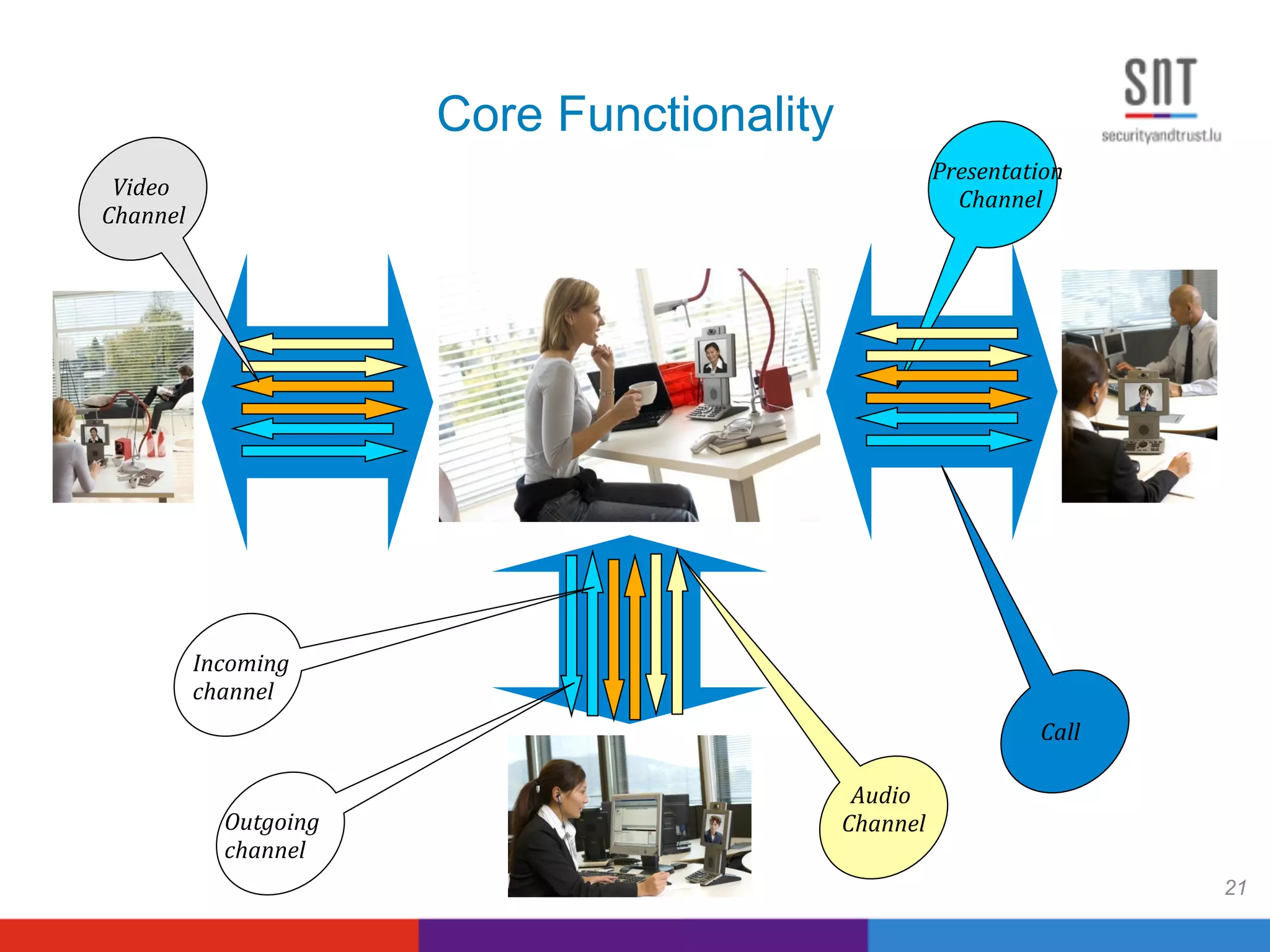

This document summarizes four research projects on search-based software testing, each conducted in collaboration with an industry partner: testing PID controllers with Delphi, robustness testing of a video conferencing system with Cisco, environment-based testing of a seismic acquisition system with WesternGeco, and stress testing of safety-critical drivers for the oil and gas industry with Kongsberg. It also outlines lessons learned from these collaborations and discusses effective models of collaborative research and innovation between academia and industry.

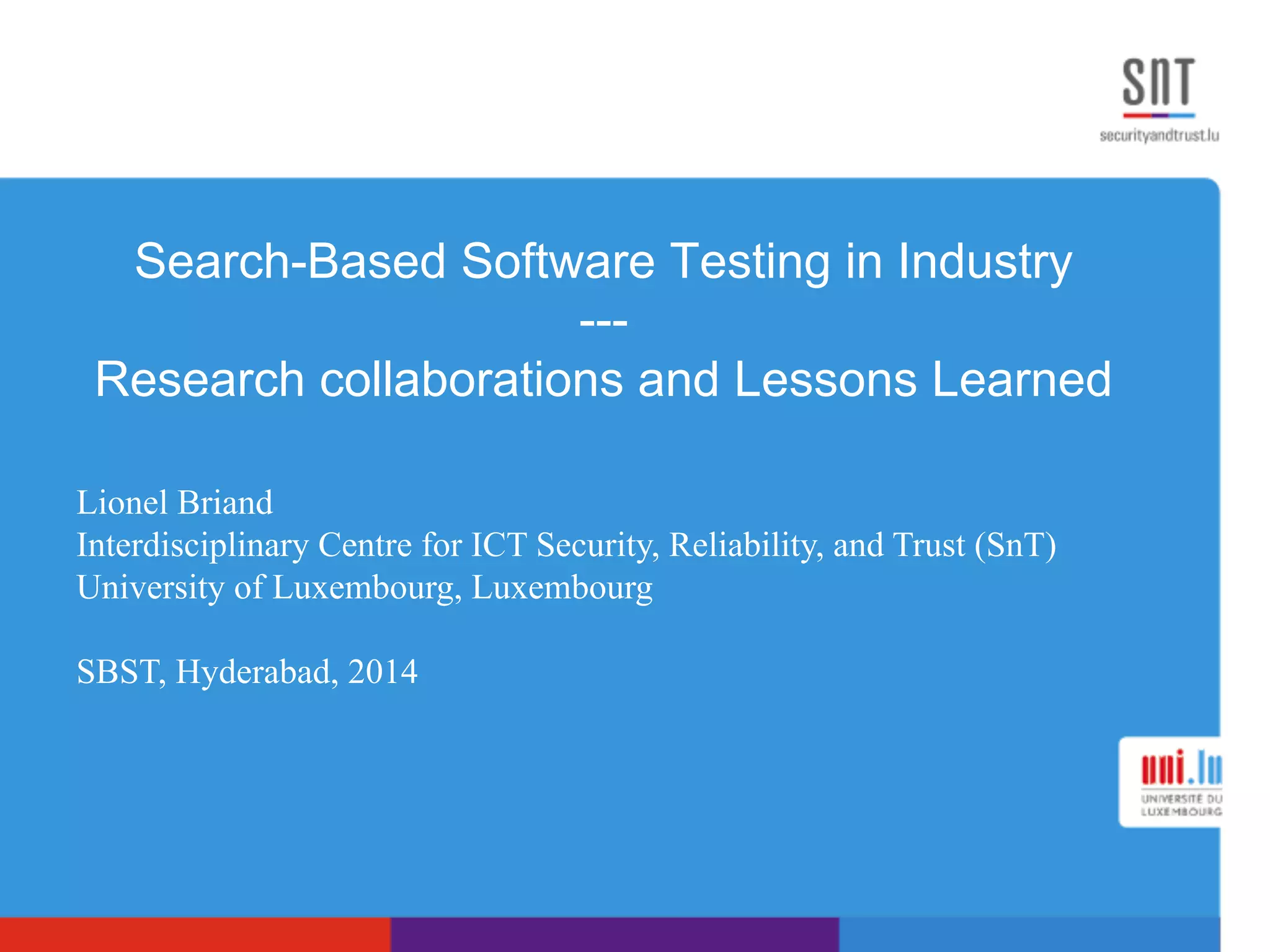

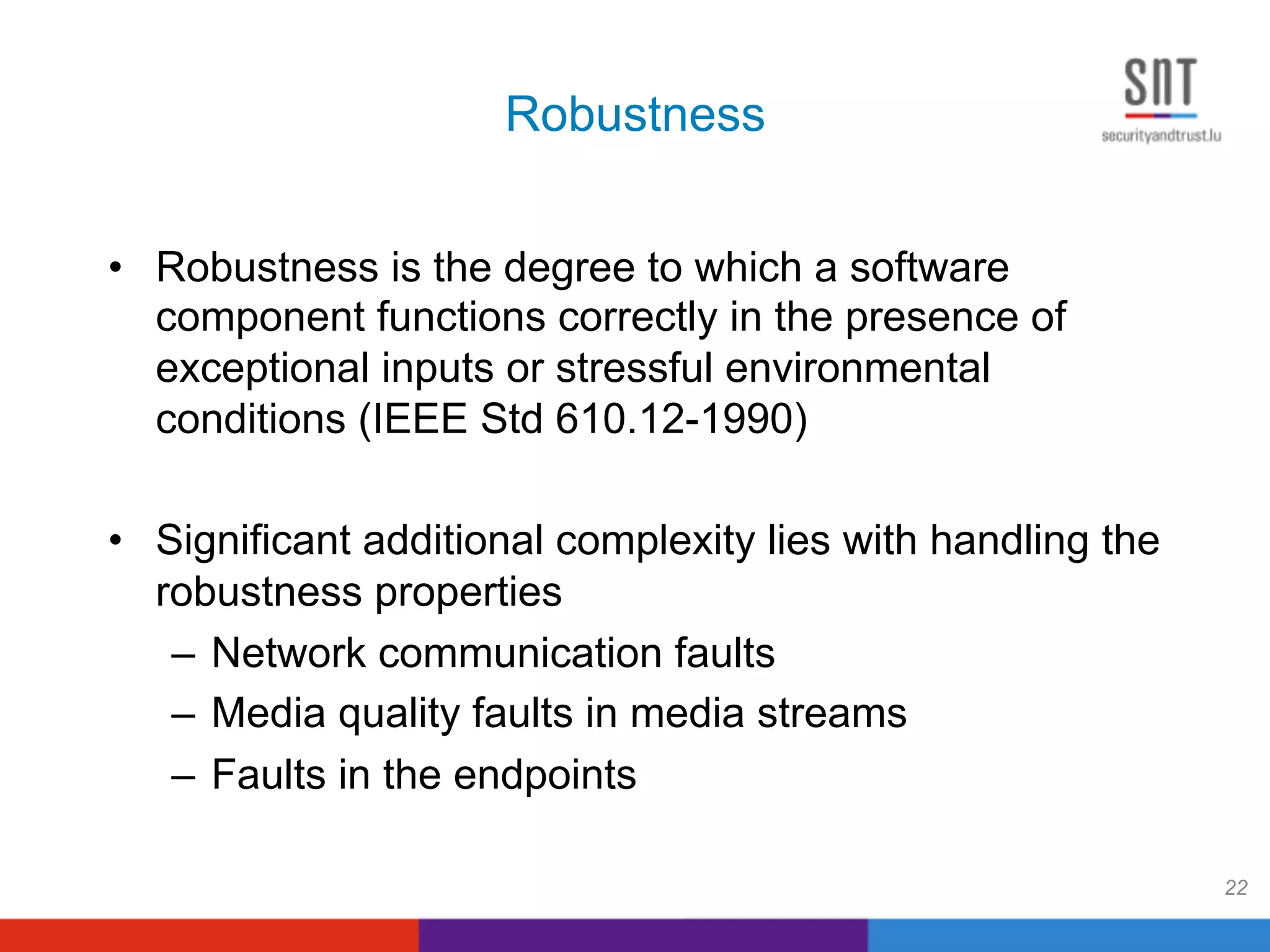

![Slide 23, "Cross-Cutting Concern". Base model: states Idle [#calls=0], NotFull [0<#calls<max], and Full [#calls=max], with transitions dial(), dial() [#calls<max-1], dial() [#calls=max-1], disconnect(), disconnect() [#calls>1], and disconnect() [#calls=1]. Cross-cutting concern: a Recovery […] state with After(time) and DisconnectAll(), guarded by "PL>0 or PacketDelay>0 or ReorderDelay>0 or corrupt>0 or Duplicate>0" and "PL=0 && PacketDelay=0 && ReorderDelay=0 && corrupt=0 && Duplicate=0".](https://image.slidesharecdn.com/briand-sbst-2014-140602224856-phpapp01/75/Keynote-SBST-2014-Search-Based-Testing-23-2048.jpg)
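
The base model on this slide can be read as a small counter-based state machine. The following is a minimal Python sketch under stated assumptions, not the authors' model: the class name `CallModel`, the function `network_degraded`, and the way transitions are wired to states are inferred from the state invariants and guard labels, since the diagram's arrows are not recoverable from the text. The Recovery state, `After(time)`, and `DisconnectAll()` are left out because the slide elides their details.

```python
from dataclasses import dataclass


@dataclass
class CallModel:
    """Hypothetical rendering of the base model: a bounded count of active calls."""
    max_calls: int
    calls: int = 0

    @property
    def state(self) -> str:
        # State invariants as labeled on the slide.
        if self.calls == 0:
            return "Idle"      # [#calls = 0]
        if self.calls < self.max_calls:
            return "NotFull"   # [0 < #calls < max]
        return "Full"          # [#calls = max]

    def dial(self) -> None:
        # Assumption: dial() is not enabled once #calls = max.
        if self.calls >= self.max_calls:
            raise RuntimeError("dial() is not enabled in state Full")
        self.calls += 1

    def disconnect(self) -> None:
        # Assumption: disconnect() is not enabled when there are no calls.
        if self.calls == 0:
            raise RuntimeError("disconnect() is not enabled in state Idle")
        self.calls -= 1


def network_degraded(pl=0, packet_delay=0, reorder_delay=0, corrupt=0, duplicate=0) -> bool:
    """Guard of the cross-cutting concern: true if any fault parameter is above zero."""
    return pl > 0 or packet_delay > 0 or reorder_delay > 0 or corrupt > 0 or duplicate > 0
```

Deriving the state from the call count, instead of storing it separately, keeps each state invariant true by construction. The guards such as `[#calls=max-1]` then fall out of the counter arithmetic and need no explicit encoding.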