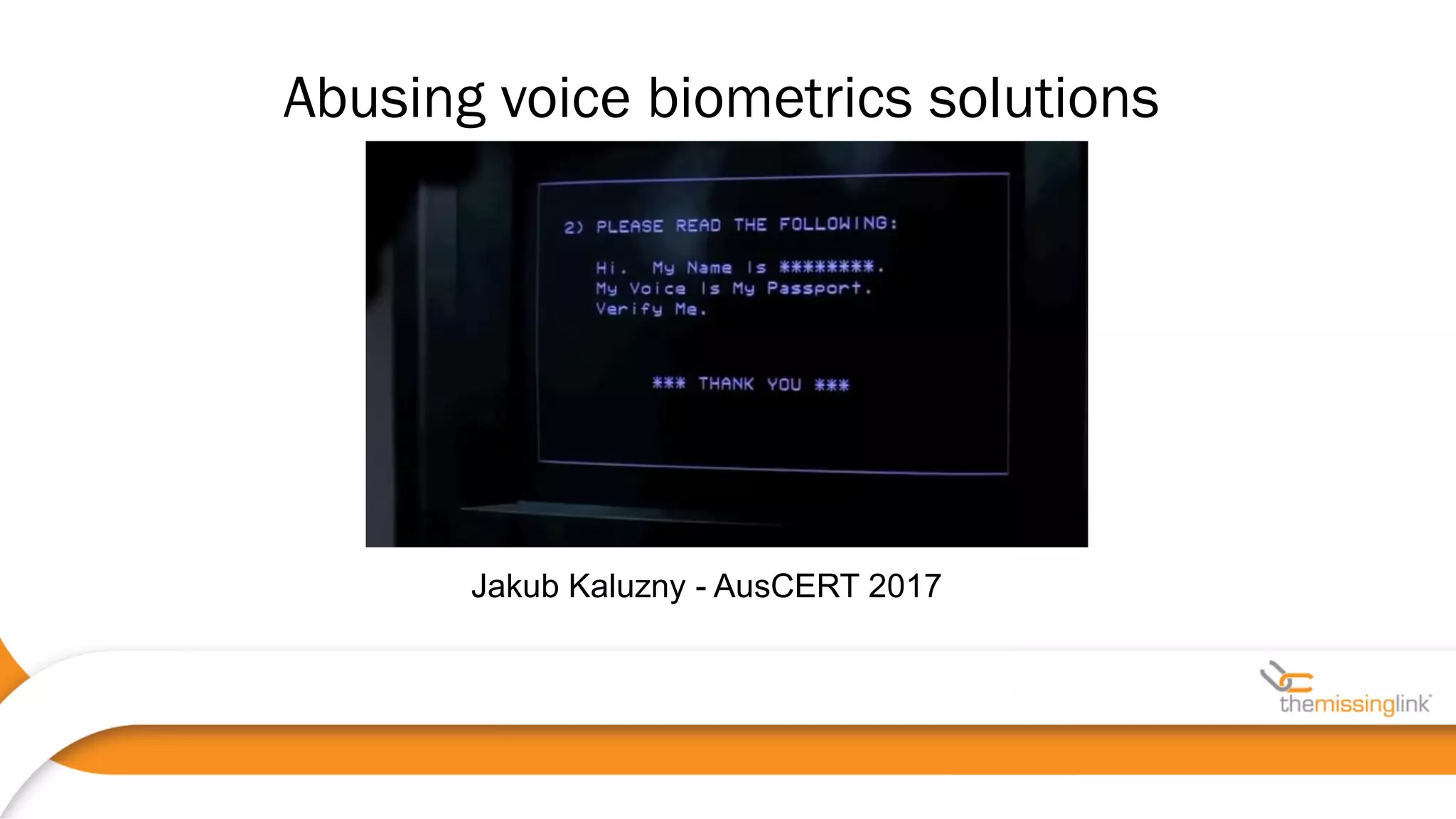

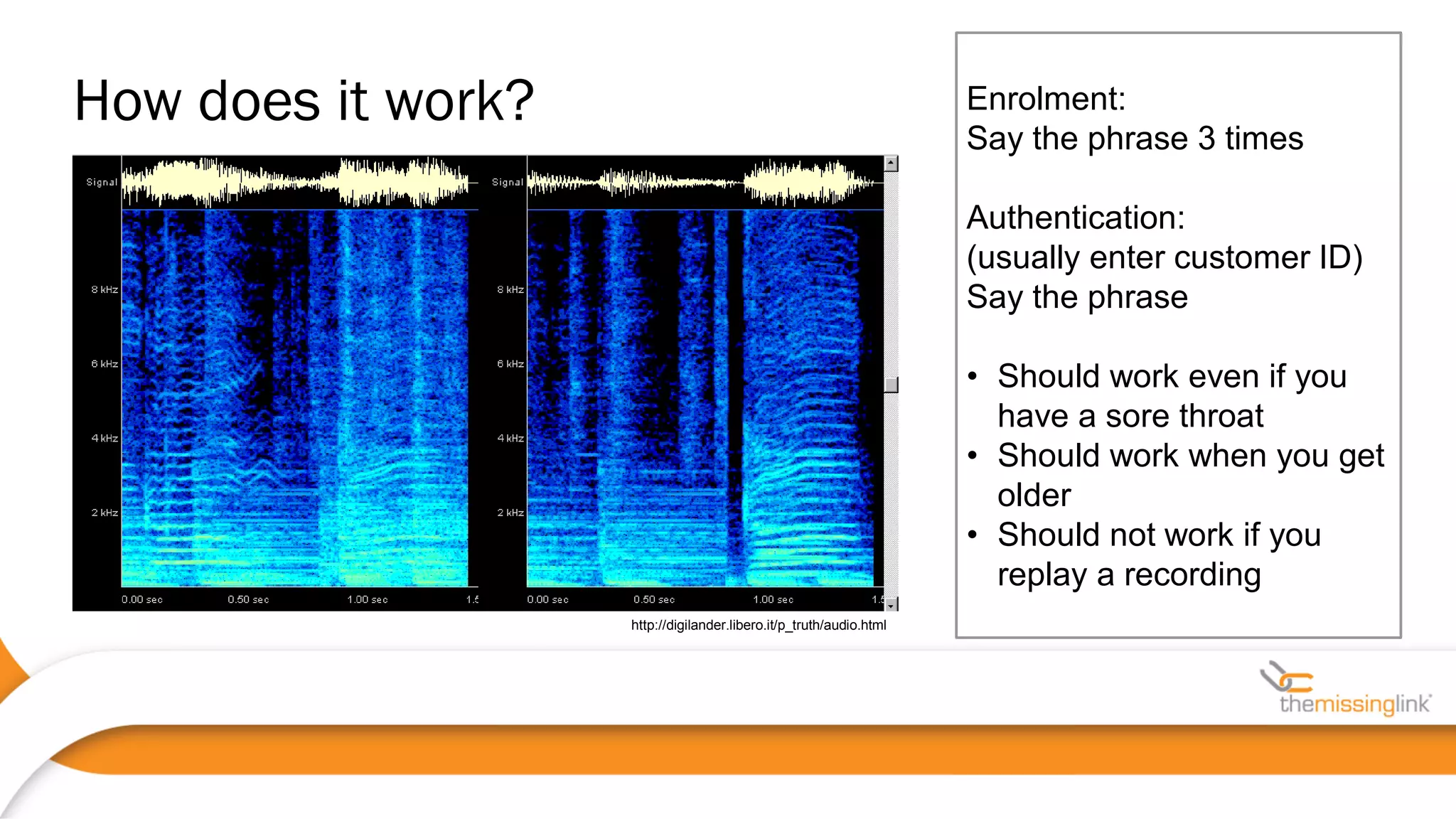

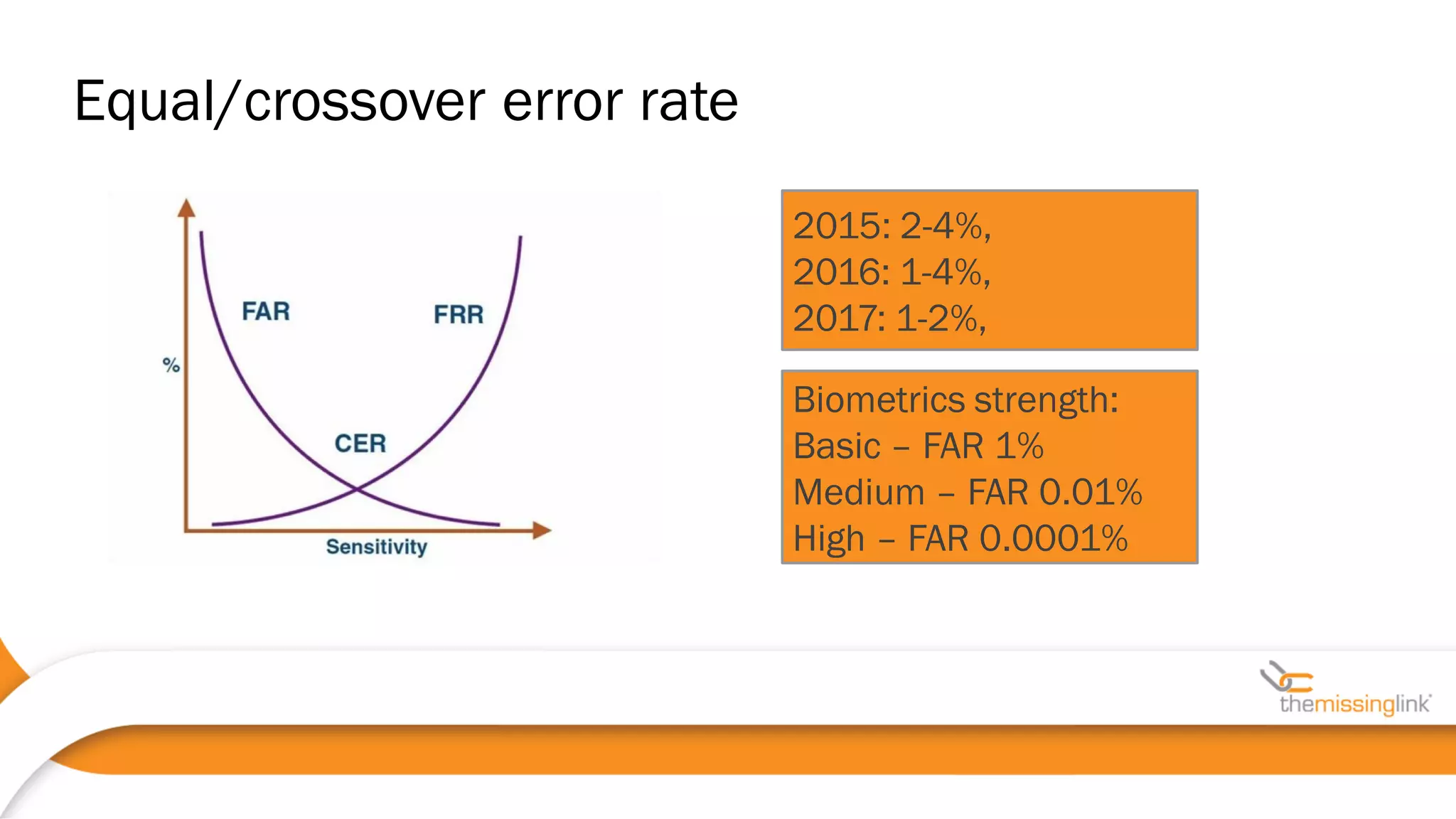

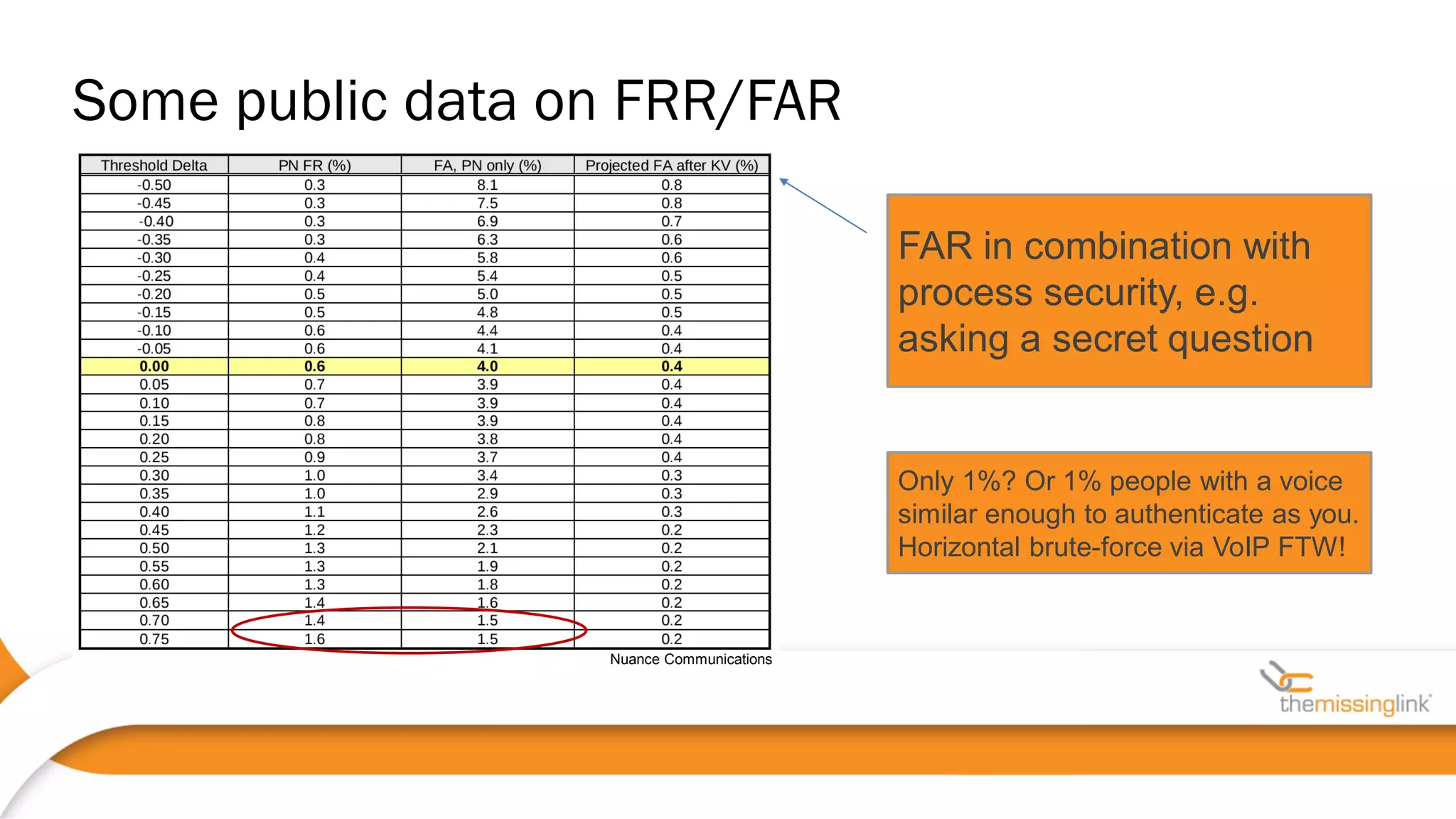

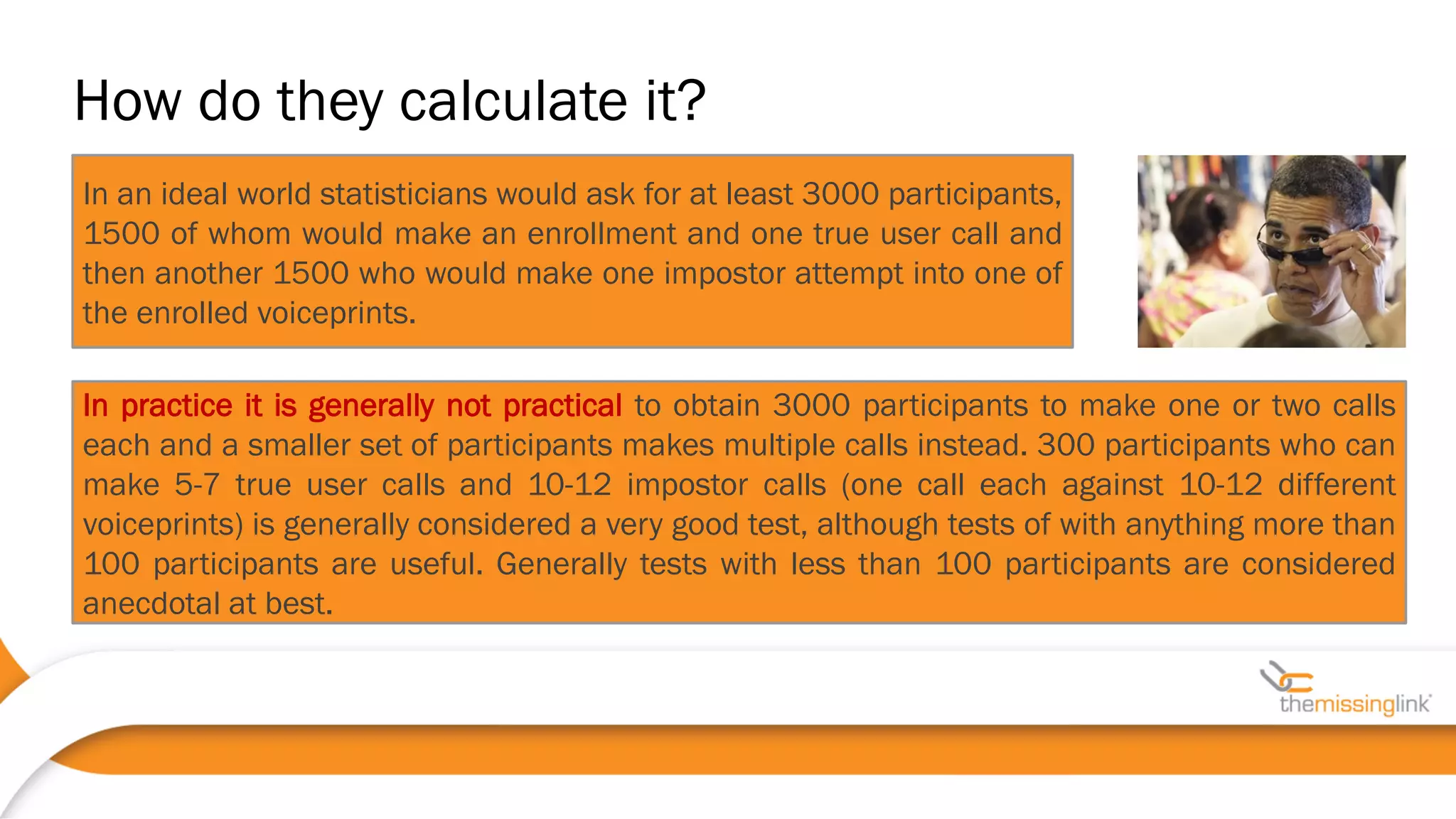

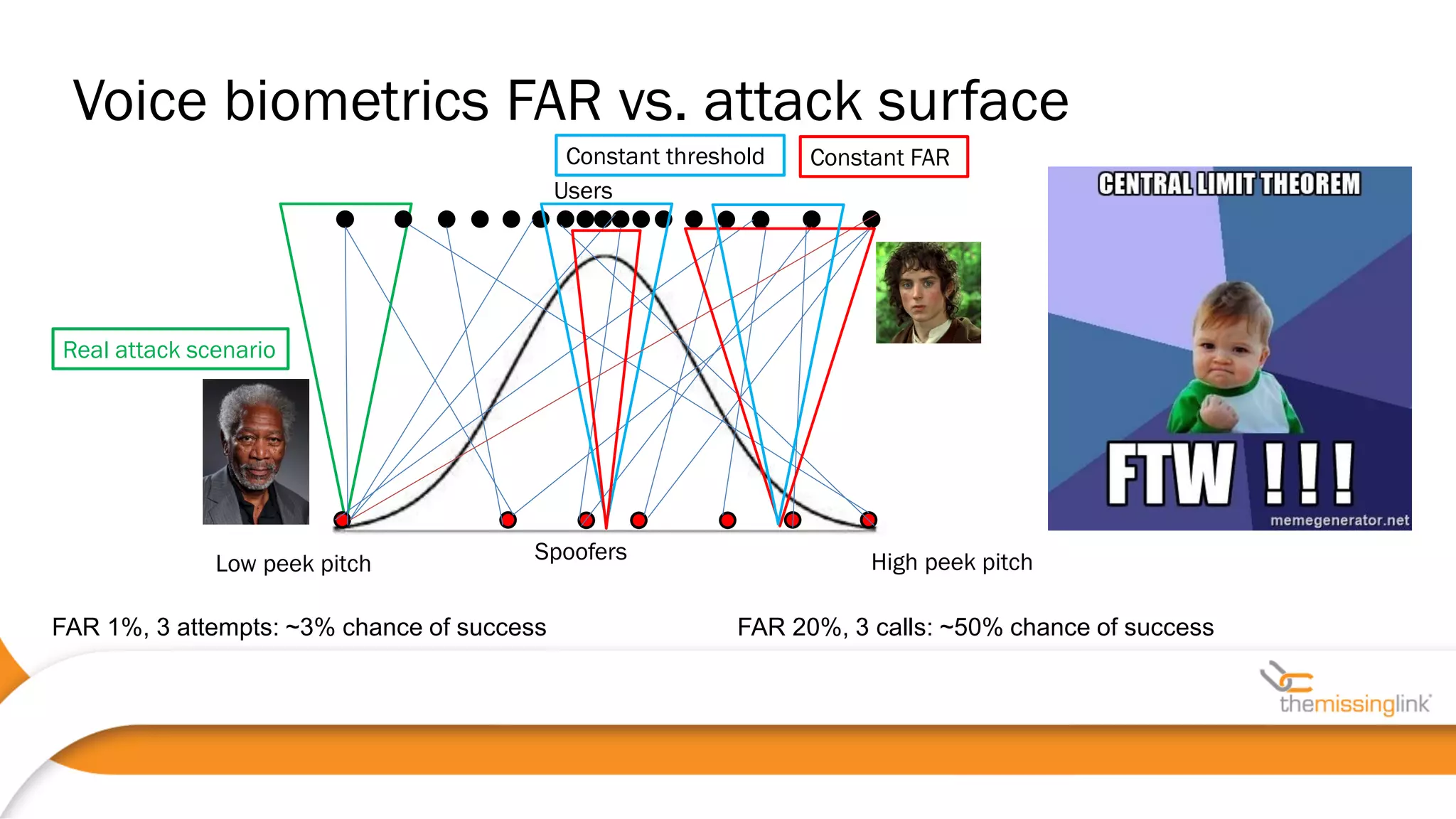

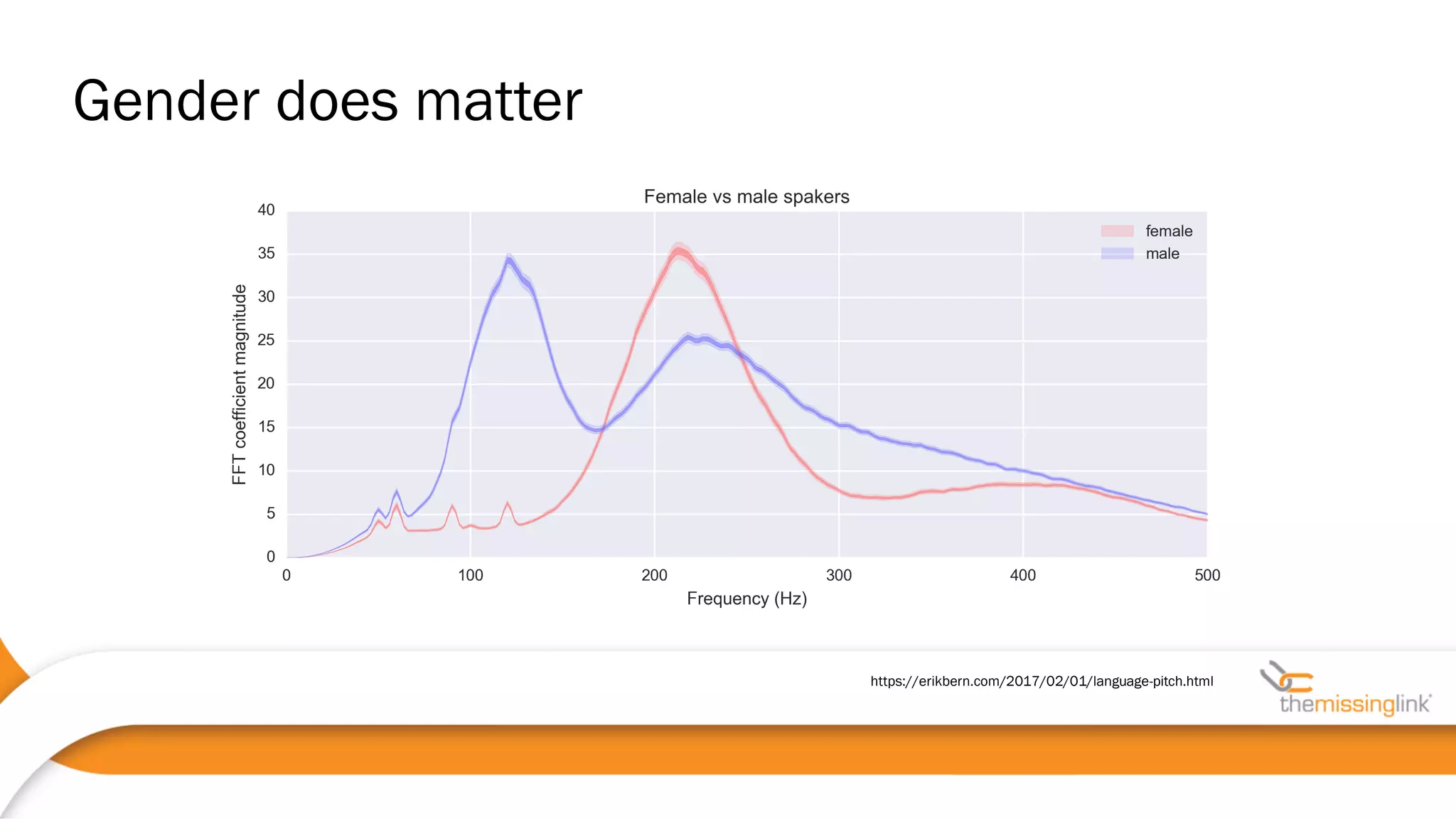

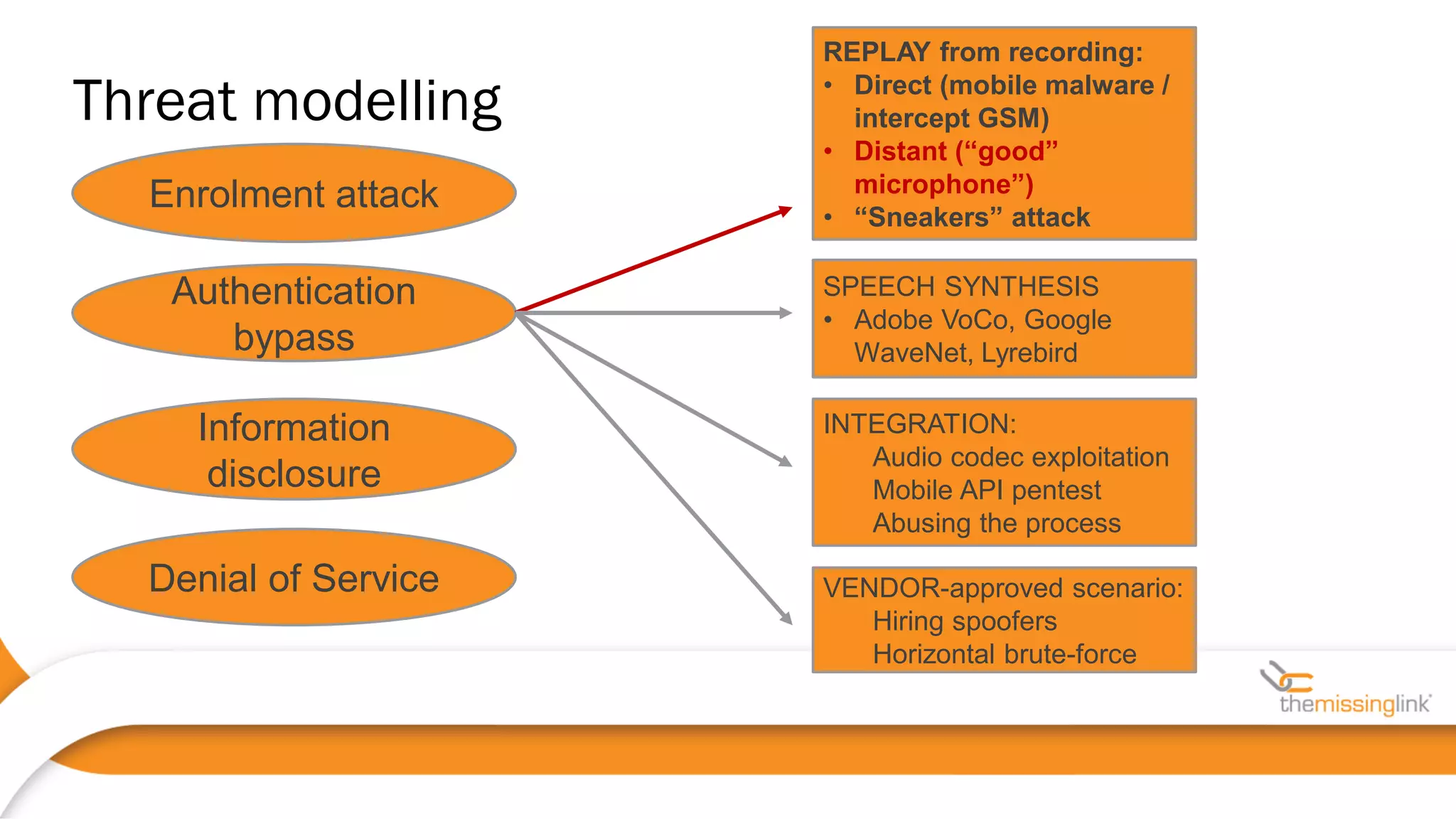

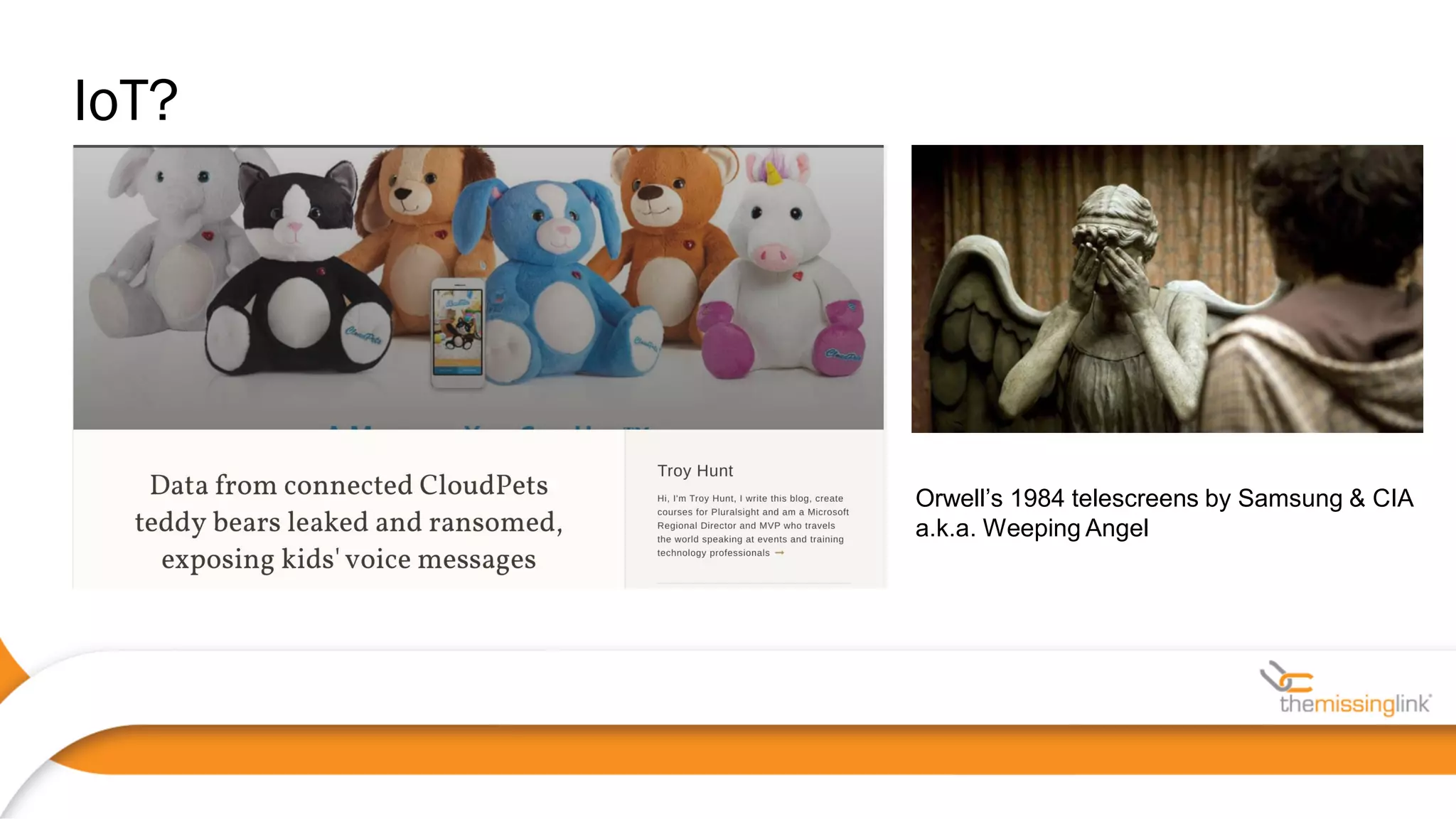

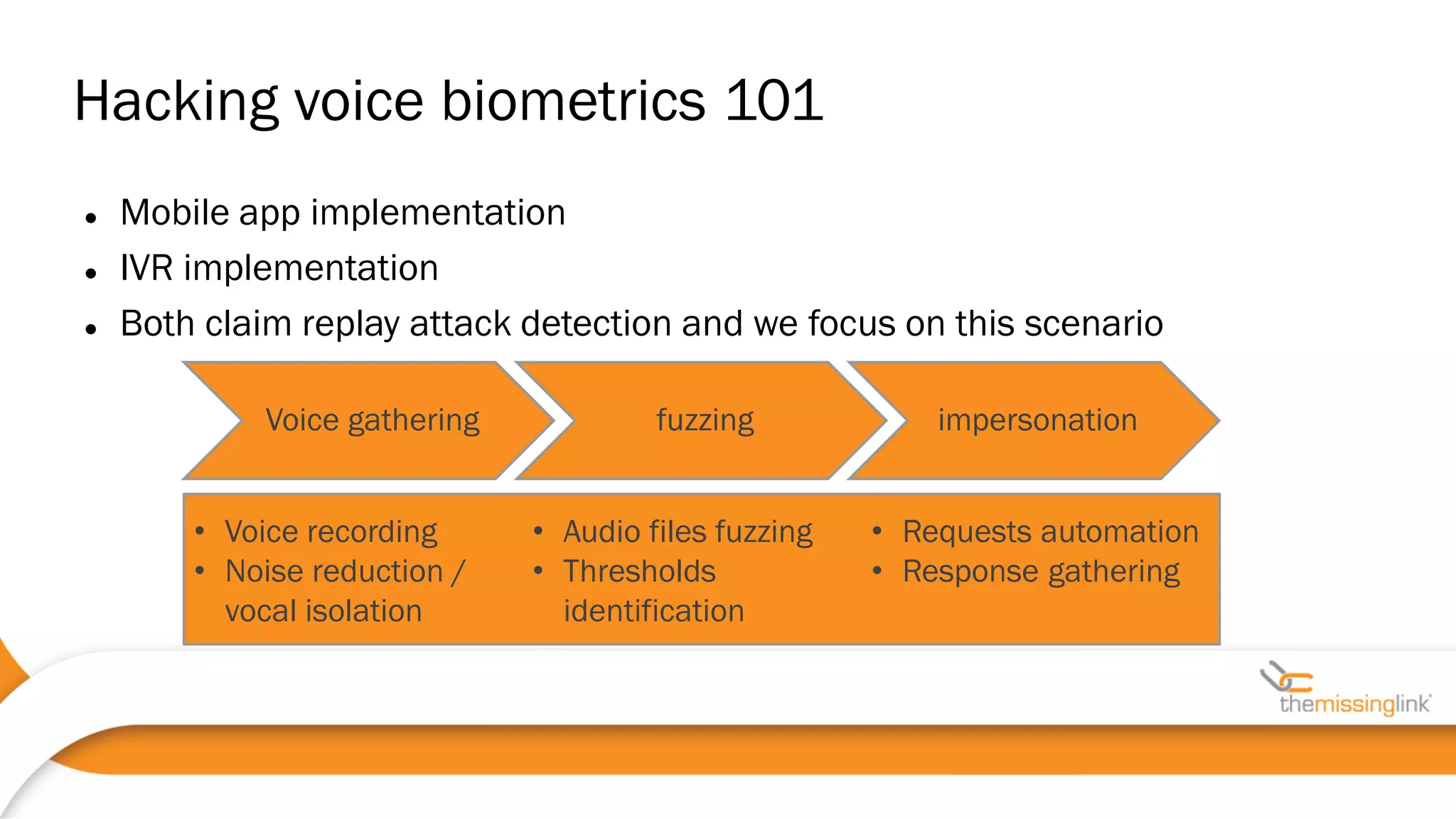

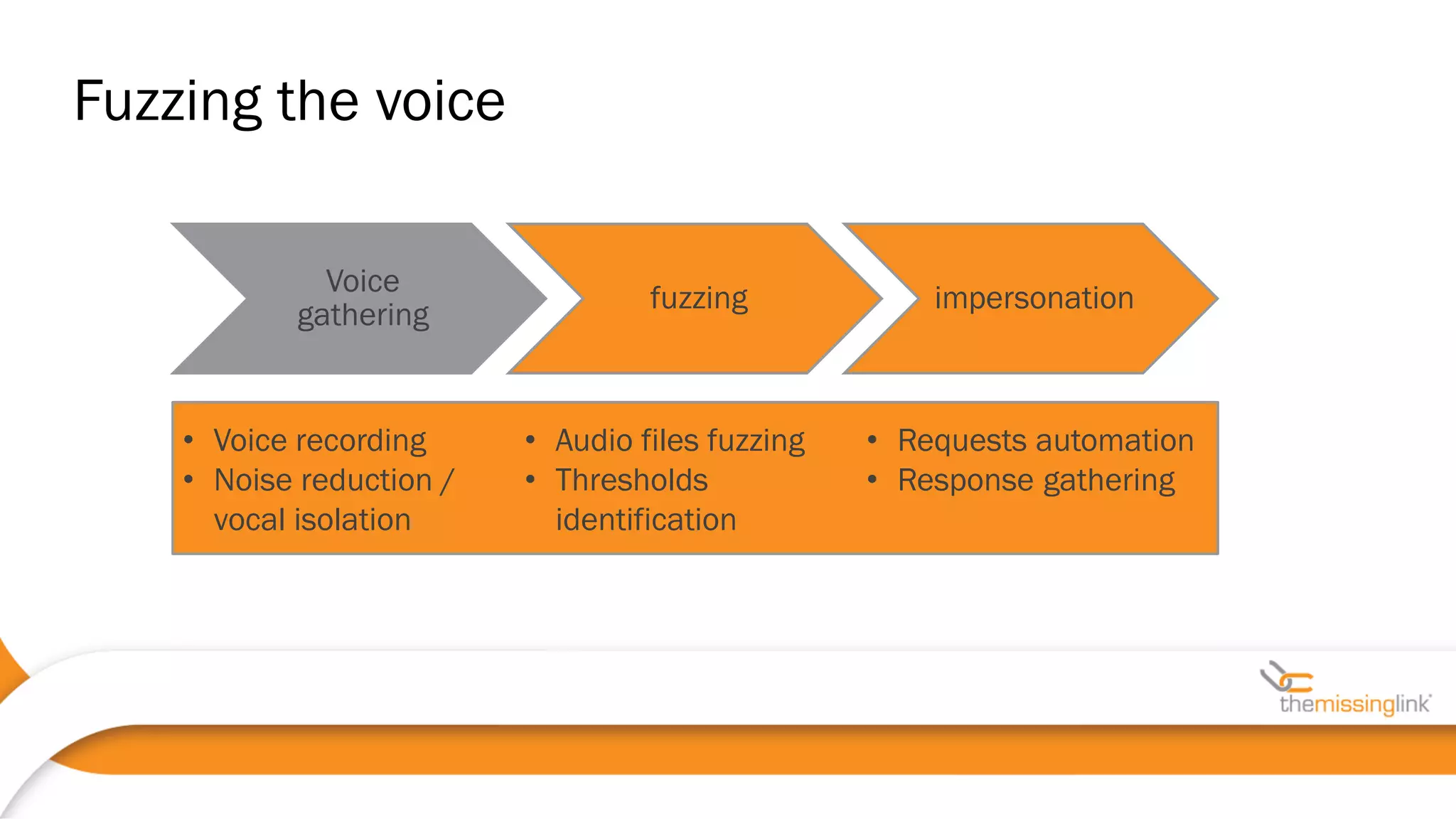

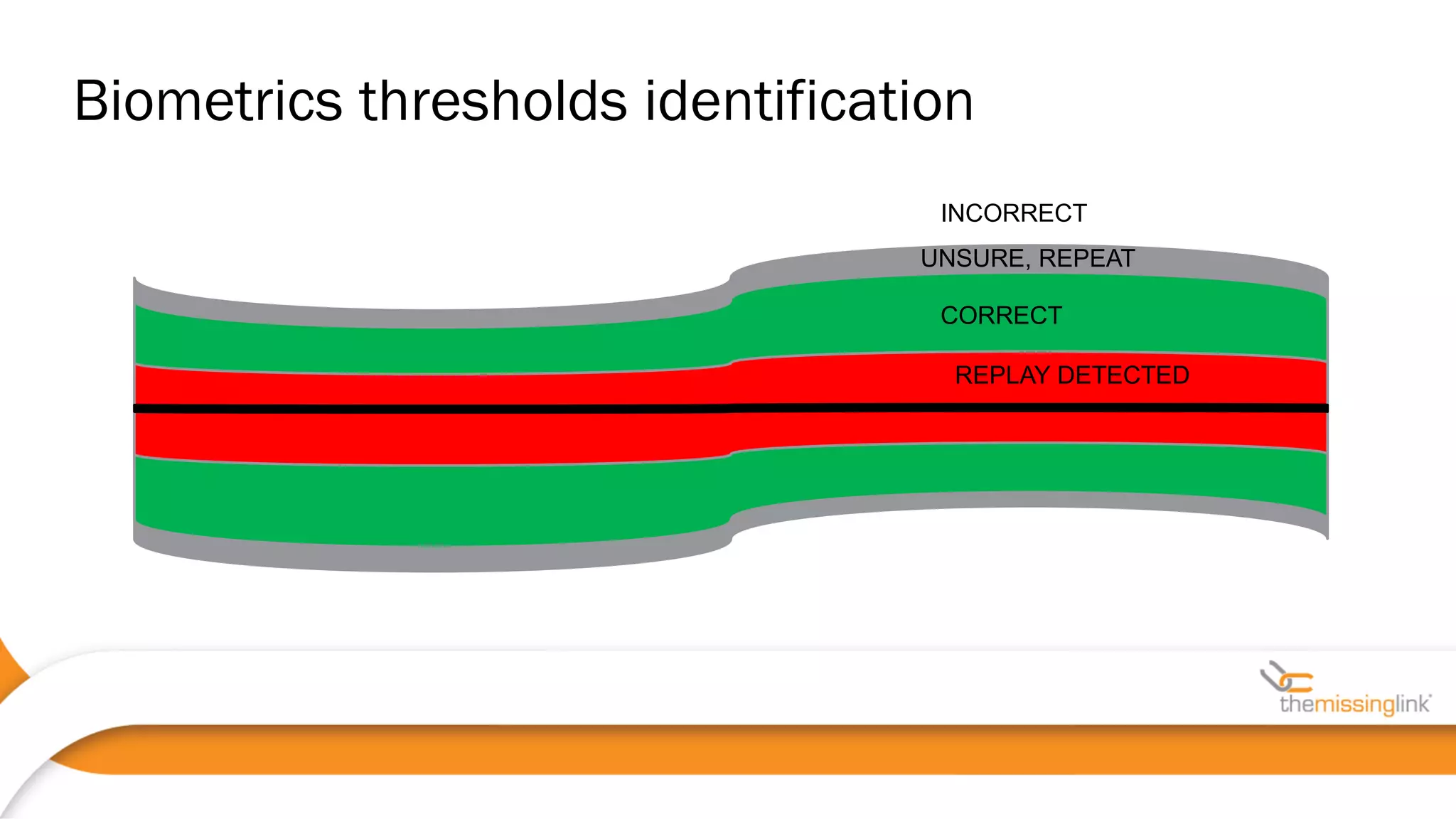

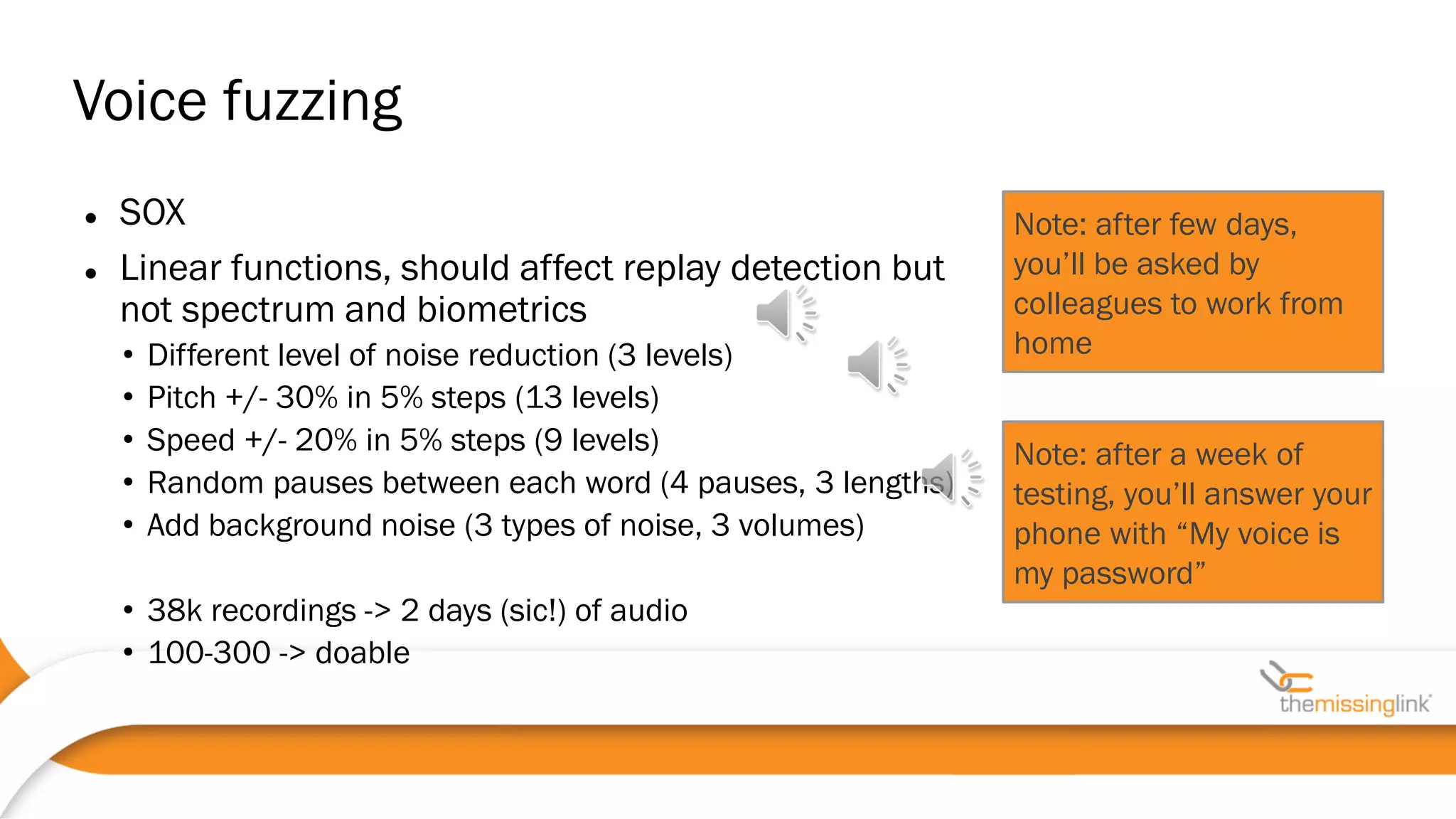

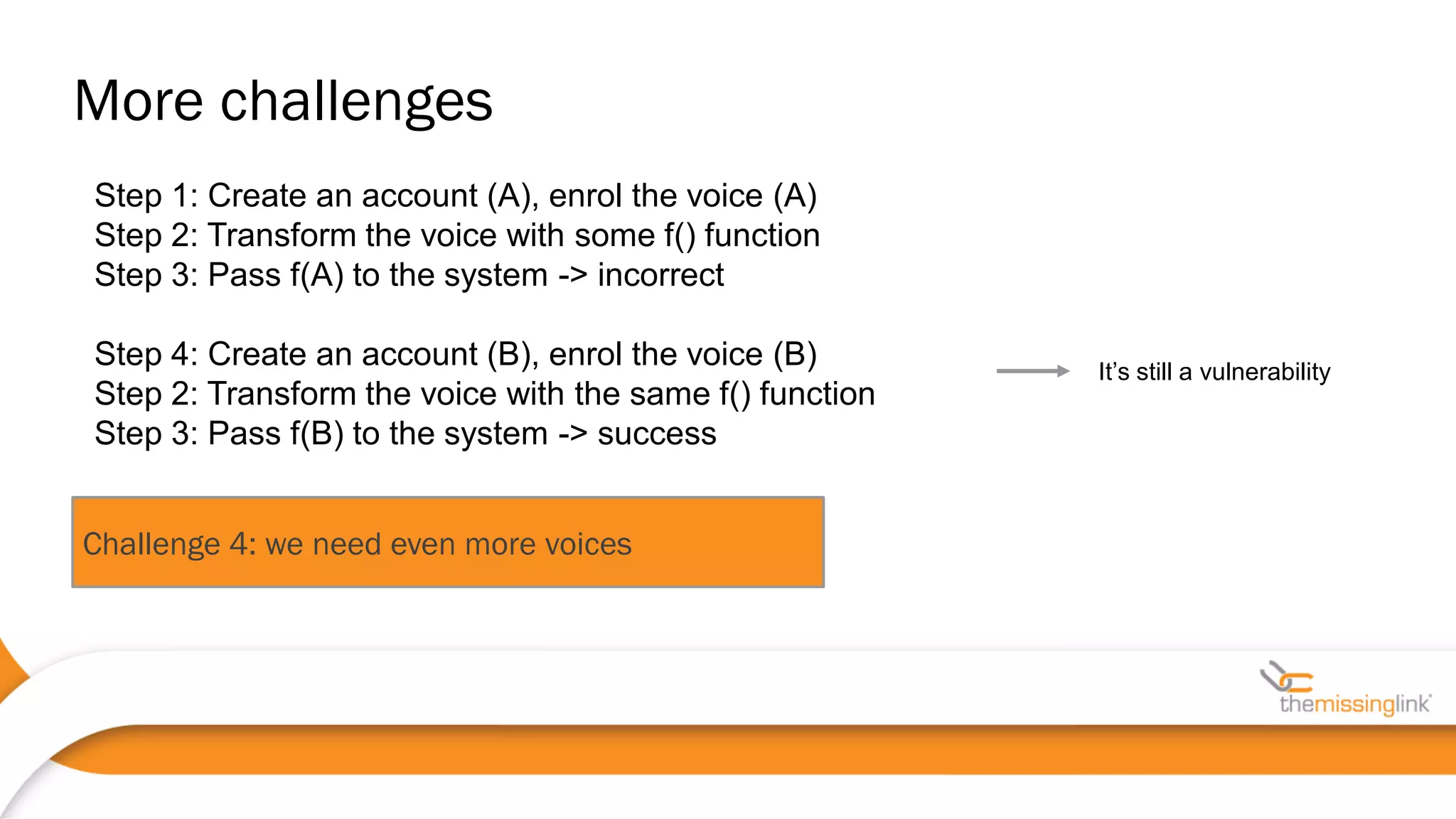

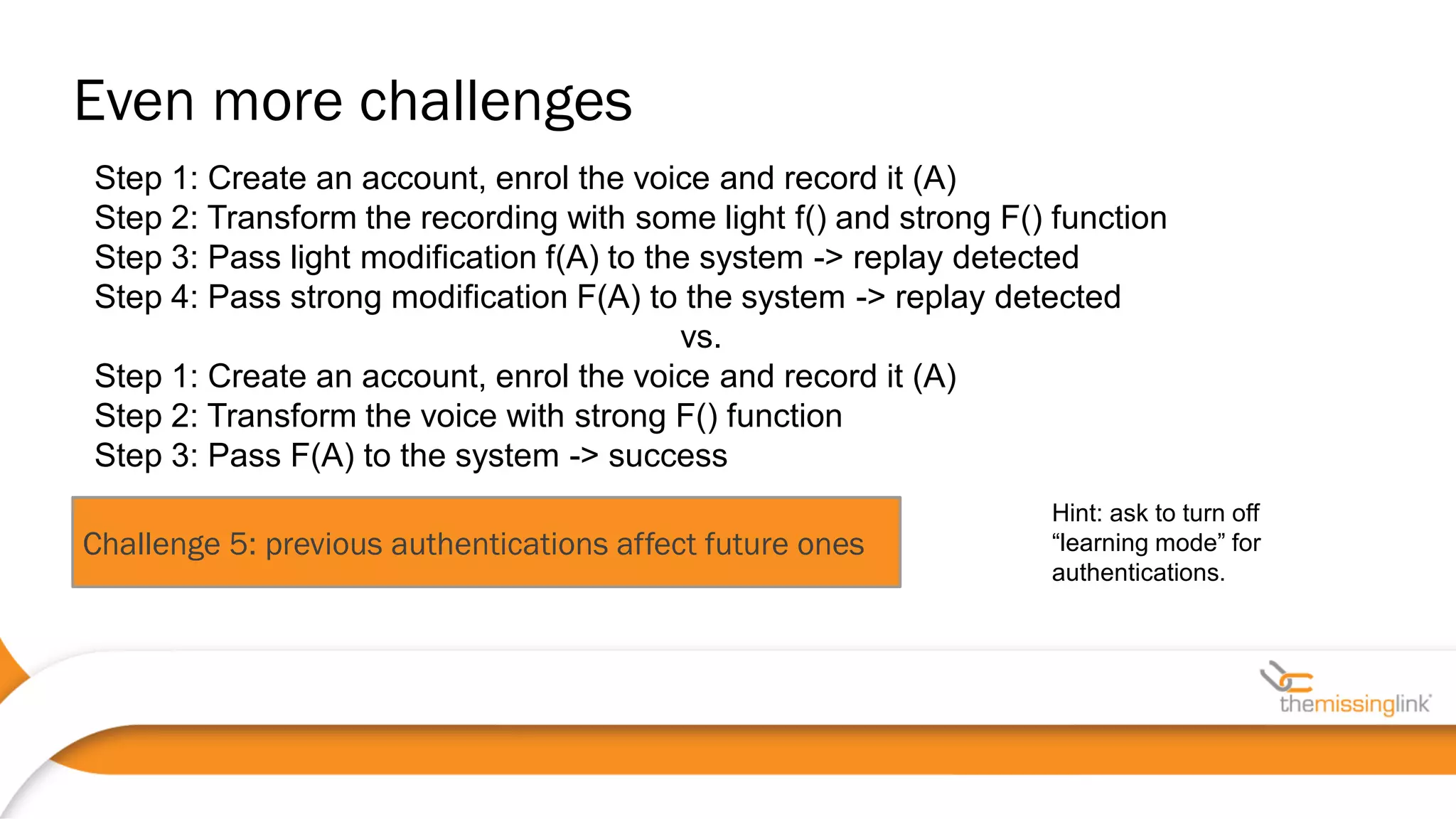

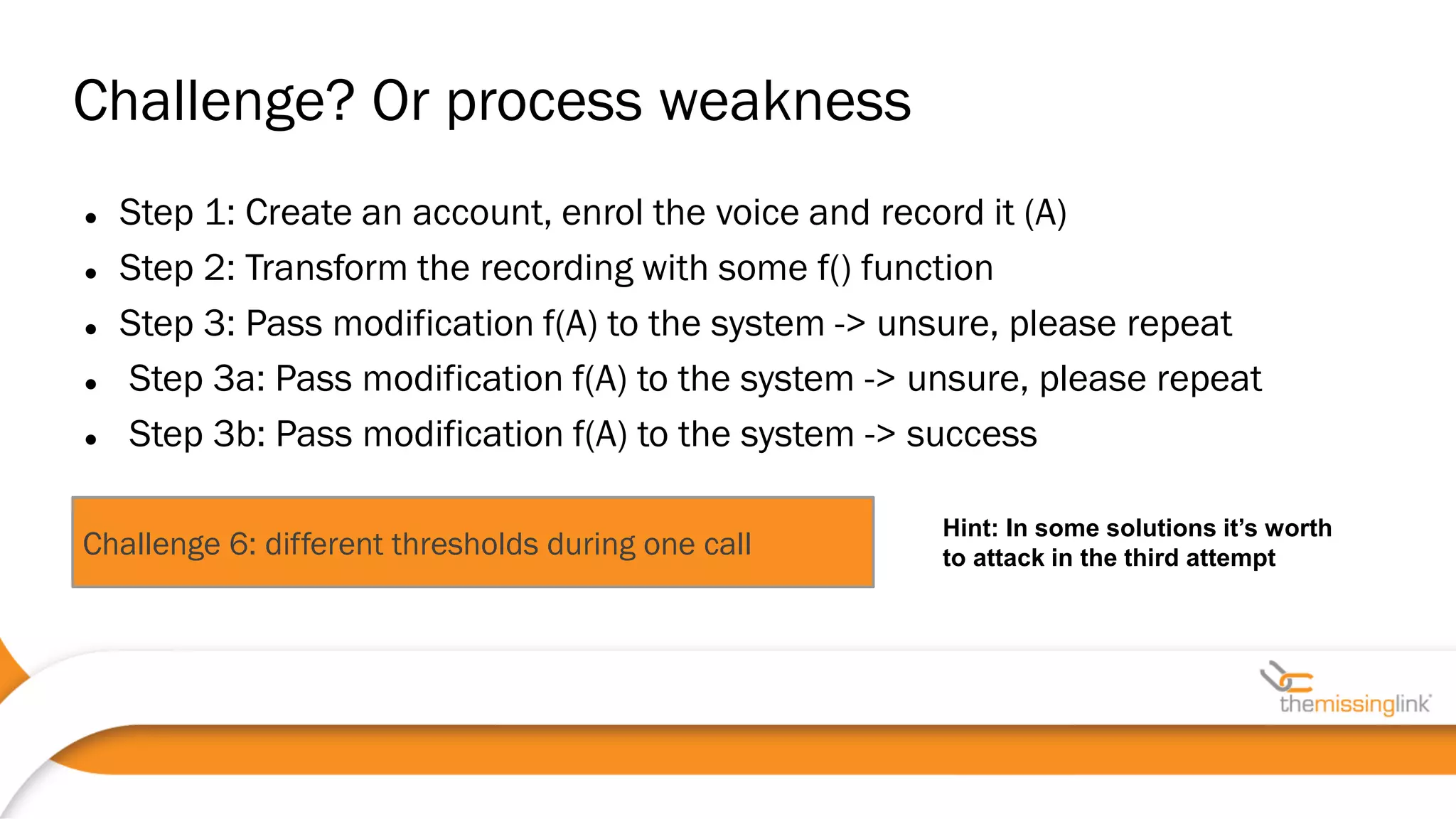

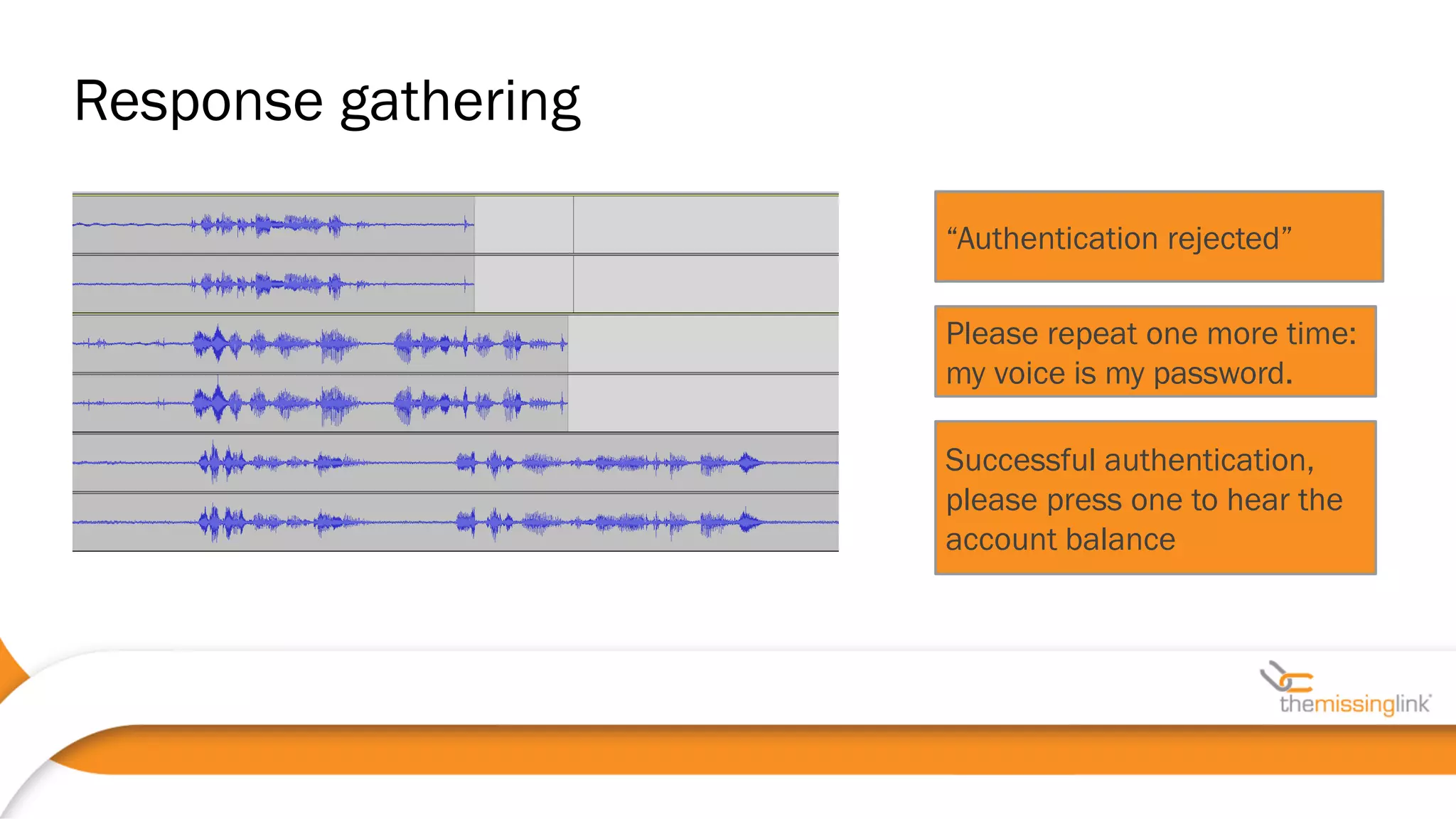

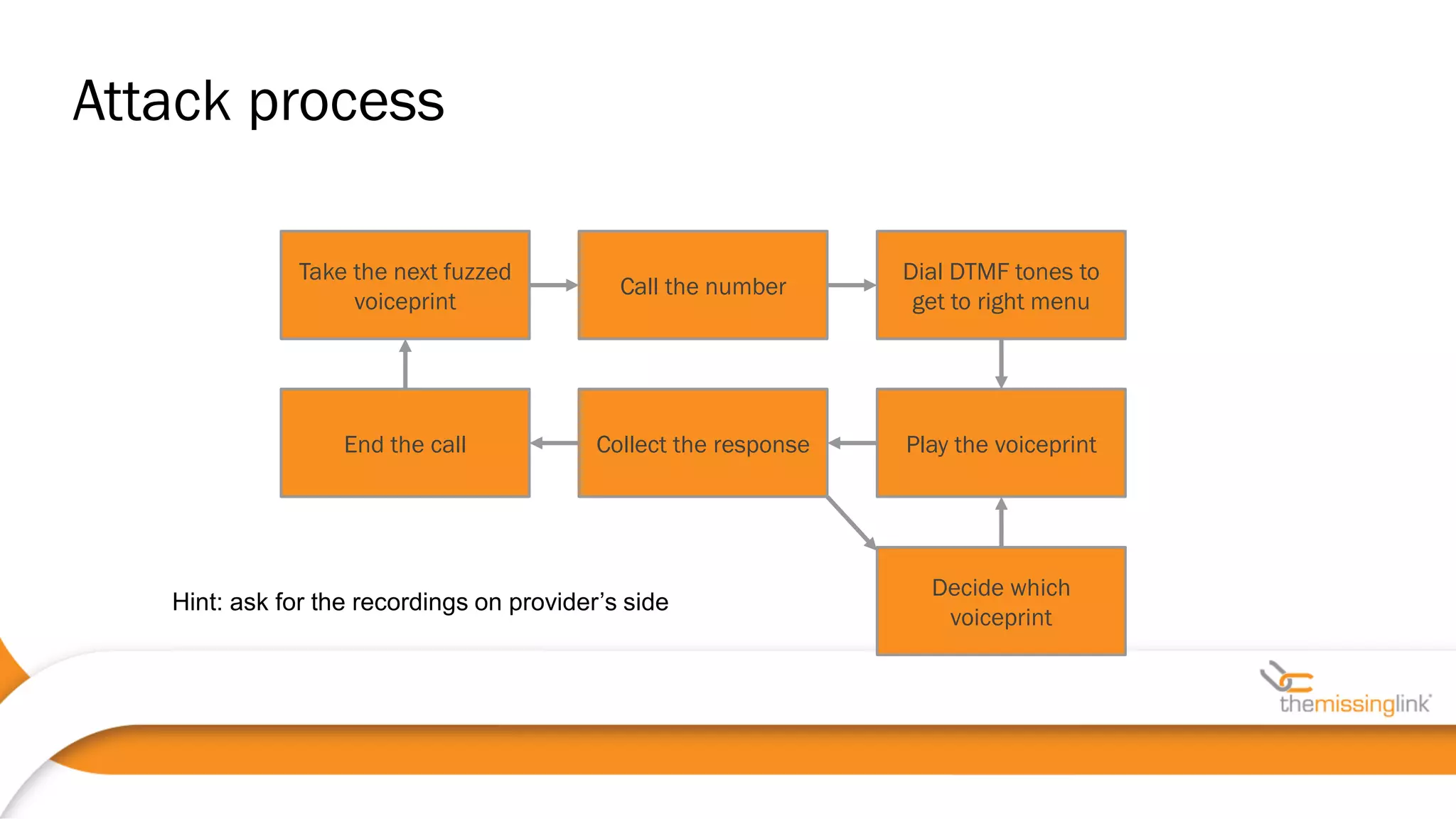

Jakub Kaluzny discusses the security challenges and potential vulnerabilities in voice biometrics systems, emphasizing the importance of pentesting due to the non-deterministic nature of such systems. The talk outlines attack vectors, challenges in voice authentication, and the need for rigorous testing to ensure security. It concludes that collaboration with vendors and privacy movements is essential for addressing non-functional security requirements.

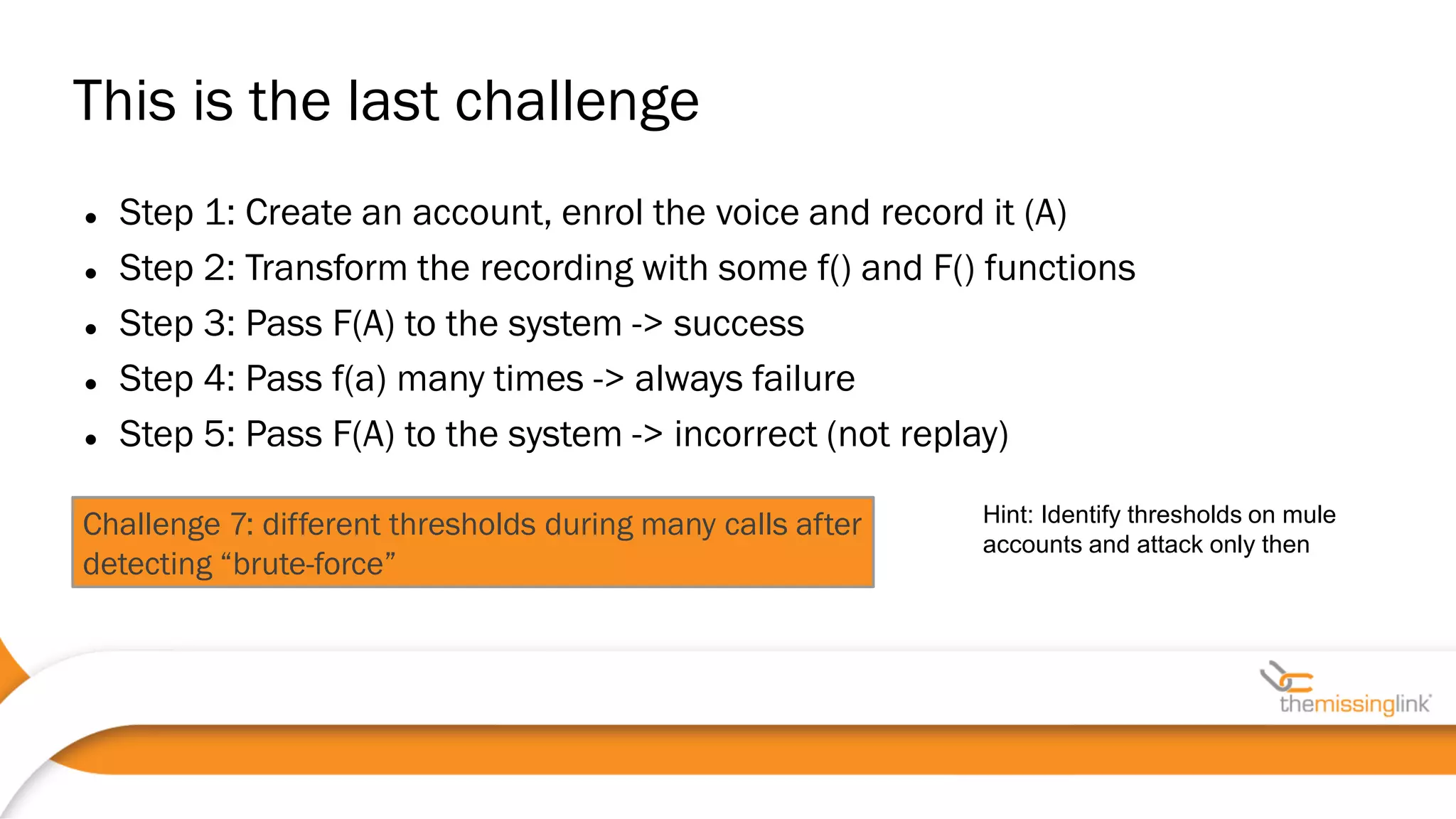

![Mobile applications](https://image.slidesharecdn.com/kaluznypentesting-voice-biometricsauscert2017-170528230458/75/Pentesting-voice-biometrics-solutions-AusCERT-2017-39-2048.jpg)

Integration is the key element, example vulnerability in one of the systems:

    POST /voice_auth HTTP/1.1
    (…)
    -----boundary12345
    Content-disposition: form-data; name=d
    Content-type: audio/x-wav

    [WAV file]
    -----boundary12345
    Content-disposition: form-data; name=f
    Content-Type: text/plain

    44100

2MB file limit, but f=1 DoSed the system

- Often, voice biometrics as a separate box
- Web/IVR/mobile code as a third-party lib, SDLC?
- HTTPS requests may have another endpoint (possible MiTM)
- Voice-authenticated sessions should be distinguished from standard ones!
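
The request on this slide sends the recording in field `d` and what appears to be a sample rate (`44100`) in field `f`. The slide does not say why `f=1` took the system down; one plausible reading is that the backend trusted the client-supplied value. The following is a minimal sketch of a server-side check under that assumption, using only Python's standard `wave` module. The function names and the set of allowed rates are hypothetical and do not come from the talk or from any vendor's product.

```python
import io
import wave

MAX_UPLOAD_BYTES = 2 * 1024 * 1024           # the 2MB limit mentioned on the slide
ALLOWED_SAMPLE_RATES = {8000, 16000, 44100}  # assumed whitelist, not from the talk


def validate_voice_upload(wav_bytes: bytes, claimed_rate: str) -> int:
    """Return the validated sample rate, or raise ValueError.

    `wav_bytes` stands for form field `d`, `claimed_rate` for form field `f`.
    """
    if len(wav_bytes) > MAX_UPLOAD_BYTES:
        raise ValueError("audio file exceeds size limit")

    # Field `f` arrives as text; reject anything that is not an integer.
    try:
        rate = int(claimed_rate)
    except (TypeError, ValueError):
        raise ValueError("sample rate is not an integer") from None

    # Reject values such as 1 before they reach the biometric engine.
    if rate not in ALLOWED_SAMPLE_RATES:
        raise ValueError("sample rate not allowed")

    # Cross-check the claimed rate against the WAV header itself.
    try:
        with wave.open(io.BytesIO(wav_bytes), "rb") as wav:
            header_rate = wav.getframerate()
    except (wave.Error, EOFError):
        raise ValueError("not a valid WAV file") from None

    if header_rate != rate:
        raise ValueError("claimed rate does not match WAV header")
    return rate


def make_test_wav(rate: int = 44100, seconds: float = 0.01) -> bytes:
    """Build a short silent mono 16-bit WAV in memory, for testing only."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * int(rate * seconds))
    return buf.getvalue()
```

During a pentest, the same idea can be turned around: replaying a valid recording while varying `f` (for example `1`, `0`, negative or very large numbers) is a cheap way to see whether the integration layer validates the parameter at all.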