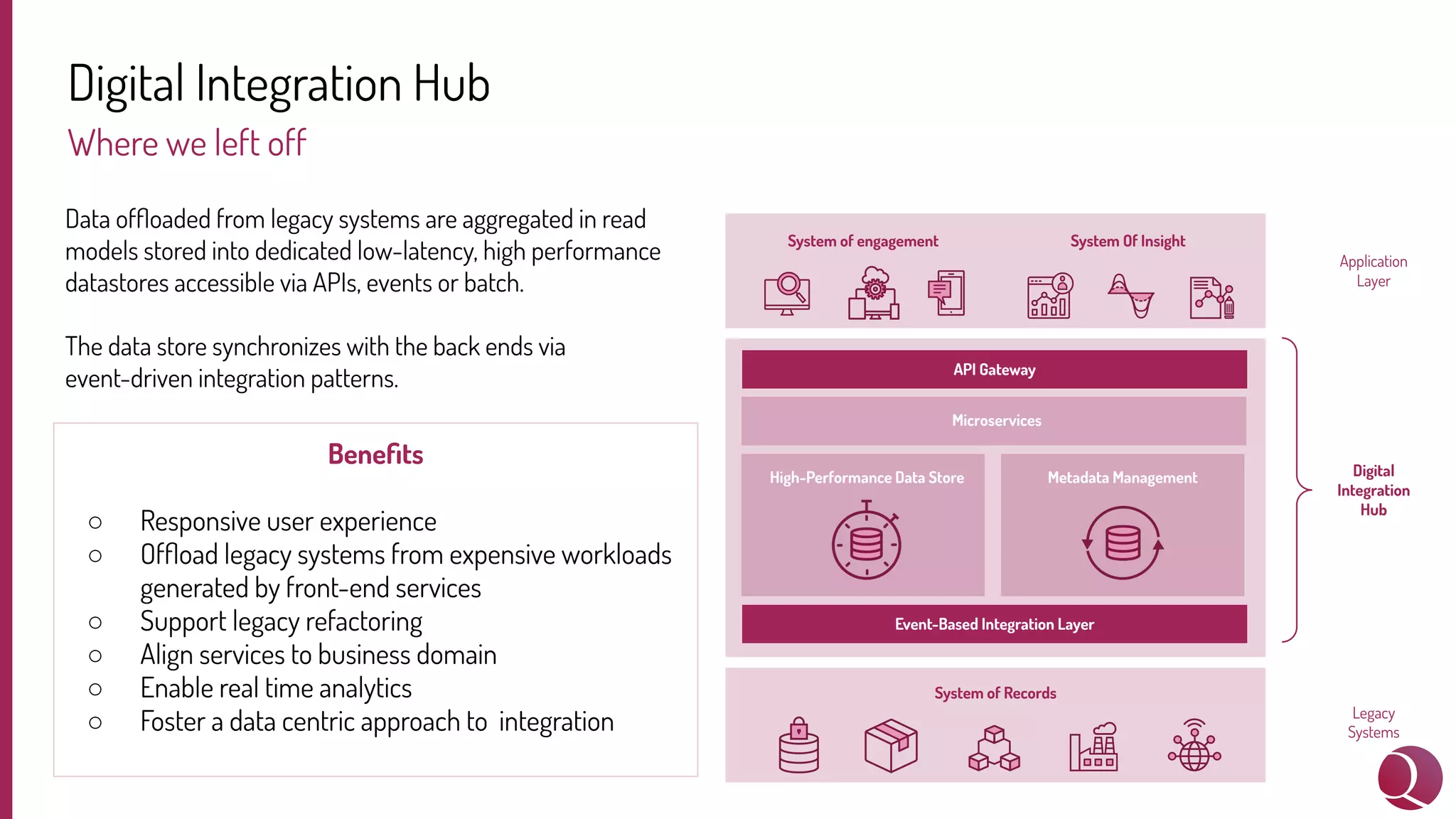

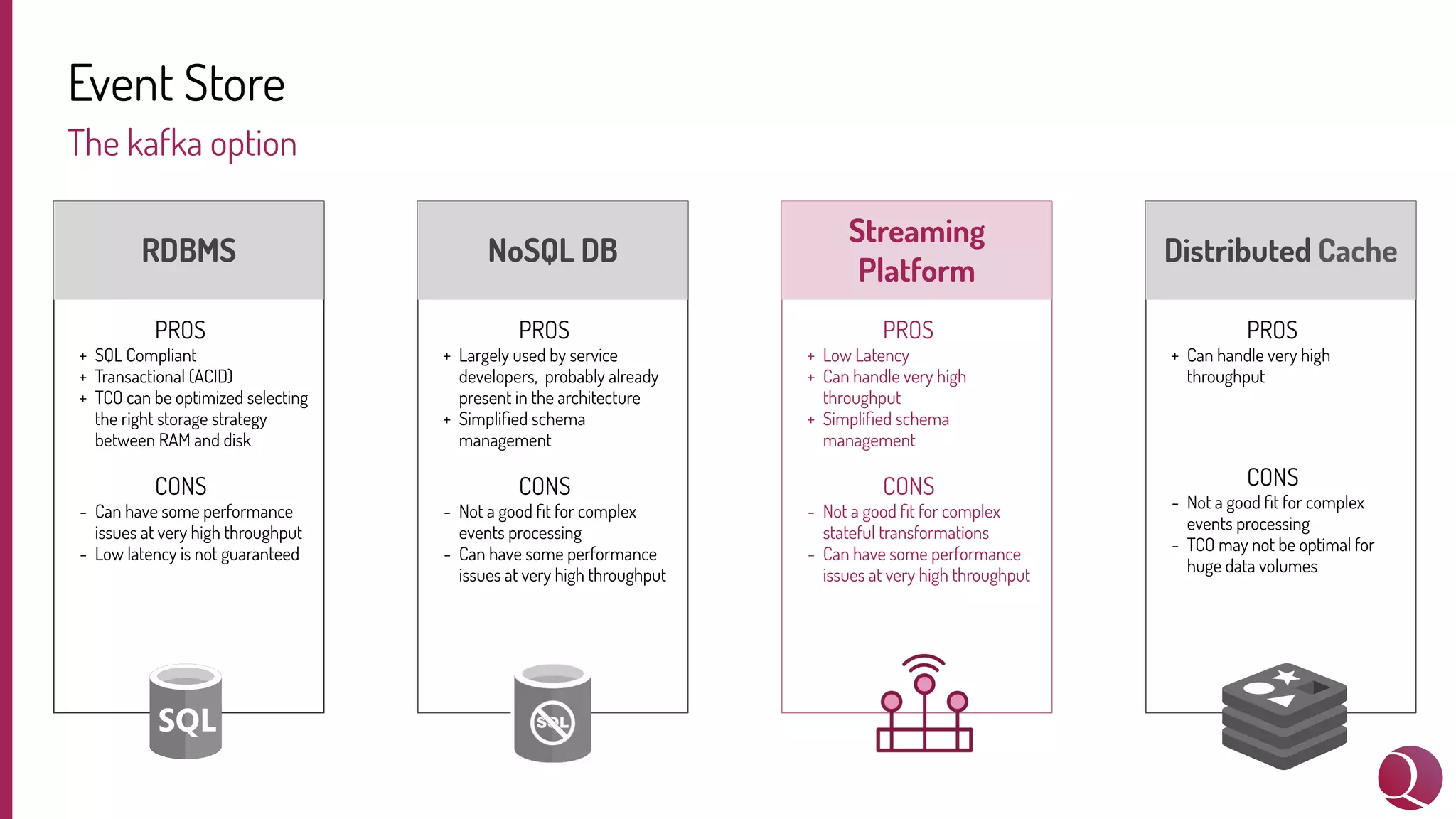

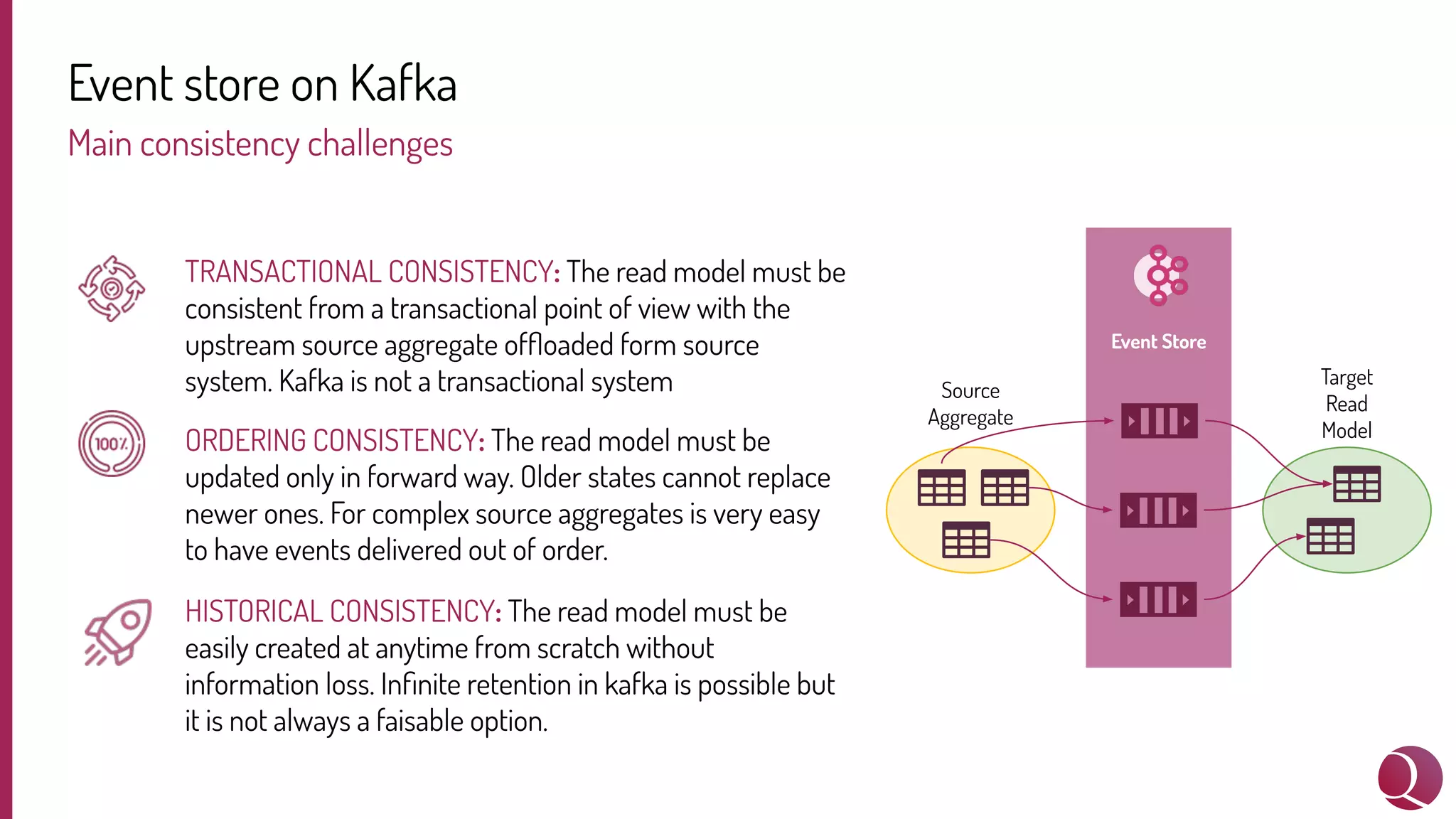

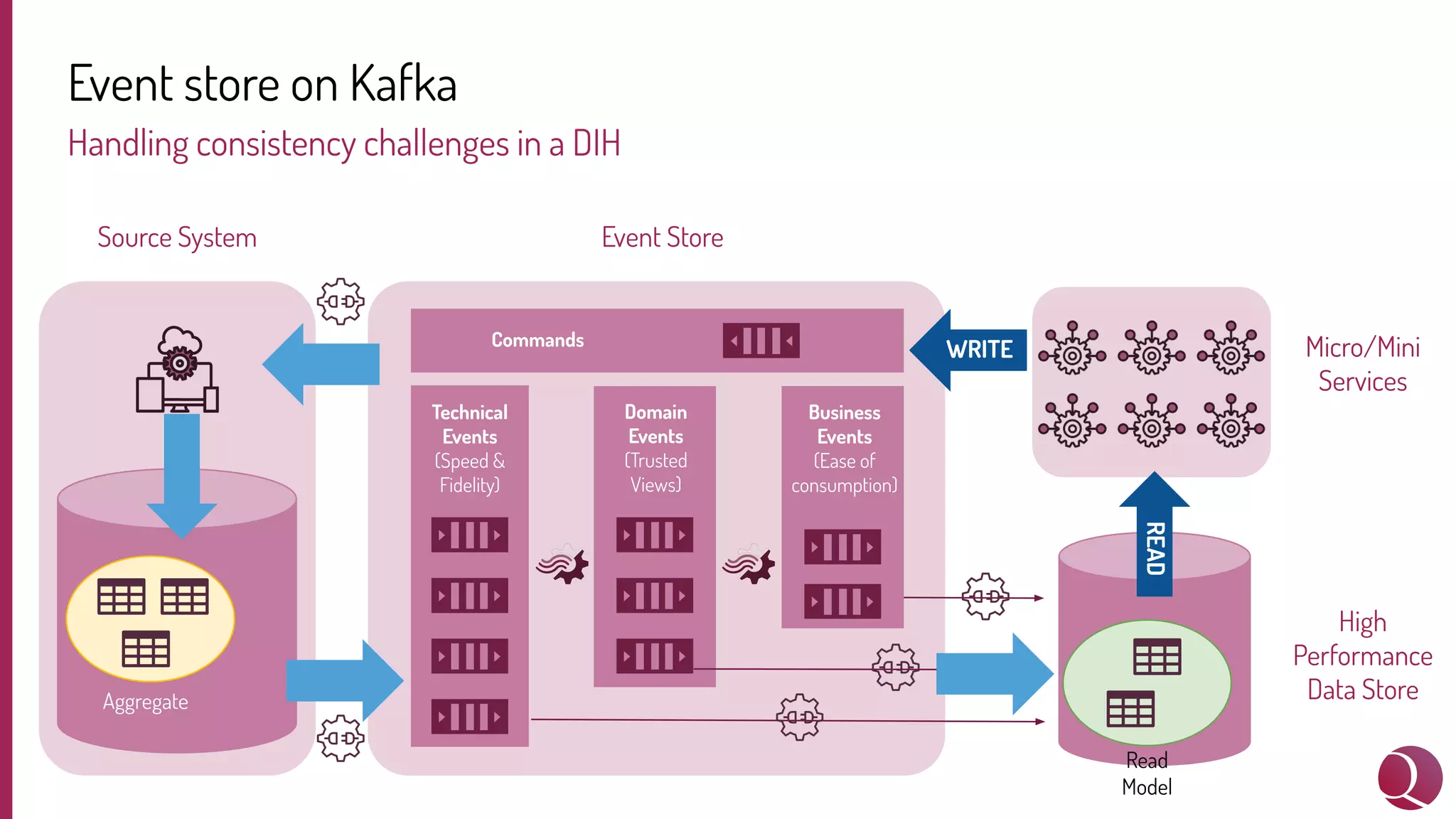

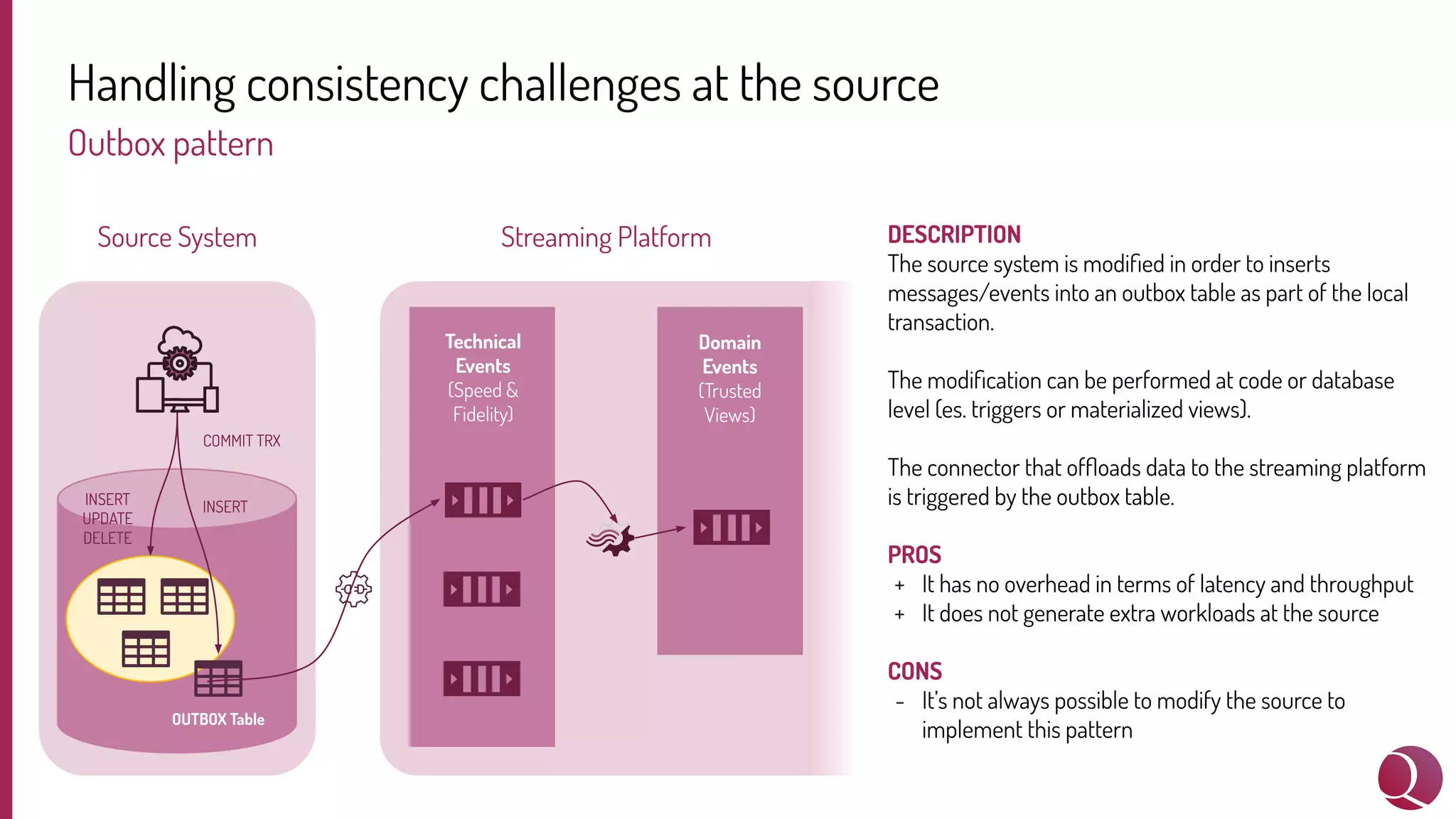

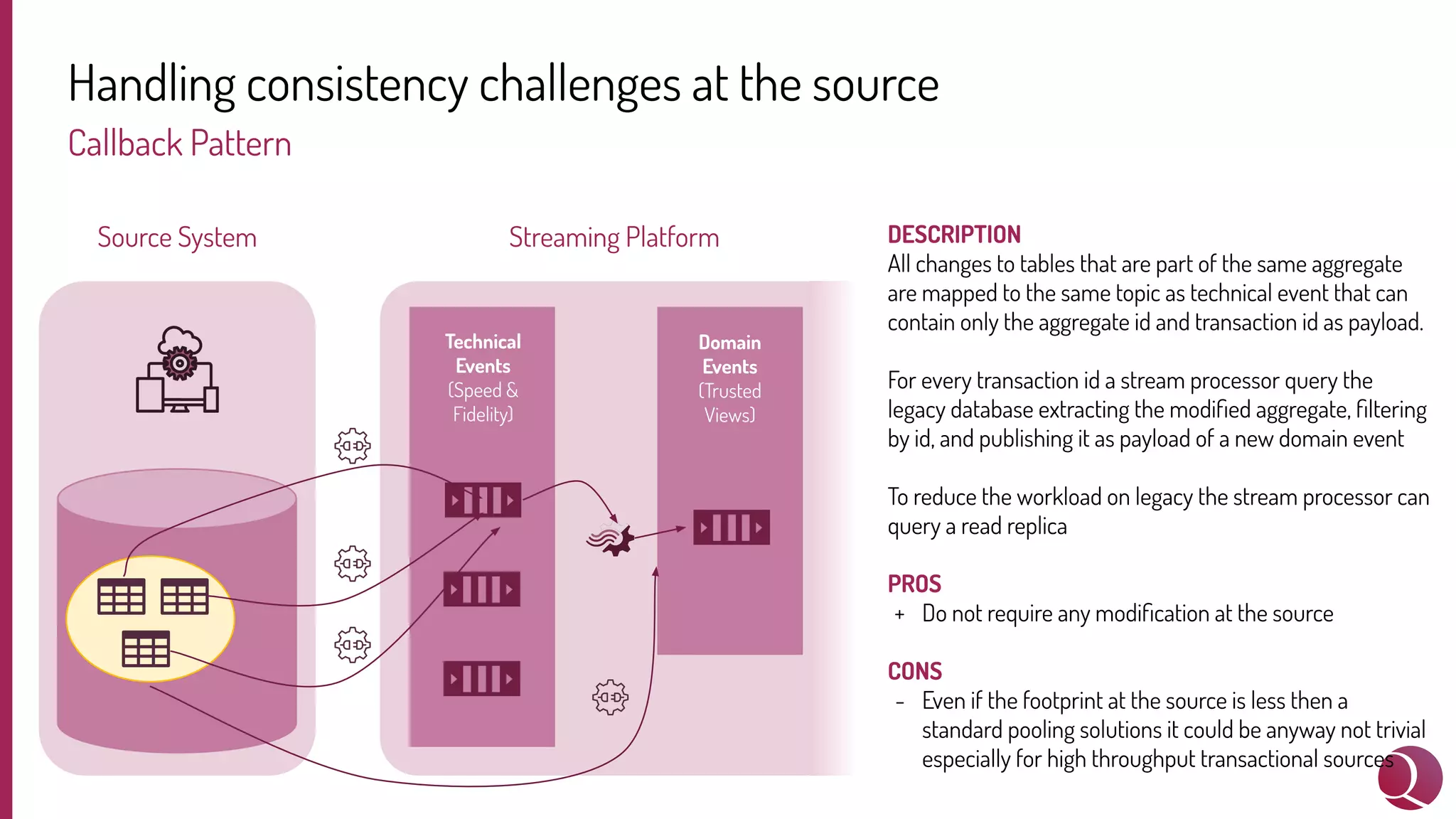

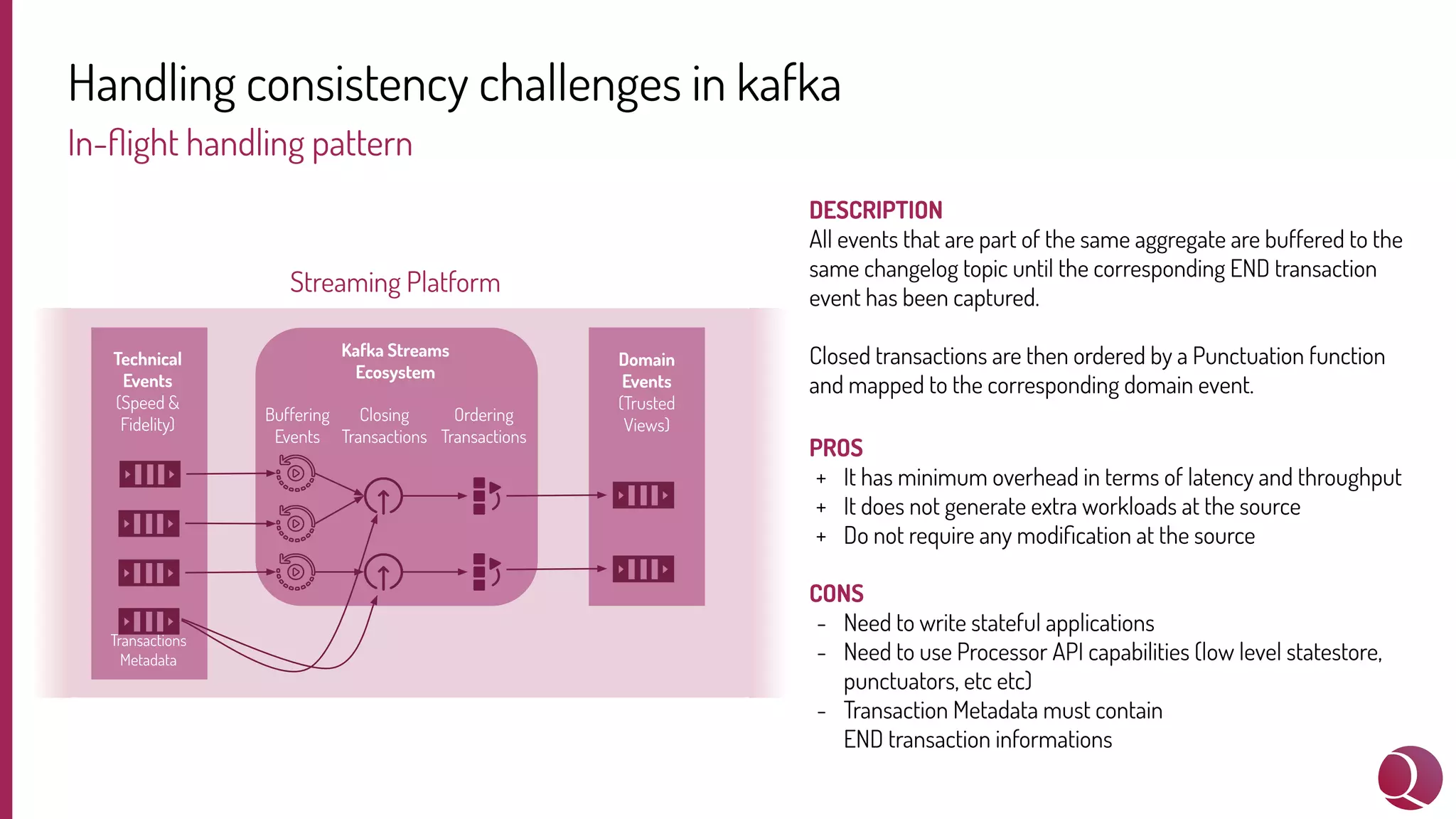

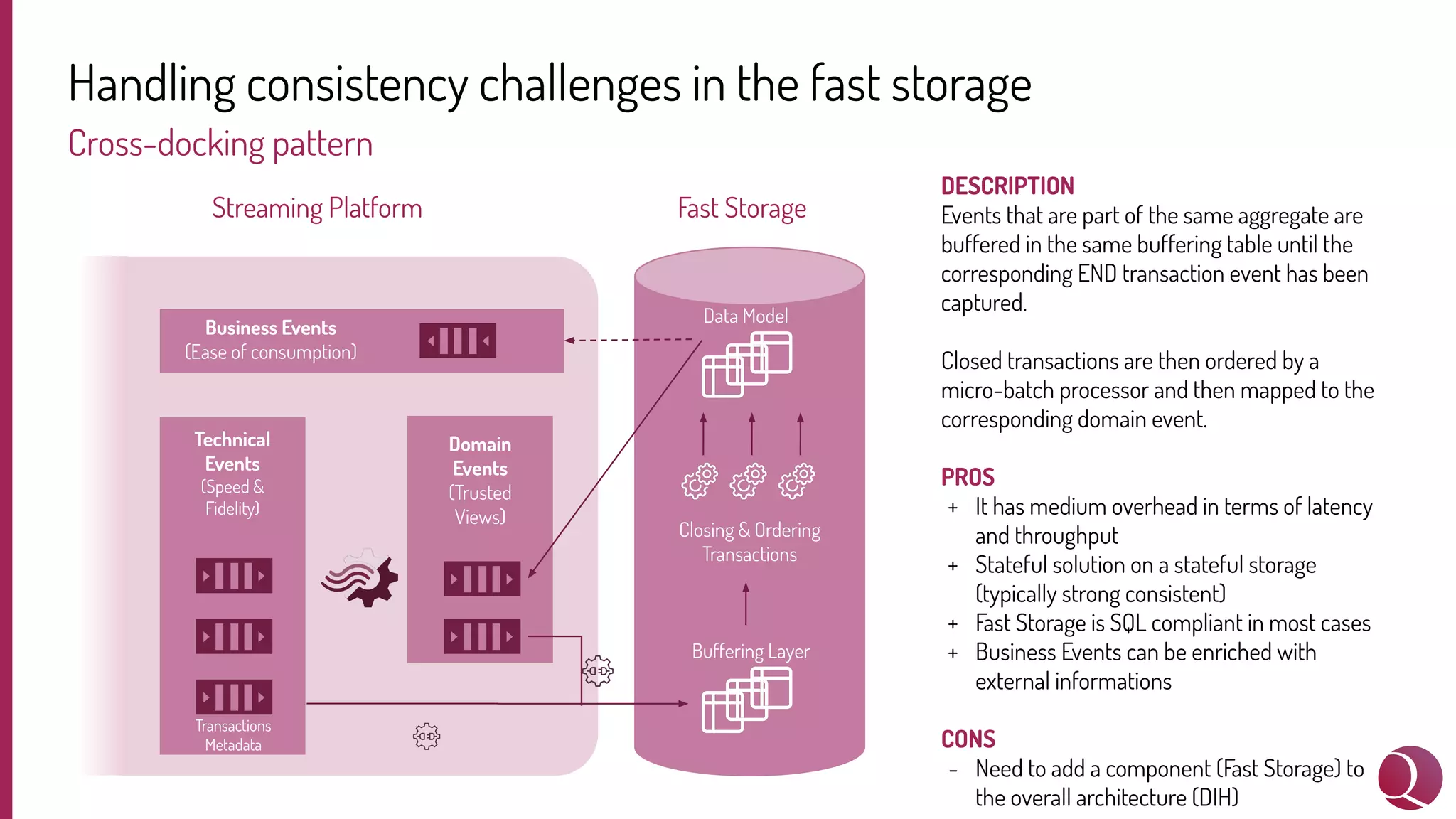

The presentation discusses the consistency challenges that arise when a digital integration hub (DIH) is built on an event-driven architecture with Kafka as the event streaming platform. It describes three patterns for enforcing consistency: (1) an outbox pattern at the source system, (2) a callback pattern that leaves the source system unmodified, and (3) buffering events in Kafka until the transactions they belong to are closed. It also presents a cross-docking pattern that buffers events in a fast storage system before business events are written. Because maintaining consistency in distributed systems is difficult, the presenters evaluate these approaches and their tradeoffs.
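To make the first pattern concrete, here is a minimal sketch of an outbox at the source system. It is not taken from the slides: the `orders` and `outbox` tables, the functions `place_order` and `relay_outbox`, and the `publish` callback (a stand-in for a Kafka producer) are all hypothetical names chosen for illustration, and SQLite is used only so the example is self-contained.

```python
import json
import sqlite3


def create_schema(conn):
    # Hypothetical source-system table plus the outbox table next to it.
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT NOT NULL)")
    conn.execute(
        "CREATE TABLE outbox ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "topic TEXT NOT NULL, "
        "payload TEXT NOT NULL, "
        "published INTEGER NOT NULL DEFAULT 0)"
    )


def place_order(conn, order_id, fail=False):
    # "with conn" commits if the block succeeds and rolls back if it raises,
    # so the business row and its event are stored together or not at all.
    with conn:
        conn.execute(
            "INSERT INTO orders (id, status) VALUES (?, ?)", (order_id, "PLACED")
        )
        if fail:
            raise RuntimeError("simulated failure before the event is recorded")
        event = {"order_id": order_id, "status": "PLACED"}
        conn.execute(
            "INSERT INTO outbox (topic, payload) VALUES (?, ?)",
            ("orders", json.dumps(event)),
        )


def relay_outbox(conn, publish):
    # Read unpublished events in insertion order and hand each one to the
    # publish callback (assumed here to wrap a Kafka producer).
    rows = conn.execute(
        "SELECT id, topic, payload FROM outbox WHERE published = 0 ORDER BY id"
    ).fetchall()
    for row_id, topic, payload in rows:
        publish(topic, payload)
        # Mark the row only after publishing; a crash between the two steps
        # leads to a re-send, so delivery is at-least-once.
        with conn:
            conn.execute("UPDATE outbox SET published = 1 WHERE id = ?", (row_id,))
    return len(rows)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    create_schema(conn)
    place_order(conn, 1)
    relay_outbox(conn, lambda topic, payload: print(topic, payload))
```

Because the relay marks a row as published only after the callback returns, a failure between the two steps results in a duplicate rather than a lost event, so consumers in this sketch would need to tolerate re-delivery.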