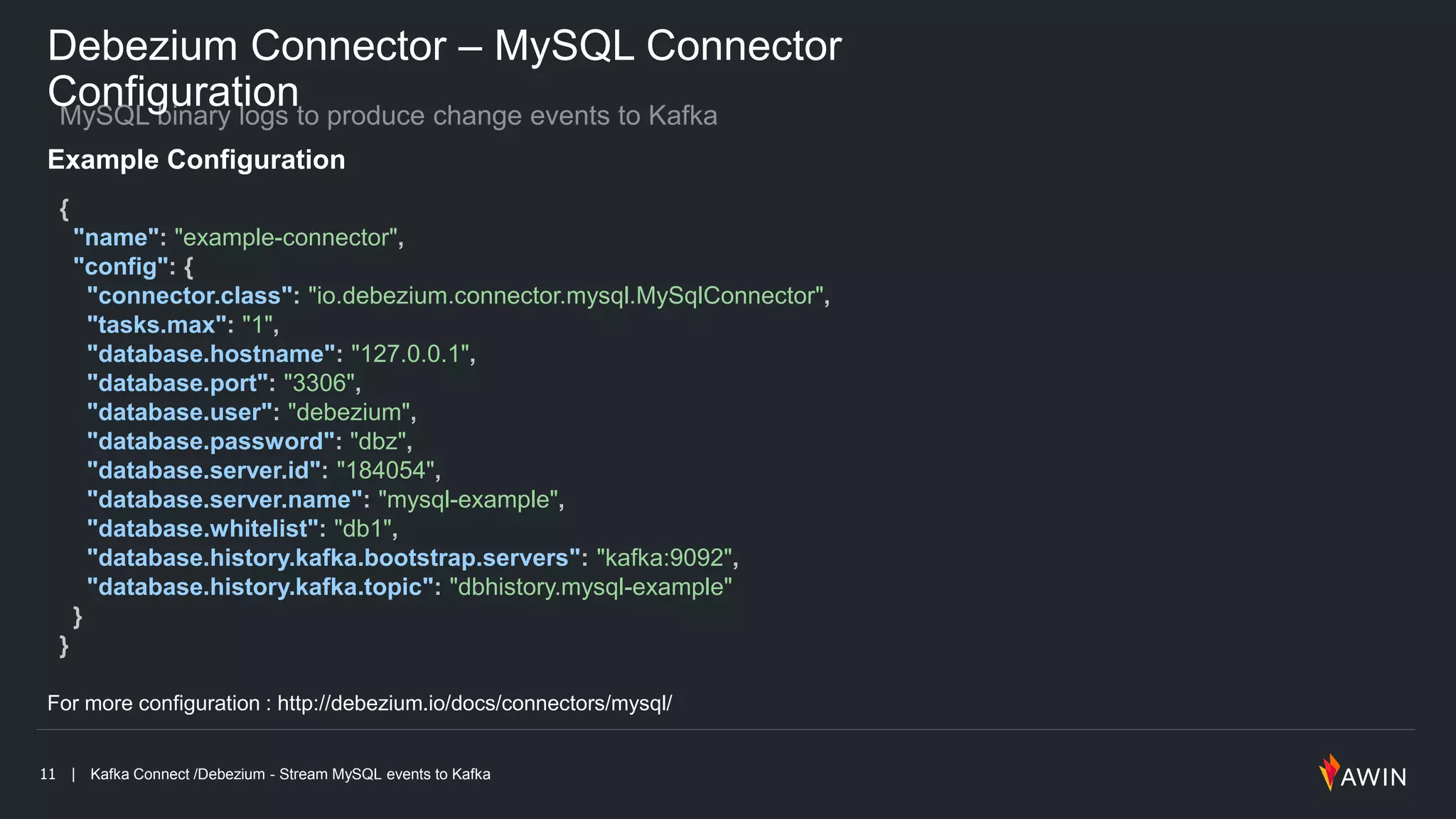

The document discusses Kafka Connect and Debezium, focusing on streaming MySQL events to Kafka for real-time data integration. It highlights the benefits of using change data capture (CDC) to synchronize data and manage event sourcing, while detailing connector configurations and operational setups. Additionally, it provides specific examples of Debezium configurations, REST API endpoints, and useful resources for further exploration.

![12 | Kafka Connect /Debezium - Stream MySQL events to Kafka

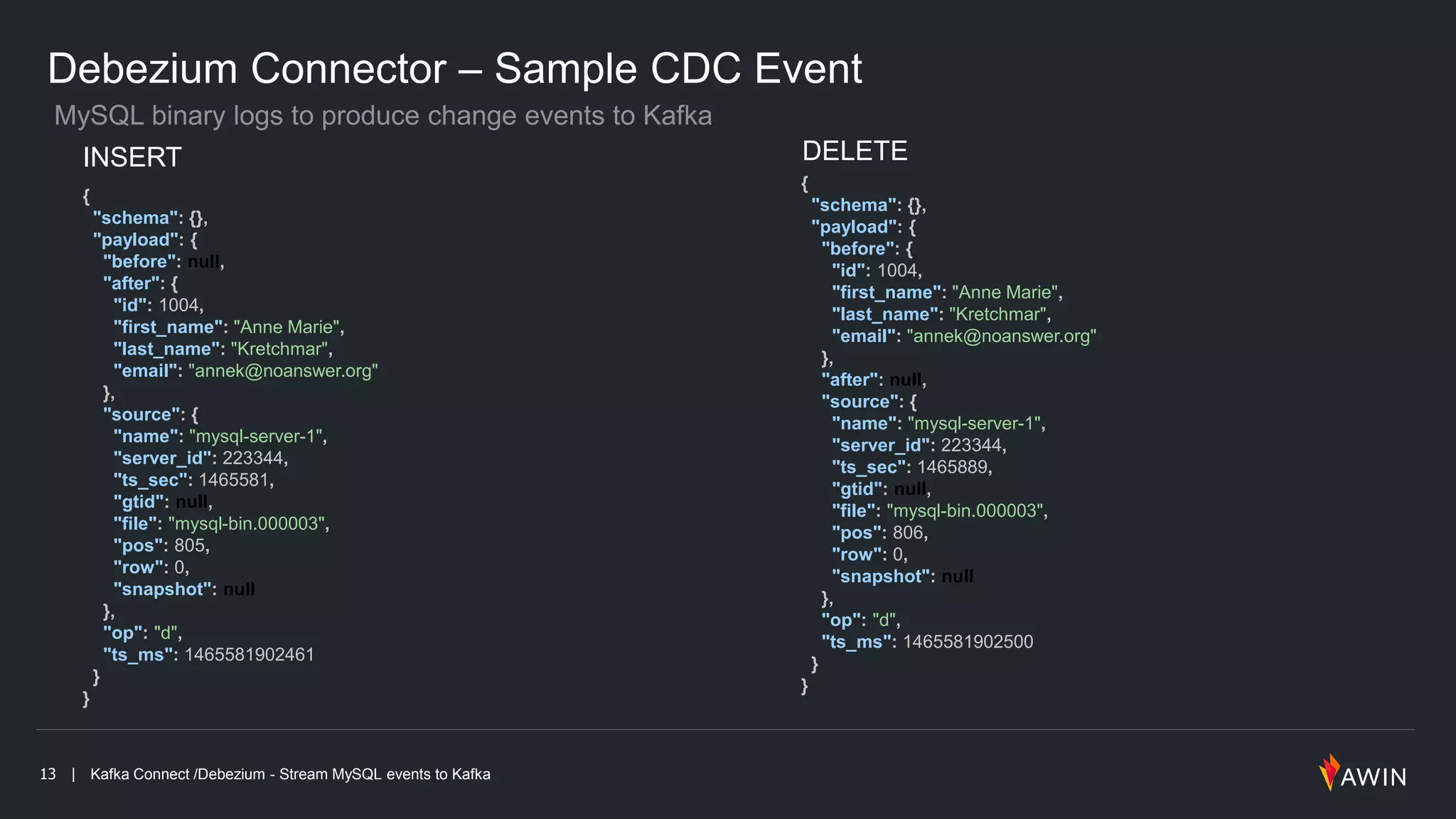

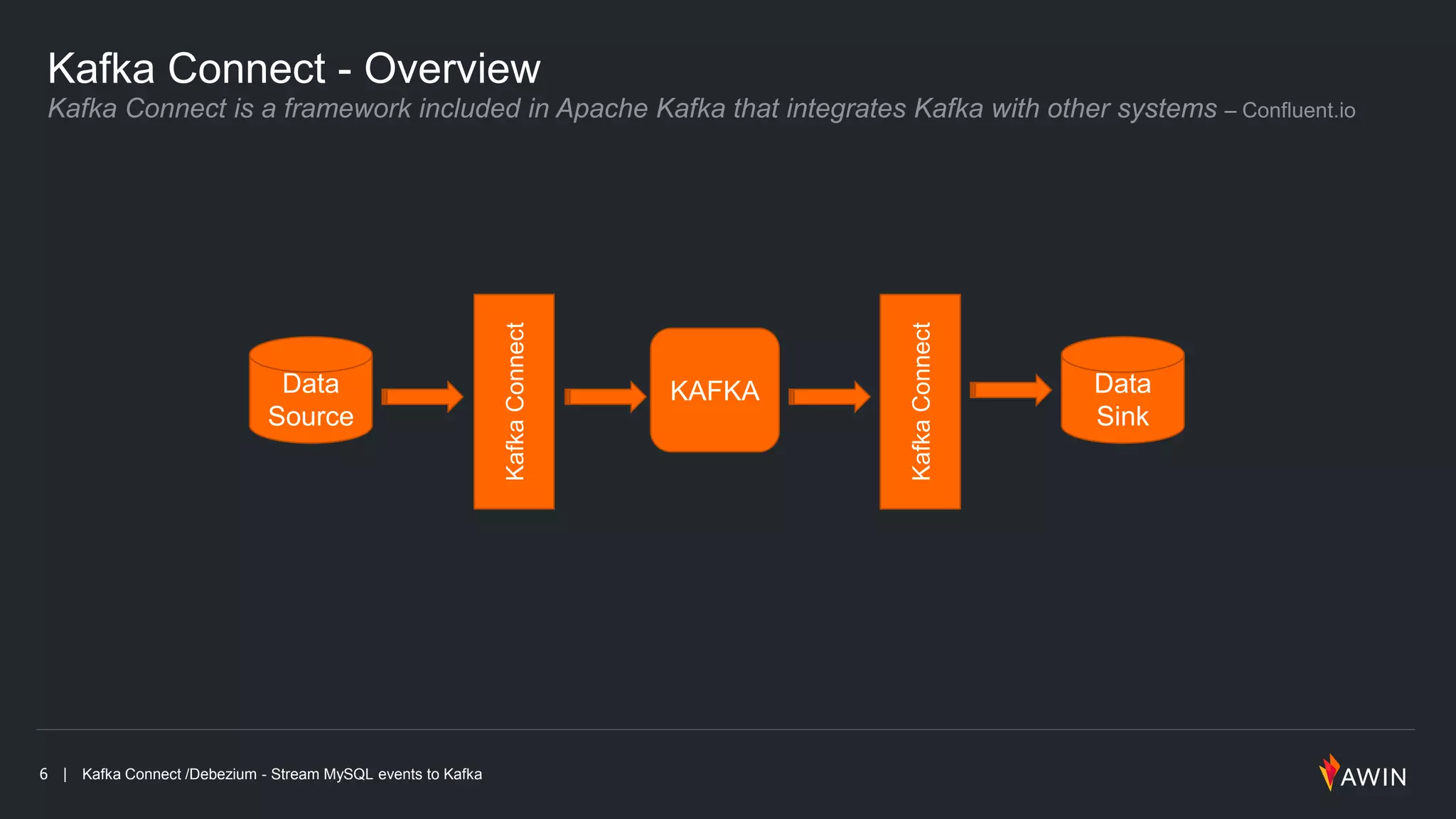

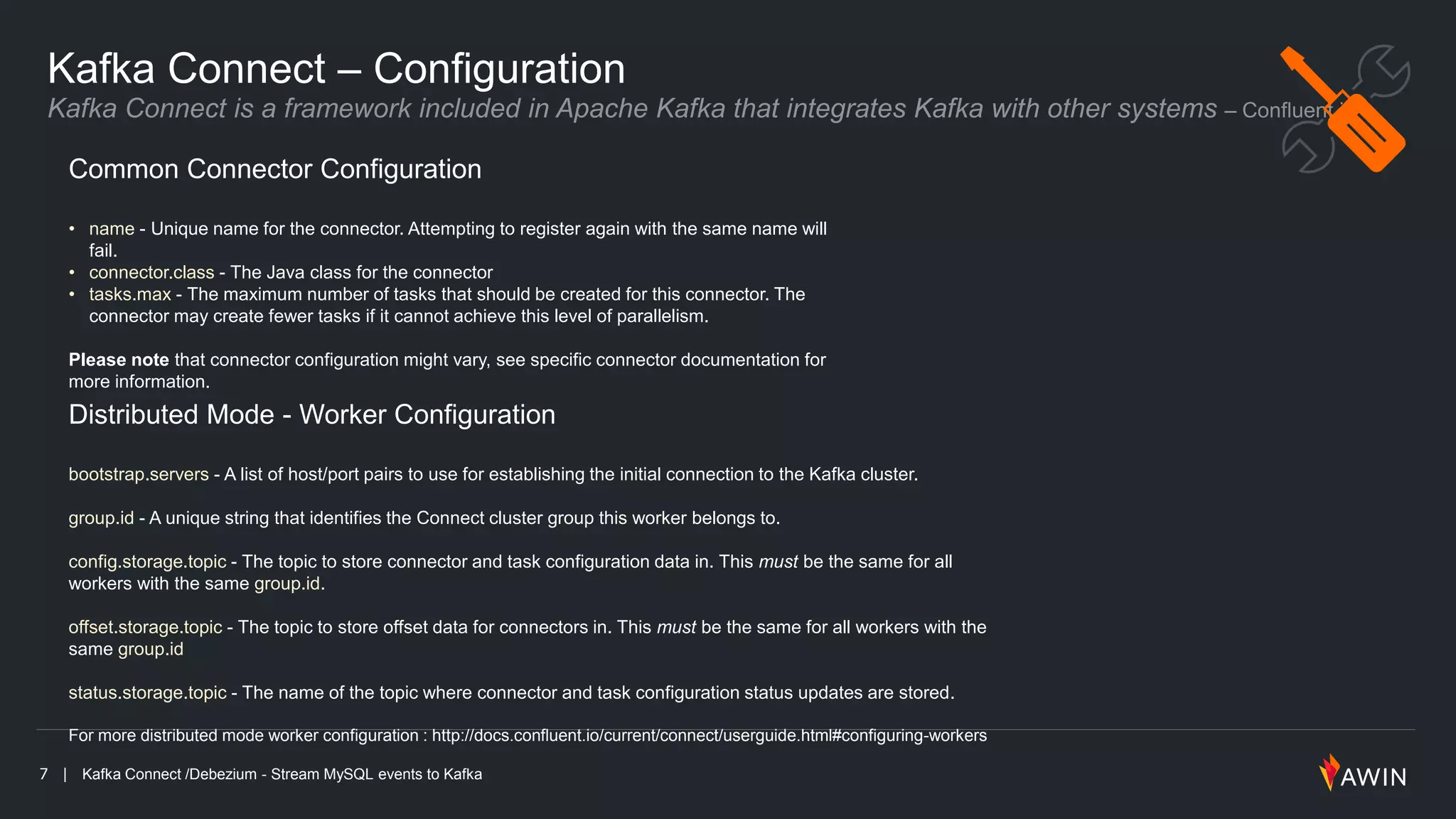

Debezium Connector – Add Connector to Kafka Connect

For more configuration options: http://debezium.io/docs/connectors/mysql/

More REST endpoints: https://docs.confluent.io/current/connect/managing.html#using-the-rest-interface

List available connector plugins

$ curl -s http://kafka-connect:8083/connector-plugins

[
  {
    "class": "io.confluent.connect.jdbc.JdbcSinkConnector"
  },
  {
    "class": "io.confluent.connect.jdbc.JdbcSourceConnector"
  },
  {
    "class": "io.debezium.connector.mysql.MySqlConnector"
  },
  {
    "class": "org.apache.kafka.connect.file.FileStreamSinkConnector"
  },
  {
    "class": "org.apache.kafka.connect.file.FileStreamSourceConnector"
  }
]

Add connector

$ curl -s -X POST -H "Content-Type: application/json" --data @connector-config.json http://kafka-connect:8083/conn

Remove connector

$ curl -X DELETE -H "Content-Type: application/json" http://kafka-connect:8083/connectors](https://image.slidesharecdn.com/kafkaconnect-debezium-180708202730/75/Kafka-Connect-debezium-12-2048.jpg)
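
The "Add connector" request above posts a `connector-config.json` file that the slide does not show. As a rough sketch only, the file could be created as follows. The property names follow the Debezium MySQL connector documentation for the older 0.x releases; the connector name, host names, credentials, server ID, and topic names are all hypothetical placeholders and would need to match your own environment.

```shell
#!/bin/sh
# Sketch: write a hypothetical connector-config.json for the Debezium MySQL connector.
# Every value below (connector name, hosts, user, password, server id, database and
# topic names) is an illustrative assumption, not taken from the slides.
# Kafka Connect expects a JSON object with a "name" and a "config" map.
cat > connector-config.json <<'EOF'
{
  "name": "inventory-connector",
  "config": {
    "connector.class": "io.debezium.connector.mysql.MySqlConnector",
    "tasks.max": "1",
    "database.hostname": "mysql",
    "database.port": "3306",
    "database.user": "debezium",
    "database.password": "dbz",
    "database.server.id": "184054",
    "database.server.name": "dbserver1",
    "database.whitelist": "inventory",
    "database.history.kafka.bootstrap.servers": "kafka:9092",
    "database.history.kafka.topic": "dbhistory.inventory"
  }
}
EOF

# Confirm the file was written before posting it to Kafka Connect.
echo "wrote $(wc -c < connector-config.json) bytes to connector-config.json"
```

Newer Debezium releases rename several of these properties (for example `topic.prefix` in place of `database.server.name`, `database.include.list` in place of `database.whitelist`, and `schema.history.internal.kafka.*` in place of `database.history.kafka.*`), so check the documentation for the version you have installed before reusing this file.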