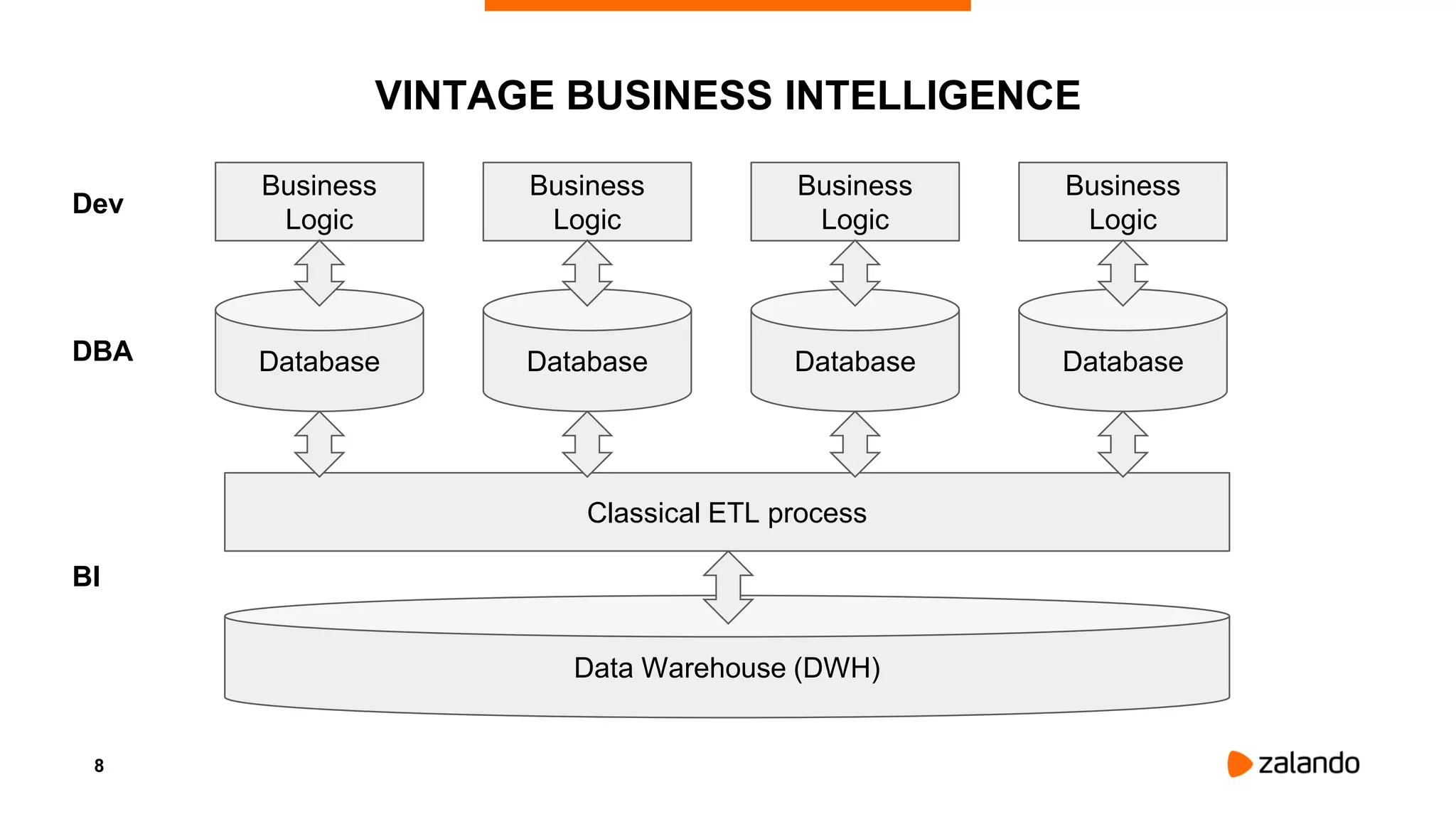

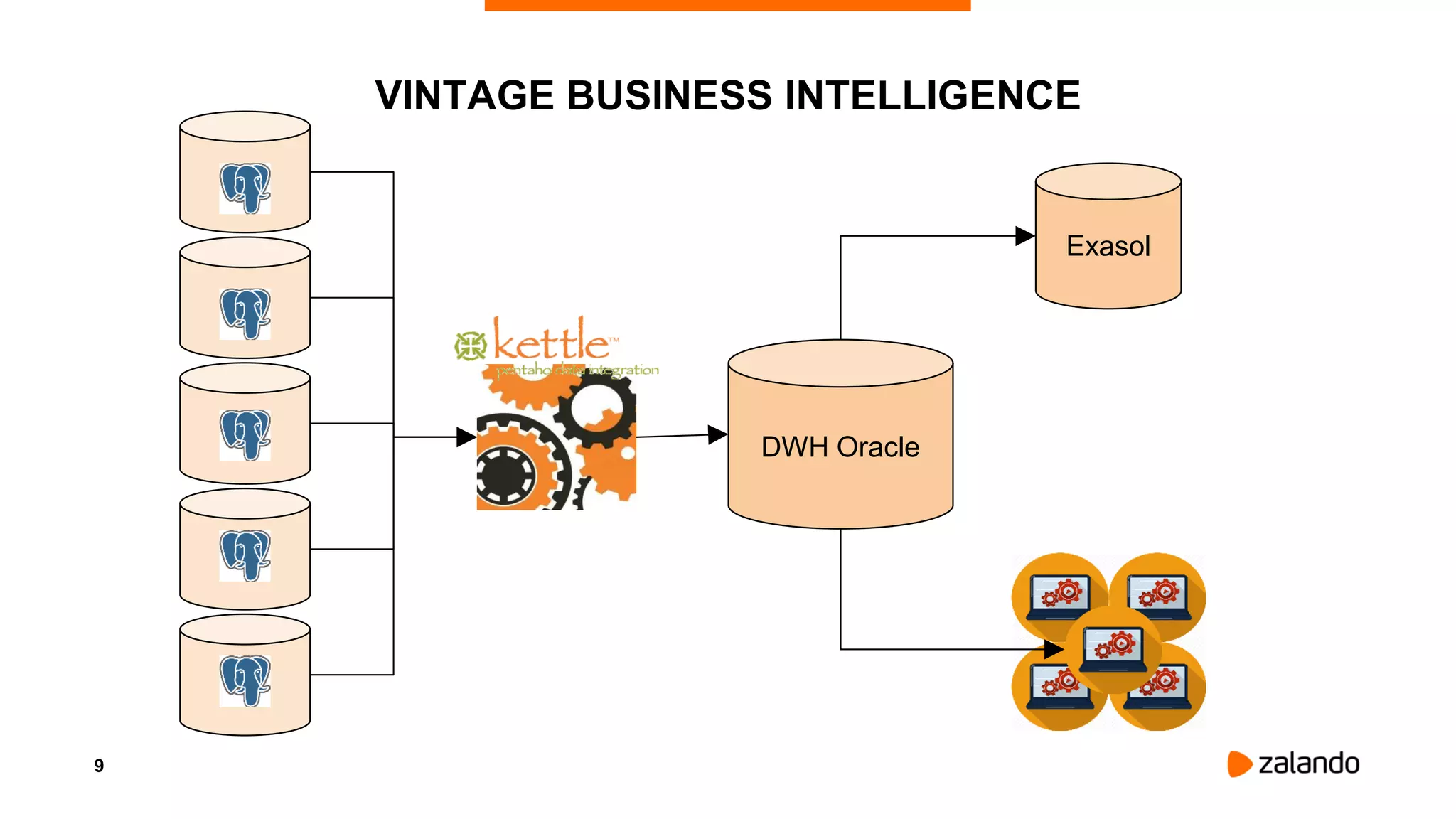

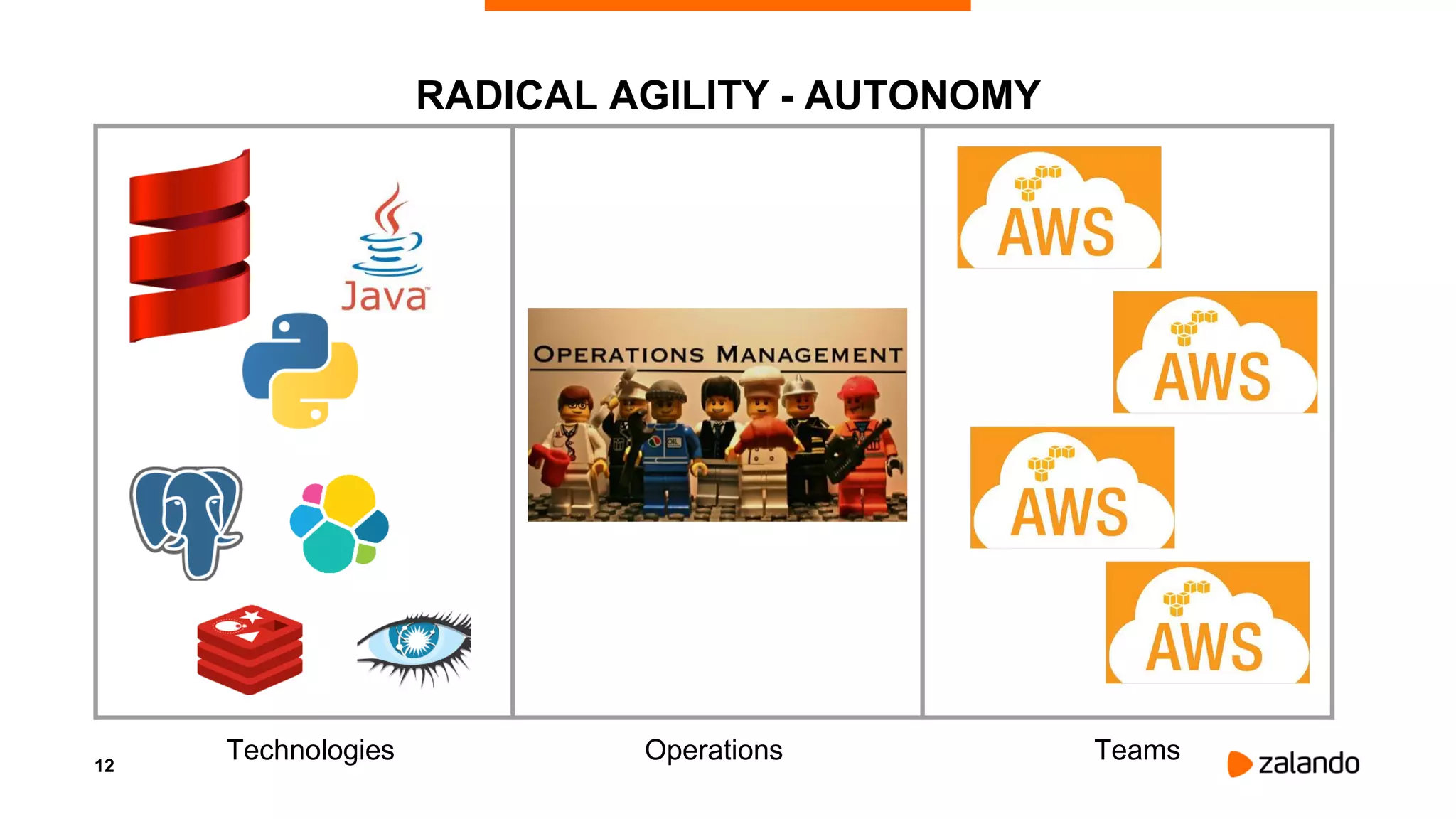

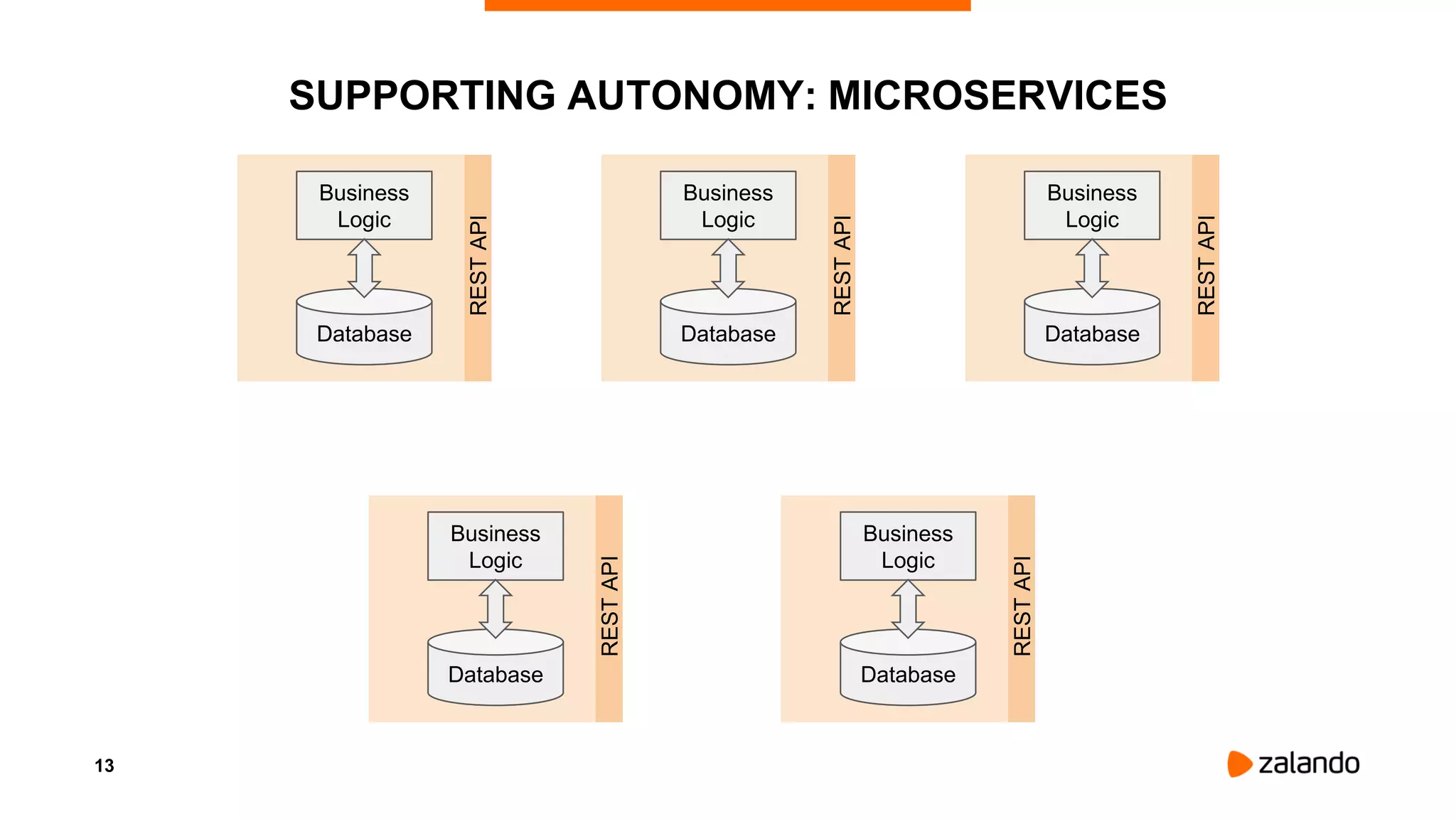

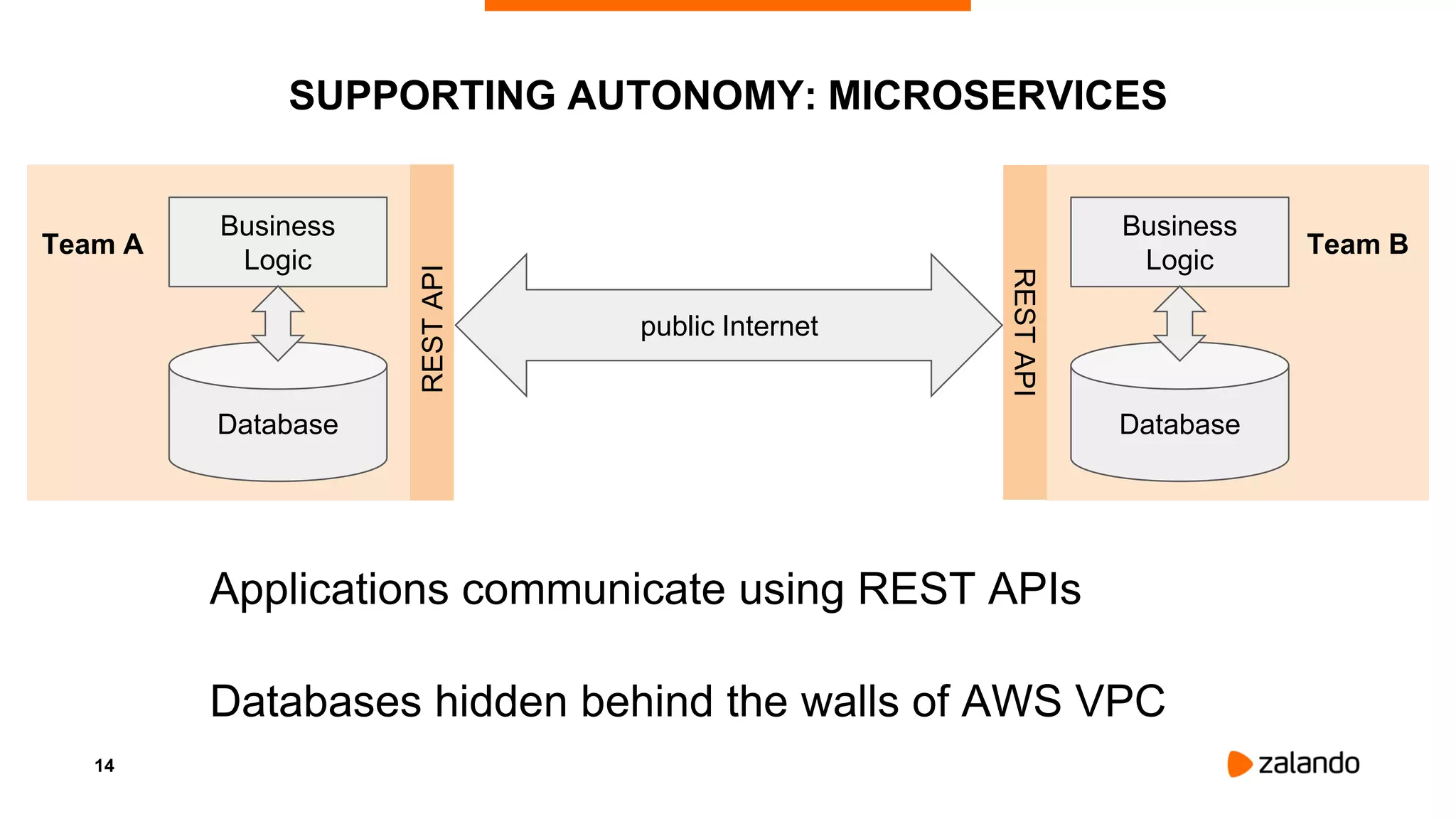

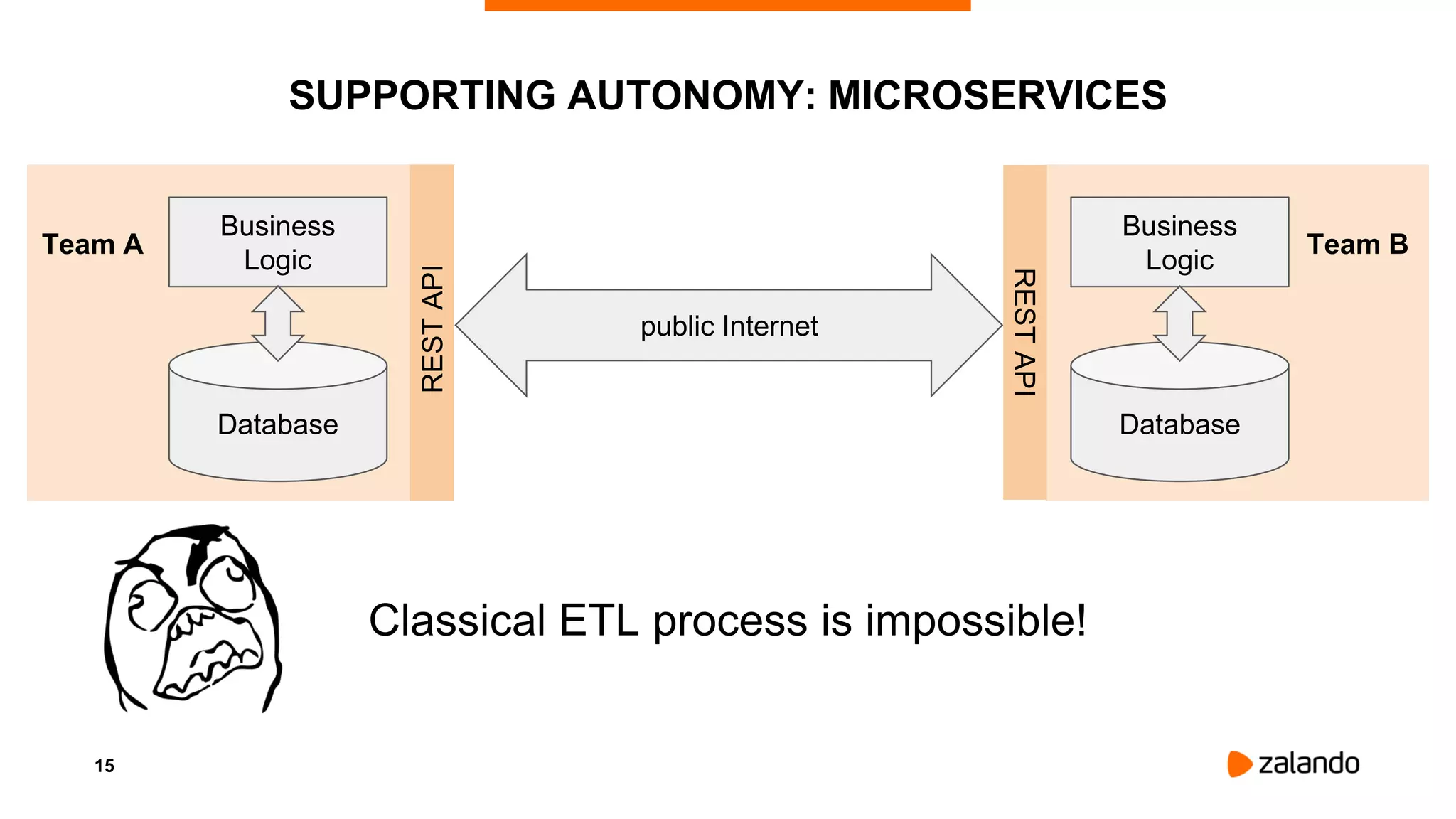

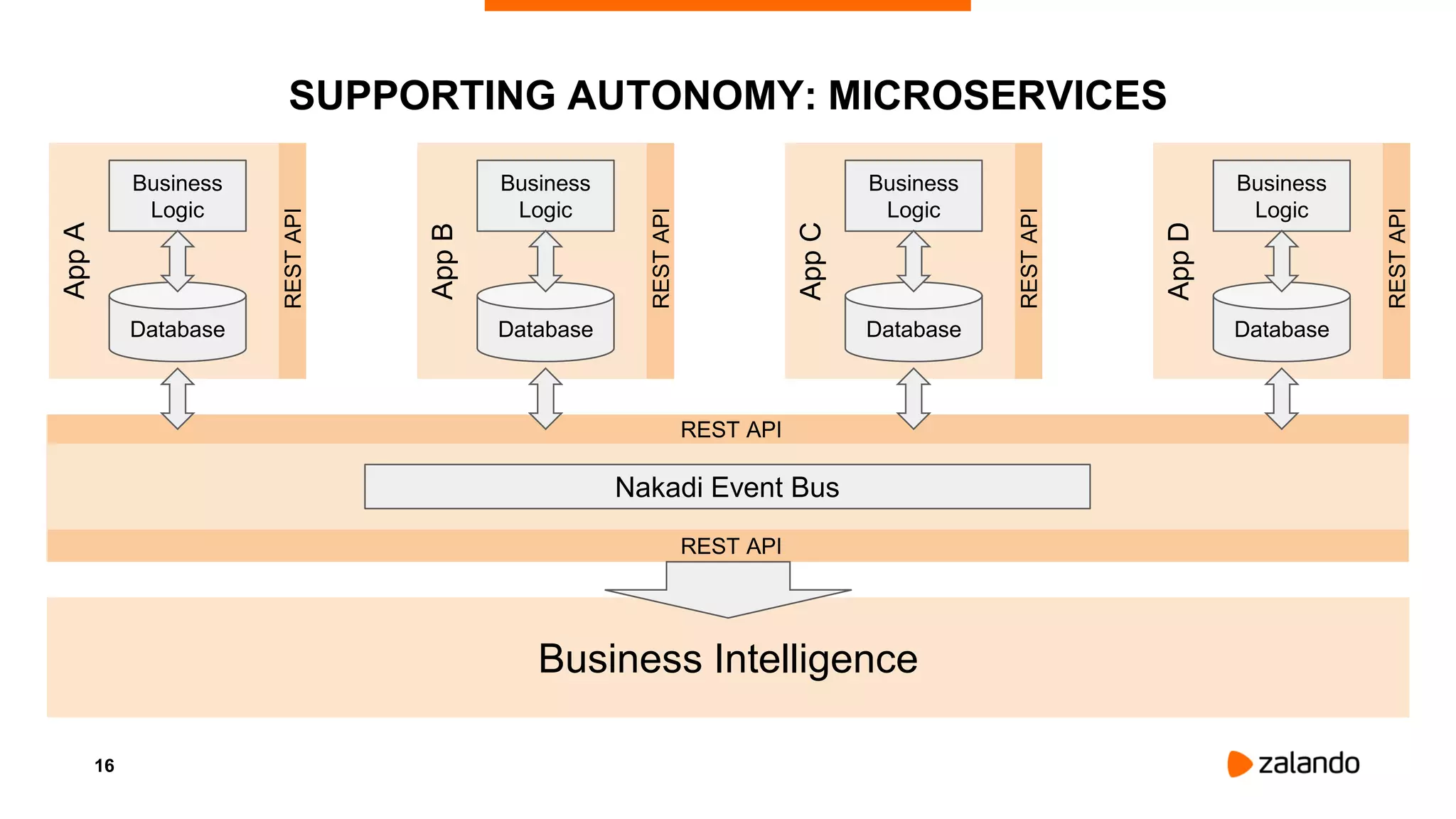

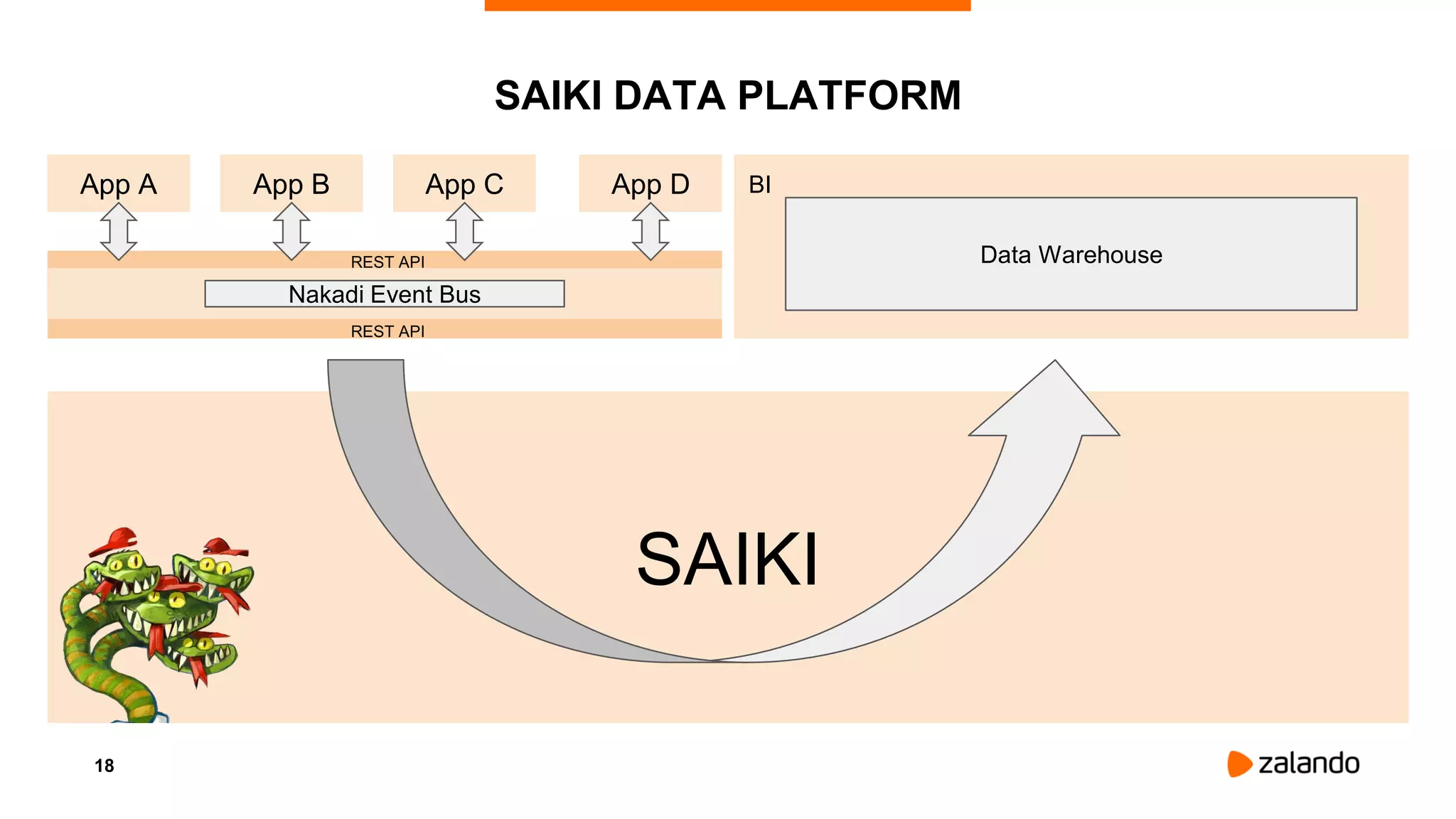

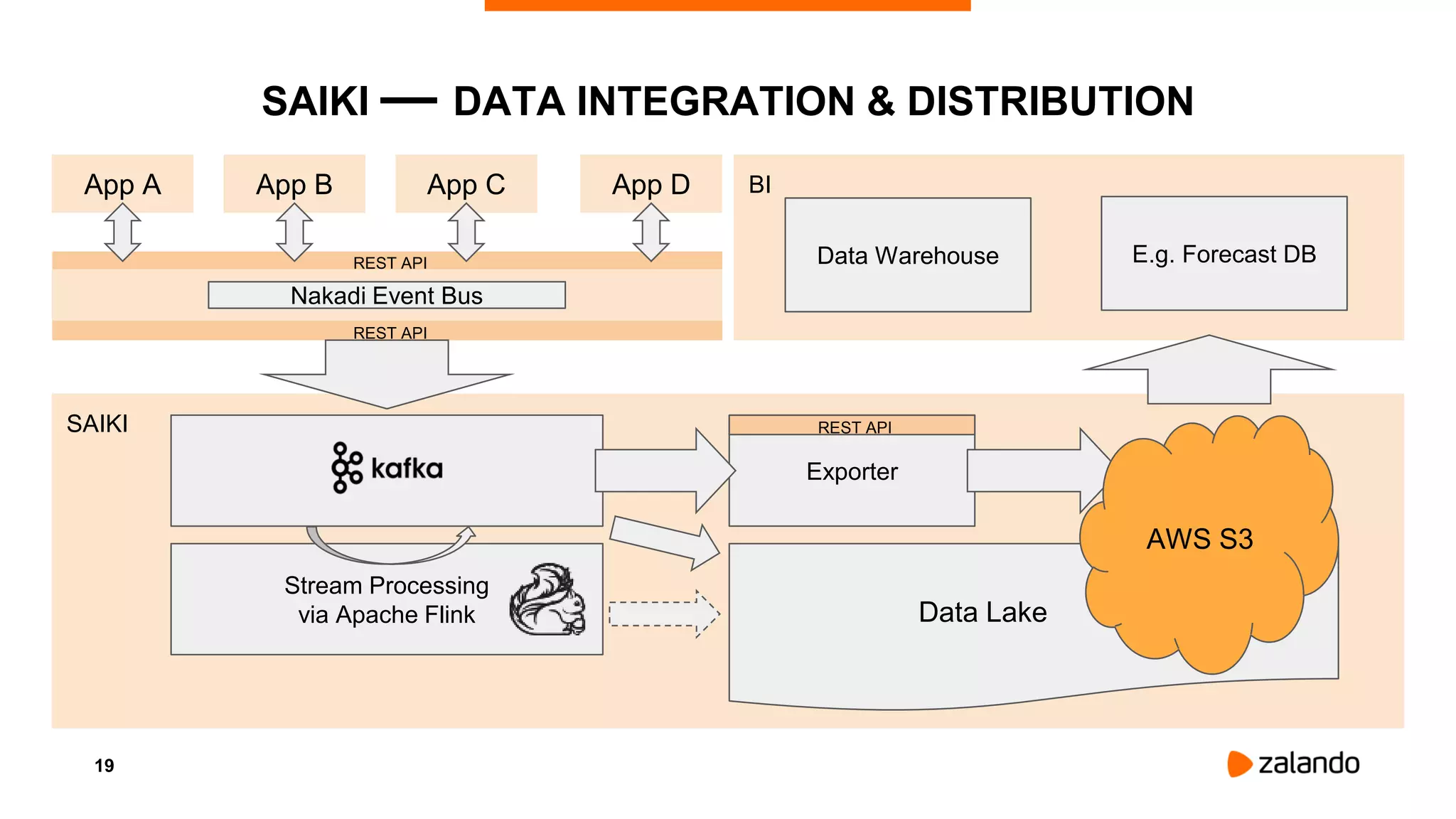

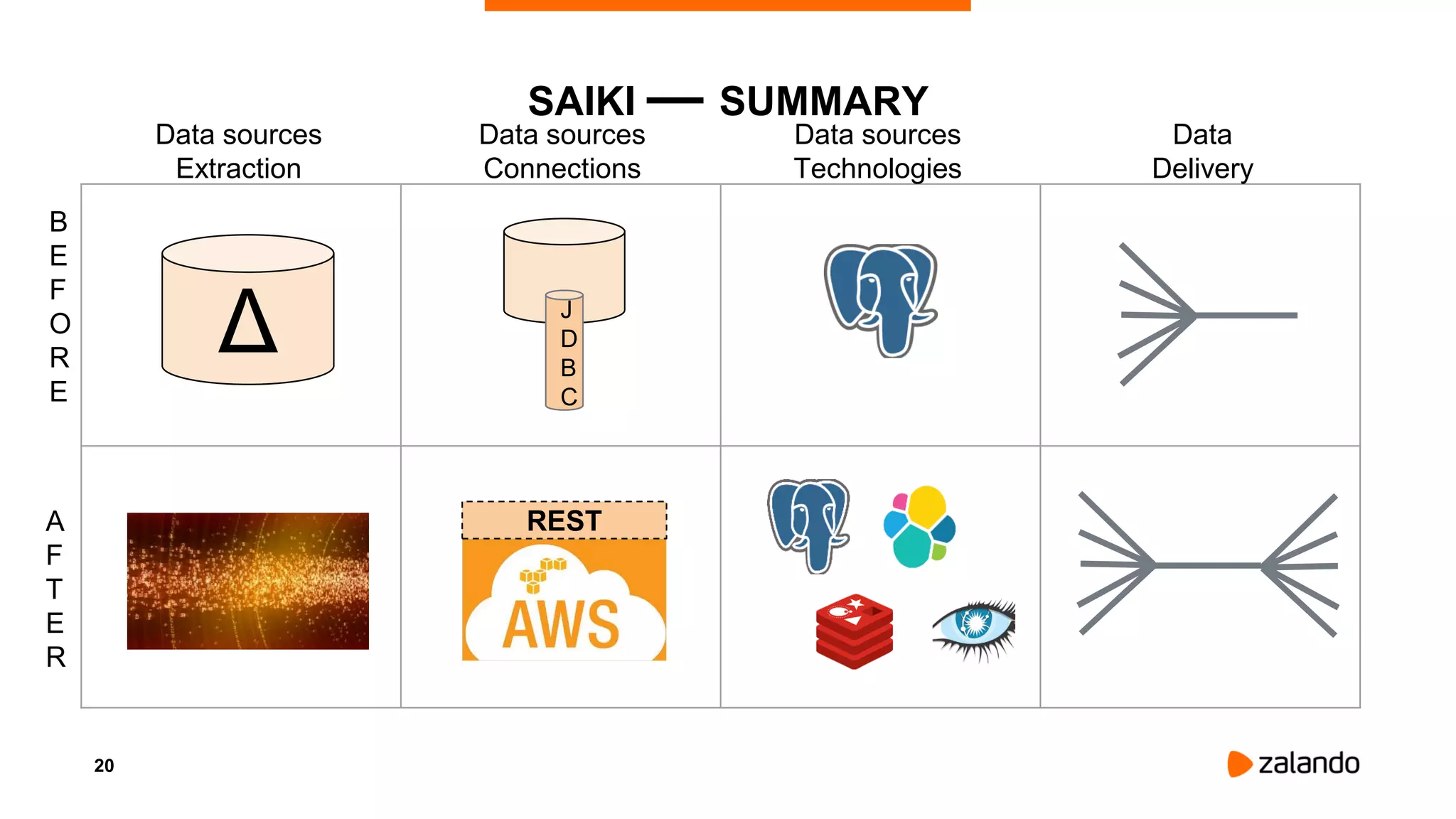

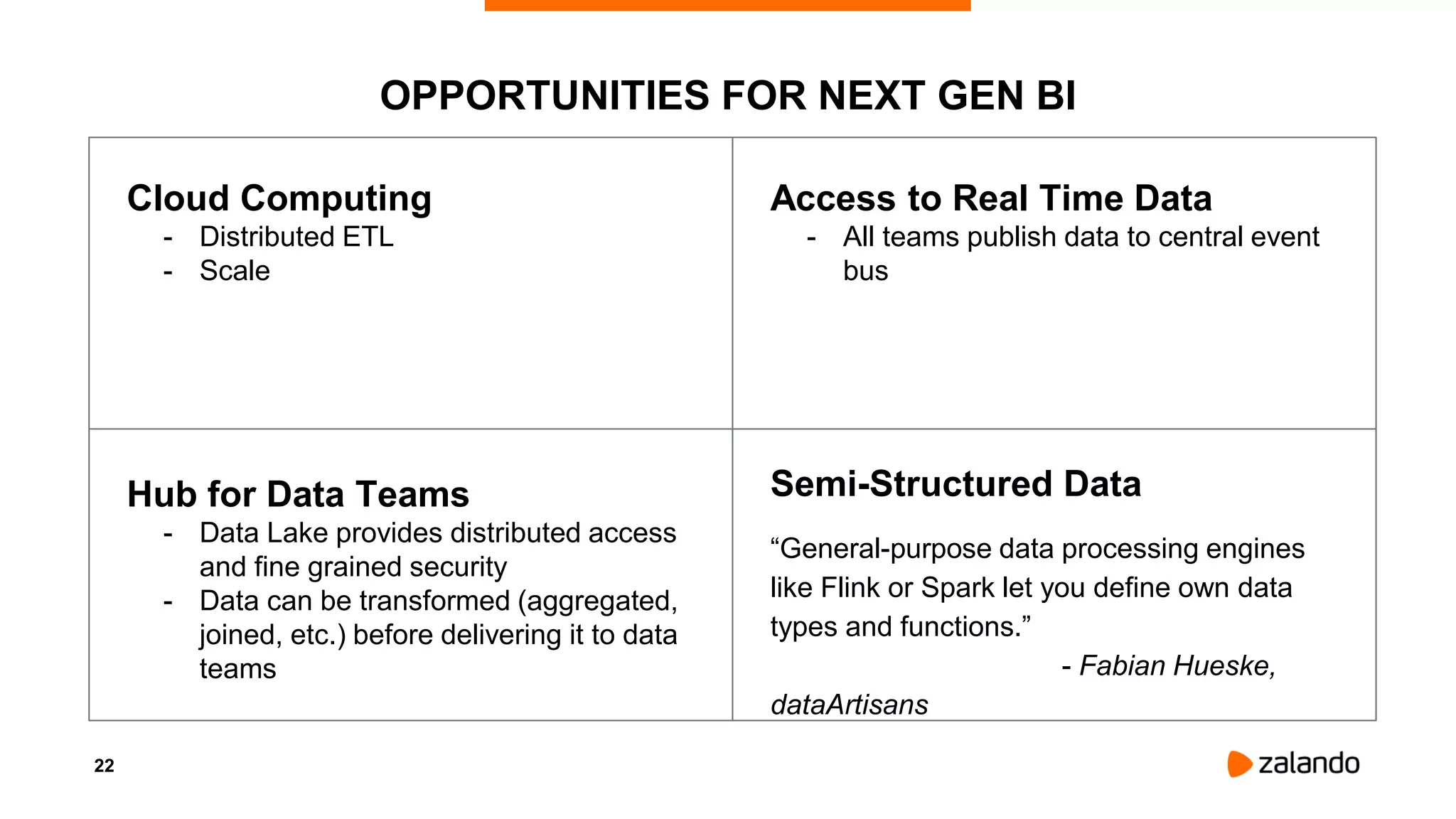

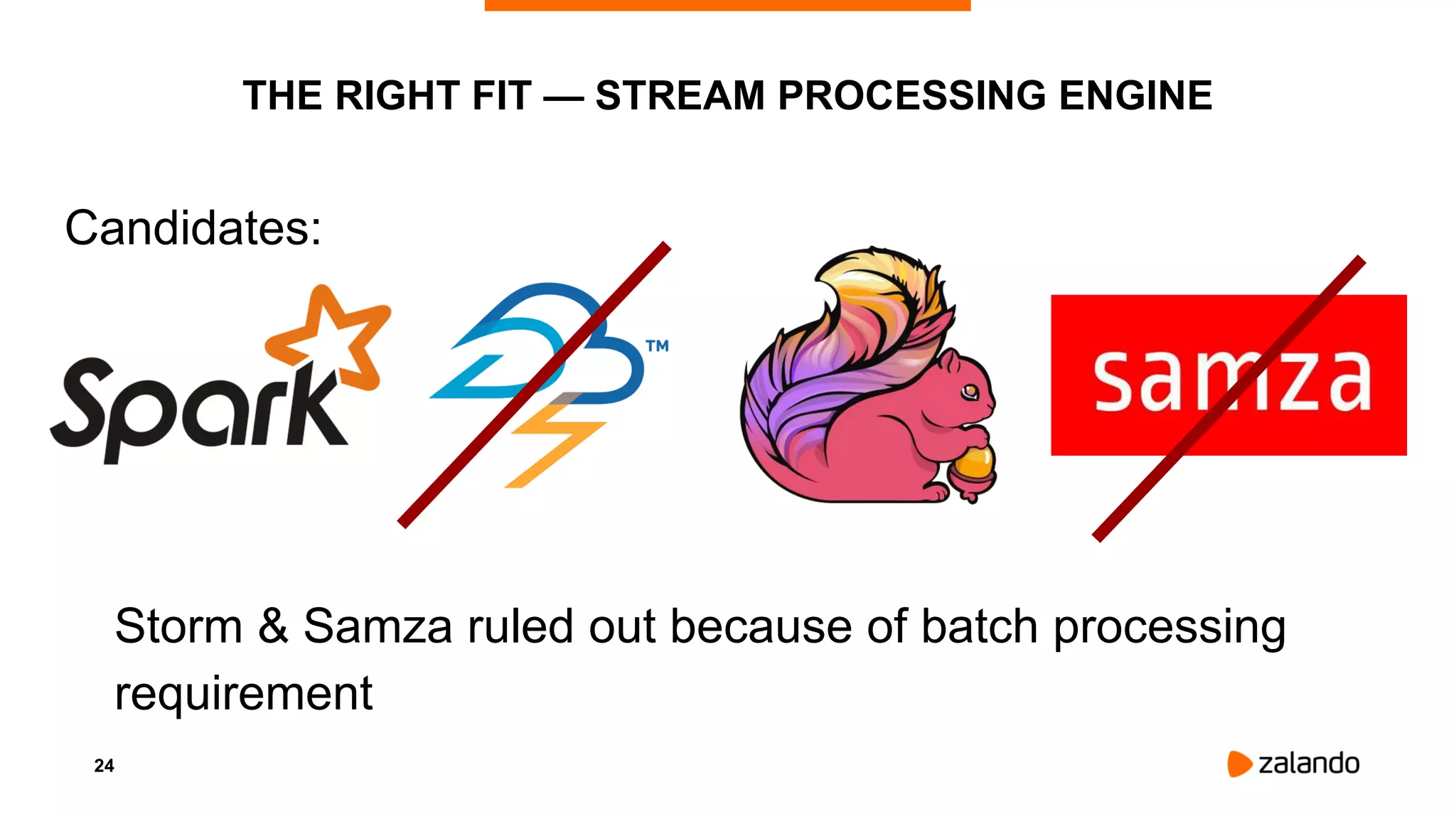

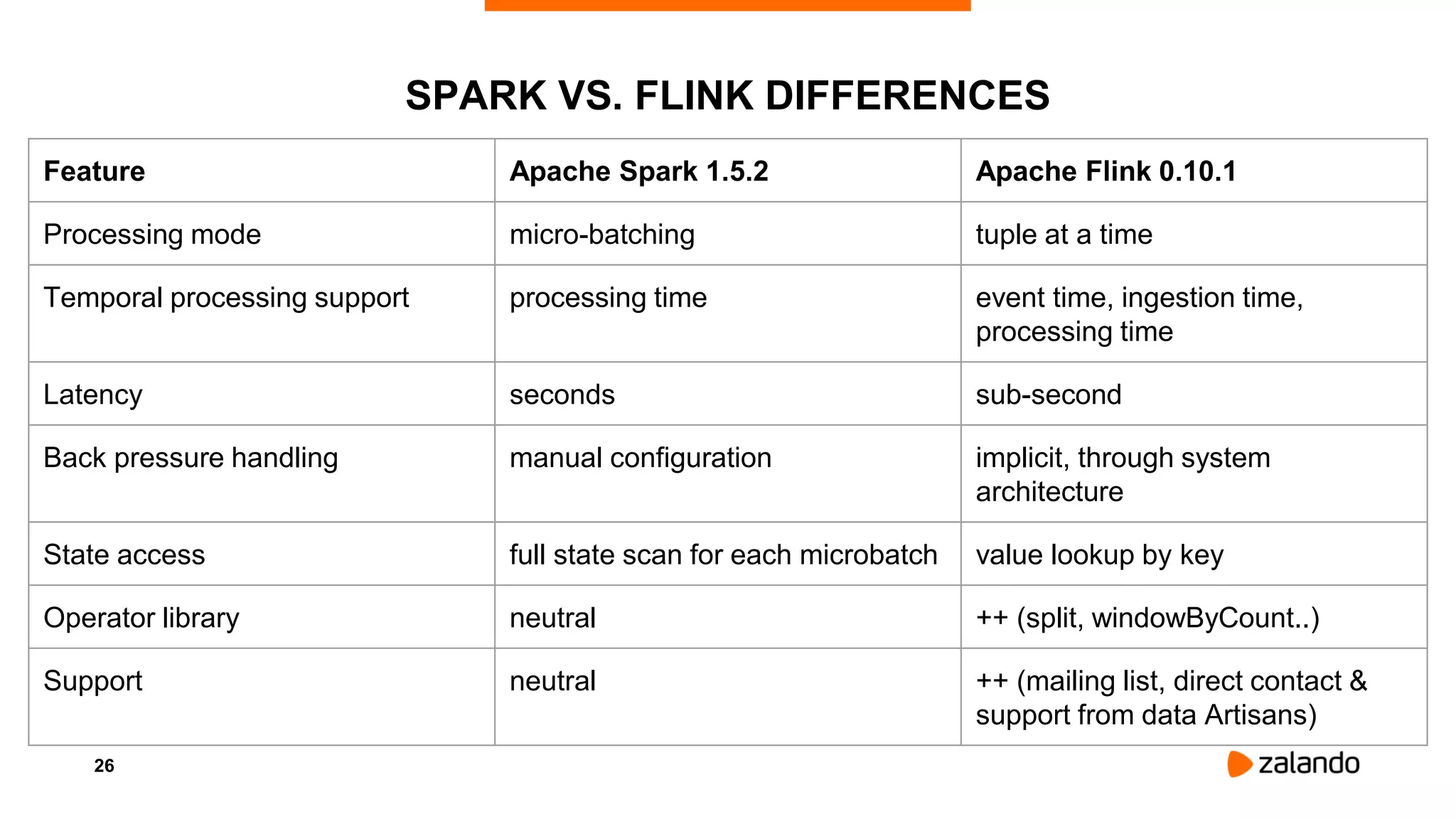

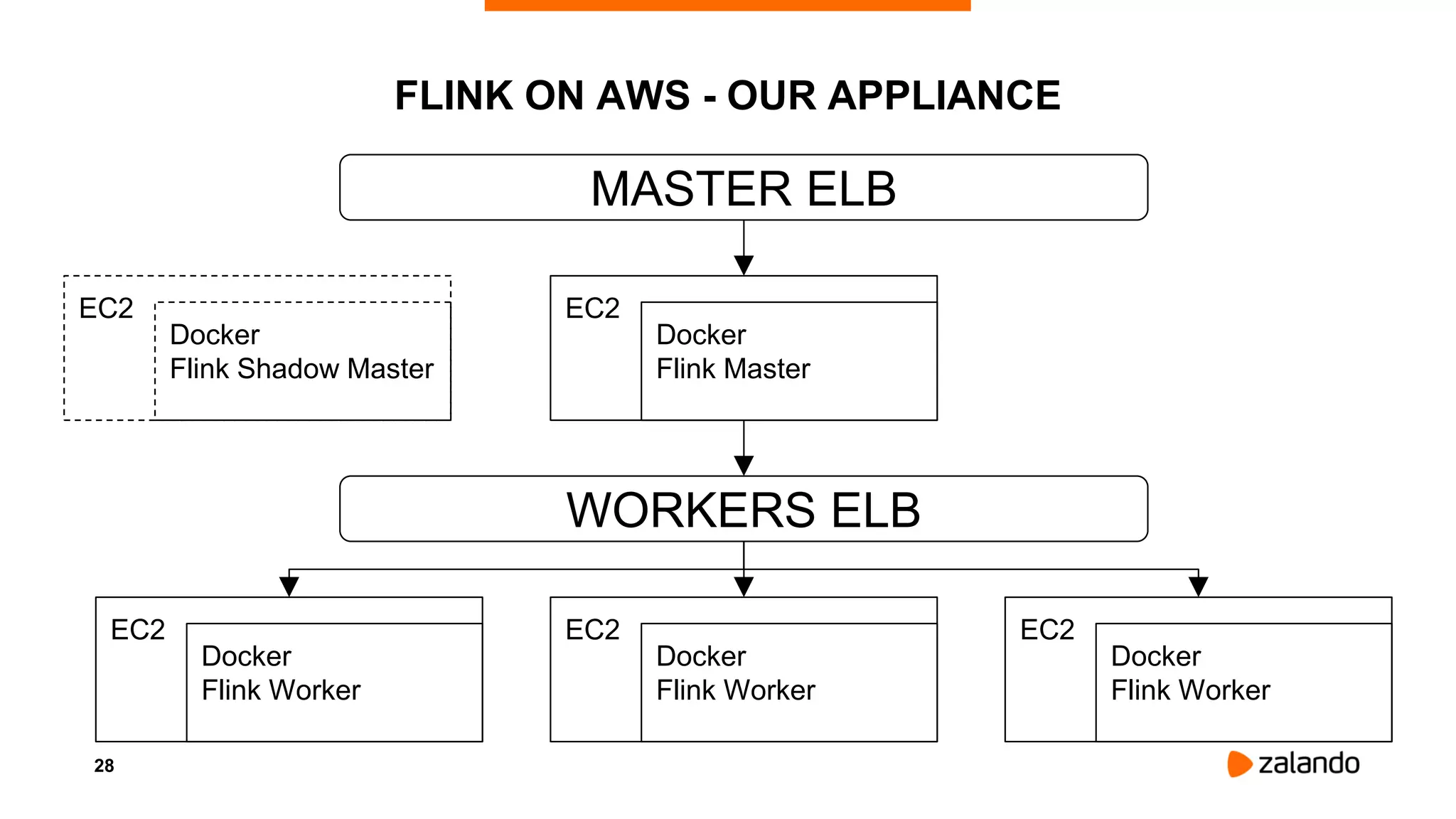

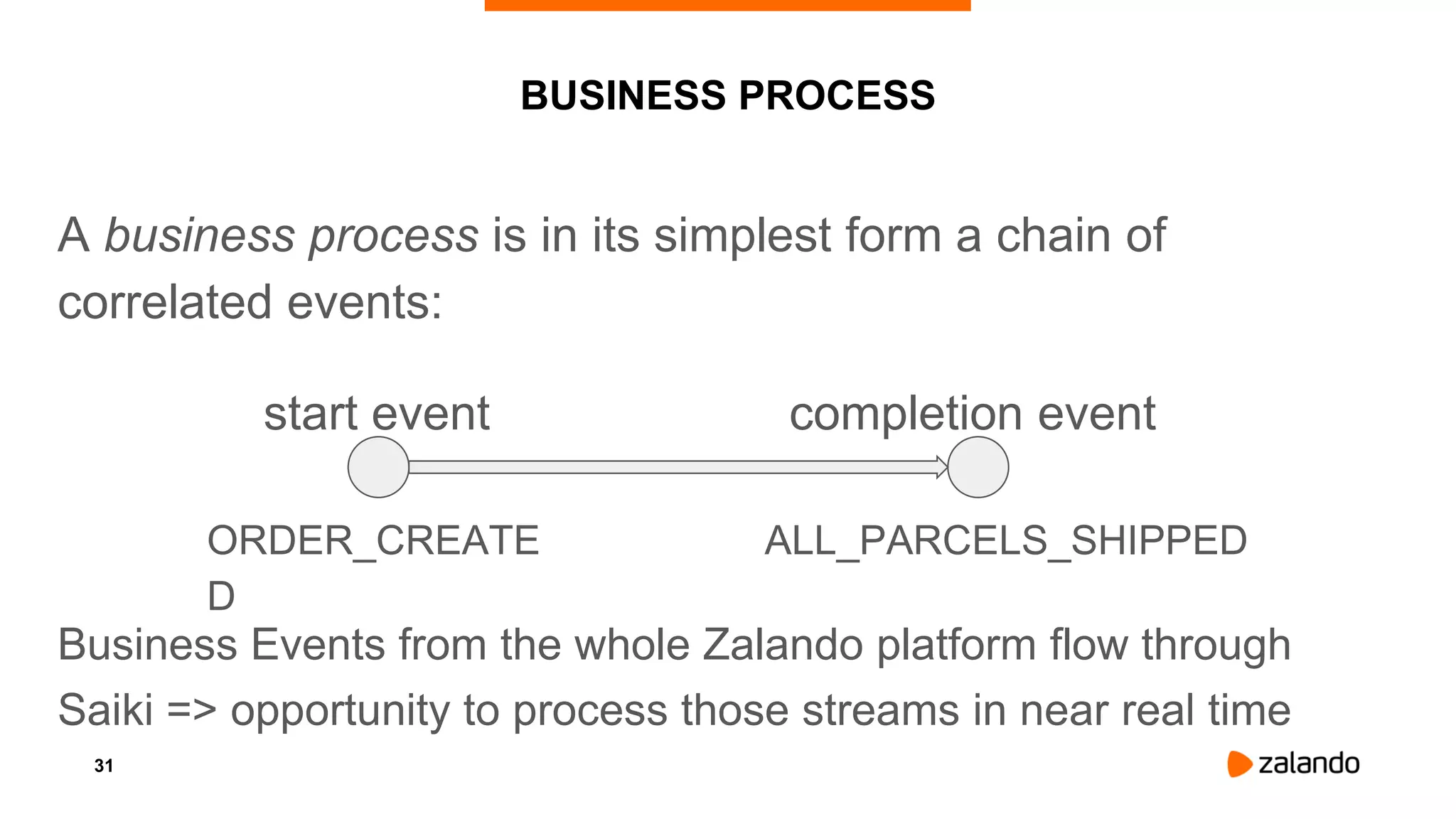

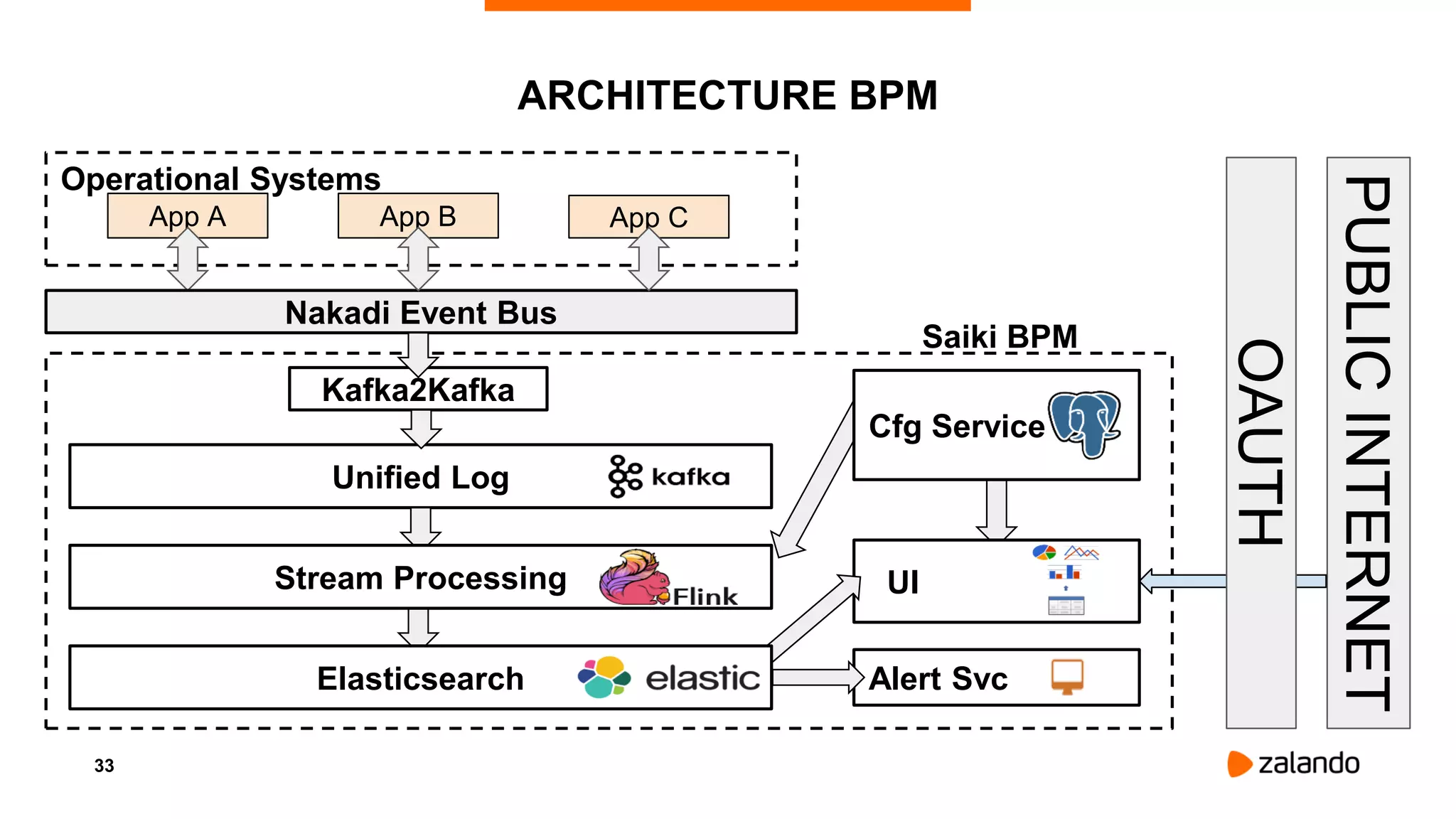

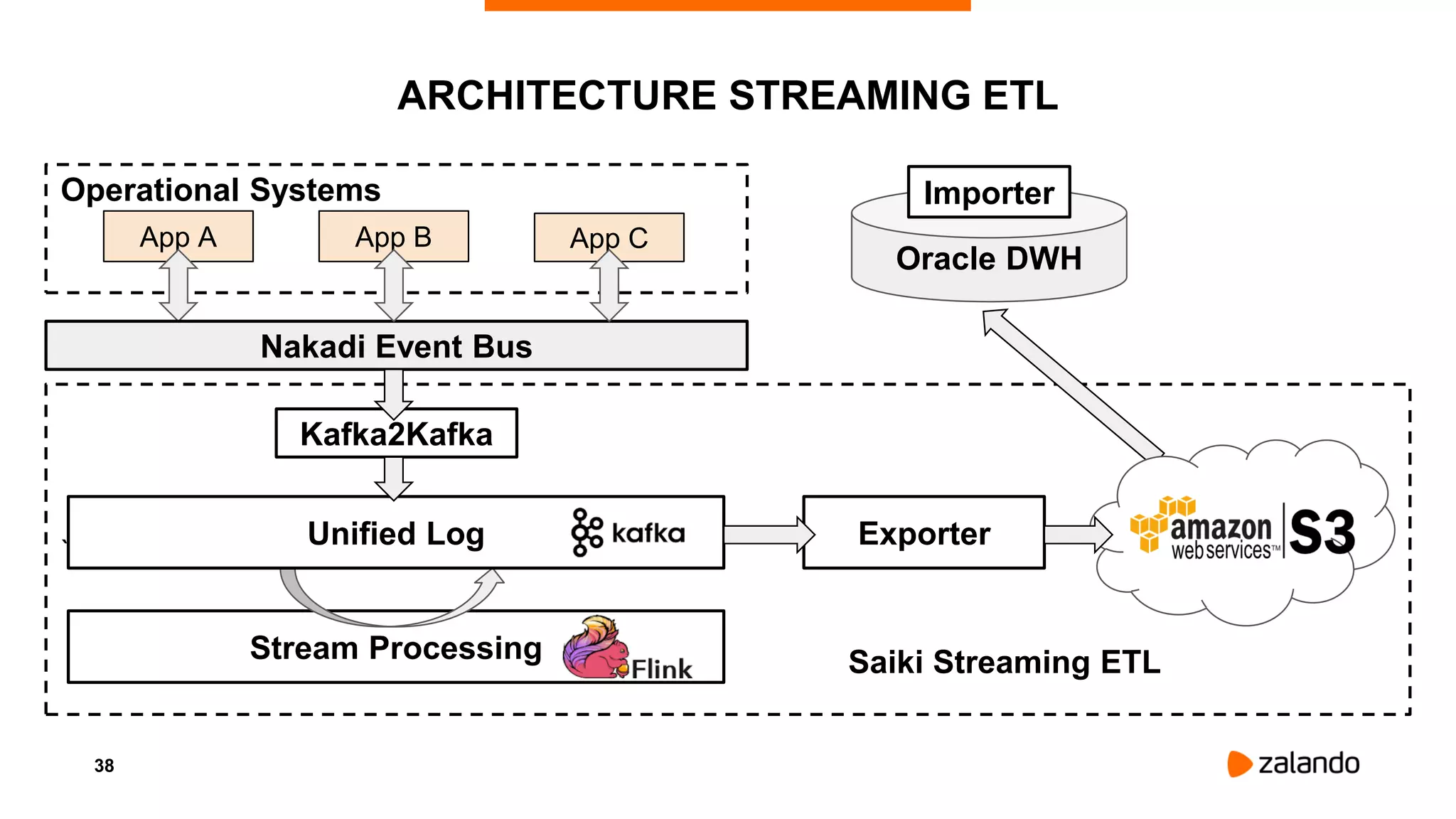

The document discusses Zalando's microservices architecture and the integration of Apache Flink for real-time data processing and business intelligence (BI). It highlights use cases such as business process monitoring and continuous ETL, and emphasizes Flink's advantages over other frameworks. It concludes that Flink is well suited to Zalando's next-generation BI platform because of its low latency and scalable stream processing.