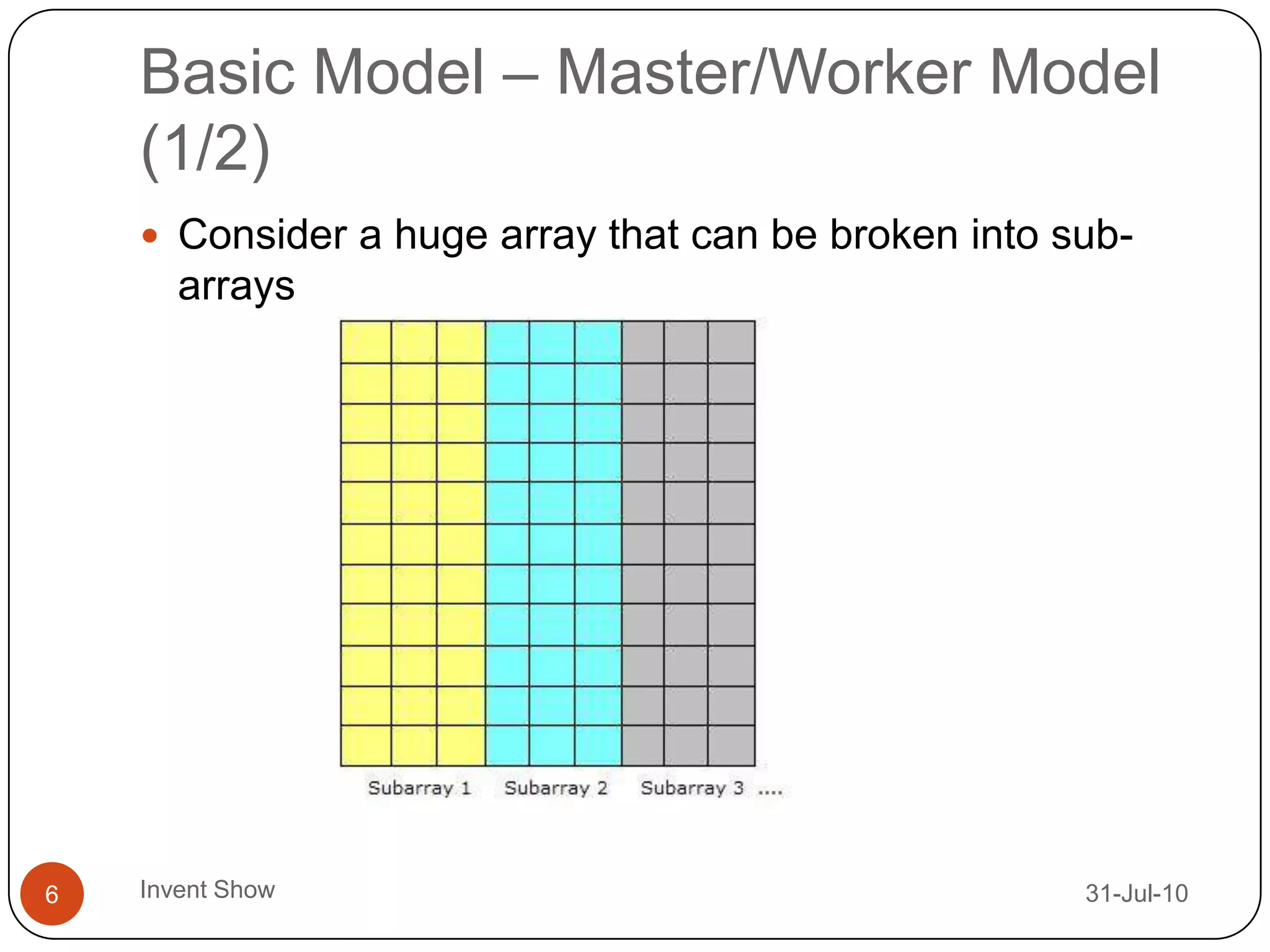

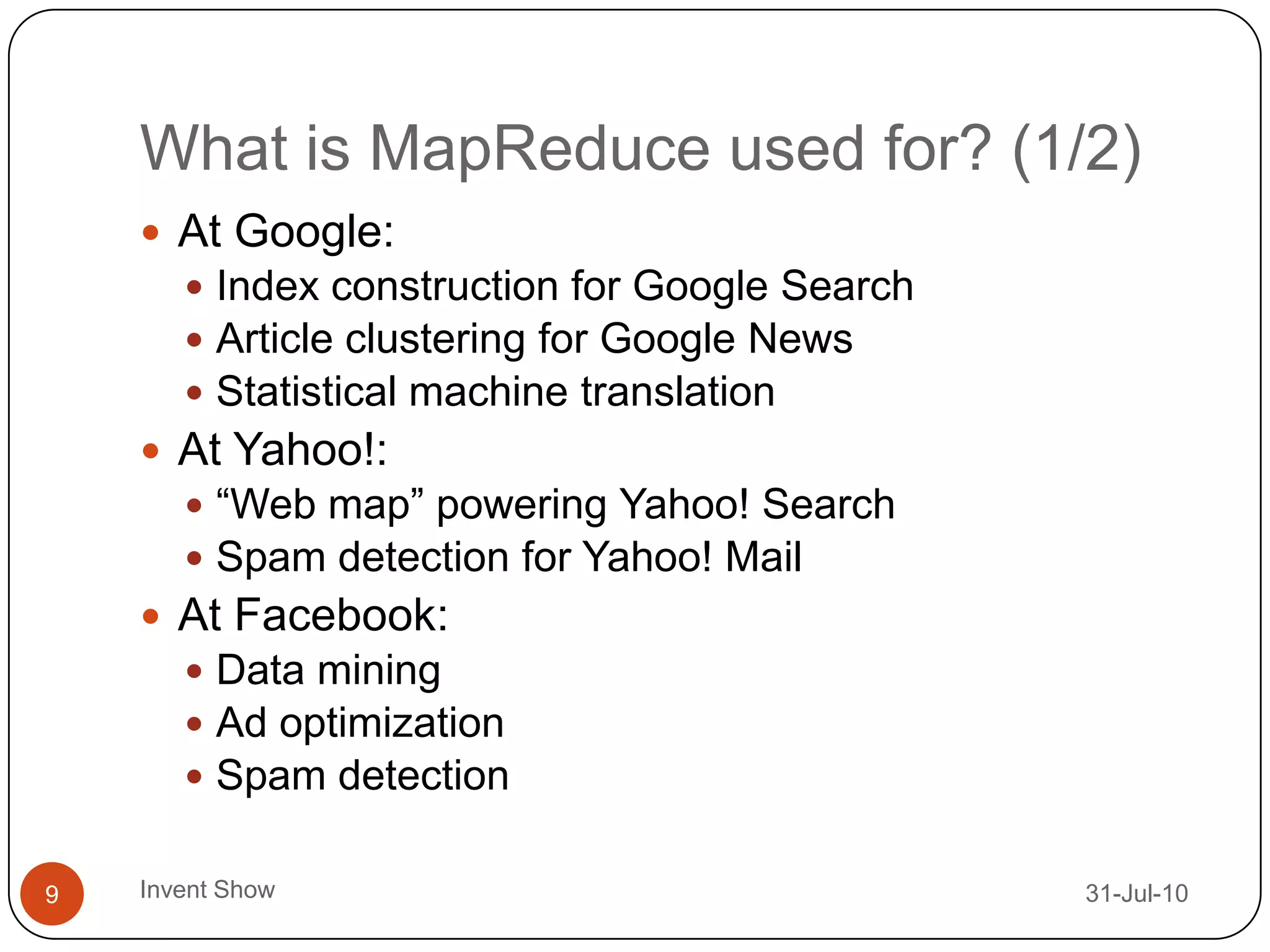

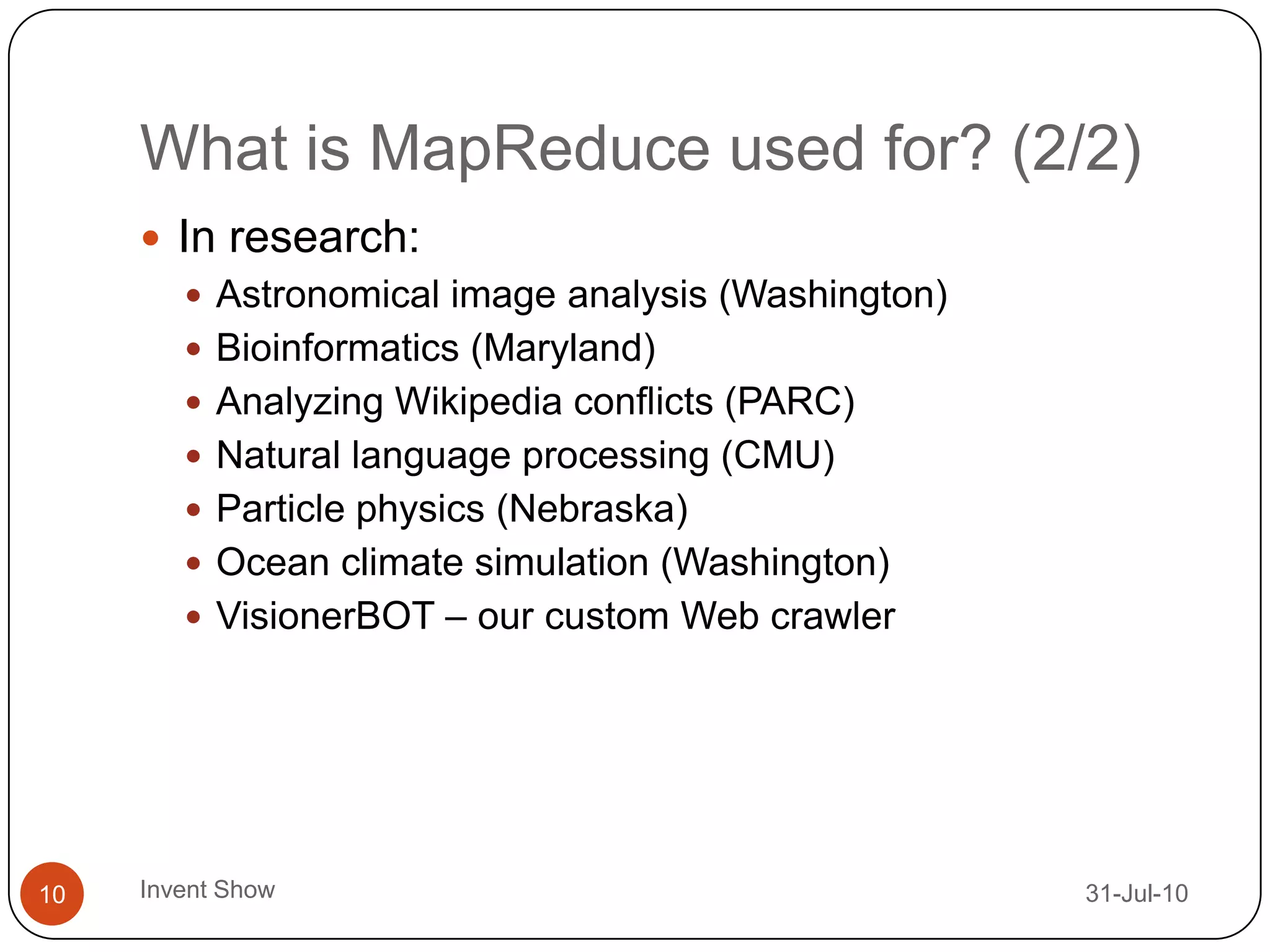

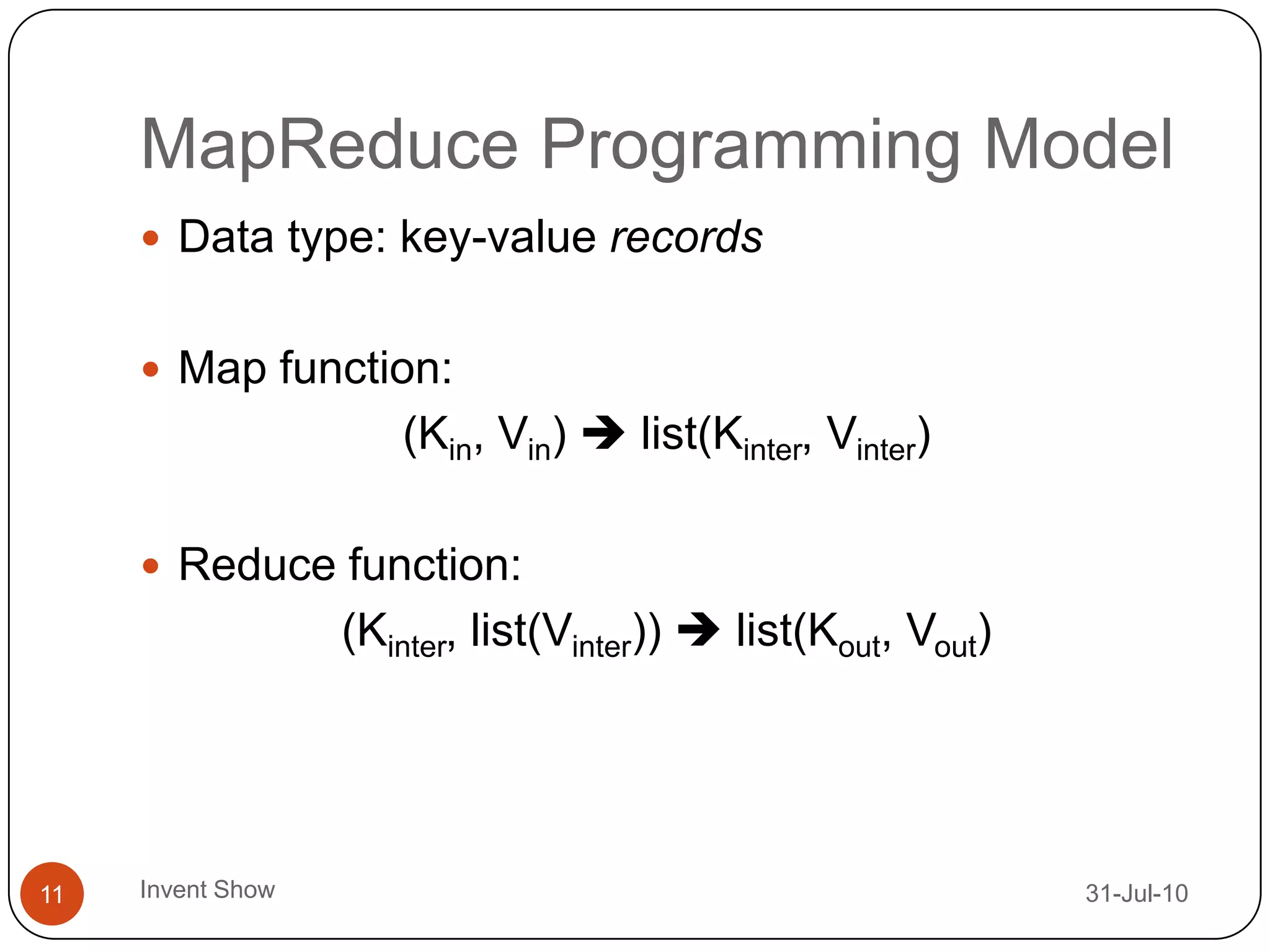

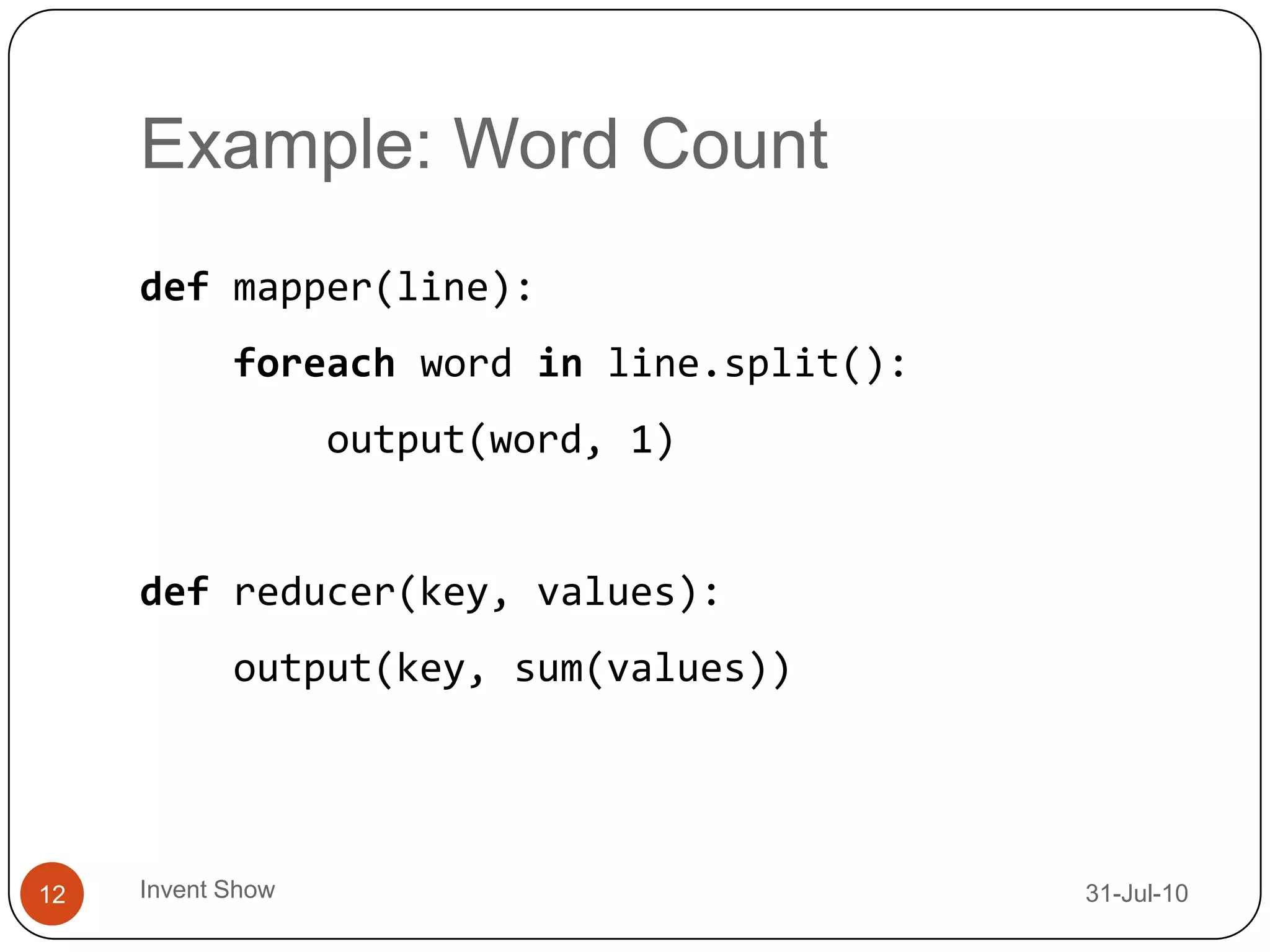

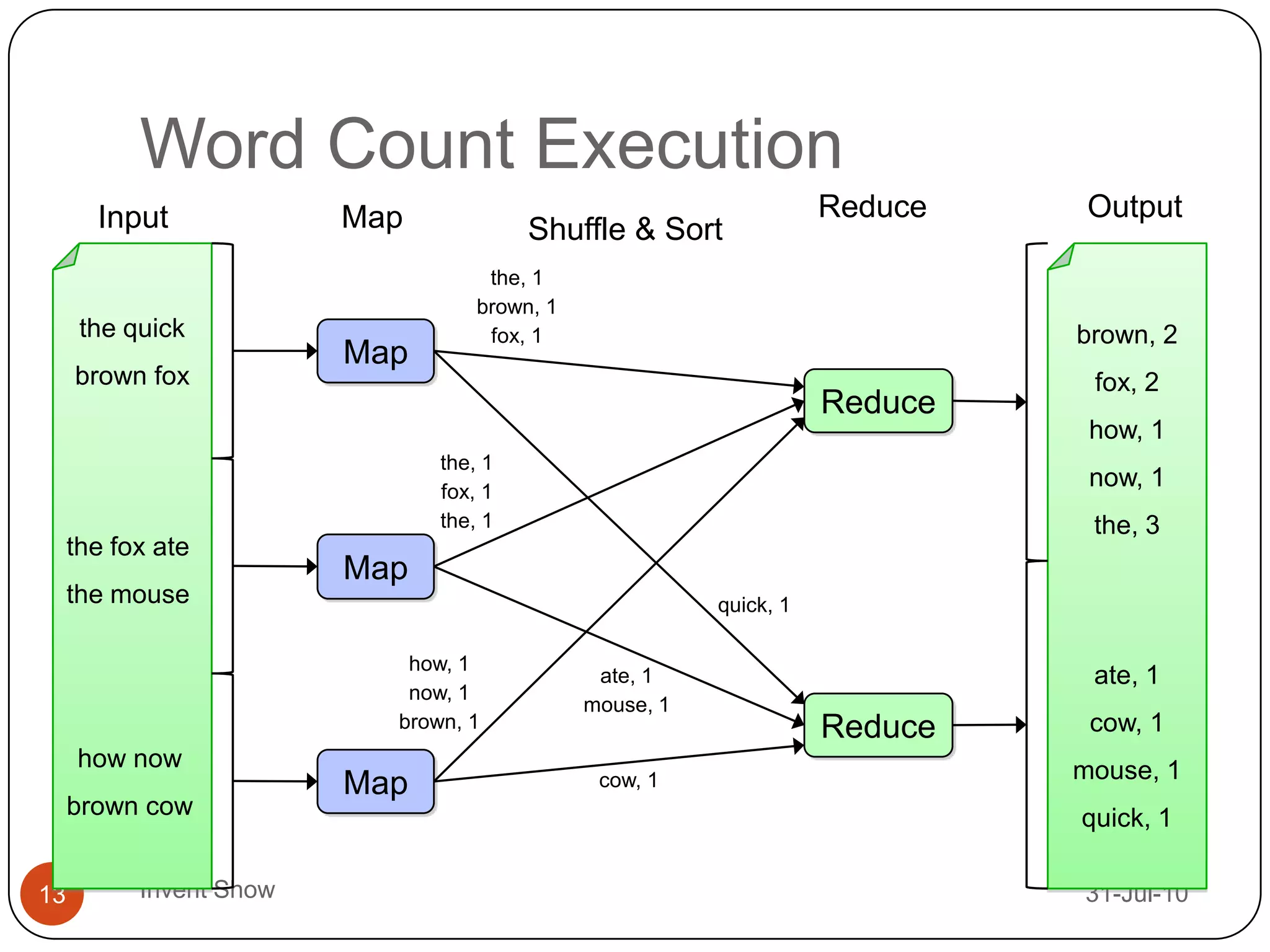

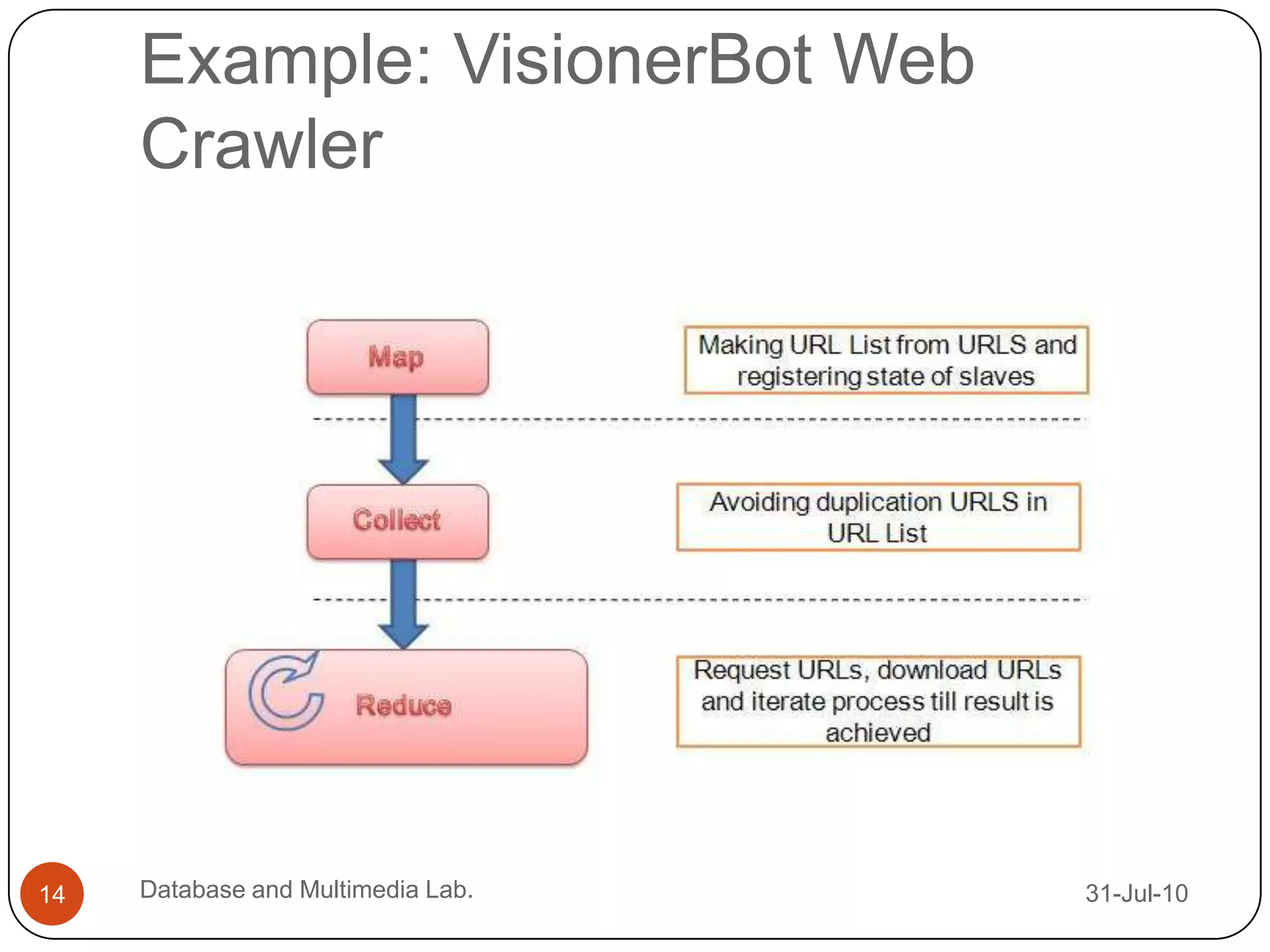

This document discusses the shift toward parallel computing, driven by physical limits on further increases in single-processor speed. It introduces MapReduce, a programming model that makes it easier to write parallel programs that process large datasets across many computers. MapReduce splits the input data into chunks and applies a map function to each chunk in parallel, producing intermediate key/value pairs. It then groups those pairs by key and combines each group with a reduce function to produce the final results. Examples show how the model can be used to count word frequencies or to crawl the web in parallel.
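The word-frequency example can be sketched on a single machine to show the split, map, group, and reduce phases. This is a minimal illustration under stated assumptions: a thread pool stands in for the many computers, and the function names (`split_input`, `map_words`, `shuffle`, `reduce_counts`, `word_count`) are hypothetical. They are not part of any MapReduce framework's API.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, Iterable, List, Tuple


def split_input(text: str, num_chunks: int) -> List[List[str]]:
    """Split the input into roughly equal chunks of lines (one chunk per map task)."""
    lines = text.splitlines()
    size = max(1, -(-len(lines) // num_chunks))  # ceiling division
    return [lines[i:i + size] for i in range(0, len(lines), size)]


def map_words(chunk: List[str]) -> List[Tuple[str, int]]:
    """Map phase: emit (word, 1) for every word in the chunk."""
    return [(word.lower(), 1) for line in chunk for word in line.split()]


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group intermediate values by key, as the framework does between phases."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_counts(key: str, values: List[int]) -> Tuple[str, int]:
    """Reduce phase: sum all the counts emitted for one word."""
    return key, sum(values)


def word_count(text: str, workers: int = 4) -> Dict[str, int]:
    chunks = split_input(text, workers)
    # Run map tasks concurrently (assumption: threads stand in for separate machines).
    with ThreadPoolExecutor(max_workers=workers) as pool:
        mapped = pool.map(map_words, chunks)
        intermediate = [pair for result in mapped for pair in result]
    groups = shuffle(intermediate)
    return dict(reduce_counts(k, v) for k, v in groups.items())


if __name__ == "__main__":
    sample = "the quick brown fox\njumps over the lazy dog\nthe end"
    print(word_count(sample))
    # {'the': 3, 'quick': 1, 'brown': 1, 'fox': 1, 'jumps': 1, 'over': 1, 'lazy': 1, 'dog': 1, 'end': 1}
```

The map and reduce functions here are pure and operate on independent inputs. That independence is what allows a framework to distribute them across computers. In CPython, threads do not speed up CPU-bound work like this, so a real deployment would use separate processes or machines. The structure of the phases would stay the same.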