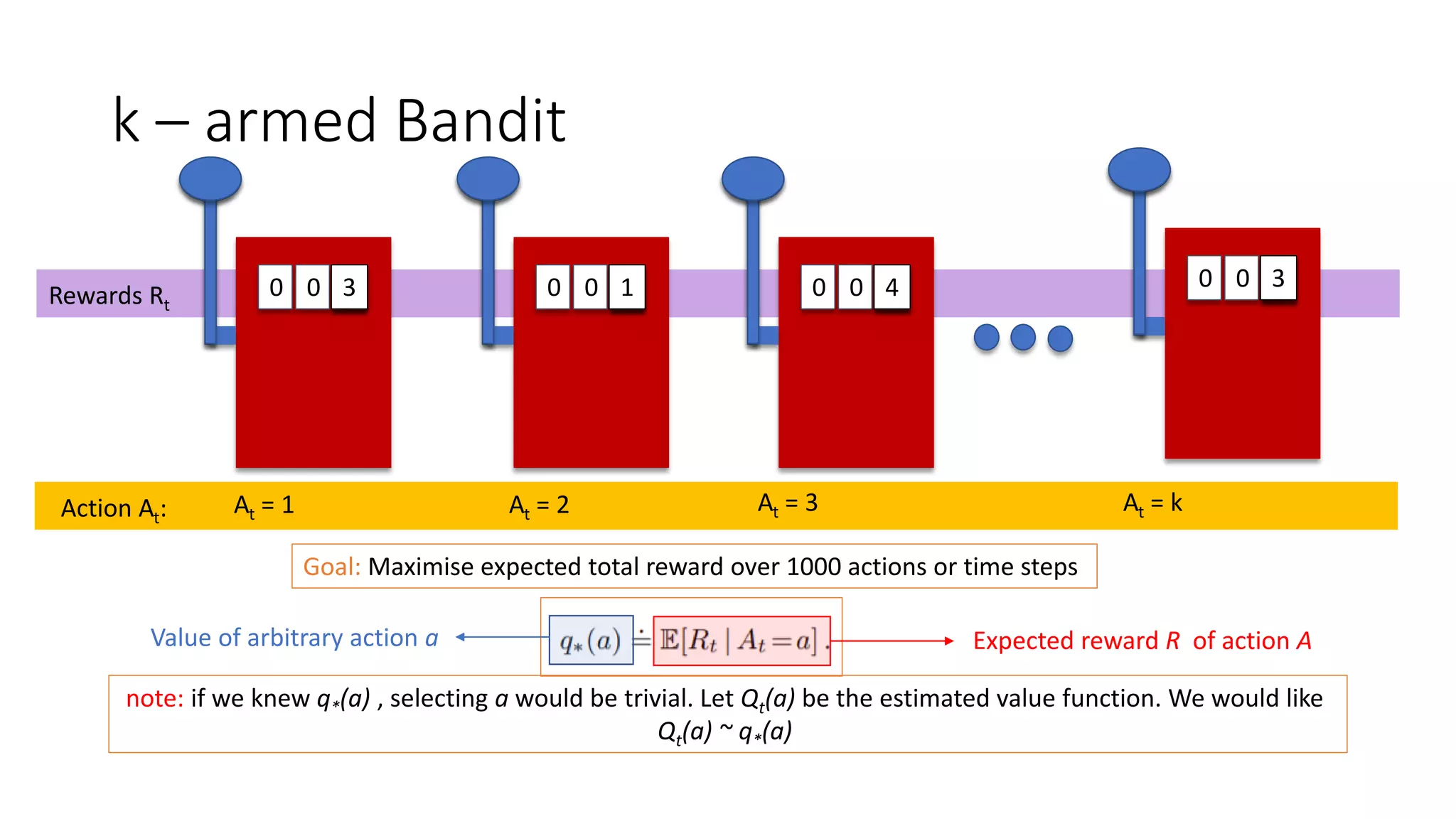

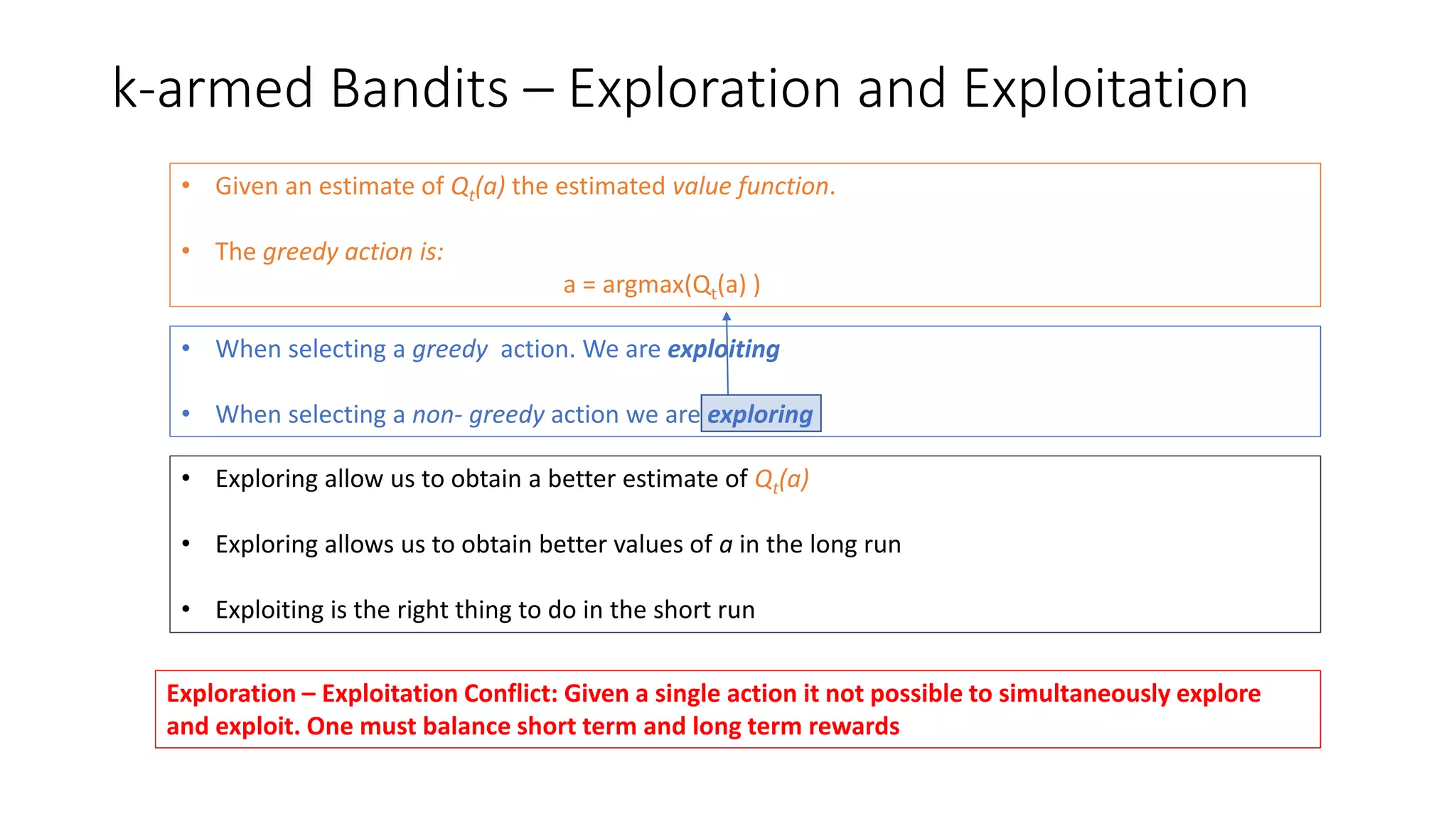

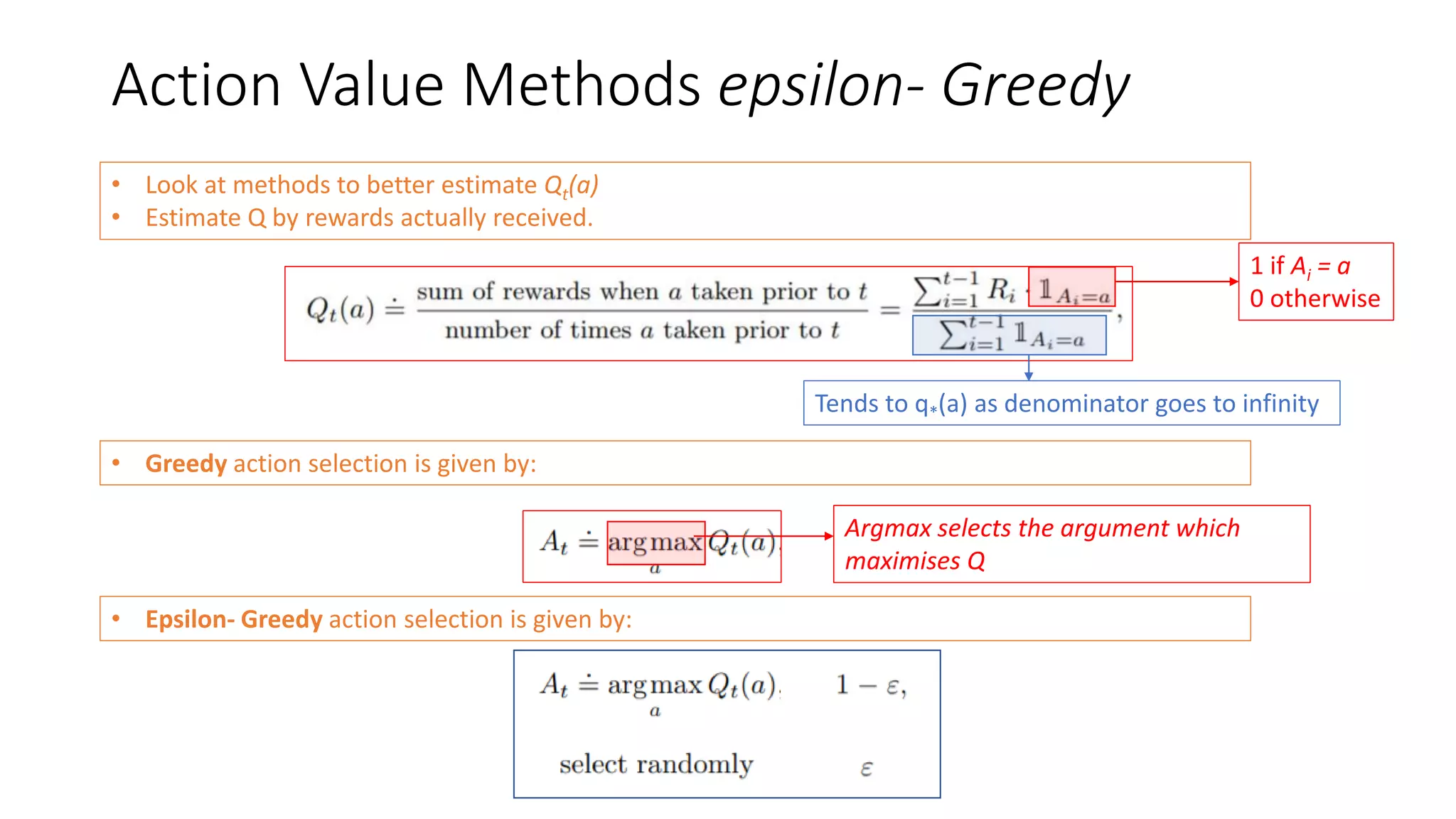

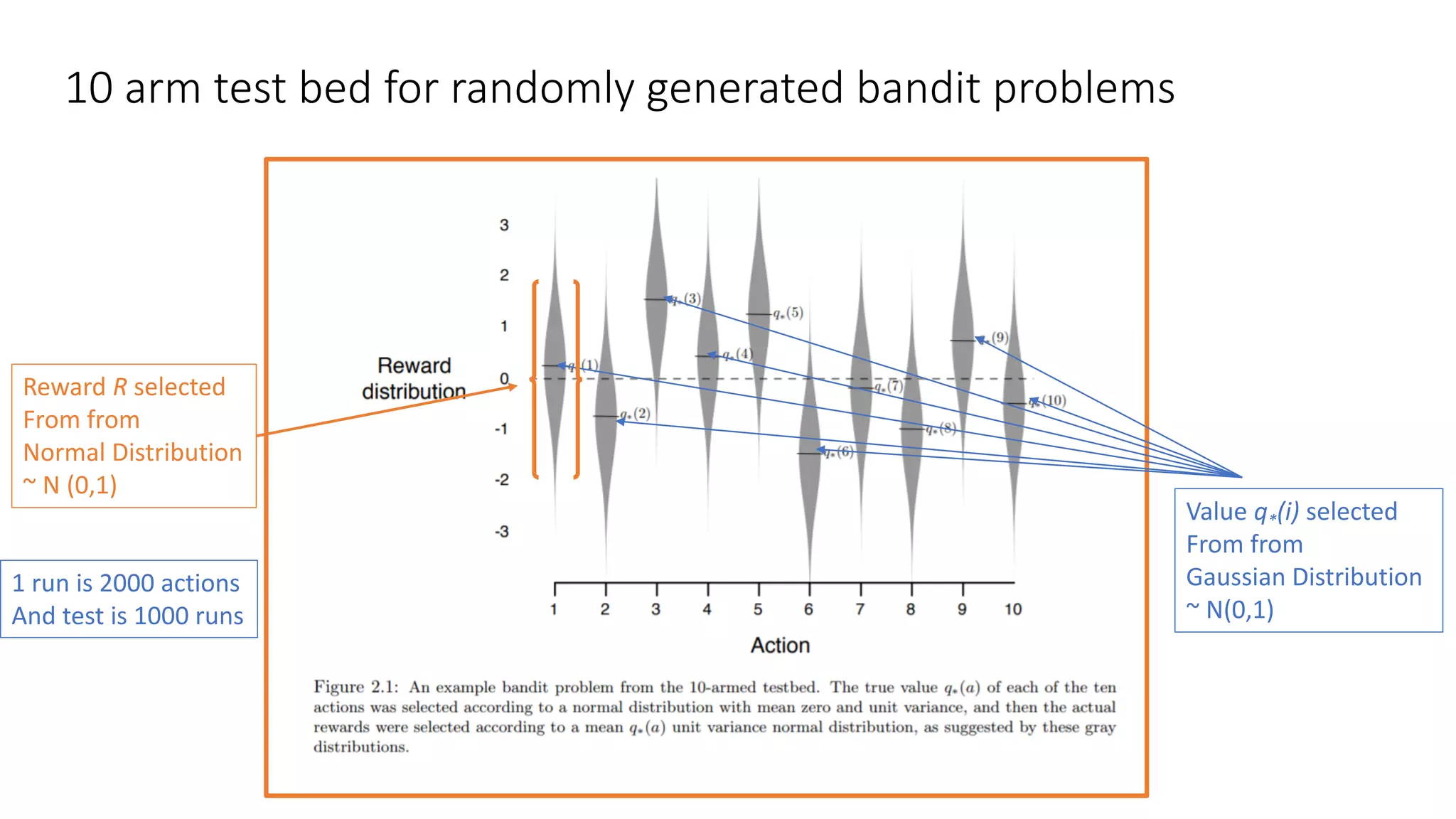

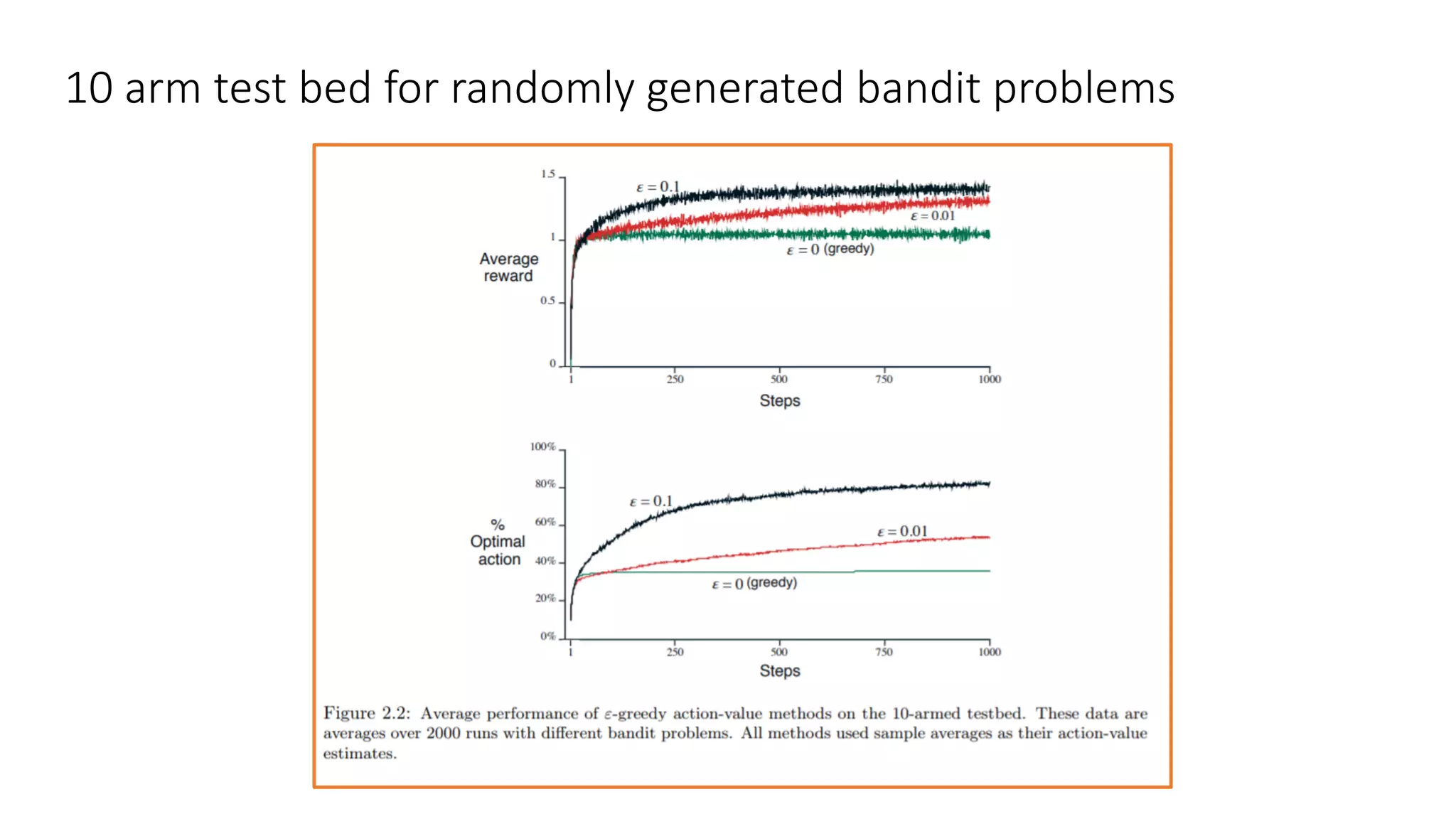

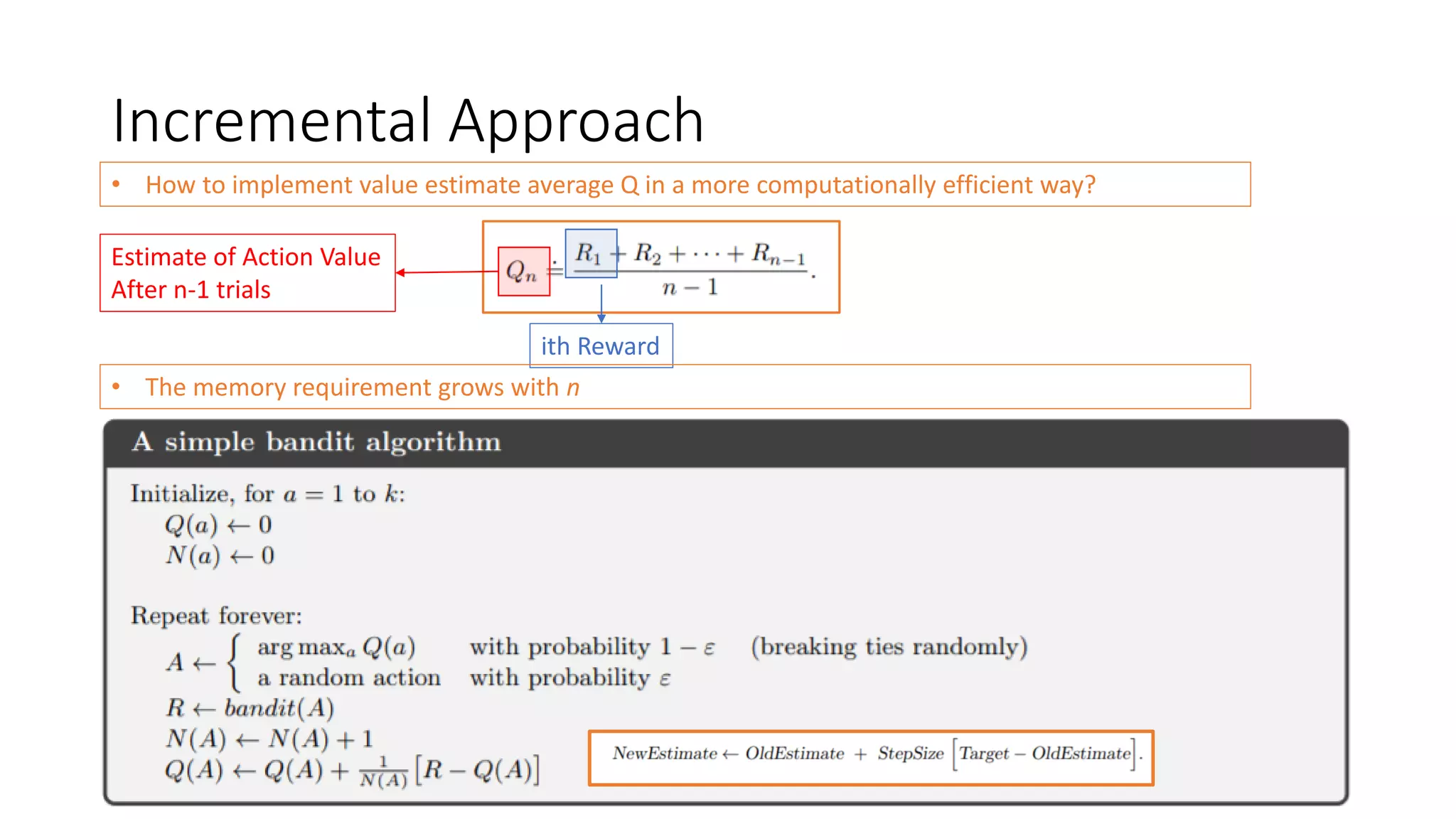

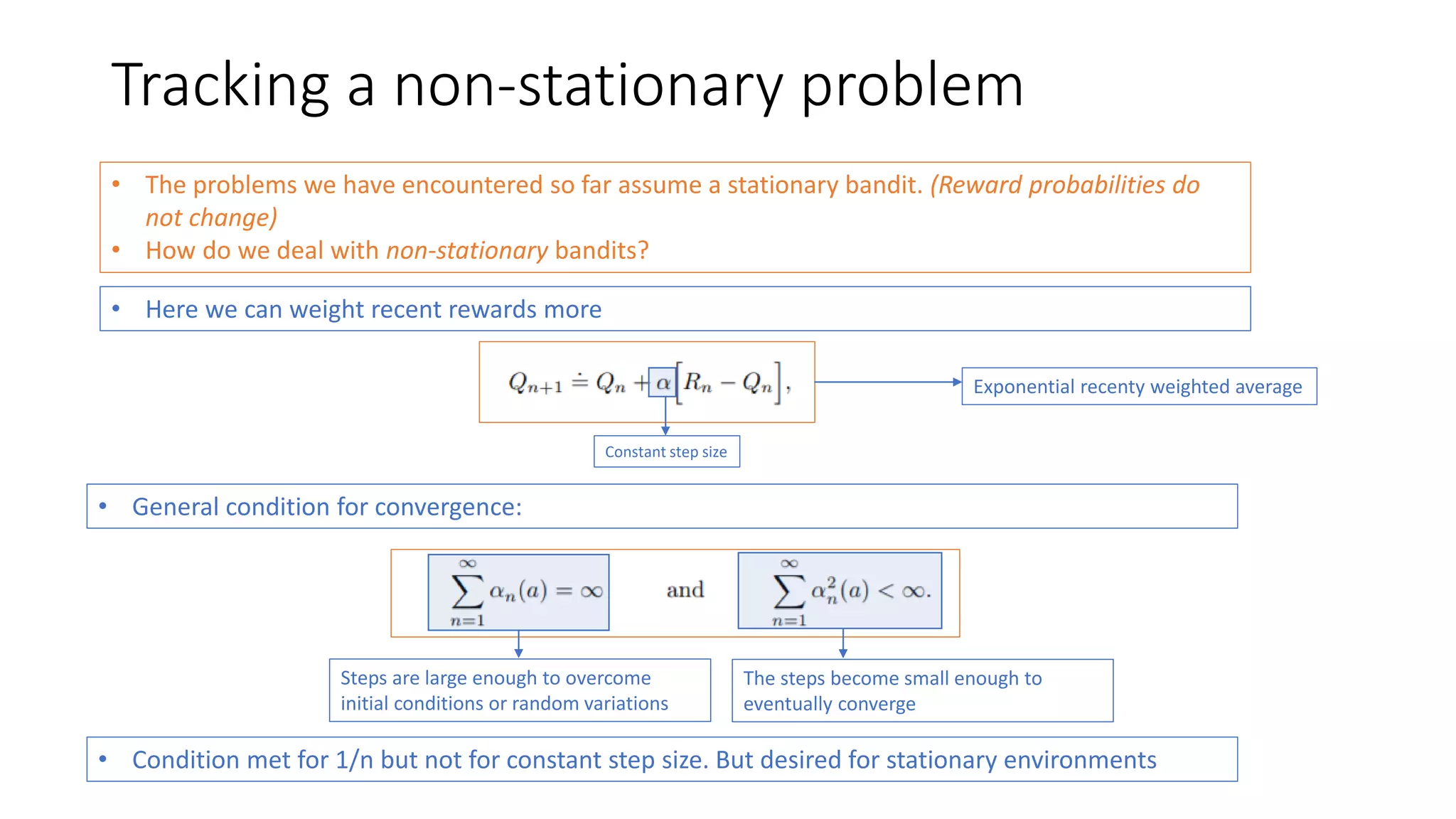

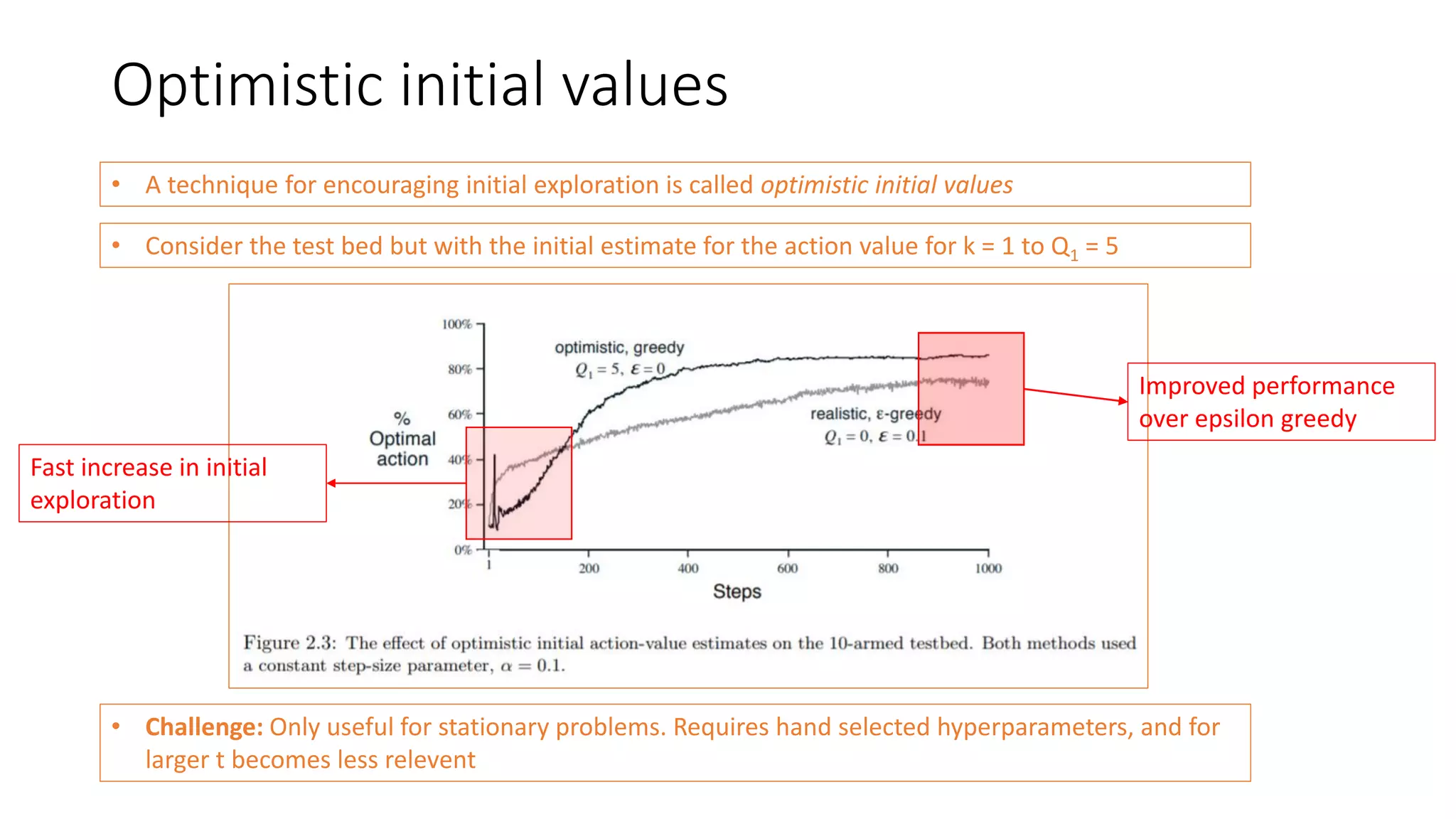

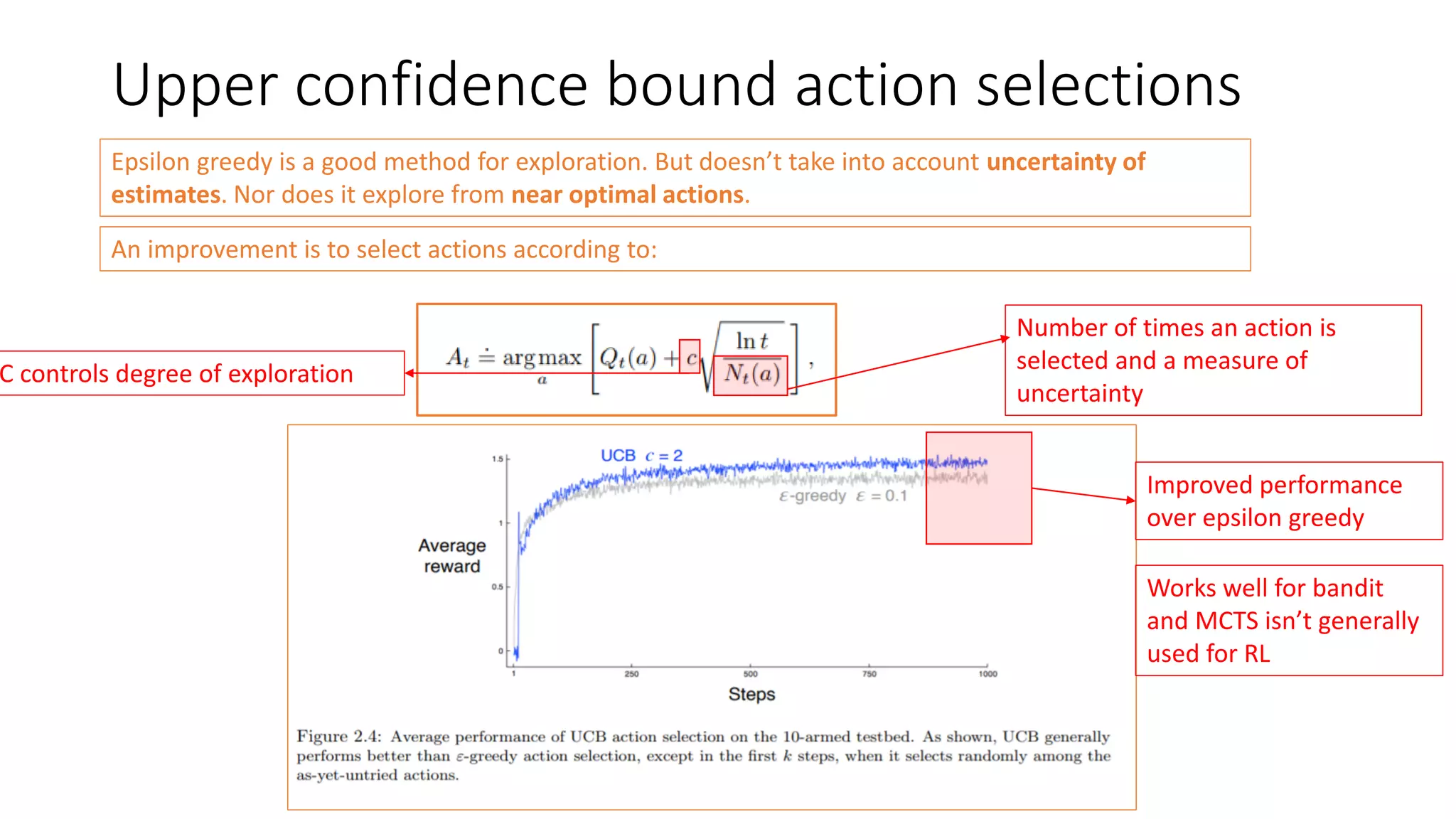

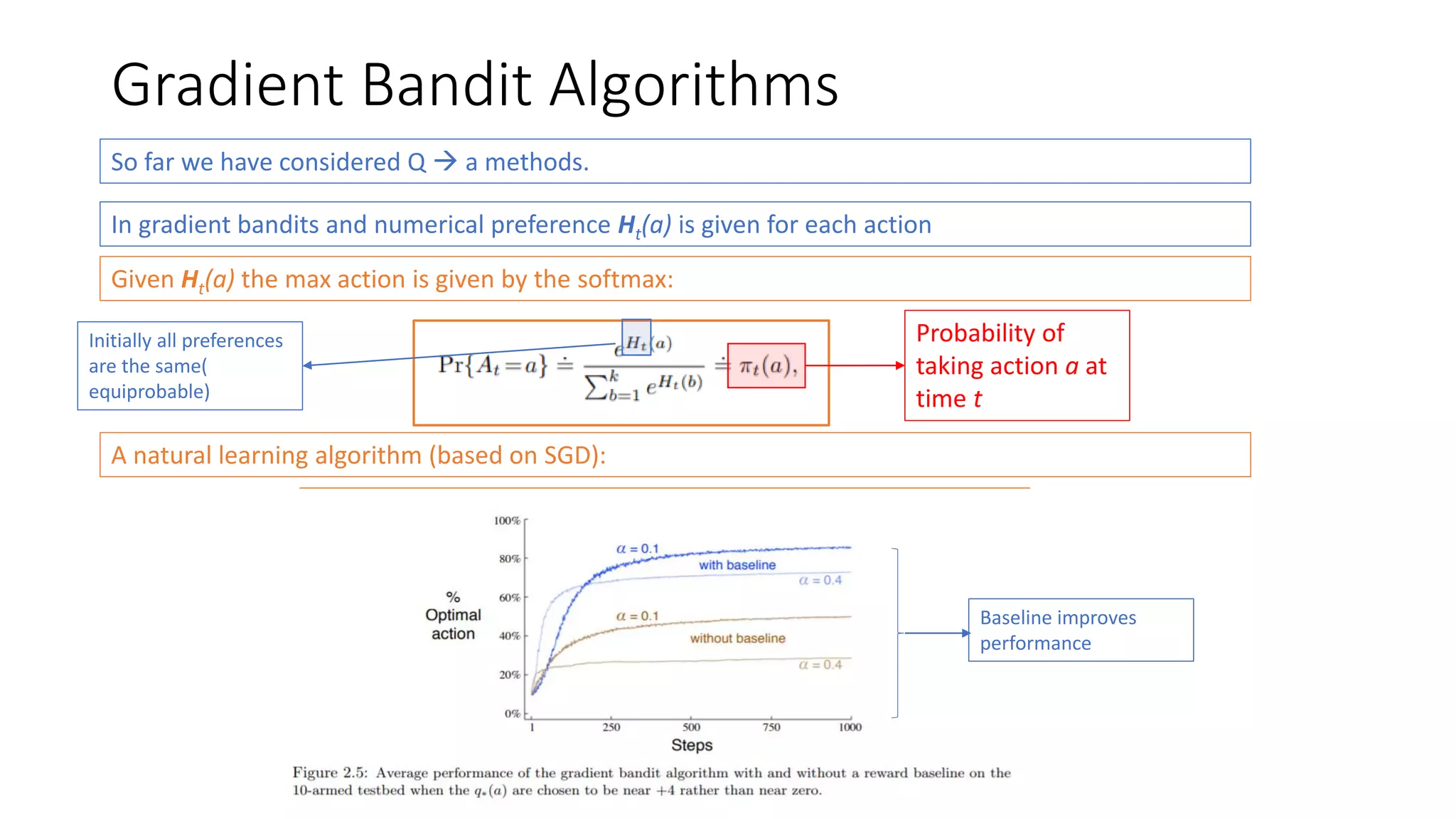

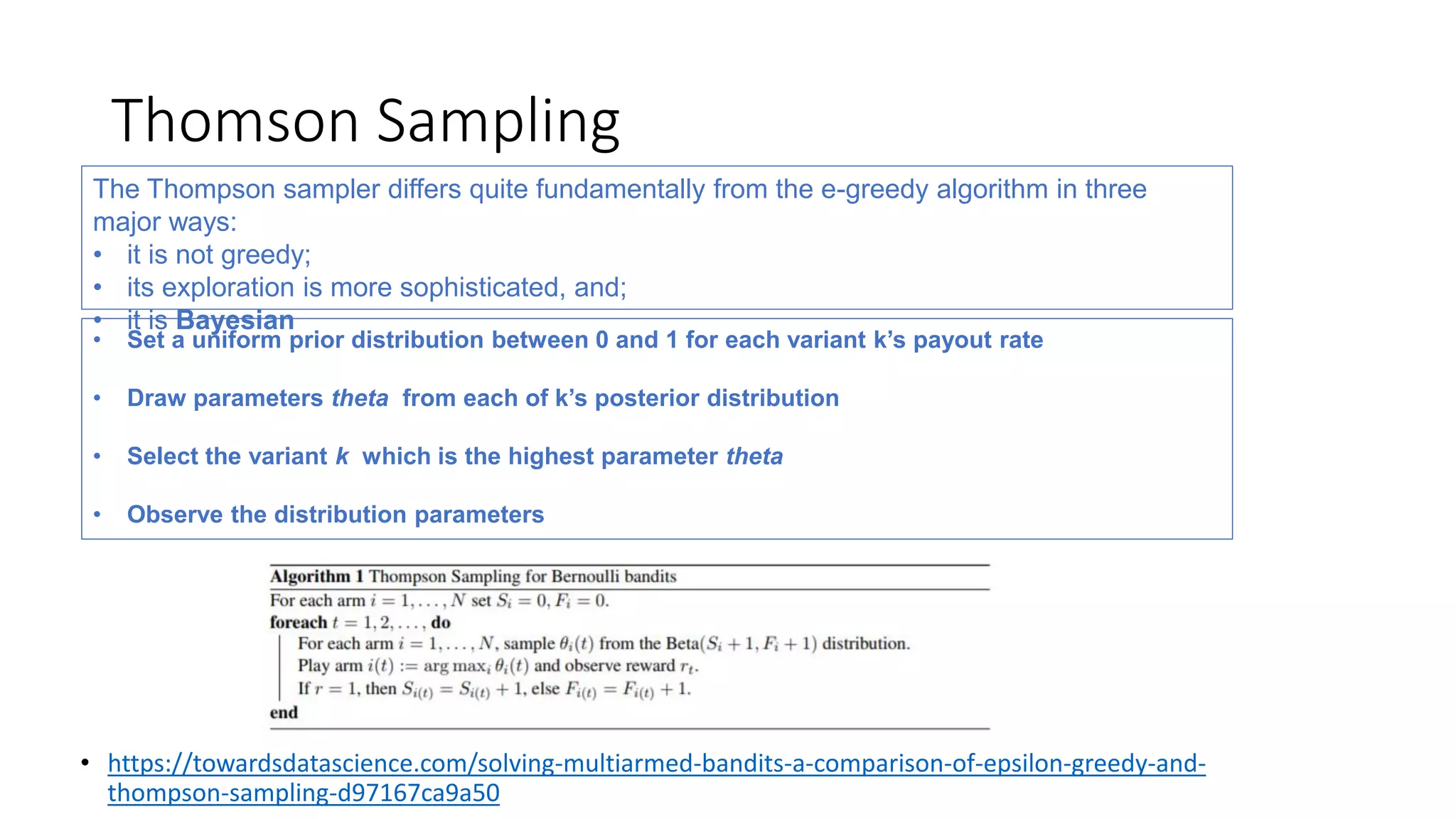

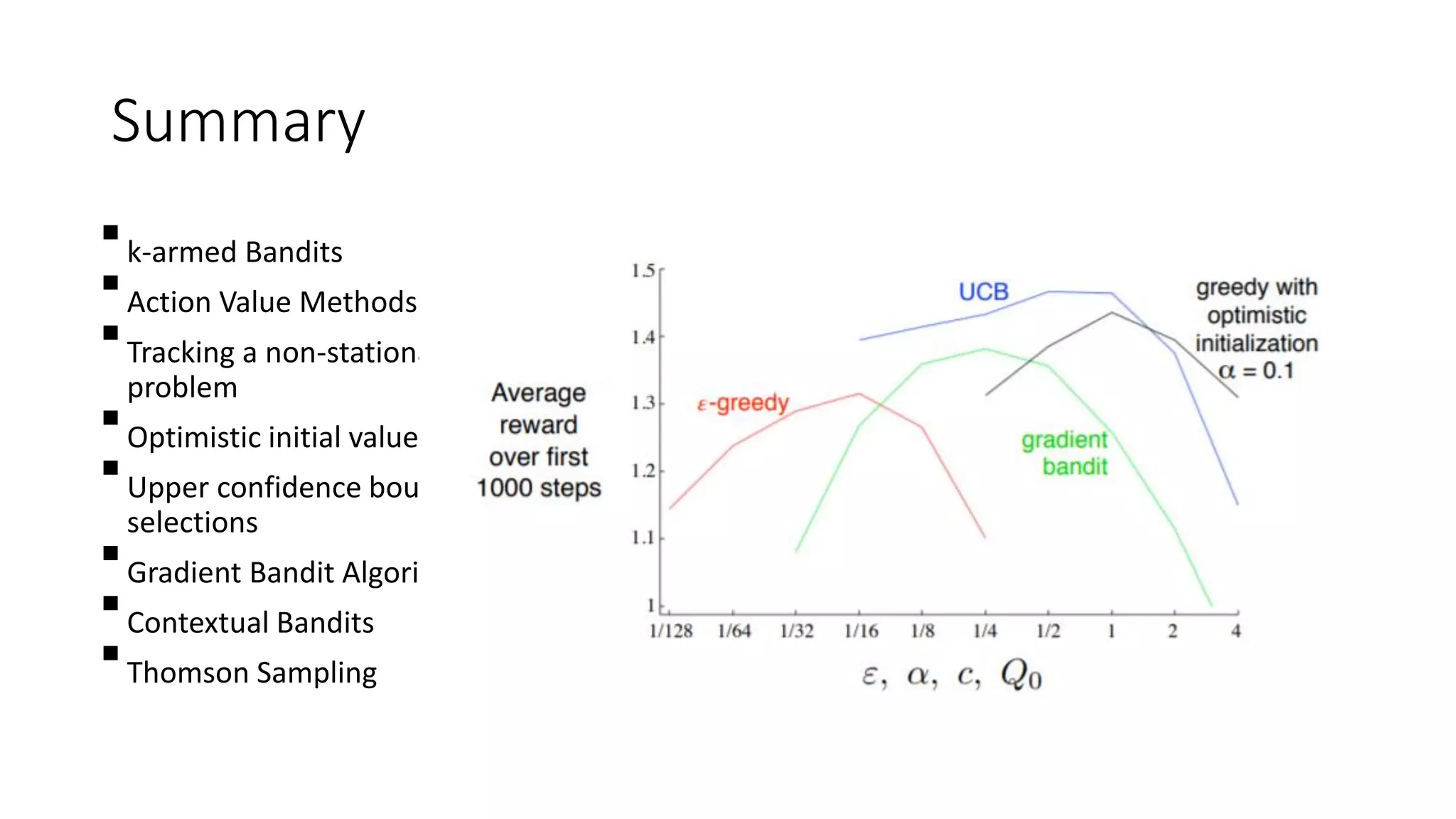

This document surveys algorithms for the multi-armed bandit problem: the k-armed bandit setting, action-value methods such as epsilon-greedy, tracking nonstationary problems, optimistic initial values, upper-confidence-bound (UCB) action selection, gradient bandit algorithms, contextual bandits, and Thompson sampling. In the k-armed bandit problem, an agent repeatedly chooses among k actions to maximize total reward over time without knowing the expected reward of each action. The methods outlined here balance exploring actions whose values are still uncertain against exploiting the action currently estimated to be best.
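
To make the exploration/exploitation trade-off concrete, here is a minimal sketch of an epsilon-greedy agent on a k-armed bandit. It is an illustration, not code from the slides: the function name `run_epsilon_greedy`, the Gaussian reward model with unit variance, the sample-average update, and the default parameter values are all assumptions.

```python
import random


def run_epsilon_greedy(true_means, epsilon=0.1, steps=1000, seed=0):
    """Simulate epsilon-greedy on a k-armed bandit (illustrative sketch).

    Assumption: pulling arm a yields a reward drawn from a Gaussian with
    mean true_means[a] and standard deviation 1.
    """
    rng = random.Random(seed)
    k = len(true_means)
    q = [0.0] * k   # estimated value of each arm
    n = [0] * k     # number of times each arm was pulled
    total_reward = 0.0

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: pick an arm uniformly at random.
            a = rng.randrange(k)
        else:
            # Exploit: pick the arm with the highest current estimate
            # (ties go to the lowest index).
            a = max(range(k), key=lambda i: q[i])

        reward = rng.gauss(true_means[a], 1.0)
        n[a] += 1
        # Incremental sample-average update: Q <- Q + (R - Q) / N.
        q[a] += (reward - q[a]) / n[a]
        total_reward += reward

    return q, n, total_reward


if __name__ == "__main__":
    estimates, counts, total = run_epsilon_greedy([0.0, 0.5, 5.0], steps=2000)
    print("estimates:", [round(v, 2) for v in estimates])
    print("pull counts:", counts)
```

With well-separated arm means, most pulls should end up on the best arm, while the `epsilon` fraction of random pulls keeps the estimates for the other arms from going stale. For a nonstationary problem, one common variation is to replace the `1 / n[a]` step size with a constant so that recent rewards weigh more.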