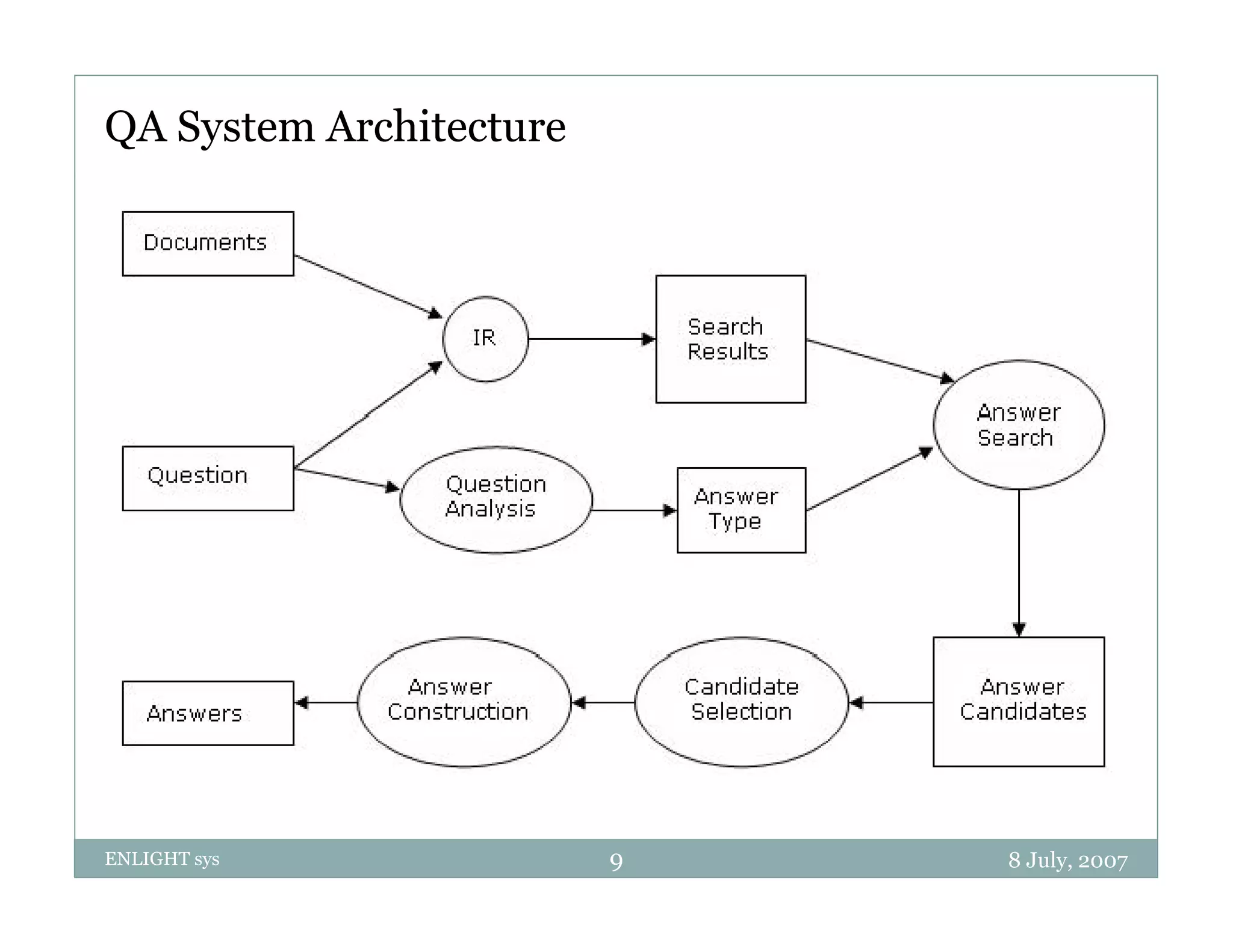

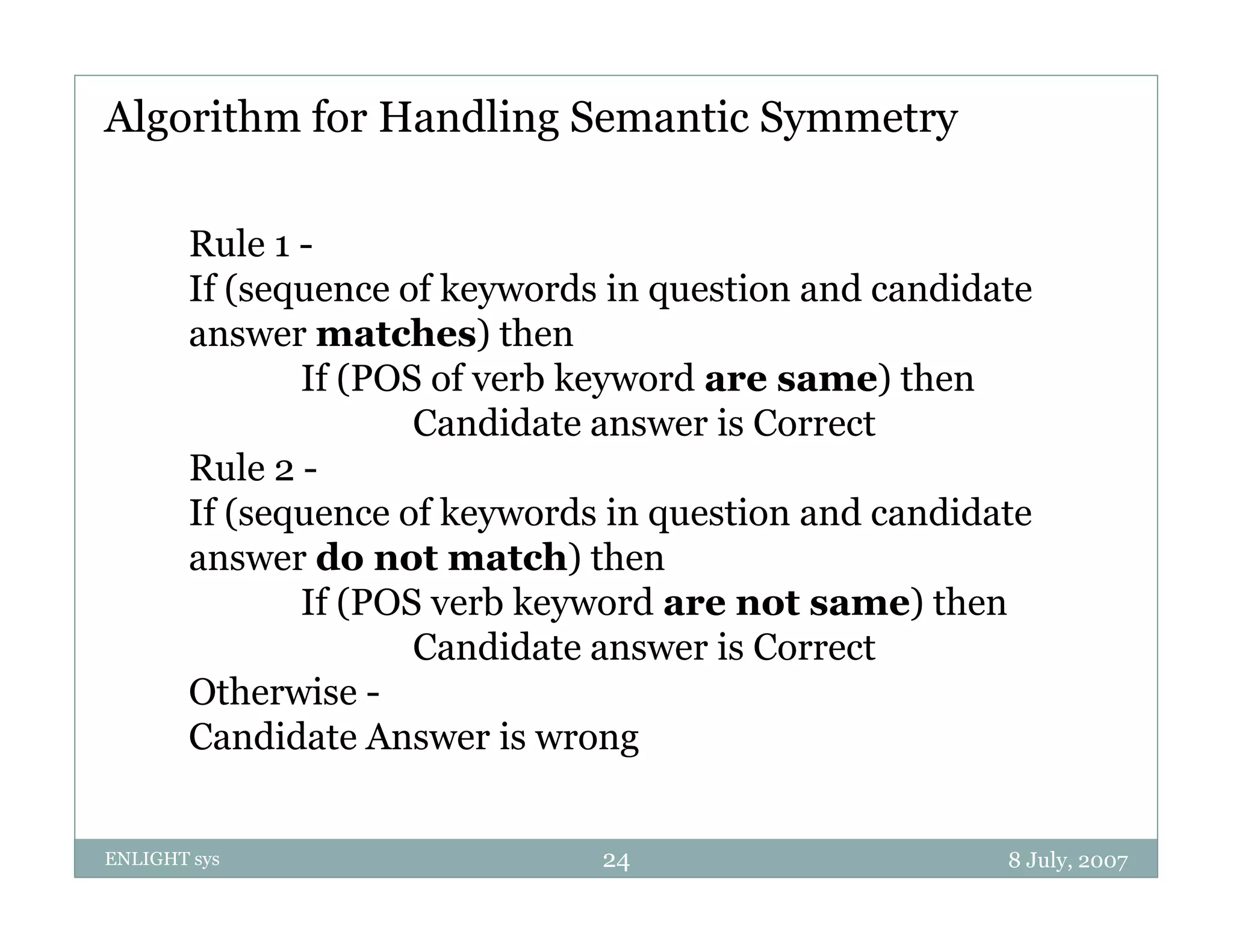

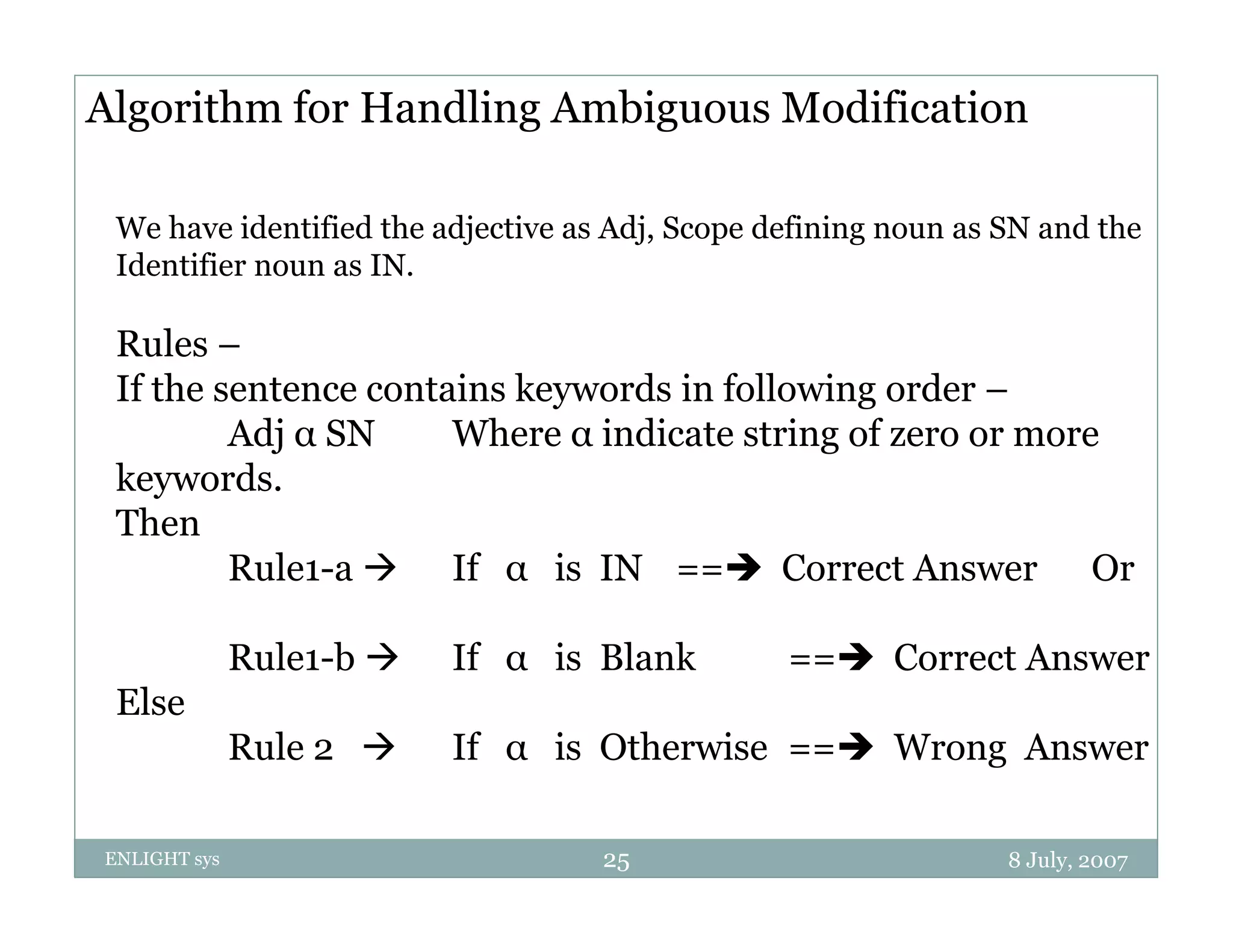

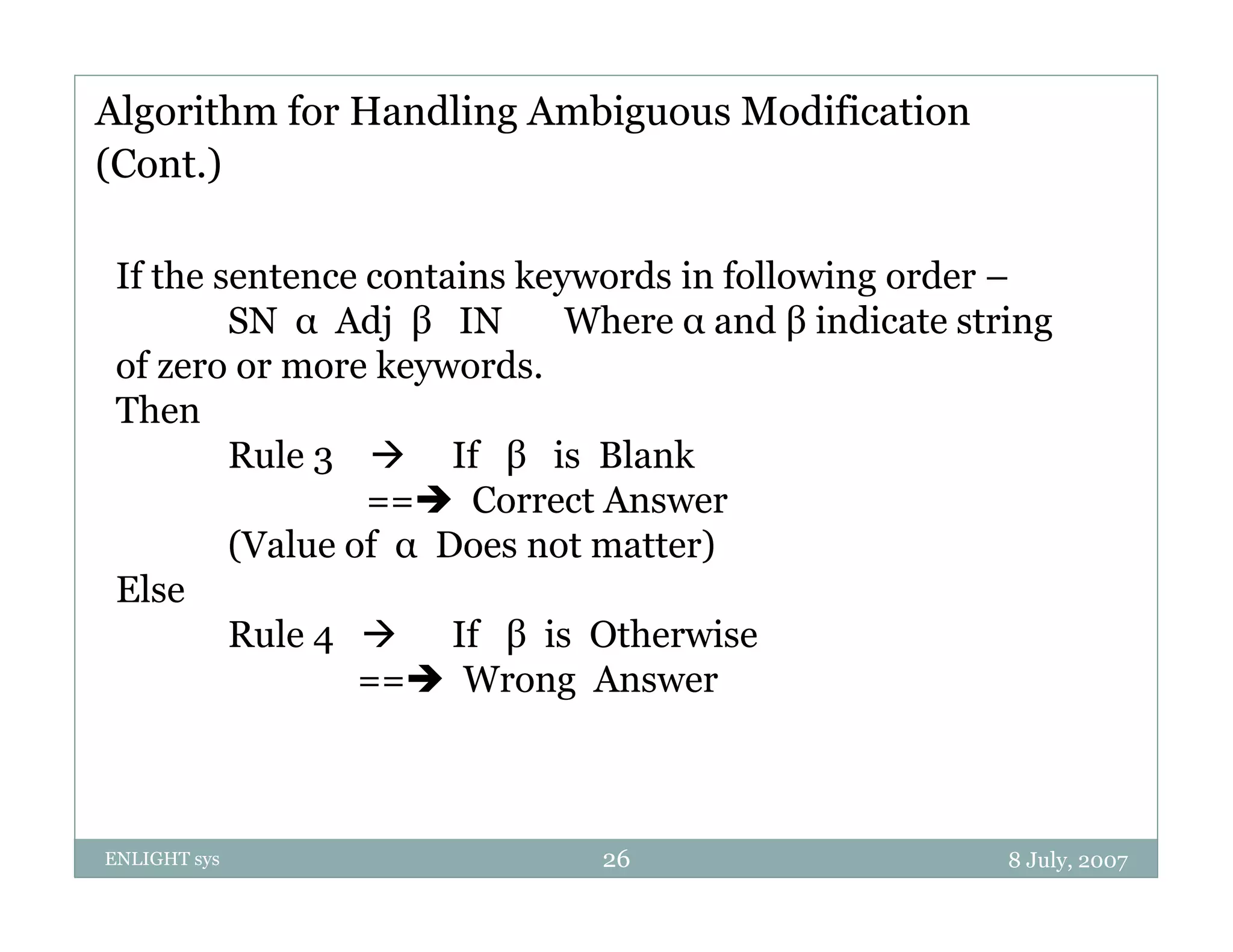

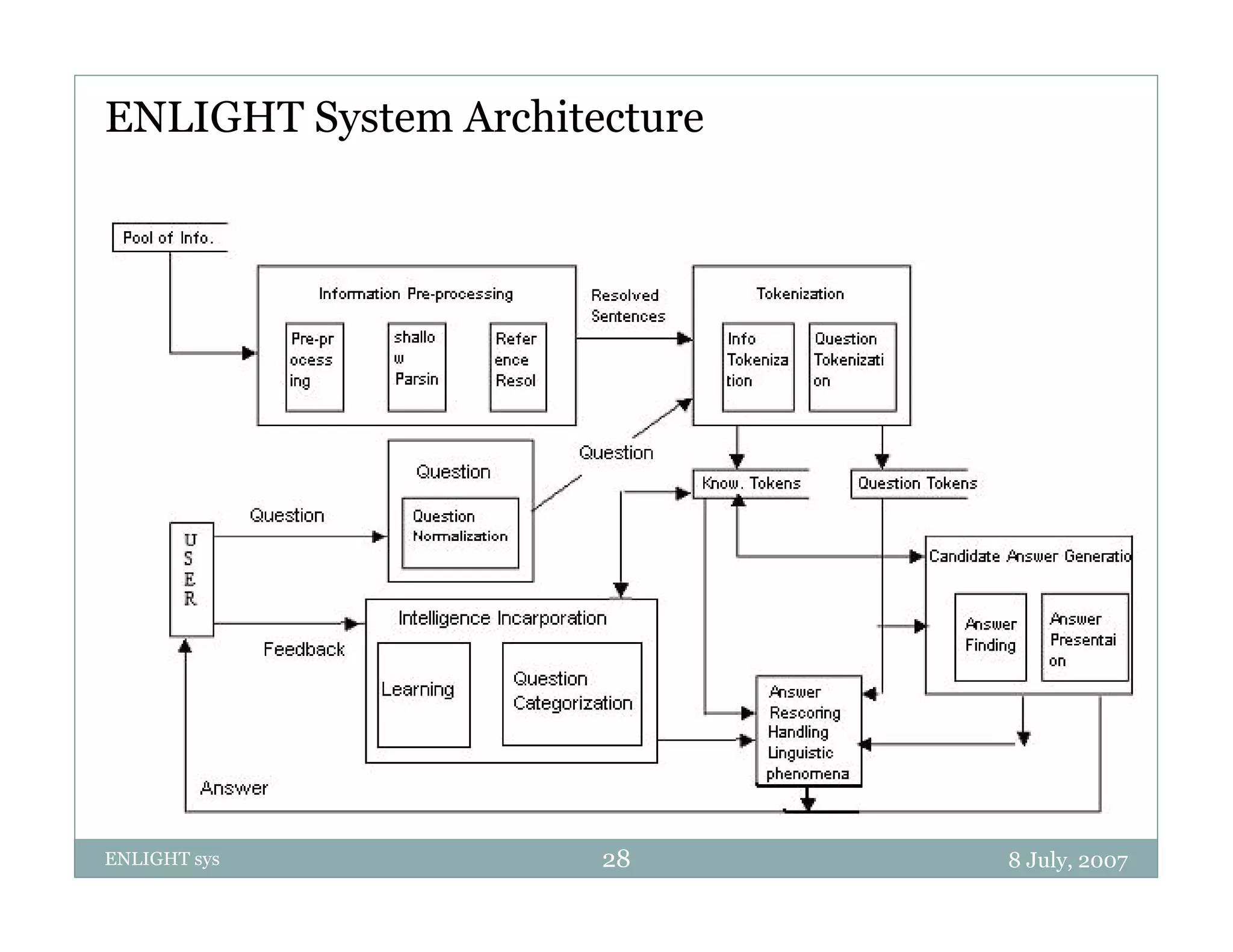

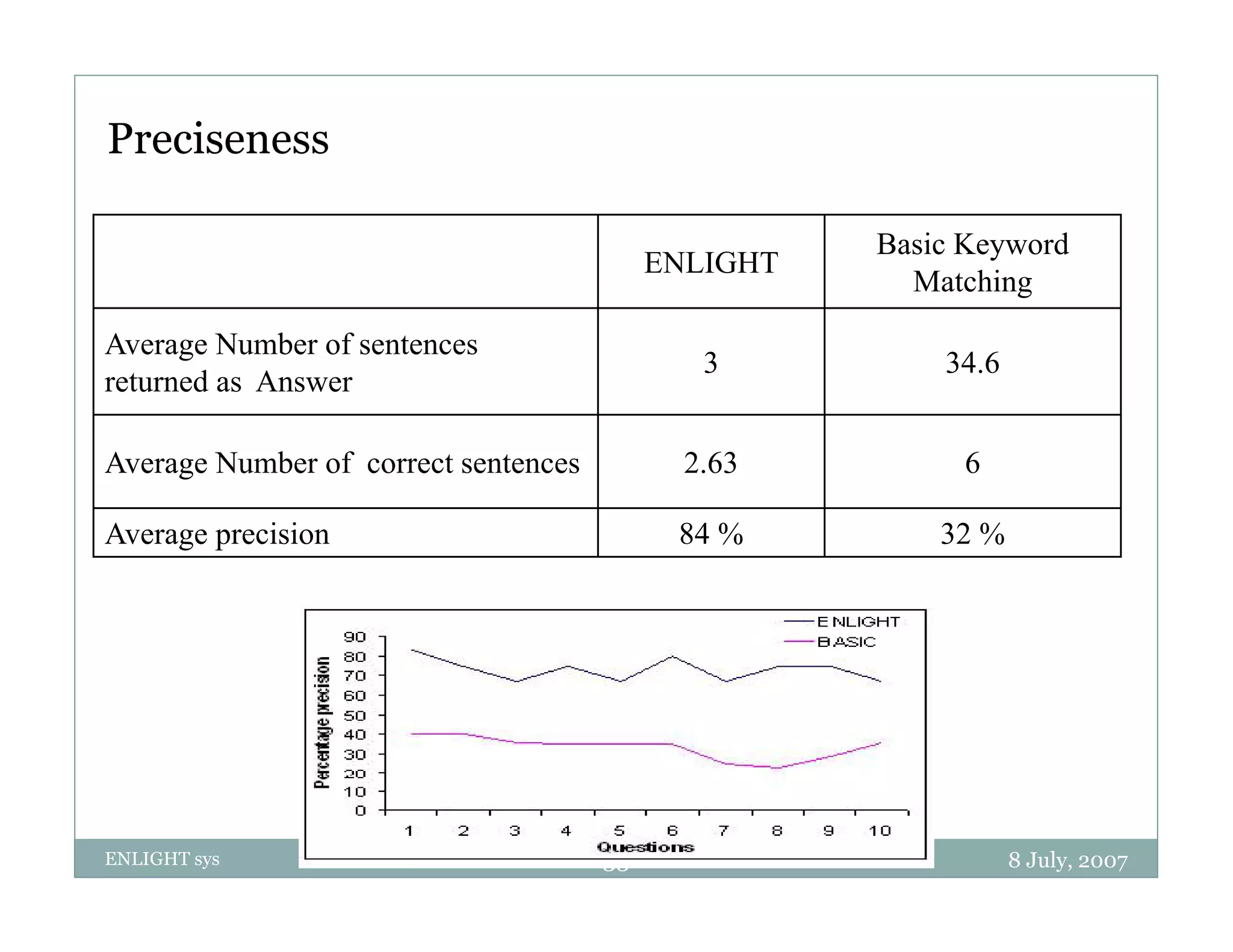

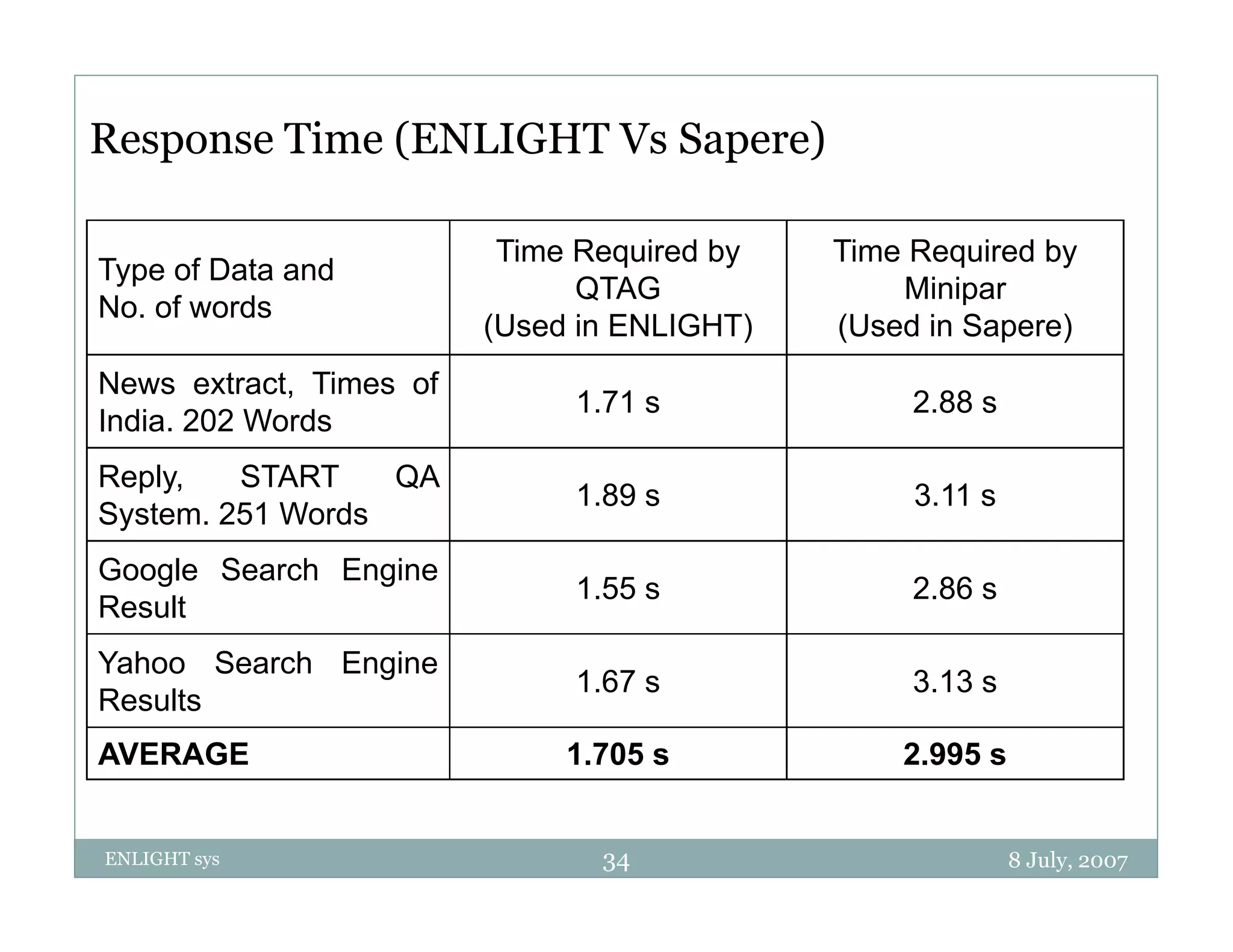

The document describes ENLIGHT, an intelligent natural language question answering system. It explains what question answering is and how it relates to information retrieval and information extraction. It then covers the general approach taken by question answering systems: question analysis, document retrieval and processing, and answer extraction and construction. It also discusses techniques that ENLIGHT uses, such as handling semantic symmetry and ambiguous modification, and incorporating learning. According to the document, ENLIGHT achieves better precision and faster response times than the other systems it is compared with.
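To make the general pipeline concrete, here is a minimal sketch of the stages named above: question analysis, document retrieval, document processing and answer extraction. This is a generic keyword-overlap baseline written for illustration. It is not ENLIGHT's implementation. The stopword list, the two answer-type patterns (`PERSON`, `DATE`) and all function names are hypothetical assumptions.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical stopword list; a real system would use a much fuller one.
STOPWORDS = {"the", "a", "an", "of", "in", "on", "is", "was", "did", "do",
             "does", "who", "what", "when", "where", "which", "how", "to", "by"}

# Hypothetical surface patterns for two expected answer types.
ANSWER_PATTERNS = {
    "PERSON": re.compile(r"\b[A-Z][a-z]+(?:\s+[A-Z][a-z]+)+\b"),  # capitalized name run
    "DATE": re.compile(r"\b(?:1\d{3}|20\d{2})\b"),                 # four-digit year
}


def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


@dataclass
class AnalyzedQuestion:
    answer_type: str      # expected answer type: "PERSON", "DATE" or "OTHER"
    keywords: List[str]   # content words used for retrieval and ranking


def analyze_question(question: str) -> AnalyzedQuestion:
    """Question analysis: map the wh-word to an answer type, keep content words."""
    tokens = tokenize(question)
    wh_to_type = {"who": "PERSON", "when": "DATE"}
    answer_type = wh_to_type.get(tokens[0], "OTHER") if tokens else "OTHER"
    keywords = [t for t in tokens if t not in STOPWORDS]
    return AnalyzedQuestion(answer_type, keywords)


def overlap(keywords: List[str], text: str) -> int:
    """Number of question keywords that occur in the text."""
    words = set(tokenize(text))
    return sum(1 for kw in keywords if kw in words)


def retrieve(keywords: List[str], documents: List[str], k: int = 2) -> List[str]:
    """Document retrieval: keep up to k documents with the highest keyword overlap."""
    ranked = sorted(documents, key=lambda d: overlap(keywords, d), reverse=True)
    return [d for d in ranked[:k] if overlap(keywords, d) > 0]


def extract_answer(q: AnalyzedQuestion, documents: List[str]) -> Optional[str]:
    """Document processing and answer extraction: split into sentences, rank them
    by keyword overlap, then return the first span matching the answer type."""
    sentences = [s for d in documents for s in re.split(r"(?<=[.!?])\s+", d) if s]
    sentences.sort(key=lambda s: overlap(q.keywords, s), reverse=True)
    pattern = ANSWER_PATTERNS.get(q.answer_type)
    for sentence in sentences:
        if overlap(q.keywords, sentence) == 0:
            break                    # remaining sentences are irrelevant
        if pattern is None:
            return sentence          # untyped question: return the best sentence
        match = pattern.search(sentence)
        if match:
            return match.group(0)
    return None


def answer_question(question: str, documents: List[str]) -> Optional[str]:
    """Full pipeline: analysis, then retrieval, then extraction."""
    q = analyze_question(question)
    return extract_answer(q, retrieve(q.keywords, documents))


docs = [
    "Alexander Graham Bell invented the telephone in 1876. He was born in Edinburgh.",
    "The Eiffel Tower was completed in 1889 in Paris.",
    "Cats are popular pets.",
]
print(answer_question("Who invented the telephone?", docs))           # Alexander Graham Bell
print(answer_question("When was the Eiffel Tower completed?", docs))  # 1889
```

Because this sketch ranks text by bag-of-words overlap, "The bird ate the snake" and "The snake ate the bird" would score identically for "What did the bird eat?". This is an instance of the semantic-symmetry problem the document lists among the issues ENLIGHT addresses. Resolving it requires looking at syntactic relations, not just shared words.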