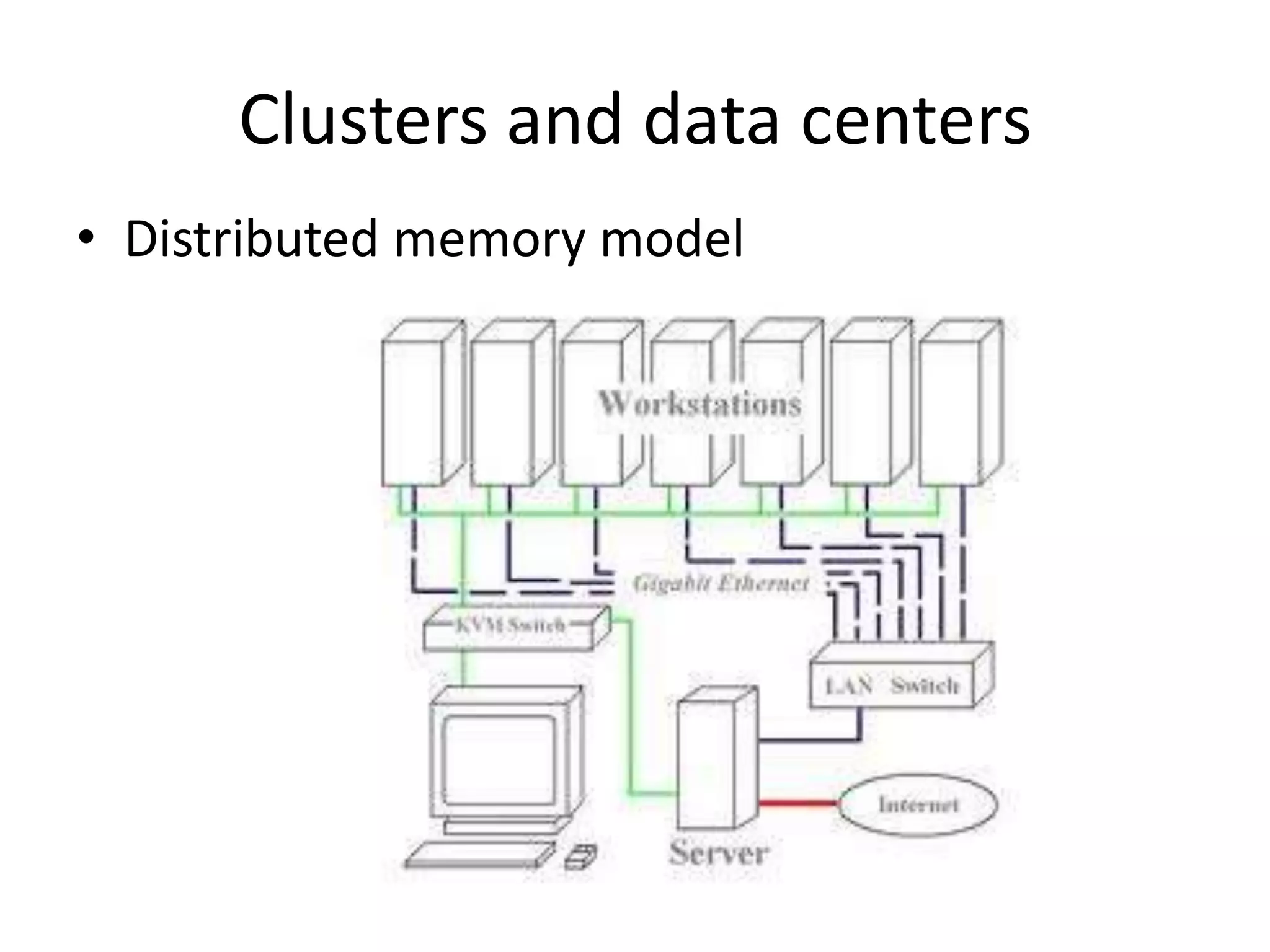

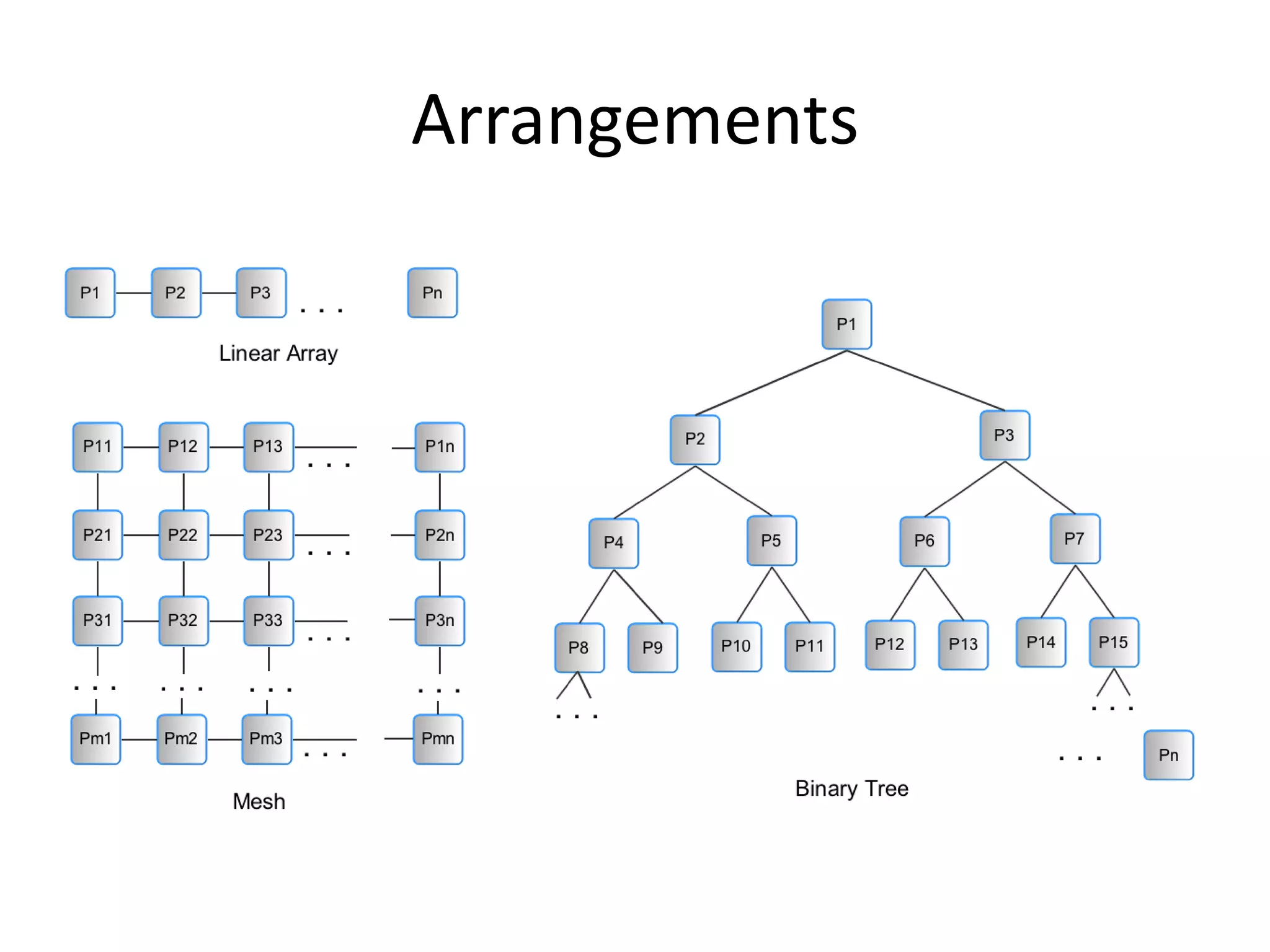

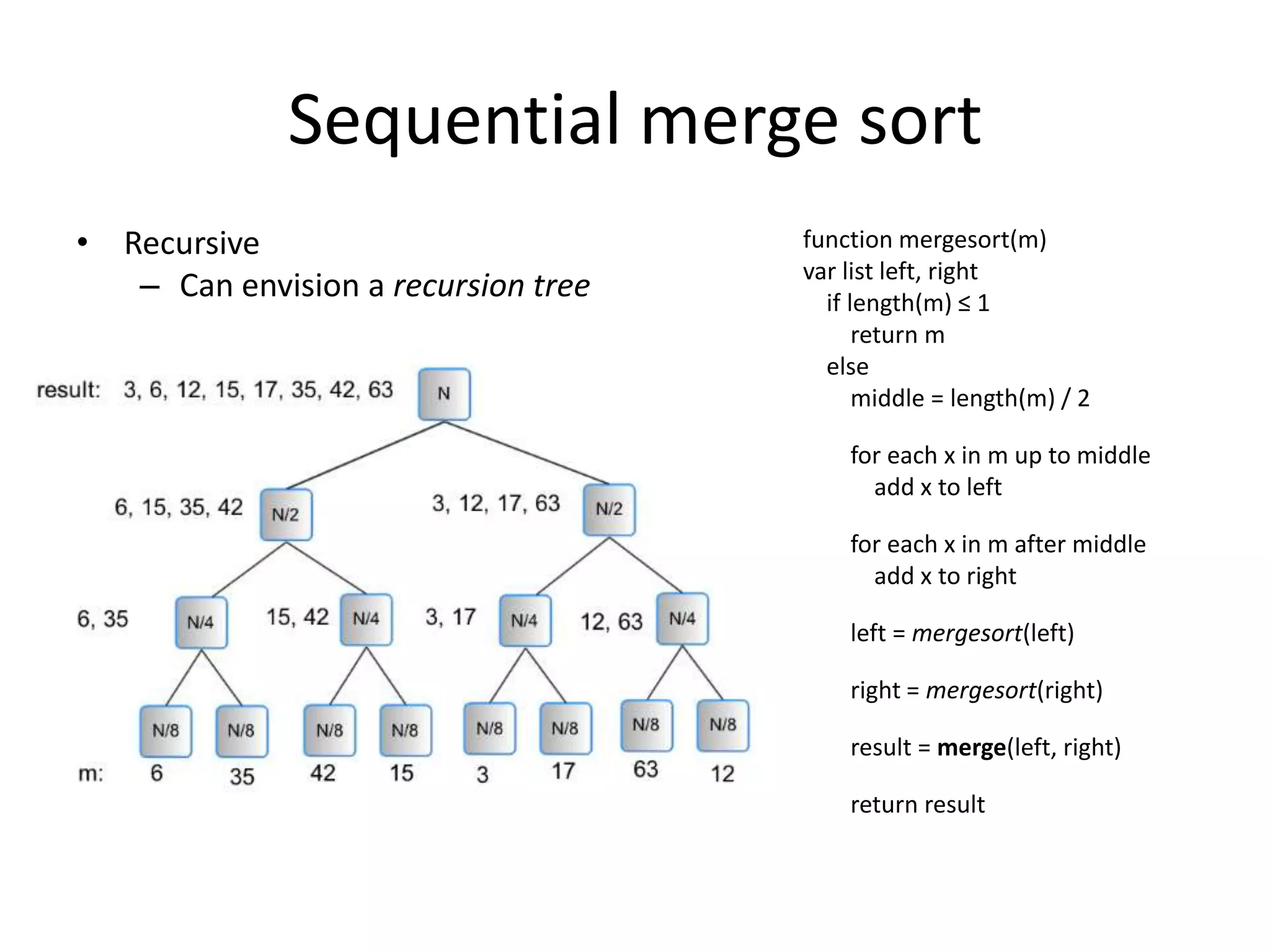

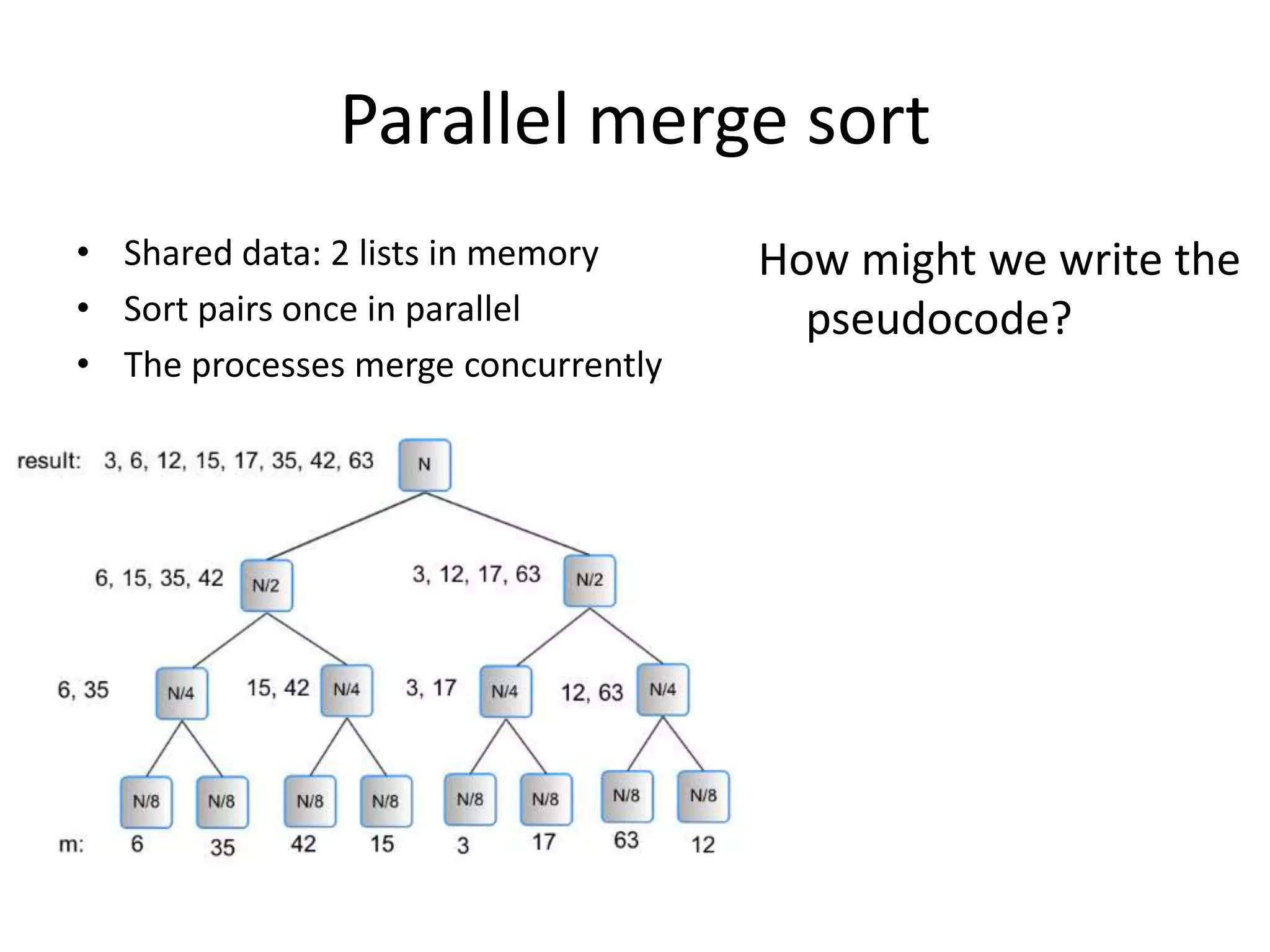

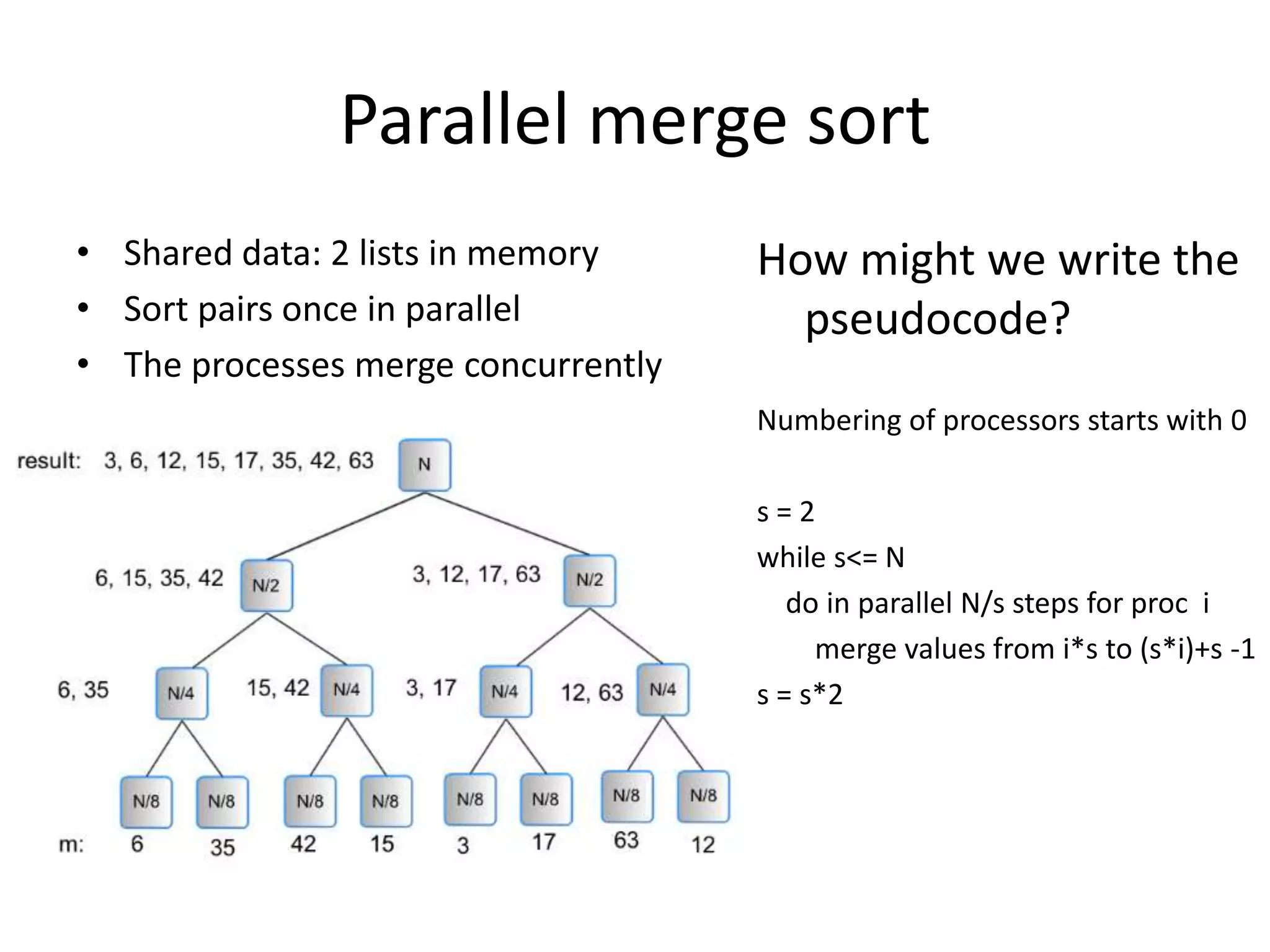

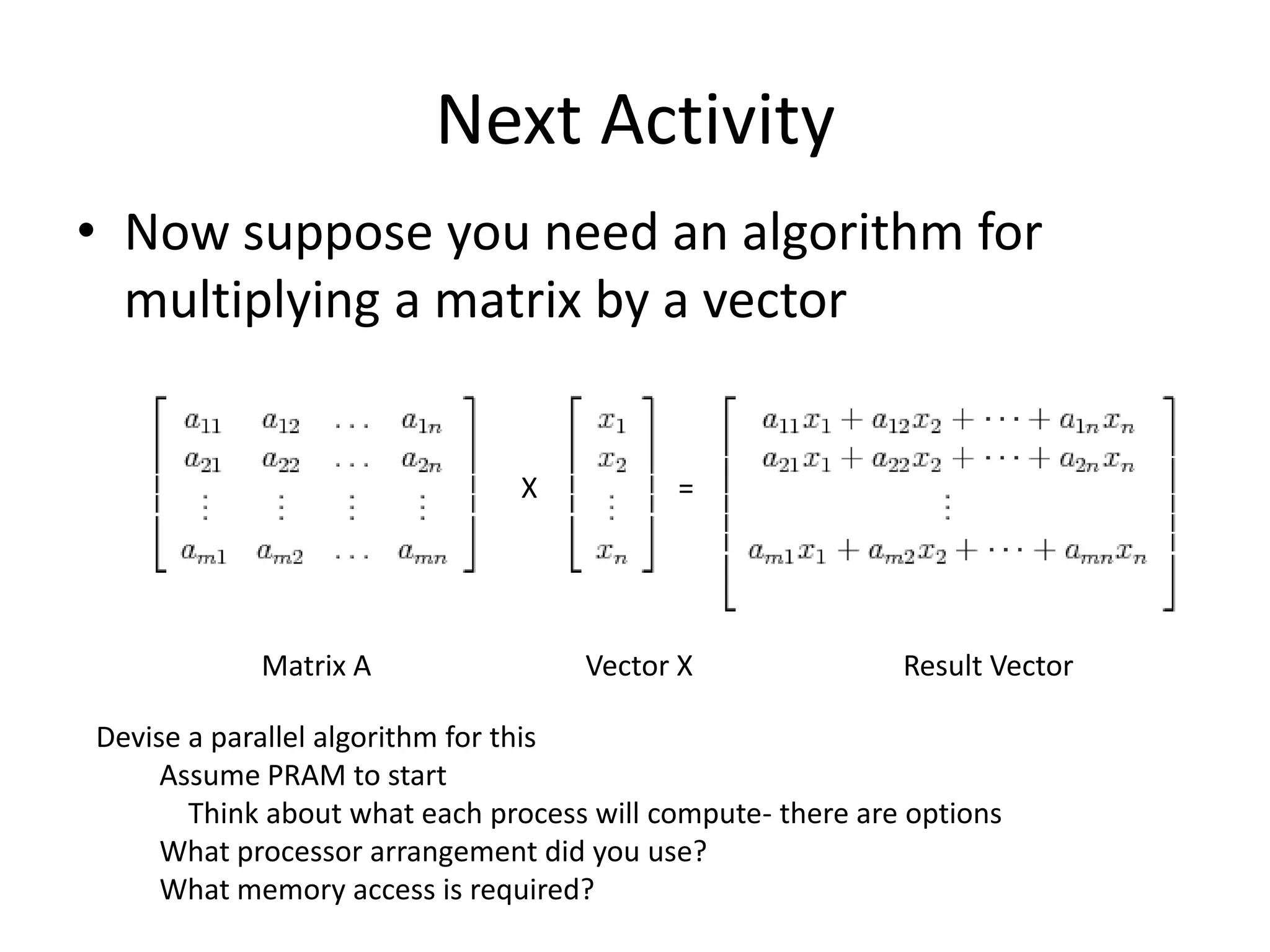

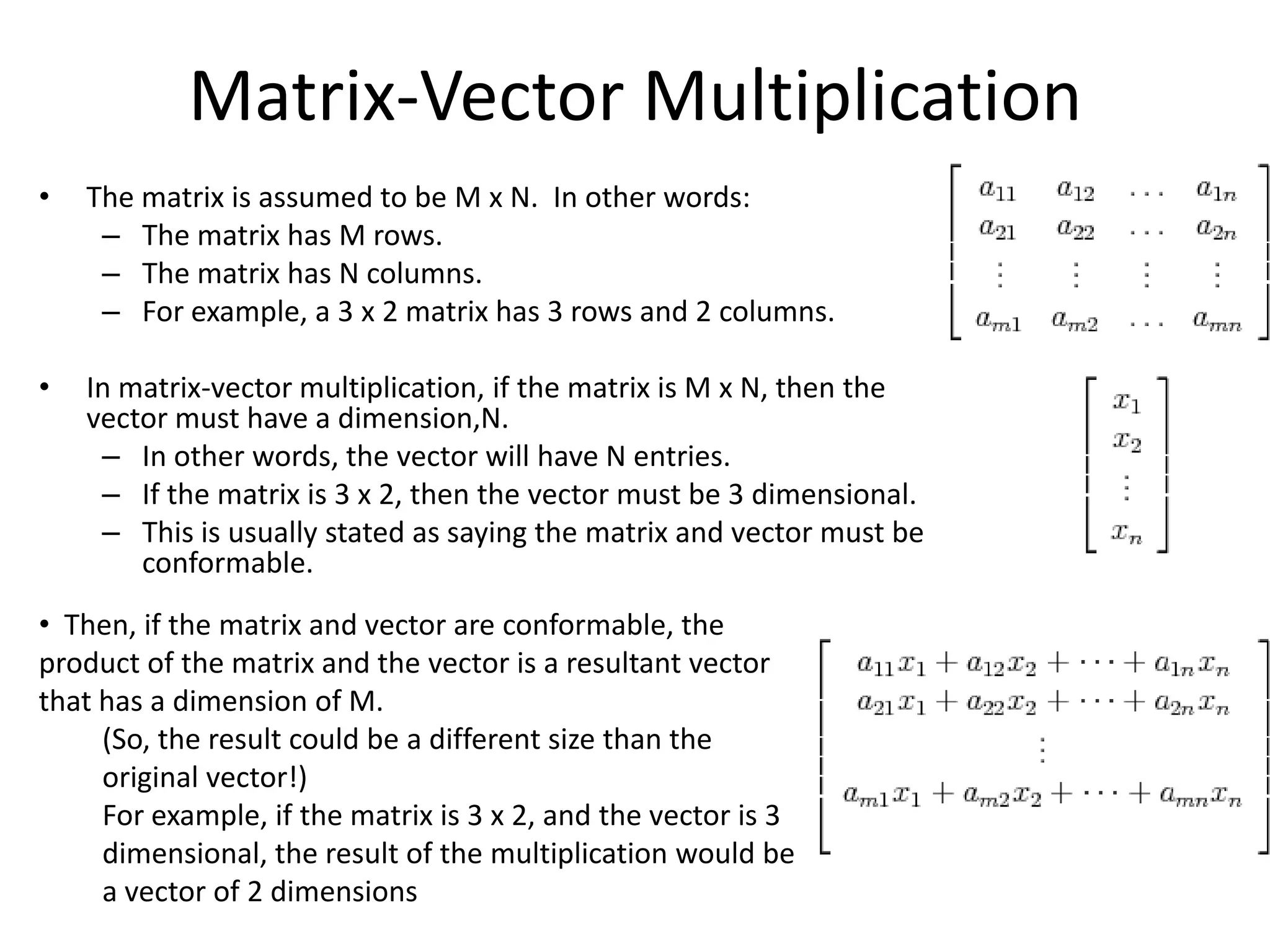

This document covers parallel algorithms and parallel computing. It opens by arguing that designing parallel algorithms requires understanding the hardware they will run on, then gives a brief history of parallel processing, noting that multicore processors have become common since 2005. It introduces the PRAM (parallel random-access machine) model of parallel computation and explains why this theoretical model is useful for designing parallel algorithms even if it does not reflect actual hardware. It closes with examples of parallel sorting and summing algorithms designed on this model.
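To make the PRAM summing idea concrete, here is a minimal sketch of a tree reduction, simulated sequentially in Python. It is not taken from the document: the function name `pram_sum`, the in-place pairing scheme, and the round counter are illustrative assumptions. It shows the general shape such an algorithm usually takes, with n values combined in about log2(n) synchronous steps.

```python
def pram_sum(values):
    """Simulate a PRAM-style tree reduction (hypothetical helper, not from the source).

    Returns (total, rounds), where rounds counts the synchronous parallel steps.
    """
    data = list(values)
    n = len(data)
    if n == 0:
        return 0, 0

    rounds = 0
    stride = 1
    while stride < n:
        # One synchronous step: on a PRAM, each iteration of this inner loop
        # would be run by a separate processor. The pairs are disjoint, so no
        # two processors touch the same cell within a step.
        for i in range(0, n - stride, 2 * stride):
            data[i] += data[i + stride]
        stride *= 2
        rounds += 1

    return data[0], rounds


if __name__ == "__main__":
    total, rounds = pram_sum(range(1, 9))
    print(total, rounds)
```

With eight inputs the simulation finishes in three rounds, compared with seven additions done one after another in a sequential loop. The total work is the same; only the number of dependent steps shrinks, and that count is what the PRAM model measures.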