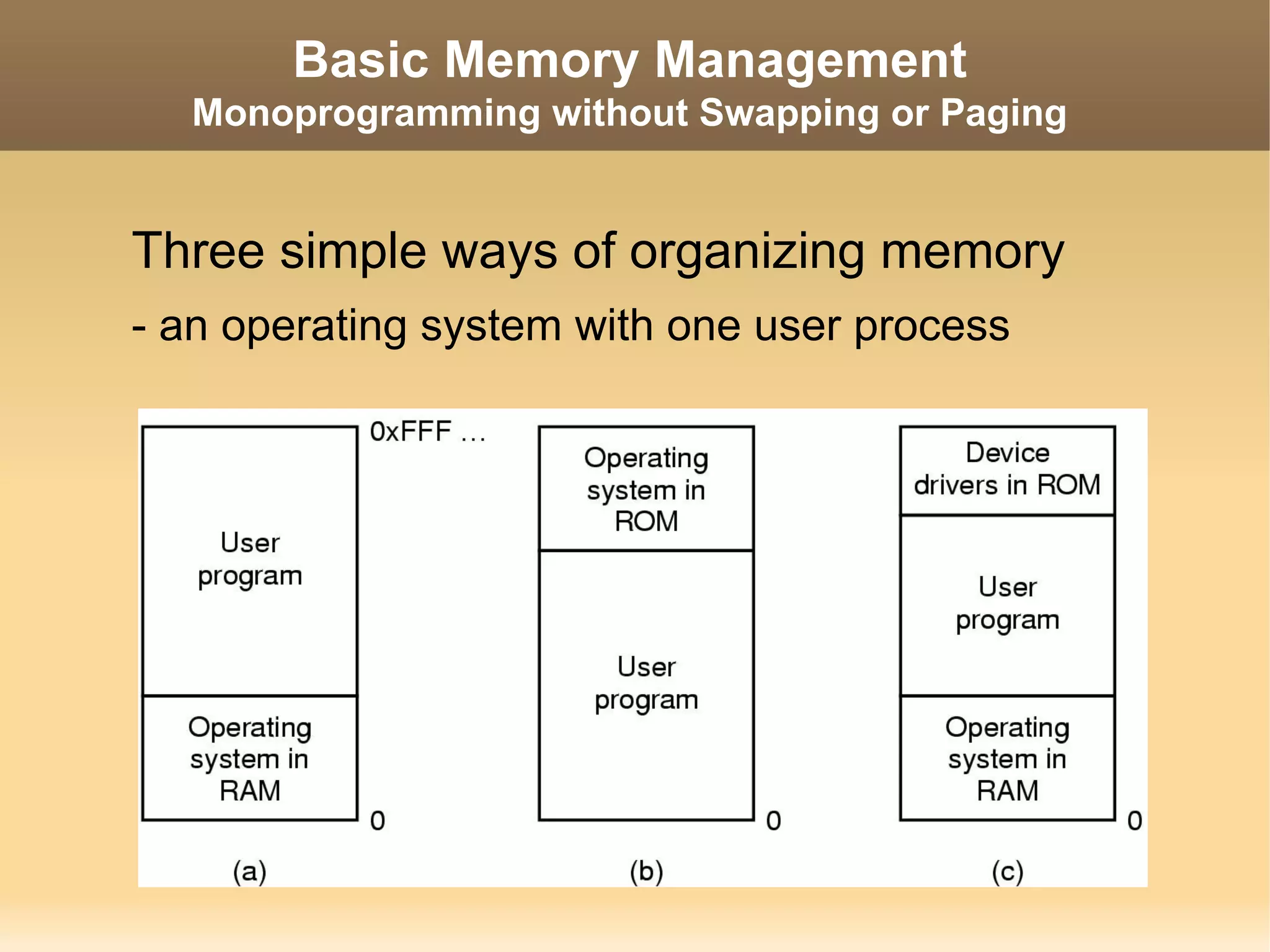

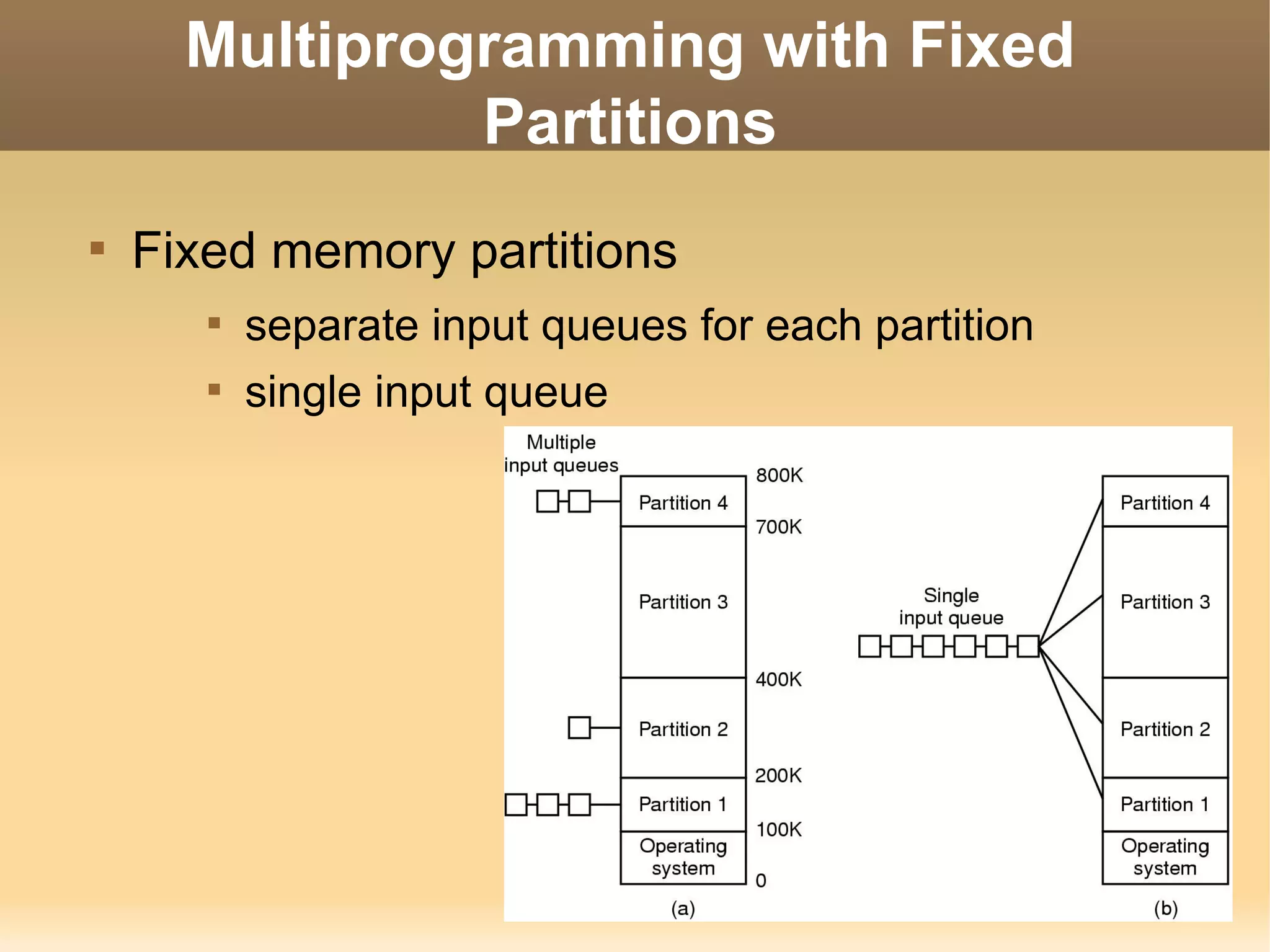

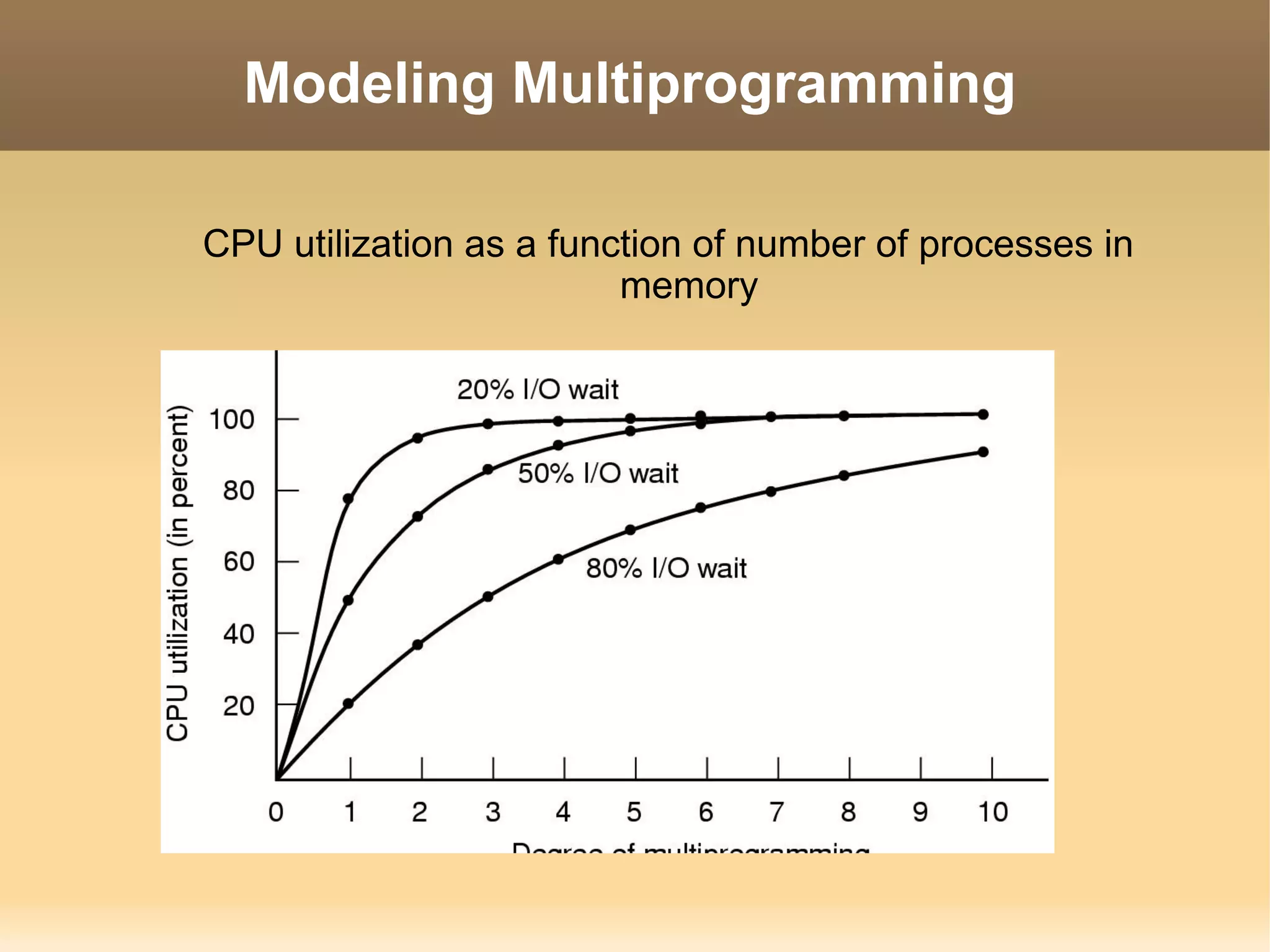

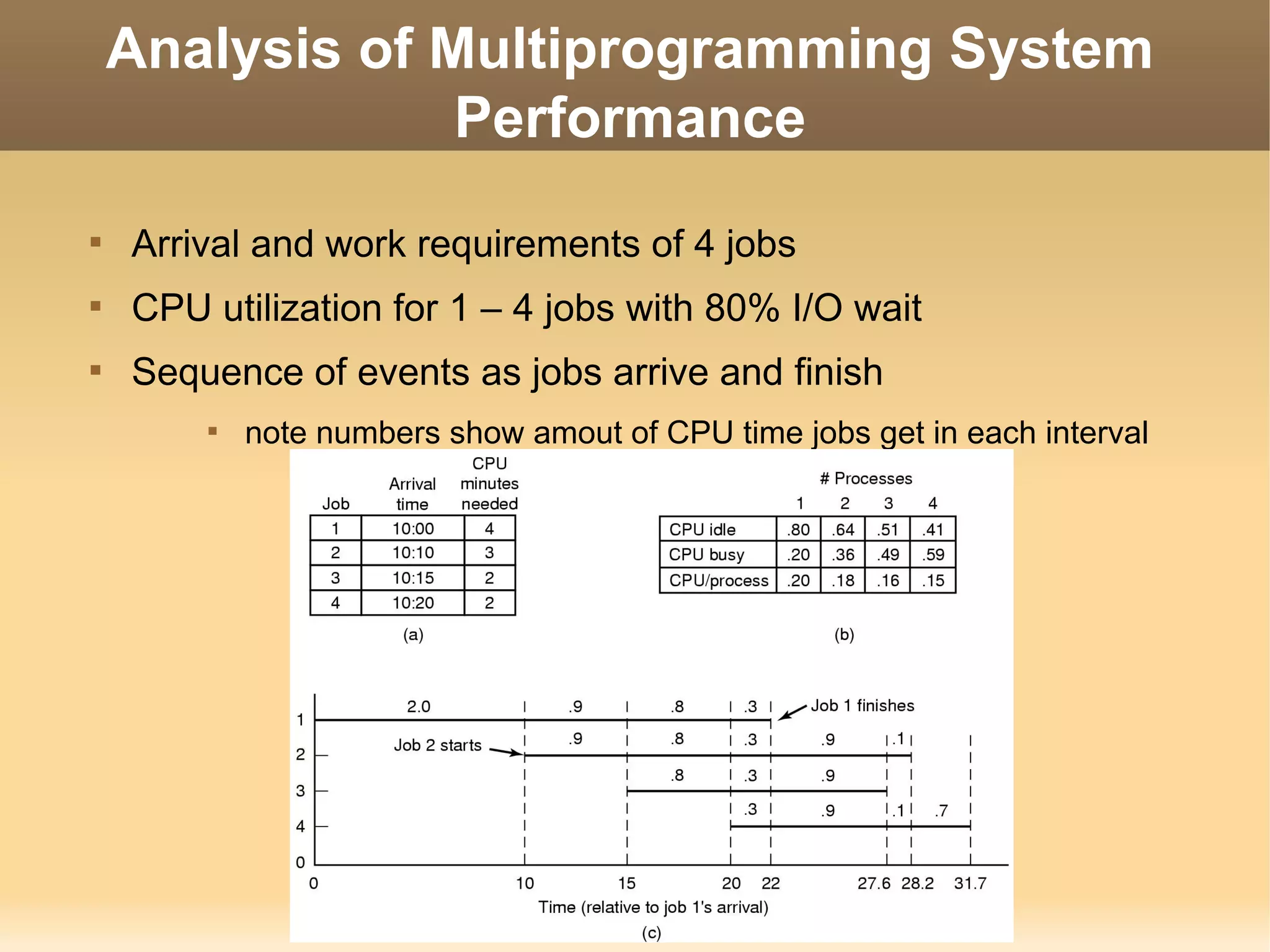

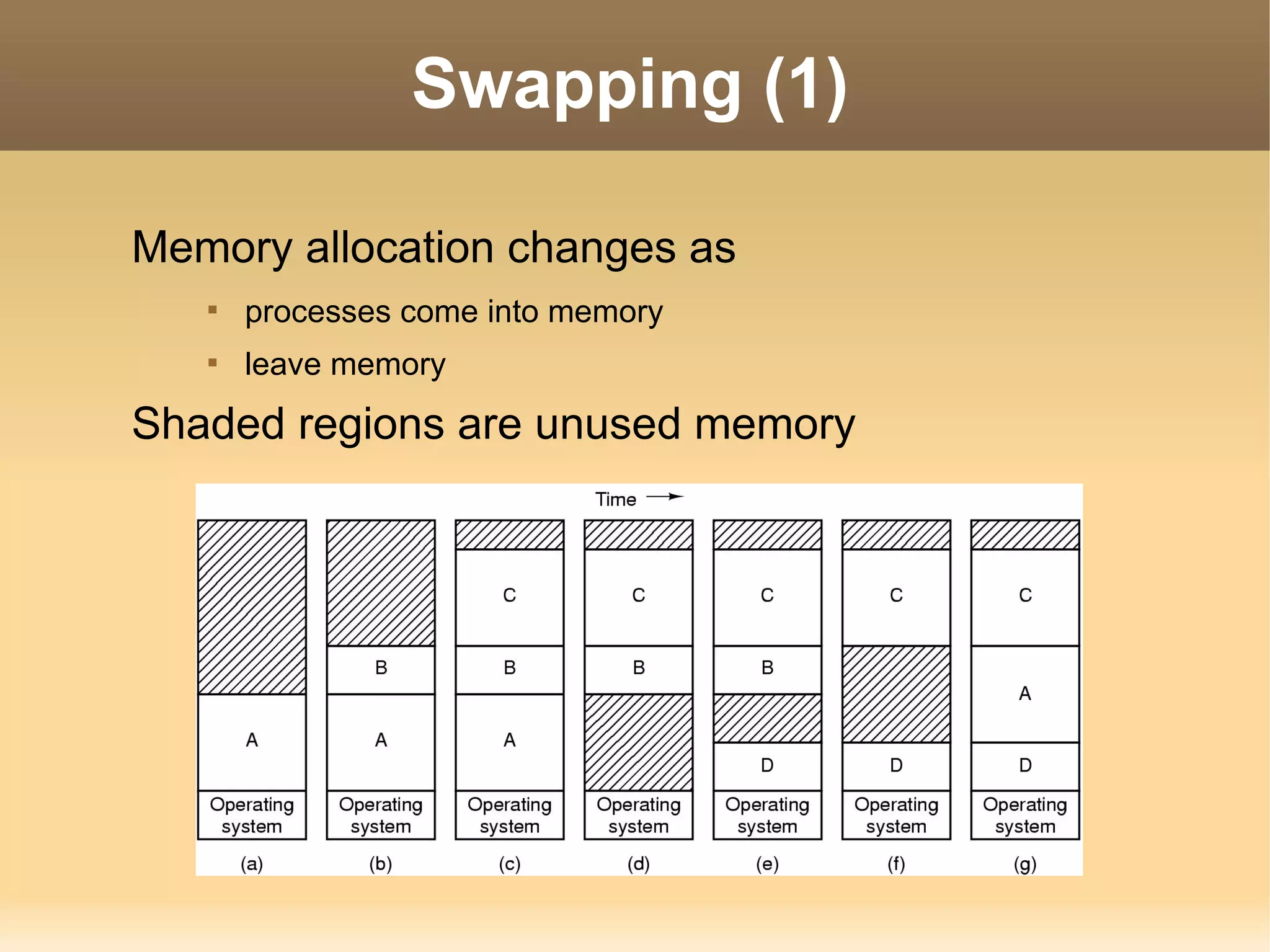

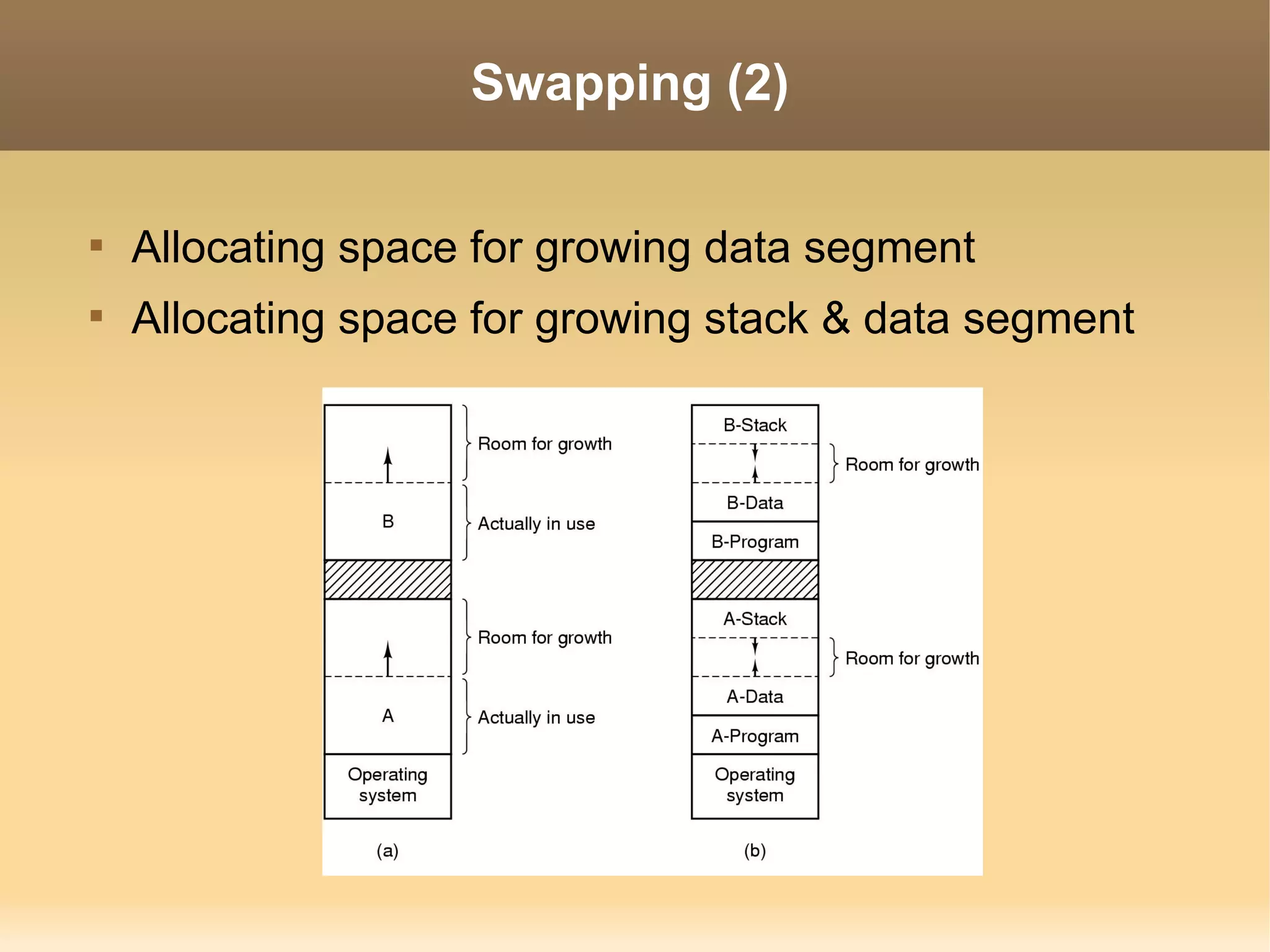

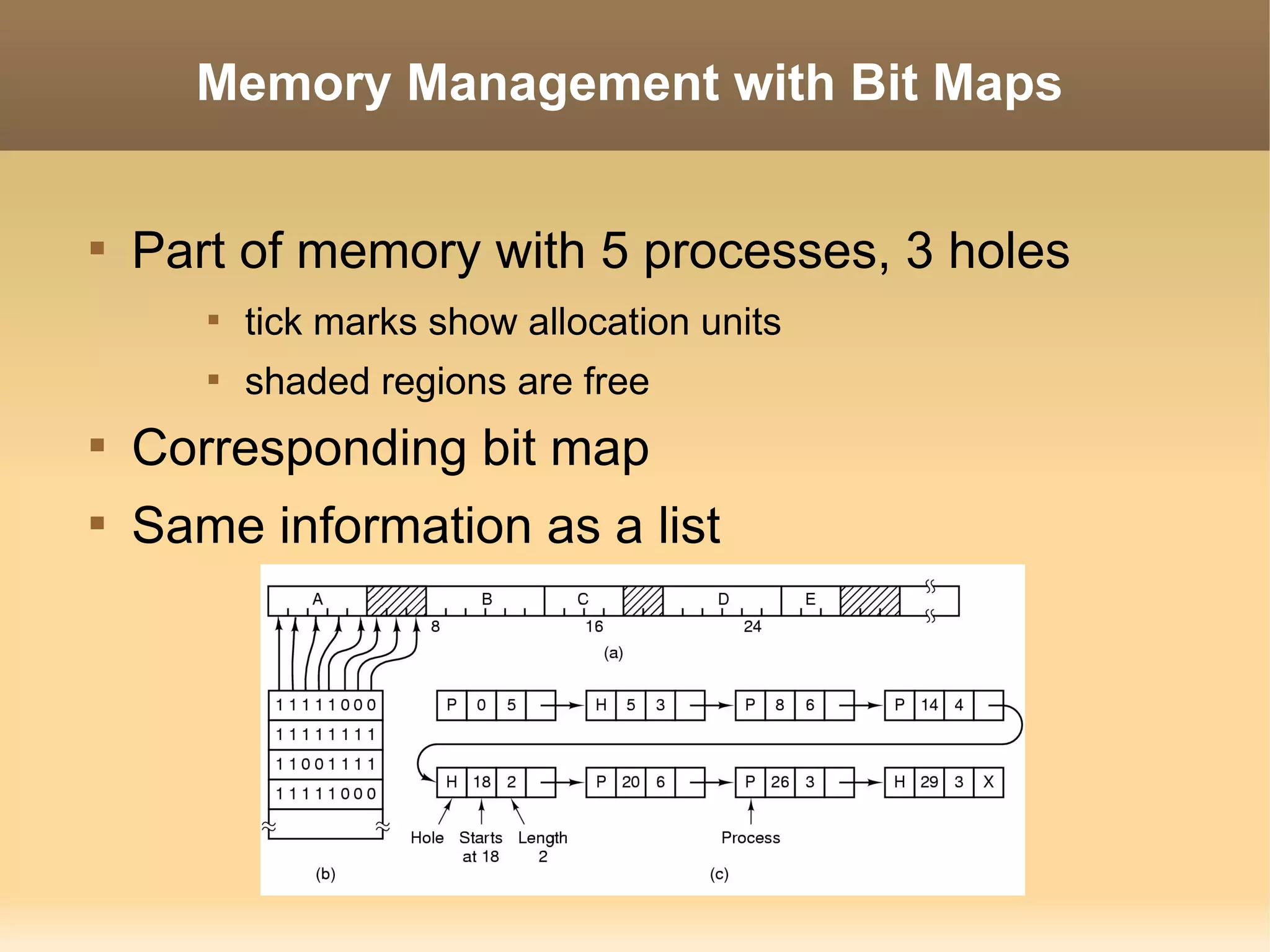

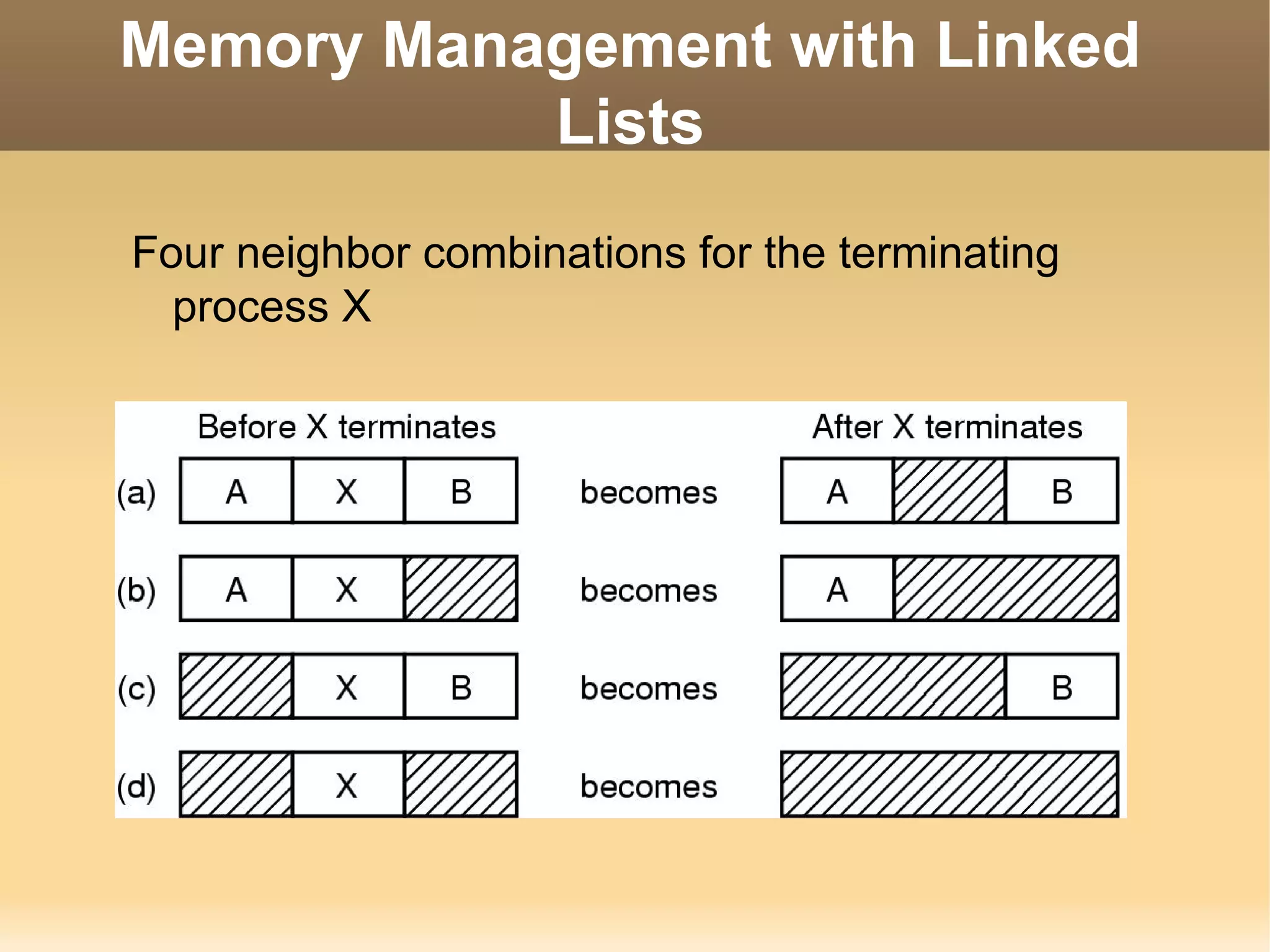

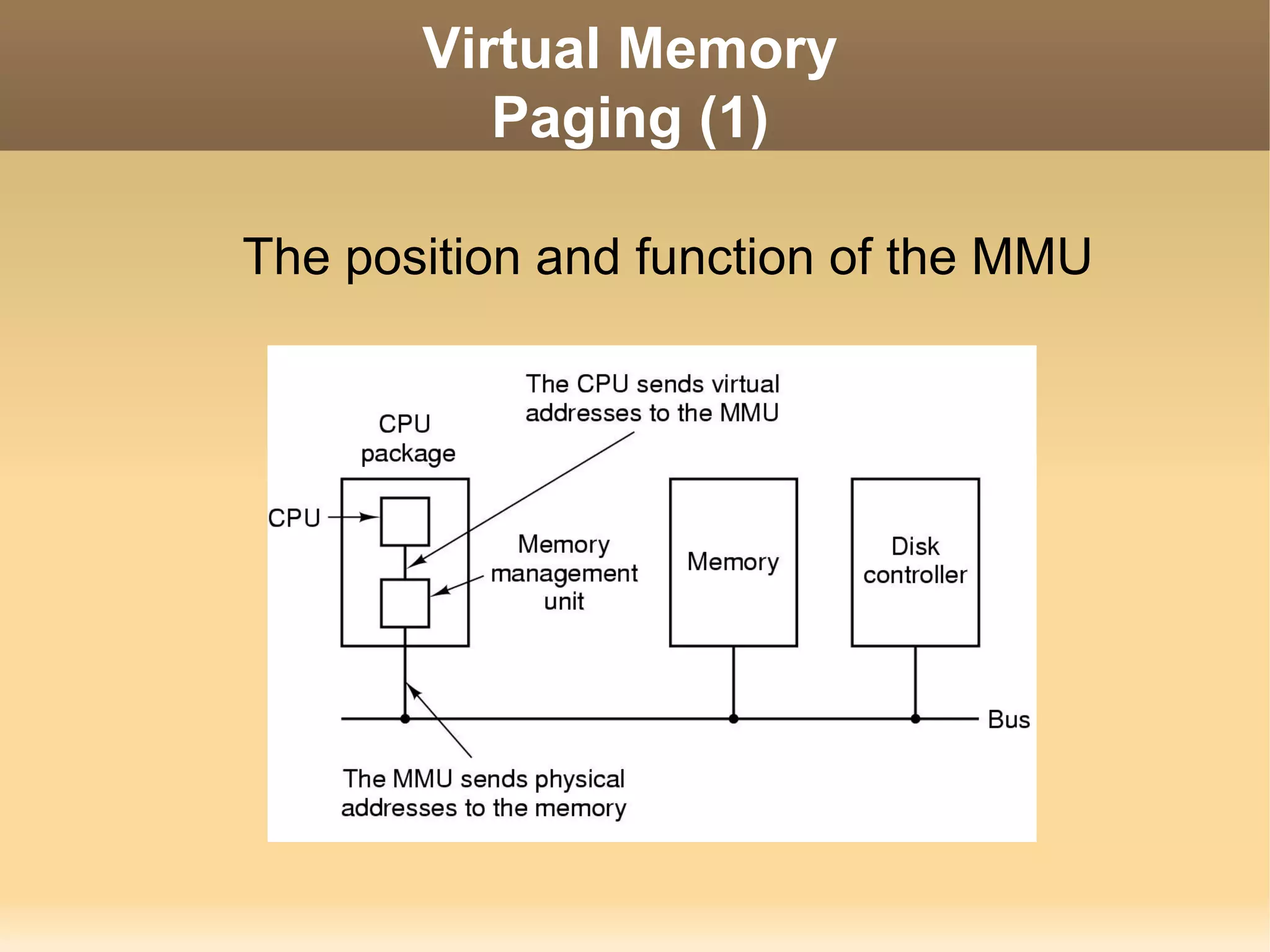

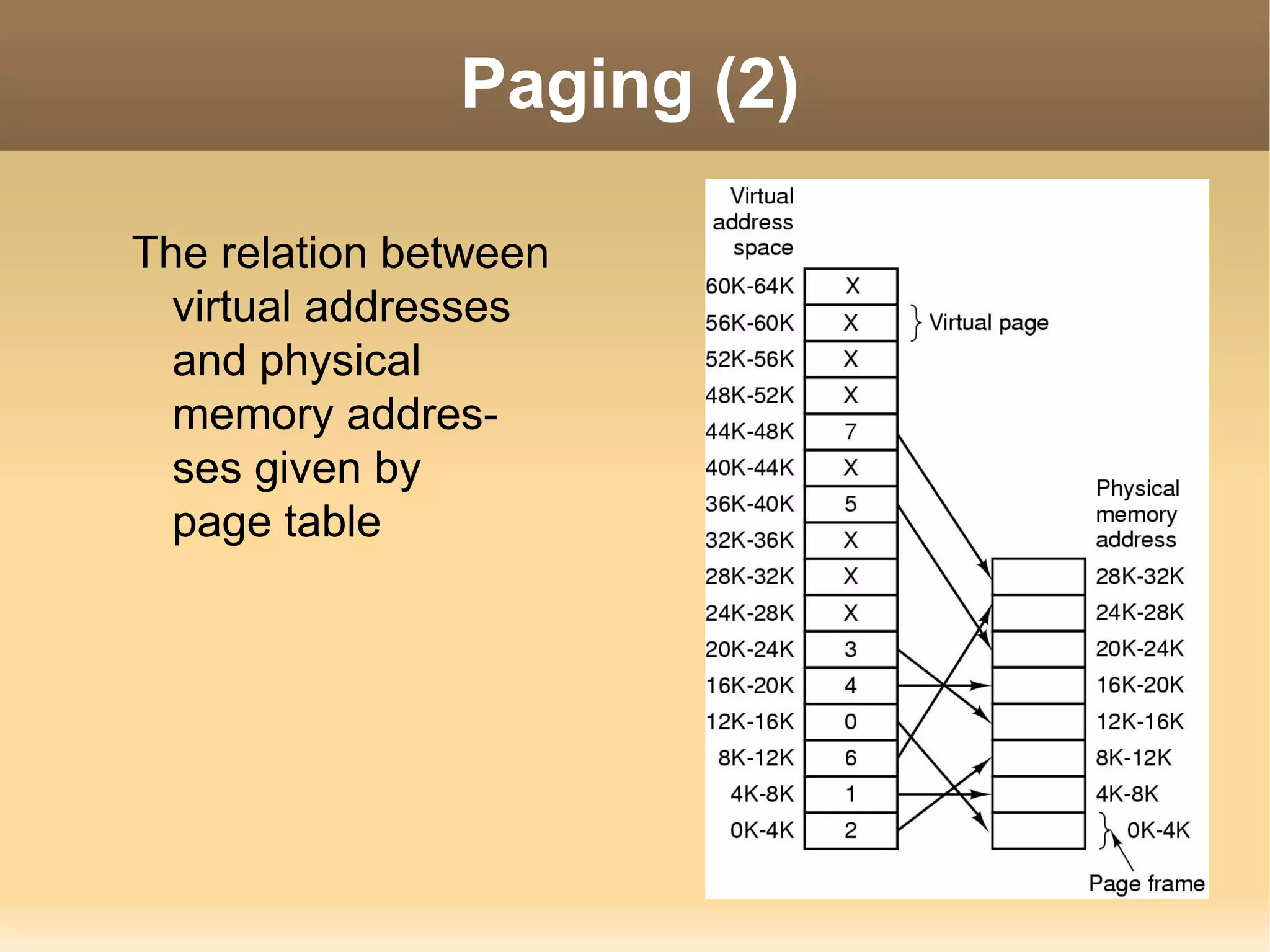

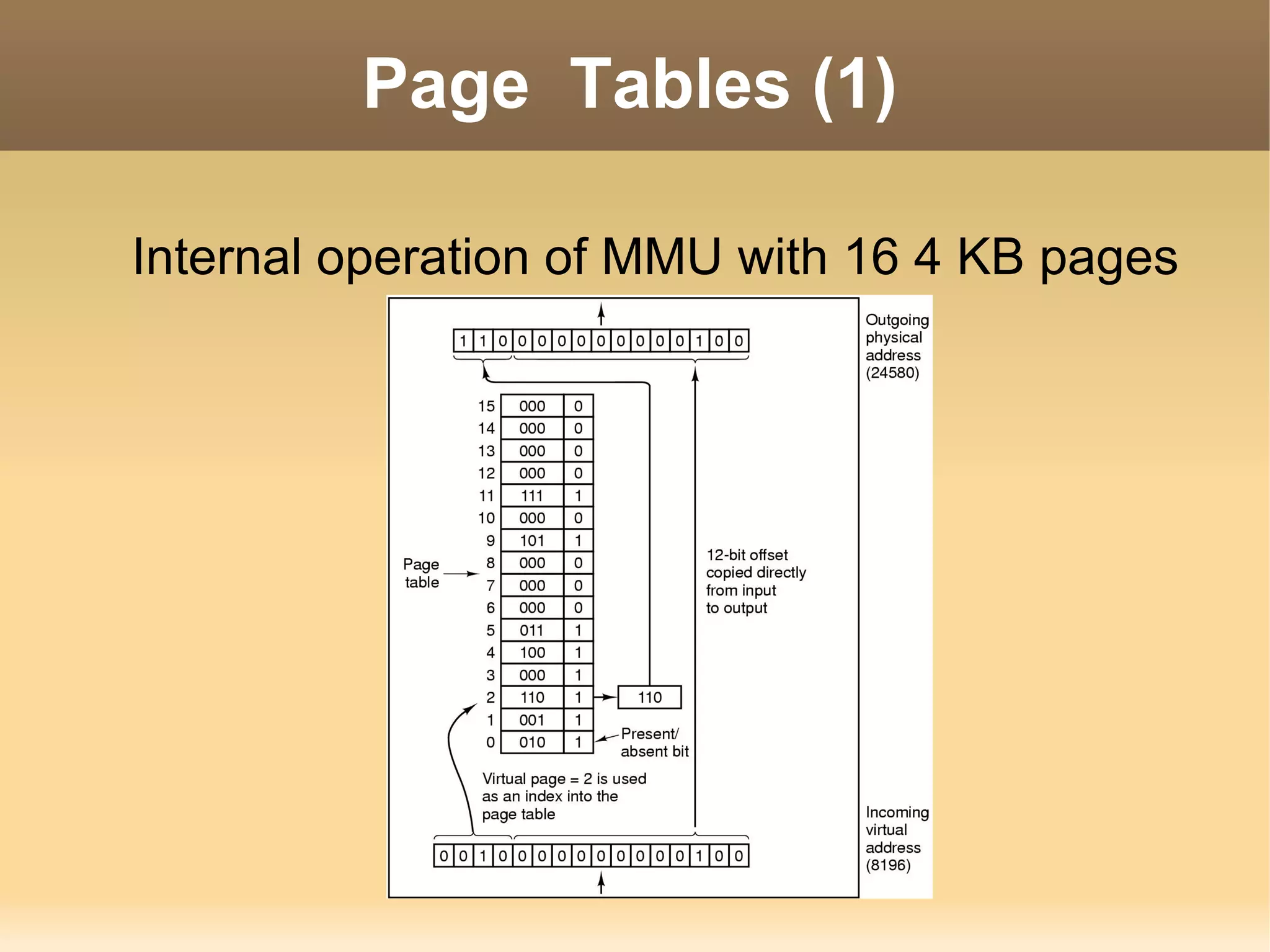

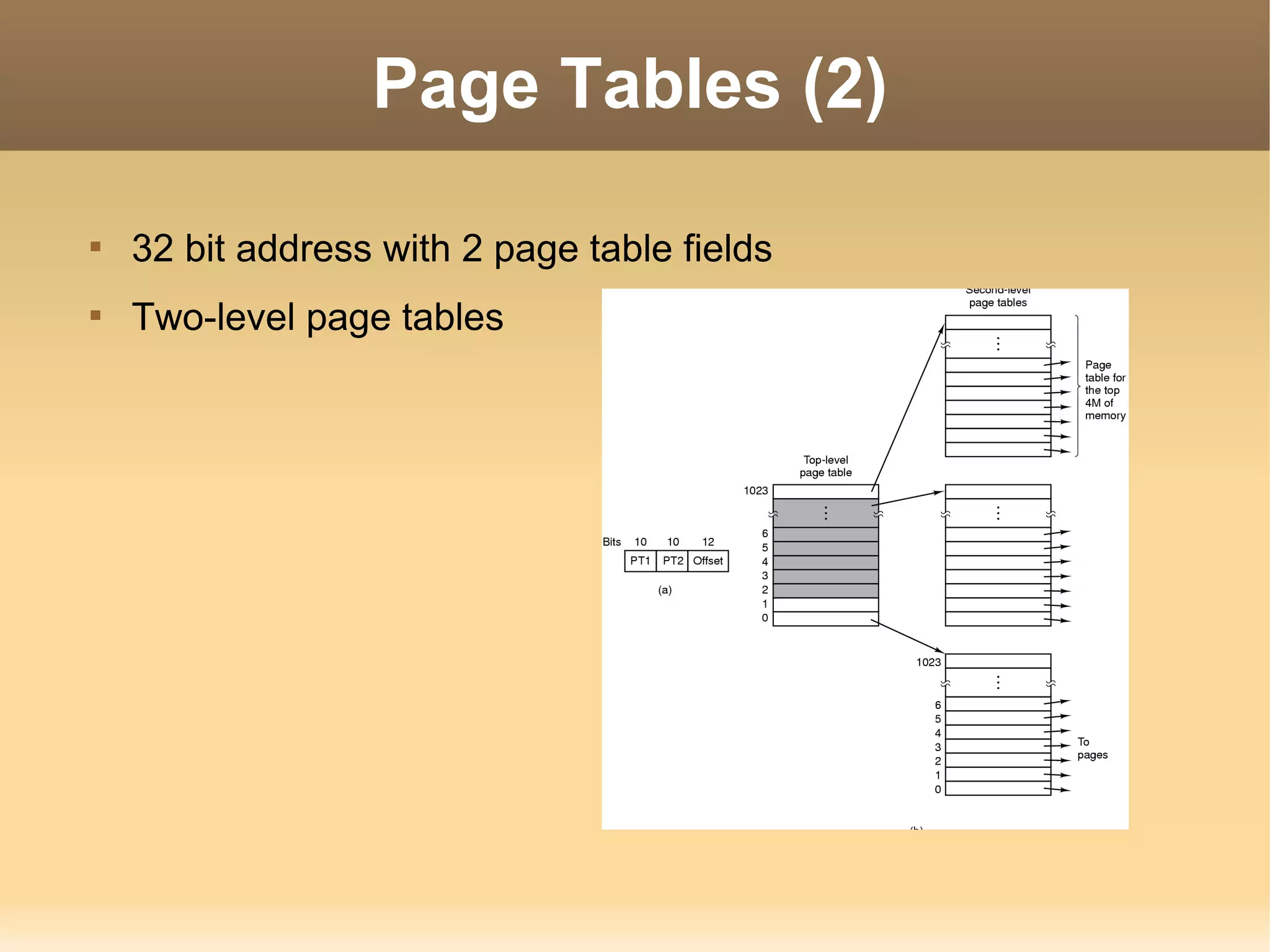

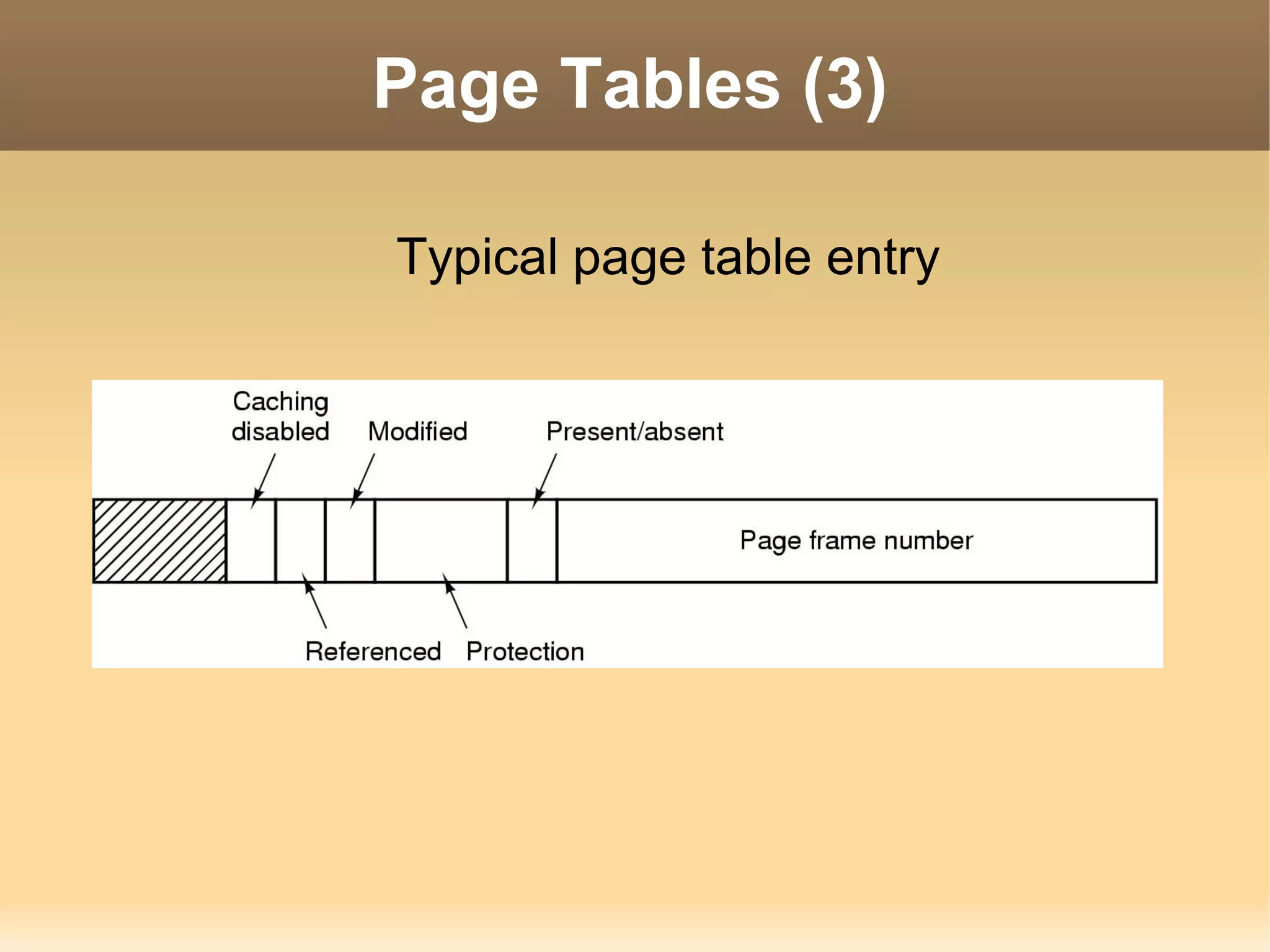

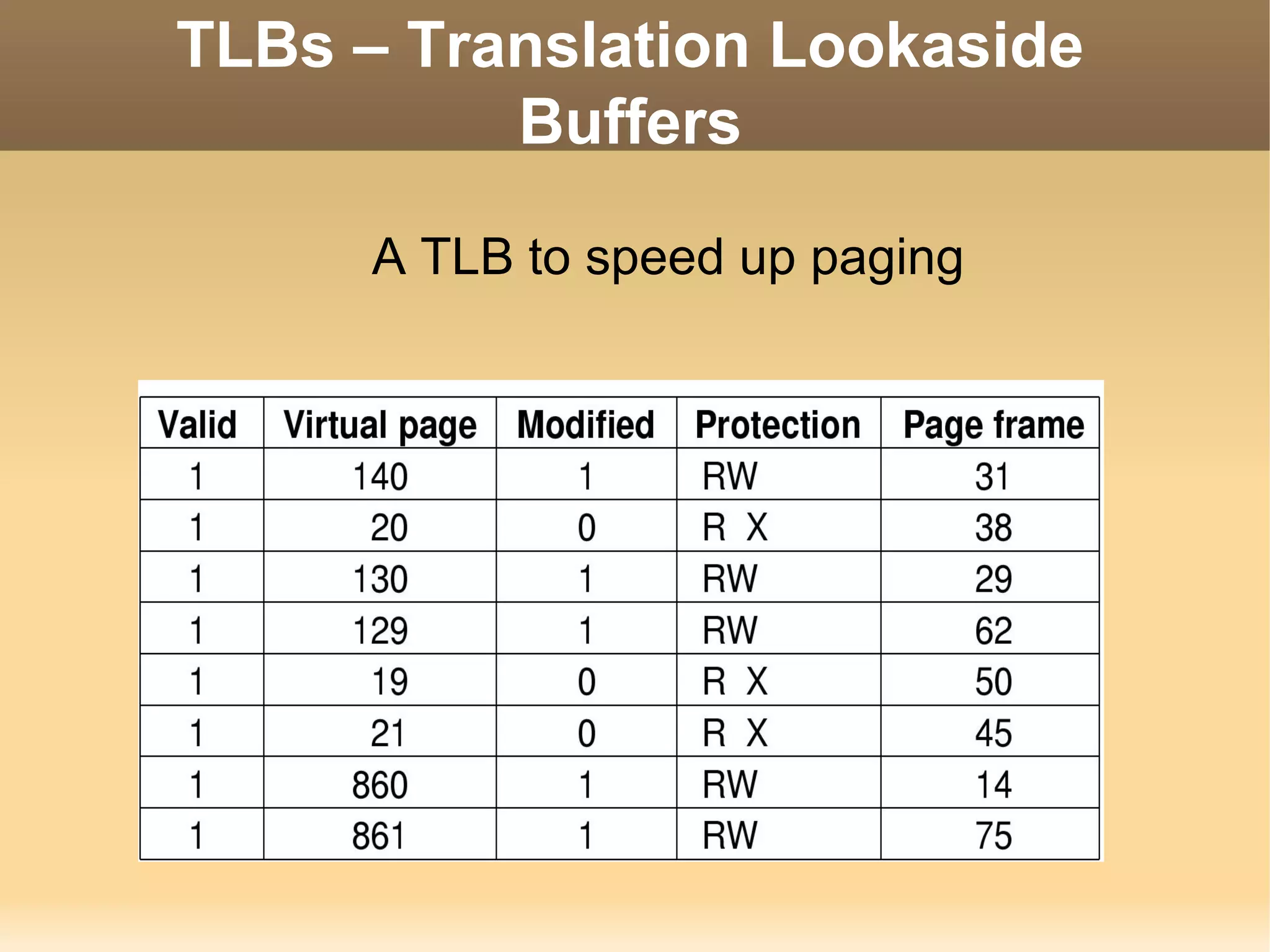

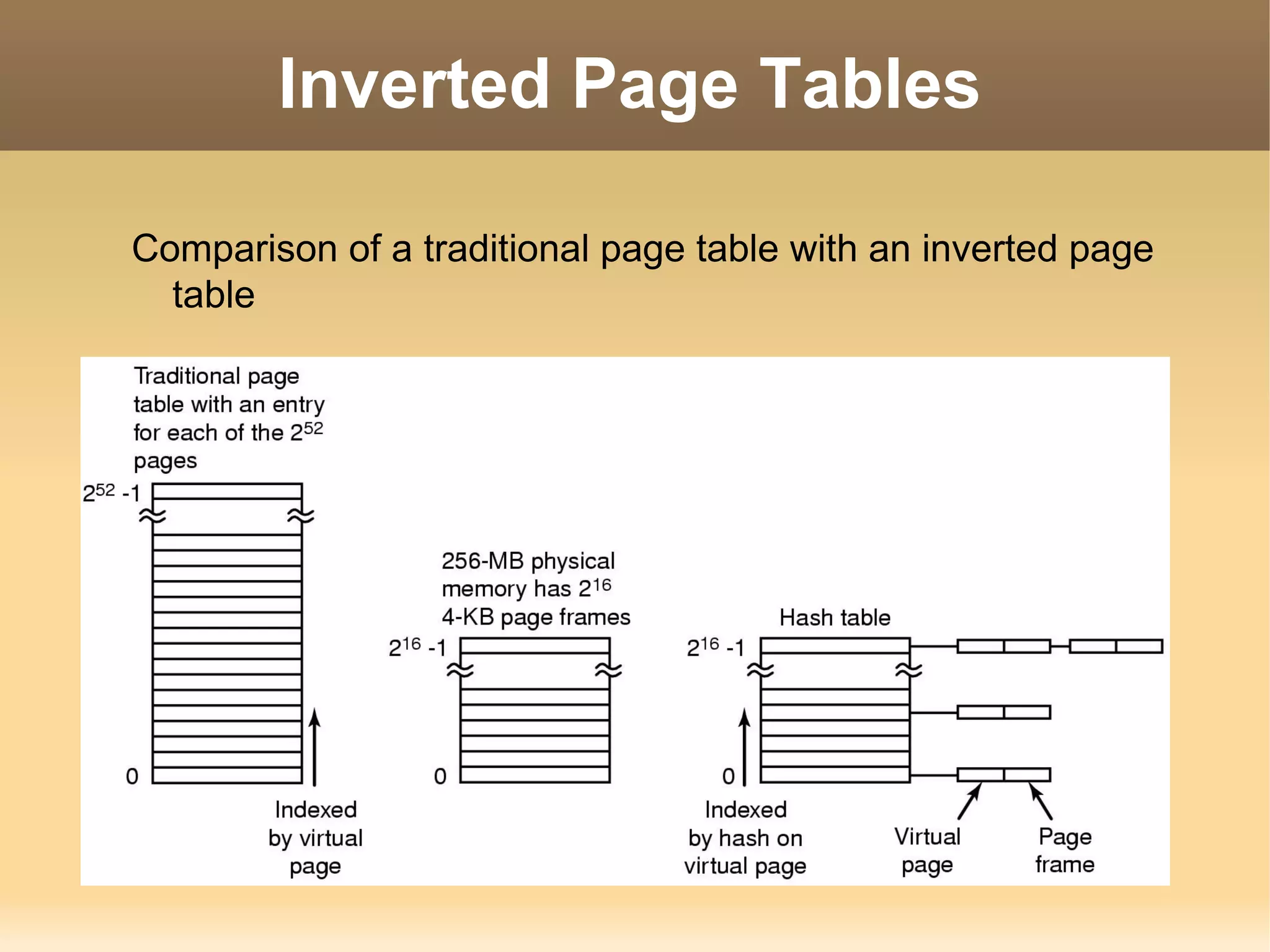

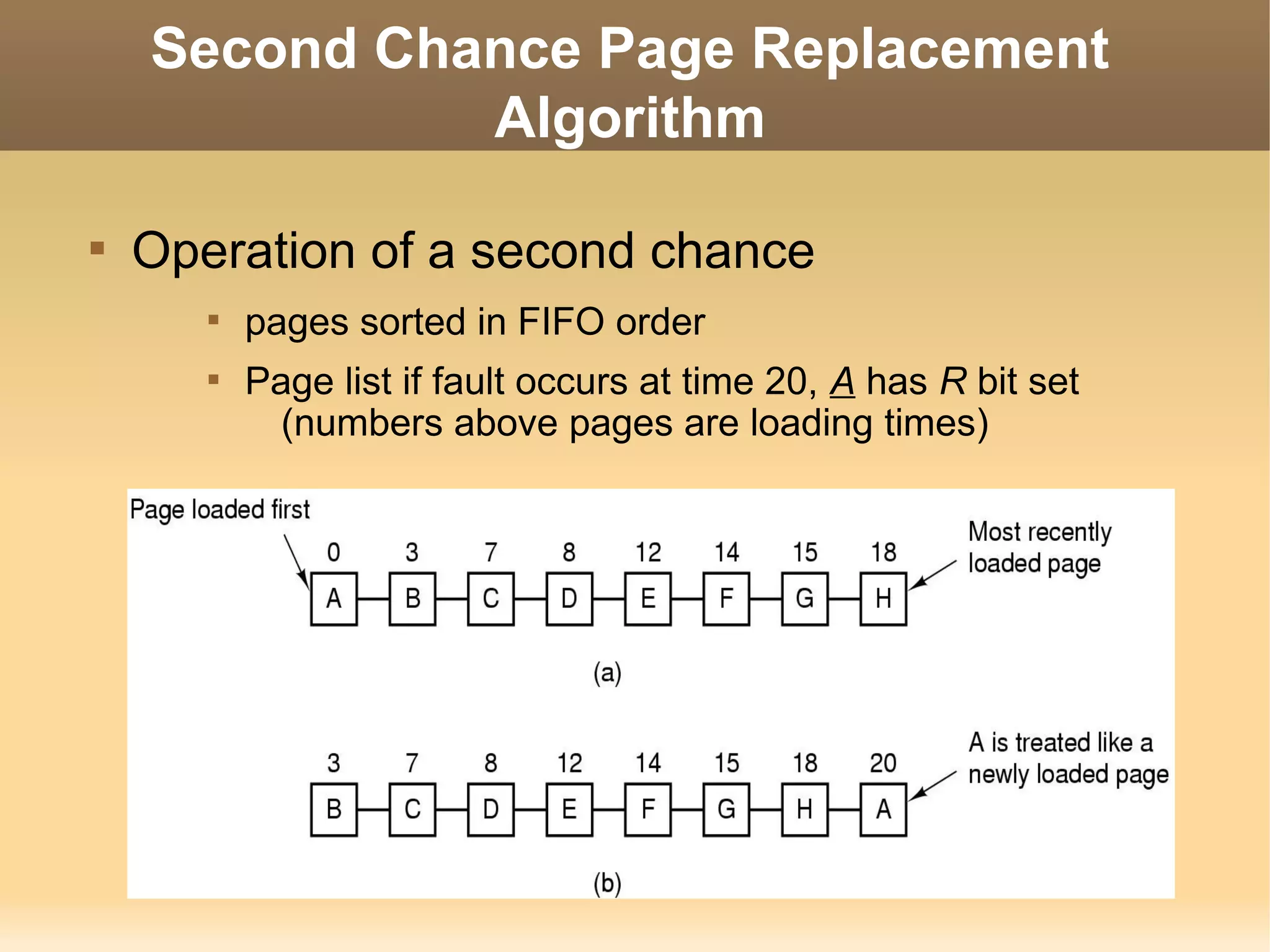

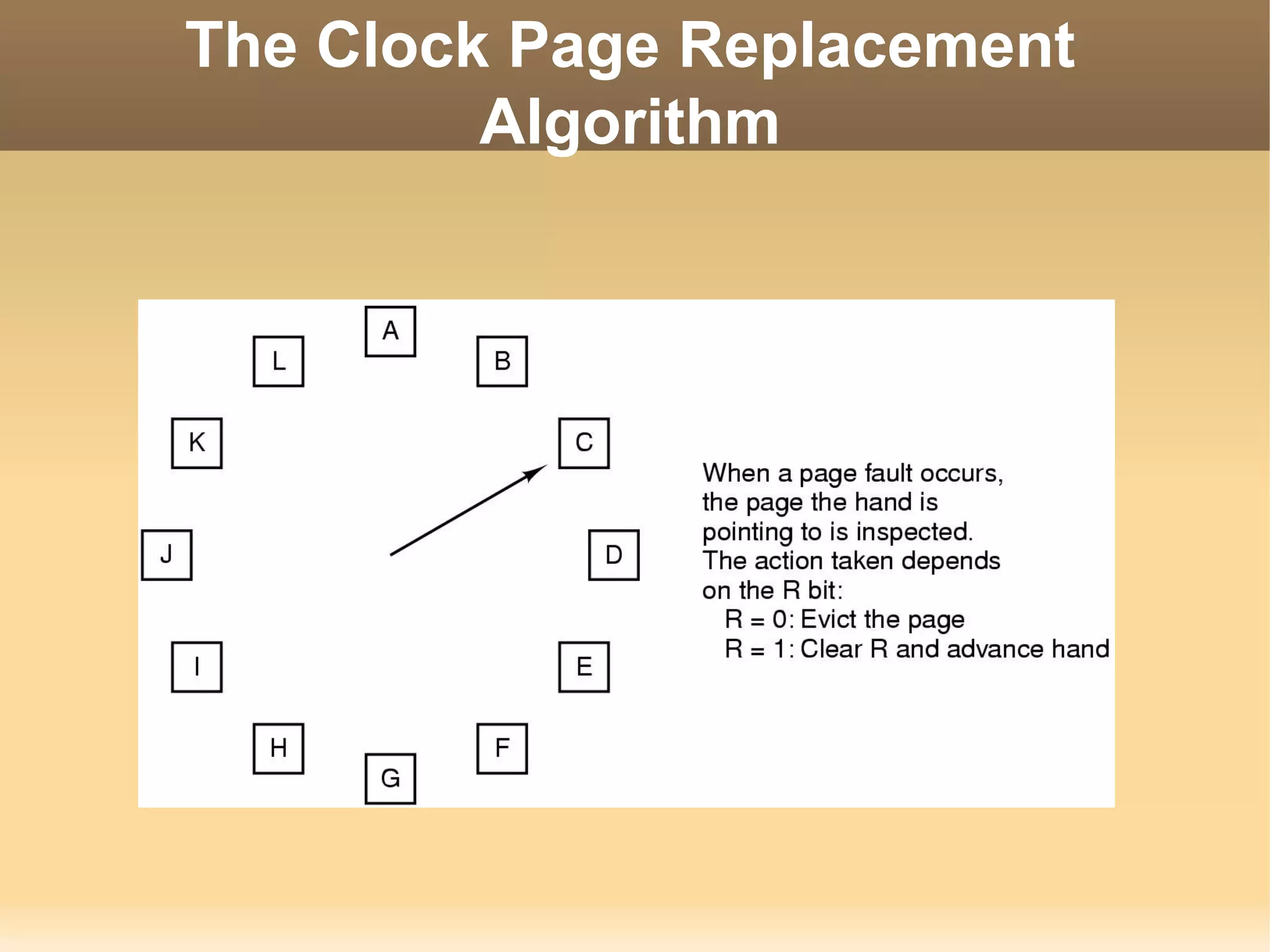

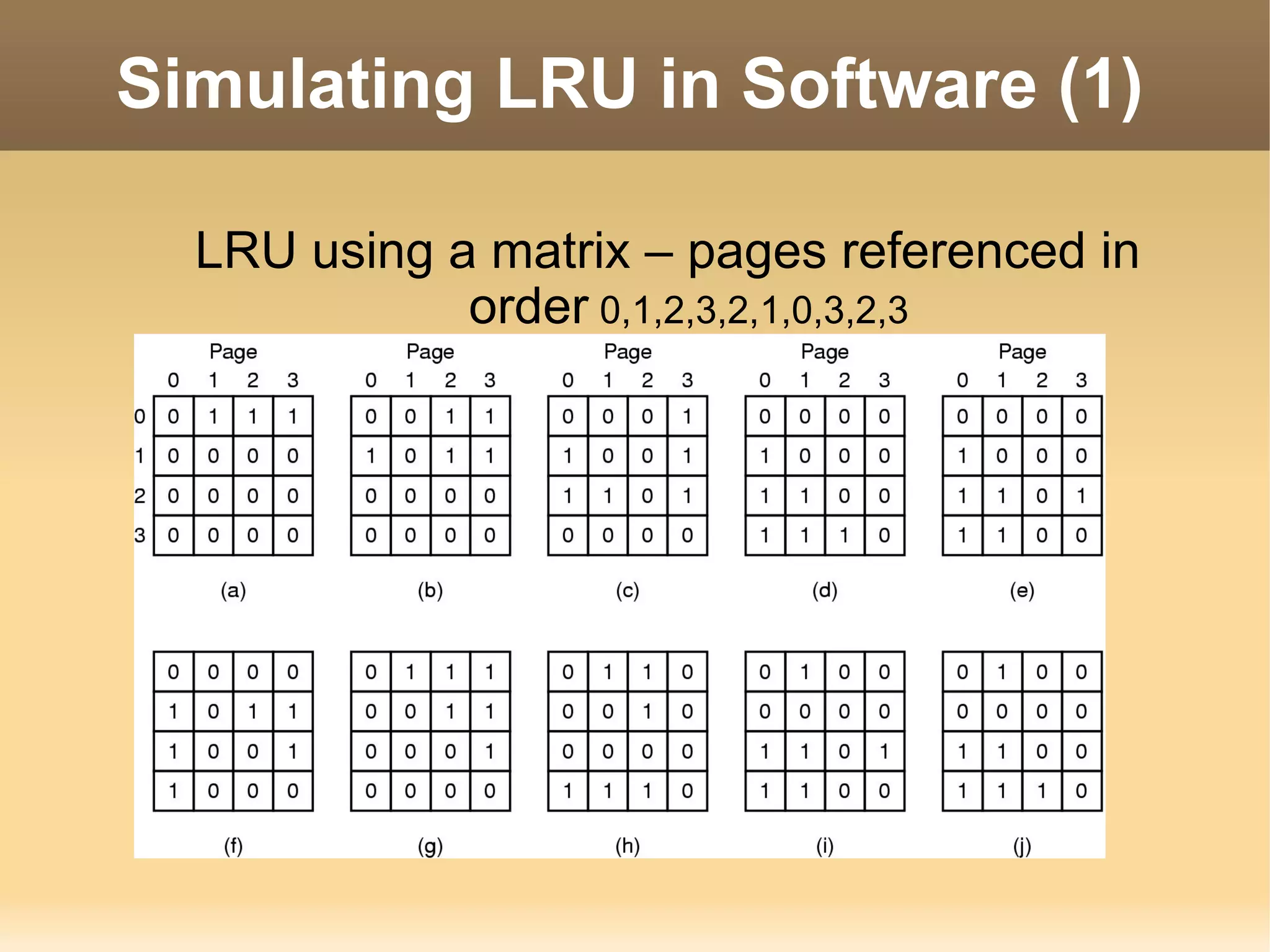

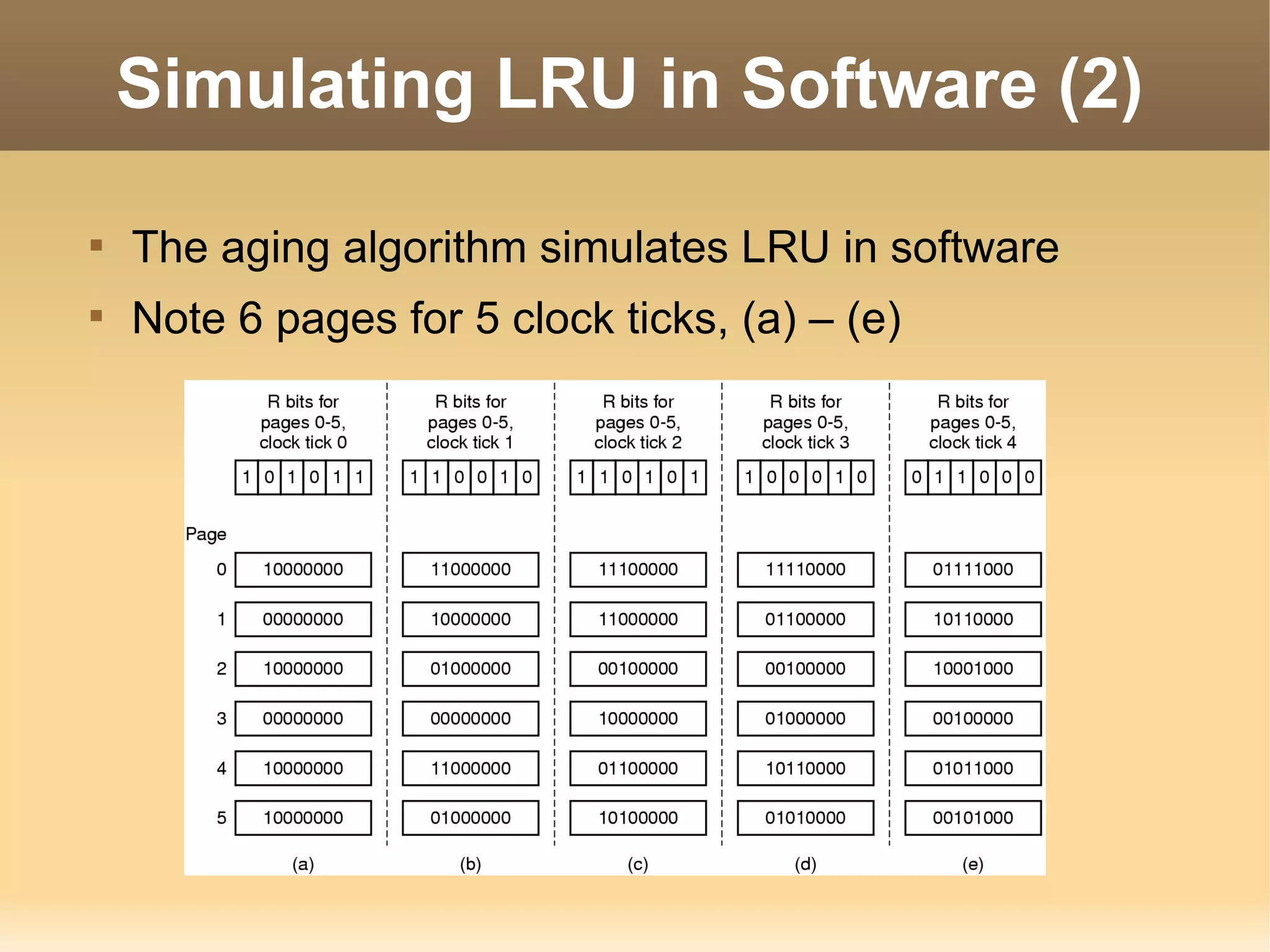

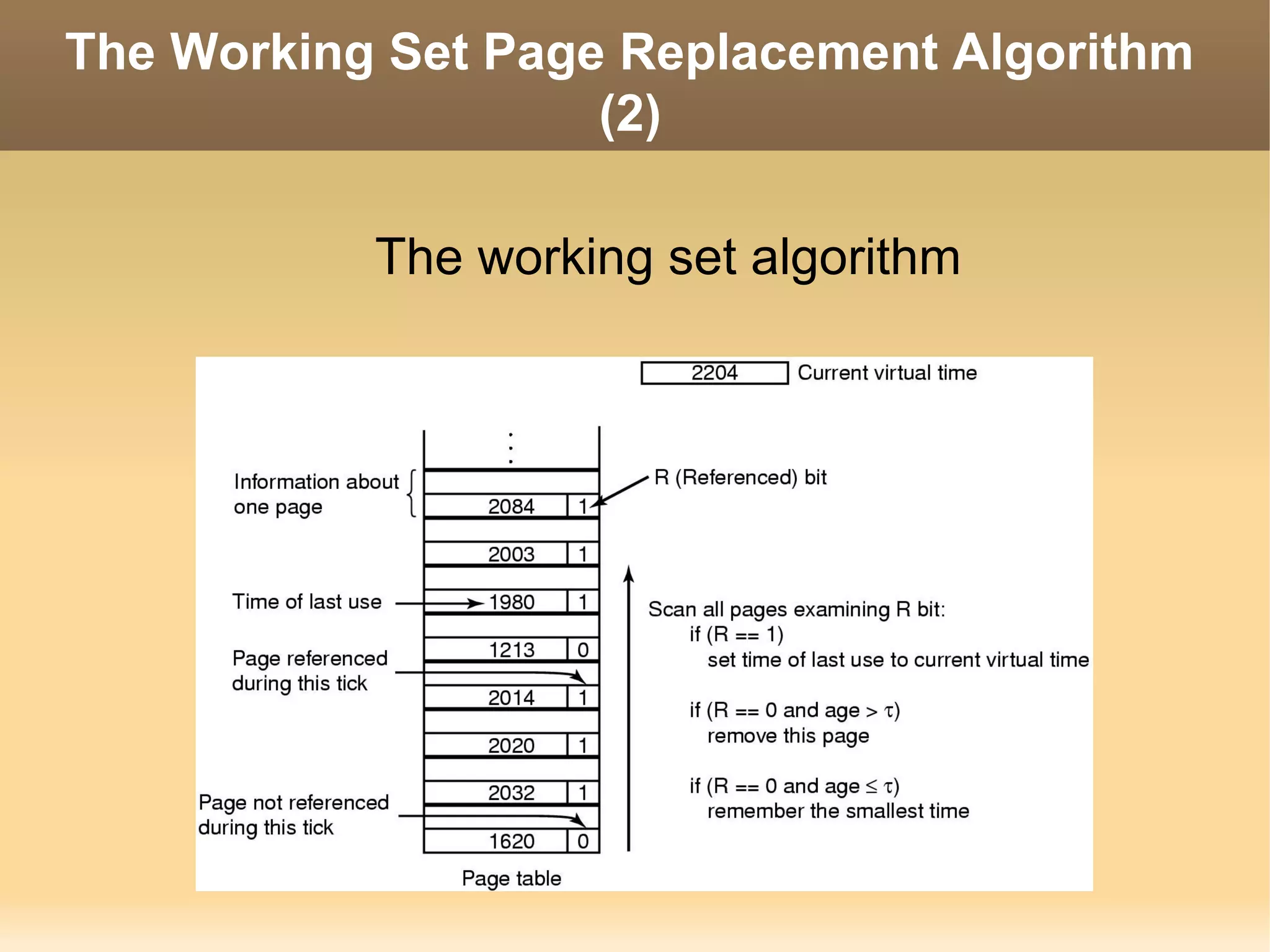

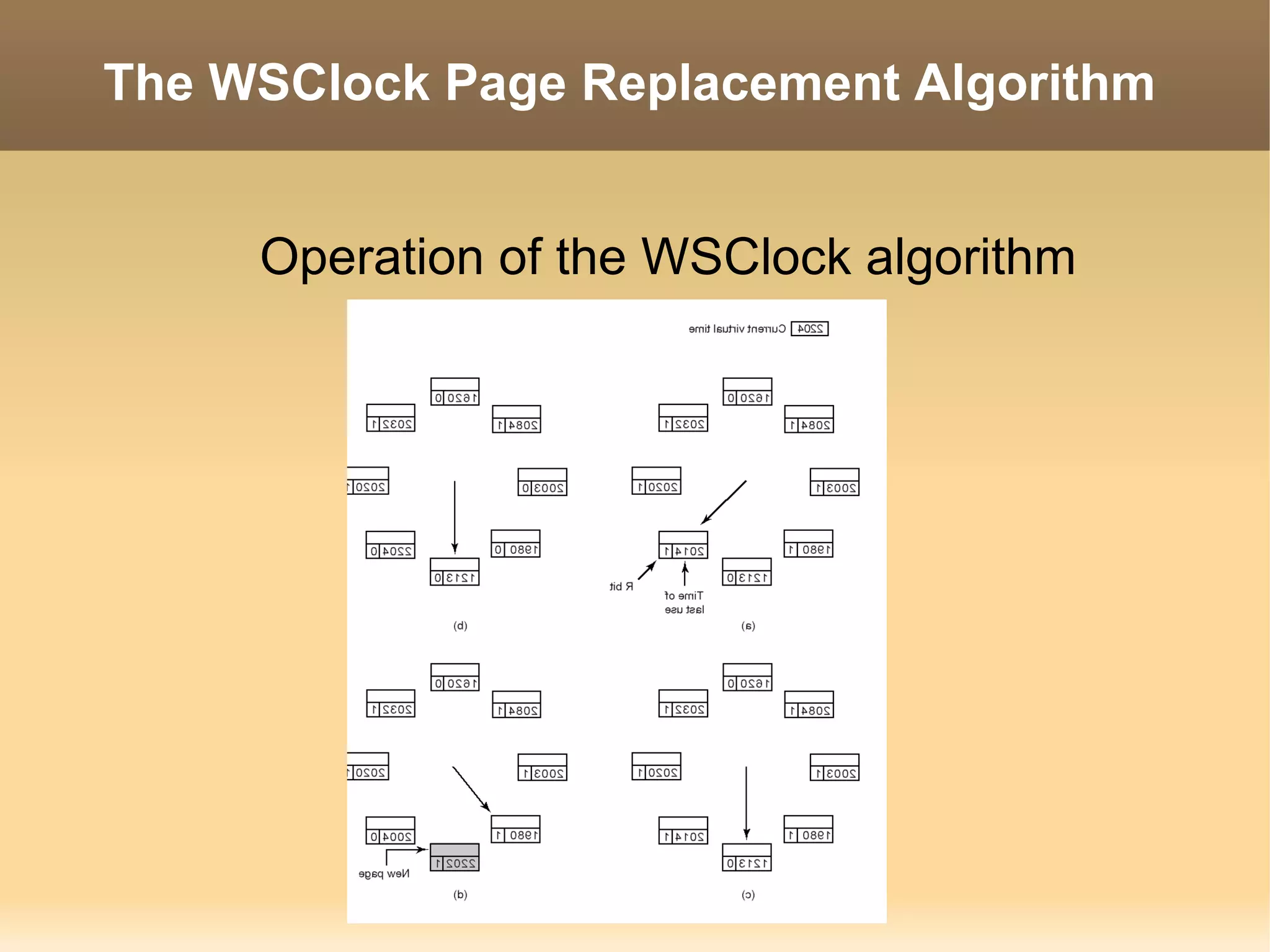

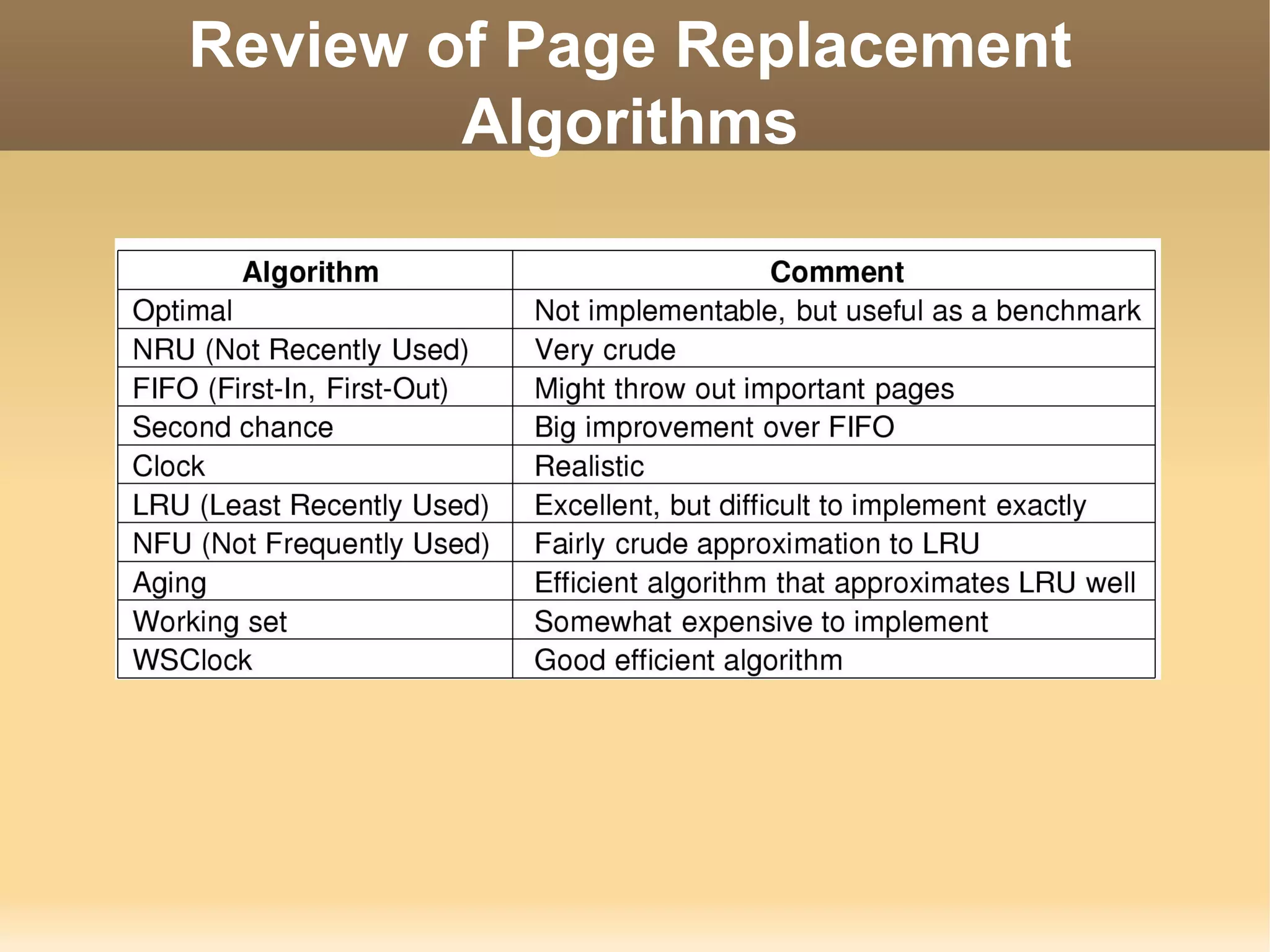

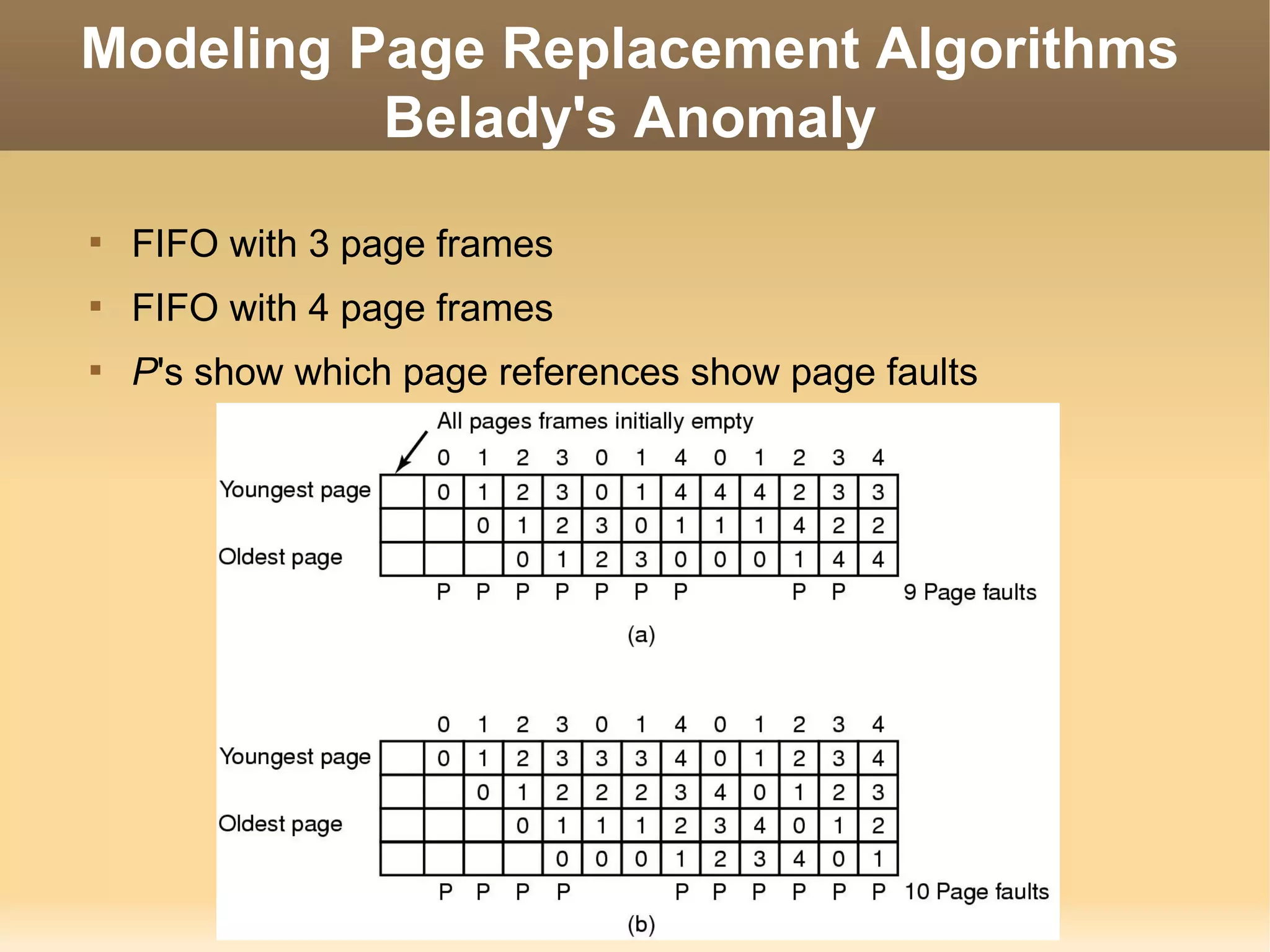

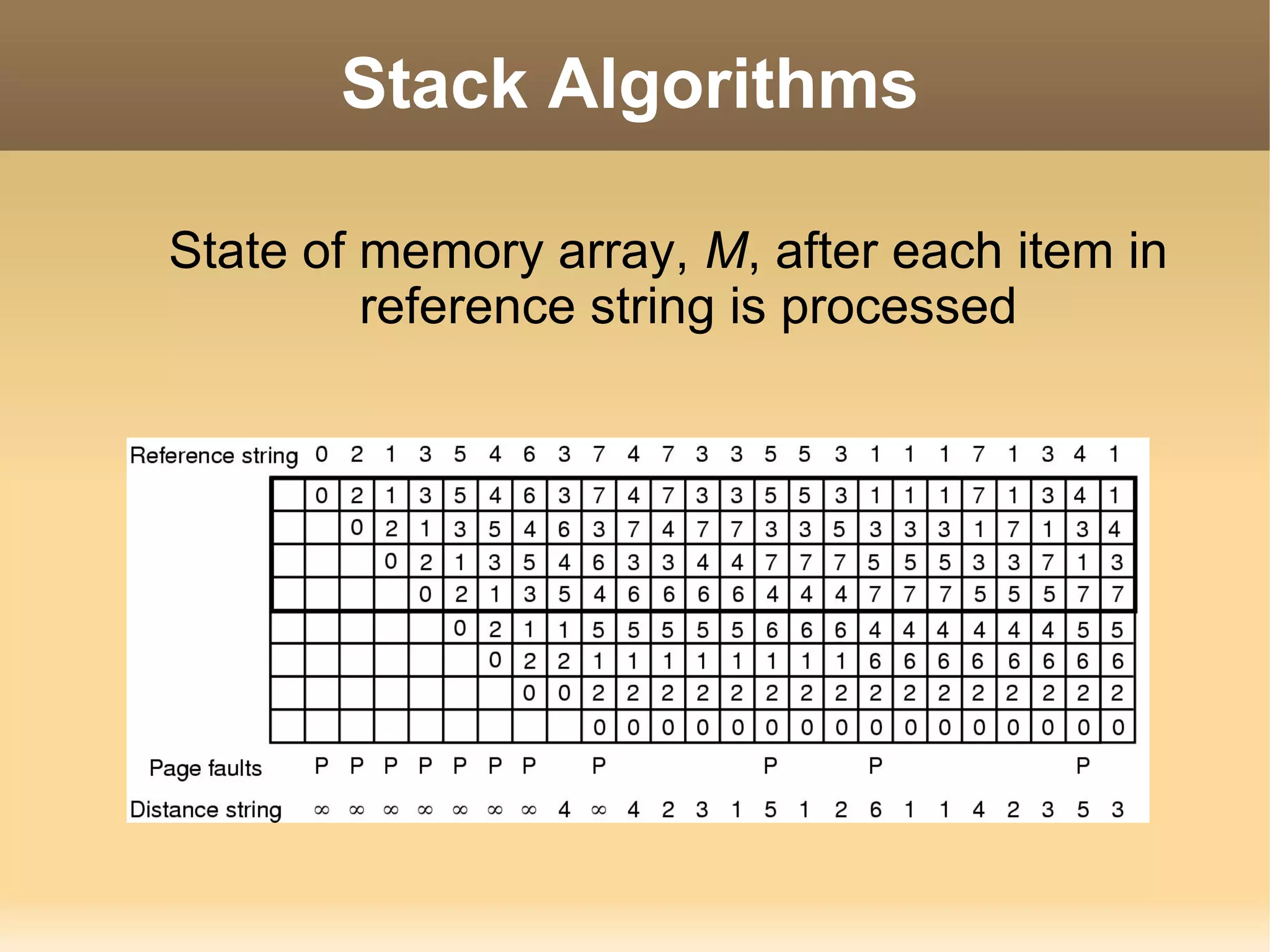

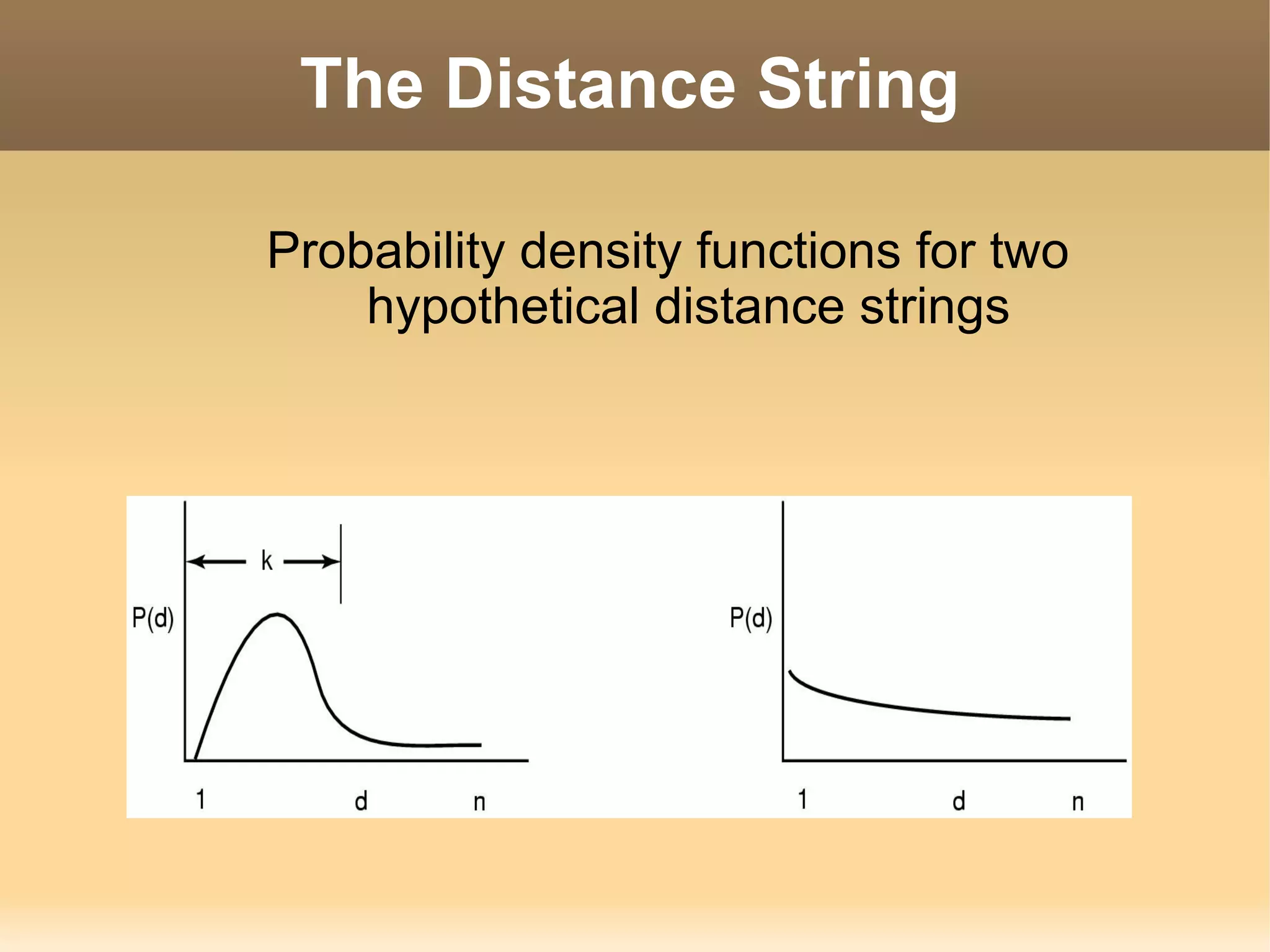

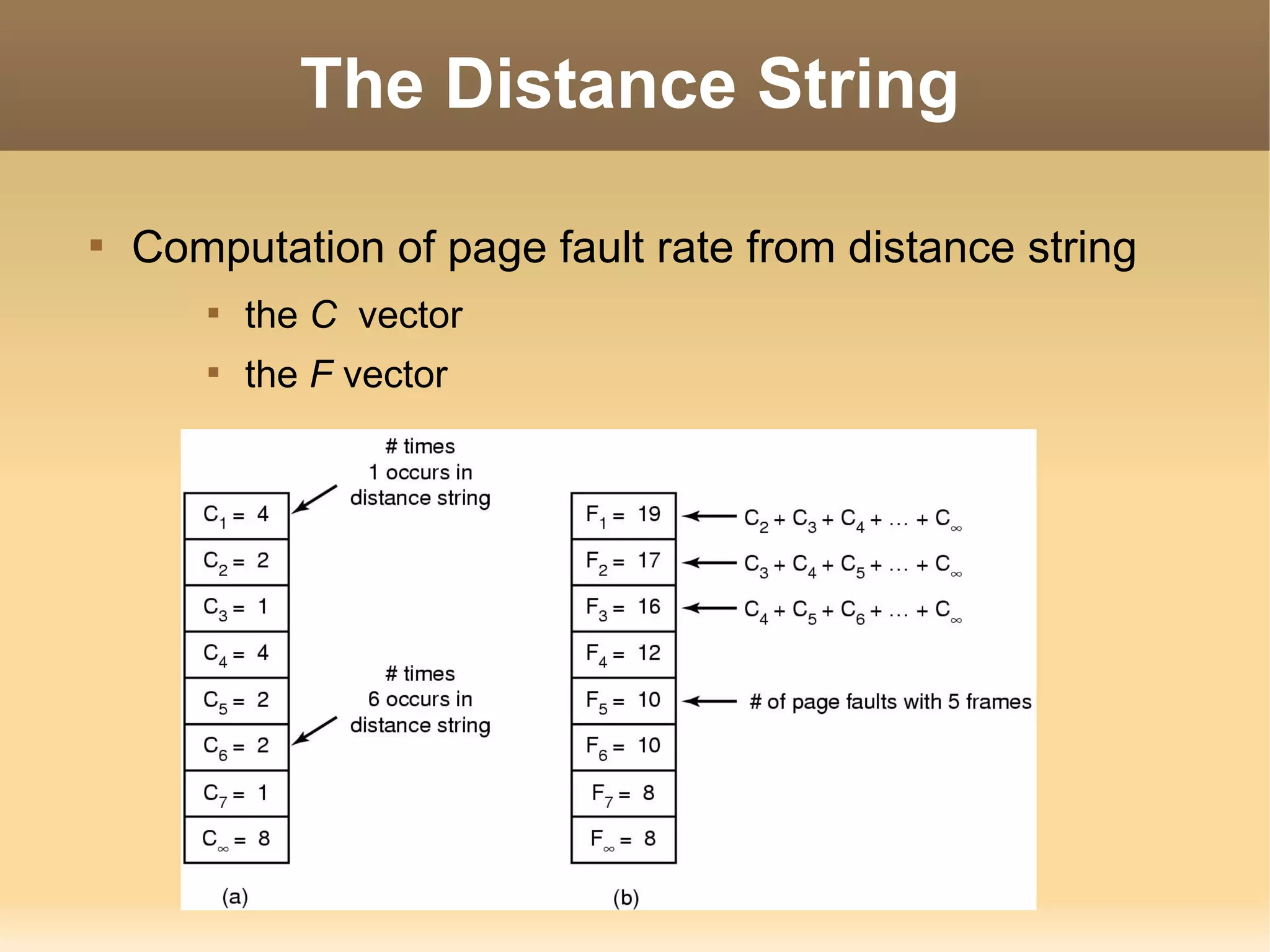

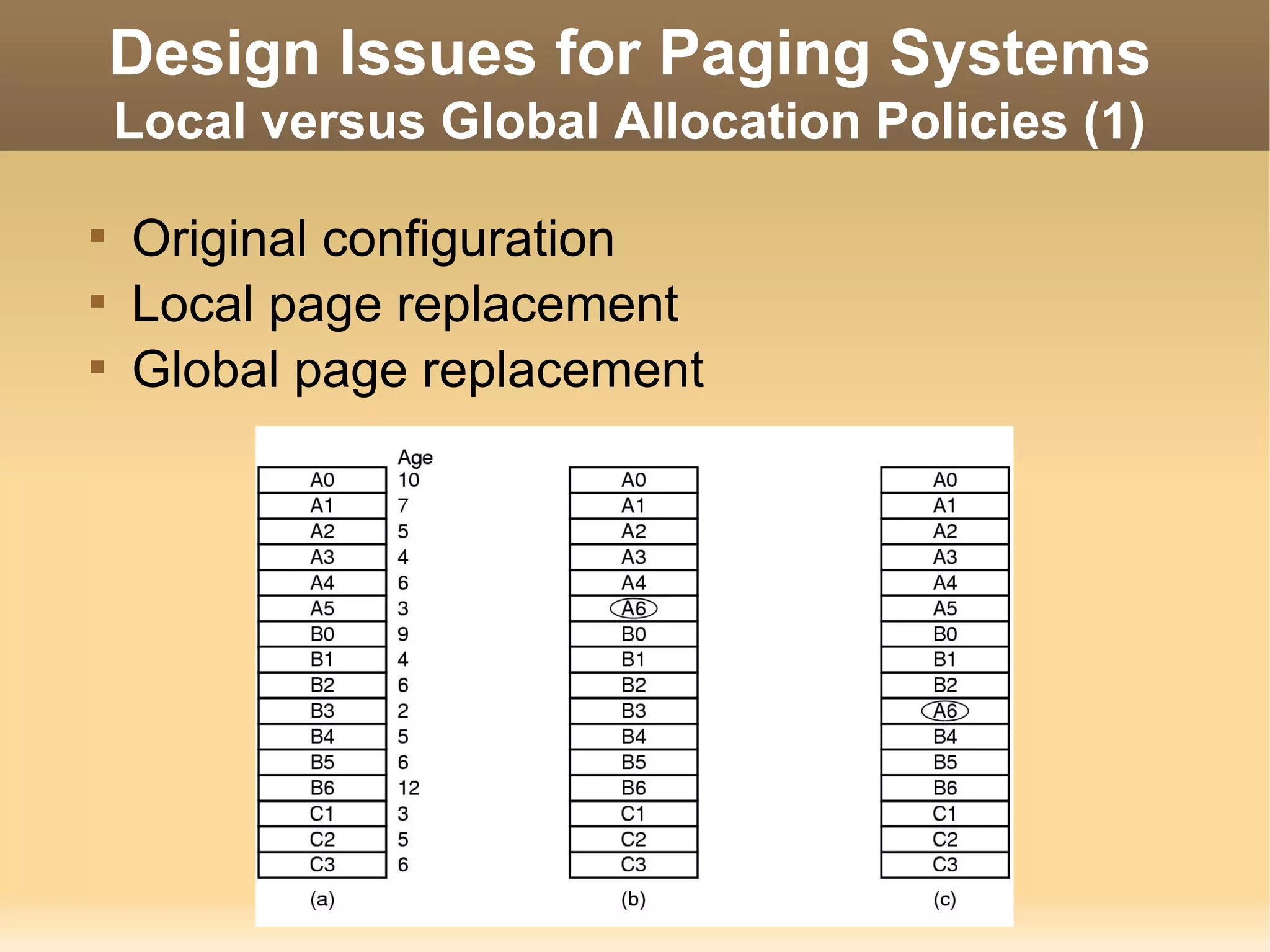

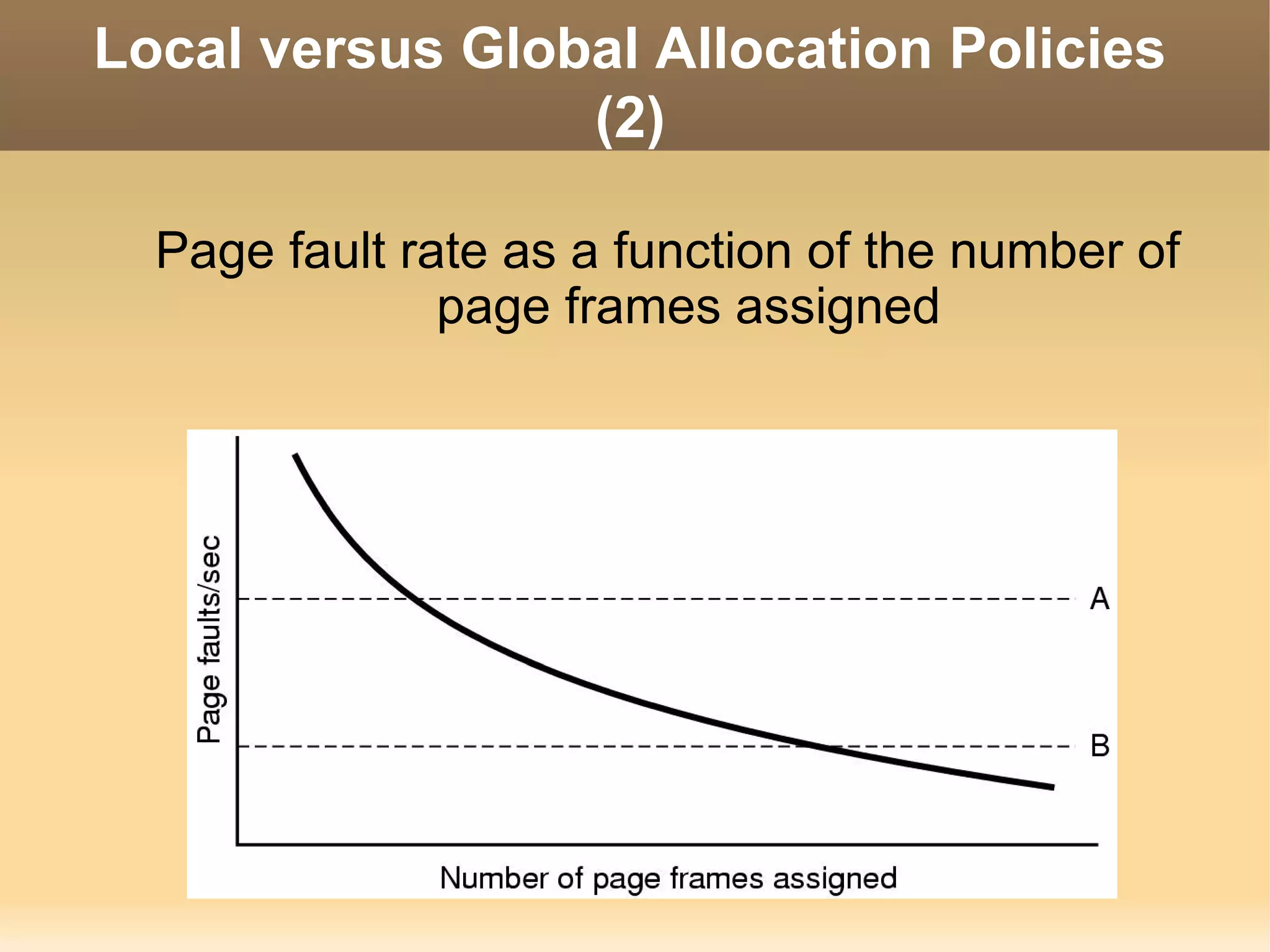

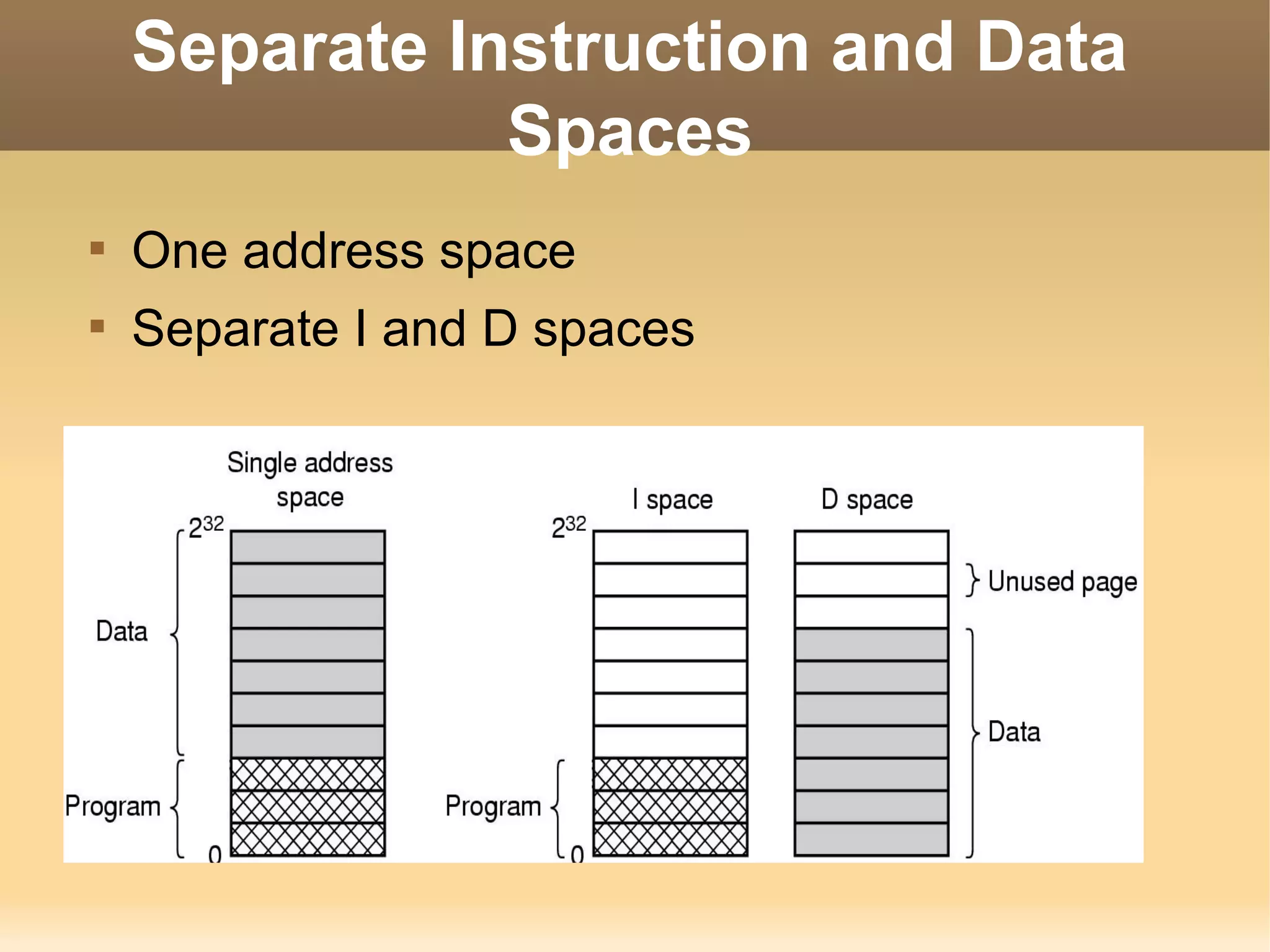

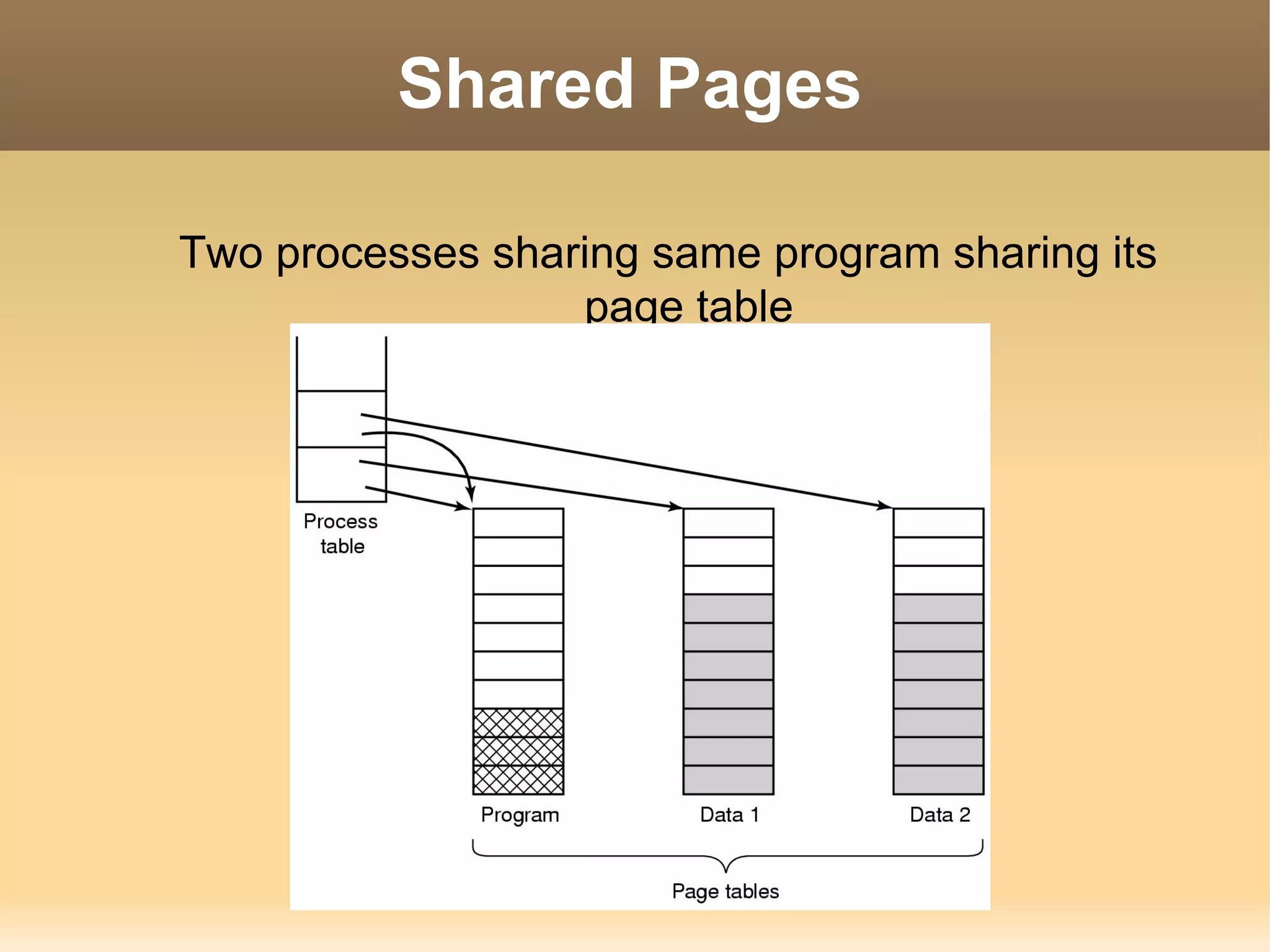

This document surveys techniques for managing memory in an operating system. It covers the memory hierarchy, the motivation for memory management, and basic schemes such as multiprogramming with fixed partitions. It then moves on to more advanced techniques: relocation and protection, swapping, and paging with page tables and translation lookaside buffers (TLBs). It describes page replacement algorithms, including FIFO, LRU, and working set, and closes with design issues such as local versus global allocation policies, page size selection, separate instruction and data spaces, shared pages, and cleaning policies.
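To make two of the page replacement algorithms named above concrete, here is a minimal Python sketch that counts page faults under FIFO and LRU for a given reference string. It is an illustration only, not taken from the slides: the function names `fifo_faults` and `lru_faults`, the reference string, and the frame counts are all assumptions chosen for the example.

```python
from collections import OrderedDict, deque


def fifo_faults(refs, frames):
    """Count page faults when the oldest loaded page is evicted first."""
    resident = set()   # pages currently in memory
    queue = deque()    # load order, oldest page on the left
    faults = 0
    for page in refs:
        if page in resident:
            continue   # hit: FIFO does not update any state on a hit
        faults += 1
        if len(resident) == frames:
            resident.remove(queue.popleft())  # evict the oldest page
        resident.add(page)
        queue.append(page)
    return faults


def lru_faults(refs, frames):
    """Count page faults when the least recently used page is evicted."""
    resident = OrderedDict()  # keys ordered from least to most recently used
    faults = 0
    for page in refs:
        if page in resident:
            resident.move_to_end(page)  # hit: mark as most recently used
            continue
        faults += 1
        if len(resident) == frames:
            resident.popitem(last=False)  # evict the least recently used page
        resident[page] = True
    return faults


# Hypothetical reference string used only for this illustration.
refs = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]
for frames in (3, 4):
    print(frames, fifo_faults(refs, frames), lru_faults(refs, frames))
```

With this particular reference string, FIFO takes 9 faults with 3 frames and 10 with 4 frames, while LRU takes 10 and 8. A real kernel would not keep an exact LRU ordering in software like this; the sketch only shows how the two eviction rules differ.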