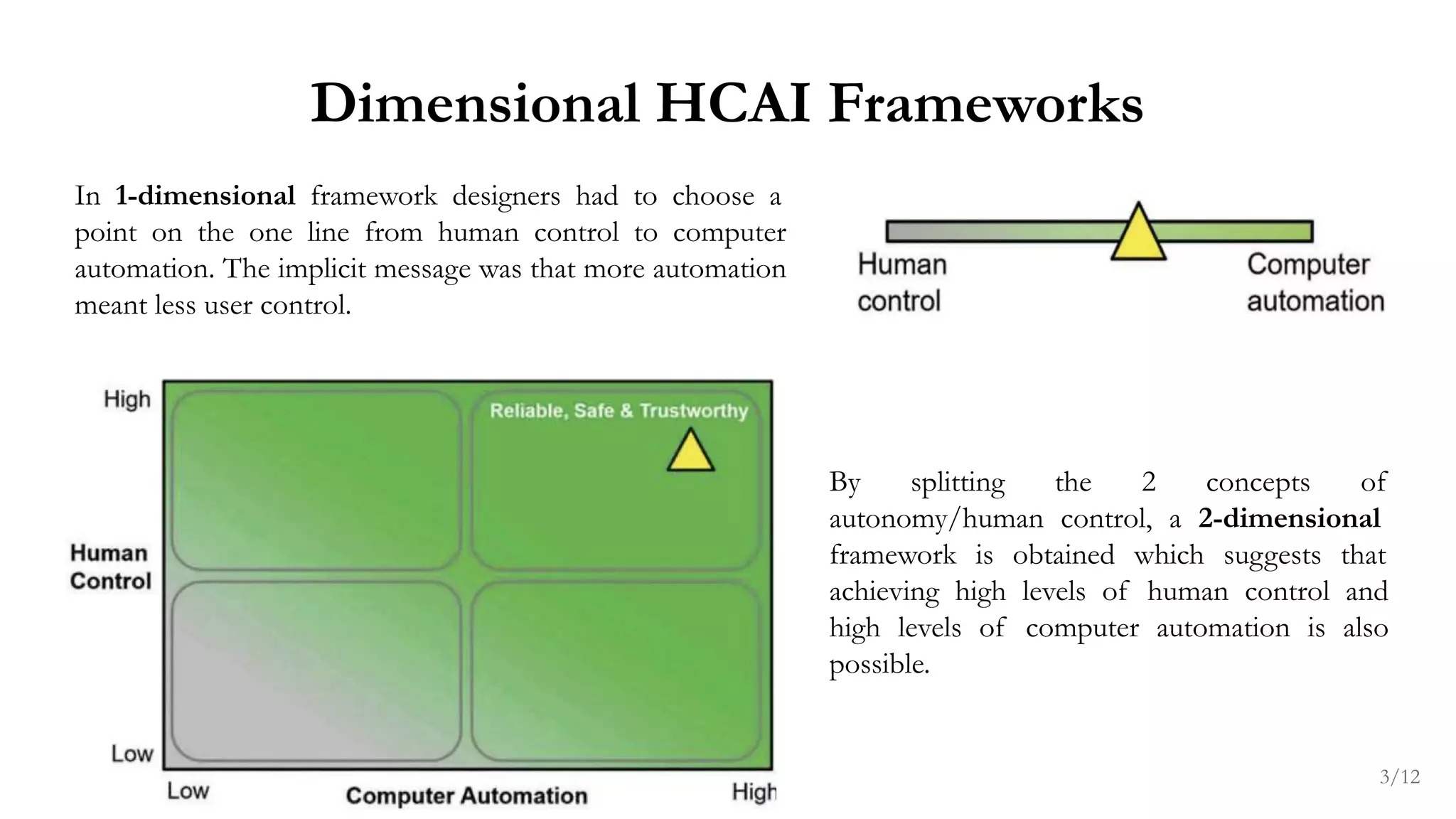

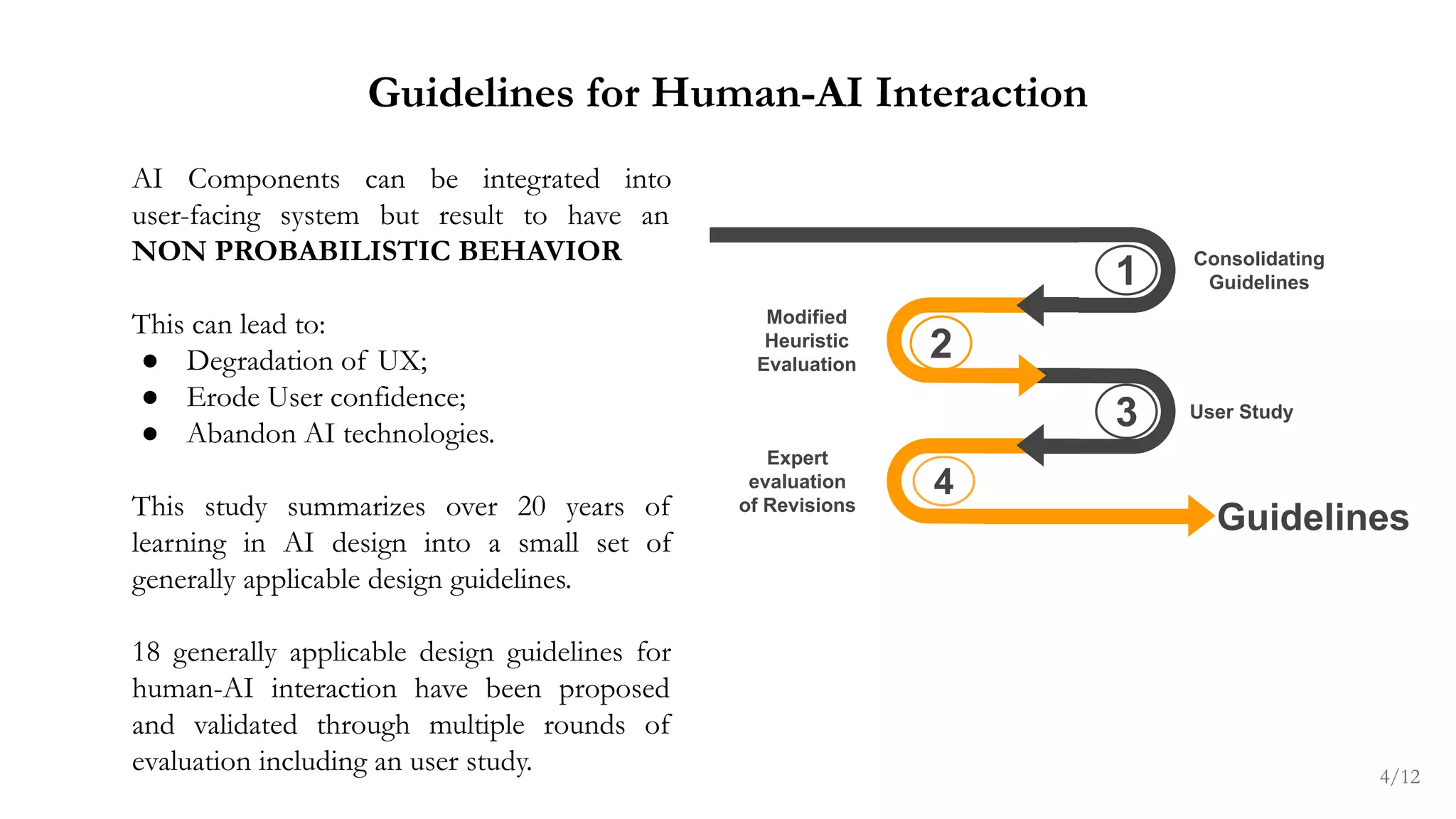

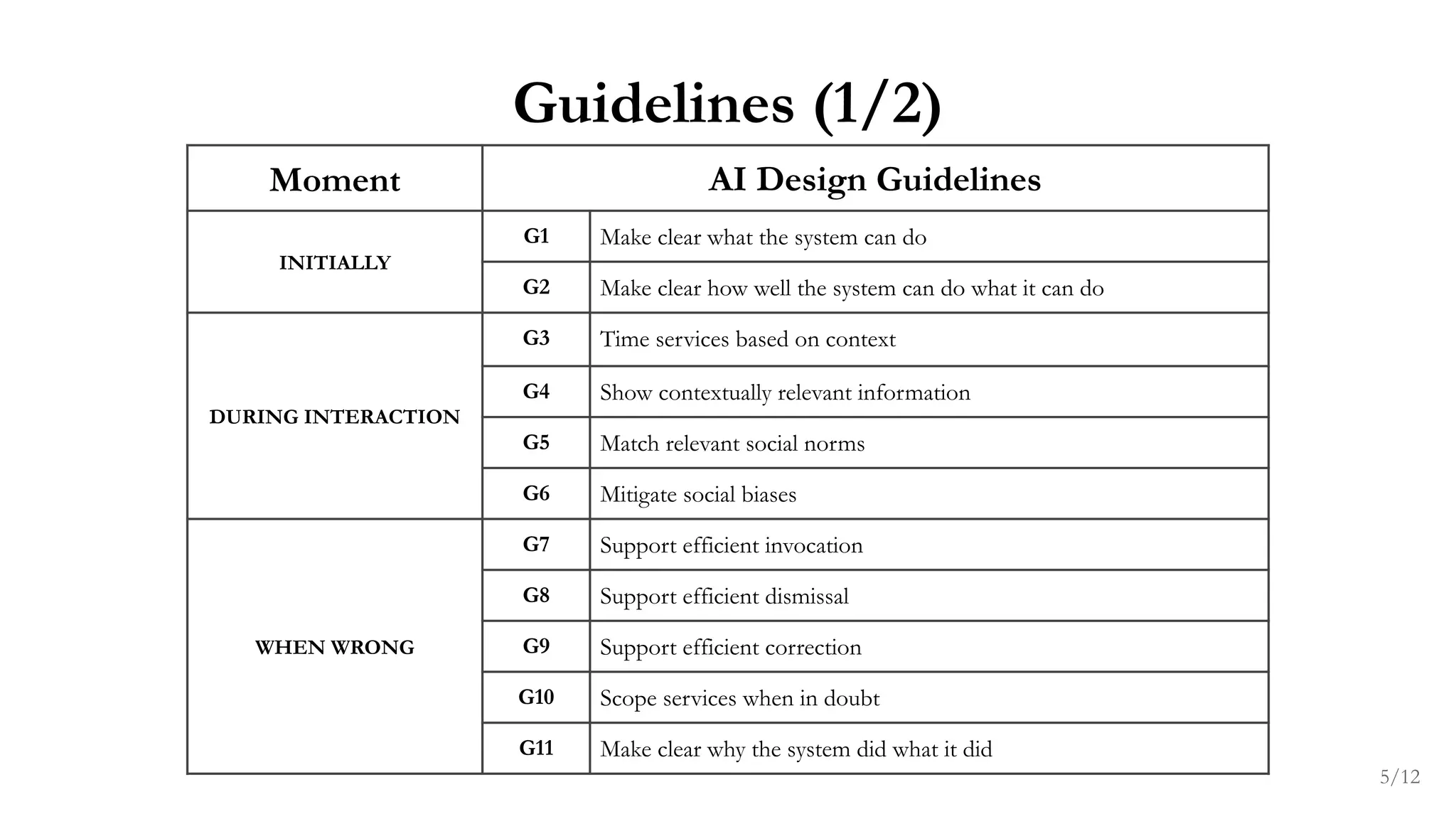

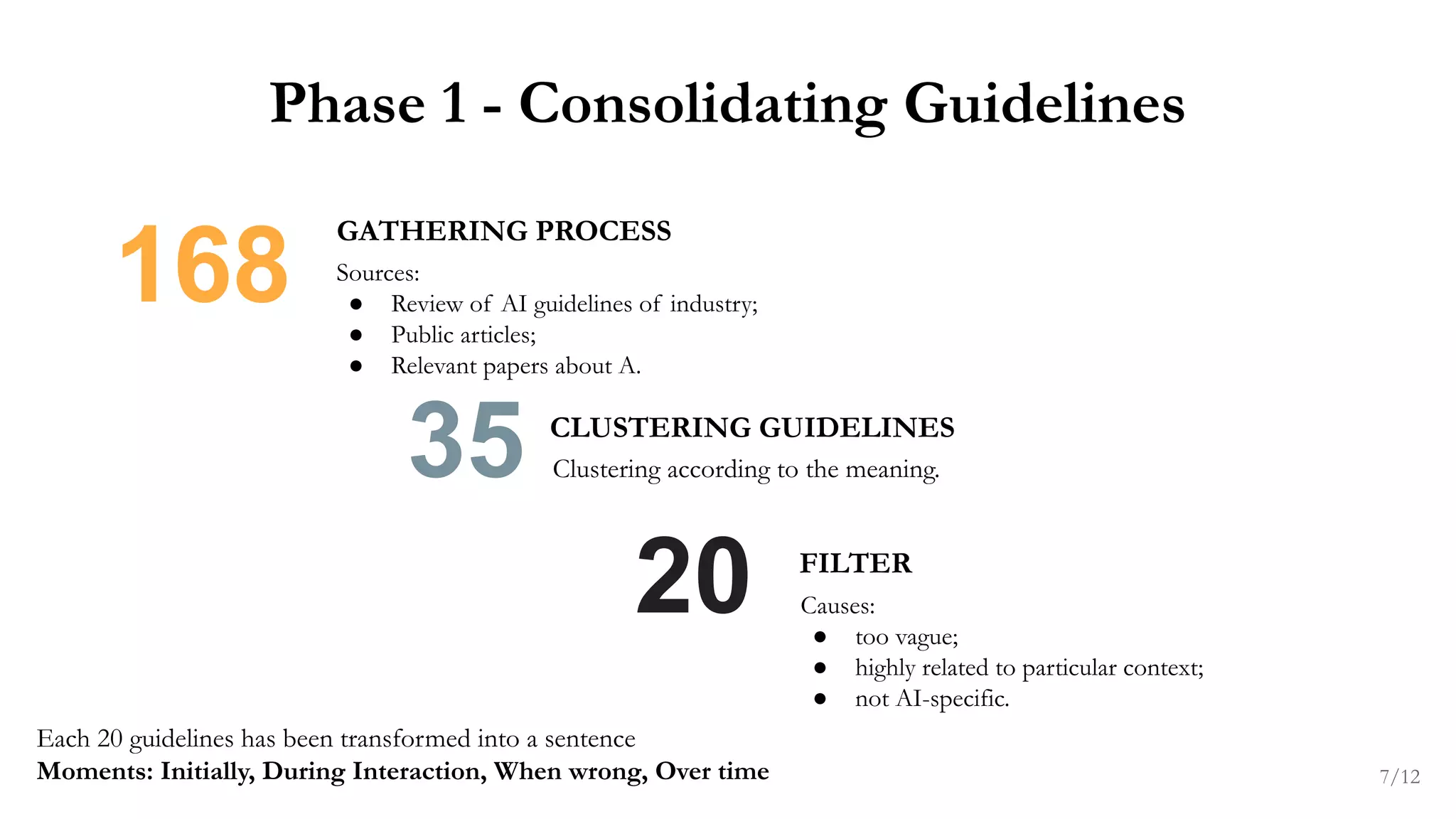

The document outlines guidelines for human-centered artificial intelligence (HCAI), focusing on reliability, safety, and trustworthiness in AI interactions. It presents 18 design guidelines validated through user studies and expert evaluations, emphasizing clear communication, social norms, and efficient user interaction. It also discusses evolving ethical considerations in AI design and the need to refine these guidelines further as AI technology advances.