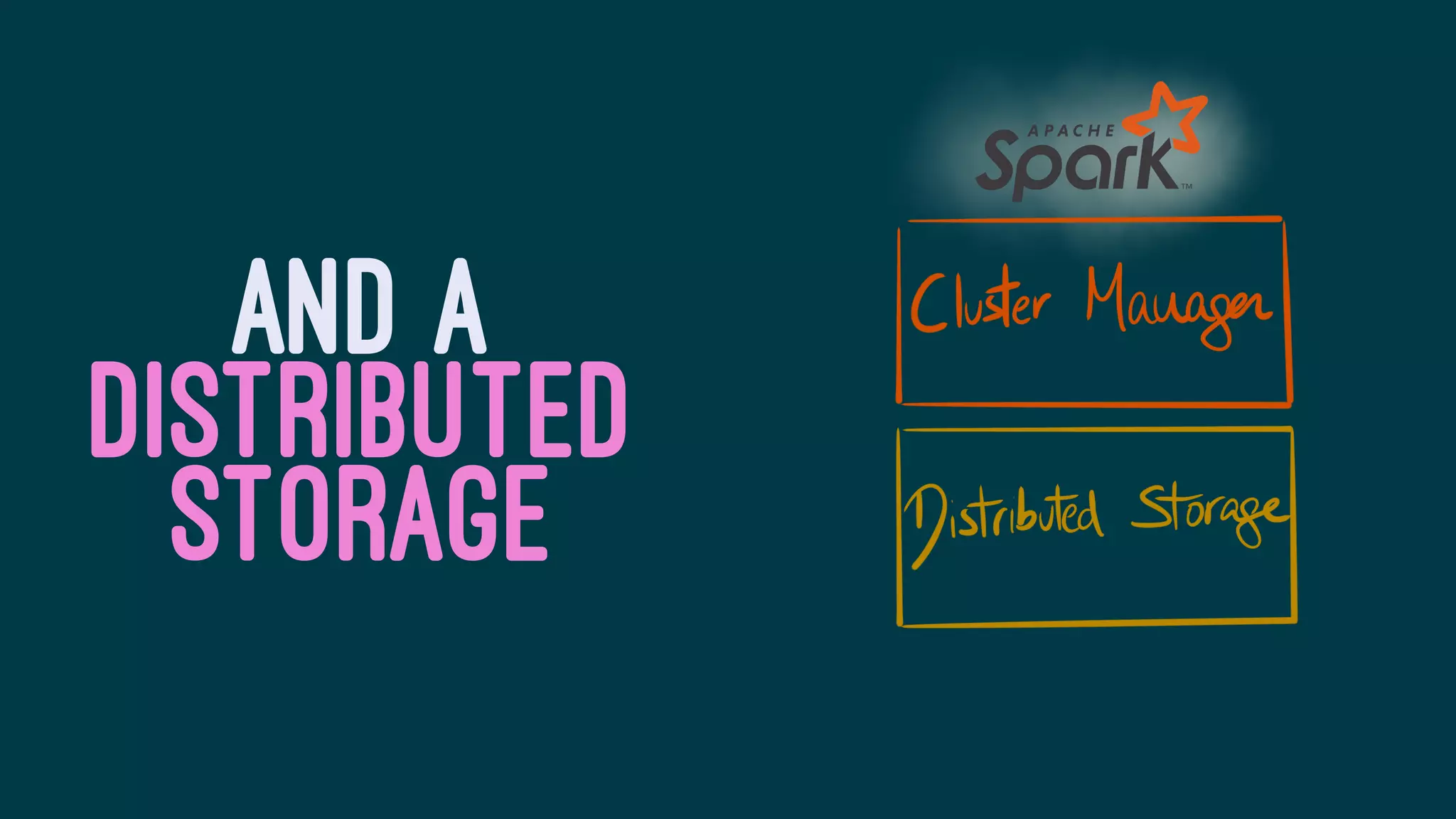

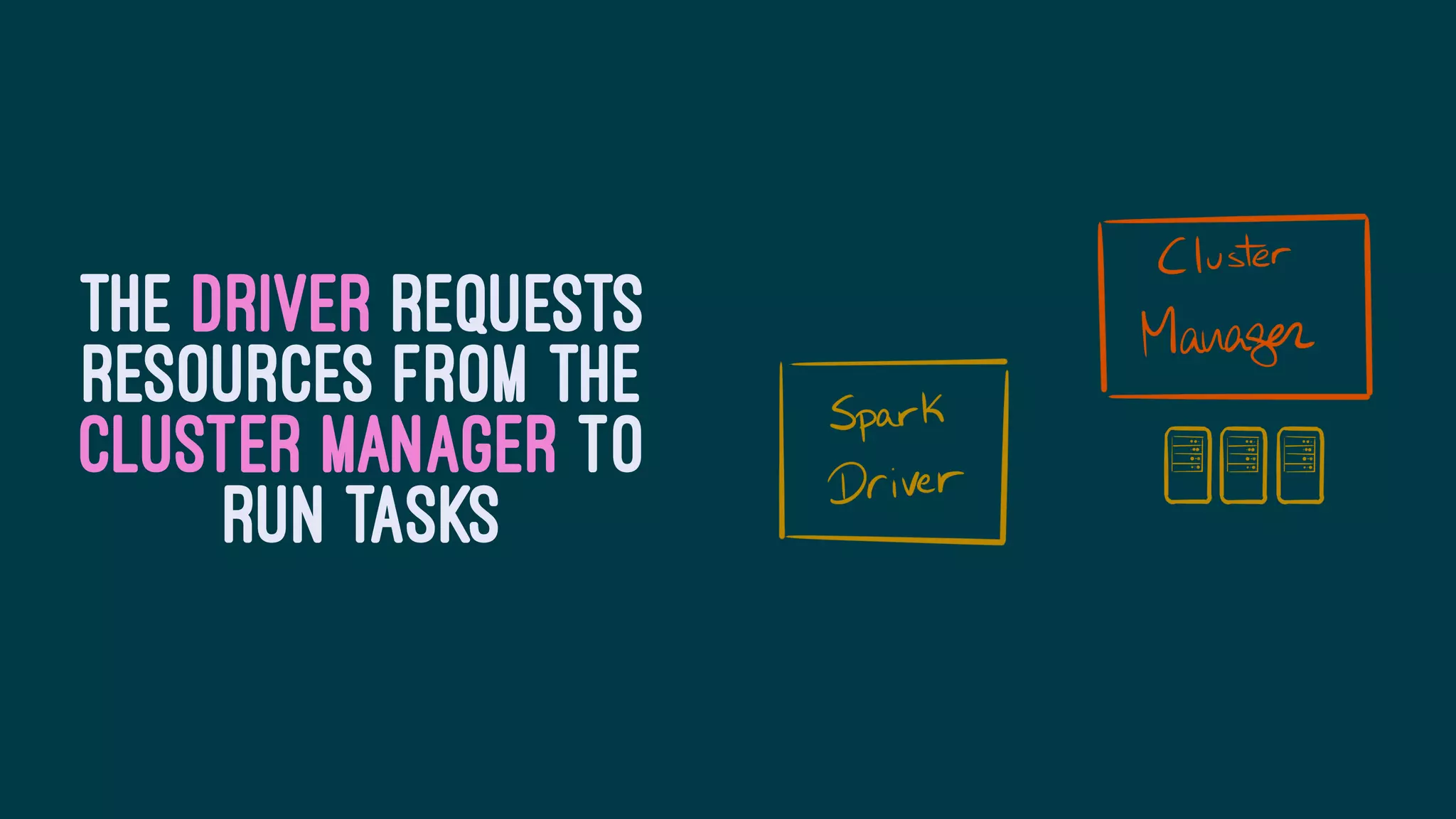

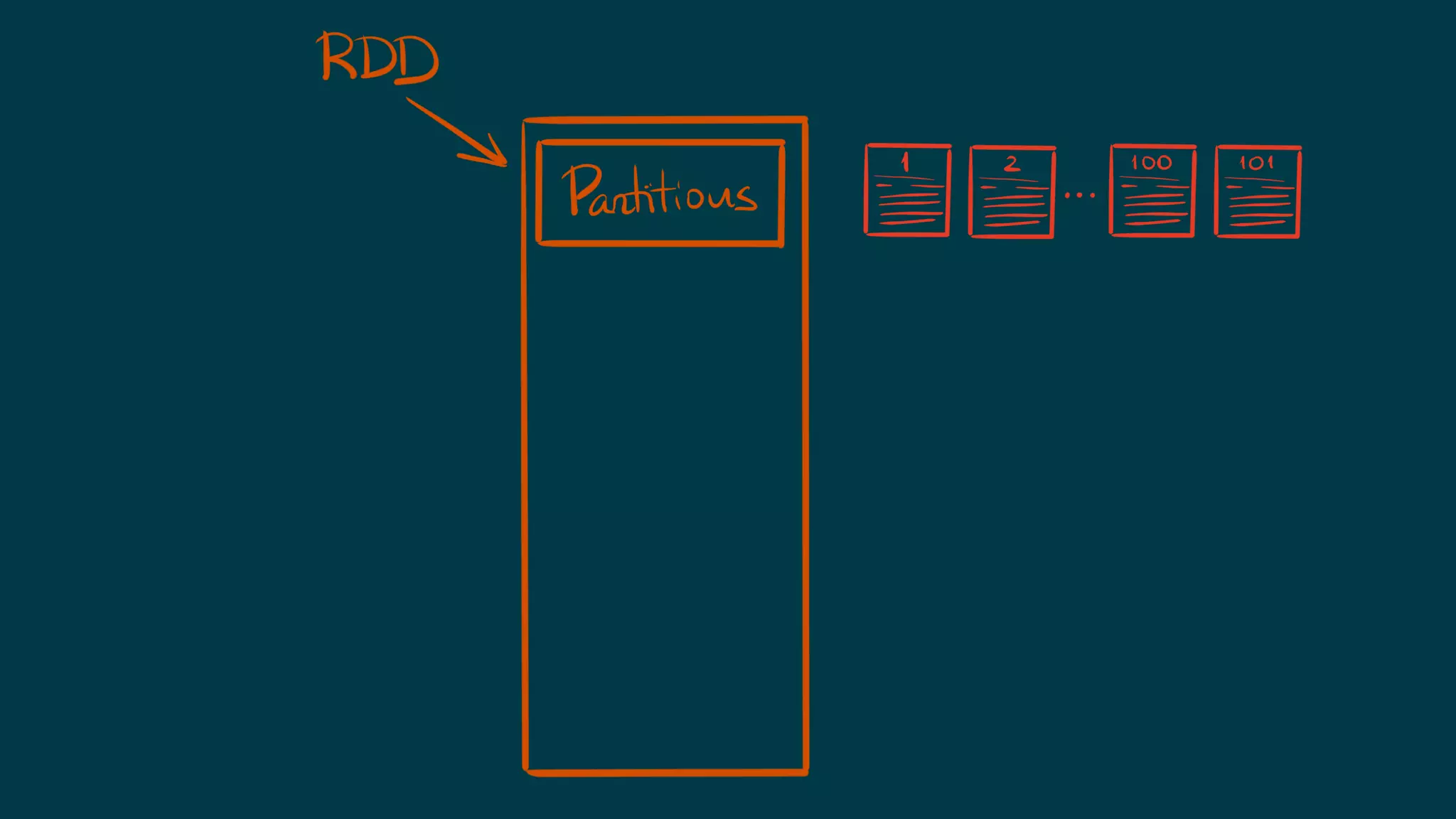

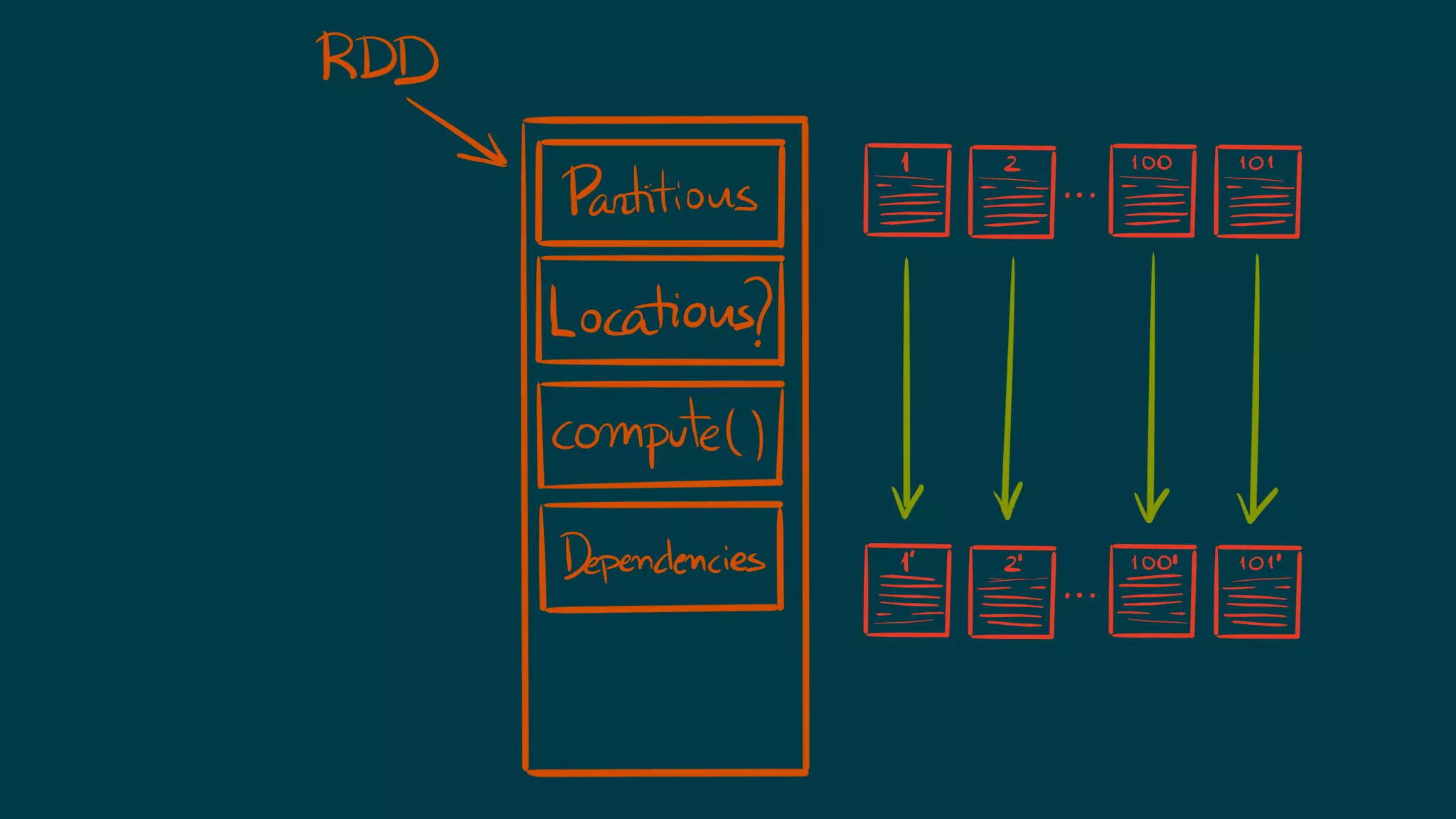

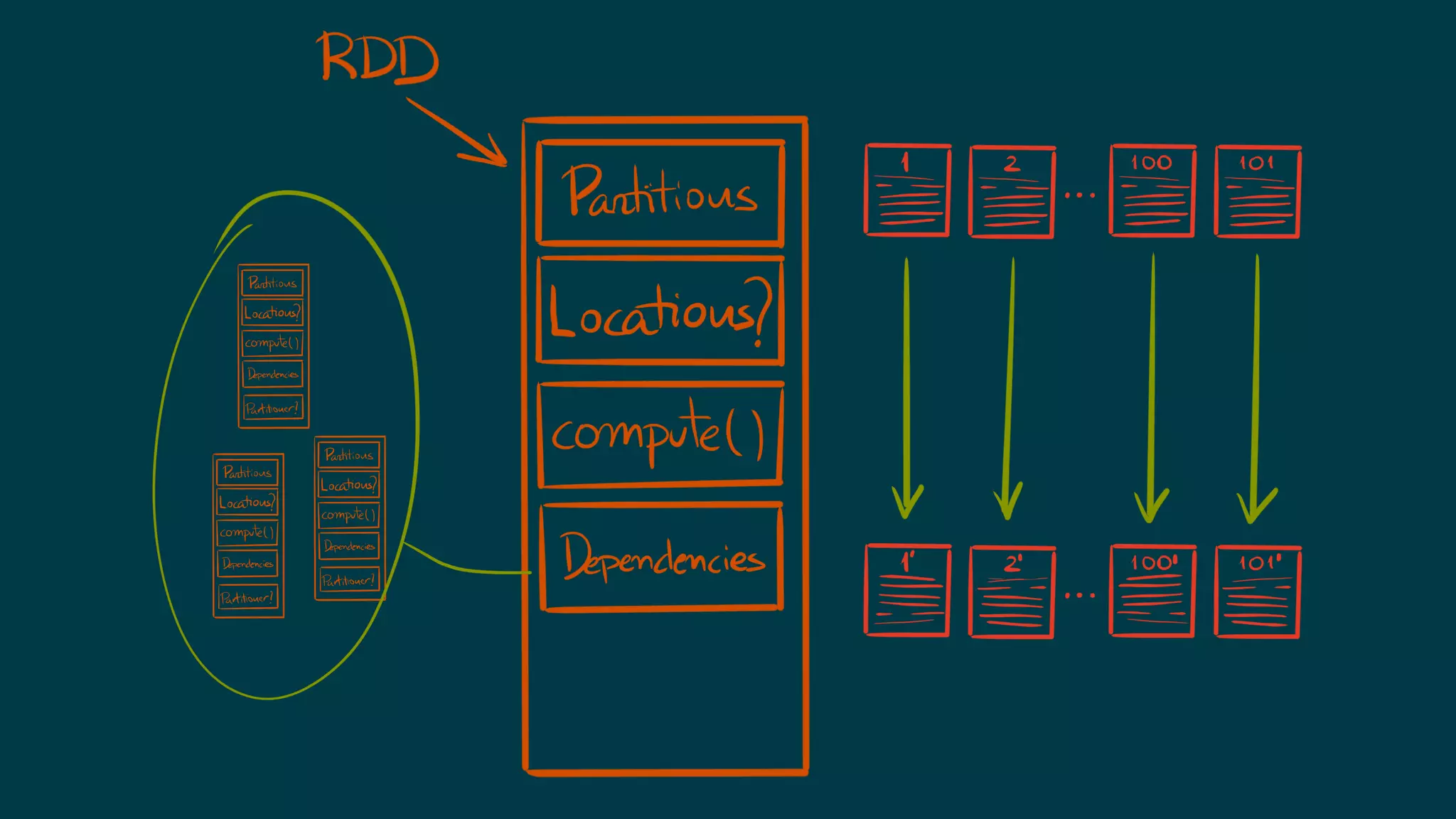

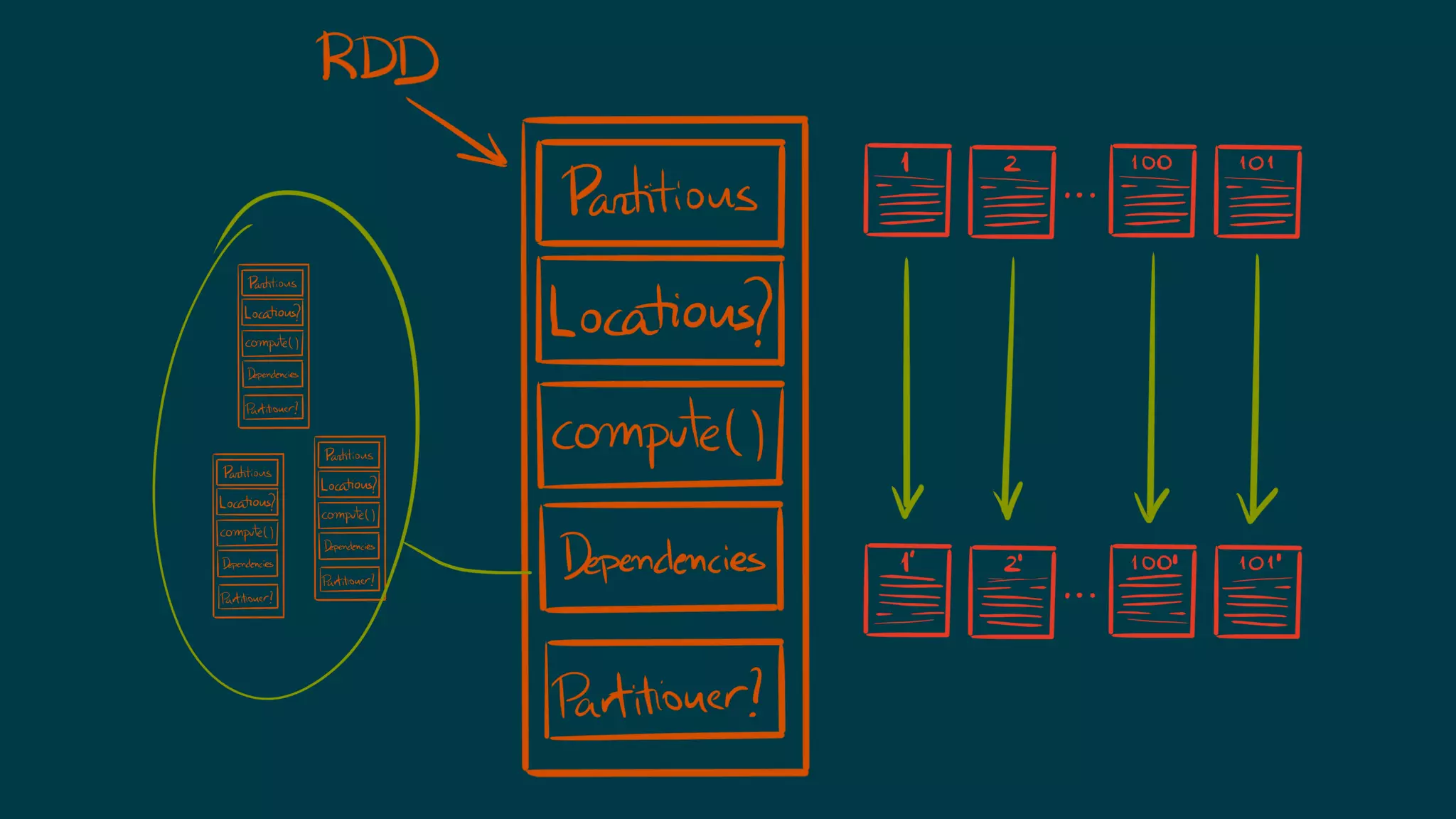

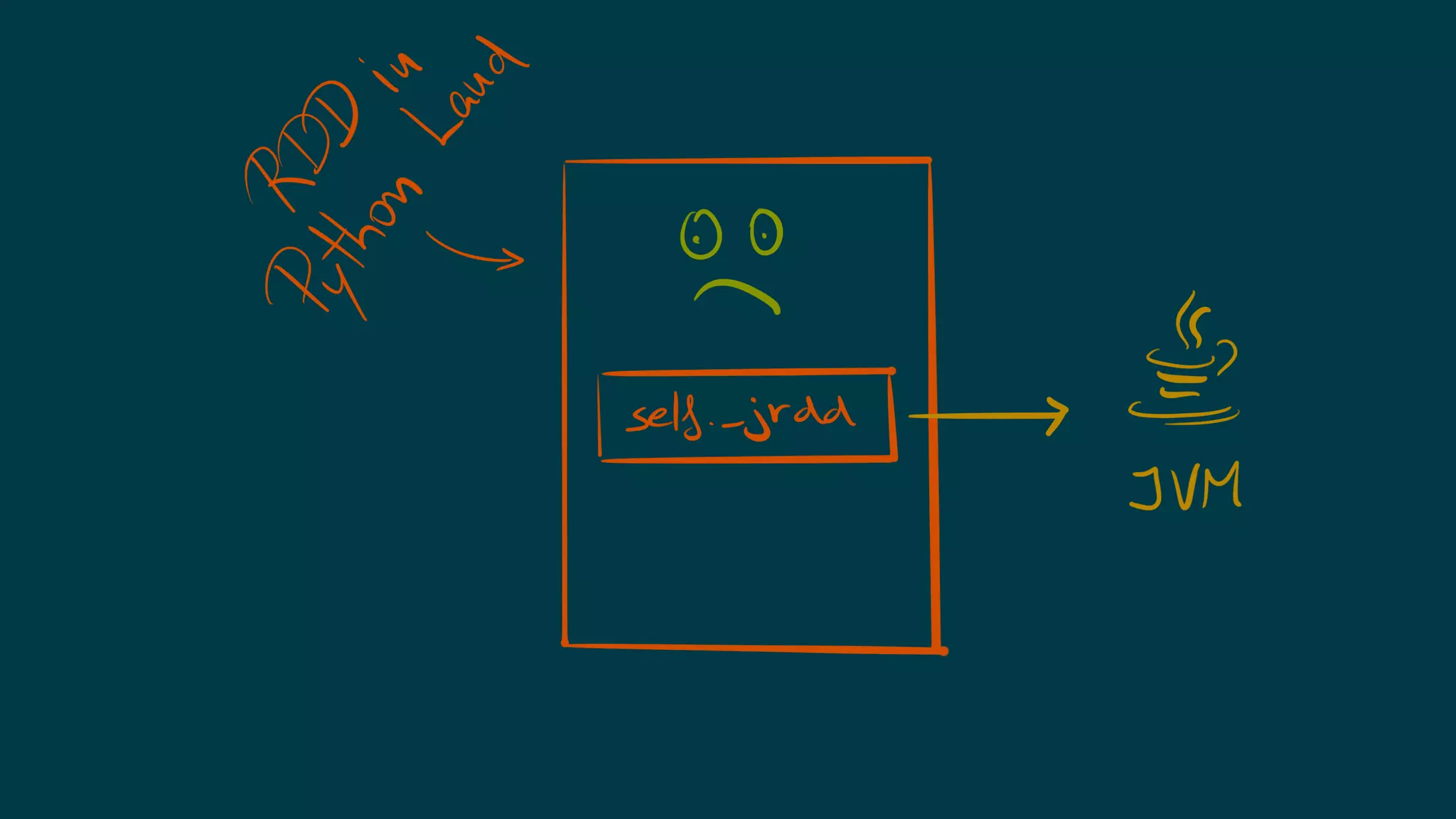

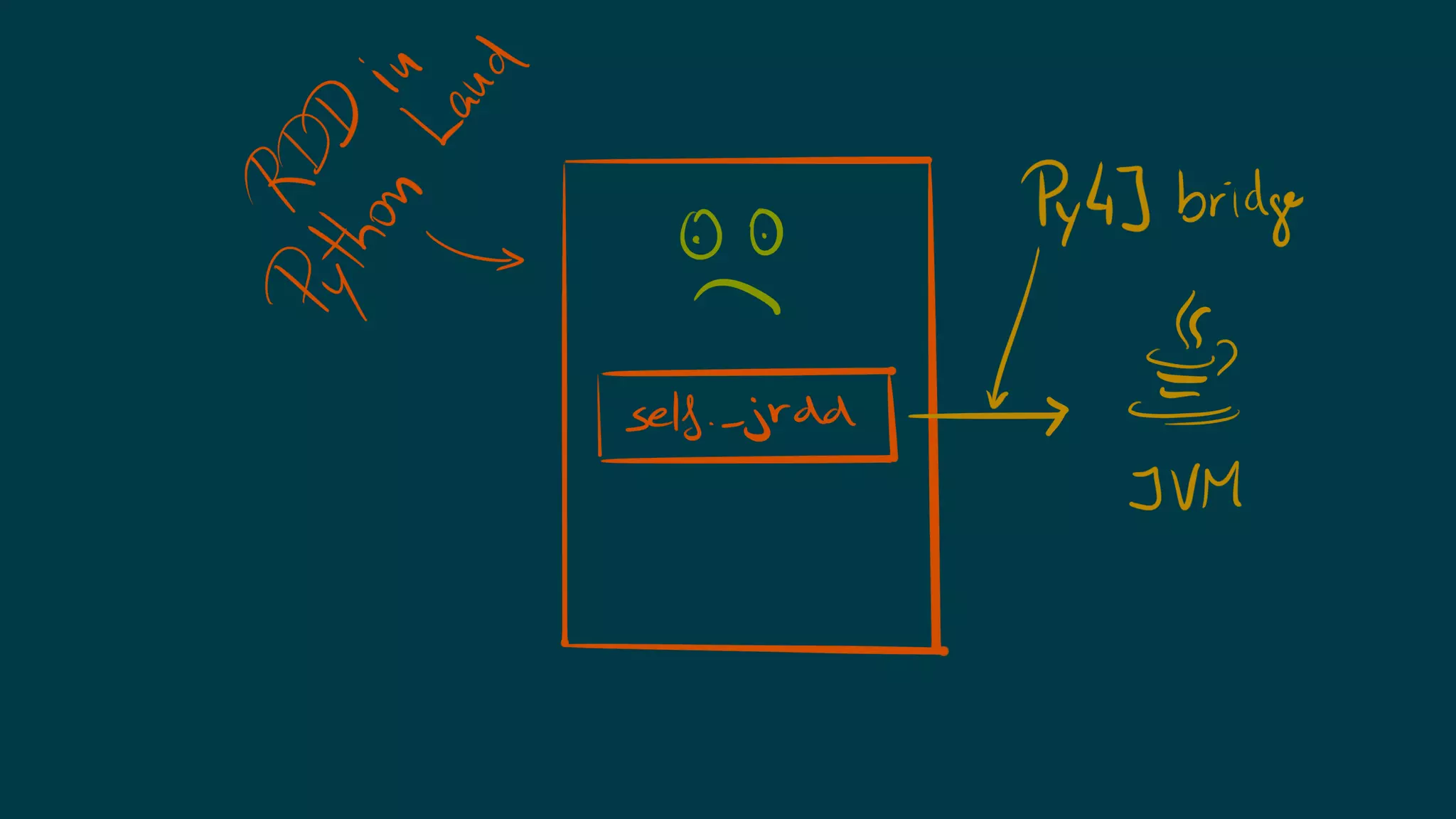

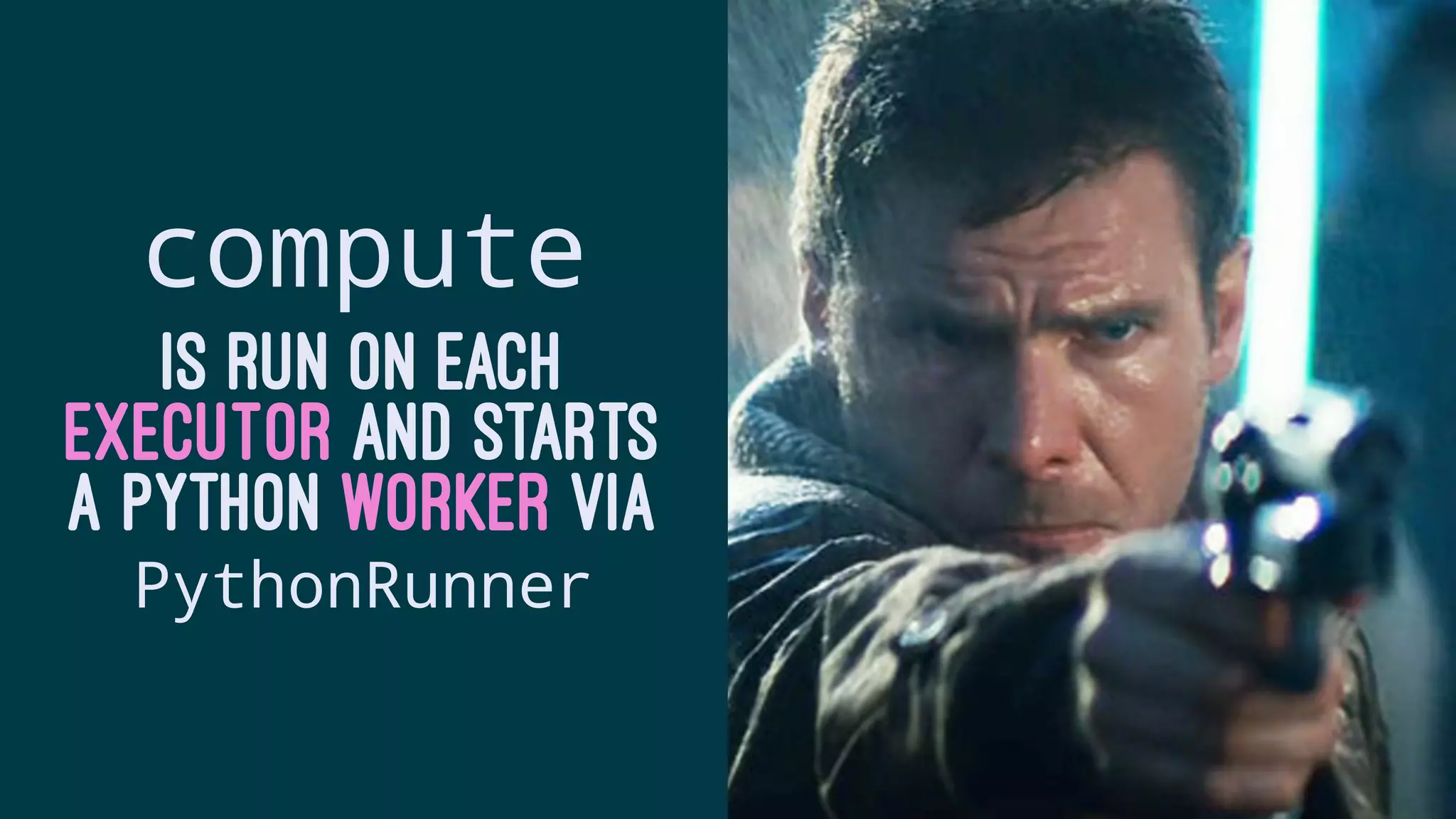

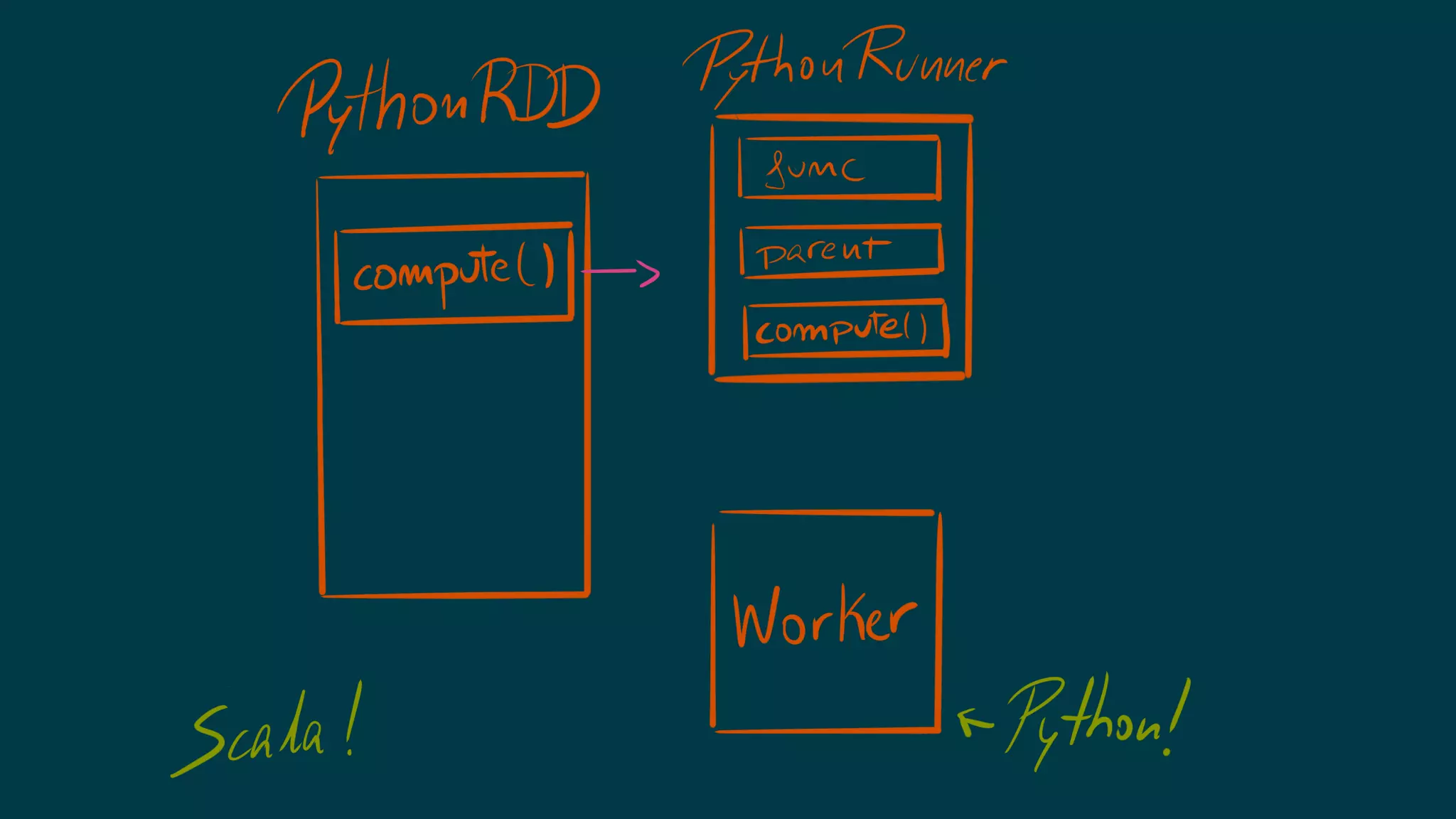

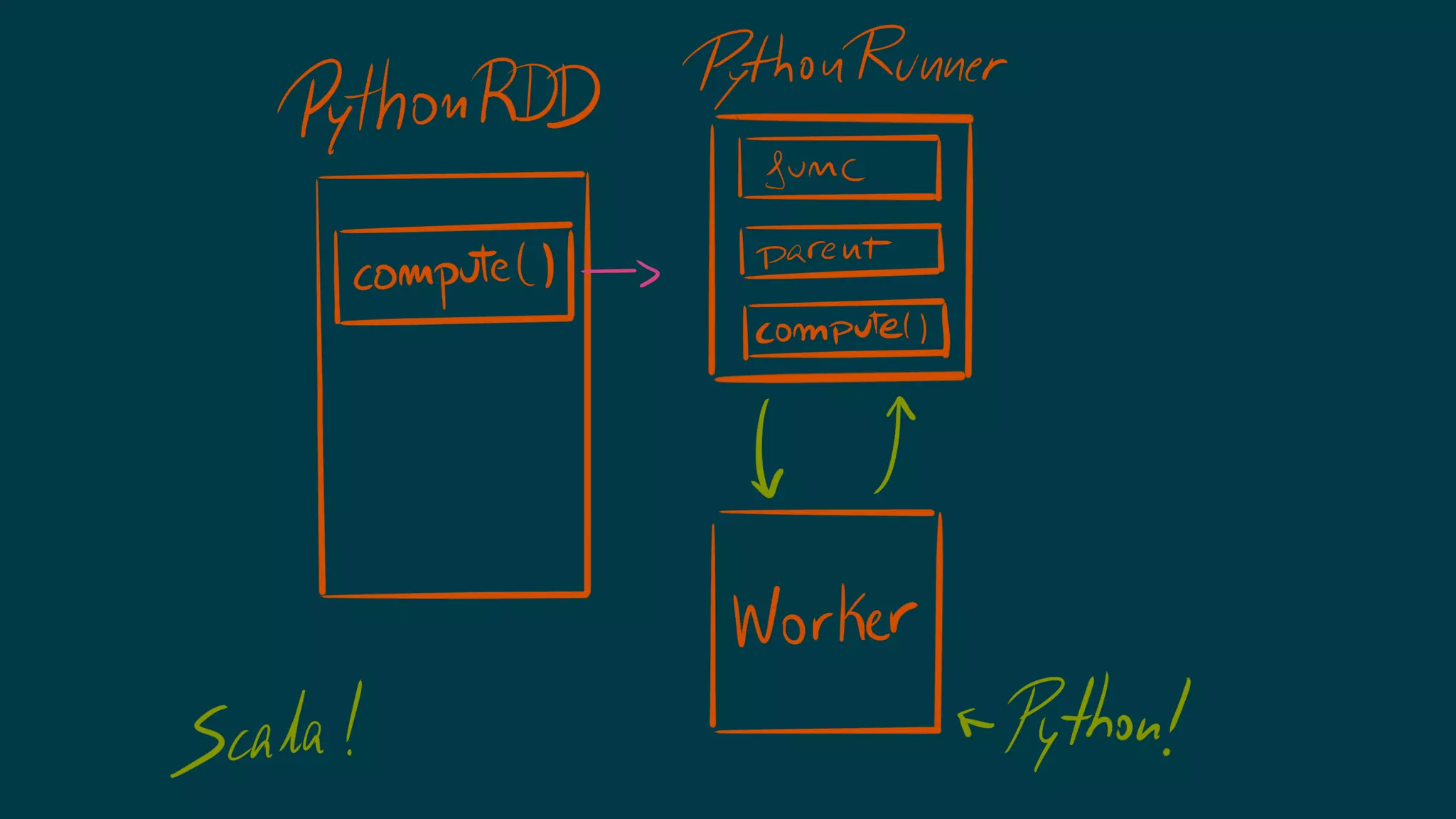

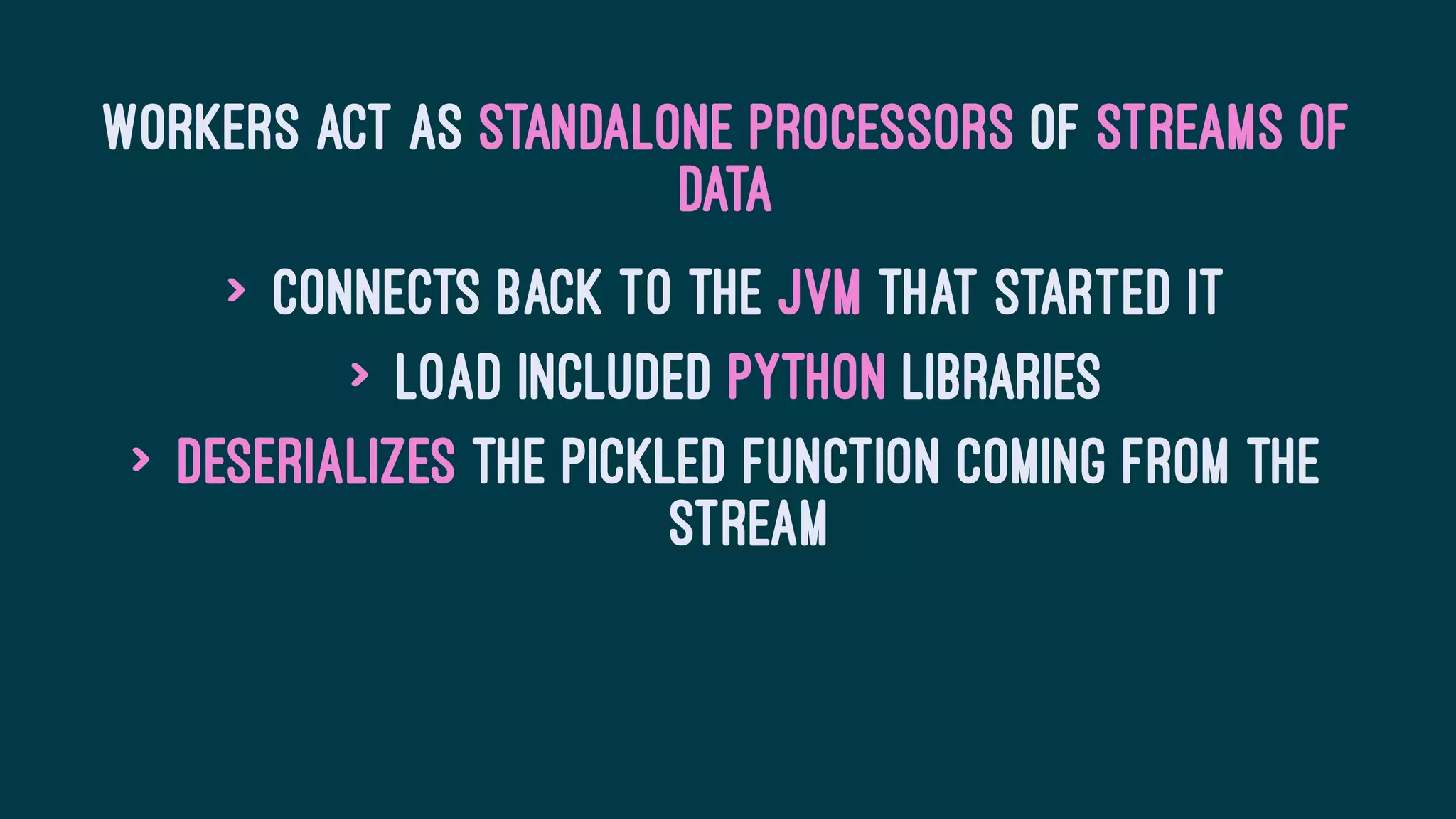

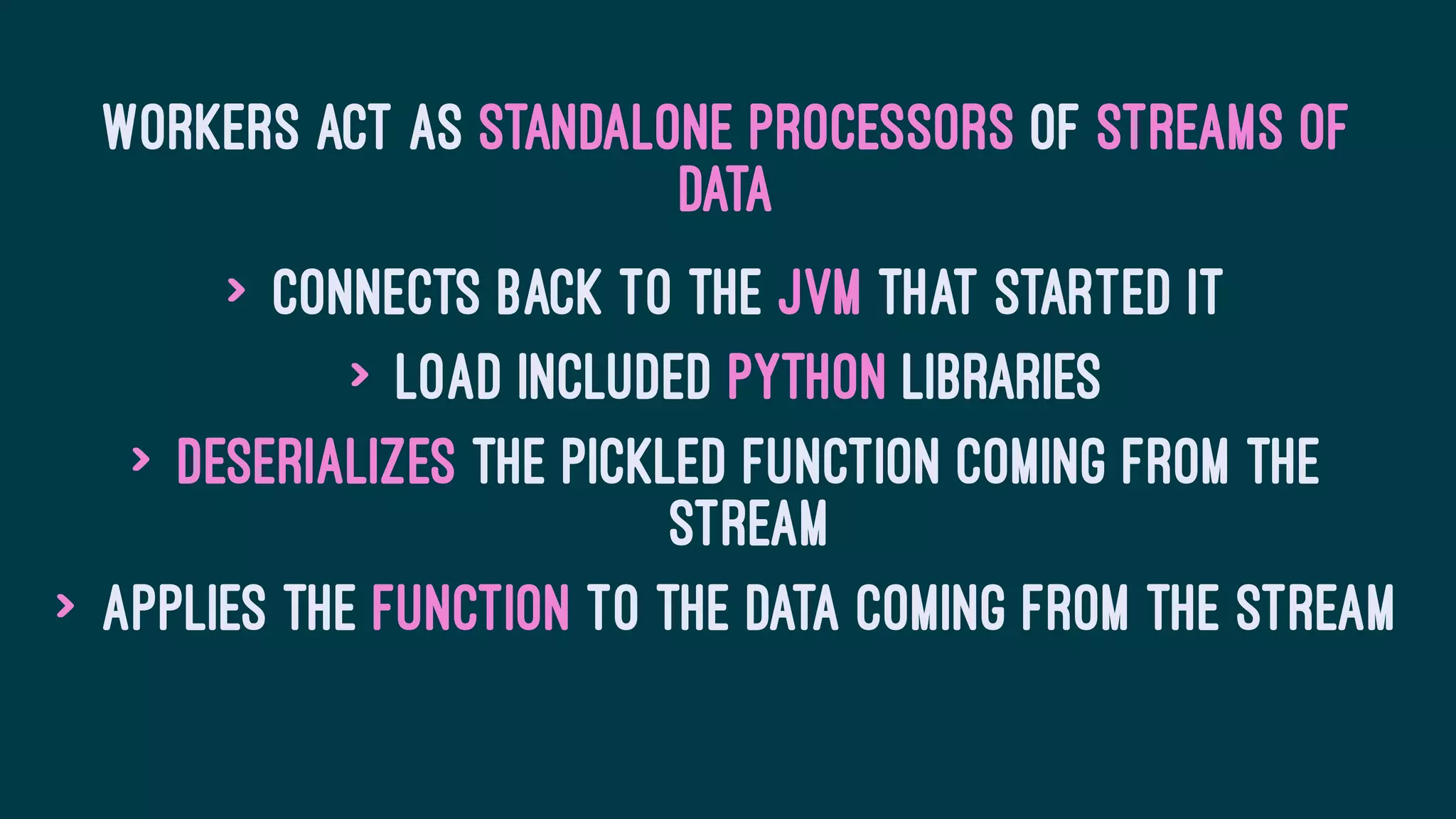

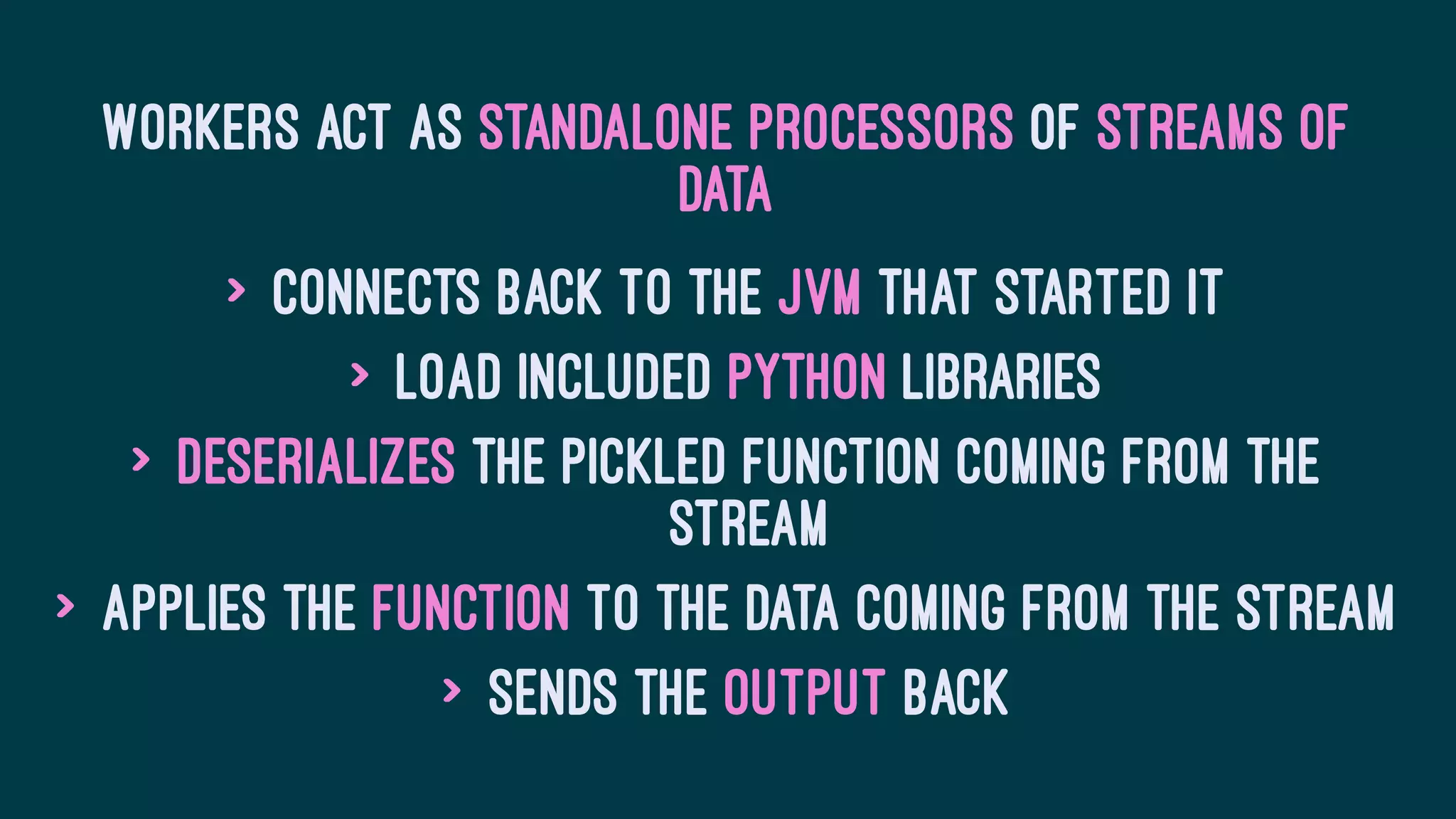

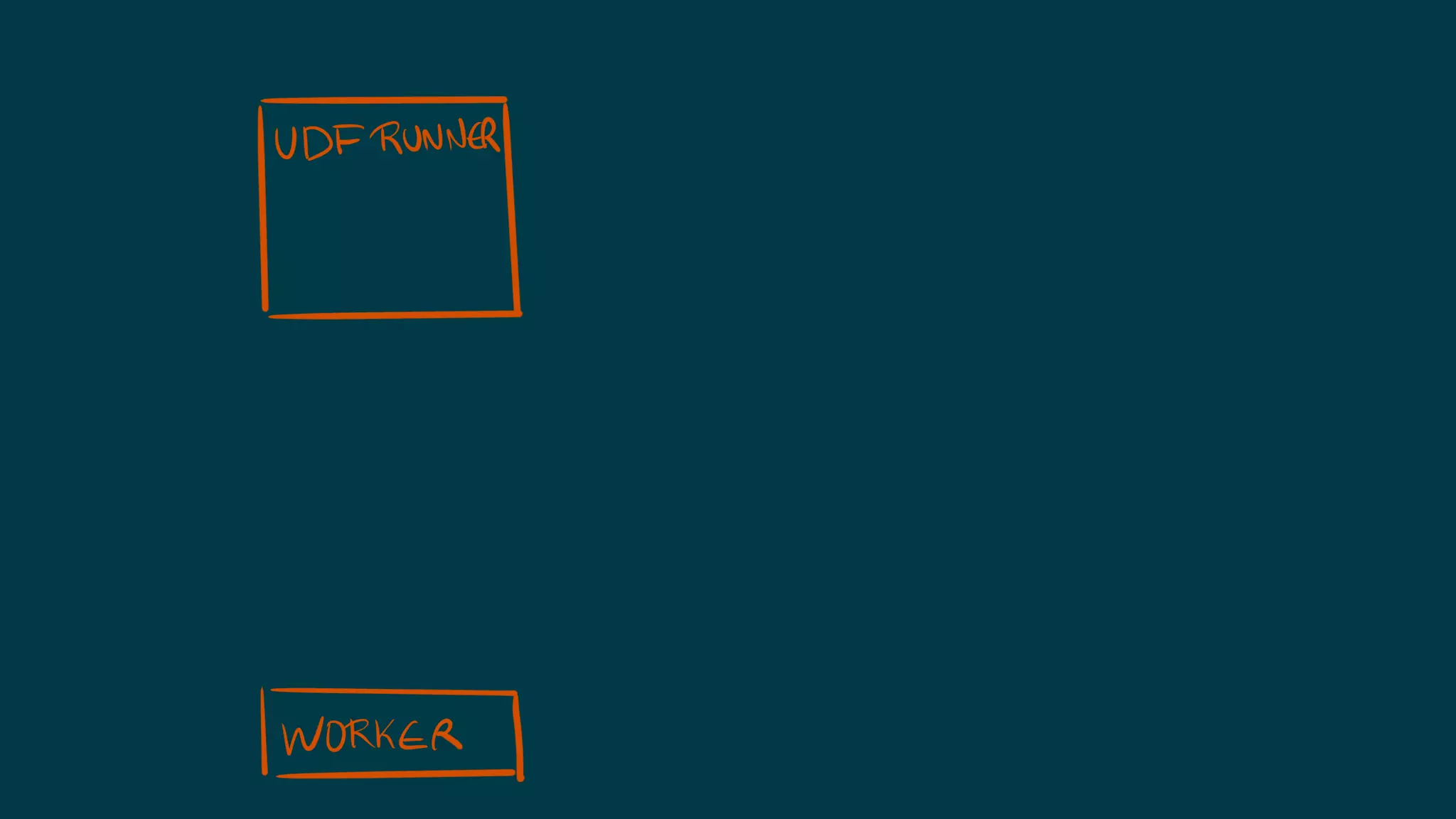

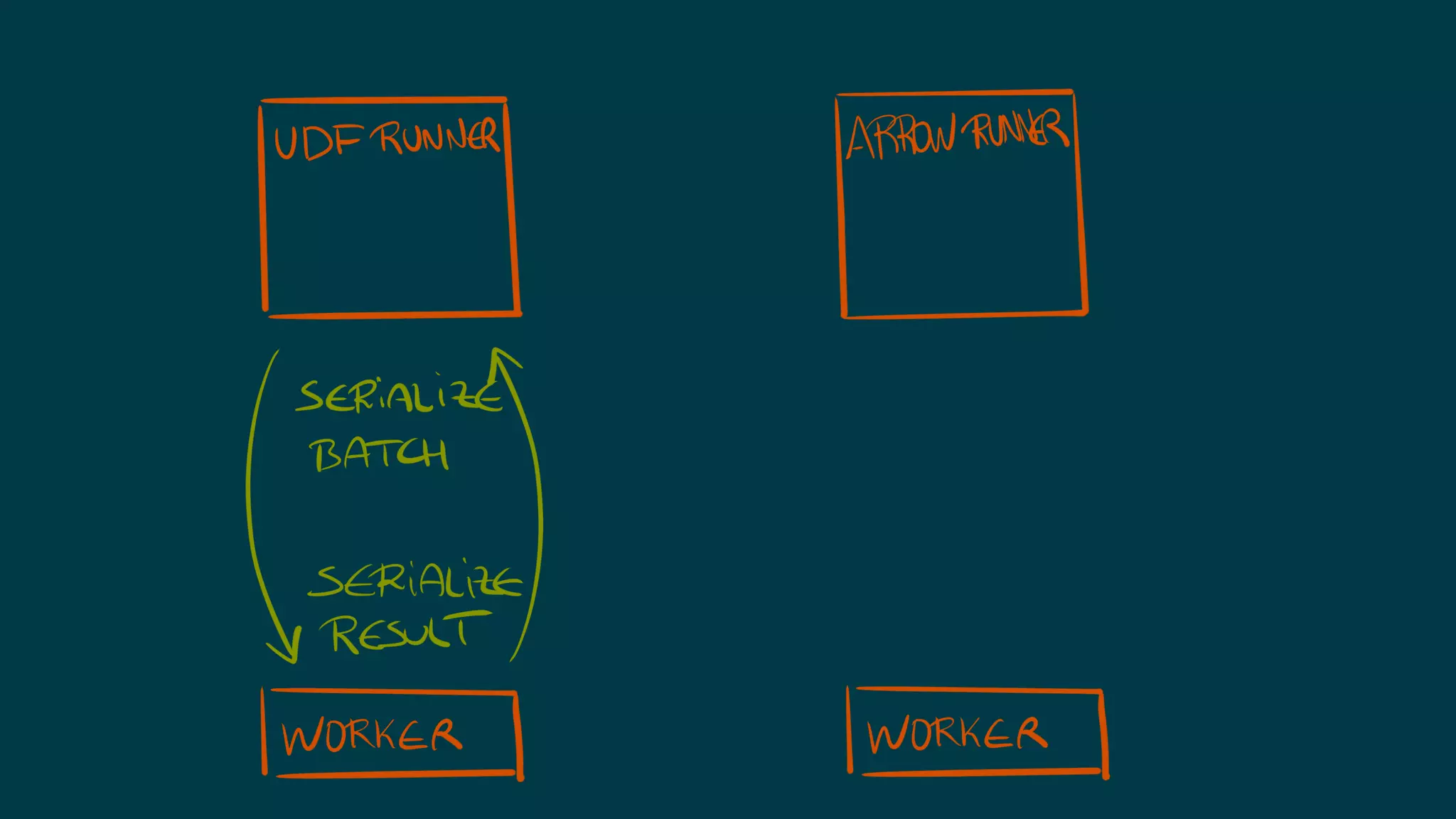

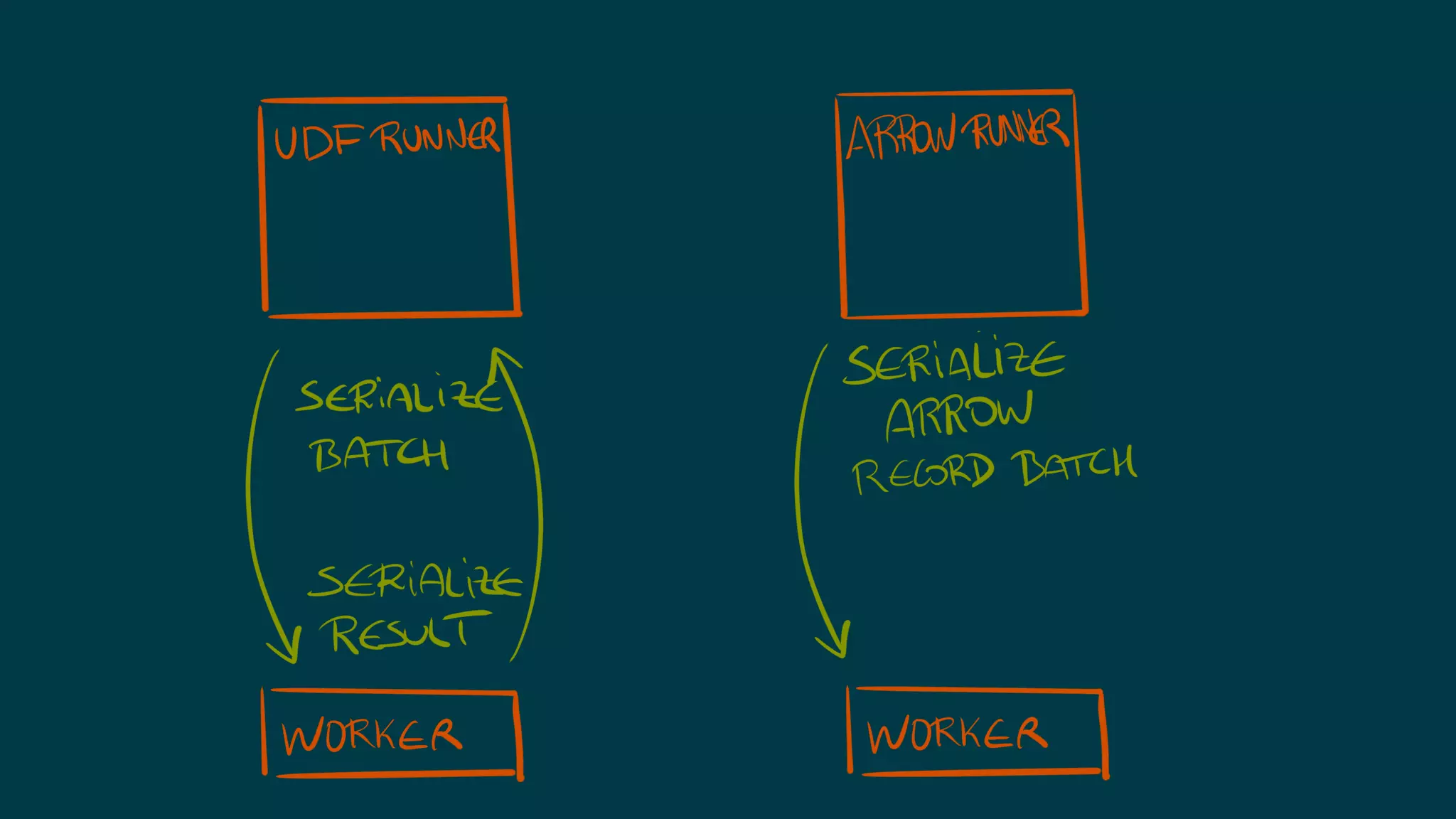

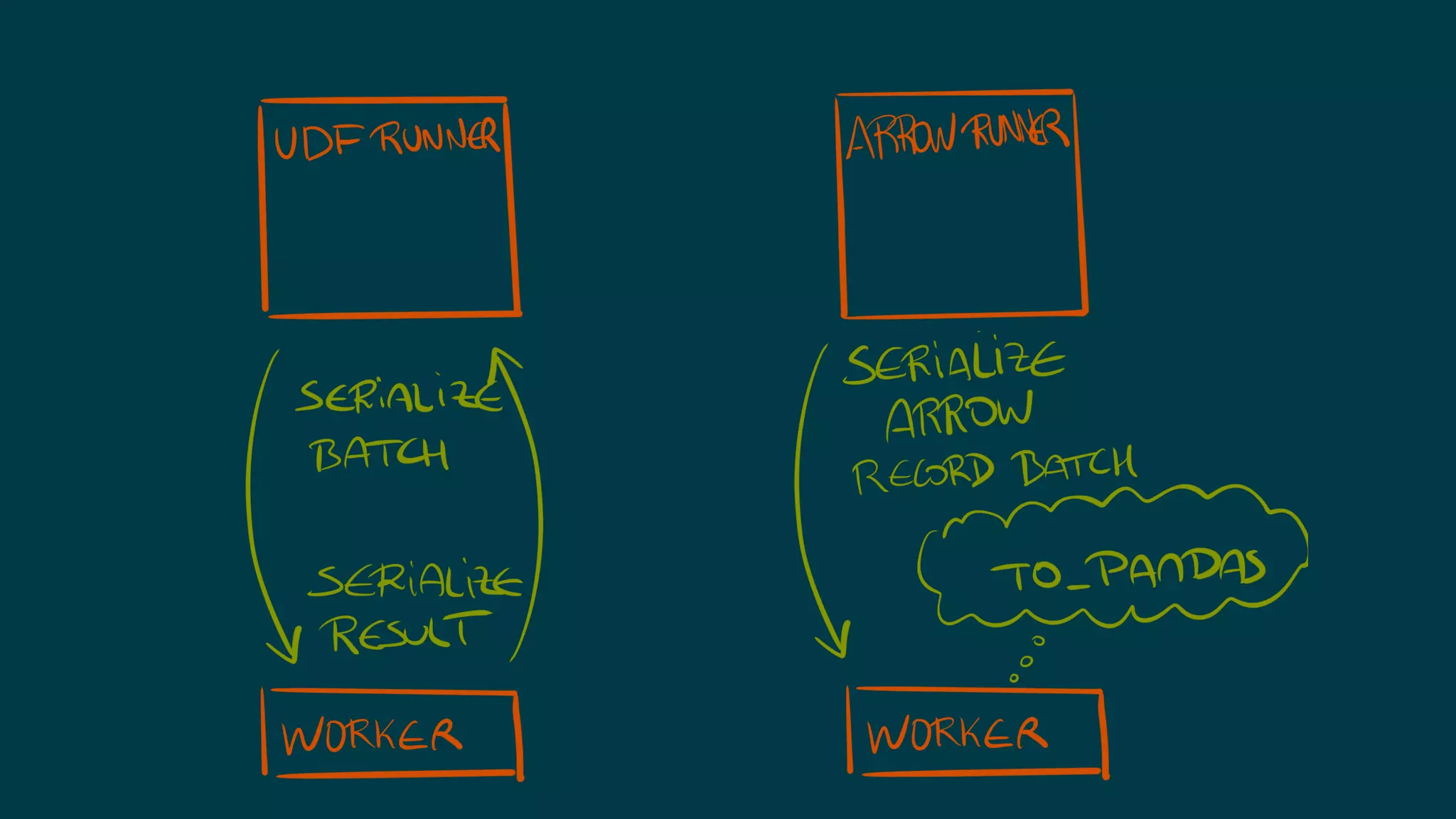

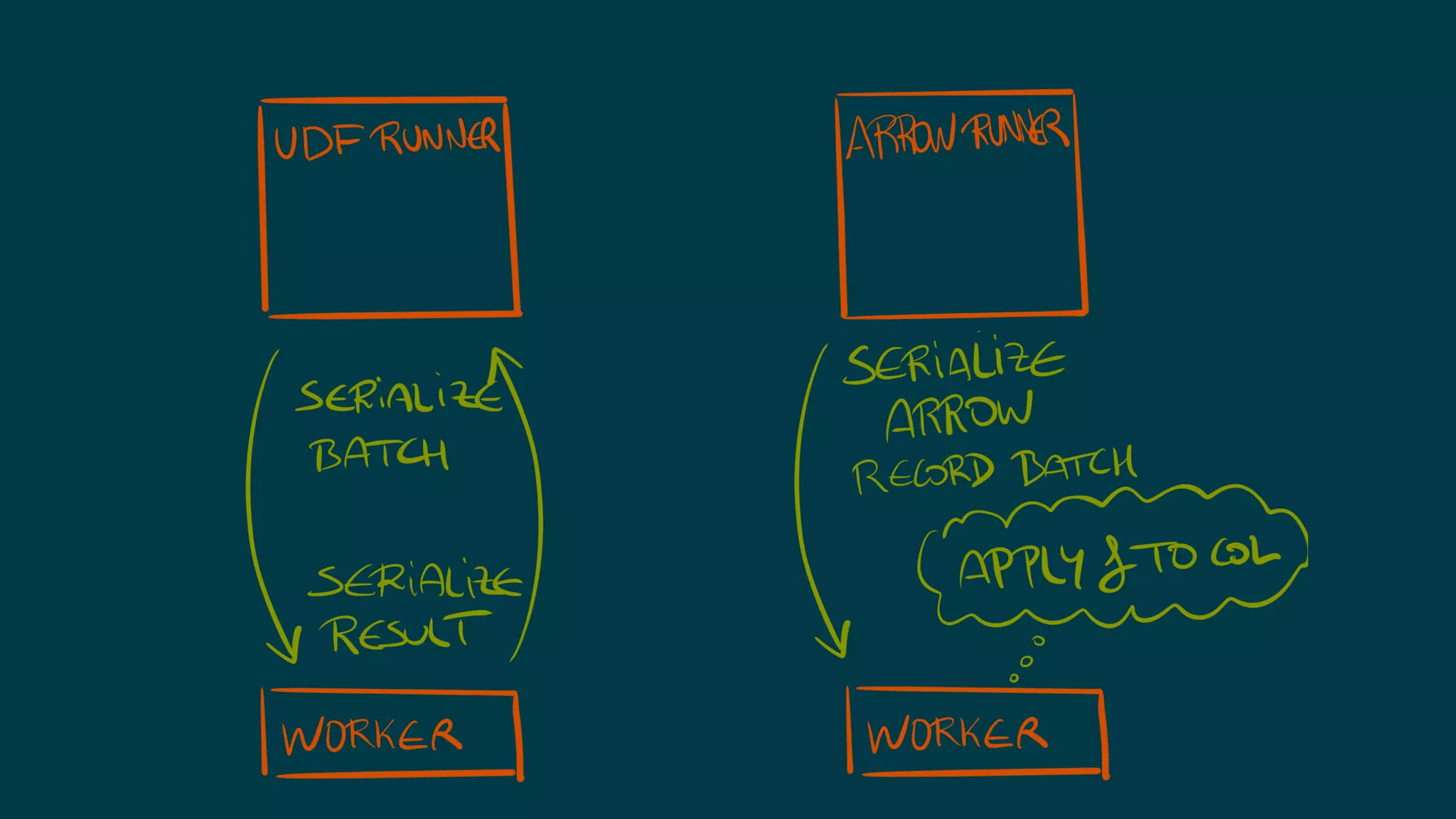

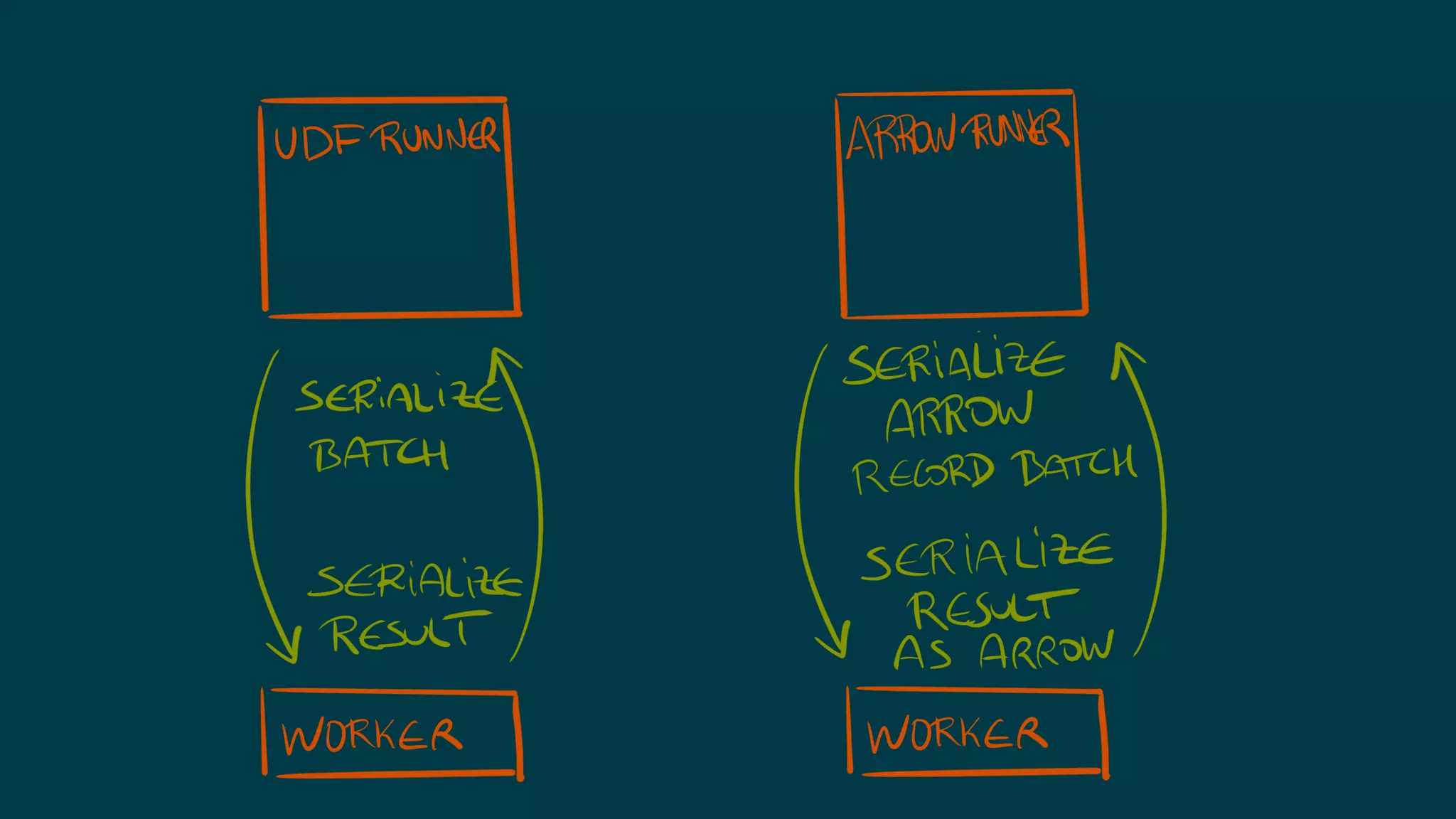

This document explains what PySpark, Pandas, and Apache Arrow each contribute to data processing and analysis. It describes PySpark as a distributed computation framework and Arrow as a cross-language columnar format that moves data efficiently, with particular attention to the optimizations available when exchanging data with Pandas. It also covers the performance gains Arrow brings to PySpark, noting that expressing transformations in Pandas can significantly reduce execution times.