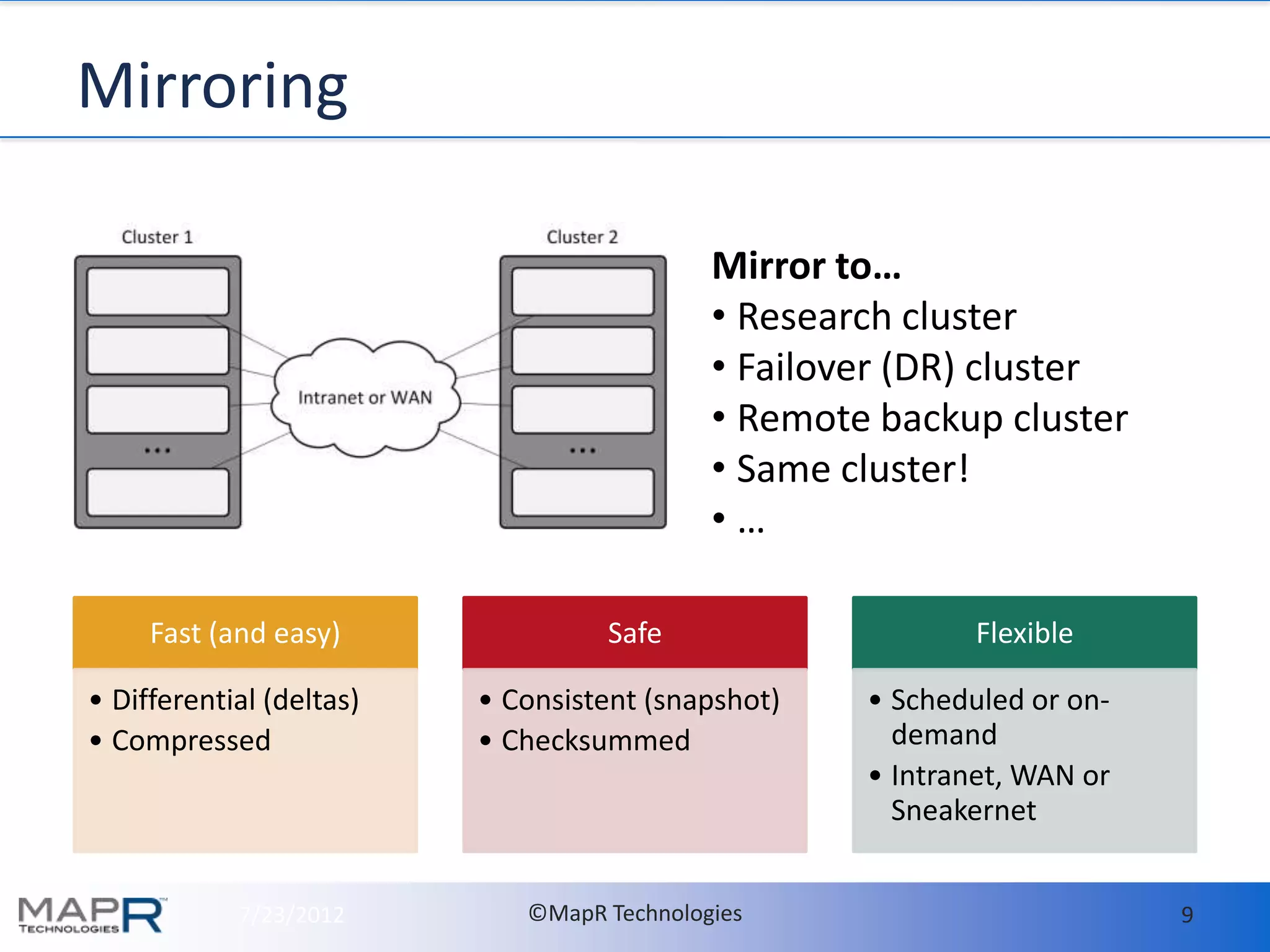

- MapR provides high availability, data protection, and disaster recovery for production HBase deployments out of the box. Hourly snapshots and cross-datacenter mirroring can be set up with a few commands.

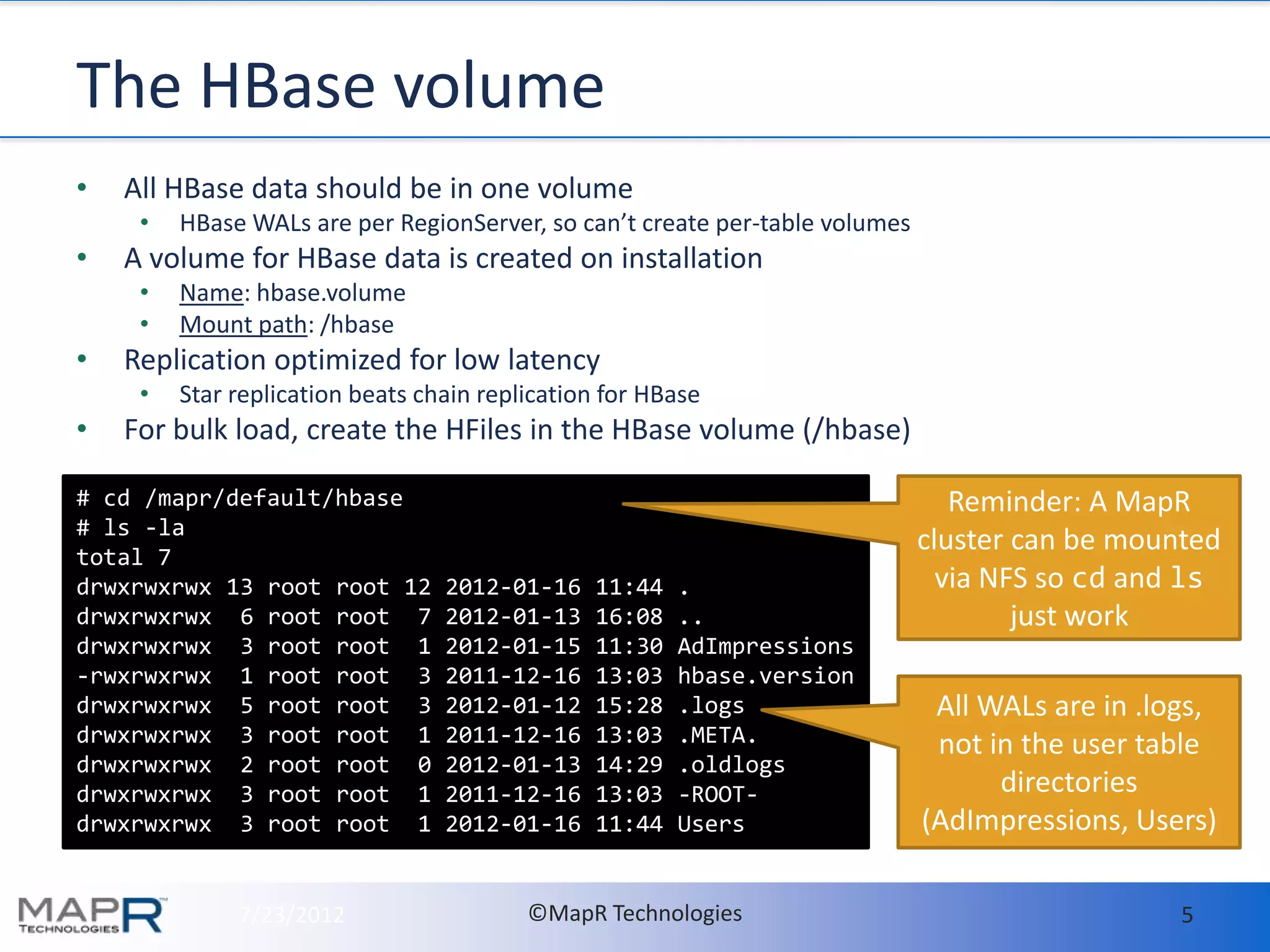

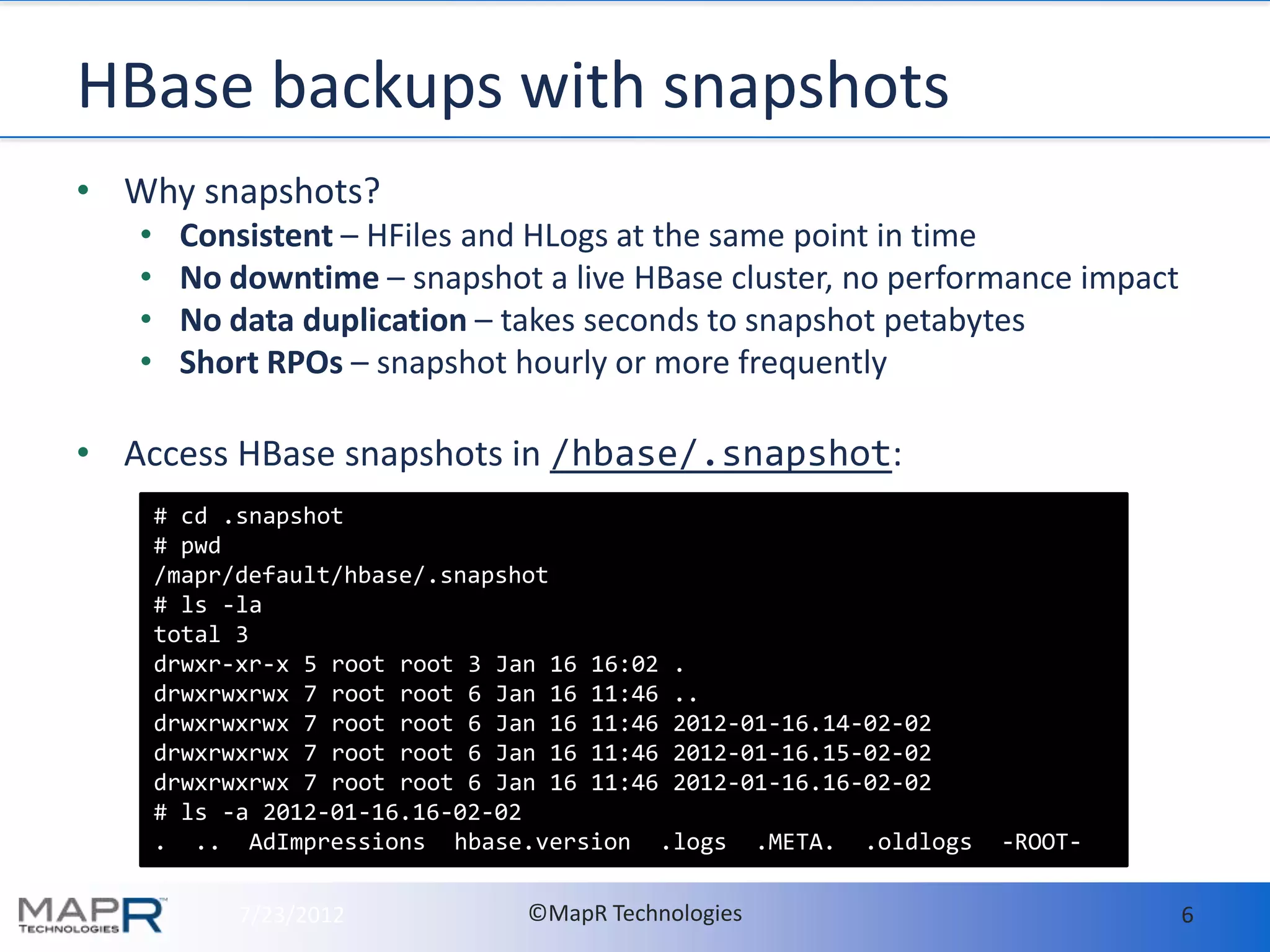

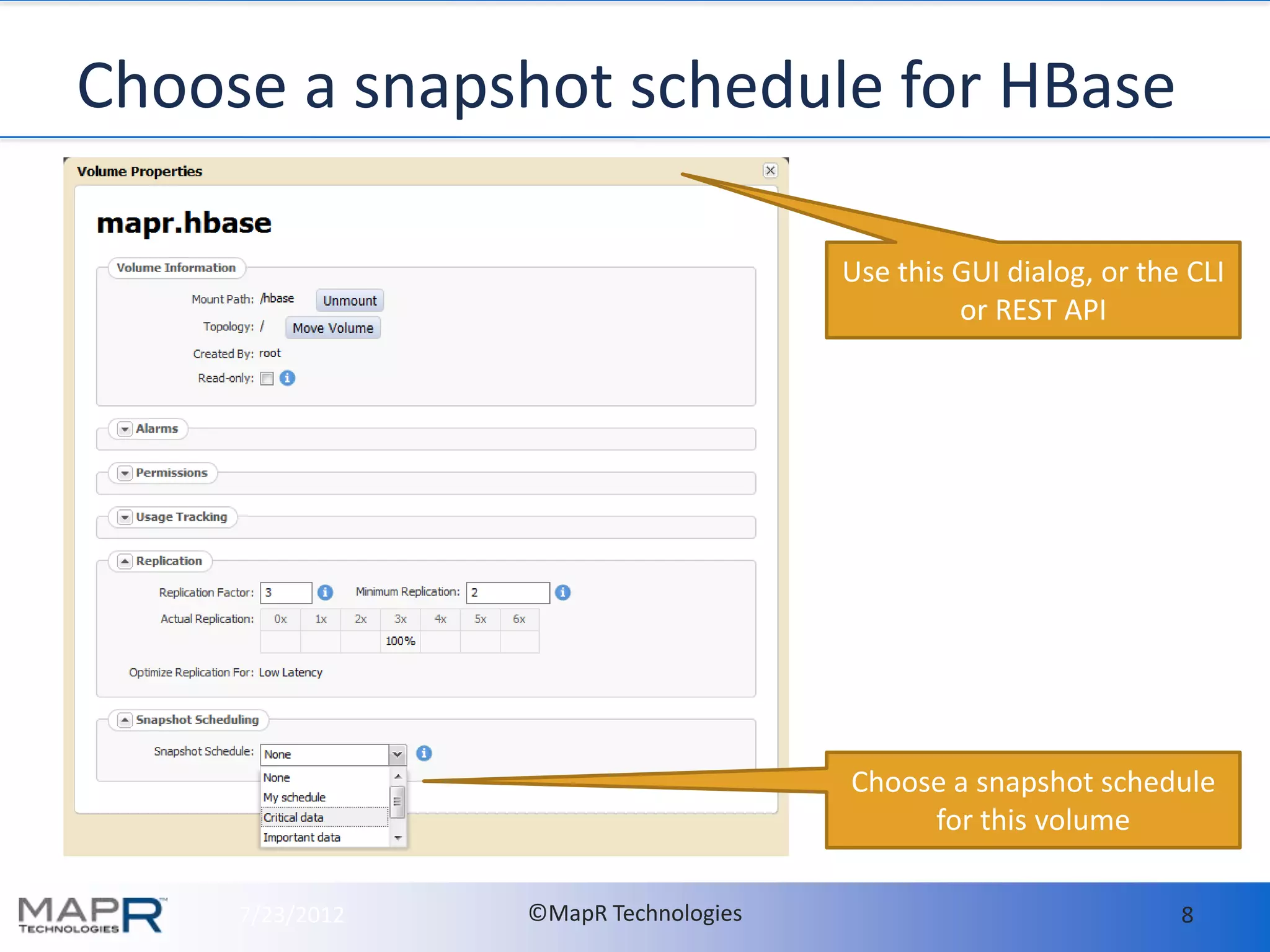

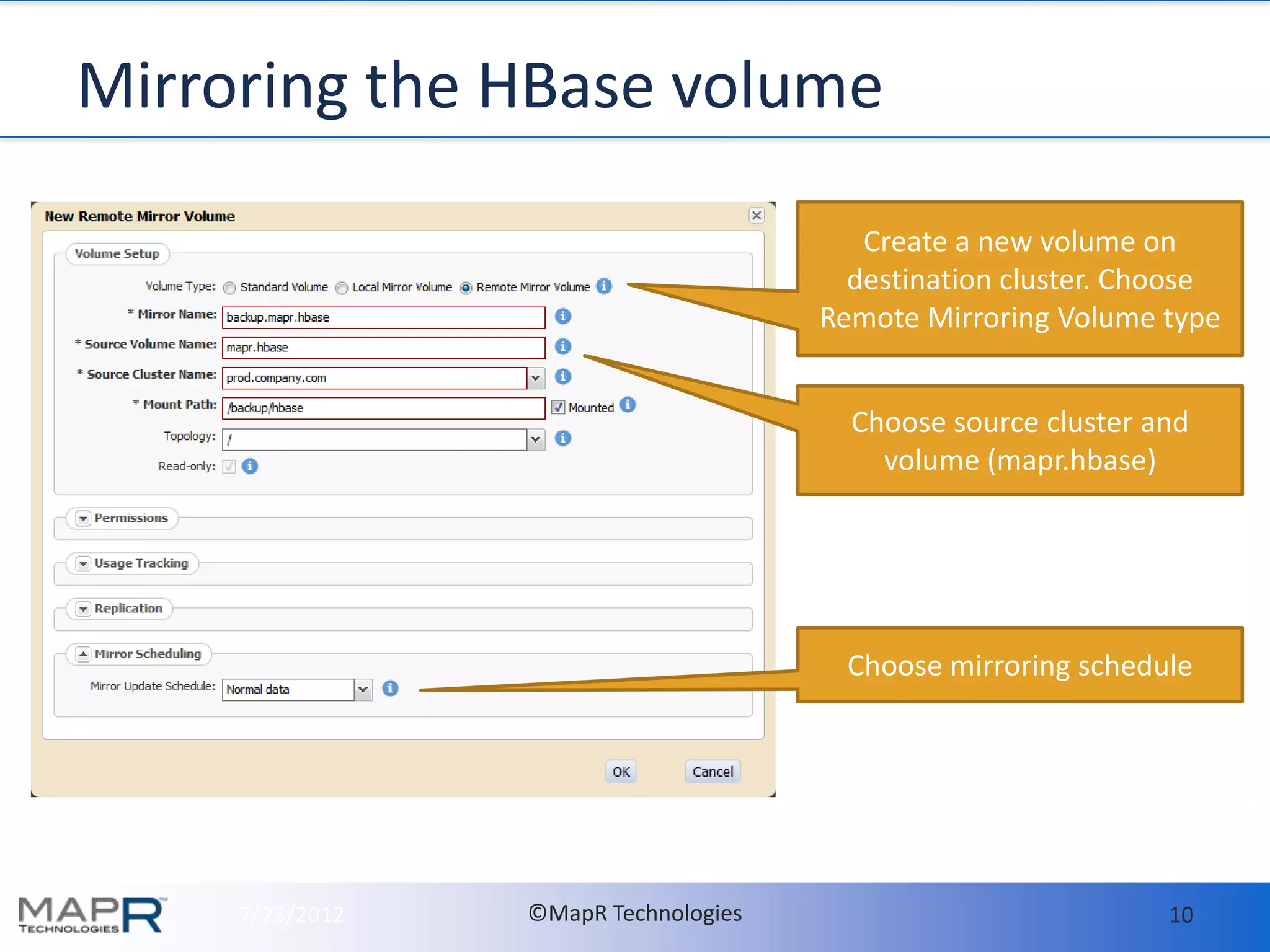

- With MapR, all HBase data should be stored in a single volume, named "hbase.volume", so that it can be managed as one unit. Snapshots of this volume provide consistent backups with no downtime, and the snapshots can be mirrored to other clusters for disaster recovery.
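
As a rough illustration of the snapshot and mirror steps described above, the sketch below only builds the `maprcli` command lines and prints them; it does not run anything. The `maprcli volume snapshot create` and `maprcli volume mirror start` subcommands come from the MapR CLI, but the snapshot naming scheme, the mirror volume name `hbase.volume.mirror`, and the helper functions are assumptions made for this example, so check the flags against the documentation for your MapR version.

```python
import shlex
from datetime import datetime

# Volume name taken from the slide; the mirror volume name is hypothetical.
HBASE_VOLUME = "hbase.volume"
MIRROR_VOLUME = "hbase.volume.mirror"


def snapshot_command(volume, when):
    """Build a command that snapshots `volume`, naming the snapshot by time."""
    # Naming scheme (volume.YYYY-MM-DD.HH-MM) is an assumption for this sketch.
    name = f"{volume}.{when:%Y-%m-%d.%H-%M}"
    return ["maprcli", "volume", "snapshot", "create",
            "-volume", volume, "-snapshotname", name]


def mirror_start_command(mirror_volume):
    """Build a command that starts a sync of an existing mirror volume."""
    return ["maprcli", "volume", "mirror", "start", "-name", mirror_volume]


# Print the commands instead of executing them, so they can be reviewed first.
print(shlex.join(snapshot_command(HBASE_VOLUME, datetime(2013, 5, 1, 14, 0))))
print(shlex.join(mirror_start_command(MIRROR_VOLUME)))
```

The mirror command is meant to run on the destination cluster, where the mirror volume would already have been created with the source volume as its origin. Hourly snapshots would normally be attached to the volume as a schedule rather than driven by a script like this one.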

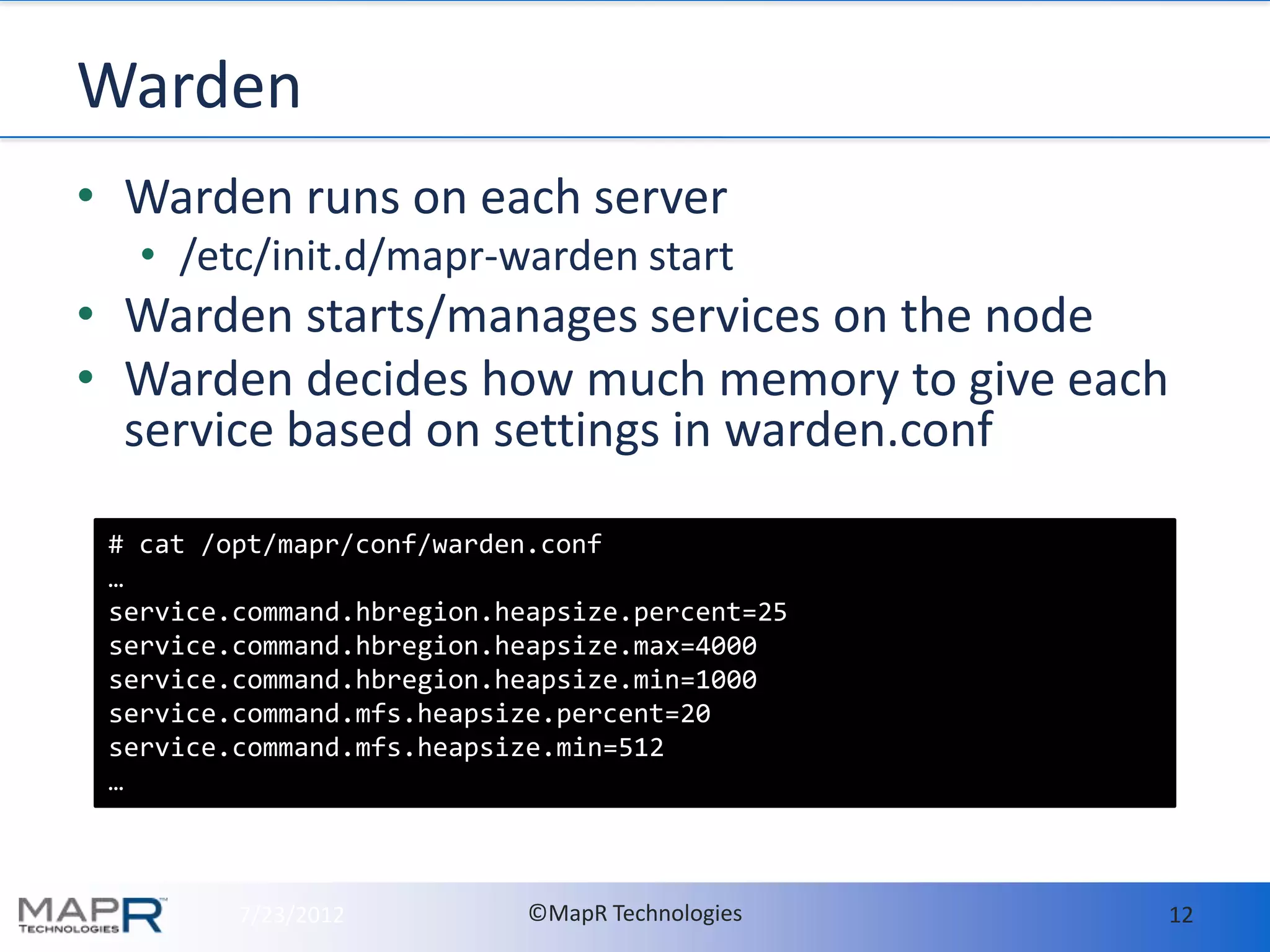

- MapR's Warden service sets RegionServer memory automatically, based on its configuration. The defaults generally work well, but tuning guidelines are provided for large deployments.
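
To make the automatic tuning concrete, here is a minimal sketch of one way such a heap size could be derived: take a percentage of the node's memory and clamp it between a minimum and a maximum. This is an assumption about the general shape of the calculation, not Warden's actual code. The three parameters are modelled on the `service.command.hbregion.heapsize.percent`, `.min`, and `.max` settings in `warden.conf`, and the values used below are illustrative only.

```python
def regionserver_heap_mb(total_mb, percent, min_mb, max_mb):
    """Return a heap size in MB: `percent` of total memory, clamped to [min, max].

    Hypothetical helper; it mirrors the percent/min/max style of settings,
    not Warden's real implementation.
    """
    wanted = total_mb * percent // 100
    return max(min_mb, min(wanted, max_mb))


# Illustrative values only: 25% of memory, at least 1000 MB, at most 4000 MB.
for total in (2048, 8192, 65536):
    print(total, regionserver_heap_mb(total, 25, 1000, 4000))
```

Under these assumed settings, small nodes are held at the minimum and large nodes are capped at the maximum, which is why a large deployment may want to raise the cap rather than rely on the defaults.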