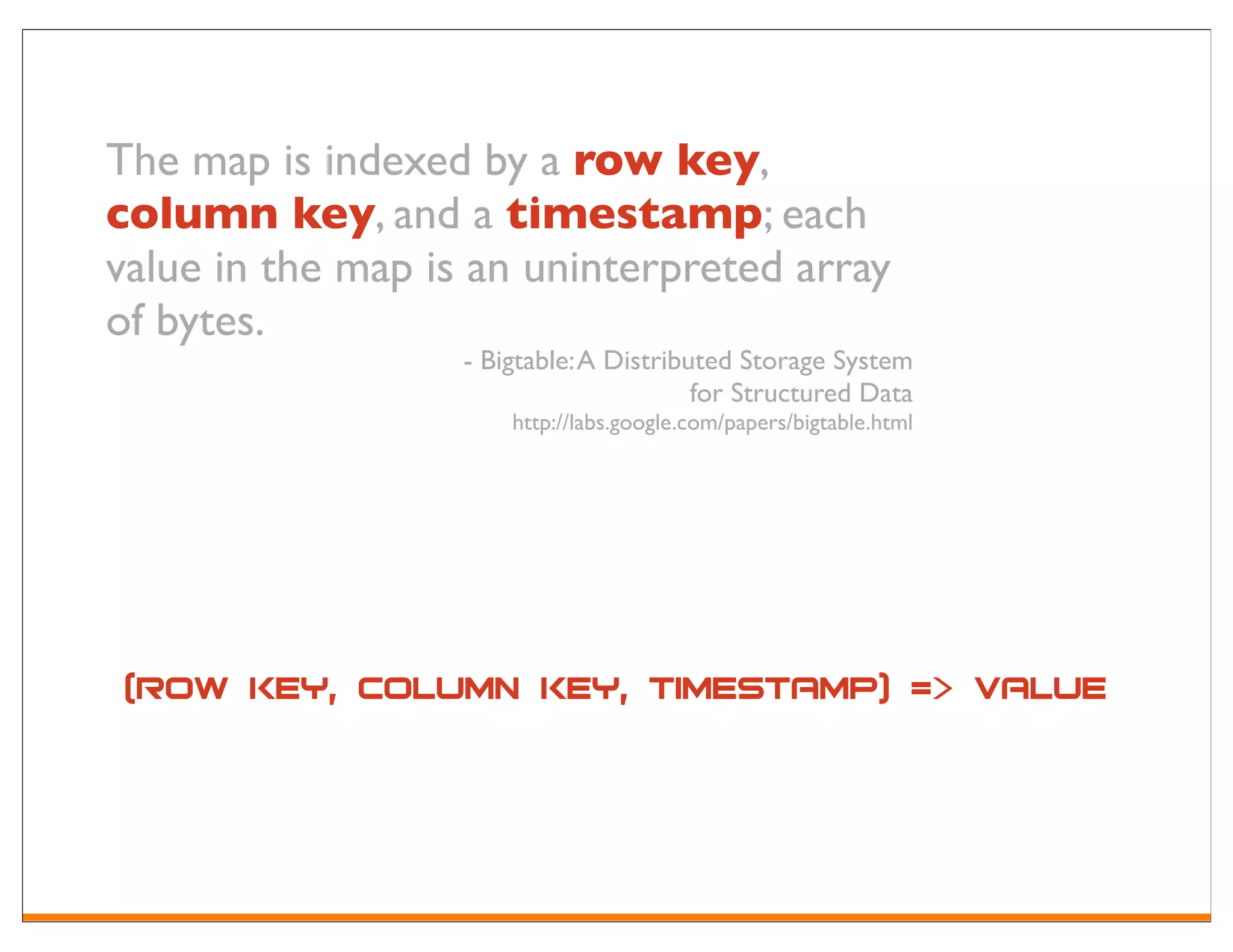

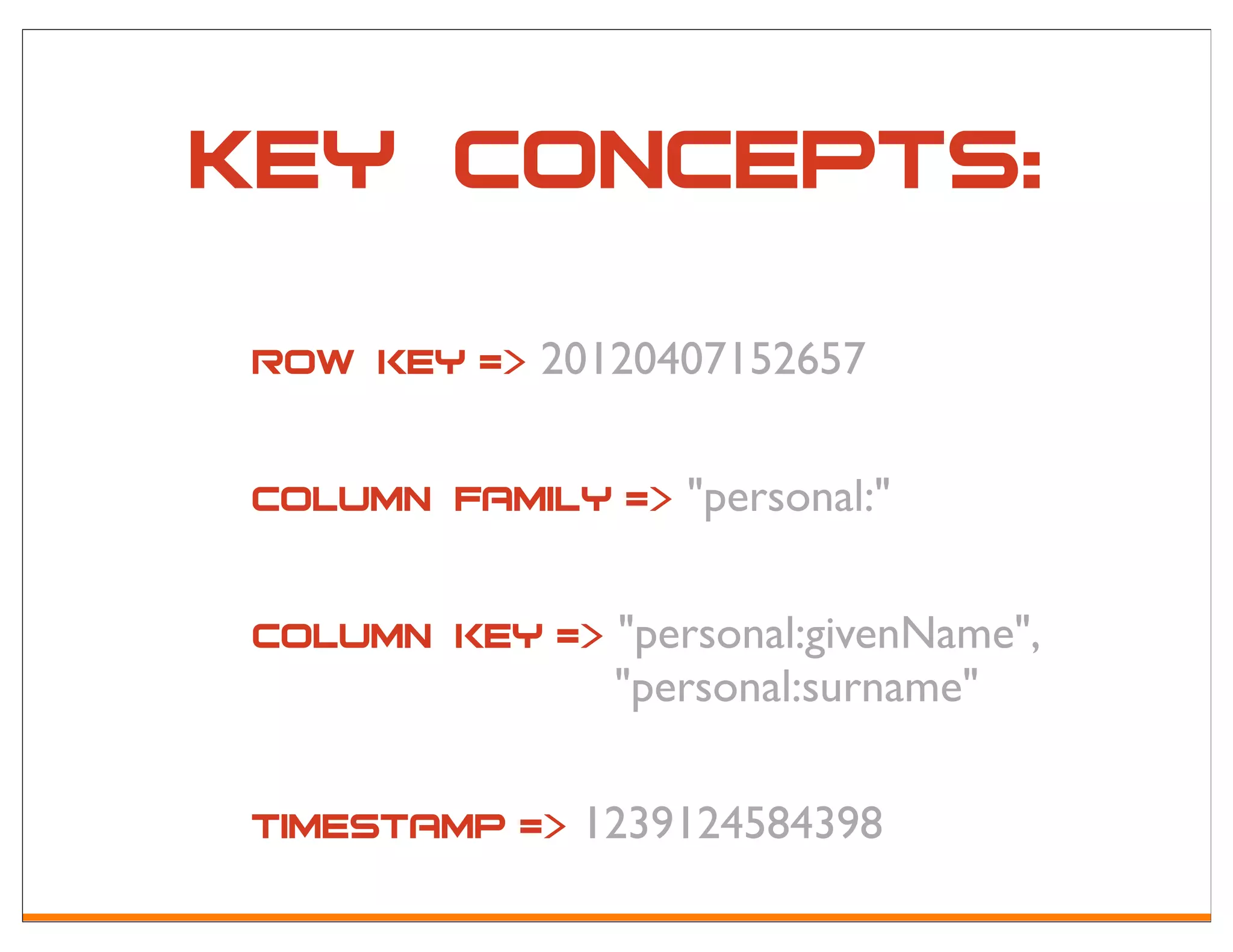

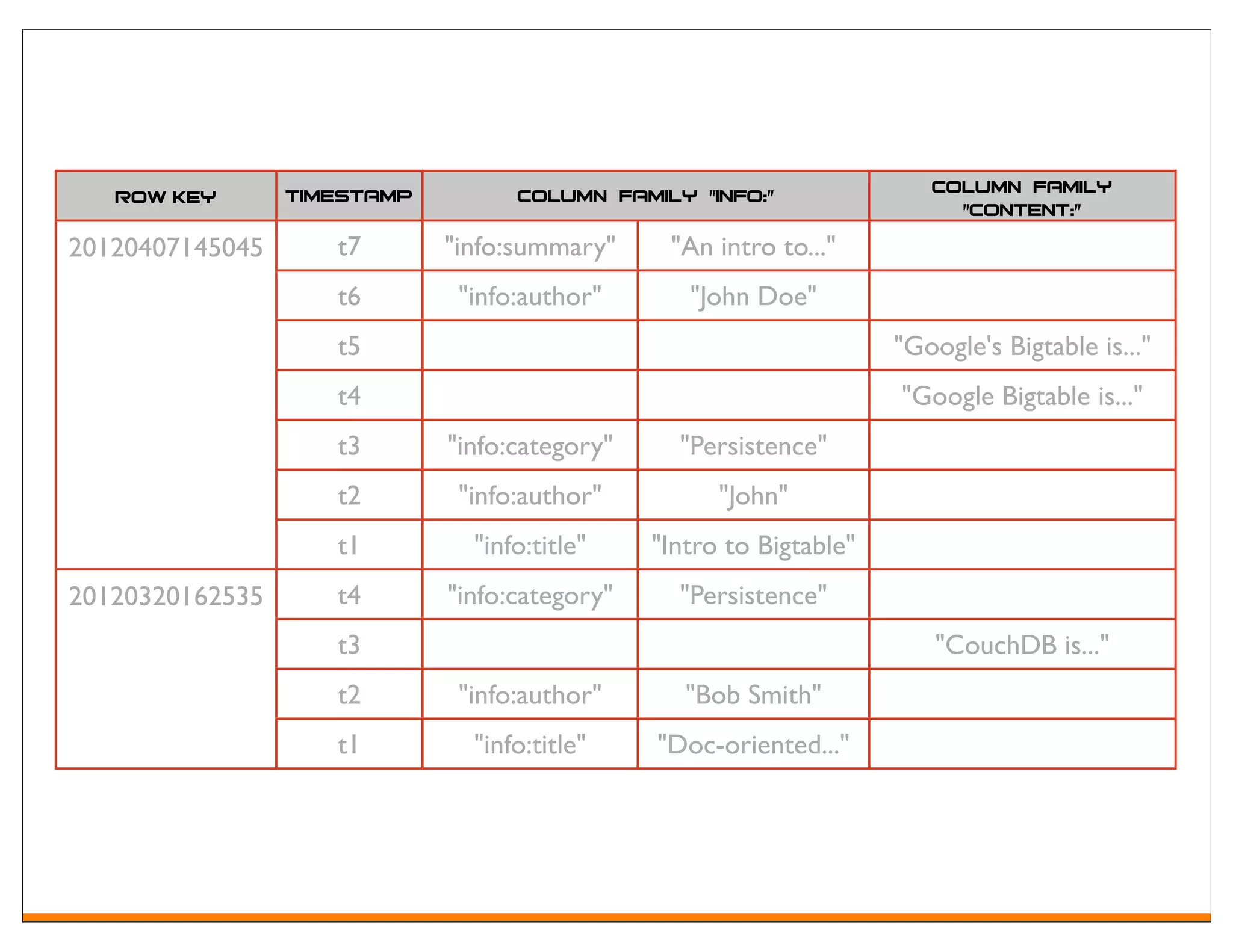

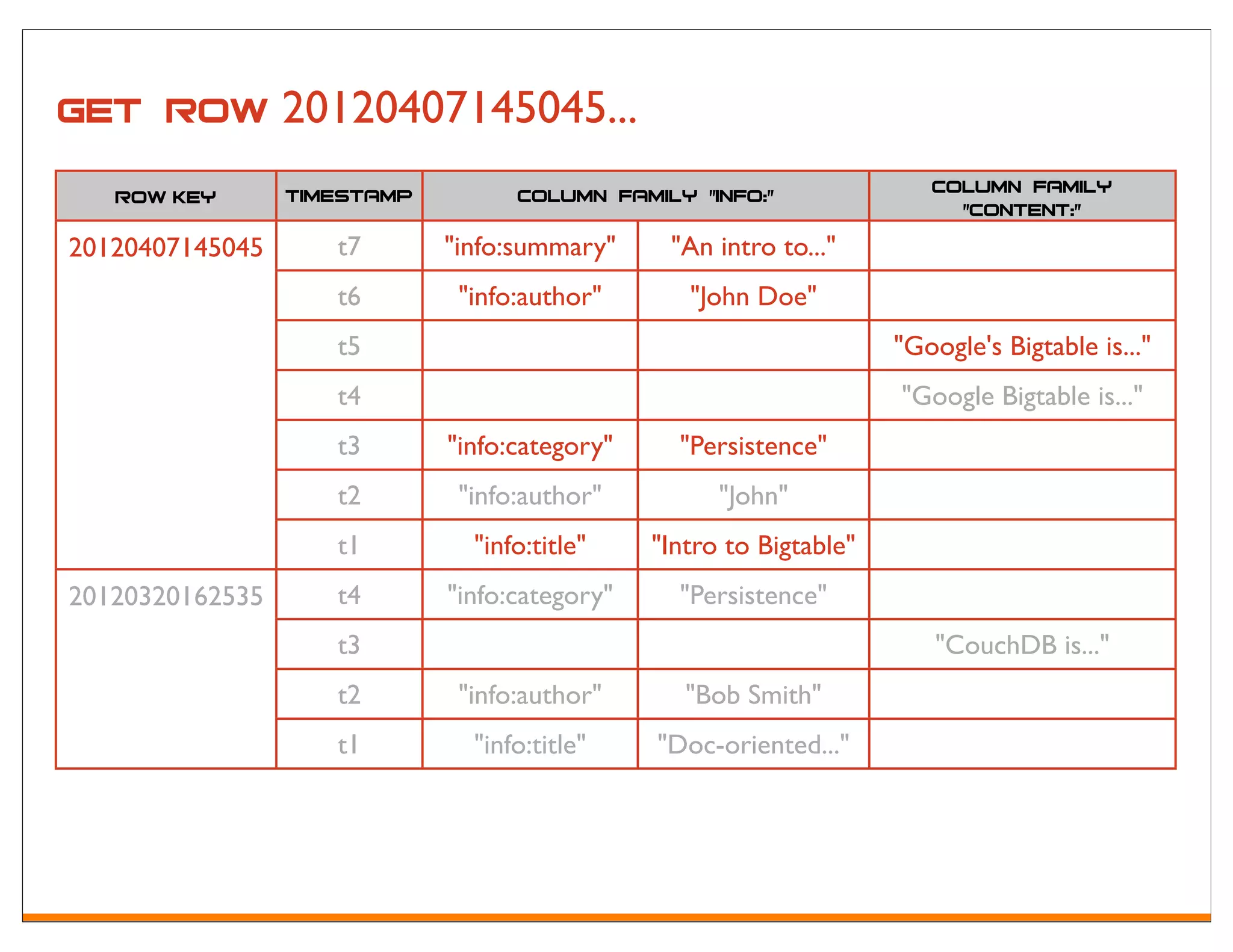

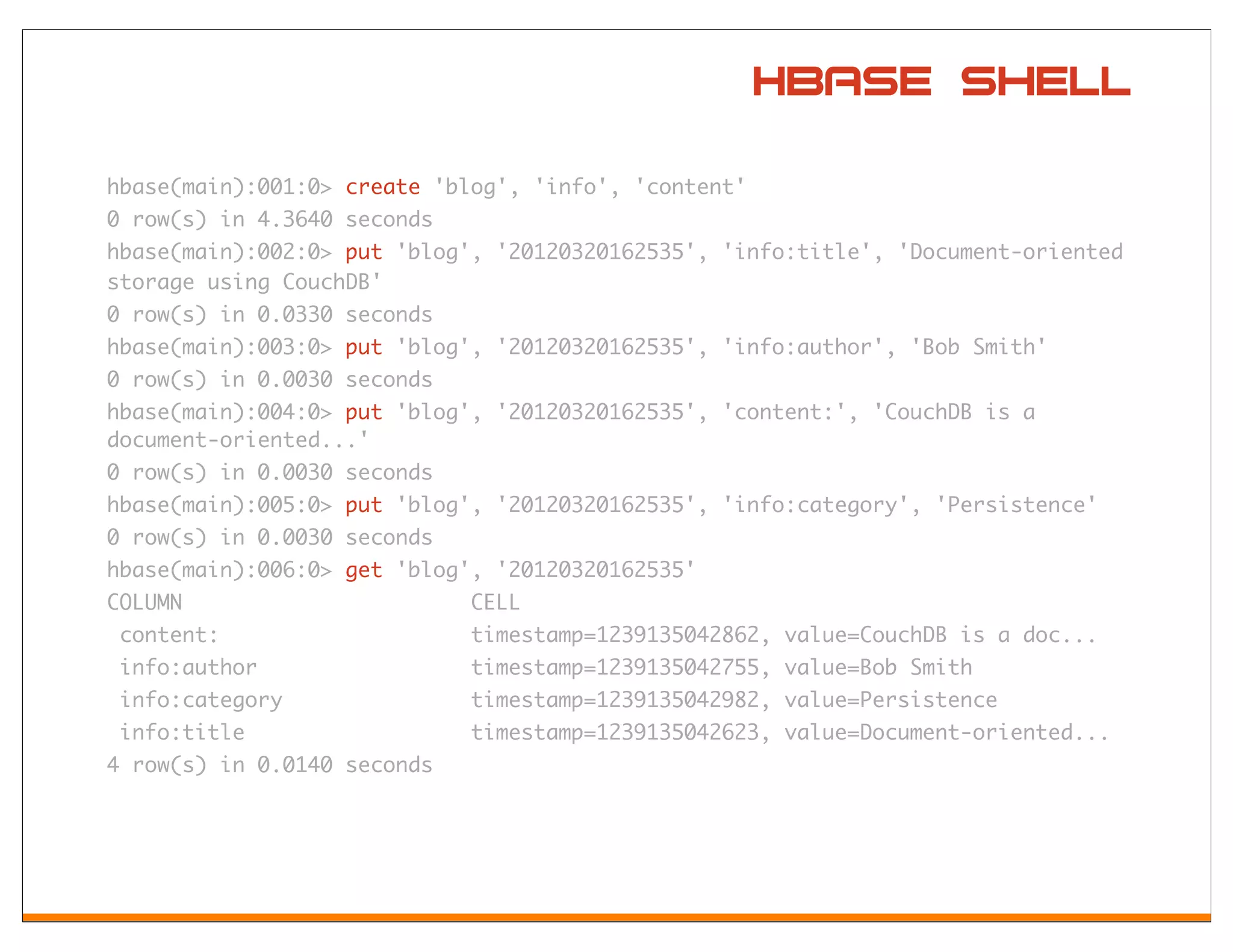

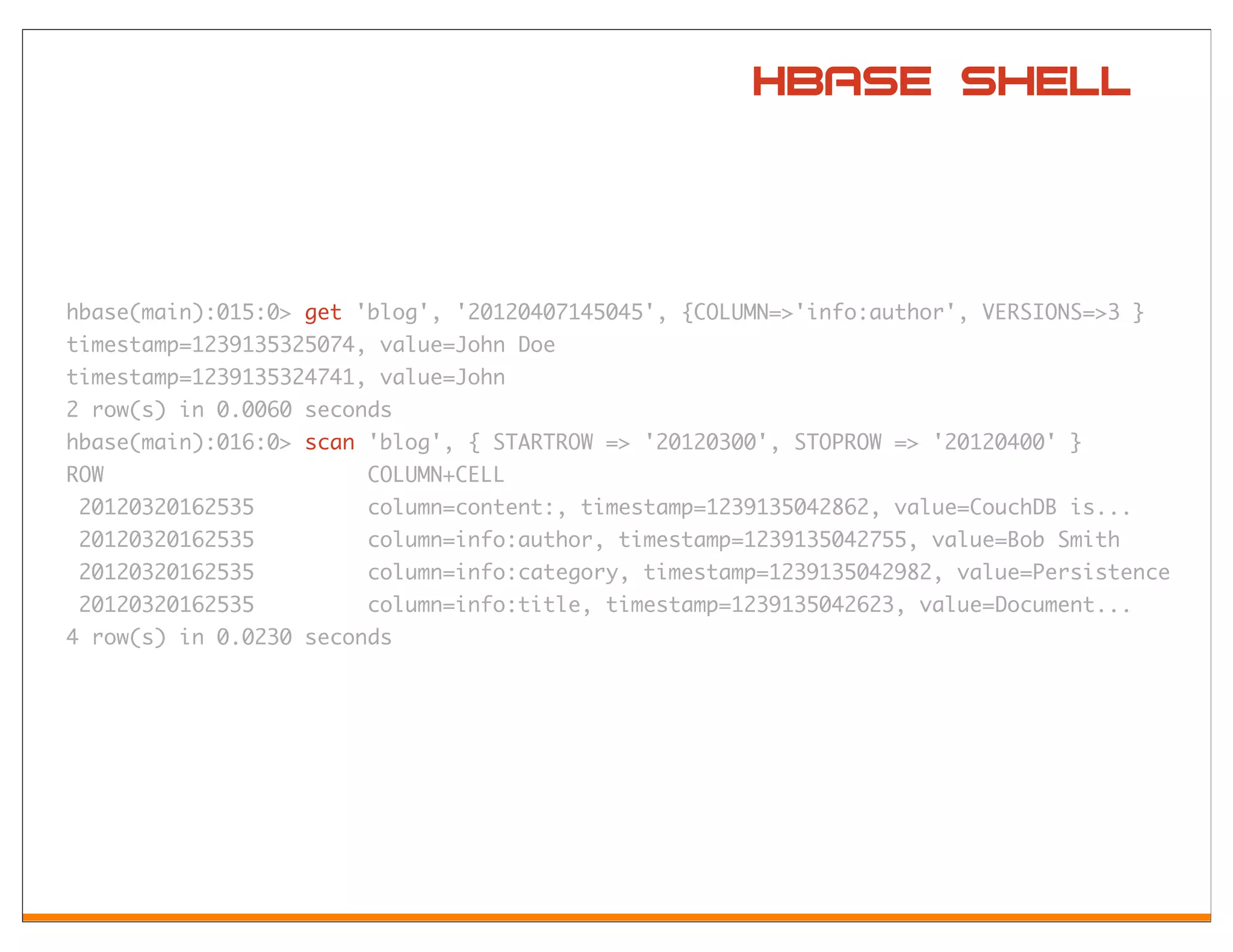

Apache HBase is an open-source, distributed, column-oriented storage system modeled after Google's Bigtable and designed to handle very large tables with billions of rows and millions of columns. It provides random, real-time read/write access, making it suitable for big data applications that need low-latency access to individual rows. Its data model is a sparse, multidimensional sorted map: each cell is addressed by a row key, a column key, and a timestamp, and the timestamp lets a cell keep multiple versions of its value.
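To make the "sparse, multidimensional sorted map" idea concrete, here is a minimal in-memory sketch in Python. It is a toy model, not the HBase API: the class `SparseSortedMap` and its `put`, `get`, and `scan` methods are hypothetical names chosen for illustration, and keys and values are modeled as `bytes` on the assumption that the store treats them as uninterpreted byte arrays.

```python
from collections import defaultdict


class SparseSortedMap:
    """Toy model of (row key, column key, timestamp) -> value. Not the HBase API."""

    def __init__(self):
        # row -> column -> {timestamp: value}; cells that were never
        # written simply do not exist, which is what makes the map sparse.
        self._rows = defaultdict(lambda: defaultdict(dict))

    def put(self, row, column, timestamp, value):
        """Store one version of a cell."""
        self._rows[row][column][timestamp] = value

    def get(self, row, column, timestamp=None):
        """Return the newest value at or before `timestamp`.

        With timestamp=None the newest version overall is returned;
        a missing cell yields None.
        """
        versions = self._rows.get(row, {}).get(column, {})
        candidates = [ts for ts in versions if timestamp is None or ts <= timestamp]
        return versions[max(candidates)] if candidates else None

    def scan(self, start_row=b"", stop_row=None):
        """Yield (row, column, latest value) in sorted row-key order.

        The range is [start_row, stop_row); stop_row=None means no upper bound.
        """
        for row in sorted(self._rows):
            if row < start_row or (stop_row is not None and row >= stop_row):
                continue
            for column in sorted(self._rows[row]):
                versions = self._rows[row][column]
                yield row, column, versions[max(versions)]


if __name__ == "__main__":
    table = SparseSortedMap()
    table.put(b"row2", b"info:name", 1, b"bob")
    table.put(b"row1", b"info:name", 1, b"alice")
    table.put(b"row1", b"info:name", 2, b"alicia")  # newer version of the same cell

    print(table.get(b"row1", b"info:name"))               # newest version
    print(table.get(b"row1", b"info:name", timestamp=1))  # older version
    for cell in table.scan():
        print(cell)  # rows come back in sorted key order
```

The sketch sorts keys at scan time for brevity; a real store would keep data physically ordered by row key so that range scans are cheap. It also keeps every version indefinitely, whereas a real system would typically bound how many versions are retained.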

![Got byte[]?](https://image.slidesharecdn.com/hbase-lightningtalk-120427164435-phpapp01/75/HBase-Lightning-Talk-15-2048.jpg)