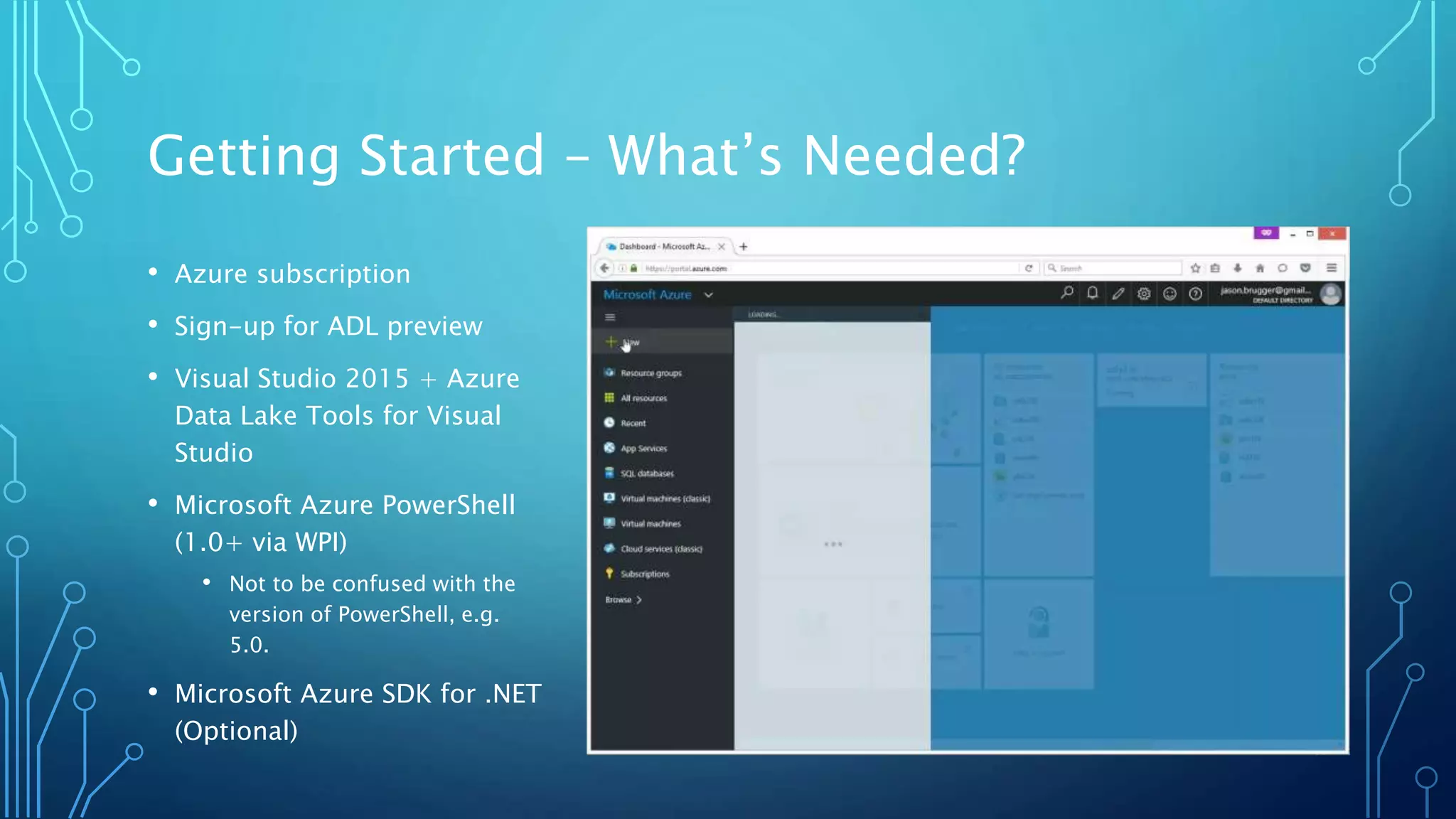

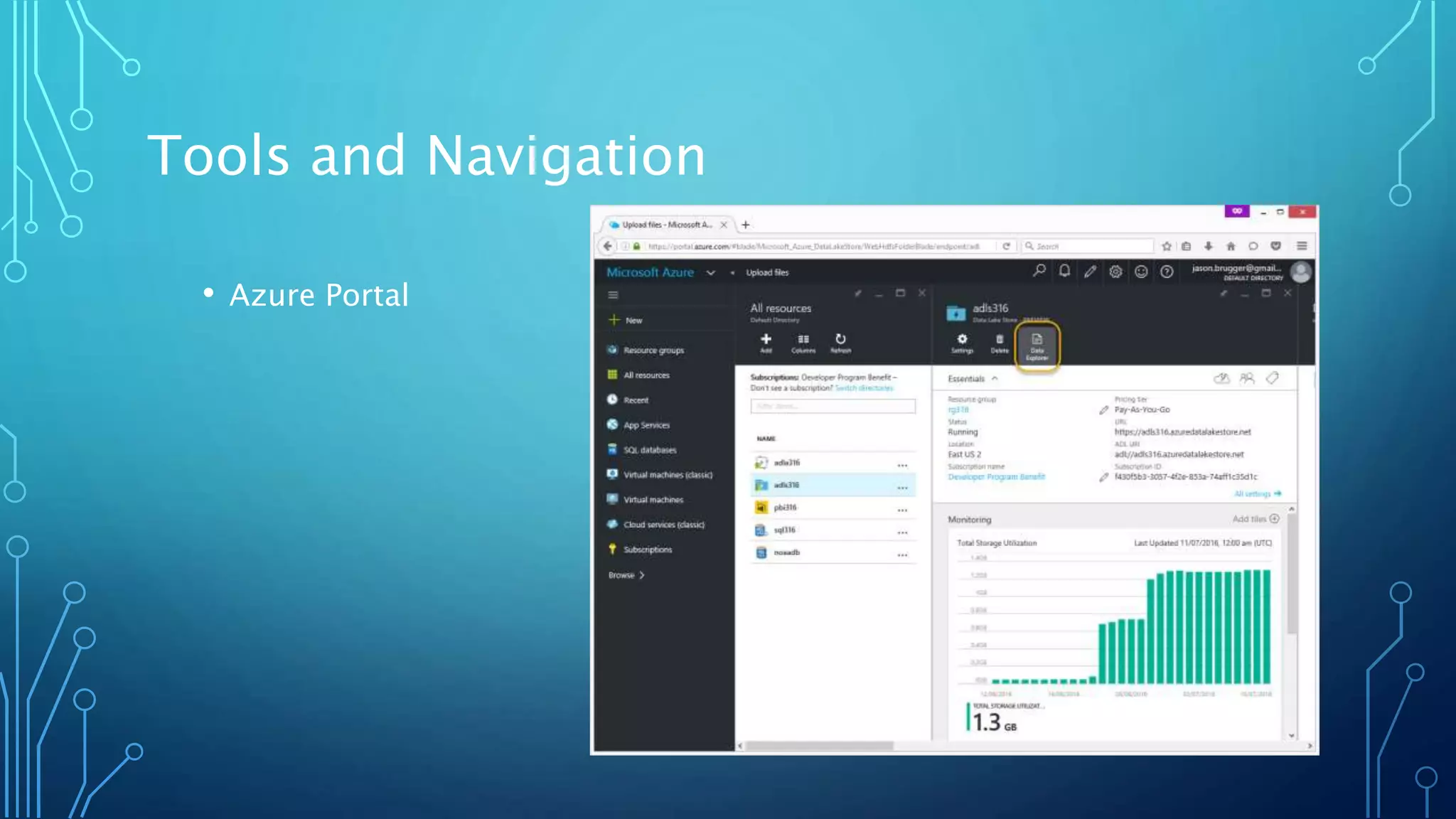

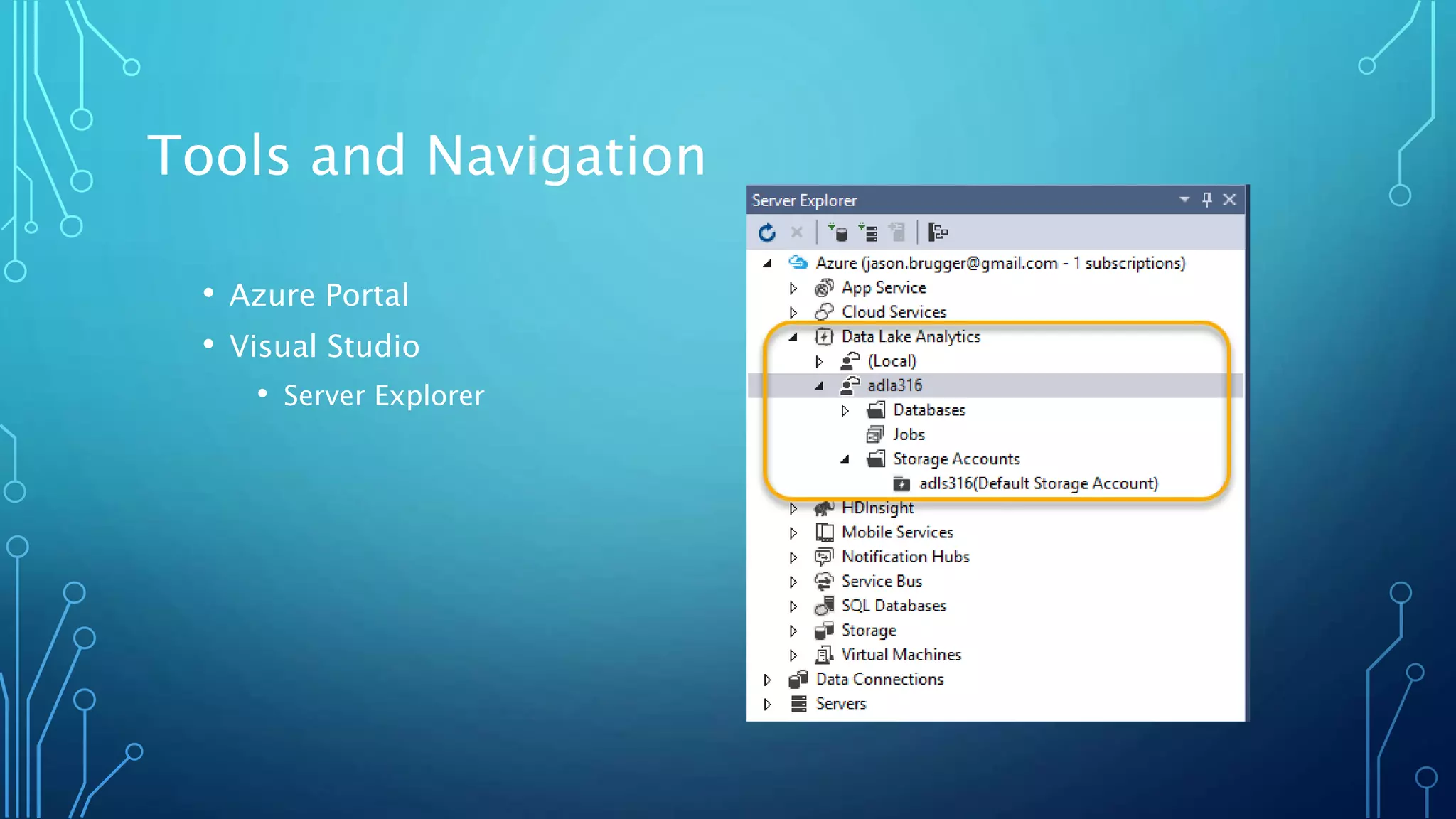

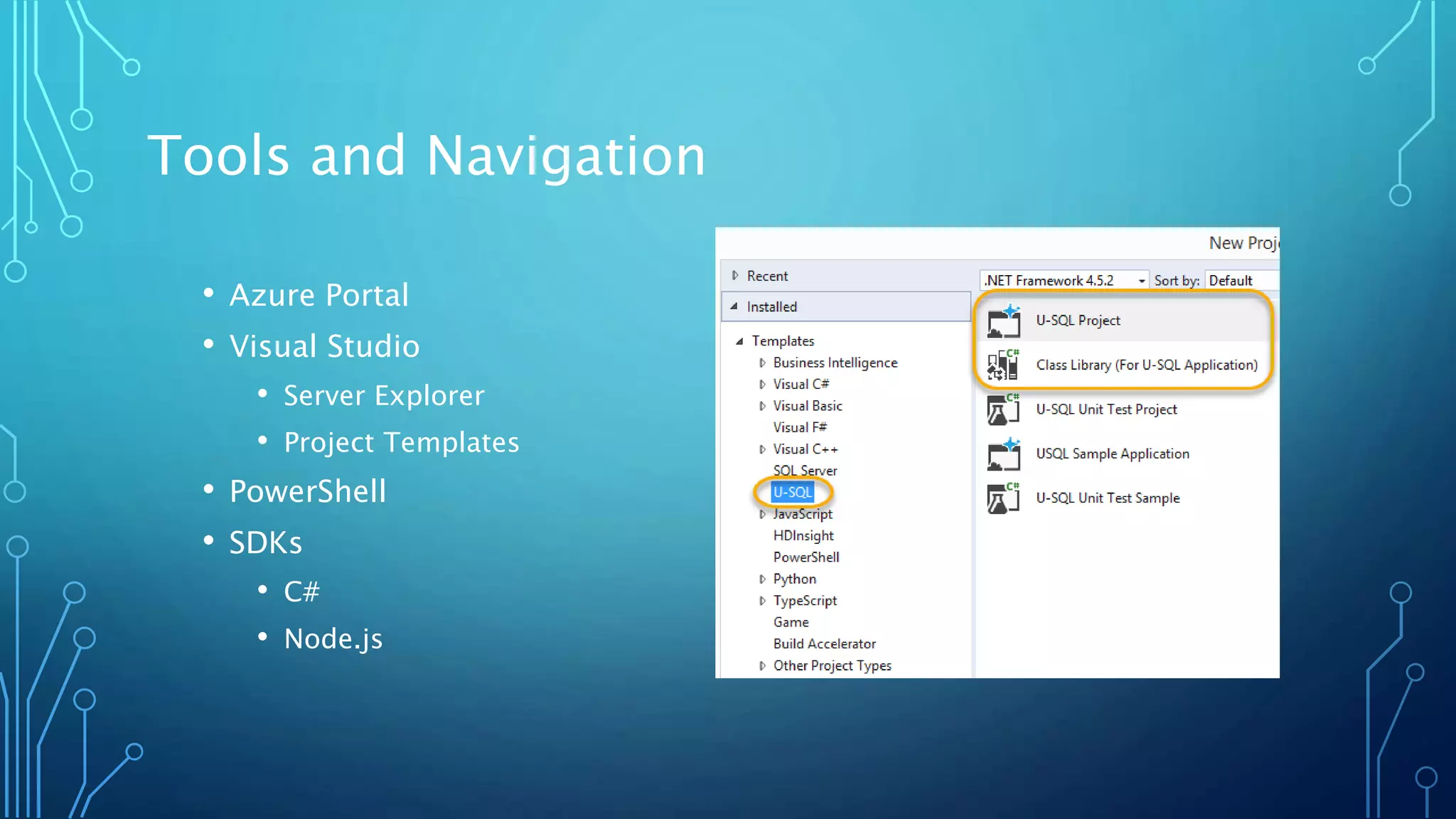

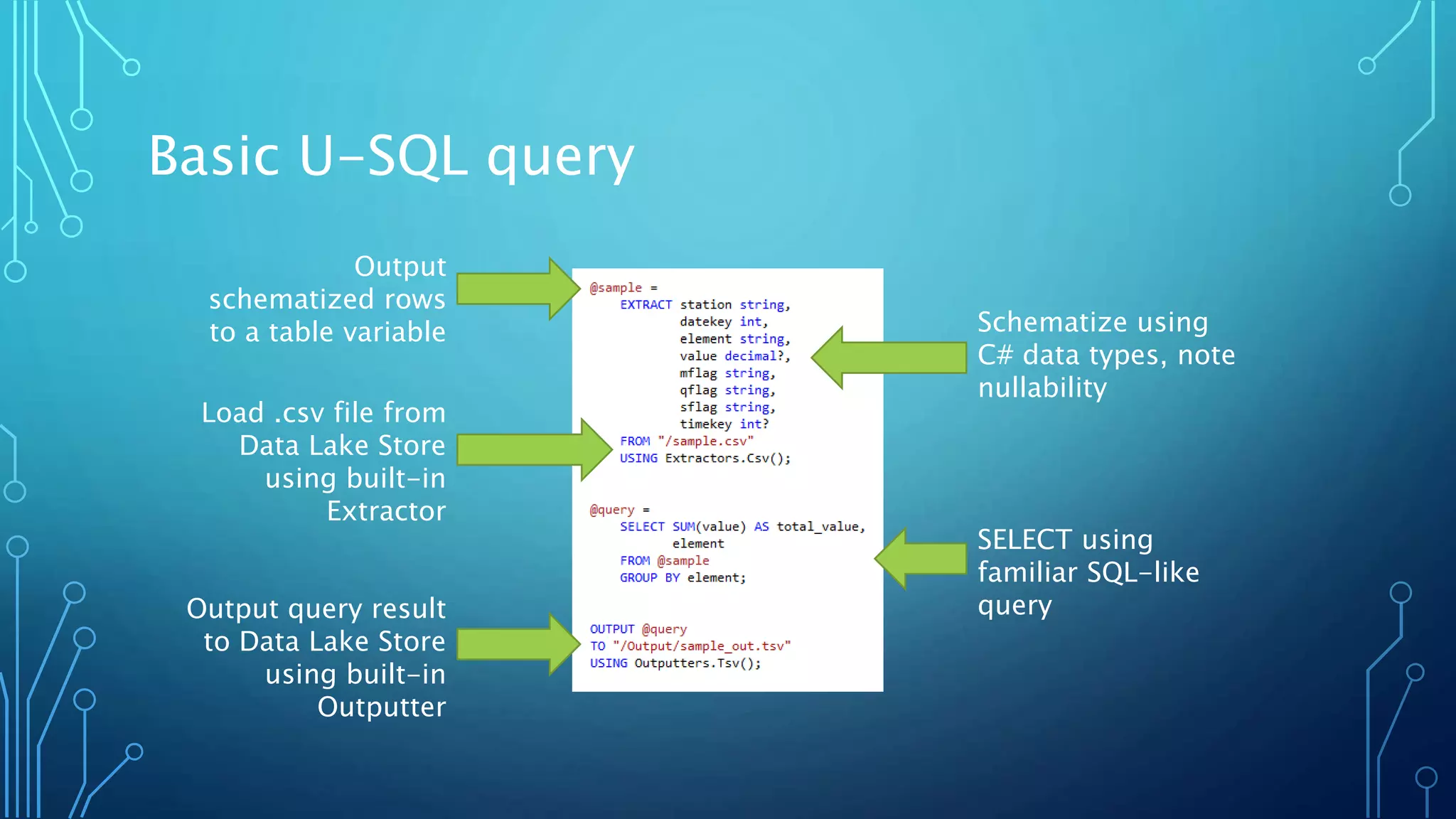

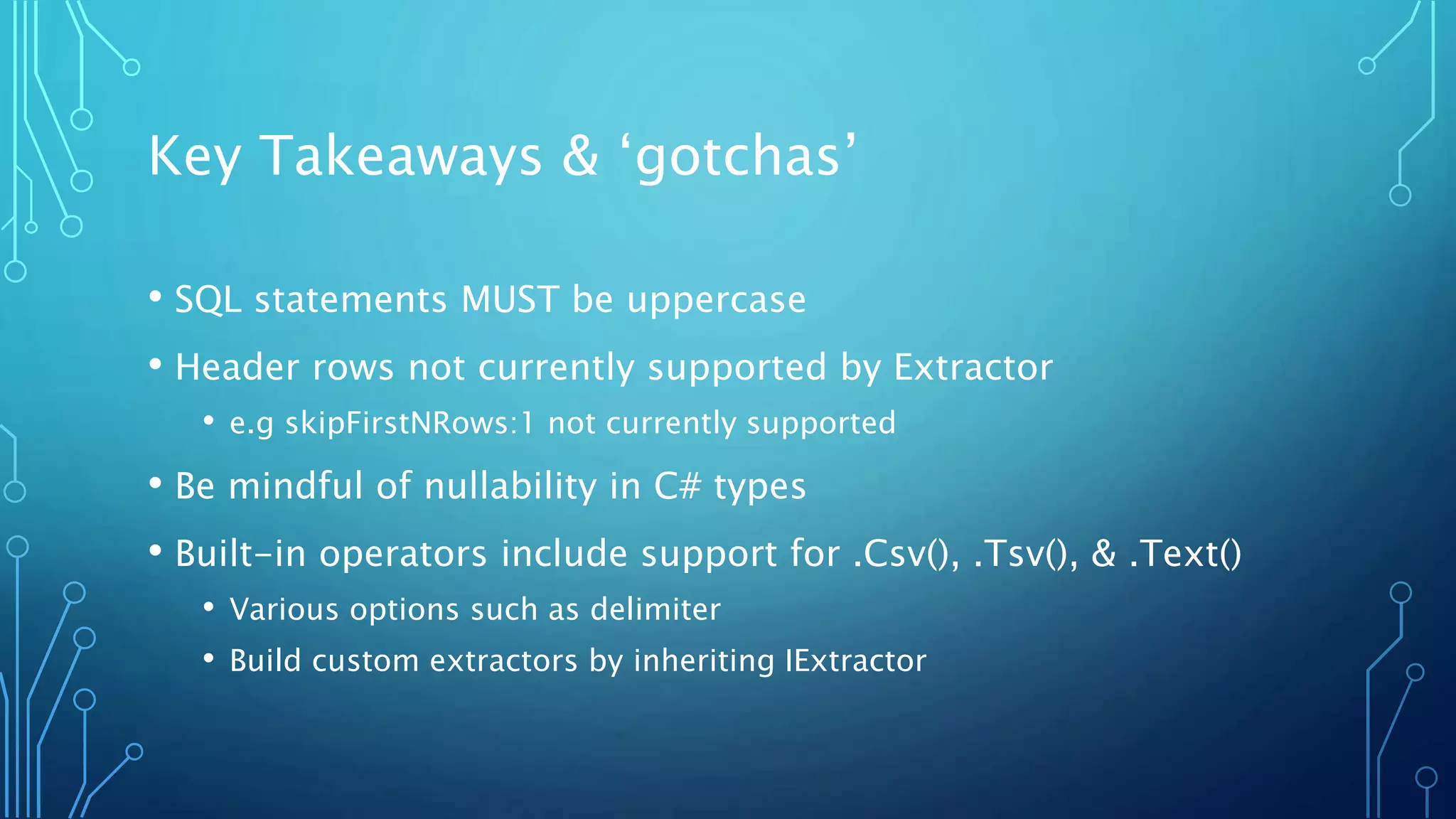

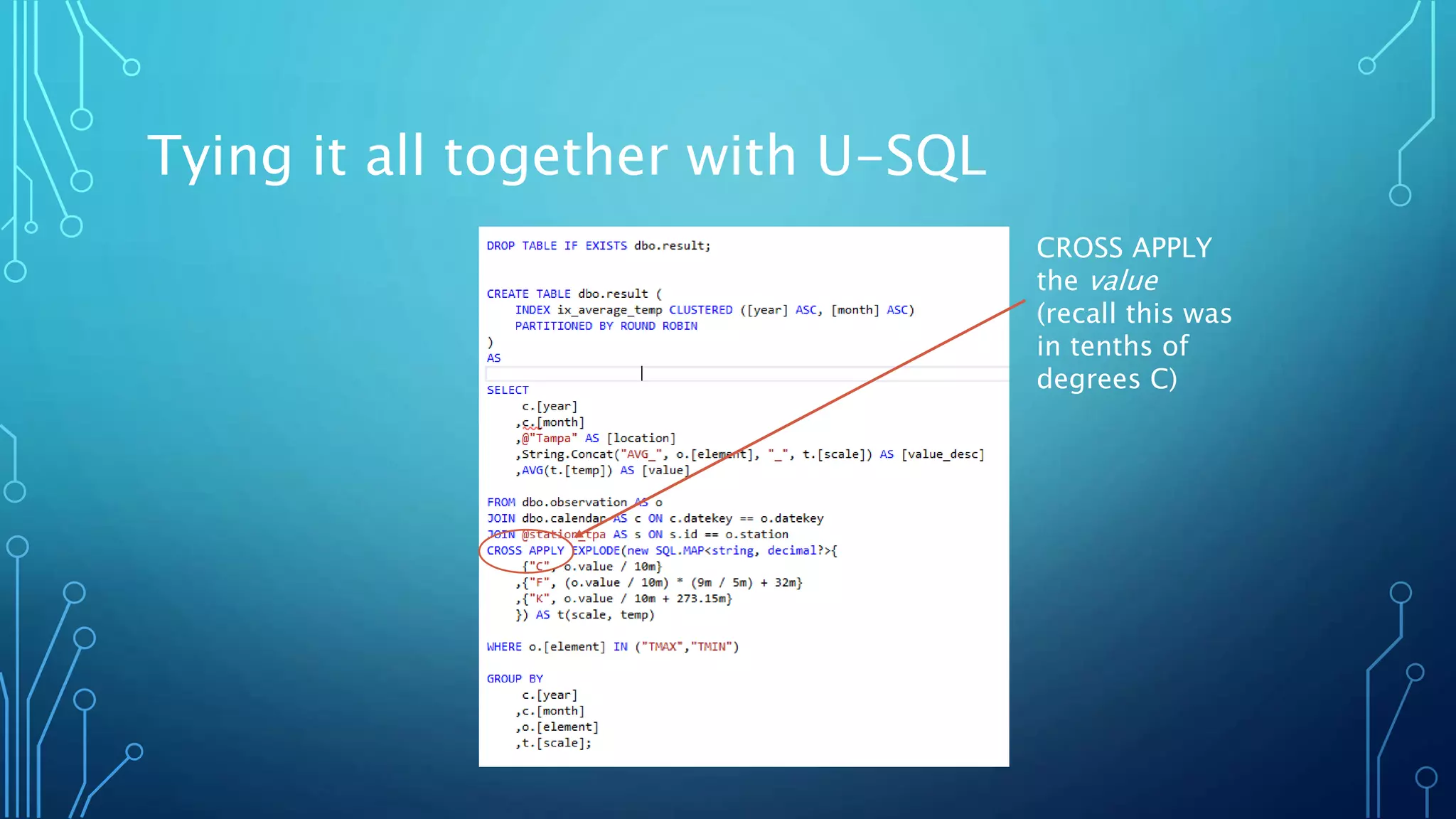

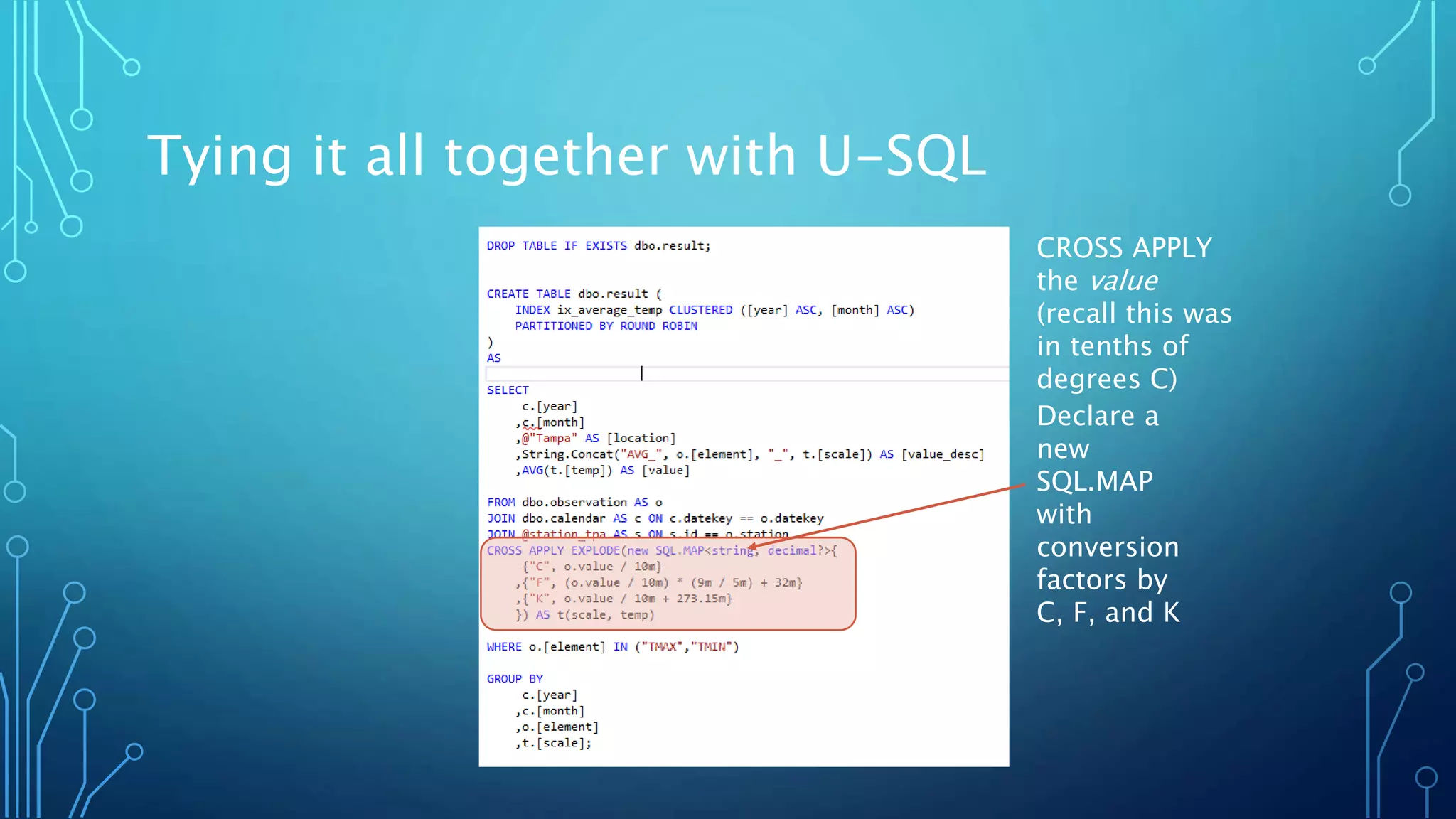

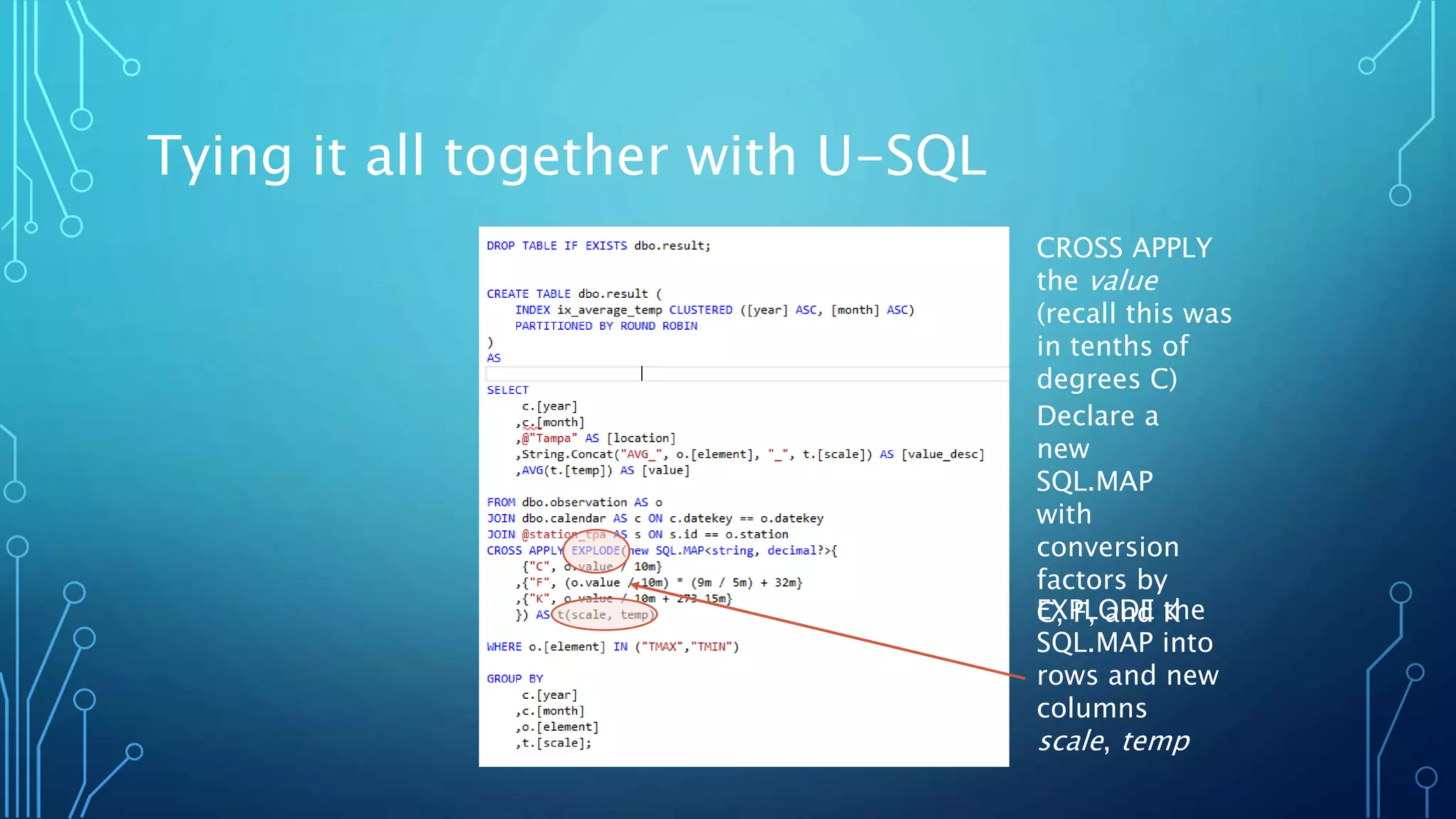

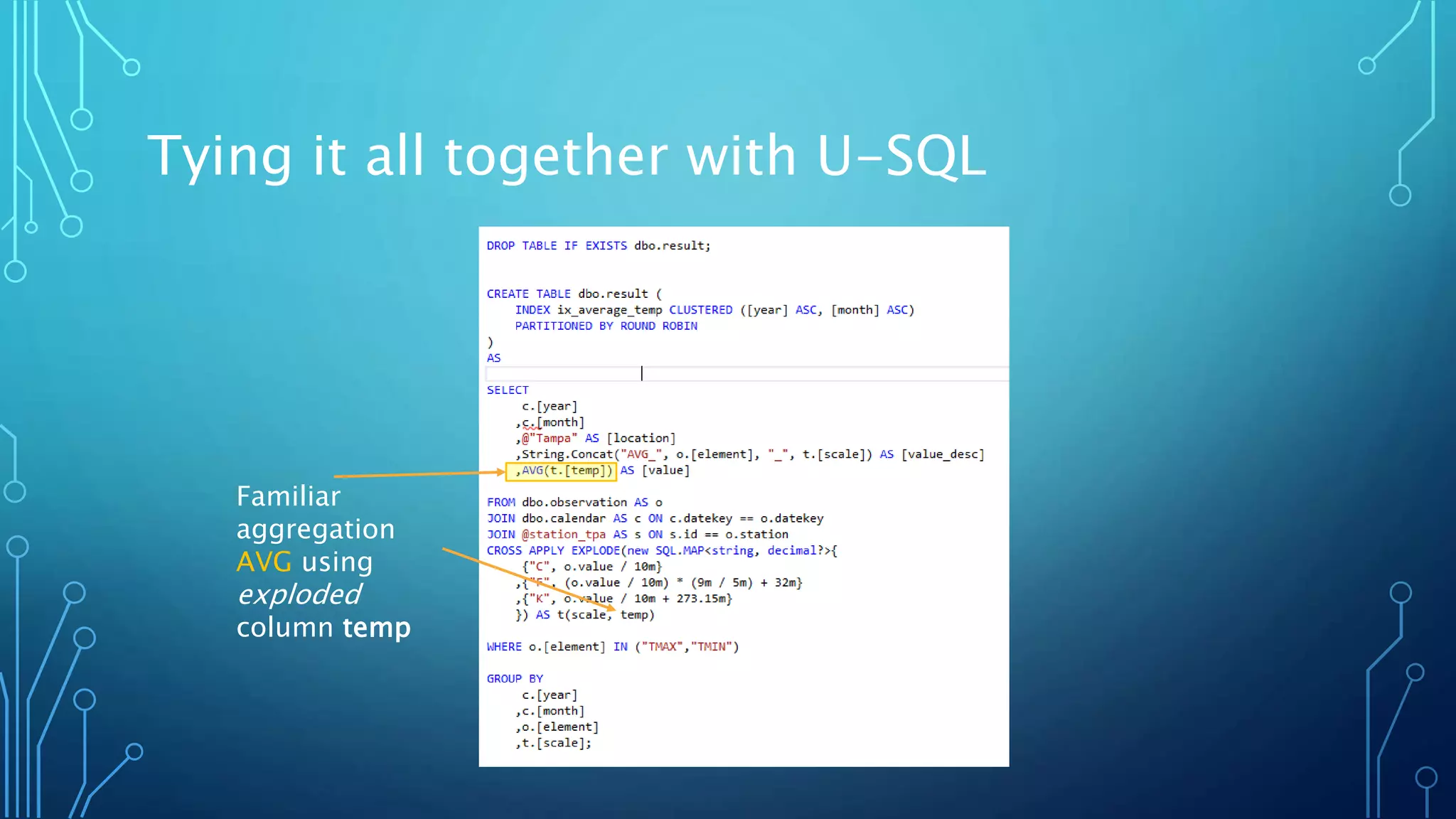

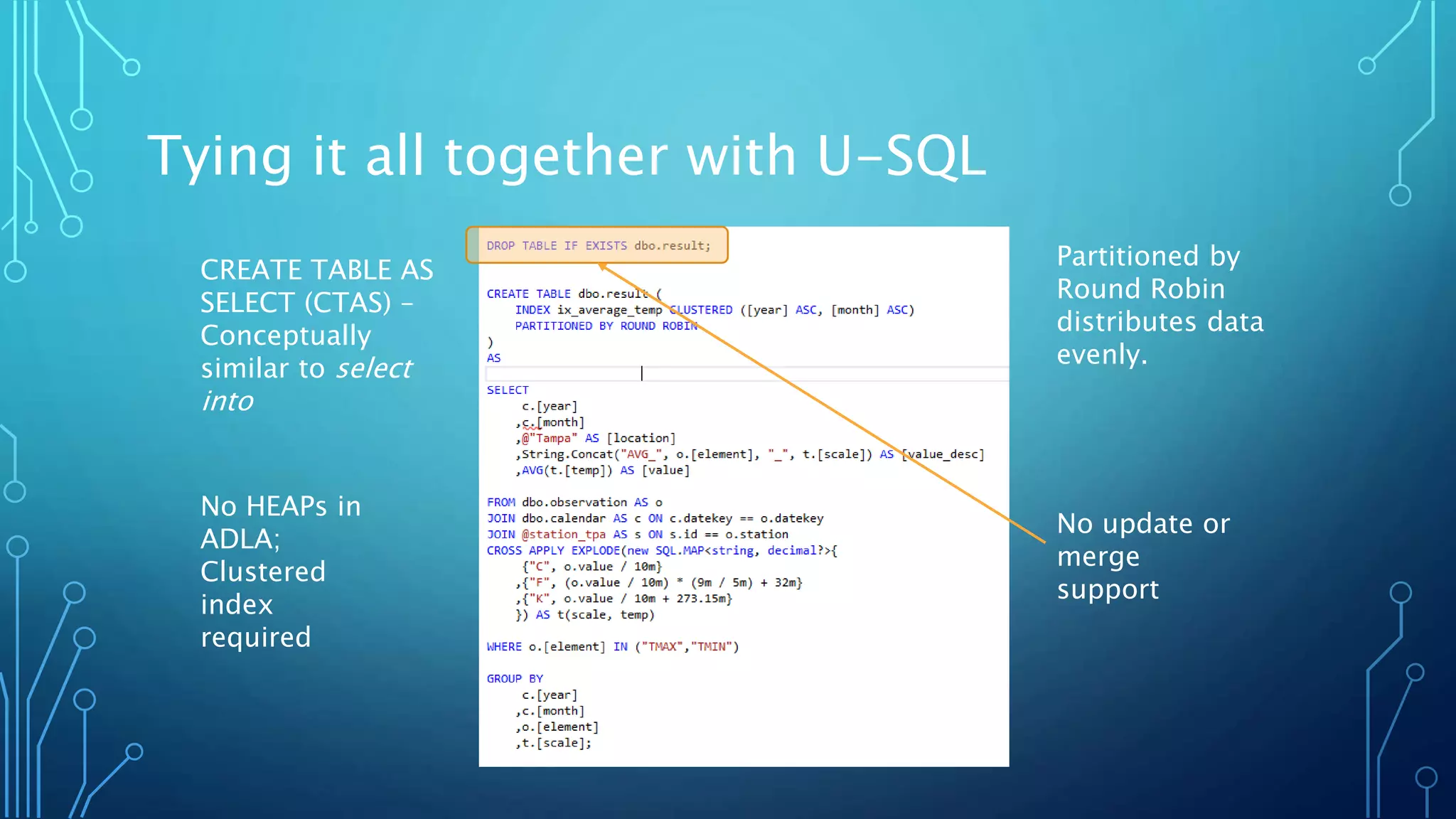

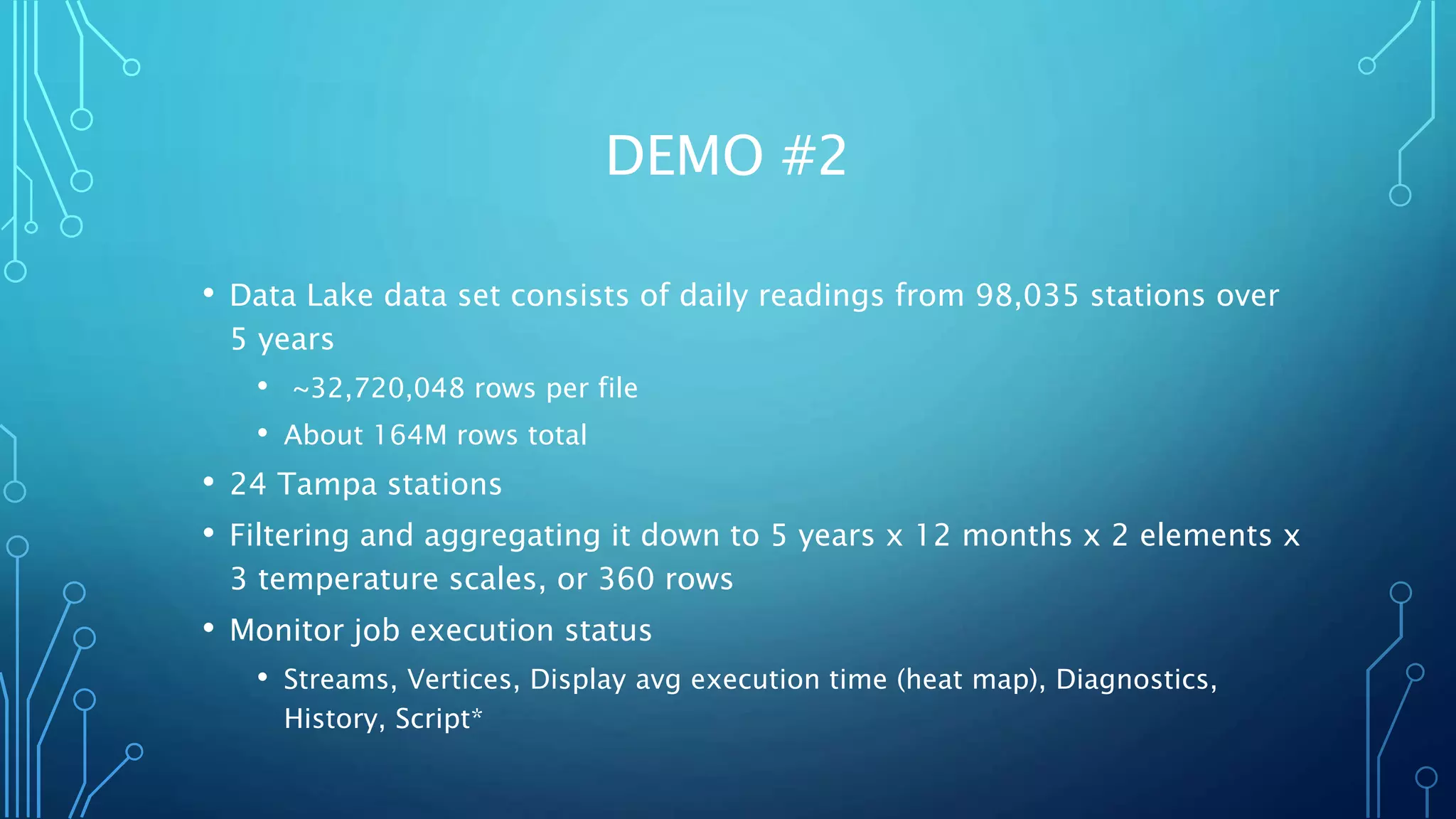

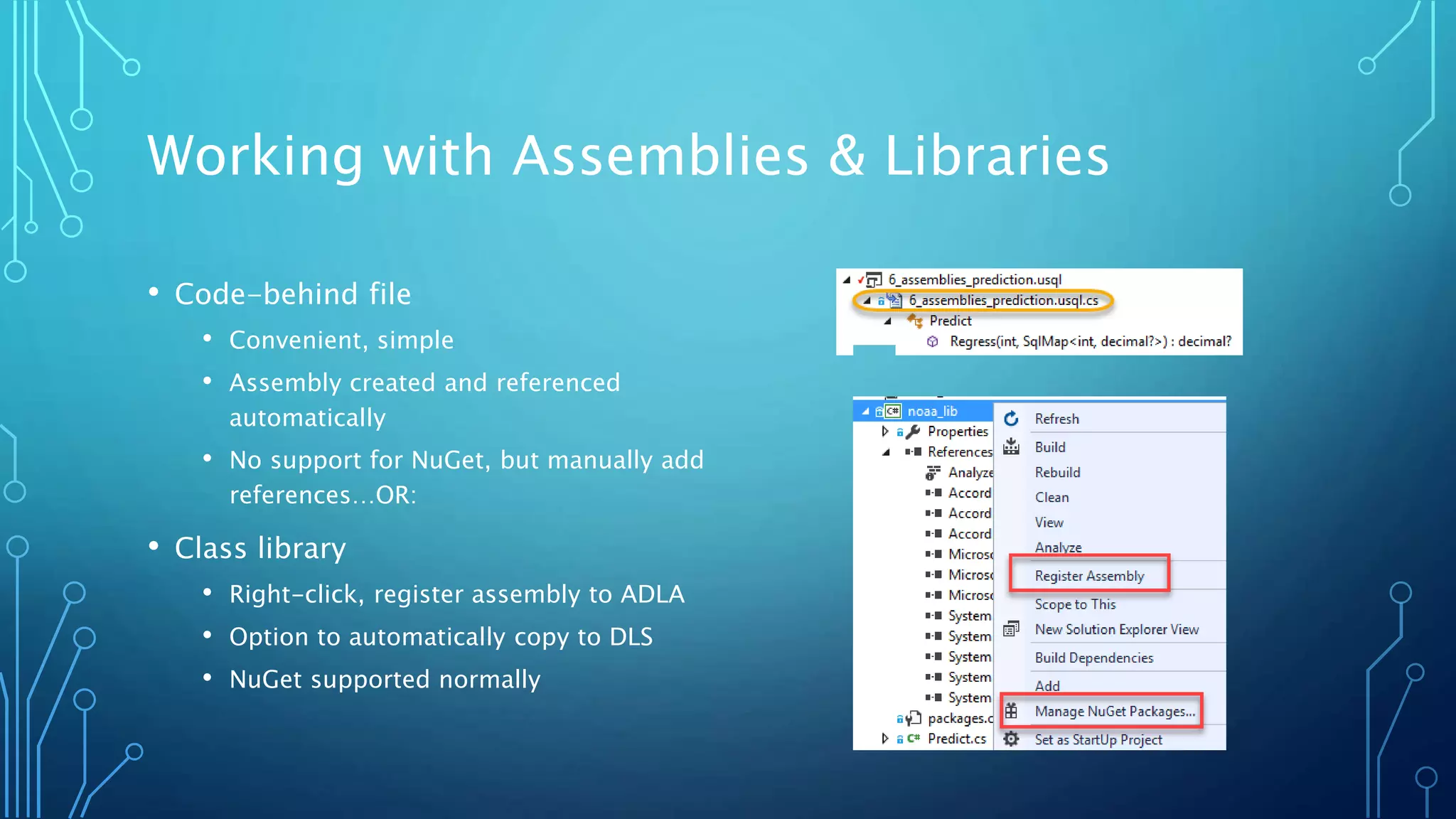

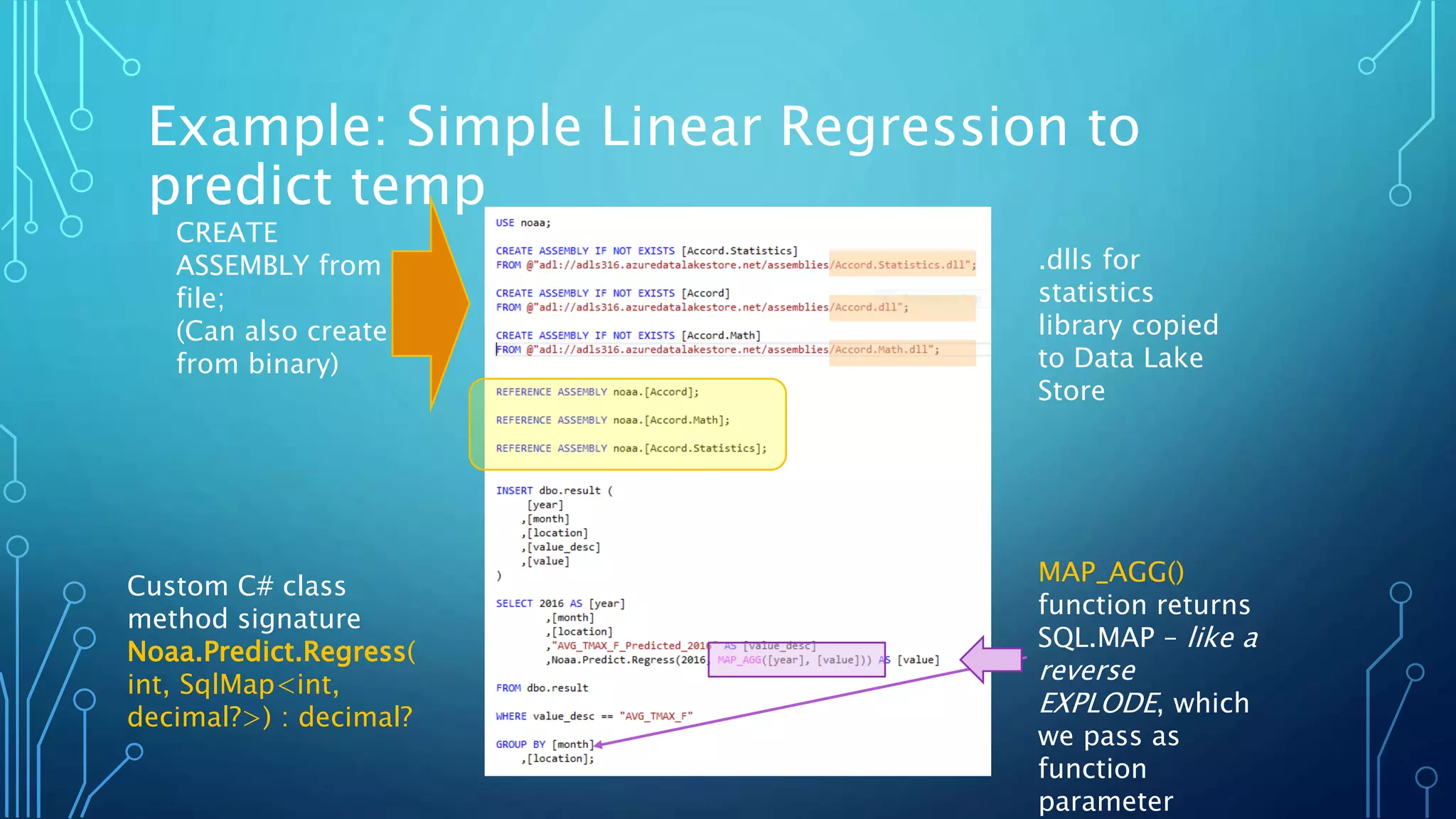

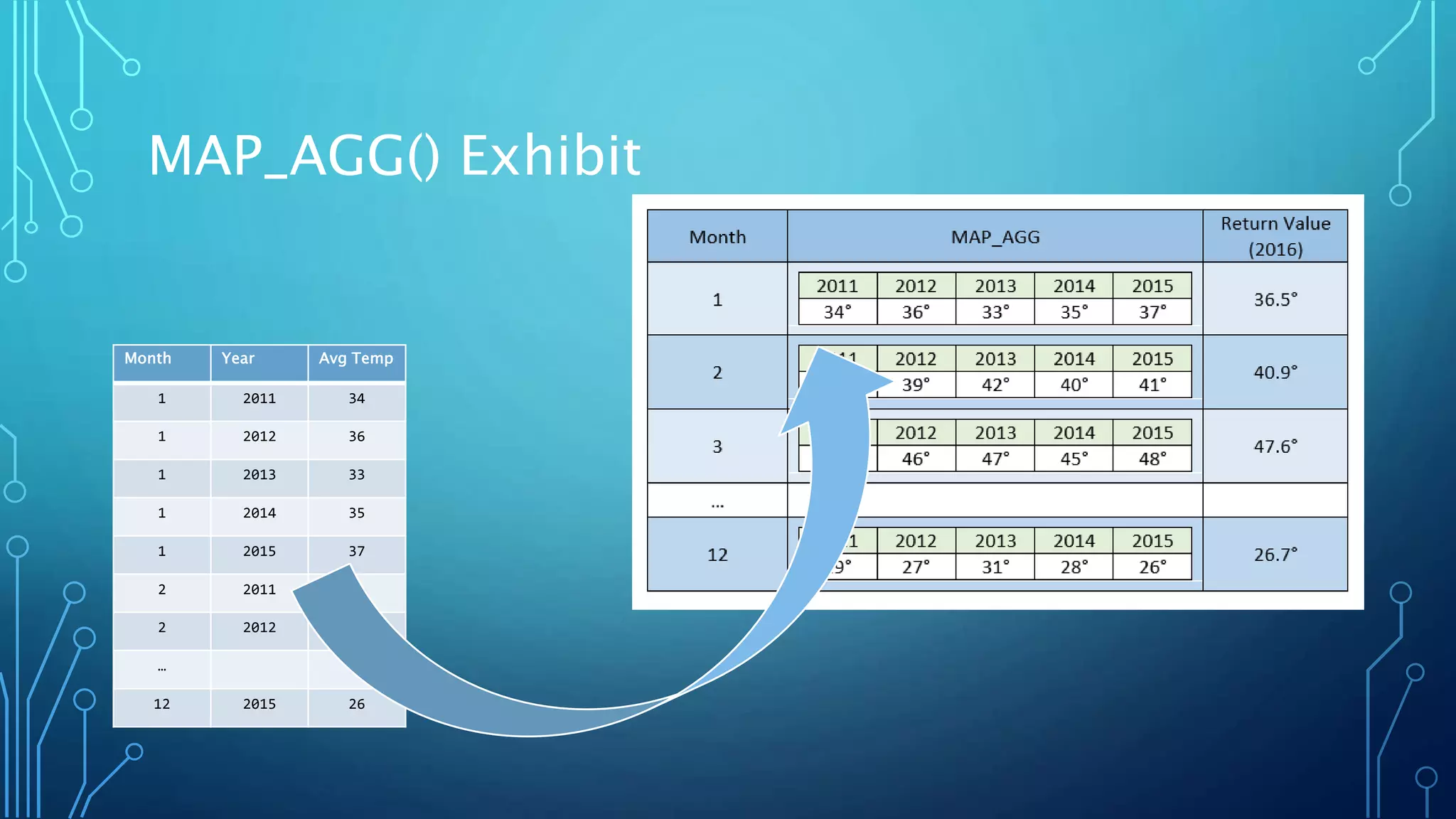

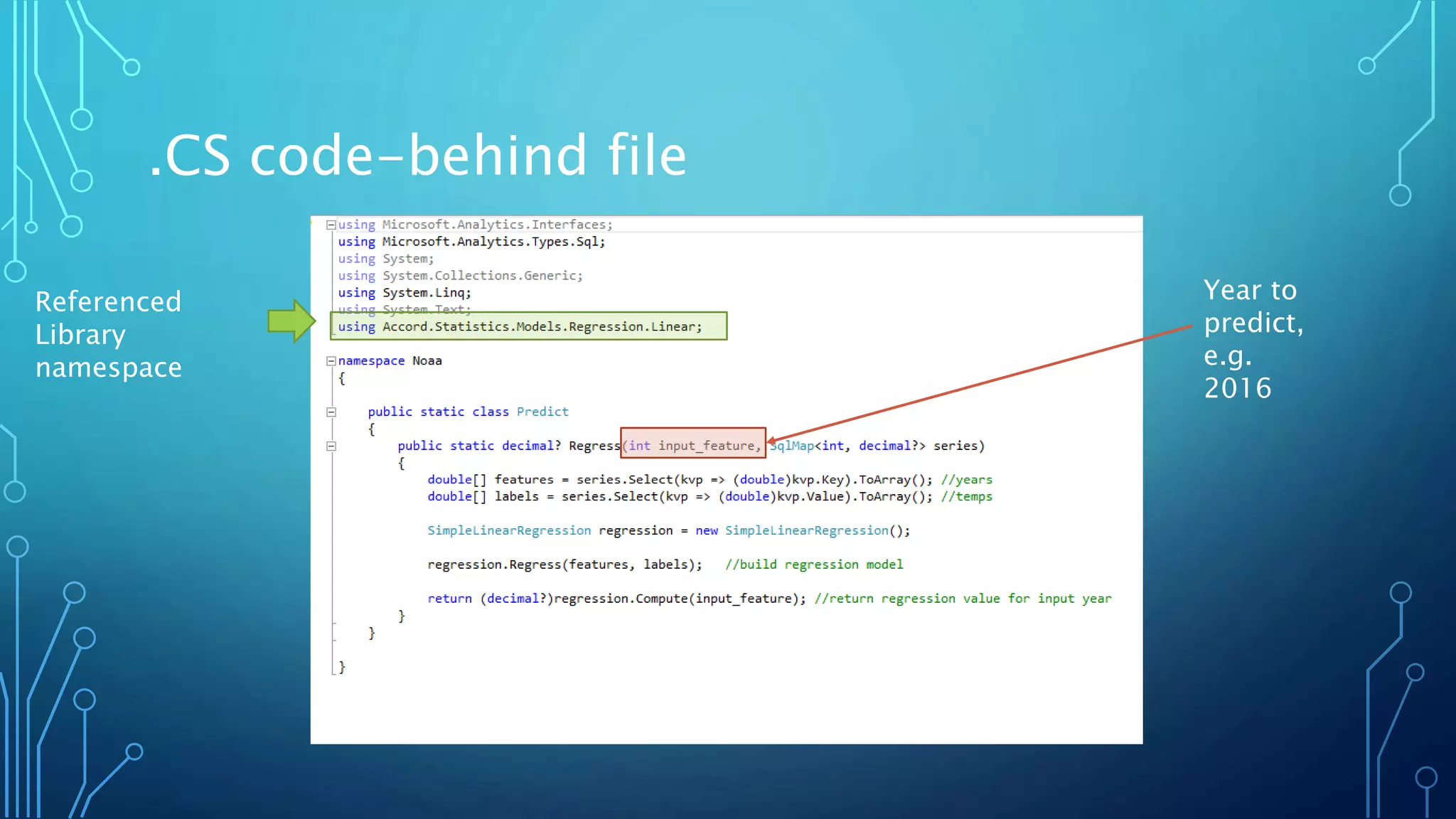

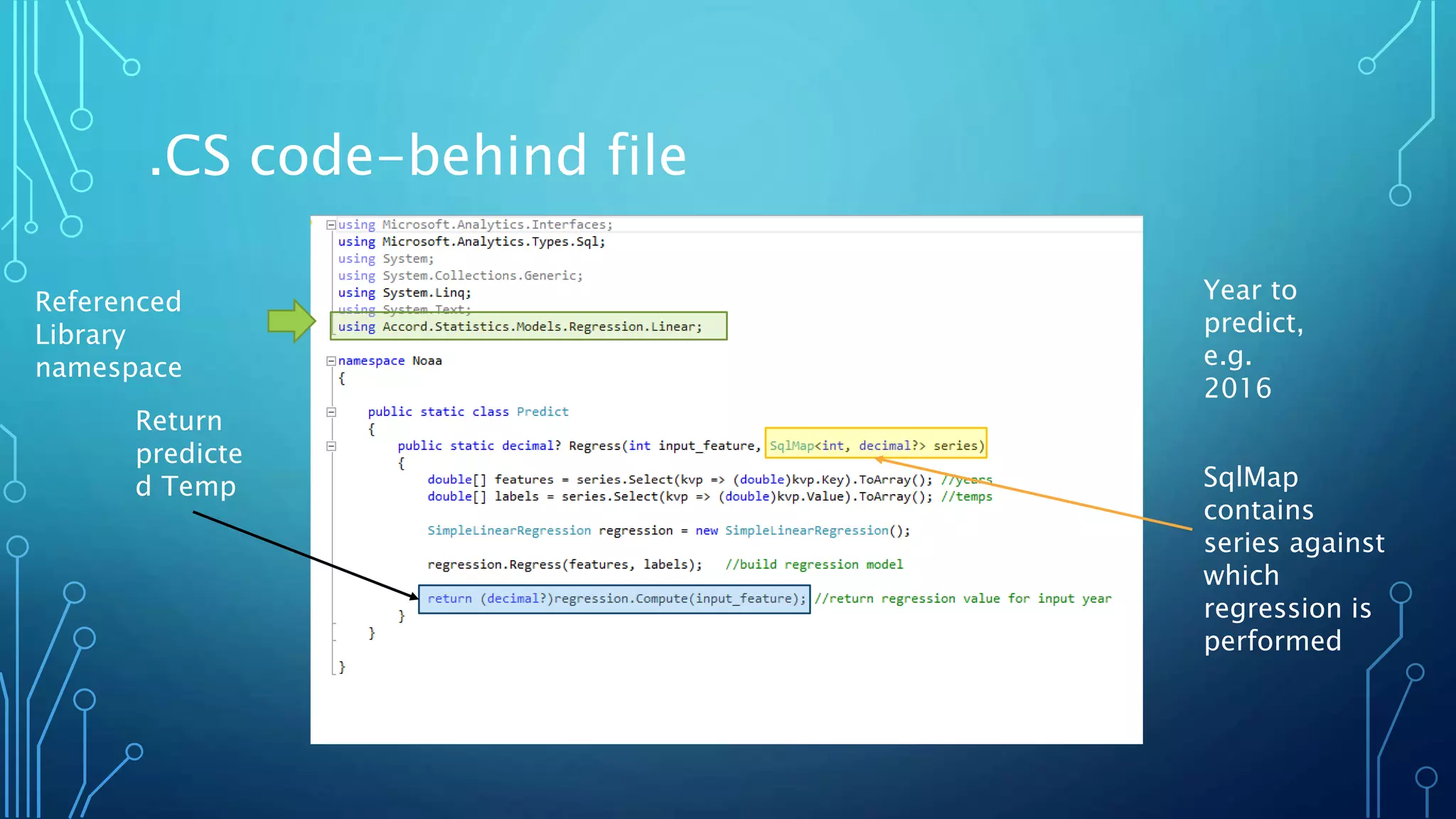

The document introduces U-SQL and Azure Data Lake Analytics (ADLA) and highlights the differences between traditional databases and big data solutions. It describes data lakes as storage repositories for raw data and explains how ADLA runs big data computations in the cloud without cluster provisioning. It also covers the key components needed to get started, tools and navigation, and examples such as querying data and working with APIs.

![Attribution

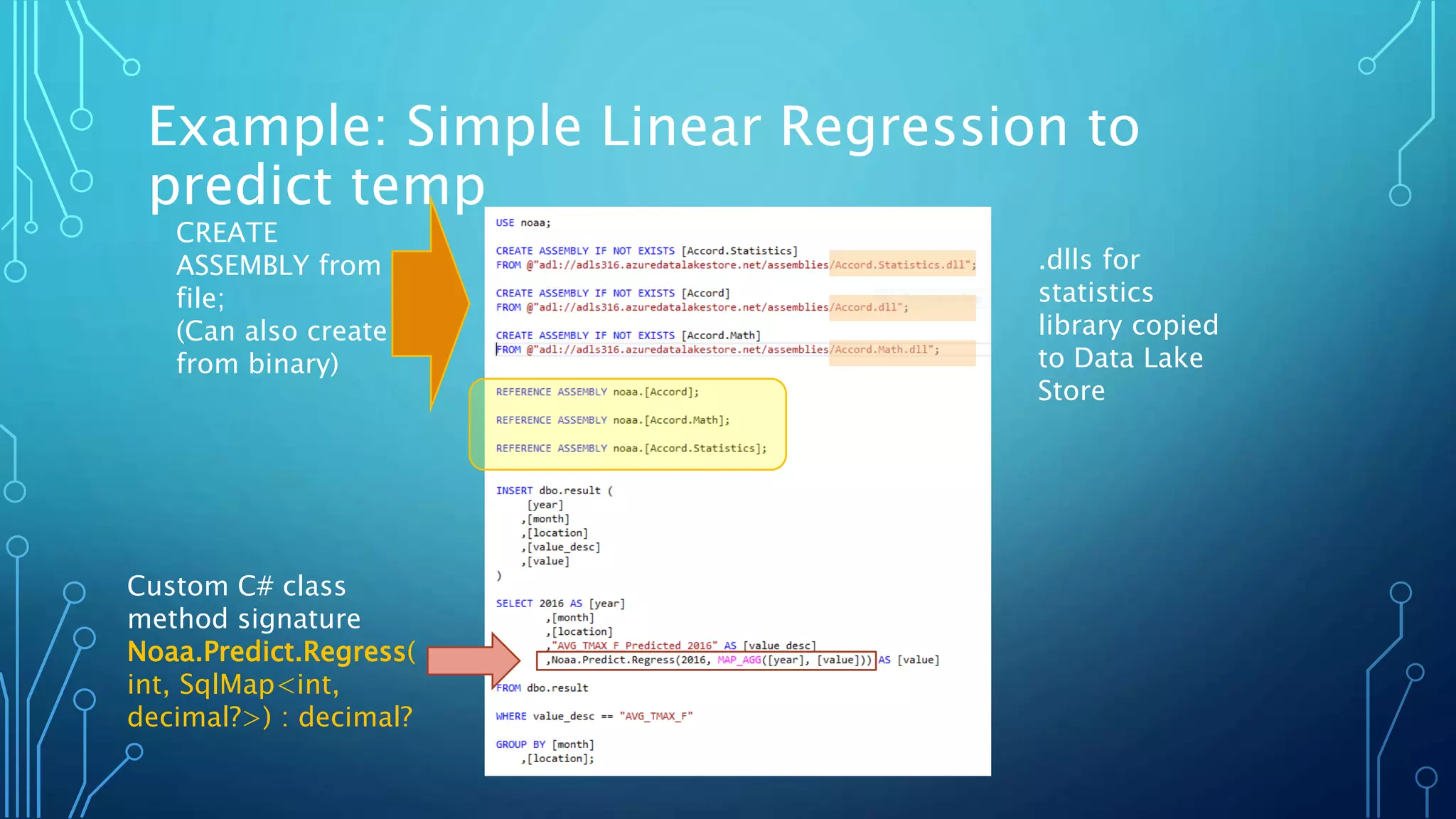

The Accord.NET Framework

Copyright (c) 2009-2014, César Roberto de Souza

<cesarsouza@gmail.com>

This library is free software; you can redistribute it and/or modify it under

the terms of the GNU Lesser General Public License as published by the

Free Software Foundation; either version 2.1 of the License, or (at your

option) any later version.

The copyright holders provide no reassurances that the source code

provided does not infringe any patent, copyright, or any other intellectual

property rights of third parties. The copyright holders disclaim any liability

to any recipient for claims brought against recipient by any third party

for infringement of that parties intellectual property rights.

This library is distributed in the hope that it will be useful, but WITHOUT

ANY WARRANTY; without even the implied warranty of MERCHANTABILITY

or FITNESS FOR A PARTICULAR PURPOSE. See the GNU Lesser General Public

License for more details.

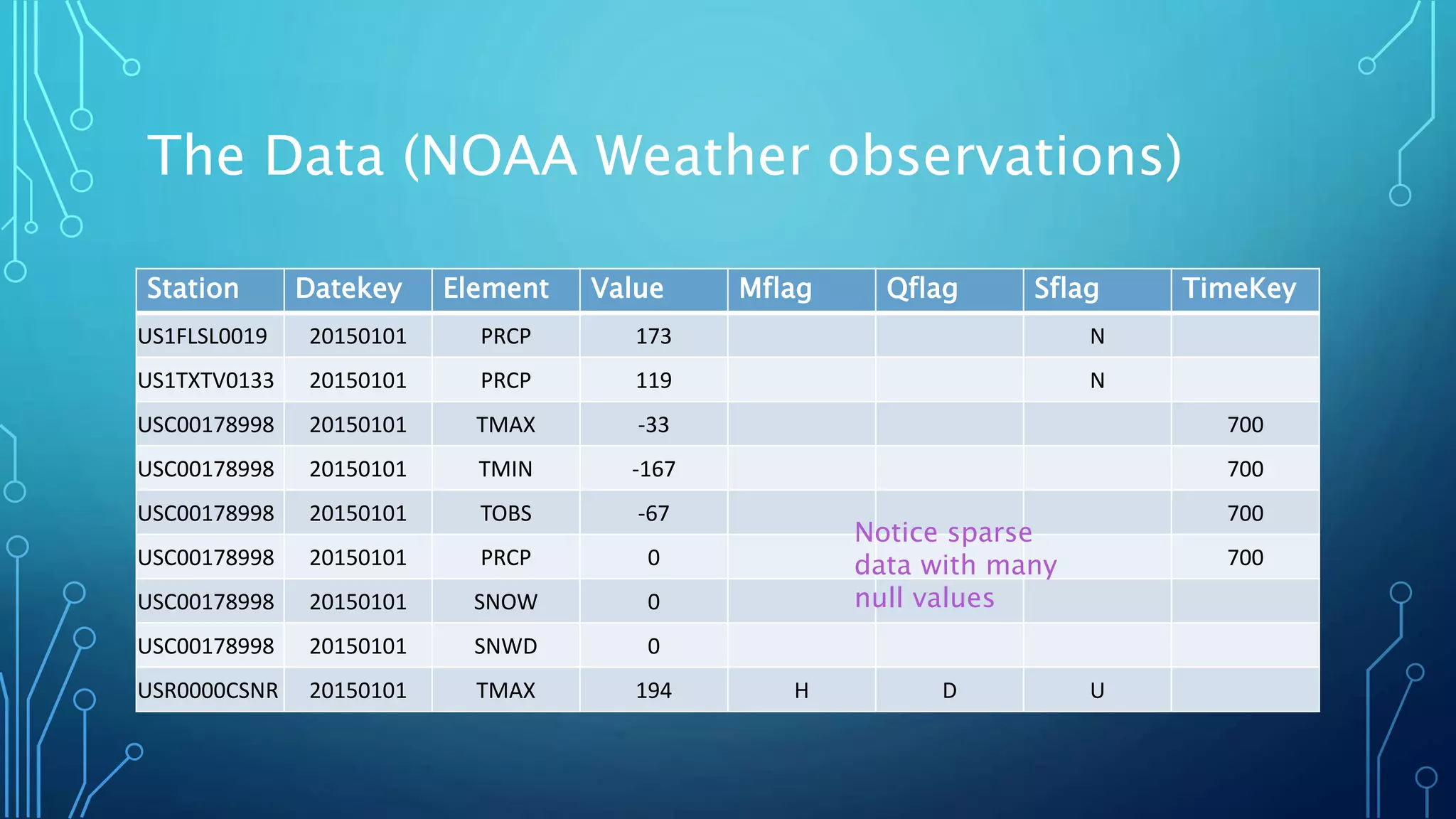

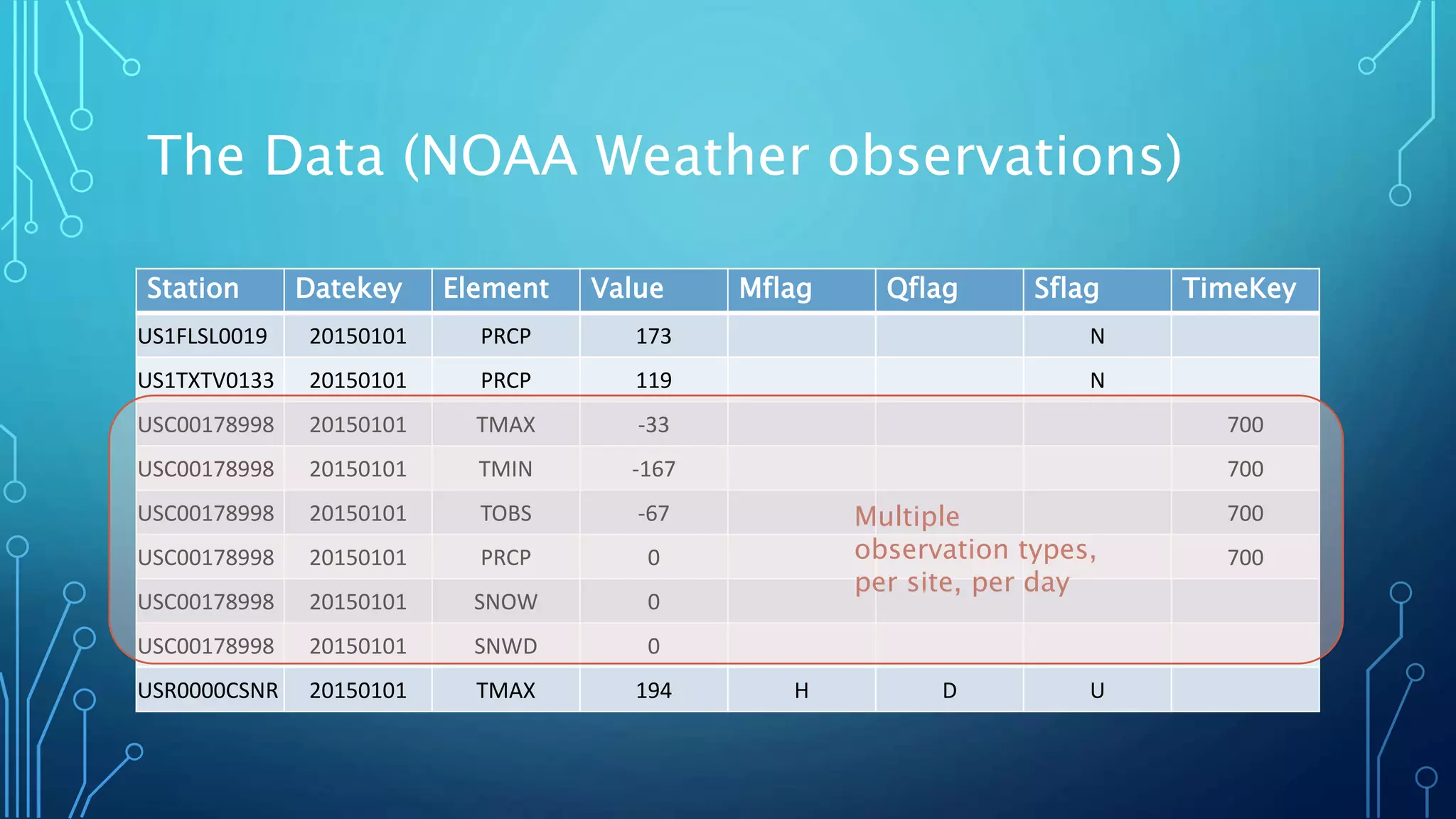

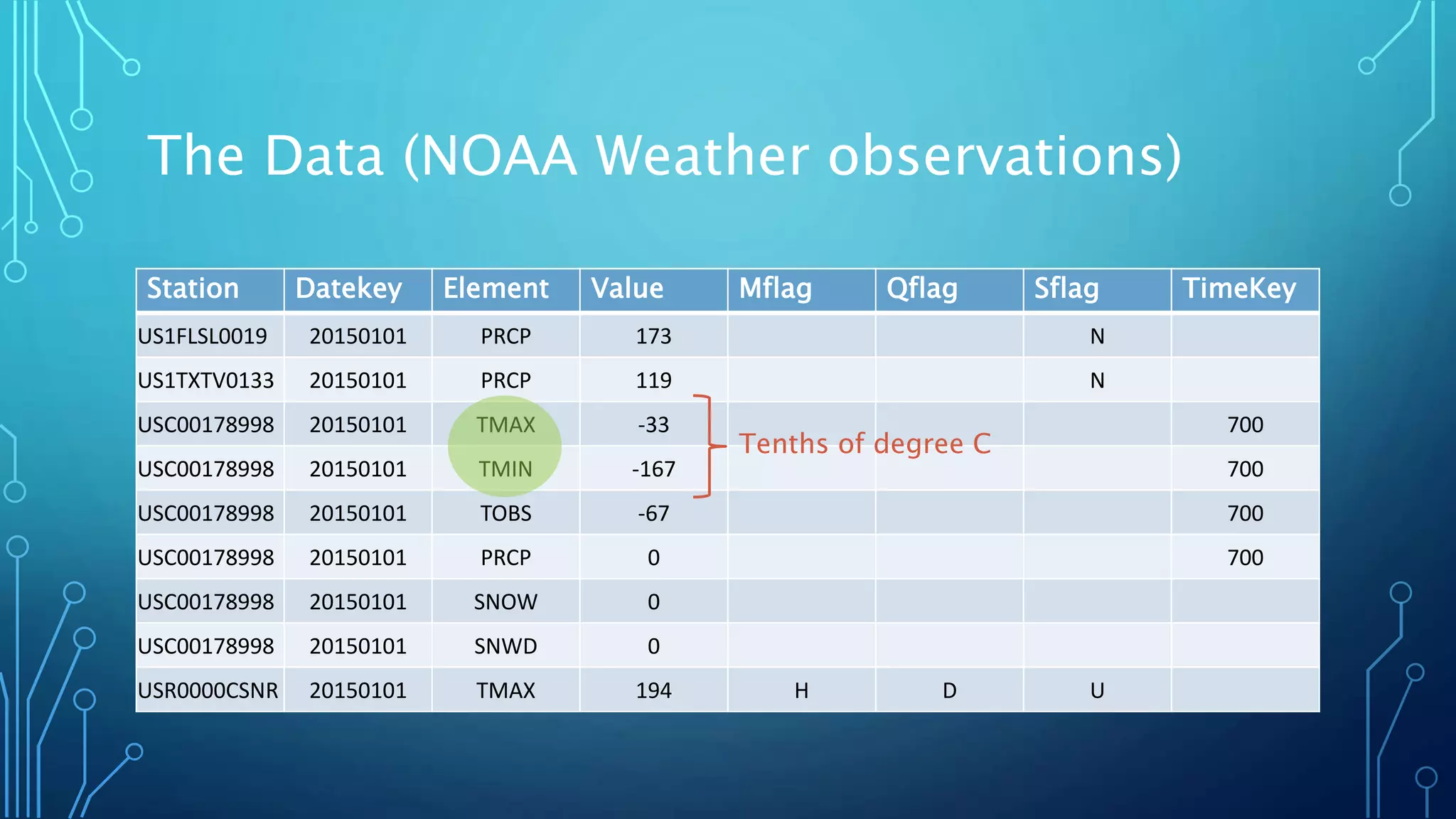

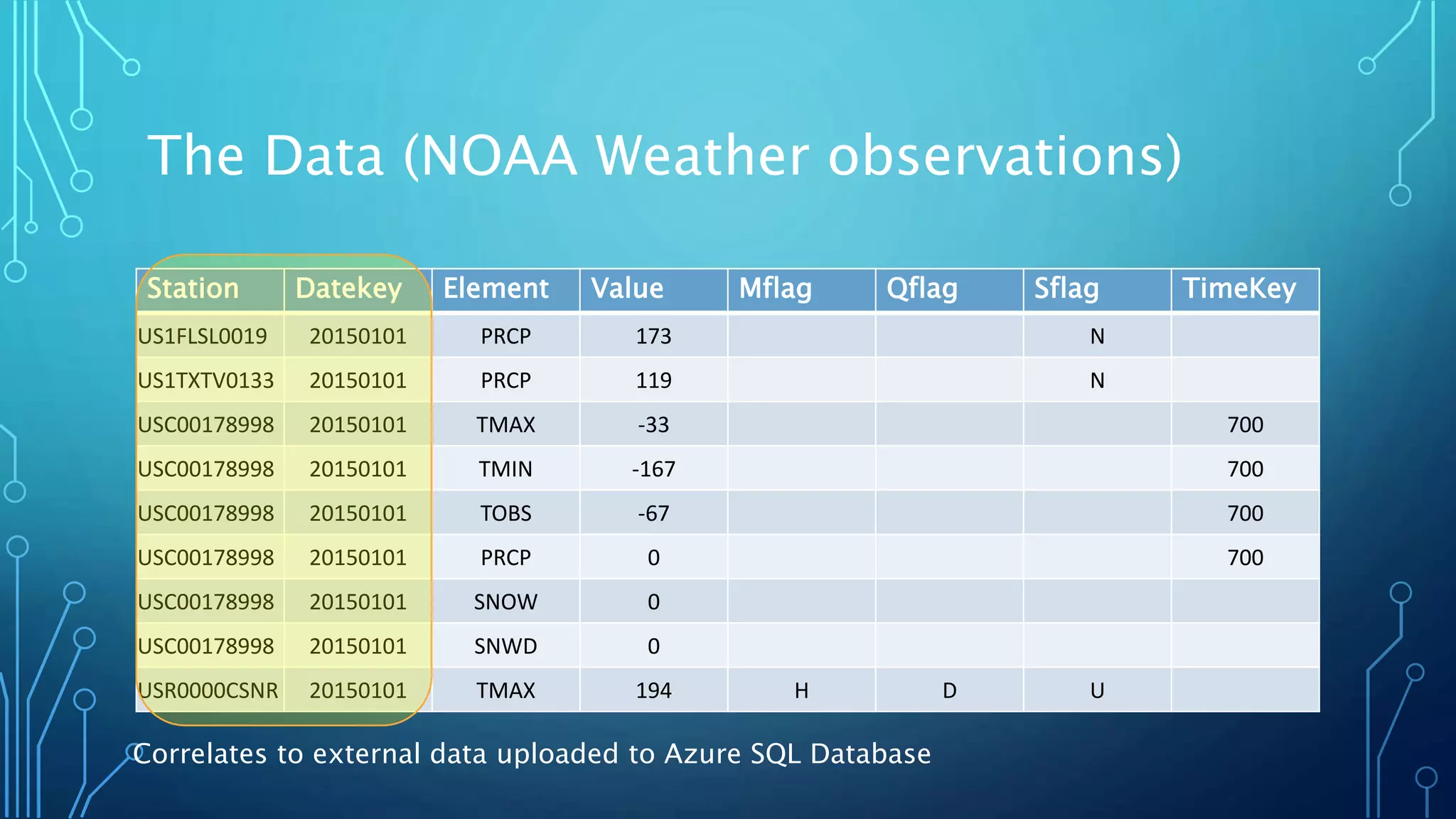

National Oceanic and Atmospheric Administration (NOAA)

README FILE FOR DAILY GLOBAL HISTORICAL CLIMATOLOGY NETWORK

(GHCN-DAILY) Version 3.22

How to cite:

Note that the GHCN-Daily dataset itself now has a DOI (Digital Object

Identifier)so it may be relevant to cite both the methods/overview journal

article as well as the specific version of the dataset used.

The journal article describing GHCN-Daily is:Menne, M.J., I. Durre, R.S.

Vose, B.E. Gleason, and T.G. Houston, 2012: An overview of the Global

Historical Climatology Network-Daily Database. Journal of Atmospheric

and Oceanic Technology, 29, 897-910, doi:10.1175/JTECH-D-11-

00103.1.

To acknowledge the specific version of the dataset used, please cite:Menne,

M.J., I. Durre, B. Korzeniewski, S. McNeal, K. Thomas, X. Yin, S. Anthony, R.

Ray, R.S. Vose, B.E.Gleason, and T.G. Houston, 2012: Global Historical

Climatology Network - Daily (GHCN-Daily), Version 3. [indicate subset used

following decimal, e.g. Version 3.12].

NOAA National Climatic Data Center. http://doi.org/10.7289/V5D21VHZ

[access date].](https://image.slidesharecdn.com/afirstlookatu-sqlonazuredata-split-160716154951/75/Hands-On-with-U-SQL-and-Azure-Data-Lake-Analytics-ADLA-45-2048.jpg)
