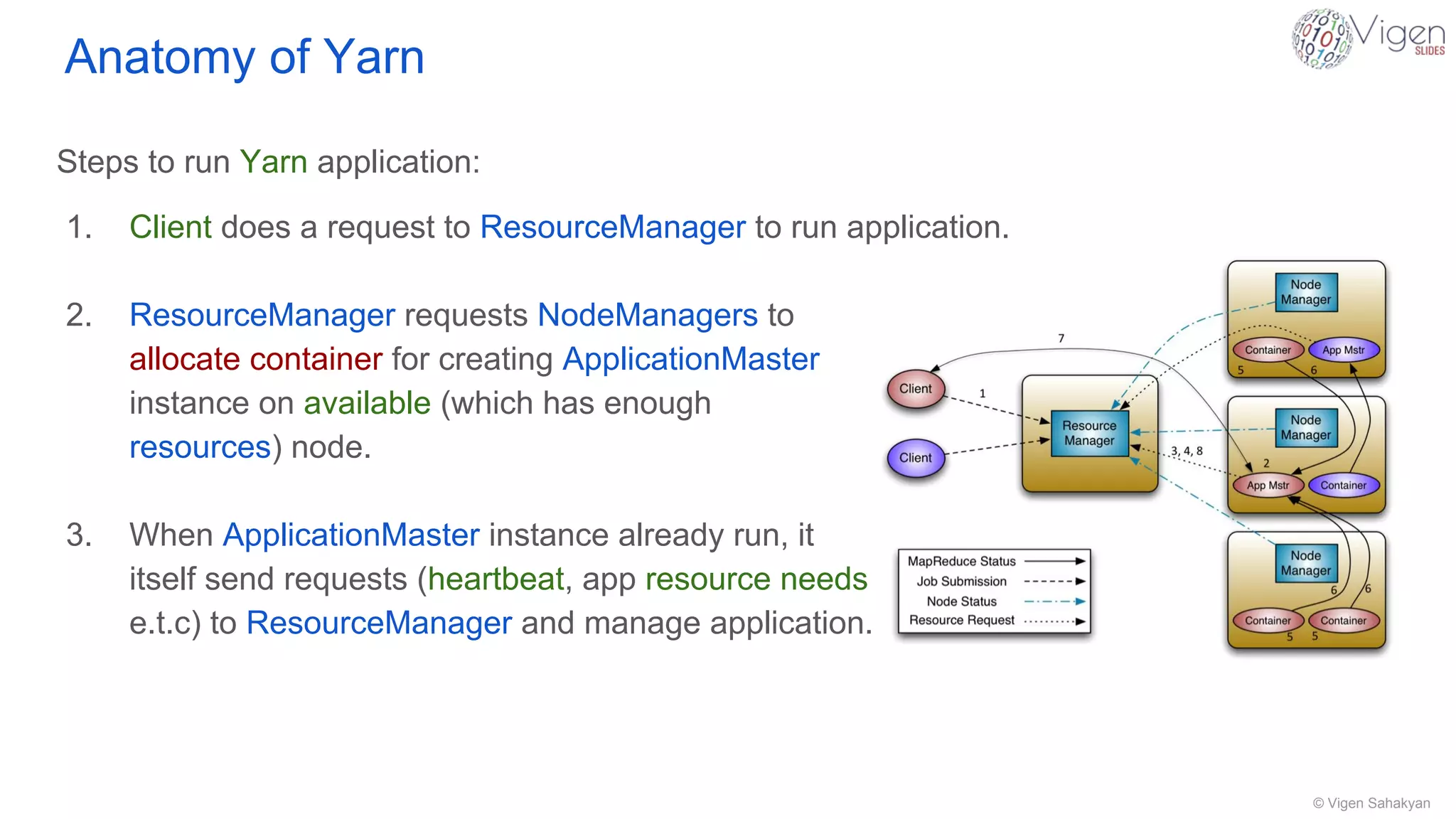

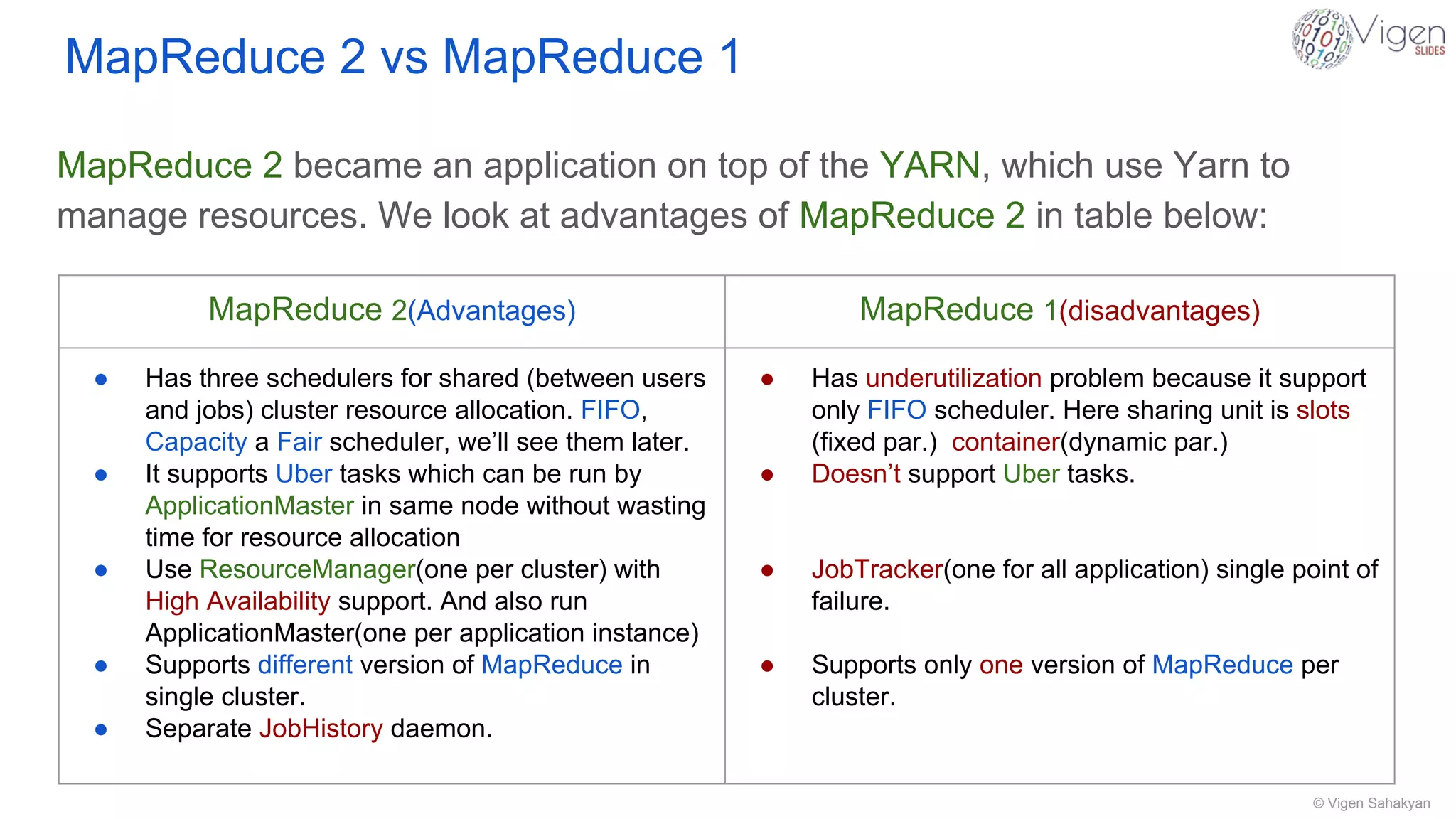

This document provides an overview of YARN (Yet Another Resource Negotiator), the resource management layer of Hadoop. It describes YARN's key components: the Resource Manager, the Node Manager, and the Application Master. The Resource Manager tracks cluster-wide resources and schedules applications, while a Node Manager on each node monitors that node and the containers running on it. Each application has its own Application Master, which negotiates containers with the Resource Manager and coordinates the application's tasks. By separating resource management from the MapReduce processing engine, YARN lets Hadoop run multiple kinds of applications, such as Spark and HBase, on the same cluster, improves on the scheduling of the original MapReduce framework, and turns Hadoop into something closer to a distributed operating system for big data processing.
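The division of labor between the three components can be illustrated with a small toy model. This is only a sketch and does not use the Hadoop YARN API: the class names mirror the components for readability, while the methods (`allocate`, `launch`, `request`), the memory-only resource model, and the first-fit placement rule are assumptions made for illustration. In real YARN the Application Master asks the Node Manager to start a granted container; here the Resource Manager does both steps to keep the example short.

```python
from dataclasses import dataclass, field


@dataclass
class NodeManager:
    """Toy per-node agent: tracks free memory and the containers it runs."""
    name: str
    free_mb: int
    containers: list = field(default_factory=list)

    def launch(self, app_id: str, mb: int) -> str:
        # Reserve memory on this node and record the new container.
        self.free_mb -= mb
        container_id = f"{self.name}-c{len(self.containers)}"
        self.containers.append((container_id, app_id, mb))
        return container_id


class ResourceManager:
    """Toy cluster-wide scheduler (hypothetical first-fit policy)."""

    def __init__(self, nodes):
        self.nodes = nodes

    def allocate(self, app_id: str, mb: int):
        # Place the container on the first node with enough free memory.
        for node in self.nodes:
            if node.free_mb >= mb:
                return node.launch(app_id, mb)
        return None  # no node has capacity right now


class ApplicationMaster:
    """Toy per-application coordinator that requests containers."""

    def __init__(self, app_id: str, rm: ResourceManager):
        self.app_id = app_id
        self.rm = rm
        self.granted = []

    def request(self, count: int, mb: int):
        # Ask for `count` containers; keep only the ones that were granted.
        for _ in range(count):
            container_id = self.rm.allocate(self.app_id, mb)
            if container_id is not None:
                self.granted.append(container_id)
        return self.granted


# Two different applications share one two-node cluster.
nodes = [NodeManager("node1", 4096), NodeManager("node2", 2048)]
rm = ResourceManager(nodes)
spark_am = ApplicationMaster("spark-job", rm)
mr_am = ApplicationMaster("mapreduce-job", rm)

spark_granted = spark_am.request(count=3, mb=1024)
mr_granted = mr_am.request(count=4, mb=1024)  # the fourth request does not fit

print(spark_granted)
print(mr_granted)
```

In this sketch the second application receives only three of its four requested containers because the cluster runs out of memory, which is the kind of contention the Resource Manager's scheduler exists to arbitrate.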