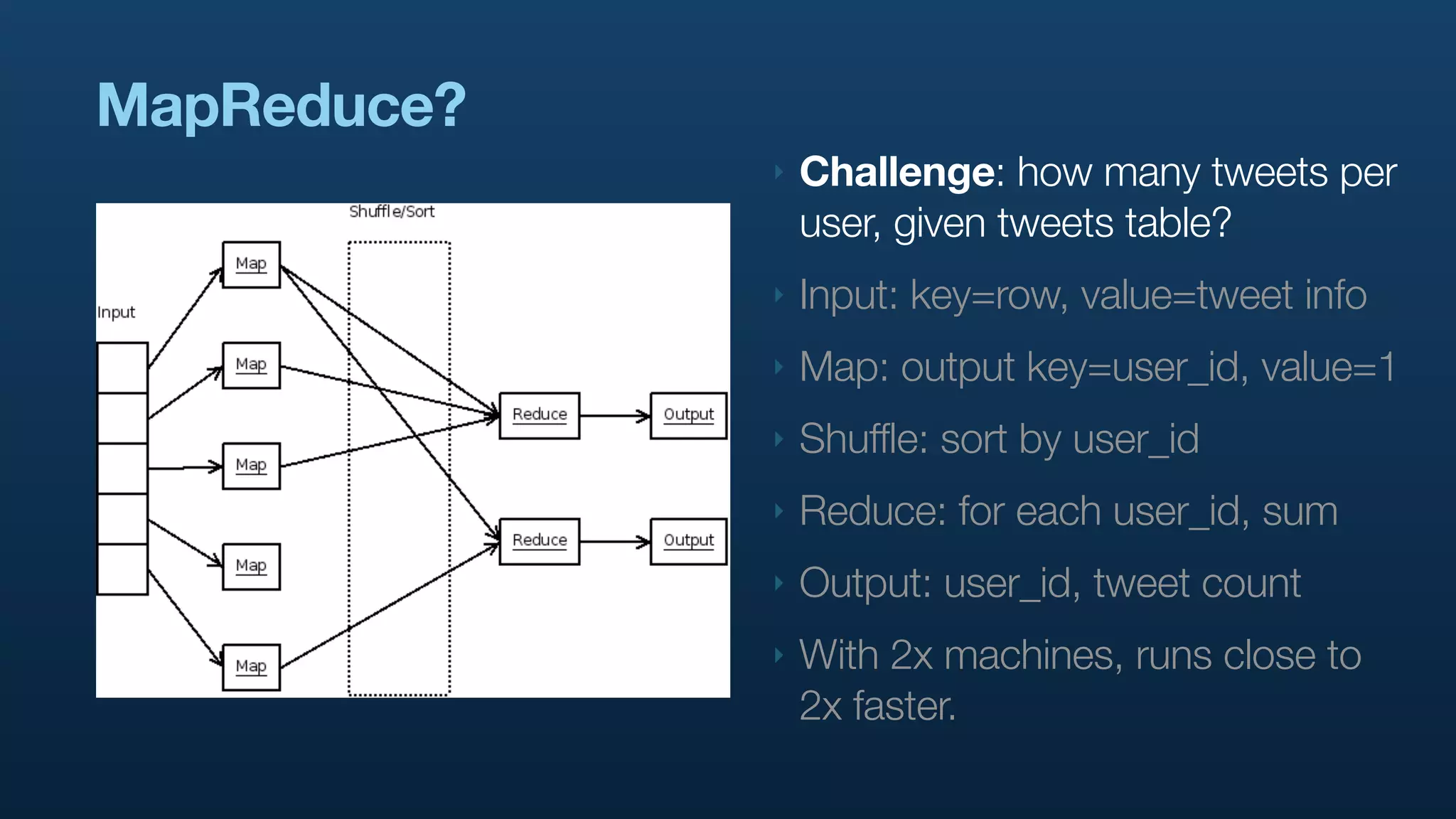

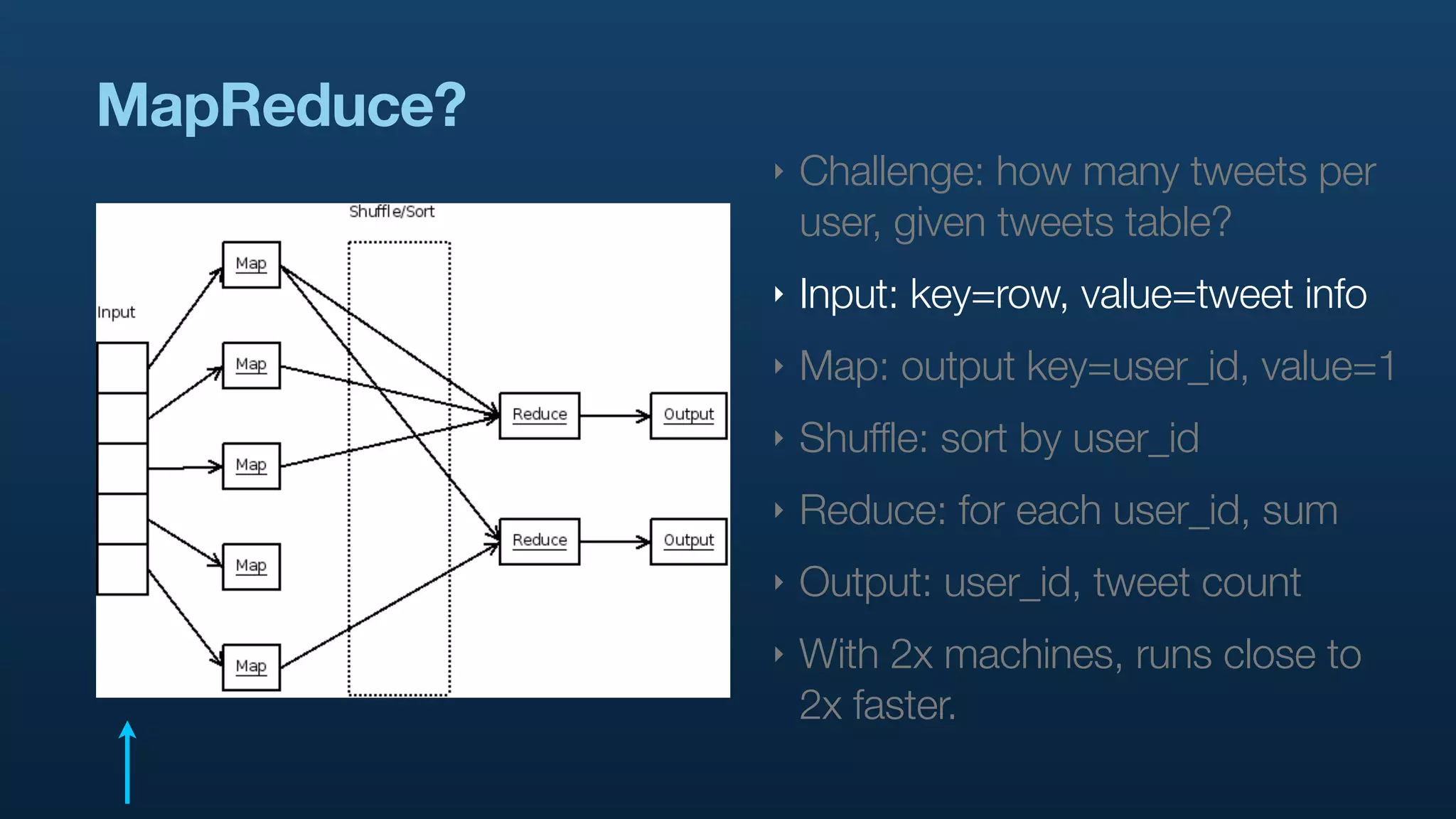

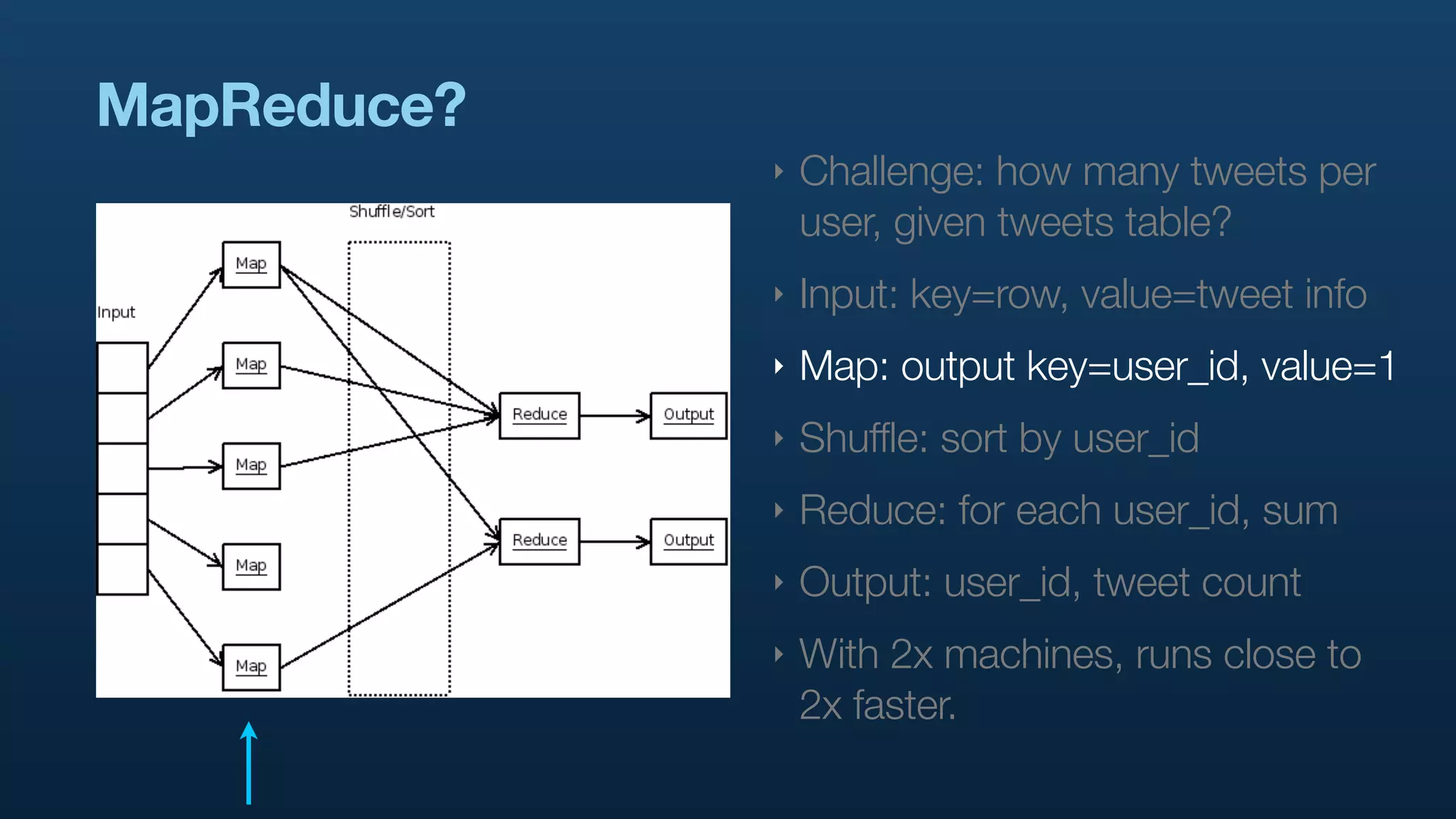

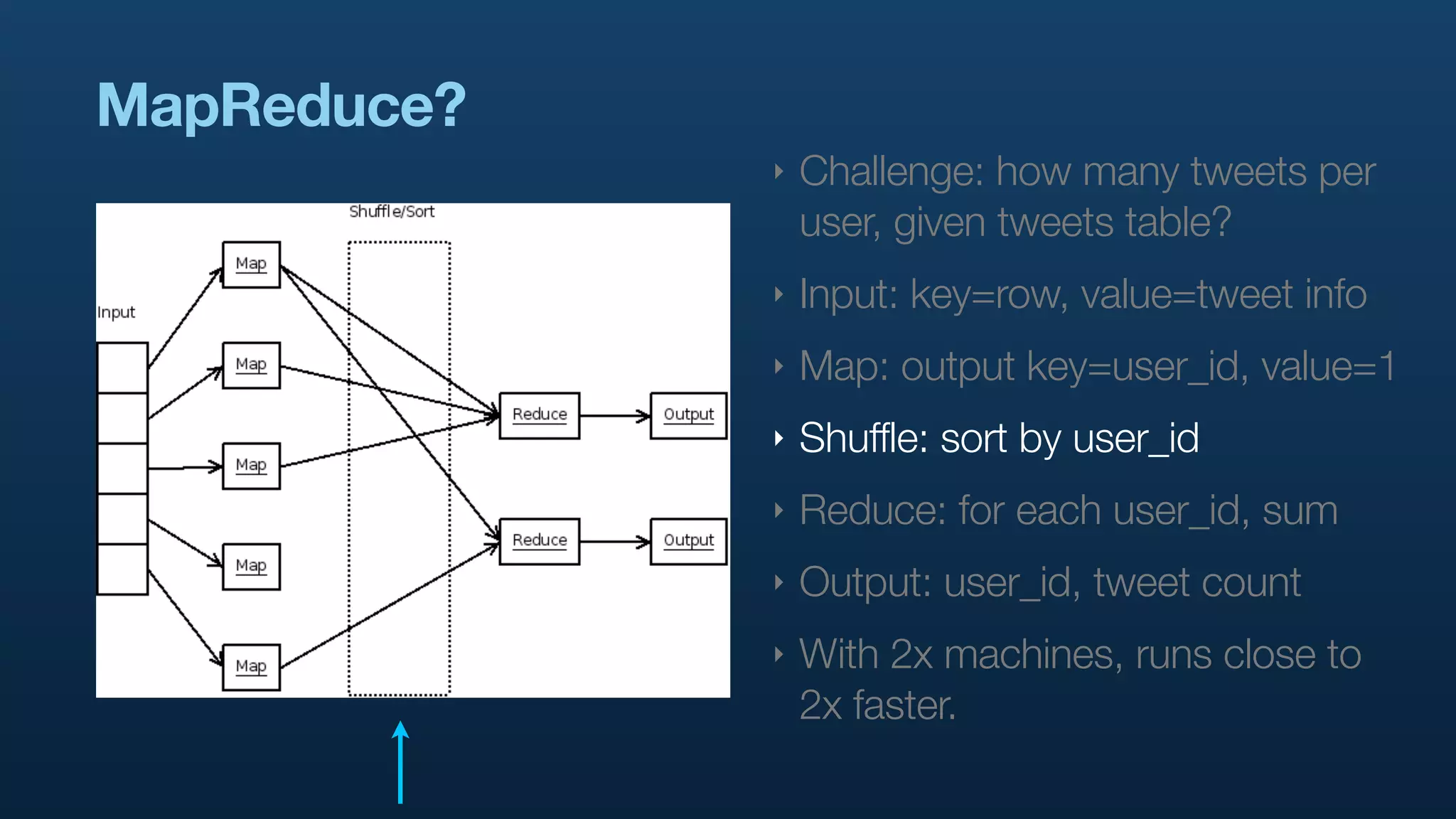

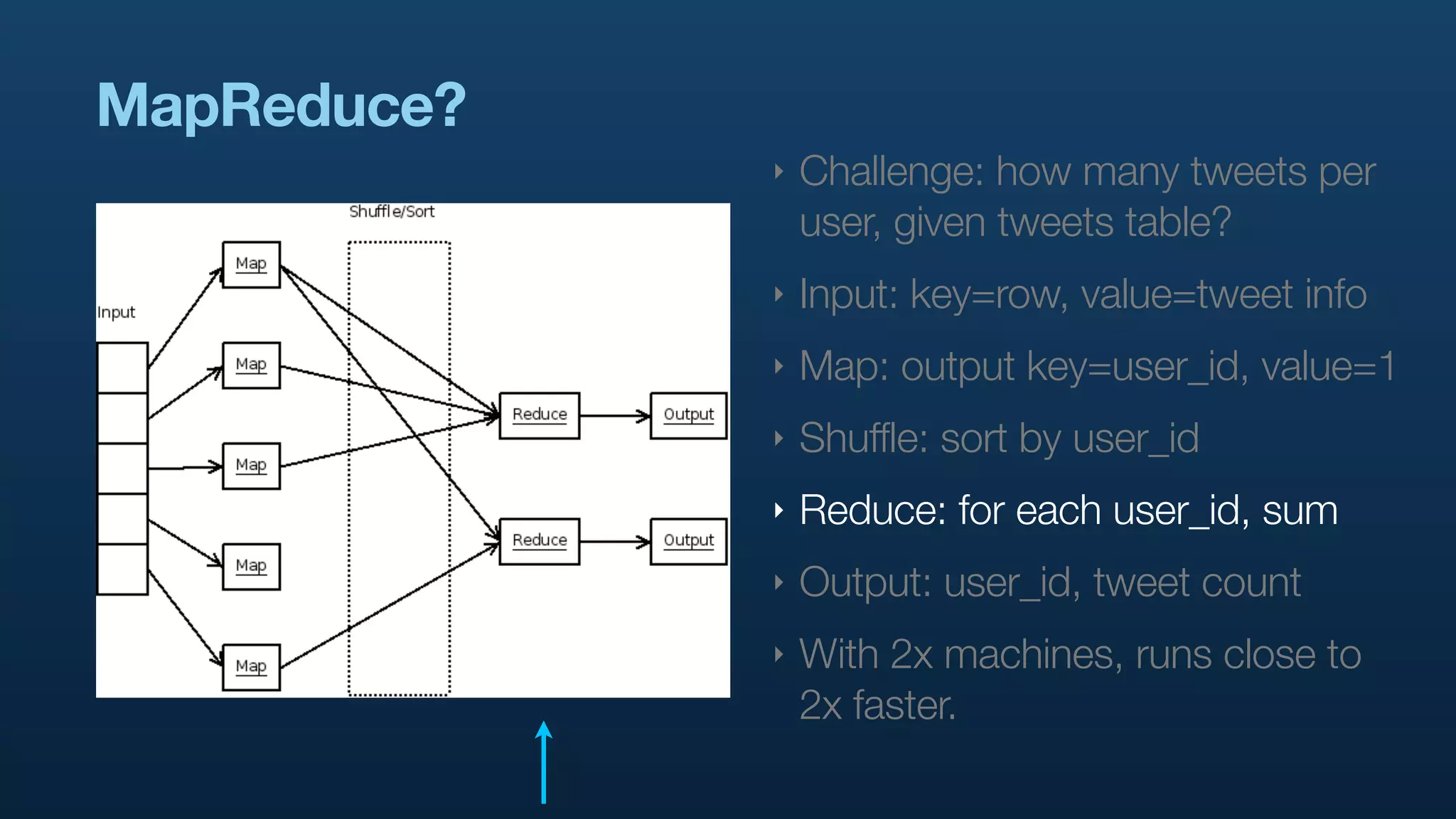

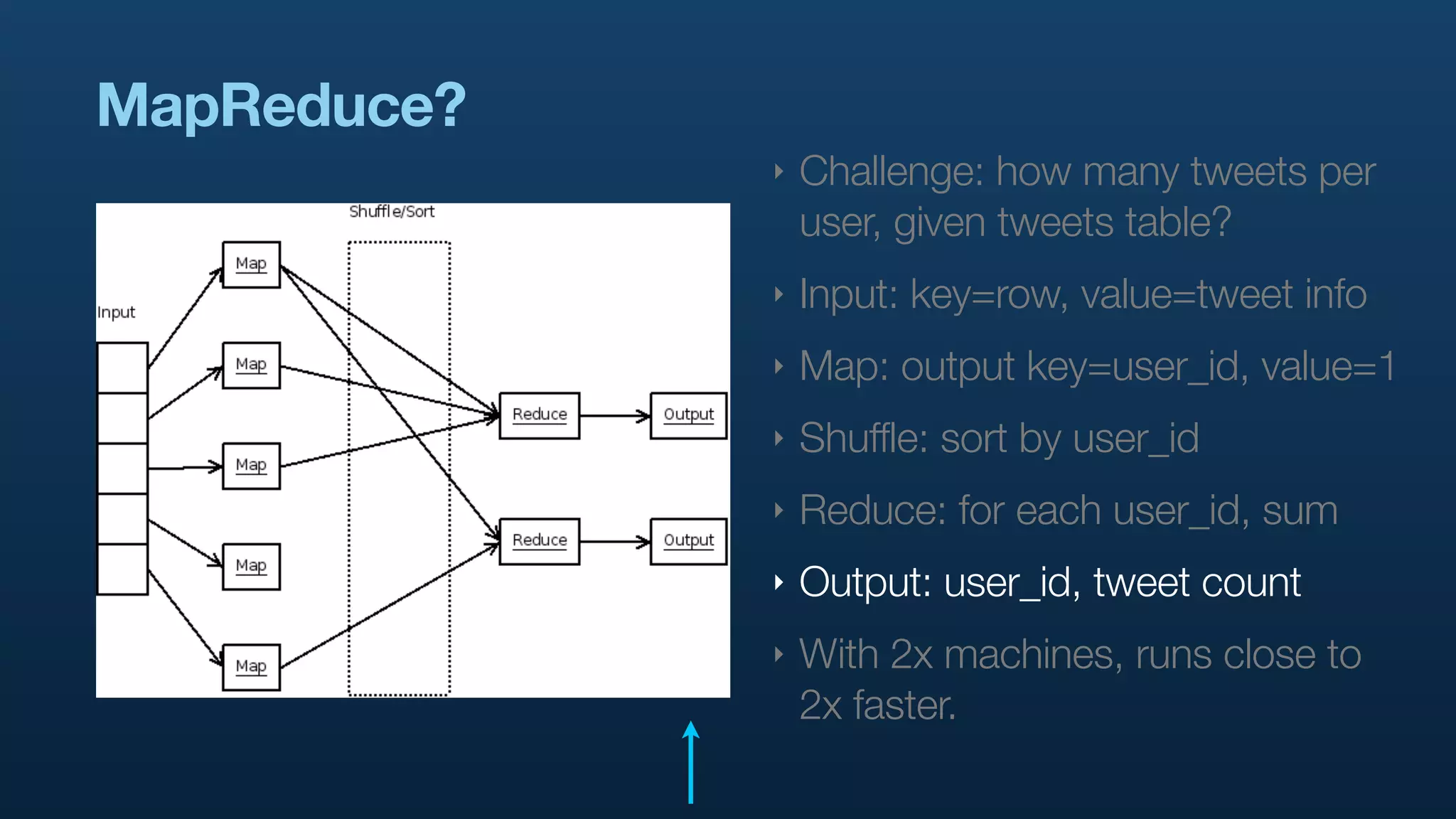

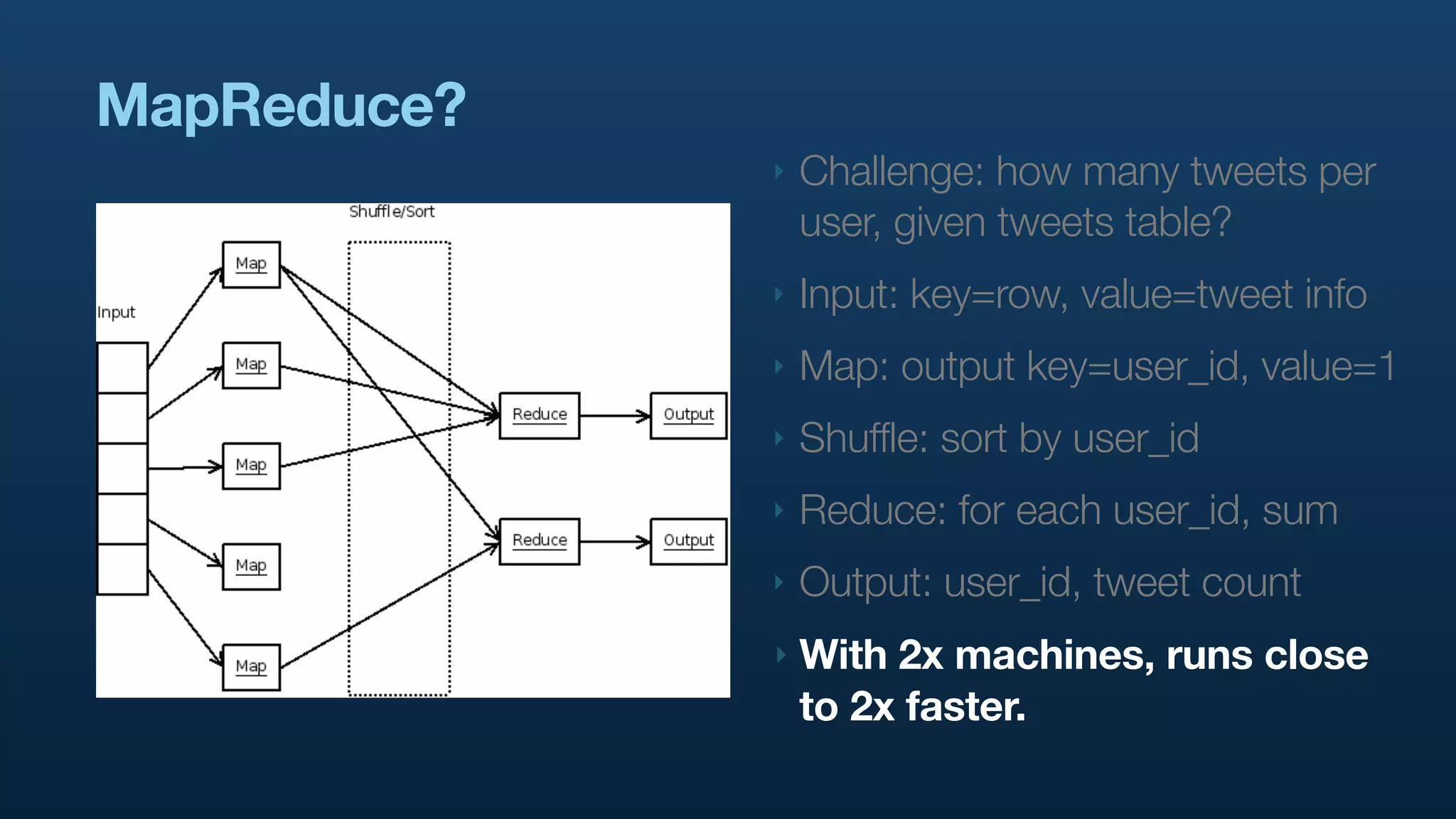

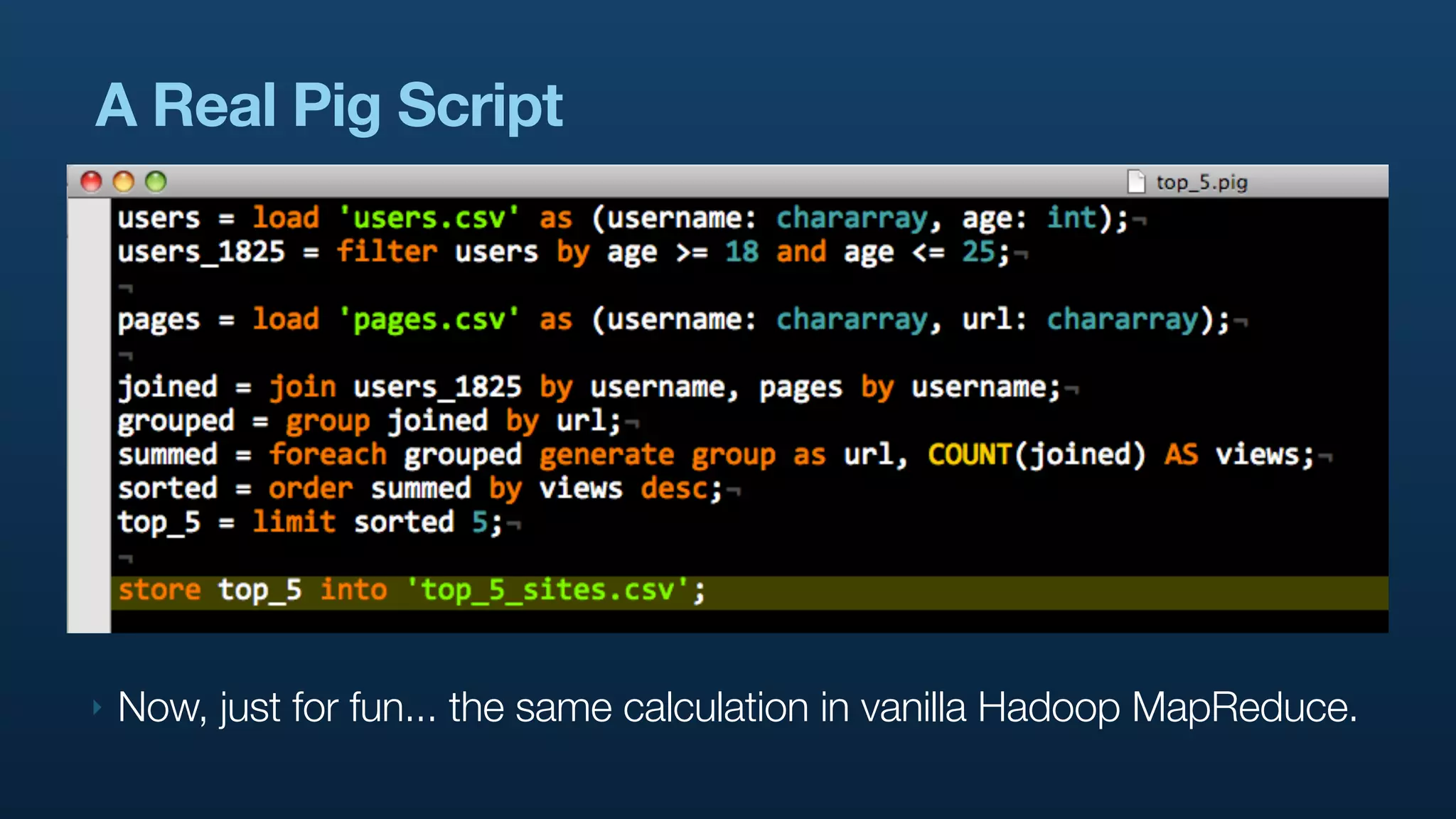

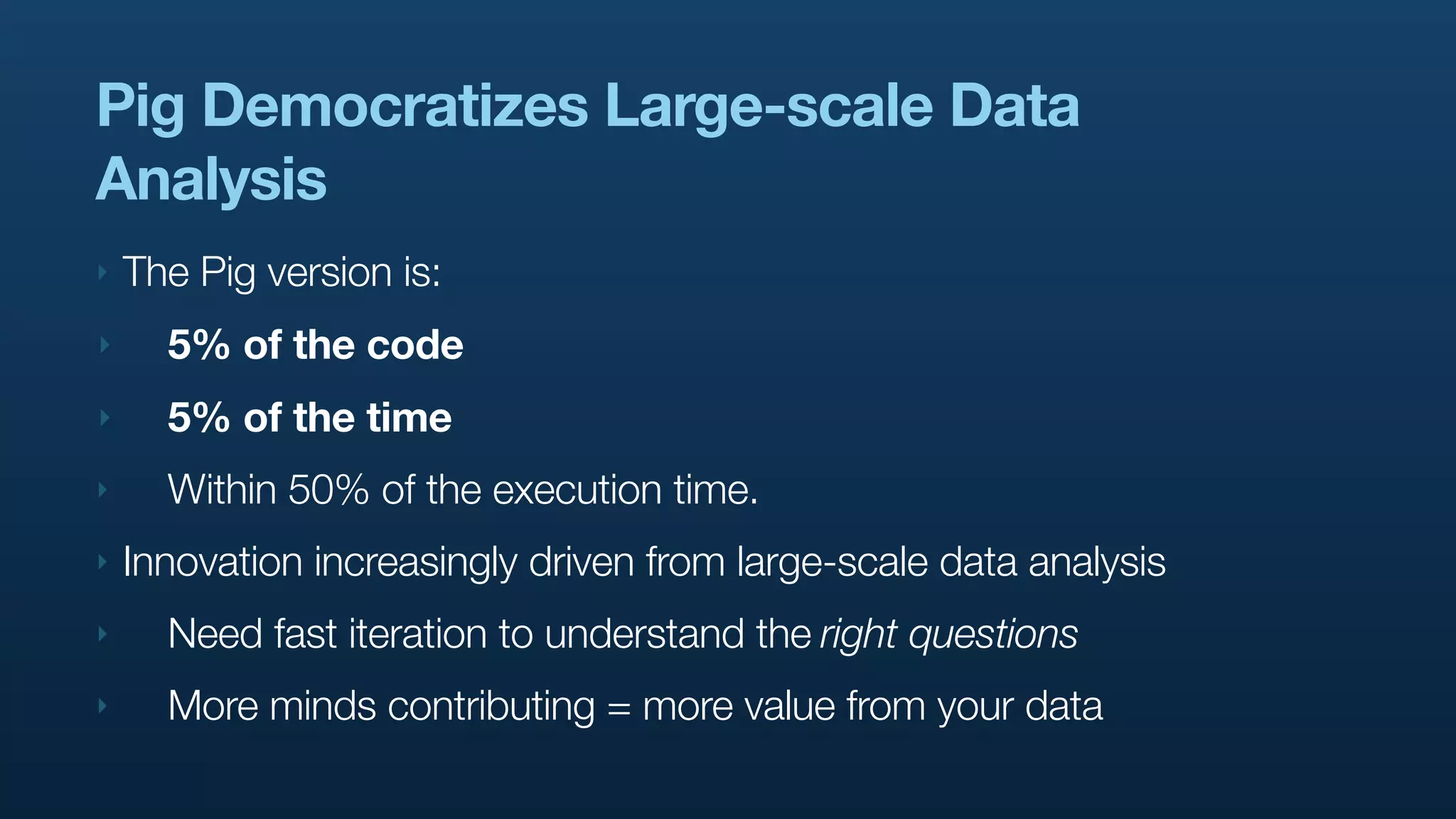

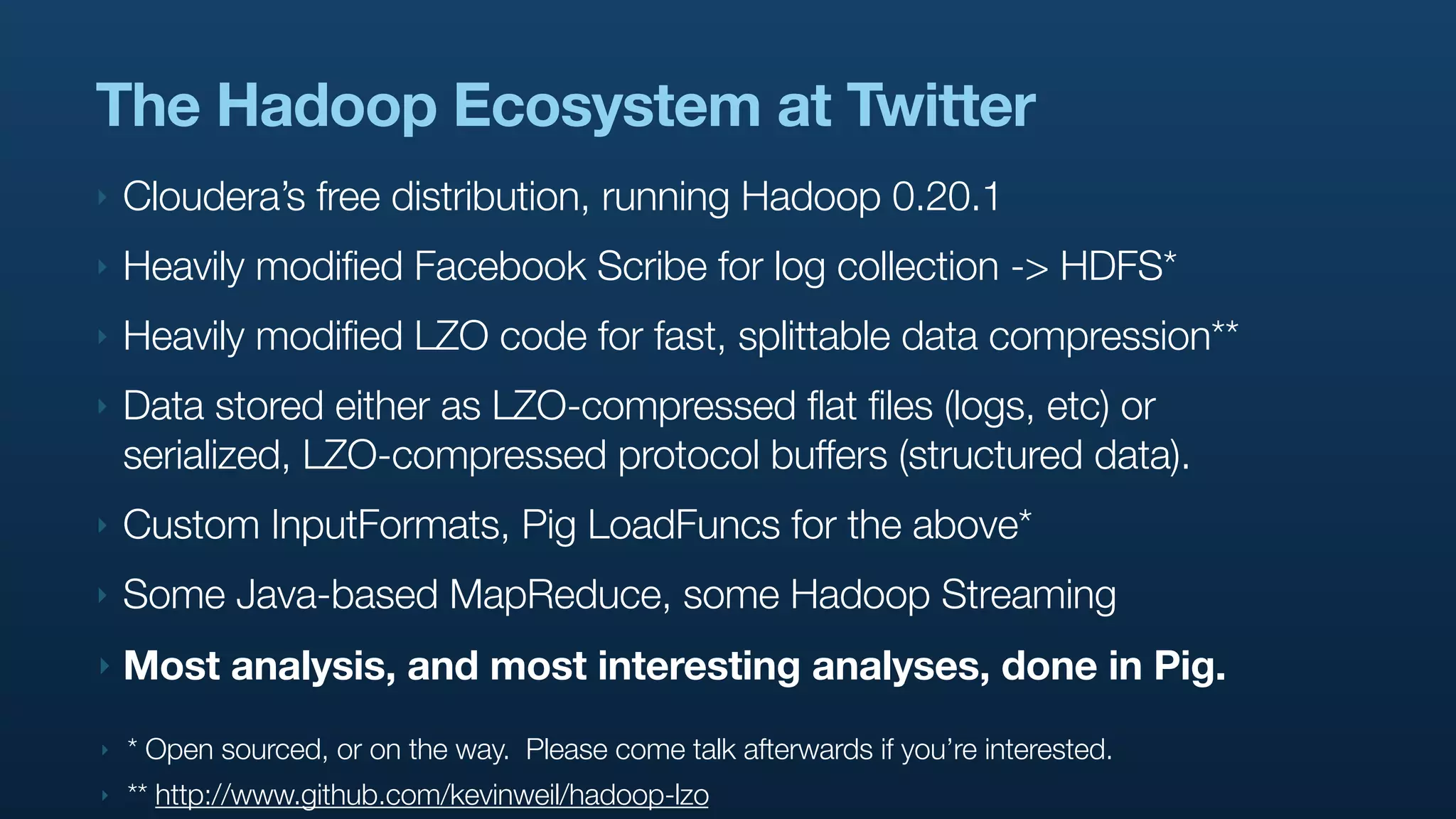

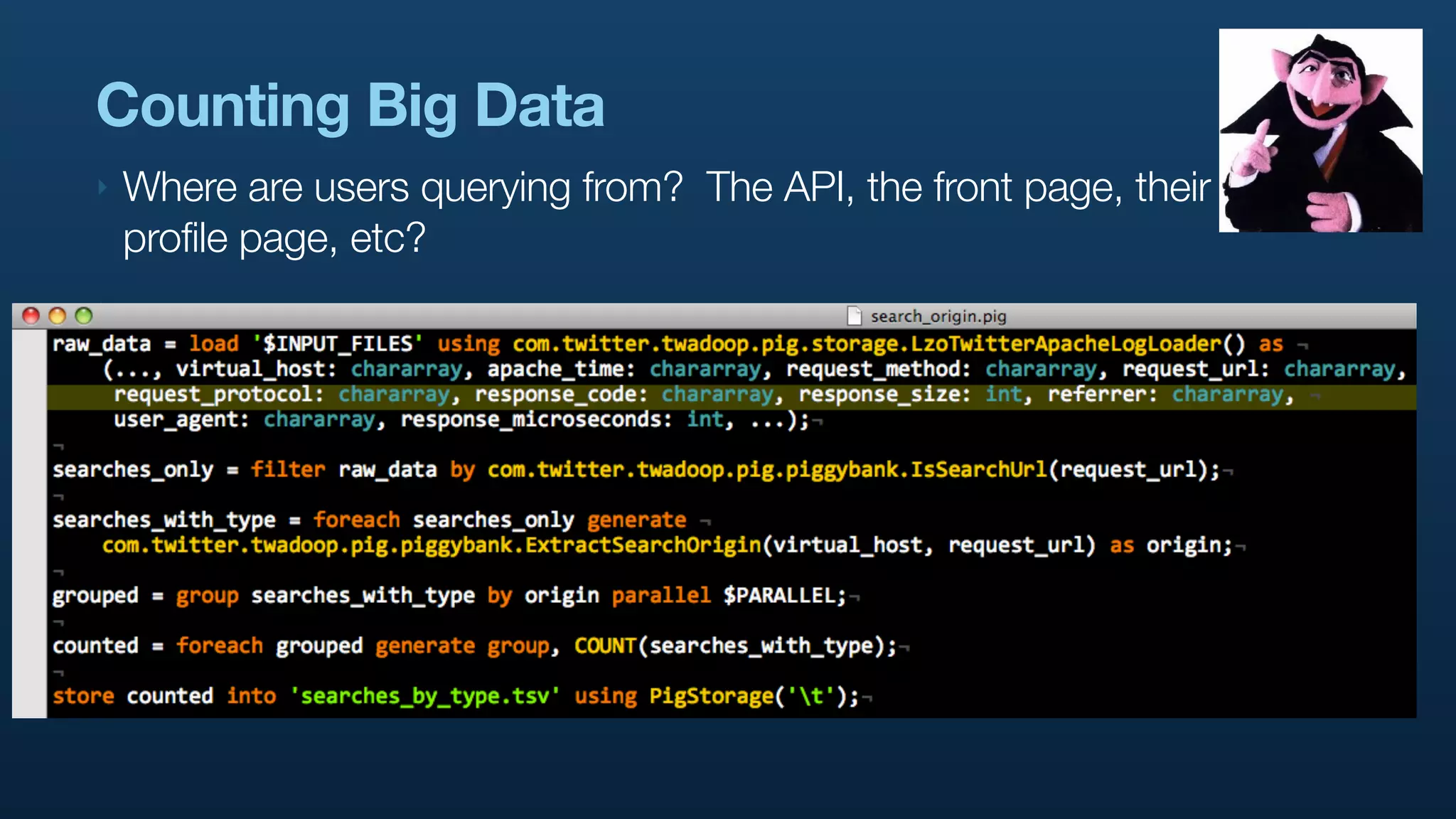

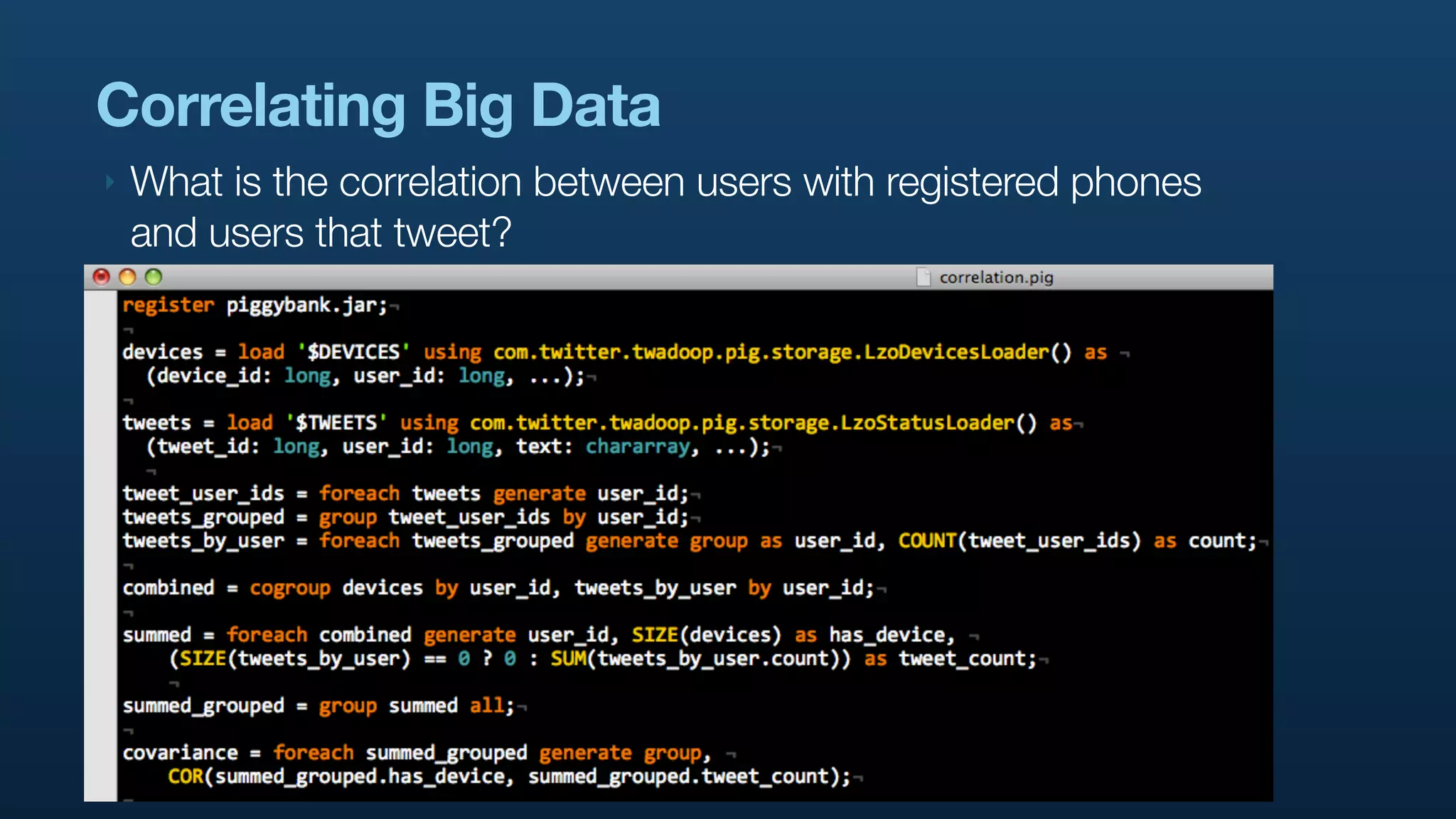

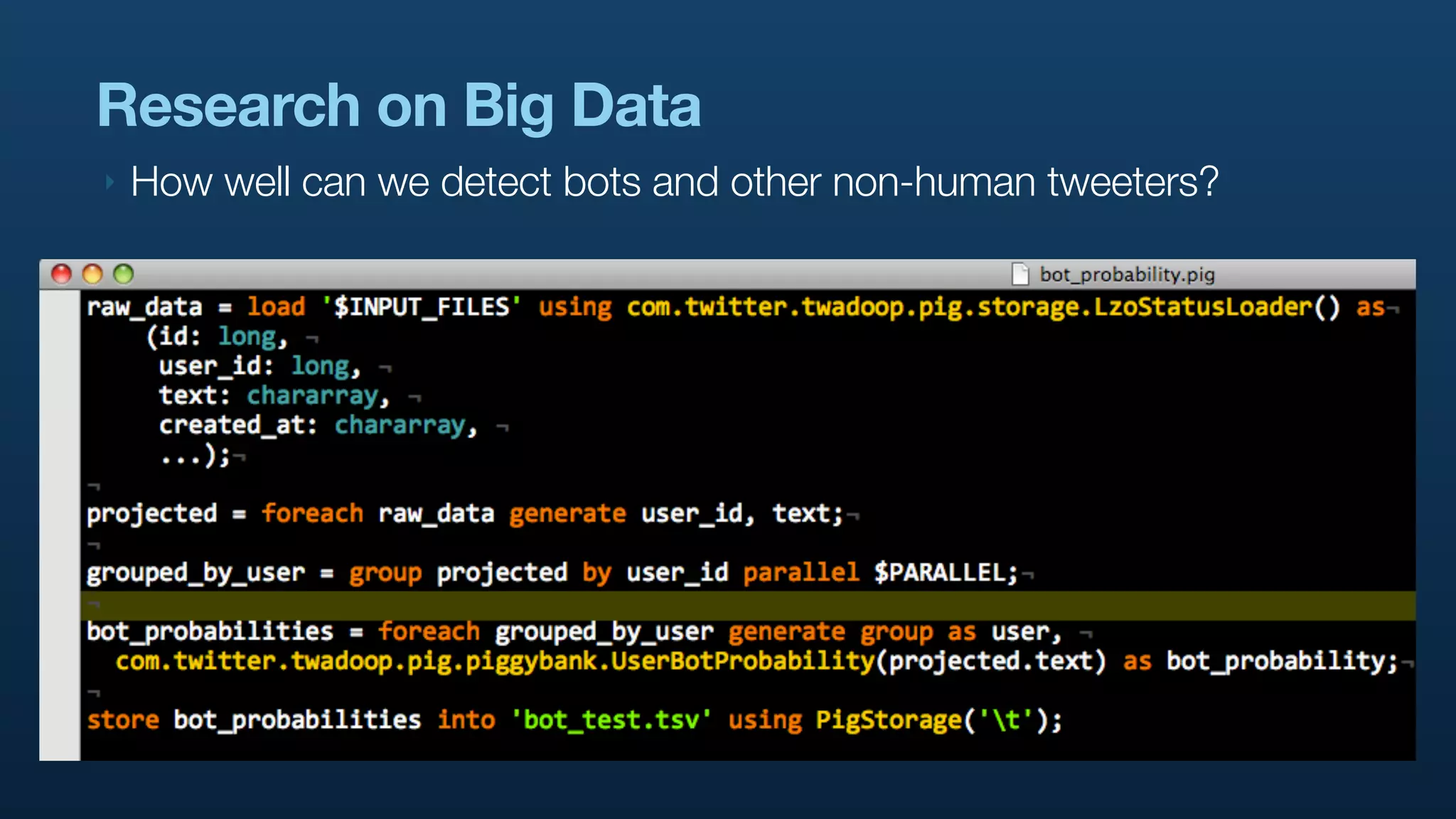

This talk discusses the use of Hadoop and Pig for large-scale data processing at Twitter, highlighting the rapid growth of data and the need for efficient analytics. It emphasizes the advantages of Pig over traditional MapReduce: Pig simplifies complex data tasks and enables faster iteration and analysis. The talk concludes with insights on how Pig democratizes data analysis and on the importance of machine learning and research on big data.