This document discusses GStreamer support and integration in WebKit. It covers:

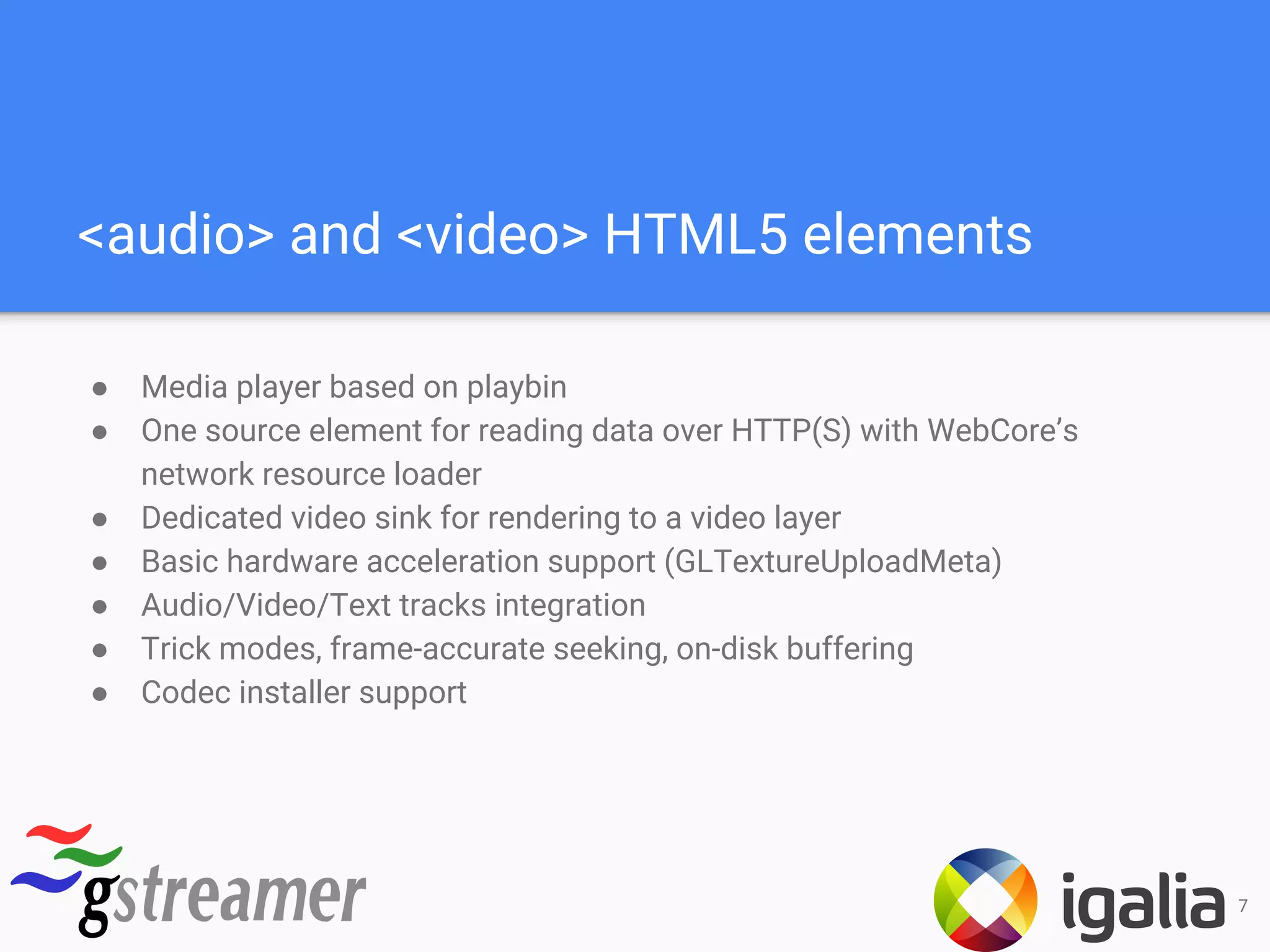

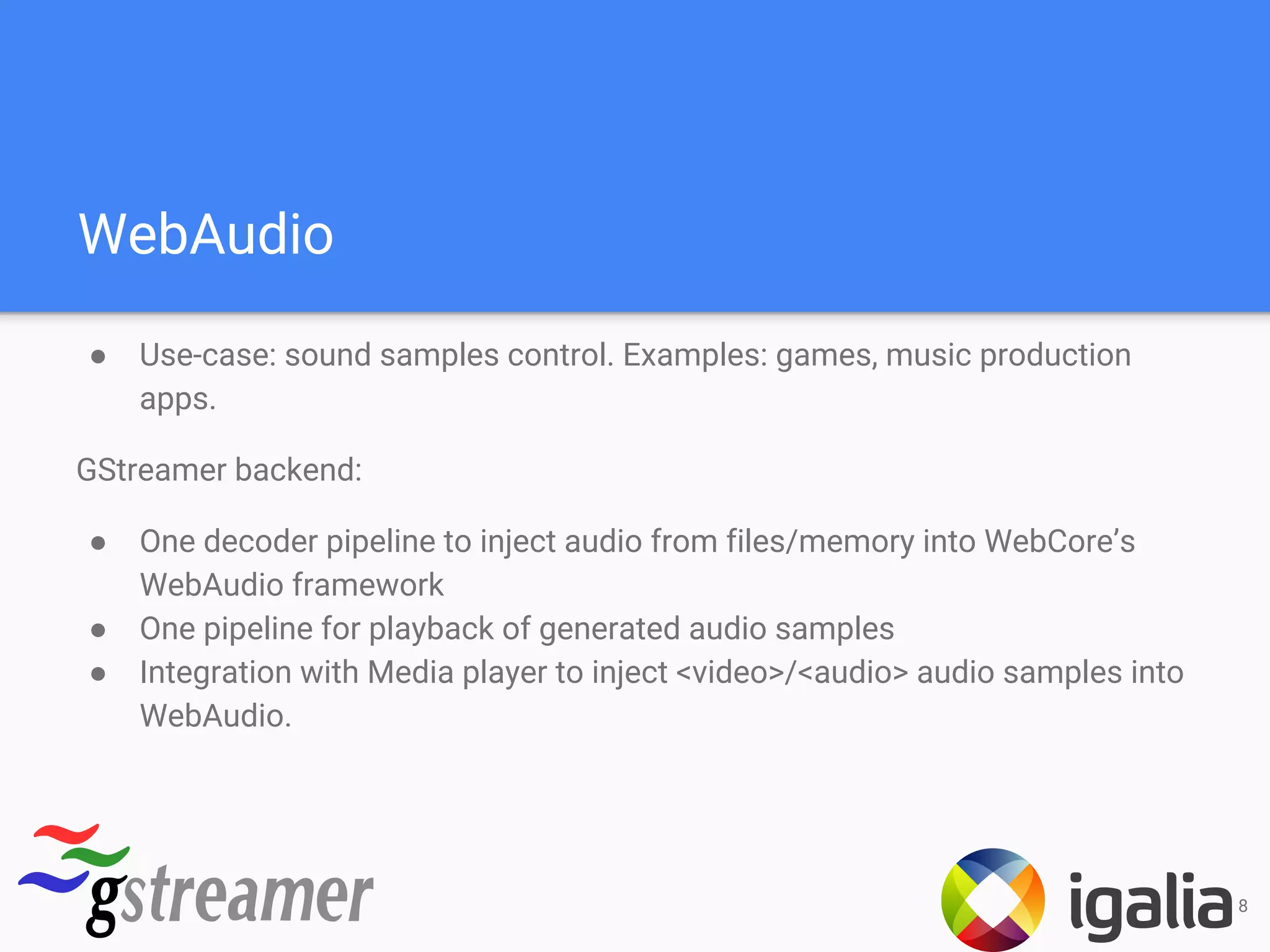

1. The current integration of GStreamer for HTML5 audio and video playback, as well as WebAudio.

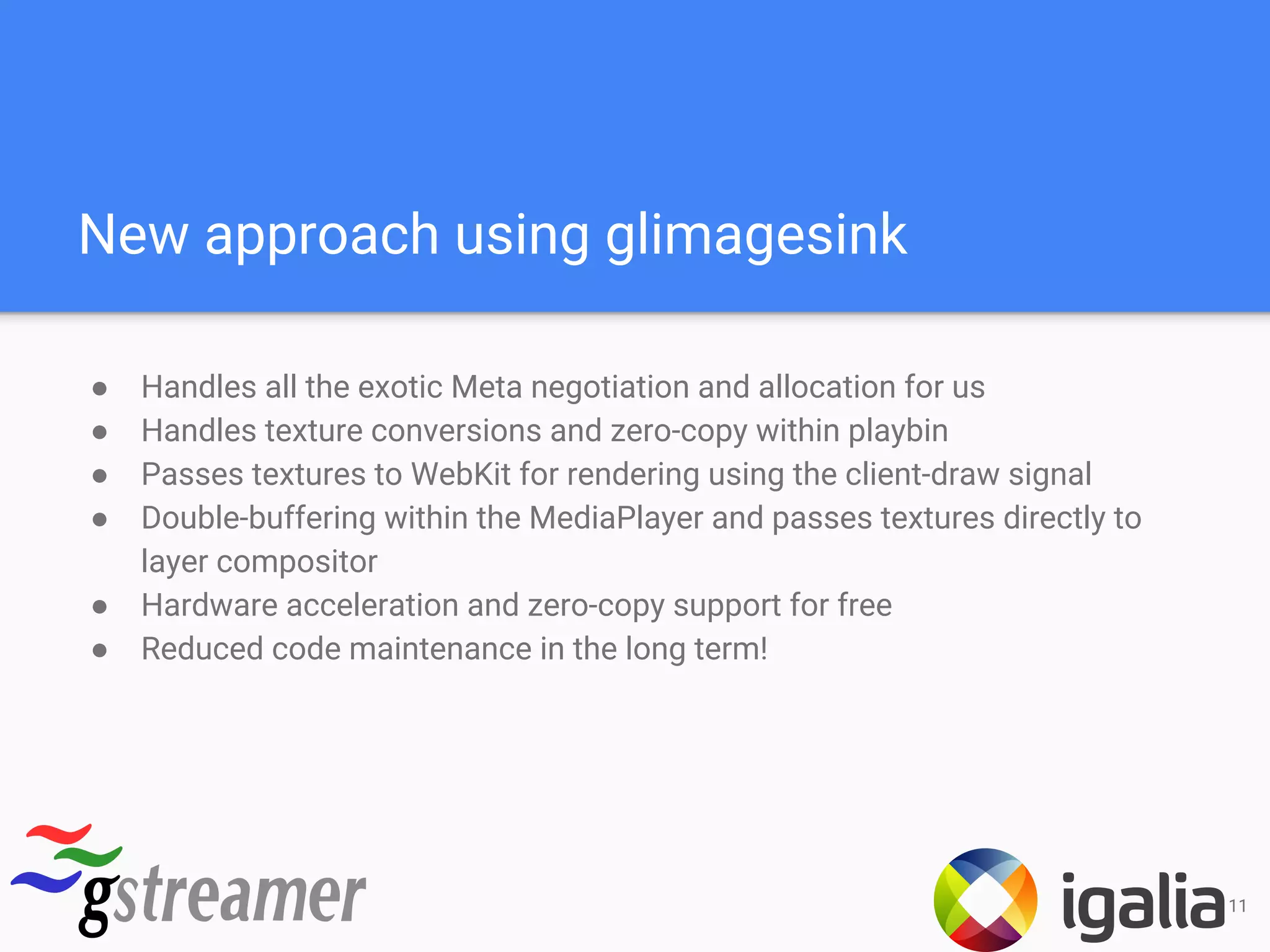

2. Plans for next-generation video rendering using GstGL to leverage hardware acceleration.

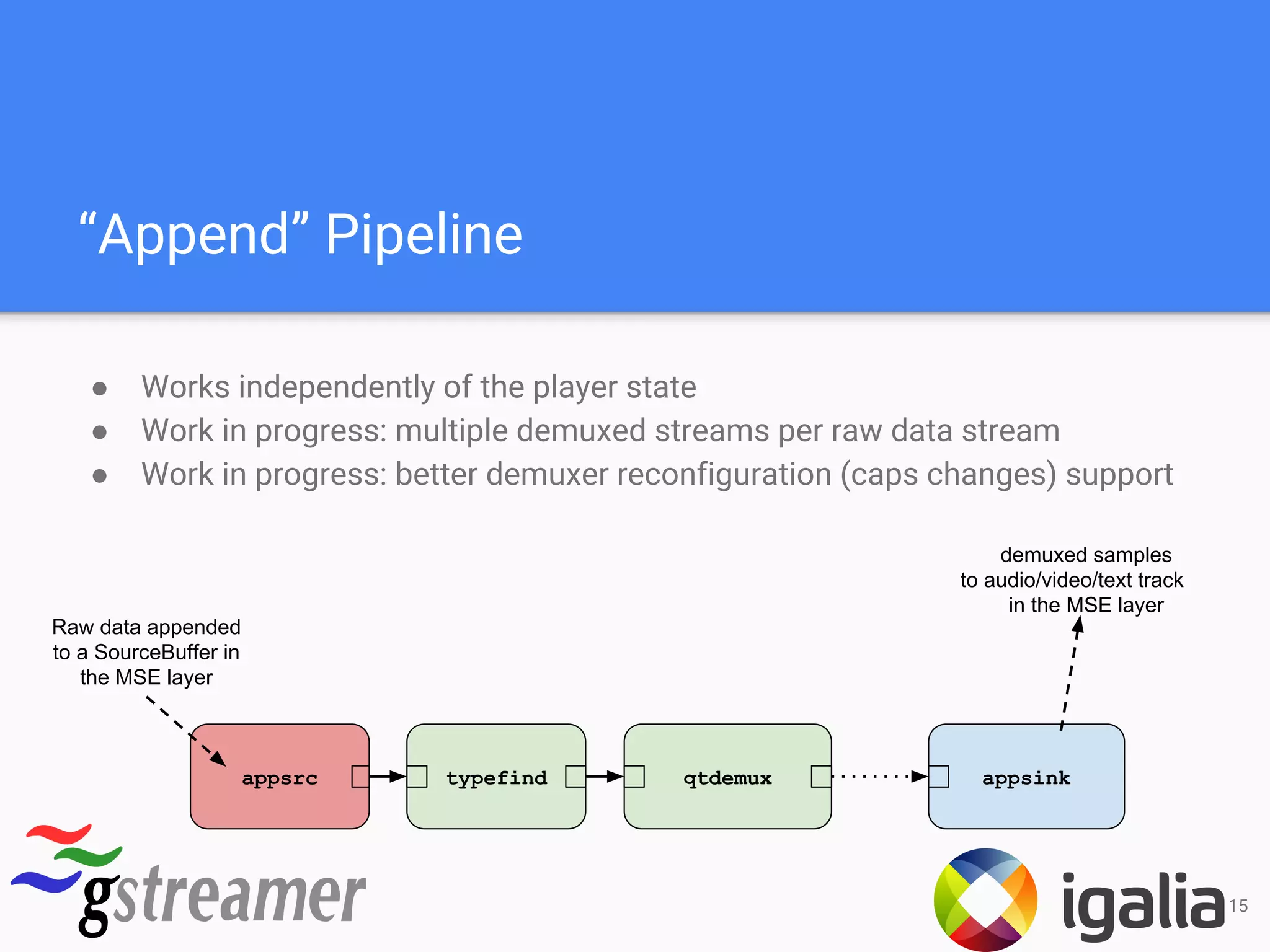

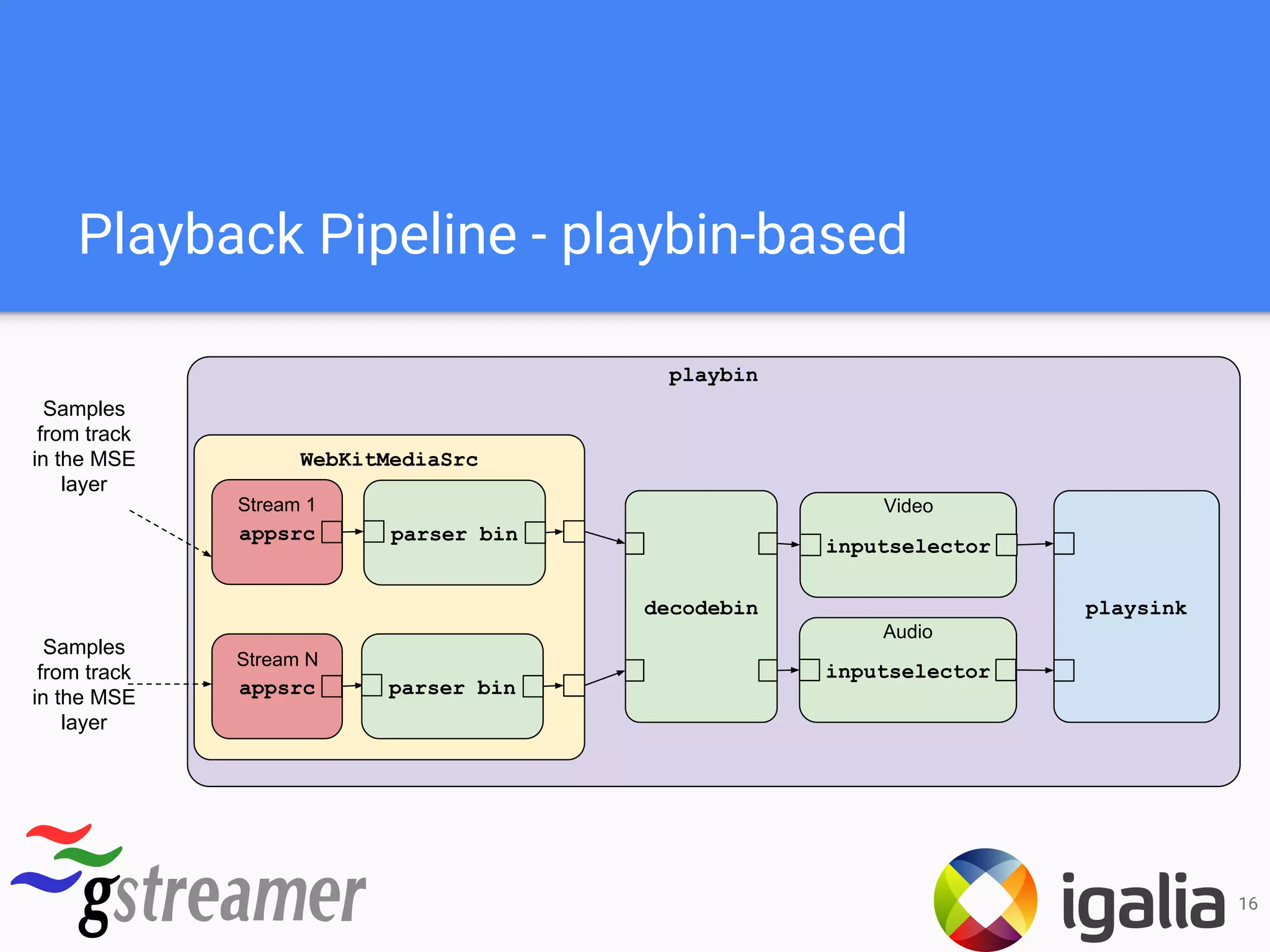

3. Support for adaptive streaming using Media Source Extensions and DASH playback via GStreamer.

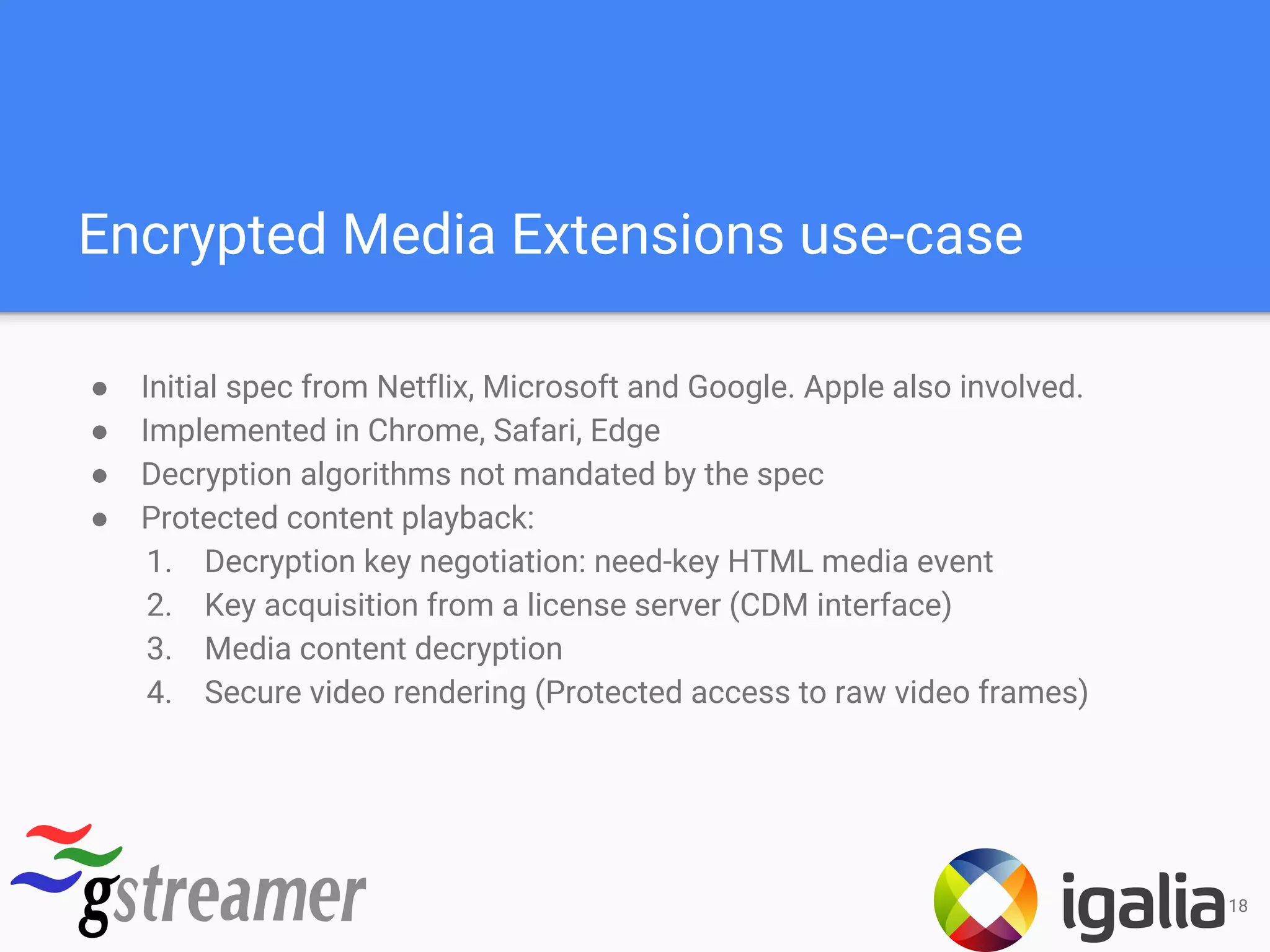

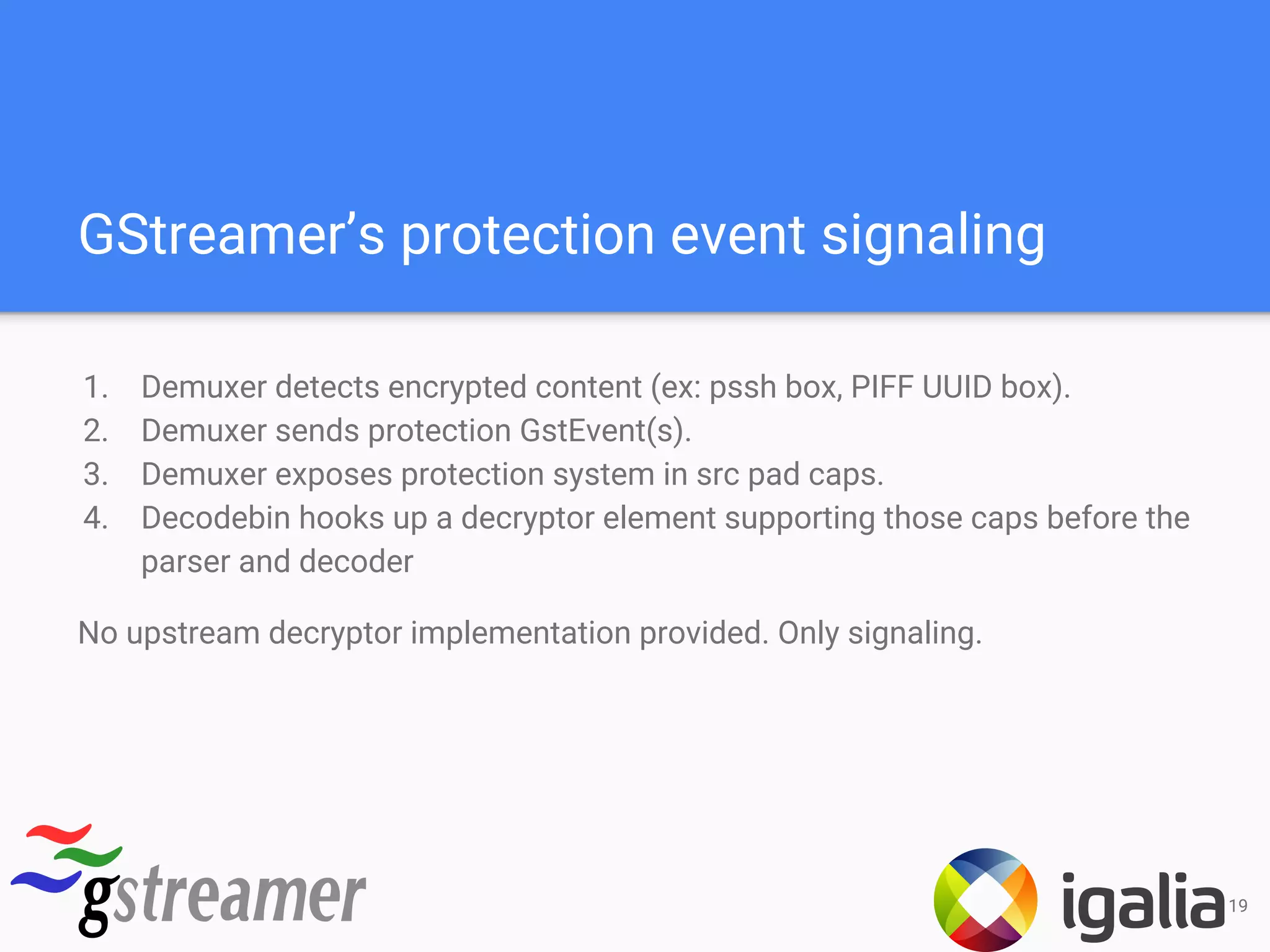

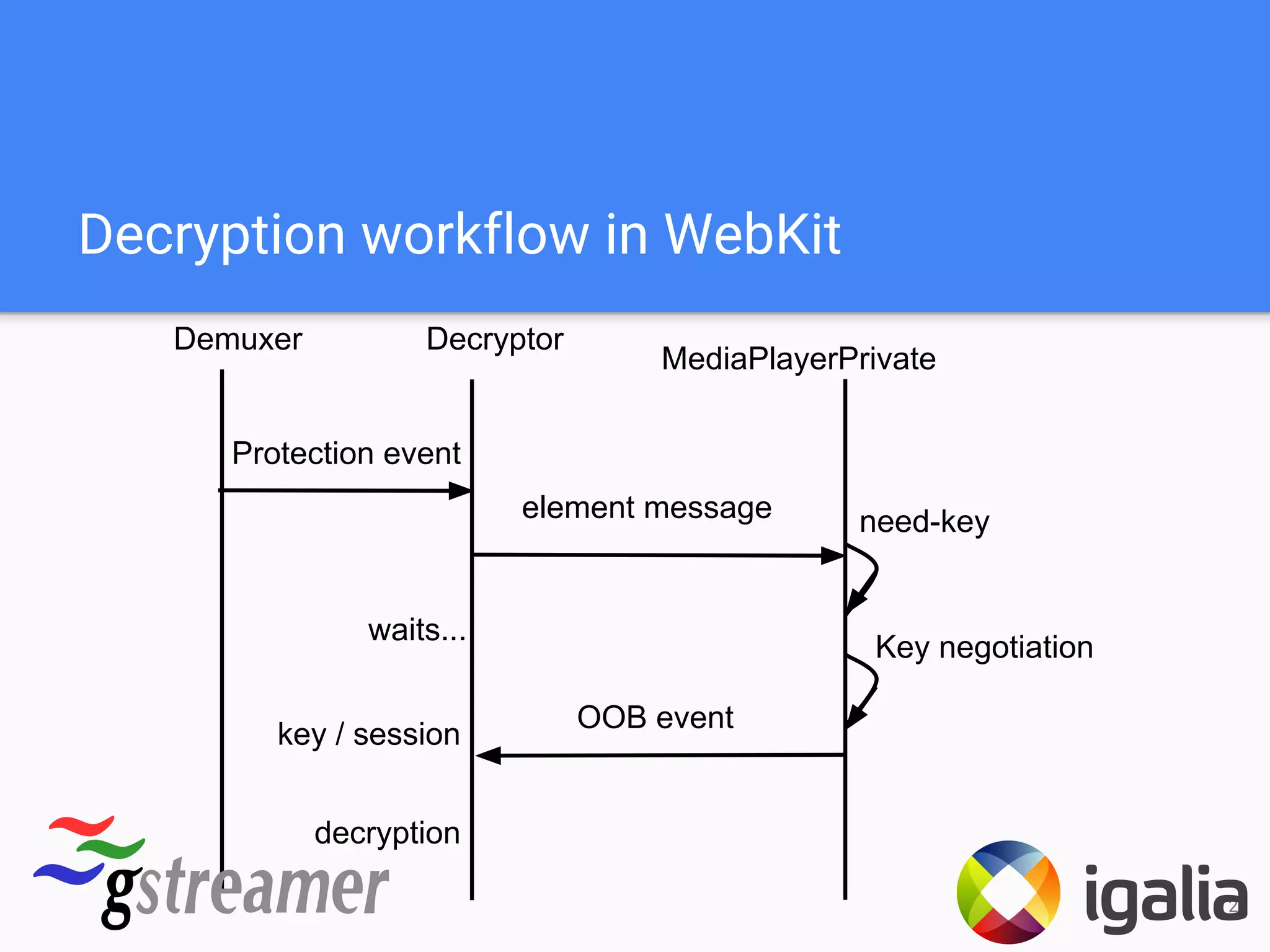

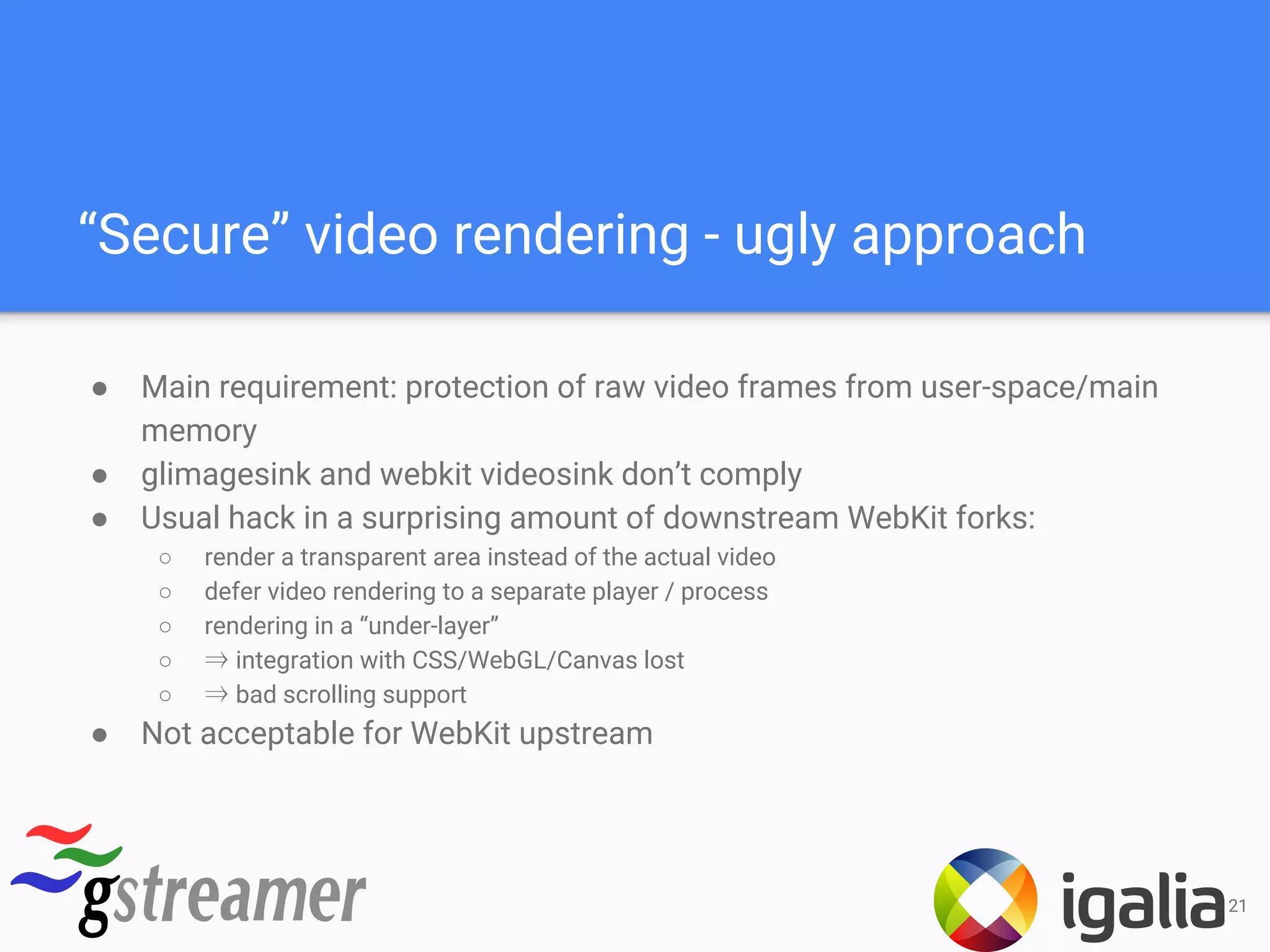

4. Encrypted media playback support for DRM-protected content via Encrypted Media Extensions.

5. Progress on WebRTC integration for real-time communication capabilities.